Evolving Observability Architecture for Cloud-Scale Event Data [2025]

In cloud computing, the ability to monitor and understand system behavior has become a cornerstone of reliability and performance. As businesses rely more heavily on complex, distributed systems, traditional observability methods often fall short. This article explores how evolving observability architectures, built around events and decoupled data pipelines, are reshaping how teams handle cloud-scale event data.

TL;DR

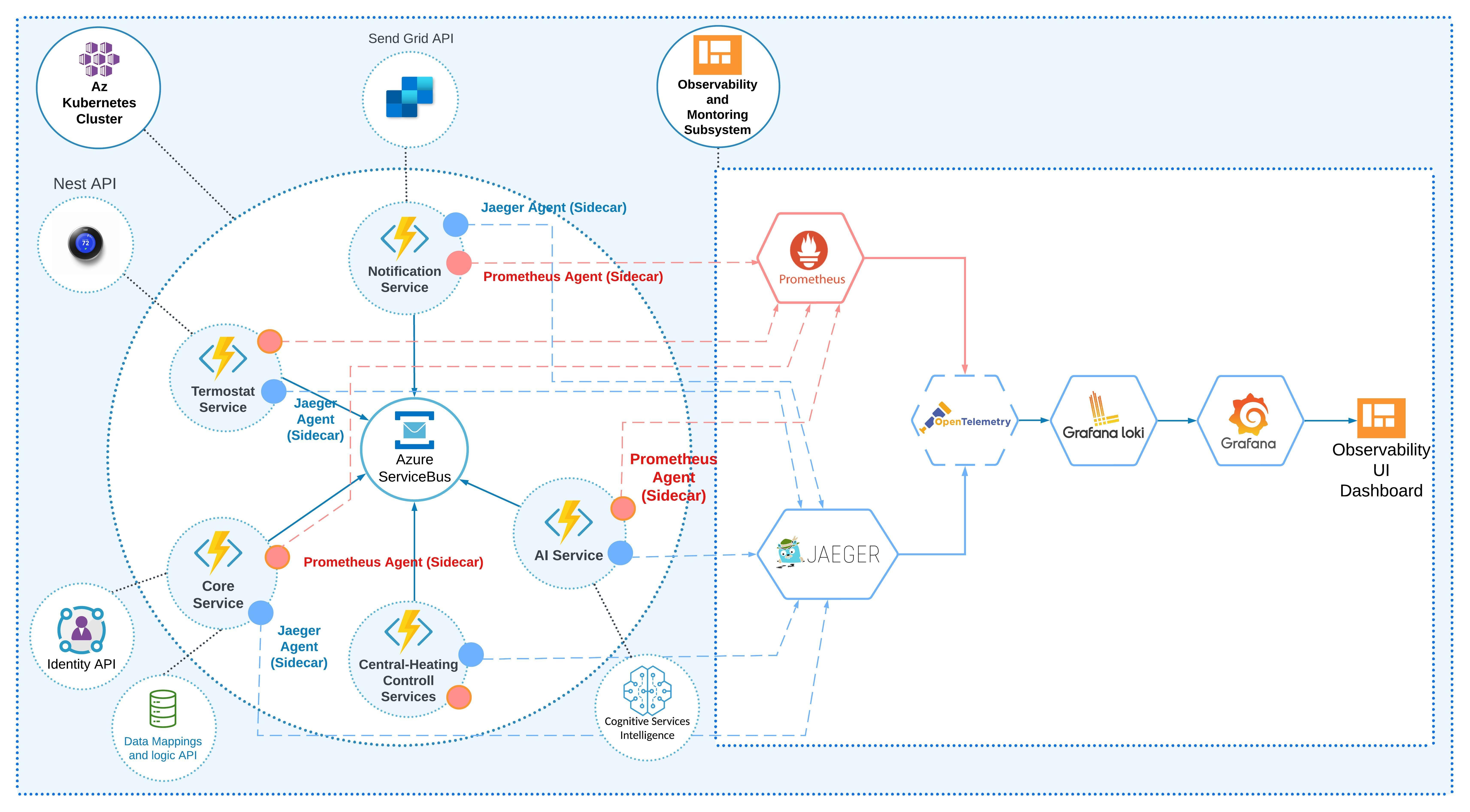

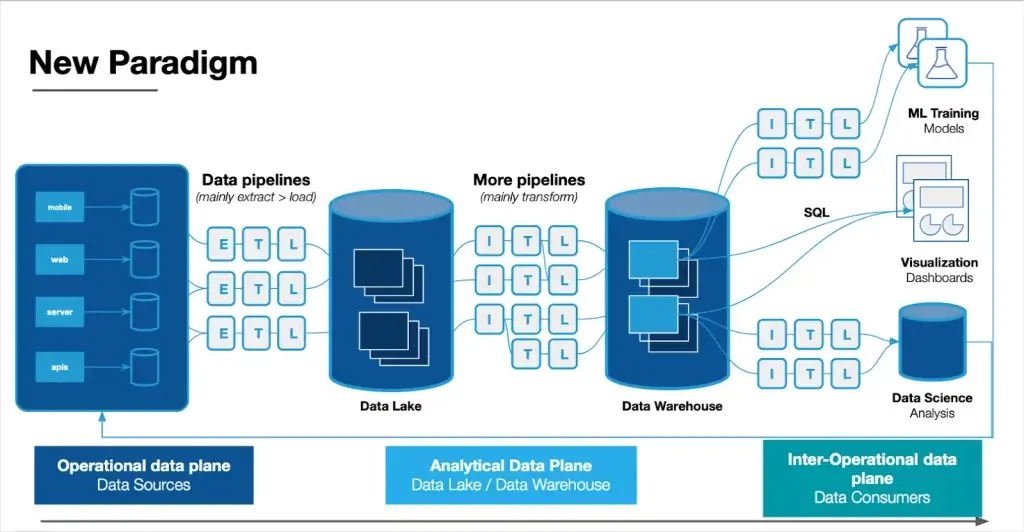

- Decoupled Architecture: Modern observability systems leverage decoupled architectures to handle massive event data efficiently, as discussed in AI Journ's article on event-driven architecture.

- Event-Native Monitoring: Emphasizes real-time insights from native cloud events, enhancing system understanding.

- Scalability Challenges: Strategies such as decoupling, horizontal scaling, load balancing, and data partitioning for coping with data volume and velocity.

- Practical Implementation: A four-step guide to deploying cloud-scale observability.

- Future Trends: Predictions for the next generation of observability tools and strategies.

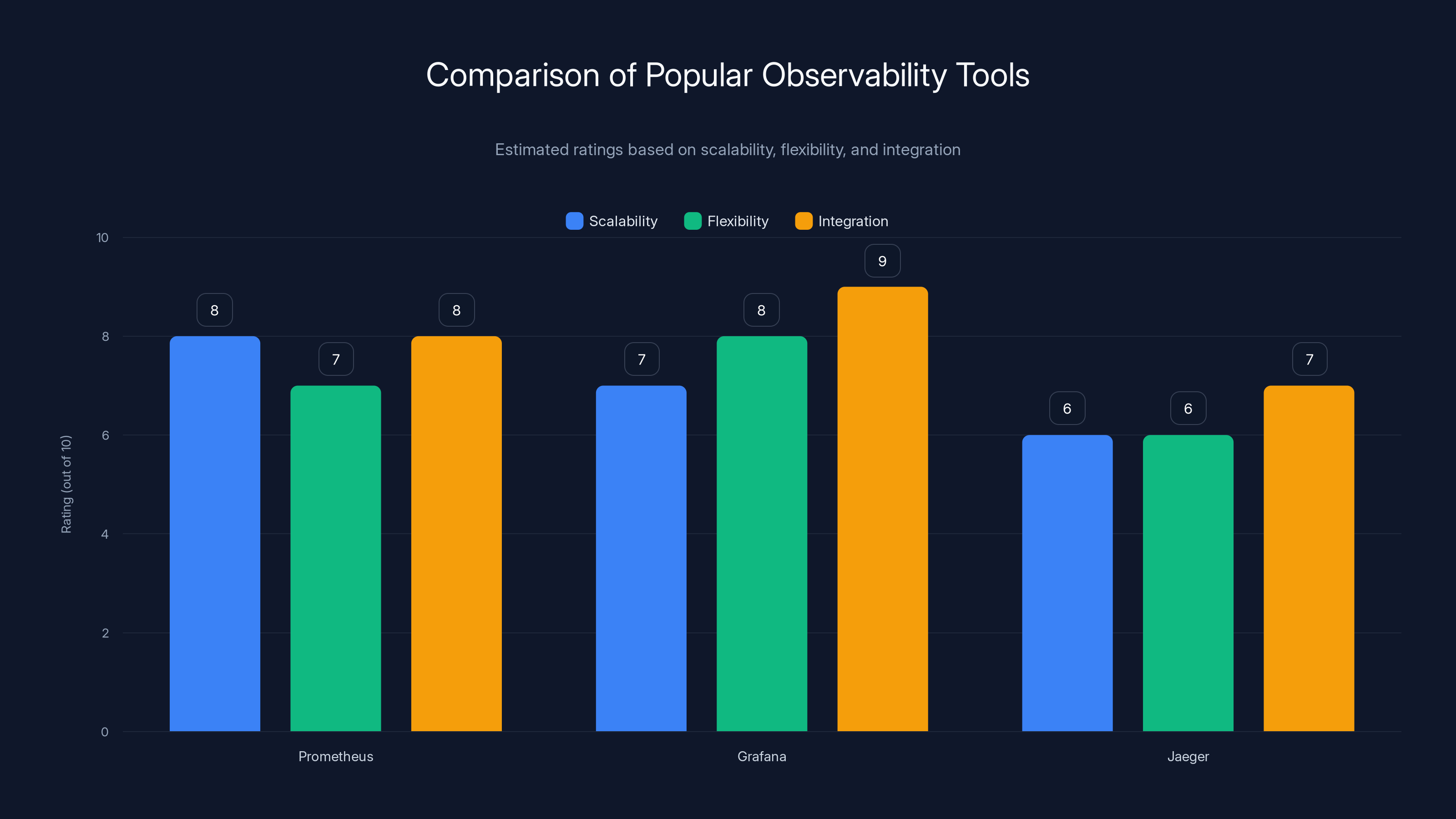

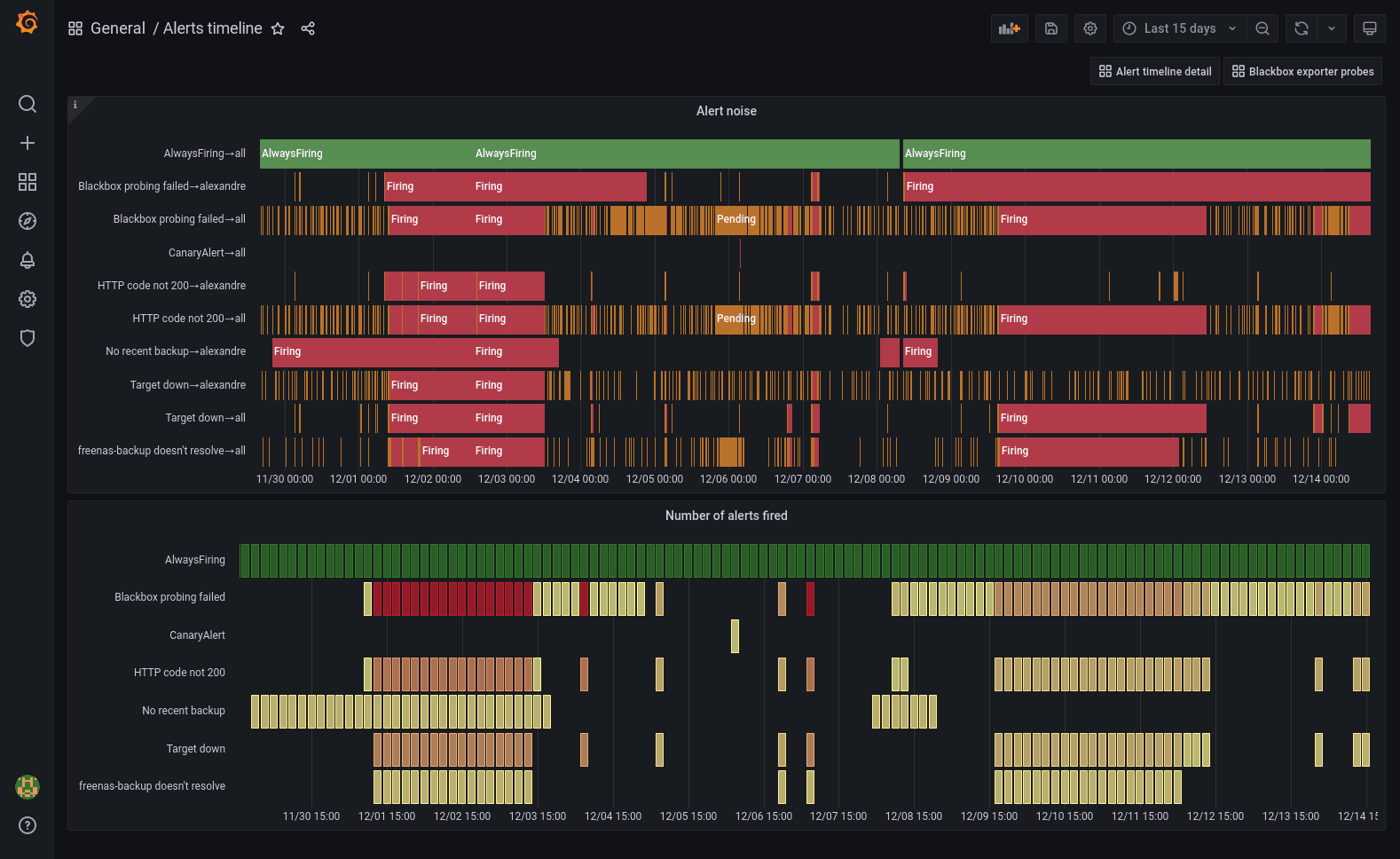

Grafana scores highest in integration, making it a versatile choice for dynamic dashboards. Estimated data.

The Need for Advanced Observability

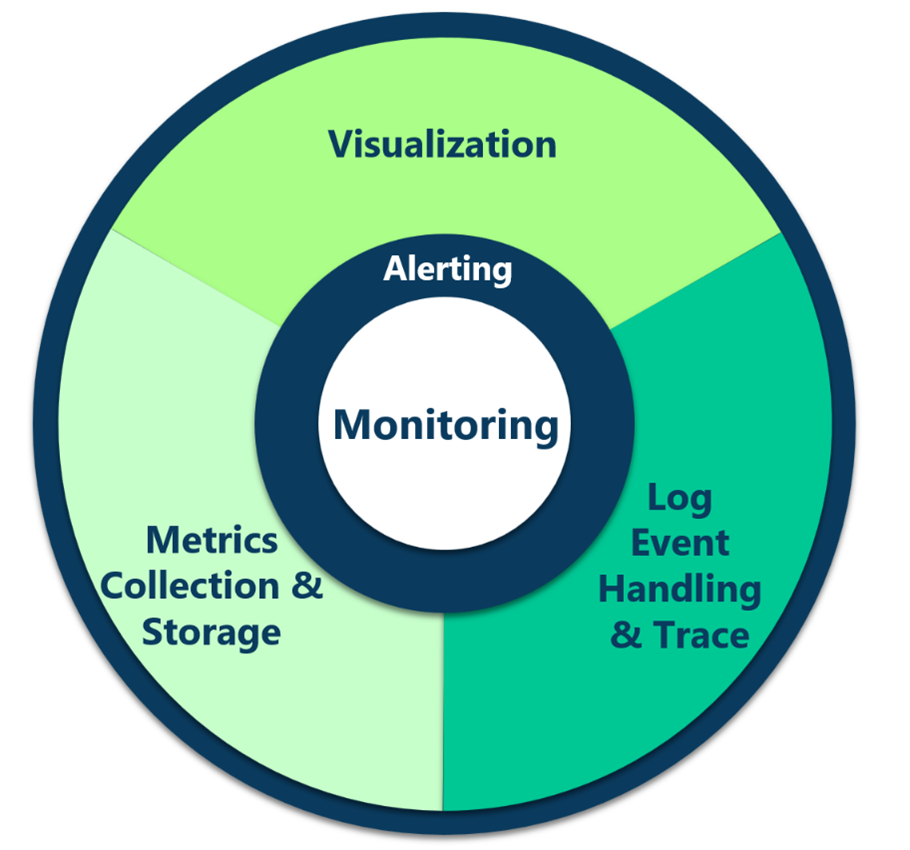

Cloud environments are inherently complex. They consist of microservices, containers, serverless functions, and other components that interact in dynamic ways. As a result, traditional monitoring tools that focus on metrics and logs often struggle to provide a comprehensive view.

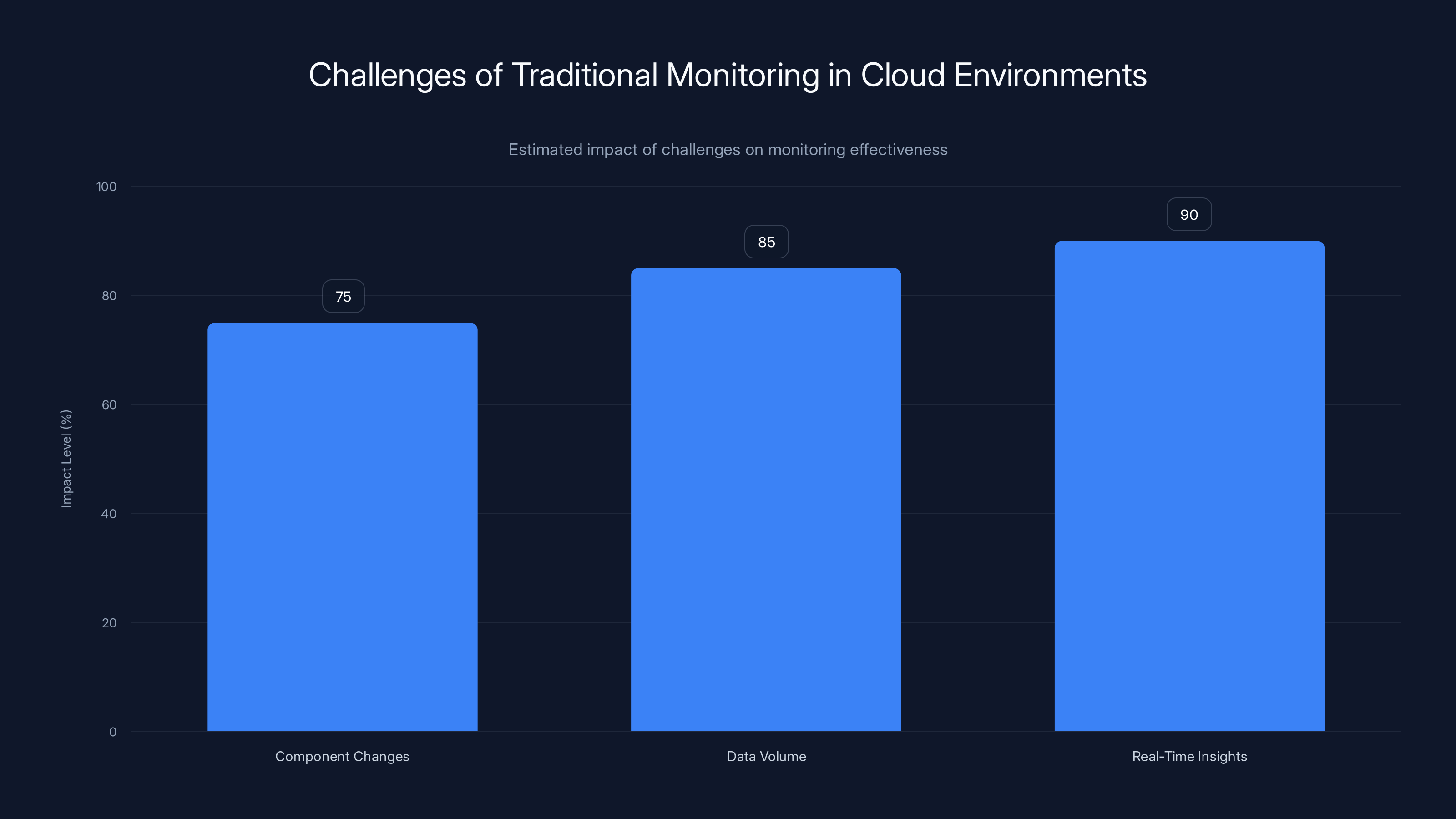

Challenges of Traditional Monitoring

Traditional monitoring tools were designed for static, monolithic applications. They typically rely on predefined metrics and logs, which can be inadequate in dynamic cloud environments where:

- Components Change Frequently: The ephemeral nature of cloud components means that the system's state can change rapidly.

- Data Volume is Massive: Cloud applications generate vast amounts of data, often overwhelming traditional systems.

- Real-Time Insights are Crucial: Immediate insights are necessary to maintain performance and reliability.

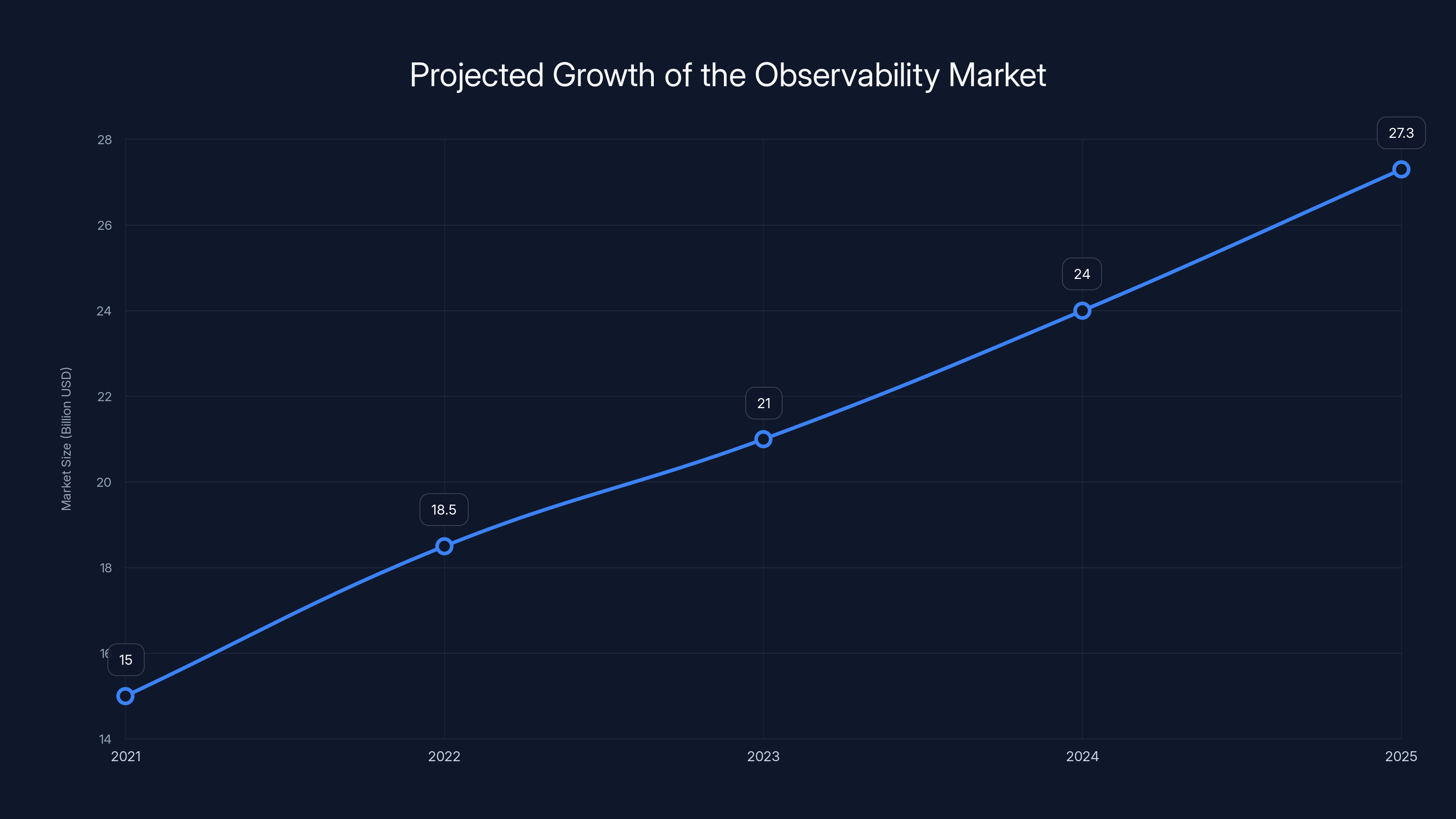

The observability market is expected to grow significantly, reaching $27.3 billion by 2025, driven by AI/ML advancements and full-stack observability. Estimated data.

What is Event-Native Observability?

Event-native observability shifts the focus from metrics and logs to events: richer data structures that record what happened together with the context (which service, which request, which deployment) needed to work out why it happened and how it affects the system.
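To make the contrast with a plain log line concrete, here is a minimal sketch of an event record in Python. The field names (`service`, `trace_id`, `deployment`, `attributes`) and the sample values are illustrative assumptions, not a standard schema.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class Event:
    """A structured event: what happened, plus the context needed to explain it."""
    name: str        # what happened, e.g. "checkout.failed"
    service: str     # where it happened
    trace_id: str    # which request it belongs to (links related events)
    deployment: str  # which version was running
    attributes: dict = field(default_factory=dict)  # free-form context
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


# A traditional log line carries the fact as an unstructured string...
log_line = "ERROR checkout failed"

# ...whereas the event keeps the context as queryable fields (hypothetical values).
event = Event(
    name="checkout.failed",
    service="checkout",
    trace_id="4bf92f3577b34da6",
    deployment="v2.3.1",
    attributes={"payment_provider": "acme", "latency_ms": 1840, "error": "timeout"},
)
print(event.to_json())
```

Because every field is addressable, a question such as "which deployment produced the slow checkouts?" becomes a filter rather than a text search.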

Key Characteristics

- Contextual Information: Events include detailed context, making them more informative than traditional logs.

- Real-Time Processing: The ability to process and react to events in real time is critical for maintaining system health, as highlighted in Nature's recent study.

- Decoupled Architecture: A decoupled approach allows for scalability and flexibility, enabling systems to consume and process events independently.
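The decoupling described above can be sketched in-process. The `EventBus` class below is a hypothetical toy stand-in for a broker such as Apache Kafka (it has no persistence, partitions, or offsets); it only shows the key property that producers publish to a topic without knowing who consumes it, and each consumer reads at its own pace.

```python
from collections import defaultdict, deque


class EventBus:
    """Toy broker: one queue per (topic, consumer), so consumers are independent."""

    def __init__(self):
        self._queues = defaultdict(dict)  # topic -> {consumer_name: deque}

    def subscribe(self, topic: str, consumer: str) -> None:
        self._queues[topic].setdefault(consumer, deque())

    def publish(self, topic: str, event: dict) -> None:
        # The producer only knows the topic, never the consumers.
        for queue in self._queues[topic].values():
            queue.append(event)

    def poll(self, topic: str, consumer: str, max_events: int = 10) -> list:
        queue = self._queues[topic][consumer]
        batch = []
        while queue and len(batch) < max_events:
            batch.append(queue.popleft())
        return batch

    def pending(self, topic: str, consumer: str) -> int:
        return len(self._queues[topic][consumer])


bus = EventBus()
bus.subscribe("events", "alerting")
bus.subscribe("events", "archiver")

for i in range(3):
    bus.publish("events", {"seq": i, "name": "request.completed"})

# The alerting consumer drains its queue; the archiver has not read anything yet.
print(bus.poll("events", "alerting"))
print(bus.pending("events", "archiver"))  # 3
```

A slow archiver does not hold up alerting, and a new consumer can be added without touching the producer.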

Implementing Event-Native Observability

Implementing event-native observability involves four steps, each of which contributes to a robust monitoring framework.

Step 1: Define Your Observability Goals

Before implementing any solution, it's important to establish clear goals. Consider what you need to monitor and the insights you wish to gain. Common goals include:

- Performance Monitoring: Track key performance indicators (KPIs) to ensure optimal operation.

- Anomaly Detection: Identify and respond to unusual patterns or behaviors.

- Root Cause Analysis: Quickly diagnose and fix the underlying causes of issues.
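Goals are easiest to act on when they are measurable. The sketch below assumes two hypothetical targets for a hypothetical service (a 95th-percentile latency of 300 ms and an error rate of at most 1%) and checks observed data against them; the numbers are placeholders, not recommendations.

```python
import math

# Hypothetical goals; the thresholds are placeholders.
GOALS = {
    "p95_latency_ms": 300.0,  # performance monitoring
    "error_rate": 0.01,       # at most 1% of requests may fail
}


def percentile(values, p):
    """Nearest-rank percentile, p in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def check_goals(latencies_ms, error_count, request_count):
    """Return {goal: (observed value, goal met?)}."""
    observed = {
        "p95_latency_ms": percentile(latencies_ms, 95),
        "error_rate": error_count / request_count,
    }
    return {name: (observed[name], observed[name] <= target)
            for name, target in GOALS.items()}


latencies = [120, 135, 150, 180, 210, 240, 260, 290, 310, 900]
print(check_goals(latencies, error_count=1, request_count=200))
# p95 is 900 ms (goal missed); error rate is 0.005 (goal met)
```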

Step 2: Choose the Right Tools

Selecting the right tools is essential. Look for solutions that offer:

- Scalability: Can handle the volume and velocity of your data.

- Flexibility: Supports various data types and sources.

- Integration: Easily integrates with your existing tech stack.

Popular tools in the market include:

- Prometheus: An open-source monitoring system with a flexible query language.

- Grafana: Provides interactive visualization dashboards.

- Jaeger: An open-source distributed tracing system.
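Prometheus collects metrics by scraping an HTTP endpoint that serves a plain-text exposition format. In practice you would use an official client library (for example `prometheus_client` in Python) rather than formatting text by hand; the standard-library sketch below only illustrates what that format looks like. The metric name `http_requests_total` and its labels are hypothetical.

```python
def _escape_label_value(value: str) -> str:
    # The text format requires escaping backslash, double quote, and newline.
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def render_counter(name, help_text, samples):
    """Render one counter in the Prometheus text exposition format.

    `samples` maps a tuple of (label_name, label_value) pairs to a value.
    """
    lines = [f"# HELP {name} {help_text}", f"# TYPE {name} counter"]
    for labels, value in samples.items():
        if labels:
            label_str = ",".join(f'{k}="{_escape_label_value(v)}"' for k, v in labels)
            lines.append(f"{name}{{{label_str}}} {value}")
        else:
            lines.append(f"{name} {value}")
    return "\n".join(lines) + "\n"


text = render_counter(
    "http_requests_total",
    "Total HTTP requests handled.",
    {
        (("method", "GET"), ("status", "200")): 1027,
        (("method", "POST"), ("status", "500")): 3,
    },
)
print(text)
# # HELP http_requests_total Total HTTP requests handled.
# # TYPE http_requests_total counter
# http_requests_total{method="GET",status="200"} 1027
# http_requests_total{method="POST",status="500"} 3
```

Grafana can then chart these series by querying Prometheus with PromQL.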

Step 3: Implement a Data Pipeline

A robust data pipeline is necessary to collect, process, and analyze event data. Key components include:

- Data Collection: Use agents or APIs to gather data from various sources.

- Data Processing: Use event streaming platforms such as Apache Kafka or Amazon Kinesis to move and process data in real time.

- Data Storage: Choose scalable storage solutions like Amazon S3 or Google Cloud Storage for persistence.
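The three pipeline stages can be sketched as chained generators. In this minimal, standard-library-only example a temporary local directory stands in for Amazon S3 or Google Cloud Storage, and the `dt=.../hour=...` key layout is an assumed (though common) partitioning convention; the function names are hypothetical.

```python
import json
import tempfile
from collections import defaultdict
from datetime import datetime, timezone
from pathlib import Path


def collect(raw_lines):
    """Collection: parse raw JSON lines, skipping ones that fail to parse."""
    for line in raw_lines:
        try:
            yield json.loads(line)
        except json.JSONDecodeError:
            continue


def process(events):
    """Processing: enrich each event with an hourly partition key."""
    for e in events:
        ts = datetime.fromtimestamp(e["ts"], tz=timezone.utc)
        e["partition"] = ts.strftime("dt=%Y-%m-%d/hour=%H")
        yield e


def store(events, root: Path):
    """Storage: write one JSON-lines file per partition, mimicking an
    object-store key layout such as events/dt=2025-01-01/hour=00/."""
    batches = defaultdict(list)
    for e in events:
        batches[e["partition"]].append(e)
    written = []
    for partition, batch in batches.items():
        path = root / partition / "part-0000.jsonl"
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_text("".join(json.dumps(e) + "\n" for e in batch))
        written.append(path)
    return written


raw = [
    '{"ts": 1735689600, "name": "login"}',   # 2025-01-01 00:00 UTC
    '{"ts": 1735693200, "name": "logout"}',  # 2025-01-01 01:00 UTC
    "not json",                              # dropped by collect()
]
with tempfile.TemporaryDirectory() as tmp:
    written = store(process(collect(raw)), Path(tmp))
    partitions = sorted(p.relative_to(tmp).as_posix() for p in written)
print(partitions)
```

Because the stages are generators, events flow through one at a time until the storage stage batches them; a production pipeline would flush batches by size or time instead of at the end.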

Step 4: Establish Monitoring Dashboards

Visual dashboards help teams quickly interpret data and make informed decisions. Implement dynamic dashboards that can:

- Visualize Key Metrics: Display real-time data clearly and concisely.

- Alert on Anomalies: Set up alerts to notify teams of critical issues.

- Support Drill-Down Analysis: Allow users to explore data in-depth to find root causes.
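Alerting on a single data point tends to page people for brief spikes. One common remedy, similar in spirit to the `for:` clause in Prometheus alerting rules, is to fire only when a condition holds for several consecutive evaluations. The `ThresholdAlert` class below is a hypothetical sketch of that idea, not any tool's API.

```python
from dataclasses import dataclass, field


@dataclass
class ThresholdAlert:
    """Fires only after the value exceeds `threshold` on
    `for_evaluations` consecutive evaluations."""
    name: str
    threshold: float
    for_evaluations: int
    breaches: int = field(default=0, init=False)

    def evaluate(self, value: float) -> str:
        if value > self.threshold:
            self.breaches += 1
            return "firing" if self.breaches >= self.for_evaluations else "pending"
        self.breaches = 0  # any healthy reading resets the streak
        return "inactive"


alert = ThresholdAlert("HighErrorRate", threshold=0.05, for_evaluations=3)
for error_rate in [0.02, 0.09, 0.03, 0.08, 0.07, 0.10]:
    print(error_rate, alert.evaluate(error_rate))
# states: inactive, pending, inactive, pending, pending, firing
```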

The dynamic nature of cloud environments significantly reduces the effectiveness of traditional monitoring tools, with the need for real-time insights being the most critical challenge. (Estimated data)

Addressing Scalability Challenges

Scalability is a major concern for any cloud-scale observability solution. As systems grow, so does the data they produce, leading to potential bottlenecks and performance issues.

Strategies for Scalability

- Decoupled Architecture: By decoupling data producers and consumers, systems can scale independently.

- Horizontal Scaling: Add more nodes to your system instead of increasing the capacity of existing ones.

- Load Balancing: Distribute incoming data evenly across servers so that no single server becomes overloaded.

- Data Partitioning: Split data into manageable chunks to improve processing efficiency.
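Key-based partitioning is what lets horizontal scaling work without losing ordering: events with the same key always go to the same partition, and consumers can be added (up to one per partition) to share the load. Kafka's default partitioner hashes keys with murmur2; the sketch below uses CRC32 only because it ships with Python's standard library. Python's built-in `hash()` is avoided because it is salted per process for strings, so different producers would disagree. The trace-ID keys are hypothetical.

```python
import zlib
from collections import Counter


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition with a stable hash, so all events for the same
    key (e.g. one trace ID) land on the same partition."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


# Spread 10,000 hypothetical trace IDs over 4 partitions.
counts = Counter(partition_for(f"trace-{i}", 4) for i in range(10_000))
print(sorted(counts.items()))
```

Note that changing `num_partitions` remaps most keys, which is why partition counts are usually chosen generously up front.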

Best Practices for Cloud-Scale Observability

Adopting best practices can enhance the effectiveness and reliability of your observability framework.

1. Adopt a Unified Data Model

A unified data model ensures consistency across different data sources, making it easier to correlate and analyze information.
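As a minimal sketch, assume two hypothetical sources (a proxy and an application) that describe the same failure with different field names and timestamp formats. Mapping both into one shared schema is what makes correlation by trace ID possible; the schema and field names here are assumptions for illustration.

```python
from collections import defaultdict
from datetime import datetime, timezone

# Two hypothetical records describing the same request in different shapes.
proxy_record = {"time_local": "2025-01-01T00:00:05+00:00", "status": 502,
                "upstream": "checkout", "request_id": "abc123"}
app_record = {"@timestamp": 1735689605.0, "level": "error",
              "svc": "checkout", "traceId": "abc123", "msg": "upstream timeout"}


def from_proxy(r):
    """Map a proxy record onto the unified schema."""
    return {
        "timestamp": datetime.fromisoformat(r["time_local"]),
        "service": r["upstream"],
        "trace_id": r["request_id"],
        "severity": "error" if r["status"] >= 500 else "info",
        "source": "proxy",
    }


def from_app(r):
    """Map an application record onto the unified schema."""
    return {
        "timestamp": datetime.fromtimestamp(r["@timestamp"], tz=timezone.utc),
        "service": r["svc"],
        "trace_id": r["traceId"],
        "severity": r["level"],
        "source": "app",
    }


unified = [from_proxy(proxy_record), from_app(app_record)]

# With one schema, correlating across sources is a simple group-by.
by_trace = defaultdict(list)
for e in unified:
    by_trace[e["trace_id"]].append(e["source"])
print(dict(by_trace))  # {'abc123': ['proxy', 'app']}
```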

2. Prioritize Real-Time Processing

Real-time processing is crucial for detecting and responding to issues as they arise. Use stream processing technologies to handle data in real time, as emphasized in Wiz's guide on incident response tools.
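A core building block of stream processing is the window: aggregating events over fixed time intervals and emitting results as each interval closes, rather than waiting for a complete dataset. The sketch below implements tumbling (fixed, non-overlapping) windows and assumes events arrive in timestamp order; real stream processors also handle late, out-of-order events, which this toy version does not.

```python
from collections import Counter


def tumbling_counts(events, window_s=60):
    """Count events per service in fixed, non-overlapping windows.

    `events` is an iterable of (unix_seconds, service) in timestamp order.
    A window's counts are yielded as soon as an event from a later window
    arrives, so results stream out incrementally.
    """
    current_window = None
    counts = Counter()
    for ts, service in events:
        window = int(ts // window_s) * window_s
        if current_window is not None and window != current_window:
            yield current_window, dict(counts)
            counts = Counter()
        current_window = window
        counts[service] += 1
    if current_window is not None:
        yield current_window, dict(counts)


stream = [(0, "api"), (10, "api"), (59, "db"), (61, "api"), (125, "db")]
for window_start, window_counts in tumbling_counts(stream):
    print(window_start, window_counts)
# 0 {'api': 2, 'db': 1}
# 60 {'api': 1}
# 120 {'db': 1}
```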

3. Automate Alerting and Responses

Automated alerting identifies issues promptly without manual intervention. Machine learning models can go a step further and automate responses to common, well-understood alerts.
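Before reaching for a trained model, many teams start with a statistical baseline for anomaly detection. The sketch below flags values that sit more than three standard deviations from the mean of a trailing window (a rolling z-score). It is a simple stand-in for the machine learning approaches mentioned above, not an ML model, and the latency series is made up.

```python
import statistics
from collections import deque


def zscore_anomalies(values, window=20, threshold=3.0):
    """Return indices of points more than `threshold` standard deviations
    from the mean of the preceding `window` points."""
    history = deque(maxlen=window)
    anomalies = []
    for i, v in enumerate(values):
        if len(history) >= 2:
            baseline = list(history)
            mean = statistics.fmean(baseline)
            stdev = statistics.stdev(baseline)
            if stdev > 0 and abs(v - mean) / stdev > threshold:
                anomalies.append(i)
        history.append(v)
    return anomalies


latency_ms = [100, 102, 98, 101, 99, 103, 97, 100, 450, 101, 99]
print(zscore_anomalies(latency_ms, window=8))  # [8]
```

One design caveat: the spike itself enters the trailing window and inflates the baseline for the next few points, so production detectors often exclude flagged points from the history or use robust statistics such as the median.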

4. Foster a Culture of Observability

Encourage teams to embrace observability by integrating it into development and operations workflows. Create a shared understanding of its importance across the organization.

Common Pitfalls and How to Avoid Them

While observability can provide significant benefits, there are common pitfalls that organizations should be aware of.

Pitfall 1: Overlooking Data Quality

Poor data quality can lead to inaccurate insights and decisions. Ensure data is clean, complete, and consistent, as highlighted in StateTech Magazine's article on configuration drift.
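Data-quality checks work best at ingestion time, before bad events reach dashboards. The sketch below validates completeness (required fields present), consistency (expected types), and cleanliness (no blank strings), and routes failing events to a quarantine list. The required-field schema is a hypothetical example.

```python
# Hypothetical schema: field name -> accepted type(s).
REQUIRED_FIELDS = {"name": str, "service": str, "timestamp": (int, float)}


def validate(event):
    """Return a list of data-quality problems; an empty list means the event passed."""
    problems = []
    for field_name, expected in REQUIRED_FIELDS.items():
        if field_name not in event:
            problems.append(f"missing field: {field_name}")
            continue
        value = event[field_name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            problems.append(f"wrong type for {field_name}: {type(value).__name__}")
        elif isinstance(value, str) and not value.strip():
            problems.append(f"empty field: {field_name}")
    return problems


events = [
    {"name": "login", "service": "auth", "timestamp": 1735689600},
    {"name": "login", "service": "", "timestamp": 1735689601},
    {"name": "logout", "timestamp": "yesterday"},
]
valid, quarantined = [], []
for e in events:
    problems = validate(e)
    if problems:
        quarantined.append((e, problems))
    else:
        valid.append(e)
print(len(valid), "valid;", len(quarantined), "quarantined")
```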

Pitfall 2: Ignoring Security Concerns

Event data can contain sensitive information. Implement strict access controls and encryption to protect data privacy, as discussed in The Scientist's analysis of the FDA's adverse event dashboard.
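Access controls and encryption protect stored data, but it also helps to minimize what is stored in the first place. The sketch below redacts fields on a hypothetical denylist, masks email addresses inside free-text fields, and pseudonymizes `user_id` with a keyed hash (HMAC-SHA256) so a user's events can still be correlated without keeping the raw identifier. The key names and the simplified email pattern are assumptions.

```python
import hashlib
import hmac
import re

SENSITIVE_KEYS = {"password", "card_number", "ssn"}  # hypothetical denylist
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")   # simplified pattern


def redact(event, secret: bytes):
    """Return a copy of `event` that is safer to ship to shared storage."""
    clean = {}
    for key, value in event.items():
        if key in SENSITIVE_KEYS:
            clean[key] = "[REDACTED]"
        elif key == "user_id":
            # Keyed hash: stable for the same user, not reversible without the key.
            digest = hmac.new(secret, str(value).encode(), hashlib.sha256)
            clean[key] = digest.hexdigest()[:16]
        elif isinstance(value, str):
            clean[key] = EMAIL_RE.sub("[EMAIL]", value)
        else:
            clean[key] = value
    return clean


event = {"name": "signup.failed", "user_id": 4711, "password": "hunter2",
         "message": "duplicate account for jane@example.com"}
print(redact(event, secret=b"rotate-me"))
```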

Pitfall 3: Underestimating Resource Requirements

Observability systems can be resource-intensive. Plan for sufficient computational and storage resources to support your architecture.
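A back-of-the-envelope estimate turns "sufficient resources" into a number you can plan around. Every input below (50,000 events per second, 1 KB per event, 30-day retention, 3 replicas, 5:1 compression) is a hypothetical placeholder; substitute your own measurements.

```python
def storage_estimate_tb(events_per_sec, avg_event_bytes, retention_days,
                        replication_factor=1, compression_ratio=1.0):
    """Raw storage needed for a retention period, in terabytes (10**12 bytes)."""
    bytes_per_day = events_per_sec * avg_event_bytes * 86_400
    total_bytes = bytes_per_day * retention_days * replication_factor / compression_ratio
    return total_bytes / 1e12


estimate = storage_estimate_tb(
    events_per_sec=50_000,
    avg_event_bytes=1_000,
    retention_days=30,
    replication_factor=3,
    compression_ratio=5.0,
)
print(f"{estimate:.2f} TB")  # 77.76 TB
```

Here 50,000 events/s at 1 KB each is 4.32 TB per day before replication and compression, which is why retention and sampling policies matter as much as hardware.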

Future Trends in Observability

As technology evolves, so too will observability practices. Here are some trends to watch in the coming years:

1. Increased Use of AI and ML

Artificial intelligence and machine learning will play a larger role in observability, enabling predictive analytics and anomaly detection, as noted in ASTHO's report on AI in public health.

2. Shift Towards Full-Stack Observability

Full-stack observability will become more prevalent, providing end-to-end visibility across the entire application stack.

3. Greater Focus on User Experience

User-centric observability will gain importance, focusing on the performance and reliability of user interactions.

Conclusion

Evolving observability architectures are essential for managing the complexities of cloud-scale event data. By adopting event-native approaches and leveraging modern technologies, organizations can gain deep insights into their systems, ensuring performance and reliability.

FAQ

What is event-native observability?

Event-native observability focuses on capturing and analyzing events, which provide rich context and real-time insights, as opposed to traditional metrics and logs.

How does decoupled architecture enhance observability?

Decoupled architecture allows data producers and consumers to operate independently, improving scalability and flexibility in handling large volumes of event data.

What are the benefits of real-time data processing?

Real-time data processing enables immediate detection of issues, faster decision-making, and proactive system management, leading to improved performance and reliability.

Why is data quality important in observability?

High-quality data ensures accurate monitoring insights and effective decision-making. Poor data quality can lead to misleading conclusions and ineffective responses.

How can AI and ML enhance observability?

AI and ML can automate anomaly detection, predict potential issues, and optimize resource allocation, enhancing the overall efficiency of observability systems.

What is full-stack observability?

Full-stack observability provides comprehensive visibility across the entire application stack, from infrastructure to user interactions, ensuring end-to-end performance monitoring.

Key Takeaways

- Event-native observability provides real-time insights from cloud events.

- Decoupled architectures enhance scalability and flexibility.

- Real-time processing is crucial for proactive system management.

- AI and ML are transforming anomaly detection and predictive analytics.

- Full-stack observability ensures comprehensive system visibility.
