Google's Landmark Discovery: AI-Generated Zero-Day Exploit [2025]

Last month, Google's Threat Intelligence Group (GTIG) reported a discovery that shook the cybersecurity world: a zero-day exploit with a twist, in that it was crafted using artificial intelligence. This marks a pivotal moment in cybersecurity, where AI is not just a tool for defense but potentially a weapon for attack. Let's look at the implications, the technical details that can be inferred, and what the future may hold.

TL;DR

- AI-Crafted Exploit: Google's GTIG discovered a zero-day exploit made using AI, a first in cybersecurity.

- Mass Exploitation Averted: The exploit was intended for a mass attack, but proactive measures prevented its use.

- Technical Complexity: AI was likely used to identify vulnerabilities and automate the creation of the exploit, although full technical details were not disclosed.

- Future Concerns: AI in cybercrime could lead to more sophisticated and widespread threats.

- Security Recommendations: Emphasize proactive measures, AI-driven threat detection, and regular security updates.

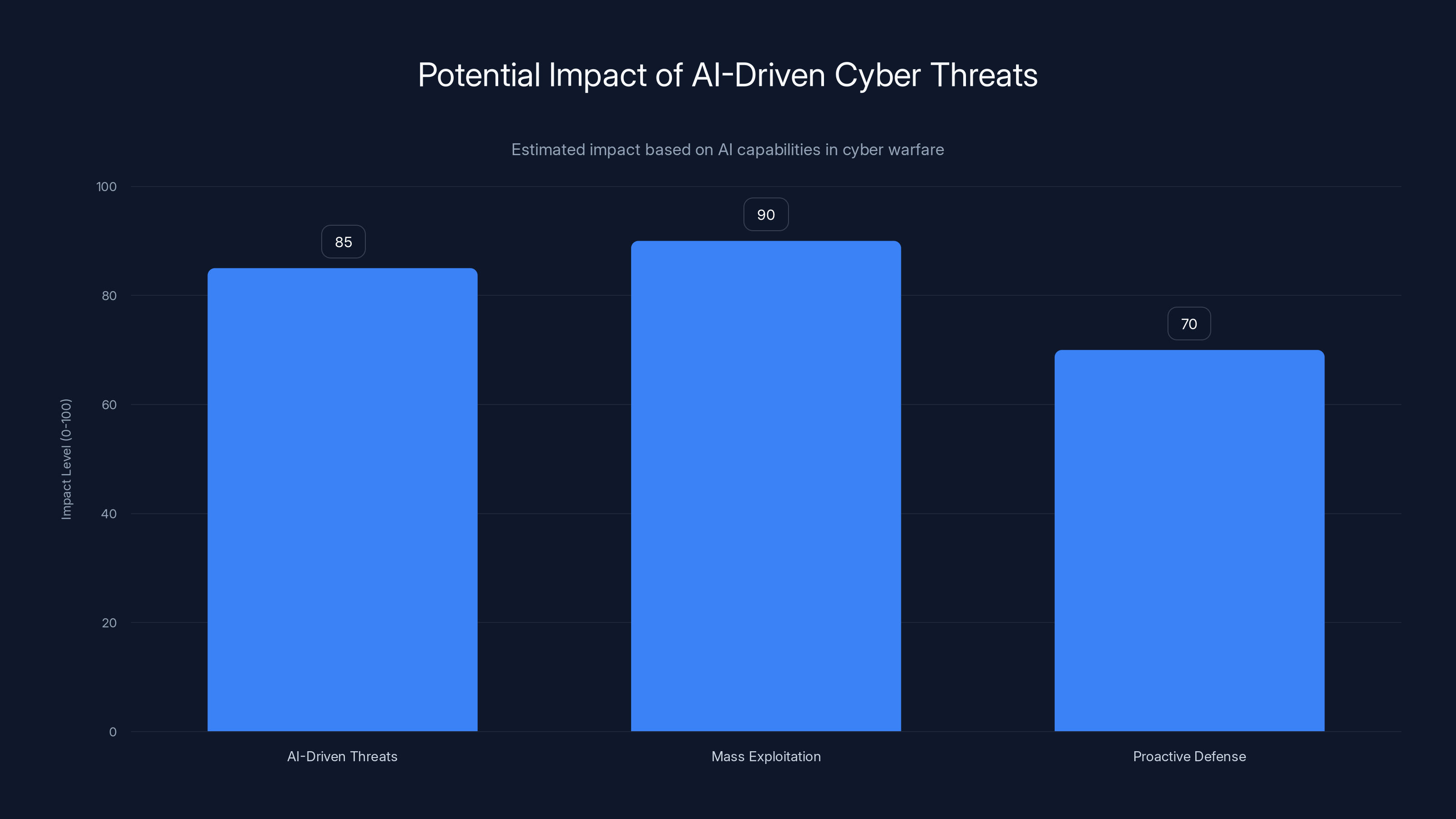

AI-driven threats and mass exploitation have high potential impact, while proactive defense is crucial for mitigation. Estimated data.

Understanding Zero-Day Exploits

A zero-day vulnerability is a software flaw that is unknown to the vendor or the public. A zero-day exploit is the code or technique that takes advantage of such a flaw. These exploits are particularly dangerous because no fix is available when they are first used, leaving systems exposed to attack. The term "zero-day" signifies that developers have had zero days to address and patch the vulnerability.

How Zero-Day Exploits Are Traditionally Discovered

Traditionally, zero-day vulnerabilities and the exploits that target them are discovered through manual code analysis, bug bounty programs, or by observing malicious software as it runs in controlled environments. Security researchers often look for unusual patterns or behaviors in software that might indicate a vulnerability.

The Role of AI in Discovering Vulnerabilities

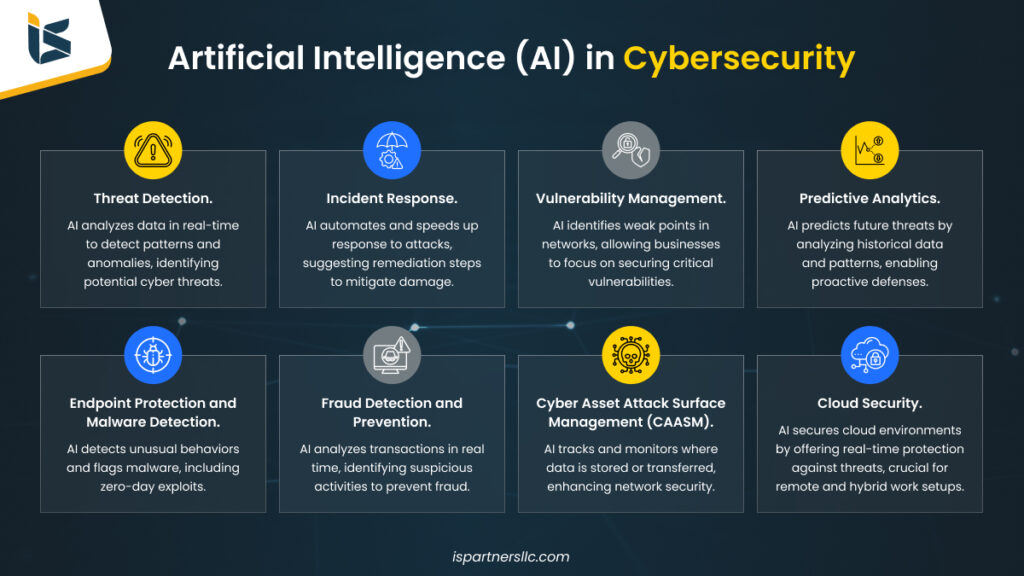

AI has been increasingly used to aid in the discovery of vulnerabilities. Machine learning models can analyze vast amounts of code and identify potential weaknesses faster than human analysts. AI models are trained to recognize patterns that signify possible security flaws, making the process more efficient and thorough.

AI significantly enhances threat detection and real-time response capabilities in cybersecurity. Estimated data.
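To make the idea of pattern-based code analysis in this section concrete, here is a minimal defensive sketch in Python. It is not a machine learning model and not anything GTIG described. It is a simple rule-based scanner standing in for the general concept of "recognize patterns that often signal a flaw." The rule set `RISKY_CALLS`, the `Finding` type, and the sample C snippet are all hypothetical and chosen only for illustration.

```python
import re
from dataclasses import dataclass

# Hypothetical rule set: C library calls that are frequent sources of
# memory-safety or injection bugs. A real tool would use far richer signals.
RISKY_CALLS = {
    "gets": "reads input with no bounds check",
    "strcpy": "copies without a length limit",
    "sprintf": "formats into a buffer without a size limit",
    "system": "runs a shell command, risky with untrusted input",
}

# \b keeps safer variants such as strncpy or snprintf from matching.
CALL_PATTERN = re.compile(r"\b(" + "|".join(RISKY_CALLS) + r")\s*\(")


@dataclass
class Finding:
    line_number: int
    call: str
    reason: str


def scan_source(source: str) -> list[Finding]:
    """Return one Finding per risky call found in the given source text."""
    findings = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        for match in CALL_PATTERN.finditer(line):
            call = match.group(1)
            findings.append(Finding(line_number, call, RISKY_CALLS[call]))
    return findings


# Illustrative input only.
SAMPLE = """\
char buf[16];
gets(buf);
strncpy(dst, src, sizeof(dst) - 1);
strcpy(dst, buf);
"""

if __name__ == "__main__":
    for finding in scan_source(SAMPLE):
        print(f"line {finding.line_number}: {finding.call}() {finding.reason}")
```

On the sample input, the scanner flags `gets` on line 2 and `strcpy` on line 4 and ignores the bounded `strncpy` call. A learned model would replace the fixed rule table with patterns inferred from labeled examples, but the workflow of scanning code and reporting findings for a human to review is similar.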

The Landmark Discovery by Google's GTIG

Google's GTIG discovered the first-ever zero-day exploit crafted using AI. This discovery is significant for several reasons:

- AI-Driven Threats: It demonstrates that AI can be used not just defensively but offensively in cyber warfare.

- Mass Exploitation Potential: The exploit was intended for a mass attack, highlighting the scale at which AI-driven threats could operate.

- Proactive Defense: Google's early detection and response averted a potential disaster.

Technical Details of the AI-Crafted Exploit

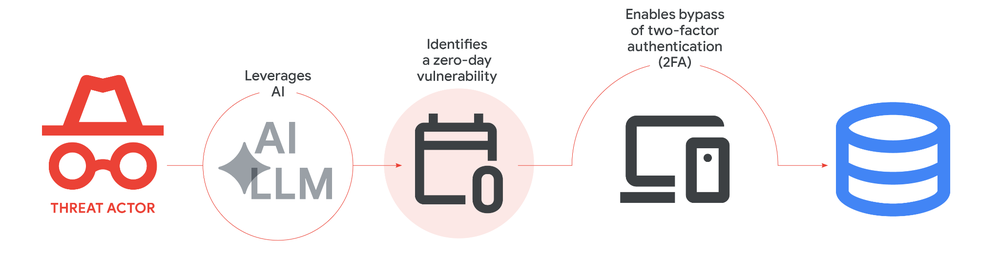

While specific technical details were not fully disclosed, we can infer several aspects based on common AI techniques:

- Pattern Recognition: AI models likely used pattern recognition to identify code vulnerabilities.

- Natural Language Processing (NLP): NLP could have been used to interpret code comments and documentation to locate potential weak points.

- Automated Code Generation: Once a vulnerability was identified, AI may have automated the creation of exploit code, optimizing it for effectiveness and stealth.

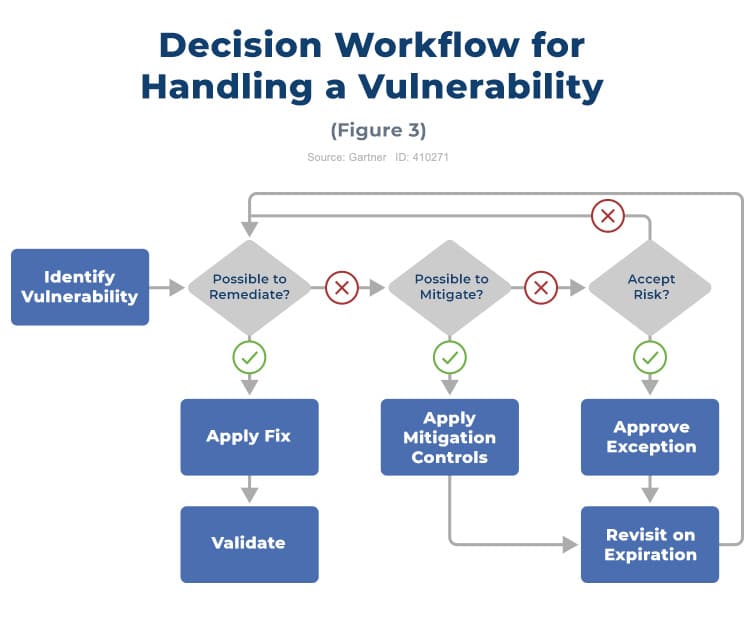

Implementation Best Practices

For organizations looking to defend against AI-generated threats, several best practices are crucial:

- Adopt AI-Powered Security Tools: Use AI-driven security solutions that can identify and respond to threats in real time.

- Regular Security Audits: Conduct regular security audits and vulnerability assessments to identify potential weaknesses.

- Proactive Patch Management: Implement a robust patch management strategy to quickly address vulnerabilities as they are discovered.
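As one small illustration of the patch management practice listed above, the following Python sketch compares installed package versions against the minimum version known to contain a fix. The package names, version numbers, and the `find_outdated` helper are made up for the example and do not refer to real advisories. A production setup would pull this data from an inventory system and a vulnerability feed.

```python
def parse_version(text: str) -> tuple[int, ...]:
    """Turn a dotted version such as '2.10.3' into (2, 10, 3) for comparison."""
    return tuple(int(part) for part in text.split("."))


# Hypothetical inventory and advisory data, for illustration only.
INSTALLED = {"tls-lib": "3.0.7", "webserver": "1.24.0", "xml-parser": "2.9.14"}
MINIMUM_PATCHED = {"tls-lib": "3.0.8", "webserver": "1.22.1", "xml-parser": "2.10.3"}


def find_outdated(installed: dict, minimum_patched: dict) -> list:
    """Return (name, installed_version, required_version) for unpatched packages."""
    outdated = []
    for name, version in sorted(installed.items()):
        required = minimum_patched.get(name)
        # Packages with no advisory entry are skipped rather than guessed at.
        if required is not None and parse_version(version) < parse_version(required):
            outdated.append((name, version, required))
    return outdated


if __name__ == "__main__":
    for name, version, required in find_outdated(INSTALLED, MINIMUM_PATCHED):
        print(f"{name}: installed {version}, needs at least {required}")
```

Versions are compared as integer tuples rather than strings because a plain string comparison would rank "2.9.14" above "2.10.3". This sketch only handles purely numeric dotted versions; suffixes such as "-rc1" would need a proper version parser.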

Real-World Use Cases and Examples

Case Study: AI in Cyber Defense

A leading financial institution implemented AI-driven security measures after facing frequent cyber threats. By using AI to analyze network traffic and user behaviors, they reduced false positives and detected anomalies faster. This proactive approach allowed them to mitigate threats before they could cause significant damage.
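The case study does not say which techniques the institution used. As a rough sketch of what "analyzing network traffic to detect anomalies" can mean at its simplest, the Python example below flags values that deviate sharply from a trailing window using a z-score. The function name `find_anomalies`, the window size, the threshold, and the traffic numbers are all assumptions made for illustration.

```python
from statistics import mean, stdev


def find_anomalies(counts, window=5, threshold=3.0):
    """Return indices whose value is more than `threshold` standard deviations
    away from the mean of the preceding `window` values."""
    anomalies = []
    for i in range(window, len(counts)):
        history = counts[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # A perfectly flat window gives no scale to measure against.
            continue
        z_score = (counts[i] - mu) / sigma
        if abs(z_score) > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical requests-per-minute series with one obvious spike.
REQUESTS_PER_MINUTE = [100, 104, 98, 101, 97, 103, 99, 480, 102, 100]

if __name__ == "__main__":
    print(find_anomalies(REQUESTS_PER_MINUTE))
```

With these numbers, only index 7 (the value 480) is flagged. Once the spike enters the trailing window it inflates the standard deviation, so a second spike shortly afterward could be missed. Real systems typically use more robust statistics or learned baselines for that reason.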

Potential for AI in Offensive Cyber Operations

While AI offers numerous defensive applications, its potential in offensive operations cannot be overlooked. Cybercriminals can leverage AI to automate phishing attacks, craft more convincing social engineering schemes, and even generate malicious code more efficiently.

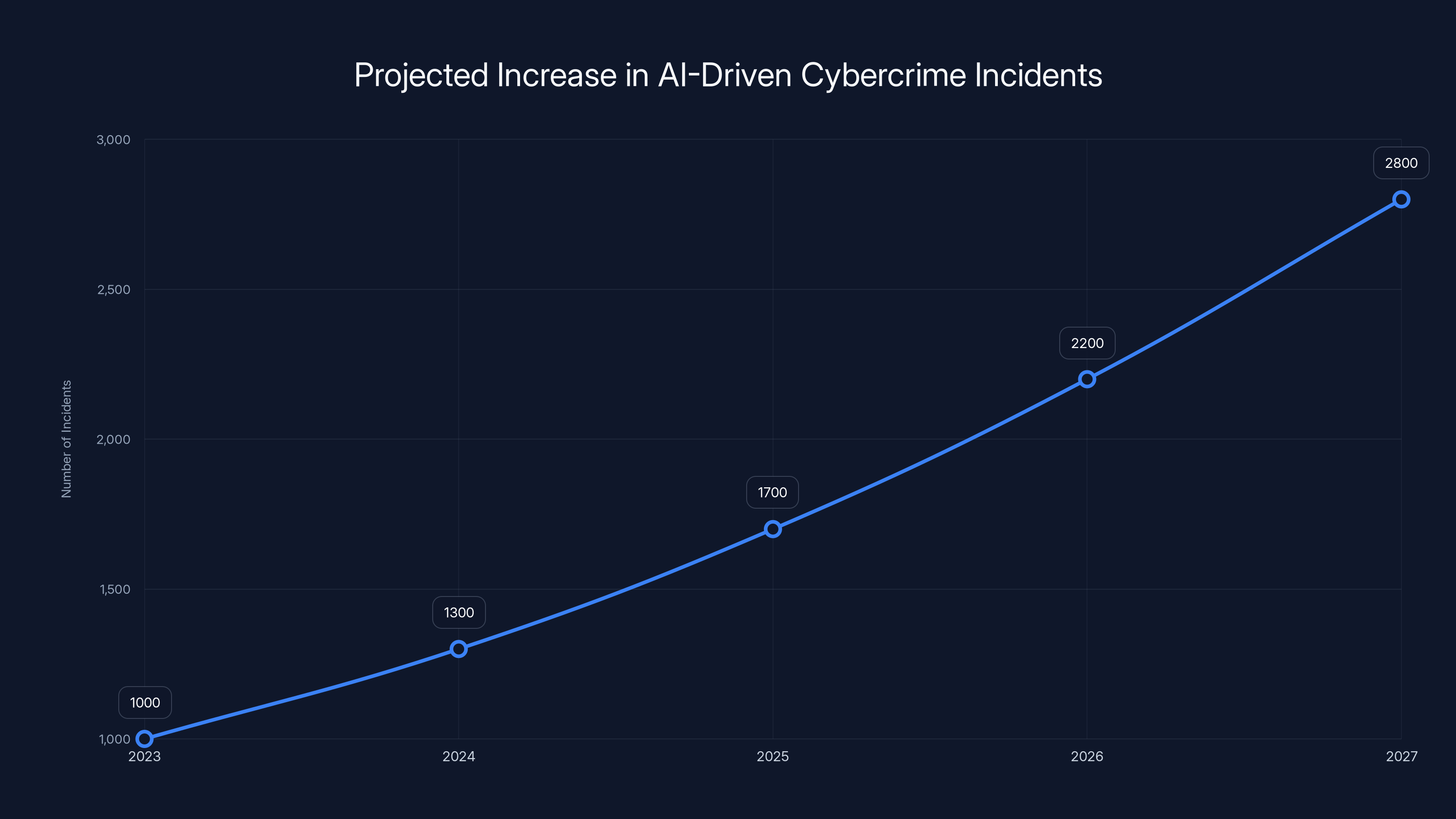

The number of AI-driven cybercrime incidents is projected to nearly triple from 2023 to 2027, highlighting the urgent need for enhanced cybersecurity measures. Estimated data.

Common Pitfalls and Solutions

Over-Reliance on AI

One common pitfall is over-relying on AI for security. While AI can enhance threat detection, it should not replace human oversight. Combining AI with expert analysis ensures more comprehensive security coverage.
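One way to keep a human in the loop is to let the model act on its own only when it is highly confident, and to send uncertain cases to an analyst. The Python sketch below shows that routing idea under stated assumptions: the `Alert` type, the score range, and both thresholds are hypothetical and would need tuning against real data.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    alert_id: str
    score: float  # assumed model confidence that the event is malicious, 0.0 to 1.0


def route(alert: Alert, auto_block_at: float = 0.95, review_at: float = 0.50) -> str:
    """Decide who handles an alert based on model confidence."""
    if alert.score >= auto_block_at:
        return "auto_block"    # high confidence: automated response
    if alert.score >= review_at:
        return "human_review"  # uncertain: an analyst makes the call
    return "log_only"          # low confidence: keep for later correlation


if __name__ == "__main__":
    for alert in (Alert("a-1", 0.97), Alert("a-2", 0.70), Alert("a-3", 0.10)):
        print(alert.alert_id, route(alert))
```

Lowering `auto_block_at` reduces analyst workload but raises the risk of blocking legitimate activity, so the thresholds are a policy decision as much as a technical one.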

Data Privacy Concerns

AI-driven security systems often require access to vast amounts of data, raising privacy concerns. Organizations must ensure they comply with data protection regulations and implement robust privacy measures.
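One common privacy measure is to pseudonymize identifying fields before logs reach an analysis pipeline. The Python sketch below does this with a keyed hash (HMAC-SHA256) from the standard library. The field names in `SENSITIVE_FIELDS`, the record layout, and the 16-character token length are assumptions for illustration, and pseudonymization on its own does not guarantee compliance with any particular regulation.

```python
import hashlib
import hmac

# Hypothetical field names; adjust to the actual log schema.
SENSITIVE_FIELDS = ("user", "source_ip")


def pseudonymize(record: dict, key: bytes) -> dict:
    """Return a copy of `record` with sensitive fields replaced by keyed hashes.

    The same input and key always produce the same token, so events from one
    user can still be correlated without exposing the raw identifier.
    """
    cleaned = dict(record)
    for field in SENSITIVE_FIELDS:
        if field in cleaned:
            digest = hmac.new(key, str(cleaned[field]).encode("utf-8"), hashlib.sha256)
            # Truncated for readability; shorter tokens raise the collision risk.
            cleaned[field] = digest.hexdigest()[:16]
    return cleaned


if __name__ == "__main__":
    demo_key = b"replace-with-a-secret-from-a-key-store"  # placeholder only
    event = {"user": "alice", "source_ip": "10.0.0.5", "action": "login"}
    print(pseudonymize(event, demo_key))
```

A keyed hash is used instead of a plain hash so that someone without the key cannot rebuild the mapping by hashing a list of known usernames or IP addresses.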

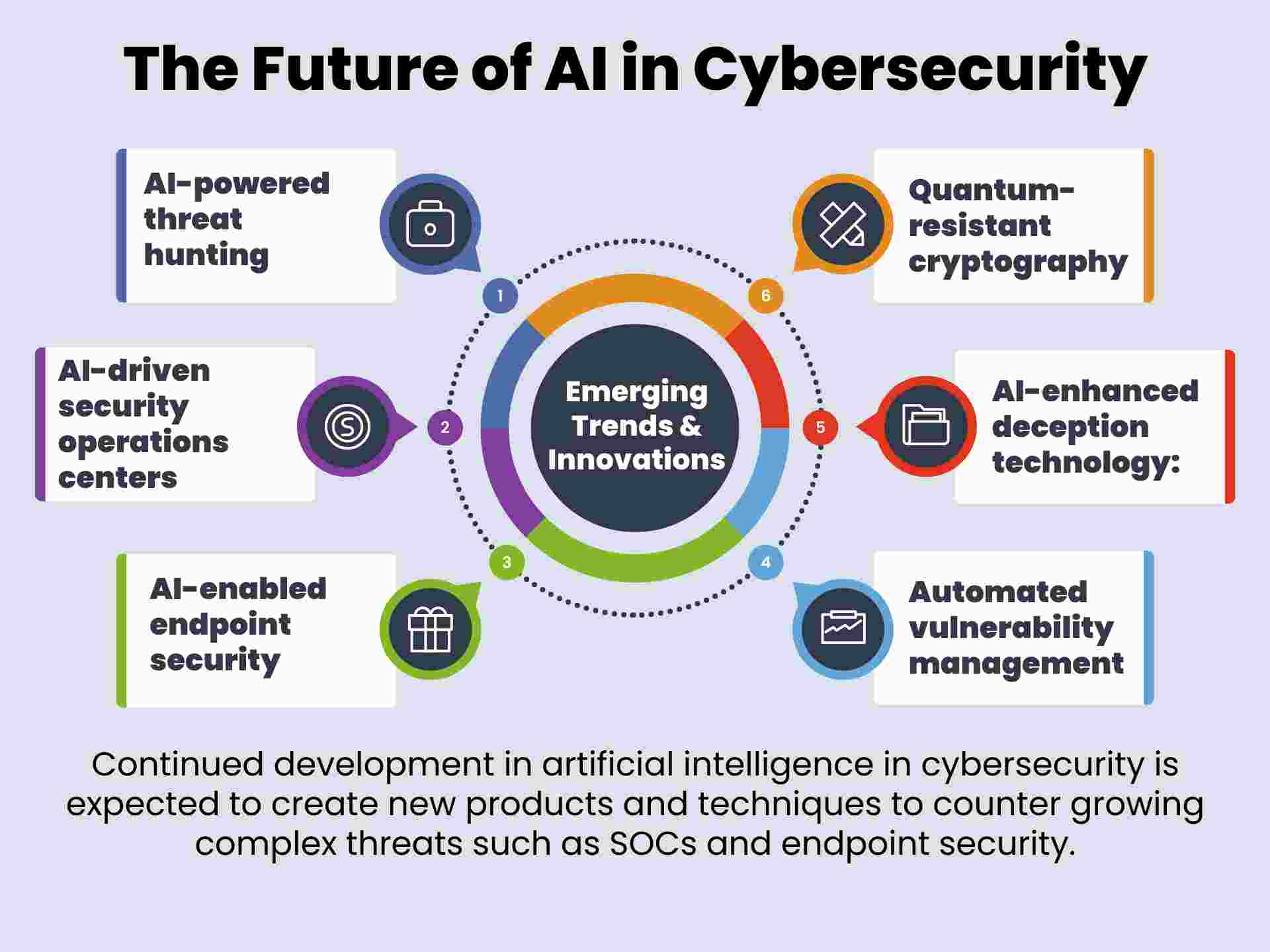

Future Trends and Recommendations

The Rise of AI in Cybercrime

As AI technology advances, its use in cybercrime will likely increase. We can expect more sophisticated attacks that are harder to detect and mitigate. This trend necessitates a shift in how we approach cybersecurity.

Recommendations for Organizations

- Invest in AI Research: Stay ahead of cybercriminals by investing in AI research and development.

- Collaborate on Threat Intelligence: Share threat intelligence across industries to enhance collective defense capabilities.

- Enhance Employee Training: Educate employees on recognizing and responding to AI-driven threats.

The Role of Governments and Regulatory Bodies

Governments and regulatory bodies must play a role in overseeing the ethical use of AI in cybersecurity. This includes setting standards and guidelines for AI deployment in both defensive and potentially offensive scenarios.

Conclusion

Google's discovery of an AI-generated zero-day exploit is a wake-up call for the cybersecurity industry. As AI continues to evolve, so too will the tactics of cybercriminals. By using AI for defense, preparing for its use in attacks, and fostering collaboration across industries, we can build a more secure digital future.

FAQ

What is a zero-day exploit?

A zero-day exploit is code or a technique that takes advantage of a software vulnerability unknown to the vendor and the public, leaving systems open to attack with no immediate fix.

How does AI contribute to cybersecurity?

AI contributes by automating threat detection, analyzing vast amounts of data, identifying vulnerabilities, and responding to threats in real time.

What are the risks of AI in cybercrime?

AI can be used to craft more sophisticated cyberattacks, automate phishing, and generate malicious code, posing significant security challenges.

How can organizations defend against AI-generated threats?

Organizations can defend by adopting AI-driven security tools, conducting regular audits, and implementing proactive patch management strategies.

What role should governments play in AI and cybersecurity?

Governments should set standards and guidelines for the ethical use of AI in cybersecurity and encourage collaboration across industries to enhance defense capabilities.

What future trends can we expect in AI and cybersecurity?

Expect more sophisticated AI-driven attacks, increased use of AI in defense, and greater collaboration on threat intelligence across industries.

Key Takeaways

- AI can be used offensively in cyberattacks, not just defensively.

- Google's proactive measures averted a mass exploitation event.

- AI-driven security tools are crucial for modern cybersecurity.

- Organizations must balance AI use with human oversight.

- Future threats will likely involve more sophisticated AI techniques.

- Governments should regulate the ethical use of AI in cybersecurity.
