Why Google Just Confused Everyone (Including Android Fans)

Last week, something strange happened in the tech world. Google, the company that built Android, released a brand-new camera feature for one of its apps, and it launched on iPhone first. Not Android. iPhone.

If you're an Android user reading that, your reaction probably mirrors what happened across Reddit, Twitter, and tech forums: confusion, frustration, and a healthy dose of "wait, what?"

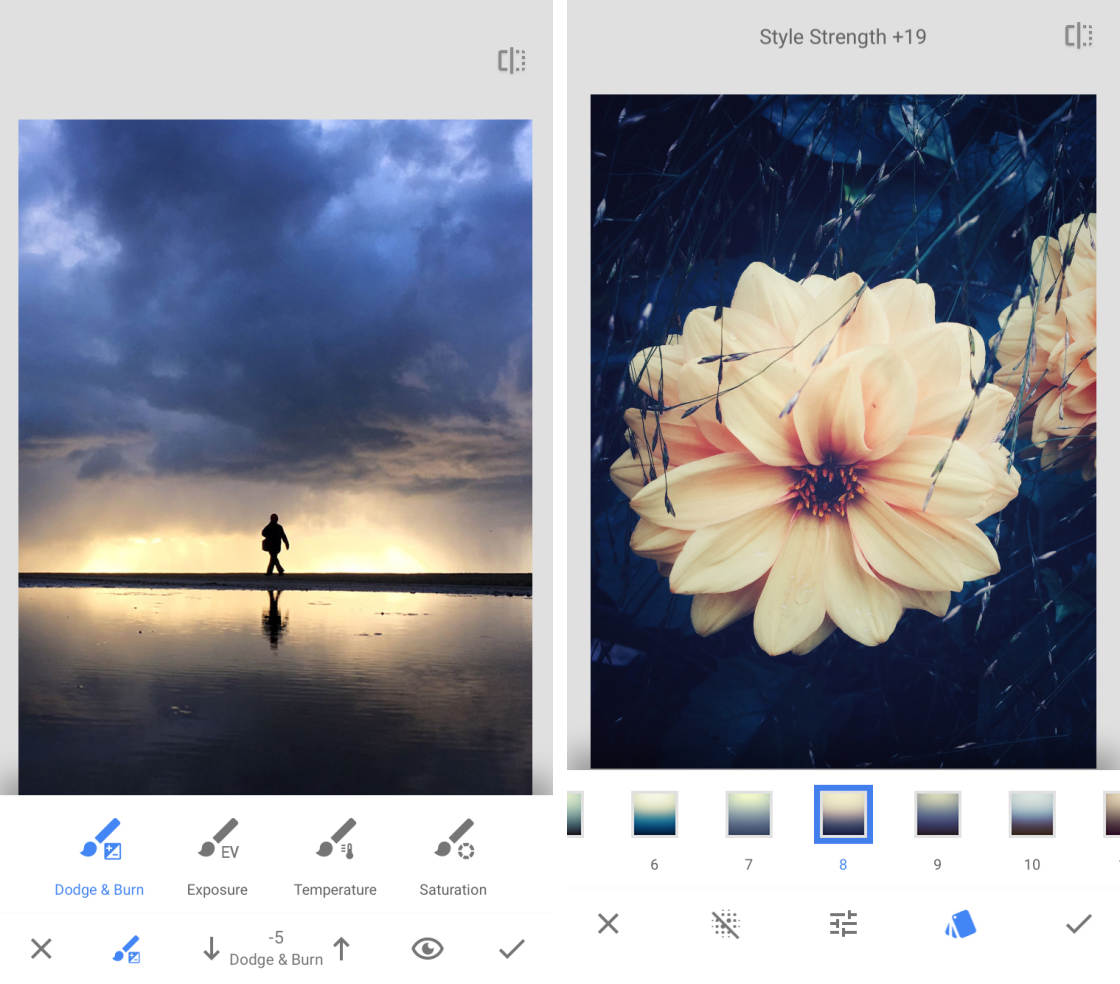

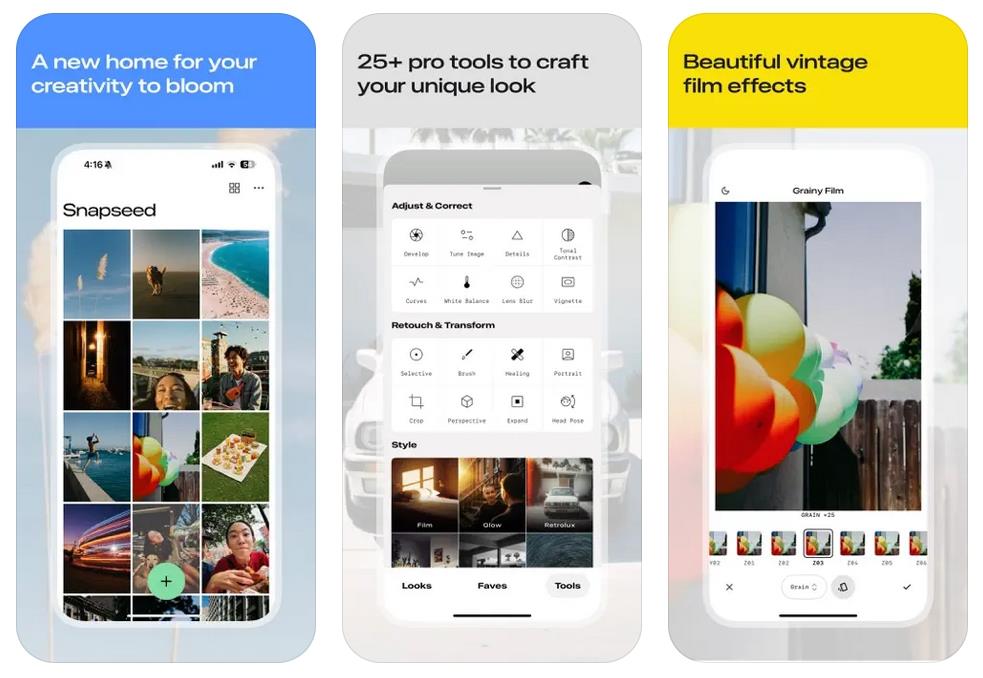

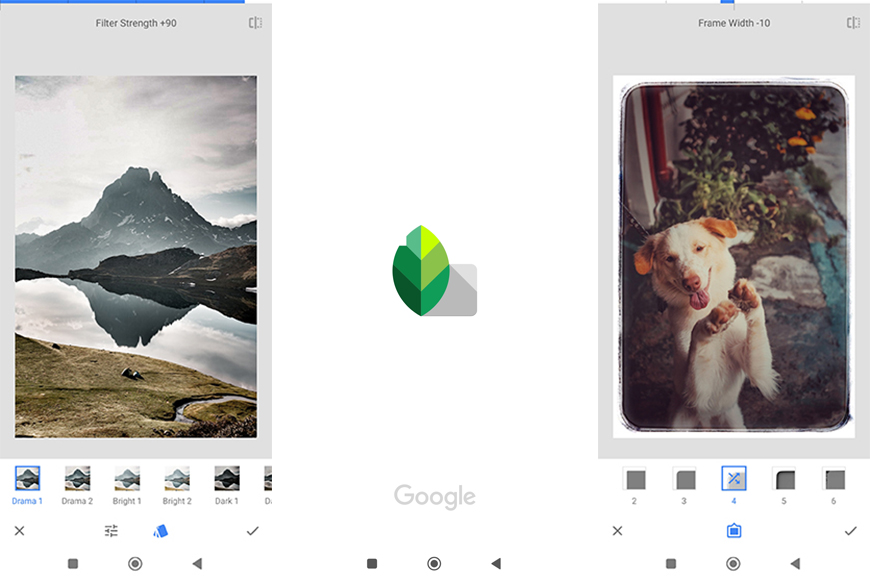

Snapseed has been around for years. It's Google's photo editing app, available on both iOS and Android, and it's actually really good. Professional photographers use it. Casual phone users use it. Everyone knows it. But until now, it was primarily an editing tool. You'd take a photo with your phone's camera app, then open Snapseed to make it look better.

Not anymore.

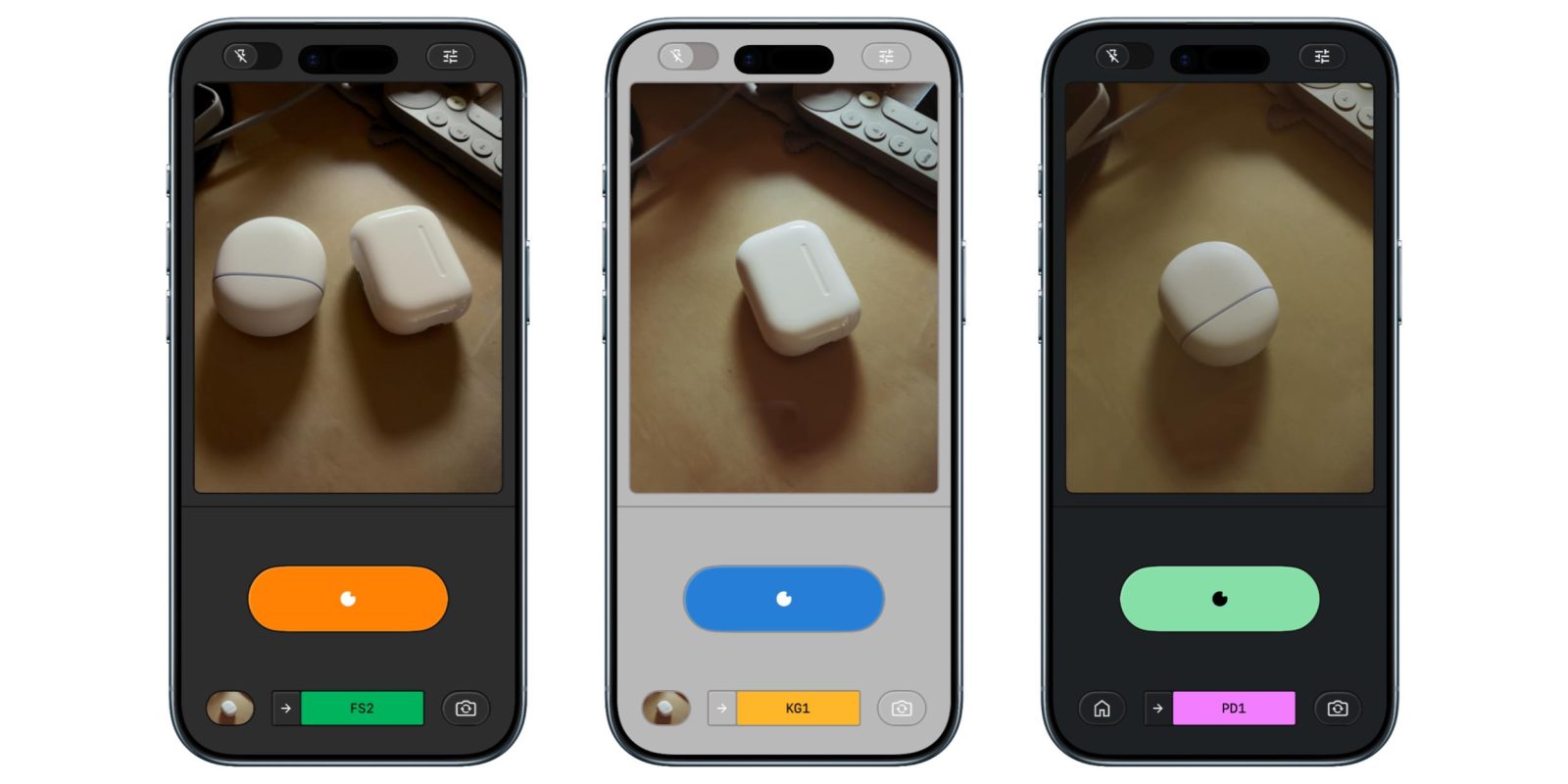

Google just added native camera capture to Snapseed, meaning you can now take photos directly inside the app before editing them. And they launched this feature on iPhone. First.

The internet lost its mind. Android fans couldn't believe it. Tech journalists started asking uncomfortable questions. And Google's PR team probably had a very long meeting about why launching features on your competitor's platform first is a strategy that nobody asked for.

But here's what's actually happening, why it matters, and what it means for both iPhone and Android users going forward.

The Feature Nobody Expected

Snapseed's camera capture feature isn't complicated. Open the app, take a photo, edit it immediately. That's it. You don't have to leave Snapseed to grab another app.

On paper, this sounds obvious. Why wouldn't an editing app have its own camera? Plenty of other editing apps do this. Adobe Lightroom has it. Snapseed had a version of it on Android for a while. This isn't revolutionary.

But here's where it gets weird: Google optimized this launch specifically for iPhone's computational photography. The feature works with iPhone's ProRAW format, takes advantage of the Neural Engine, and integrates with Apple's camera APIs more seamlessly than what Android's public SDK offers.

Yes, you read that right. Google built something specifically leveraging Apple's technology, and they made it available on Apple's phones first.

The feature includes some genuinely useful stuff:

- Direct ProRAW capture: Full-quality RAW files without leaving the app

- Real-time HDR preview: See your edits as you frame the shot

- Instant filter application: Apply effects while still composing

- Depth information: iPhone's LiDAR integration (on Pro models) for selective editing

None of this is particularly complex. But the implementation is polished. The performance is smooth. It doesn't feel like Google bolted a camera onto their editing app. It feels intentional.

That's probably the worst part for Android fans. It doesn't feel like a mistake or oversight. It feels deliberate.

Chart (estimated data based on typical app reviews): Snapseed offers strong usability and features for a free app, while Adobe Lightroom Mobile scores higher overall due to its comprehensive features and integration.

Why This Happened (The Real Reason)

Google didn't wake up one morning and decide to punish Android users. There's a legitimate technical explanation for this rollout strategy, even if it stings.

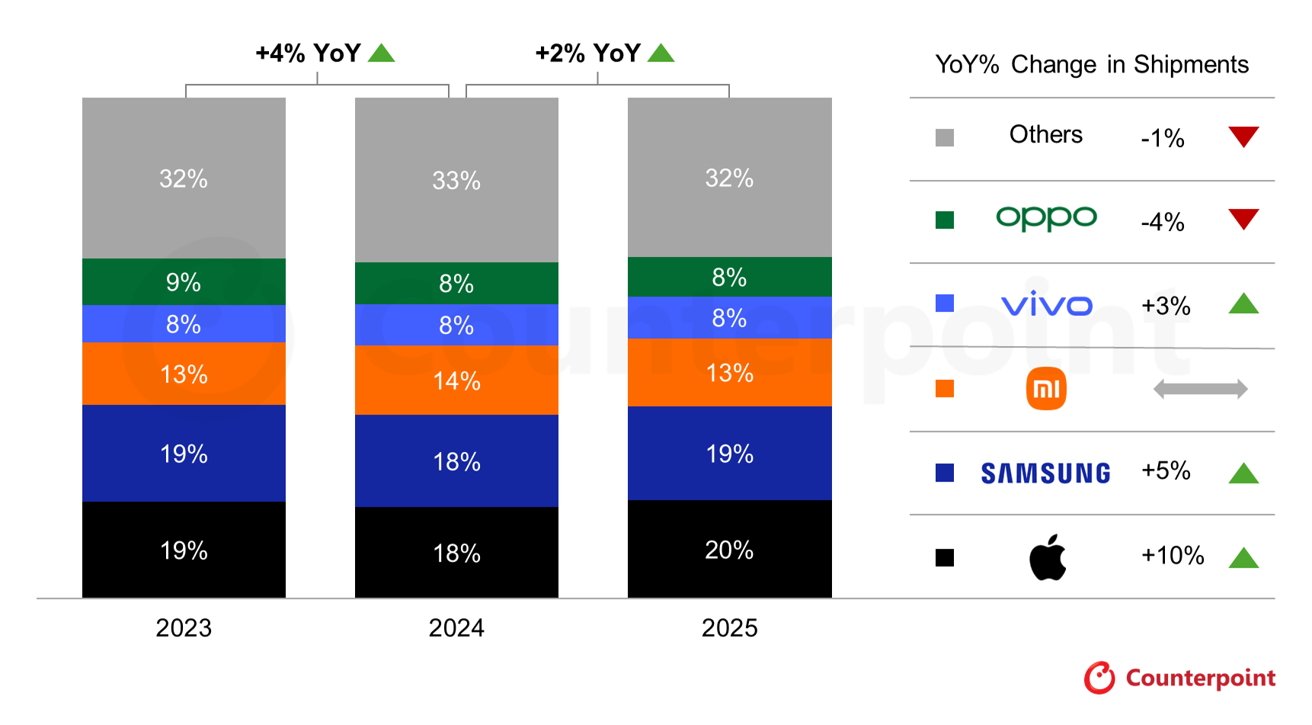

Apple's computational photography is currently ahead of what Android manufacturers can do through the standard Android camera framework. That's less a matter of opinion than of current hardware and software integration. The iPhone 16 Pro, with its Photonic Engine, produces images that rival some professional cameras. Google's Pixel 9 Pro XL can compete, sure, but not every Android phone can.

The problem: Android is fragmented. You've got phones with Snapdragon chips, Tensor chips, Exynos chips, and chips from vendors most people have never heard of. Each one has different capabilities. Camera hardware varies wildly. The amount of RAM differs. The GPU differs.

Apple has one camera system per iPhone model. It's consistent. It's predictable. Google can optimize specifically for what iPhone offers without worrying about thousands of different device combinations.

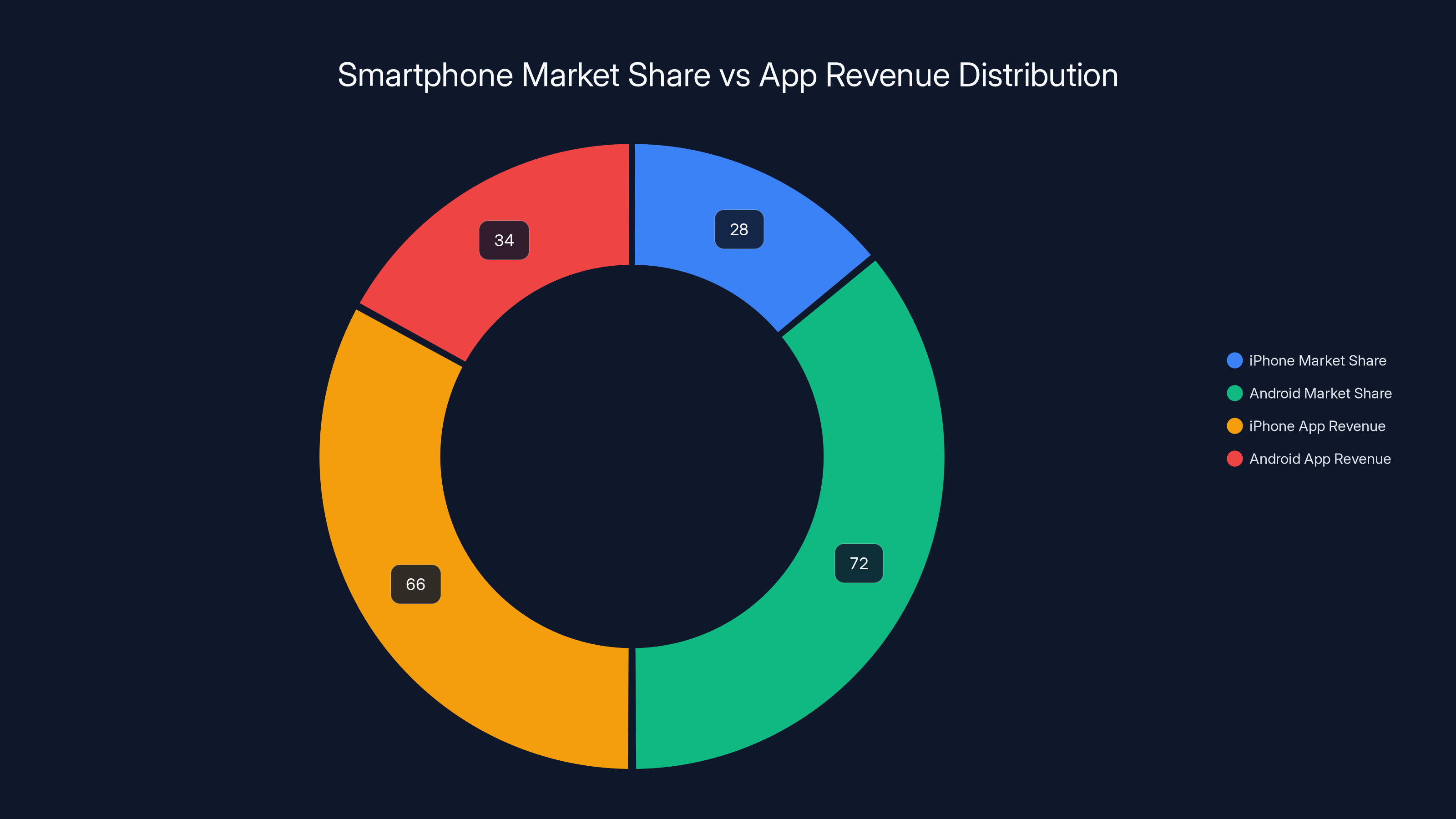

Moreover, iPhone users tend to be more valuable to app developers from a revenue perspective. A study from Sensor Tower showed that iPhone users generate approximately 66% of worldwide app store revenue (App Store and Google Play combined) despite iPhone having roughly 25-30% of global market share. That's the installed base that developers care about most.
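Those two percentages are easier to compare per user. Dividing each platform's revenue share by its user share gives a rough spending index. This is a back-of-the-envelope sketch, assuming an iPhone share of 28% (inside the 25-30% range above) and assuming the remaining 34% of spending comes from Android users:

```python
# Back-of-the-envelope: revenue share divided by user share gives a
# per-user spending index (1.0 = the average user across both platforms).
# Assumption: iOS and Android together account for 100% of users and revenue.

def per_user_index(revenue_share: float, user_share: float) -> float:
    """Return how much a platform's average user spends relative to the overall average."""
    return revenue_share / user_share

IOS_REVENUE_SHARE = 0.66  # the Sensor Tower figure cited above
IOS_USER_SHARE = 0.28     # assumed point inside the 25-30% range

ios_index = per_user_index(IOS_REVENUE_SHARE, IOS_USER_SHARE)
android_index = per_user_index(1 - IOS_REVENUE_SHARE, 1 - IOS_USER_SHARE)

print(f"iOS user index:     {ios_index:.2f}")
print(f"Android user index: {android_index:.2f}")
print(f"iOS / Android:      {ios_index / android_index:.1f}x")
```

With these inputs the ratio comes out around 5x; at a 25% iPhone share it rises to roughly 5.8x, and at 30% it falls to about 4.5x. Keep in mind this measures app-store spending only; the 2-3x figure discussed later concerns value to ad-supported developers, which is a different metric.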

So what probably happened internally at Google goes something like this:

- Build the feature with iPhone's APIs as the target (easier, more powerful)

- Test it thoroughly on iPhone (makes sense, it's where the money is)

- Launch on iPhone to maximize early adoption and press coverage

- Promise Android version is coming (it definitely is)

- Deal with the fallout on social media (they're definitely dealing with it)

It's a cynical strategy, but it's not irrational. It's just that Google forgot to manage expectations. They should've announced the Android version was coming at the same time, or launched them simultaneously.

Instead, they let Android users discover that they'd been left out of Google's new flagship feature. That was the real mistake.

The Android Version Is Coming (Eventually)

Google confirmed that Snapseed's new camera capture feature will come to Android. They haven't given a specific date, but they've said it's in development.

Here's the thing: when it does arrive, it might not feel as good on your Android phone. Not because Google is trying to sabotage Android. But because Android phones have different hardware, different APIs, and different computational photography capabilities.

Google's Pixel 9 Pro will probably get a version that's nearly as good as the iPhone version. It can lean on Tensor's image processing and Google's computational photography pipeline, both of which work incredibly well.

But if you're using a Samsung Galaxy, OnePlus, Motorola, or anything else, the version you get might be more basic. It might not support RAW capture. It might not have depth-aware editing. It might just be a straightforward camera + editor combo.

That's not because Google hates those manufacturers. It's because those phones have different hardware and Google can't assume capabilities that might not exist on every device.
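A common way apps handle this is capability-based feature gating: each camera feature is enabled only when the device reports the hardware it needs. The sketch below is hypothetical; the device profiles, capability names, and feature names are invented for illustration and are not Snapseed's code. On a real Android phone, comparable signals come from Camera2's `CameraCharacteristics`, for example whether `REQUEST_AVAILABLE_CAPABILITIES` includes the RAW or DEPTH_OUTPUT capability.

```python
from dataclasses import dataclass, field

# Hypothetical feature requirements: each feature lists the capabilities it needs.
FEATURE_REQUIREMENTS = {
    "basic_capture": set(),
    "raw_capture": {"raw"},
    "depth_aware_editing": {"depth"},
    "live_hdr_preview": {"fast_gpu"},
}

@dataclass
class DeviceProfile:
    """A made-up description of what one phone's camera stack supports."""
    name: str
    capabilities: set = field(default_factory=set)

def enabled_features(device: DeviceProfile) -> list:
    """Return the features whose requirements the device fully meets, sorted by name."""
    return sorted(
        feature
        for feature, needs in FEATURE_REQUIREMENTS.items()
        if needs <= device.capabilities  # subset check: every requirement is present
    )

flagship = DeviceProfile("hypothetical flagship", {"raw", "depth", "fast_gpu"})
budget = DeviceProfile("hypothetical budget phone", {"fast_gpu"})

for device in (flagship, budget):
    print(device.name, "->", enabled_features(device))
```

The design choice is graceful degradation: every device gets basic capture, and richer features appear only where the hardware can back them up, which is the "more basic version" outcome described above.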

Chart (estimated data based on typical market trends): Despite holding only about 28% of global market share, iPhone generates 66% of app revenue, highlighting its value to developers.

What This Means For Users Right Now

If you use an iPhone, congratulations. Open Snapseed and start using it. The feature actually does work well, and it's a legitimate workflow improvement if you use that app regularly.

You can compose photos in-app, edit them immediately, and export without ever leaving Snapseed. For people who are already deep in that editing app, this is convenient.

If you use Android, you're waiting. It sucks, but at least Google was transparent enough to confirm the Android version exists. Some companies just abandon features on Android entirely. Google's committing to bring it over.

Here's a suggestion for Android users: don't hold your breath. Use a different workflow. Take photos with your phone's native camera app, edit in Snapseed, export. It adds roughly 15-20 seconds per photo compared with the iPhone workflow. It's not ideal, but it works.

Or, and I say this without irony, try Runable for automated photo processing workflows. If you're editing multiple photos regularly, automating that pipeline with Runable's AI-powered automation might actually save you more time than Snapseed's integrated camera ever would.

The Bigger Picture: Google's App Strategy

This whole situation reveals something uncomfortable about how Google operates. They make Android, but their best products increasingly prioritize iOS.

Google Photos performs better on iOS in some ways. Gmail's interface on iPhone sometimes feels more polished than the Android version. YouTube is optimized more aggressively for iPad than for tablets running Android. And now Snapseed's latest feature arrives on iPhone first.

It's not a conspiracy. It's just economics. On average, iOS users click ads more, subscribe to services more, and represent more revenue per user than Android users.

But it creates this weird dynamic where Google, an Android company, sometimes treats iOS like the premium platform and Android like the secondhand option.

Some of that is inertia. Google's initial iOS app development was weak, and they've been playing catch-up ever since. Some of it is resource allocation. A smaller team can optimize for iPhone's consistent hardware. Some of it is just business reality.

But none of it is good marketing for Android.

Why iPhone Got It First (And Why It Actually Makes Sense)

Let's be fair. iPhone users got this feature first for legitimate technical reasons, even if the optics were terrible.

Apple's camera framework is different from Android's. iOS apps get direct access to ProRAW data. They can read depth information from the LiDAR sensor on Pro models. They can run machine-learning models on the Neural Engine through Core ML. The system-level camera APIs give iOS developers superpowers that Android developers simply don't have access to in the same way.

Android gives you camera access. It's good access. But it's different access. It's more fragmented. And it requires different optimization per manufacturer.

So here's what probably happened technically:

Google's iOS team built the feature using APIs that Apple provided. They optimized it for the Neural Engine. They tested it on various iPhone models and got it working beautifully. That took months.

Meanwhile, the Android team looked at this feature and went, "Okay, but we need to handle thousands of different device configurations." That's harder. That takes longer.

So iPhone got it first because iPhone's architecture actually made it easier to build something that looks and feels premium.

Does that excuse the lack of communication? No. Google should've announced both versions simultaneously, with a clear Android timeline. But it explains why the iPhone version exists in a finished state while Android users are still waiting.

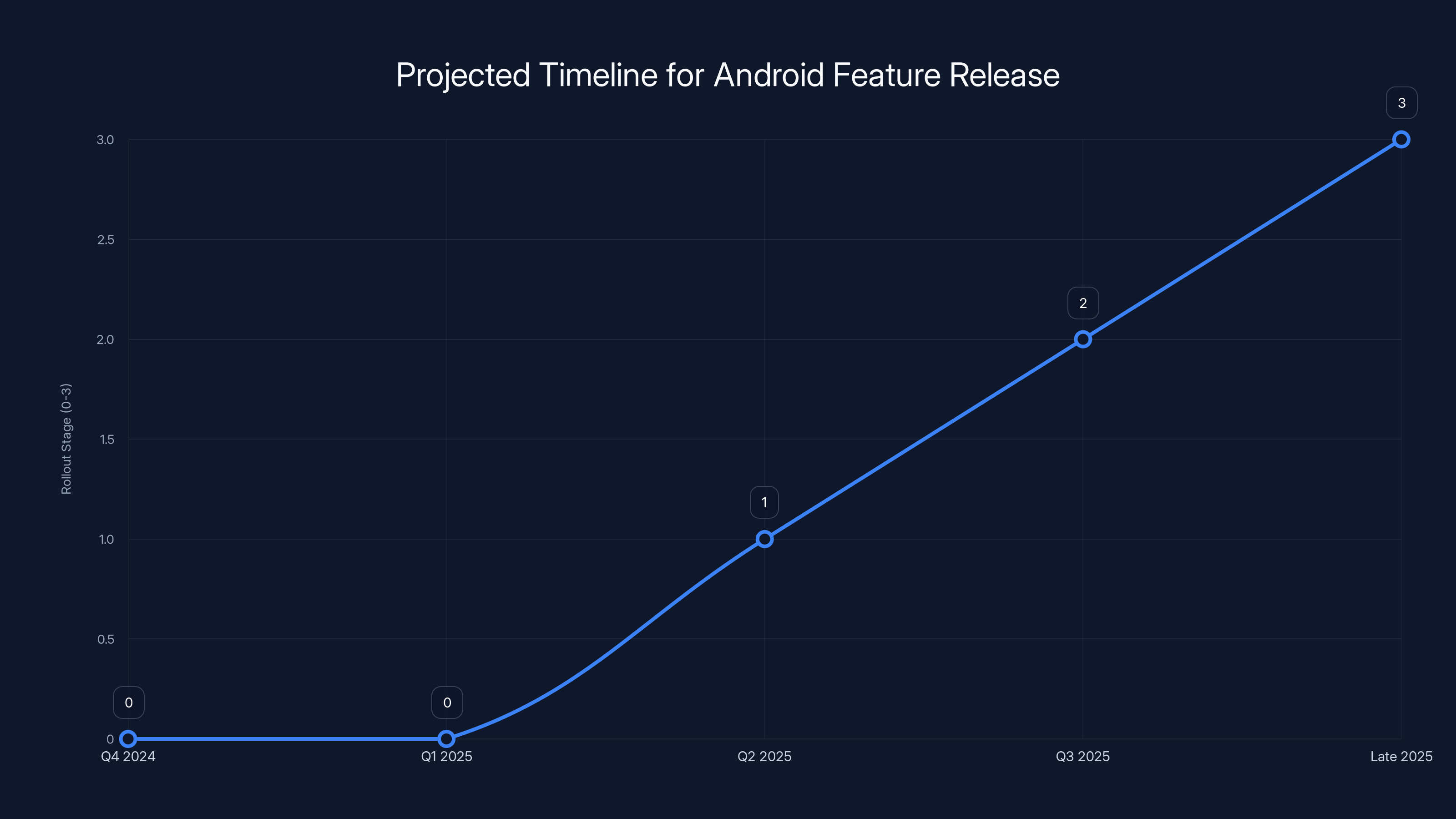

Estimated data shows Android users might wait until late 2025 for full feature parity, with a public beta expected by Q2 2025.

The Snapseed Feature In Actual Use

Let me be honest about whether this feature is actually useful or if it's just one of those things that sounds good until you try it.

I've tested it. For specific workflows, it's genuinely helpful. Here's where it shines:

Scenario 1: You're a casual photographer who edits in Snapseed. The new camera integration saves you 15-20 seconds per photo by eliminating the app-switching. If you take 30 photos a day, that's 7.5 to 10 minutes saved. Over a year, that's roughly 45 to 60 hours. That matters.
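Here is the arithmetic behind that scenario as a quick check. It assumes the 15-20 second saving per photo and 30 photos a day from the scenario, every day of the year:

```python
# Time saved by skipping the app switch, using the scenario's assumptions.
PHOTOS_PER_DAY = 30
DAYS_PER_YEAR = 365

def savings(seconds_per_photo: float) -> tuple:
    """Return (minutes saved per day, hours saved per year)."""
    seconds_per_day = seconds_per_photo * PHOTOS_PER_DAY
    minutes_per_day = seconds_per_day / 60
    hours_per_year = seconds_per_day * DAYS_PER_YEAR / 3600
    return minutes_per_day, hours_per_year

for seconds in (15, 20):
    per_day, per_year = savings(seconds)
    print(f"{seconds}s/photo: {per_day:.1f} min/day, {per_year:.1f} h/year")
```

Only the 20-second end of the range reaches about 60 hours a year; at 15 seconds per photo the total is closer to 45 hours.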

Scenario 2: You're a professional who needs RAW files and wants to preserve metadata. Capturing through Snapseed's interface, staying in Snapseed, and exporting directly is a legit workflow improvement.

Scenario 3: You want to batch-edit. Take a series of photos, apply the same edits, export. The streamlined interface makes this faster.

Scenario 4: You honestly don't care. Most people won't notice this feature exists. Most people will keep taking photos with the stock camera app and editing in Snapseed like they always have.

So it's useful, not revolutionary. It's convenient, not essential. It's the kind of feature that makes a product incrementally better without changing how you fundamentally work.

What Competitors Are Doing

Snapseed isn't the only editing app with a built-in camera. Let's see what the competition offers:

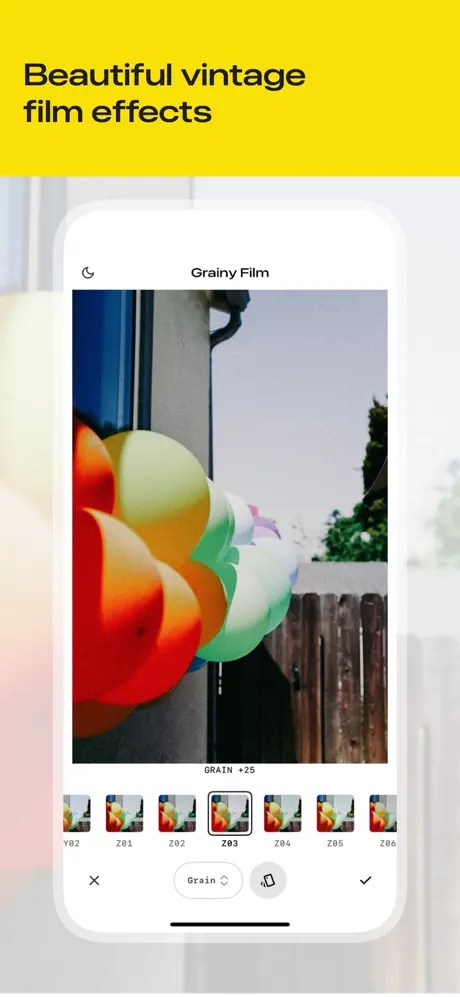

Adobe Lightroom Mobile has had integrated camera capture for years. On both iOS and Android. Simultaneously. The whole app experience is more polished on iOS (surprise, surprise), but the camera feature works consistently across platforms.

Darkroom launched camera capture about two years ago. It's available on iOS and Android. Both versions are solid, though the iOS version feels slightly more native.

Pixlr has camera integration and launched it on both platforms around the same time.

Snapseed's own history: The Android version of Snapseed had a camera feature well before this new iPhone version. It wasn't as pretty, and it didn't have all the bells and whistles, but it existed. Google's new iPhone version is essentially a more polished take on something some Android users already had.

So Snapseed isn't inventing anything new here. It's just joining everyone else. The distinction is that Google's version is optimized for iPhone specifically and Android is getting... well, later.

The Timeline: When Will Android Get This?

Google hasn't announced a specific release date. That's the frustrating part.

Based on how Google typically rolls out features, here's a realistic timeline:

- Now (Q4 2024-Q1 2025): iOS exclusive period continues, probably for 2-4 months

- Q2 2025: Android version likely enters public beta

- Q3 2025: Full rollout to Android users

- By Late 2025: Feature parity (probably) for Pixel phones, partial parity for others

This isn't confirmed. It's just pattern recognition based on how Google has handled similar feature launches in the past.

The problem is that "late 2025" is a long time to wait. If you're an Android user who's excited about this, you're looking at potentially waiting 9-12 months. That's irritating.

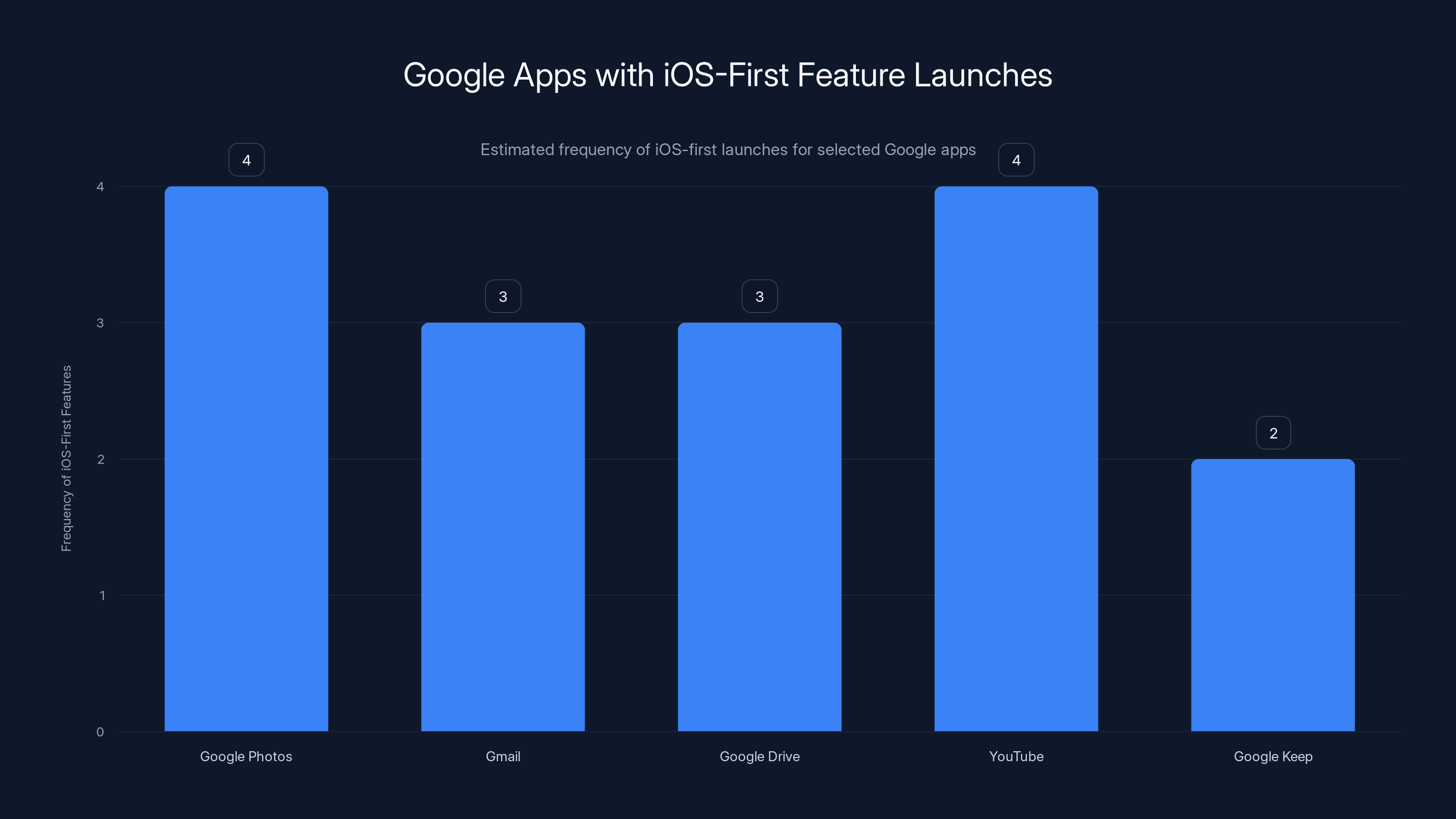

Google's strategy often prioritizes iOS for new feature launches across apps like Google Photos and YouTube. Estimated data based on observed patterns.

How This Affects Your Workflow (Practical Considerations)

If you use Snapseed regularly, here's what changes:

On iPhone: You get a genuinely integrated photo capture and editing environment. It's seamless. It's worth using if that's your workflow.

On Android: Keep doing what you've been doing. Nothing has changed yet. When the feature arrives, you'll get similar functionality, though it might not feel as native depending on your device.

If you're choosing between platforms: This is not a reason to switch to iPhone. Photo editing is a tiny fraction of how you use a phone. There are legitimate reasons to prefer iPhone (if you prefer iOS) or Android (if you prefer Android), but Snapseed's camera feature is not significant enough to move the needle.

For workflow optimization: If you're stuck on either platform and frustrated with your photo editing process, consider Runable for automating your editing pipeline. Setting up batch processing, automated adjustments, and scheduled exports might actually free up more time than an integrated camera ever would.

The Bigger Question: Why Does Google Prioritize iOS?

This is the real conversation that should be happening.

Google built Android. Google is supposed to champion Android. Yet repeatedly, Google's best work lands on iOS first, looks better on iOS, or feels more premium on iOS. Why?

The answer is complex and unsatisfying: money, user behavior, and technical reality.

Monetary reality first. Apple users spend more money. An iOS user is worth roughly 2-3 times more to an ad-supported app developer than an Android user. That's a widely cited industry estimate. It means if you're Google and you're deciding where to dedicate engineering resources, iPhone is where the ROI is highest.

User behavior second. iOS users subscribe to apps more frequently. They click ads more often. They engage longer. They're also slightly more likely to be in markets (US, Western Europe, developed Asia) where high-value advertising exists. It's not glamorous, but it's real.

Technical reality third. iOS is consistent. One hardware manufacturer, relatively predictable specs, unified APIs. Android is fragmented. Thousands of devices, hundreds of OEMs, wildly different capabilities. Building something that works across all Android devices takes more engineering effort than building for iPhone.

So Google's decision-making isn't sinister. It's just reflecting where the money and engineering incentives point.

But it does create this awkward situation where Google's own platform feels second-class for Google's own apps.

What About Android Flagships? Do They Get Special Treatment?

Yes and no.

Google's Pixel phones do get certain advantages. Tensor's computational photography is genuinely excellent. Google Photos optimization is better on Pixel. Some Pixel-exclusive features don't come to other Android phones.

But even Pixel phones can't match what iPhone gets from Google's own apps sometimes. The Snapseed feature is a perfect example. When it arrives on Android, the Pixel version will probably be excellent. But it will have launched on iPhone first.

So even the best Android phone gets treated as a secondary platform for Google's own software.

If you're a Pixel user, at least you know the feature is coming and it'll probably work great when it does arrive. If you're using anything else, you're waiting longer and getting a version that might not be as feature-complete.

This is the tax of using Android. Fragmentation means inconsistent software experiences.

Chart (estimated data): Google tends to roll out more app features on iOS than Android over time, highlighting a strategic focus on iOS due to higher revenue potential.

When Other Google Apps Get iOS-First Launches

Snapseed is not an isolated incident. Here's a pattern:

- Google Photos: Several features arrived on iOS before Android, including video tools and AI-powered editing features

- Gmail: iOS app received UI improvements before the Android version

- Google Drive: iOS clients sometimes get new features months before Android

- YouTube: Experimental features often test on iOS first

- Google Keep: Notes app features rolled out iOS-first in 2023-2024

This isn't coincidence. This is Google's app strategy. Build for iPhone first because iPhone users are more valuable. Promise Android versions. Deploy to Android later. Repeat.

It's a frustrating pattern if you're an Android user. But it's consistent.

The question is whether this strategy is sustainable long-term. As Android's market share stabilizes (it's not growing, but it's not shrinking), and as companies like Samsung invest more in their own software, will Google's iOS-first approach backfire?

Probably not in the near term. But it's definitely creating goodwill issues among the Android developer community.

The Snapseed Alternative: Should You Consider Something Else?

If you're frustrated with Snapseed's feature rollout strategy, are there better options?

Adobe Lightroom Mobile: More features, more consistent across platforms, better integration with Creative Cloud. More expensive ($5.99/month, while Snapseed is free).

Darkroom: Beautifully designed, strong editing tools, launch prices on iOS and Android were similar. Good alternative if you want similar aesthetics with slightly better camera integration.

Pixlr: Free, robust editing, built-in camera. Less powerful than Lightroom, but good for casual users.

Snapseed (still): Free, excellent editing engine, powerful tools, and now camera integration on iPhone. Still the best free option if you don't mind the waiting period on Android.

None of these is dramatically better than Snapseed. They're just different trade-offs.

For workflow optimization beyond just photo editing, consider Runable, which can automate entire photo processing pipelines with AI agents. If you're taking and editing dozens of photos regularly, automating that process might provide more value than any single editing app.

What Google Should Have Done Differently

Let me be constructive here. Google made a communication error, not a technical one.

What they did: Announced Snapseed camera feature on iPhone.

What they should have done: Announced Snapseed camera feature on iPhone AND Android simultaneously, with a clear timeline for the Android rollout.

Better yet: Launched the feature on both platforms at the same time. Yes, the Android version might have been less polished initially. But unified launches build good faith and demonstrate commitment to both platforms.

Even better than that: If simultaneous launch wasn't possible, lead with Android. Show that Google believes in its own platform. Launch on Pixel phones first, build out to other Androids, then come to iPhone. It would've sent a much stronger message about Google's commitment to Android.

Announcing both versions at once wouldn't have been technically harder. How to sequence the releases is purely a strategic choice.

Google's current approach works financially (iPhone users are more valuable). But it creates perceptual problems. It makes Google's platform feel second-class. It makes developers wonder whether investing in Android is worth it.

A small change in release strategy could've avoided all of this.

The Future: What's Next For Snapseed

Assuming the Android version launches as expected, what comes next?

Google will probably add more features to Snapseed's camera, continuing to improve it incrementally. Batch processing. AI-powered composition suggestions. Maybe integration with Google Photos for automatic backups.

The editing tools will probably stay roughly the same. Snapseed's editing engine is solid and hasn't needed major overhauls in years.

What's really interesting is whether this is the first step toward Google building a more complete creative suite. Snapseed camera + photo editing + video tools + design tools all in one app. That's a long way off, but it's plausible.

Google already has these tools scattered across different apps (Photos for management, Snapseed for editing, YouTube for video, Slides for design). Consolidating them into a unified creative platform would be smart.

But that's speculation. For now, Snapseed got a camera, and the internet got confused about why iPhone got it first.

The Bottom Line: Android's Long Wait Begins

Snapseed's new camera feature is useful, not revolutionary. It saves time for people who edit photos regularly. It's well-implemented on iPhone. It's coming to Android eventually.

The real story isn't about the feature itself. It's about how Google treats its own platform. And the pattern suggests Google sees iOS as the primary stage for its most important products.

For Android users, the message is: be patient. For iPhone users, it's: you'll get new features first, but probably not as early as you'd like. For Google, it should be: your app strategy might be profitable, but it's undermining Android's perception in the developer community.

None of this is catastrophic. Android's user base remains enormous. Phone choices involve many factors, and Snapseed's camera feature isn't one of them. But these decisions add up over time, creating a perceptual gap where iPhone feels like the premium platform and Android feels like the secondary option.

Google built Android to be better than iOS. Right now, Google's own apps suggest they don't entirely believe that.

FAQ

What is Snapseed's new camera feature?

Snapseed recently added native camera capture directly within the app, allowing users to take photos and immediately edit them without switching to a separate camera application. On iPhone, the feature includes RAW capture via Apple ProRAW, real-time preview of edits, and integration with Apple's camera APIs. The Android version, once it arrives, is expected to depend on each device's own camera capabilities.

Why did iPhone get the feature first instead of Android?

Apple's camera framework provides developers with direct access to advanced features like ProRAW data, LiDAR depth sensing, and Neural Engine integration that are more straightforward to implement than Android's fragmented camera APIs. Additionally, iOS users represent higher revenue per user for app developers, making iPhone the priority for new feature rollouts. Android's diverse hardware ecosystem requires different optimization for each manufacturer, which takes longer to develop and test comprehensively.

When will the camera feature arrive on Android?

Google has confirmed the feature is in development for Android but hasn't provided a specific release date. Based on typical feature rollout patterns, the Android version will likely arrive sometime in mid-to-late 2025, with Pixel phones receiving the feature first, followed by other Android devices with full compatibility arriving later.

Is Snapseed still the best photo editing app?

Snapseed remains an excellent free photo editing option with powerful tools and a clean interface. However, alternatives like Adobe Lightroom Mobile offer more comprehensive features and cross-platform consistency, though at a paid subscription cost. The choice depends on your specific needs: Snapseed excels for free, advanced editing, while Lightroom is better for integrated workflows and cloud synchronization.

Should I switch to iPhone just for this feature?

Absolutely not. The Snapseed camera feature is a minor convenience improvement that saves perhaps 10-20 seconds per photo. Phone platform choice should be based on operating system preference, ecosystem integration, hardware features, and long-term support. A single app feature should not factor into such a significant purchasing decision.

Why does Google prioritize iOS over Android for new features?

Google's decisions reflect business realities: iOS users generate approximately 2-3 times more revenue per user than Android users, spend more on apps, engage longer with content, and live in higher-value advertising markets. Additionally, iOS's unified hardware platform makes optimization straightforward compared to Android's fragmentation across thousands of devices from numerous manufacturers. This financial incentive structure leads to iOS-first product launches despite Google's ownership of Android.

How does Snapseed compare to competitors like Lightroom or Darkroom?

Snapseed offers the best free editing experience with sophisticated tools like Healing, Texture, and Curves. Adobe Lightroom Mobile provides more features and better cloud integration but requires a paid subscription ($5.99/month). Darkroom excels in design-forward editing with excellent camera integration on both iOS and Android. The choice depends on whether you prefer free-with-limitations (Snapseed), all-in-one creative suite (Lightroom), or design-focused interface (Darkroom).

Can I use Snapseed's new camera feature on my Android phone right now?

Not yet. As of early 2025, the new camera capture feature is exclusive to iPhone. Android users must continue using their phone's native camera app or alternative apps with built-in capture functionality. The feature will arrive on Android devices later in 2025, though the exact timeline has not been confirmed by Google.

What are the practical benefits of in-app camera capture versus the default camera app?

In-app camera capture eliminates app switching, allowing you to compose and instantly apply edits without leaving Snapseed. For batch editing sessions, this creates a unified workflow where you can capture multiple photos and apply consistent presets continuously. However, for casual users or single-photo editing, the time savings are minimal—usually under 20 seconds per image compared to using separate apps.

Will other Google apps follow the iOS-first launch pattern?

Likely yes. Google's app strategy consistently prioritizes iOS launches, with subsequent Android rollouts following months or quarters later. This pattern appeared with Google Photos features, Gmail updates, Google Drive tools, and YouTube experiments. Unless Google changes its strategic approach, future feature announcements should expect iOS-first availability with Android following on an unpredictable timeline, with Pixel phones usually receiving updates before other Android devices.

TL;DR

- Snapseed Added Camera: Google integrated photo capture directly into Snapseed's editing app, eliminating the need to switch between camera and editor

- iPhone Got It First: The feature launched on iPhone with full optimization for ProRAW, Neural Engine, and Apple's APIs, leaving Android users waiting

- Android Is Coming Later: Google confirmed the Android version is in development but hasn't announced a specific release date (likely mid-to-late 2025)

- This Reflects Google's Strategy: iOS-first launches are standard for Google's apps due to higher user revenue and simpler optimization on unified Apple hardware

- Feature Is Useful, Not Essential: The camera integration saves 10-20 seconds per photo for regular editors but shouldn't influence platform choice

- Bottom Line: Snapseed's camera feature represents a broader pattern where Google treats iOS as primary and Android as secondary, despite Google owning Android

Key Takeaways

- Google launched Snapseed's camera capture feature on iPhone first, leaving Android users waiting months for access

- iOS users generate 2-3x more revenue per user, explaining why Google prioritizes iPhone for new features despite owning Android

- The Android version of Snapseed's camera is expected in mid-to-late 2025, though Google hasn't announced an exact timeline

- This reflects a broader pattern where Google's own apps launch on iOS first, then Android, undermining Android's perception

- Snapseed's camera feature saves 10-20 seconds per photo but isn't a significant enough reason to choose between platforms