Introduction: The Massive Scale of AI at AT&T

AT&T, one of the world's largest telecommunications companies, faced a staggering challenge: its AI systems were processing more than 8 billion tokens a day. At that scale, every inefficiency is multiplied, and the workload can become a financial sinkhole if it is not managed carefully. The company concluded that pushing that volume through large language models (LLMs) alone was neither feasible nor economical.

TL;DR

- 8 billion tokens daily: AT&T's AI systems manage a colossal amount of data, pushing their infrastructure to its limits.

- Cost reduction by 90%: By rethinking their orchestration layer, AT&T cut down operational costs significantly.

- Multi-agent system: AT&T adopted a flexible orchestration strategy built on several smaller, purpose-driven agents.

- LangChain implementation: The system is built on LangChain, a framework for building LLM-powered applications.

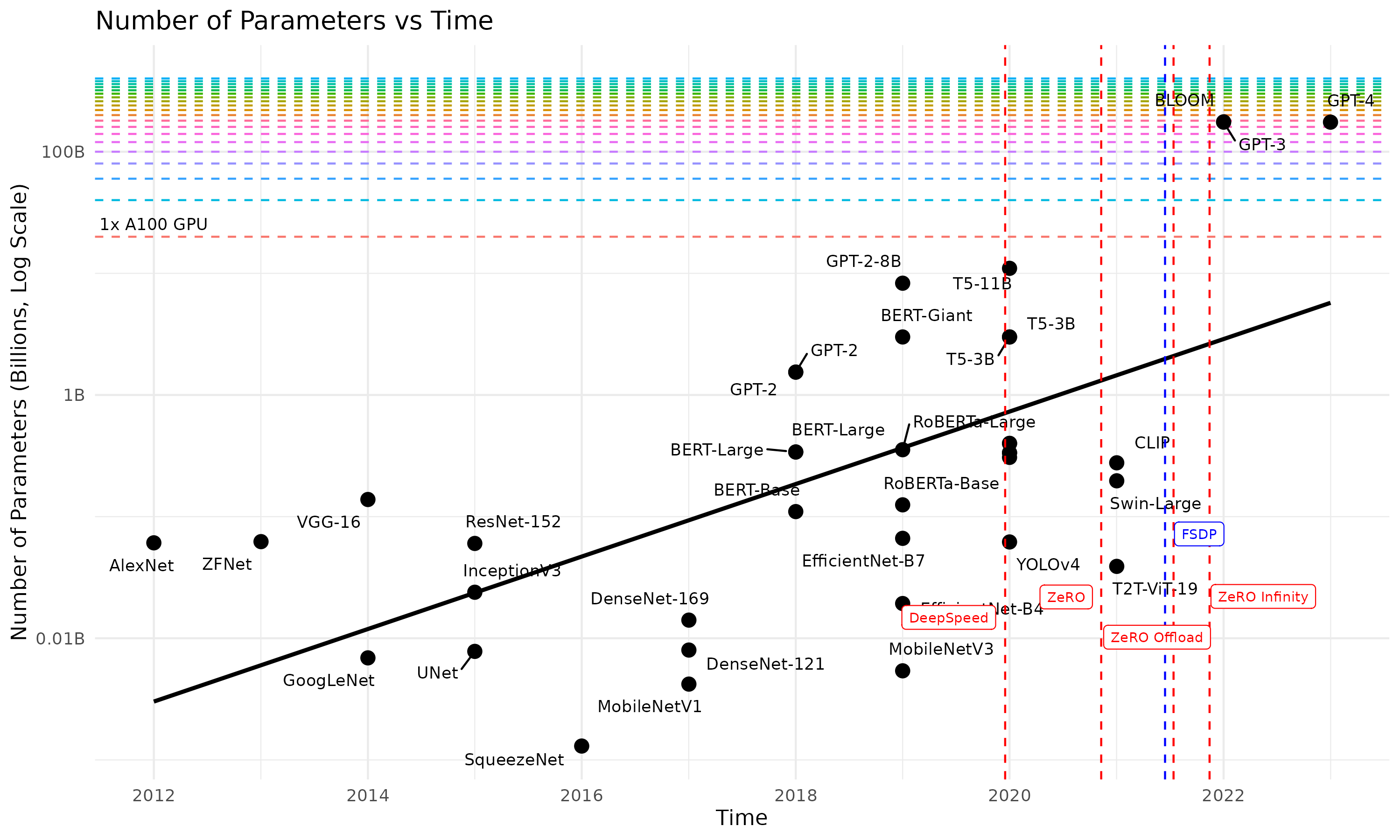

- Future of AI: Focus on small language models (SLMs) for efficiency and accuracy.

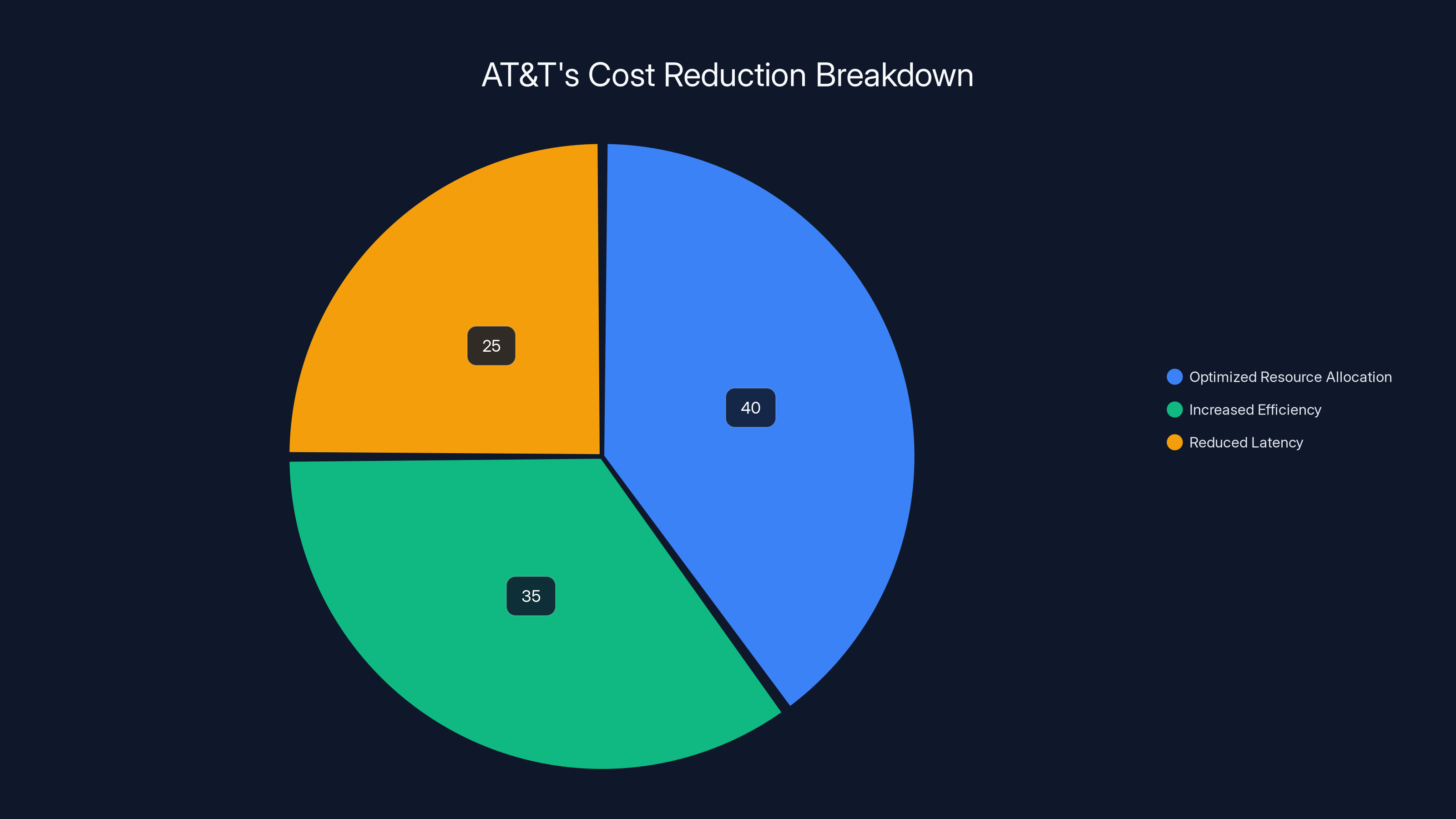

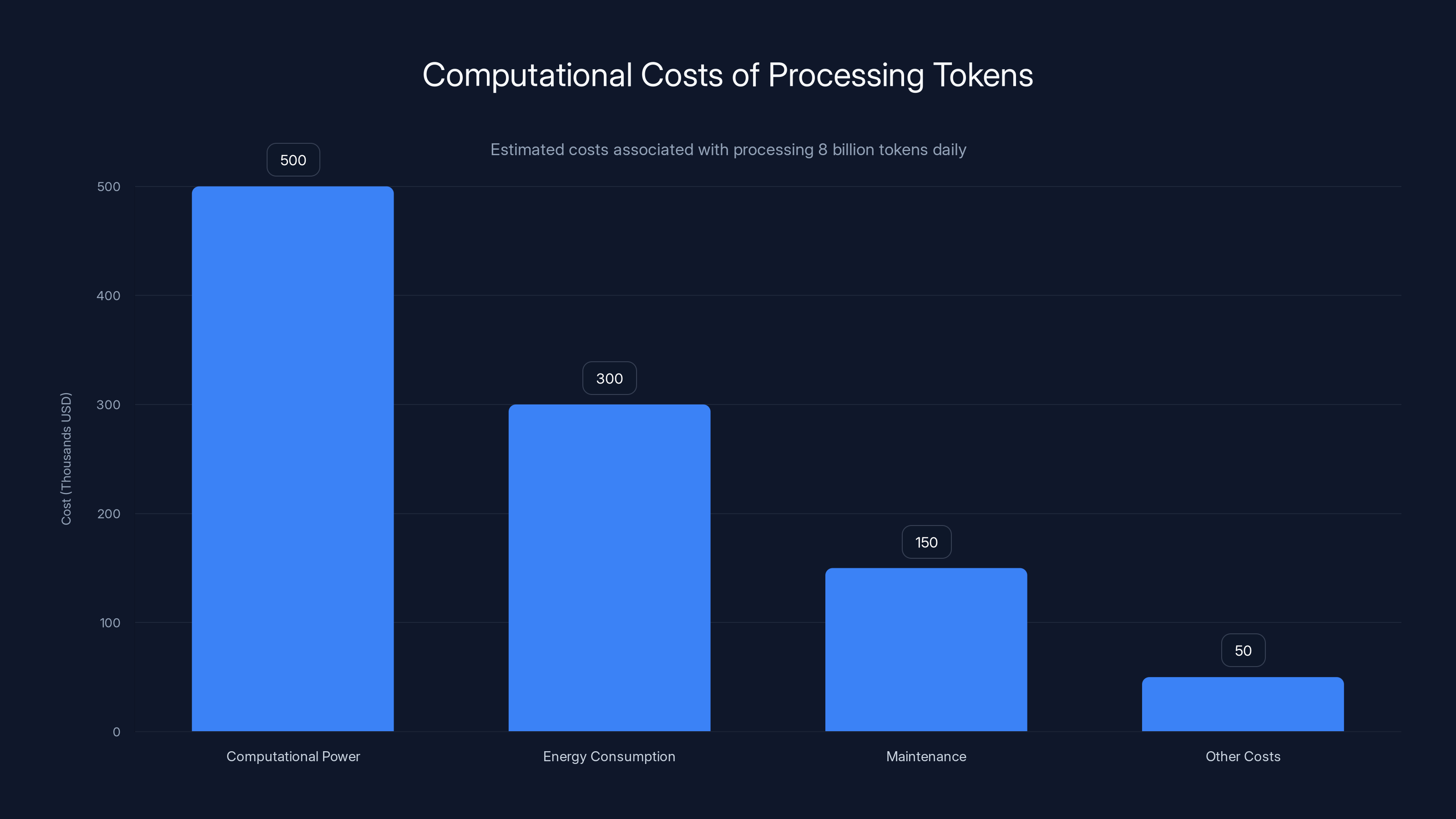

Factors behind AT&T's 90% cost reduction, by estimated share: optimized resource allocation (40%), increased efficiency (35%), and reduced latency (25%). Estimated data.

The Challenge of Scale: Why 8 Billion Tokens Matters

Handling 8 billion tokens daily is no small feat. It demands extensive computational resources, and the cost of those resources can quickly become prohibitive. AT&T's chief data officer, Andy Markus, and his team concluded that relying heavily on large reasoning models was not sustainable.

The Problem with Large Models

Large language models, while powerful, are notorious for their resource demands. They require significant computational power, leading to high energy consumption and increased costs. For a company like AT&T, this meant that every token processed was adding to an already hefty bill.
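To make the per-token bill concrete, the arithmetic can be sketched as follows. Only the 8 billion tokens per day comes from the article; the two prices are hypothetical placeholders chosen for illustration, not AT&T's actual rates or any vendor's published pricing.

```python
TOKENS_PER_DAY = 8_000_000_000  # daily volume cited in the article


def daily_cost(tokens: int, usd_per_million_tokens: float) -> float:
    """Return the daily spend for a token volume at a flat per-million price."""
    return tokens / 1_000_000 * usd_per_million_tokens


# Hypothetical prices, chosen only to show how the bill scales with volume.
LARGE_MODEL_PRICE = 10.0  # USD per million tokens (assumed)
SMALL_MODEL_PRICE = 0.5   # USD per million tokens (assumed)

large_bill = daily_cost(TOKENS_PER_DAY, LARGE_MODEL_PRICE)
small_bill = daily_cost(TOKENS_PER_DAY, SMALL_MODEL_PRICE)
print(f"large model: ${large_bill:,.0f}/day, small model: ${small_bill:,.0f}/day")
```

Whatever the real prices are, the cost is linear in volume, so any per-token price gap between model tiers is multiplied by 8,000 million-token units every day.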

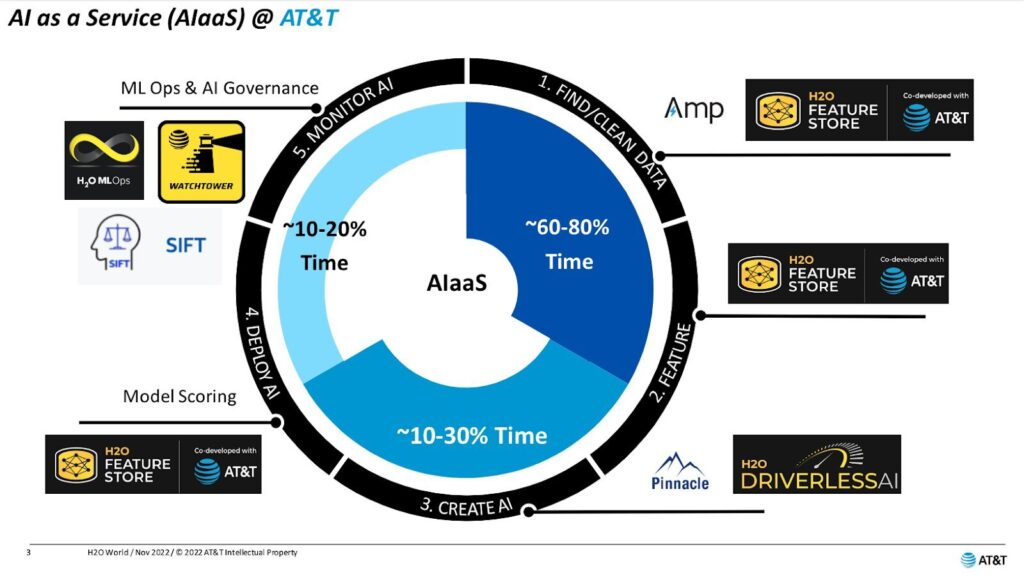

Rethinking AI Orchestration: A New Approach

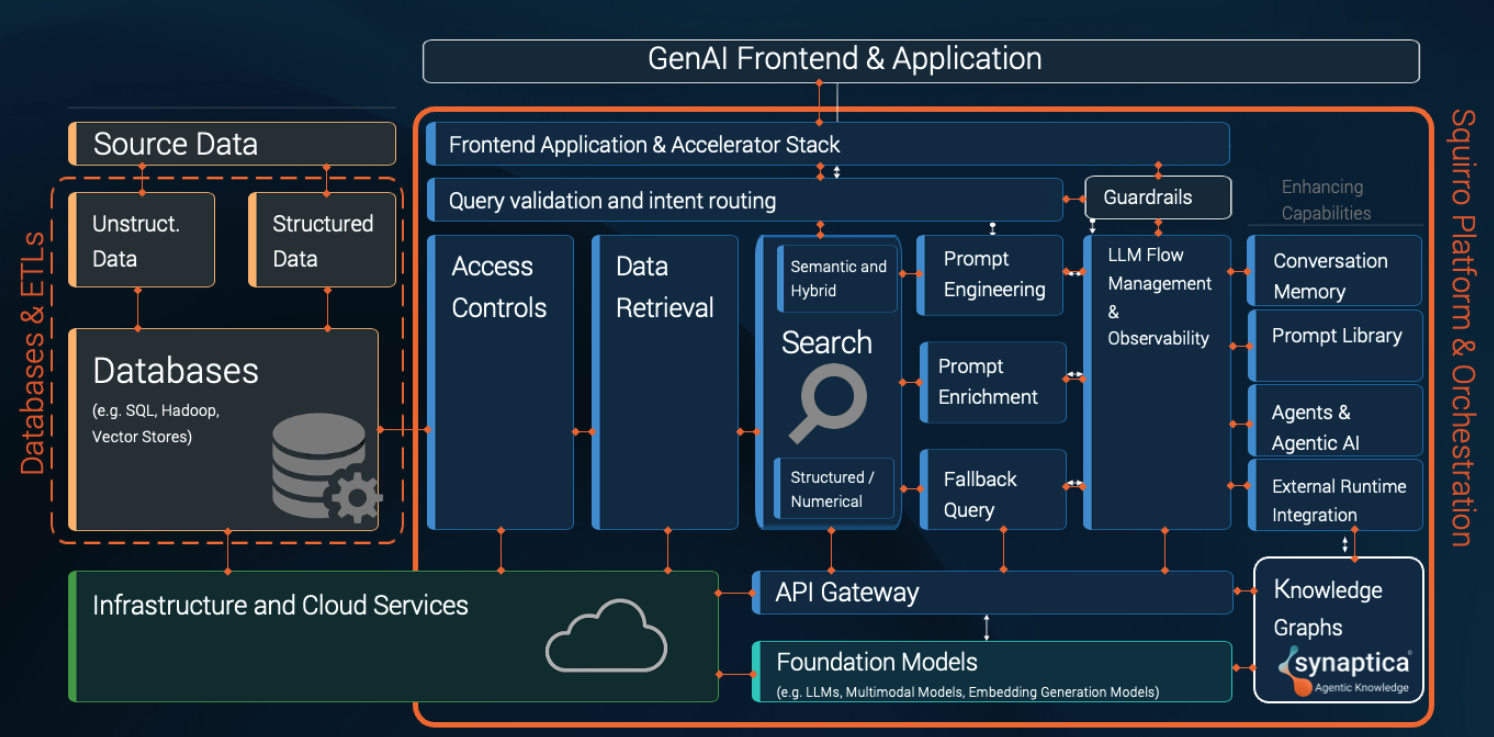

To address these challenges, AT&T re-engineered its AI orchestration layer. The solution was a multi-agent system in which large "super agents" direct smaller, more efficient "worker" agents. Each worker specializes in a narrow kind of task, which reduces the load on any single model and keeps the expensive large models for the decisions that need them.

What is AI Orchestration?

AI orchestration involves managing the flow and execution of tasks within an AI system. It ensures that tasks are completed efficiently and that resources are allocated optimally.

- Super Agents: These are responsible for overseeing the entire operation, making high-level decisions based on incoming data.

- Worker Agents: These handle specific tasks, often requiring less computational power than the super agents.

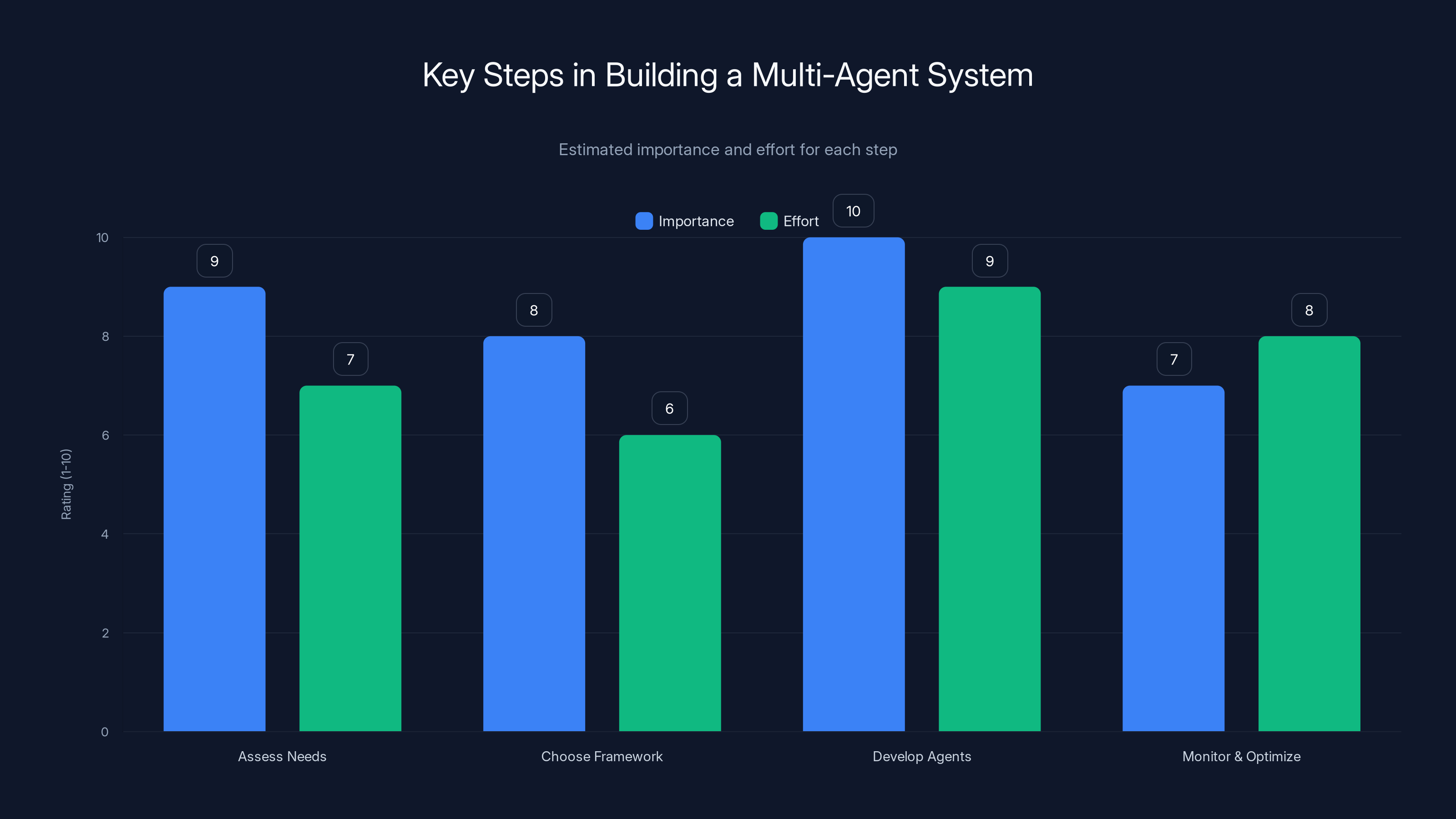

Developing agents is the most critical and effort-intensive step in building a multi-agent system. Estimated data.

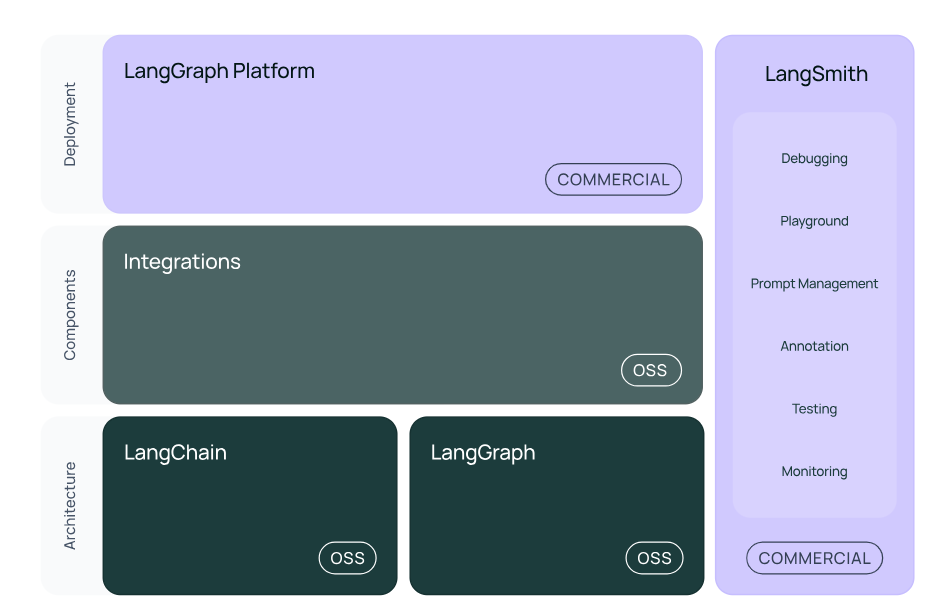

Implementing a Multi-Agent System with LangChain

LangChain, an open-source framework for building LLM-powered applications, became the backbone of AT&T's new orchestration strategy. It let the team integrate multiple agents so that they could communicate and work together efficiently.

Key Features of LangChain

- Modular Architecture: Facilitates the integration of various agents and models.

- Scalability: Designed to handle large-scale operations without compromising performance.

- Efficiency: Optimizes resource allocation, reducing unnecessary computational overhead.

How AT&T Achieved a 90% Cost Reduction

The results of AT&T's new orchestration approach were dramatic. By shifting from a single large model to a multi-agent system, the company reduced operational costs by up to 90%.

Key Strategies for Cost Reduction

- Optimized Resource Allocation: Ensures that each task uses the most suitable agent, preventing resource wastage.

- Increased Efficiency: Smaller, purpose-driven agents handle specific tasks more effectively than larger models.

- Reduced Latency: Faster response times lead to improved user experience and reduced processing time.
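One way to see how routing work to smaller agents could add up to savings of this size is a blended-cost calculation. This is a sketch under stated assumptions: the share of tokens handled by small models and the price ratio between tiers are invented inputs, not figures reported by AT&T.

```python
def blended_cost_ratio(small_share: float, small_to_large_price: float) -> float:
    """Cost of a mixed workload relative to sending everything to the large model.

    small_share: fraction of tokens handled by small worker models (0 to 1).
    small_to_large_price: small-model price divided by large-model price.
    """
    if not 0.0 <= small_share <= 1.0:
        raise ValueError("small_share must be between 0 and 1")
    # Tokens kept on the large model cost 1.0 each (relative units);
    # tokens moved to small models cost the price ratio each.
    return (1.0 - small_share) + small_share * small_to_large_price


# Assumed values for illustration only.
ratio = blended_cost_ratio(small_share=0.95, small_to_large_price=0.05)
savings = 1.0 - ratio
print(f"relative cost: {ratio:.4f}, savings: {savings:.1%}")
```

With these assumed inputs the savings come out at about 90%. The formula also shows the limit of the approach: the fraction of traffic that still needs the large model sets a floor on the bill, no matter how cheap the workers are.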

Practical Implementation Guide: Building Your Own System

For companies looking to implement a similar orchestration strategy, here are some practical steps:

- Assess Your Needs: Identify the specific tasks and challenges your AI system must address.

- Choose the Right Framework: Consider using LangChain or similar frameworks that support multi-agent systems.

- Develop Super and Worker Agents: Design agents with specific roles and functionalities.

- Monitor and Optimize: Continuously monitor system performance and make adjustments as necessary to improve efficiency.
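The monitoring step above can start very small. The following is a minimal sketch, assuming each agent is a plain Python callable that takes and returns text; the `Monitor` and `AgentStats` names are hypothetical, and the whitespace split is only a rough stand-in for a real tokenizer.

```python
import time
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class AgentStats:
    calls: int = 0
    tokens: int = 0
    seconds: float = 0.0

    @property
    def avg_latency(self) -> float:
        """Mean wall-clock seconds per call, or 0.0 before any call."""
        return self.seconds / self.calls if self.calls else 0.0


class Monitor:
    """Accumulates per-agent call counts, token counts, and latency."""

    def __init__(self):
        self.stats = defaultdict(AgentStats)

    def record(self, agent_name, fn, text):
        """Run fn(text), time it, and add the measurements to agent_name."""
        start = time.perf_counter()
        result = fn(text)
        elapsed = time.perf_counter() - start
        entry = self.stats[agent_name]
        entry.calls += 1
        entry.tokens += len(text.split())  # crude whitespace token proxy
        entry.seconds += elapsed
        return result


monitor = Monitor()
monitor.record("summarizer", lambda t: t[:20], "a short example request to a worker")
monitor.record("summarizer", lambda t: t[:20], "another request")
print(monitor.stats["summarizer"])
```

Per-agent counters like these make it possible to spot which agent consumes the most tokens or time before deciding where to optimize.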

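Code Example: Setting Up a Basic Multi-Agent System

```python
# Illustrative sketch: Agent and Orchestrator are minimal stand-ins defined
# here to show the pattern. They are not imported from LangChain.

class Agent:
    """Base class: every agent exposes process()."""
    def process(self, data):
        raise NotImplementedError


class WorkerAgent(Agent):
    """Handles one narrow task; here it just uppercases the text."""
    def process(self, task):
        # Task-specific processing
        return task.upper()


class SuperAgent(Agent):
    """Makes the high-level decision and delegates to a worker."""
    def __init__(self, workers):
        self.workers = workers

    def process(self, data):
        # High-level decision-making: pick a worker, then delegate
        worker = self.workers[0]
        return worker.process(data)


class Orchestrator:
    """Keeps track of the registered agents."""
    def __init__(self):
        self.agents = []

    def add_agent(self, agent):
        self.agents.append(agent)


# Orchestrator setup
orchestrator = Orchestrator()
worker_agent = WorkerAgent()
super_agent = SuperAgent(workers=[worker_agent])
orchestrator.add_agent(super_agent)
orchestrator.add_agent(worker_agent)

print(super_agent.process("route this request"))
```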

Processing 8 billion tokens daily involves significant costs, with computational power and energy consumption being the largest contributors. (Estimated data)

Common Pitfalls and Solutions

Even with a robust framework like LangChain, challenges can arise:

- Integration Issues: Ensure that all agents can communicate effectively. Regular testing and debugging are essential.

- Performance Bottlenecks: Continuously monitor agent performance and optimize code to prevent slowdowns.

- Scalability Challenges: As your system grows, ensure that it can scale without degrading performance.
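For the integration pitfall in particular, a small smoke test run on every change can catch agents that stop responding or start returning malformed output. The sketch below assumes each agent is a callable from text to text; the agent names and the `smoke_test` helper are hypothetical.

```python
from typing import Callable, Dict, List


def smoke_test(agents: Dict[str, Callable[[str], str]], sample: str) -> List[str]:
    """Run each agent on a sample input and return the names of agents that fail.

    An agent fails if it raises, or returns anything other than a non-empty string.
    """
    failures = []
    for name, agent in agents.items():
        try:
            output = agent(sample)
        except Exception:
            failures.append(name)
            continue
        if not isinstance(output, str) or not output:
            failures.append(name)
    return failures


def broken(text: str) -> str:
    # Simulates an agent whose downstream dependency is unavailable.
    raise RuntimeError("simulated integration failure")


# Hypothetical agents: two behave, one is broken.
agents = {
    "classifier": lambda text: "billing",
    "summarizer": lambda text: text[:10],
    "broken": broken,
}
print(smoke_test(agents, "my bill looks wrong this month"))
```

A check like this only verifies that agents respond in the expected shape. It says nothing about answer quality, which needs separate evaluation.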

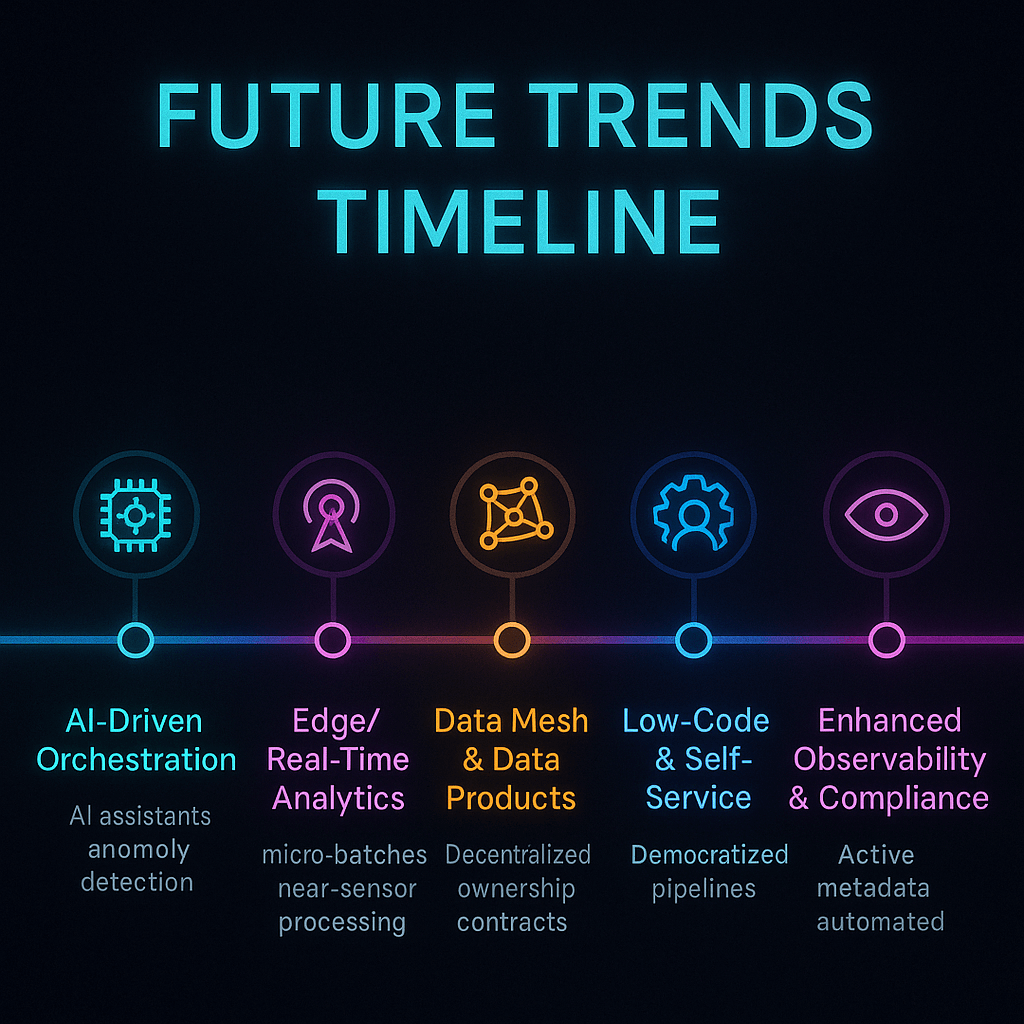

Future Trends in AI Orchestration

The landscape of AI orchestration is evolving rapidly. Here are some trends to watch:

- Increased Use of SLMs: Small language models offer a balance of efficiency and accuracy, making them ideal for many applications.

- Integration of AI and IoT: As IoT devices proliferate, integrating AI orchestration will become crucial for managing data flows and enhancing device capabilities.

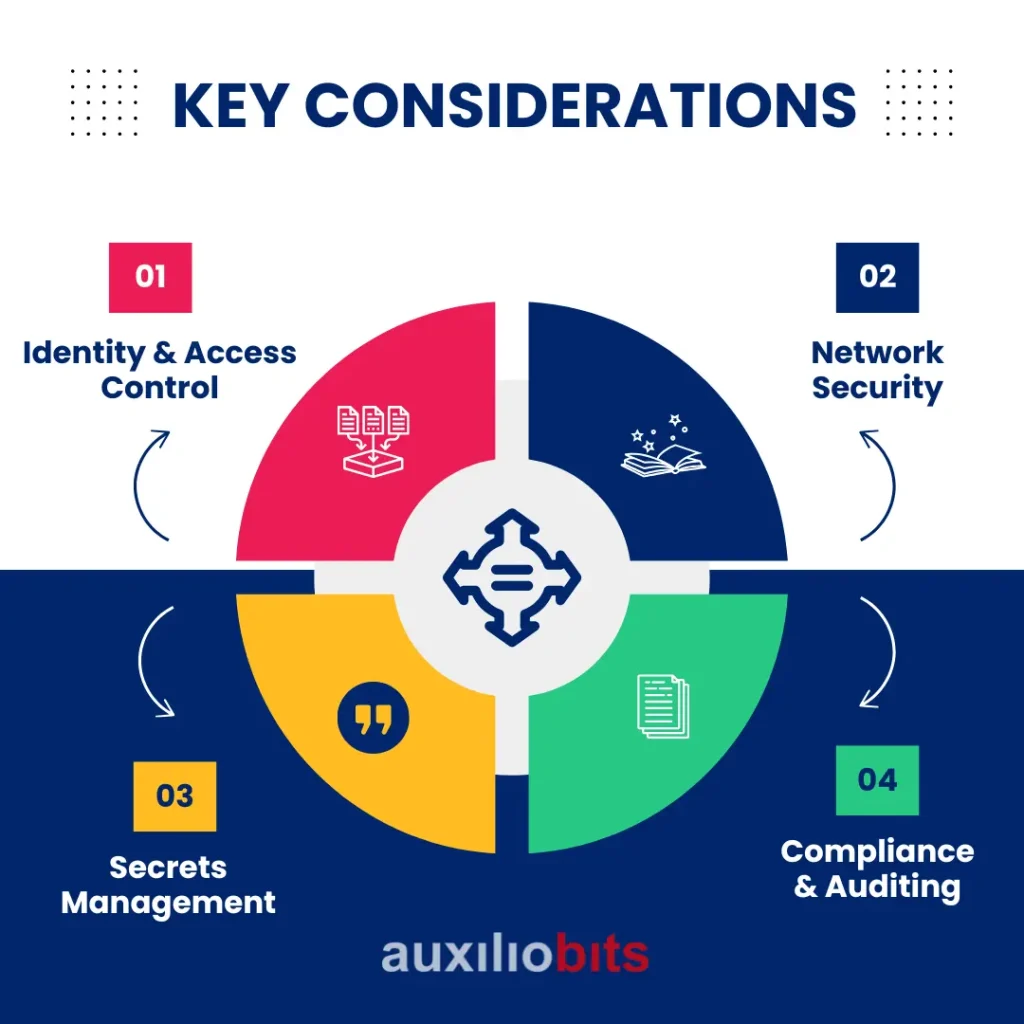

- Enhanced Security Protocols: With the increasing complexity of AI systems, robust security measures will be necessary to protect sensitive data.

Recommendations for AI Orchestration

For businesses looking to optimize their AI orchestration, consider the following best practices:

- Invest in Training: Ensure your team is well-versed in the latest AI orchestration techniques and tools.

- Adopt a Modular Approach: Build systems that can easily adapt to new technologies and requirements.

- Focus on Scalability: Design your system with future growth in mind, ensuring it can handle increased demand.

Conclusion: AT&T's Pioneering Approach

AT&T's innovative approach to AI orchestration has set a new standard in the industry. By leveraging a multi-agent system and focusing on efficiency, they have not only reduced costs significantly but also improved the overall performance and responsiveness of their AI systems. As more companies face similar challenges, AT&T's strategy offers a roadmap for success.

FAQ

What is AI orchestration?

AI orchestration involves managing the execution of tasks within an AI system, ensuring efficient completion and optimal resource allocation.

How did AT&T reduce costs by 90%?

AT&T implemented a multi-agent system on LangChain in which large super agents delegate work to smaller worker agents, which optimized resource allocation and improved efficiency.

What are small language models (SLMs)?

SLMs are compact AI models designed for specific tasks, offering efficiency and accuracy without the computational overhead of larger models.

Why is LangChain used for AI orchestration?

LangChain provides a modular architecture that supports large-scale AI operations, facilitating the integration and management of multiple agents.

What are the benefits of a multi-agent system?

A multi-agent system offers optimized resource use, reduced latency, increased efficiency, and the ability to handle complex tasks through specialized agents.

Can other companies implement AT&T's strategy?

Yes, by assessing their needs, selecting the right framework, and designing specific super and worker agents, companies can achieve similar efficiencies.

Key Takeaways

- 8 billion tokens daily: Managing such scale requires efficient AI orchestration.

- Cost reduction by 90%: AT&T's strategy led to significant savings.

- Multi-agent system: Flexible and efficient task management.

- LangChain: A key framework for managing AI tasks.

- Future of AI: Focus on small language models (SLMs) for specific tasks.

Tags

"AT&T", "AI orchestration", "LangChain", "AI cost reduction", "multi-agent systems", "small language models", "AI scalability", "technology innovation", "AI efficiency", "AI trends"


![How AT&T's AI Orchestration Cut Costs by 90% [2025]](https://tryrunable.com/blog/how-at-t-s-ai-orchestration-cut-costs-by-90-2025/image-1-1772057083793.png)