How Databricks Sells to Diverse Industries Without a Single Vertical Product [2025]

Last month, a data analyst friend messaged me: "Have you seen how Databricks is transforming industries without going vertical?" Intrigued, I looked into how Databricks' single-platform approach has spread across sectors ranging from healthcare to finance.

TL;DR

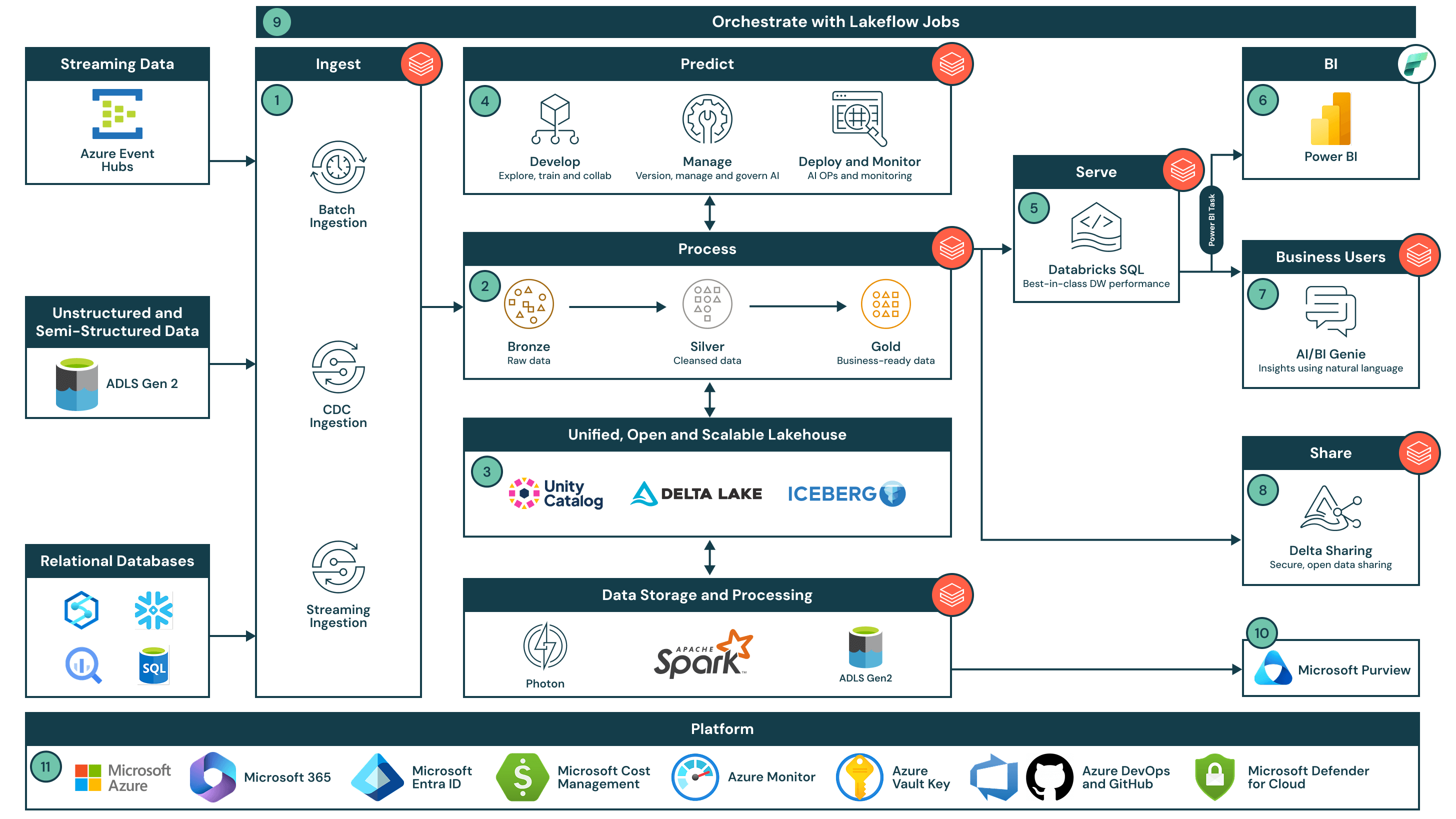

- Unified Platform: Databricks offers a single, versatile platform that suits numerous industries by focusing on broad, adaptable solutions.

- Industry Customization: Instead of vertical products, Databricks tailors use cases and integrations to meet specific industry needs.

- Scalable Solutions: The platform scales to serve everything from small startups to large enterprises.

- Community and Ecosystem: A strong community and broad ecosystem of partners enable continuous innovation and support.

- Future-Proofing: Databricks invests in future technologies like AI and machine learning to stay ahead of industry trends.

- Bottom Line: By focusing on flexibility and scalability, Databricks serves a wide array of industries without the need for industry-specific products.

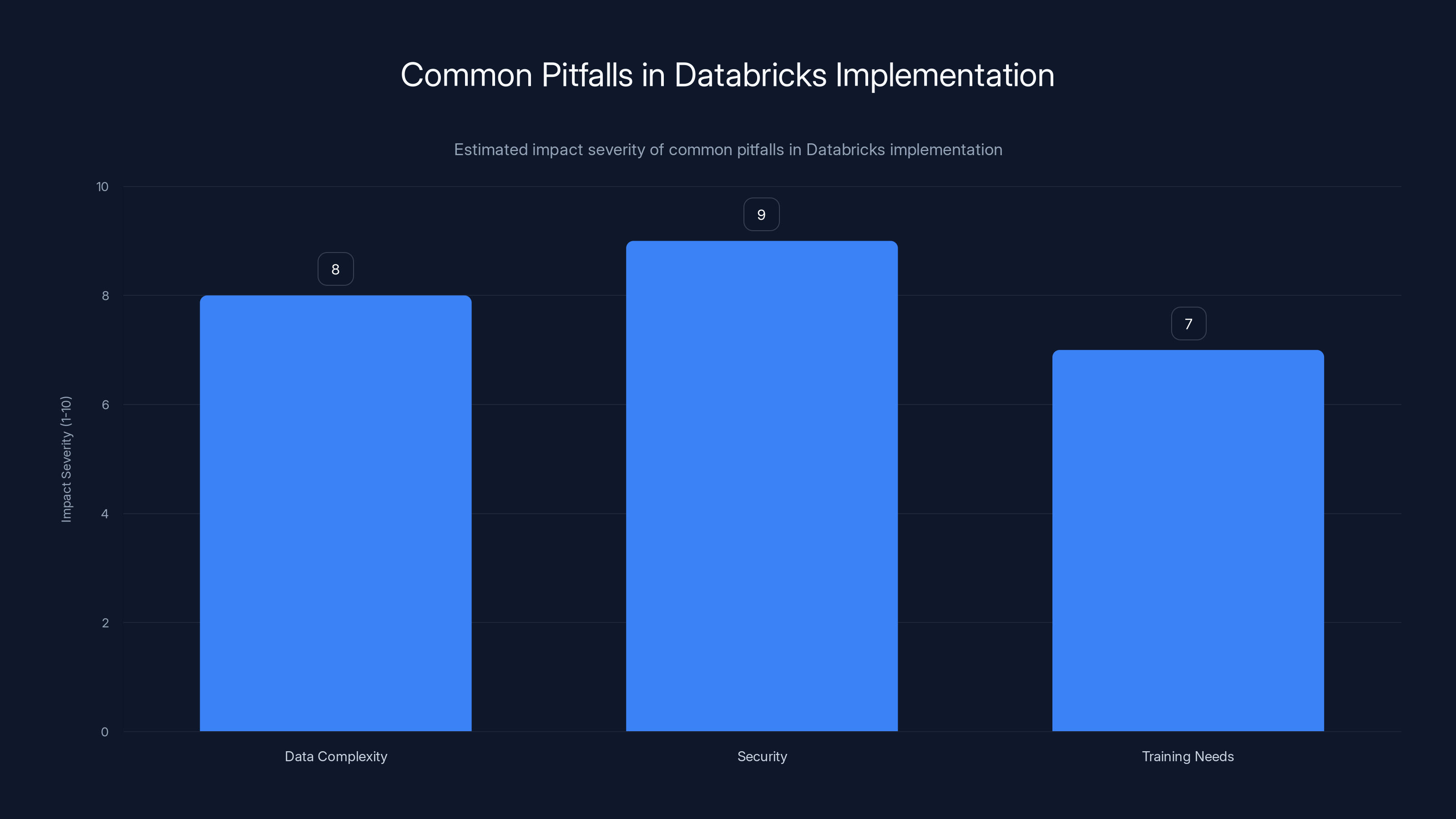

This chart estimates the impact severity of common pitfalls in Databricks implementation, highlighting security as the most critical concern. Estimated data.

Introduction

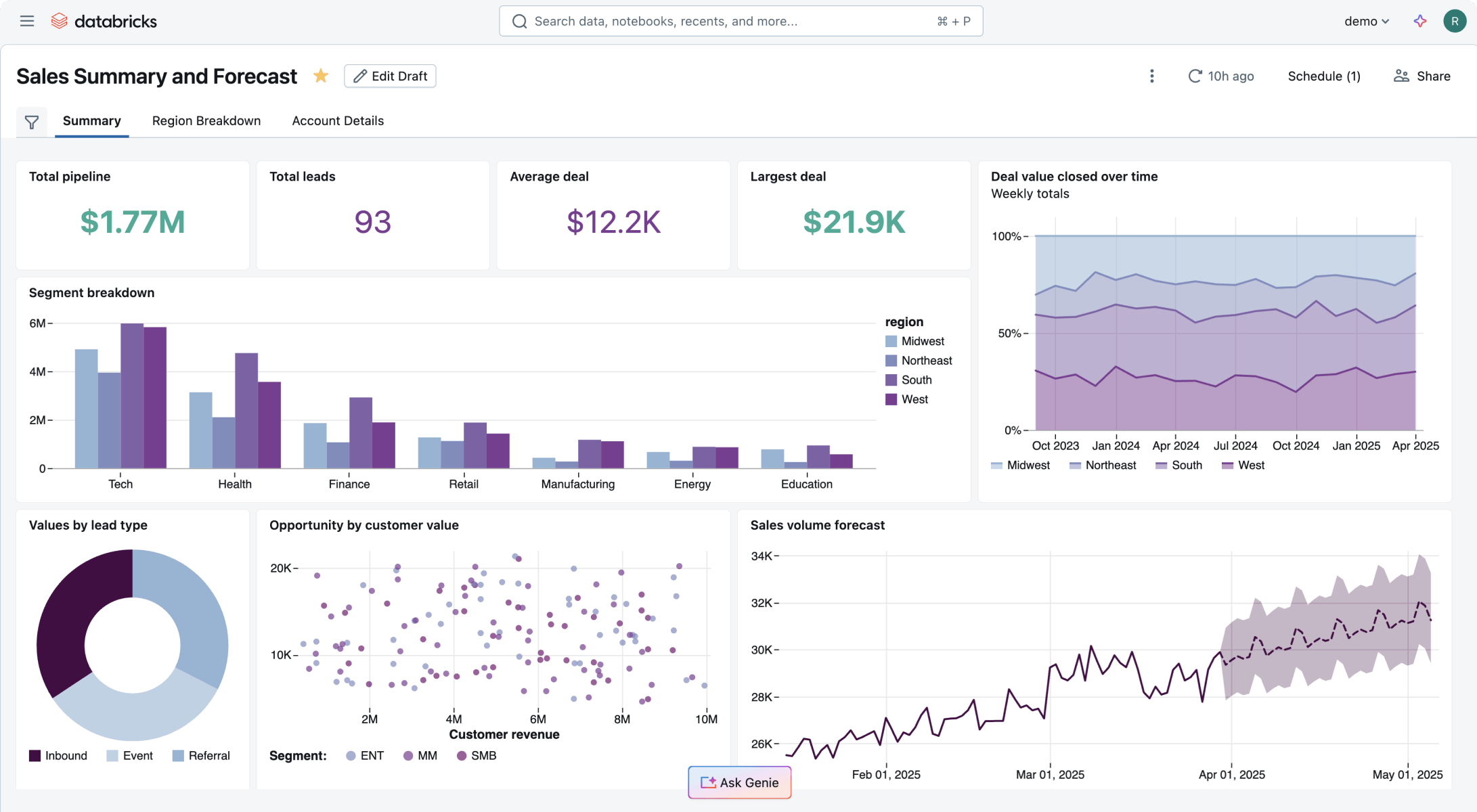

Databricks is a name that frequently pops up when discussing data analytics and cloud computing. But here's the thing: unlike many tech giants that develop industry-specific products, Databricks sticks to a single, robust platform. So, how does it manage to cater to dozens of industries without tailoring vertical solutions?

A Universal Platform for Diverse Needs

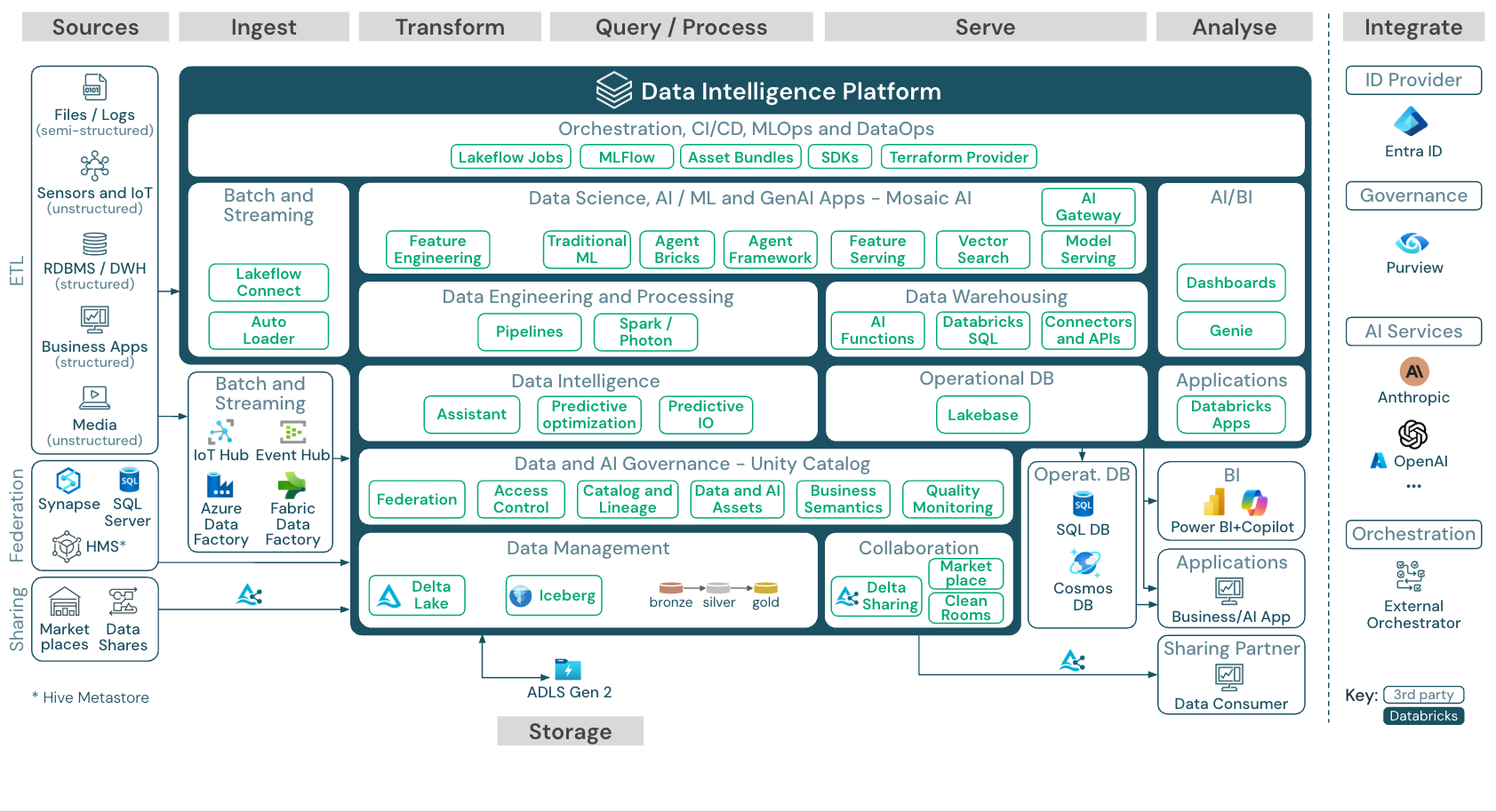

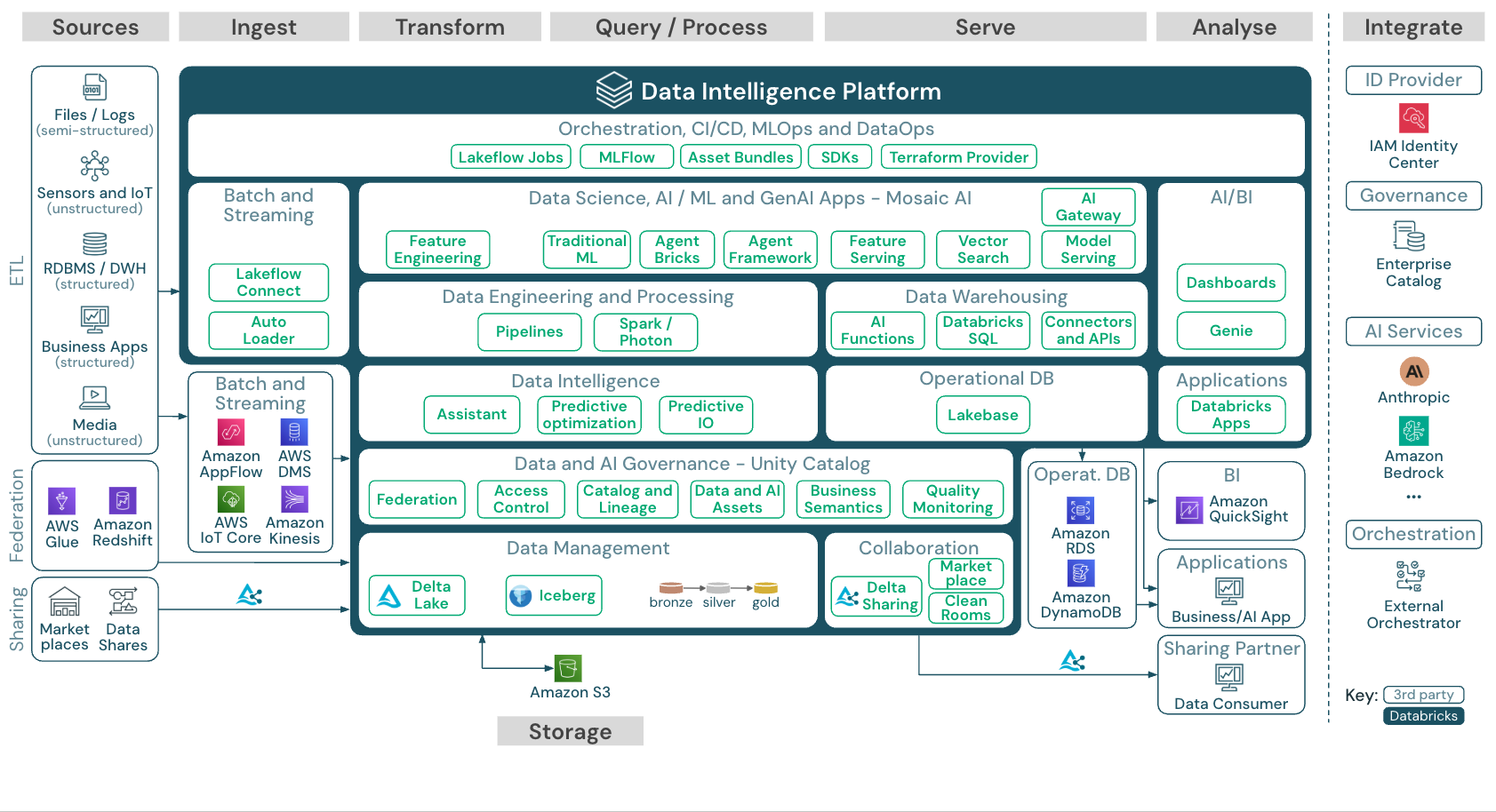

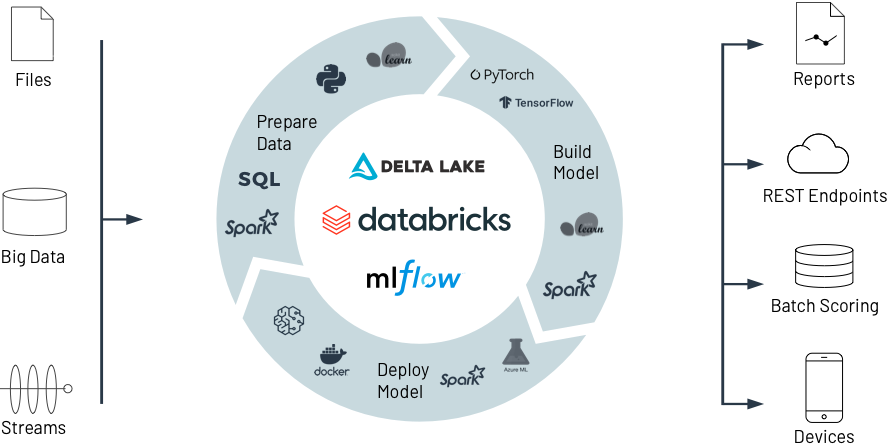

Databricks' secret sauce lies in its highly adaptable platform. At its core, Databricks doesn't sell a vertical product; it sells a data platform capable of addressing the unique needs of myriad industries through customization and scalability.

What Databricks Offers:

- Unified Data Platform: Combines data engineering, data science, and machine learning into one platform.

- Scalability: Handles workloads ranging from small to very large.

- Open Source Embrace: Built on top of Apache Spark, it benefits from continuous updates and community innovations.

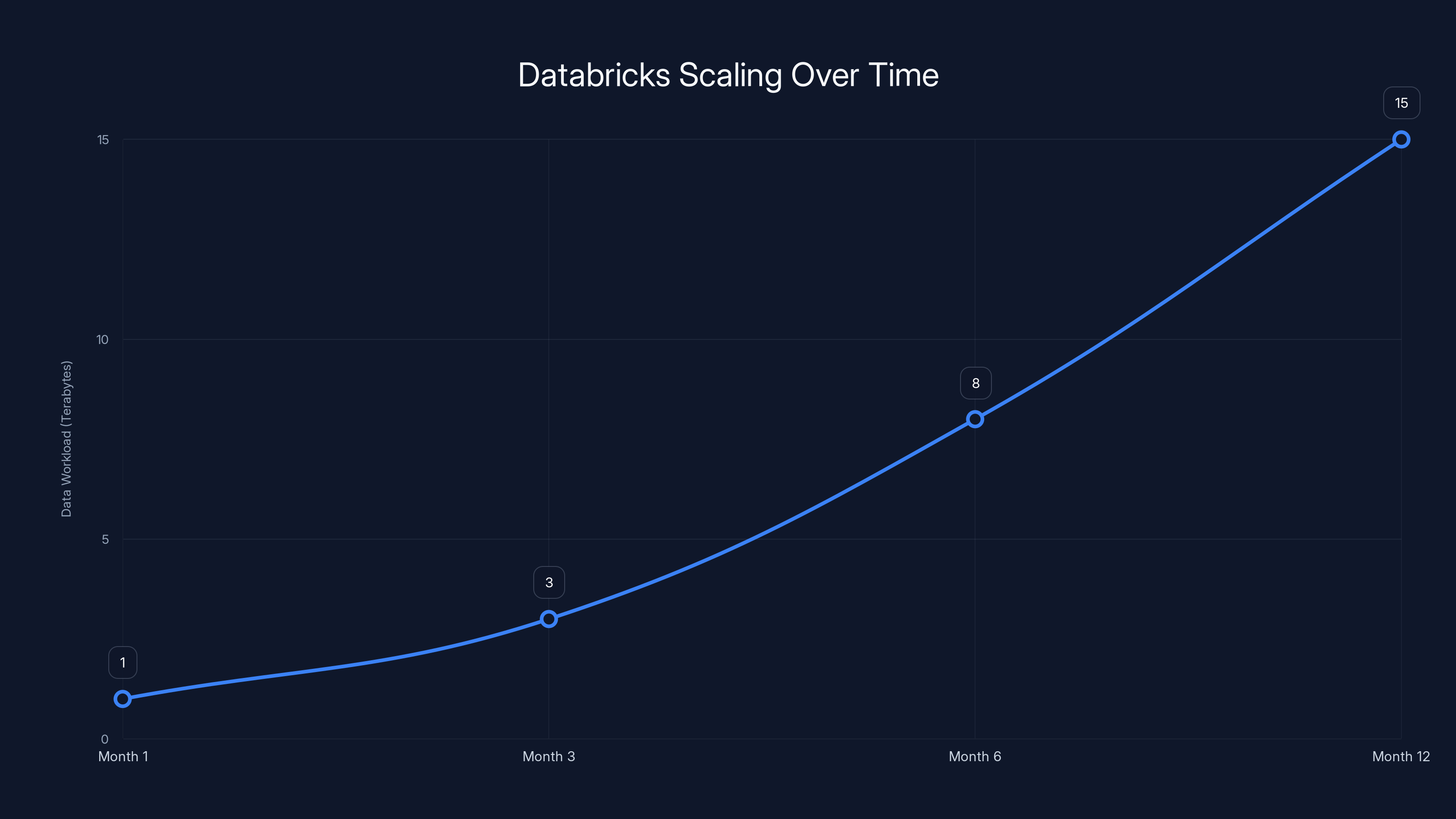

This line chart illustrates how a startup's data workload can scale over a year using Databricks, from 1 TB to 15 TB. Estimated data.

The Industry Customization Approach

Databricks excels by focusing on adaptable solutions rather than creating bespoke products for every industry. This approach allows it to efficiently allocate resources while maintaining a high level of flexibility for its clients.

Industry-Specific Use Cases

Rather than crafting a unique product for each sector, Databricks develops use cases that demonstrate how its platform can solve problems specific to each industry.

Examples of Industry Use Cases:

- Healthcare: Optimizing patient data management and accelerating drug discovery.

- Finance: Enhancing fraud detection and risk management through real-time analytics.

- Retail: Personalizing customer experiences and optimizing supply chain operations.

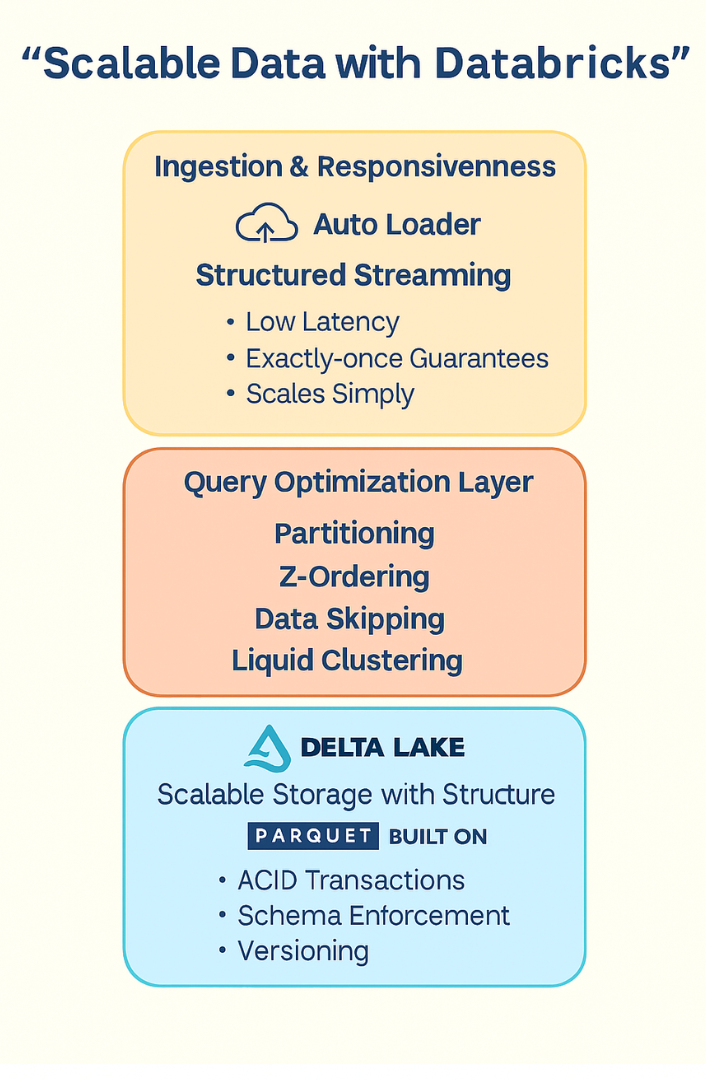

Scalable Solutions for All Sizes

One of the standout features of Databricks is its ability to scale. Whether you're a burgeoning startup or a sprawling enterprise, Databricks can adapt to your data needs.

How Databricks Scales:

- Elastic Compute: Automatically scales resources based on workload demands.

- Data Lakehouse Architecture: Combines the best of data warehouses and data lakes.

- Seamless Integration: Works with a variety of data sources and services, ensuring compatibility and ease of use.
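To make the elastic compute point concrete, here is a minimal sketch of a cluster definition that asks for autoscaling between a lower and upper worker count. The field names (`cluster_name`, `spark_version`, `node_type_id`, `autoscale`) follow the Databricks Clusters API as I understand it, so check them against the current docs before relying on them. The helper `build_cluster_spec`, the cluster name, and the angle-bracket placeholders are all hypothetical, and the code only builds the payload; it does not call any Databricks endpoint.

```python
import json


def build_cluster_spec(name: str, min_workers: int, max_workers: int) -> dict:
    """Build a cluster definition that requests autoscaling between two bounds.

    Hypothetical helper for illustration; it only assembles a dictionary.
    """
    if min_workers < 0 or max_workers < min_workers:
        raise ValueError("need 0 <= min_workers <= max_workers")
    return {
        "cluster_name": name,
        # Placeholders: choose a runtime and node type offered in your workspace.
        "spark_version": "<runtime-version>",
        "node_type_id": "<node-type>",
        # The platform adds or removes workers within this range as load changes.
        "autoscale": {"min_workers": min_workers, "max_workers": max_workers},
    }


spec = build_cluster_spec("customer-insights", min_workers=2, max_workers=8)
print(json.dumps(spec, indent=2))
```

The useful design choice here is the range itself: a low floor keeps idle cost down, while the ceiling caps spend when a workload spikes.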

Real-World Example: A Startup's Journey

Consider a startup aiming to harness data for better customer insights. Initially, it might need only modest compute resources. As it grows, Databricks scales up with it, providing the necessary power without significant infrastructure changes.
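For a sense of what that growth means in practice, the sketch below works out the monthly growth rate implied by going from 1 TB to 15 TB in twelve months (the estimated figures from the chart earlier in this article). It assumes smooth compound growth, which is my assumption and not something the estimate states; the function name is hypothetical.

```python
def monthly_growth_factor(start_tb: float, end_tb: float, months: int) -> float:
    """Return the constant month-over-month multiplier that takes start_tb to end_tb."""
    return (end_tb / start_tb) ** (1 / months)


start_tb, end_tb, months = 1.0, 15.0, 12
factor = monthly_growth_factor(start_tb, end_tb, months)

# Projected volume at the start (month 0) and at the end of each month.
projected = [start_tb * factor ** m for m in range(months + 1)]

print(f"Implied growth: {(factor - 1) * 100:.1f}% per month")
for month, volume in enumerate(projected):
    print(f"month {month:2d}: {volume:5.2f} TB")
```

Under that assumption the workload grows by roughly a quarter every month, which is the kind of curve where fixed-size infrastructure gets outgrown quickly.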

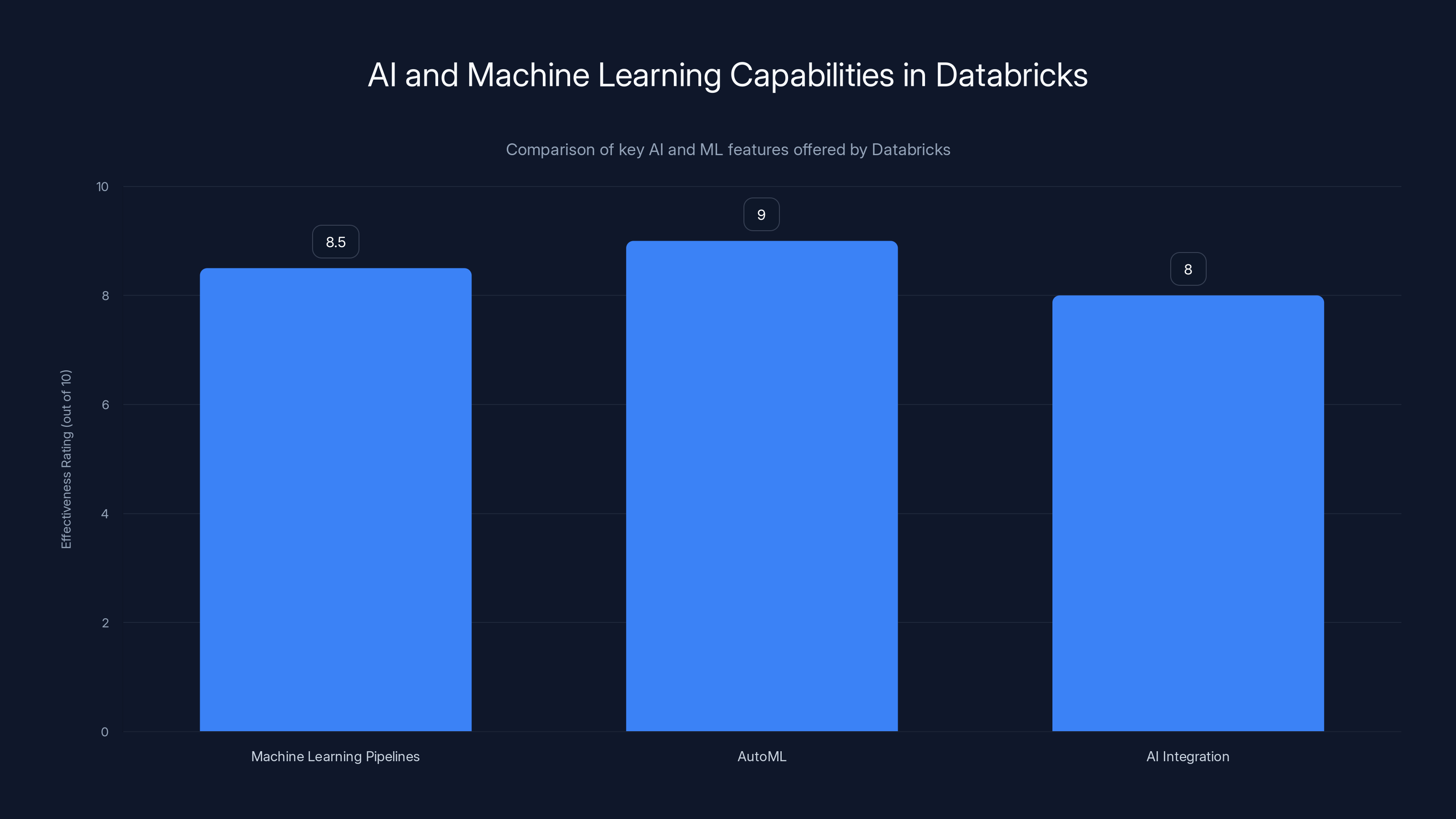

Databricks' AutoML feature is rated highest for accelerating model development, followed by Machine Learning Pipelines and AI Integration. Estimated data.

Building a Strong Community and Ecosystem

Databricks' success isn't just due to its technology but also its strong community and ecosystem. By fostering an environment of collaboration and innovation, Databricks ensures its platform remains cutting-edge and relevant.

Community and Ecosystem Benefits:

- Continuous Innovation: Regular contributions and updates from the open-source community.

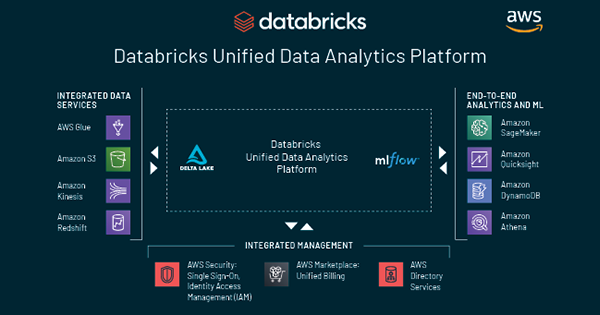

- Partner Ecosystem: Collaborations with cloud providers, data service companies, and application developers.

- Educational Resources: Comprehensive learning materials and certifications to empower users.

The Power of Partnerships

Through partnerships with major cloud providers like AWS, Azure, and Google Cloud, Databricks extends its reach and functionality, allowing users to deploy their solutions across various environments.

Future-Proofing with AI and Machine Learning

Databricks continually invests in future technologies, ensuring its platform is equipped to handle the next wave of innovation. By integrating AI and machine learning capabilities, Databricks stays ahead of the curve, ready to tackle tomorrow's challenges.

AI and Machine Learning Capabilities:

- Machine Learning Pipelines: Simplifies the creation, deployment, and management of ML models.

- AutoML: Provides automated machine learning capabilities to accelerate model development.

- AI Integration: Seamlessly integrates AI into existing workflows to enhance decision-making processes.

Predictive Analytics in Manufacturing

In the manufacturing sector, Databricks enables predictive maintenance by analyzing sensor data to forecast equipment failures, reducing downtime and maintenance costs.
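As a rough illustration of the idea, the sketch below flags sensor readings that drift far from their recent average, which is one simple signal a predictive maintenance job might look for. It is plain Python with made-up vibration values, not a Databricks API, and a rolling z-score is my stand-in technique; a production system would more likely train a model on historical failure data.

```python
from collections import deque
from statistics import mean, stdev


def flag_anomalies(readings, window=5, threshold=3.0):
    """Return indexes of readings more than `threshold` standard deviations
    away from the mean of the preceding `window` readings."""
    recent = deque(maxlen=window)
    flagged = []
    for i, value in enumerate(readings):
        # Only score a reading once a full window of history is available.
        if len(recent) == window:
            mu, sigma = mean(recent), stdev(recent)
            if sigma > 0 and abs(value - mu) > threshold * sigma:
                flagged.append(i)
        recent.append(value)
    return flagged


# Hypothetical vibration readings with one obvious spike at index 6.
vibration = [0.50, 0.52, 0.49, 0.51, 0.50, 0.51, 0.95, 0.50, 0.52]
print(flag_anomalies(vibration))  # [6]
```

Note that the spike itself enters the window afterwards and inflates the standard deviation, so the readings right after it are judged more leniently. That is a known weakness of this simple approach.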

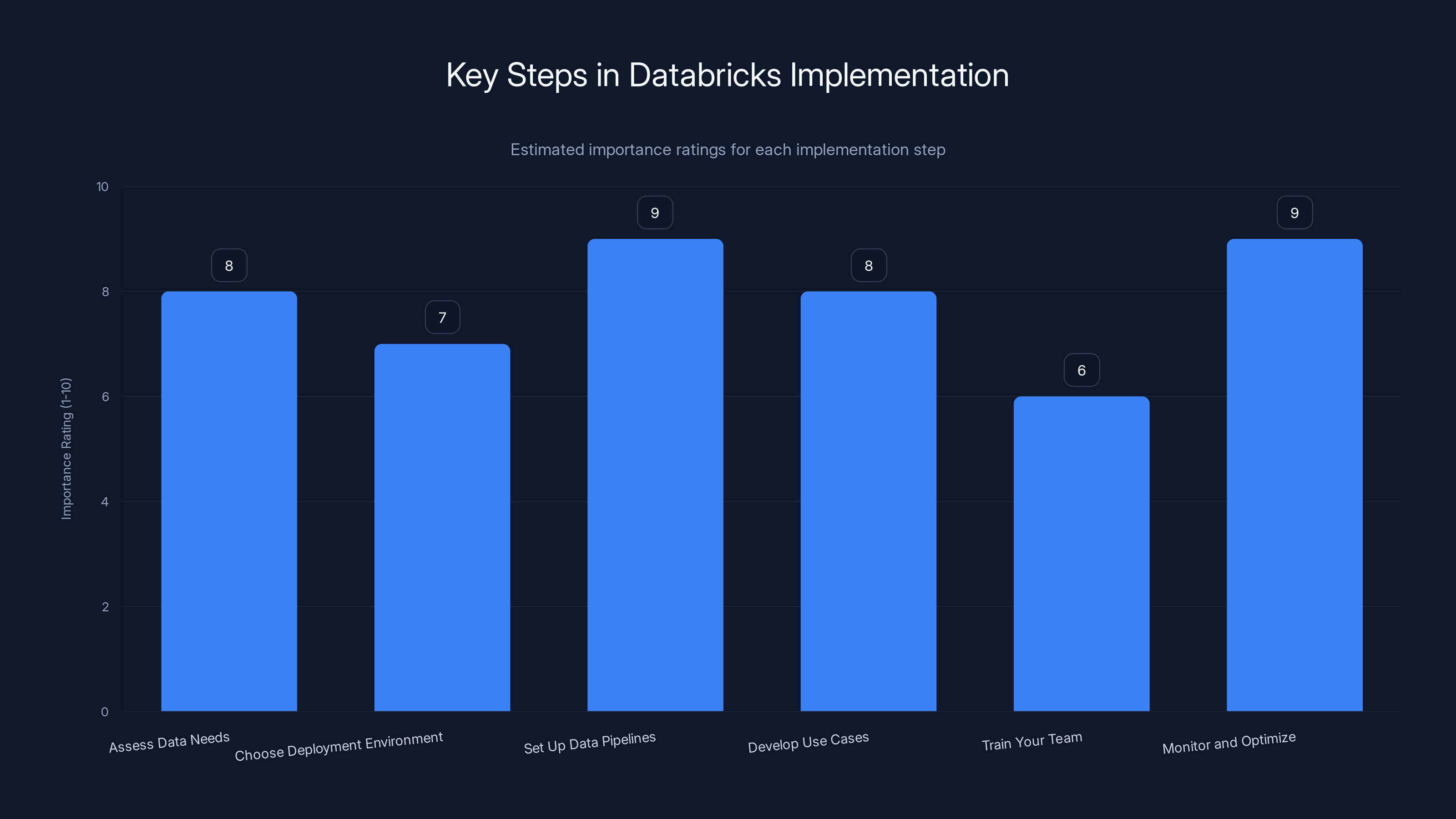

Setting up data pipelines and monitoring are crucial steps in Databricks implementation, both rated at 9 for importance. Estimated data.

Practical Implementation Guide

Implementing Databricks in a business involves several key steps. Here's a practical sequence for getting started:

- Assess Data Needs: Identify your data sources, types, and volume to determine the necessary compute resources.

- Choose Deployment Environment: Decide whether to deploy on AWS, Azure, or Google Cloud based on existing infrastructure and preferences.

- Set Up Data Pipelines: Use Databricks' built-in tools to create data pipelines that automate data ingestion and processing.

- Develop Use Cases: Collaborate with Databricks experts to develop industry-specific use cases that align with business goals.

- Train Your Team: Utilize Databricks' educational resources to train your team on platform capabilities and best practices.

- Monitor and Optimize: Continuously monitor performance and optimize data workflows to improve efficiency.
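The "Set Up Data Pipelines" step above boils down to three stages: ingest, clean, and aggregate. Here is a minimal sketch of that shape in plain Python. It is a stand-in only: on Databricks these stages would typically run on Spark using the platform's own pipeline tooling, and the sample data, column names, and function names below are all hypothetical.

```python
import csv
import io
from collections import defaultdict

# Hypothetical raw input with one missing and one malformed amount.
RAW = """customer_id,amount
c1,10.50
c2,
c1,4.50
c3,abc
c2,7.00
"""


def ingest(text):
    """Parse raw CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def clean(rows):
    """Keep only rows whose amount parses as a number."""
    cleaned = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # drop rows with a missing or malformed amount
        cleaned.append({"customer_id": row["customer_id"], "amount": amount})
    return cleaned


def aggregate(rows):
    """Sum amounts per customer."""
    totals = defaultdict(float)
    for row in rows:
        totals[row["customer_id"]] += row["amount"]
    return dict(totals)


totals = aggregate(clean(ingest(RAW)))
print(totals)  # {'c1': 15.0, 'c2': 7.0}
```

Keeping each stage as its own function makes it easier to monitor and optimize them separately, which is the last step in the list.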

Common Pitfalls and Solutions

While Databricks offers a powerful platform, there are common pitfalls businesses might encounter during implementation. Here's how to avoid them:

- Underestimating Data Complexity: Ensure a thorough understanding of data types and sources before implementation.

- Overlooking Security: Implement robust security measures to protect sensitive data.

- Ignoring Training Needs: Invest in comprehensive training to ensure your team can fully leverage the platform's capabilities.

Solution Strategies

For each pitfall, consider the following strategies:

- Data Complexity: Conduct a detailed data audit and engage data experts to map out a clear data strategy.

- Security: Use Databricks' security features like role-based access control and data encryption.

- Training: Schedule regular training sessions and certifications for team members.
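To show what role-based access control means at its simplest, the sketch below maps roles to the actions they are allowed to perform and denies everything else. The roles, actions, and function name are hypothetical and do not reflect Databricks' actual permission model; the point is only the deny-by-default pattern.

```python
# Hypothetical role-to-permission mapping for illustration.
ROLE_PERMISSIONS = {
    "analyst": {"read"},
    "engineer": {"read", "write"},
    "admin": {"read", "write", "grant"},
}


def is_allowed(role: str, action: str) -> bool:
    """Return True only if the role is known and explicitly grants the action."""
    return action in ROLE_PERMISSIONS.get(role, set())


print(is_allowed("analyst", "read"))    # True
print(is_allowed("analyst", "write"))   # False
print(is_allowed("contractor", "read")) # False: unknown roles get nothing
```

Denying unknown roles by default is the safer choice for sensitive data, since a typo or a forgotten role results in blocked access instead of accidental exposure.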

Future Trends and Recommendations

As data continues to play a pivotal role in business strategy, staying ahead of trends is crucial. Here are some future trends and recommendations for leveraging Databricks effectively:

- Embrace Real-Time Analytics: The demand for real-time data insights is growing, and Databricks is well-positioned to provide these capabilities.

- Expand AI Usage: AI will become more integrated into business processes, and Databricks' AI capabilities will be essential.

- Focus on Data Privacy: With increasing regulations, maintaining data privacy and compliance will be critical.

Proactive Steps

- Invest in AI: Allocate resources to AI initiatives and explore new use cases for Databricks' AI capabilities.

- Real-Time Readiness: Upgrade infrastructure to support real-time analytics and decision-making.

- Privacy Compliance: Stay updated on data privacy laws and implement necessary compliance measures.

Conclusion

Databricks sells a unified, scalable platform that meets the needs of multiple industries without developing vertical products. By focusing on customization, scalability, and innovation, it continues to compete strongly in the data analytics space, showing that a single platform can work across industries when it is paired with industry-specific use cases and integrations.

FAQ

What is Databricks?

Databricks is a data platform that combines data engineering, data science, and machine learning to provide a unified solution for data analytics.

How does Databricks cater to different industries?

Databricks caters to different industries by offering a flexible platform that can be customized through industry-specific use cases and integrations.

What are the key features of Databricks?

Key features include a unified data platform, scalability, open-source support, AI and machine learning capabilities, and a strong community and partner ecosystem.

How can businesses implement Databricks effectively?

Businesses can implement Databricks effectively by assessing data needs, choosing the right deployment environment, setting up data pipelines, developing use cases, and ensuring team training.

What are common pitfalls in using Databricks?

Common pitfalls include underestimating data complexity, overlooking security, and ignoring training needs. Solutions involve data audits, robust security measures, and comprehensive training.

What future trends should Databricks users be aware of?

Future trends include the growing importance of real-time analytics, expanded AI usage, and the need for data privacy compliance.

Why is Databricks' approach effective?

Databricks' approach is effective because it focuses on providing a flexible, scalable platform that can adapt to the unique needs of various industries, eliminating the need for vertical products.

How does Databricks support innovation?

Databricks supports innovation through continuous updates from the community, partnerships with major cloud providers, and investment in AI and machine learning technologies.

Key Takeaways

- Databricks offers a unified platform that adapts to various industry needs.

- Customization through use cases replaces the need for vertical products.

- Scalability allows Databricks to serve both startups and enterprises.

- Strong community and ecosystem drive continuous innovation.

- Future-proofing through AI and machine learning investments is crucial.

- Avoid common pitfalls by understanding data complexity and security needs.

- Real-time analytics and data privacy are key future trends.
