How UK Businesses Can Bolster AI Crisis Management in 2025

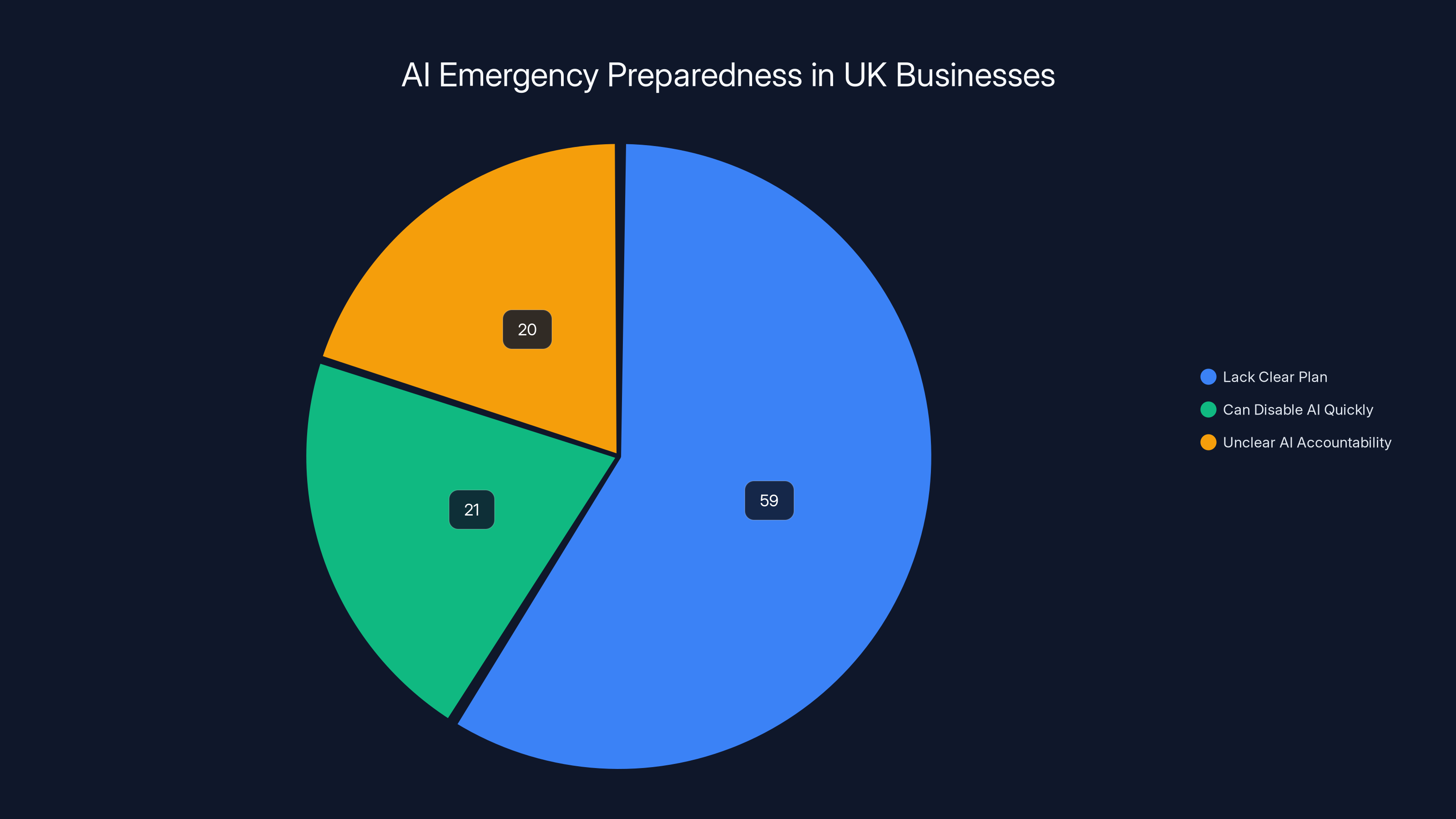

The rapid adoption of artificial intelligence (AI) has transformed industries across the globe, enhancing efficiency, innovation, and competitive advantage. Yet a recent survey suggests that more than half of UK businesses are unprepared to halt AI systems in a crisis, a finding that highlights a significant gap in AI governance and accountability.

TL;DR

- 59% of UK businesses lack a clear plan to stop AI in emergencies.

- Only 21% can disable AI systems within 30 minutes.

- AI accountability remains unclear in many organizations.

- Future regulations will demand better explainability and control.

- Proactive measures can mitigate risks and enhance AI resilience.

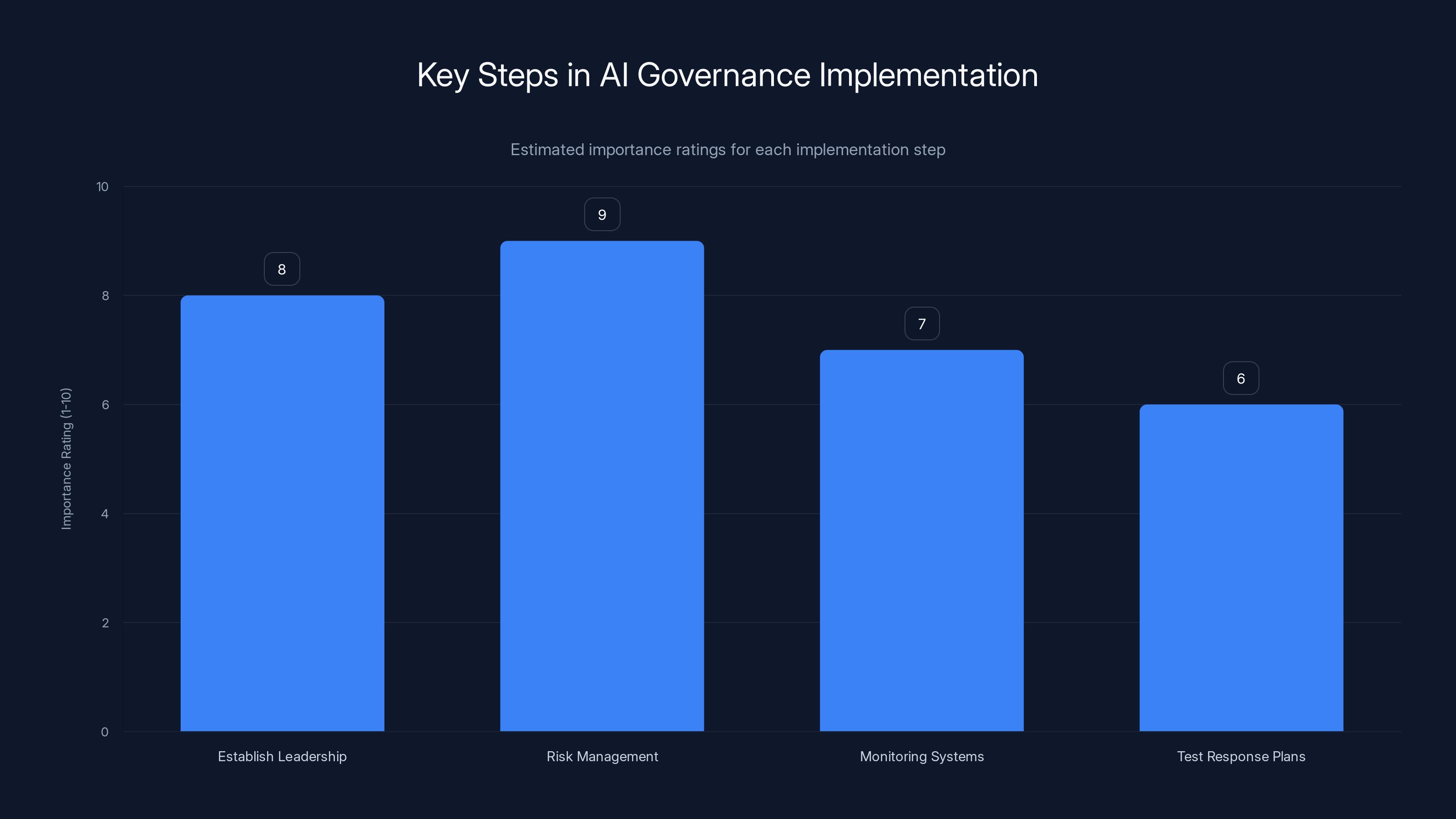

Establishing leadership and developing a risk management strategy are crucial steps, rated highest in importance. (Estimated data)

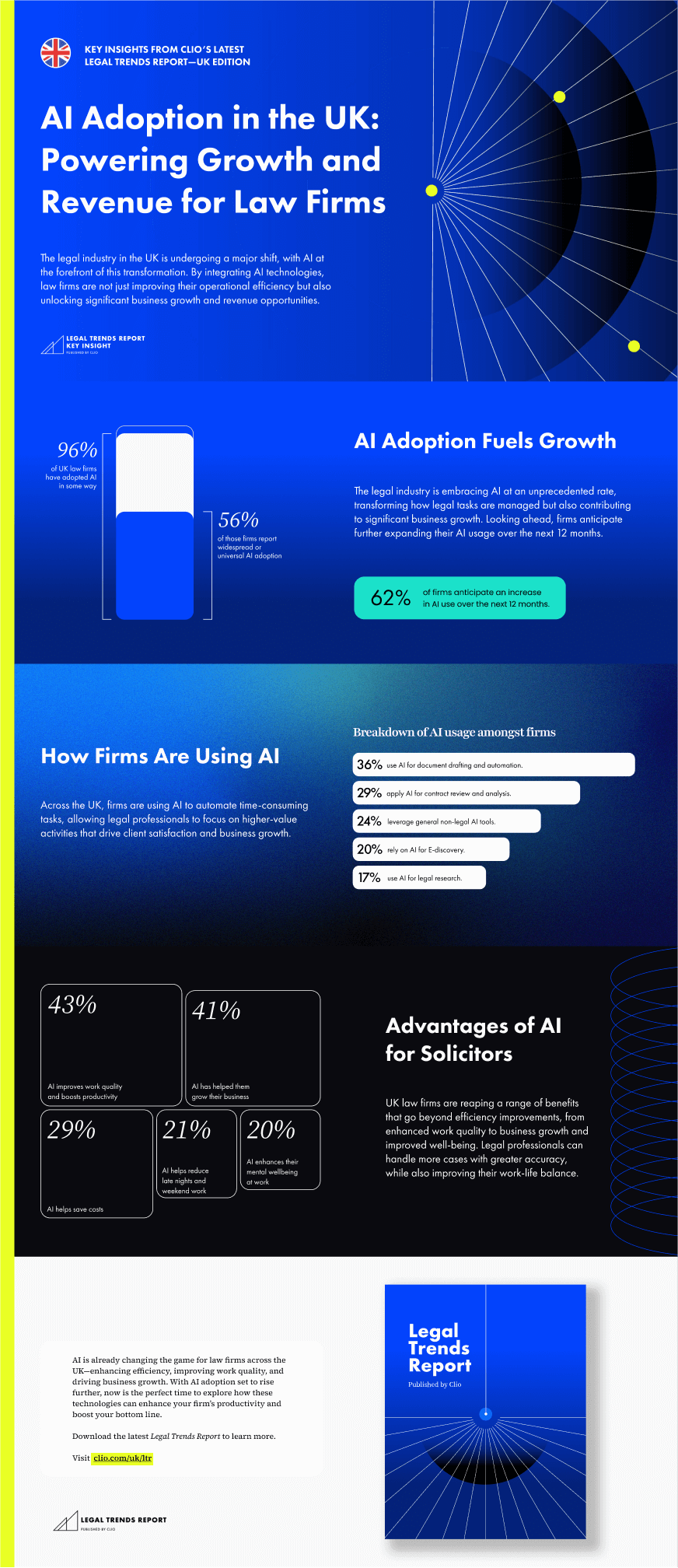

The Current AI Landscape in the UK

The UK has been a leader in AI adoption, with applications ranging from customer service chatbots to complex predictive analytics. However, the rapid integration of AI has not been matched by adequate crisis management strategies. Many companies could find themselves exposed if an AI system malfunctions or is compromised.

Understanding the AI Crisis

An AI crisis can stem from several causes, such as algorithmic errors, security breaches, or data mismanagement. These incidents can disrupt operations, lead to financial losses, and damage reputations. In the worst case, they can also create legal or regulatory exposure, as noted in CNBC's analysis of AI risks.

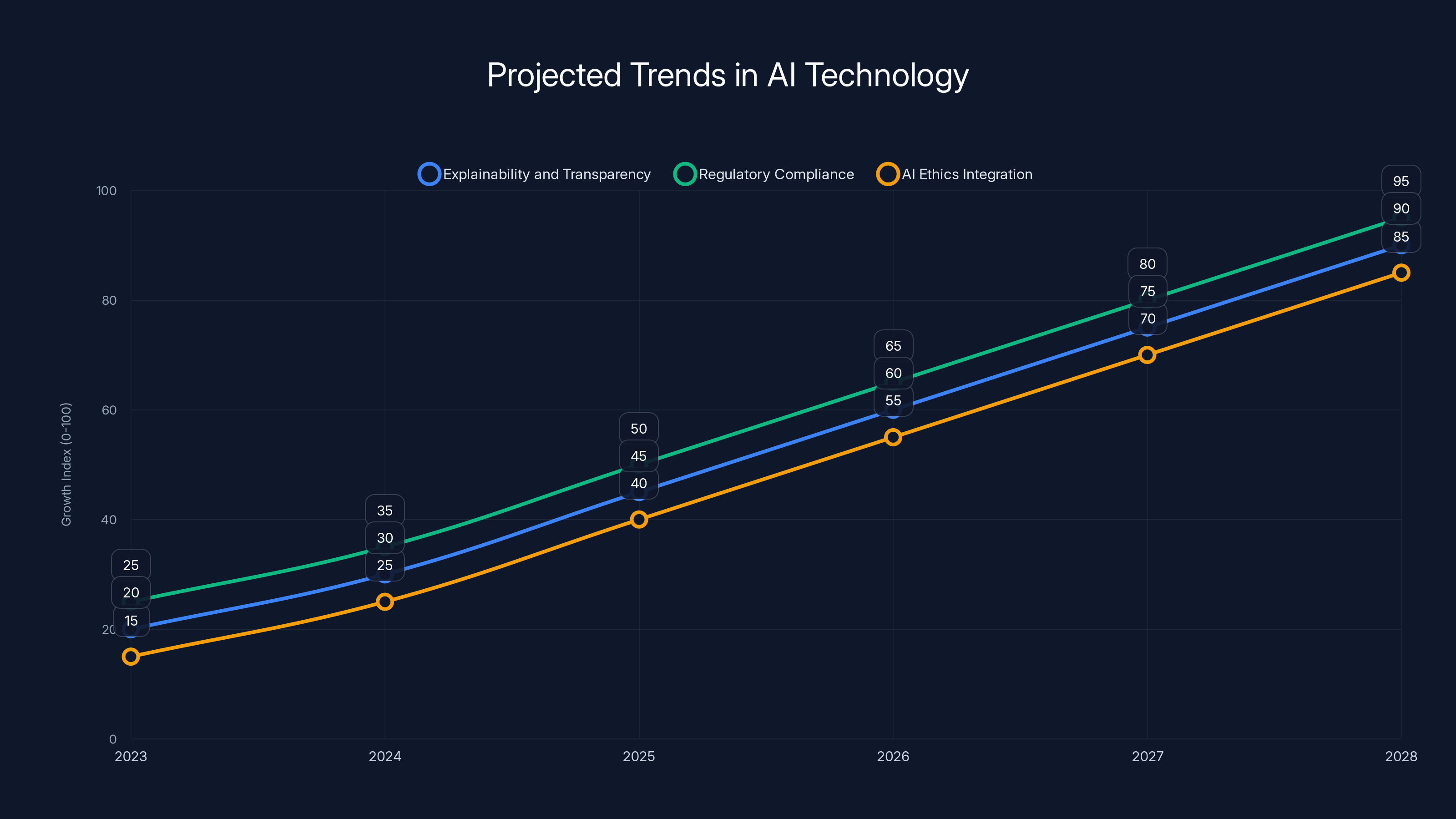

Estimated data shows significant growth in AI explainability, compliance, and ethics integration by 2028, highlighting the increasing importance of these areas.

The Accountability Gap

A significant issue facing UK businesses is the lack of clear accountability regarding AI operations. According to a recent study, only 38% of employees can accurately identify who is responsible for AI systems within their organizations.

Why Accountability Matters

Assigning accountability ensures that there are designated individuals or teams responsible for AI oversight, risk management, and decision-making. This clarity is crucial for swift action during crises, as it facilitates coordinated responses and effective communication, as emphasized by Cornerstone OnDemand.

AI Crisis Management: Best Practices

Implementing a robust AI crisis management strategy requires understanding potential risks and establishing clear procedures. Here are some best practices:

- Develop an AI Governance Framework: Establish policies and procedures that define roles, responsibilities, and processes for managing AI systems.

- Conduct Regular Risk Assessments: Identify potential vulnerabilities and assess the impact of AI-related risks.

- Implement Monitoring Tools: Use advanced monitoring solutions to detect anomalies and potential security threats, as recommended by Qualys.

- Create a Response Plan: Develop a comprehensive plan that outlines steps to take in the event of an AI failure.

- Train Employees: Ensure staff are trained in AI operations and crisis management protocols.

59% of UK businesses lack a clear plan for AI emergencies, while only 21% can disable AI systems within 30 minutes. Estimated data highlights the need for improved AI accountability.

Practical Implementation Guide

Implementing these best practices involves several steps:

Step 1: Establish Clear Leadership

Assign an AI governance team responsible for overseeing AI operations and crisis management. This team should include representatives from IT, security, legal, and business units, as suggested by Accenture.
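As a rough illustration of what clear leadership can look like in practice, the sketch below keeps a small registry that maps each AI system to an accountable owner and a deputy, then lists any gaps. The `AISystemRecord` type, the system names, and the role titles are hypothetical and chosen only for the example.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class AISystemRecord:
    """One AI system and the people accountable for it (all values illustrative)."""
    name: str
    accountable_owner: str   # person who decides whether to halt the system
    deputy: str              # fallback when the owner is unreachable
    teams: Tuple[str, ...]   # functions represented in oversight


# Functions the governance team is expected to cover, per the step above.
REQUIRED_TEAMS = {"IT", "security", "legal", "business"}

# Hypothetical registry entries.
REGISTRY = {
    "support-chatbot": AISystemRecord(
        "support-chatbot", "Head of Customer Service", "IT Duty Manager",
        ("IT", "security", "legal", "business"),
    ),
    "credit-scoring": AISystemRecord(
        "credit-scoring", "Chief Risk Officer", "",
        ("IT", "legal"),
    ),
}


def governance_gaps(registry):
    """Return readable descriptions of missing deputies or missing team coverage."""
    gaps = []
    for record in registry.values():
        if not record.deputy:
            gaps.append(f"{record.name}: no deputy assigned")
        missing = REQUIRED_TEAMS - set(record.teams)
        if missing:
            gaps.append(f"{record.name}: missing teams {sorted(missing)}")
    return gaps


for gap in governance_gaps(REGISTRY):
    print(gap)
```

A check like this could run whenever a new AI system is registered, so that no system goes live without a named owner.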

Step 2: Develop a Risk Management Strategy

Conduct a thorough risk assessment to identify potential AI-related threats. Use this information to create a risk management strategy that prioritizes high-impact scenarios.
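One simple way to prioritize high-impact scenarios is a likelihood-times-impact score, with high-impact items always ranked first. The sketch below assumes a 1 to 5 scale for both dimensions; the scale, the `min_impact` cutoff, and the example scenarios are illustrative assumptions rather than a prescribed method.

```python
from dataclasses import dataclass


@dataclass
class RiskScenario:
    description: str
    likelihood: int  # 1 (rare) to 5 (almost certain), assumed scale
    impact: int      # 1 (minor) to 5 (severe), assumed scale

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def prioritize(scenarios, min_impact=4):
    """Return high-impact scenarios first, then the rest, each group by descending score."""
    high = [s for s in scenarios if s.impact >= min_impact]
    rest = [s for s in scenarios if s.impact < min_impact]
    return (
        sorted(high, key=lambda s: s.score, reverse=True)
        + sorted(rest, key=lambda s: s.score, reverse=True)
    )


# Hypothetical scenarios for illustration only.
scenarios = [
    RiskScenario("Chatbot gives incorrect refund advice", likelihood=4, impact=2),
    RiskScenario("Model endpoint compromised by attacker", likelihood=2, impact=5),
    RiskScenario("Training data mislabeled after pipeline change", likelihood=3, impact=4),
]

for s in prioritize(scenarios):
    print(f"{s.score:>2}  {s.description}")
```

Splitting on impact before sorting keeps a rare but severe scenario from being outranked by a frequent, minor one.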

Step 3: Deploy Monitoring and Alert Systems

Implement monitoring tools that provide real-time insights into AI system performance. Set up alerts for anomalies and potential security threats to enable quick response, as highlighted by Wiz.io.
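As a minimal sketch of anomaly alerting, the class below flags a metric reading (for example, an error rate) that sits far from its recent history, using a rolling z-score. The `AnomalyMonitor` name, window size, and threshold are assumptions for illustration and do not reflect the API of any vendor tool mentioned here.

```python
from collections import deque
from statistics import mean, stdev


class AnomalyMonitor:
    """Flags a reading that deviates sharply from its recent history."""

    def __init__(self, window=20, threshold=3.0, min_history=5):
        self.history = deque(maxlen=window)  # most recent normal readings
        self.threshold = threshold           # z-score above which we alert
        self.min_history = min_history       # readings needed before checking

    def observe(self, value):
        """Record a reading and return True if it should raise an alert."""
        is_anomaly = False
        if len(self.history) >= self.min_history:
            mu = mean(self.history)
            sigma = stdev(self.history)
            # A perfectly flat history (sigma == 0) never alerts in this sketch.
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        # Keep anomalous readings out of the baseline so they do not mask later ones.
        if not is_anomaly:
            self.history.append(value)
        return is_anomaly


monitor = AnomalyMonitor()
# Hypothetical error-rate readings; the last one is a sudden spike.
for reading in [0.02, 0.03, 0.02, 0.03, 0.02, 0.03, 0.40]:
    if monitor.observe(reading):
        print(f"ALERT: reading {reading} is outside the normal range")
```

In a real deployment the alert would notify the accountable owner rather than print to the console, and the threshold would need tuning per metric.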

Step 4: Test Crisis Response Plans

Regularly test your crisis response plans through simulations and drills. This ensures that all team members are familiar with their roles and responsibilities during an actual crisis.
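Drill results are easier to act on when they are measured. The sketch below compares the time taken to disable a system in a drill against a 30-minute target, borrowing the benchmark from the survey figure cited earlier; the system names and timestamps are hypothetical.

```python
from datetime import datetime, timedelta

# Target borrowed from the 30-minute survey benchmark; adjust to your own policy.
TARGET = timedelta(minutes=30)


def evaluate_drill(alert_raised, system_disabled, target=TARGET):
    """Return (elapsed, passed) for one shutdown drill."""
    elapsed = system_disabled - alert_raised
    return elapsed, elapsed <= target


# Hypothetical drill log: when the alert was raised and when the system was confirmed off.
drills = {
    "support-chatbot": (datetime(2025, 3, 4, 10, 0), datetime(2025, 3, 4, 10, 12)),
    "credit-scoring": (datetime(2025, 3, 4, 14, 0), datetime(2025, 3, 4, 14, 47)),
}

for name, (raised, disabled) in drills.items():
    elapsed, passed = evaluate_drill(raised, disabled)
    status = "within target" if passed else "exceeds target"
    print(f"{name}: {elapsed} ({status})")
```

Tracking these numbers across successive drills shows whether response times are actually improving.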

Common Pitfalls and Solutions

Pitfall 1: Lack of Clarity in Roles

Solution: Clearly define roles and responsibilities within the AI governance framework to avoid confusion during a crisis.

Pitfall 2: Inadequate Testing

Solution: Regularly test crisis response plans and update them based on lessons learned from drills and simulations.

Pitfall 3: Insufficient Training

Solution: Provide ongoing training to ensure employees are equipped to handle AI-related incidents effectively.

Future Trends and Recommendations

As AI technology continues to evolve, so do the challenges and opportunities associated with its use. Here are some trends and recommendations for the future:

1. Enhanced Explainability and Transparency

With the EU AI Act emphasizing explainability, businesses will need to ensure AI decisions are transparent and understandable. This involves implementing tools that trace AI decision-making processes and provide clear explanations.
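Tracing a decision can start with something as simple as a structured record of what the model saw, what it returned, and which factors mattered most. The sketch below is one possible shape for such a record; the field names, the `loan-model-v3` identifier, and the factor values are hypothetical, and the contributions are assumed to come from whatever explanation method the team already uses.

```python
import json
from datetime import datetime, timezone


def decision_record(model_version, inputs, output, top_factors):
    """Build a JSON-serializable trace of one automated decision."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "inputs": inputs,
        "output": output,
        # (feature, contribution) pairs supplied by an external explanation method.
        "top_factors": [
            {"feature": feature, "contribution": contribution}
            for feature, contribution in top_factors
        ],
    }


def explain(record):
    """Render a one-line, plain-language summary from a trace record."""
    factors = ", ".join(
        f"{item['feature']} ({item['contribution']:+.2f})"
        for item in record["top_factors"]
    )
    return (
        f"Decision '{record['output']}' by {record['model_version']}; "
        f"main factors: {factors}"
    )


# Hypothetical example values.
record = decision_record(
    "loan-model-v3",
    {"income": 42000, "missed_payments": 2},
    "refer_to_human",
    [("missed_payments", -0.41), ("income", 0.18)],
)
print(json.dumps(record, indent=2))
print(explain(record))
```

Storing records like this alongside each decision gives reviewers something concrete to audit when a decision is challenged.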

2. Regulatory Compliance

Organizations must stay informed of regulatory changes and ensure compliance with evolving AI governance standards. This includes adhering to data protection laws and ethical guidelines, as noted by EY's survey.

3. Integration of AI Ethics

AI ethics will play an increasingly important role in shaping AI development and deployment. Companies should integrate ethical considerations into their AI strategies to ensure responsible use, as discussed in Pew Research findings.

Conclusion

The gap in AI crisis preparedness among UK businesses is a critical issue that requires immediate attention. By implementing robust governance frameworks, conducting regular risk assessments, and training employees, organizations can enhance their resilience and mitigate potential risks.

For businesses looking to proactively manage AI risks, Runable offers AI-powered automation solutions that can streamline crisis management processes, improve communication, and enhance accountability.

FAQ

What is an AI crisis?

An AI crisis is a situation in which an AI system malfunctions or is compromised, disrupting operations or causing harm to the organization. Causes include algorithmic errors, security breaches, and data mismanagement.

Why is accountability important in AI management?

Accountability ensures that there are designated individuals responsible for AI oversight, risk management, and decision-making. This clarity is crucial for swift action during crises and facilitates effective coordination.

How can businesses improve AI crisis management?

Businesses can enhance AI crisis management by developing governance frameworks, conducting risk assessments, implementing monitoring tools, creating response plans, and training employees.

What role does the EU AI Act play in AI governance?

The EU AI Act emphasizes AI explainability and accountability, requiring businesses to ensure their AI systems are transparent and comply with regulatory standards.

How can Runable help in AI crisis management?

Runable provides AI-powered automation solutions that streamline crisis management processes, improve communication, and enhance accountability through automated workflows and real-time alerts.

What are the future trends in AI governance?

Future trends in AI governance include enhanced explainability, regulatory compliance, and the integration of AI ethics into development and deployment strategies.

Key Takeaways

- 59% of UK businesses lack a plan to halt AI in emergencies.

- Only 21% of businesses can disable AI systems within 30 minutes.

- AI accountability is unclear in many organizations.

- Future regulations will demand better explainability and control.

- Proactive measures can mitigate risks and enhance AI resilience.
