Why People Really Hate AI: Unpacking the Concerns and Implications [2025]

Artificial Intelligence (AI) is a double-edged sword. It's revolutionizing industries, making our lives easier, and opening up new possibilities. But it's also sparking fear and loathing in equal measure. Why do people really hate AI? This article examines why AI stirs such strong emotions and what can be done to address these concerns.

TL;DR

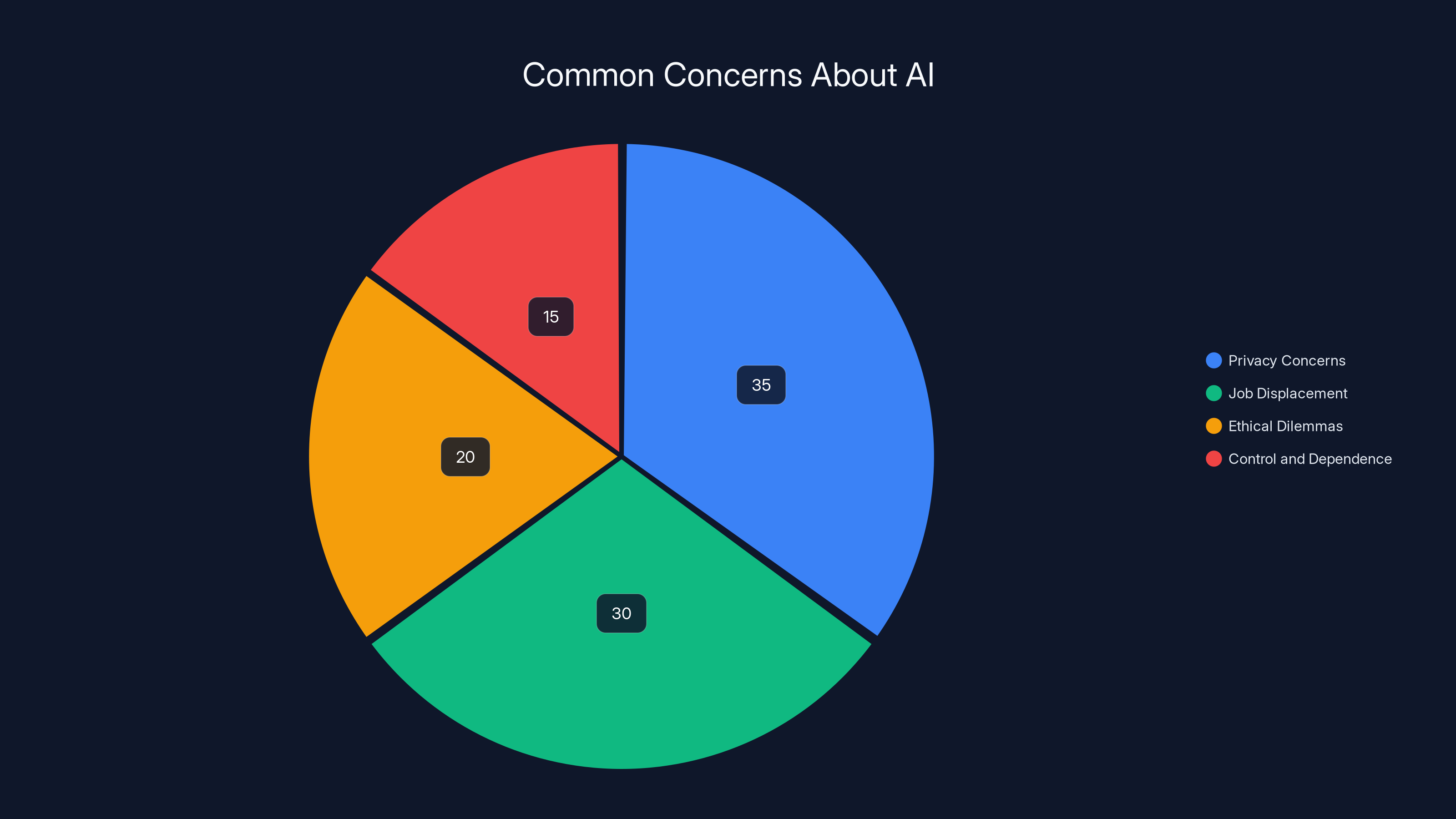

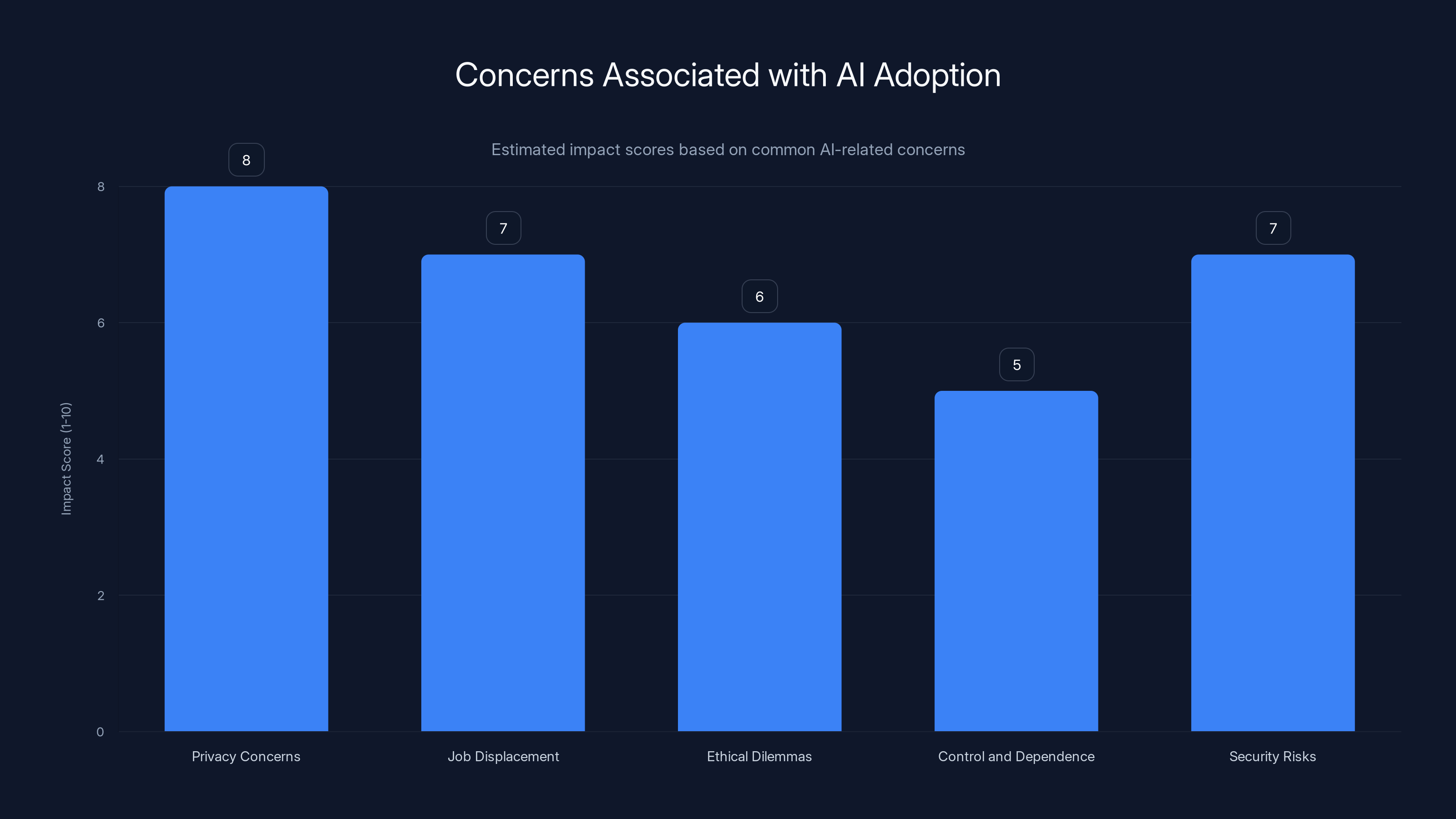

- Privacy Concerns: AI's data collection methods raise significant privacy issues.

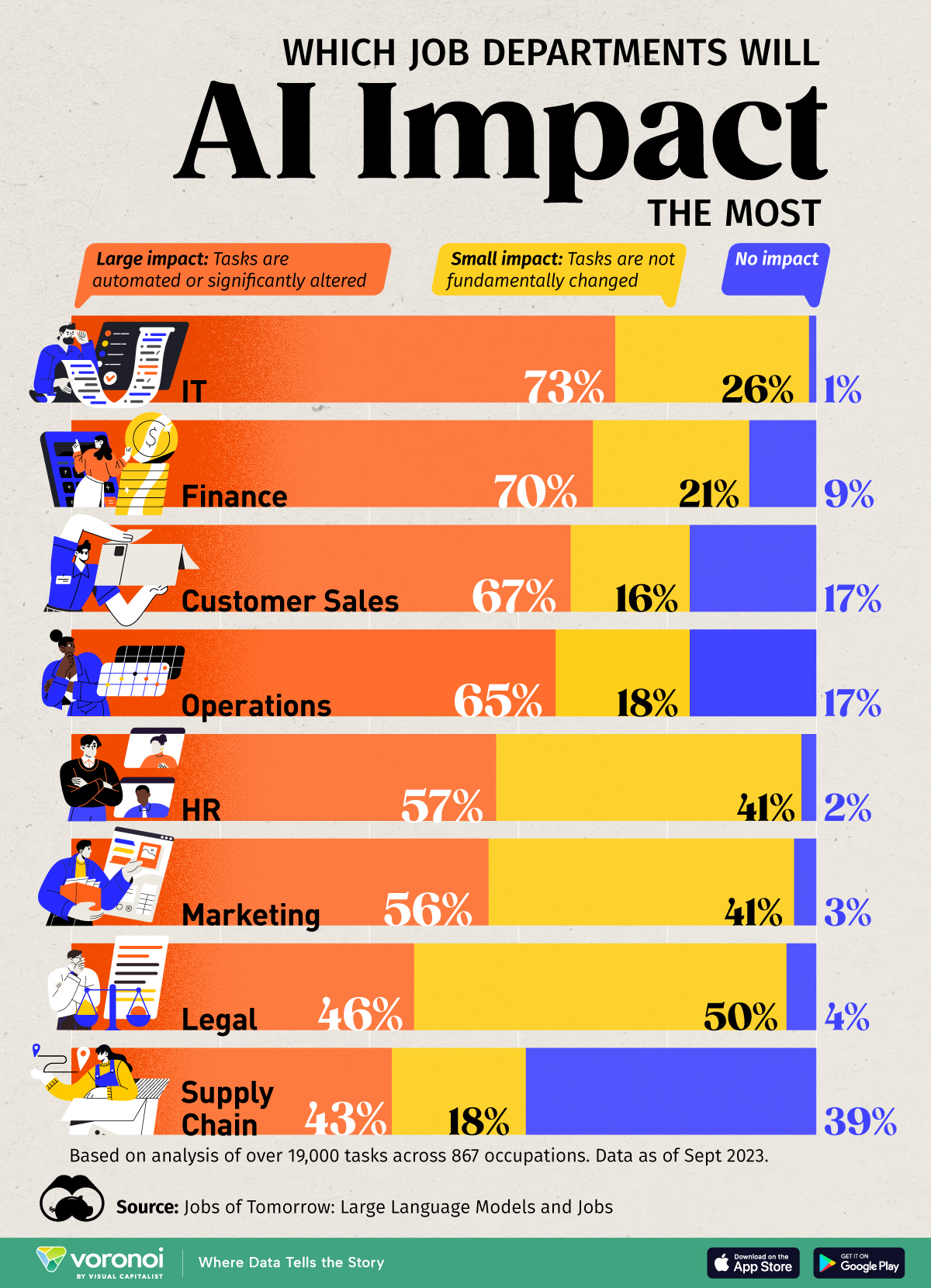

- Job Displacement: Automation threatens many traditional jobs, causing economic anxiety.

- Ethical Dilemmas: AI decisions can sometimes reflect biases, leading to unfair outcomes.

- Control and Dependence: Over-reliance on AI can lead to loss of human control.

- Security Risks: AI systems can be vulnerable to hacking and misuse.

Privacy concerns lead the list of AI-related fears, followed by job displacement and ethical dilemmas. Estimated data.

Understanding the Fear of AI

AI is not just a technological advancement; it's a societal game-changer. But with great power comes great anxiety. Here's a look at why AI is often met with suspicion and fear.

Privacy Concerns

One of the biggest issues people have with AI is the invasion of privacy. AI systems often rely on massive amounts of personal data to function effectively. Whether it's your browsing habits, purchase history, or even your location, AI technologies collect and analyze this data to provide personalized experiences. Global data protection authorities have flagged privacy issues in AI image generation as a significant concern.

Example: Consider smart home assistants like Amazon Alexa or Google Home. These devices listen for voice commands and store data on your preferences and routines. While convenient, this level of data collection raises alarms about how much these companies know about you.

Job Displacement and Economic Anxiety

AI's ability to automate tasks previously performed by humans cuts both ways. On the one hand, it boosts productivity and efficiency. On the other, it threatens jobs, especially in industries reliant on routine manual labor. A report from Kentucky shows how robots and AI are transforming manufacturing jobs.

Case Study: In manufacturing, AI-driven robots are taking over assembly line jobs. While this increases efficiency and reduces costs for companies, it also means fewer jobs for human workers. The Washington Post discusses the economic anxiety caused by AI's impact on the workforce.

Ethical Dilemmas and Bias

AI systems are only as fair as the data they're trained on. If that data reflects societal biases, the AI will too. This has led to numerous instances where AI has made biased decisions, particularly in areas like hiring and law enforcement. The Panther Newspaper highlights the hidden biases in AI systems that lead to ethical dilemmas.

Real-World Impact: Notable examples include AI hiring tools that inadvertently favored male candidates over female ones because of biased training data. This raises ethical questions about fairness and accountability in AI decisions.

Control and Dependence

As AI becomes more integrated into our daily lives, there's a growing concern about over-reliance on these systems. What happens when the AI fails, or worse, when it makes decisions that go against human intuition? Experts warn that over-reliance on AI may harm cognitive ability, highlighting the risks of dependence.

Scenario: Imagine a world where autonomous vehicles are the norm. While they promise reduced accidents, what happens when they need to make a split-second decision in a potential crash scenario? These ethical dilemmas highlight the risks of ceding too much control to AI.

Security Risks

AI systems aren't immune to hacking or misuse. The more we rely on these systems, the more attractive they become as targets for malicious actors. Deepfake technology, which uses AI to create convincing fake videos, poses significant security risks, from misinformation campaigns to identity theft.

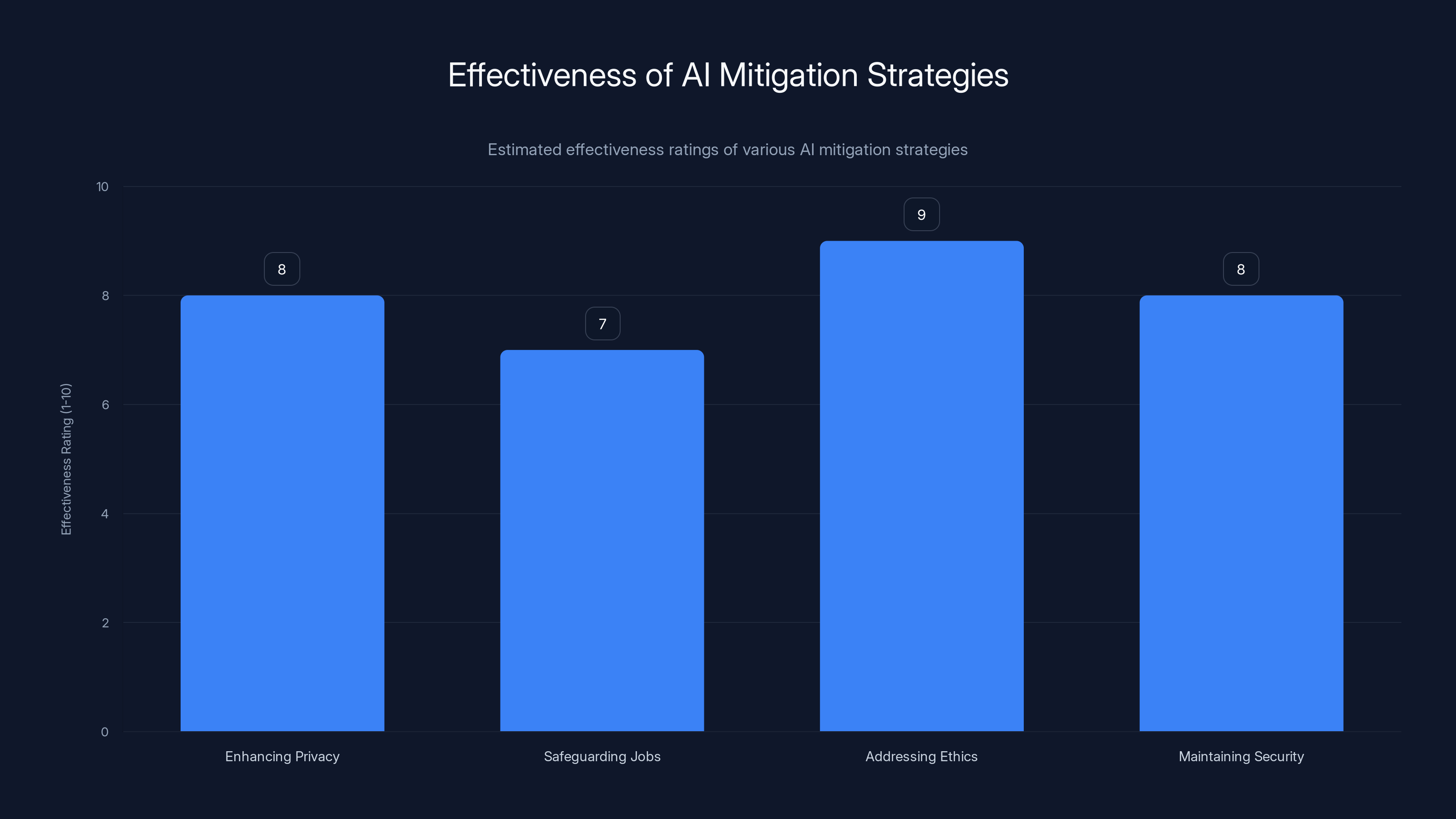

Addressing ethics rates as the most effective strategy, followed closely by maintaining security and enhancing privacy. Estimated data.

Practical Implementation Guides: Mitigating AI Concerns

While the concerns around AI are valid, there are ways to address them. Here's how stakeholders can implement practices to mitigate these issues.

Enhancing Privacy Protections

- Data Minimization: Collect only the data necessary for AI to function.

- Transparency: Clearly disclose what data is being collected and how it's used.

- User Control: Allow users to manage their data and opt out of data collection. BGR provides insights on protecting privacy in smart devices.
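As a rough illustration of the data minimization and user control points above, here is a minimal Python sketch. The field names, the `REQUIRED_FIELDS` allow-list, and the `minimize_record` helper are all hypothetical, chosen only for this example; a real system would derive its allow-list from what the feature genuinely needs.

```python
# Hypothetical allow-list: the only fields this illustrative feature needs.
REQUIRED_FIELDS = {"user_id", "language"}


def minimize_record(record: dict, opted_out: bool) -> dict:
    """Keep only allow-listed fields; keep nothing if the user opted out."""
    if opted_out:
        # User control: an opt-out means no data is retained at all.
        return {}
    # Data minimization: drop every field that is not explicitly required.
    return {key: value for key, value in record.items() if key in REQUIRED_FIELDS}


# Made-up example record containing more data than the feature needs.
raw_record = {
    "user_id": "u1",
    "language": "en",
    "location": "51.5,-0.1",
    "purchase_history": ["book"],
}

print(minimize_record(raw_record, opted_out=False))
print(minimize_record(raw_record, opted_out=True))
```

Using an allow-list rather than a block-list means any new field is excluded by default until someone decides it is necessary.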

Safeguarding Jobs

- Reskilling Programs: Offer training for workers to transition into new roles created by AI.

- Human-AI Collaboration: Develop systems that enhance human capabilities rather than replace them.

- Social Safety Nets: Implement policies to support displaced workers.

Addressing Ethical Concerns

- Bias Audits: Regularly audit AI systems for bias and discrimination.

- Diverse Training Data: Use diverse data sets to train AI, ensuring fairer outcomes.

- Ethical Guidelines: Establish clear ethical guidelines for AI development and deployment. The Huntsman Mental Health Institute contributes to ensuring ethical AI frameworks.
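One simple form a bias audit can take is comparing how often each group receives a favorable decision. The sketch below computes per-group selection rates and their ratio, then checks it against the "four-fifths" heuristic used in US employment guidance. The data is made up, the function names are illustrative, and a real audit would look at far more than this single metric.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Map each group to its share of favorable decisions.

    `decisions` is an iterable of (group, was_selected) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # No group was selected, so there is no disparity to measure.
    return min(rates.values()) / highest


# Made-up hiring outcomes: 6 of 10 men and 3 of 10 women selected.
decisions = (
    [("men", True)] * 6 + [("men", False)] * 4
    + [("women", True)] * 3 + [("women", False)] * 7
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
flagged = ratio < 0.8  # Four-fifths heuristic: below 0.8 warrants a closer look.
print(rates, ratio, flagged)
```

A flagged ratio is a prompt for investigation, not proof of discrimination; the threshold is a rule of thumb.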

Maintaining Control and Security

- Fail-Safe Mechanisms: Design AI systems with fail-safe mechanisms to regain control if needed.

- Robust Security Protocols: Implement strong security measures to protect AI systems from hacking.

- Regular Updates: Keep software updated to protect against vulnerabilities. PIRG discusses the implications of facial recognition technology in security.
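The fail-safe idea in the list above can be sketched as a wrapper that hands control back to a person whenever the AI component errors out or is not confident enough. Everything here is an assumption for illustration: the `CONFIDENCE_THRESHOLD` value, the `decide_with_failsafe` name, and the convention that a model returns an `(action, confidence)` pair.

```python
from typing import Callable, Tuple

# Hypothetical cutoff; a real system would tune this against observed outcomes.
CONFIDENCE_THRESHOLD = 0.9


def decide_with_failsafe(
    model: Callable[[dict], Tuple[str, float]], request: dict
) -> dict:
    """Use the model's decision only when it succeeds and is confident."""
    try:
        action, confidence = model(request)
    except Exception:
        # Fail safe: any model failure routes the case to a human.
        return {"action": "escalate_to_human", "reason": "model_error"}
    if confidence < CONFIDENCE_THRESHOLD:
        # Low confidence also returns control to a human reviewer.
        return {"action": "escalate_to_human", "reason": "low_confidence"}
    return {"action": action, "reason": "model_decision"}


# Stub models standing in for a real AI system.
def confident_model(request: dict) -> Tuple[str, float]:
    return ("approve", 0.97)


def unsure_model(request: dict) -> Tuple[str, float]:
    return ("approve", 0.55)


def broken_model(request: dict) -> Tuple[str, float]:
    raise RuntimeError("model unavailable")


for stub in (confident_model, unsure_model, broken_model):
    print(stub.__name__, decide_with_failsafe(stub, {"id": 1}))
```

The design choice is that the safe path is the default: the model's answer is used only when every check passes.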

Future Trends and Recommendations

As AI technology evolves, so too will the challenges and opportunities it presents. Here's what to watch for in the coming years.

Increased Regulation

Expect more regulatory frameworks aimed at ensuring AI is developed and used responsibly. These regulations will focus on transparency, accountability, and fairness. The Financial Times discusses the potential for increased AI regulation.

AI in New Sectors

AI will continue to penetrate new industries, from education to healthcare, offering both benefits and challenges in terms of privacy, ethics, and security. Oracle highlights AI's role in automation across various sectors.

Greater Public Awareness

As AI becomes more prevalent, public awareness and understanding of AI technologies will increase, leading to more informed discussions about their use and impact.

Collaborative AI

Future AI systems will likely focus on collaboration with humans, enhancing rather than replacing human decision-making. USNI explores the balance between resilience and fragility in AI-human collaboration.

Privacy concerns and security risks are perceived as the most significant issues in AI adoption. (Estimated data)

Conclusion

AI is here to stay, but so are the concerns it raises. By understanding these issues and implementing practical solutions, we can harness AI's potential while minimizing its risks. The key is balance: embracing innovation while safeguarding our privacy, jobs, and ethical standards.

FAQ

What is AI?

AI refers to artificial intelligence, which involves machines mimicking human cognitive functions such as learning and problem-solving.

Why do people fear AI?

People fear AI due to concerns about privacy, job displacement, ethical dilemmas, over-reliance, and security risks.

How can AI improve job security?

AI can support job security when employers and policymakers focus on human-AI collaboration, reskilling programs, and social safety nets, which help create new job opportunities and support displaced workers.

What are the ethical concerns of AI?

Ethical concerns include bias in AI decisions and a lack of transparency and accountability in AI systems, all of which can lead to unfair outcomes.

How can we ensure AI is used responsibly?

Implementing regulations, conducting bias audits, and developing ethical guidelines can help ensure responsible AI use.

What future trends can we expect in AI?

Expect increased regulation, AI in new sectors, greater public awareness, and collaborative AI systems that enhance human decision-making.

Key Takeaways

- AI raises significant privacy concerns due to extensive data collection.

- Automation by AI leads to job displacement and economic anxiety.

- Ethical dilemmas arise from biased AI decisions and lack of accountability.

- Over-reliance on AI can lead to loss of human control in critical situations.

- AI systems are vulnerable to security breaches and misuse.

- Reskilling and collaboration can mitigate job displacement by AI.

- Future AI trends include increased regulation and collaborative AI systems.

- Addressing AI concerns requires transparency, security, and ethical guidelines.

![Why People Really Hate AI: Unpacking the Concerns and Implications [2025]](https://tryrunable.com/blog/why-people-really-hate-ai-unpacking-the-concerns-and-implica/image-1-1774013702023.jpg)