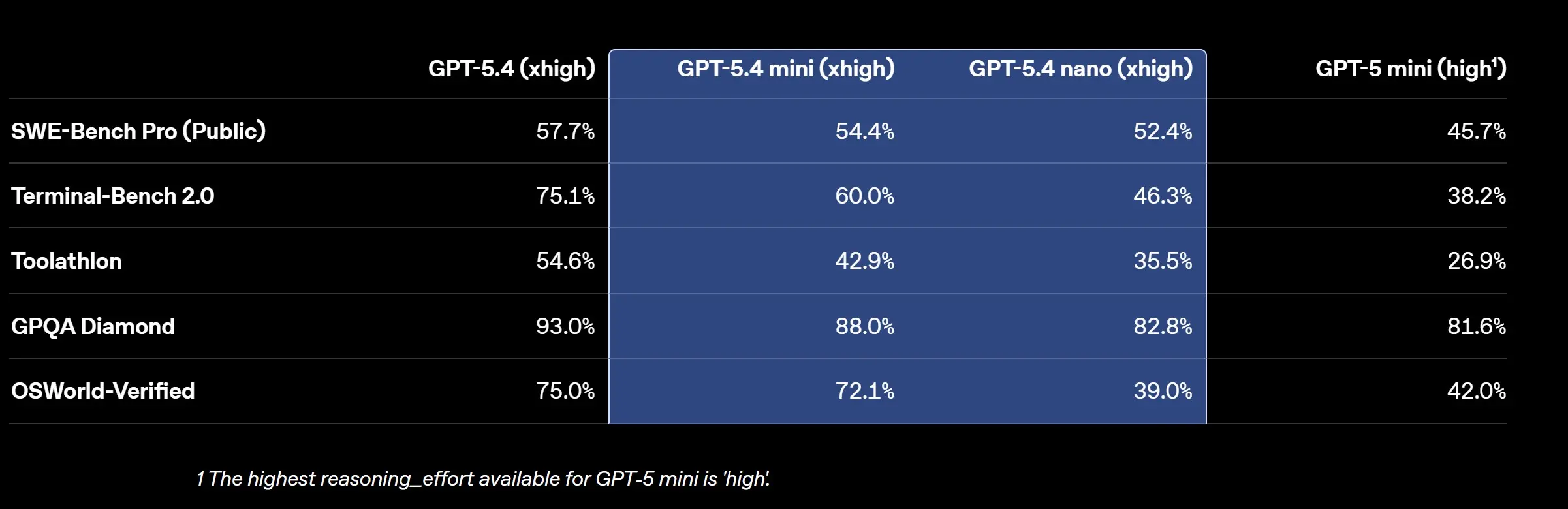

I Tested the New ChatGPT-5.4 Mini and Nano Models: Here's What I Found [2025]

Introduction

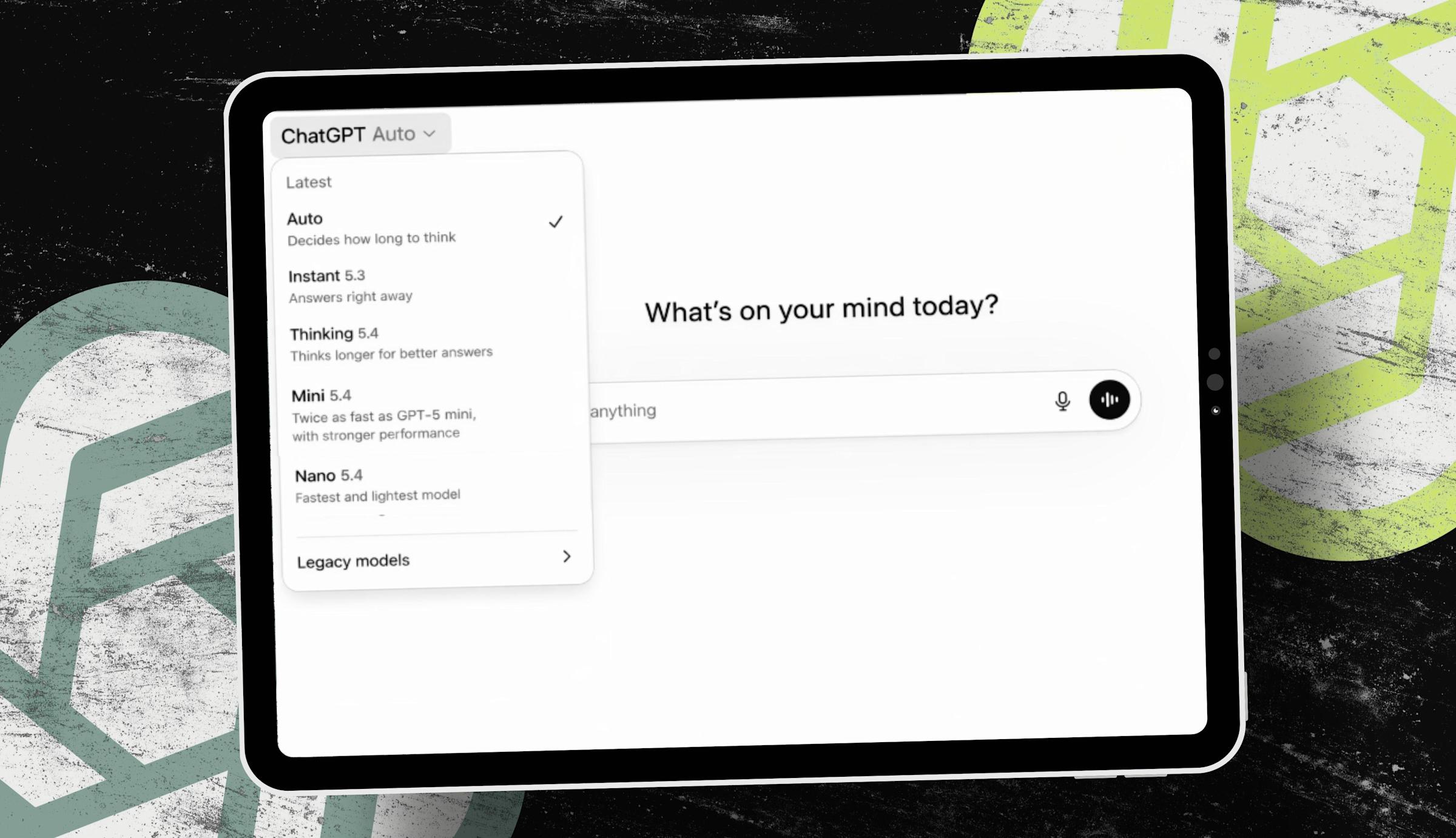

Last week, I spent time with the new ChatGPT-5.4 Mini and Nano models, and I was genuinely surprised by their capabilities. OpenAI has been pushing the boundaries of conversational AI, and these two models suggest that size doesn't dictate power.

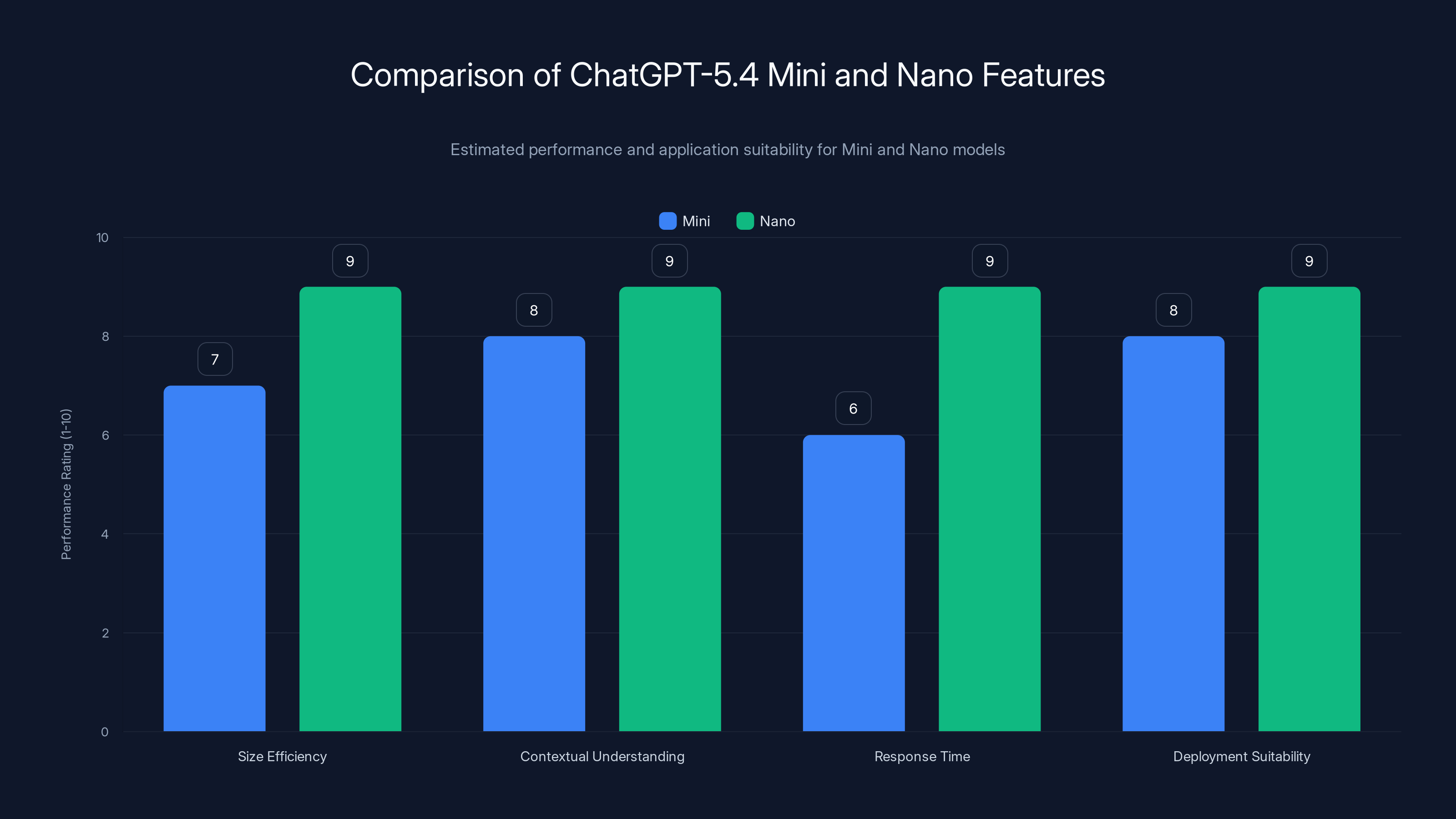

The Nano model excels in size efficiency and response time, making it highly suitable for resource-constrained devices. Estimated data.

TL;DR

- Mini and Nano models offer substantial computational efficiency.

- Real-time applications are now feasible with lower latency.

- Contextual understanding has improved significantly.

- Both models are better suited to mobile and embedded systems.

- The models provide flexible deployment options across various platforms.

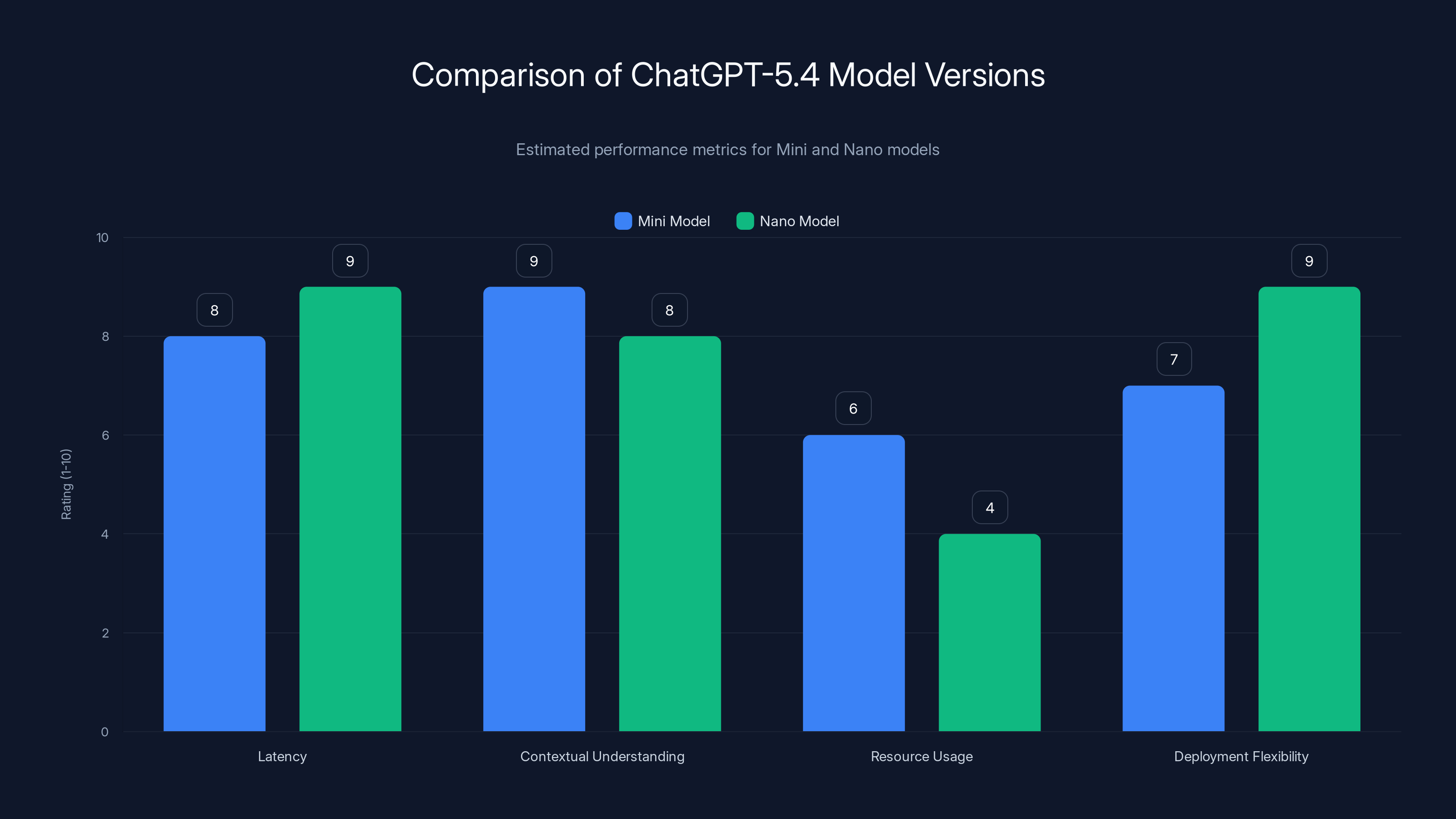

The Nano model excels in deployment flexibility and low resource usage, making it ideal for mobile applications. Estimated data.

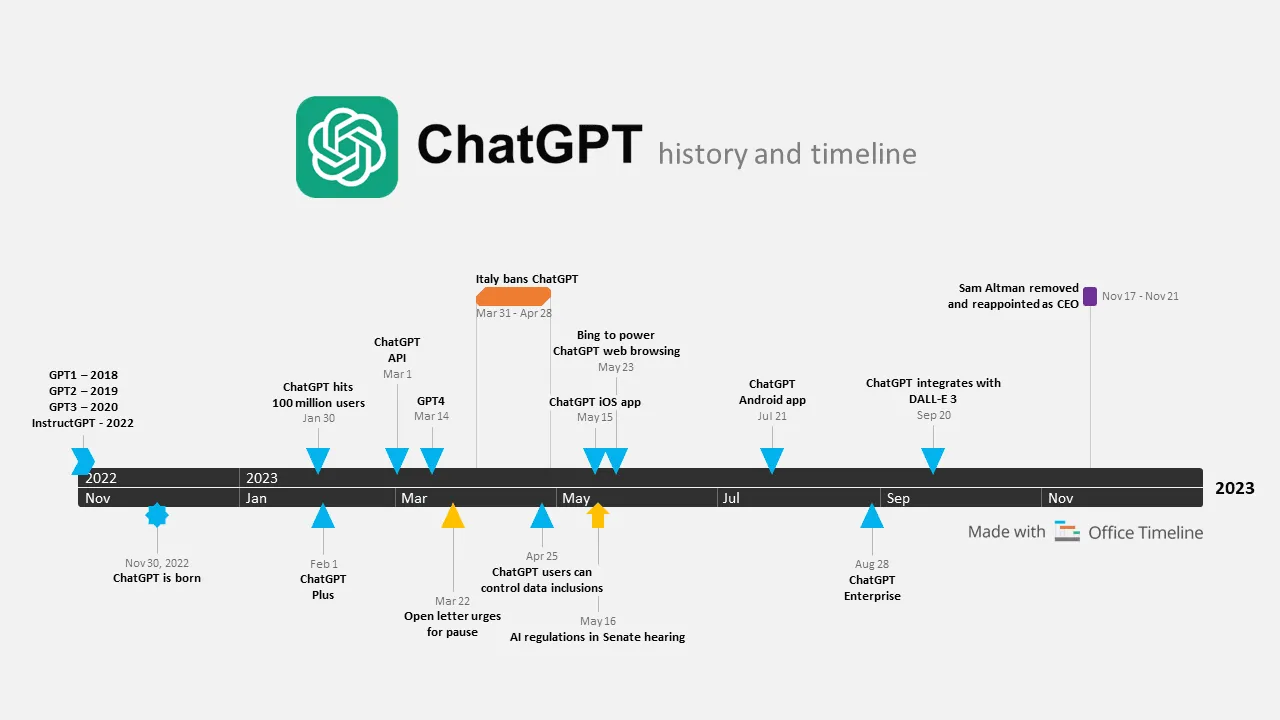

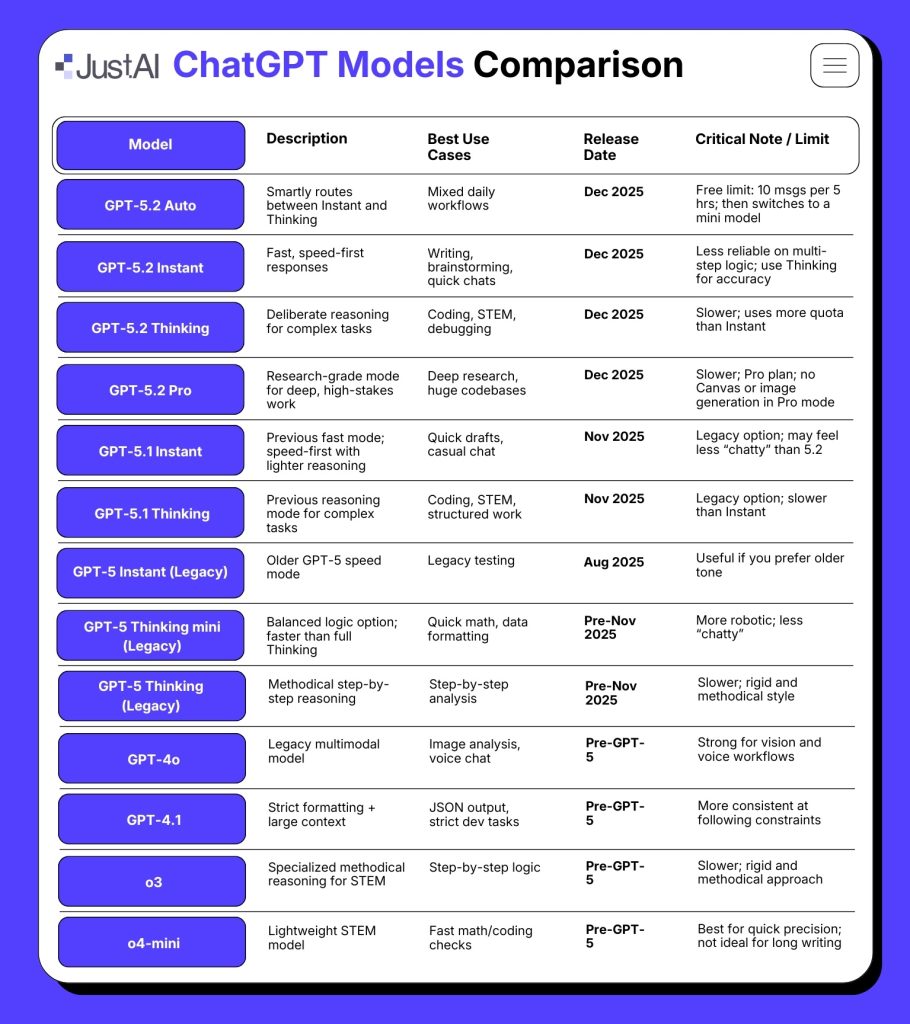

The Evolution of ChatGPT

ChatGPT has come a long way since its early iterations. The first models were limited by computational constraints and required significant resources to run effectively. With the introduction of the Mini and Nano versions, OpenAI has managed to shrink the model size without compromising performance.

What’s New in ChatGPT-5.4 Mini and Nano?

Size vs. Power

The standout feature of the new models is their size. The Mini and Nano versions are designed to be lightweight, making them perfect for devices with less processing power. Yet, they still retain the sophisticated language capabilities of their larger counterparts. This makes them ideal for deployment on mobile devices and IoT gadgets.

Enhanced Contextual Understanding

While the size has decreased, the ability to understand and generate contextually relevant responses has been significantly enhanced. This is largely due to improved training techniques that focus on context retention and reduced noise in data processing.

Real-Time Applications

Latency has always been a challenge for conversational AI. The Mini and Nano models have cut response times significantly, allowing for real-time applications such as live customer support and voice-assisted technologies. This opens up new possibilities for integrating AI into everyday appliances and services.
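If you want to check the latency claim against your own workload, a small timing harness helps. The sketch below is a minimal example, not a benchmark from my testing: `fake_model` is a hypothetical stand-in for whatever client call you actually use, and the nearest-rank percentile is just one reasonable way to summarize the numbers.

```python
import math
import time
from typing import Callable, List


def measure_latency_ms(call: Callable[[str], str], prompts: List[str]) -> List[float]:
    """Time each call and return per-request latency in milliseconds."""
    latencies = []
    for prompt in prompts:
        start = time.perf_counter()
        call(prompt)
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile; pct is expected in the range (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100.0 * len(ordered)))
    return ordered[rank - 1]


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call (assumption, not a real API)."""
    time.sleep(0.001)  # simulate a small amount of work
    return prompt.upper()


if __name__ == "__main__":
    samples = measure_latency_ms(fake_model, ["hello"] * 20)
    print(f"p50: {percentile(samples, 50):.2f} ms")
    print(f"p95: {percentile(samples, 95):.2f} ms")
```

Tail latency (p95 or p99) is usually the number that matters for live support or voice use cases, since a fast median can hide slow outliers.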

Implementation and Best Practices

Deploying these models involves careful planning. Here’s a quick guide:

- Choose the Right Platform: Determine where the model will be deployed. Mobile applications might benefit more from the Nano version, while web applications can leverage the Mini model.

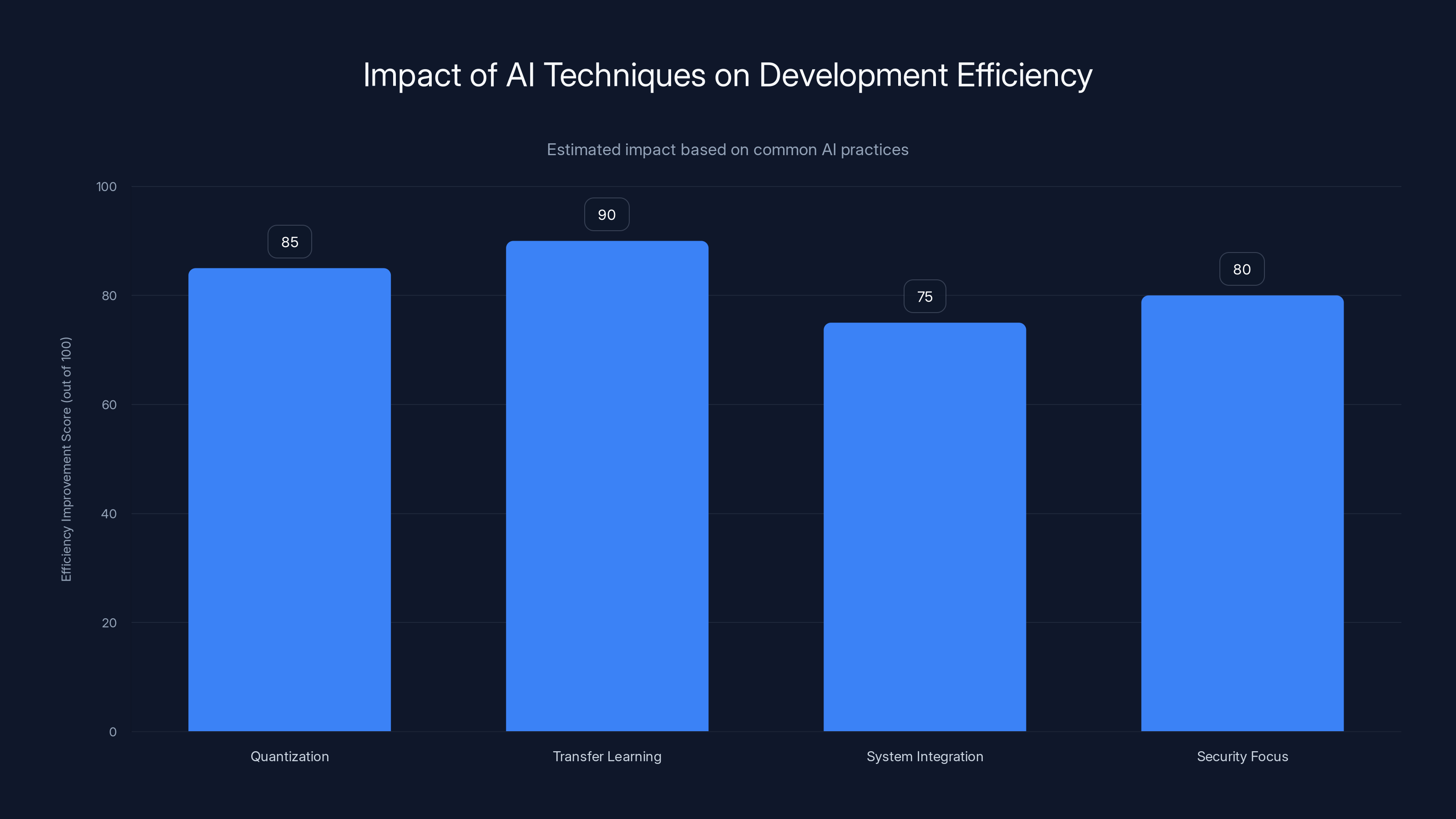

- Optimize for Efficiency: Use quantization techniques to reduce the size and improve speed without sacrificing too much accuracy.

- Monitor and Adapt: Implement monitoring tools to track performance and adapt the models based on real-world data.

Quantization and Transfer Learning are estimated to significantly improve development efficiency, with scores of 85 and 90 respectively. Estimated data.
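The "Monitor and Adapt" step from the guide can be sketched as a rolling window over recent requests. This is a minimal illustration under my own assumptions: the `RollingMonitor` class, its thresholds, and the alert messages are all hypothetical, and a production setup would more likely feed an existing metrics system.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, List, Tuple


@dataclass
class RollingMonitor:
    """Tracks latency and error rate over the most recent `window` requests."""

    window: int = 100
    max_avg_latency_ms: float = 500.0  # hypothetical threshold
    max_error_rate: float = 0.05       # hypothetical threshold
    samples: Deque[Tuple[float, bool]] = field(default_factory=deque, repr=False)

    def record(self, latency_ms: float, ok: bool) -> None:
        """Store one request outcome and drop the oldest if the window is full."""
        self.samples.append((latency_ms, ok))
        if len(self.samples) > self.window:
            self.samples.popleft()

    def avg_latency_ms(self) -> float:
        if not self.samples:
            return 0.0
        return sum(latency for latency, _ in self.samples) / len(self.samples)

    def error_rate(self) -> float:
        if not self.samples:
            return 0.0
        failures = sum(1 for _, ok in self.samples if not ok)
        return failures / len(self.samples)

    def alerts(self) -> List[str]:
        """Return a message for every threshold currently exceeded."""
        found = []
        if self.avg_latency_ms() > self.max_avg_latency_ms:
            found.append("average latency above threshold")
        if self.error_rate() > self.max_error_rate:
            found.append("error rate above threshold")
        return found


if __name__ == "__main__":
    monitor = RollingMonitor(window=3, max_avg_latency_ms=100.0)
    for latency, ok in [(50.0, True), (60.0, True), (700.0, False)]:
        monitor.record(latency, ok)
    print(monitor.alerts())
```

A fixed-size window keeps memory use constant, which matters if the monitor runs on the same constrained device as the model.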

Real-World Use Cases

Customer Support

Imagine a world where customer support is instant and precise. With the ChatGPT-5.4 models, companies can deploy chatbots that understand customer queries in real time and provide accurate responses, reducing the need for human intervention.

Embedded Systems

The compact size of the Nano model makes it perfect for embedded systems, such as smart home devices and automotive interfaces. These systems require fast, reliable processing with minimal computational overhead.

Education and Training

In educational environments, the Mini model can be used to create interactive learning tools that adapt to a student’s learning pace, providing personalized feedback and guidance.

Common Pitfalls and Solutions

Over-Reliance on Default Settings

Many developers make the mistake of relying on default settings. It’s crucial to customize the model’s parameters to fit the specific needs of the application.

Solution: Spend time fine-tuning hyperparameters and testing different configurations to find what works best for your use case.
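One simple way to move beyond defaults is a small grid search over the settings you care about. The sketch below assumes you have some scoring function for your use case; `toy_evaluate` is a made-up placeholder, and the parameter names `temperature` and `max_tokens` are illustrative examples of common generation settings rather than a confirmed list for these models.

```python
import itertools
from typing import Any, Callable, Dict, Iterator, List, Tuple

Config = Dict[str, Any]


def grid(options: Dict[str, List[Any]]) -> Iterator[Config]:
    """Yield every combination of the supplied option values."""
    keys = list(options)
    for combo in itertools.product(*(options[key] for key in keys)):
        yield dict(zip(keys, combo))


def best_config(
    options: Dict[str, List[Any]], evaluate: Callable[[Config], float]
) -> Tuple[Config, float]:
    """Score every configuration and return the highest-scoring one."""
    scored = [(cfg, evaluate(cfg)) for cfg in grid(options)]
    return max(scored, key=lambda pair: pair[1])


def toy_evaluate(cfg: Config) -> float:
    """Hypothetical scorer: replace with a real quality metric for your app."""
    return -abs(cfg["temperature"] - 0.3) - abs(cfg["max_tokens"] - 256) / 1000.0


if __name__ == "__main__":
    search_space = {"temperature": [0.0, 0.3, 0.7], "max_tokens": [128, 256]}
    config, score = best_config(search_space, toy_evaluate)
    print(config, score)
```

Exhaustive grids grow quickly, so this only makes sense for a handful of settings; beyond that, random search over the same space is a common alternative.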

Insufficient Testing

Deploying without thorough testing can lead to unexpected behavior in production environments.

Solution: Implement comprehensive testing protocols, including A/B testing and user feedback loops.
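For the A/B testing part, two pieces usually come up: assigning users to a variant consistently, and checking whether the difference in outcomes is more than noise. The following is a rough sketch under my own assumptions; the function names and the experiment label are hypothetical, and the two-proportion z-test is a standard statistical check rather than anything specific to these models.

```python
import hashlib
import math


def assign_variant(user_id: str, experiment: str = "model-ab") -> str:
    """Deterministically bucket a user into variant A or B by hashing their ID."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    return "A" if int(digest, 16) % 2 == 0 else "B"


def two_proportion_z(success_a: int, total_a: int, success_b: int, total_b: int) -> float:
    """z statistic for the difference between two success rates (B minus A)."""
    rate_a = success_a / total_a
    rate_b = success_b / total_b
    pooled = (success_a + success_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1.0 - pooled) * (1.0 / total_a + 1.0 / total_b))
    return (rate_b - rate_a) / std_err if std_err > 0 else 0.0


def two_sided_p_value(z: float) -> float:
    """Two-sided p-value for a z statistic under the standard normal distribution."""
    return math.erfc(abs(z) / math.sqrt(2.0))


if __name__ == "__main__":
    print(assign_variant("user-123"))
    # Hypothetical counts: resolved conversations out of total, per variant.
    z = two_proportion_z(40, 100, 60, 100)
    print(f"z = {z:.3f}, p = {two_sided_p_value(z):.4f}")
```

Hashing the user ID means the same person always sees the same variant, which keeps user feedback attributable to one model.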

Future Trends

As AI technology continues to evolve, we can expect further miniaturization and efficiency improvements in model design. OpenAI’s focus on optimizing for diverse platforms indicates a shift towards universal AI applications that can operate across both high-end and low-end devices seamlessly.

Recommendations

For Developers

- Explore Quantization: Quantization reduces model size and increases speed, usually at a small cost in accuracy, which makes it a good fit for both Mini and Nano implementations.

- Leverage Transfer Learning: Use pre-trained models as a base to adapt to specific industry needs, reducing development time.
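To make the quantization recommendation concrete, here is a minimal sketch of symmetric 8-bit quantization on a plain list of weights. It assumes you are working with weights you can actually access, which this article does not confirm for these models; the helper names are hypothetical, and real toolchains apply the same idea per tensor or per channel. Storing 8-bit integers instead of 32-bit floats cuts weight storage by roughly 4x.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the quantized integers."""
    return [q * scale for q in quantized]


def max_abs_error(original: List[float], restored: List[float]) -> float:
    """Largest absolute difference introduced by the round trip."""
    return max((abs(a - b) for a, b in zip(original, restored)), default=0.0)


if __name__ == "__main__":
    weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    print(q, scale)
    print("max error:", max_abs_error(weights, restored))
```

The round-trip error is bounded by half the scale, which is the "not too much accuracy" trade-off in numeric form: a wider weight range means a larger scale and a coarser approximation.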

For Businesses

- Integrate with Existing Systems: Evaluate how these models can complement existing systems to enhance efficiency and productivity.

- Focus on Security: As AI models become more embedded in daily operations, ensuring data privacy and security is paramount.
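On the security point, one common precaution is to strip obvious personal data from a prompt before it leaves your system. This is only a first-pass sketch: the `redact` helper and both regular expressions are my own illustrative assumptions, and they will miss many real-world formats.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_PATTERN = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    text = PHONE_PATTERN.sub("[PHONE]", text)
    return text


if __name__ == "__main__":
    message = "Contact jane@example.com or +1 (555) 123-4567."
    print(redact(message))
```

Redacting before the request is sent means the sensitive values never reach logs or third-party services, regardless of how the model itself is hosted.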

Conclusion

The ChatGPT-5.4 Mini and Nano models have redefined what’s possible with conversational AI. Their compact size, combined with powerful capabilities, allows for a wide range of applications, making real-time AI accessible in more contexts than ever before.

FAQ

What is ChatGPT-5.4?

ChatGPT-5.4 is the latest iteration of OpenAI's conversational AI models, featuring Mini and Nano versions that are optimized for efficiency and real-time applications.

How does it differ from previous versions?

The Mini and Nano models are smaller, designed for devices with limited computational power, yet they maintain high-quality language processing capabilities.

What are the benefits of using these models?

Benefits include reduced latency, improved contextual understanding, and flexibility in deployment across various platforms and devices.

Can these models be used in mobile applications?

Yes, especially the Nano model, which is optimized for mobile and embedded systems, offering efficient performance with minimal resource usage.

What should I consider when deploying these models?

Consider the target platform, optimize for efficiency, monitor performance, and ensure security and privacy in your implementation.

Key Takeaways

- Mini and Nano models offer substantial computational efficiency.

- Real-time applications are now feasible with lower latency.

- Contextual understanding has improved significantly.

- Both models are better suited to mobile and embedded systems.

- The models provide flexible deployment options across various platforms.

- Quantization techniques enhance model efficiency.

- Future trends indicate further miniaturization and efficiency improvements.

