Introduction

Nvidia has long been a leading name in GPU technology and AI, and its latest release, Nemotron 3 Super, continues that line. This 120-billion-parameter model merges three distinct architectures: transformers, state-space models, and a novel latent mixture-of-experts design. With this combination, Nvidia aims to surpass existing models such as GPT-OSS and Qwen, particularly in throughput. What sets the model apart is its goal of maintaining high performance without the heavy resource consumption typical of dense reasoning models.

TL;DR

- Nemotron 3 Super: Combines three architectures to enhance throughput over GPT-OSS and Qwen.

- 120 Billion Parameters: Offers a balance of performance and resource efficiency.

- Triple Architecture: Utilizes transformers, state-space models, and latent mixture-of-experts.

- Open Weights: Available on Hugging Face for commercial use.

- Agentic Workflows: Designed for complex, long-horizon tasks.

- Future Trends: Paves the way for more efficient AI models in enterprise applications.

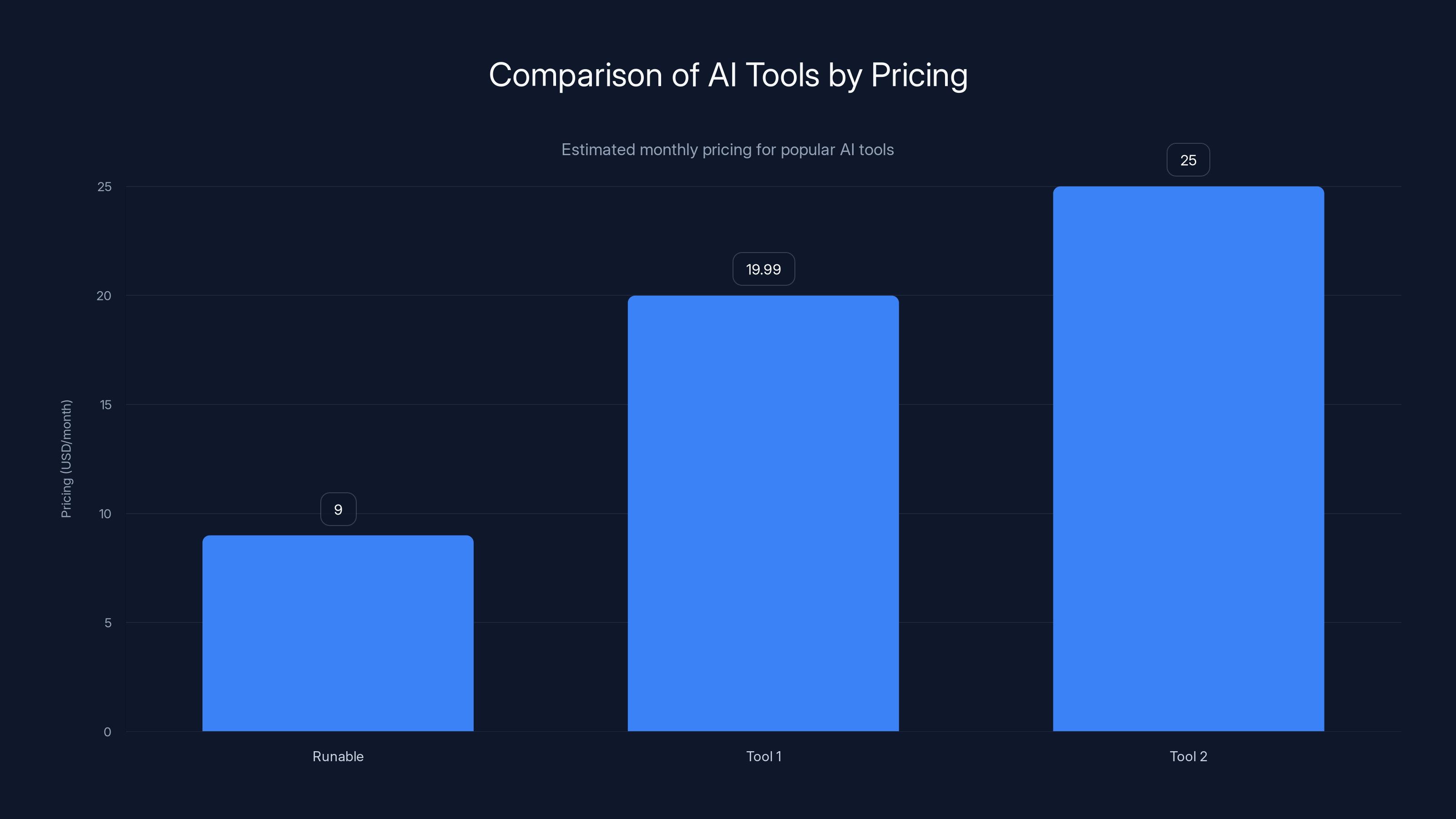

This chart compares the estimated monthly pricing for different AI tools, highlighting Runable as the most affordable option at $9/month. Estimated data for Tool 2 based on industry standards.

The Foundations of Nemotron 3 Super

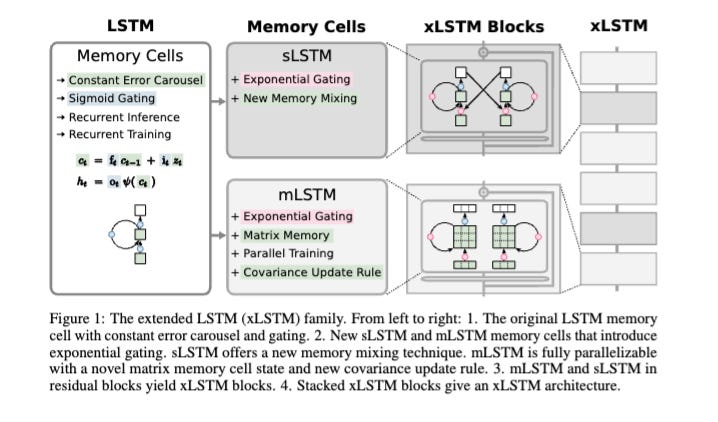

Understanding the Three Architectures

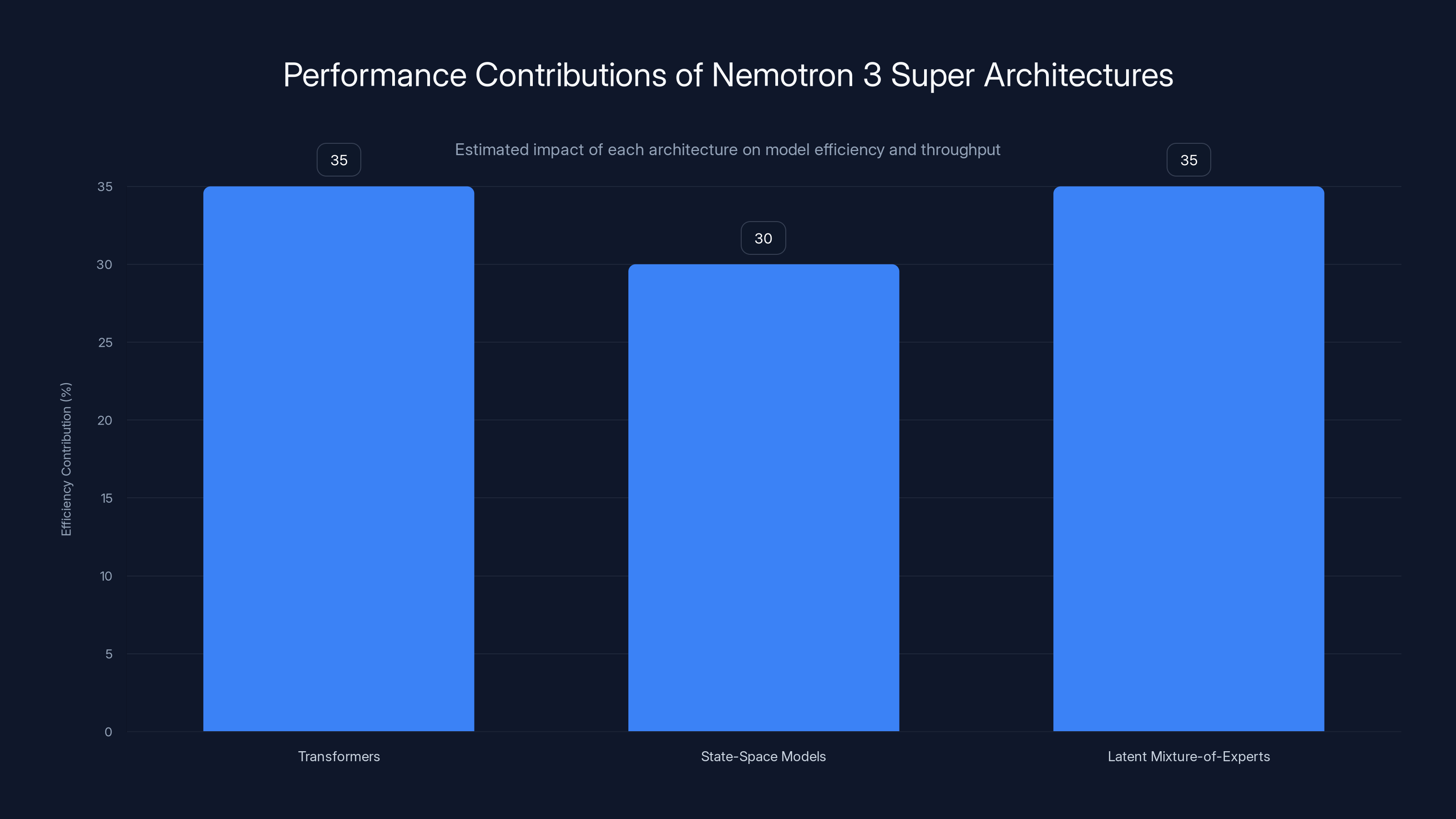

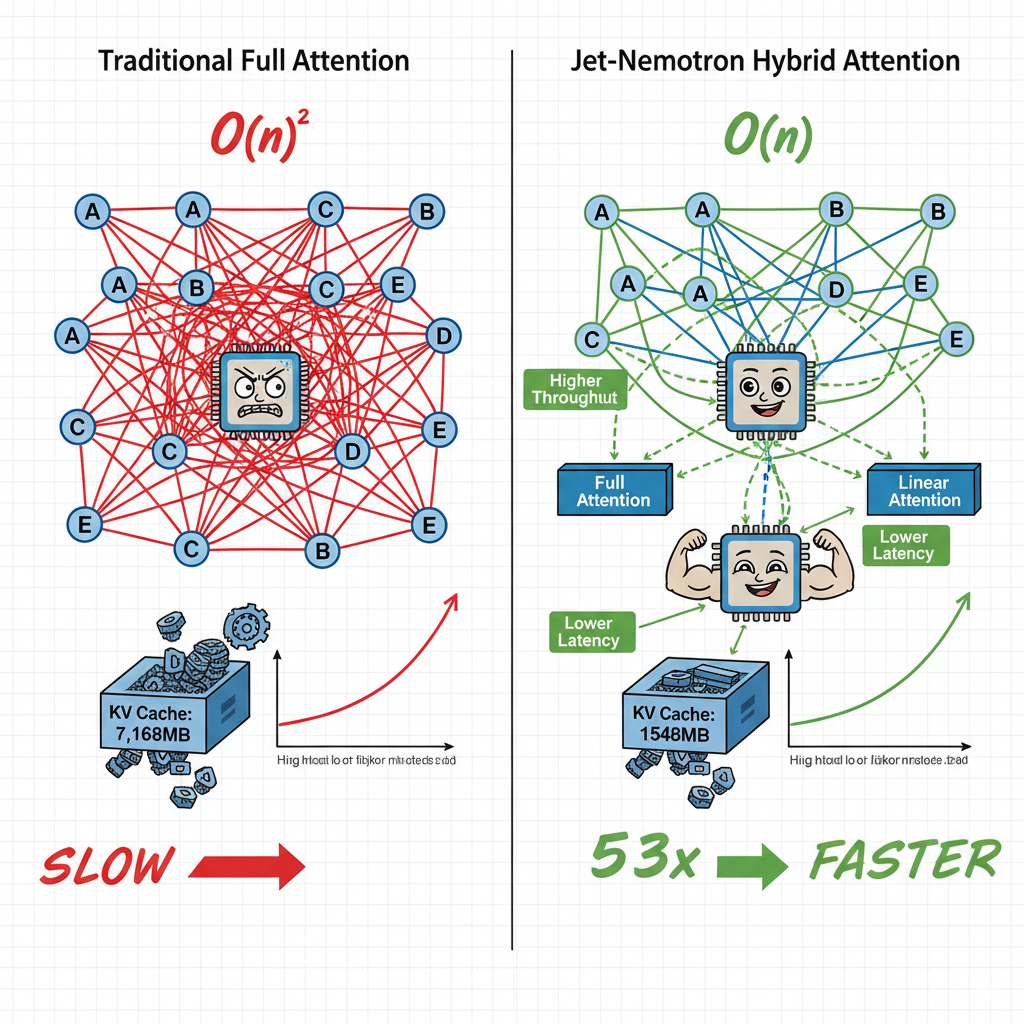

The innovation in Nemotron 3 Super lies in its architectural diversity. By combining transformers, state-space models, and a latent mixture-of-experts design, Nvidia creates a model that is both powerful and efficient.

- Transformers: Built around attention, transformers are the backbone of many modern AI models, including the well-known GPT series. They excel at language understanding and generation, which makes them well suited to predictive text and conversational AI.

- State-Space Models: These models process a sequence by updating an internal state step by step, which makes them effective for processes that evolve over time. They are particularly useful where system state must be estimated continuously, such as real-time tracking and forecasting.

- Latent Mixture-of-Experts Design: This novel approach lets parts of the model specialize, improving efficiency. By activating only the components needed for a given input, the model can perform complex operations without unnecessary computational overhead.
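To make the "activate only the necessary components" idea concrete, here is a minimal sketch of generic top-k expert routing in plain Python. It illustrates the general mixture-of-experts technique only; it is not Nvidia's latent mixture-of-experts design, and the toy experts, router scores, and function names are all hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k=2):
    """Pick the k highest-scoring experts and renormalize their weights.

    Returns (expert_index, weight) pairs; every other expert is skipped
    for this input, which is where the compute savings come from.
    """
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    mass = sum(probs[i] for i in top)
    return [(i, probs[i] / mass) for i in top]

def moe_forward(x, experts, router_scores, k=2):
    """Weighted sum of the selected experts' outputs for one input x."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_scores, k))

# Hypothetical toy experts: each one is just a scalar function.
experts = [lambda x: x + 1.0, lambda x: 2.0 * x, lambda x: -x, lambda x: x * x]
scores = [0.1, 2.0, -1.0, 1.5]  # made-up router scores for one input

selected = route_top_k(scores, k=2)
output = moe_forward(3.0, experts, scores, k=2)
```

With these made-up scores, only two of the four experts run. In a real model the router scores come from a learned layer and the experts are neural sub-networks rather than scalar functions.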

Why Combine These Architectures?

The primary goal of merging these architectures is to maximize throughput, a critical factor for enterprise applications where speed and efficiency are paramount. Traditional dense models often suffer from diminishing returns when scaled up, because every parameter is used for every token and resource demands grow accordingly. By contrast, the triple hybrid structure of Nemotron 3 Super leverages the strengths of each architecture to maintain high performance without excessive computational costs.
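One commonly cited reason state-space layers help throughput is that they carry a fixed-size state forward instead of revisiting every earlier token. The sketch below shows a scalar linear state-space recurrence under that assumption; the coefficients `a`, `b`, and `c` are hypothetical, and this is not a description of Nemotron 3 Super's actual layers.

```python
def ssm_scan(inputs, a=0.9, b=0.1, c=1.0):
    """Run a scalar linear state-space recurrence over a sequence.

    h_t = a * h_{t-1} + b * x_t   (state update)
    y_t = c * h_t                 (readout)
    """
    h = 0.0
    outputs = []
    for x in inputs:
        h = a * h + b * x      # constant work per token, regardless of history length
        outputs.append(c * h)
    return outputs

ys = ssm_scan([1.0, 1.0, 1.0])
```

Each step touches only the current input and the previous state, so total work grows linearly with sequence length. Attention, by comparison, relates each token to all earlier tokens.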

The Nemotron 3 Super's architecture combines transformers, state-space models, and latent mixture-of-experts, each contributing significantly to overall efficiency and throughput. Estimated data.

Practical Implementation of Nemotron 3 Super

Getting Started with Nemotron 3 Super

To harness the power of Nemotron 3 Super, developers can access the model's open weights on Hugging Face. This openness facilitates experimentation and integration into existing workflows, enabling rapid deployment of AI solutions.

- Installation: Begin by making sure your environment has an up-to-date deep learning framework such as PyTorch or TensorFlow, along with the Hugging Face Transformers library. The command below installs PyTorch and Transformers.

```bash
pip install torch transformers
```

- Loading the Model: Use the Hugging Face Transformers library to load Nemotron 3 Super.

```python
from transformers import AutoModel

# Model ID as written in this article; check the model card on Hugging Face for the exact name.
model = AutoModel.from_pretrained('nvidia/nemotron-3-super')
```

- Fine-Tuning for Specific Tasks: Given its architecture, Nemotron 3 Super is highly adaptable. Developers can fine-tune the model on specific datasets to optimize for particular tasks, such as natural language processing (NLP), predictive analytics, or real-time decision-making.

Use Cases in Industry

1. Software Engineering: Nemotron 3 Super can automate code generation, bug detection, and even refactoring, significantly reducing development time.

2. Cybersecurity: The model's state-space capabilities enable real-time anomaly detection and threat prediction, enhancing security measures.

3. Customer Service: With its advanced language processing, Nemotron 3 Super can power chatbots that understand and respond to customer queries with human-like accuracy.

Common Pitfalls and Solutions

Pitfall 1: Resource Management

Challenge: Large models like Nemotron 3 Super can be resource-intensive. Without proper management, they may overwhelm computational resources.

Solution: Implement dynamic resource allocation strategies. Utilizing cloud-based GPU services can help manage peaks in demand without requiring a permanent infrastructure investment.
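Before choosing hardware, it helps to estimate how much memory the weights alone will need. The sketch below is back-of-the-envelope arithmetic using the 120-billion-parameter figure from this article; the precision options and the helper name `weight_memory_gb` are illustrative assumptions, and the estimate leaves out activations, caches, and framework overhead.

```python
def weight_memory_gb(num_params, bytes_per_param):
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9

PARAMS = 120e9  # parameter count stated in this article

# Common storage precisions and their size per parameter in bytes.
precisions = [("fp32", 4), ("bf16/fp16", 2), ("int8", 1), ("4-bit", 0.5)]

for label, nbytes in precisions:
    print(f"{label:>10}: {weight_memory_gb(PARAMS, nbytes):.0f} GB")
```

At 16-bit precision this comes to about 240 GB for the weights, which is why multi-GPU or cloud deployment is the usual starting point. Note that a mixture-of-experts model still needs all expert weights available even though only some are active for each input.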

Pitfall 2: Overfitting

Challenge: As with any robust model, there is a risk of overfitting, especially when fine-tuning on small datasets.

Solution: Employ data augmentation techniques and cross-validation to ensure the model generalizes well across different datasets.
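As a rough illustration of the cross-validation step, the following sketch produces k-fold train and validation index splits in plain Python. The function name and defaults are hypothetical; in practice a library utility such as scikit-learn's `KFold` does the same job.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, val_indices) pairs for k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)          # shuffle once, reproducibly
    folds = [indices[i::k] for i in range(k)]     # k roughly equal folds
    for i, val in enumerate(folds):
        # Training set is every fold except the one held out for validation.
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, val

splits = list(k_fold_indices(10, k=5))
```

Each sample lands in exactly one validation fold, so a fine-tuned model is always scored on data it did not train on. A large gap between training and validation scores across folds is a sign of overfitting.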

Pitfall 3: Integration Complexities

Challenge: Integrating a sophisticated AI model into existing systems can be daunting.

Solution: Leverage modular deployment frameworks and APIs. These tools can simplify the integration process, allowing for seamless interaction between Nemotron 3 Super and existing software stacks.
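One way to keep integration modular is to have application code depend on a small interface rather than on the model directly. The sketch below assumes a hypothetical `TextGenerator` interface and a stub backend; none of these names come from Nvidia or Hugging Face, and a real backend would wrap the loaded model or a hosted API.

```python
from typing import Protocol

class TextGenerator(Protocol):
    """Minimal interface the rest of the application depends on."""
    def generate(self, prompt: str) -> str: ...

class EchoBackend:
    """Stand-in backend for local testing; it does not call any model."""
    def generate(self, prompt: str) -> str:
        return f"[stub reply to: {prompt}]"

def answer_ticket(backend: TextGenerator, ticket_text: str) -> str:
    """Application logic that works with any backend satisfying TextGenerator."""
    prompt = f"Customer message: {ticket_text}\nReply:"
    return backend.generate(prompt)

reply = answer_ticket(EchoBackend(), "My order is late.")
```

Swapping the stub for a model-backed class then requires no changes to `answer_ticket`, which keeps the existing software stack insulated from model-specific details.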

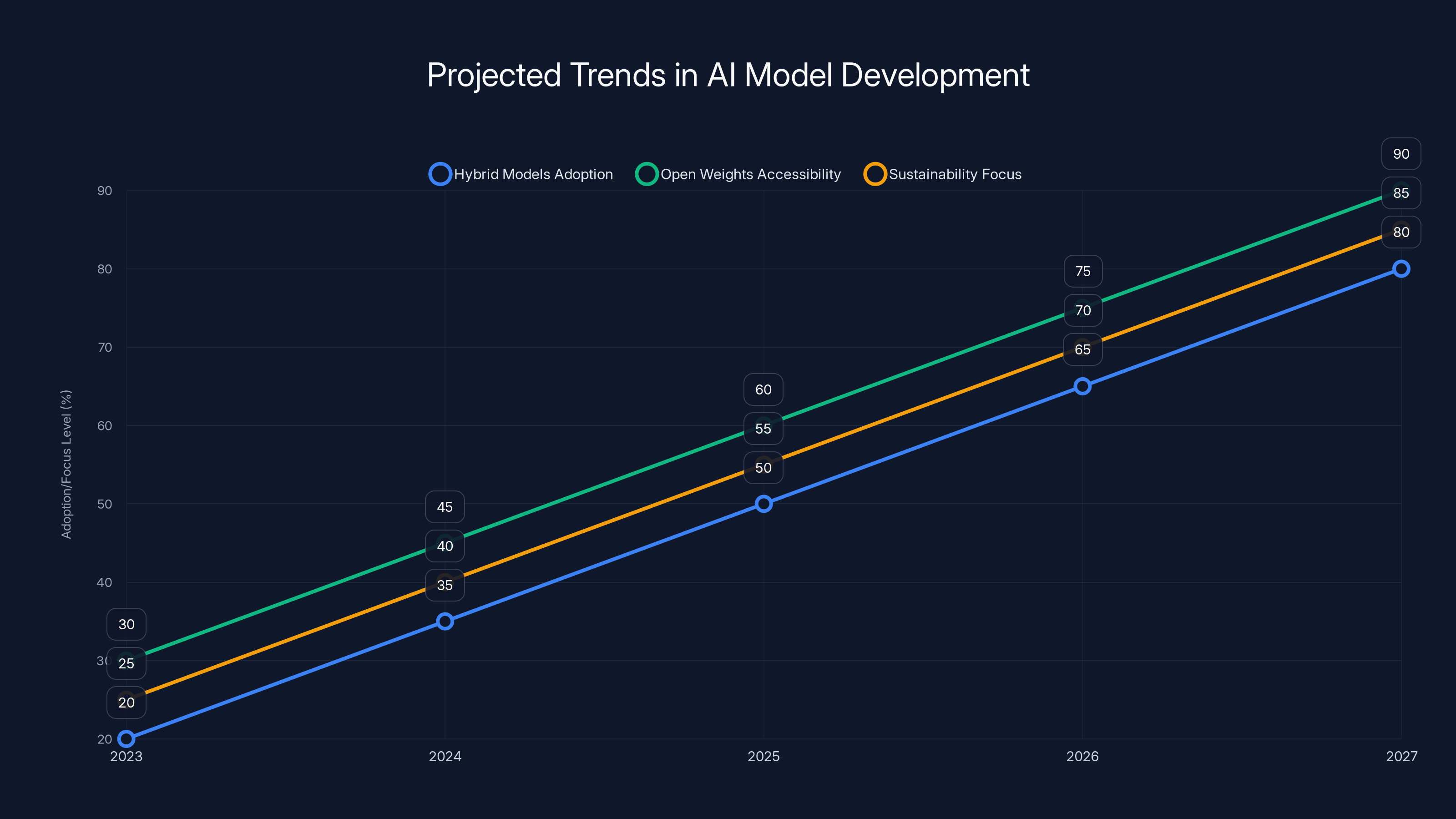

Projected trends indicate a significant increase in the adoption of hybrid models, open weights accessibility, and sustainability focus in AI development over the next five years. Estimated data.

Future Trends and Recommendations

Trends in AI Model Development

1. Hybrid Models: The success of Nemotron 3 Super indicates a growing trend towards hybrid models that combine multiple architectures for enhanced functionality and efficiency.

2. Open Weights Accessibility: As seen with Nemotron 3 Super, the movement towards open weights is likely to continue, promoting transparency and innovation in AI development.

3. Sustainability in AI: With increasing awareness of AI's environmental impact, models like Nemotron 3 Super that offer high throughput with reduced resource consumption will become more desirable.

Recommendations for Developers

1. Continuous Learning: Stay updated with the latest developments in AI architectures and frameworks. Participation in workshops and online courses can keep your skills sharp.

2. Experimentation: Don't hesitate to experiment with different configurations and hyperparameters. The flexibility of Nemotron 3 Super allows for a wide range of applications, so exploring various setups can uncover new potential uses.

3. Collaboration: Engage with the broader AI community. Platforms like Hugging Face provide opportunities to connect with other developers and share insights and best practices.

Conclusion

Nvidia's Nemotron 3 Super marks a significant advancement in AI model design. By integrating multiple architectures, it not only surpasses leading models like GPT-OSS and Qwen in throughput but also sets a new standard for efficiency and adaptability. As industries continue to embrace AI, models like Nemotron 3 Super will be crucial in driving innovation while maintaining resource-conscious operations.

FAQ

What is Nemotron 3 Super?

Nemotron 3 Super is Nvidia's latest AI model that combines transformers, state-space models, and a latent mixture-of-experts design to achieve high throughput and efficiency.

How does Nemotron 3 Super work?

It works by leveraging the strengths of its three architectures to perform complex tasks efficiently, making it suitable for enterprise applications.

What are the benefits of using Nemotron 3 Super?

Benefits include high throughput, reduced computational costs, and adaptability to various tasks, from NLP to cybersecurity.

How can I implement Nemotron 3 Super in my workflow?

Start by accessing its open weights on Hugging Face, then integrate the model into your existing systems using frameworks like PyTorch or TensorFlow.

What are the common challenges when using large AI models?

Challenges include resource management, risk of overfitting, and integration complexities, but they can be mitigated with best practices and strategic planning.

What future trends are likely to impact AI model development?

Expect more hybrid models, increased openness with model weights, and a focus on sustainability in AI development.

Can Nemotron 3 Super be used for real-time applications?

Yes, its state-space capabilities make it highly effective for real-time tracking and decision-making tasks.

Is Nemotron 3 Super suitable for small businesses?

While designed for enterprise tasks, its open weights and efficient architecture make it accessible to smaller businesses looking to leverage advanced AI capabilities.

Key Takeaways

- Nemotron 3 Super combines transformers, state-space models, and a latent mixture-of-experts design.

- Outperforms GPT-OSS and Qwen in throughput.

- Open weights available on Hugging Face for commercial use.

- Ideal for complex, long-horizon tasks in enterprise settings.

- Future trends include more hybrid models and open weights accessibility.

- Sustainability becomes a key focus in AI model development.
