Lessons from Fighter Pilots for Making Effective Enterprise AI Decisions [2025]

When it comes to split-second decision-making, few professions are as demanding as that of a fighter pilot. The intense pressure, high stakes, and rapid pace of air combat create a unique environment in which pilots must excel. Interestingly, many of the decision-making principles used by fighter pilots can be applied to enterprise AI decisions, offering a fresh perspective on accountability, performance, and strategic implementation.

TL;DR

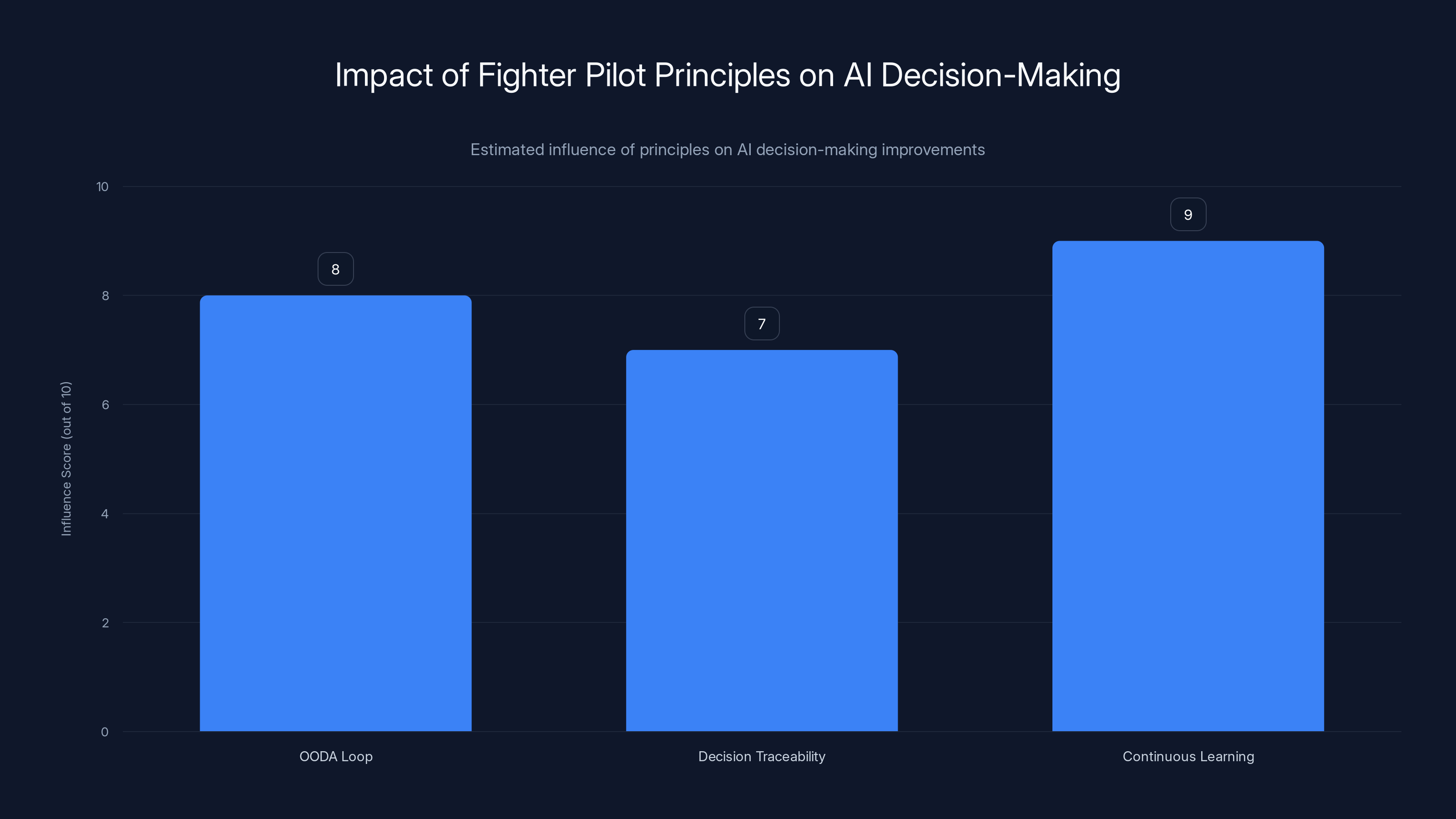

- Fighter pilots excel in high-pressure decision-making by leveraging OODA loops, which can enhance AI processes, as discussed in TechRadar's analysis.

- Human judgment remains crucial in AI systems to ensure accountability and ethical considerations, as highlighted by The Hastings Center.

- Implementing decision traceability helps in understanding AI outcomes and improving transparency, according to PwC's insights.

- Continuous learning and adaptation are necessary for both pilots and AI to handle evolving challenges, a point emphasized by Andreessen Horowitz.

- Bottom Line: Applying fighter pilot strategies can significantly improve enterprise AI decision-making.

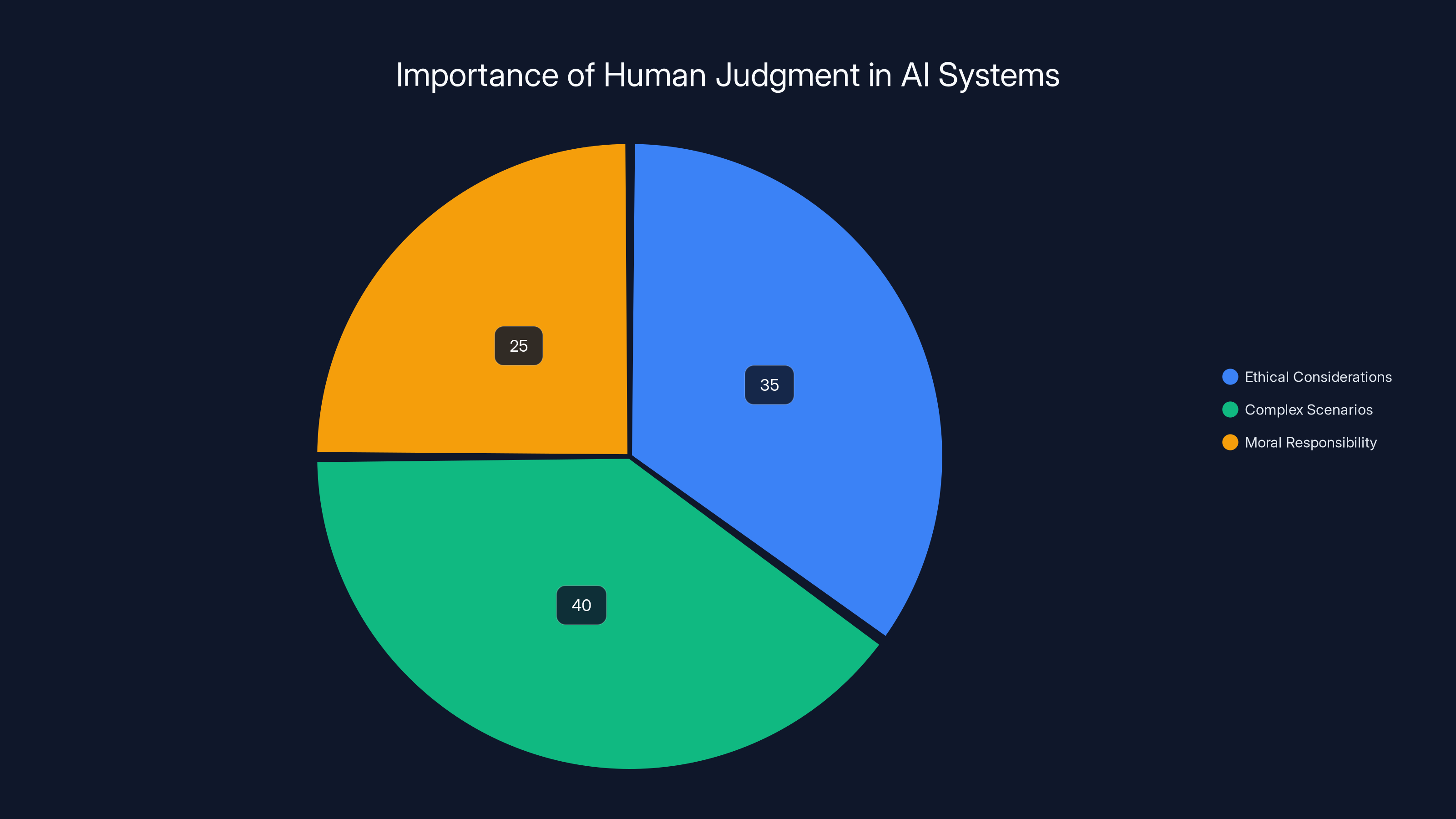

Human judgment is vital in AI for ethical considerations (35%), handling complex scenarios (40%), and moral responsibility (25%). Estimated data.

The OODA Loop: A Framework for Decision-Making

The OODA loop (Observe, Orient, Decide, Act) is a decision-making framework developed by U.S. Air Force Colonel John Boyd, a fighter pilot and military strategist. It treats decision-making as a repeating cycle and emphasizes speed and adaptability, both of which matter in air combat and in enterprise AI.

Observe

In the first phase, the focus is on collecting data. In air combat, this means observing the enemy's position, speed, and maneuvers. In enterprise AI, it involves gathering data from various sources to understand the current situation.

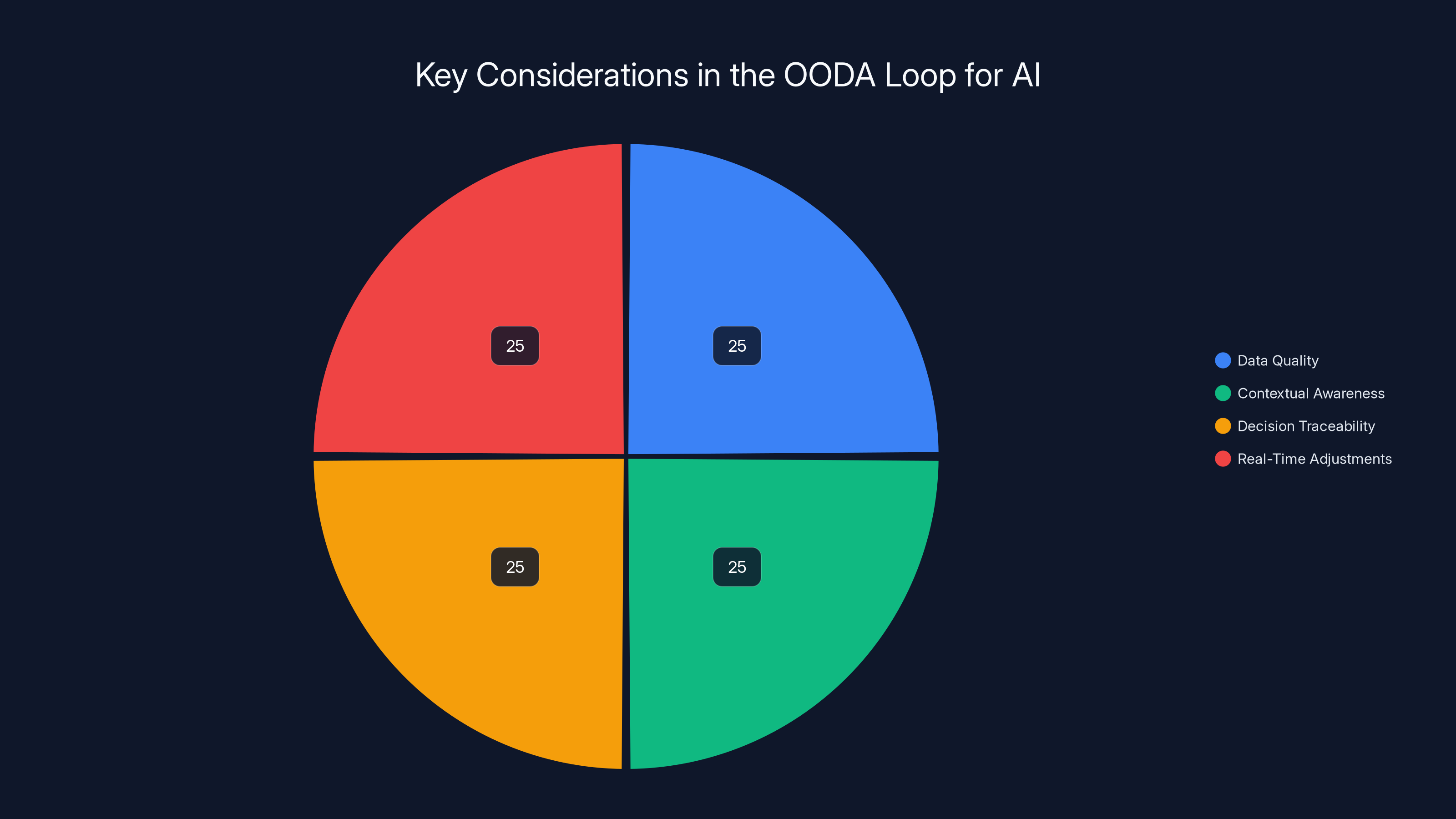

Key Considerations for AI:

- Data Quality: Ensure the data is accurate, relevant, and timely, as noted in Microsoft's data insights.

- Data Sources: Utilize a mix of structured and unstructured data for a complete view, as suggested by Fast Company.

Orient

Orientation involves processing information to understand the context. Pilots use their training and situational awareness to interpret observations. Similarly, AI systems must process data to gain insights.

Key Considerations for AI:

- Contextual Awareness: AI must understand the context to make relevant decisions, as discussed in BioSpace's analysis.

- Bias Mitigation: Implement strategies to reduce algorithmic bias during data interpretation, a concern highlighted by UNU's research.

Decide

This phase involves choosing the best course of action based on the information at hand. For pilots, this means selecting tactics to outmaneuver the enemy. In AI, it's about choosing the right algorithm or model to address the problem.

Key Considerations for AI:

- Decision Traceability: Maintain a record of decision paths to ensure accountability, as emphasized by PwC.

- Human Oversight: Involve human decision-makers in critical AI decisions to apply ethical considerations, as noted by The Hastings Center.

Act

Finally, action is taken based on the decision. In air combat, this is executing a maneuver. In AI, it involves deploying the chosen model or making system adjustments.

Key Considerations for AI:

- Real-Time Adjustments: Ensure AI systems can adapt to new data and changing circumstances, as outlined by Nature's study.

- Feedback Loops: Implement mechanisms for continual learning and improvement, as recommended by Andreessen Horowitz.
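The four phases above can be wired together as a single repeating cycle. The following is a minimal sketch, not a reference implementation: the `OODALoop` class, the 0.05 error-rate threshold, and the action names `keep_model` and `rollback_model` are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class OODALoop:
    """Hypothetical helper that runs one Observe-Orient-Decide-Act cycle per step."""

    observe: Callable[[], dict]      # collect raw data
    orient: Callable[[dict], dict]   # add context to the raw data
    decide: Callable[[dict], str]    # pick an action from the context
    act: Callable[[str], None]       # carry out the action
    history: List[dict] = field(default_factory=list)

    def step(self) -> str:
        raw = self.observe()
        context = self.orient(raw)
        action = self.decide(context)
        self.act(action)
        # Keep every cycle so later cycles (and auditors) can review earlier ones.
        self.history.append(
            {"observation": raw, "context": context, "action": action}
        )
        return action


# Example wiring. The readings, threshold, and action names are assumptions.
readings = iter([0.02, 0.12])  # simulated model error rates from monitoring
executed = []                  # stands in for a real deployment hook

loop = OODALoop(
    observe=lambda: {"error_rate": next(readings)},
    orient=lambda raw: {**raw, "degraded": raw["error_rate"] > 0.05},
    decide=lambda ctx: "rollback_model" if ctx["degraded"] else "keep_model",
    act=executed.append,
)

first = loop.step()
second = loop.step()
```

Keeping each phase as a separate callable means any one of them can be swapped, for example replacing `decide` with a human approval step, without touching the rest of the cycle.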

The OODA loop in AI decision-making places equal emphasis on data quality, contextual awareness, decision traceability, and real-time adjustments. (Estimated data)

Decision Traceability in AI Systems

In enterprise AI, decision traceability is crucial for accountability and transparency. It involves maintaining a clear record of how decisions are made, which is essential for audits, compliance, and understanding AI behavior.

Benefits of Decision Traceability

- Improved Accountability: Organizations can track decision paths and hold systems accountable for outcomes, as discussed by PwC.

- Enhanced Transparency: Stakeholders gain insight into AI processes, building trust in the system, a point emphasized by Fast Company.

- Better Compliance: Helps meet regulatory requirements by documenting AI decision-making processes, as noted by The Hastings Center.

Implementing Decision Traceability

To implement decision traceability effectively, organizations can:

- Use Version Control Systems: Track changes in AI models and datasets.

- Maintain Decision Logs: Record decision-making processes and outcomes.

- Incorporate Explainable AI: Use techniques that make AI decisions understandable to humans, as recommended by Nature.
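As one way to picture a decision log, the sketch below builds a structured record for each AI decision and serializes it as one JSON line. The function names, field names, and the example model version are assumptions made for illustration; the article does not prescribe a schema.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_decision_record(model_version, features, decision, explanation):
    """Build one audit record for a single AI decision (hypothetical schema)."""
    # Sorting keys makes the hash independent of dictionary ordering.
    payload = json.dumps(features, sort_keys=True)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,  # ties the decision to a versioned model
        "input_hash": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "decision": decision,
        "explanation": explanation,      # short human-readable rationale
    }


def to_log_line(record):
    """Serialize a record as a single JSON line for an append-only log."""
    return json.dumps(record, sort_keys=True)


record = make_decision_record(
    model_version="credit-model-1.4.2",  # illustrative version string
    features={"income": 52000, "tenure_years": 3},
    decision="approve",
    explanation="score above cutoff",
)
line = to_log_line(record)
```

Each line can then be appended to a file or sent to a log store. Hashing the inputs rather than storing them is a design choice that keeps sensitive data out of the log while still letting an auditor confirm which inputs produced a decision.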

The Role of Human Judgment in AI

Despite the capabilities of AI, human judgment remains essential, especially in critical decision-making scenarios. Fighter pilots rely on their training and instincts, which are built from experience. AI systems benefit from similar human input.

Why Human Judgment Matters

- Ethical Considerations: Humans can assess the ethical implications of AI decisions, as highlighted by The Hastings Center.

- Complex Scenarios: Not all situations can be anticipated by AI; humans can provide the needed insight, as discussed in TechRadar's article.

- Moral Responsibility: Humans are ultimately responsible for AI outcomes, necessitating their involvement, a point emphasized by UNU.

Integrating Human Judgment

- Human-in-the-Loop Systems: Design AI systems that allow for human intervention in decision-making, as suggested by Fast Company.

- Training and Education: Equip employees with the skills to understand and work alongside AI, as recommended by Microsoft.

- Collaborative Decision-Making: Foster environments where humans and AI collaborate to reach decisions, as discussed by TechRadar.
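A human-in-the-loop design often comes down to a routing rule that decides which predictions a person must review. The sketch below assumes a model that reports a confidence score between 0 and 1. The `Prediction` type, the `route` function, the 0.9 threshold, and the `high_impact` flag are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    label: str
    confidence: float  # assumed to be in the range [0, 1]


def route(prediction, threshold=0.9, high_impact=False):
    """Return 'human_review' or 'auto_approve' for a single prediction.

    High-impact decisions always go to a person, regardless of confidence.
    """
    if not 0.0 <= prediction.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if high_impact or prediction.confidence < threshold:
        return "human_review"
    return "auto_approve"


routine = route(Prediction("approve", 0.97))
uncertain = route(Prediction("approve", 0.62))
critical = route(Prediction("deny", 0.99), high_impact=True)
```

The threshold is a policy decision rather than a technical one, so it is usually worth exposing as configuration that the accountable team owns.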

Estimated data suggests that continuous learning has the highest influence on improving AI decision-making, followed by the OODA loop and decision traceability.

Continuous Learning and Adaptation

Both fighter pilots and AI systems must continuously learn and adapt to remain effective. In air combat, this involves learning from missions and adapting tactics. For AI, it means refining models and algorithms based on new data.

Continuous Learning in AI

- Data-Driven Improvements: Use data to refine AI models and improve accuracy, as highlighted by Andreessen Horowitz.

- Feedback Mechanisms: Implement systems that learn from user feedback and adapt accordingly, as discussed in Nature's research.

- Agility in Change: Ensure AI systems can pivot quickly in response to new challenges, as recommended by TechRadar.
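A feedback mechanism can start as something very small: compare predictions with the outcomes that later become known, and flag the model when recent accuracy drops. The sketch below is one possible shape for that check. The `FeedbackMonitor` class, the window size, and the accuracy floor are assumptions, not recommendations from the cited sources.

```python
from collections import deque


class FeedbackMonitor:
    """Track accuracy over the most recent outcomes (hypothetical helper)."""

    def __init__(self, window=100, min_accuracy=0.9):
        self.outcomes = deque(maxlen=window)  # old results fall off automatically
        self.min_accuracy = min_accuracy

    def record(self, predicted, actual):
        self.outcomes.append(predicted == actual)

    def accuracy(self):
        if not self.outcomes:
            return None  # nothing observed yet
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self):
        # Wait for a full window so a few early errors do not trigger a retrain.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy() < self.min_accuracy


monitor = FeedbackMonitor(window=4, min_accuracy=0.75)
for predicted, actual in [("a", "a"), ("b", "b"), ("a", "a"), ("b", "b")]:
    monitor.record(predicted, actual)
healthy = monitor.needs_retraining()

# Two misses push two correct results out of the window.
monitor.record("a", "b")
monitor.record("b", "a")
degraded = monitor.needs_retraining()
```

Here `needs_retraining()` only raises a flag. Whether to retrain automatically or hand the decision to a person is a separate choice that fits the human oversight points made earlier.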

Common Pitfalls in AI Decision-Making

AI decision-making has clear potential benefits, but it also has well-known pitfalls that organizations need to recognize and plan for.

Data Bias

Data is the foundation of AI, but if it's biased, the AI system will be too.

Solution: Conduct regular audits of data sources and implement bias detection tools, as suggested by UNU.
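One simple check that a data audit might include is comparing how often each group receives a positive decision. The sketch below computes per-group selection rates and the gap between the highest and lowest rate, a measure often called the demographic parity difference. It is only one of several fairness metrics, and the function names and sample data are illustrative assumptions.

```python
from collections import defaultdict


def selection_rates(groups, decisions):
    """Return the share of positive decisions (1 or True) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, decision in zip(groups, decisions):
        totals[group] += 1
        positives[group] += int(bool(decision))
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(groups, decisions):
    """Difference between the highest and lowest group selection rate."""
    rates = selection_rates(groups, decisions)
    if not rates:
        raise ValueError("no decisions to audit")
    return max(rates.values()) - min(rates.values())


# Made-up sample: group labels and binary decisions for four cases.
groups = ["a", "a", "b", "b"]
decisions = [1, 1, 1, 0]

rates = selection_rates(groups, decisions)
gap = demographic_parity_gap(groups, decisions)
```

A gap near zero means the groups are selected at similar rates. What counts as an acceptable gap depends on the use case and any applicable regulation, so the number is a prompt for review rather than a verdict.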

Lack of Transparency

AI systems are often seen as 'black boxes,' making it hard to understand how decisions are made.

Solution: Use explainable AI techniques to illuminate decision-making processes, as recommended by Nature.

Over-Reliance on AI

Blindly trusting AI can lead to poor decisions, especially in unprecedented situations.

Solution: Maintain human oversight and involve experts in AI-driven decisions, as emphasized by TechRadar.

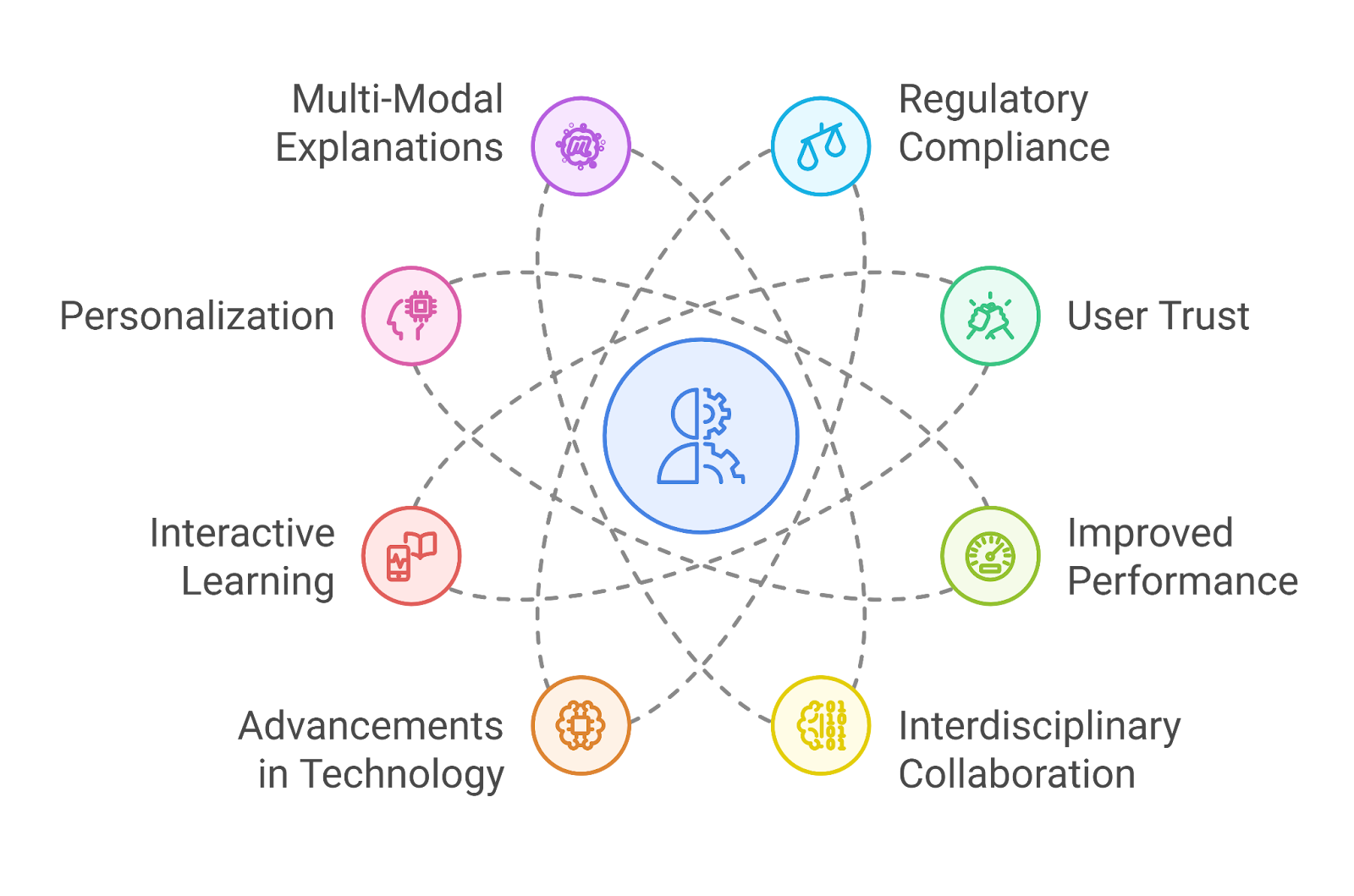

Future Trends in AI Decision-Making

As technology advances, new trends are emerging in AI decision-making that organizations should prepare for.

Ethical AI

There's growing emphasis on developing AI systems that align with ethical standards and values.

Trend: Implementing frameworks for ethical AI use and decision-making, as discussed by The Hastings Center.

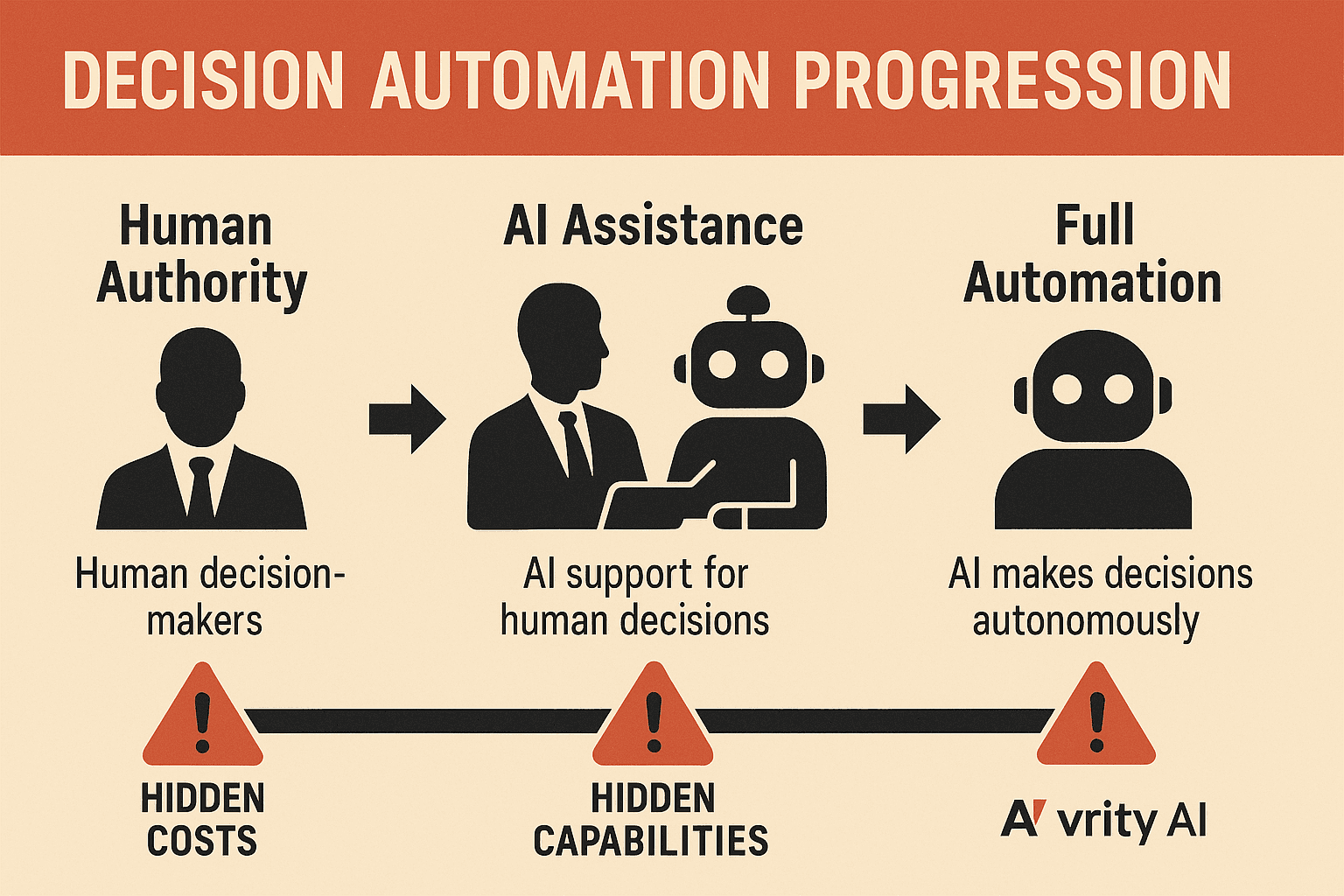

Augmented Decision-Making

Augmented decision-making combines human intuition with AI's analytical power to improve decision quality.

Trend: More organizations are adopting AI to support rather than replace human decision-making, as noted by the World Economic Forum.

AI in Edge Computing

AI is moving closer to data sources, enabling faster and more efficient decision-making.

Trend: Increased use of edge computing to process data locally and reduce latency, as highlighted by Fast Company.

Recommendations for Implementing AI in Enterprises

For enterprises looking to implement AI, here are some best practices to ensure success:

- Start Small: Begin with pilot projects to test AI applications and build expertise, as recommended by TechRadar.

- Focus on Data Quality: Invest in data management practices to ensure high-quality inputs, as discussed by Microsoft.

- Prioritize Transparency: Use explainable AI to build trust and understanding among stakeholders, as emphasized by Nature.

- Invest in Talent: Develop skills within the organization to work effectively with AI, as suggested by Andreessen Horowitz.

- Monitor and Adapt: Continuously monitor AI systems and adapt to new developments and insights, as highlighted by TechRadar.

Conclusion

Fighter pilots have long been celebrated for their decision-making abilities under pressure. By applying their principles—such as the OODA loop, decision traceability, and continuous learning—enterprises can enhance their AI decision-making processes. As AI continues to evolve, integrating these strategies will be essential for maintaining accountability, improving performance, and achieving strategic goals.

FAQ

What is the OODA loop?

The OODA loop stands for Observe, Orient, Decide, Act. It's a decision-making framework developed by fighter pilot John Boyd that emphasizes speed and adaptability. The same cycle can be applied to AI systems to improve decision-making, as explained by TechRadar.

How does decision traceability improve AI accountability?

Decision traceability involves maintaining records of how AI decisions are made, which improves accountability by providing transparency and enabling audits and compliance checks, as discussed by PwC.

What role does human judgment play in AI decisions?

Human judgment is crucial for considering ethical implications, assessing complex scenarios, and taking moral responsibility for AI outcomes. It complements AI's capabilities by adding context and ethical considerations, as highlighted by The Hastings Center.

Why is continuous learning important for AI systems?

Continuous learning allows AI systems to improve over time by adapting to new data, user feedback, and evolving challenges, ensuring they remain effective and relevant, as emphasized by Andreessen Horowitz.

What are common pitfalls in AI decision-making?

Common pitfalls include data bias, lack of transparency, and over-reliance on AI. These can be mitigated by regular data audits, using explainable AI, and maintaining human oversight, as discussed by UNU.

What future trends are emerging in AI decision-making?

Emerging trends include ethical AI practices, augmented decision-making combining human and AI strengths, and the use of AI in edge computing for faster decision-making, as highlighted by Fast Company.

Key Takeaways

- Fighter pilots' OODA loop can improve AI processes, as discussed by TechRadar.

- Human judgment is crucial for AI accountability, as highlighted by The Hastings Center.

- Decision traceability enhances transparency and compliance, as noted by PwC.

- Continuous learning keeps AI systems effective, as emphasized by Andreessen Horowitz.

- Data bias requires regular audits to ensure AI fairness, as suggested by UNU.

- Explainable AI builds stakeholder trust, as recommended by Nature.
