Meta Held Accountable for Teen Harm: Navigating the New Landscape [2025]

Last week, Meta faced two landmark legal defeats, underscoring a pivotal shift in accountability for tech giants. These cases have set a precedent, thrusting social media companies into the spotlight over how they prioritize user safety, particularly concerning younger audiences.

TL;DR

- Meta's Legal Losses: Meta was found liable for harm to teens in New Mexico and Los Angeles, as detailed in The New York Times.

- Impact on Industry: Sets a precedent for future lawsuits against tech companies, according to The Wall Street Journal.

- User Safety Focus: Renewed emphasis on protecting vulnerable users, especially teens, as discussed in PBS NewsHour.

- Regulatory Changes: Potential shifts in policies governing social media platforms, highlighted by BBC News.

- Future Trends: Increased scrutiny and possible redesigns of addictive features, as noted by the American Action Forum.

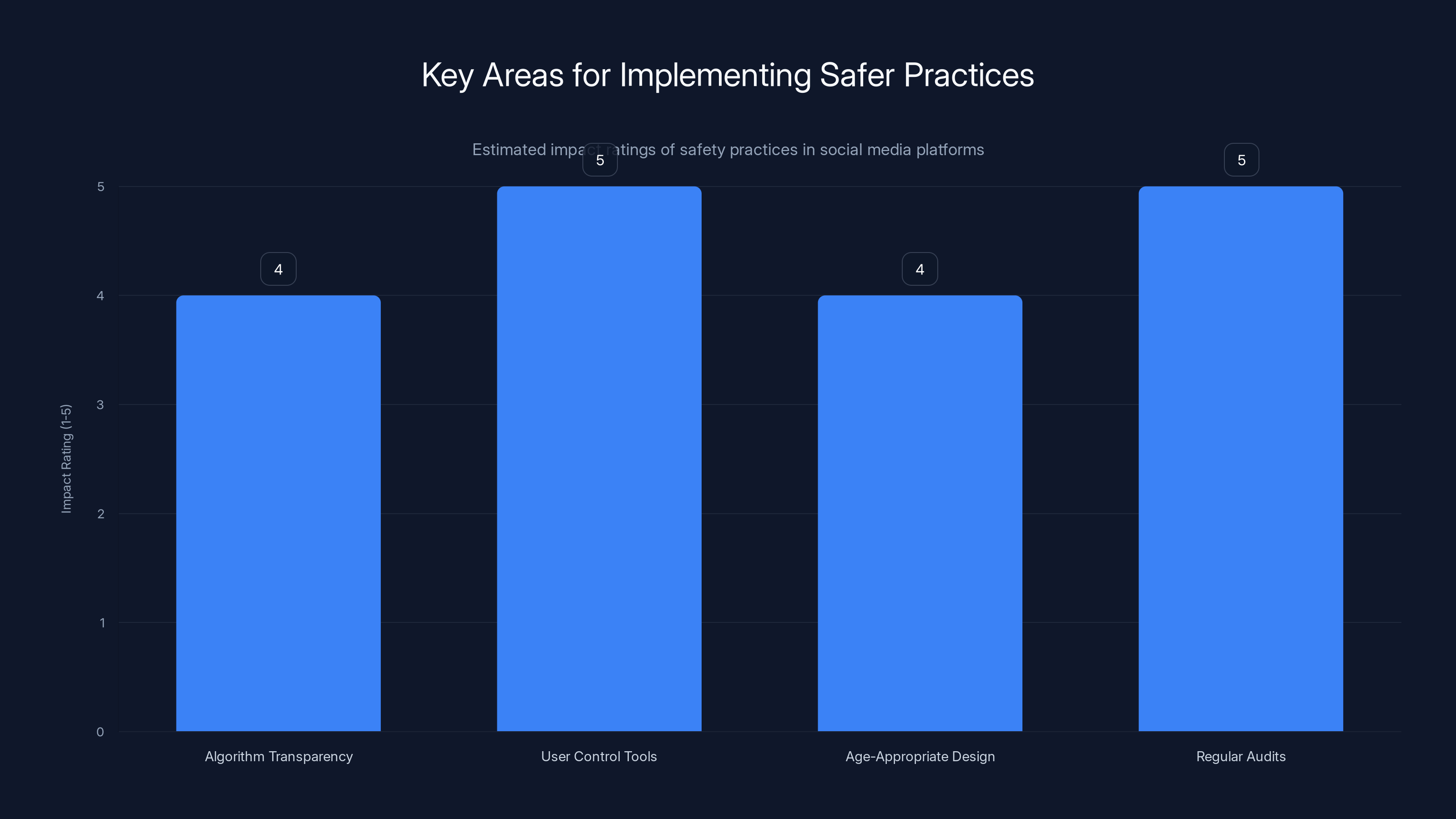

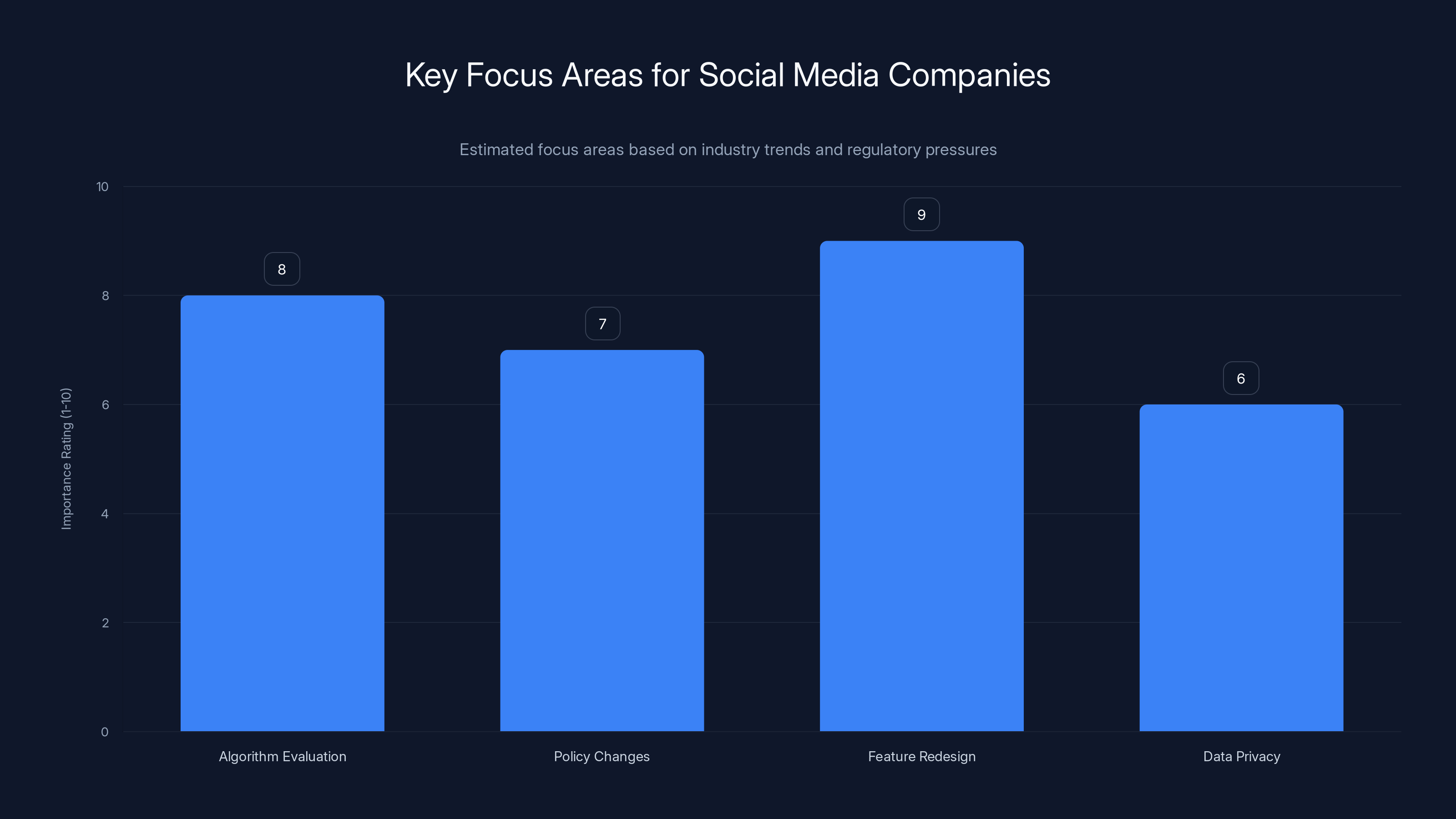

User control tools and regular audits are rated highest in impact for enhancing user safety. Estimated data.

The Landmark Cases and Their Implications

The New Mexico Case

The State of New Mexico's lawsuit against Meta culminated in a court ruling that held the company accountable for endangering child safety. This case is significant because it challenges the long-standing legal protections that social media companies have enjoyed under Section 230 of the Communications Decency Act. Traditionally, these protections have shielded platforms from liability for user-generated content, as reported by Reuters.

In contrast, the New Mexico case focused on the design and operation of Meta's platforms rather than on user-generated content. The court found that Meta's algorithms and engagement strategies were knowingly designed to drive engagement among young users in ways that could harm them. This distinction is critical because it shifts the focus from content moderation to platform design, as noted by CalMatters.

The Los Angeles Verdict

In Los Angeles, a jury found Meta culpable for designing its apps to be addictive, specifically targeting children and teens. The plaintiff, a 20-year-old known as K.G.M., argued that Meta's platforms had a detrimental effect on their mental health, as reported by KTEN.

This case underscores the increasing recognition of the psychological impact social media can have on young users. It also highlights the potential for more lawsuits based on the addictive nature of these platforms, as discussed by NPR.

Broader Industry Impact

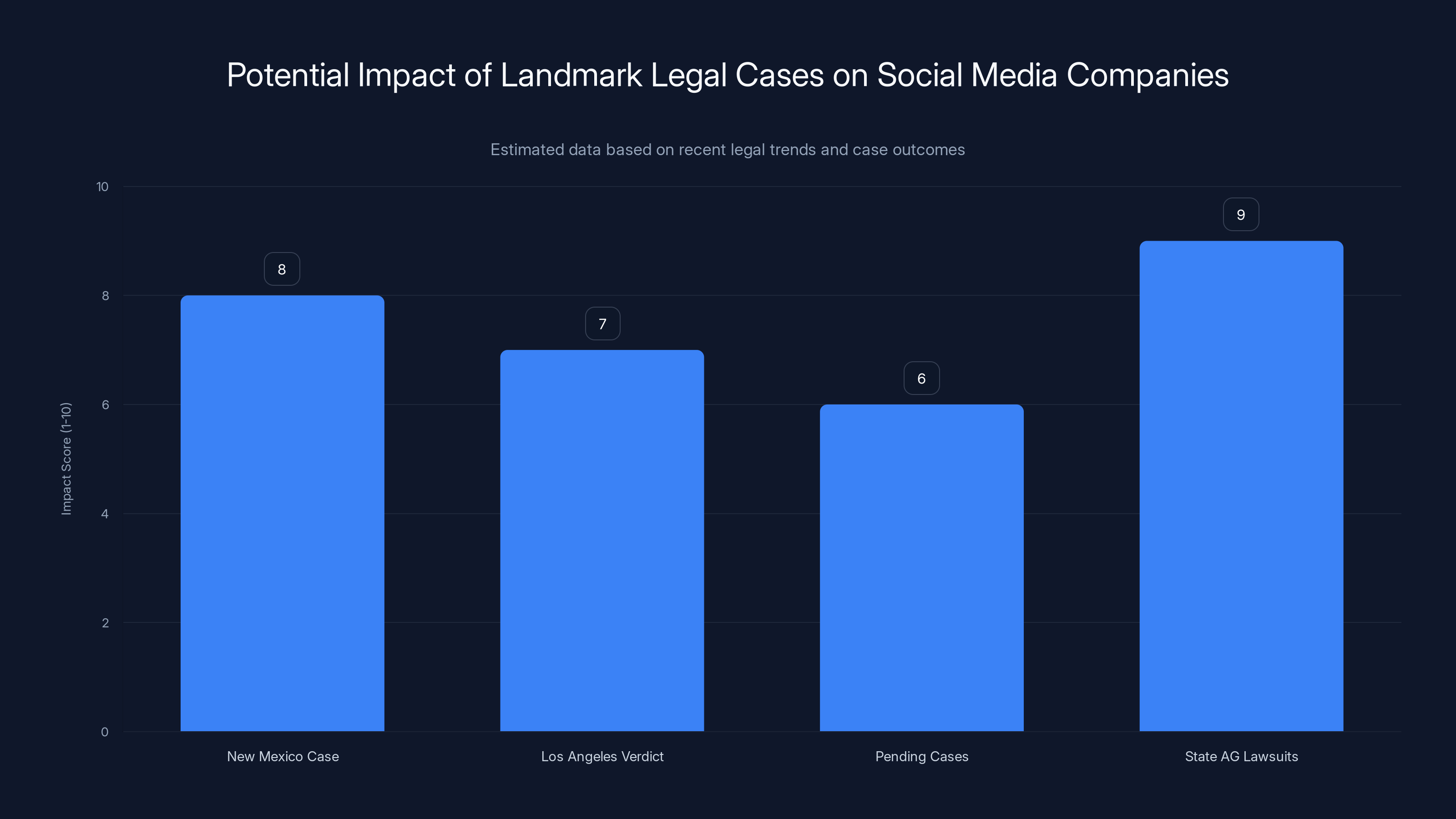

These legal outcomes have sent ripples across the tech industry, signaling a potential wave of similar lawsuits. With thousands of cases pending and 40 state attorneys general filing related lawsuits, the pressure is mounting on social media companies to reassess their user engagement strategies, as noted by Vogue.

The New Mexico and Los Angeles cases have significantly impacted the legal landscape for social media companies, with high potential for future lawsuits. (Estimated data)

What This Means for Social Media Companies

Reevaluating Platform Design

Social media platforms, including Meta, must now critically evaluate their algorithms and design choices to mitigate potential harm. This involves a delicate balance between user engagement and safety, as highlighted by AI Multiple.

Potential Policy Changes

Regulatory bodies worldwide are likely to take cues from these cases, prompting changes in how social media companies operate. This could lead to stricter guidelines regarding data privacy, algorithm transparency, and user consent, as discussed by FOX 9.

Redesigning Addictive Features

One of the core challenges for social media companies will be addressing the addictive elements of their platforms. This may involve redesigning features that encourage prolonged use or excessive engagement, as noted by NPR.

Implementing Safer Practices

Best Practices for User Safety

- Algorithm Transparency: Companies should provide clearer explanations of how their algorithms function and the impact they have on user engagement, as suggested by NPR.

- User Control Tools: Implement features that allow users to manage their engagement, such as time limits and content filters.

- Age-Appropriate Design: Tailor platform features to be age-appropriate, limiting potentially harmful content for younger users.

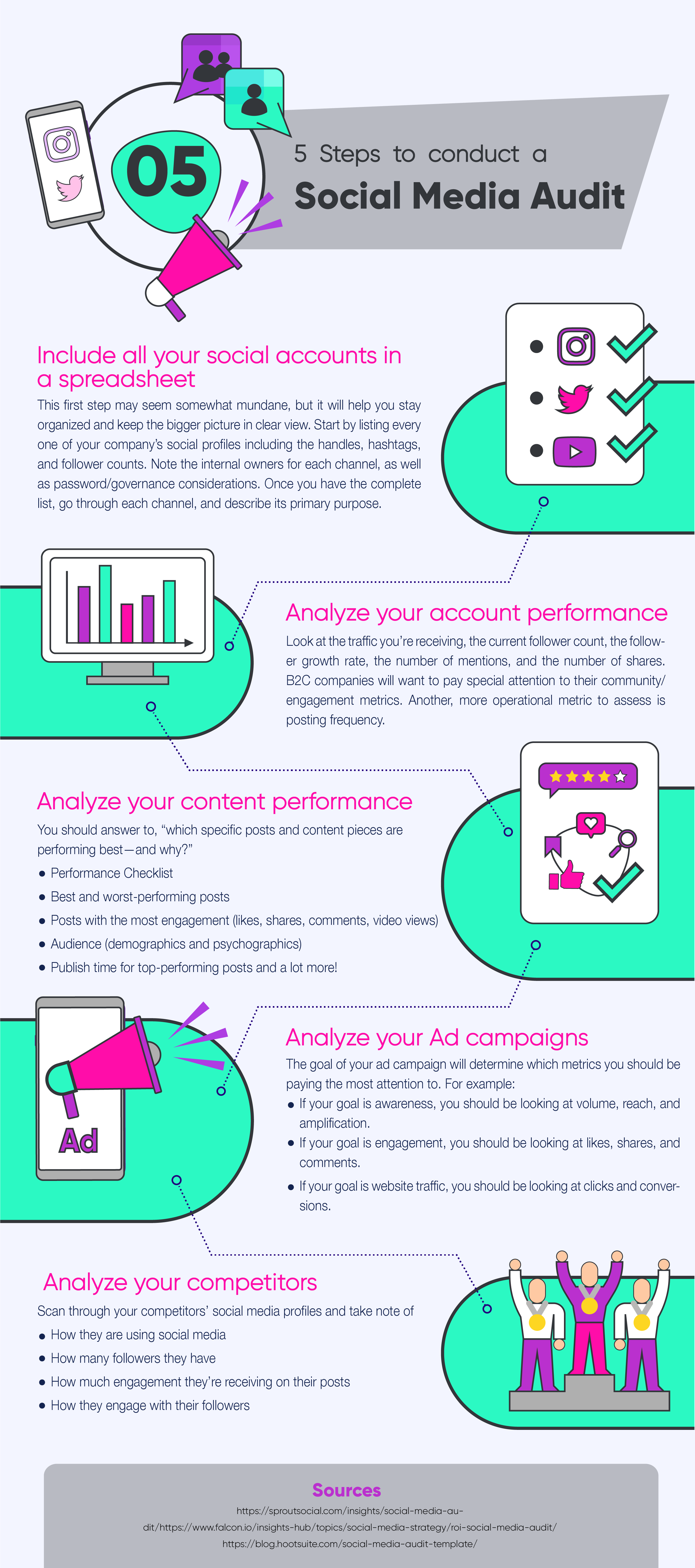

- Regular Audits: Conduct regular audits of platform features to ensure they align with user safety goals.
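The user control and age-appropriate design items above can be made concrete with a small sketch. This is an illustration under stated assumptions, not a description of how any platform actually works: the names `SafetySettings`, `default_settings`, `limit_reached`, and `visible_posts`, the 60-minute teen default, the age-18 cutoff, and the `sensitive` flag on posts are all hypothetical.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional

# Hypothetical default, chosen only for illustration.
TEEN_DAILY_LIMIT = timedelta(minutes=60)


@dataclass
class SafetySettings:
    daily_limit: Optional[timedelta]  # None means no time limit is set
    hide_sensitive_content: bool


def default_settings(age: int) -> SafetySettings:
    """Return stricter defaults for users under 18 (assumed cutoff)."""
    if age < 18:
        return SafetySettings(TEEN_DAILY_LIMIT, True)
    return SafetySettings(None, False)


def limit_reached(settings: SafetySettings, used_today: timedelta) -> bool:
    """True once today's usage meets or exceeds the configured limit."""
    return settings.daily_limit is not None and used_today >= settings.daily_limit


def visible_posts(settings: SafetySettings, posts: list) -> list:
    """Drop posts flagged as sensitive when the content filter is on."""
    if not settings.hide_sensitive_content:
        return list(posts)
    return [p for p in posts if not p.get("sensitive", False)]
```

In this sketch the protective settings are the default for younger users rather than something they must opt into, which is one possible reading of "age-appropriate design".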

Common Pitfalls and Solutions

Pitfall: Over-Reliance on Engagement Metrics

Solution: Shift focus from purely quantitative metrics to qualitative assessments of user well-being and satisfaction.

Pitfall: Insufficient Age Verification

Solution: Implement robust age verification systems to better protect young users from content that may not be age-appropriate.
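As a rough illustration of the age verification idea, the sketch below maps a birth date to an experience tier. It assumes the birth date has already been checked by some external process; the function names, the tier labels, and the 13 and 18 thresholds are hypothetical choices for the example, not requirements drawn from the cases discussed here.

```python
from datetime import date


def age_on(birth_date: date, today: date) -> int:
    """Whole years between birth_date and today."""
    years = today.year - birth_date.year
    # Subtract one year if this year's birthday has not happened yet.
    if (today.month, today.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years


def experience_tier(birth_date: date, today: date, verified: bool) -> str:
    """Pick a tier; unverified accounts get the most protective one."""
    if not verified:
        return "restricted"
    age = age_on(birth_date, today)
    if age < 13:  # assumed minimum age
        return "blocked"
    if age < 18:  # assumed adult cutoff
        return "teen"
    return "adult"
```

Treating unverified accounts as restricted means a failed or skipped check falls back to the safer experience instead of the full one.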

Future Trends and Recommendations

Increased Scrutiny and Accountability

As legal precedents continue to develop, social media companies will face increasing scrutiny. It's imperative for these platforms to proactively adapt to changing expectations and regulations, as highlighted by NPR.

Emphasis on Mental Health

Expect a growing emphasis on mental health support within digital platforms. This could include partnerships with mental health organizations and the integration of support resources directly within apps.

The Role of AI in User Safety

AI can play a crucial role in enhancing user safety, from identifying harmful content to personalizing user experiences in ways that prioritize well-being, as discussed by AI Multiple.

Social media companies are expected to prioritize feature redesign and algorithm evaluation to address user safety and regulatory compliance. (Estimated data)

Case Studies: Learning from the Past

Case Study 1: TikTok's Approach to User Safety

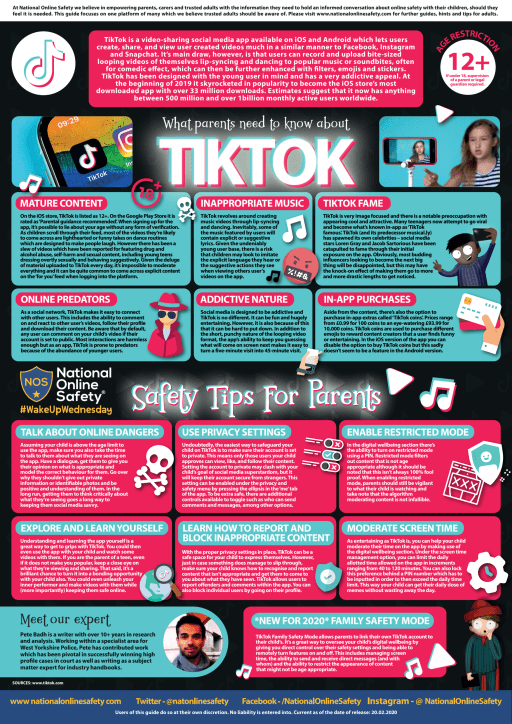

TikTok has introduced several features aimed at protecting young users, such as screen time management tools and restricted modes for younger audiences. These initiatives offer valuable insights into balancing engagement with safety.

Case Study 2: YouTube's Restricted Mode

YouTube has long faced criticism over inappropriate content reaching young users. Its Restricted Mode attempts to filter out unsuitable content, though effectiveness and accuracy remain challenges.

Conclusion: Navigating the Road Ahead

The recent legal actions against Meta mark a turning point for social media accountability. Companies must now navigate a landscape where user safety isn't just a regulatory requirement but a moral imperative, as emphasized by NPR.

By prioritizing transparency, user control, and mental health, social media platforms can better serve their diverse user bases while mitigating potential legal risks.

FAQ

What legal changes have occurred due to Meta's recent lawsuits?

These cases have set a precedent for holding social media companies accountable for design choices affecting user safety, focusing on algorithm transparency and user engagement practices, as reported by The New York Times.

How can social media platforms ensure user safety?

By implementing algorithm transparency, age-appropriate designs, and regular audits, platforms can better protect users, especially vulnerable populations like teens, as discussed by NPR.

What role does AI play in enhancing social media safety?

AI can identify harmful content, personalize user experiences, and automate interventions to enhance user safety and well-being, as noted by AI Multiple.

What are the implications of these legal cases for other tech giants?

These cases signal potential regulatory changes and increased scrutiny, prompting tech companies to adopt safer practices and transparent operations, as highlighted by The Wall Street Journal.

How should platforms address addictive features?

Platforms can address addictive features by redesigning those that encourage excessive use and by implementing user control tools such as time limits and content filters, as suggested by NPR.

What future trends can we expect in social media regulation?

Increased focus on mental health resources, stricter data privacy regulations, and enhanced scrutiny of algorithmic operations are likely future trends, as discussed by PBS NewsHour.

Key Takeaways

- Meta's legal defeats highlight the need for accountability in tech, as reported by The New York Times.

- Social media platforms must prioritize user safety over engagement, as discussed by NPR.

- Algorithm transparency and user control are increasingly expected of platforms, as noted by AI Multiple.

- Future regulations will focus on mental health and privacy, as highlighted by PBS NewsHour.

- AI can enhance user safety and personalization, as discussed by AI Multiple.

- Platform redesigns are necessary to address addictive features, as suggested by NPR.

