Meta's AI Content Moderation Shift [2025]

Meta, the parent company of Facebook and Instagram, is reducing its reliance on human content moderators and shifting more of that work to AI-based systems. The company presents the move as more than a cost cut: it describes it as a strategic pivot meant to make content moderation faster and more accurate across its platforms, as detailed in Meta's official announcement.

TL;DR

- Meta is transitioning to AI-based content moderation, reducing human involvement, according to Bloomberg.

- Meta's AI systems cover languages used by 98% of people online, far more than human moderation teams can handle, as noted by TechBuzz.

- Cost efficiency and speed are key drivers of this shift.

- Human moderators will still oversee critical decisions, ensuring complex cases are handled with care.

- Early tests of AI moderation have produced fewer over-enforcement errors, which could help improve user trust.

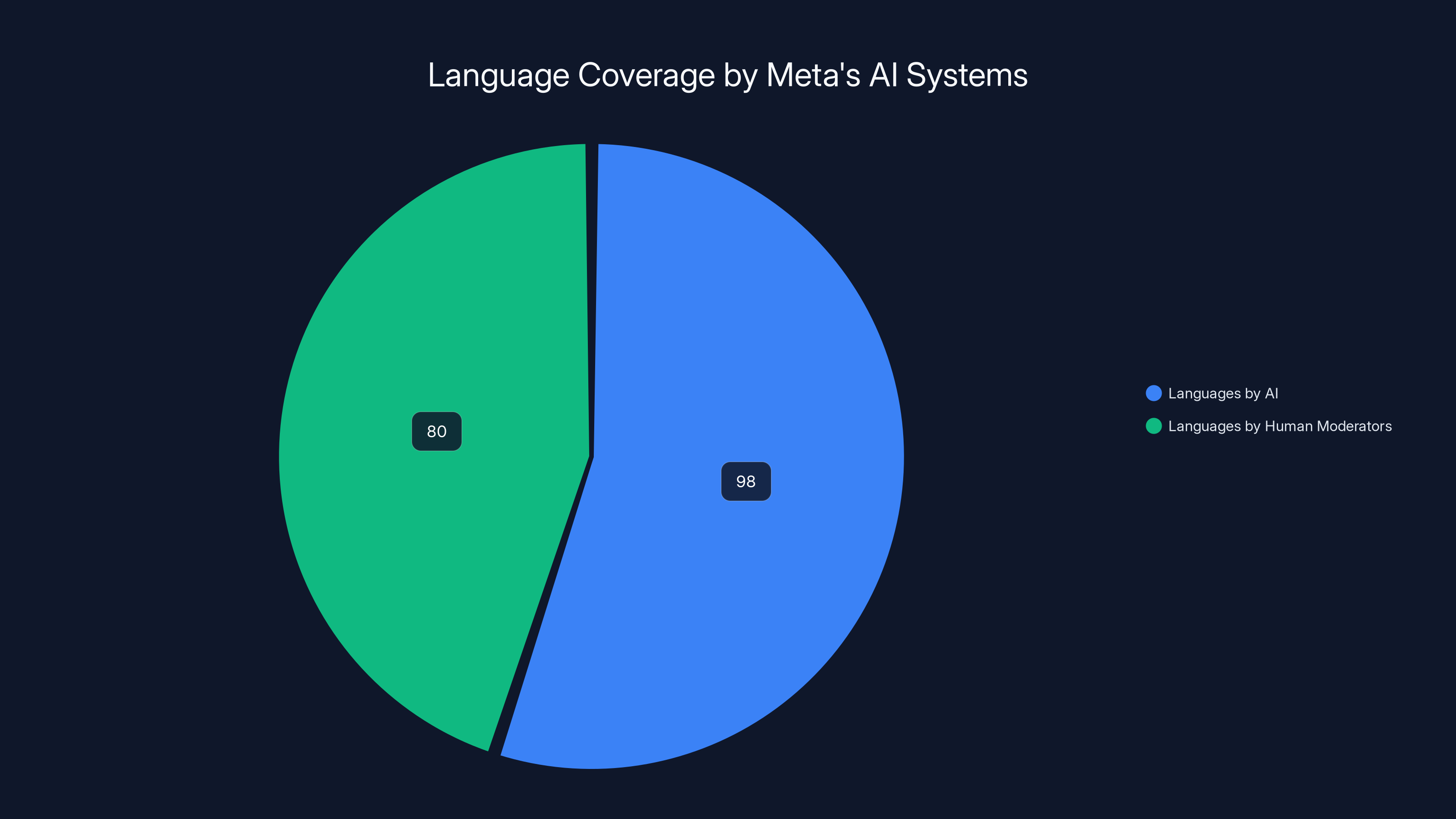

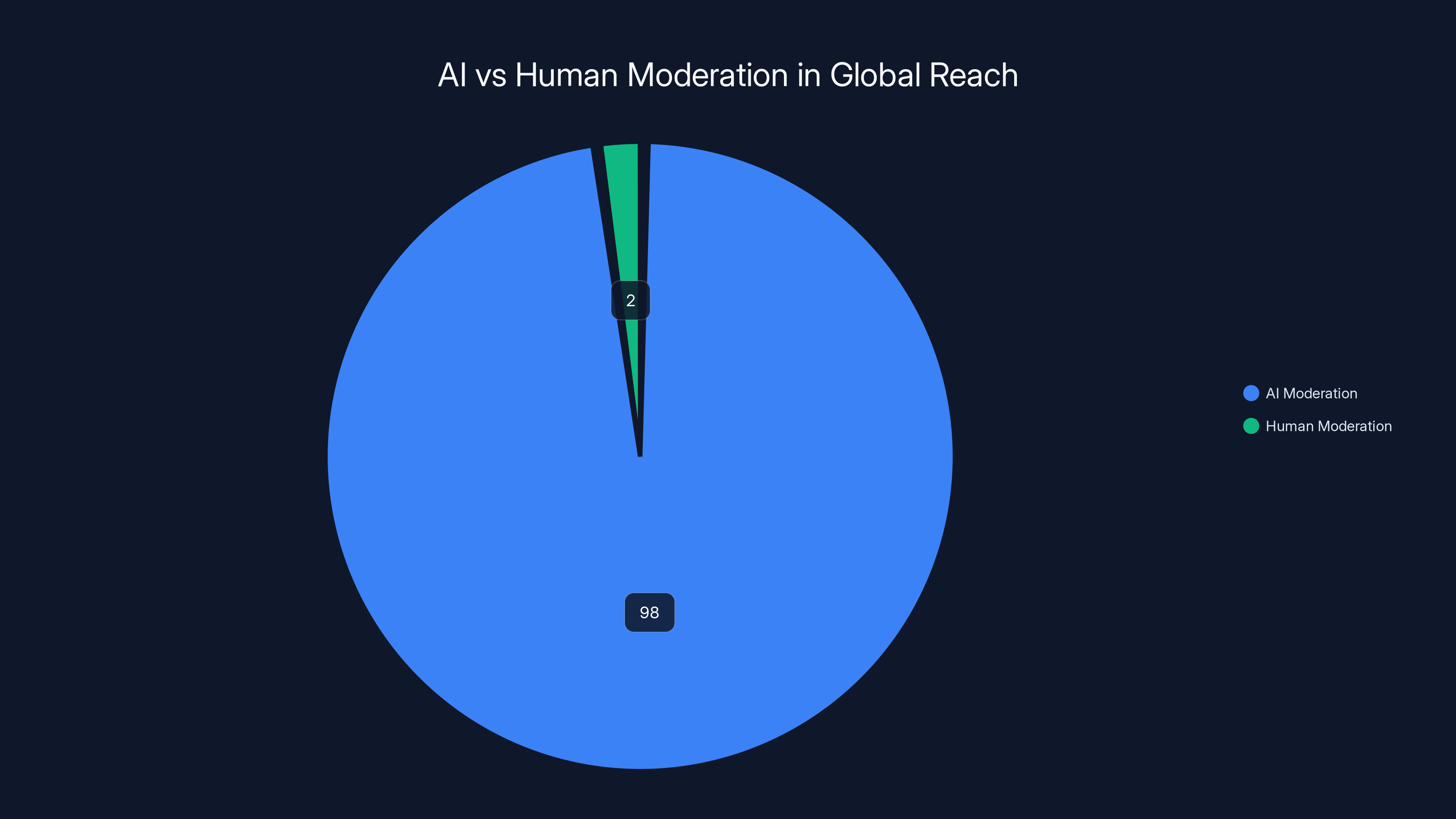

Meta's AI systems cover languages used by 98% of people online, compared with the 80 languages covered by human moderators. Estimated data.

The Evolution of Content Moderation

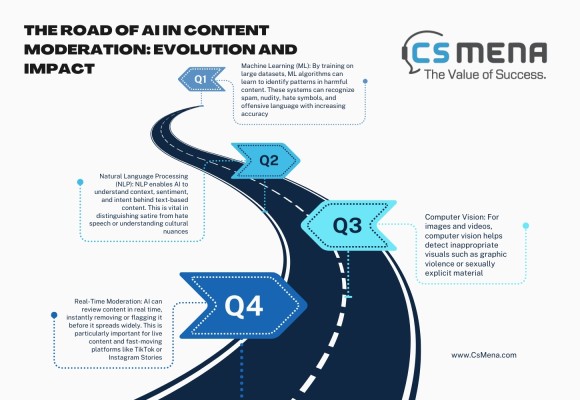

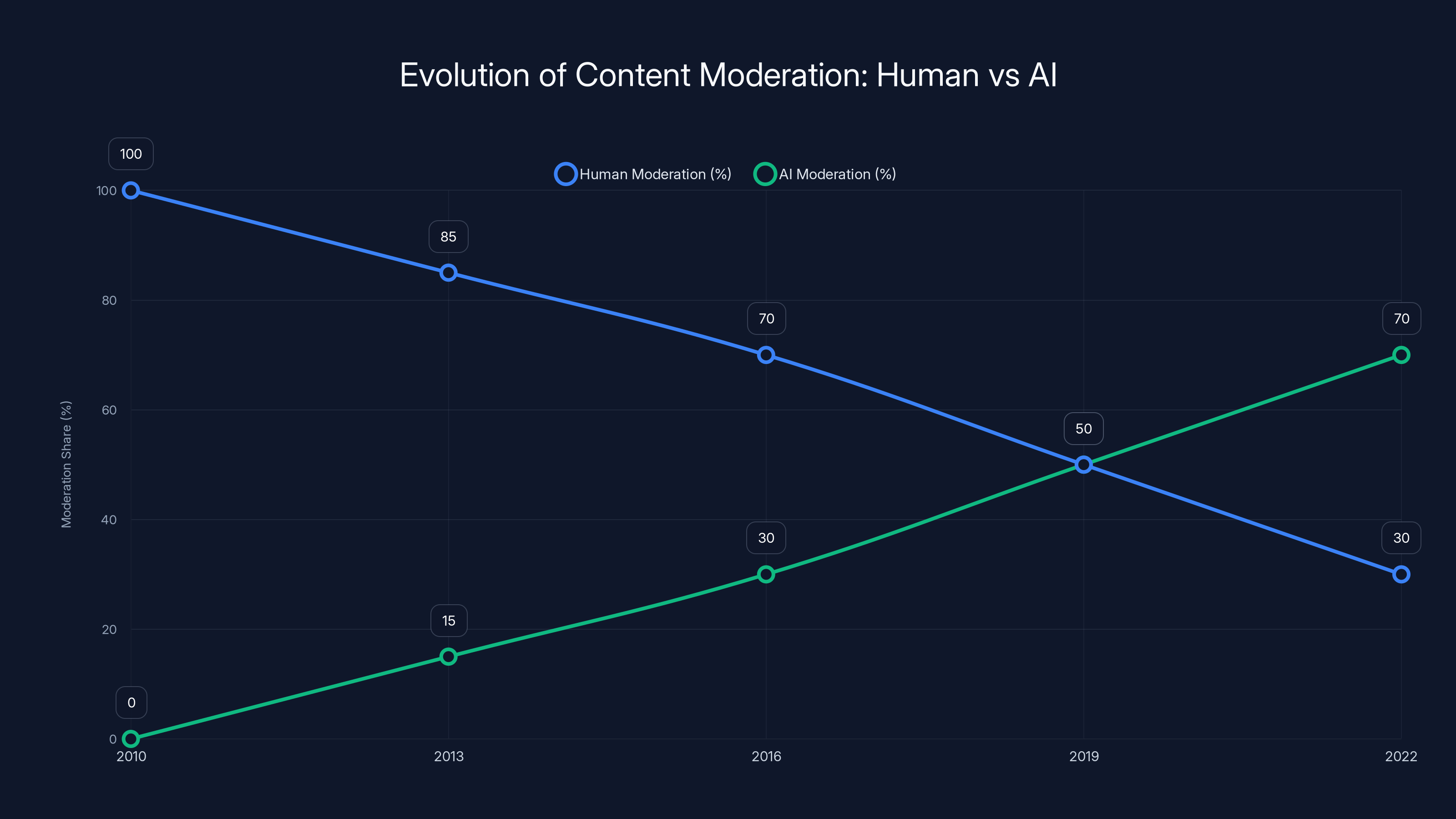

Content moderation has always been a cornerstone of social media management. Initially, platforms relied heavily on human moderators to sift through vast amounts of user-generated content. However, as platforms like Facebook expanded, the volume of content became too overwhelming for human-only moderation.

The Rise of AI in Moderation

Over the years, AI has been increasingly integrated into the content moderation process. AI systems can analyze text, images, and videos at a scale no team of human moderators can match. According to Meta, these systems can process languages used by 98% of people online, a significant expansion over the 80 languages currently covered by human moderation teams.

Why Shift to AI?

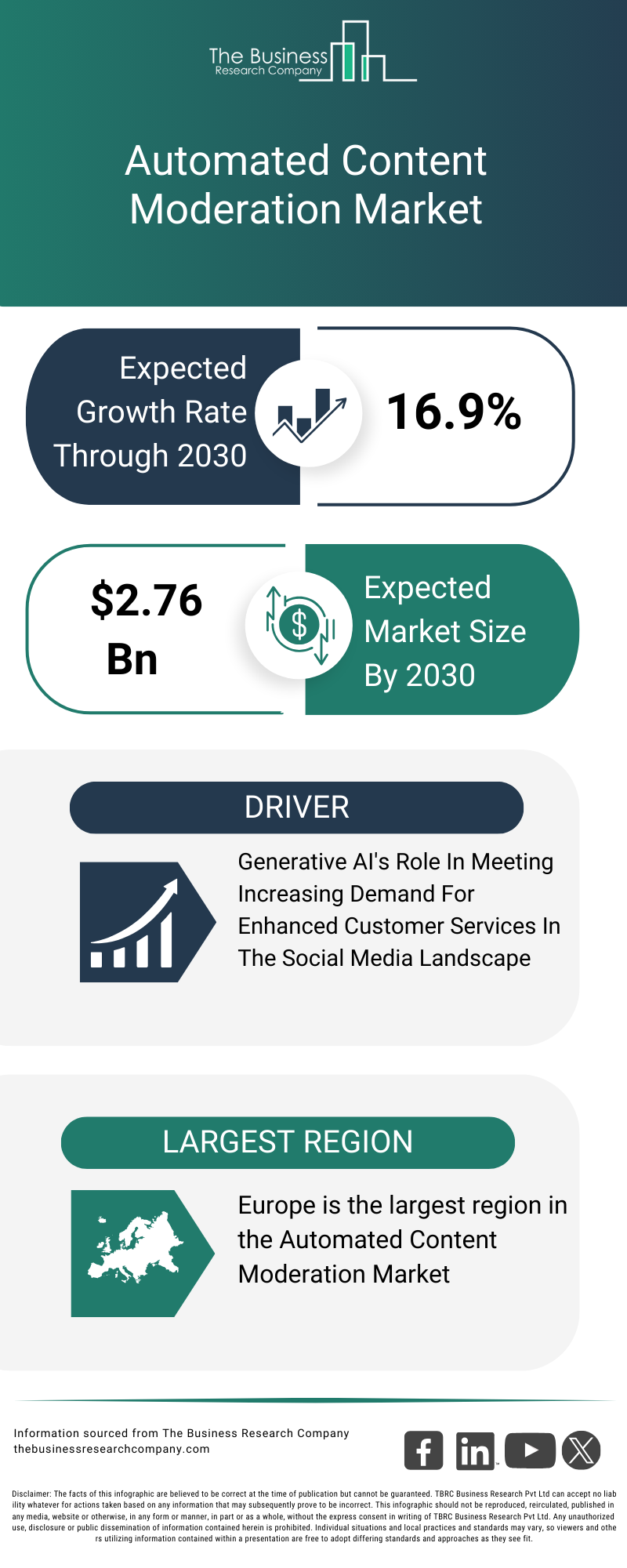

Several factors lie behind the move to AI-based content moderation. First, AI systems offer scalability and speed that human teams cannot match: they can process millions of pieces of content in real time and flag potential violations faster than any human team could. Second, AI systems lower the cost of moderation, a critical factor for a company handling billions of posts daily, as reported by TechBuzz.

The chart illustrates the estimated shift from human to AI moderation from 2010 to 2022, highlighting AI's growing role in handling content at scale. Estimated data.

Human Moderators: Still Essential

Despite the shift towards AI, Meta acknowledges that human moderators will continue to play a crucial role, especially in high-stakes decisions.

Key Roles for Human Moderators

Meta has said that human moderators will remain central to complex, high-impact decisions, such as handling appeals of disabled accounts, making reports to law enforcement, and training AI systems to improve their accuracy, as highlighted by The Guardian.

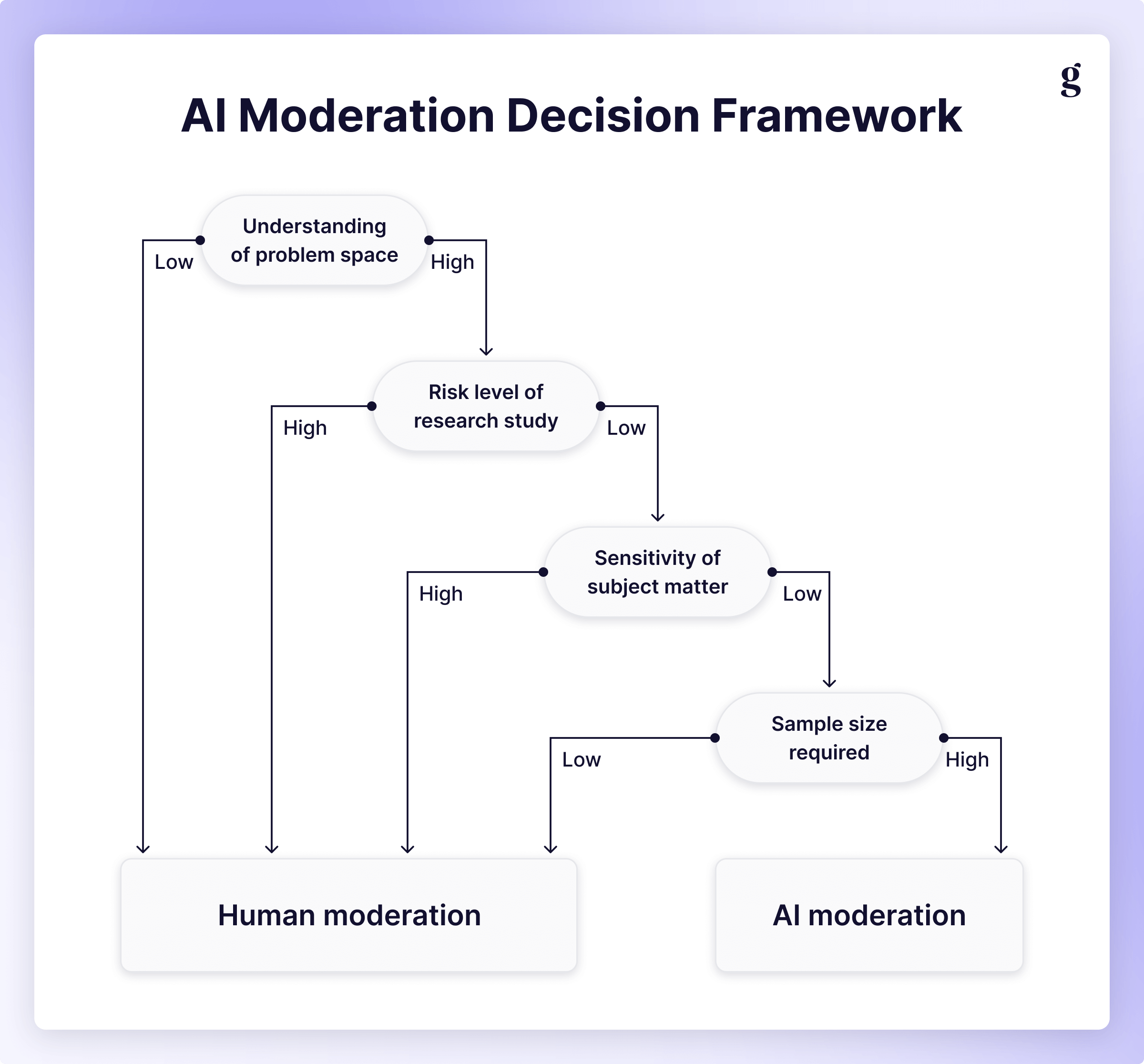

Balancing AI and Human Oversight

While AI can handle the bulk of moderation tasks, human oversight remains essential for fairness and accuracy, particularly in nuanced cases. Human moderators supply context and judgment that automated systems can lack, such as recognizing satire or locally specific references.
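
The division of labor described above can be illustrated with a minimal Python sketch of a hybrid routing step. It is not Meta's system: the `route` function, the `ModerationItem` fields, the action-type names, and the 0.95/0.05 thresholds are all hypothetical. The sketch assumes a model that outputs a violation probability for each item, lets the model decide clear-cut cases automatically, and escalates both uncertain scores and high-impact action types (such as appeals of disabled accounts) to human reviewers.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_REMOVE = "auto_remove"
    AUTO_ALLOW = "auto_allow"
    HUMAN_REVIEW = "human_review"


# Hypothetical action types that always go to a person, mirroring the
# high-impact roles described for human moderators.
HIGH_IMPACT_ACTIONS = {"account_disable_appeal", "law_enforcement_report"}


@dataclass
class ModerationItem:
    item_id: str
    action_type: str        # hypothetical, e.g. "post_review" or "account_disable_appeal"
    violation_score: float  # assumed model probability of a violation, 0.0 to 1.0


def route(item: ModerationItem,
          remove_threshold: float = 0.95,
          allow_threshold: float = 0.05) -> Route:
    """Decide whether the model acts alone or a human reviews the item."""
    if not 0.0 <= item.violation_score <= 1.0:
        raise ValueError("violation_score must be between 0.0 and 1.0")
    # High-impact decisions go to humans regardless of model confidence.
    if item.action_type in HIGH_IMPACT_ACTIONS:
        return Route.HUMAN_REVIEW
    # Confident scores are handled automatically.
    if item.violation_score >= remove_threshold:
        return Route.AUTO_REMOVE
    if item.violation_score <= allow_threshold:
        return Route.AUTO_ALLOW
    # Everything in between is treated as a nuanced case.
    return Route.HUMAN_REVIEW


if __name__ == "__main__":
    queue = [
        ModerationItem("a1", "post_review", 0.99),
        ModerationItem("a2", "post_review", 0.01),
        ModerationItem("a3", "post_review", 0.60),
        ModerationItem("a4", "account_disable_appeal", 0.99),
    ]
    for it in queue:
        print(it.item_id, route(it).value)
    # prints: a1 auto_remove, a2 auto_allow, a3 human_review, a4 human_review
```

Checking the action type before the score keeps high-impact decisions with people even when the model is confident; the two thresholds then control how much of the remaining volume the model decides on its own.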

AI Moderation in Practice

Meta has been testing large language models (LLMs) for content moderation, with promising results.

Early Successes and Challenges

Initial tests of AI-driven moderation have produced fewer over-enforcement errors (content actioned even though it did not break the rules) while catching more severe violations. Challenges remain, however, particularly with nuanced content that still requires human judgment, as Engadget reported.
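
One common way to quantify over-enforcement is the share of enforcement actions that a later human audit judges to have been wrong, tracked alongside recall (the share of truly violating items that were caught). The Python sketch below computes both. The function names and the audit sample are hypothetical, made up for illustration; they do not reflect Meta's data or its internal metrics.

```python
from typing import Sequence


def over_enforcement_rate(actioned: Sequence[bool], violating: Sequence[bool]) -> float:
    """Fraction of actioned items that did NOT actually violate policy."""
    if len(actioned) != len(violating):
        raise ValueError("actioned and violating must have the same length")
    n_actioned = sum(actioned)
    if n_actioned == 0:
        return 0.0  # no actions taken, so no over-enforcement
    wrong = sum(1 for a, v in zip(actioned, violating) if a and not v)
    return wrong / n_actioned


def recall(actioned: Sequence[bool], violating: Sequence[bool]) -> float:
    """Fraction of truly violating items that the system actioned."""
    if len(actioned) != len(violating):
        raise ValueError("actioned and violating must have the same length")
    n_violating = sum(violating)
    if n_violating == 0:
        return 0.0  # nothing to catch in this sample
    caught = sum(1 for a, v in zip(actioned, violating) if a and v)
    return caught / n_violating


# Hypothetical audit sample: True means a human reviewer judged the item violating.
violating = [True, True, True, False, False, False, False, True]
# Hypothetical decisions from two systems on the same eight items.
system_a = [True, True, False, True, True, False, False, True]
system_b = [True, True, True, False, False, False, True, True]

if __name__ == "__main__":
    for name, actioned in [("A", system_a), ("B", system_b)]:
        print(f"system {name}: over-enforcement {over_enforcement_rate(actioned, violating):.2f}, "
              f"recall {recall(actioned, violating):.2f}")
    # prints:
    # system A: over-enforcement 0.40, recall 0.75
    # system B: over-enforcement 0.20, recall 1.00
```

In this made-up sample, system B both wrongly actions fewer items and catches every violating one. Real evaluations would need much larger, representative audit samples before drawing conclusions.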

AI-based content moderation is estimated to cover languages used by 98% of people online, significantly expanding language coverage and efficiency. Estimated data.

Financial Implications

Reducing the number of human moderators has significant financial implications for Meta.

Cost Savings

By shifting to AI, Meta can substantially reduce its moderation workforce and, with it, its costs. The company uses thousands of contractors worldwide, and cutting that number could save millions annually, as reported by Morningstar.

Reinvestment in Technology

These savings could be reinvested in further AI development, helping Meta stay at the forefront of content moderation technology, as discussed in Oracle's insights on AI automation.

User Experience and Trust

The shift towards AI moderation impacts user experience and trust in Meta's platforms.

Improving User Experience

AI systems can provide faster resolutions to content disputes, enhancing the overall user experience. By reducing the time taken to resolve issues, Meta aims to improve user satisfaction and trust, as noted by Market.us.

Addressing User Concerns

However, there are concerns about AI systems making mistakes, leading to unjust content removal or account bans. Meta aims to address these concerns by ensuring human moderators are involved in critical decisions and appeals, as reported by TechBuzz.

The Future of Content Moderation

The transition to AI-based content moderation is just the beginning.

Long-term Vision

Meta envisions a future where AI systems are fully integrated into all aspects of content moderation, with humans playing a supervisory role. The company expects this shift to let it handle the growing volume of content while maintaining the quality and accuracy of moderation, as outlined in Meta's official blog.

Potential Challenges

As AI systems become more prevalent, new challenges will arise. Ensuring AI systems remain unbiased, transparent, and fair will be crucial to maintaining user trust and regulatory compliance, as discussed by The Guardian.

Conclusion

Meta's shift towards AI-driven content moderation marks a significant evolution in how social media platforms manage content. While the move offers clear benefits, including cost savings and improved efficiency, human moderators will remain essential for handling complex cases appropriately. As AI technologies continue to evolve, Meta's approach could serve as a blueprint for other platforms navigating similar transitions.


FAQ

What is AI content moderation?

AI content moderation involves using artificial intelligence to automatically review and manage user-generated content on social media platforms.

How does Meta's AI moderation work?

Meta's AI systems analyze text, images, and videos to identify potential policy violations, supported by human moderators for complex decisions.

What are the benefits of AI moderation?

AI moderation is scalable, fast, and cost-effective, processing vast amounts of content in real-time while reducing human workload.

Will human moderators still be involved?

Yes, human moderators will handle high-impact decisions, such as account appeals and law enforcement reports, ensuring fairness and accuracy.

What challenges does AI moderation face?

Challenges include handling nuanced content, ensuring fairness, and maintaining user trust while avoiding biases in AI systems.

How will this shift impact Meta's workforce?

Meta plans to reduce its contract workforce, investing savings into developing AI technologies and improving content moderation systems.

What languages can Meta's AI systems handle?

Meta's AI systems can process languages used by 98% of people online, significantly more than the 80 languages supported by human moderators.

How does AI moderation improve user experience?

AI systems provide faster resolutions to content disputes, enhancing user satisfaction and trust in Meta's platforms.

What is Meta's long-term vision for moderation?

Meta aims for AI systems to handle most moderation tasks, with human oversight ensuring complex cases are addressed appropriately.

What role will AI play in future social media management?

AI will become integral to content moderation, data analysis, and user interaction, shaping the future of social media management.

Key Takeaways

- Meta's shift to AI moderation aims for efficiency and cost savings.

- AI systems can process more languages than human moderators.

- Human oversight remains crucial for high-impact decisions.

- AI moderation has shown fewer over-enforcement errors.

