The Uncomfortable Truth About Meta and Your Health Data

Mark Zuckerberg wants your wrist. Not metaphorically. Meta is investing heavily in wearable technology, and if history tells us anything, it's that this should worry you.

When you hear "Meta smartwatch," you might picture another gadget competitor—something like an Apple Watch alternative. But here's what actually concerns me: Meta isn't primarily a hardware company. Meta is a data company. And your heart rate, sleep patterns, stress levels, and exercise habits are valuable data.

I've been covering tech for over a decade, and I've watched Meta's relationship with user data evolve in ways that should make anyone pause. This isn't paranoia. It's pattern recognition.

Let me walk you through exactly why a Meta smartwatch would pose a fundamentally different kind of privacy risk than its competitors do, and what you need to know before Meta convinces you to strap their device to your wrist.

Understanding Meta's Data Extraction Playbook

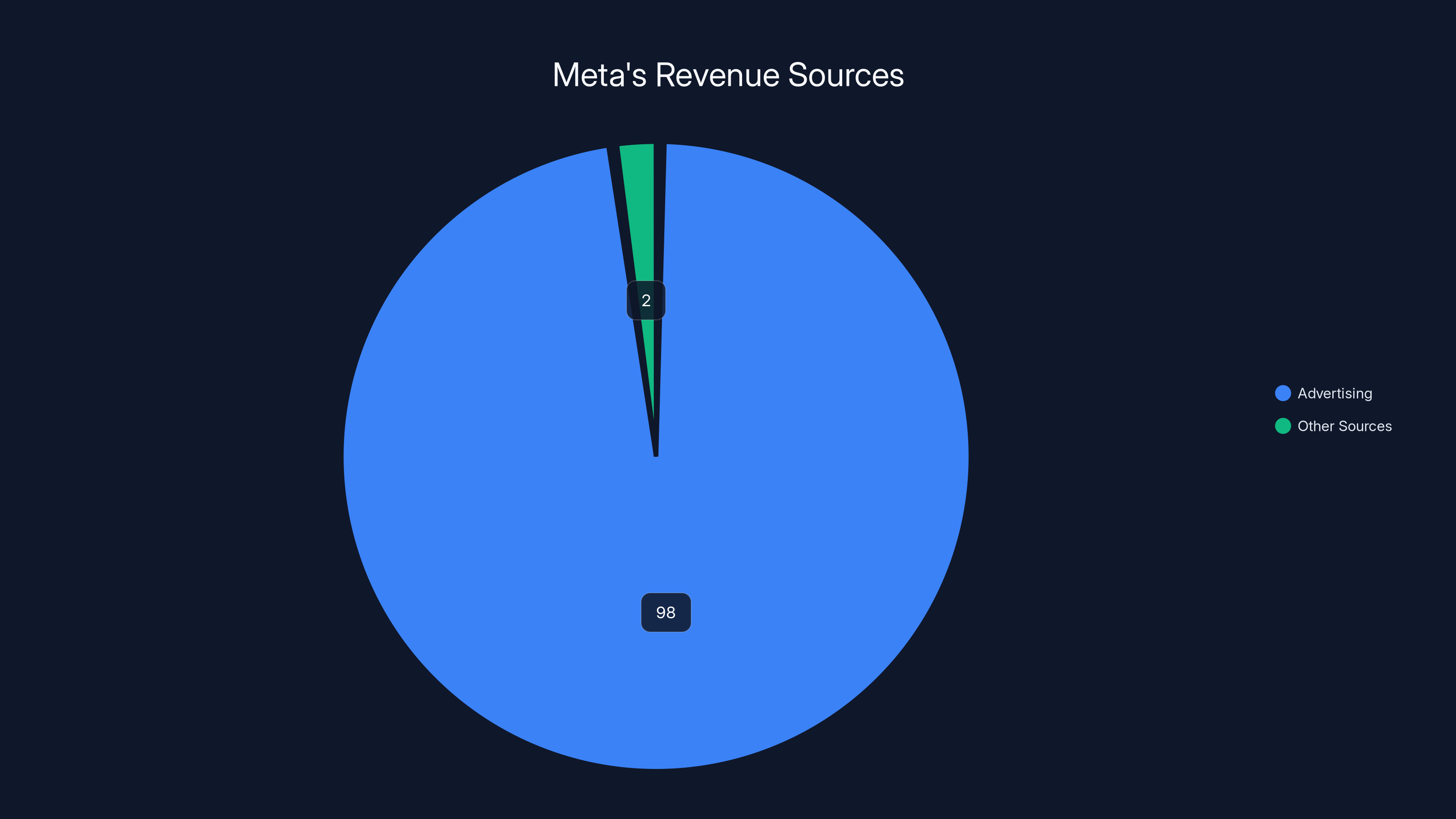

Meta's business model is built on a simple equation: more data equals more targeted advertising equals more revenue. The company generates roughly 98% of its annual revenue from advertising, according to its financial reports. That's not a slight lean toward ads. That's dependence.

The thing is, Meta didn't start with your wrist data. They started with Facebook profiles. Then Instagram. Then WhatsApp. Then they acquired VR companies like Oculus (now Meta Quest). Each expansion followed the same pattern: acquire users, extract data, optimize targeting, maximize advertising revenue.

Consider what Meta has already done with health-adjacent data. In 2021, it was revealed that Meta was tracking people across websites using pixels and collecting data about their medical conditions, financial situations, and personal struggles. They weren't just passively observing—they were actively building psychological profiles to determine which users were most vulnerable to certain types of persuasion.

That was data they gathered without even owning a smartwatch.

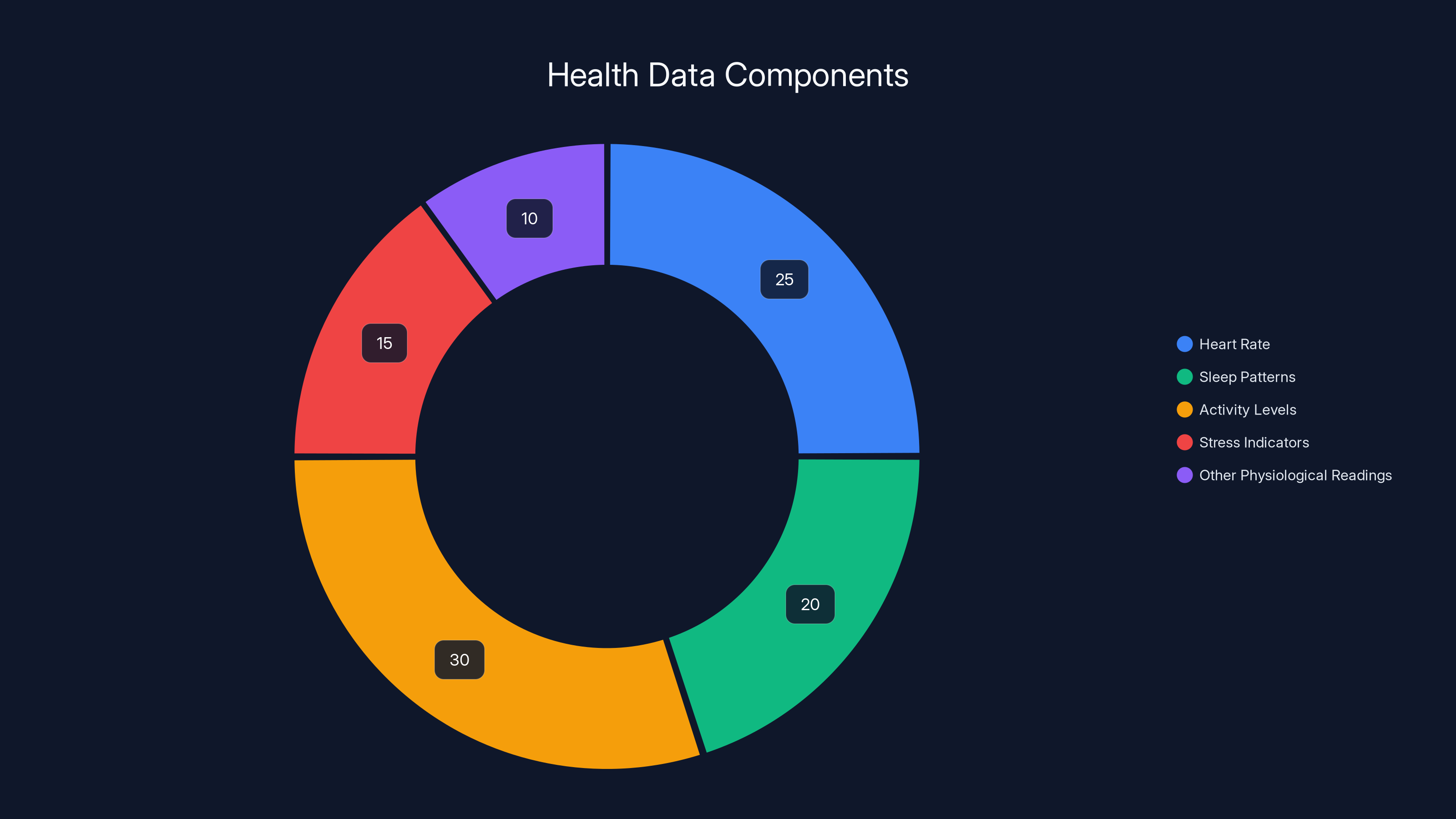

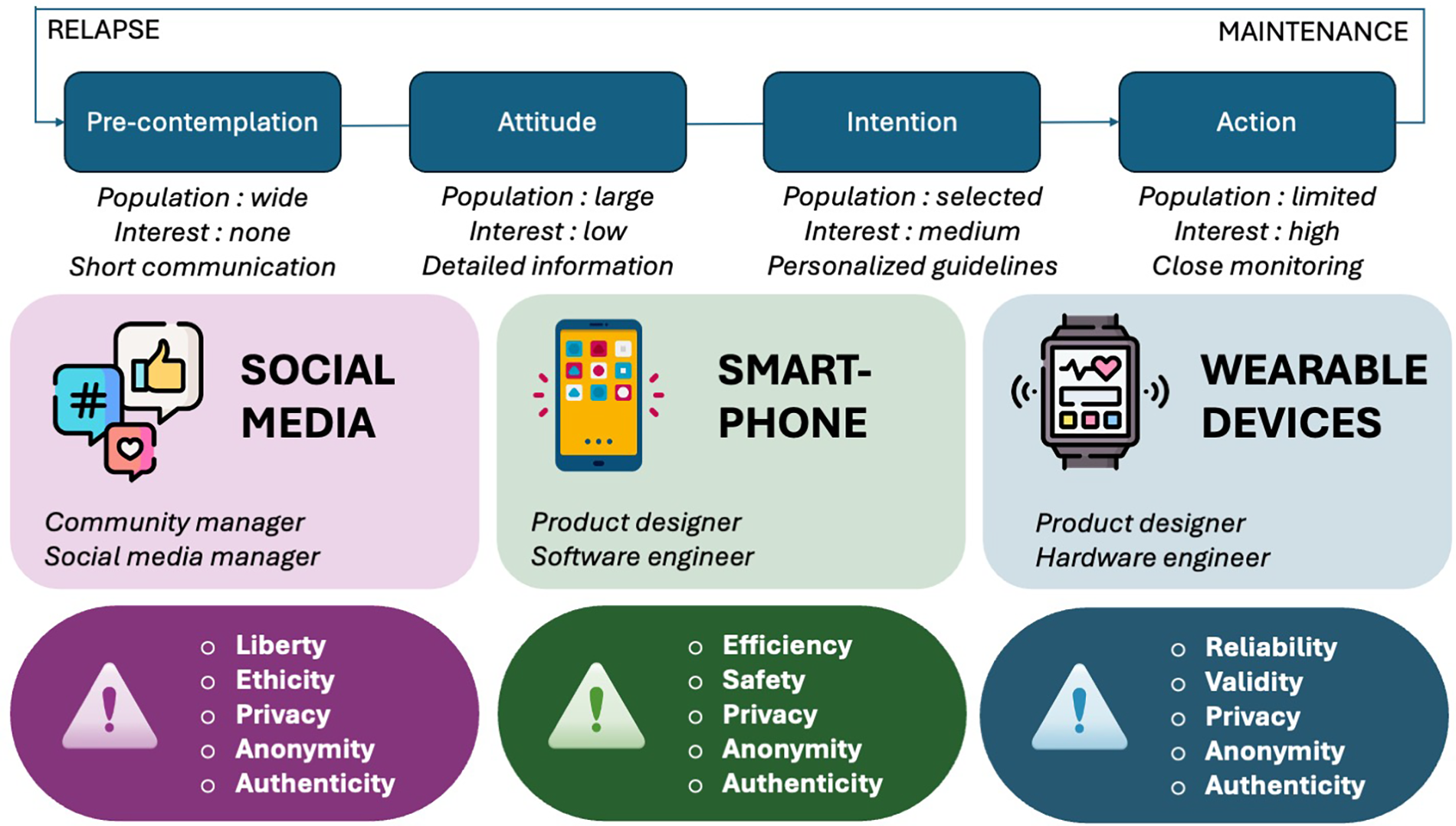

Now imagine Meta with direct access to biometric readings. Your resting heart rate. Sleep architecture (light, deep, REM phases). Stress levels measured through heart rate variability. Caloric burn. Whether you've been sick (measured through changes in resting heart rate). Whether you're anxious. Whether you're exercising intensely.

This isn't just personal information. This is intimate information.

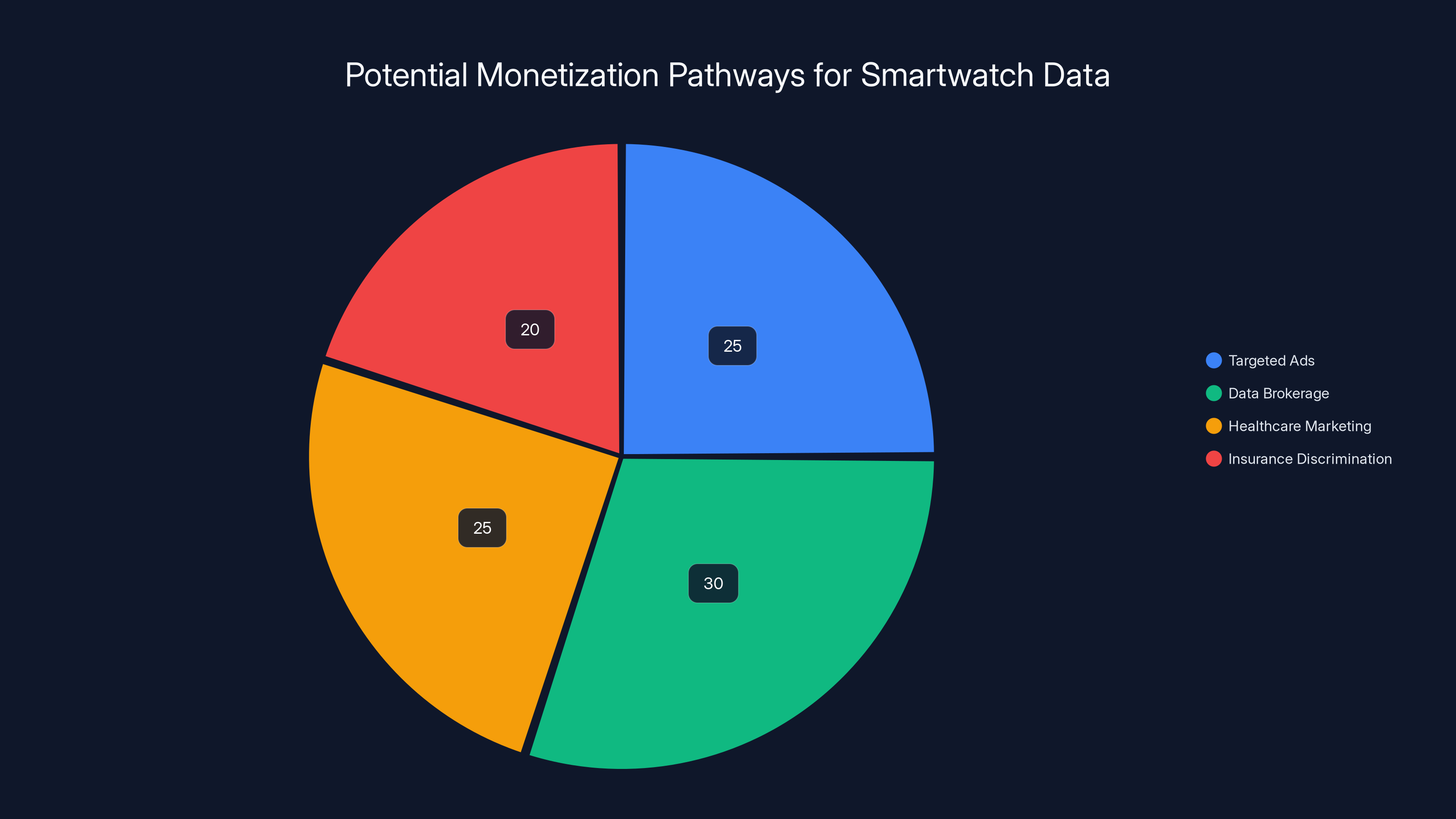

Estimated data shows potential monetization pathways for smartwatch data, with data brokerage and healthcare marketing as significant avenues.

The Specific Privacy Failures That Demand Attention

Meta's track record with health and wellness data specifically is... not good.

The WhatsApp Backups Controversy

After Meta acquired WhatsApp, the app introduced encrypted backups. Sounds good, right? Privacy-preserving encryption. But here's the catch: users could choose to back up their messages to Google Drive or iCloud. And if they did, Meta explicitly stated they would track and analyze the metadata: who talked to whom, when, and how frequently.

They claimed this was only for spam prevention. But the infrastructure was clearly designed to extract communication patterns. For a health-focused device, imagine Meta tracking when you contact your doctor, your therapist, your fitness trainer—not the content, but the pattern. That metadata alone reveals intimate details about your health status.

The Facebook Research Payments Controversy

In 2019, it was uncovered that Meta was paying users as young as 13 years old to install a "research app" called "Facebook Research." This app had virtually unlimited access to user device data: everything they did, viewed, or typed. Teenagers were getting paid up to $20 per month to essentially give Meta surveillance access to their phones.

Meta later apologized. But the strategy reveals something crucial: when Meta believes they need data, they'll go to extreme lengths to get it. They're not shy about asking users to trade privacy for money or services.

With a smartwatch, they wouldn't need to ask. The data collection would be baked into the device itself.

The Fitness Data De-anonymization Problem

This is where things get especially concerning. Research from MIT and other institutions has shown that fitness data—the exact type of data a smartwatch collects—can be re-identified even when anonymized. Your exercise patterns are like a fingerprint. The combination of when you exercise, how intensely, for how long, and which routes you take creates a unique signature.

Even if Meta promised to anonymize fitness data (which they probably wouldn't), that data could theoretically be matched back to your identity through other data streams Meta controls. They operate Instagram, Facebook, WhatsApp, and Threads. They see where you're posting from, what you're engaging with, and who you're following. Cross-reference that with anonymized fitness data showing someone leaving their home at 6 AM for a 5-mile run, and anonymity evaporates.
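To make the cross-referencing idea concrete, here is a minimal sketch of how a linkage attack can work in principle. Everything in it is hypothetical: the pseudonyms, names, locations, and the `link_pseudonyms` helper are invented for illustration, and it does not describe any system Meta is known to run. It only shows that matching a few (hour, location) pairs across two datasets can be enough to undo a pseudonym.

```python
from collections import Counter

# Hypothetical "anonymized" workout records: (pseudonym, hour of day, coarse start location).
anonymized_workouts = [
    ("user_a91", 6, "elm_st"),
    ("user_a91", 6, "elm_st"),
    ("user_a91", 6, "elm_st"),
    ("user_7c2", 18, "oak_ave"),
    ("user_7c2", 18, "oak_ave"),
]

# Hypothetical identified events from a second data stream, such as geotagged posts.
identified_events = [
    ("alice", 6, "elm_st"),
    ("alice", 6, "elm_st"),
    ("bob", 18, "oak_ave"),
    ("carol", 12, "pine_rd"),
]


def link_pseudonyms(workouts, events, min_matches=2):
    """Guess the identity behind each pseudonym by counting how often the
    same (hour, location) pair shows up in both datasets."""
    # Index the identified events by (hour, location) for quick lookup.
    by_key = {}
    for name, hour, place in events:
        by_key.setdefault((hour, place), []).append(name)

    # Every workout "votes" for each identity seen at the same hour and place.
    votes = {}
    for pseudonym, hour, place in workouts:
        for name in by_key.get((hour, place), []):
            votes.setdefault(pseudonym, Counter())[name] += 1

    # Keep only the best candidate per pseudonym, and only if it has enough votes.
    links = {}
    for pseudonym, counter in votes.items():
        name, count = counter.most_common(1)[0]
        if count >= min_matches:
            links[pseudonym] = name
    return links


links = link_pseudonyms(anonymized_workouts, identified_events)
print(links)
```

A real attack would use fuzzier matching on timestamps and GPS traces, but the principle is the same: the more data streams one company holds, the less a pseudonym protects you.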

Smartwatches collect a variety of biometric data, with activity levels and heart rate being the most significant components. Estimated data.

How Smartwatch Data Creates New Categories of Risk

Unlike a smartphone, which you use deliberately (you choose to open apps, visit websites, engage with content), a smartwatch is always on. It's always measuring. It's always recording.

Your phone knows what you do. Your smartwatch knows what your body does.

This distinction matters enormously.

Biometric Data as a Behavioral Prediction Tool

Meta employs some of the world's best machine learning engineers. They've built systems to predict human behavior with frightening accuracy. With biometric data from a smartwatch, those prediction capabilities explode.

Heart rate variability indicates stress and emotional state. Sleep patterns reveal depression, anxiety, and mental health challenges. Exercise intensity and frequency indicate body image concerns and eating behaviors. Resting heart rate trends show fitness level changes and potential overtraining or illness.

Meta wouldn't just use this data to sell you products. They'd use it to predict your vulnerabilities—and then target you with content, ads, or information designed to exploit those vulnerabilities.

Want to lose weight? Meta sees your increased exercise routine. They'll start showing you ads for diet supplements, weight loss programs, and fitness coaching—not because you searched for them, but because your wrist data betrayed your goals.

Feeling stressed? Your elevated resting heart rate and irregular sleep pattern don't lie. Meta will target you with anxiety-inducing content, conspiracy theories, or engagement bait designed to make you click (because engagement metrics are profitable, regardless of your mental health).

The Algorithmic Nudge Problem

Let's say Meta owns your smartwatch. They know your sleep schedule, your activity level, your stress patterns. They also own the apps you use—Instagram, WhatsApp, Facebook, Threads. They control your feed.

Now imagine they start algorithmically nudging you. You tend to sleep poorly on nights after you use Instagram heavily? The algorithm learns this. Does Meta reduce addictive content on nights when your sleep data shows degradation? Almost certainly not. Instead, they optimize for engagement, knowing this is a user cohort that will spend time scrolling at night.

This isn't conspiracy thinking. This is how algorithmic systems work when designed for engagement optimization rather than user wellbeing.

Comparing Meta to Competitors: Why This Matters

To understand the specific risk a Meta smartwatch poses, let's compare it to other popular options.

Apple Watch and the Privacy Comparison

Apple makes most of its money from hardware and services, not advertising. Yes, they collect health data from Apple Watch users. Yes, that data is centralized and valuable. But Apple's business model doesn't depend on selling your attention to advertisers. They're not building surveillance infrastructure to maximize advertising effectiveness.

Apple has been criticized for privacy issues, certainly. But their incentives are fundamentally different. They benefit from users trusting their devices. A privacy breach for Apple is bad for their brand and their business.

For Meta, a privacy breach is... also bad for their brand. But their business has survived repeated, documented privacy failures. They've been fined billions. They've been investigated by governments worldwide. And their revenue keeps growing because their advertising system is so effective that advertisers will pay premium prices regardless of how the data was obtained.

Fitbit and the Google Acquisition

Google bought Fitbit in 2021, and health privacy advocates were, understandably, concerned. Google is also an advertising company. Google does track users across websites and services.

But here's the difference: Google hasn't integrated Fitbit data into its advertising targeting system as aggressively as observers feared. Part of this is regulatory pressure (they committed to not using Fitbit health data for ad targeting in the EU). Part of this is reputation management.

And part of it is that Google has other massive data streams. They own search. They own YouTube. They own Android. They don't need your fitness data to profile you—they already have your search queries, your browsing history, and your video watching habits.

Meta is different. Meta has fewer data streams than Google. Social media is their primary data source. They're hungrier for new types of data because they have fewer alternatives.

Garmin and Specialized Purpose

Garmin is a company primarily focused on sports watches and fitness technology. They do collect data. But Garmin's business model is selling devices and services to fitness enthusiasts—not selling advertising.

Their incentives don't favor surveillance. They benefit from building trustworthy products. A Garmin watch can cost more than an Apple Watch, but people buy them because Garmin has earned a reputation in the fitness community.

Meta has no such reputation to protect in the wearable space. They're entering as a new player, which means they'd likely be aggressive about monetizing the data advantage they'd gain.

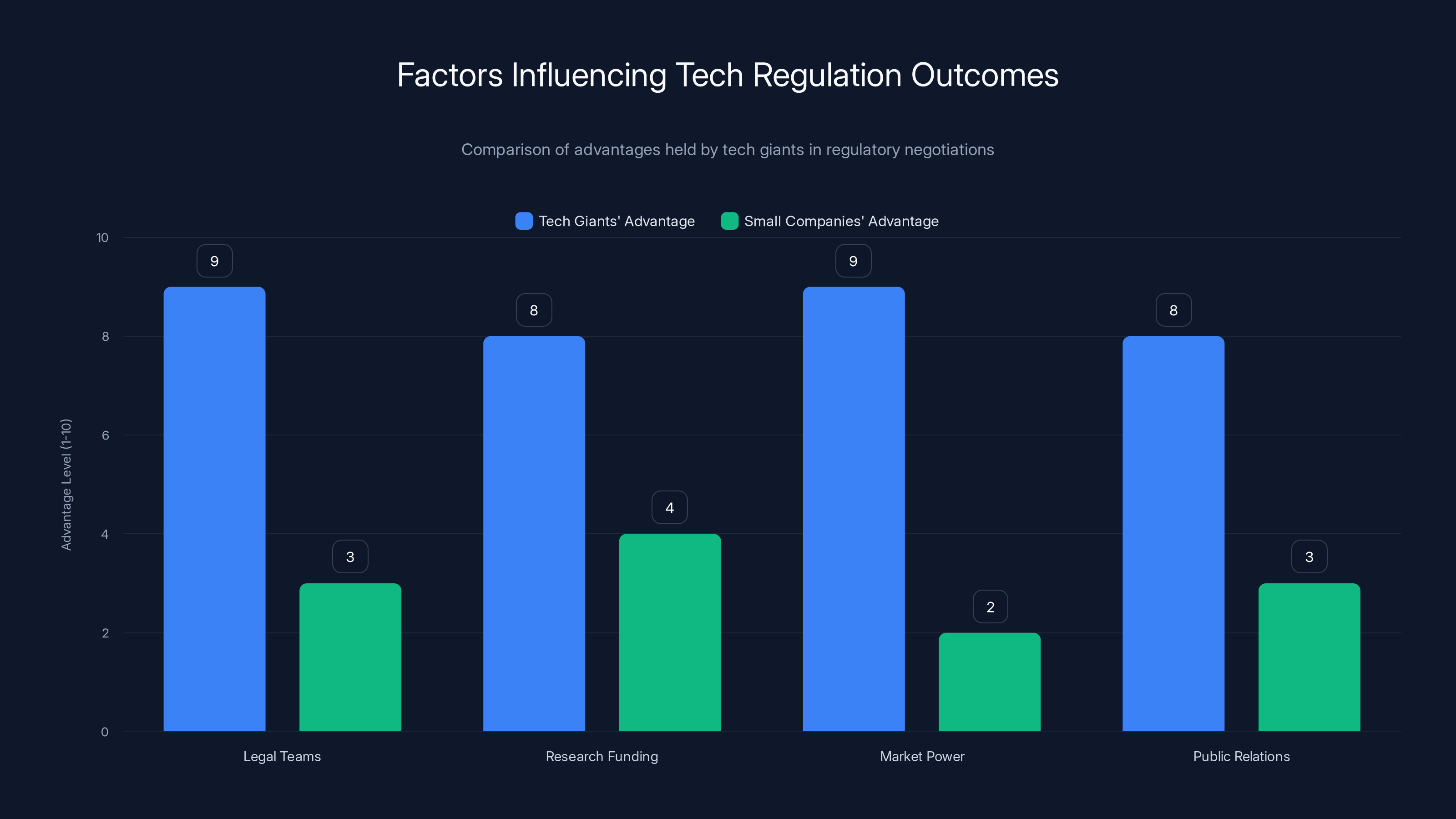

Tech giants have significant advantages in regulatory negotiations due to their legal, financial, and market resources. Estimated data.

The Regulatory Landscape and Why It Matters Less Than You'd Think

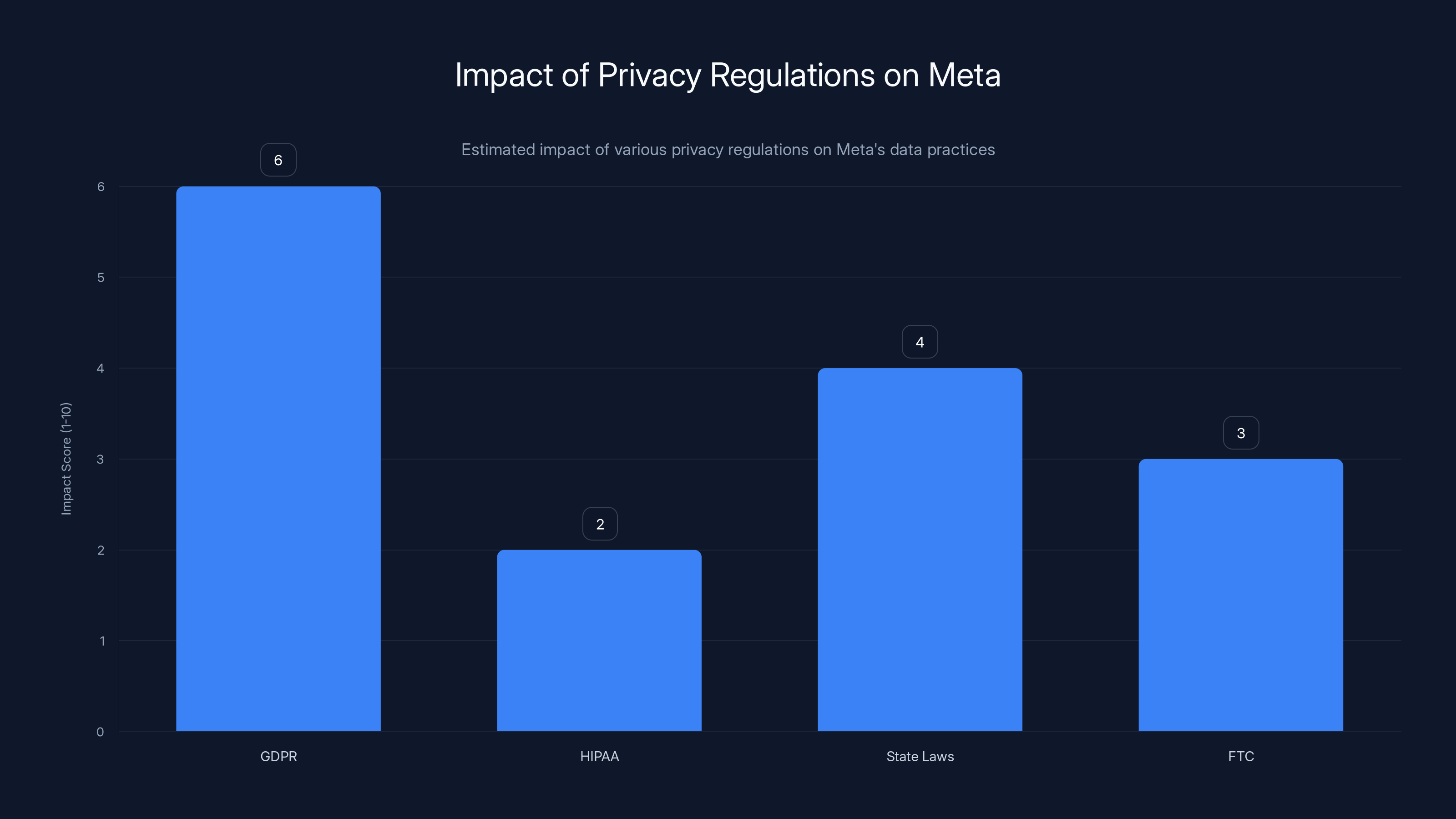

You might be thinking: "Okay, but regulations like GDPR and HIPAA exist, right? That's why Meta can't just harvest health data without consequences."

It's a reasonable thought. And it's partially correct. But regulations have serious limitations.

GDPR's Weak Enforcement on Meta

The EU's General Data Protection Regulation is the world's strongest privacy law. Meta operates in the EU and technically falls under GDPR. The company has been fined billions for violations.

But here's the thing about GDPR: it requires consent for non-essential data processing. Meta's strategy? Make consent a condition of using the service. Want to use Instagram or Facebook? Here's our privacy policy. Here are our data practices. Accept or leave.

Most users accept, because they don't want to leave. GDPR gives users rights to understand and control data. But exercising those rights requires time, knowledge, and often legal resources that most people don't have.

HIPAA Doesn't Apply (This Is Important)

HIPAA is the US health privacy law. It's strict. But it only applies to healthcare providers, insurance companies, and their business associates.

Meta is not a healthcare provider. A Meta smartwatch is not a medical device (unless they specifically market it that way). HIPAA doesn't apply.

This is the regulatory gap. Wearable companies that aren't regulated as healthcare providers can collect health data with minimal privacy requirements. The FTC can require reasonable data security practices, but that's about it.

State Laws Are Slowly Catching Up (But Not Quickly)

Some US states have passed biometric privacy laws (Illinois, Texas, Washington). Some have passed health privacy laws. But these regulations are uneven, fragmented, and often fail to address the specific practices that concern privacy experts.

Meanwhile, Meta operates globally. They'll build systems that satisfy the most restrictive jurisdiction (probably EU regulations) and then apply those practices globally. This means they'll claim they're following strong privacy standards while maintaining maximum data collection optionality in less-regulated markets.

The Hidden Monetization Pathways You Should Worry About

When you think about how Meta would monetize a smartwatch, you probably think: "They'll use the health data to sell targeted ads."

That's one pathway. But it's not the only one. And some alternatives are even more concerning.

Data Brokerage and Aggregation

Meta could sell aggregated, anonymized fitness data to insurance companies, employers, pharmaceutical companies, and researchers. Legally (in most jurisdictions), this is allowed. "Anonymized" data can be worth enormous amounts of money.

Imagine this scenario: A large employer wants to offer wellness programs. They contact Meta and ask: "Show us fitness trends among 35-45 year old males in Boston earning ..."

Now the employer sees that this demographic is less active and has higher resting heart rates in winter months. They design a winter fitness incentive program. All because Meta monetized health data in a way most users didn't anticipate.

Pharmaceutical and Healthcare Marketing

This is where things get dystopian. Imagine Meta's AI systems detect patterns in smartwatch data that suggest certain users are entering pre-diabetic or pre-hypertensive states based on fitness metrics and sleep quality.

Meta could sell this information to pharmaceutical companies in the form of "high-intent user cohorts." These are users who aren't explicitly searching for diabetes treatment, but whose biometric data suggests they're at risk. Pharmaceutical companies could then bid for advertising access to this cohort.

This isn't happening today (as far as we know). But the infrastructure would support it. And Meta's history suggests they'd pursue it if the profit margin were attractive.

Insurance and Employment Discrimination

This is the nightmare scenario that keeps privacy advocates awake. If Meta's smartwatch data becomes integrated with insurance company databases (either through data sales or Meta's own insurance initiatives), users could face discrimination based on their fitness data.

Someone with irregular sleep patterns might be declined for life insurance. Someone with consistently high resting heart rate might be charged higher premiums. Someone whose fitness data shows declining activity after a certain age might be marked as a higher-risk employee.

Again, this isn't explicitly allowed under current law in most jurisdictions. But legal frameworks move slowly, and tech companies operate quickly. Meta would likely build the capability first, argue for it later.

GDPR has the highest impact on Meta's data practices, while HIPAA has minimal effect due to its specific scope. State laws and FTC regulations have moderate influence. Estimated data.

What Meta's Previous Health Data Initiatives Tell Us

Meta has actually tried to build health-focused products before. And each attempt reveals important things about their approach to health data.

Meta's Health Monitoring Initiatives

Meta conducted research into health monitoring on its platforms. The company worked on COVID-19 symptom tracking, pandemic-related misinformation monitoring, and mental health resource recommendations.

Sounds noble, right? But here's what actually happened: Meta built the infrastructure to identify users discussing health symptoms on Facebook and Instagram. They created systems to detect health-related content and conversations. They tested algorithms that could infer health status from posting patterns.

They claimed this was for public health. And maybe some of it was. But they also built a system to identify and track health-related discussions and users. That data, that capability, that infrastructure—it all gets folded into Meta's larger user profile.

The Mental Health Prediction Problem

Meta's AI systems have been trained to predict mental health status based on Facebook activity. Research has shown that Meta's models can identify depression, anxiety, and suicidal ideation based on posting patterns.

This is powerful information. It's also dangerous information in Meta's hands, because Meta's business model incentivizes exploiting human vulnerabilities for engagement.

A user showing signs of depression might be algorithmically steered toward content that triggers emotional responses—because engagement is profitable, regardless of impact on mental health. Someone showing signs of anxiety might see ads for anxiety-inducing products or content.

Meta's defense is that they don't explicitly use mental health predictions for this purpose. But absence of explicit policy doesn't equal absence of impact. Algorithms optimize for what they're designed to optimize for. If Meta's feed is optimized for engagement, and Meta's systems know which users are vulnerable to engagement, the outcome is predictable.

The Tone-Deaf Public Health Launches

Meta tried to position itself as a health-focused company. They created features for tracking water intake, reminders for medication, and mental health resources.

But every feature came with the same underlying data collection. Every health tool was also a health data extraction tool. And the primary purpose wasn't health outcomes—it was capturing more granular health data about users.

Compare this to Apple's health features or Google Fit. Both of these companies built health features too. But the incentive structures are different. Apple wants you to trust Apple with your health data so you'll buy more Apple devices. Google wants you to use Google Fit so you'll engage more with Google services.

Meta wants your health data so they can build more effective advertising profiles.

The Rationalization Meta Would Offer (And Why It Doesn't Hold Up)

If Meta released a smartwatch tomorrow, they'd make certain promises about privacy. Let me predict what they'd say, and why each claim has problems.

"We'll anonymize the data"

Anonymization sounds good. It's meaningless. As mentioned earlier, fitness data is highly identifiable. Combine smartwatch data with Meta's other data sources (Instagram posts, Facebook location history, Threads activity), and anonymity becomes theoretical.

Moreover, anonymization is often reversible. If Meta anonymizes data today but retains the keys to de-anonymize it later, that's not real privacy protection.

"Users control their own data"

This is the classic meta-response (pun intended). "You can delete your data," they'd say. "You can choose not to sync with certain apps. It's your choice."

Choice without meaningful alternatives isn't really choice. If you want a smartwatch, and Meta's is the most affordable option, integrates best with the Meta ecosystem you already use, and offers the best overall experience, then you don't really have a choice. You just have the illusion of one.

Moreover, "control" in Meta's framing means control within their system. You can choose not to share specific data types. But Meta still owns the infrastructure. They still set the terms. The asymmetry of power remains.

"We won't sell data to third parties"

Meta probably wouldn't directly sell individual user data. That would be too blatant a violation. Instead, they'd use the data internally (for advertising) and sell access to anonymized, aggregated insights.

This distinction doesn't matter to your privacy. You're still being profiled, still being targeted, still being optimized against.

Meta generates a staggering 98% of its revenue from advertising, highlighting its heavy reliance on data-driven ad targeting.

The Broader Ecosystem Problem: Smartwatch Data in a Meta-Dominated World

Here's something important: a Meta smartwatch doesn't exist in isolation. It exists within Meta's broader ecosystem and within the ecosystem of wearable devices generally.

Network Effects and Lock-In

If Meta releases a smartwatch that integrates seamlessly with Instagram, Facebook, WhatsApp, and Threads, there's pressure to use it—not because it's technically superior, but because it's integrated. Your fitness data syncs automatically. Your workouts populate Instagram Stories. Your health insights influence which content you see.

This creates lock-in. You can't easily switch to another smartwatch without losing that integration. Meta profits from lock-in because it prevents users from migrating to privacy-respecting alternatives.

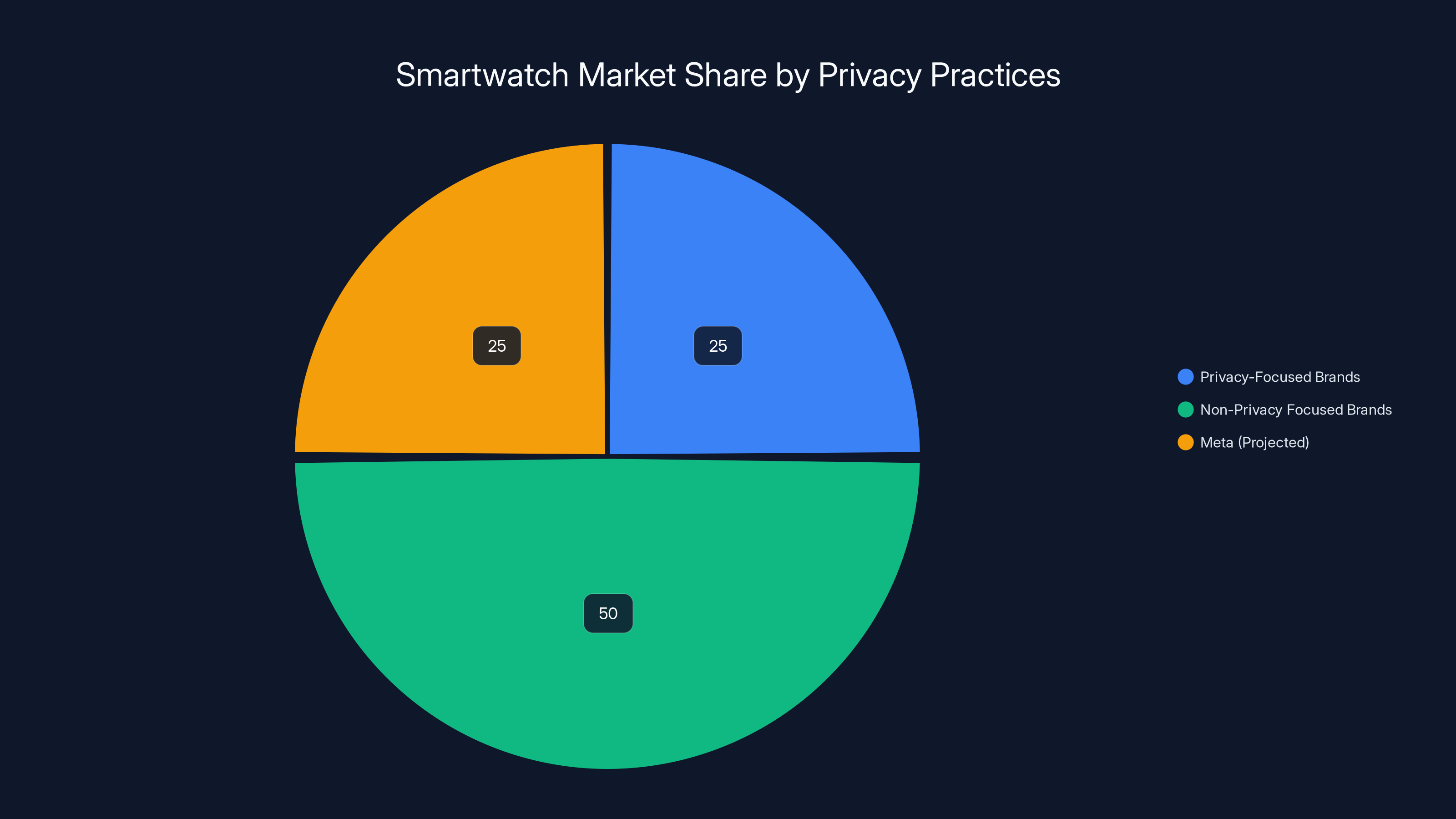

The Competitive Disadvantage of Privacy

Here's a paradox: companies that respect privacy are at a competitive disadvantage against companies that don't. Apple, despite its privacy claims, is losing market share to cheaper smartwatches made by companies with weaker privacy practices.

Meta would undercut Apple on price by monetizing health data. They'd offer "free" watch features funded by ad targeting. They'd create a race to the bottom where privacy becomes a luxury good.

Cross-Device Tracking at Scale

Meta already tracks users across devices. They know when you're on mobile, desktop, or their VR headsets. A smartwatch would be another tracking vector.

Imagine Meta's algorithm learning that users with high fitness activity on weekends tend to scroll Instagram for longer on Sunday evenings (post-workout relaxation time). That behavioral pattern gets baked into the algorithm. Every wearable user's Sunday evening feed gets adjusted accordingly.

One more data point. One more optimization vector. One more way to extract maximum engagement.

What Privacy-Conscious Consumers Should Actually Do

Obviously, the simplest recommendation is: don't buy a Meta smartwatch. But that's not realistic for everyone, and it ignores the broader issue.

If You Already Use Meta Products

If you're on Facebook or Instagram already, you're already in Meta's ecosystem. A Meta smartwatch would deepen that relationship, but it wouldn't fundamentally change your privacy exposure.

That said, you could:

- Minimize the data Meta collects by using privacy-focused browser settings and ad blockers

- Use privacy-respecting third-party apps to manage your social media rather than the official apps

- Consider gradually migrating to non-Meta platforms (easier said than done, I know)

- If you choose a Meta smartwatch, deny as many permission requests as possible

If You're Choosing Your First Smartwatch

Consider these alternatives:

- Apple Watch: Privacy isn't perfect, but incentives are better aligned with user benefit

- Garmin: Purpose-built for fitness, minimal advertising integration

- Withings: European company with stricter privacy standards baked into its DNA

- Samsung Galaxy Watch: Less aggressive data extraction than Meta, more aggressive than Apple

The Systemic Problem

Honestly? Individual choices matter, but they're not sufficient. The real solution is regulation. We need laws that:

- Explicitly restrict use of health data for advertising purposes

- Require true consent for biometric data collection

- Create consequences painful enough to deter violations

- Allow private lawsuits (not just regulatory fines)

- Mandate transparency about data uses

Regulation won't happen overnight. Tech companies have massive lobbying budgets. But it's the only lever that actually works against surveillance capitalism at scale.

Estimated data shows non-privacy focused brands, including a potential Meta smartwatch, capturing a larger market share due to lower costs and integrated ecosystems.

The Specific Risks of Heart Rate and Sleep Data

Let me get into the weeds on what a smartwatch actually measures and why each measurement concerns me.

Heart Rate Variability and Your Inner Life

Your heart rate isn't constant. It fluctuates based on your autonomic nervous system, the part of your nervous system that you don't consciously control. This variability is measured as HRV (heart rate variability), and it reveals an enormous amount about your mental and physical state.

High HRV generally indicates good health and stress resilience. Low HRV suggests stress, overtraining, illness, or mood disorders. It's one of the most sensitive biomarkers for psychological stress.
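For a sense of how little raw data this takes, here is a minimal sketch of RMSSD (root mean square of successive differences), one common time-domain HRV metric. The beat-to-beat intervals below are invented for illustration, and I'm not claiming this is the calculation any particular watch vendor uses.

```python
import math


def rmssd(rr_intervals_ms):
    """Root mean square of successive differences between heartbeats, in ms."""
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two RR intervals")
    # Difference between each beat-to-beat interval and the next one.
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


# Invented sample data: time between consecutive heartbeats, in milliseconds.
relaxed = [800, 850, 790, 860, 800]   # intervals vary a lot -> higher HRV
stressed = [700, 705, 698, 703, 700]  # intervals barely vary -> lower HRV

print(f"relaxed RMSSD:  {rmssd(relaxed):.1f} ms")
print(f"stressed RMSSD: {rmssd(stressed):.1f} ms")
```

A handful of heartbeats is enough to produce a number that separates the two states. A watch on your wrist collects thousands of them a day.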

Meta could use this to identify:

- Users undergoing psychological stress (target them with escapist content)

- Users with mood disorders (target them with products that exploit anxiety)

- Users with health conditions that cause irregular autonomic response

- Users who are sleep-deprived (target them with stimulating content during critical focus time)

For each of these categories, the optimal algorithm from a revenue standpoint is probably harmful to the user. More stress = more engagement. More anxiety = more clicks. More sleep deprivation = more daytime scrolling.

Sleep Architecture and Mental Health

Sleep is where your body processes emotions and consolidates memories. Your smartwatch doesn't just measure total sleep—it measures sleep stages. Deep sleep. REM sleep. Light sleep. Awakenings during the night.

This granular data is incredibly revealing. Disturbed REM sleep suggests depression or trauma. Frequent awakenings suggest anxiety. Short sleep duration suggests depression, bipolar disorder, or ADHD.

Meta could use this to identify users in psychological crisis. Then target them accordingly.

Think about the ethical implications. A user experiencing depression (indicated by poor REM sleep and short total sleep duration) is algorithmically steered toward isolating, doomscrolling content. Not because Meta explicitly decided "depressed users are profitable," but because algorithms optimize for engagement, and that kind of content is what keeps depressed users scrolling.

Movement and Your Physical Health

Smartwatch movement data seems innocuous. Just tracking when you exercise. But it reveals:

- Whether you have a sedentary job or active job

- Your socioeconomic status (time for exercise correlates with income)

- Your health status (illness typically causes movement decrease)

- Your mental health (depression causes movement decrease)

- Your body image concerns (spike in movement tracking suggests weight loss attempt)

- Your social patterns (group workouts show up in movement data)

Meta could use this to determine which users are:

- Vain (high fitness tracking = likely to engage with appearance-focused content)

- Financially stressed (low gym attendance could correlate with financial hardship)

- Lonely (no group workout activity)

- In crisis (sudden activity decrease)

Again, none of this requires Meta to explicitly use movement data for manipulation. It just requires them to optimize algorithms for engagement while having access to movement data. The outcome is automatic.

Real-World Examples of How Health Data Gets Misused (Because It Already Is)

You don't have to imagine how health data could be misused. It's already happening in the real world. Let me give you some documented examples.

The Uber Health Scenario

Uber, the ride-sharing company, offers a product called Uber Health that provides rides to medical appointments. It's positioned as a health initiative. But it also gives Uber data about which users are seeking medical care, when, and where.

Uber's insurance partners could theoretically use this data to identify high-healthcare-utilization customers. Not for better healthcare, but for pricing insurance accordingly.

This is legal (mostly). It's still happening. And it shows how ostensibly health-focused products create data collection vectors.

The Glass Bridge Life Insurance Scenario

A now-defunct company called Glass Bridge offered life insurance that was cheaper if customers wore activity trackers. Sounds good: incentivize healthy behavior. But what actually happened is that insurance companies got real-time data about customer health status and could potentially use it to deny or reprice coverage.

The company shut down, but not before showing that health data monetization reaches into insurance and financial services.

The Oura Ring Controversy

Oura makes a wearable ring that tracks biometric data. Recently, Oura received investment from venture capital firms with ties to insurance companies. Immediately, users became worried: would insurance companies use Oura data for underwriting?

Oura said no. But the fact that insurance companies were interested in investing tells you they see the value of health data for risk stratification and pricing.

The Fitbit-Google Integration Concerns

When Google bought Fitbit, privacy advocates worried that Google would integrate Fitbit health data into its advertising profiles. Google publicly committed not to do this. But in 2023, it was revealed that Google had started exploring partnerships with healthcare providers—creating a pathway for health data integration.

Each of these examples shows the same pattern: health data collection capabilities get built, and monetization pathways follow. Sometimes explicitly, sometimes implicitly through regulatory pressure.

Meta would follow the same pattern.

The Technical Architecture That Would Enable the Worst Scenarios

Let me get into the technical weeds on how Meta would actually implement a smartwatch and why that implementation matters.

Syncing and Real-Time Data Collection

A Meta smartwatch would sync with Meta's servers constantly. Not just when you explicitly upload data, but continuously throughout the day. Heart rate readings. Movement. Sleep stages. All flowing back to Meta's data centers.

This data wouldn't be siloed in a "health" database. It would be merged with your other Meta data in real time. Your 6 AM heart rate spike (anxiety? alarm clock?) would be correlated with your 6:30 AM Instagram posts (what did you post that morning?). Your Friday evening activity would be correlated with your social media behavior.

Meta's machine learning systems would find patterns that even humans couldn't identify. Correlations between biometric data and behavioral data that would be impossible to detect without algorithmic analysis.
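Here is a toy sketch of the simplest form of that correlation: pairing heart rate spikes with whatever app activity follows them. The readings, event names, the 100 bpm threshold, and the 45-minute window are all assumptions I made up for illustration. Nothing here reflects a known Meta pipeline.

```python
from datetime import datetime, timedelta

# Hypothetical heart rate readings from a watch: (timestamp, beats per minute).
heart_rate = [
    (datetime(2024, 1, 5, 6, 0), 112),
    (datetime(2024, 1, 5, 9, 0), 64),
    (datetime(2024, 1, 5, 21, 0), 105),
]

# Hypothetical app activity from the same account: (timestamp, action).
app_events = [
    (datetime(2024, 1, 5, 6, 30), "instagram_post"),
    (datetime(2024, 1, 5, 13, 0), "facebook_scroll"),
    (datetime(2024, 1, 5, 21, 20), "instagram_scroll"),
]


def events_after_spikes(readings, events, threshold_bpm=100,
                        window=timedelta(minutes=45)):
    """Pair each heart rate spike with the app events that follow it
    within the given time window."""
    pairs = []
    for spike_time, bpm in readings:
        if bpm < threshold_bpm:
            continue  # not a spike, skip it
        for event_time, action in events:
            if spike_time <= event_time <= spike_time + window:
                pairs.append((spike_time, bpm, action))
    return pairs


pairs = events_after_spikes(heart_rate, app_events)
for spike_time, bpm, action in pairs:
    print(f"{spike_time:%H:%M} spike ({bpm} bpm) followed by {action}")
```

Two loops and a time window are enough to connect what your body did to what you did next on your phone. Production-scale machine learning would only find subtler versions of the same link.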

Cross-Device Tracking Infrastructure

Meta already tracks users across devices. They track when you move from desktop to mobile. They track when you switch between Facebook and Instagram. They track time spent in each app.

A smartwatch would be another node in this tracking network. Your morning run becomes a data point in your daily engagement profile. Your sleep quality becomes a variable in your evening content recommendation algorithm.

The Advertising Optimization Layer

Meta's ad targeting system is absurdly sophisticated. Advertisers can target users by dozens of variables: interests, behaviors, purchase history, income level, relationship status, education, even personality traits (inferred from Facebook data).

With smartwatch data, advertisers could add biometric variables. "Show my fitness app ads only to users with low activity levels." "Show my anxiety medication ads only to users with high stress indicators." "Show my sleep aid ads only to users with poor sleep quality."

These capabilities don't require Meta to explicitly violate privacy policies. They're just advertising tools. Tools that happen to exploit human vulnerabilities.
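To show how mundane this would look in practice, here is a hedged sketch of biometric cohort targeting. The `UserProfile` fields, the thresholds, and the `build_cohort` helper are all hypothetical. This is not Meta's ad API, just an illustration of how an audience filter could be expressed once biometric fields sit next to everything else in a profile.

```python
from dataclasses import dataclass


@dataclass
class UserProfile:
    user_id: str
    avg_daily_steps: int
    avg_sleep_hours: float
    stress_score: float  # hypothetical 0..1 score derived from HRV


def build_cohort(profiles, predicate):
    """Return the IDs of all users whose profile matches the advertiser's rule."""
    return [p.user_id for p in profiles if predicate(p)]


# Invented profiles for illustration.
profiles = [
    UserProfile("u1", 3000, 5.5, 0.8),
    UserProfile("u2", 12000, 7.8, 0.2),
    UserProfile("u3", 4500, 6.0, 0.4),
]

# Each advertiser "audience" is just a one-line filter over body data.
low_activity = build_cohort(profiles, lambda p: p.avg_daily_steps < 5000)
poor_sleepers = build_cohort(profiles, lambda p: p.avg_sleep_hours < 6.5)
high_stress = build_cohort(profiles, lambda p: p.stress_score > 0.7)

print("fitness app ads ->", low_activity)
print("sleep aid ads   ->", poor_sleepers)
print("anxiety med ads ->", high_stress)
```

The point of the sketch is that no special machinery is required. Once the data exists in the profile, targeting a vulnerability is the same operation as targeting an interest.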

Comparing the Risk: Meta vs. Every Other Major Tech Company

I want to be fair here. All major tech companies collect data. All of them monetize it. The difference with Meta is one of degree and incentive structure.

Google's Surveillance Capitalism

Google is enormous, and Google definitely collects health-adjacent data. But Google's primary revenue source is search advertising. They know what you're searching for. That's incredibly revealing data about your health, your interests, your vulnerabilities.

But those ads are keyed to what you type into a search box, not to deep behavioral profiling for social media targeting. And Google has diversified revenue sources (YouTube, Cloud, hardware). They don't need health data as badly.

Amazon's Consumer Data Advantage

Amazon knows what you buy. They know your purchase history, your browsing history, your price sensitivity. That's valuable. But Amazon's core business is selling products, not advertising.

Amazon does sell advertising, and that business is growing rapidly. But it's not their primary business. They could lose their advertising business tomorrow and still be profitable.

Meta can't lose advertising and remain profitable. Advertising is Meta.

Apple's Privacy Positioning (But Reality Is Complicated)

Apple makes 50%+ of revenue from hardware. They don't need your data as desperately as Meta does. They benefit from privacy messaging because it differentiates their products.

But Apple has its own privacy issues. They work with governments on surveillance. They have weaker privacy practices in China. They announced on-device CSAM scanning in 2021, then shelved the plan after a backlash. Their privacy record isn't perfect.

But incentive-wise, Apple benefits from privacy. Meta benefits from surveillance.

Microsoft's Enterprise Focus

Microsoft has moved toward enterprise and cloud services. They collect massive amounts of data from businesses. But they're less aggressive about monetizing consumer health data because their primary revenue is from enterprise customers, not advertising.

Meta's Desperate Hunger for Data

Meta is in a precarious position. They're facing regulatory pressure. They're losing younger users to TikTok. They've invested billions in the metaverse with unclear returns. Their advertising business is under pressure from Apple's privacy changes.

Meta needs new data streams. New behavioral signals. New ways to understand users and predict their vulnerabilities.

A smartwatch would be perfect for that. Biometric data that their competitors don't have. An always-on sensor. Direct integration with their ecosystem.

Meta would use a smartwatch more aggressively than any competitor would. Not because Meta is uniquely evil, but because Meta is uniquely desperate.

The Regulatory Future and Why You Shouldn't Count on It

You might think that regulations are getting stricter, and companies will be forced to respect privacy. I hope you're right. But the history of tech regulation is discouraging.

Regulations Lag Technology by Years

Smartwatch technology exists. Regulatory frameworks that adequately address smartwatch data privacy don't. By the time regulations are written, negotiated, debated, and implemented, the data has been collected and monetized for years.

Meta could release a smartwatch today. Run it for five years. Collect health data on tens of millions of users. Build an incredibly sophisticated health data monetization infrastructure. And then, if new regulations emerge, they could claim they had been operating in a legal gray zone.

Tech Companies Win Regulatory Negotiations

Apple framed its proposed scanning of iCloud Photos for CSAM as a child-safety measure (privacy advocates saw it as unprecedented surveillance, and Apple eventually shelved the plan). Amazon has framed real-time location tracking as convenience. Google has framed comprehensive web tracking as ad relevance.

Meta would argue that smartwatch health data is about providing better recommendations and experiences. They'd point to features that legitimize their data collection. They'd fund research showing benefits. They'd lobby regulators.

Tech companies have massive advantages in these negotiations:

- They have sophisticated legal teams and lobbyists

- They have research funding to support their positions

- They have market power (what does the government do if Meta just refuses to comply?)

- They have public relations budgets to shape the narrative

Small companies like Oura or Withings can't compete on this level. So regulatory winners are usually big companies, and big companies know this.

Regulations Are Often Poorly Designed

GDPR is the EU's strongest privacy law. But Meta operates under GDPR and continues to collect and monetize data aggressively. Why? Because GDPR has loopholes.

Requiring consent is one loophole—if Meta conditions service use on data collection, users have chosen (in theory) to give consent.

Allowing data processing for "legitimate interests" is another—Meta can argue that health data collection serves legitimate interests (improving user experience, platform security, etc.).

New regulations on wearables and health data would likely have similar loopholes. Regulators are usually one step behind tech companies' innovation in exploitation.

The Path Forward: What You Should Actually Worry About

I've painted a dark picture. Let me conclude with what actually matters and what you can actually do.

The Concrete Risk: Not Conspiracy, But Incentive Alignment

I'm not claiming Meta is secretly building surveillance infrastructure to sell to insurance companies (though they might). I'm claiming Meta's incentive structure pushes them toward surveillance, and they'll implement what's profitable and legal.

If health data monetization through advertising is profitable (and it would be), Meta will do it. If health data brokerage is legal (and it mostly is), Meta will explore it. If aggressive targeting of vulnerable users is possible (and it's not just possible, it's standard practice), Meta will implement it.

These aren't evil mastermind decisions. They're rational business decisions given Meta's incentive structure.

The Systemic Risk: A Race to the Bottom

If Meta succeeds with a privacy-hostile smartwatch, competitors will follow. Apple might be pressured to collect more health data to compete. Google will certainly do so. The standard for wearable privacy will drop.

Users will have a choice between privacy-respecting smartwatches (expensive, fewer features) and privacy-hostile smartwatches (cheap, integrated, better UX). Most will choose the latter.

Privacy becomes a luxury good. The poor get surveillance. The rich get privacy.

The Real Solution: Regulation + Abstraction

Regulation: We need laws that explicitly restrict use of biometric data for behavioral targeting. Laws with teeth. Laws with consequences. This won't happen overnight, but it's the only thing that actually works.

Abstraction: We need companies that abstract away from big surveillance platforms. Companies like Runable that focus on empowering users rather than exploiting them. Companies that build tools for user benefit, not advertiser benefit.

You could also consider building your own solutions. For example, Runable offers $9/month automation tools that empower you to build custom workflows and automate tasks without relying on surveillance capitalism platforms. It's not perfect (no solution is), but it's a step toward reducing dependence on Big Tech's ecosystem.

Individual Choice: You can choose not to participate. You can use privacy-respecting alternatives. You can minimize your data footprint. It's inconvenient, but it's possible.

The Bottom Line: A Meta Smartwatch Should Concern You

A Meta smartwatch would give Mark Zuckerberg and company a direct pipeline to your body. Not your content consumption patterns. Not your social connections. Your biometric data. Your intimate health information.

Given Meta's history, business model, incentive structure, and technical capabilities, there's no reason to believe they'd handle that data carefully. There are many reasons to believe they'd monetize it aggressively.

So my advice is straightforward: don't buy one. And if you're already in Meta's ecosystem, consider ways to minimize your data contribution. And if you care about this at a systemic level, push for regulation, support privacy-respecting companies, and talk to others about why this matters.

The surveillance capitalism ecosystem is profitable for the companies involved, and only for them. That's the whole problem.

FAQ

What exactly is health data and why is it valuable?

Health data includes biometric measurements like heart rate, sleep patterns, activity levels, stress indicators, and other physiological readings. It's valuable because it reveals intimate details about your physical and mental state—information that advertisers and data brokers can use to identify vulnerabilities and predict behavior. Unlike search queries or browsing history, health data is extremely difficult to fake or hide, making it particularly revealing and profitable for sophisticated profiling.

How would Meta actually use smartwatch data for advertising?

Meta could integrate smartwatch biometric data into their advertising profile for each user, allowing advertisers to target by health status. For example, fitness companies could target users with low activity levels, or pharmaceutical companies could target users showing stress indicators. More concerningly, Meta could use the data to optimize their own algorithms—identifying vulnerable users and steering them toward engaging content, regardless of its effects on user wellbeing. This wouldn't necessarily require explicit Meta policy; it would emerge naturally from algorithms optimized for engagement.

Isn't my health data already protected by laws like HIPAA?

No. HIPAA only applies to healthcare providers, health plans, healthcare clearinghouses, and their business associates. A tech company like Meta selling a consumer wearable is none of these, so the data its watch collects generally falls outside HIPAA. The FTC can require reasonable data security practices, but there is no comprehensive federal law restricting how consumer wearable companies use health data for advertising or profiling. This is a major regulatory gap that Meta would likely exploit.

How does a smartwatch's data collection differ from a smartphone's health apps?

Smartphones are used deliberately—you choose when to open apps and what to measure. Smartwatches are continuous sensors that measure biometric data all day and night without active user input. They collect data while you sleep, work, exercise, and rest. The constant, passive nature of smartwatch data collection creates unique privacy risks. A smartwatch provides Meta with far more granular behavioral and physiological information than a smartphone could.

Could I just not install certain apps or permissions on a Meta smartwatch?

Theoretically, yes. But in practice, no. Meta controls the operating system and the default applications. They'd build health data collection into the core OS, not optional apps. Denying permissions typically degrades user experience significantly (watch won't sync, notifications won't work, features become unreliable). Additionally, metadata about what you denied is itself revealing. Meta can infer your privacy consciousness and potentially target you differently based on your privacy settings.

What's the difference between Meta and Apple or Google in terms of health data privacy?

The key difference is incentive structure. Apple makes most of its money from hardware and services, not advertising. Google makes most of its money from search advertising, not behavioral profiling. Both companies benefit from privacy messaging. Meta makes 98% of its revenue from advertising and relies on behavioral data to target ads effectively. Meta is incentivized to collect more health data and use it more aggressively than competitors. None of these companies are perfectly privacy-respecting, but Meta's business model creates the strongest incentive to exploit health data.

Can I trust Meta's privacy promises about health data?

Meta's track record suggests caution. The company has repeatedly violated privacy policies, misused user data, paid major fines, and implemented surveillance despite public commitments to privacy. When Meta promises not to use health data for advertising, you're essentially trusting the company that created systems to profile depressed teenagers and target vulnerable populations. Historically, Meta has used every piece of data it can access for maximum profit. Health data would follow the same pattern.

What are the most sensitive health insights a smartwatch could reveal?

Heart rate variability reveals your stress and emotional state in real time. Sleep architecture (light, deep, REM stages) reflects mental health status: depression, anxiety, and trauma all show up in sleep disruption. Resting heart rate trends indicate fitness level and health status changes. Activity patterns hint at your schedule, income level, social life, and mental health. Together, these measurements create a comprehensive health profile more revealing than most people share with their doctors. Meta would have continuous access to this information.
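For a sense of how little raw data this takes, here is a minimal sketch of RMSSD, a standard time-domain measure of heart rate variability computed from the gaps between consecutive heartbeats. Lower values are often associated with stress, though interpretation varies by person. The sample intervals are made up, and this says nothing about how any particular watch computes its own stress score.

```python
import math


def rmssd(rr_intervals_ms):
    """Root mean square of successive differences between beat-to-beat intervals (ms)."""
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two intervals")
    # Differences between each interval and the one that follows it.
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


# Hypothetical beat-to-beat intervals in milliseconds.
sample = [800, 810, 790, 800]
print(round(rmssd(sample), 2))
```

A watch that records beat-to-beat intervals around the clock can recompute a number like this every few minutes, which is what turns a heart rate sensor into a running stress log.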

What practical steps can I take to protect my health privacy?

If you're buying a smartwatch, choose companies with better privacy incentives: Apple Watch (device revenue focused), Garmin (sports focus), or Withings (European, stricter standards). If you already use Meta products, use privacy browser extensions, deny as many app permissions as possible, and consider gradually migrating to non-Meta platforms. At a systemic level, support privacy regulation efforts, vote for candidates focused on tech regulation, and consider using privacy-respecting tools like Runable for productivity needs instead of relying on Meta or other surveillance platforms.

Key Takeaways

- Meta's business model (98% advertising revenue) creates an incentive to monetize health data more aggressively than competitors do

- Biometric data from smartwatches is far more revealing than smartphone data—heart rate, sleep patterns, and activity levels expose mental and physical health status

- Meta has a documented history of violating privacy policies, misusing health data, and targeting vulnerable users despite public commitments to privacy protection

- Regulatory frameworks like HIPAA don't apply to consumer wearables, creating a legal gap for health data exploitation

- Cross-referencing smartwatch biometric data with Meta's existing social media data makes anonymization impossible despite company claims

- Privacy-respecting smartwatch alternatives exist (Apple Watch, Garmin, Withings) but face competitive disadvantage against privacy-hostile cheaper options

- Solutions require both individual choice (avoiding Meta products) and systemic regulation explicitly restricting use of biometric data for behavioral targeting

![Meta Smartwatch Privacy: Why Your Health Data Matters [2025]](https://tryrunable.com/blog/meta-smartwatch-privacy-why-your-health-data-matters-2025/image-1-1771843118663.jpg)