Introduction

Last week, Minnesota made headlines by becoming the first state to pass a law specifically targeting the creation and distribution of AI-generated fake nudes. This groundbreaking legislation not only imposes hefty fines but also sets a legal precedent that could influence technology regulation across the United States, as detailed in Ars Technica.

The law is a response to the growing concern over 'nudification' apps—tools that use artificial intelligence to remove clothing from images of real people, often without their consent. These apps have sparked outrage for their invasion of privacy and potential to cause severe emotional harm, as reported by CBS News.

This article covers the details of Minnesota's new law, its implications for developers and users, and practical guidance for complying with it.

TL;DR

- Hefty Fines: Developers face fines of up to $500,000 per instance of a fake AI nude, according to Ars Technica.

- Legal Precedent: Minnesota leads in legislating against AI-generated fake nudes.

- Focus on Victims: Fines fund victim services for assault and abuse, as highlighted by The Deep Dive.

- Developer Impact: High compliance costs and potential for product bans.

- National Implications: Could influence future laws across the U.S.

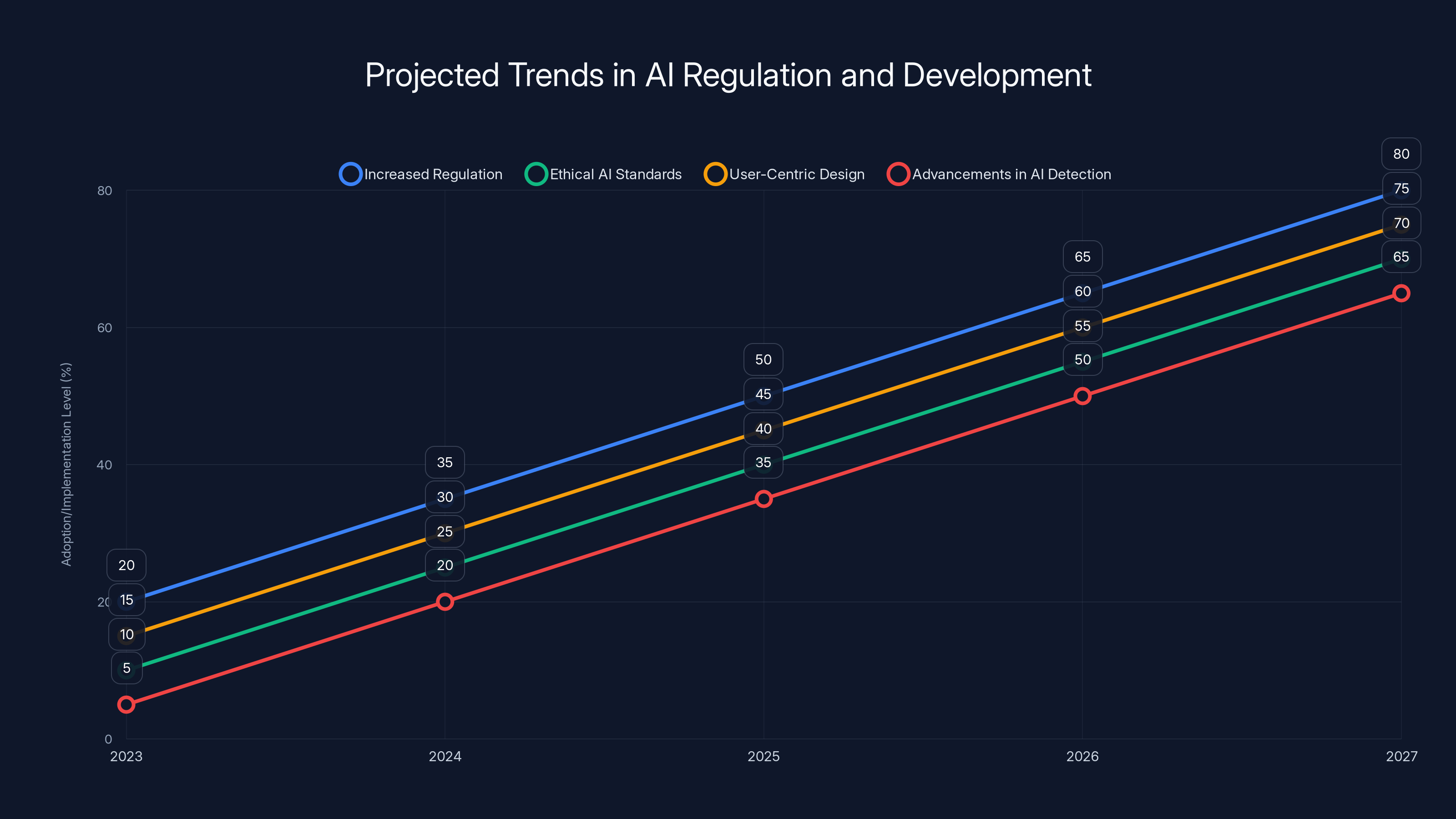

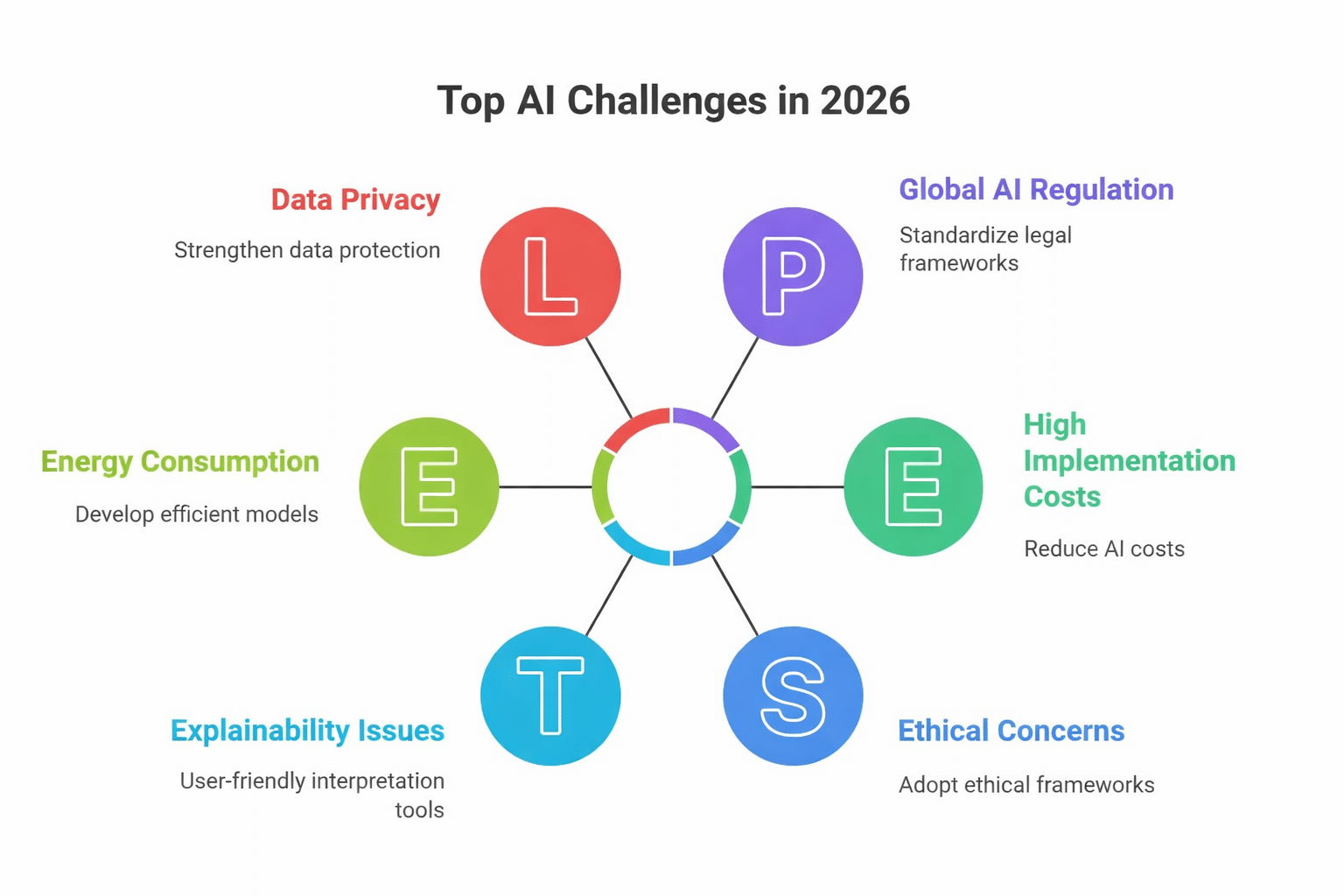

Estimated data shows a steady increase in AI regulation and ethical standards, with user-centric design and detection advancements also gaining momentum.

Why This Law Matters

The advent of AI technology has brought about unprecedented capabilities in image manipulation. While such advancements offer creative opportunities, they also pose significant ethical and legal challenges. The ability to create hyper-realistic fake nudes can lead to severe invasions of privacy and emotional distress for the individuals depicted, as discussed by Wired.

The Rise of 'Nudification' Apps

'Nudification' apps leverage deep learning algorithms to create realistic images of people without clothing. These algorithms are trained on large datasets of images, learning to predict and fill in the gaps when clothing is removed. Some of these apps have been downloaded millions of times, indicating a significant user base and demand, as noted by NewsBytes.

However, the potential for misuse is enormous. These images can be used for blackmail, harassment, or revenge porn, causing irreparable harm to victims.

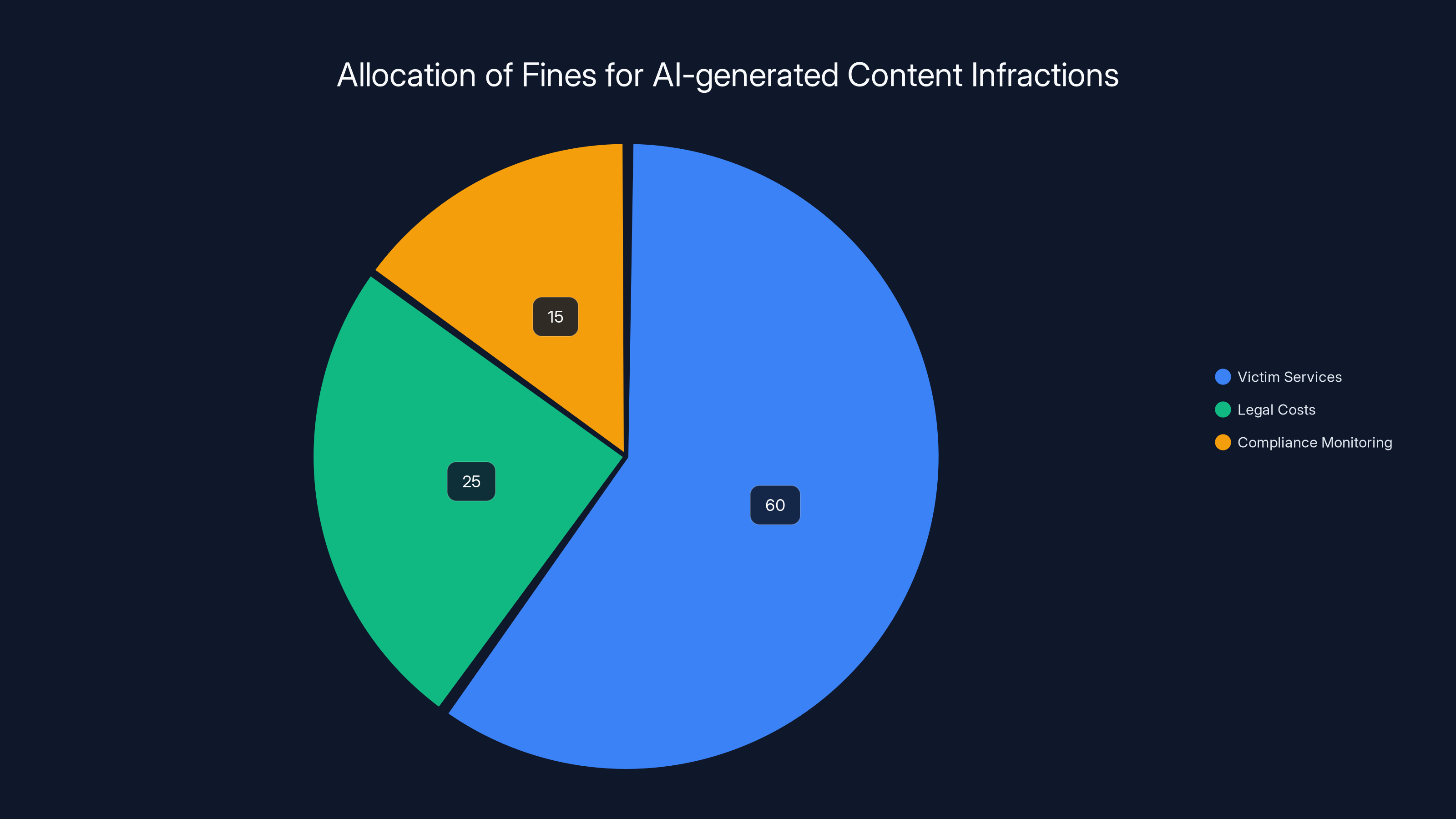

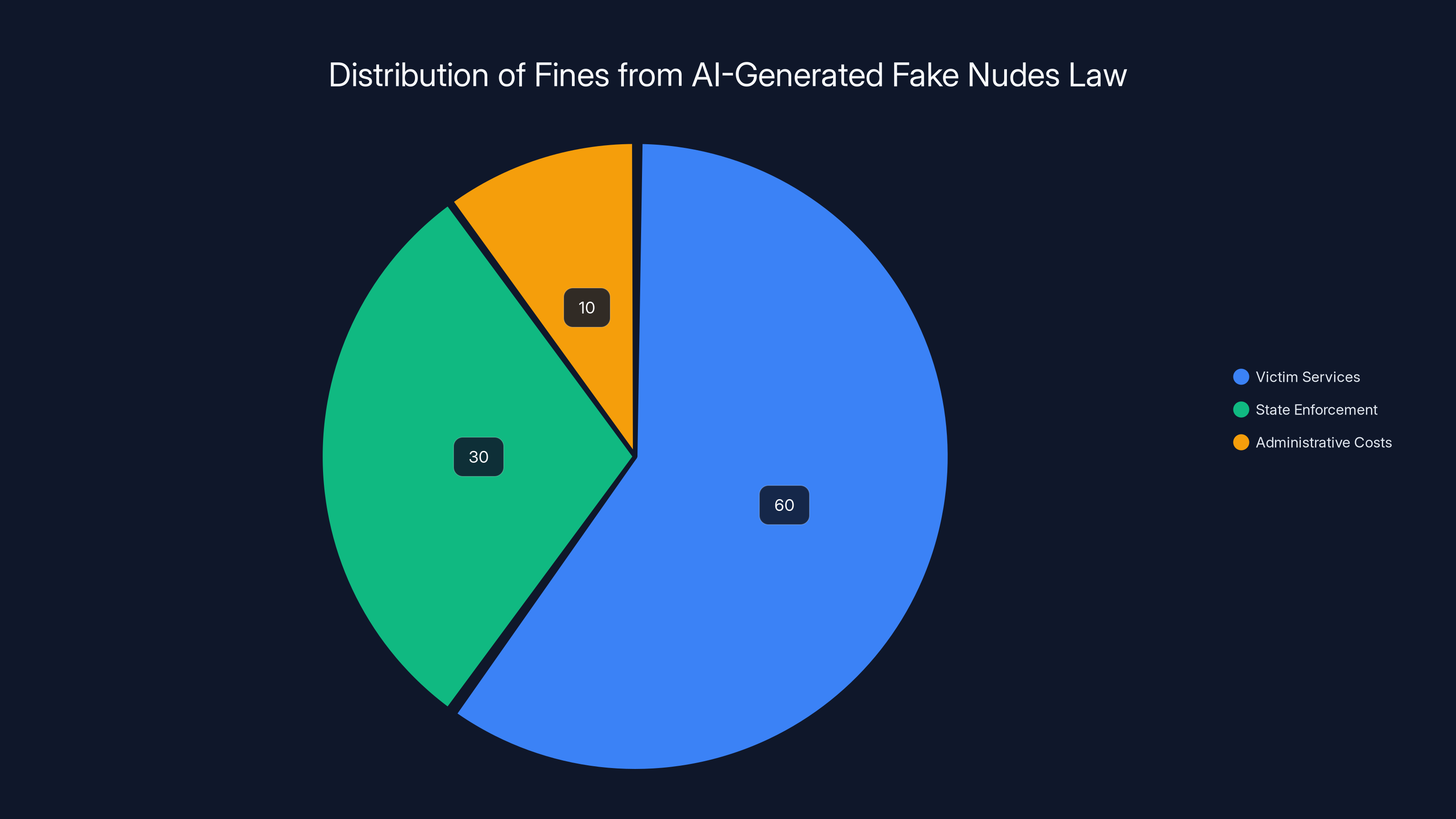

Estimated data shows that a significant portion of fines is allocated to victim services, highlighting the focus on supporting victims of AI-generated content abuse.

Understanding Minnesota’s Law

Minnesota's new legislation is comprehensive in its approach to tackling the issue of AI-generated fake nudes. Let's break down the key components of the law:

Definitions and Scope

The law explicitly targets any technology used to alter images to create fake nudes, including applications, software, and online services designed for this purpose. The broad definition is meant to cover every form of such technology and to close loopholes that developers or distributors might otherwise rely on, as outlined by Ars Technica.

Legal and Financial Repercussions

Developers and distributors of these technologies face severe penalties. The law allows victims to sue for damages, including punitive damages, which can be substantial. In addition, Minnesota's attorney general has the authority to impose fines of up to $500,000 per instance of a fake AI nude, as reported by The Deep Dive.

The collected fines serve a dual purpose: they deter potential offenders and fund critical services for victims of sexual assault, domestic violence, and other crimes.
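To make the per-instance cap concrete, the sketch below computes the theoretical ceiling on fines for a given number of instances. It assumes only the $500,000 maximum reported above; the function name is hypothetical, and actual penalties would be decided case by case and could be much lower.

```python
# Illustrative arithmetic only. The figure below is the reported per-instance
# maximum; it is a ceiling, not a prediction of any actual penalty.
MAX_FINE_PER_INSTANCE_USD = 500_000


def max_fine_exposure(instances: int) -> int:
    """Return the theoretical upper bound on fines for `instances` fake images."""
    if instances < 0:
        raise ValueError("instances must be non-negative")
    return instances * MAX_FINE_PER_INSTANCE_USD


if __name__ == "__main__":
    for count in (1, 10, 250):
        print(f"{count} instance(s): up to ${max_fine_exposure(count):,}")
```

Because the cap applies per instance, exposure grows linearly with the number of images generated, which is why even a small service could face very large theoretical liability.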

Enforcement and Compliance

The law empowers the state to block access to offending products, effectively banning them within Minnesota. This poses a significant compliance challenge for developers, as they must ensure their products are not accessible in the state or risk legal action, as noted by Ars Technica.
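One way a developer might keep a product inaccessible in a given state is a region gate in front of the service. The following is a minimal sketch, not a compliance mechanism endorsed by the law: it assumes the caller has already resolved a request to an ISO 3166-2 region code (for example through an IP geolocation service, which is imperfect), and the names `BLOCKED_REGIONS`, `AccessDecision`, and `check_region` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy table of ISO 3166-2 codes where the product must not
# be served. "US-MN" is the code for Minnesota.
BLOCKED_REGIONS = frozenset({"US-MN"})


@dataclass(frozen=True)
class AccessDecision:
    allowed: bool
    reason: str


def check_region(region_code: Optional[str]) -> AccessDecision:
    """Decide whether to serve a request based on its resolved region.

    Unknown regions are denied (fail closed). That is a design assumption of
    this sketch: serving a blocked state by mistake is the costlier error.
    """
    if region_code is None or not region_code.strip():
        return AccessDecision(False, "region could not be determined")
    normalized = region_code.strip().upper()
    if normalized in BLOCKED_REGIONS:
        return AccessDecision(False, f"service unavailable in {normalized}")
    return AccessDecision(True, "region permitted")
```

Geolocation can be defeated by VPNs, so a gate like this would likely be one layer among several rather than a complete answer.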

Technical and Ethical Considerations for Developers

Developers of AI tools must navigate a complex web of technical and ethical considerations to remain compliant with laws like Minnesota's. Here’s what developers need to keep in mind:

Implementing Safeguards

To prevent misuse, developers should implement robust safeguards, such as:

- Age Verification: Ensuring that users are of legal age to use image manipulation tools.

- Consent Mechanisms: Requiring explicit consent from individuals before their images can be altered.

- Content Moderation: Employing AI to automatically detect and block the creation of fake nudes.
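The three safeguards above can be combined into a single gate that runs before any edit is processed. This is a rough sketch under stated assumptions: each check is represented by a boolean that some upstream system is assumed to have produced, and `EditRequest`, `failed_safeguards`, and `is_allowed` are hypothetical names rather than part of any real library.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class EditRequest:
    # Each field is the outcome of an upstream check that this sketch assumes
    # exists; how those checks are performed is out of scope here.
    user_age_verified: bool        # the requesting user passed age verification
    subject_consent_on_file: bool  # the person depicted consented to the edit
    moderation_passed: bool        # automated moderation found no fake nudity


def failed_safeguards(request: EditRequest) -> List[str]:
    """Return the names of every safeguard the request fails."""
    failures = []
    if not request.user_age_verified:
        failures.append("age_verification")
    if not request.subject_consent_on_file:
        failures.append("consent")
    if not request.moderation_passed:
        failures.append("content_moderation")
    return failures


def is_allowed(request: EditRequest) -> bool:
    """An edit proceeds only when every safeguard passes."""
    return not failed_safeguards(request)
```

Returning the full list of failures, rather than stopping at the first one, makes it easier to log why a request was refused.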

Ethical AI Development

Ethical AI development is crucial in mitigating the risks associated with image manipulation. Developers should adhere to the following principles:

- Transparency: Clearly communicate the potential risks and limitations of AI tools to users.

- Accountability: Establish processes for handling complaints and removing inappropriate content swiftly.

- Bias Mitigation: Ensure datasets used for training AI do not perpetuate harmful stereotypes or biases, as emphasized by UC Law SF.
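For the accountability principle, one possible starting point is tracking each complaint against an internal takedown deadline. The sketch below is illustrative: the 48-hour target is an assumption made for this example and does not come from Minnesota's law, and the `Complaint` type and `overdue_complaints` helper are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

# Assumed internal service target for this example; not a legal requirement.
TAKEDOWN_TARGET = timedelta(hours=48)


@dataclass
class Complaint:
    complaint_id: str
    received_at: datetime
    resolved_at: Optional[datetime] = None

    def due_at(self) -> datetime:
        """Time by which the complaint should be resolved."""
        return self.received_at + TAKEDOWN_TARGET

    def is_overdue(self, now: datetime) -> bool:
        """True if the complaint is still open past its due time."""
        return self.resolved_at is None and now > self.due_at()


def overdue_complaints(complaints: List[Complaint], now: datetime) -> List[Complaint]:
    """Return open complaints that have exceeded the takedown target."""
    return [c for c in complaints if c.is_overdue(now)]
```

Passing `now` in explicitly, instead of reading the clock inside the method, keeps the logic easy to test.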

Best Practices for Compliance

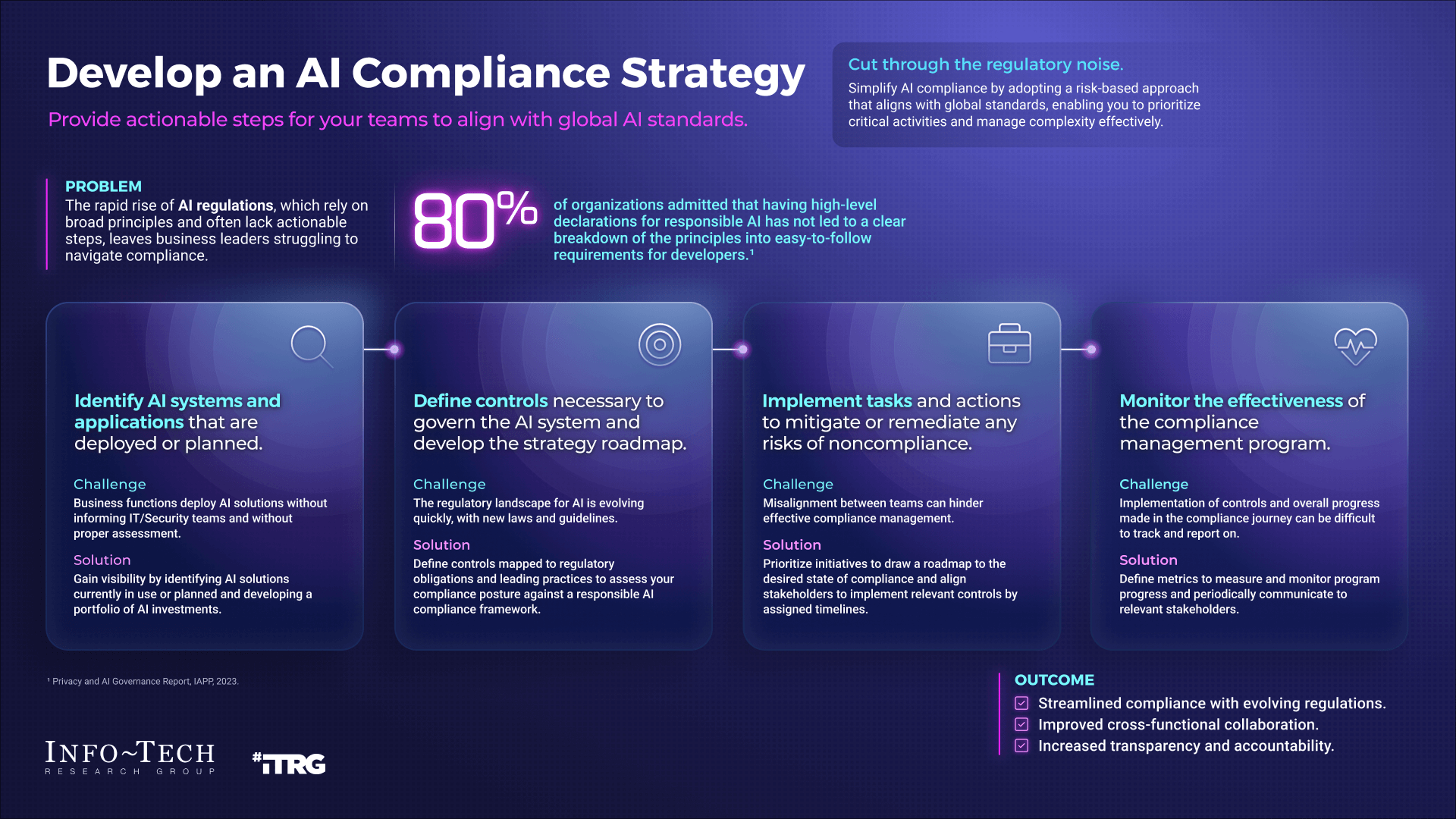

Staying compliant with laws like Minnesota's requires a proactive approach. Here are some best practices for developers and companies:

Legal Audits

Conduct regular legal audits to ensure compliance with local, national, and international laws. This involves reviewing software and processes to identify potential legal risks.

User Education

Educate users about the ethical use of AI tools through clear guidelines and tutorials. This can help prevent misuse and reduce the risk of legal issues.

Collaboration with Legal Experts

Work closely with legal experts to stay updated on evolving laws and regulations. Legal counsel can provide valuable insights into compliance strategies and risk management.

Common Pitfalls and How to Avoid Them

Despite best efforts, developers may encounter pitfalls when navigating legal and ethical challenges. Here are common issues and solutions:

Lack of Consent Mechanisms

Pitfall: Failing to implement consent mechanisms can lead to unauthorized image manipulation.

Solution: Integrate user consent forms and verification processes into the app's workflow to ensure compliance.
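A consent check is easier to enforce when consent is tied to a specific image rather than to a user account. The sketch below shows one possible approach, assuming an in-memory store and SHA-256 fingerprints of the raw image bytes; `ConsentRegistry` and its methods are hypothetical, and a production system would need persistent storage and identity verification for the person granting consent.

```python
import hashlib
from datetime import datetime, timezone
from typing import Dict, Tuple


class ConsentRegistry:
    """In-memory record of which exact images a subject has consented to."""

    def __init__(self) -> None:
        # Maps image fingerprint -> (subject id, time consent was recorded).
        self._records: Dict[str, Tuple[str, datetime]] = {}

    @staticmethod
    def fingerprint(image_bytes: bytes) -> str:
        """SHA-256 hex digest of the raw image bytes."""
        return hashlib.sha256(image_bytes).hexdigest()

    def record_consent(self, subject_id: str, image_bytes: bytes) -> None:
        key = self.fingerprint(image_bytes)
        self._records[key] = (subject_id, datetime.now(timezone.utc))

    def revoke(self, image_bytes: bytes) -> None:
        """Withdraw consent; a no-op if none was recorded."""
        self._records.pop(self.fingerprint(image_bytes), None)

    def has_consent(self, image_bytes: bytes) -> bool:
        return self.fingerprint(image_bytes) in self._records
```

An exact-byte hash will not match a resized or re-encoded copy of the same photo. In this sketch that means such a copy is treated as having no consent, so the check fails closed.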

Ineffective Content Moderation

Pitfall: Inadequate content moderation can result in the distribution of harmful images.

Solution: Utilize advanced AI tools to automatically detect and flag inappropriate content for review.
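Detection and flagging for review can be sketched as a two-threshold triage over a classifier score. This example assumes a hypothetical classifier that returns a score between 0.0 and 1.0 for how likely an output is to be a fake nude; the thresholds and the `Action` and `triage` names are illustrative and would need tuning against real data.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    REVIEW = "review"  # hold the output and queue it for a human moderator
    BLOCK = "block"


# Illustrative thresholds; real values depend on the classifier's error rates.
REVIEW_THRESHOLD = 0.4
BLOCK_THRESHOLD = 0.8


def triage(score: float) -> Action:
    """Map a classifier score in [0.0, 1.0] to a moderation action."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0.0 and 1.0")
    if score >= BLOCK_THRESHOLD:
        return Action.BLOCK
    if score >= REVIEW_THRESHOLD:
        return Action.REVIEW
    return Action.ALLOW
```

The middle band exists because automated classifiers make mistakes in both directions; sending uncertain cases to a person avoids both silent misses and needless blocks.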

Ignoring Cultural Sensitivities

Pitfall: Overlooking cultural differences can lead to unintended harm or offense.

Solution: Engage with diverse user groups to understand cultural sensitivities and incorporate feedback into the development process.

Future Trends and Recommendations

The passage of Minnesota's law signals a growing recognition of the need for regulation in the AI space. Here are some trends and recommendations for the future:

Increased Regulation

As concerns over AI misuse grow, more states and countries are likely to introduce similar legislation. Developers should be prepared for a more regulated environment and prioritize compliance in their operations, as suggested by Ars Technica.

Ethical AI Standards

Industry-wide ethical AI standards may emerge as a way to guide developers and companies. These standards could address issues like consent, data privacy, and bias mitigation, fostering a more responsible AI ecosystem.

User-Centric Design

Designing AI tools with user privacy and protection in mind will become increasingly important. Emphasizing user-centric design can help developers create tools that are both innovative and respectful of individual rights.

Advancements in AI Detection

Advancements in AI detection technology could provide new ways to identify and block harmful content. Developers should stay informed about these technologies and consider integrating them into their products.

Conclusion

Minnesota's landmark law against AI-generated fake nudes is a significant step toward addressing the ethical and legal challenges posed by advancing technology. By understanding the law's implications and adopting best practices, developers can navigate this complex landscape and contribute to a safer and more ethical digital environment.

As the legal landscape evolves, staying informed and proactive will be key to success. Developers and companies that prioritize compliance and ethical AI development will be well-positioned to thrive in this new era of technology regulation.

Key Takeaways

- Minnesota allows fines of up to $500,000 per instance of an AI-generated fake nude.

- Developers must implement consent and moderation safeguards.

- Laws like this set a precedent for AI regulation across the U.S.

- Ethical AI development requires transparency and accountability.

- Legal audits and user education are crucial for compliance.

- Future trends point towards increasing AI legislation globally.

- User-centric design and AI detection advancements can mitigate risks.

![Minnesota's Landmark Law Against AI-Generated Fake Nudes: A Comprehensive Guide [2025]](https://tryrunable.com/blog/minnesota-s-landmark-law-against-ai-generated-fake-nudes-a-c/image-1-1777658884936.jpg)