Chat GPT: Navigating Ethical Boundaries in AI Assistance [2025]

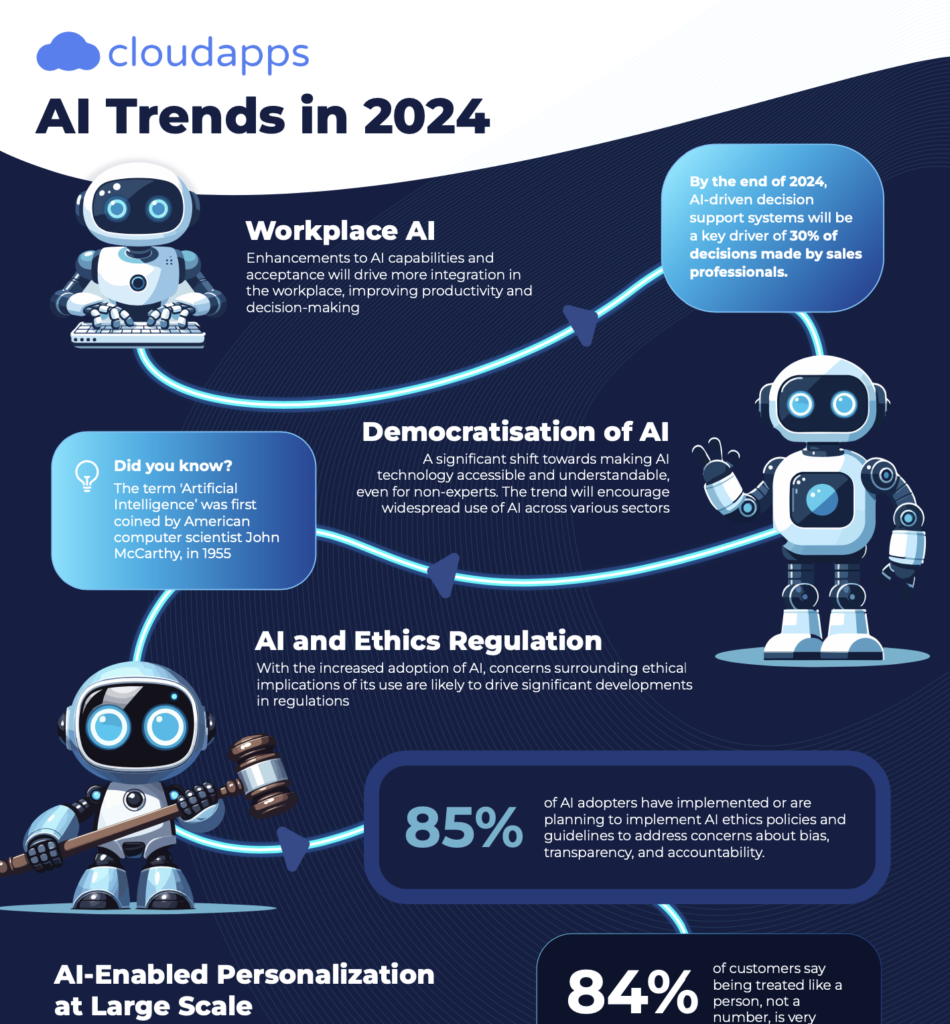

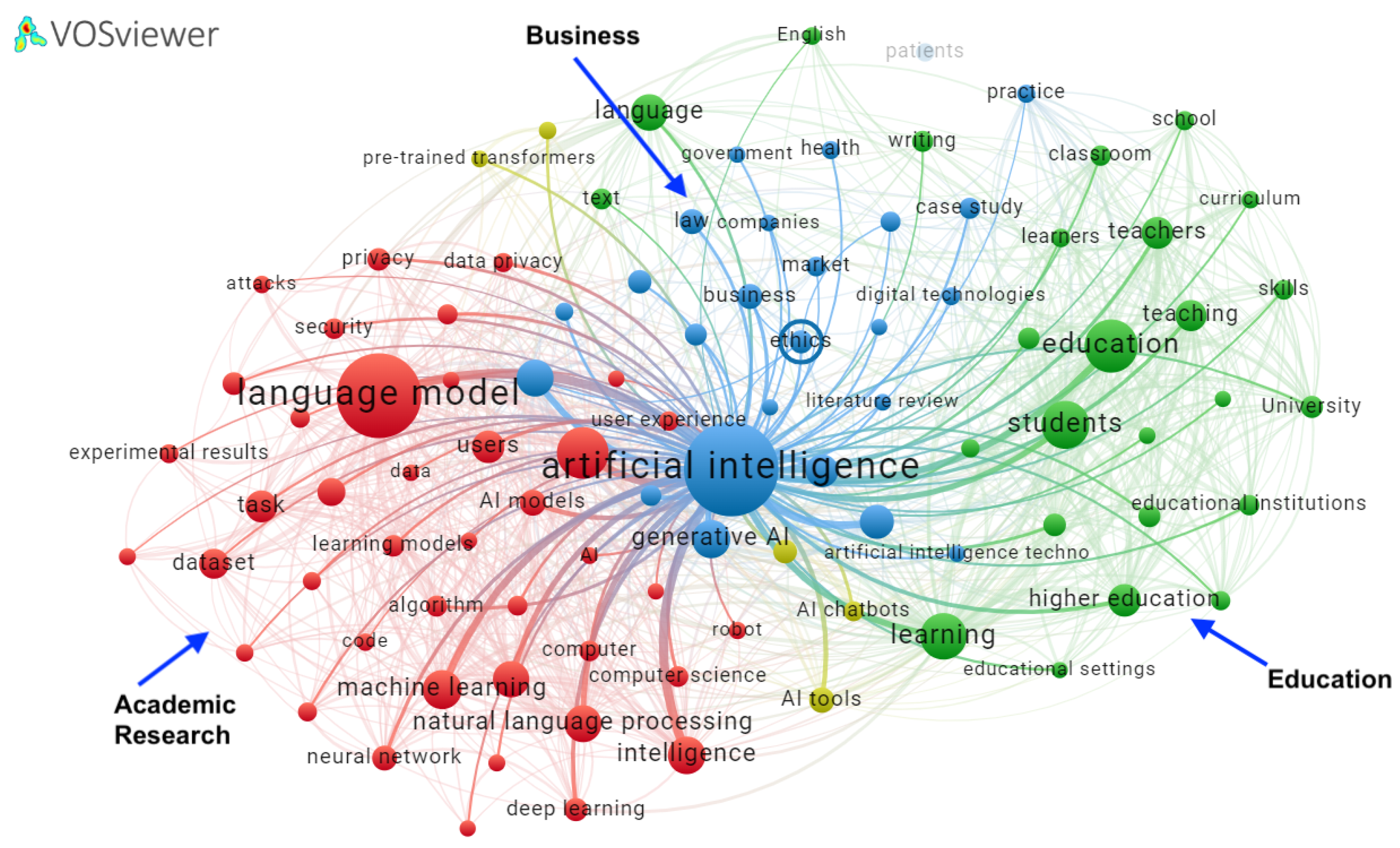

Artificial Intelligence has undoubtedly transformed the way we interact with technology, offering a range of services from customer support to creative writing. Yet, as its capabilities expand, so too do concerns about its potential misuse. One such example is OpenAI's Chat GPT, a sophisticated AI language model that is constantly in the spotlight for its ethical use.

TL; DR

- Ethical Commitment: Chat GPT is designed to avoid assisting in illegal activities, as outlined in OpenAI's commitment to community safety.

- Technical Safeguards: Built-in filters and human moderation help maintain ethical use, as detailed in OpenAI's privacy filter introduction.

- Challenges: AI models face difficulties in context understanding and moral reasoning, a challenge discussed by IBM's insights on the context gap.

- User Responsibility: Users play a crucial role in maintaining ethical guidelines.

- Future Outlook: Ongoing developments aim to enhance AI's ethical boundaries while expanding capabilities, as seen in the Hastings Center's briefing on AI in healthcare.

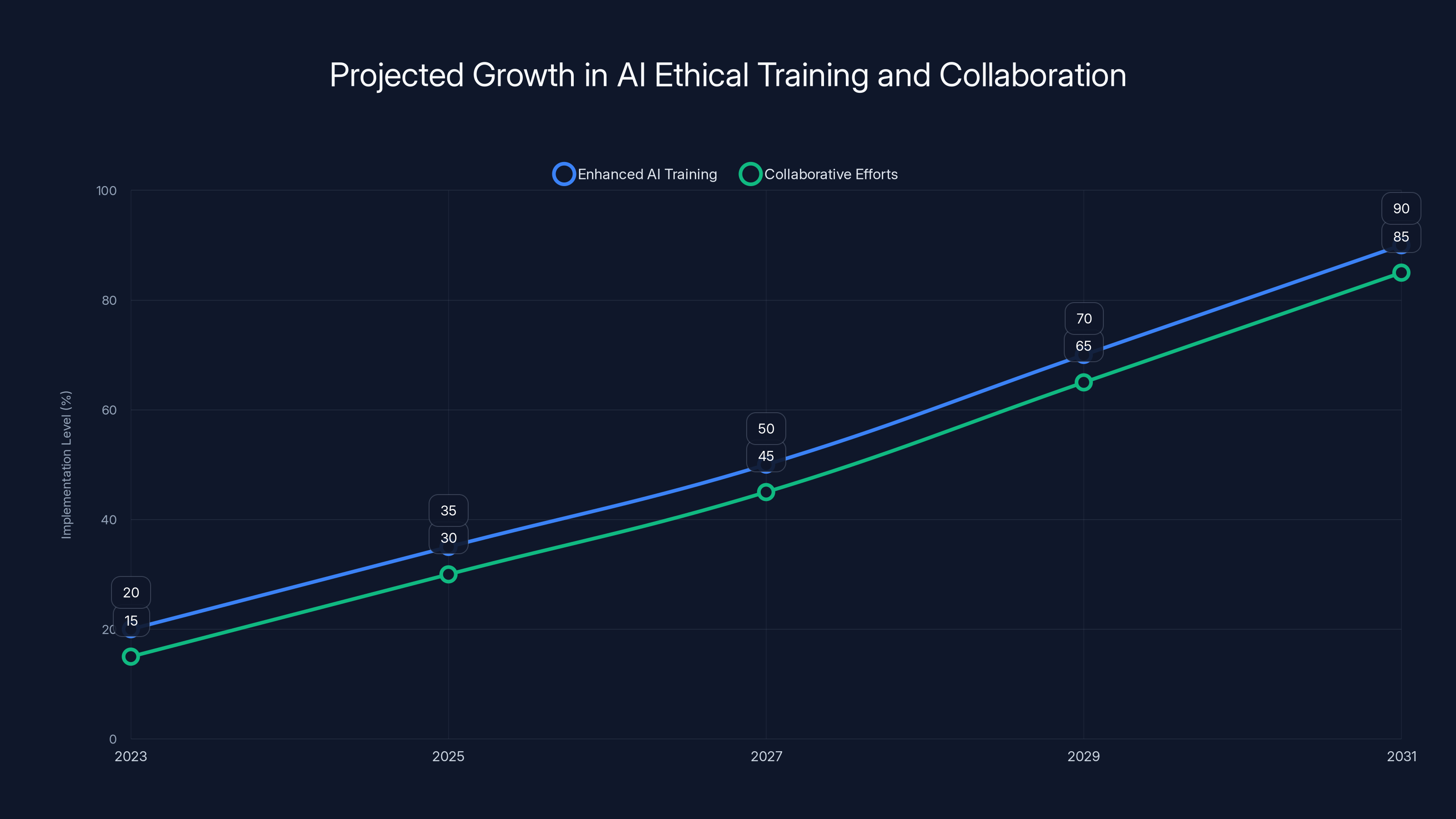

Projected growth shows significant increases in AI ethical training and collaboration efforts, indicating a strong focus on ethical considerations in AI development. Estimated data.

The Foundation of Ethical AI

In the realm of AI, ethical considerations are paramount. OpenAI has emphasized its dedication to ensuring that Chat GPT does not provide instructions, tactics, or advice that could facilitate criminal activities. This commitment is embedded in its design and operational guidelines, as highlighted in OpenAI's community safety commitment.

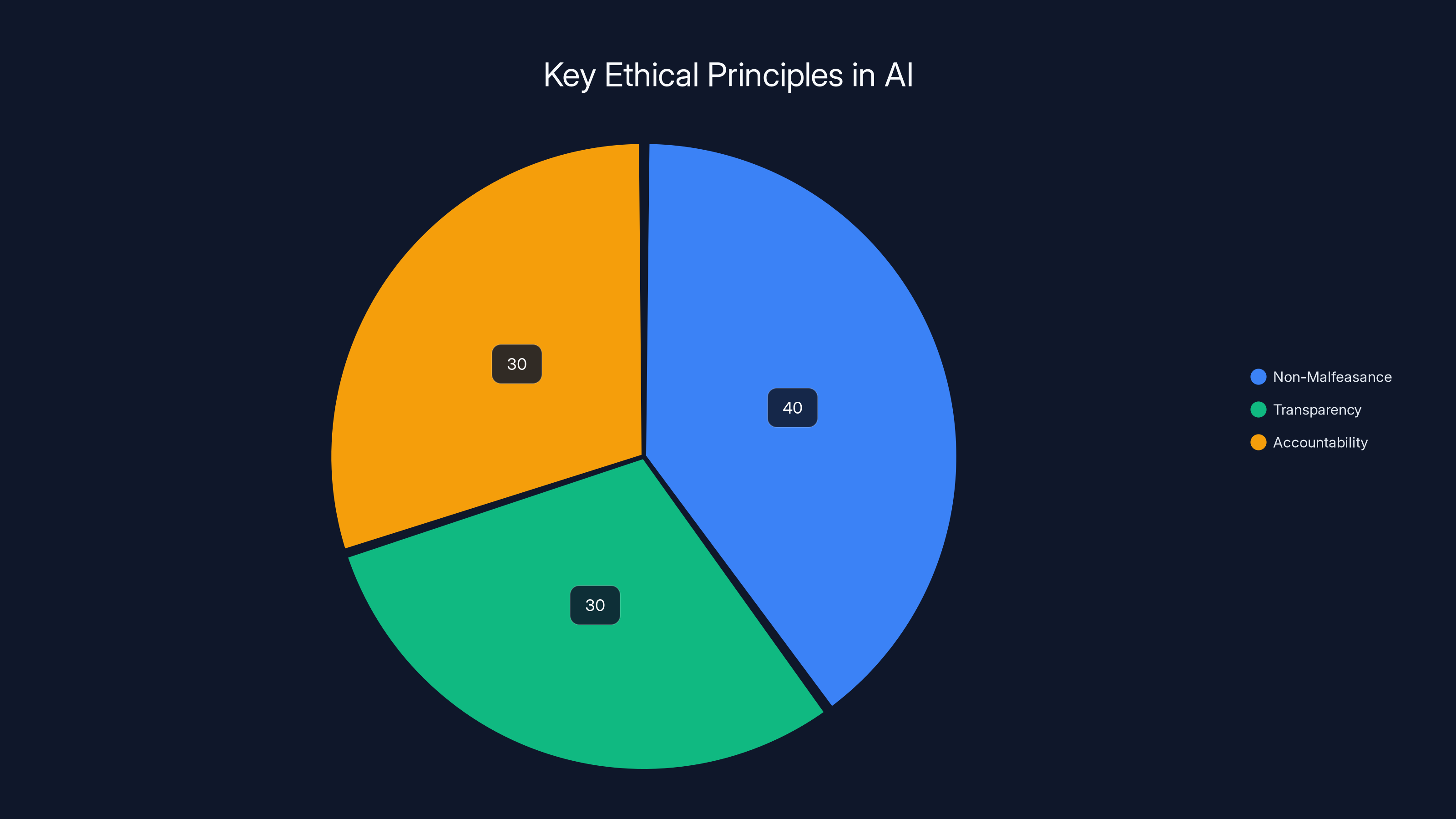

Key Ethical Principles

- Non-Malfeasance: Chat GPT is engineered to avoid causing harm or enabling illegal activities.

- Transparency: Users are informed about the AI's capabilities and limitations, as part of OpenAI's GPT-5.5 introduction.

- Accountability: Systems are in place to address misuse and enhance safety.

The pie chart illustrates the estimated focus distribution among key ethical principles in AI, with non-malfeasance receiving the highest emphasis. Estimated data.

Technical Safeguards in Chat GPT

To uphold these ethical principles, OpenAI employs a series of technical safeguards. These are designed to prevent the AI from being misused while still providing valuable assistance to users.

Built-In Filters

Chat GPT includes filters that screen for potentially harmful or illegal requests. These filters are continually updated based on new data and user interactions, as explained in OpenAI's privacy filter documentation.

- Keyword Detection: Identifies and flags terms associated with illegal activities.

- Contextual Analysis: Evaluates the context of requests to prevent subtle attempts at misuse.

Human Moderation

In addition to automated systems, human moderators play a crucial role in overseeing Chat GPT's interactions. This dual approach ensures higher accuracy and ethical compliance.

- Review Processes: Human moderators assess flagged interactions and make decisions on appropriate responses.

- Feedback Loops: User feedback is crucial in refining AI behavior and improving moderation efficacy, as discussed in HackerNoon's analysis on online chat.

Common Pitfalls and Solutions

Despite these safeguards, challenges remain. AI models, including Chat GPT, can sometimes misinterpret context or fail to recognize nuanced requests that skirt ethical lines.

Contextual Misunderstandings

AI's ability to understand context is limited, which can lead to unintentional assistance in inappropriate scenarios, as noted by Understanding AI's report on measurement challenges.

Solution: Enhancing AI training datasets with diverse scenarios to improve contextual understanding and response.

Ambiguous Requests

Vague or ambiguous requests can slip through filters, posing a risk of misuse.

Solution: Developing more sophisticated algorithms that can decipher nuanced language and intent, as suggested by TrendHunter's insights on the Natter platform.

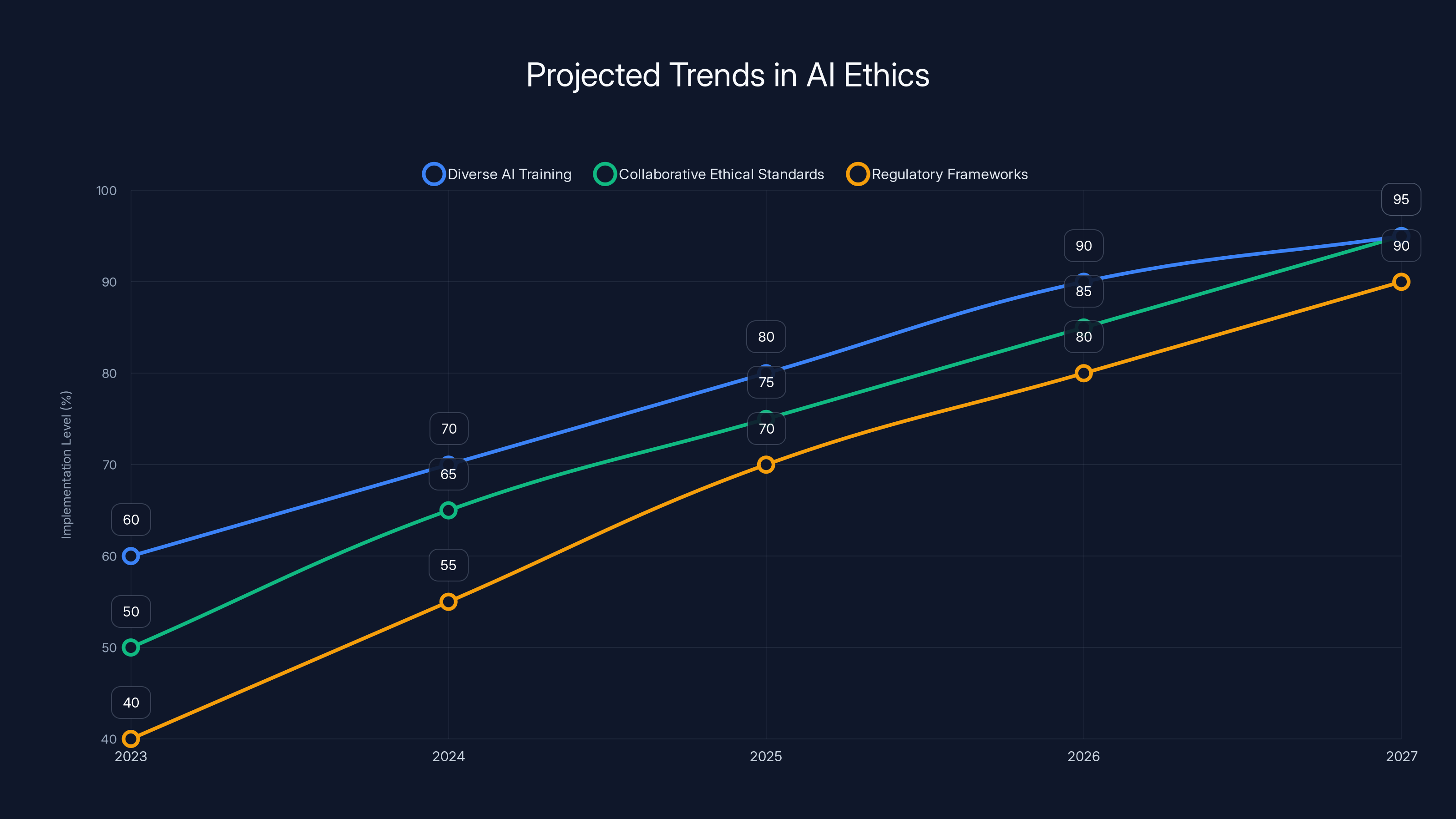

Projected trends indicate significant growth in diverse AI training, collaborative ethical standards, and regulatory frameworks by 2027. Estimated data.

The Role of Users in Ethical AI Use

While OpenAI implements numerous safeguards, user responsibility is equally important in maintaining ethical AI use.

User Education

Educating users about the ethical use of AI is crucial. Users should understand the potential risks and appropriate uses of AI tools.

- Guidelines and Best Practices: Providing users with clear guidelines on ethical interactions with AI.

- Awareness Campaigns: Initiatives to raise awareness about the implications of AI misuse, as highlighted in Daily News' report on collaborative efforts.

Future Trends and Recommendations

As AI technology progresses, so too will its ethical considerations. Here are some trends and recommendations for the future.

Enhanced AI Training

AI models will benefit from more comprehensive training datasets that include ethical scenarios and diverse contexts.

- Diverse Data Sources: Incorporating a wider range of data to improve AI's understanding of context and ethics.

- Scenario-Based Training: Using simulated scenarios to train AI on ethical decision-making, as recommended by MEXC's news on AI training.

Collaborative Efforts

Collaboration between AI developers, ethicists, and policymakers is essential to establish robust ethical guidelines.

- Industry Standards: Developing industry-wide ethical standards for AI use.

- Regulatory Frameworks: Implementing regulations that balance innovation with ethical considerations, as discussed in IBM's insights.

Conclusion

Chat GPT and similar AI models represent significant advancements in technology, but they also pose ethical challenges. By implementing technical safeguards, fostering user responsibility, and engaging in ongoing ethical discussions, AI can continue to evolve in a manner that benefits society while minimizing risks.

FAQ

What is ethical AI?

Ethical AI refers to the development and use of artificial intelligence systems that adhere to moral principles, ensuring they do not cause harm or facilitate illegal activities.

How does Chat GPT avoid misuse?

Chat GPT employs built-in filters and human moderation to prevent misuse, flagging potentially harmful interactions for review, as detailed in OpenAI's privacy filter introduction.

What role do users play in ethical AI?

Users are responsible for interacting with AI tools ethically, understanding their potential risks, and adhering to guidelines provided by developers.

What are future trends in AI ethics?

Future trends include enhanced AI training with diverse data, collaborative efforts for ethical standards, and regulatory frameworks to guide AI development, as discussed in The Hastings Center's briefing.

How can AI developers ensure ethical use?

Developers can ensure ethical use by implementing robust technical safeguards, engaging in user education, and collaborating with ethicists and policymakers.

Why is context understanding important for AI?

Understanding context is crucial for AI to accurately interpret user requests and avoid providing inappropriate assistance, as highlighted by Understanding AI's report.

Can AI models be improved for better ethical compliance?

Yes, through enhanced training datasets, improved algorithms, and ongoing user feedback, AI models can achieve better ethical compliance, as suggested by TrendHunter's insights.

Key Takeaways

- ChatGPT's commitment to ethical use prevents assistance in illegal activities, as outlined in OpenAI's community safety commitment.

- Technical safeguards include filters and human moderation, as detailed in OpenAI's privacy filter documentation.

- User responsibility is vital in maintaining ethical AI interactions.

- Enhanced training and collaboration are key to future AI ethics, as discussed in MEXC's news on AI training.

- Context understanding remains a challenge for AI models, as noted by Understanding AI's report.

Related Articles

- Musk and Altman Go to Court: A Deep Dive into the Legal Battle [2025]

- Exploring ChatGPT's Linguistic Evolution: The 'Strawberry' Test and Beyond [2025]

- Building Custom Reasoning Agents with Minimal Compute [2025]

- The Complex Dynamics of AI Responsibility: A Case Study on Tumbler Ridge and OpenAI [2025]

- Exploring Shapes: Bridging Humans and AI in Group Chats [2025]

- AWS and OpenAI Forge New Cloud Partnership [2025]

!['ChatGPT: Navigating Ethical Boundaries in AI Assistance' [2025]](https://tryrunable.com/blog/chatgpt-navigating-ethical-boundaries-in-ai-assistance-2025/image-1-1777482396414.jpg)