Musk's $134 Billion OpenAI Lawsuit: What's Really Happening [2025]

Last year, tech news cycles were dominated by one bombshell claim: Elon Musk filed a lawsuit against OpenAI and Microsoft demanding anywhere from $79 billion to $134 billion in damages.

But here's the thing: this lawsuit didn't appear out of nowhere in 2024. The story is messier, longer, and way more complicated than the headlines suggest. It involves betrayal narratives, conflicting visions for AI development, billions in funding rounds, and two former co-founders now running companies in direct competition with each other.

I've been following the AI industry for years, and this case reveals something fundamental about how the highest-stakes tech deals actually work—the tensions, the broken promises, and the legal fallout when visions diverge. So let's break down what Musk is actually claiming, why he filed this lawsuit, what the evidence shows, and what happens next.

TL;DR

- The Core Claim: Musk invested approximately $38 million in OpenAI and is seeking $79-$134 billion as damages for the company's shift from non-profit to for-profit

- The Background: OpenAI was founded as a non-profit in 2015, but moved toward commercialization after Microsoft's massive investment

- The Legal Theory: The lawsuit argues OpenAI breached its founding charter and owes early contributors a share of "wrongful gains"

- The Precedent Problem: Cases like this are historically rare in tech, making the legal theory untested

- The Real Issue: This reflects a broader debate about whether AI companies can pivot away from open, non-profit missions once they achieve scale

The timeline highlights OpenAI's transformation from a non-profit to a major player in AI, marked by key events such as the release of GPT-3 and major investments from Microsoft.

The Original Vision: How OpenAI Started

To understand why Musk is suing, you need to understand what OpenAI promised to be when it was founded in December 2015.

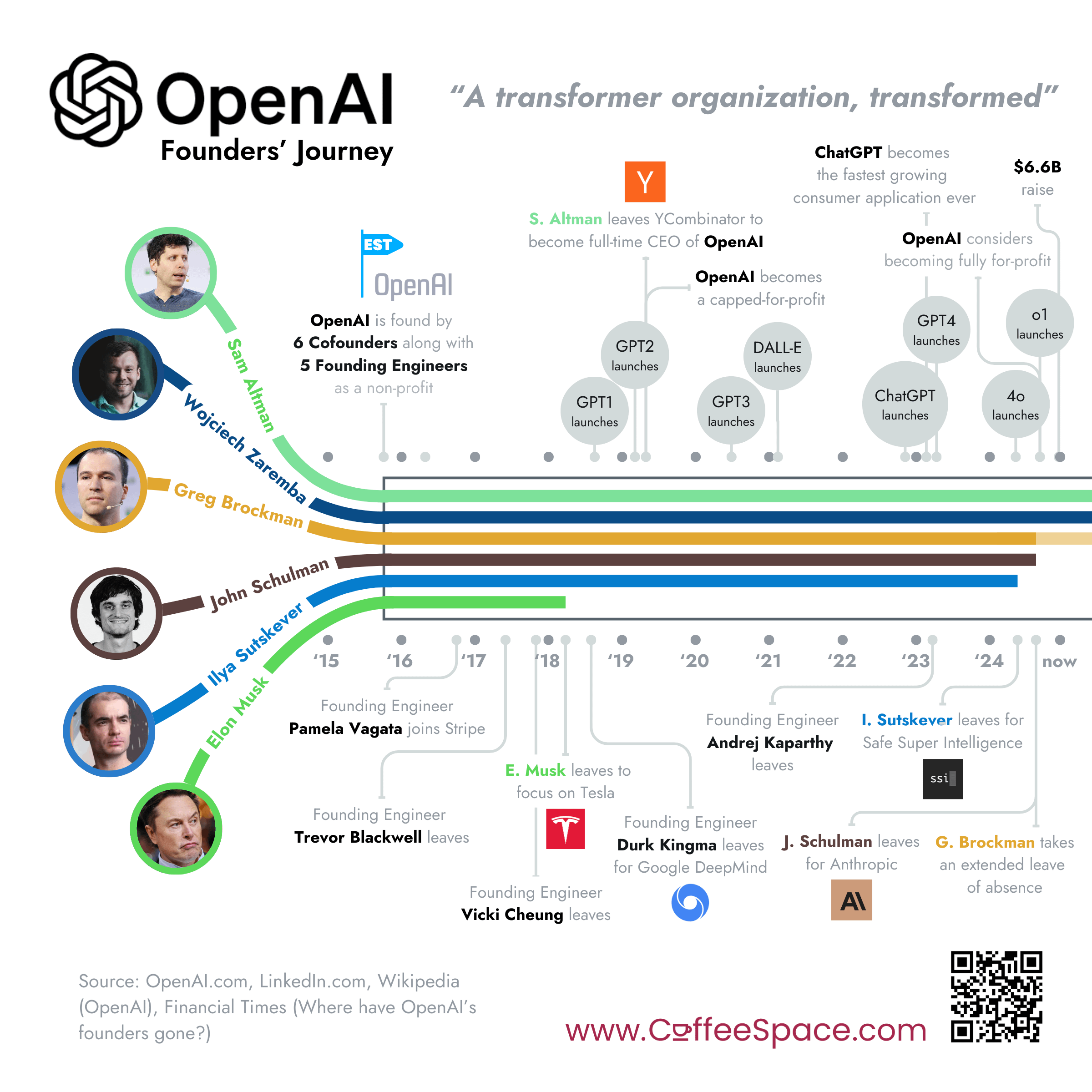

Elon Musk, Sam Altman, and others co-founded the organization with an explicit mission: develop artificial general intelligence (AGI) safely and responsibly, as a non-profit, with the goal of ensuring AI benefited humanity rather than concentrating wealth among a few tech giants.

Let me be clear about what that meant in practice. A non-profit structure means no shareholders, no private equity exits, and theoretically no financial incentive to extract maximum profits. The charter was genuinely different from how Google, Meta, or any other venture-backed tech company operates.

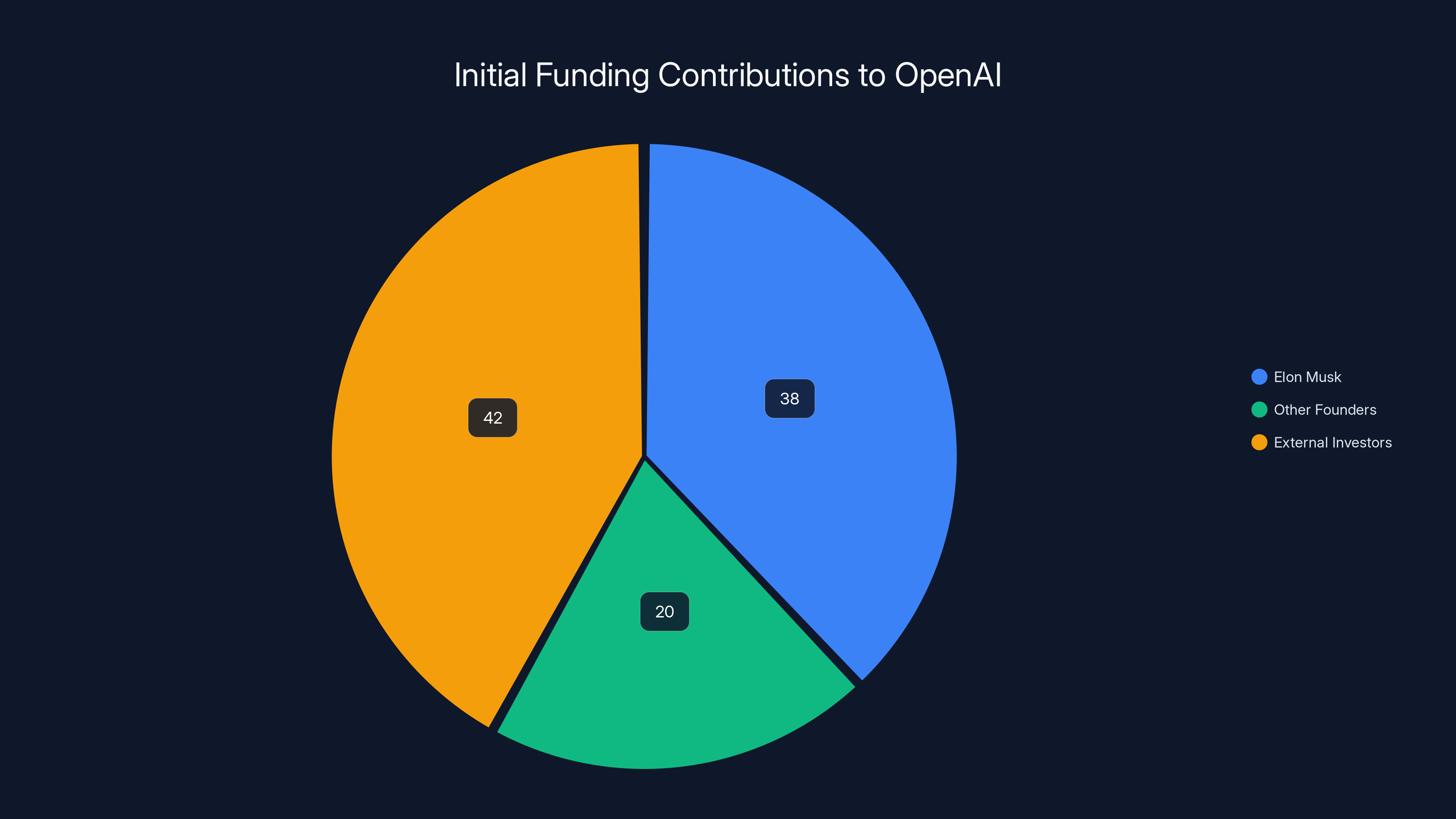

Musk put his money where his mouth was. According to the lawsuit filing, he contributed approximately $38 million in seed funding during the company's early years. But it wasn't just capital. Musk provided strategic guidance, helped recruit key talent, introduced the team to business contacts, and advised on foundational decisions about the company's direction.

The original team believed they were building something different. Not a startup racing to exit, but a research organization that would pursue AGI development on its own terms. That distinction matters because it shaped every early decision, every hiring choice, and every research direction.

The Shift: From Non-Profit Ideals to Microsoft Billions

Here's where things got complicated.

By 2019, OpenAI was burning through cash. Serious AI research costs real money—compute, talent, infrastructure. The non-profit funding model wasn't cutting it. The organization needed capital, and non-profit sources weren't sufficient to compete with Google's and Meta's billion-dollar AI research budgets.

So in 2019, OpenAI created a hybrid structure: a non-profit parent organization with a for-profit subsidiary. This let the company raise capital from venture investors while technically maintaining non-profit status. It was a creative solution to a real problem—but it opened the door to future conflict.

Then came Microsoft.

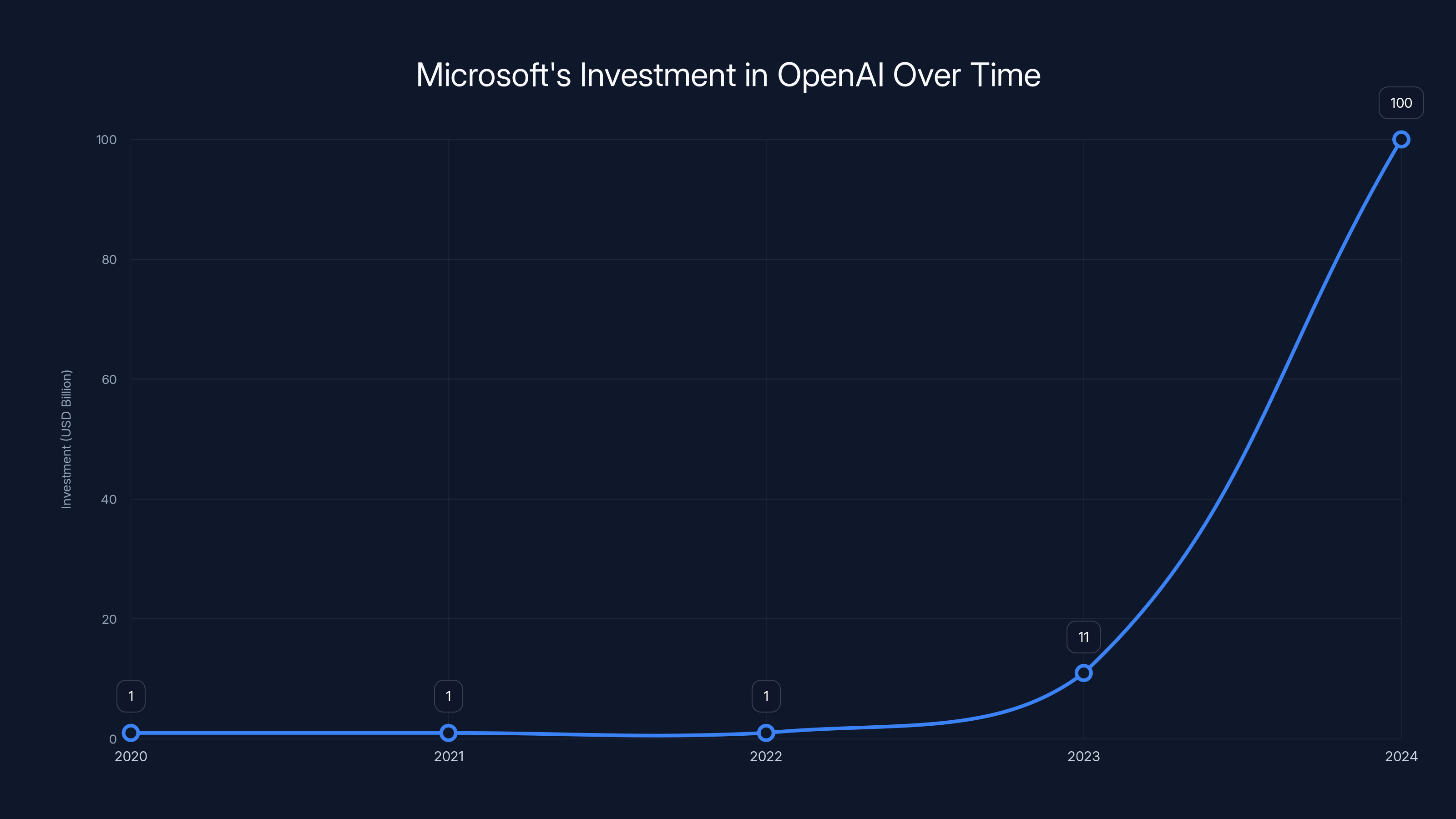

In 2019, Microsoft committed $1 billion. In 2023, it committed another $10 billion.

With each injection of Microsoft money, the incentive structure shifted. Microsoft isn't a non-profit—it's a public company with shareholders who expect returns. Suddenly, OpenAI wasn't pursuing AGI safety as an end goal anymore. It was pursuing AGI as a product to monetize.

Musk noticed this shift. And he wasn't happy about it.

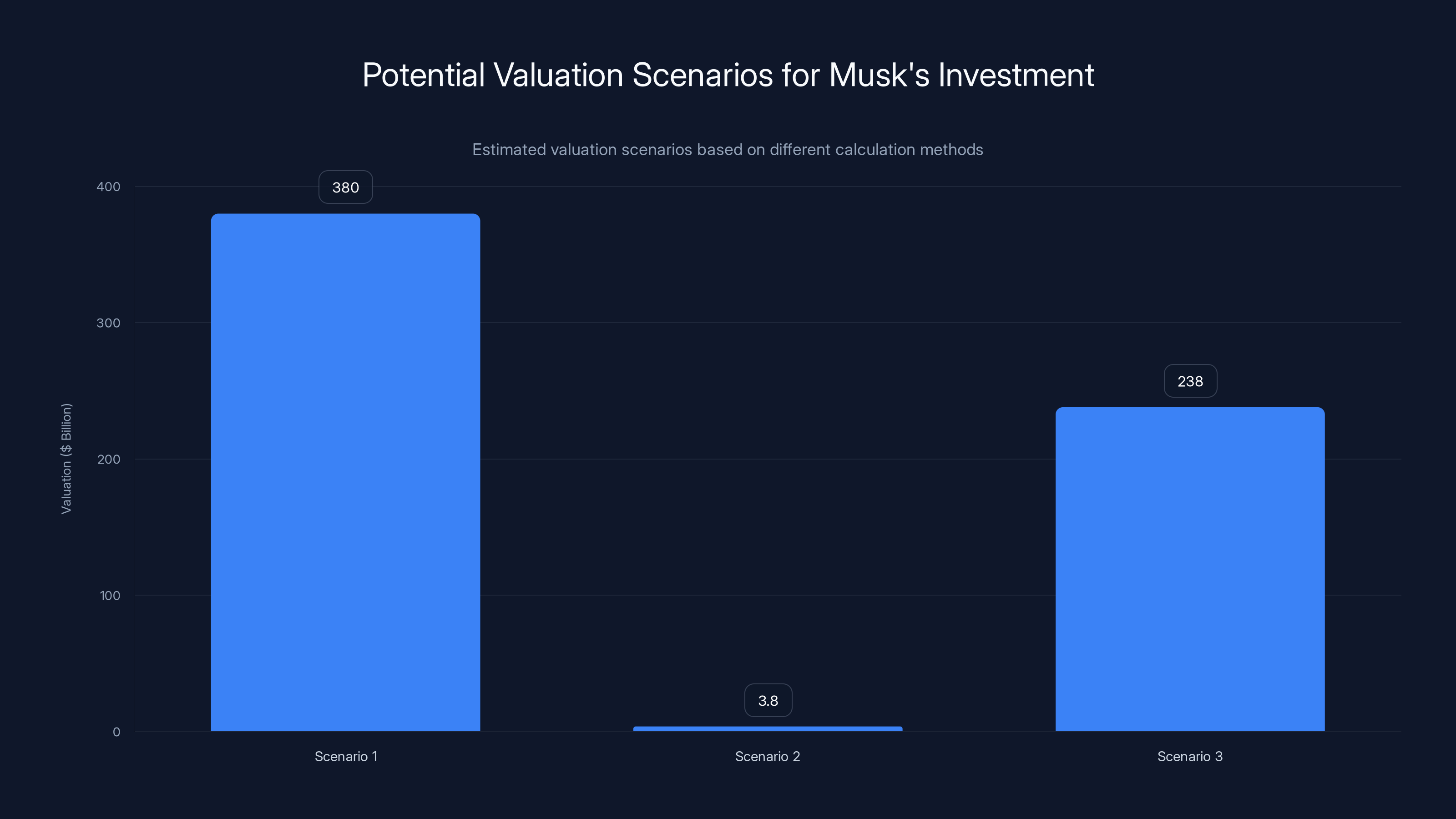

This chart illustrates three potential valuation scenarios for Musk's investment in OpenAI.

The Lawsuit: What Musk Is Actually Claiming

The lawsuit, filed in March 2024, makes several interconnected claims. Let's break them down because the legal theory matters here.

Claim 1: Breach of Fiduciary Duty

Musk argues that by accepting his $38 million investment and his contributions as an advisor, OpenAI took on a fiduciary obligation—meaning they had to act in his interest, or at minimum, in the interest of the non-profit mission he contributed to. By pivoting to a commercial model, the lawsuit argues, they breached that duty.

This is a big claim. If successful, it could establish that early investors in non-profit organizations have specific legal protections that carry through even after restructuring.

Claim 2: Unjust Enrichment

The lawsuit goes further. It argues that OpenAI and Microsoft are illegally holding gains that rightfully belong to Musk. He contributed approximately $38 million in early funding, plus strategic support.

According to the lawsuit, this unjust enrichment could be as high as $134 billion.

Claim 3: Violation of the Non-Profit Charter

Musk's legal team argues that OpenAI's founding documents created an irrevocable commitment to the non-profit mission. By converting to a for-profit structure and prioritizing commercial returns over safety research, the company violated its own charter.

If this claim holds water, it could set a precedent that founding missions have legal teeth—that you can't just pivot away from them once you've achieved market success.

Why This Lawsuit Is Difficult to Win

Here's the honest assessment: Musk faces significant legal hurdles.

Precedent Problem

There's limited case law on this specific issue. Non-profit organizations that transition to for-profit structures aren't common in tech. Wikipedia remains non-profit. Mozilla remains non-profit. But OpenAI is attempting something novel.

Without clear precedent, courts have to construct the legal framework from first principles. That gives OpenAI's lawyers room to argue that the company's structure, while unusual, isn't illegal.

The Hybrid Structure Defense

OpenAI will argue that the 2019 hybrid structure (non-profit parent with for-profit subsidiary) was explicitly disclosed and allows for-profit operations under a non-profit umbrella. This structure is increasingly common in biotechnology and other capital-intensive fields.

If the courts accept this defense, then OpenAI can claim it never actually violated its charter because the charter allowed for this exact scenario.

Damages Calculation

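Musk's damages claim of $79-$134 billion depends on how much of OpenAI's current value can be attributed to his early contribution.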

Musk's lawyers will argue for a high percentage (maybe 10-15% of current valuation). OpenAI's lawyers will argue that his contribution, while valuable, was just one of many factors enabling the company's success. They'll point to the thousands of employees, researchers, and Microsoft's infrastructure and distribution channels.

The truth is probably somewhere in the middle. But quantifying that in a courtroom is exceptionally difficult.

The Timeline: How We Got Here

Understanding the lawsuit requires understanding the timeline that led to it.

2015: OpenAI founded as a non-profit with Elon Musk, Sam Altman, and others as co-founders. Mission: develop AGI safely, as a non-profit, for the benefit of humanity.

2016-2018: Early research phase. OpenAI publishes research on reinforcement learning and robotics. The organization operates with modest budgets and significant non-profit funding. Elon Musk leaves the board in 2018 but maintains his investment.

2019: OpenAI announces the hybrid structure: a non-profit parent with a for-profit subsidiary. This allows venture capital investment while maintaining non-profit status. Microsoft invests $1 billion. Tension begins between non-profit mission and commercial pressure.

2020: OpenAI releases GPT-3. The model is powerful but expensive to run, and access is sold through a paid API. Commercialization becomes inevitable.

2022: ChatGPT launches on November 30. It reaches 1 million users in 5 days, 100 million in 2 months. Suddenly, OpenAI has a mass-market product. Microsoft integrates it into Bing and Office.

2023: Microsoft commits another $10 billion. OpenAI is now generating substantial revenue from API access and enterprise contracts.

Early 2024: Elon Musk files the lawsuit, claiming that OpenAI has abandoned its non-profit mission and owes him damages.

The timeline shows a clear evolution: from pure non-profit research (2015-2019), to hybrid structure allowing some commercialization (2019-2022), to essentially a Microsoft-backed commercial enterprise (2023-present).

The lawsuit faces significant challenges due to the lack of precedent, the hybrid structure defense, and the complexity of calculating damages. Estimated data.

The Deeper Issue: What Does Non-Profit Actually Mean?

Here's where this lawsuit touches on something bigger.

A non-profit organization can still generate revenue. Universities are non-profits and charge tuition. Hospitals are non-profits and charge for services. The key difference is that all revenue is reinvested in the organization's mission—not distributed to shareholders.

OpenAI's argument will be: we're still a non-profit parent organization. All revenue from the for-profit subsidiary flows back to the non-profit to fund research.

But Musk's argument, and it has merit, is that this becomes a legal fiction once you introduce outside investors who expect returns. Microsoft didn't invest billions of dollars to fund abstract AI safety research. They invested to capture the upside of AI commercialization.

The tension is real. You can't simultaneously serve two masters: one demanding returns on capital, and one demanding openness and safety above profit. Eventually, one wins.

Most observers think Microsoft won.

Musk's Own AI Ambitions: Why He Cares

This lawsuit doesn't exist in a vacuum. Elon Musk has his own AI company now: xAI.

Founded in 2023, xAI is positioned as an alternative to OpenAI. It promises to be more open, more transparent, and less captured by corporate interests. There's obvious irony here: Musk complaining that OpenAI abandoned openness while building his own closed company.

But there's also strategic logic. By suing OpenAI, Musk accomplishes several things:

- He gets media attention for the narrative that OpenAI betrayed its mission

- He potentially wins $79-$134 billion to fund xAI development

- He establishes himself as the AI company founder who actually cares about the non-profit mission

- He creates legal uncertainty around OpenAI's governance structure

Whether any of this is legally sound is a separate question. But strategically? Musk is playing a long game.

The Microsoft Angle: What Does a Multibillion-Dollar Investor Want?

Microsoft is named in this lawsuit, but the company didn't create the problem—they capitalized on it.

Microsoft invested in OpenAI for a straightforward reason: they wanted access to cutting-edge AI technology to integrate into their products. Bing, Copilot, Office 365, GitHub—all leveraging OpenAI's models.

From Microsoft's perspective, the non-profit mission was never relevant. They're a for-profit company investing in a technology partner. They expect returns.

The lawsuit argues that Microsoft is complicit in OpenAI's mission drift because they provided the capital that made commercialization inevitable. That's a more novel argument because it suggests that third-party investors can be held liable for how they influence a non-profit's trajectory.

If this argument prevails, it could change how large companies invest in non-profits. You could suddenly face legal liability if your investment causes the organization to abandon its mission. That would likely chill investment in non-profit research organizations—probably not what anyone wants.

Microsoft's investment in OpenAI grew over successive funding rounds.

Similar Cases: Has This Ever Happened Before?

Legal cases involving non-profit mission drift are rare, but they do exist.

The most famous is probably the case of Lowell Observatory in the 1990s. Lowell Observatory, founded in 1894 as a non-profit dedicated to astronomical research, received a significant bequest from a donor. The dispute wasn't about commercialization, but it did involve whether the observatory was adhering to its founding mission. Courts ruled that while some flexibility is allowed, the organization couldn't completely abandon its stated purpose.

There's also precedent in the nonprofit sector regarding donor intent. If a non-profit accepts funds with explicit conditions attached, they can't legally redirect those funds to other purposes. Musk's lawyers will likely argue that his $38 million contribution was conditional on maintaining the non-profit mission.

But OpenAI's lawyers will argue that the hybrid structure (announced before any new funding rounds) was disclosed and understood. Musk continued to hold his investment after 2019, implicitly accepting the new structure.

This ambiguity is precisely why the case will be difficult to resolve.

The Numbers: How Would Damages Even Be Calculated?

Let's walk through the math, because this is where the $134 billion claim gets strange.

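Musk invested approximately $38 million. Getting from that figure to a claim of up to $134 billion requires aggressive assumptions.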

Scenario 1: Percentage of Current Valuation

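If OpenAI is worth roughly $500 billion, Musk's lawyers could try to claim a share of that valuation in proportion to his early funding.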

His lawyers might argue:

- Total early-stage funding: ~$50 million (rough estimate)

- Musk's contribution: $38 million = 76% of early funding

- Therefore: 76% × $500 billion = $380 billion

But that calculation ignores Microsoft's multibillion-dollar investment and the thousands of employees whose work created the actual value.
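The proportional-share reasoning in Scenario 1 can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not a damages model: the $50 million total is the rough estimate used above, and the $500 billion valuation is an assumed placeholder chosen for illustration, not a figure taken from the filing.

```python
# Hypothetical inputs. The funding figures are the article's rough estimates;
# the valuation is an assumption for illustration, not a reported number.
EARLY_FUNDING_TOTAL = 50_000_000       # ~$50 million total early funding (rough estimate)
MUSK_CONTRIBUTION = 38_000_000         # ~$38 million, per the lawsuit filing
ASSUMED_VALUATION = 500_000_000_000    # assumed valuation, illustration only


def funding_share(contribution: float, total: float) -> float:
    """Return the fraction of early funding supplied by one contributor."""
    if total <= 0:
        raise ValueError("total funding must be positive")
    return contribution / total


def naive_claim(contribution: float, total: float, valuation: float) -> float:
    """Apply the early-funding share to the entire current valuation.

    This deliberately ignores later investors and employees, which is
    exactly the weakness of the Scenario 1 argument.
    """
    return funding_share(contribution, total) * valuation


if __name__ == "__main__":
    share = funding_share(MUSK_CONTRIBUTION, EARLY_FUNDING_TOTAL)
    claim = naive_claim(MUSK_CONTRIBUTION, EARLY_FUNDING_TOTAL, ASSUMED_VALUATION)
    print(f"Share of early funding: {share:.0%}")
    print(f"Naive claim: ${claim / 1e9:.0f} billion")
```

Changing `ASSUMED_VALUATION` or the early-funding total moves the result dramatically, which is one reason a court is unlikely to accept a single-formula answer.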

Scenario 2: Return on Investment Multiple

A venture capitalist might argue:

- Early investment: $38 million

- Current implied valuation of that stake: ???

- Appropriate return multiple: 10x? 100x? 1000x?

If early investors typically see 100x returns in successful AI companies, then Musk's $38 million would be worth $3.8 billion.
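To make the return-multiple reasoning concrete, here is a small Python sketch. It assumes the roughly $38 million contribution from the filing and the $79-$134 billion range quoted for the claim; the function names are hypothetical and exist only for this example.

```python
MUSK_CONTRIBUTION = 38_000_000  # ~$38 million, per the lawsuit filing


def value_at_multiple(investment: float, multiple: float) -> float:
    """Value of an investment after applying a return multiple."""
    return investment * multiple


def implied_multiple(claimed_damages: float, investment: float) -> float:
    """Return multiple that a damages claim implies on the original investment."""
    if investment <= 0:
        raise ValueError("investment must be positive")
    return claimed_damages / investment


if __name__ == "__main__":
    # The multiples floated in Scenario 2.
    for multiple in (10, 100, 1000):
        value = value_at_multiple(MUSK_CONTRIBUTION, multiple)
        print(f"{multiple:>5}x -> ${value / 1e9:.2f} billion")

    # The low and high ends of the claimed damages range.
    for claim in (79e9, 134e9):
        multiple = implied_multiple(claim, MUSK_CONTRIBUTION)
        print(f"${claim / 1e9:.0f} billion claim implies about {multiple:,.0f}x")
```

Even a 1000x multiple yields $38 billion, well below the low end of the claimed range. The claim itself implies a return of more than 2,000x on the original contribution.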

Scenario 3: Contribution Value Beyond Capital

Musk's lawyers will argue that his strategic contributions (recruiting, business introductions, advice) had independent value that should be counted on top of his capital.

But quantifying advisory contributions is subjective and heavily contested. A court would struggle to assign a specific number.

The honest truth: there's no clean formula for calculating damages here.

What Happens Next: The Legal Process

As of early 2025, this case is still in the early stages. Here's what we can expect:

Phase 1: Discovery (currently ongoing or about to begin)

Both sides exchange documents, emails, and evidence. This is where things get interesting. Discovery might reveal internal OpenAI communications discussing the mission shift, the impact of Microsoft's investment, and strategic decisions prioritizing commercialization.

If OpenAI's internal documents show that leadership knowingly abandoned the non-profit mission to serve Microsoft's interests, that's damaging. If the documents show pragmatic reasoning ("we need capital to compete"), that's more defensible.

Phase 2: Motions (likely 2025-2026)

OpenAI's lawyers will probably file a motion to dismiss, arguing that the case lacks legal merit or that Musk lacks standing to sue. These motions rarely succeed entirely, but they can narrow the scope of the case significantly.

Phase 3: Settlement Negotiations (potentially throughout)

Most high-stakes tech litigation settles before trial. A settlement would likely involve some payment to Musk: enough to avoid the PR damage of a full trial, but not the full $134 billion he claims.

Phase 4: Trial (if no settlement, likely 2027-2028)

If the case actually goes to trial, expect arguments from both sides' expert witnesses about valuation methods, non-profit governance standards, and fiduciary obligations. The trial could last weeks or months.

Phase 5: Appeal (inevitable if the judgment is large)

Whoever loses will almost certainly appeal, adding another 2-3 years to the process.

So realistically, this case won't be resolved until 2027-2028, and possibly later. That's the normal speed of the legal system.

Elon Musk's seed funding and strategic contributions are valued significantly lower than the $134 billion in claimed damages. Estimated data for strategic contributions.

The Broader Implications: What This Case Means for AI Governance

Beyond Musk and OpenAI, this lawsuit raises important questions about how the AI industry should be governed.

Can Non-Profits Scale in AI?

OpenAI's experience suggests that non-profit structures don't work well for capital-intensive AI research. The moment you need billions in funding, you're forced to either dilute your mission or accept commercial investment that shapes your strategy.

Alternatives include:

- Government funding (but that comes with its own dependencies)

- Large endowments (like universities, but extremely difficult to establish)

- Cooperative structures (shared ownership among stakeholders)

- Benefit corporations (legally required to balance profit with social mission)

Should Founding Missions Have Legal Teeth?

The lawsuit implicitly asks: can a company legally pivot away from its founding mission once it achieves success? Currently, the answer is mostly yes. Non-profits have more constraints than for-profits, but they still have significant flexibility.

If courts decide that founding missions do have legal weight, that could change how tech companies structure themselves. Companies might be more careful about making promises they can't keep, or they might avoid founding missions altogether.

What Do Investors Owe Non-Profits?

By naming Microsoft, the lawsuit suggests that large investors can be held accountable for how they influence a non-profit's trajectory. If that theory takes hold, expect to see more scrutiny of venture capital investments in mission-driven organizations.

This could be good (preventing mission drift) or bad (discouraging investment in non-profit research), depending on perspective.

Where Are the Other Founders? The Bigger AI Story

Musk isn't the only one with opinions on OpenAI's direction. The co-founder dynamics are worth understanding.

Sam Altman, OpenAI's current CEO, has publicly defended the commercialization strategy. His argument: making AI safe at scale requires enormous computing resources and a massive revenue stream. The non-profit structure couldn't support that. The hybrid structure allows the company to pursue its mission while having the resources to execute.

Ilya Sutskever, OpenAI co-founder and former Chief Scientist, was initially supportive of the commercialization but reportedly expressed concerns in private conversations about whether the company was prioritizing safety adequately.

Dario Amodei, co-founder of Anthropic (a competitor to OpenAI founded by former OpenAI employees), has been more critical. Anthropic was partly founded specifically to pursue AI safety research without the commercial pressure of OpenAI's model.

So Musk's lawsuit reflects a real schism in the AI community about how to balance commercialization with safety and openness.

The Counter-Narrative: Why OpenAI's Position Isn't Unreasonable

To be fair, OpenAI's defense has merit.

Resource Constraints Are Real

Training GPT-4 reportedly required thousands of GPUs running continuously for months, with compute costs estimated at $100 million or more. Non-profit funding mechanisms don't support that scale of research.

If OpenAI had remained pure non-profit, it probably wouldn't exist today. DeepMind (owned by Google) and Meta's AI division would dominate the AI space, funded by their parent companies' advertising revenue. Is that better?

The Hybrid Structure Was Disclosed

OpenAI's 2019 announcement about the hybrid structure was public. Musk could have exited his investment or publicly opposed it. He did neither. That tacit acceptance could be interpreted as consent to the new arrangement.

Commercial Success Enabled Research

Interestingly, ChatGPT's commercial success has funded OpenAI's largest safety research initiatives. The non-profit research operations today are bigger and better funded than they would be if OpenAI had remained a pure non-profit.

So there's an argument that commercialization actually enabled the company to pursue its safety mission more effectively, not undermined it.

The Mission Hasn't Been Abandoned

OpenAI still publishes substantial research on AI alignment, safety, and interpretability. The company still has explicit safety teams. That doesn't read like mission abandonment—it reads like mission evolution.

Elon Musk contributed approximately $38 million, making up a significant portion of OpenAI's initial funding. Estimated data for other contributors.

Anthropic: The Road Not Taken

If you want to see what a purely commercial, safety-focused AI company looks like, Anthropic is instructive.

Founded in 2021 by former OpenAI employees including Dario Amodei and Daniela Amodei, Anthropic is a for-profit company from day one. It prioritizes AI safety research while also building commercial products (Claude, their AI assistant).

Anthropic has demonstrated that you don't need a non-profit structure to care about safety and responsible AI development. You just need founders who prioritize it.

That might be OpenAI's best defense: "We're pursuing our mission just fine under a for-profit structure, like Anthropic does."

The Tesla-SpaceX Parallel: Musk's Pattern

It's worth noting that Musk has a pattern of filing major lawsuits when he feels wronged or when his interests aren't being prioritized.

At Tesla, he fought with the SEC over tweets and governance. At SpaceX, he's been involved in disputes with contractors and government agencies. At Twitter (now X), he filed lawsuits over employment disputes and advertiser relationships.

Musk is litigious when he feels wronged. That doesn't mean his OpenAI lawsuit is frivolous, but it does suggest he's comfortable with high-stakes legal battles as a business tactic.

The Settlement Possibility: What Would Resolve This?

If I had to guess how this ends, I'd guess settlement.

A trial would be extremely expensive for both sides (legal bills could exceed $100 million). Both sides would face discovery of embarrassing internal communications. The outcome would be uncertain.

For OpenAI, settling might be worth $2-5 billion if it:

- Removes legal uncertainty

- Ends Musk's public criticism campaign

- Allows the company to move forward without ongoing litigation headlines

For Musk, settling for less than the claimed $134 billion could still be framed as a partial victory.

The wildcard: Microsoft. If the case goes against OpenAI, Microsoft could face liability too. That gives Microsoft incentive to help fund a settlement just to protect themselves.

The Real Debate: Open vs. Closed AI

Beneath the lawsuit is a genuine philosophical disagreement about how AI should be developed and deployed.

Musk's position (and xAI's positioning): AI should be developed openly, with transparency and access for the broader research community. Closed systems controlled by a few companies are dangerous and anticompetitive.

OpenAI's position: The most capable AI systems require careful safety measures. Open-sourcing state-of-the-art models could enable misuse. Commercial models with guardrails are safer than open-source models without them.

There's truth on both sides. Open systems enable more innovation and decentralization. Closed systems with safety teams can implement guardrails more carefully. The question of which approach is better for society isn't settled.

Musk's lawsuit is partly about money, but it's also partly about winning the argument that open is better. If his suit succeeds, it sends a signal that companies can't just abandon their founding principles of openness for commercial gain.

Lessons for Future AI Companies

What should future AI companies learn from this saga?

1. Be Clear About Structure From Day One

If you're starting a non-profit AI company and you think you might commercialize later, say so explicitly. Draft bylaws that allow for commercial subsidiaries. Get all founders to agree upfront about the path forward.

OpenAI did announce the hybrid structure in 2019, but the fact that it was newsworthy suggests founder expectations weren't fully aligned.

2. Get Early Investors to Agree to the Model

Have Elon Musk sign documents explicitly acknowledging the 2019 hybrid structure change. If he refused, you'd know about conflict upfront. If he agreed, you'd have legal cover later.

3. Prioritize Mission Over Capital

If your mission matters, don't accept investment that requires you to compromise it. It's better to grow slowly as a pure non-profit than to pivot away from your founding mission once you've achieved scale.

Anthropic chose to be a for-profit from the start, avoiding this entire problem.

4. Document Leadership Intent

If there are debates about strategy and mission within your leadership team, document those debates. When Musk sues years later, you'll want internal emails showing you took the mission seriously and struggled with the commercialization decision.

What About Other Non-Profit AI Players?

Mozilla, Wikipedia, and the Free Software Foundation remain pure non-profits. They generate revenue (through donations, partnerships, and services) but don't commercialize their core mission.

The difference is that they're not trying to be at the frontier of AI capability. They're not competing with Google, Meta, and Microsoft for research talent and compute resources.

Here's a hard truth: in capital-intensive research, being non-profit is a competitive disadvantage. You can't offer the same salaries. You can't fund the same computing infrastructure. You'll lose top talent to well-funded commercial labs.

That's not an excuse for mission drift. But it's context for why OpenAI faced such pressure to commercialize.

The Role of Public Opinion

Musk's lawsuit also functions as a PR campaign. By filing publicly and making dramatic claims about OpenAI abandoning its mission, he shapes how the public understands the company.

If you ask people on the street, "Is OpenAI still doing non-profit research?" many would probably answer no, largely because of Musk's lawsuit and the media coverage it generated.

That perception affects:

- Recruiting decisions (Do researchers want to work for a company that abandoned its mission?)

- Government regulation (Do policymakers treat OpenAI as a non-profit or a commercial entity?)

- Customer trust (Should enterprises trust a company that broke its founding promises?)

- Competitive dynamics (Does it make xAI more appealing as an alternative?)

So even if Musk loses the lawsuit on technical legal grounds, he might win the broader cultural argument.

Timeline for Resolution

Let me give you realistic expectations:

2025: Discovery phase continues. Both sides will demand internal documents and emails. Preliminary motions filed. Settlement talks likely begin.

2026: If settlement talks stall, trial scheduled. More likely: settlement reached for an undisclosed amount (though legal observers will speculate).

2027+: If trial proceeds, verdict issued. Appeal filed. Resolution 2027-2028.

The most probable outcome: a settlement announcement sometime in 2025 or 2026 for somewhere between $1 billion and $5 billion, framed by Musk as a partial victory and by OpenAI as a cost of resolving legal uncertainty.

FAQ

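What exactly is Elon Musk suing OpenAI and Microsoft for?

Musk is suing OpenAI and Microsoft for $79-$134 billion in damages. The lawsuit alleges breach of fiduciary duty, unjust enrichment, and violation of OpenAI's non-profit charter, all tied to the company's shift from a non-profit to a commercial operation.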

Did OpenAI ever publicly say it would remain a non-profit forever?

Not explicitly. OpenAI was founded as a non-profit in 2015 with the stated mission to develop AI safely for the benefit of humanity. However, in 2019, the organization announced a hybrid structure (non-profit parent with for-profit subsidiary) to enable venture capital investment. Musk's lawsuit argues that this pivot violated the founding mission he contributed to. OpenAI's defense is that the hybrid structure was disclosed and technically maintains non-profit status.

How much money did Elon Musk actually invest in OpenAI?

According to the lawsuit filing, Musk invested approximately $38 million in seed funding during OpenAI's early years. Beyond capital, Musk claims he provided valuable strategic contributions including helping recruit key talent, making business introductions, and providing startup advice. The lawsuit argues these non-financial contributions added significant value that should be factored into damages.

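Could OpenAI actually owe Musk $134 billion?

It's highly unlikely. There is no clean formula for calculating damages in a case like this, and any award would more plausibly land in the low single-digit billions than near the claimed $134 billion.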

Why does Elon Musk care about this now, years later?

Musk has multiple motivations. First, he genuinely believes OpenAI betrayed him and its founding mission, and he wants compensation. Second, he's building his own AI company, xAI, and winning this lawsuit would provide capital and positioning. Third, the lawsuit reinforces his narrative that OpenAI is no longer pursuing its original mission of open, safe AI development. The lawsuit is partly legal strategy and partly PR strategy.

What does Microsoft have to do with this lawsuit?

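Microsoft is named as a co-defendant because the lawsuit argues that Microsoft's massive investment (starting with $1 billion and followed by another $10 billion in 2023) made commercialization inevitable, which makes Microsoft complicit in OpenAI's mission drift.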

When will this lawsuit be resolved?

The case is still in early stages. Discovery (document exchange and testimony) will continue through 2025. Settlement talks are likely. If a settlement doesn't happen, trial could occur in 2026 or 2027, with appeals potentially extending into 2028 or beyond. Most complex tech litigation cases take 3-5 years from filing to resolution. The fastest realistic outcome is a settlement in 2025-2026 for an undisclosed amount.

What does this lawsuit mean for AI governance more broadly?

The case raises fundamental questions about whether founding missions have legal enforceability, whether non-profit structures can credibly commit to open research in capital-intensive fields, and whether large investors in non-profits can be held liable for influencing the organization's trajectory. If courts rule in Musk's favor, it could establish precedent that would affect how other non-profit research organizations operate and how they manage investor relationships. A ruling against Musk would suggest that organizations have more flexibility to pivot away from founding missions, especially under hybrid structures.

Is there precedent for a lawsuit like this?

Not in tech. Non-profit mission drift cases do exist, but they're rare and typically involve traditional non-profits like hospitals or educational institutions, not cutting-edge research companies. OpenAI's situation is novel. Legal observers are watching closely because the outcome could establish important precedent for how courts treat non-profit commercial transitions. The closest precedent is probably cases involving donor intent and restricted funds, but those involve different legal principles than what Musk is arguing.

Should founders of AI companies care about this case?

Absolutely. If you're starting an AI company and considering a non-profit structure, this case suggests you should: (1) be crystal clear upfront about whether and how you might commercialize later, (2) get all founders and early investors to explicitly agree to the governance model, (3) document the strategic reasoning for any major pivots, and (4) consider whether you actually need a non-profit structure or if you'd be better served by a for-profit company committed to responsible AI development (like Anthropic). The lawsuit highlights the risks of ambiguity around founding missions and governance structures.

The Bottom Line: What Actually Happened Here

Here's my honest take after tracking this situation for months:

OpenAI genuinely did pivot away from its founding non-profit mission. That's not disputable. In 2015, the organization was structured as a pure non-profit. In 2024, it's essentially a commercial enterprise backed by a for-profit subsidiary and a multibillion-dollar Microsoft investment. The mission evolved from "do safe, open AI research" to "build and deploy powerful AI products profitably."

But here's where it gets complicated: that pivot might have been necessary. You arguably can't compete at the frontier of AI research as a pure non-profit in 2024. The compute costs are too high. The talent competition is too fierce. The capital requirements are too immense.

So OpenAI made a pragmatic choice. It accepted that being a non-profit didn't work at scale, so it built a hybrid structure that allows for-profit operations under a non-profit umbrella.

Was that legal? Probably yes, at least under the hybrid structure they announced.

Was it what Elon Musk signed up for in 2015? Probably not.

Did Elon Musk have the right to object in 2019 when they announced the hybrid structure? Yes, but he didn't.

Is he entitled to $134 billion? Almost certainly not.

Is he entitled to something? Possibly. If courts decide that early contributors to non-profits have certain rights over the organization's future direction, they might award damages. But those damages would be in the low single-digit billions, not the claimed $134 billion.

Most likely outcome: settlement for $2-5 billion, announced sometime in 2025 or 2026, positioned as both a victory and a pragmatic resolution by both sides.

And then the AI industry moves on, having learned that founding missions matter but aren't inviolable, and that hybrid structures are legally ambiguous enough that they'll need clearer regulation going forward.

The real question isn't whether Musk wins or loses. It's whether this case prompts future AI companies to be more thoughtful about governance structures from the start. Based on the precedent, my bet is yes.

Key Takeaways

- Musk invested approximately $38 million in OpenAI and is seeking $79-$134 billion for the company's pivot from non-profit to commercial operation

- OpenAI's 2019 hybrid structure (non-profit parent with for-profit subsidiary) created legal ambiguity about whether the founding mission remained binding

- Microsoft's multibillion-dollar investment fundamentally changed OpenAI's incentive structure from open research to profitable commercialization

- The lawsuit faces significant legal hurdles: limited precedent for non-profit mission drift cases and unclear damages calculation methodology

- Most likely resolution is a settlement between $1-5 billion in 2025-2026, with broader implications for AI governance and non-profit commercial transitions

![Musk's $134 Billion OpenAI Lawsuit: What's Really Happening [2025]](https://tryrunable.com/blog/musk-s-134-billion-openai-lawsuit-what-s-really-happening-20/image-1-1768912633254.jpg)