Introduction

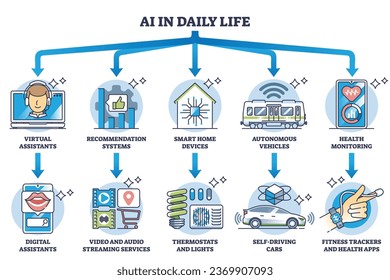

Artificial Intelligence (AI) has permeated many aspects of our daily lives, from virtual assistants that manage our schedules to algorithms that recommend the next movie to watch. With this growing integration, however, AI's role in providing advice, especially in sensitive areas like health and safety, has sparked intense debate. One emerging concern is reliance on AI for guidance on critical decisions such as drug use. This article examines the complexities of AI-generated advice, its potential pitfalls, and how we can use AI responsibly for safer outcomes.

TL;DR

- AI's Role in Advice: Increasingly used in health and safety contexts, but not without risks.

- Human Oversight is Crucial: AI should complement, not replace, expert human advice.

- Ethical AI Development: Focus on transparency and accountability to avoid misinformation.

- Future Trends: AI improvements and regulations are necessary to ensure safe implementations.

- Actionable Steps: Develop robust guidelines and educate users on AI limitations.

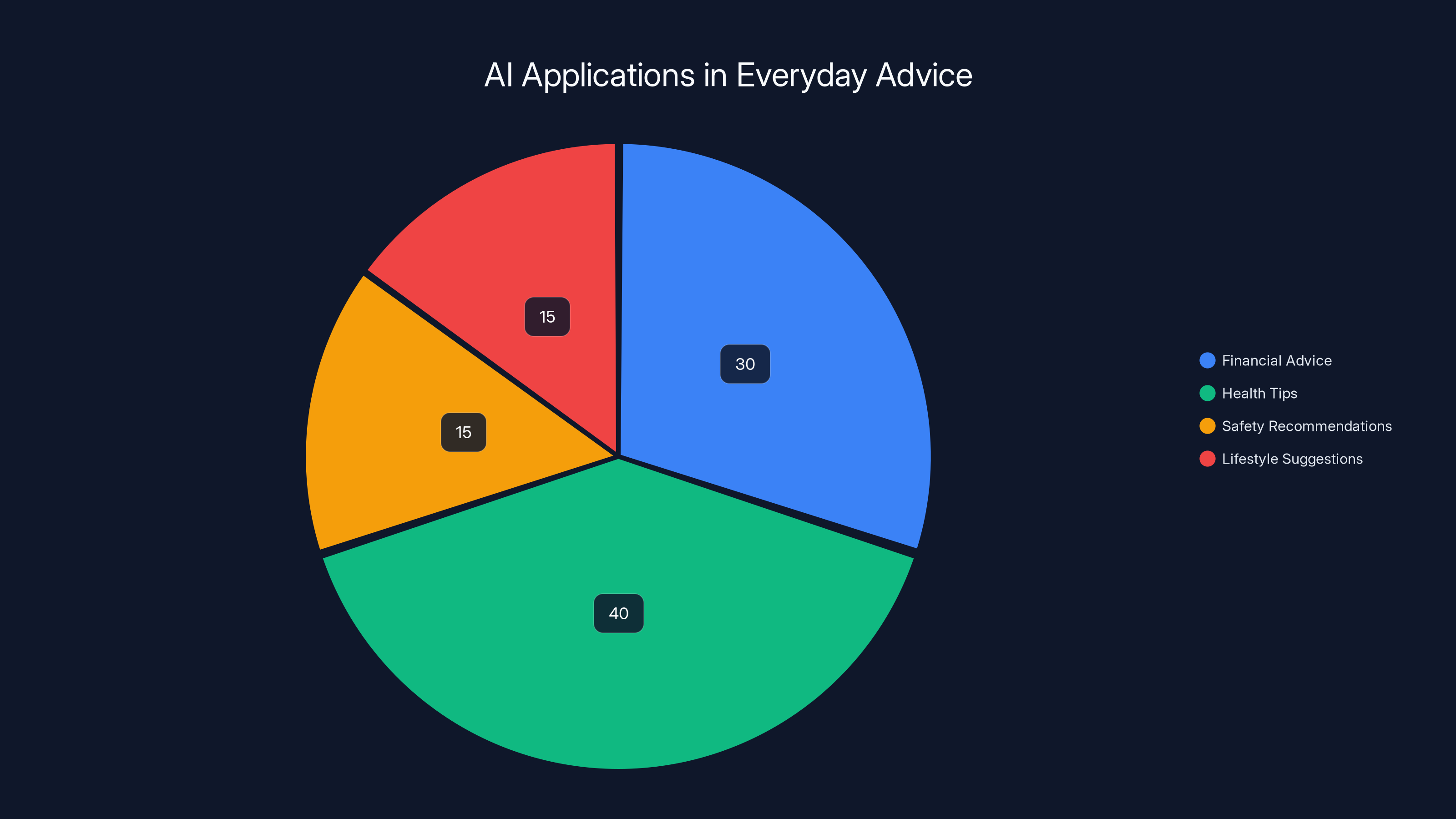

Estimated data: AI advice is used mostly for health tips (40%) and financial advice (30%), with smaller shares for safety (15%) and lifestyle (15%).

The Rise of AI in Everyday Advice

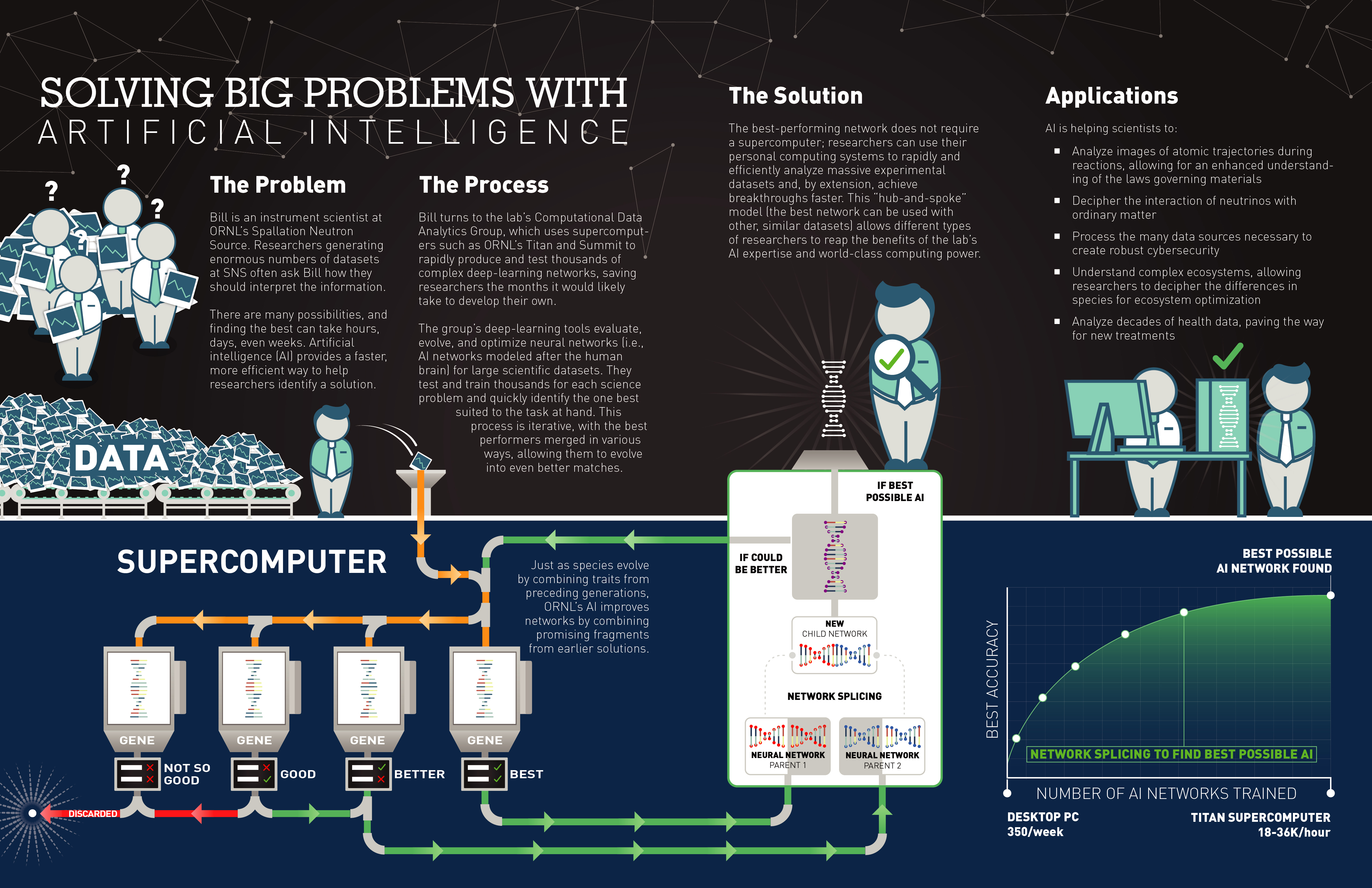

AI's ability to process vast amounts of data quickly and efficiently has made it an attractive tool for generating advice. From financial investments to health tips, AI systems are increasingly relied upon for decision-making. These machine learning algorithms analyze patterns in historical data and predict outcomes from them. According to Britannica, AI is used extensively in the financial sector to provide insights and recommendations.

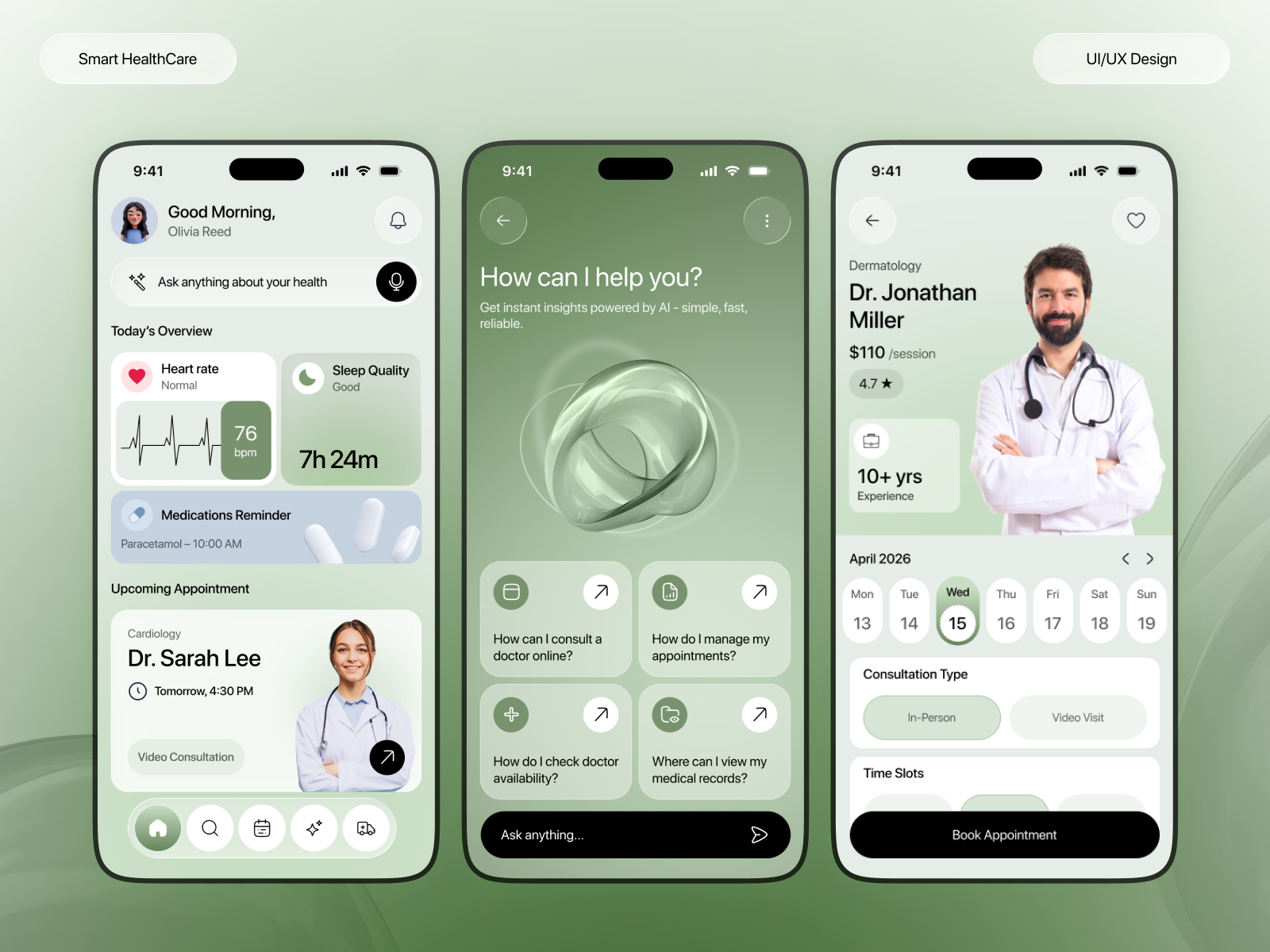

AI in Health and Safety

In health care, AI has shown promise in identifying disease patterns and recommending treatment plans. For instance, AI algorithms can predict potential health risks by analyzing data from wearable devices. When it comes to safety, however, and particularly to advice about drug use, the stakes are higher: misinformation or a lack of nuance in AI-generated advice can lead to dire consequences. A recent study highlights the potential of AI in predicting health outcomes but also warns of the risks of incorrect predictions.

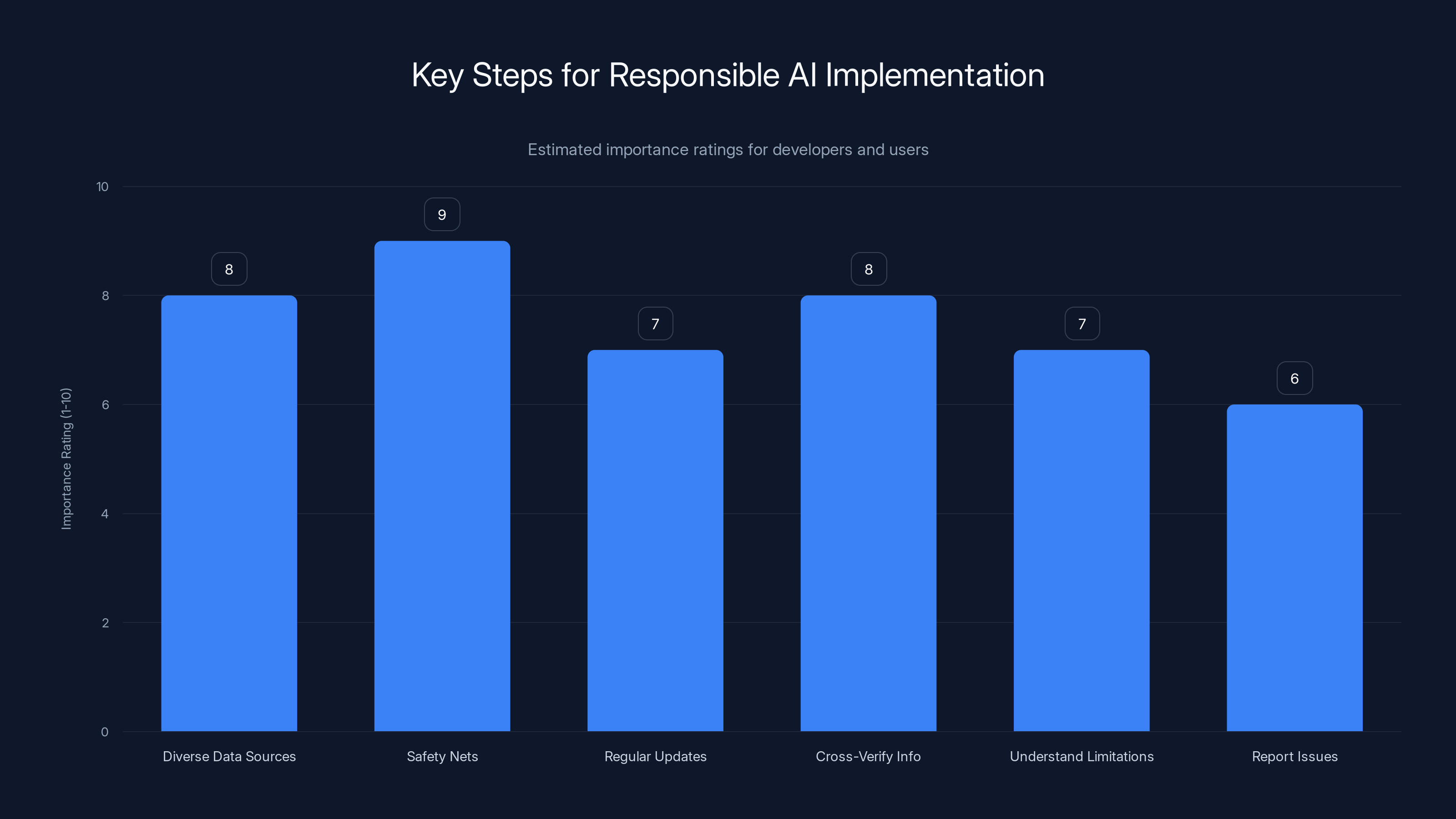

Estimated data suggests 'Safety Nets' and 'Diverse Data Sources' are highly prioritized for responsible AI implementation.

The Dangers of Relying Solely on AI

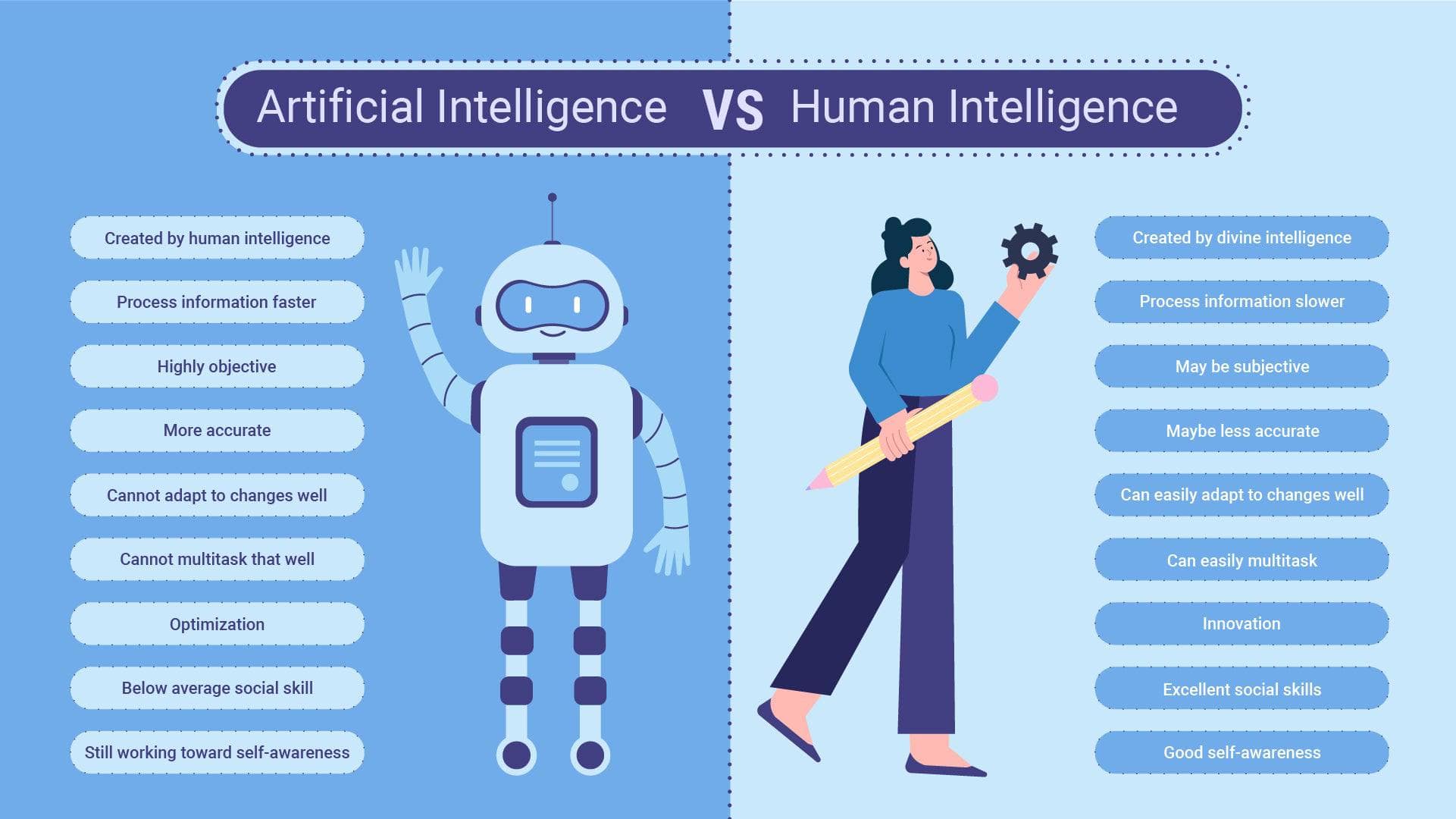

While AI can be a powerful tool, it is not infallible. AI systems cannot understand context and nuance the way humans do. This limitation is particularly dangerous for advice about recreational drug use, whose safety can vary significantly with context and individual circumstances. According to Telehealth.org, AI shows promise in mental health monitoring but also faces significant challenges related to bias and privacy.

Understanding AI Limitations

AI's primary limitation in giving advice is its reliance on historical and static data. It lacks the ability to adapt to the nuances of human experience, which are often crucial in making safe decisions. For example, an AI might provide generalized advice on drug interactions without accounting for individual health factors or environmental influences. A study published in Nature emphasizes the importance of considering these factors in AI applications.

Key Limitations of AI Advice:

- Contextual Understanding: AI often misses the subtlety of human situations.

- Data Dependency: AI's accuracy is limited by the quality and breadth of its data sources.

- Lack of Empathy: AI cannot provide the empathetic support often needed in sensitive situations.

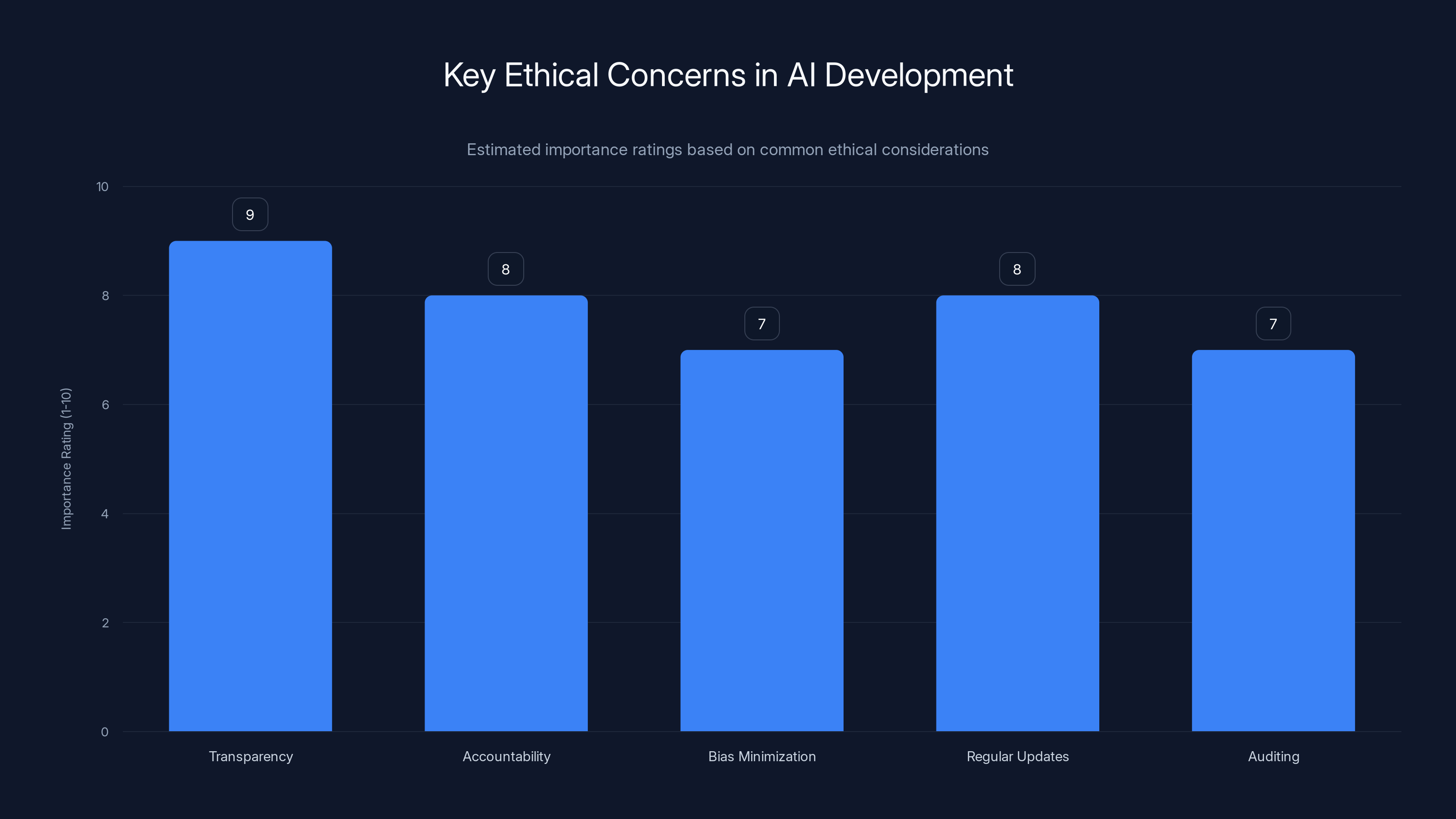

Ethical Considerations in AI Development

Building safe AI systems involves ethical as well as technical considerations. AI developers must prioritize transparency, accountability, and robust ethical guidelines. The Info-Tech Research Group warns about the limitations of static governance models in AI, emphasizing the need for dynamic and ethical frameworks.

Ensuring Transparency and Accountability

Transparency in AI means making the decision-making processes of algorithms understandable to humans. This can be achieved through clear documentation and open-source models. Accountability, on the other hand, requires that developers and companies take responsibility for the outcomes of their AI systems. The human-in-the-loop principle, in which a person reviews or approves AI outputs before they take effect, is crucial for maintaining control and ensuring ethical AI practices.

Steps to Enhance AI Ethics:

- Transparent Algorithms: Make AI decision-making processes clear and understandable.

- Regular Audits: Conduct frequent reviews of AI systems to ensure ethical compliance.

- User Education: Provide resources to help users understand AI limitations and safe usage.

Estimated data: transparency and accountability are rated as the most critical ethical concerns in AI development.
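Regular audits and accountability both depend on keeping a record of what the system actually said. The following is a minimal sketch, assuming a simple in-memory log; the `AdviceRecord` and `AuditLog` names and their fields are hypothetical and not taken from any specific auditing framework. A real deployment would persist records to durable, access-controlled storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AdviceRecord:
    """One logged AI response (hypothetical schema for illustration)."""
    query: str
    response: str
    model_version: str
    flagged: bool = False
    # Time the record was created, in UTC.
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class AuditLog:
    """Append-only in-memory log that an auditor can query later."""

    def __init__(self) -> None:
        self._records: list[AdviceRecord] = []

    def record(self, rec: AdviceRecord) -> None:
        # Records are only ever appended, never edited or deleted.
        self._records.append(rec)

    def flagged(self) -> list[AdviceRecord]:
        # Responses that were flagged, to be reviewed in the next audit.
        return [r for r in self._records if r.flagged]

    def by_model(self, version: str) -> list[AdviceRecord]:
        # All responses from one model version, so audits can compare releases.
        return [r for r in self._records if r.model_version == version]

    def __len__(self) -> int:
        return len(self._records)


log = AuditLog()
log.record(AdviceRecord("Is it safe to combine these two medications?",
                        "Please ask a pharmacist.", "v1.2", flagged=True))
log.record(AdviceRecord("When is the best time to exercise?",
                        "Either morning or evening can work.", "v1.3"))
print(len(log.flagged()))  # 1
```

Tagging every record with a model version lets an audit tie a problematic answer back to the release that produced it.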

Practical Implementation Guides

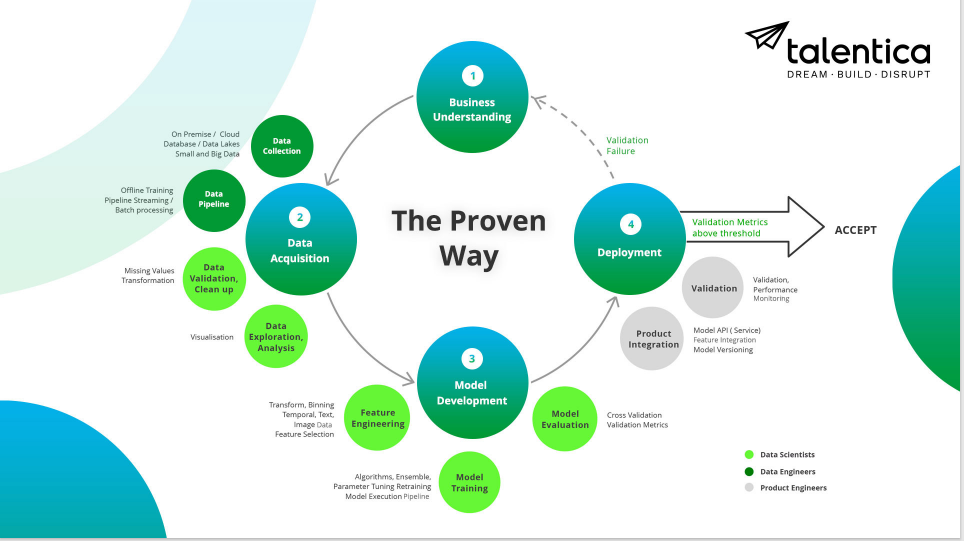

Implementing AI responsibly involves several practical steps that both developers and users can take to mitigate risks. According to the UTC Business Blog, AI is transforming work processes, which calls for new implementation guidelines.

For Developers

- Prioritize Diverse Data Sources: Train AI systems on a wide range of data, which can help reduce bias and improve accuracy.

- Implement Safety Nets: Build mechanisms to flag potentially harmful advice for human review.

- Regular Updates and Training: Continuously update AI systems with the latest data and ethical guidelines.
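The "safety net" step can be sketched as a gate that holds back responses touching high-risk topics until a person has reviewed them. This is a minimal sketch, assuming a small keyword list stands in for a proper risk classifier; `HIGH_RISK_TERMS`, `ReviewQueue`, `is_high_risk`, and `route_advice` are hypothetical names chosen for illustration. Keyword matching is crude and will miss paraphrases, so treat it only as a placeholder for the routing logic.

```python
import re
from collections import deque
from dataclasses import dataclass
from typing import Optional

# Hypothetical risk vocabulary; a production system would use a trained classifier.
HIGH_RISK_TERMS = {"overdose", "dosage", "dose", "combine", "interaction"}


@dataclass
class Decision:
    released: bool  # True if the draft is shown to the user immediately
    message: str    # Text actually shown to the user


class ReviewQueue:
    """FIFO queue of (query, draft) pairs waiting for a human reviewer."""

    def __init__(self) -> None:
        self._items: deque = deque()

    def submit(self, query: str, draft: str) -> None:
        self._items.append((query, draft))

    def next_item(self) -> Optional[tuple]:
        # Oldest pending item first; None when nothing is waiting.
        return self._items.popleft() if self._items else None

    def __len__(self) -> int:
        return len(self._items)


def is_high_risk(text: str) -> bool:
    # Lowercase and split into words so "Dose" and "dose," both match.
    words = set(re.findall(r"[a-z]+", text.lower()))
    return bool(words & HIGH_RISK_TERMS)


def route_advice(query: str, draft: str, queue: ReviewQueue) -> Decision:
    # Hold the draft for human review if either the question or the answer looks risky.
    if is_high_risk(query) or is_high_risk(draft):
        queue.submit(query, draft)
        return Decision(False, "This question needs review by a qualified person before we can answer.")
    return Decision(True, draft)


queue = ReviewQueue()
print(route_advice("Can I combine these two medications?", "Probably fine.", queue).released)  # False
print(len(queue))  # 1
```

Checking both the question and the draft answer means a risky answer to an innocuous-looking question is still caught.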

For Users

- Cross-Verify Information: Always validate AI advice with trusted human experts.

- Understand AI Limitations: Be aware of the contexts in which AI may fail to provide accurate advice.

- Report Issues: Inform developers of any misleading or potentially harmful AI-generated advice.

Common Pitfalls and Solutions

AI systems are not immune to errors, and understanding common pitfalls can help in creating more robust solutions. The National Academies highlight the importance of addressing these pitfalls to ensure effective AI integration.

Pitfalls

- Overdependence on AI: Users may overly rely on AI for critical decisions without adequate human oversight.

- Data Bias: AI systems trained on biased data may perpetuate these biases in their advice.

- Complexity in Interpretation: Users may misinterpret AI advice due to complex or unclear presentations.

Solutions

- Foster Human-AI Collaboration: Encourage a blend of AI insights and human expertise in decision-making processes.

- Enhance Data Diversity: Use diverse data sets to train AI systems, minimizing bias and improving reliability.

- Simplify AI Outputs: Present AI advice in an easily digestible format to avoid misinterpretation.
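One way to act on "Enhance Data Diversity" is to measure how well each group is represented in a training set before training begins. This is a minimal sketch, assuming each example carries a single group label and using an arbitrary 10% threshold; `group_shares`, `underrepresented_groups`, and the threshold are hypothetical choices. Balanced counts are a useful first check, but they do not by themselves prove a model is unbiased.

```python
from collections import Counter
from typing import Iterable


def group_shares(labels: Iterable[str]) -> dict:
    """Return each group's fraction of the dataset."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {group: n / total for group, n in counts.items()}


def underrepresented_groups(labels: Iterable[str], min_share: float = 0.10) -> list:
    """List groups whose share falls below min_share, sorted by name."""
    shares = group_shares(labels)
    return sorted(g for g, s in shares.items() if s < min_share)


# Example: age bands attached to 100 hypothetical training examples.
ages = ["18-30"] * 50 + ["31-50"] * 45 + ["65+"] * 5
print(underrepresented_groups(ages))  # ['65+']
```

A flagged group is a prompt to collect more data or reweight examples, not an automatic fix.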

Future Trends in AI-Generated Advice

The future of AI advice is likely to be shaped by advances in technology and by regulatory frameworks. According to McKinsey, generative AI is poised to transform healthcare.

Technological Advancements

AI systems are expected to become more sophisticated, with improvements in natural language processing and contextual understanding. These advancements could enable AI to provide more nuanced and reliable advice.

Regulatory Developments

Governments and organizations are likely to introduce stricter regulations to govern the development and use of AI, particularly in sensitive areas like health and safety. These regulations will aim to ensure that AI systems are safe, ethical, and accountable.

Recommendations for Safe AI Use

To ensure that AI systems are used safely, we offer the following recommendations for developers and users.

For Developers

- Adopt Ethical AI Frameworks: Implement frameworks that emphasize ethical considerations in AI development.

- Engage with Stakeholders: Work with a diverse range of stakeholders, including ethicists, users, and policymakers, to ensure comprehensive AI solutions.

- Focus on User Education: Provide clear guidelines and educational resources to users on the safe use of AI.

For Users

- Stay Informed: Keep up-to-date with the latest developments and guidelines in AI technology.

- Seek Multiple Opinions: When in doubt, consult multiple sources, including AI and human experts, for advice.

- Advocate for Transparency: Support initiatives that promote transparency and accountability in AI systems.

Conclusion

AI holds tremendous potential to enhance our lives, but it is not without risks. As AI systems become more integrated into decision-making processes, particularly in sensitive areas like health and safety, it is essential that we navigate these developments responsibly. By prioritizing ethical considerations, fostering human-AI collaboration, and focusing on user education, we can harness the power of AI while minimizing its risks.

FAQ

What is the primary role of AI in advice generation?

AI is increasingly used to analyze data and generate insights or recommendations in various fields, including healthcare and finance. However, its role should complement human expertise rather than replace it.

How can users ensure the safety of AI-generated advice?

Users should cross-verify AI advice with human experts, understand AI's limitations, and report any misleading information to developers.

What are the key ethical concerns in AI development?

Ethical AI development requires transparency, accountability, and the use of diverse data sources to minimize bias. Developers must also ensure that AI systems are regularly updated and audited.

How can AI be integrated responsibly in health and safety contexts?

AI should be used as a supportive tool in health and safety contexts, with mechanisms in place for human oversight and verification of AI-generated advice.

What future trends can we expect in AI advice systems?

We can expect advancements in AI's contextual understanding and natural language processing, along with stricter regulatory frameworks to ensure safety and accountability.

How can developers improve AI-generated advice?

Developers can improve AI advice by prioritizing diverse data sources, implementing safety nets, and providing clear, digestible outputs for users.

Why is human oversight crucial in AI advice?

Human oversight ensures that AI-generated advice is contextualized and verified, reducing the risk of harm from potential AI errors or biases.

What steps can be taken to educate users about AI limitations?

Developers and organizations should provide clear guidelines, educational resources, and training to help users understand AI's capabilities and limitations.

Key Takeaways

- AI-generated advice can be beneficial but is risky in sensitive contexts.

- Human oversight is essential to ensure the safety of AI advice.

- Transparency and accountability are crucial in ethical AI development.

- Future AI systems need improved contextual understanding and natural language processing (NLP).

- Stricter regulations will likely govern AI advice in health and safety.

- Users should educate themselves about AI limitations and verify advice.

- Developers must prioritize diverse data and regular audits for AI systems.

- Human-AI collaboration is key to effective and safe AI implementations.


![Navigating AI in Advice: Safety, Ethics, and the Future [2025]](https://tryrunable.com/blog/navigating-ai-in-advice-safety-ethics-and-the-future-2025/image-1-1778605722484.png)