Navigating the AI Privacy Tightrope: Lessons from Mozilla's Critique and Microsoft's Copilot Backlash [2025]

Artificial intelligence (AI) has become a double-edged sword in the tech industry: it offers unprecedented capabilities while raising significant ethical concerns. The recent controversy over Microsoft's Copilot, and Mozilla's criticism of it, highlights the delicate balance between innovation and user consent. This article examines how AI deployment, user privacy, and ethical guidelines interact, and what tech companies must do to navigate them.

TL;DR

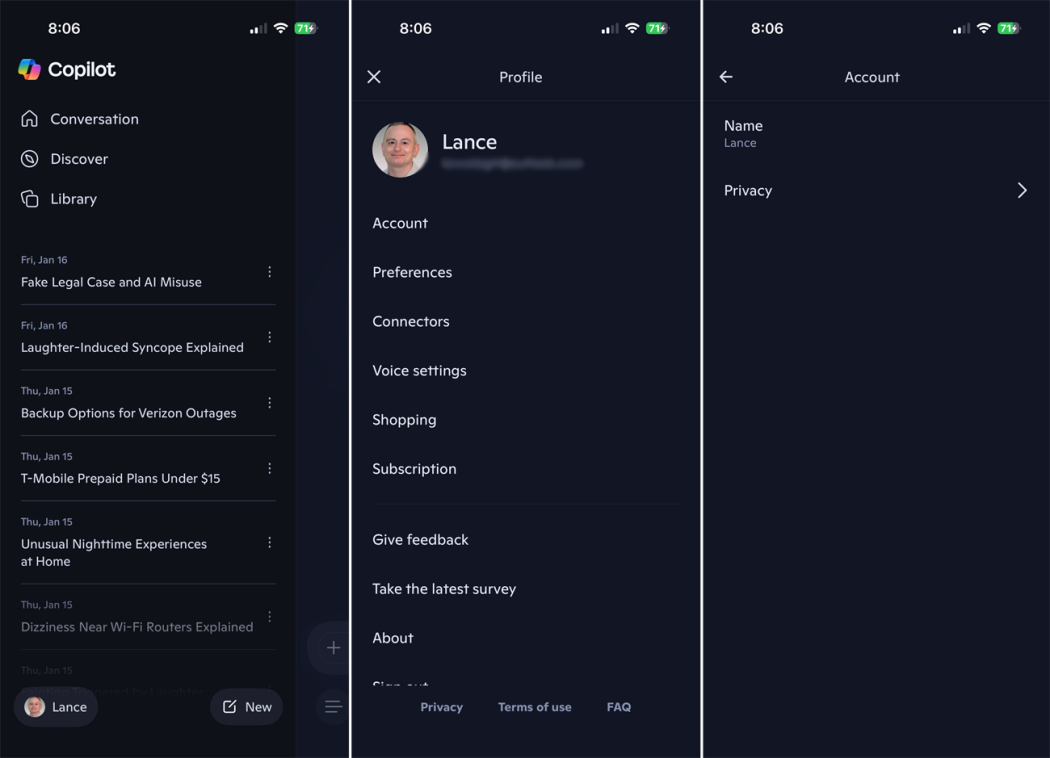

- AI Integration Challenges: Microsoft's Copilot faced backlash due to insufficient user consent mechanisms, as noted in recent reports.

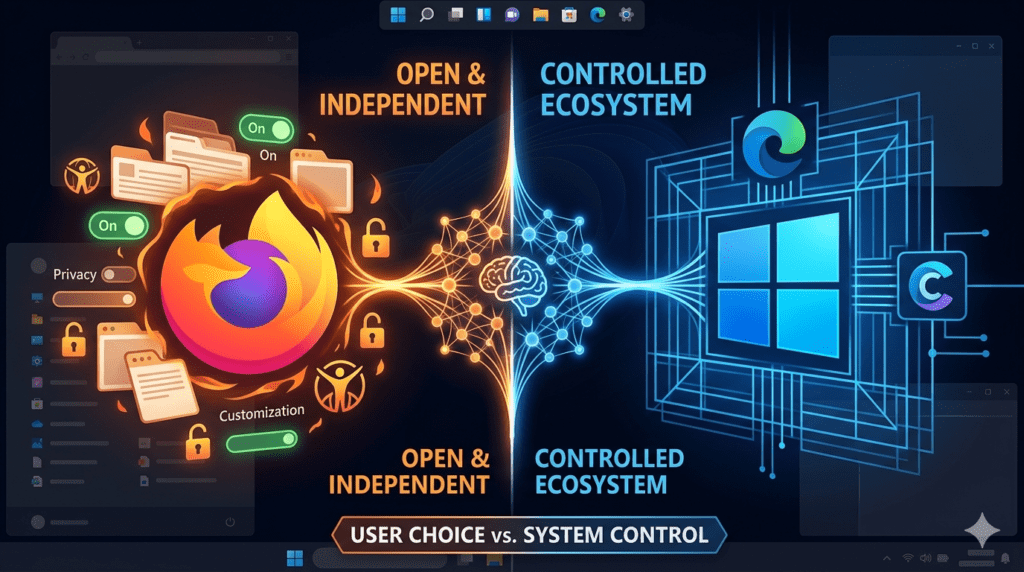

- Mozilla's Stand: Mozilla criticized Microsoft's approach, emphasizing user privacy and transparency, according to AI Multiple.

- Best Practices: Implementing clear consent processes and user education is crucial for ethical AI deployment.

- Future Trends: Expect stricter regulations and increased demand for transparent AI systems, as discussed in McKinsey's insights.

- Bottom Line: Balancing innovation with user rights is essential for sustainable AI growth.

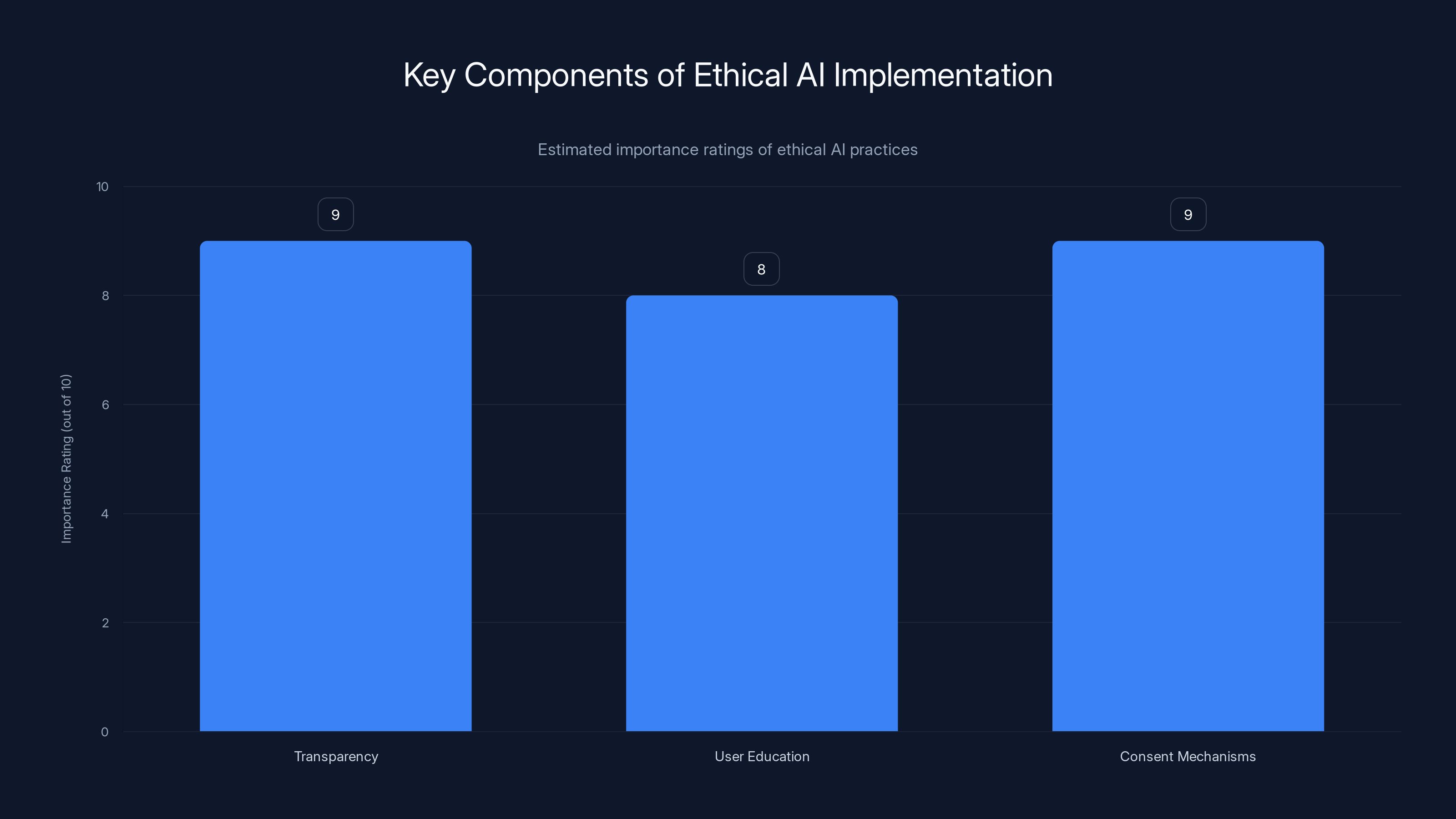

Transparency and consent mechanisms are rated highest in importance for ethical AI implementation, highlighting their critical role in user trust and data privacy. (Estimated data)

The AI Landscape: A Brief Overview

AI technologies have transformed industries from healthcare to finance by automating complex tasks and providing data-driven insights. That reach brings responsibility: as AI systems become more integrated into everyday applications, questions of user consent and privacy have moved to the forefront, as highlighted by journalistic reviews.

The Rise of AI-Powered Tools

AI tools like Microsoft's Copilot are designed to enhance productivity by automating repetitive tasks and providing intelligent suggestions. Copilot, integrated into Microsoft's suite of products such as Windows and Microsoft 365, uses machine learning models to help users draft, summarize, and search content, while the related GitHub Copilot assists developers in writing code more efficiently. However, the integration of such tools requires careful consideration of user data and privacy, as discussed in Cloud Wars.

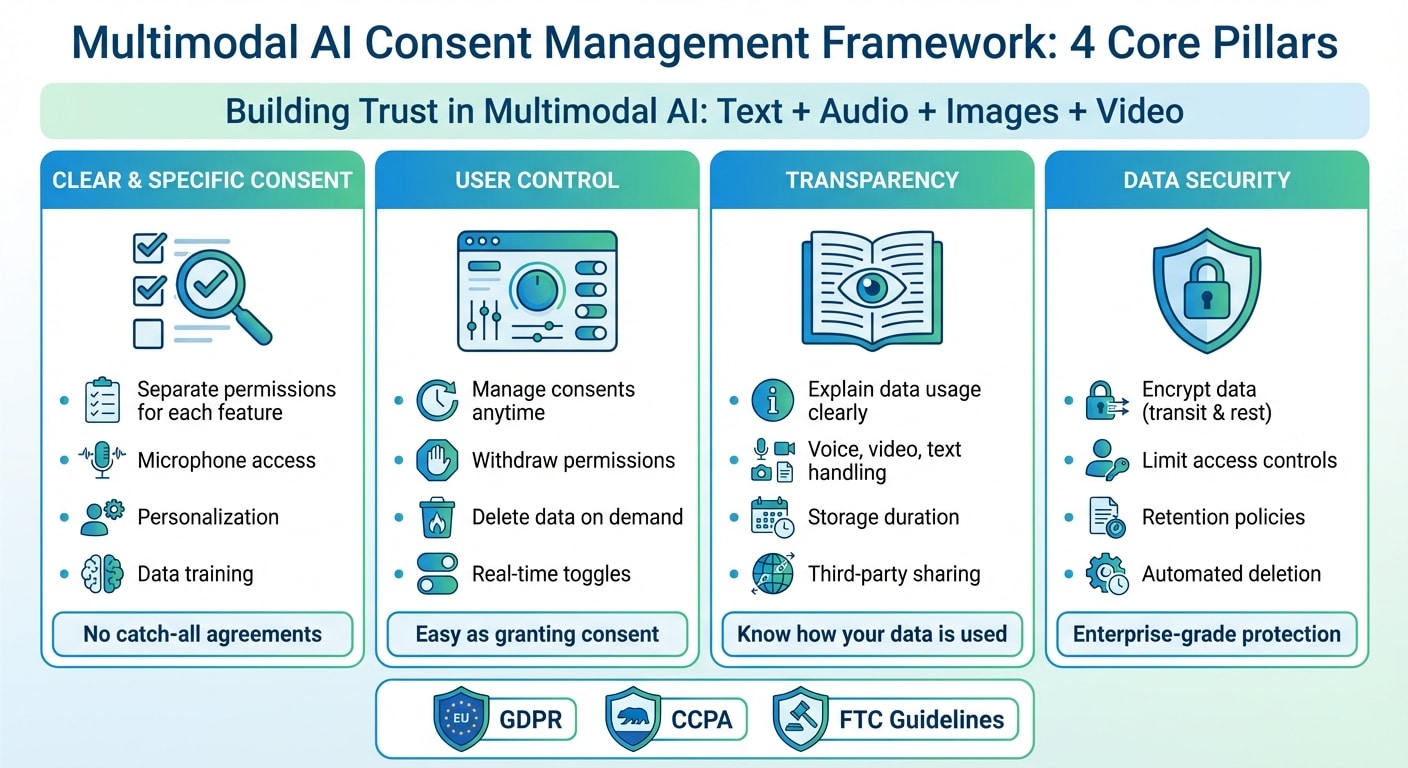

User Consent: The Cornerstone of Ethical AI

Consent is not just a legal requirement but a fundamental principle of ethical AI use. Users must be informed about how their data is collected, processed, and used by AI systems. Without explicit consent, users may feel their privacy is being compromised, leading to backlash and loss of trust in technology providers.

Key Points on Consent:

- Clear communication on data usage

- Opt-in mechanisms for data collection

- Transparent privacy policies
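The opt-in principle in the list above can be sketched in a few lines of Python. This is a minimal illustration, not a description of how Copilot or any real product stores consent: `ConsentStore`, the purpose strings, and the user IDs are all hypothetical names introduced here. The point it shows is that the default answer is "no" until the user explicitly grants a specific purpose.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, Optional, Tuple


@dataclass
class ConsentRecord:
    """Hypothetical record of one user's decision for one data use."""
    purpose: str                          # plain-language purpose shown to the user
    granted: bool = False                 # opt-in: nothing is granted by default
    decided_at: Optional[datetime] = None


@dataclass
class ConsentStore:
    """In-memory store for illustration; a real system would persist and audit this."""
    records: Dict[Tuple[str, str], ConsentRecord] = field(default_factory=dict)

    def grant(self, user_id: str, purpose: str) -> None:
        now = datetime.now(timezone.utc)
        self.records[(user_id, purpose)] = ConsentRecord(purpose, True, now)

    def revoke(self, user_id: str, purpose: str) -> None:
        now = datetime.now(timezone.utc)
        self.records[(user_id, purpose)] = ConsentRecord(purpose, False, now)

    def is_allowed(self, user_id: str, purpose: str) -> bool:
        # A missing record means the user was never asked, so the answer is no.
        record = self.records.get((user_id, purpose))
        return record is not None and record.granted
```

A caller would check `store.is_allowed(user_id, "model_training")` before any collection happens, and treat `False` as the normal case rather than an error.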

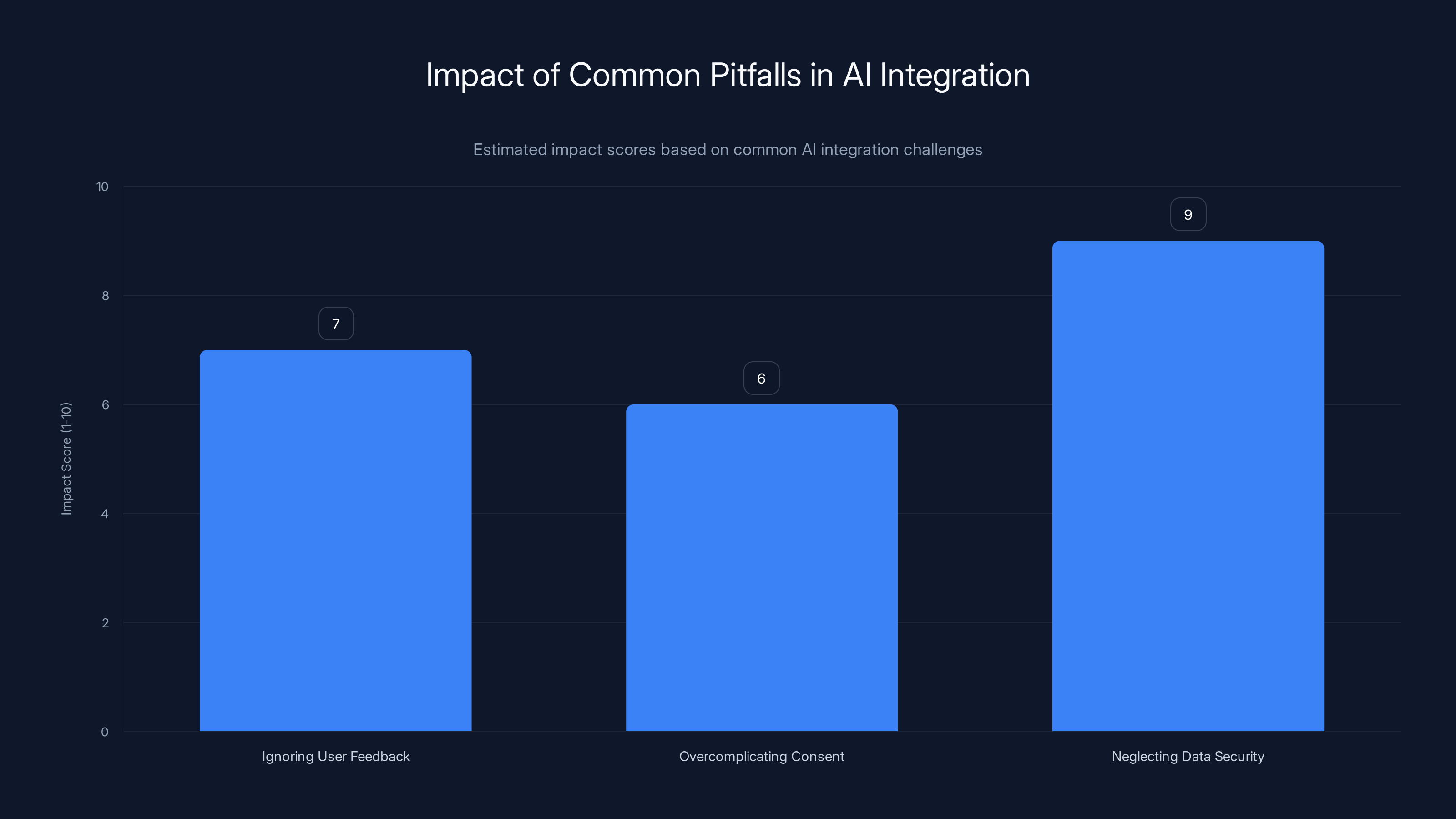

Neglecting data security is estimated to have the highest impact on user trust, with a score of 9 out of 10. (Estimated data)

Mozilla's Criticism and Microsoft's Response

Mozilla, known for its commitment to user privacy, publicly criticized Microsoft's approach to AI deployment, particularly the lack of transparent consent processes in Copilot. This section explores the specifics of Mozilla's critique and how Microsoft responded to address these concerns, as reported by Let's Data Science.

Mozilla's Perspective

Mozilla's criticism centers on the idea that AI tools should not overstep user boundaries without explicit consent. It argues that Microsoft's Copilot did not adequately inform users about data usage, which Mozilla regards as a significant breach of privacy ethics.

"Users deserve to know how their data is being used and should have the power to make informed decisions," Mozilla emphasized in a statement.

Microsoft's Adjustments

In response to the backlash, Microsoft scaled back some of Copilot's features and introduced more explicit consent options. They also committed to improving transparency around data collection processes, as detailed in Microsoft's customer stories.

Changes Implemented:

- Enhanced user consent dialogues

- Detailed privacy settings

- Clearer communication of data usage

Implementing Ethical AI: Best Practices

To avoid similar controversies, tech companies should adopt best practices in AI deployment that prioritize user consent and data privacy. Here are some strategies that can help ensure ethical AI use.

Designing for Transparency

Transparency is crucial for building trust with users. AI systems should be designed to clearly communicate how they function and what data they require, as suggested by AI Multiple's insights.

- Documentation: Provide comprehensive user guides and FAQs.

- User Interfaces: Design intuitive interfaces that highlight privacy controls.

- Feedback Mechanisms: Allow users to provide feedback on AI interactions.

Prioritizing User Education

Educating users about AI capabilities and limitations is essential for informed consent. This includes explaining how AI models work and the potential risks involved, as emphasized by RIT's research.

- Workshops and Webinars: Host events to educate users about AI technologies.

- Interactive Tutorials: Offer tutorials that guide users through AI features safely.

Implementing Robust Consent Mechanisms

Implementing robust consent mechanisms involves more than just a checkbox. It requires a thoughtful approach to user engagement and data privacy, as highlighted by The Hastings Center.

- Granular Consent Options: Allow users to choose specific data they consent to share.

- Regular Updates: Keep users informed about changes in data policies and AI features.
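Both bullets above, granular options and regular updates, can be combined in one small sketch. The assumption here is that consent is stored per data category together with the policy version the user agreed to, so that a policy change invalidates old consent until the user is asked again. The category names, `CURRENT_POLICY_VERSION`, and the function names are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Set

# Hypothetical values for illustration only.
CURRENT_POLICY_VERSION = 2
DATA_CATEGORIES = frozenset({"usage_metrics", "document_content", "voice_input"})


@dataclass
class UserConsent:
    policy_version: int                      # policy version the user agreed to
    categories: Set[str] = field(default_factory=set)


def needs_reprompt(consent: UserConsent,
                   current_version: int = CURRENT_POLICY_VERSION) -> bool:
    """True when the policy changed after the user last gave consent."""
    return consent.policy_version != current_version


def allowed_categories(consent: UserConsent,
                       current_version: int = CURRENT_POLICY_VERSION) -> Set[str]:
    """Categories that may be collected right now.

    Consent given under an older policy is treated as expired, and unknown
    category names are ignored rather than trusted.
    """
    if needs_reprompt(consent, current_version):
        return set()
    return set(consent.categories) & DATA_CATEGORIES
```

Treating stale consent as "nothing allowed" is a deliberately conservative design choice; a product could instead keep previously granted categories active while prompting, but that weakens the guarantee that users have seen the current policy.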

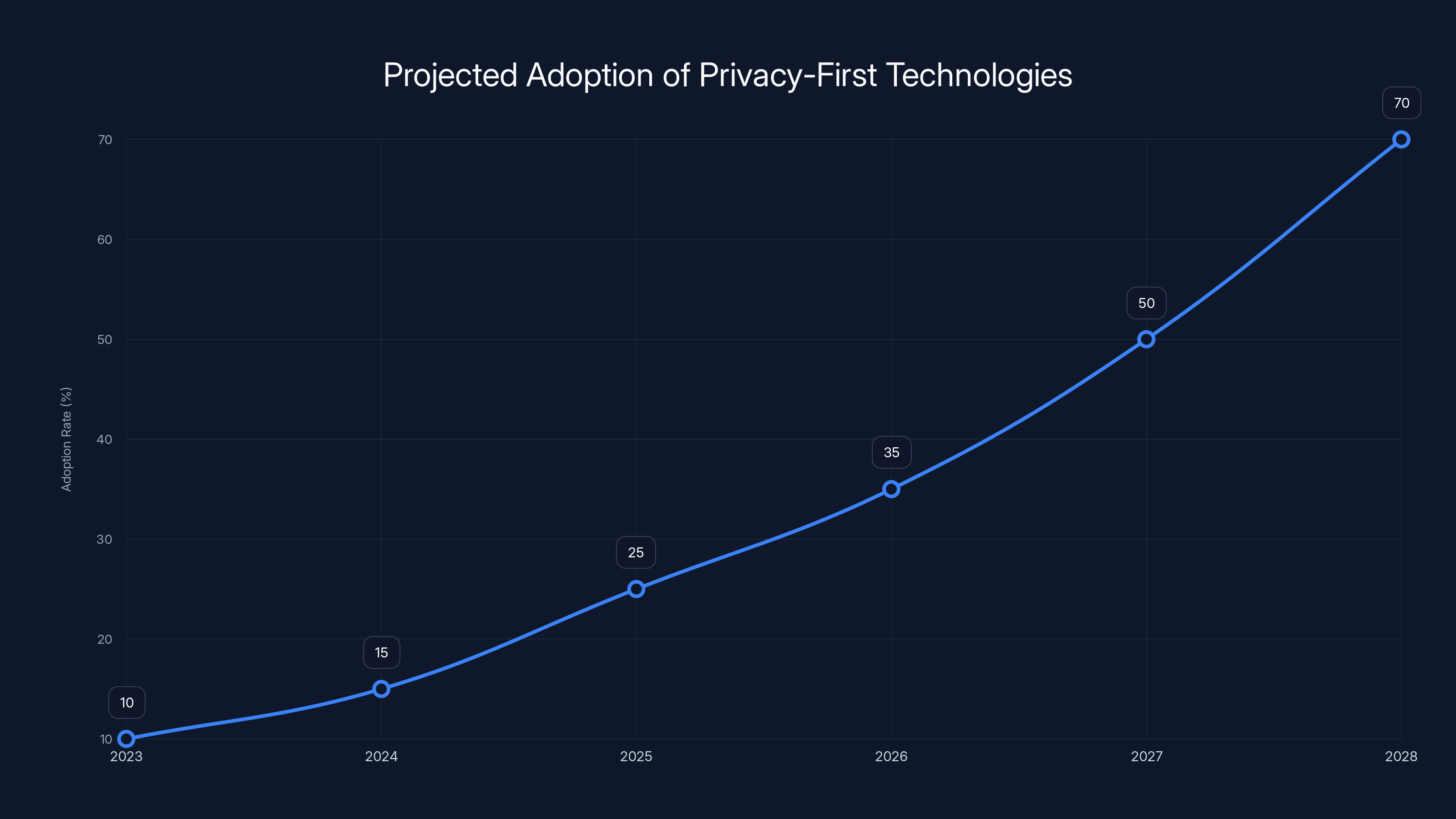

Estimated data suggests a significant increase in the adoption of privacy-first technologies, with a projected growth from 10% in 2023 to 70% by 2028.

Common Pitfalls and How to Avoid Them

While integrating AI, companies often encounter pitfalls that can undermine user trust. Identifying and addressing these issues proactively can prevent negative outcomes.

Ignoring User Feedback

Failure to incorporate user feedback can lead to dissatisfaction and mistrust.

- Solution: Establish feedback loops and act on user suggestions.

Overcomplicating Consent Processes

Complex consent processes can frustrate users and lead to noncompliance.

- Solution: Simplify consent dialogues and use clear, non-technical language.

Neglecting Data Security

Data breaches can have severe consequences for both users and companies.

- Solution: Implement robust security measures and conduct regular audits, as recommended by Malwarebytes.

Future Trends in AI and Privacy

As AI technologies evolve, so do the expectations and regulations surrounding user privacy. Companies must stay ahead of these trends to ensure sustainable growth and compliance.

Stricter Regulatory Frameworks

Governments worldwide are implementing stricter regulations to protect user data and privacy.

- Example: The General Data Protection Regulation (GDPR) in the European Union sets a high standard for data protection.

Demand for Explainable AI

Users increasingly demand AI systems that can explain their decision-making processes.

- Trend: Expect AI tools to incorporate features that provide insights into their algorithms.

Rise of Privacy-First Technologies

Technologies that prioritize privacy are gaining traction, offering users more control over their data.

- Example: Privacy-focused browsers and search engines that limit data tracking.

Recommendations for Sustainable AI Deployment

To ensure ethical and sustainable AI deployment, companies should adopt the following recommendations:

Foster a Culture of Privacy

Embed privacy considerations into the company culture and decision-making processes.

- Actions: Conduct regular privacy training and audits.

Engage with Stakeholders

Involve users, regulators, and industry experts in AI development processes.

- Benefits: Gain diverse perspectives and enhance user trust.

Invest in Privacy-Enhancing Technologies

Adopt technologies that enhance privacy without compromising functionality.

- Examples: Differential privacy and federated learning techniques.
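To make differential privacy concrete, here is a minimal sketch of the Laplace mechanism applied to a counting query, using only the Python standard library. The function names and the example predicate are assumptions made for illustration; production systems should rely on a vetted differential privacy library rather than hand-rolled noise.

```python
import random
from typing import Callable, Iterable, Optional


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential samples."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(values: Iterable,
                  predicate: Callable[[object], bool],
                  epsilon: float,
                  rng: Optional[random.Random] = None) -> float:
    """Return a noisy count of items matching `predicate`.

    Adding or removing one person changes a count by at most 1, so the
    sensitivity is 1 and the noise scale is 1 / epsilon. A smaller epsilon
    means more noise and stronger privacy.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for v in values if predicate(v))
    sensitivity = 1.0
    return true_count + laplace_noise(sensitivity / epsilon, rng)
```

For example, `private_count(users, lambda u: u["opted_in"], epsilon=0.5)` would report roughly how many users opted in without revealing whether any single user did. The result is a float and can be negative for small counts; callers decide whether to round or clamp it.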

Conclusion

The AI revolution holds immense potential to transform industries and improve lives. However, as demonstrated by the backlash against Microsoft's Copilot, the path forward must prioritize user consent and privacy. By adopting best practices, avoiding common pitfalls, and staying ahead of future trends, tech companies can navigate the AI privacy tightrope ethically and sustainably.

FAQ

What is AI user consent?

AI user consent refers to the informed agreement of users to allow AI systems to collect and process their data. It involves clear communication and opt-in mechanisms.

How does Mozilla view AI privacy?

Mozilla advocates for transparent and ethical AI practices, emphasizing the importance of user consent and data privacy in AI deployments, as discussed in Evangelical Focus.

What are the benefits of transparent AI systems?

Transparent AI systems build user trust, enhance accountability, and reduce the risk of misuse by clearly communicating how they function and use data.

What is the role of privacy-enhancing technologies?

Privacy-enhancing technologies protect user data while maintaining AI functionality. They include techniques like differential privacy and federated learning.
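For readers curious what federated learning looks like in practice, the following sketch shows only its aggregation step, in the style of federated averaging: each device trains locally and sends model weights, never raw data, and the server combines them weighted by how many examples each device used. The function name and the flat list-of-floats weight format are simplifying assumptions; local training and secure transport are not shown.

```python
from typing import List, Sequence, Tuple


def federated_average(
    client_updates: Sequence[Tuple[Sequence[float], int]]
) -> List[float]:
    """Combine client model weights into one global model.

    Each entry is (weights, num_examples). Clients with more local examples
    contribute proportionally more to the average.
    """
    total_examples = sum(n for _, n in client_updates)
    if total_examples <= 0:
        raise ValueError("need at least one client with examples")

    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for weights, n in client_updates:
        if len(weights) != dim:
            raise ValueError("all clients must send weights of the same length")
        for i, w in enumerate(weights):
            averaged[i] += w * n / total_examples
    return averaged
```

Calling `federated_average([([1.0, 2.0], 1), ([3.0, 4.0], 3)])` weights the second client three times as heavily as the first.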

How can companies improve AI user consent?

Companies can improve user consent by providing clear communication on data usage, offering granular consent options, and regularly updating users on changes.

What future trends are shaping AI privacy?

Future trends include stricter regulatory frameworks, demand for explainable AI, and the rise of privacy-first technologies.

How can companies avoid AI deployment pitfalls?

Companies can avoid pitfalls by acting on user feedback, simplifying consent processes, and maintaining robust data security measures.

Why is user education important in AI?

User education is crucial for informed consent, helping users understand AI capabilities, limitations, and potential risks.

Key Takeaways

- AI tools require robust consent mechanisms to maintain user trust.

- Mozilla criticized Microsoft's Copilot for lacking transparency in data use.

- Implementing clear user consent processes is crucial for ethical AI.

- Future trends indicate stricter regulations and demand for explainable AI.

- Balancing innovation with user rights is essential for sustainable AI.

