How Federal Workers Regained Claude Access: The AI Standoff Unpacked [2025]

Federal workers have regained access to Claude AI following a U.S. court ruling. The case highlights the complex interplay between government regulation and AI innovation, and it sets a precedent for future policy in the AI space.

TL;DR

- Federal workers can now access Claude AI: A court ruling overturned the previous ban, citing constitutional concerns. According to Reason, the ruling emphasized the importance of maintaining a balance between national security and technological advancement.

- Trump's move deemed 'Orwellian': The court criticized the ban as an overreach against Anthropic, the company that develops Claude, as reported by VPM.

- AI policy implications are significant: This decision could influence future regulatory frameworks, as noted by Cato Institute.

- Anthropic and the Pentagon's ongoing dialogue: The case has intensified discussions around AI in defense, highlighted by Global Affairs.

- Long-term impacts on AI development: Expect shifts in how AI companies engage with government entities, as discussed by Accenture.

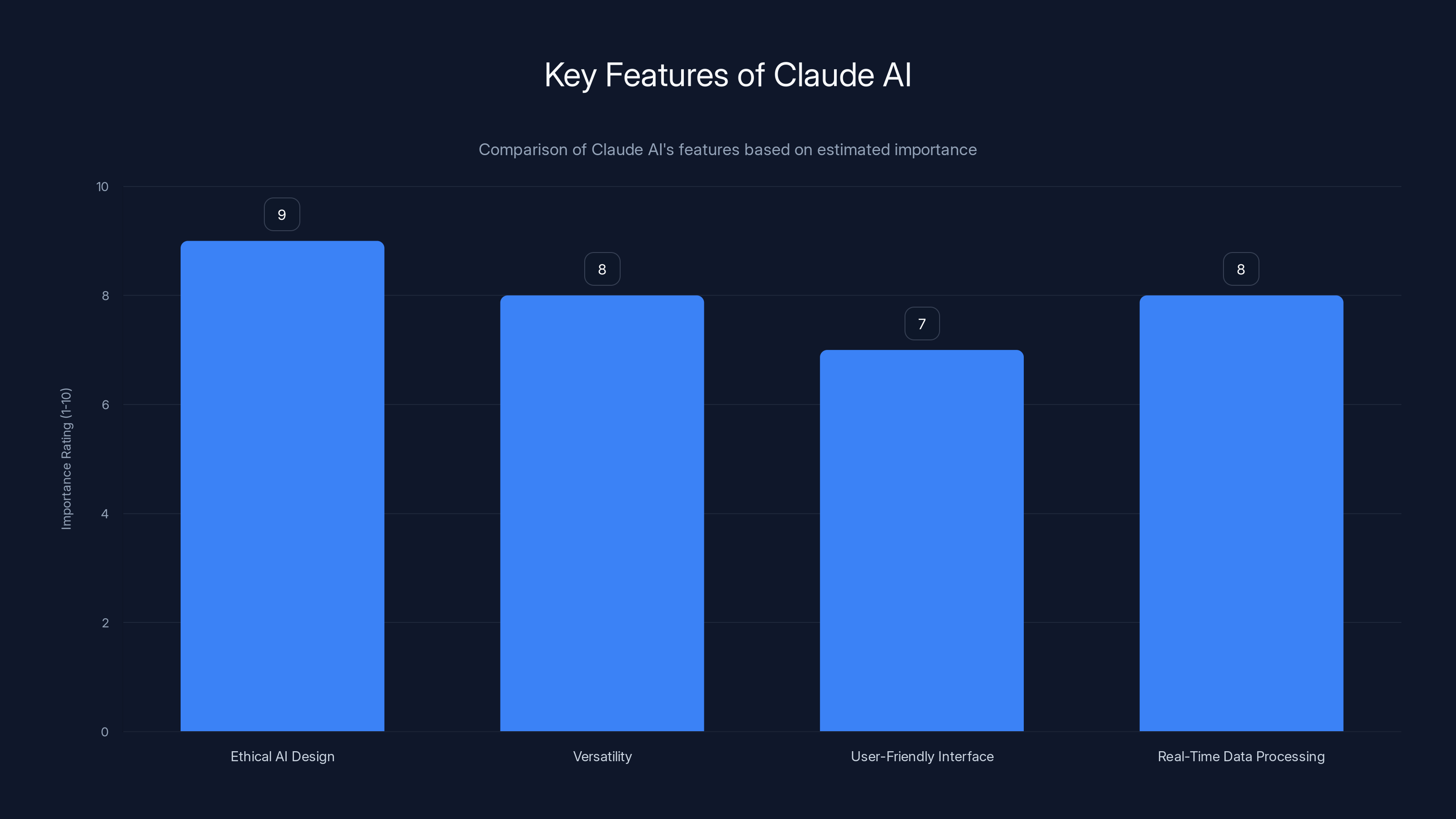

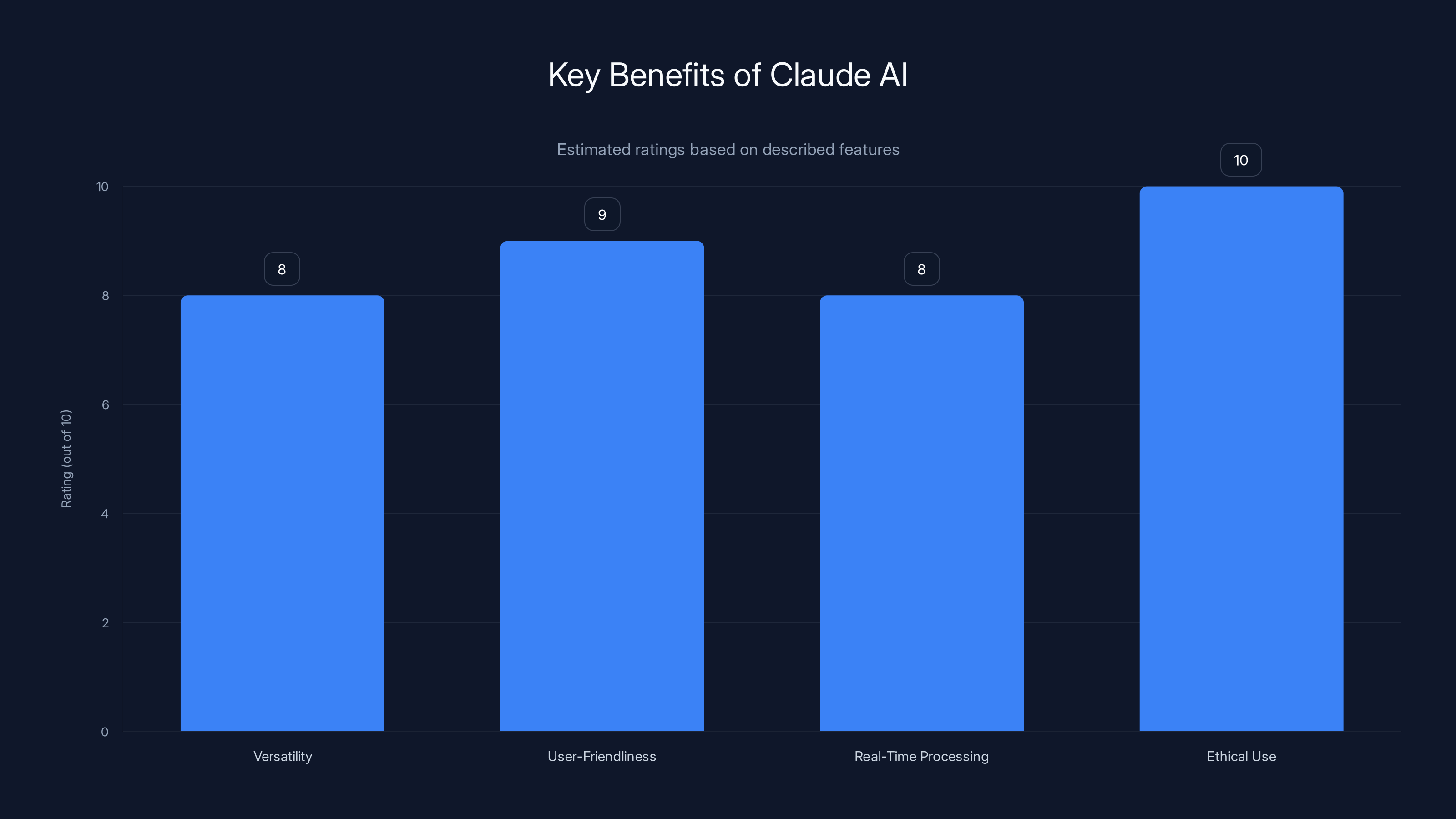

Claude AI's features are rated for their importance, with Ethical AI Design and Real-Time Data Processing being highly valued. (Estimated data)

The Anatomy of the AI Standoff

The court ruling that restored federal workers' access to Claude AI is a pivotal moment in the ongoing debate over AI regulation. To understand its full impact, we need to look at how the standoff began.

The Initial Ban

The Trump administration imposed a ban on federal access to Claude AI, developed by Anthropic. The move was justified as a preventive measure against potential national security risks, but it raised eyebrows across the tech industry. Critics called it an unwarranted intervention that stifled innovation, as reported by BBC News.

Court's Intervention

The ban was challenged in court, where a federal judge described the action as an 'Orwellian' overreach. The judge ruled that the ban was unconstitutional, citing the First Amendment and the importance of maintaining a balance between national security and technological advancement, as detailed by Pacific Legal Foundation.

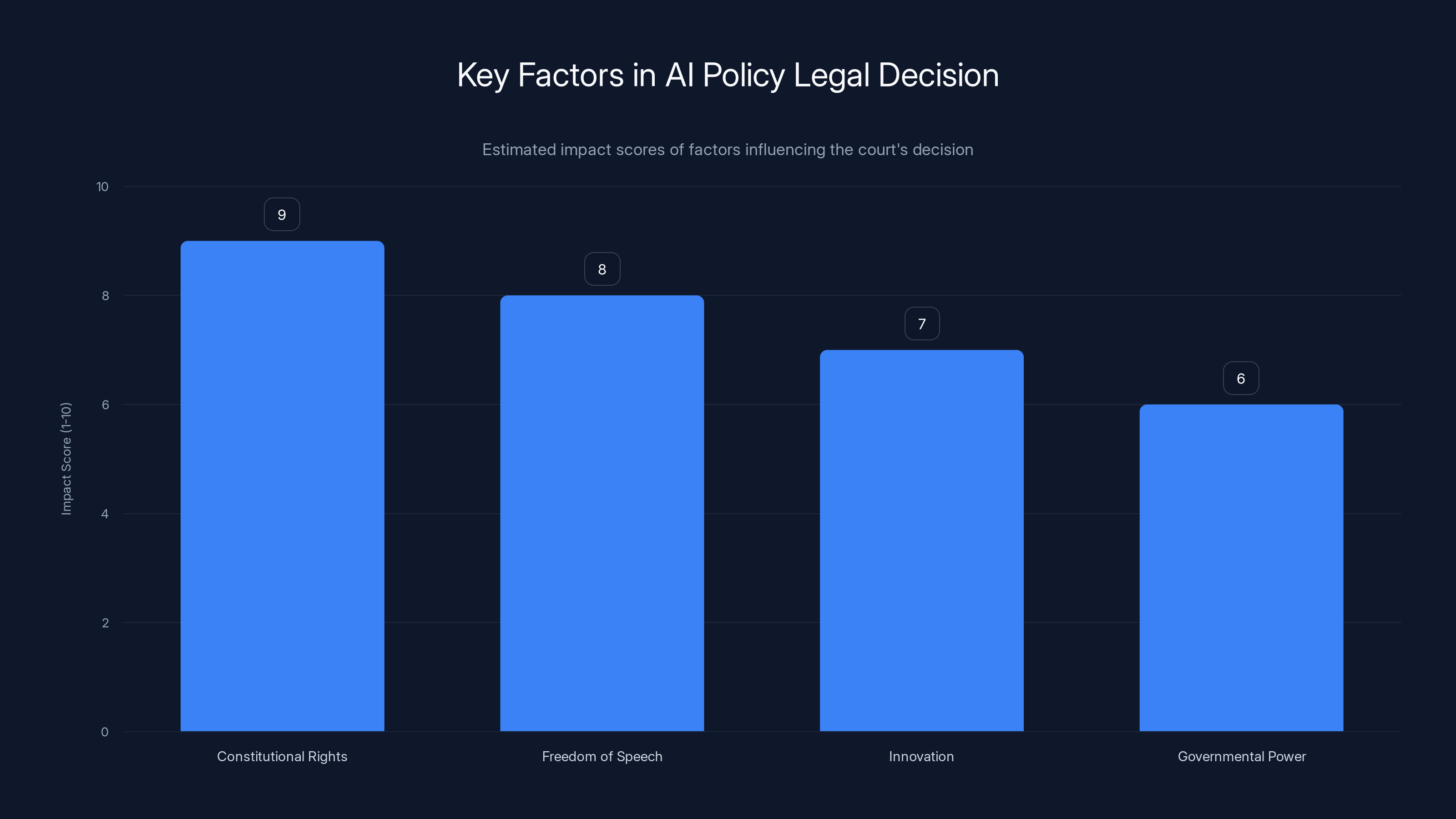

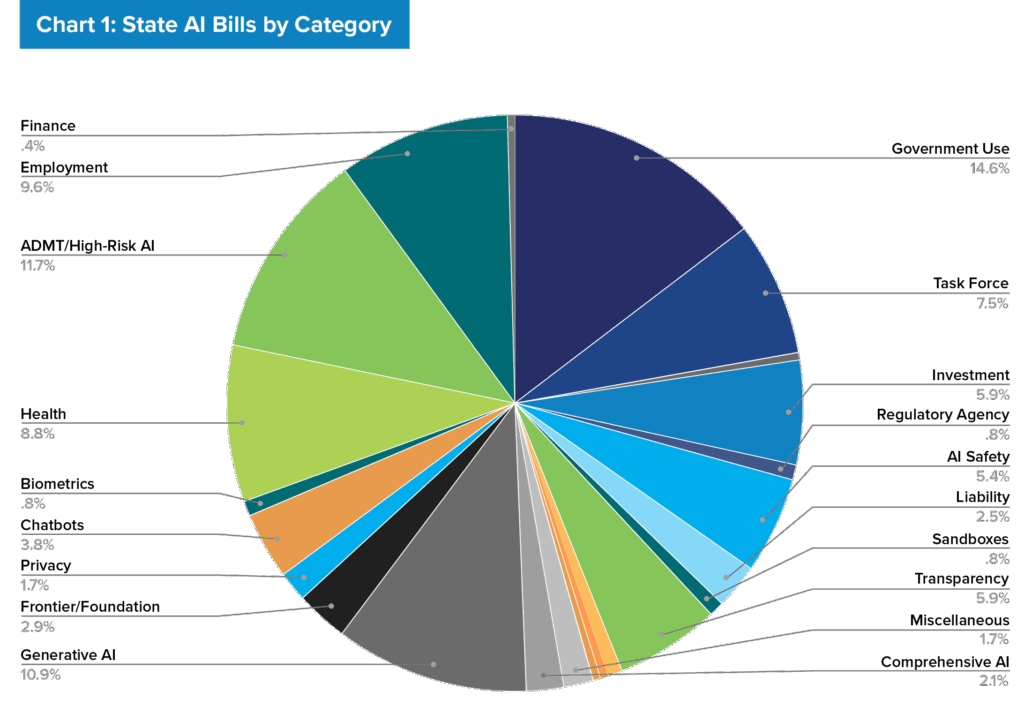

The court's decision was heavily influenced by constitutional rights and freedom of speech, with innovation and governmental power also playing significant roles. (Estimated data)

Understanding Claude AI

What is Claude AI?

Claude AI, developed by Anthropic, is an AI model designed to assist with tasks ranging from data analysis to creative writing. Anthropic places particular emphasis on safety and ethical considerations in its design.

Key Features

- Ethical AI Design: Built with an emphasis on safety to prevent misuse, as highlighted in Anthropic's Glasswing documentation.

- Versatility: Capable of performing a wide range of tasks across different sectors.

- User-Friendly Interface: Designed to be accessible even to those with minimal technical expertise.

- Real-Time Data Processing: Offers insights and analytics at unprecedented speeds, as noted by Built In.

Real-World Applications

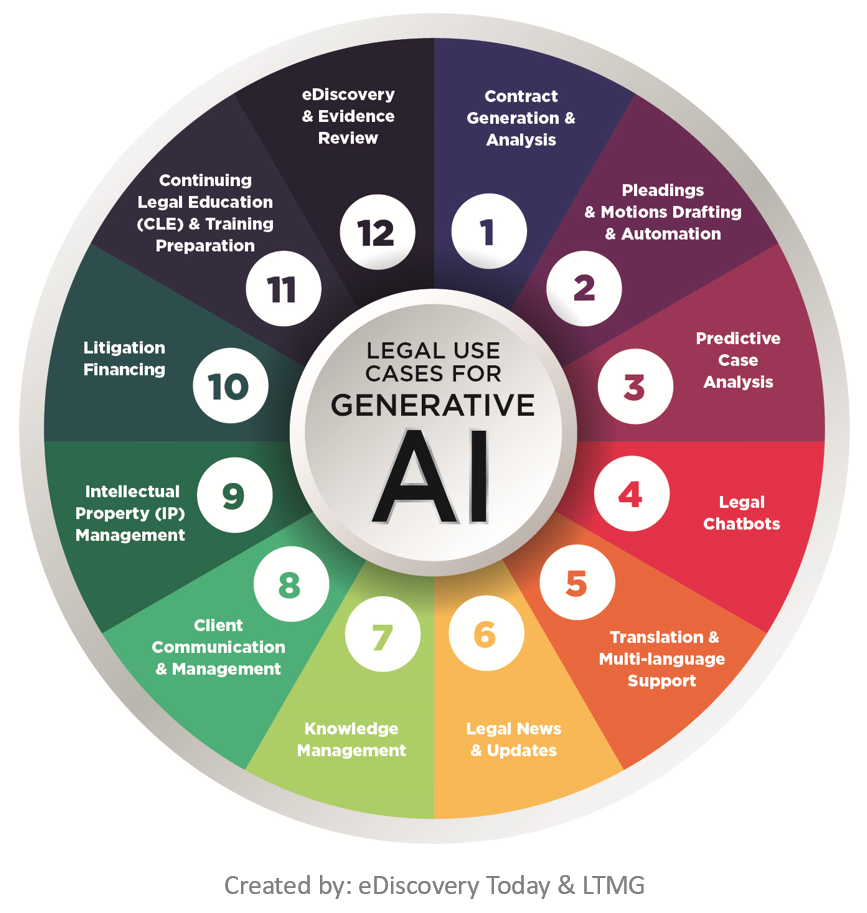

Federal agencies have utilized Claude AI for tasks such as processing large datasets and automating routine paperwork. Its ability to handle sensitive information while maintaining privacy protocols is particularly valued in government applications, as reported by The New York Times.

The Legal Battle and Its Implications

Constitutional Concerns

The court's decision to overturn the ban was based on the argument that the government's action infringed on constitutional rights. The judge emphasized the need to protect freedom of speech and innovation, highlighting the potential dangers of unchecked governmental power, as discussed by Mintz.

Impact on AI Policy

This ruling sets a significant precedent for future AI-related regulations. It underscores the necessity for clear guidelines that balance security concerns with the need to foster technological growth, as noted by inewsource.

Anthropic's Response

Following the court's decision, Anthropic has doubled down on its efforts to collaborate with government bodies. The company has proposed new frameworks for AI usage in federal agencies to ensure that both security and innovation are prioritized, as reported by Benzinga.

Claude AI excels in ethical use and user-friendliness, with strong real-time processing capabilities. Estimated data based on feature descriptions.

Future Trends in AI Regulation

Evolving Policies

The case has spotlighted the need for evolving AI policies that can adapt to the rapid pace of technological advancement. Policymakers are now tasked with crafting regulations that not only protect national interests but also promote innovation, as highlighted by Brennan Center for Justice.

International Implications

The decision has also caught the attention of international bodies, with several countries re-evaluating their own AI policies. This could lead to more unified global standards for AI regulation, as discussed by Global Affairs.

AI and National Security

As AI becomes more integral to national security strategies, the dialogue between tech companies and government entities is expected to intensify. Collaborative frameworks will be crucial in ensuring both security and technological progress, as noted by Accenture.

Practical Implementation Guides

For Federal Agencies

Federal agencies looking to integrate AI technologies like Claude should consider the following steps:

- Assess Needs and Objectives: Clearly define what you hope to achieve with AI.

- Choose the Right Model: Select AI models that align with your security and ethical requirements.

- Implement Gradually: Start with pilot projects to test the efficacy of AI solutions.

- Monitor and Evaluate: Continuously assess the impact of AI on operations and make adjustments as needed.

- Ensure Compliance: Stay updated on regulations to ensure AI usage is compliant with legal standards.
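The "Implement Gradually" and "Monitor and Evaluate" steps above can be made concrete with a simple pilot scorecard. The following is a minimal sketch, not an official procedure: the `PilotResult` fields, the `evaluate_pilot` function, and the threshold values are all hypothetical and would need to be set by each agency's own policy.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    """Outcome of one pilot task, scored by a human reviewer (hypothetical schema)."""
    task_id: str
    correct: bool          # reviewer judged the AI output acceptable
    minutes_saved: float   # reviewer's estimate versus the manual process
    compliance_flag: bool  # True if the output raised a policy or legal concern


def evaluate_pilot(results, min_accuracy=0.9, max_flag_rate=0.02):
    """Summarize a pilot and check it against assumed acceptance thresholds."""
    if not results:
        raise ValueError("no pilot results to evaluate")
    n = len(results)
    accuracy = sum(r.correct for r in results) / n
    flag_rate = sum(r.compliance_flag for r in results) / n
    return {
        "tasks": n,
        "accuracy": accuracy,
        "flag_rate": flag_rate,
        "minutes_saved": sum(r.minutes_saved for r in results),
        # Expand beyond the pilot only if both thresholds are met.
        "expand": accuracy >= min_accuracy and flag_rate <= max_flag_rate,
    }


if __name__ == "__main__":
    sample = [
        PilotResult("form-001", True, 12.0, False),
        PilotResult("form-002", True, 9.5, False),
        PilotResult("form-003", False, 0.0, False),
        PilotResult("form-004", True, 11.0, False),
    ]
    print(evaluate_pilot(sample))
```

Keeping the decision rule in one small function makes the expansion criteria explicit and easy to revisit as regulations change.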

For AI Developers

AI developers should focus on building models that are not only innovative but also secure and ethical. Key considerations include:

- Data Privacy: Implement robust measures to protect user data.

- Transparency: Clearly communicate how AI models work and make decisions.

- Collaboration: Work with regulatory bodies to ensure compliance and foster trust.
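As one illustration of the data privacy and transparency points above, text can be scrubbed of obvious identifiers before it is passed to any AI model, with a count of what was removed kept for audit. This is a minimal sketch under stated assumptions: the `PATTERNS` table and `redact` function are hypothetical, and the regular expressions only cover a few U.S.-style formats, so they are no substitute for a vetted PII inventory.

```python
import re

# Illustrative patterns only (assumed formats, not an exhaustive PII list).
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def redact(text):
    """Replace each match with a placeholder; return (clean_text, counts)."""
    counts = {}
    for label, pattern in PATTERNS.items():
        # subn returns the new string and the number of substitutions made.
        text, n = pattern.subn(f"[{label}]", text)
        counts[label] = n
    return text, counts


if __name__ == "__main__":
    clean, counts = redact(
        "Contact jane.doe@example.gov or 555-123-4567; SSN 123-45-6789."
    )
    print(clean)
    print(counts)
```

Returning the per-category counts alongside the cleaned text gives reviewers a simple record of what was withheld from the model without storing the sensitive values themselves.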

Common Pitfalls and Solutions

Pitfalls

- Overlooking Security: Neglecting security measures can lead to breaches and loss of trust.

- Lack of Transparency: Users are increasingly demanding transparency in how AI systems operate.

- Ignoring Ethical Considerations: Failing to address ethical concerns can result in backlash and regulatory issues.

Solutions

- Enhanced Security Protocols: Regularly update security measures to protect against new threats.

- User Education: Provide clear information on how AI systems work and the benefits they offer.

- Ethical AI Design: Prioritize ethical guidelines in AI development to ensure responsible usage.

Future Predictions

Rise of Ethical AI

Ethical AI will likely become a standard expectation across industries. Consumers and businesses alike will demand AI solutions that prioritize safety, privacy, and ethical considerations.

Increased Collaboration

Expect increased collaboration between AI developers and regulatory bodies to create frameworks that benefit all stakeholders.

Global Standards

As AI becomes more integral to various sectors, there will be a push towards establishing global standards for AI regulation and implementation.

Conclusion

The restoration of Claude AI access for federal workers marks a significant moment in the AI regulatory landscape. It highlights the delicate balance between innovation and security and sets the stage for future developments in AI policy. As the dialogue between technology and regulation continues, stakeholders must work collaboratively to ensure that AI’s potential is fully realized in a manner that benefits society at large.

FAQ

What is Claude AI?

Claude AI is an advanced AI platform developed by Anthropic, designed to perform a wide range of tasks with a focus on safety and ethical considerations.

How does Claude AI work?

Claude AI is a large language model: it is trained on large amounts of text and generates responses to the prompts it receives, with a strong emphasis on privacy and ethical use.

What are the benefits of Claude AI?

Claude AI offers versatility, user-friendliness, and real-time data processing capabilities, making it ideal for both government and commercial applications.

How can federal agencies implement AI like Claude?

Agencies should assess their needs, choose the right AI model, start with pilot projects, and ensure compliance with regulations.

What are the implications of the court ruling on AI policy?

The ruling underscores the need for balanced AI policies that protect national security while fostering innovation.

What trends can we expect in AI regulation?

Expect a rise in ethical AI, increased collaboration between developers and regulators, and the establishment of global standards.

Key Takeaways

- Federal workers regain Claude AI access after court ruling.

- Court rules the ban unconstitutional, citing freedom of speech.

- Significant implications for future AI policy and regulation.

- Anthropic to collaborate with government on AI frameworks.

- Increased focus on ethical AI and global regulatory standards.

![How Federal Workers Regained Claude Access: The AI Standoff Unpacked [2025]](https://tryrunable.com/blog/how-federal-workers-regained-claude-access-the-ai-standoff-u/image-1-1775772241563.jpg)