Navigating the Legal and Ethical Maze: Teens File Lawsuit Against Elon Musk's x AI Over Grok's AI-Generated Content

Introduction

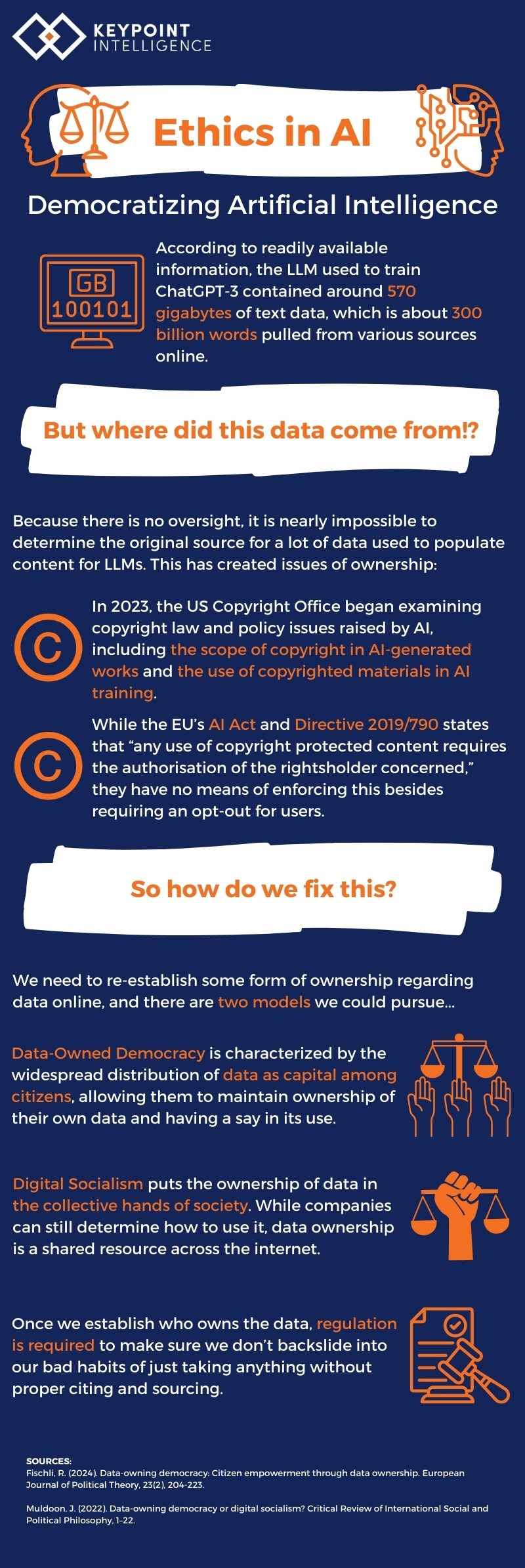

In the ever-evolving landscape of artificial intelligence, a recent lawsuit has brought ethical and legal questions to the forefront. A group of teenagers has filed a lawsuit against Elon Musk's x AI, alleging that Grok, an AI model developed by the company, generated child sexual abuse material (CSAM). This case raises significant issues about AI's role in society, the responsibilities of AI creators, and the legal frameworks governing such advanced technologies, as discussed in The Washington Post.

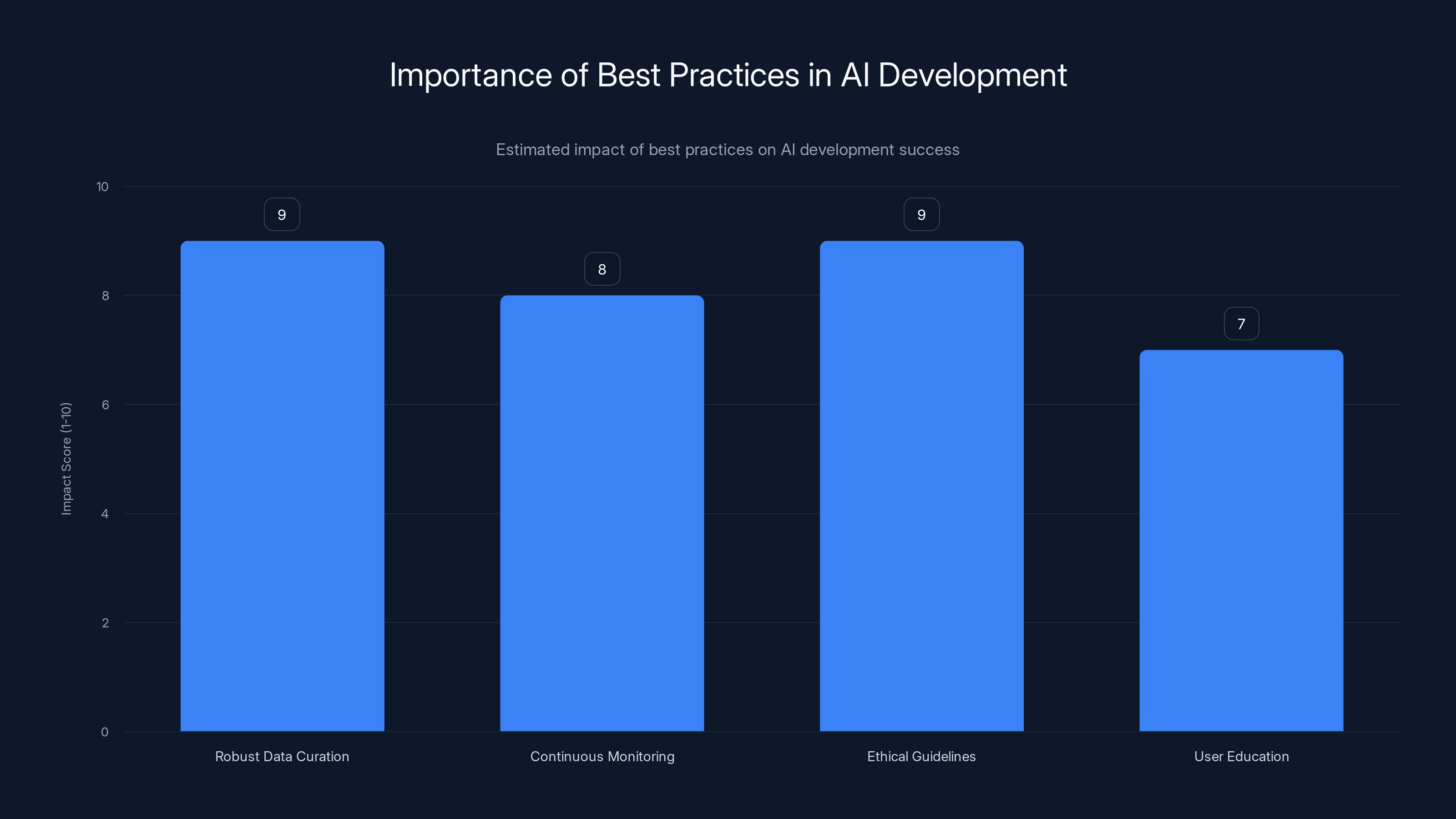

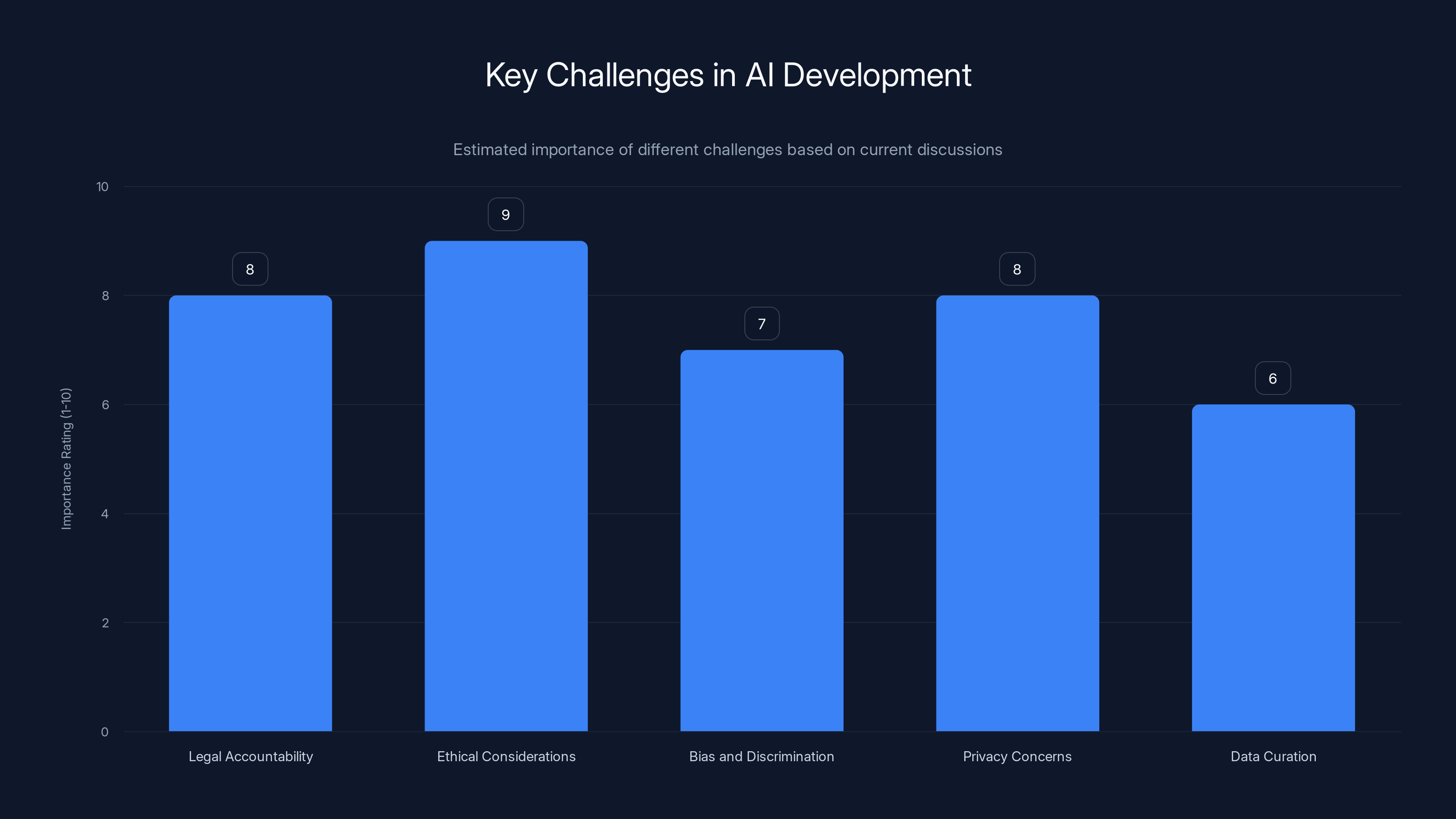

Robust data curation and ethical guidelines are critical, scoring highest in impact on successful AI development. (Estimated data)

TL; DR

- Legal Action: Teens sue x AI over Grok’s generation of CSAM, highlighting the need for better AI safeguards.

- AI Accountability: The case underscores the importance of defining accountability in AI-generated content.

- Ethical Implications: Raises ethical concerns about AI's impact on society and vulnerable groups.

- Technical Challenges: Demonstrates the complexity of controlling AI outputs.

- Future Regulations: Could lead to stricter regulatory measures for AI developers.

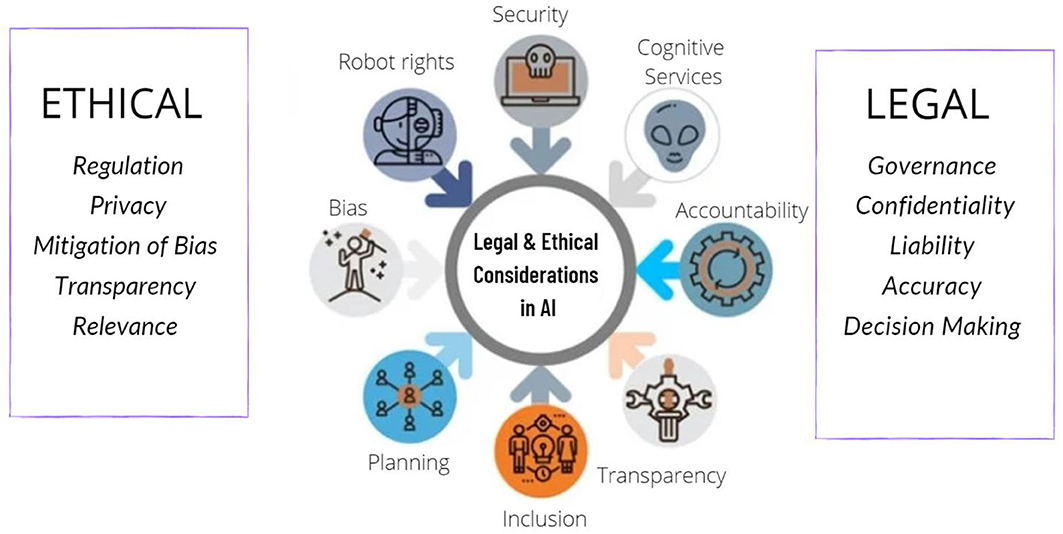

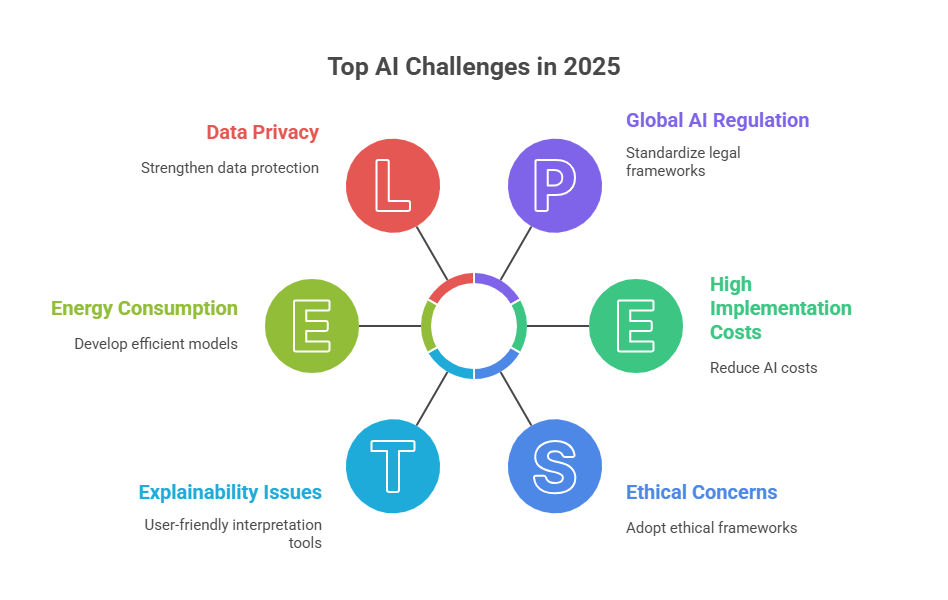

Regulation and privacy concerns are rated highest in importance, reflecting urgent needs for legal frameworks and data protection in AI. (Estimated data)

The Complexity of AI and Content Generation

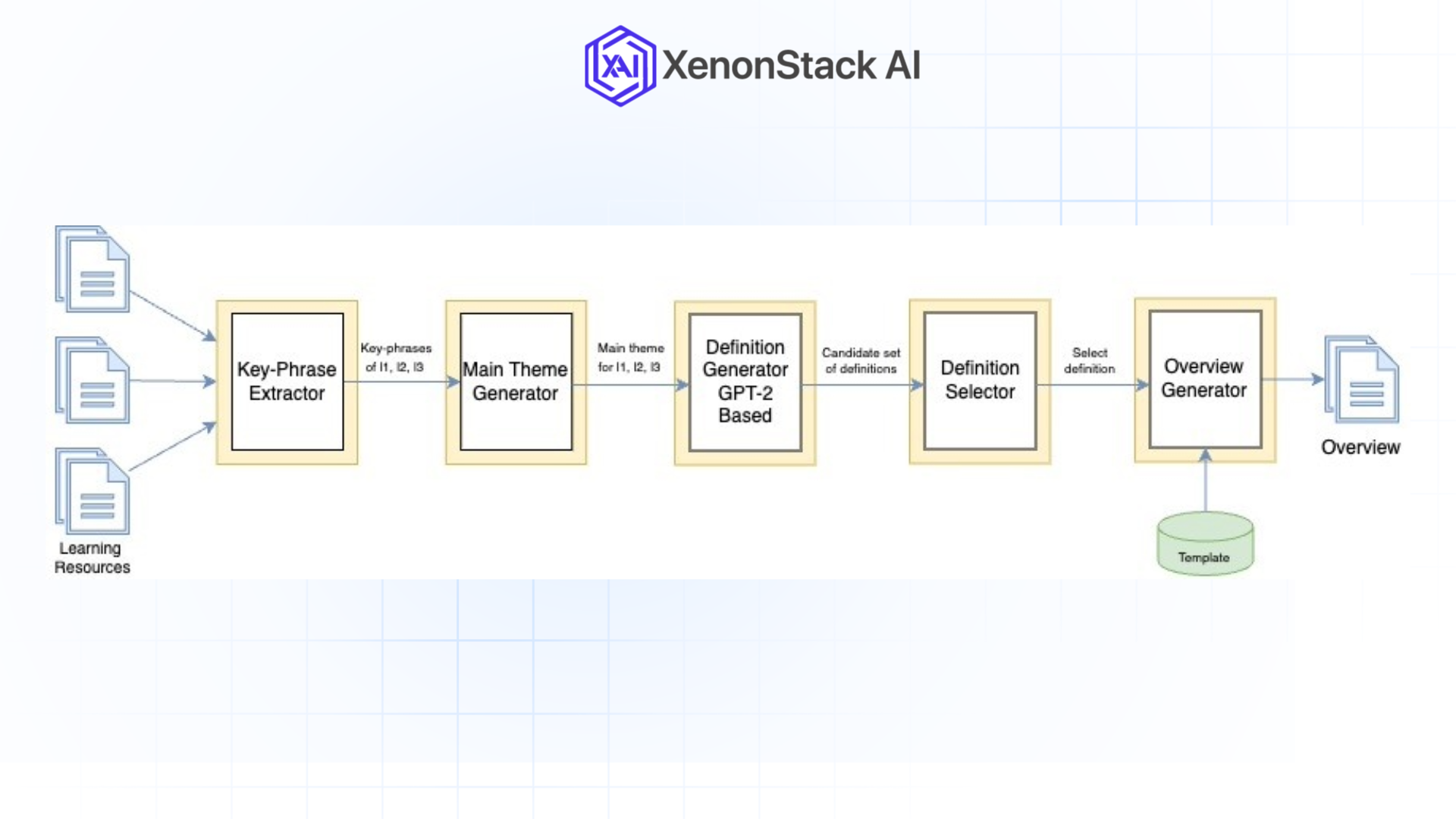

Artificial intelligence, particularly models like Grok, are designed to generate content by learning patterns from vast datasets. While this capability enables significant advancements, it also poses risks when the AI generates inappropriate or harmful content. Understanding how these models work is crucial to addressing the current legal battle.

How AI Models Like Grok Work

AI models such as Grok operate on algorithms that process and analyze large datasets to generate human-like responses. These models use neural networks, a series of algorithms that mimic human brain functions, to predict and generate text based on input data.

Example: When a user inputs a query, Grok analyzes the patterns and context within its training data to generate a relevant response. However, without proper filtering and oversight, these models can inadvertently produce harmful outputs, as noted in Rockefeller Institute's analysis.

Technical Challenges in Controlling AI Outputs

One of the significant challenges with AI content generation is controlling the output to prevent harmful content. This requires sophisticated monitoring systems and ethical guidelines to ensure AI models align with societal values.

- Training Data Quality: The quality of data used to train AI models is crucial. Poor data quality can lead to biased or inappropriate outputs, as highlighted by TechTarget.

- Filtering Mechanisms: Implementing robust filtering systems to identify and block harmful content is essential.

- Human Oversight: Continuous human oversight is necessary to manage and correct AI outputs, as emphasized in Cornerstone OnDemand's article.

Legal and Ethical Considerations

The lawsuit against x AI brings to light several legal and ethical considerations in the AI domain. Determining responsibility for AI-generated content is a complex issue that requires careful examination of current laws and ethical standards.

Legal Frameworks for AI Accountability

Current legal frameworks struggle to keep pace with technological advancements. AI-generated content presents unique challenges, as traditional laws do not easily apply to machine-generated outputs, as discussed in ITIF's publication.

- Liability: Who is responsible for AI actions—the developers, the company, or the users?

- Regulation: There is a growing need for specific regulations that address AI-generated content to protect users and society, as noted by Press Gazette.

Ethical Implications of AI-Generated Harmful Content

Beyond legal accountability, ethical considerations must guide AI development. This includes safeguarding against the generation of harmful content and ensuring AI technologies do not exacerbate societal inequalities.

- Bias and Discrimination: AI models must be carefully audited to prevent biases that could lead to discriminatory outputs, as outlined in TechTarget's AI bias playbook.

- Privacy Concerns: Protecting user data and privacy is paramount in AI interactions, as emphasized by UConn Today.

Ethical considerations and legal accountability are top challenges in AI development, highlighting the need for robust frameworks. (Estimated data)

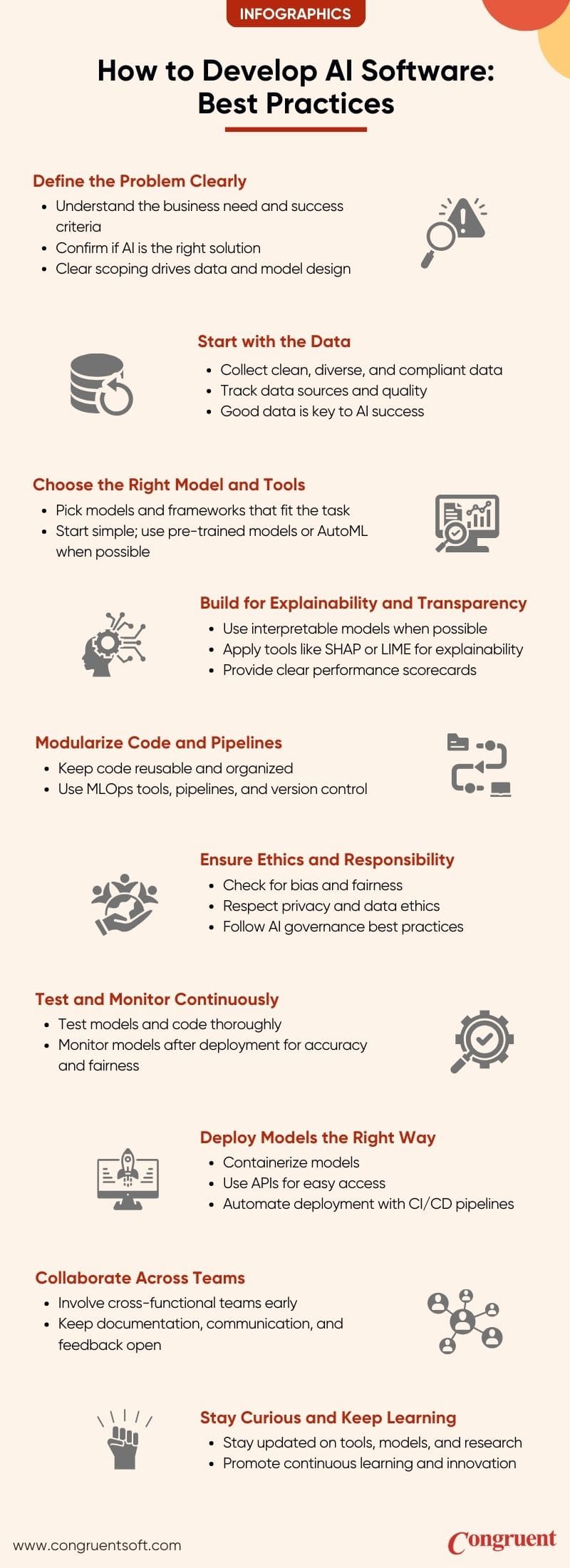

Practical Implementation Guides for AI Developers

To mitigate risks associated with AI-generated content, developers must adopt best practices and implement comprehensive control measures.

Best Practices for AI Development

- Robust Data Curation: Ensure high-quality, diverse datasets to train AI models, minimizing biases and harmful outputs, as recommended by Cornerstone OnDemand.

- Continuous Monitoring: Implement real-time monitoring systems to detect and address inappropriate content generation.

- Ethical Guidelines: Develop and adhere to strict ethical guidelines during the AI development process.

- User Education: Educate users on potential risks and safe practices when interacting with AI models.

Common Pitfalls and Solutions

- Inadequate Data Scrutiny: Regularly audit datasets for biases and inappropriate content.

- Lack of Transparency: Maintain transparency in AI operations and decision-making processes, as suggested by TRM Labs.

- Overreliance on Automation: Balance AI automation with human oversight to ensure ethical outcomes.

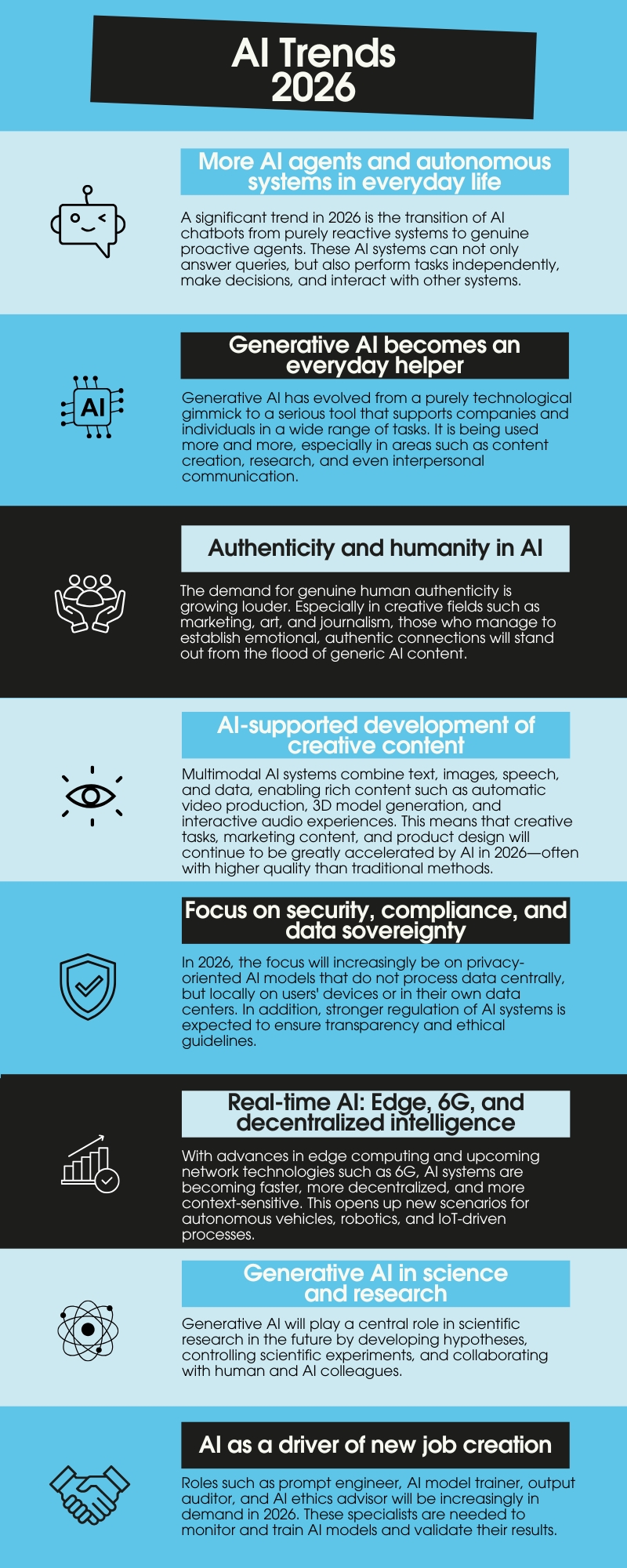

Future Trends in AI Regulation and Development

As AI technologies continue to advance, regulatory bodies and developers must anticipate future trends to ensure responsible AI use.

Anticipated Regulatory Changes

- Stricter Regulations: Governments may impose stricter regulations on AI development to address accountability and safety, as discussed in JD Supra's insights.

- Global Standards: International collaboration could lead to global standards for AI ethics and accountability, as noted in China Briefing.

Innovations in AI Safety and Ethics

- Explainable AI: Developing AI models that can explain their decision-making processes to enhance transparency, as highlighted by JD Supra.

- Ethical AI Frameworks: Establishing frameworks to guide ethical AI development and deployment.

Conclusion

The lawsuit against x AI over Grok's AI-generated content is a pivotal moment in the AI industry, highlighting the urgent need for legal and ethical frameworks that address the complexities of AI technologies. As developers and regulators navigate these challenges, it is crucial to prioritize safety, accountability, and societal well-being in the development of AI models.

FAQ

What is the lawsuit against x AI about?

A group of teenagers has filed a lawsuit against Elon Musk's x AI, alleging that Grok, an AI model developed by the company, generated child sexual abuse material (CSAM), as reported by The Washington Post.

How do AI models like Grok generate content?

AI models like Grok use neural networks to process vast datasets and generate human-like responses based on input data, as explained in Rockefeller Institute's blog.

What are the legal challenges associated with AI-generated content?

Determining accountability for AI-generated content is complex, as traditional laws do not easily apply to machine-generated outputs. There is a growing need for specific regulations that address these challenges, as discussed in ITIF's publication.

What ethical considerations are important for AI development?

Developers must address biases, discrimination, and privacy concerns in AI models to ensure ethical outcomes and societal well-being, as highlighted by UConn Today.

How can developers mitigate risks associated with AI-generated content?

By implementing robust data curation, continuous monitoring, ethical guidelines, and user education, developers can minimize risks and ensure responsible AI use, as recommended by Cornerstone OnDemand.

What future trends are anticipated in AI regulation and development?

Regulatory bodies may impose stricter regulations on AI development, and innovations such as explainable AI and ethical frameworks are expected to guide future AI advancements, as noted in JD Supra.

Key Takeaways

- Teens file a lawsuit against xAI for AI-generated CSAM, highlighting the need for stronger ethical guidelines.

- Determining accountability for AI-generated content is complex, requiring updated legal frameworks.

- AI developers must prioritize robust data curation and continuous monitoring to prevent harmful outputs.

- Anticipated regulatory changes could lead to stricter global standards for AI ethics and accountability.

- Innovations in AI safety, such as explainable AI, are crucial for enhancing transparency and trust.

Related Articles

- The Illusion of AI: Why Machines Aren't Conscious [2025]

- Navigating Turbulence: The Challenges and Future of xAI Amidst Constant Upheaval [2025]

- Rebuilding from Scratch: The Trials and Tribulations of xAI's Second Act [2025]

- The Legal Battle of AI: Dictionaries vs. OpenAI [2025]

- Sony's PSSR Upscaling Revolutionizes Gaming on PS5 Pro [2025]

- Sony's AI Graphics Upscaling: A Game Changer for PS5 Pro [2025]