OpenAI and Google Support Anthropic in High-Stakes Legal Battle Against US Government [2025]

Last month, a seemingly routine legal filing caught the attention of the tech industry. More than 30 employees from OpenAI and Google, including prominent figures like Google DeepMind chief scientist Jeff Dean, filed an amicus brief in support of Anthropic. This move was not just a gesture of solidarity; it marked a pivotal moment in the ongoing tension between AI companies and government oversight.

TL;DR

- Anthropic faces a supply-chain risk designation from the US Department of Defense, potentially impacting AI industry competitiveness.

- OpenAI and Google employees filed an amicus brief supporting Anthropic's legal stance.

- AI development and US industrial competitiveness are at stake.

- Legal complexities involve supply-chain risk designations affecting military contracts.

- Long-term implications for AI governance and international relations are significant.

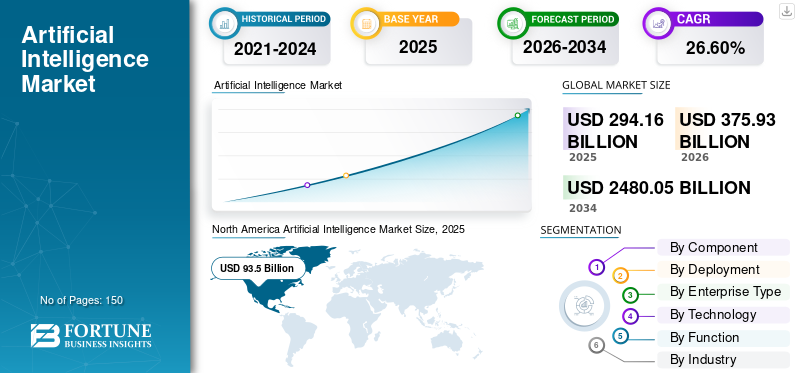

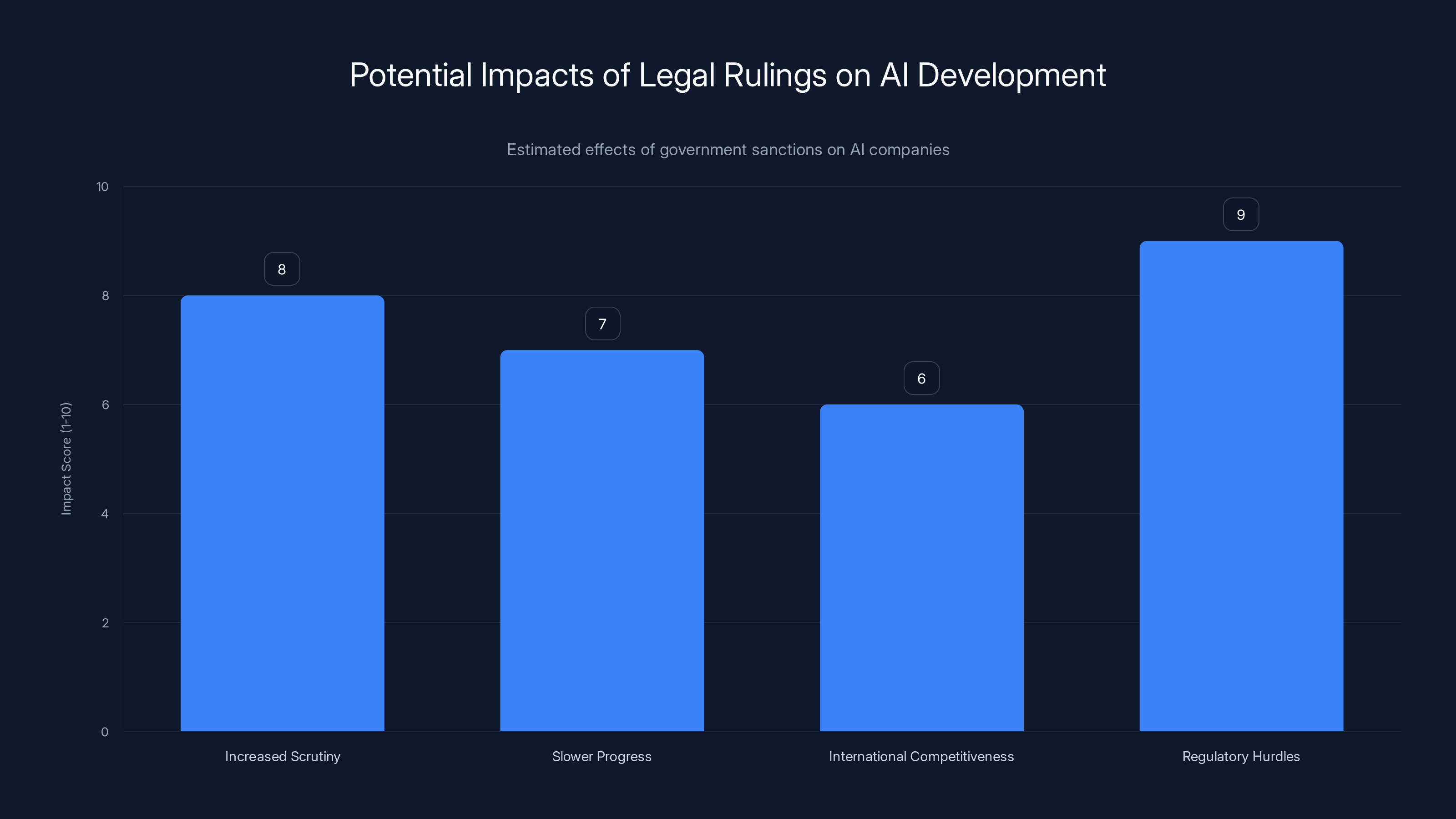

Regulatory actions could significantly impact AI companies' competitiveness, innovation, and growth potential, with increased regulatory scrutiny being a major concern. Estimated data based on FAQ insights.

The Context: Anthropic's Legal Challenge

Anthropic, an AI startup, recently found itself in a legal battle with the US government. The Department of Defense (DoD) designated the company a “supply-chain risk,” a decision that restricts its ability to engage with military contractors. The designation came after negotiations between Anthropic and the Pentagon failed. In response, Anthropic filed a lawsuit seeking a temporary restraining order that would let it continue operating without the imposed limitations.

What Is a Supply-Chain Risk Designation?

A supply-chain risk designation is a label that can significantly hinder a company’s ability to function in certain sectors, particularly those involving government contracts. This label is typically reserved for entities that the government believes could pose a risk to national security due to vulnerabilities in their supply chains.

Why Anthropic?

Anthropic’s designation as a supply-chain risk is particularly contentious given the company’s focus on creating safe and reliable AI systems. The startup, founded by former OpenAI employees, has been vocal about its commitment to ethical AI development. The designation, therefore, raises questions about the criteria used by the DoD and the potential implications for tech companies striving to maintain ethical standards.

Estimated data suggests that regulatory hurdles and increased scrutiny could significantly impact AI development, potentially affecting international competitiveness.

The Amicus Brief: A Stand for AI Competitiveness

The amicus brief filed by OpenAI and Google employees argues that penalizing Anthropic could undermine the United States' competitive edge in the AI sector. The brief suggests that actions against leading AI companies could stifle innovation, limiting the country’s technological advancements and its position in the global AI landscape.

Key Arguments in the Brief

- Industrial Competitiveness: The brief emphasizes the importance of maintaining a strong domestic AI industry to ensure the US remains competitive globally.

- Scientific Progress: The brief argues that without leading AI companies like Anthropic, scientific progress in AI could slow, affecting the many sectors that rely on cutting-edge technology.

- Innovation Stifling: The brief warns that punitive measures against AI firms could deter innovation, as companies may become wary of government interactions.

Implications of the Legal Battle

The legal battle between Anthropic and the US government holds significant implications for the AI sector and beyond. At the heart of the dispute is a broader conversation about how AI companies interact with government entities and the role of regulation in fostering or hindering innovation.

Impact on AI Development

Should the court side with the government, it could set a precedent that impacts how AI companies operate within the US. Companies might face increased scrutiny and barriers when entering agreements with government agencies, potentially slowing down technological progress.

International Competitiveness

The US has long been a leader in AI development, but this position is not guaranteed. Other countries, notably China, are investing heavily in AI, and any hindrance to US companies could shift the balance of power. If US firms find themselves restricted, they may lose ground to international competitors.

Regulatory Environment

The case also highlights the complex regulatory environment that AI companies navigate. While regulations are designed to protect national interests, they can also create significant hurdles for companies that need to adapt quickly to market changes and technological advancements.

Security investment is the top priority for AI companies, followed by developing a robust compliance framework. Estimated data.

Practical Implementation Guides for AI Companies

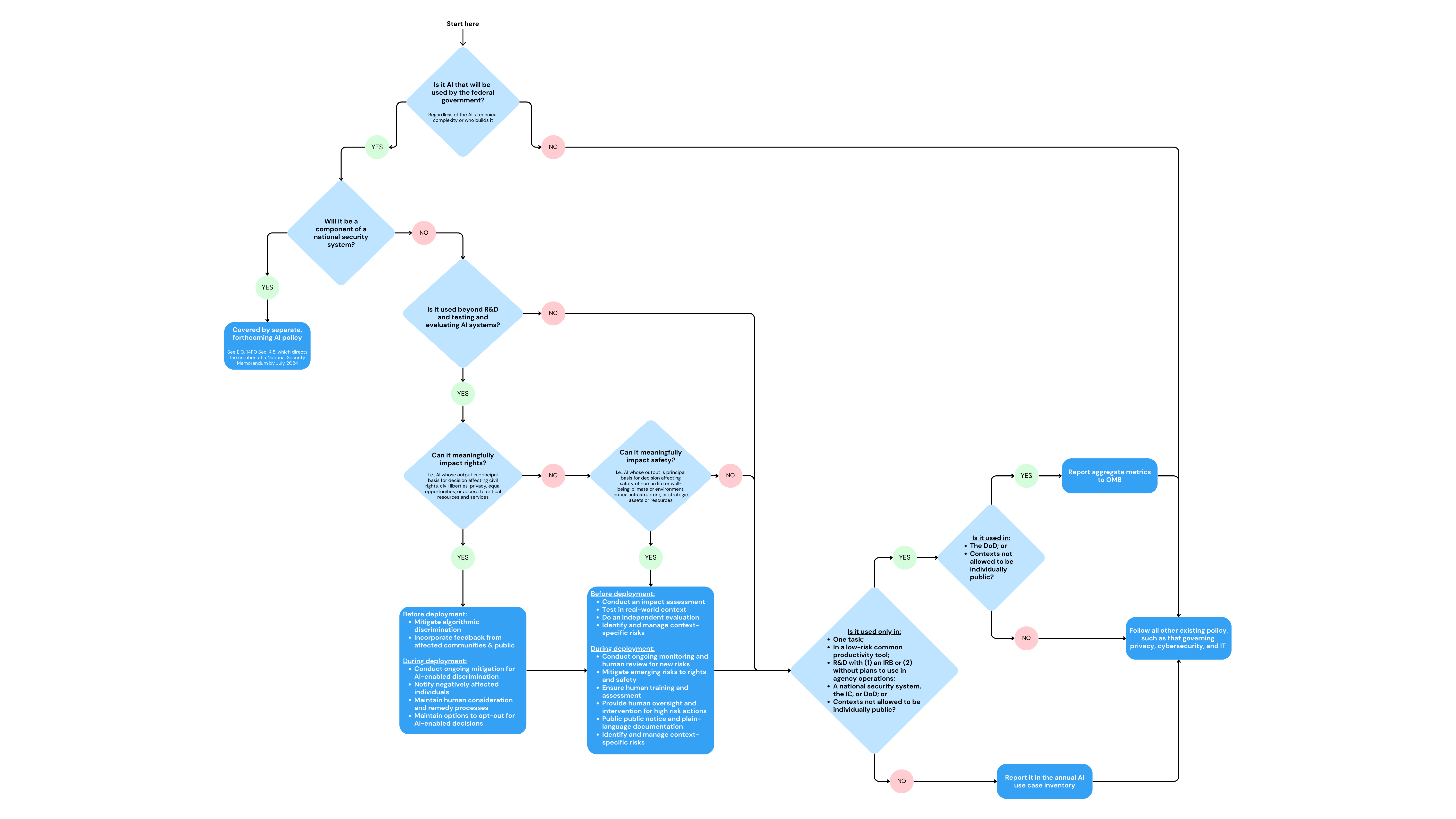

For AI companies navigating this complex landscape, understanding the intricacies of government relations is crucial. Here are some practical steps and best practices to consider:

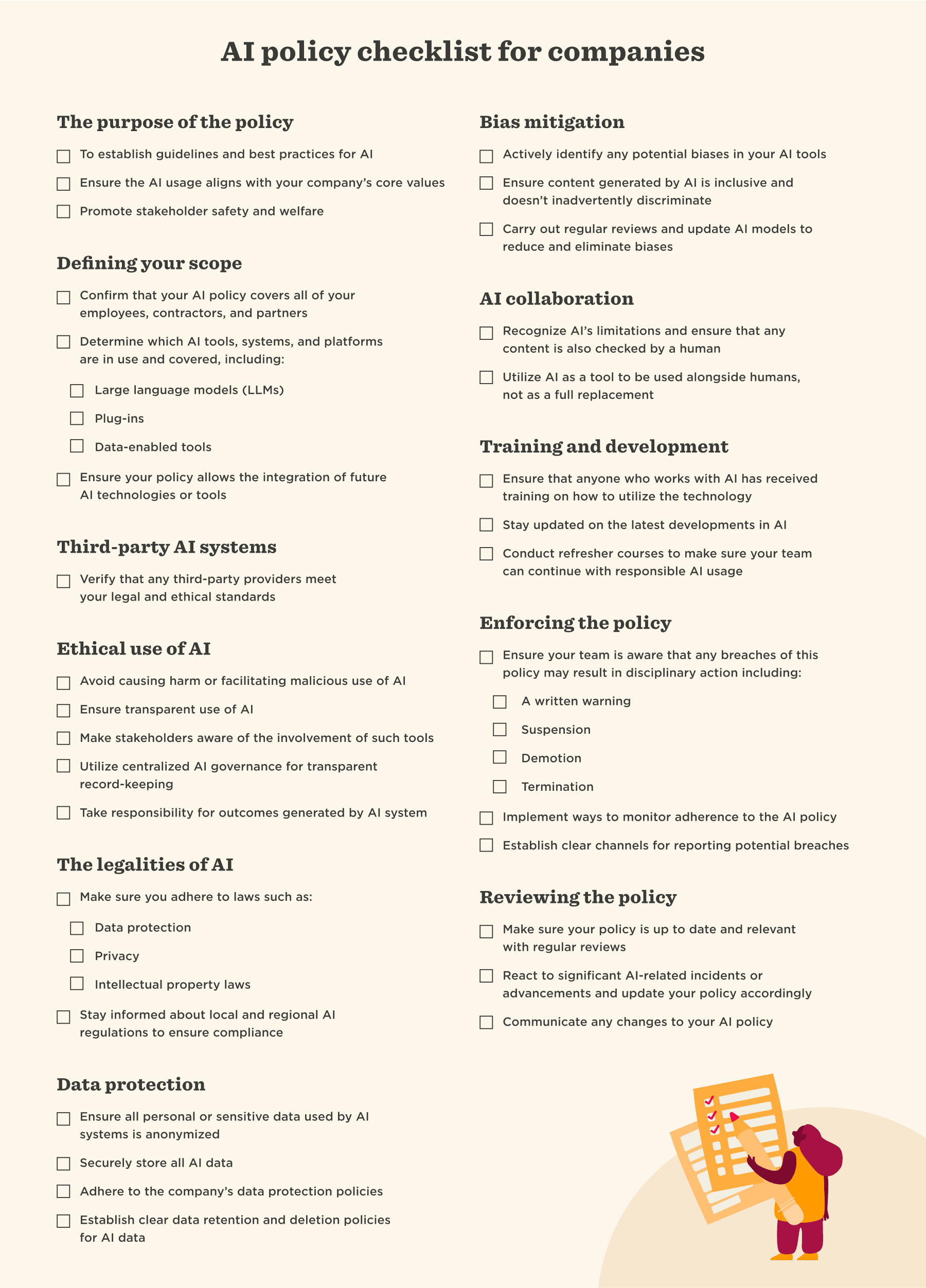

- Develop a Robust Compliance Framework: Ensure your company is fully compliant with existing regulations to avoid potential conflicts with government agencies.

- Engage with Policymakers: Maintain open lines of communication with policymakers to understand their concerns and work collaboratively on solutions.

- Invest in Security: Prioritize cybersecurity and supply-chain integrity to mitigate risks that could lead to government scrutiny.

- Document Ethical Practices: Clearly document your company’s ethical practices and commitment to safe AI development to build trust with stakeholders.

Common Pitfalls and Solutions

Navigating government relations can be fraught with challenges. Here are some common pitfalls and solutions for AI companies:

Pitfall: Lack of Transparency

Solution: Transparency is key in building trust with government agencies. Regularly update stakeholders on company practices and any changes to operations or policies.

Pitfall: Reactive Approach

Solution: Adopt a proactive approach by anticipating regulatory changes and preparing for potential impacts on your business.

Pitfall: Insufficient Risk Assessment

Solution: Conduct thorough risk assessments of your supply chain and operations to identify vulnerabilities that could attract government scrutiny.

Future Trends and Recommendations

As the AI industry continues to evolve, companies must stay ahead of regulatory trends and adapt to changing landscapes. Here are some predictions and recommendations:

Increasing Government Involvement

Governments worldwide are likely to increase their involvement in AI regulation as the technology becomes more pervasive. Companies should prepare for more stringent oversight and be ready to adapt.

Ethical AI as a Differentiator

As regulations tighten, companies that prioritize ethical AI practices will stand out. Building a reputation for ethical AI can serve as a competitive advantage.

Collaboration Is Key

Collaboration between AI companies and government entities will be crucial in shaping regulations that protect national interests without stifling innovation.

Conclusion

The ongoing legal battle between Anthropic and the US government is more than just a corporate dispute; it is a pivotal moment for the AI industry. The outcome of this case could shape the future of AI development, influence international competitiveness, and redefine the relationship between tech companies and government entities. As AI continues to transform industries, maintaining a balance between regulation and innovation will be essential.

FAQ

What is the significance of the amicus brief filed by OpenAI and Google employees?

The amicus brief underscores the potential impact of government actions on the US's competitiveness in the AI sector. It highlights concerns that punitive measures against AI companies could stifle innovation and reduce the US's technological edge.

How does a supply-chain risk designation affect AI companies?

A supply-chain risk designation can severely limit a company's ability to engage with government contracts, impacting its operations and growth potential. It can also signal to other companies that the designated entity poses security risks.

What are the potential consequences of the legal battle for Anthropic?

The outcome could set a precedent affecting how AI companies interact with government agencies. A ruling against Anthropic might lead to increased regulatory scrutiny and barriers, while a ruling in its favor could affirm the importance of innovation-friendly policies.

How can AI companies prepare for regulatory changes?

AI companies should develop robust compliance frameworks, engage with policymakers, invest in security, and document ethical practices to navigate regulatory changes effectively.

What future trends should AI companies be aware of?

AI companies should anticipate increased government involvement in regulation, recognize the value of ethical AI as a differentiator, and prioritize collaboration with government entities to shape favorable policies.

Key Takeaways

- OpenAI and Google employees filed an amicus brief supporting Anthropic in its challenge to the US government's supply-chain risk designation.

- The legal battle could impact US AI industry competitiveness and innovation.

- A supply-chain risk designation can significantly hinder a company's operations and growth.

- AI companies should develop compliance frameworks and engage with policymakers.

- Future trends include increased government involvement and the importance of ethical AI.

![OpenAI and Google Support Anthropic in High-Stakes Legal Battle Against US Government [2025]](https://tryrunable.com/blog/openai-and-google-support-anthropic-in-high-stakes-legal-bat/image-1-1773090379927.jpg)