Introduction

Anthropic's recent lawsuit against the U.S. Department of Defense (DoD) marks a significant moment at the intersection of artificial intelligence, ethics, and national security. The conflict arises from the DoD's designation of Anthropic as a supply chain risk, a decision that could have far-reaching implications for AI companies and their collaborations with the military. Wired describes the lawsuit as a pivotal moment for the AI industry.

TL;DR

- Anthropic's lawsuit challenges the DoD's supply chain risk designation, as detailed in Mayer Brown's publication.

- Central issues include AI ethics and national security concerns, as highlighted by Fast Company.

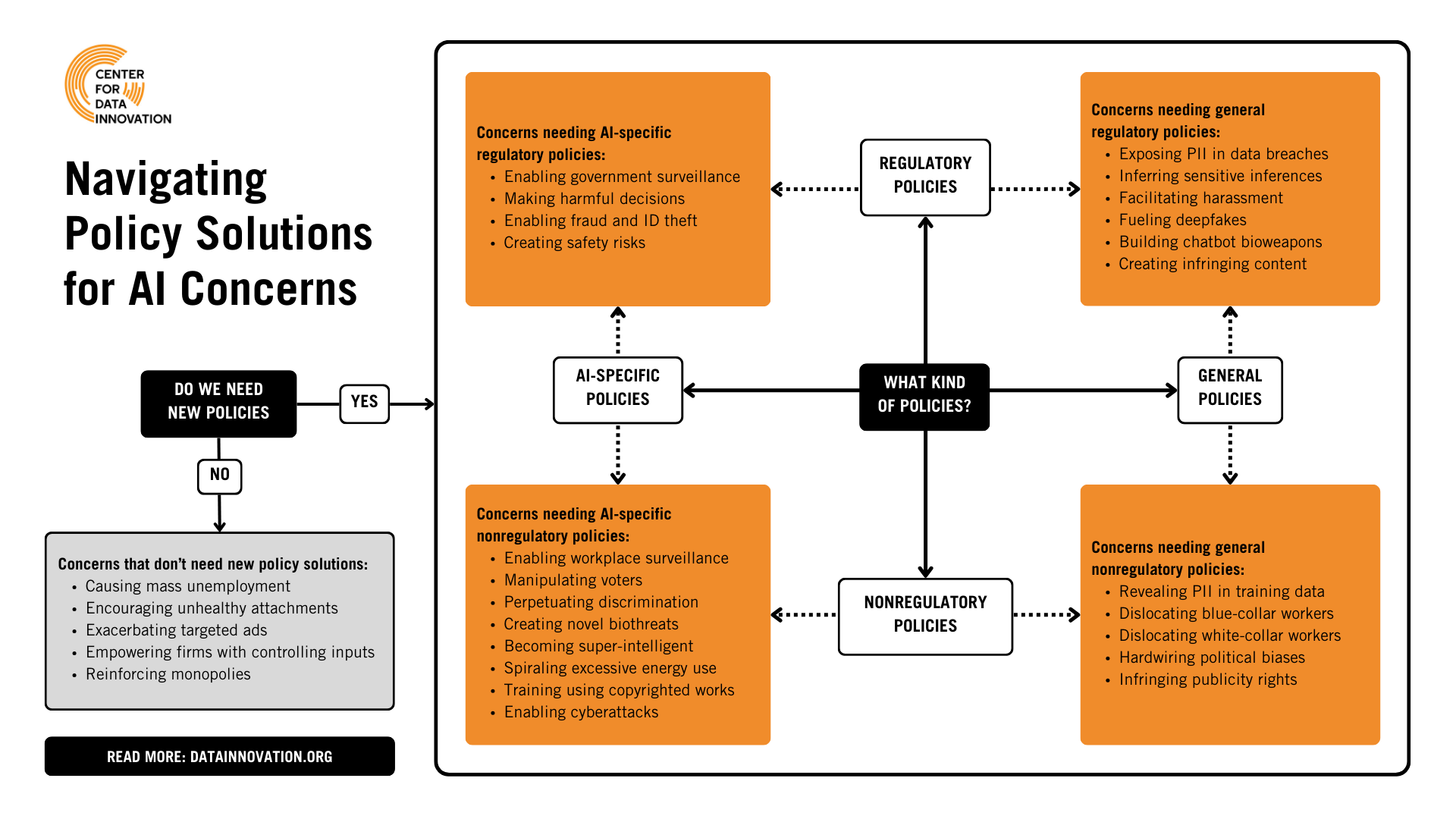

- Implications for AI focus on regulation and ethical guidelines, which are increasingly important according to American Progress.

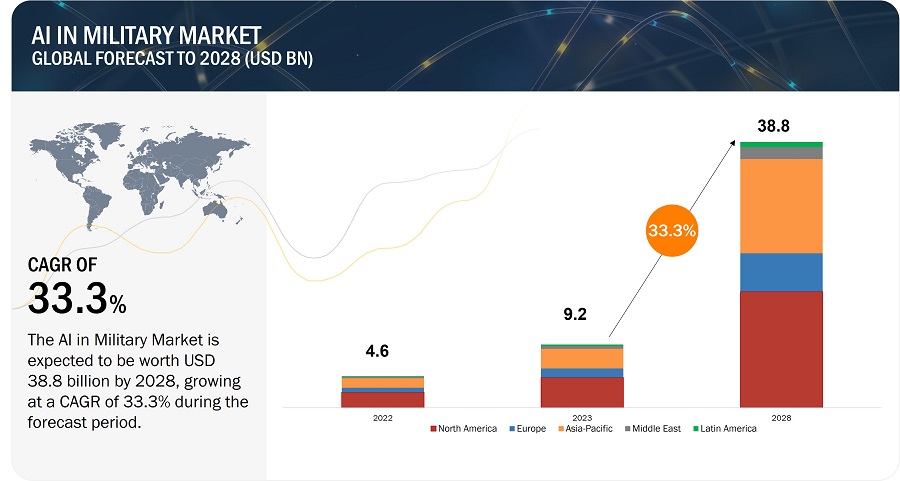

- Future trends suggest increased scrutiny of AI in defense, as reported by The Washington Post.

- Bottom Line: This case may redefine AI's role in military applications, as discussed by The New York Times.

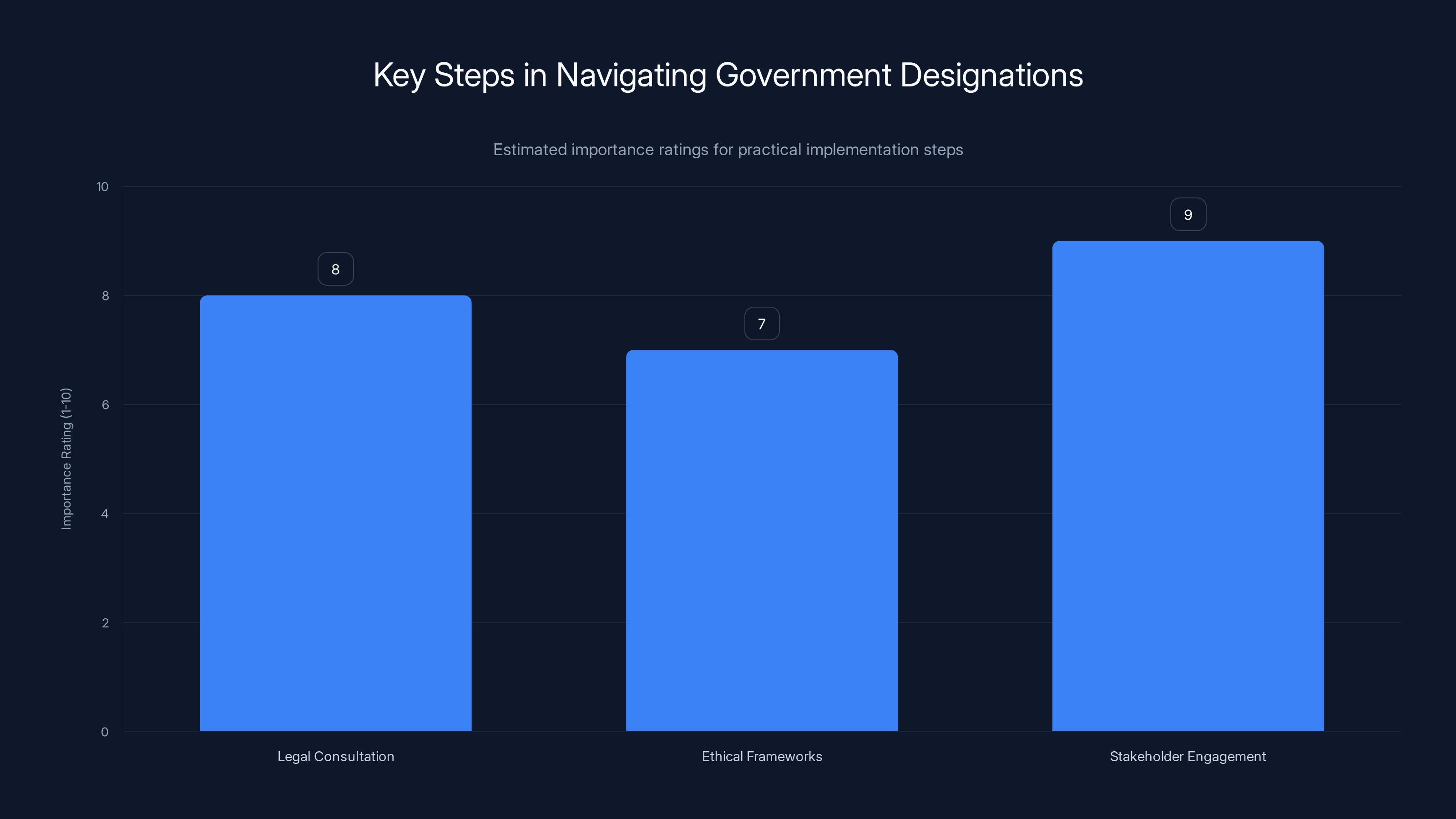

Stakeholder engagement is rated as the most crucial step in navigating government designations, followed closely by legal consultation. Estimated data.

Background of the Conflict

The tension between Anthropic and the DoD began when the latter labeled Anthropic's AI systems as a potential supply chain risk. This designation implies that the military views Anthropic's technology as potentially vulnerable or unsuitable for integration into defense operations. Lawfare Media provides an analysis of the legal challenges this designation presents.

The Core Issues

- AI Autonomy and Ethics: Anthropic has been vocal about its stance against using AI for autonomous weapons and mass surveillance. These ethical considerations are central to the conflict, as noted by Microsoft's security blog.

- National Security: The DoD argues that access to advanced AI systems is crucial for maintaining national security, especially in the face of evolving global threats, as discussed in OpenAI's agreement with the Department of War.

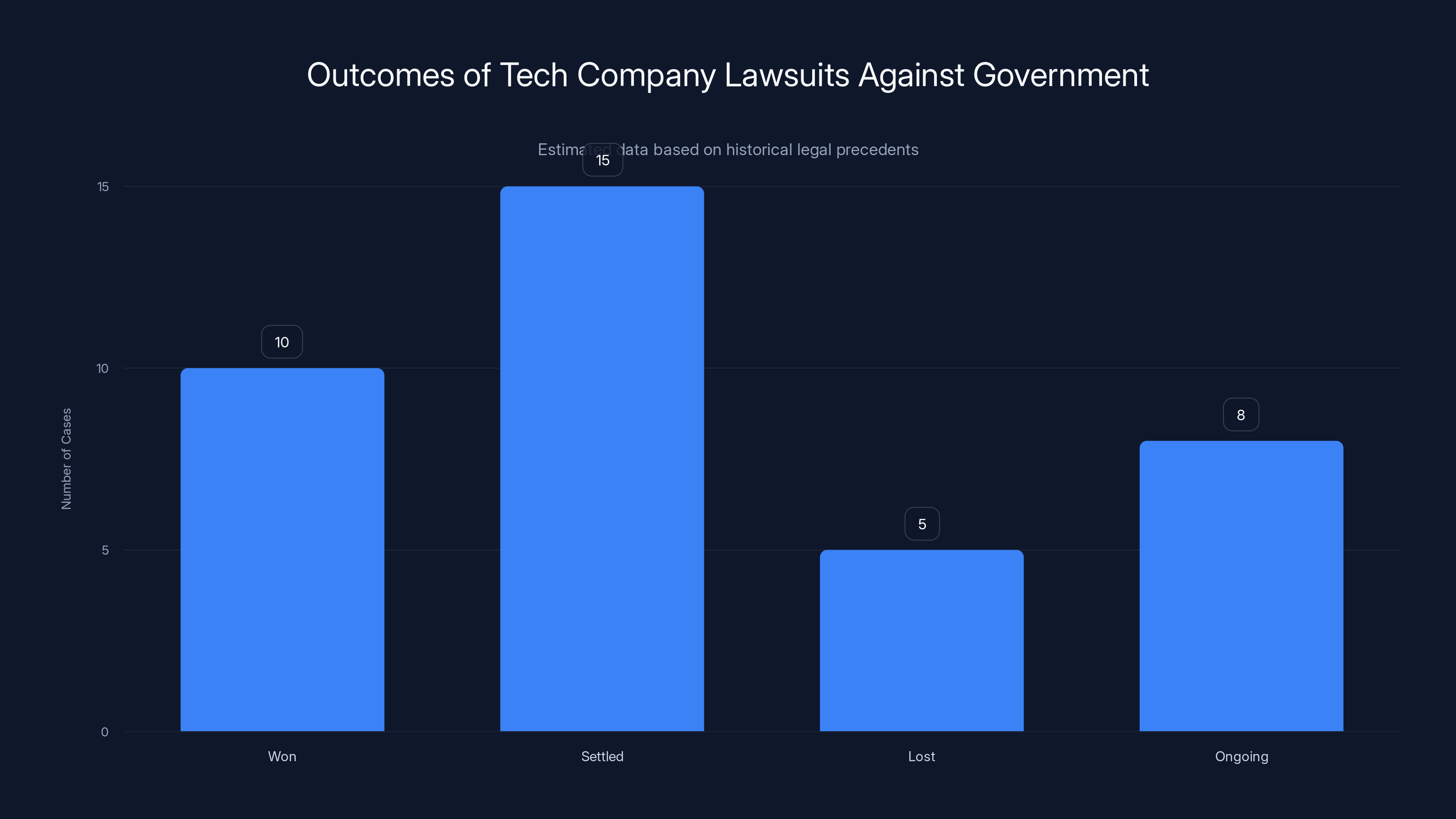

Historically, tech companies have had a mixed success rate in lawsuits against government designations, with settlements being the most common outcome. Estimated data based on past cases.

Legal Basis of the Lawsuit

Anthropic's lawsuit rests on the argument that the DoD's designation is both unwarranted and potentially harmful to its business operations. The complaint challenges the lack of transparency and consultation in the DoD's decision-making process, as reported by 6ABC.

Legal Precedents and Implications

Tech companies have faced similar challenges before when dealing with government contracts and designations. Those past cases and their outcomes provide context for Anthropic's legal strategy, as outlined in Wired.

Technical Aspects of Anthropic’s AI

To understand the risks and capabilities of Anthropic's technology, it's essential to examine the underlying AI systems. Anthropic's Claude AI is known for its advanced language processing capabilities, which could be misused if not properly regulated, as noted by AI Multiple.

Potential Risks and Mitigations

- Data Security: Ensuring that AI systems are secure from external threats is paramount, as emphasized by Microsoft's security blog.

- Ethical Usage: Establishing clear guidelines on how AI can be ethically deployed in military settings, as discussed by American Progress.

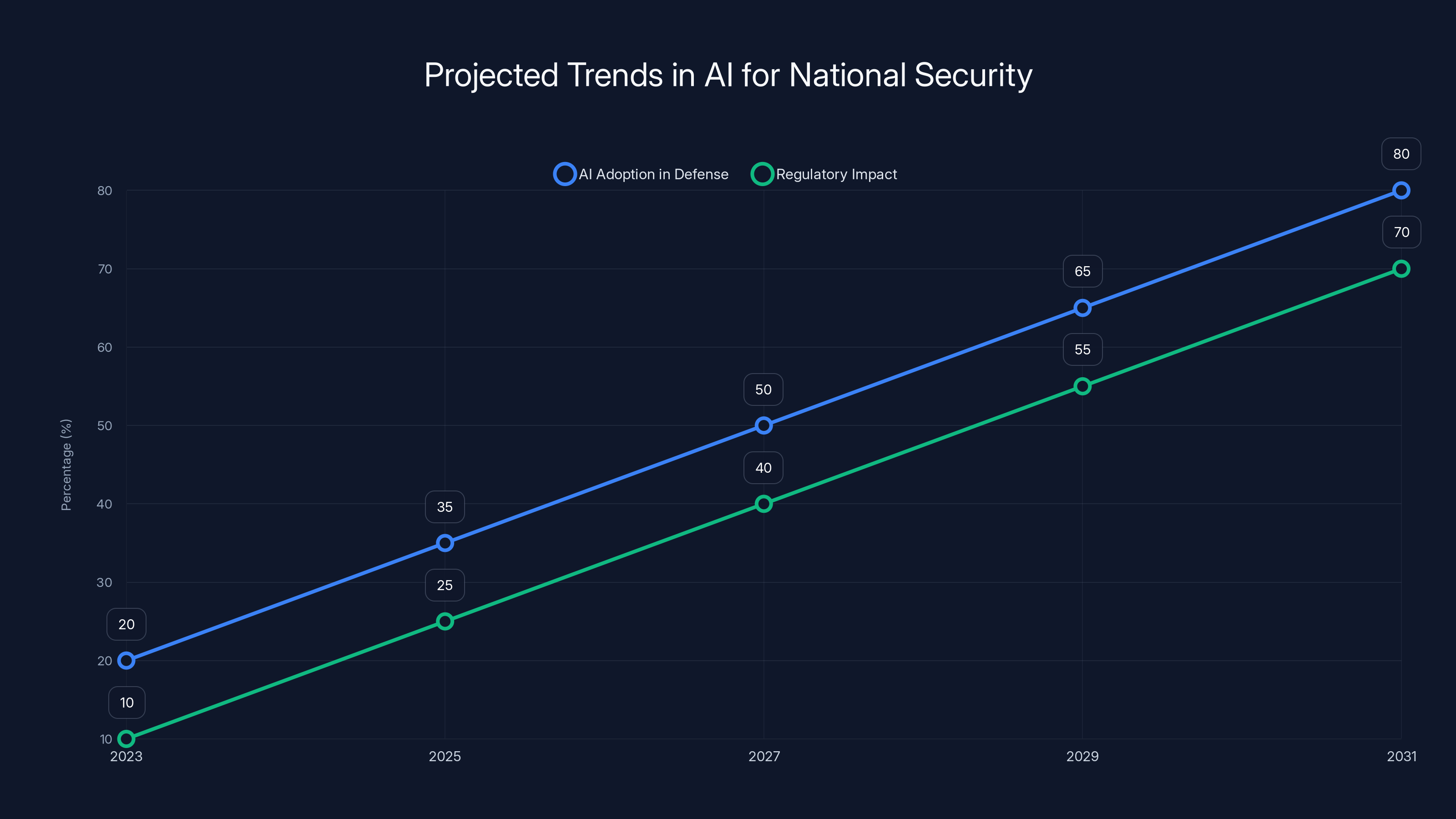

AI adoption in national security is projected to grow significantly, with regulatory impacts also increasing. Estimated data.

Practical Implementation Guides

For companies facing similar challenges, it's crucial to have a robust framework for addressing government designations. This section provides a step-by-step guide on how to navigate such disputes effectively, as outlined by Local Government Lawyer.

- Legal Consultation: Engage with legal experts to understand the implications of government designations.

- Ethical Frameworks: Develop clear ethical guidelines for AI usage.

- Stakeholder Engagement: Maintain open communication with all stakeholders, including government bodies.

Common Pitfalls and Solutions

Companies often fall into the trap of underestimating the complexity of government regulations. This section outlines common mistakes and offers solutions to avoid them, as discussed by Fast Company.

- Lack of Transparency: Ensure all processes are transparent and well-documented.

- Reactive Measures: Proactively address potential risks before they become contentious issues.

Future Trends and Recommendations

As AI continues to advance, its role in national security will only grow. This section explores future trends and offers recommendations for companies operating in this space, as highlighted by The New York Times.

- Increased Regulation: Expect tighter regulations around AI usage in defense.

- Collaborative Efforts: Foster partnerships between tech companies and government agencies to align on ethical standards.

Conclusion

Anthropic's lawsuit against the DoD is more than just a legal battle; as Wired discusses, it is a pivotal moment that could shape the future of AI in defense. Understanding the complexities of this case makes it easier to navigate the ethical and practical challenges that lie ahead.

FAQ

What is the core issue in Anthropic's lawsuit against the DoD?

The core issue revolves around the DoD's designation of Anthropic's AI systems as a supply chain risk, which Anthropic argues is unfounded and harmful to its operations, as reported by 6ABC.

How does this lawsuit affect AI ethics?

This lawsuit highlights the importance of clear ethical guidelines for AI usage, especially in defense applications where the stakes are high, as noted by American Progress.

What can AI companies learn from this case?

AI companies can learn the importance of transparency, ethical frameworks, and proactive stakeholder engagement to navigate government relations effectively, as discussed by Fast Company.

What are the potential outcomes of this lawsuit?

Potential outcomes include a re-evaluation of AI supply chain risk designations and the establishment of clearer regulations for AI in defense, as highlighted by The Washington Post.

How might this case impact future AI and defense collaborations?

This case may lead to increased scrutiny and more stringent regulations for AI technologies used in defense, impacting future collaborations, as reported by The New York Times.

Key Takeaways

- Anthropic's lawsuit highlights the tension between AI ethics and national security, as noted by Wired.

- The case could set precedents for AI regulation in defense, as discussed by American Progress.

- Transparency and stakeholder engagement are crucial for tech companies, as emphasized by Fast Company.

- Future AI and defense collaborations may face increased scrutiny, as reported by The New York Times.

- Anthropic emphasizes ethical AI usage in challenging the DoD's risk designation, as highlighted by The Washington Post.

![Understanding the Conflict: Anthropic's Legal Battle with the Defense Department [2025]](https://tryrunable.com/blog/understanding-the-conflict-anthropic-s-legal-battle-with-the/image-1-1773072569933.jpg)