Over 29 Million Secrets Were Leaked on GitHub in 2025, and AI Isn't Helping [2025]

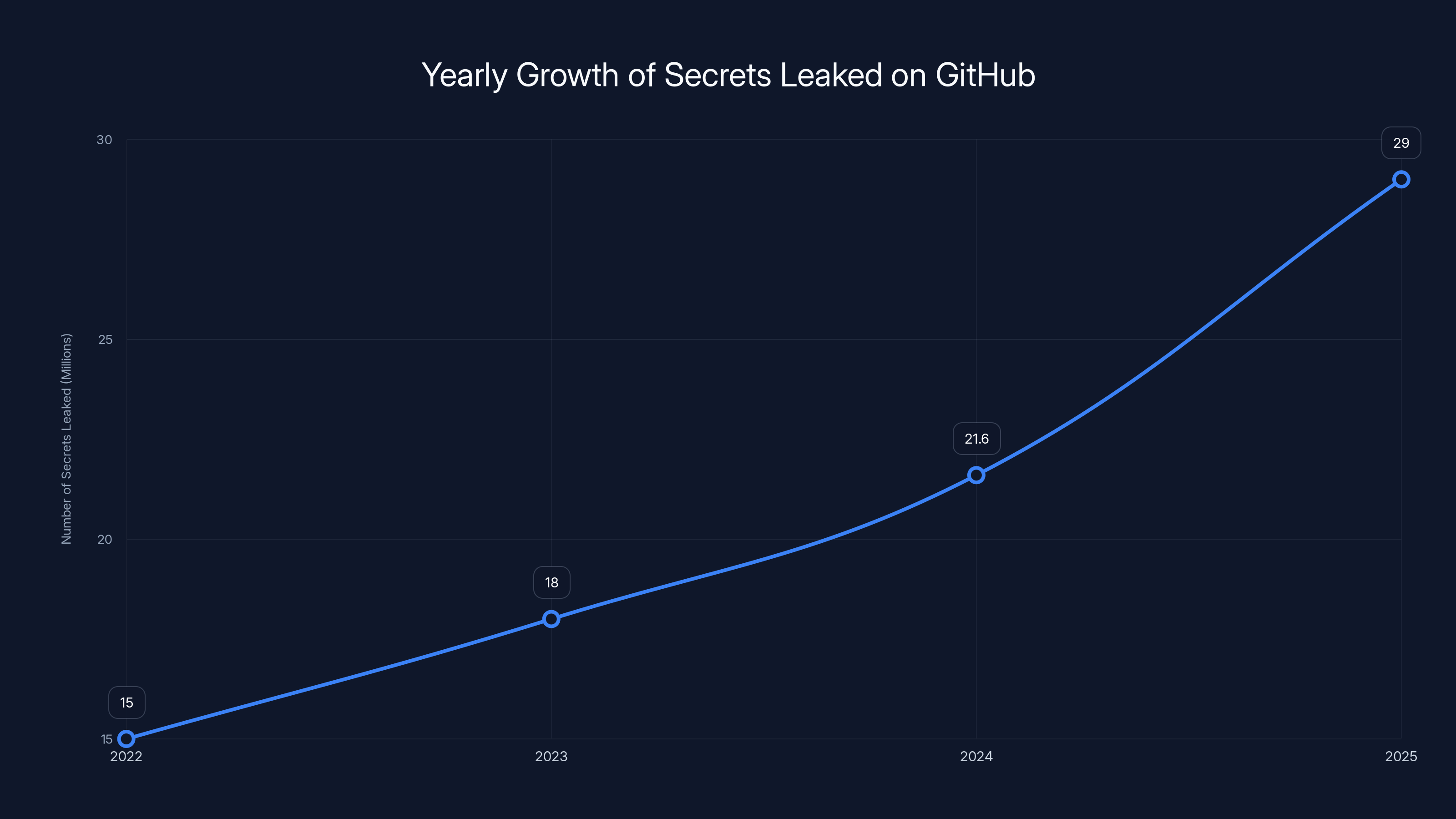

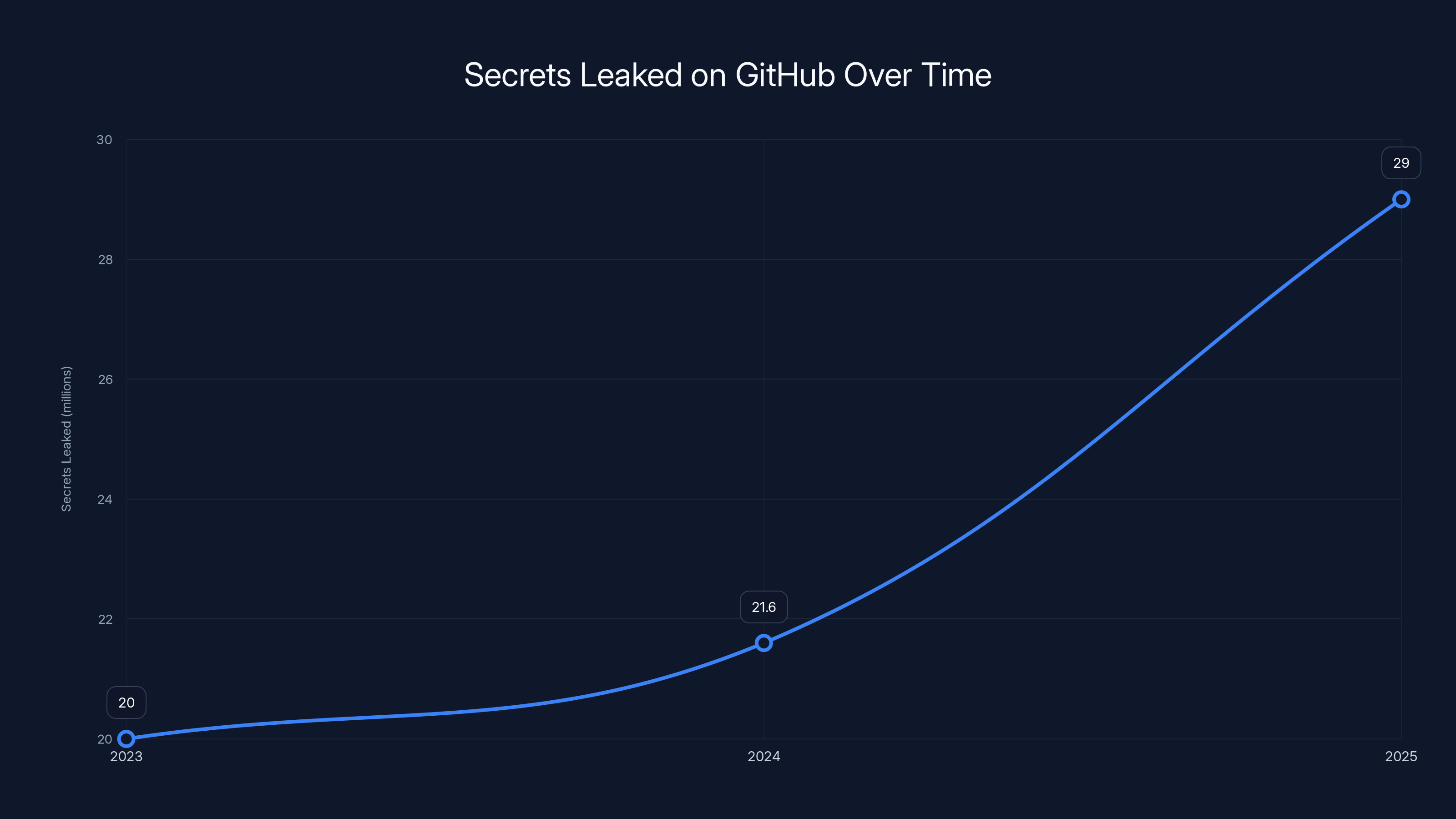

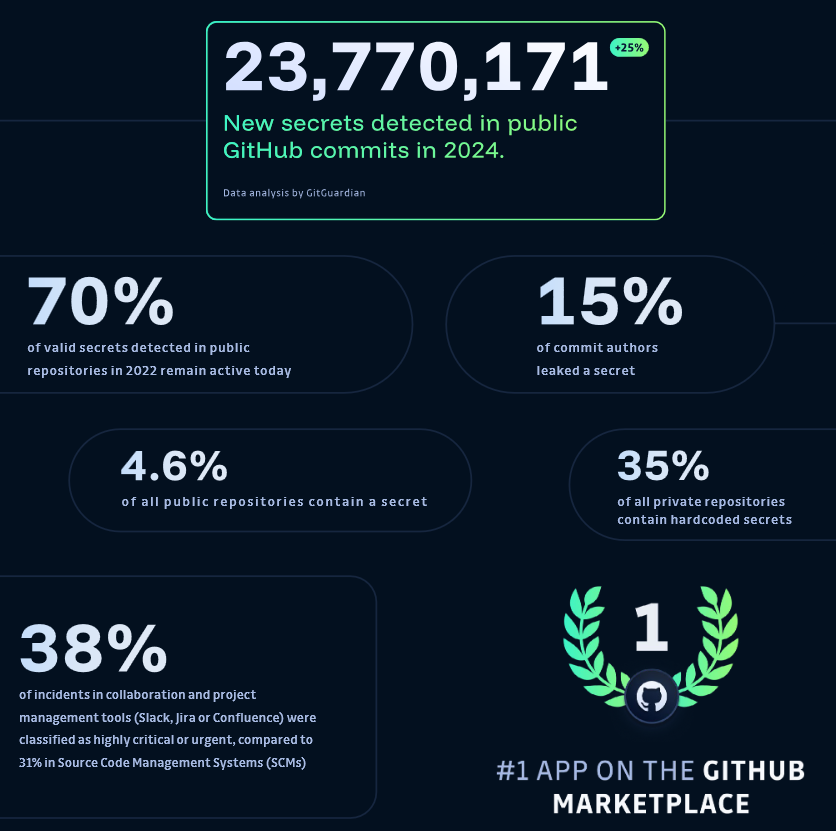

In 2025, GitHub saw an unprecedented surge in exposed secrets: more than 29 million credentials were leaked, a staggering 34% year-over-year increase, and AI-assisted coding was a significant contributor. Despite AI's promise to streamline development, its integration into software engineering has introduced serious security challenges.
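
Taking the two headline figures at face value, the implied 2024 baseline can be back-calculated. This is simple arithmetic on the article's own numbers, not a figure taken from any report:

$$
N_{2025} = (1 + 0.34)\,N_{2024}
\quad\Longrightarrow\quad
N_{2024} = \frac{N_{2025}}{1.34} \approx \frac{29\ \text{million}}{1.34} \approx 21.6\ \text{million}
$$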

TL;DR

- 29 million secrets leaked on GitHub in 2025, a 34% increase from the previous year

- AI-assisted commits leaked secrets at roughly twice the baseline rate, with MCP (Model Context Protocol) configuration files a major risk factor

- Inexperienced developers using AI tools are introducing significant security vulnerabilities

- Best practices for secret management include environment variables and secrets management tools

- Future trends indicate a need for better AI training and developer education

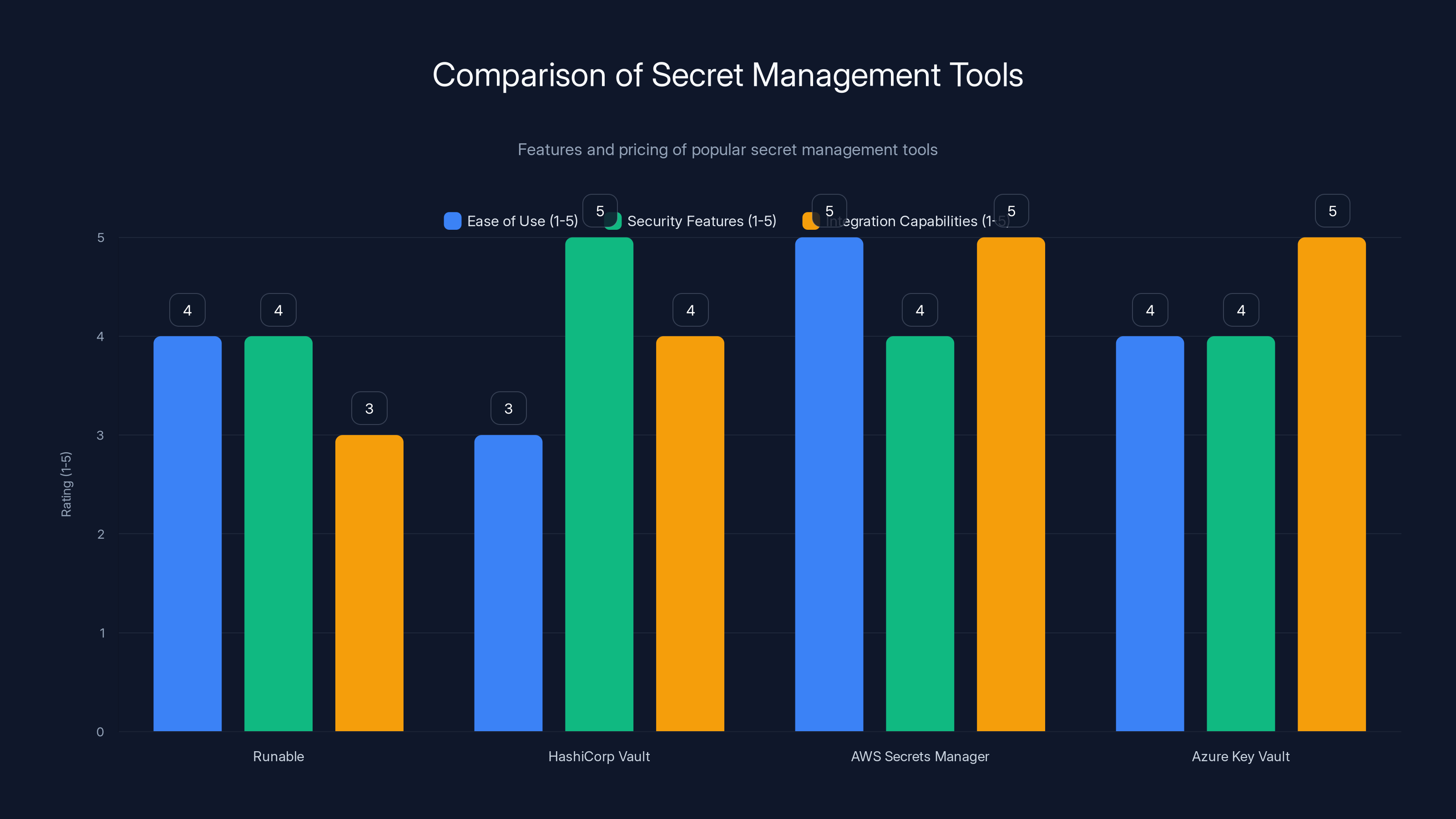

Runable excels in ease of use, while HashiCorp Vault leads in security features. AWS Secrets Manager and Azure Key Vault offer robust integration capabilities. Estimated data based on typical tool evaluations.

The Rise of AI in Software Development

AI has transformed many aspects of software development, from automating code generation to facilitating real-time debugging. However, its rapid adoption has also had unintended consequences. In 2025, AI was woven into development pipelines to boost productivity and efficiency, but it also contributed to a significant rise in leaked secrets.

Why AI is a Double-Edged Sword

While AI can automate mundane tasks and provide intelligent code suggestions, it often lacks the nuanced understanding of context that human developers possess. This can lead to the unintentional introduction of security vulnerabilities. For instance, AI tools might suggest using hard-coded credentials for convenience, inadvertently exposing sensitive information.

AI's Role in the GitHub Leak Epidemic

GitHub, a popular platform for collaborative software development, has become a hotspot for these vulnerabilities. GitGuardian's report indicates that AI-assisted commits were twice as likely to contain exposed secrets as manually written ones. A likely reason is that coding assistants optimize for code that works quickly, and an inline credential is often the fastest way to get a snippet running.

The number of secrets leaked on GitHub increased by 34% in 2025, reaching over 29 million. Estimated data shows a consistent rise in previous years.

Common Sources of Leaks

Several factors contribute to the leakage of secrets in repositories:

- Hard-Coded Credentials: Developers sometimes embed passwords or API keys directly in the code for rapid testing, but forget to remove them before pushing to public repositories.

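- Misconfigured Project Files: Configuration files (for example `.env` files or MCP server configs) often contain credentials that should never be publicly accessible, yet they get committed because they were never excluded from version control.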

- AI-Generated Code: AI tools might generate code snippets that include sensitive data without proper security checks.
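
One common fix for misconfigured project files is to keep local config such as `.env` out of version control entirely. A minimal sketch, assuming a POSIX shell; `ensure_ignored` is a hypothetical helper name, not a standard tool:

```bash
# Hypothetical helper: make sure a pattern is listed in a repository's .gitignore
# so local config files (e.g. .env) are not committed by accident.
ensure_ignored() {
  local repo_dir="$1" pattern="$2"
  local ignore_file="$repo_dir/.gitignore"
  touch "$ignore_file"                      # create .gitignore if it is missing
  # -q quiet, -x whole-line match, -F fixed string (no regex interpretation)
  if ! grep -qxF -- "$pattern" "$ignore_file"; then
    # If the file does not end with a newline, add one so entries don't merge.
    if [ -s "$ignore_file" ] && [ -n "$(tail -c1 "$ignore_file")" ]; then
      printf '\n' >> "$ignore_file"
    fi
    printf '%s\n' "$pattern" >> "$ignore_file"
  fi
}

# Usage: ensure_ignored . .env
```

Note that ignoring a file does not remove it from history: a file that was already committed still needs `git rm --cached .env`, and any secret it held should be rotated.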

Hard-Coded Credentials: An Age-Old Issue

Even before AI, hard-coded credentials were a persistent problem. However, AI has exacerbated this issue by making it easier for developers to quickly generate and deploy code without thoroughly reviewing it for security lapses.

Best Practices for Managing Secrets

To mitigate the risk of secrets leakage, developers must adopt robust security practices. Here are some best practices:

- Environment Variables: Use environment variables to store sensitive information instead of hard-coding them into the source code.

- Secrets Management Tools: Implement tools like HashiCorp Vault, AWS Secrets Manager, or Azure Key Vault to securely manage and rotate secrets.

- Code Reviews and Audits: Regularly conduct code reviews and audits to identify and remove any exposed secrets.

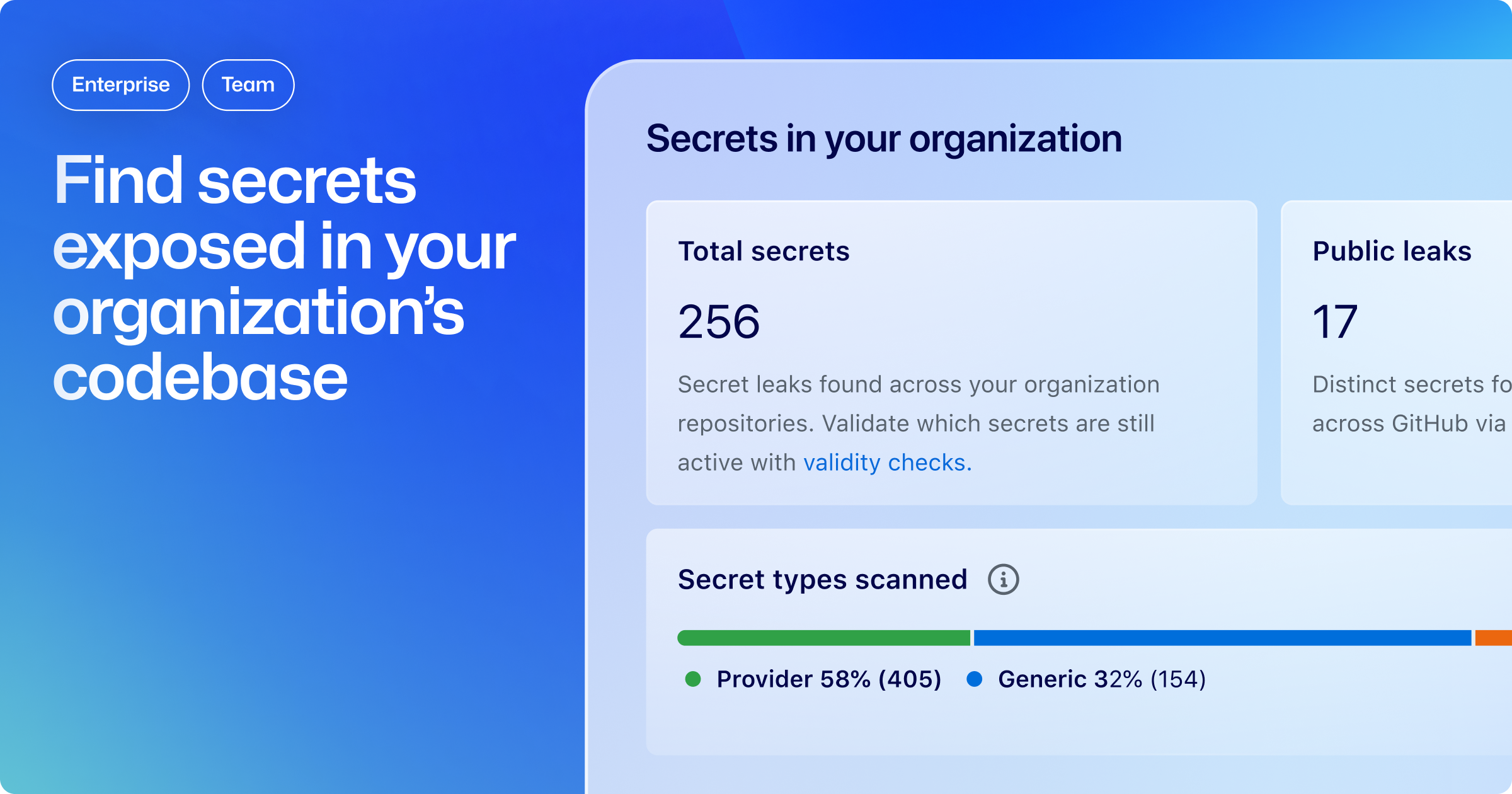

- Automated Scanning: Use automated scanners like GitGuardian's own tool to detect and alert on exposed secrets.
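
To illustrate what automated scanning does at its simplest, here is a shell sketch that greps files for a few well-known credential formats. The function name and pattern list are assumptions for illustration only; dedicated scanners typically add many more detectors plus entropy and validity checks.

```bash
# Minimal secret-scanning sketch (NOT a replacement for a real scanner).
# Patterns, one extended regex per line in the here-doc below:
#   AWS access key ID, GitHub classic personal access token, PEM private key header.
# Prints file:line:match for every hit; returns 1 if anything was found, 0 if clean.
scan_for_secrets() {
  [ "$#" -gt 0 ] || return 2                # require at least one file argument
  local found=0 pattern
  while IFS= read -r pattern; do
    # -H file name, -n line number, -o only the matching text, -E extended regex;
    # -e is needed because one pattern starts with dashes.
    if grep -HnoE -e "$pattern" -- "$@"; then
      found=1
    fi
  done <<'EOF'
AKIA[0-9A-Z]{16}
ghp_[A-Za-z0-9]{36}
-----BEGIN [A-Z ]*PRIVATE KEY-----
EOF
  return "$found"
}
```

Wired into a Git pre-commit hook, a nonzero return (a hit) would abort the commit before the secret ever reaches GitHub.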

Implementing Environment Variables

One of the simplest yet most effective methods to secure secrets is to use environment variables. This approach keeps sensitive information out of the codebase, reducing the risk of accidental exposure.

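```bash
# Set an environment variable for the current shell session (Unix-like systems).
# Caution: a value typed literally like this may be saved in your shell history.
export DATABASE_PASSWORD='your_secure_password'
```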
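
On the consuming side, code should read the variable and refuse to run if it is missing. A minimal sketch; `connect_to_db` is a hypothetical function standing in for whatever actually uses the password:

```bash
# Read the password from the environment instead of hard-coding it.
# ${VAR:?message} aborts with an error if VAR is unset or empty, so a missing
# secret fails loudly at startup instead of silently using a default.
connect_to_db() {
  local password="${DATABASE_PASSWORD:?DATABASE_PASSWORD is not set}"
  # Hypothetical: hand the secret to your client here, preferably via its
  # environment rather than a command-line flag (flags are visible in `ps`).
  echo "connecting with a password of length ${#password}"
}
```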

The number of secrets leaked on GitHub increased significantly, with a 34% rise from 2024 to 2025. Estimated data based on reported trends.

AI and Security: A Need for Better Training

AI's potential to transform coding is undeniable, but today's coding assistants are not reliably trained to follow security best practices. Closing that gap requires training both developers and AI systems to prioritize security.

Training AI Systems

AI systems should be trained with a focus on security. This includes:

- Utilizing datasets that emphasize secure coding practices

- Incorporating security checks into AI-generated code

- Developing AI that can recognize and flag potential security threats
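
A security check on AI-generated code can start as a simple gate that screens a suggestion before it is accepted. A sketch under that assumption; `flag_hardcoded_credentials` is a hypothetical name and its regex is a deliberately crude heuristic with both false positives and false negatives:

```bash
# Hypothetical pre-acceptance check for generated code: flag lines that assign a
# string literal to a credential-like name (password, secret, token, api_key).
# Reads code on stdin; prints "line:text" for each suspicious line.
flag_hardcoded_credentials() {
  local re="(password|passwd|secret|token|api_?key)[[:alnum:]_]*[\"']?[[:space:]]*[:=][[:space:]]*[\"'][^\"']+[\"']"
  # -i case-insensitive, -n line numbers, -E extended regex
  grep -inE -- "$re"
}
```

Because `grep` exits with status 0 only when at least one line is flagged, a wrapper can reject the suggestion whenever the check succeeds. Lines that read the value from the environment (for example `os.environ["DB_PASSWORD"]`) are not flagged.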

Educating Developers

Developers, especially those new to the field, need comprehensive education on secure coding practices. This includes understanding the implications of using AI and being aware of common pitfalls.

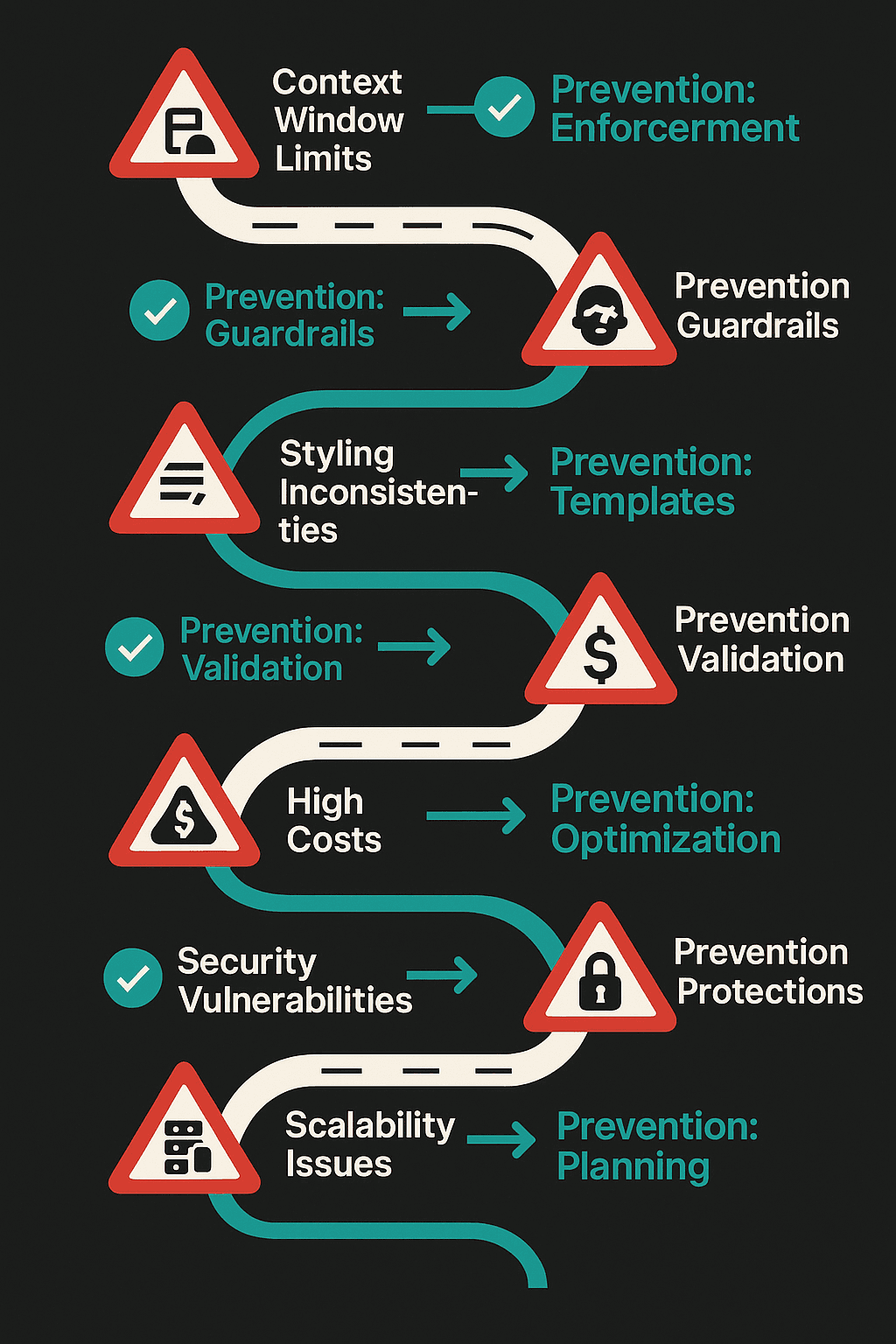

Common Pitfalls and Solutions

Despite the best intentions, developers often fall into the same traps when integrating AI into their workflows. Here are common pitfalls and how to avoid them:

- Overreliance on AI: Developers might become too reliant on AI for code generation, leading to a lack of understanding of the underlying code.
  - Solution: Balance AI usage with manual coding and thorough code reviews.

- Ignoring Security Alerts: Many developers ignore security alerts due to alert fatigue.
  - Solution: Prioritize alerts and focus on high-risk vulnerabilities first.

- Lack of Regular Audits: Without regular audits, vulnerabilities can go unnoticed for extended periods.
  - Solution: Schedule periodic security audits and integrate them into the development lifecycle.
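
Prioritizing alerts can begin with nothing more than a stable sort by severity. A sketch assuming alerts arrive as tab-separated `SEVERITY<TAB>description` lines; that format and the `prioritize_alerts` name are invented for illustration, not any scanner's actual output:

```bash
# Hypothetical alert triage: reads "SEVERITY<TAB>description" lines on stdin and
# prints them ordered critical > high > medium > low (unknown severities last),
# keeping the original order within each severity level.
prioritize_alerts() {
  tab="$(printf '\t')"
  awk -F '\t' '
    BEGIN { rank["critical"] = 1; rank["high"] = 2; rank["medium"] = 3; rank["low"] = 4 }
    {
      sev = tolower($1)
      r = (sev in rank) ? rank[sev] : 5
      # Prefix two sort keys: severity rank, then input line number (stability).
      printf "%d\t%d\t%s\n", r, NR, $0
    }' |
    sort -t "$tab" -k1,1n -k2,2n |
    cut -f3-                                 # drop the two sort keys again
}
```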

Future Trends in AI and Security

Looking ahead, the relationship between AI and software security will continue to evolve. Here are some trends to watch:

- Increasing Use of AI for Security: AI will not only be a source of vulnerabilities but also a tool for enhancing security through intelligent threat detection and automated response systems.

- Focus on Explainable AI: As AI becomes more integral to development, there will be a push for explainable AI systems that provide transparency into their decision-making processes.

- Stronger Regulations: Governments and organizations are likely to impose stricter regulations on AI usage in software development to mitigate security risks.

Conclusion

The massive leakage of secrets on GitHub in 2025 underscores the need for a more secure approach to integrating AI into software development. By adopting best practices, educating developers, and improving AI training, the industry can hope to curb the rise in leaked secrets.

For developers and organizations, the takeaway is clear: security must be a priority, not an afterthought. As AI continues to shape the future of software development, balancing innovation with robust security measures will be key to safeguarding sensitive information.

FAQ

What are secrets in the context of software development?

Secrets refer to sensitive information such as API keys, passwords, and tokens that are used to access secure systems and services.

How does AI contribute to the leakage of secrets?

AI can generate code that contains sensitive information without proper security checks, leading to accidental exposure of secrets on platforms like GitHub.

What tools can help manage secrets securely?

Tools like HashiCorp Vault, AWS Secrets Manager, and Azure Key Vault are designed to securely store and rotate sensitive information.
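
As an illustration of how such a tool replaces a hard-coded value, here is a shell sketch built on the AWS CLI's `secretsmanager get-secret-value` command. It assumes the AWS CLI is installed and already has credentials; the secret name `myapp/db-password` and both helper functions are hypothetical. Vault (`vault kv get`) or Azure Key Vault (`az keyvault secret show`) would slot in the same way.

```bash
# Fetch a secret's string value from AWS Secrets Manager.
# --query SecretString with --output text prints just the value, without JSON quoting.
load_secret() {
  aws secretsmanager get-secret-value \
    --secret-id "$1" \
    --query SecretString \
    --output text
}

# Run a command with the password exposed only in that command's environment.
run_with_db_password() {
  local password
  password="$(load_secret "myapp/db-password")" || return 1
  DATABASE_PASSWORD="$password" "$@"
}

# Usage: run_with_db_password ./start-app.sh
```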

What are some common pitfalls when using AI in development?

Common pitfalls include overreliance on AI, ignoring security alerts, and failing to conduct regular security audits.

How can developers mitigate the risks associated with AI?

Developers can mitigate risks by using environment variables, conducting code reviews, and leveraging automated scanning tools to detect exposed secrets.

What future trends can we expect in AI and software security?

Future trends include the increasing use of AI for threat detection, the rise of explainable AI, and stronger regulations on AI usage in development.

The Best Secret Management Tools at a Glance

| Tool | Best For | Standout Feature | Pricing |
|---|---|---|---|
| HashiCorp Vault | Secure storage | Dynamic secrets management | Free and paid tiers |
| AWS Secrets Manager | Cloud environments | Automated secret rotation | Pay-as-you-go |
| Azure Key Vault | Microsoft ecosystems | Integrated with Azure services | Pay-as-you-go |

Quick Navigation:

- HashiCorp Vault for secure storage

- AWS Secrets Manager for cloud environments

- Azure Key Vault for Microsoft ecosystems

Key Takeaways

- 29 million secrets leaked on GitHub in 2025, a 34% increase.

- AI-assisted commits leaked secrets at roughly twice the baseline rate.

- Hard-coded credentials remain a major risk factor.

- Use environment variables and secrets management tools to secure information.

- Future trends include AI for threat detection and explainable AI.

- Stronger regulations on AI usage in development are expected.


![Over 29 Million Secrets Were Leaked on GitHub in 2025, and AI Isn't Helping [2025]](https://tryrunable.com/blog/over-29-million-secrets-were-leaked-on-github-in-2025-and-ai/image-1-1773851831032.jpg)