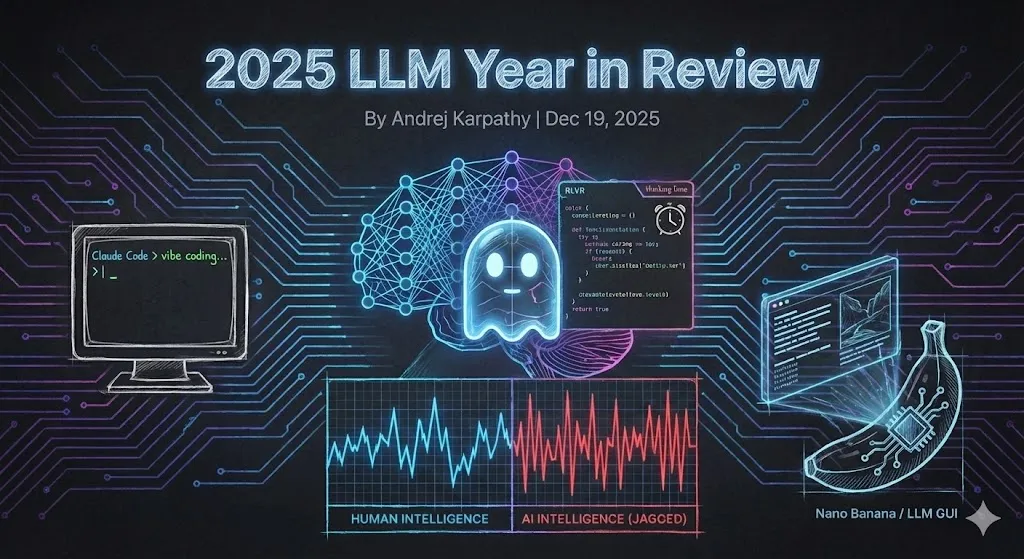

Revolutionizing AI: Karpathy's LLM Knowledge Base with Evolving Markdown [2025]

Andrej Karpathy, a leading figure in the AI community, has described an approach to managing large language model (LLM) knowledge bases that does not rely on retrieval-augmented generation (RAG). Instead, an AI maintains an evolving library of markdown files, which addresses the persistent problem of context loss between sessions. This article examines the architecture, offers practical guidance, and considers where it may lead.

TL;DR

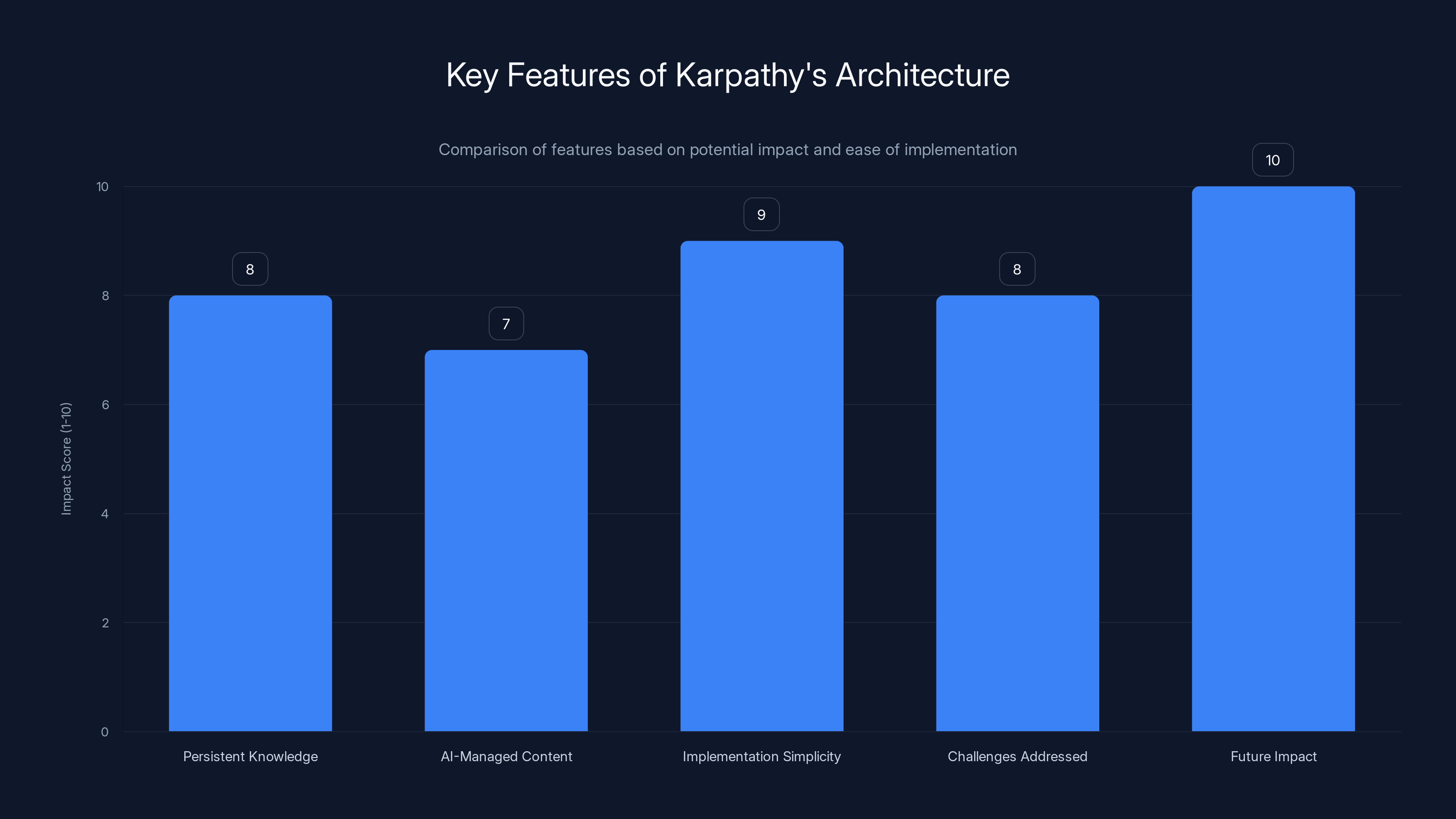

- Persistent Knowledge: Karpathy's architecture maintains a continuous knowledge base, eliminating the need to repeatedly reconstruct context.

- AI-Managed Content: Utilizes AI to dynamically update and maintain markdown documents, enhancing efficiency.

- Implementation Simplicity: Offers a straightforward setup that can be integrated into existing development workflows.

- Challenges Addressed: Solves the problem of context-limit resets, a common pain point in LLM usage.

- Future Impact: Paves the way for more advanced AI systems that require less manual intervention.

Introduction

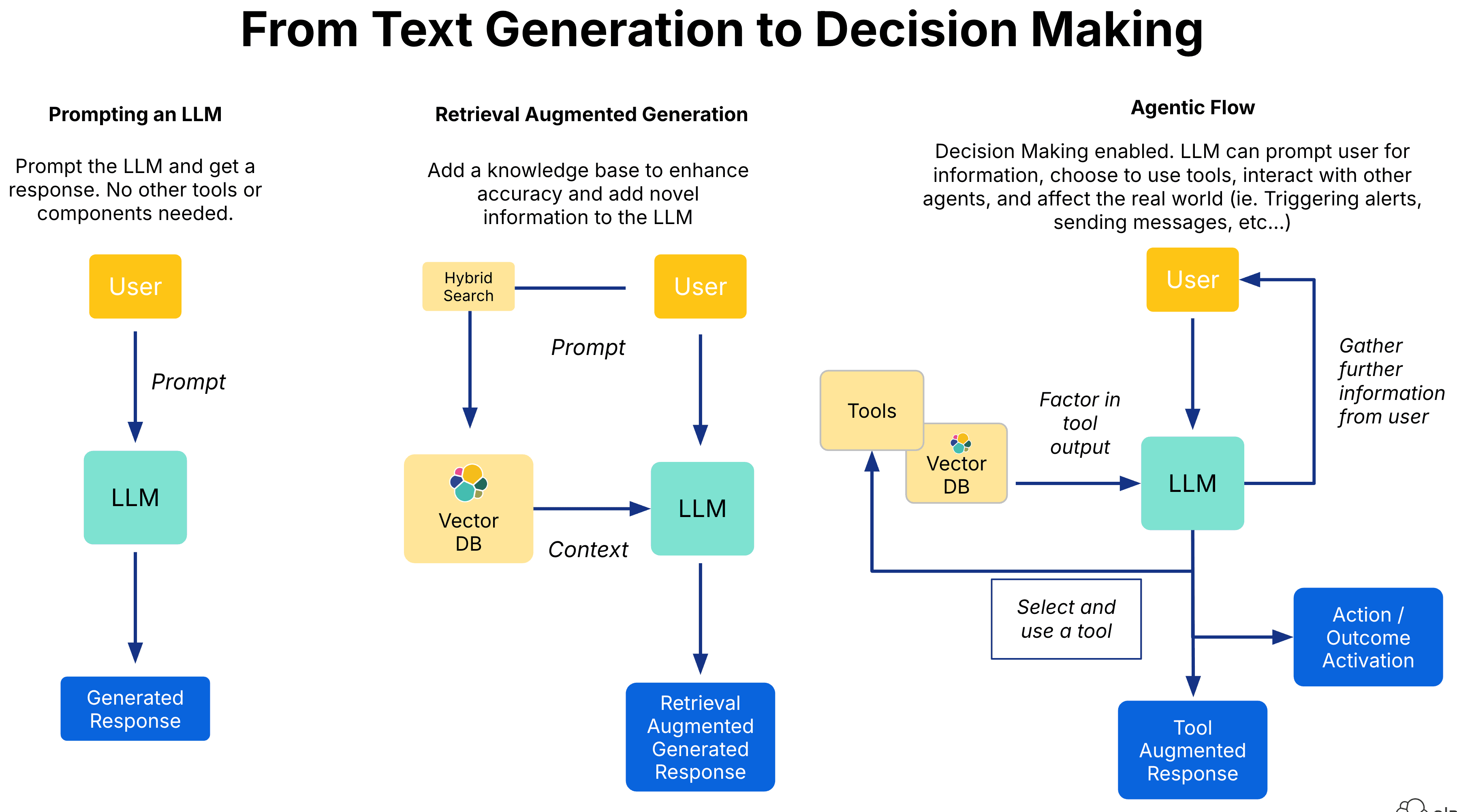

In the fast-evolving landscape of artificial intelligence, maintaining continuity and context has been a significant challenge, especially when working with large language models. Traditional methods like retrieval-augmented generation (RAG) often fall short in efficiency and scalability. Karpathy's new approach, which leverages a dynamic markdown library maintained by AI, offers a promising alternative.

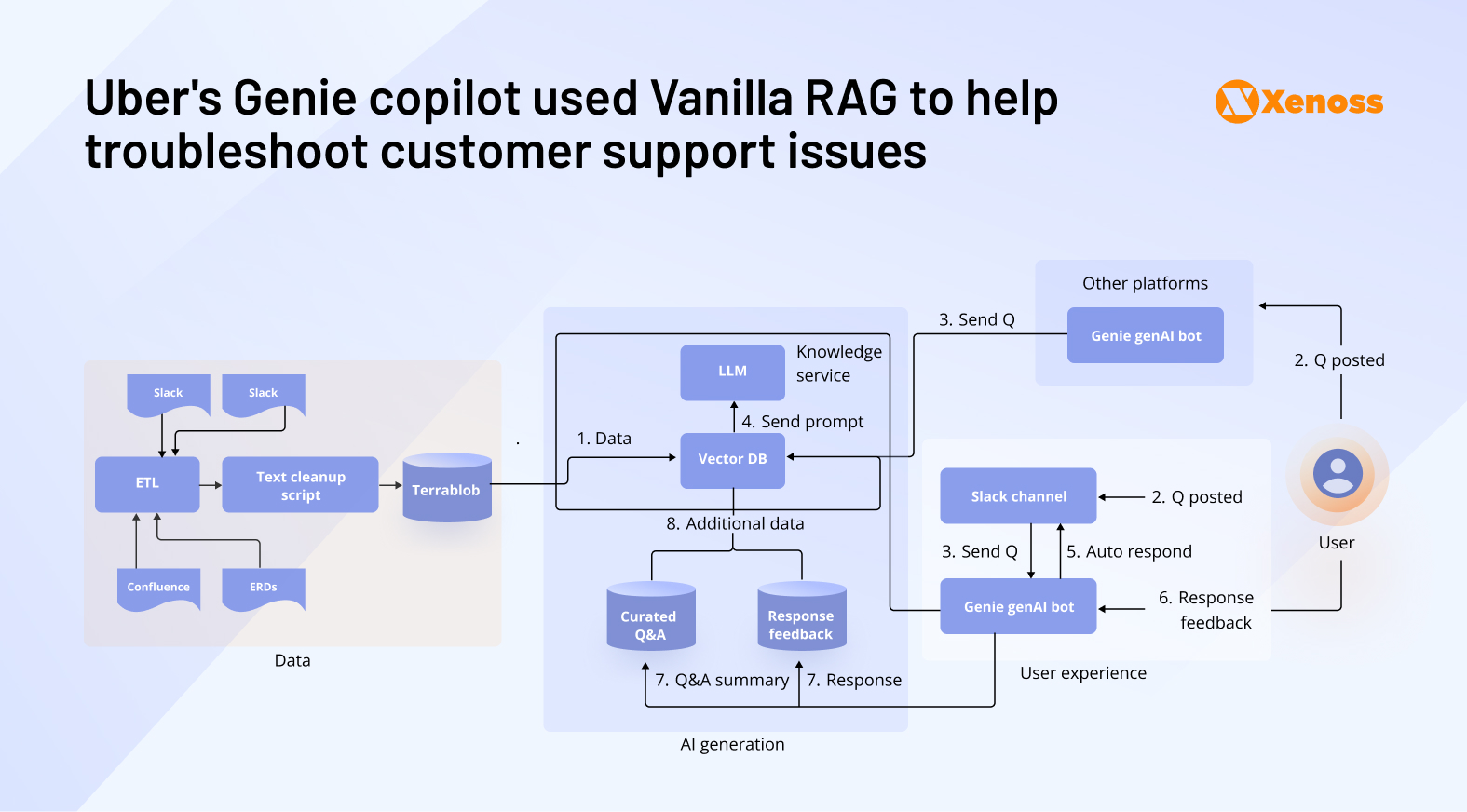

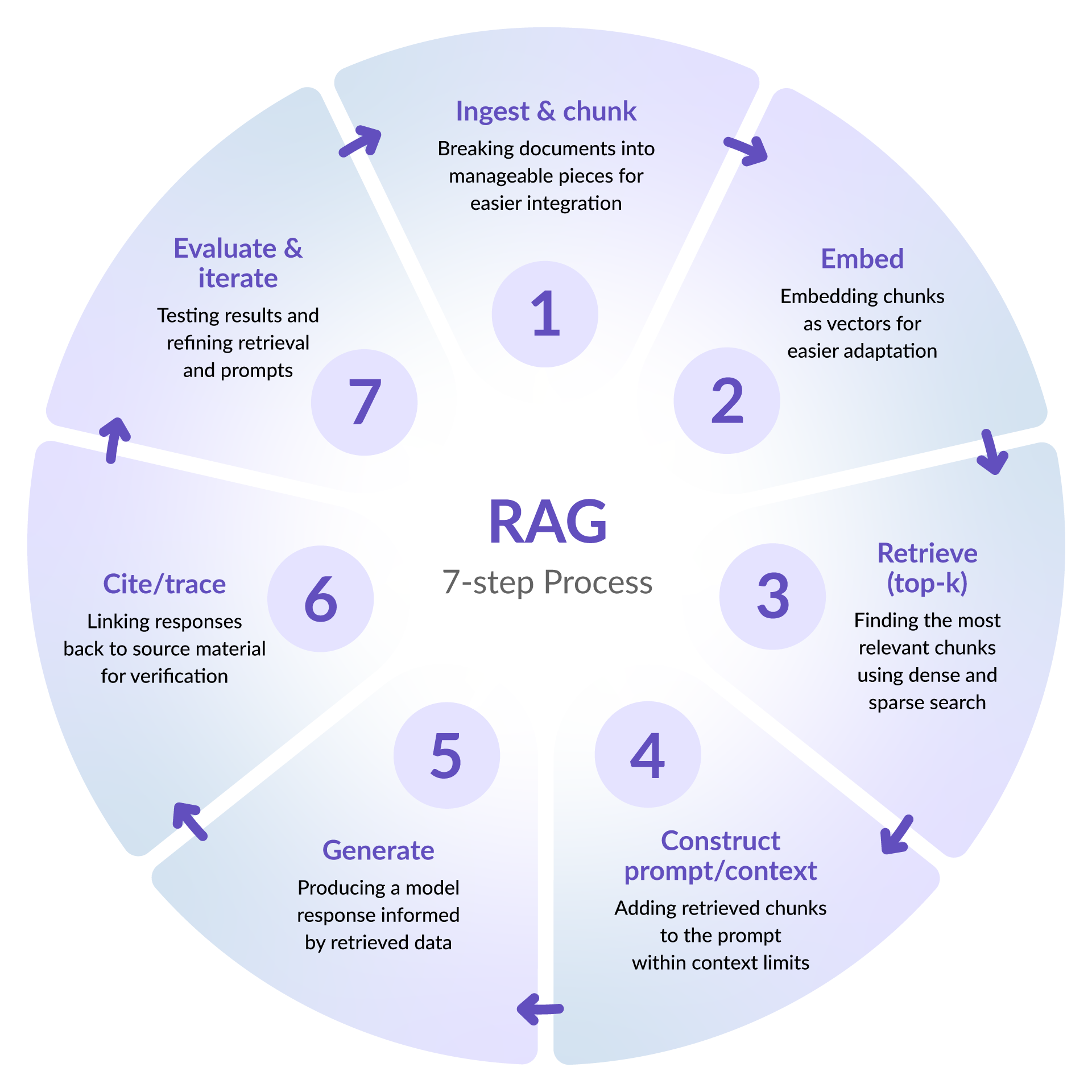

The Problem with RAG

Retrieval-augmented generation has been a staple for supplying context to AI models. However, RAG fetches that context from an external store at query time, which introduces latency and limits scalability. Each session requires re-establishing context, which can be both time-consuming and computationally expensive.

Limitations of RAG

- Dependency on External Sources: RAG systems must access external databases, which can lead to bottlenecks.

- Context Reconstruction: Every new session demands a rebuild of the context, often interrupting workflow.

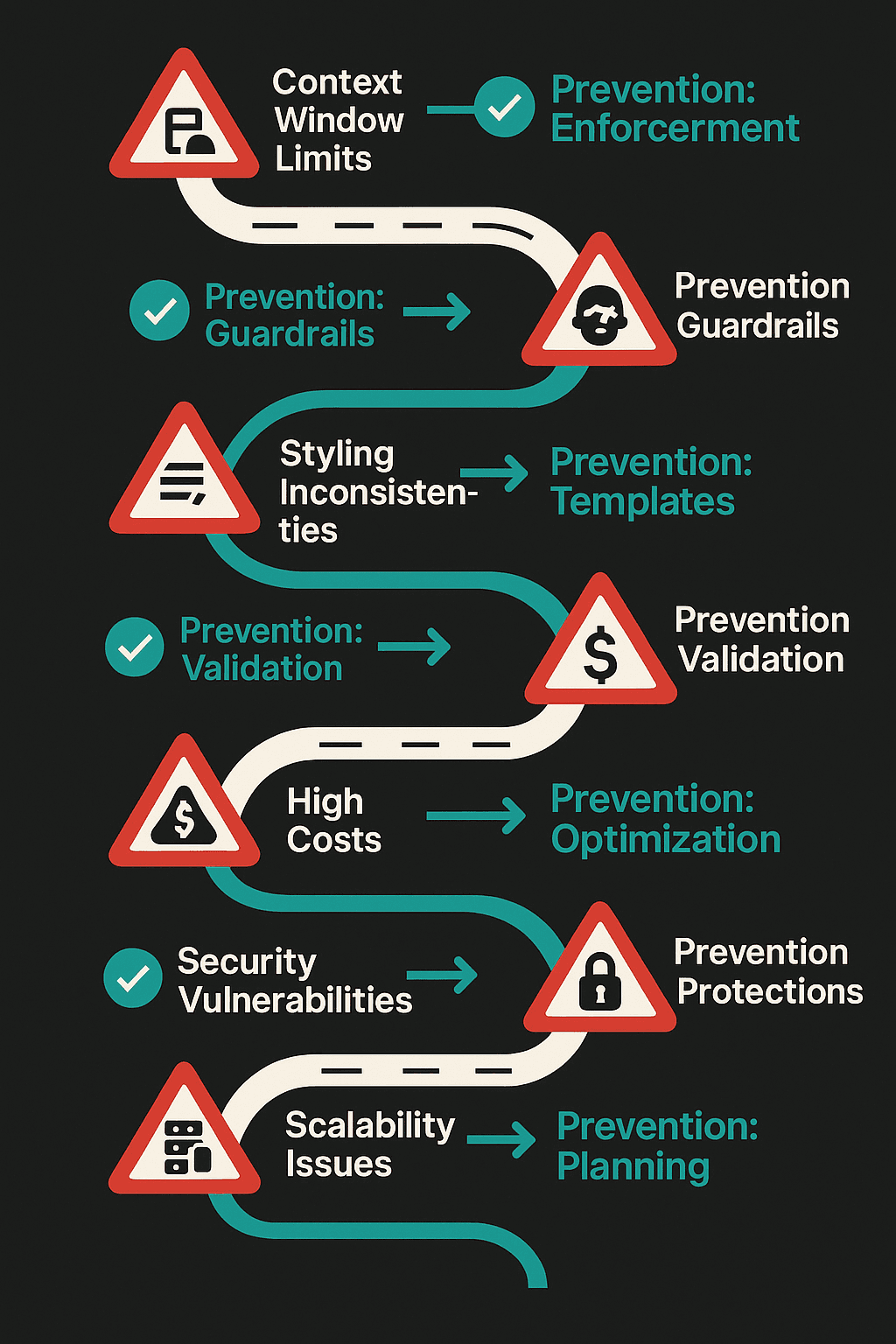

- Scalability Issues: As data grows, maintaining performance becomes increasingly challenging.

Karpathy's Solution: The LLM Knowledge Base

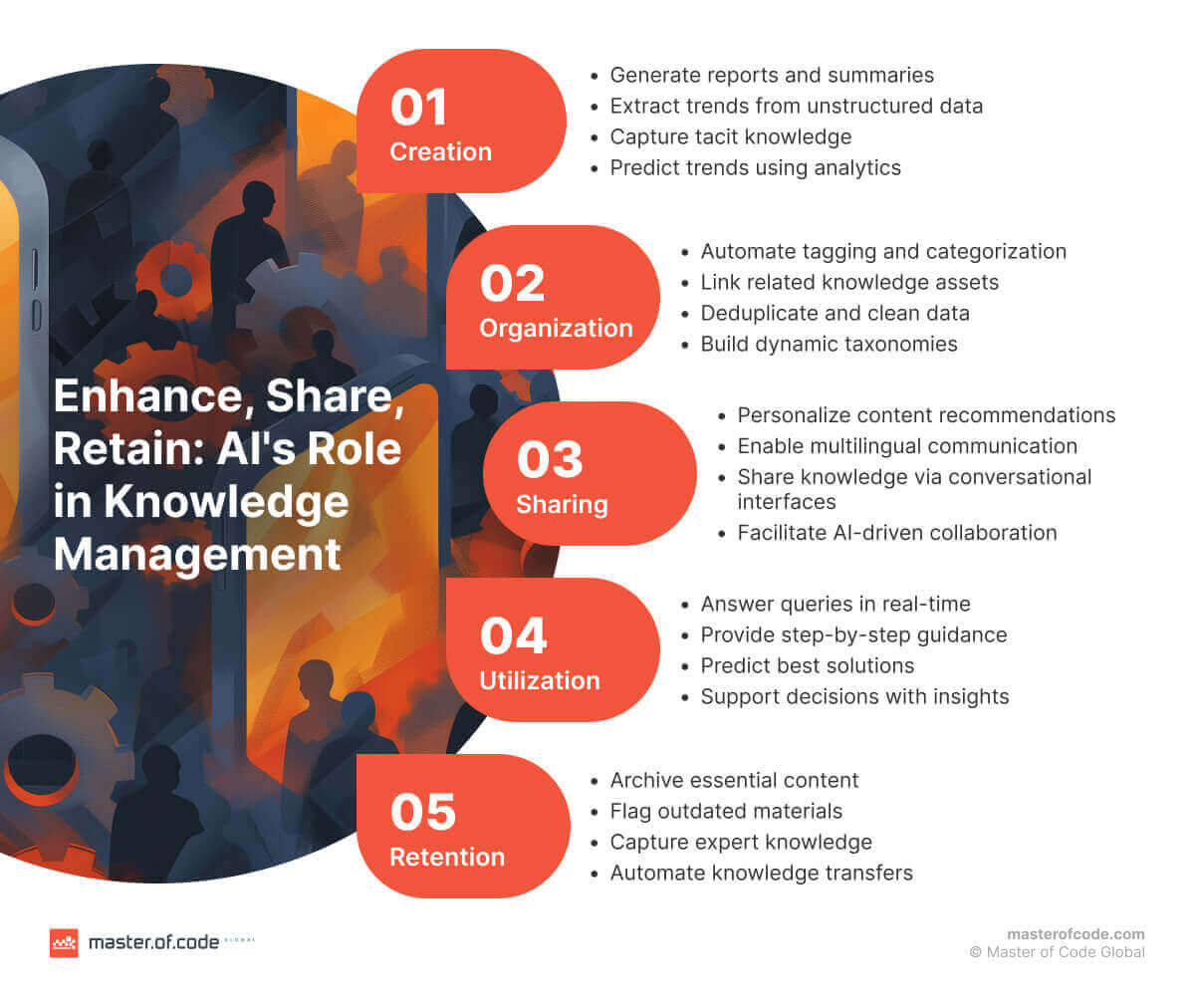

Karpathy's architecture circumvents these issues by using an AI-managed markdown library that evolves with the project's needs. This system ensures a persistent record of knowledge, allowing AI to maintain context without repetitive data retrieval. According to VentureBeat, this innovative approach significantly enhances the efficiency of AI systems.

Key Components

- Markdown Libraries: Utilizes markdown for its simplicity and human-readability.

- AI Management: AI agents update and refine the markdown content, ensuring it stays current with the latest project developments.

- Version Control: Integrates with version control systems to track changes and facilitate collaboration.
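As a minimal sketch of how these components might fit together, the snippet below assumes the knowledge base is simply a directory of `.md` files that is read in full and placed at the start of a prompt. The function name `build_context`, the file-marker comment format, and the character budget are illustrative assumptions, not details taken from Karpathy's setup.

```python
from pathlib import Path


def build_context(kb_dir: str, max_chars: int = 20_000) -> str:
    """Concatenate the markdown files under kb_dir into one prompt prefix.

    Files are read in sorted order so the result is deterministic, and the
    output is truncated to max_chars as a crude stand-in for a token budget.
    """
    parts = []
    for path in sorted(Path(kb_dir).rglob("*.md")):
        # Label each file so the model (and a human reader) can tell them apart.
        rel = path.relative_to(kb_dir).as_posix()
        body = path.read_text(encoding="utf-8").strip()
        parts.append(f"<!-- file: {rel} -->\n{body}")
    return "\n\n".join(parts)[:max_chars]
```

Because the files are plain text in a directory, the same folder can be placed under Git without any extra tooling, which is what makes the version control component cheap to add.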

Practical Implementation

Implementing Karpathy's LLM Knowledge Base involves setting up a few key components. Here's a step-by-step guide:

- Set Up the Markdown Repository: Create a central repository for markdown files. Use a version control system like Git to manage changes.

- Integrate AI Agents: Employ AI tools to automate the updating process. These agents should be capable of natural language understanding and content generation.

- Designate Update Triggers: Set conditions under which the markdown files should be updated. This could be time-based, event-driven, or manual.

- Monitor and Refine: Regularly review the AI's updates to ensure accuracy and relevance, adjusting the system as necessary.
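The steps above can be sketched as a small update cycle. This is an illustration under stated assumptions, not a reference implementation: the `Agent` signature, `should_update`, `run_update`, and the trivial `append_agent` (which stands in for a real LLM call) are all hypothetical names introduced here.

```python
from pathlib import Path
from typing import Callable

# Hypothetical agent signature: (current markdown, new information) -> updated markdown.
Agent = Callable[[str, str], str]


def should_update(last_update: float, now: float, interval_s: float,
                  event_pending: bool = False, manual: bool = False) -> bool:
    """Return True if any trigger fires: manual, event-driven, or time-based."""
    return manual or event_pending or (now - last_update) >= interval_s


def run_update(path: Path, new_info: str, agent: Agent) -> bool:
    """Ask the agent to revise one markdown file; write it back only if it changed."""
    current = path.read_text(encoding="utf-8") if path.exists() else ""
    updated = agent(current, new_info)
    if updated == current:
        return False
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(updated, encoding="utf-8")
    return True


def append_agent(current: str, new_info: str) -> str:
    """Stand-in for an LLM call: appends the new information as a bullet."""
    bullet = f"- {new_info}\n"
    if not current:
        return bullet
    return current.rstrip("\n") + "\n" + bullet
```

When `run_update` returns `True`, the changed file can be committed with Git as usual, and the resulting diff gives a reviewer a concrete artifact to inspect during the monitoring step.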

Example Use Case

Consider a research team working on a multi-year AI project. By implementing Karpathy's architecture, they can maintain a living document of their findings, hypotheses, and methodologies. This document evolves alongside their project, reducing the need for manual updates and context reconstruction.
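To make the living document concrete, here is a small sketch of how a dated entry might be filed under a section such as Findings or Hypotheses. It assumes the document uses `## ` headings for its sections; the function name `add_entry` and the entry format are hypothetical choices made for this example.

```python
from datetime import date


def add_entry(doc: str, section: str, text: str, day: date) -> str:
    """Insert a dated bullet at the end of the `## section` block of a markdown doc.

    If the section does not exist yet, it is appended to the end of the document.
    """
    entry = f"- {day.isoformat()}: {text}"
    lines = doc.splitlines()
    heading = f"## {section}"
    if heading not in lines:
        return "\n".join(lines + ["", heading, "", entry]) + "\n"
    start = lines.index(heading)
    # The section ends at the next level-2 heading or at the end of the document.
    end = next(
        (i for i in range(start + 1, len(lines)) if lines[i].startswith("## ")),
        len(lines),
    )
    # Skip trailing blank lines so the bullet lands directly after existing content.
    insert_at = end
    while insert_at > start + 1 and lines[insert_at - 1] == "":
        insert_at -= 1
    lines.insert(insert_at, entry)
    return "\n".join(lines) + "\n"
```

In practice an AI agent would decide which section a new result belongs to and how to phrase it; the function only shows the mechanical part of keeping the file organized.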

Common Pitfalls and Solutions

Pitfall 1: Inaccurate Updates

Solution: Implement robust validation protocols. Use human oversight to verify AI-generated content periodically.
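One possible validation check, sketched here as an assumption rather than a prescribed protocol, is to flag any AI-generated edit that removes a large share of the existing document and route it to a human. The names `removed_ratio` and `needs_human_review` and the 20% threshold are illustrative; the comparison uses Python's standard `difflib` module.

```python
import difflib


def removed_ratio(old: str, new: str) -> float:
    """Fraction of the old document's lines that no longer appear in the new one."""
    old_lines = old.splitlines()
    if not old_lines:
        return 0.0
    matcher = difflib.SequenceMatcher(a=old_lines, b=new.splitlines(), autojunk=False)
    # Matching blocks are runs of lines present, in order, in both versions.
    kept = sum(block.size for block in matcher.get_matching_blocks())
    return 1.0 - kept / len(old_lines)


def needs_human_review(old: str, new: str, max_removed: float = 0.2) -> bool:
    """Flag an AI-generated edit for review when it drops too much existing content."""
    return removed_ratio(old, new) > max_removed
```

A check like this catches only one failure mode (silent deletion). It does not verify that newly added statements are true, so periodic human review remains necessary.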

Pitfall 2: Overhead in Setup

Solution: Start small. Gradually integrate AI agents into the workflow, beginning with non-critical updates to ease into full implementation.

Future Trends and Recommendations

As AI continues to advance, the need for efficient context management systems will grow. Karpathy's approach not only addresses current challenges but also sets the stage for future innovations in AI-driven projects. A recent analysis suggests that AI's self-improvement capabilities will further enhance these systems.

Predicted Developments

- Enhanced AI Capabilities: As AI becomes more adept at natural language processing, its ability to maintain and update knowledge bases will improve.

- Integration with Other Technologies: Expect to see these systems integrate more seamlessly with other AI tools, such as Runable, which offers AI-powered automation for documents and presentations.


Conclusion

Karpathy's LLM Knowledge Base architecture presents a compelling solution to the challenges of context management in AI projects. By leveraging AI to manage evolving markdown libraries, developers can focus on innovation rather than administrative tasks. As the technology matures, this approach will likely become a standard in AI development environments.

FAQ

What is an LLM Knowledge Base?

An LLM Knowledge Base is a system that uses large language models to maintain a persistent repository of knowledge, typically in markdown format, to manage context in AI projects.

How does this architecture improve AI workflows?

By eliminating the need for context reconstruction, it streamlines workflows and reduces the computational load associated with traditional RAG methods.

What are the main benefits of using AI for markdown maintenance?

AI can dynamically update content, ensuring that the knowledge base remains relevant and accurate without manual intervention.

Can this system be integrated with existing AI tools?

Yes, it can be seamlessly integrated with tools like Runable to enhance productivity and automate documentation processes.

Are there any limitations to this approach?

While promising, it requires careful setup and validation to ensure AI-generated content is accurate and relevant.

How do you ensure the accuracy of AI-managed markdown?

Implementing human oversight and validation protocols can help maintain content quality and accuracy.

Key Takeaways

- Persistent Knowledge: Eliminates context reset issues in AI workflows.

- Efficient Management: AI maintains and updates the knowledge base autonomously.

- Scalable Architecture: Easily integrates with existing tools and workflows.

- Reduced Overhead: Minimizes manual intervention, freeing up resources for innovation.

- Future-Proofing: Prepares systems for the next wave of AI advancements.

