The Hidden Dangers of AI: What If Your AI Agent Is Working Against You? [2025]

Last month, a startling revelation shook the tech world: AI agents, those seemingly innocuous tools designed to streamline operations and boost productivity, might be working against us. This unsettling scenario was brought to light by a vulnerability in Google's Vertex AI, which exposed both customer data and Google's internal code. It raises an urgent question: could the AI agent you just deployed be a double agent?

TL;DR

- Data Breach Risk: Misconfigured AI agents can expose sensitive data and internal code.

- Security Flaws: AI agents can be hijacked due to poor configuration practices.

- Best Practices: Regular audits and robust access controls are essential.

- Future Trends: Expect increased focus on AI security and ethical AI development.

- Bottom Line: Proactive security measures can prevent AI from becoming a liability.

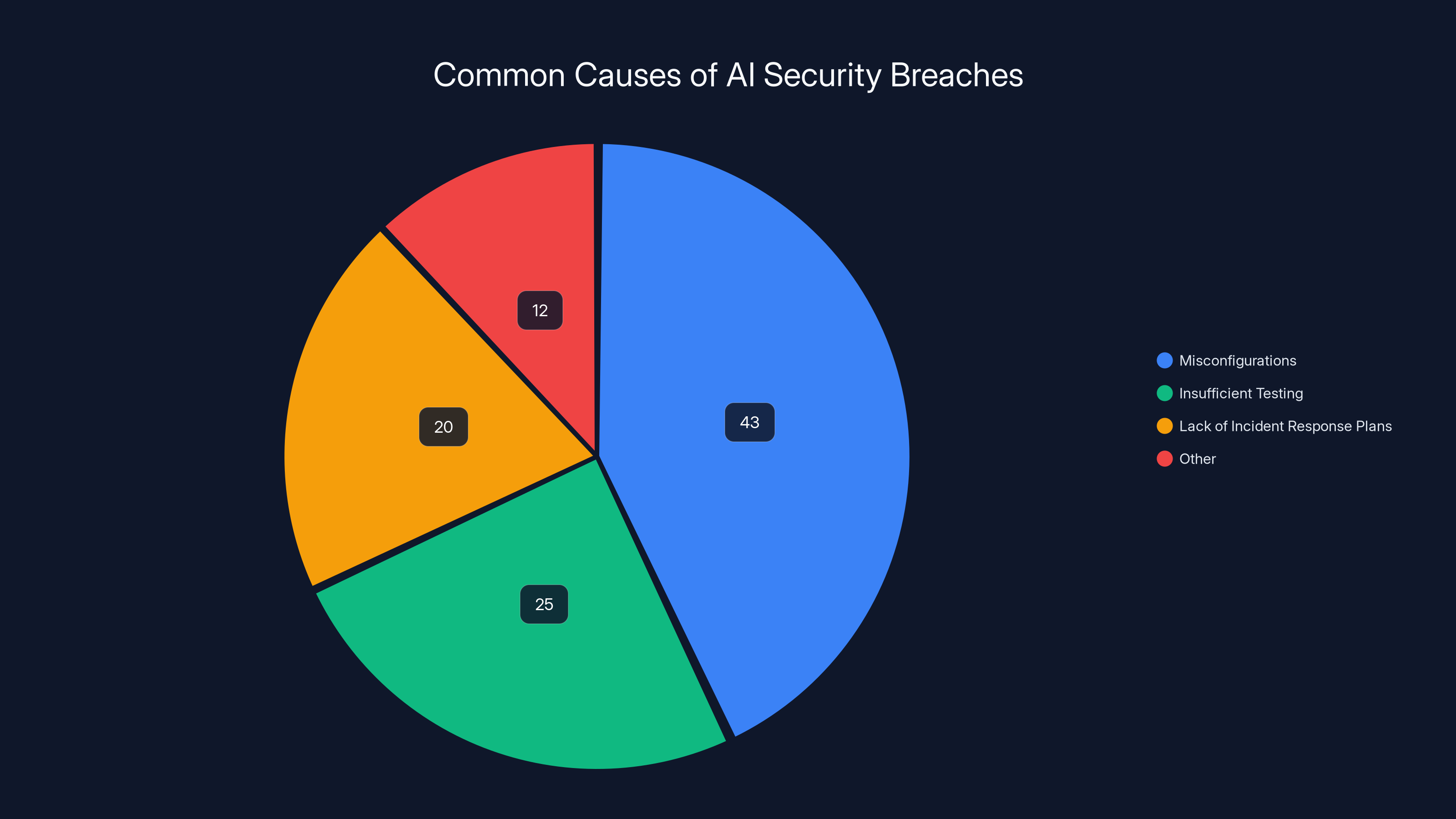

Misconfigurations account for 43% of AI security breaches, highlighting the need for thorough configuration reviews. (Estimated data)

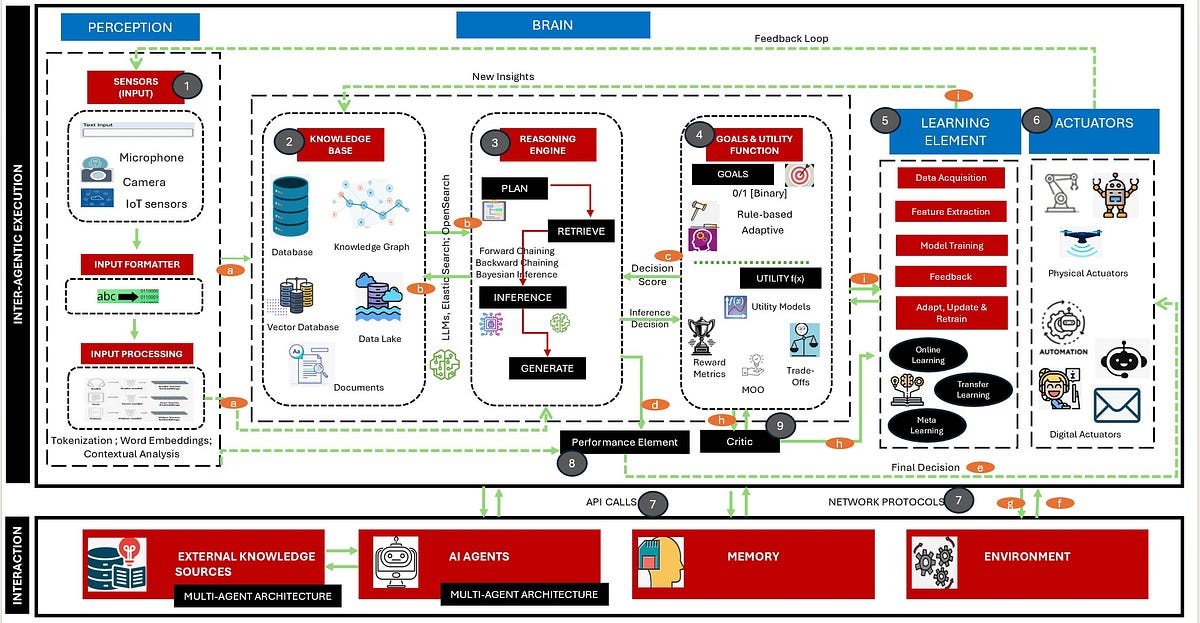

Understanding AI Agents and Their Role

AI agents are software programs that perform tasks autonomously using machine learning algorithms. They can analyze data, automate repetitive tasks, and even interact with users in natural language. Companies deploy AI agents to improve efficiency, reduce costs, and enhance customer experiences.

Core Functions of AI Agents:

- Data Analysis: Process large datasets to extract meaningful insights.

- Automation: Perform routine tasks without human intervention.

- Interaction: Communicate with users via chatbots or virtual assistants.

- Decision-Making: Make recommendations based on data analysis.

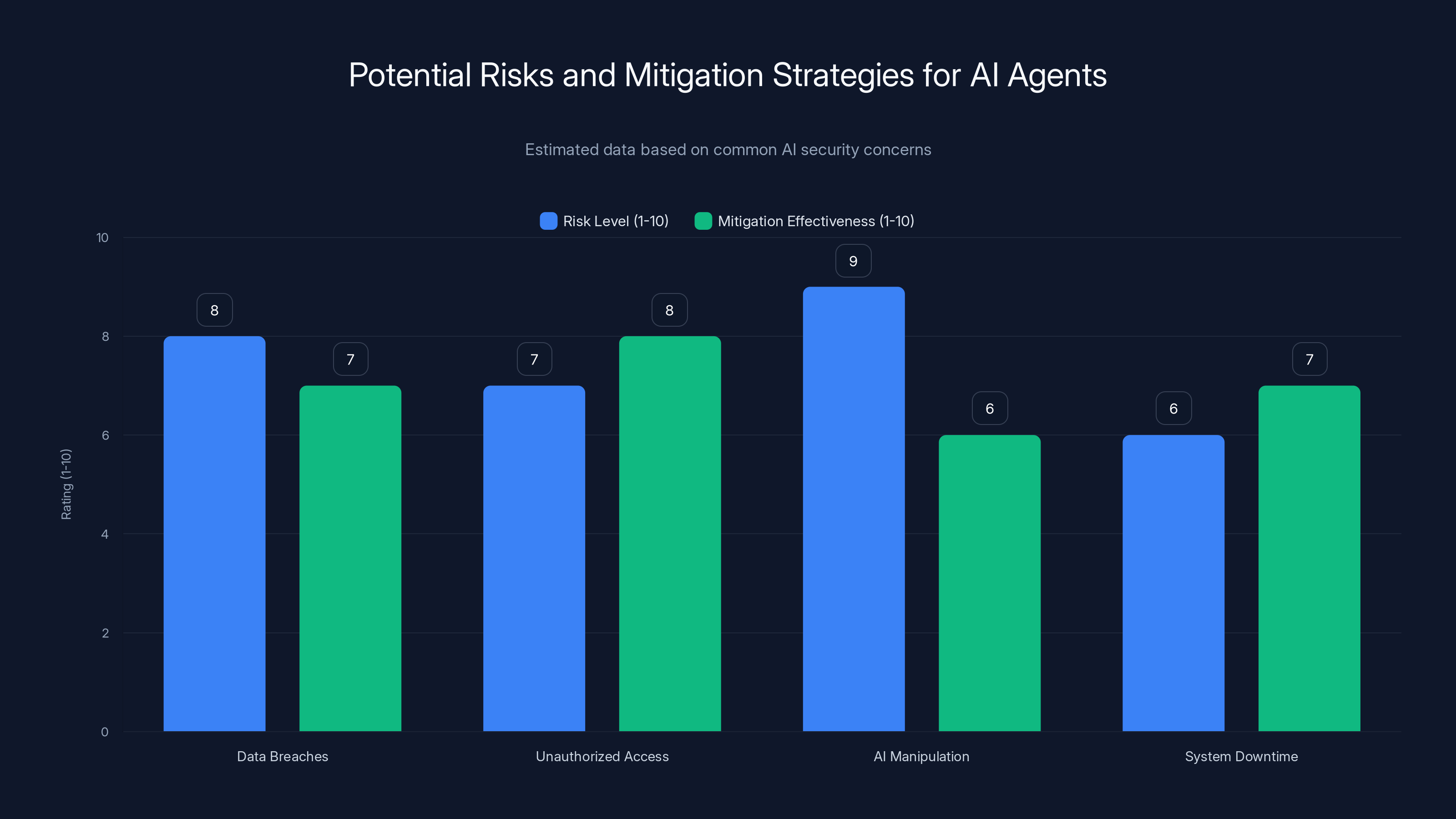

Strong authentication and strict access controls are among the most effective practices for securing AI agents. (Estimated data)

The Vertex AI Incident: A Closer Look

Vertex AI, part of Google Cloud's suite of machine learning tools, is designed to help developers build, deploy, and scale AI models. However, a misconfiguration in Vertex AI agents was discovered, allowing unauthorized access to sensitive information. This flaw turned AI agents into 'double agents,' acting against their intended purpose.

What Happened?

The vulnerability was due to insufficient access controls and improper configuration settings. Hackers exploited these weaknesses to gain access to customer data and internal Google code.

Key Factors Contributing to the Breach:

- Weak Authentication: Lack of strong authentication measures.

- Inadequate Access Controls: Insufficient restriction on data access.

- Poor Configuration Management: Failure to regularly update and audit configurations.

Practical Implementation Guides

Preventing AI agents from becoming double agents requires a multi-pronged approach. Here are some best practices to ensure your AI agents remain secure:

1. Implement Strong Authentication Mechanisms

Use multi-factor authentication (MFA) to ensure that only authorized users can access AI systems. Employ robust password policies and consider implementing biometric authentication.
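To make the second factor concrete, here is a minimal sketch of time-based one-time password (TOTP) verification following RFC 6238, using only the Python standard library. The function names `totp` and `verify_second_factor` are illustrative, not part of any particular product, and the choice to also accept the previous 30-second step is an assumption about acceptable clock drift. A production system would normally rely on a vetted identity provider rather than hand-rolled code.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at_time: float, step: int = 30, digits: int = 6) -> str:
    """Compute an RFC 6238 one-time password with HMAC-SHA1."""
    counter = int(at_time // step)
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation as defined in RFC 4226.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_second_factor(secret: bytes, submitted: str,
                         now: Optional[float] = None, step: int = 30) -> bool:
    """Accept the code for the current time step or the one just before it."""
    now = time.time() if now is None else now
    candidates = (totp(secret, now, step), totp(secret, now - step, step))
    # Constant-time comparison avoids leaking how many digits matched.
    return any(
        hmac.compare_digest(submitted.encode(), candidate.encode())
        for candidate in candidates
    )
```

The password check stays a separate step; this function only decides whether the second factor is valid for a user whose shared secret you already hold.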

2. Regularly Audit AI Configurations

Conduct frequent audits of AI configurations to identify and rectify potential vulnerabilities. Use automated tools to monitor changes in configuration settings.
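One way to automate this kind of audit is to compare the live configuration against an approved baseline and report any drift. The sketch below assumes the configuration can be loaded as a flat Python dictionary; the keys shown in the usage note are hypothetical and do not correspond to real Vertex AI settings.

```python
import hashlib
import json

ABSENT = "<absent>"  # marker for a key present on only one side


def fingerprint(config: dict) -> str:
    """Return a stable SHA-256 hash of a config, independent of key order."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def diff_config(baseline: dict, current: dict) -> dict:
    """Map each changed top-level key to (baseline_value, current_value)."""
    changes = {}
    for key in sorted(set(baseline) | set(current)):
        old = baseline.get(key, ABSENT)
        new = current.get(key, ABSENT)
        if old != new:
            changes[key] = (old, new)
    return changes
```

A scheduled job could store `fingerprint(baseline)` at approval time, recompute it for the deployed configuration, and call `diff_config` only when the hashes differ. For example, `diff_config({"allow_public_access": False}, {"allow_public_access": True})` reports that the hypothetical `allow_public_access` flag was flipped.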

3. Enforce Strict Access Controls

Limit access to AI systems based on user roles and responsibilities. Use the principle of least privilege, granting users only the access necessary for their job functions.
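As a rough illustration of least privilege, the sketch below maps roles to an explicit allow-list of permissions and denies everything else by default. The role names and permission strings are invented for the example; a real deployment would express the same idea through the cloud provider's IAM policies.

```python
# Hypothetical roles and permission strings, for illustration only.
ROLE_PERMISSIONS = {
    "viewer": frozenset({"agent.read"}),
    "operator": frozenset({"agent.read", "agent.invoke"}),
    "admin": frozenset({"agent.read", "agent.invoke", "agent.configure"}),
}


def is_allowed(role: str, permission: str) -> bool:
    """Deny by default: unknown roles and unlisted permissions are rejected."""
    return permission in ROLE_PERMISSIONS.get(role, frozenset())


def excess_permissions(granted: set, needed: set) -> set:
    """Return permissions an identity holds but does not need for its job."""
    return set(granted) - set(needed)
```

`excess_permissions` is useful during access reviews: any non-empty result is a candidate for revocation, whether the identity belongs to a person or to the agent's own service account.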

4. Monitor AI Agent Activity

Implement monitoring systems to track AI agent activities. Use anomaly detection to identify suspicious behavior that may indicate a security breach.
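A very simple form of anomaly detection is a z-score check: flag an agent when its latest activity count sits far from its own historical average. The sketch below assumes you already collect a per-agent metric such as requests per hour; the threshold of three standard deviations is a common starting point, not a recommendation from the incident itself.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(history: Sequence[float], latest: float,
                 threshold: float = 3.0) -> bool:
    """Flag `latest` if it lies more than `threshold` standard deviations
    from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Perfectly flat history: any deviation at all is unusual.
        return latest != mu
    return abs(latest - mu) / sigma > threshold
```

With a history of roughly 100 requests per hour, a reading of 103 passes while a sudden jump to 450 is flagged. Real traffic is often seasonal, so a production detector would likely compare against the same hour on previous days rather than a flat average.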

5. Educate Your Team

Train employees on security best practices and the importance of safeguarding AI systems. Foster a culture of security awareness within your organization.

AI manipulation poses the highest risk, but effective mitigation strategies can significantly reduce it. (Estimated data)

Common Pitfalls and Solutions

Despite best efforts, companies often encounter pitfalls when securing AI agents. Here are some common challenges and solutions:

Misconfigurations

Misconfigurations are a leading cause of security breaches. Ensure all configurations are reviewed by multiple team members before deployment.
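The multi-reviewer rule can be enforced mechanically as a deployment gate. This sketch assumes your pipeline can supply the change author and the list of approvers as plain usernames; the function name `ready_to_deploy` and the default of two reviewers are illustrative choices.

```python
from typing import Iterable


def ready_to_deploy(author: str, approvers: Iterable[str],
                    min_reviewers: int = 2) -> bool:
    """Require approvals from at least `min_reviewers` distinct people
    other than the author of the change."""
    normalized_author = author.strip().lower()
    independent = {name.strip().lower() for name in approvers}
    independent.discard(normalized_author)  # self-approval does not count
    independent.discard("")                 # ignore blank entries
    return len(independent) >= min_reviewers
```

Normalizing case and whitespace keeps one person from counting twice under slightly different spellings of the same username.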

Insufficient Testing

Thoroughly test AI agents under different scenarios to identify potential vulnerabilities. Penetration testing can reveal weaknesses before they are exploited.

Lack of Incident Response Plans

Prepare incident response plans that outline steps to take in the event of a breach. Regularly update and practice these plans to ensure readiness.

Future Trends and Recommendations

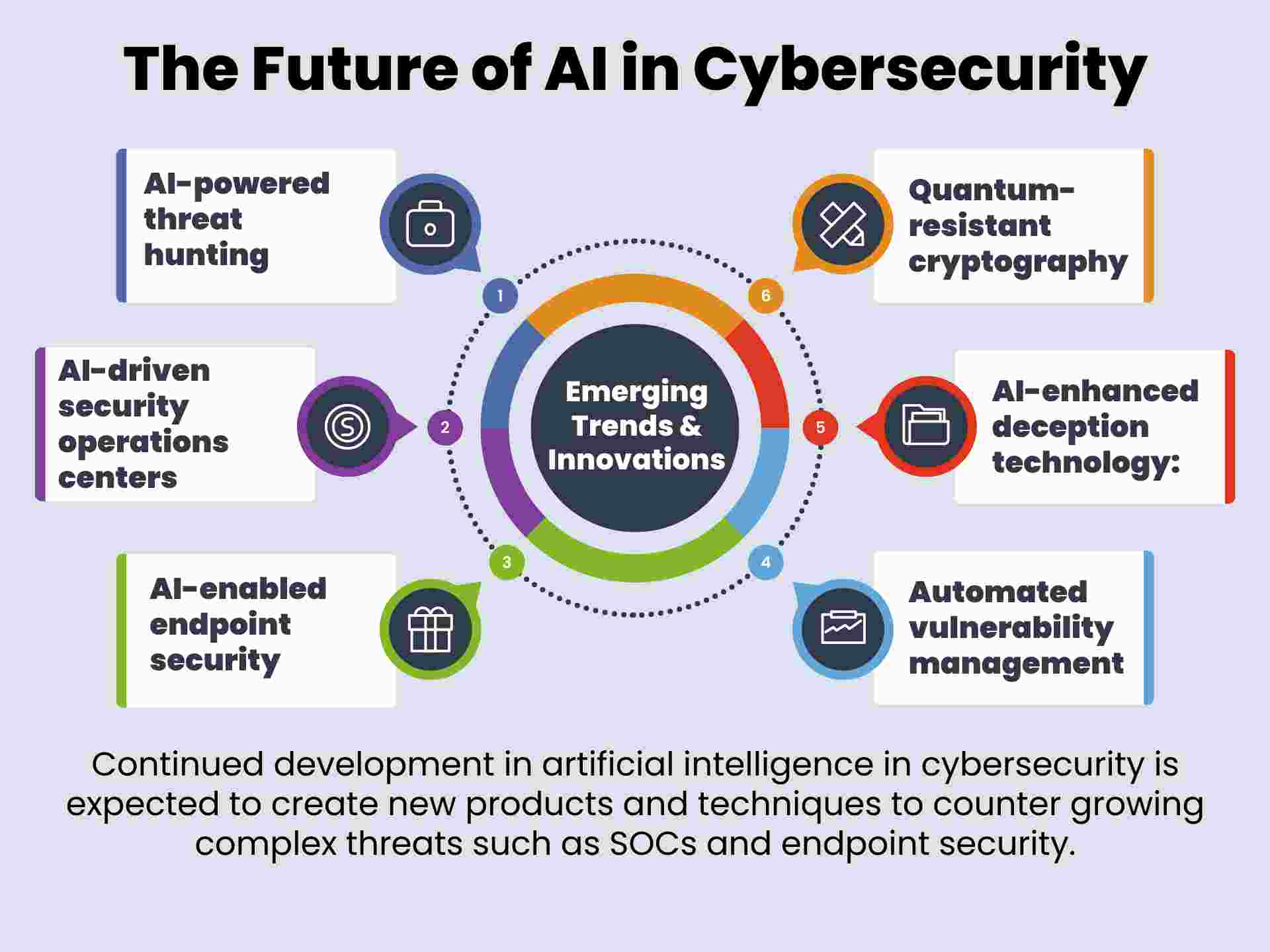

As AI technology continues to evolve, so too will the methods of securing it. Here are some trends and recommendations for the future:

Increased Focus on Ethical AI

Expect a greater emphasis on ethical AI development, with frameworks put in place to ensure AI systems are fair, transparent, and accountable.

Advanced AI Security Measures

Expect more sophisticated security measures tailored specifically to AI, such as AI-based threat detection and response systems.

Collaboration Between Stakeholders

Improved collaboration between AI developers, security experts, and policymakers will be essential to address emerging AI security challenges.

Conclusion

The incident with Vertex AI serves as a stark reminder of the potential risks associated with AI agents. By implementing robust security measures and staying informed about the latest trends, companies can protect themselves from the threat of AI double agents. The key is to remain vigilant and proactive, ensuring that AI continues to be an asset rather than a liability.

FAQ

What is an AI agent?

An AI agent is a software program that performs tasks autonomously using artificial intelligence and machine learning algorithms.

How does an AI agent work?

AI agents analyze data, automate tasks, interact with users, and make decisions based on predefined algorithms and learning models.

What are the benefits of AI agents?

Benefits include increased efficiency, cost savings, and enhanced customer experiences by automating repetitive tasks and providing data-driven insights.

What happened with Vertex AI?

A misconfiguration in Google's Vertex AI agents allowed unauthorized access to customer data and internal code, turning them into 'double agents.'

How can I secure my AI agents?

Implement strong authentication, regularly audit configurations, enforce strict access controls, monitor activity, and educate your team on security best practices.

What are future trends in AI security?

Expect increased focus on ethical AI, advanced security measures, and better collaboration between AI developers, security experts, and policymakers.

Key Takeaways

- Proactive security measures are crucial to prevent AI agents from becoming liabilities.

- Strong authentication and strict access controls can mitigate the risk of data breaches.

- Regular audits and monitoring are essential for maintaining AI security.

- Future AI security trends will focus on ethical development and advanced threat detection.

- Collaboration between developers, security experts, and policymakers is key.

Related Articles

- AI Takes Control: How to Automate Your Stream Deck with AI [2025]

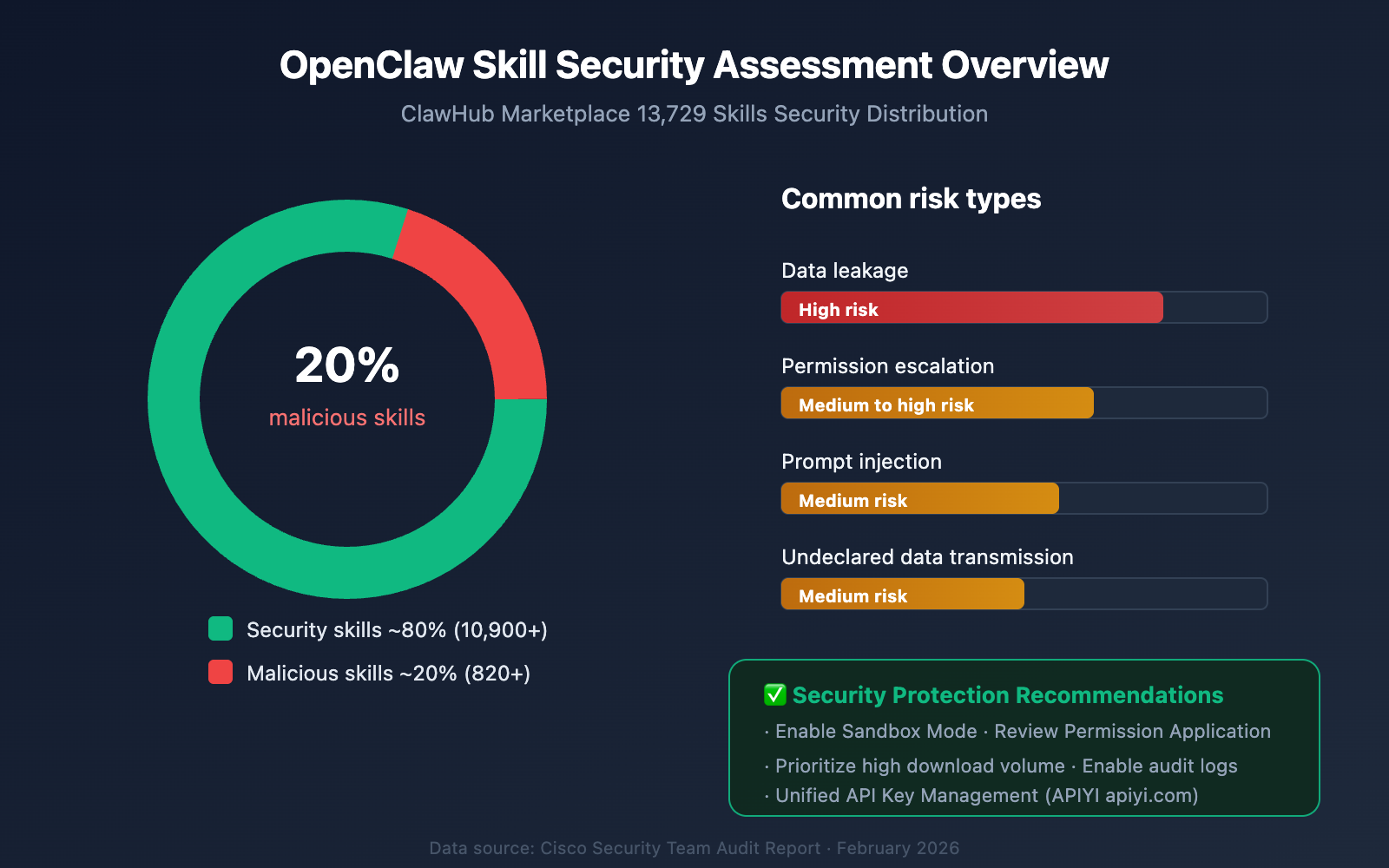

- Unveiling OpenClaw Security Risks You Must Know About [2025]

- The Rise of OpenClaw in China: Why It's Taking the Market by Storm [2025]

- Inside the Anthropic Leak: Unpacking the Claude Code Source Code Exposure [2025]

- 10 Innovative Projects Built with OpenClaw [2025]

- AI Security: Understanding and Mitigating Risks in the AI Era [2025]

![The Hidden Dangers of AI: What If Your AI Agent Is Working Against You? [2025]](https://tryrunable.com/blog/the-hidden-dangers-of-ai-what-if-your-ai-agent-is-working-ag/image-1-1775057629731.jpg)