Revolutionizing Data Management: Google PM's Always On Memory Agent and the Future of LLM-Driven Persistent Memory [2025]

Google's recently released Always On Memory Agent is an open-source project that challenges the conventional reliance on vector databases: it uses large language models (LLMs), rather than a vector store, to manage persistent memory. This article looks at how the agent works, what it is built on, and what it means for AI and enterprise developers.

TL;DR

- Google's Always On Memory Agent: Uses LLMs instead of traditional vector databases for persistent memory.

- Key Innovation: Continuous data ingestion and retrieval without database dependency.

- Built with ADK and Gemini 3.1 Flash-Lite: Harnesses Google's Agent Development Kit and the cost-efficient Gemini 3.1 Flash-Lite model.

- Open Source and Commercial Use: Released under MIT License, fostering innovation and adoption.

- Implications for Developers: Simplifies data management, enhances AI applications, and reduces costs.

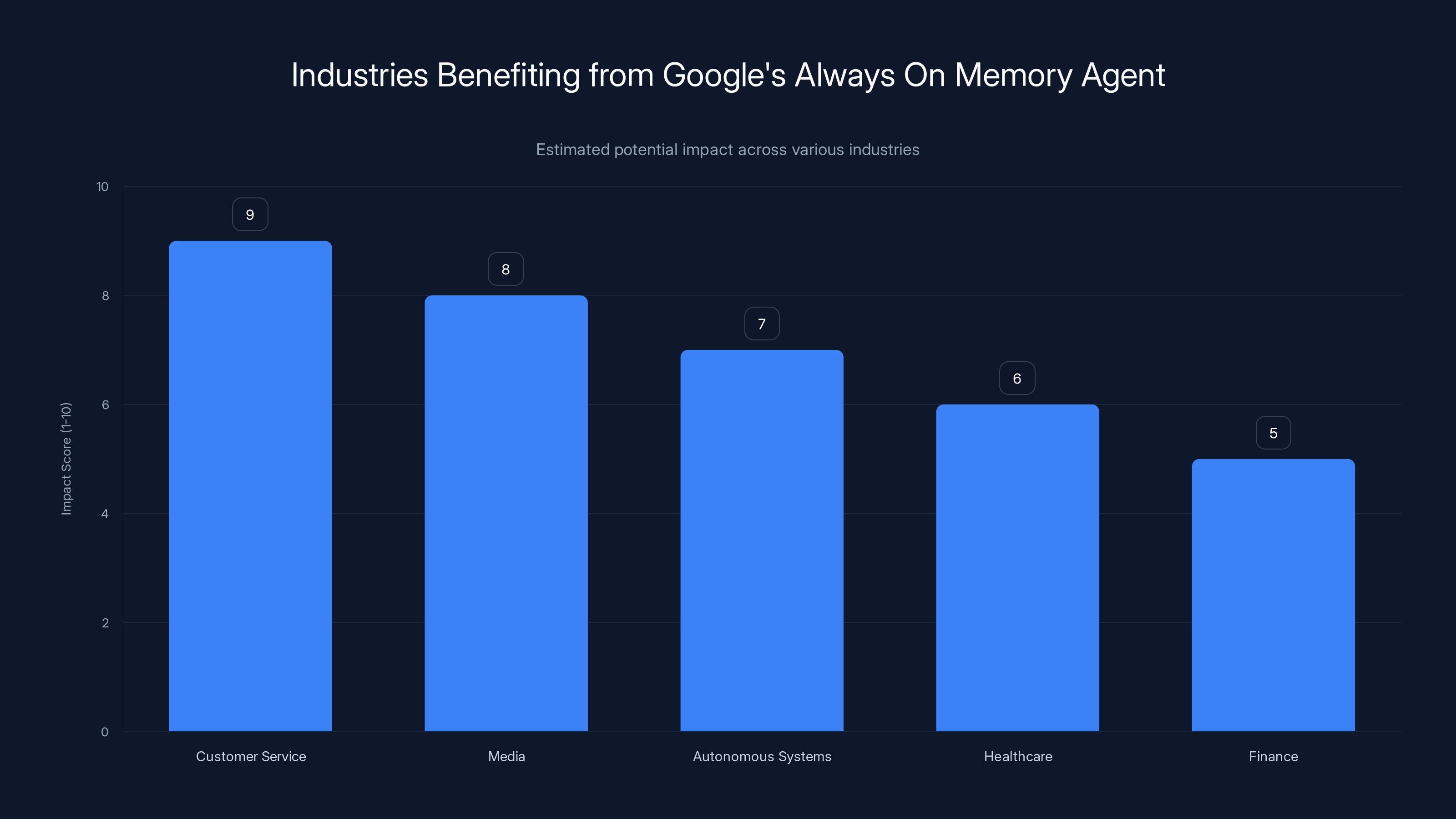

Customer service and media industries are expected to see the highest impact from adopting Google's Always On Memory Agent. Estimated data.

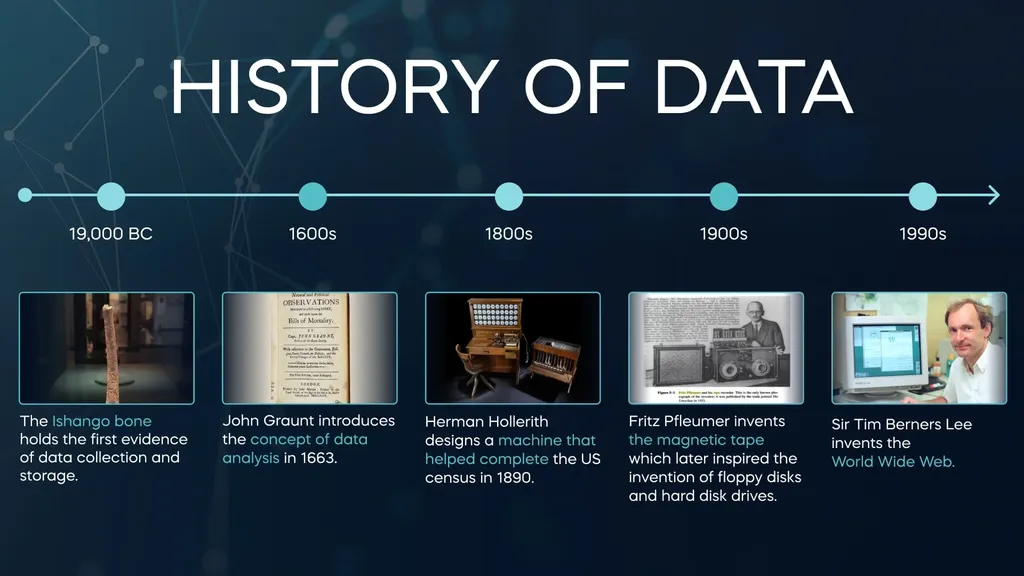

The Evolution of Data Management: A Historical Context

Data management has long underpinned technological progress. Databases let organizations store and retrieve data efficiently, but as data volumes grew, so did the complexity of managing them. Vector databases emerged to handle the high-dimensional data typical of AI applications, yet they have their own limitations, especially in cost and scalability.

Enter Google's Always On Memory Agent

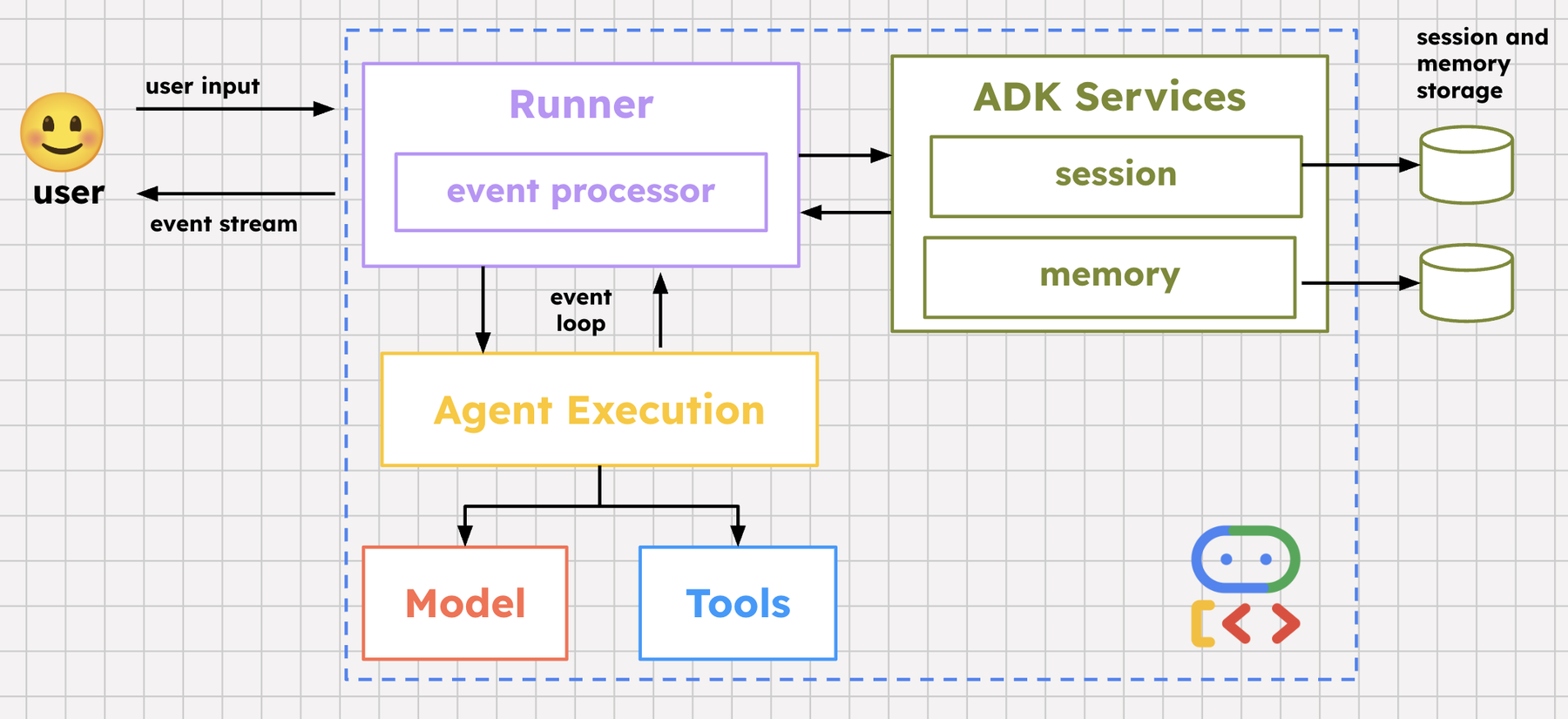

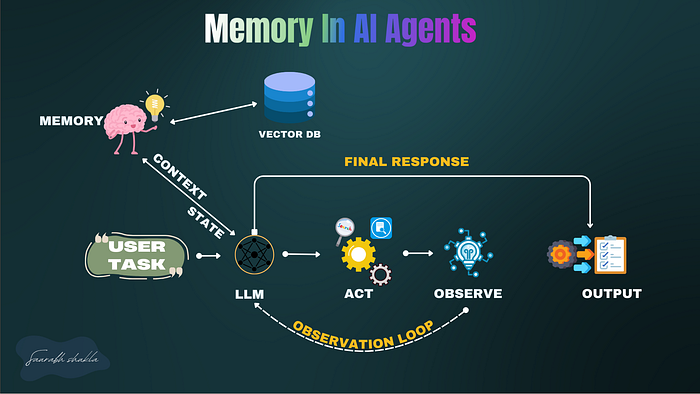

The Always On Memory Agent represents a paradigm shift. By using LLMs for persistent memory, Google proposes a system that continuously ingests data, consolidates it in the background, and retrieves it without the overhead of traditional databases.

What Makes It Different?

- Continuous Ingestion: The agent is designed to absorb and process data as it arrives, eliminating bottlenecks.

- Background Consolidation: Data is organized and stored without interrupting the flow of new information.

- Intelligent Retrieval: Instead of querying a static database, the agent uses LLMs to understand and fetch the needed data dynamically.
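The three behaviours above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the project's actual code: `MemoryAgent`, `fake_llm`, and the batch size are hypothetical names and values, memory is held in plain Python lists, and the model call is a deterministic stub so the example runs offline.

```python
import asyncio
from typing import Callable, List

# Hypothetical stand-in for a real model call: takes a prompt, returns text.
LLM = Callable[[str], str]


class MemoryAgent:
    def __init__(self, llm: LLM) -> None:
        self.llm = llm
        self.inbox: asyncio.Queue = asyncio.Queue()
        self.raw: List[str] = []       # ingested but not yet consolidated
        self.insights: List[str] = []  # consolidated summaries

    async def ingest(self, text: str) -> None:
        """Continuous ingestion: accept data immediately, process later."""
        await self.inbox.put(text)

    async def consolidate_forever(self, batch_size: int = 2) -> None:
        """Background consolidation: fold batches of raw notes into summaries."""
        while True:
            self.raw.append(await self.inbox.get())
            if len(self.raw) >= batch_size:
                prompt = "Summarize these notes:\n" + "\n".join(self.raw)
                self.insights.append(self.llm(prompt))
                self.raw.clear()
            self.inbox.task_done()

    def retrieve(self, question: str) -> str:
        """Retrieval: let the model read the memory instead of querying an index."""
        context = "\n".join(self.insights + self.raw)
        return self.llm(f"Memory:\n{context}\n\nQuestion: {question}")


def fake_llm(prompt: str) -> str:
    """Deterministic stub so the sketch runs without network access."""
    return f"[model saw {len(prompt.splitlines())} lines]"


async def demo() -> MemoryAgent:
    agent = MemoryAgent(fake_llm)
    worker = asyncio.create_task(agent.consolidate_forever())
    for note in ["Alice prefers email", "Alice is on the Pro plan", "Order 7 shipped"]:
        await agent.ingest(note)
    await agent.inbox.join()  # wait until the background task has caught up
    worker.cancel()
    return agent
```

Running `asyncio.run(demo())` leaves one consolidated summary and one raw note in memory. The point of the design is that `ingest` never waits on the model; only the background task and `retrieve` do.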

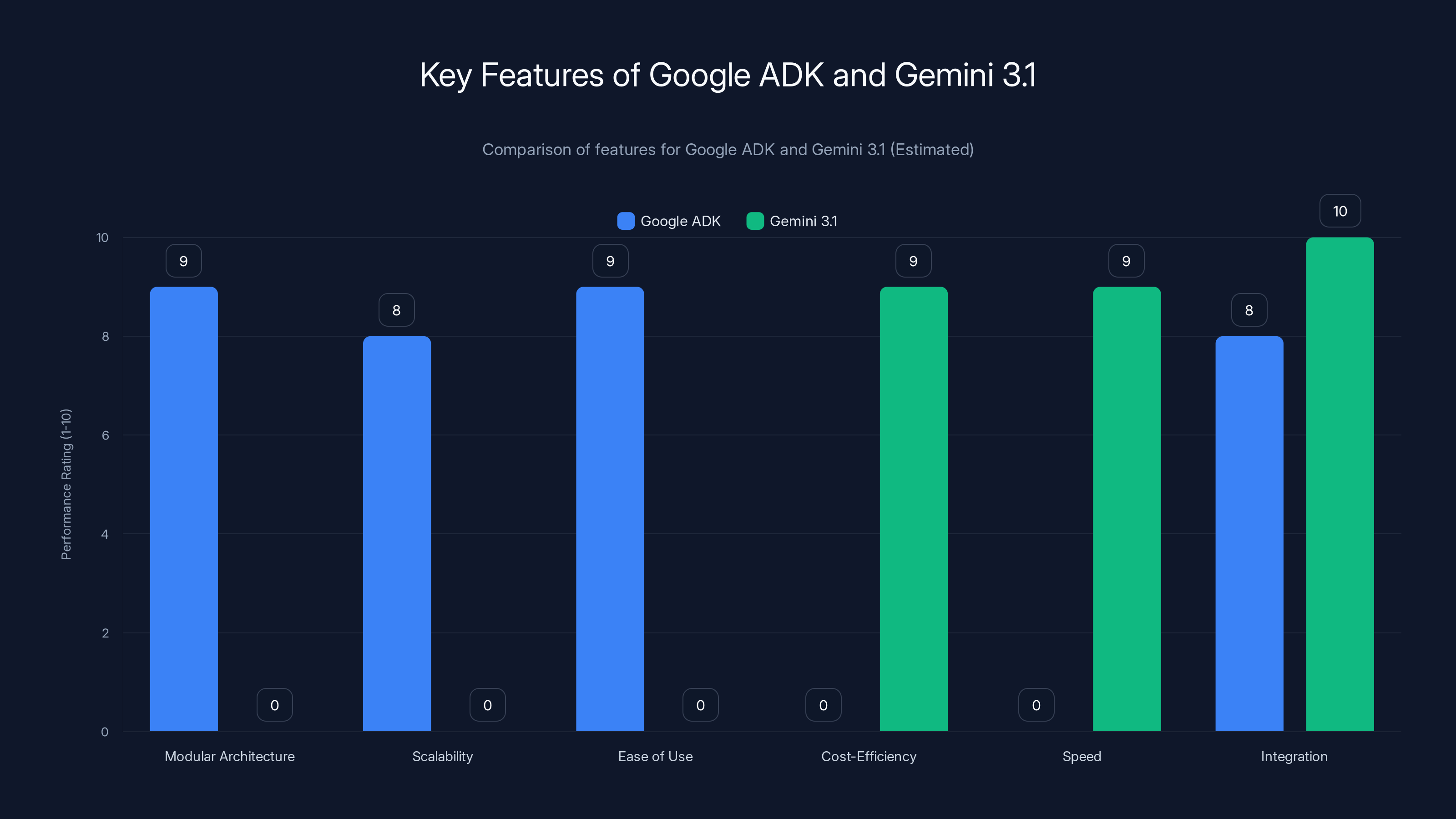

Google ADK excels in modularity and ease of use, while Gemini 3.1 leads in cost-efficiency and speed. Estimated data based on feature descriptions.

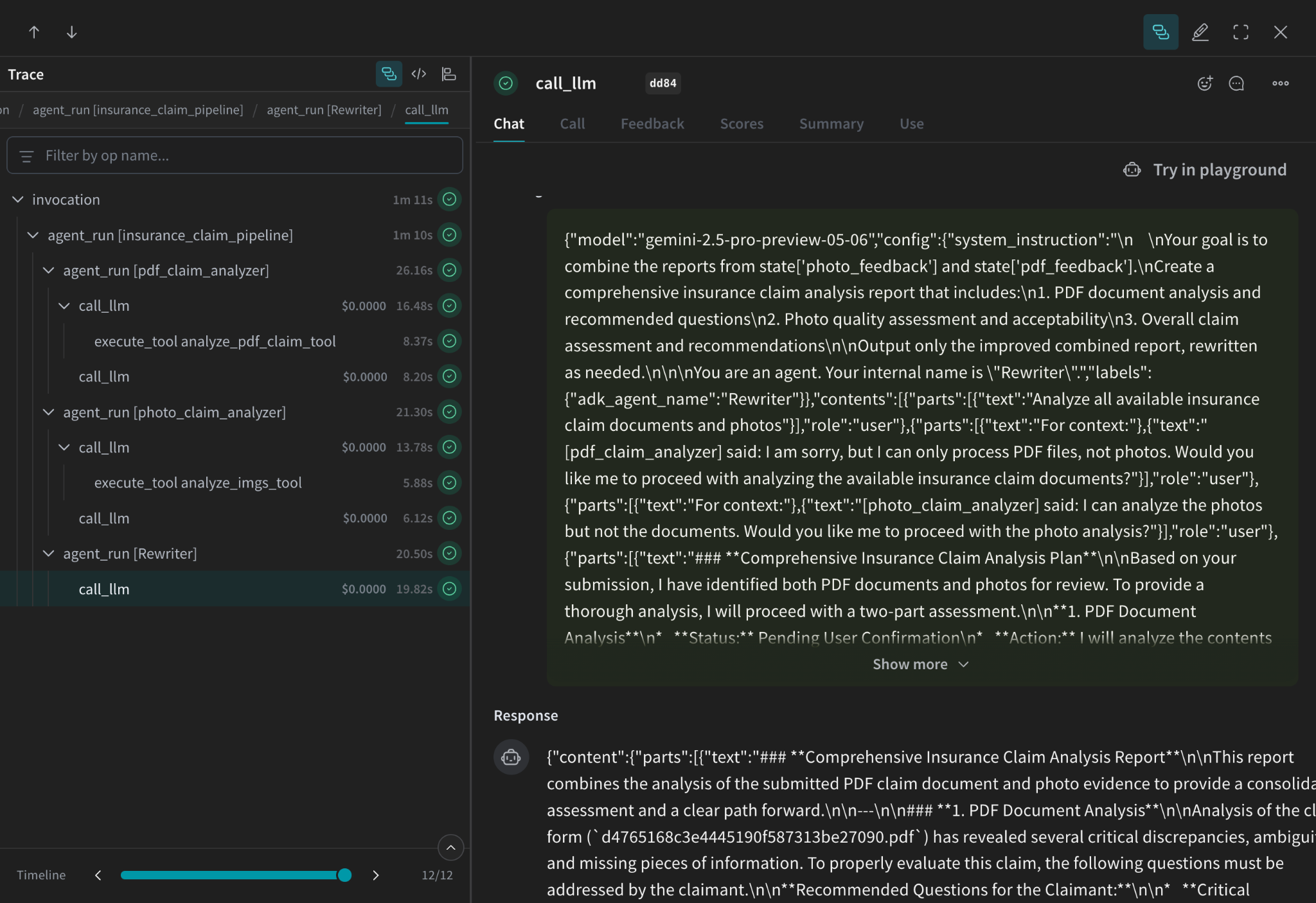

The Technology Behind the Innovation

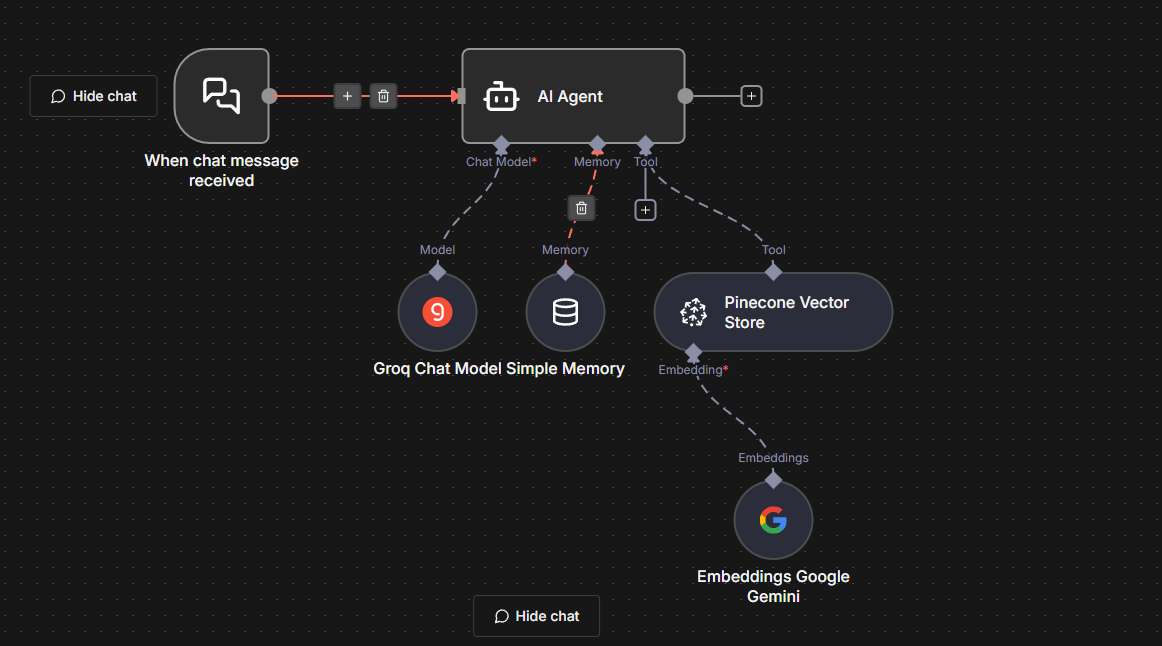

Google Agent Development Kit (ADK)

Introduced in spring 2025, the ADK is a toolkit for building agent systems. It is designed to simplify integrating AI capabilities into applications and gives developers a flexible framework to build on.

- Modular Architecture: Allows developers to customize agents to fit specific needs.

- Scalability: Built to support applications from small startups to large enterprises.

- Ease of Use: Intuitive interfaces and comprehensive documentation streamline the development process.

Gemini 3.1 Flash-Lite

Released on March 3, 2026, Gemini 3.1 Flash-Lite is Google's latest advancement in AI models.

- Cost-Efficient: Offers high performance at a lower operational cost.

- Speed: Optimized to handle large-scale data with minimal latency.

- Integration: Seamlessly works with the ADK, enhancing the capabilities of the Always On Memory Agent.

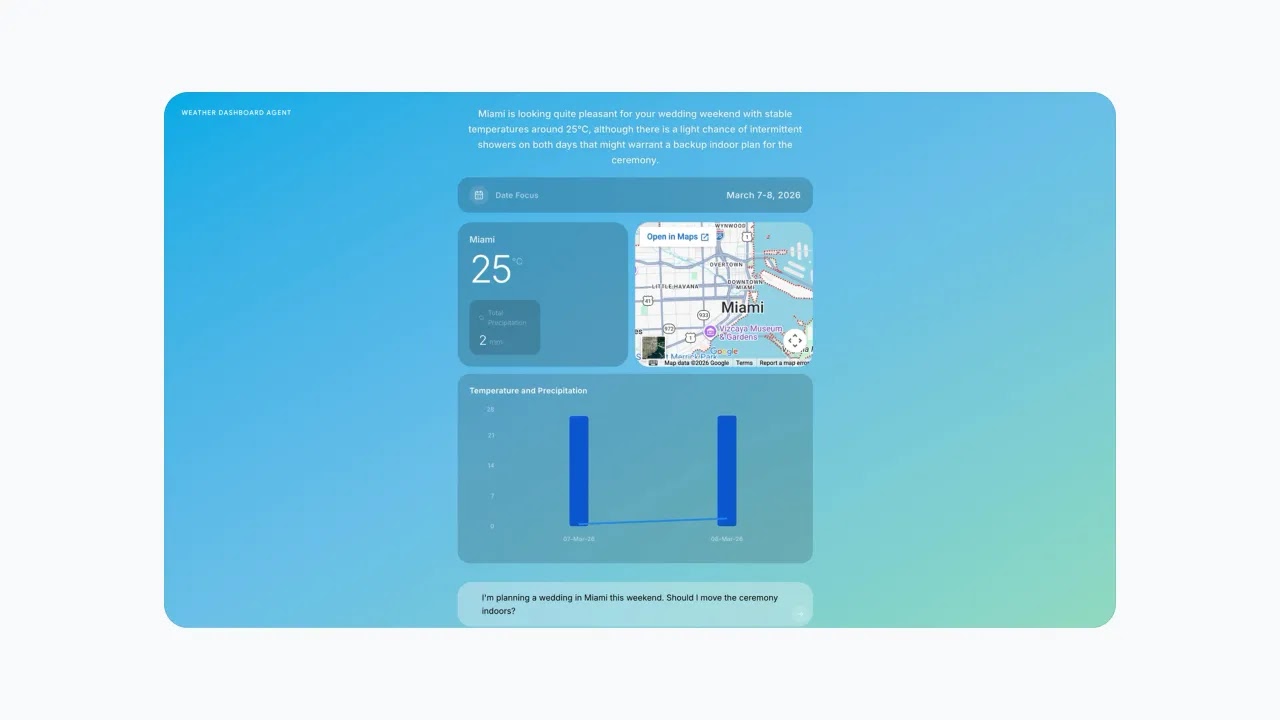

Real-World Applications and Use Cases

One of the most compelling aspects of the Always On Memory Agent is its versatility. Here are some practical scenarios where this technology can shine:

1. Real-Time Customer Support

Imagine a customer service platform that can instantly pull relevant customer history and preferences without querying a traditional database. The Always On Memory Agent can provide a seamless experience, reducing wait times and improving customer satisfaction.

2. Dynamic Content Generation

For media companies, the ability to generate content that adapts to user preferences in real time is invaluable. By using LLMs for content retrieval, companies can offer personalized experiences at scale.

3. Autonomous Systems

In the field of autonomous vehicles or drones, real-time data processing is crucial. The Always On Memory Agent can continuously learn from its environment, making intelligent decisions on the fly.

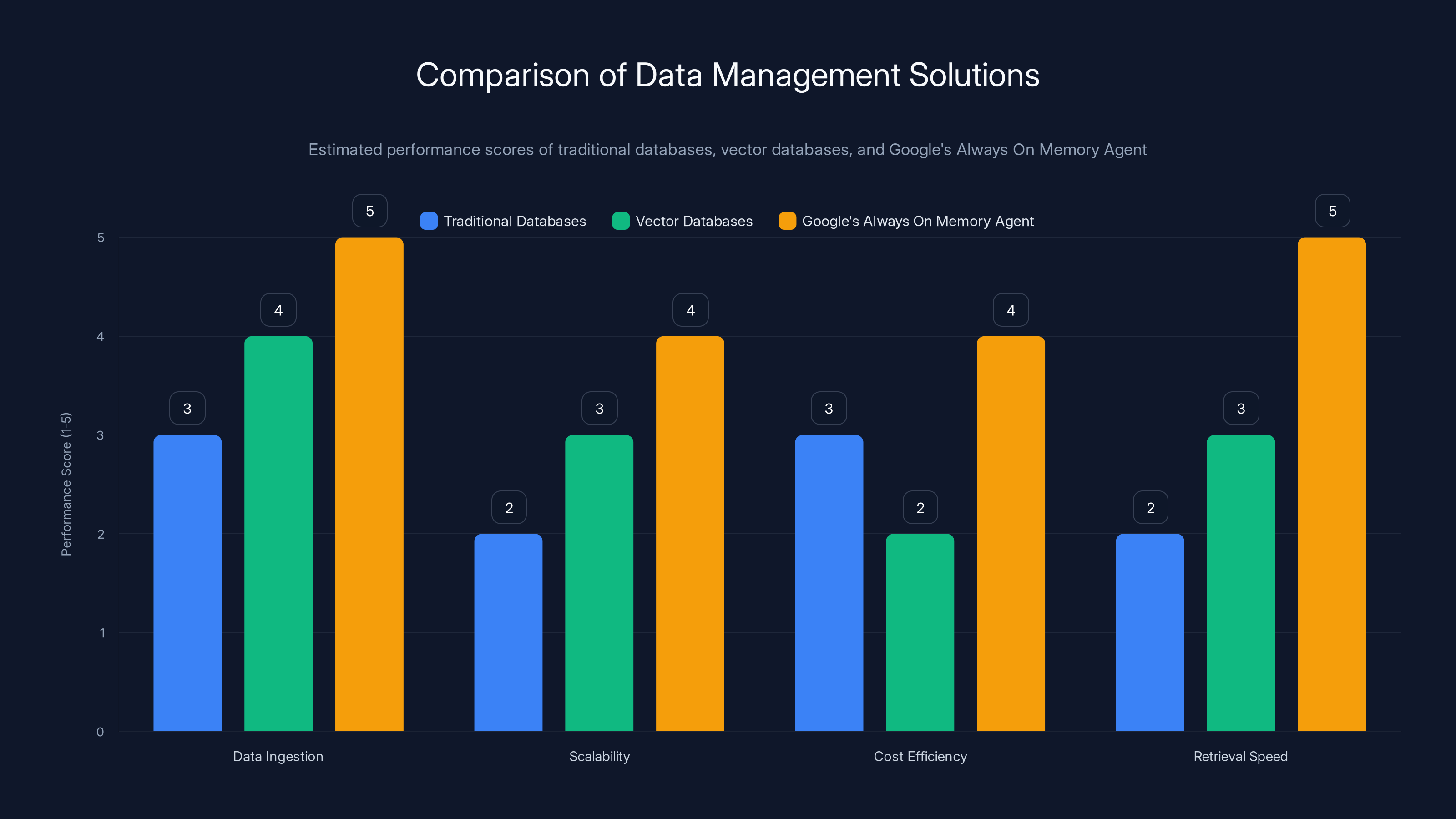

Google's Always On Memory Agent outperforms traditional and vector databases in data ingestion, scalability, and retrieval speed, while also offering better cost efficiency. Estimated data based on typical feature performance.

Implementation Guide: Getting Started with Always On Memory Agent

Setting Up the Environment

To begin, clone the Always On Memory Agent's repository from GitHub. Make sure the prerequisites are in place first: Python, the ADK, and access to Gemini 3.1.

```bash
# Clone the repository
git clone https://github.com/google/always-on-memory-agent.git

# Navigate into the directory
cd always-on-memory-agent

# Install dependencies
pip install -r requirements.txt
```

Configuring the Agent

The next step involves configuring the agent to suit your specific needs. The configuration file allows you to define data sources, processing rules, and output formats.

```yaml
# Configuration file example
data_sources:
  - type: api
    url: https://api.example.com/data
processing_rules:
  - keyword_extraction: true
  - sentiment_analysis: false
output_format:
  - json
```
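Before starting the agent, it can help to validate a configuration like the one above. The following sketch assumes the YAML has already been parsed into a Python dict (for example with PyYAML's `yaml.safe_load`). `AgentConfig` and `parse_config` are hypothetical helpers, not part of the repository, and the rule that only `api` sources are accepted is an assumption drawn from the single example shown.

```python
from dataclasses import dataclass
from typing import Any, Dict, List
from urllib.parse import urlparse


@dataclass(frozen=True)
class AgentConfig:
    source_urls: List[str]
    rules: Dict[str, bool]
    output_formats: List[str]


def parse_config(raw: Dict[str, Any]) -> AgentConfig:
    """Validate a dict shaped like the YAML example and flatten it."""
    urls: List[str] = []
    for source in raw.get("data_sources", []):
        url = source.get("url", "")
        # Assumption: only "api" sources with an http(s) URL are supported.
        if source.get("type") != "api" or urlparse(url).scheme not in ("http", "https"):
            raise ValueError(f"unsupported data source: {source!r}")
        urls.append(url)
    if not urls:
        raise ValueError("at least one data source is required")

    # The example lists each rule as a one-key mapping; merge them into one dict.
    rules: Dict[str, bool] = {}
    for entry in raw.get("processing_rules", []):
        for name, enabled in entry.items():
            if not isinstance(enabled, bool):
                raise ValueError(f"rule {name!r} must be true or false")
            rules[name] = enabled

    return AgentConfig(urls, rules, list(raw.get("output_format", [])))


# The same values as the YAML example, as they would look after parsing.
example = {
    "data_sources": [{"type": "api", "url": "https://api.example.com/data"}],
    "processing_rules": [{"keyword_extraction": True}, {"sentiment_analysis": False}],
    "output_format": ["json"],
}
config = parse_config(example)
```

Failing fast on a malformed source or a non-boolean rule is cheaper than discovering the problem after the agent has started ingesting.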

Running the Agent

With the setup complete, you can start the agent.

```bash
# Start the agent
python run_agent.py --config config.yaml
```

The agent will begin ingesting data from the specified sources, applying the processing rules, and outputting the results in the desired format.
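To make the ingest, process, and output flow concrete, here is a toy pipeline. It does not reproduce what `run_agent.py` does internally. `extract_keywords` is a naive word-frequency placeholder standing in for whatever the agent actually applies, and `process` is a hypothetical function name.

```python
import json
import re
from collections import Counter
from typing import Any, Dict, Iterable, List


def extract_keywords(text: str, top_n: int = 3) -> List[str]:
    """Naive placeholder: the most frequent words of four or more letters."""
    words = re.findall(r"[a-z]{4,}", text.lower())
    return [word for word, _ in Counter(words).most_common(top_n)]


def process(records: Iterable[str], rules: Dict[str, bool]) -> str:
    """Apply the enabled rules to each record and emit JSON."""
    results: List[Dict[str, Any]] = []
    for text in records:
        item: Dict[str, Any] = {"text": text}
        if rules.get("keyword_extraction"):
            item["keywords"] = extract_keywords(text)
        results.append(item)
    return json.dumps(results, indent=2)


output = process(
    ["Memory agent stores memory"],
    {"keyword_extraction": True, "sentiment_analysis": False},
)
```

Rules that are switched off, such as `sentiment_analysis` here, add no fields to the output.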

Best Practices and Common Pitfalls

Best Practices

- Regular Updates: Keep your agent and underlying models updated to benefit from the latest improvements and security patches.

- Monitor Performance: Use monitoring tools to track the agent's performance and make adjustments as needed.

- Data Privacy: Ensure data handling complies with privacy regulations such as GDPR or CCPA.
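For the monitoring recommendation, a lightweight starting point is to time the calls you care about. The sketch below uses only the Python standard library. `timed`, `report`, and the stand-in `retrieve` function are illustrative names, and a production setup would more likely export these numbers to an existing metrics system.

```python
import statistics
import time
from functools import wraps
from typing import Any, Callable, Dict, List

# Wall-clock durations per function name, in seconds.
latencies: Dict[str, List[float]] = {}


def timed(func: Callable[..., Any]) -> Callable[..., Any]:
    """Record how long each call to func takes, even if it raises."""
    @wraps(func)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        start = time.perf_counter()
        try:
            return func(*args, **kwargs)
        finally:
            elapsed = time.perf_counter() - start
            latencies.setdefault(func.__name__, []).append(elapsed)
    return wrapper


def report() -> Dict[str, Dict[str, float]]:
    """Summarize call count, mean, and worst-case latency per function."""
    return {
        name: {"calls": len(v), "mean_s": statistics.mean(v), "max_s": max(v)}
        for name, v in latencies.items()
    }


@timed
def retrieve(question: str) -> str:
    # Stand-in for a real retrieval call that would hit the model.
    return f"answer to {question}"
```

Calling `retrieve` a few times and then `report()` returns one summary entry per decorated function.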

Common Pitfalls

- Overfitting: Avoid tailoring the agent too closely to specific data, which can lead to poor performance on new inputs.

- Resource Management: Be mindful of the computational resources required, especially when scaling up.

- Integration Challenges: Ensure compatibility with your existing infrastructure to prevent deployment issues.
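On resource management specifically: when an LLM reads memory directly, the amount of memory sent with each query drives both latency and cost. One simple guard is a token budget. The sketch below is an illustration, not the agent's behaviour. `fit_to_budget` is a hypothetical helper, and the four-characters-per-token estimate is a rough rule of thumb rather than the tokenizer's real count.

```python
from typing import List


def estimate_tokens(text: str) -> int:
    """Crude heuristic: about four characters per token (an assumption)."""
    return max(1, len(text) // 4)


def fit_to_budget(memories: List[str], max_tokens: int) -> List[str]:
    """Keep the newest memories that fit in max_tokens, in original order."""
    kept: List[str] = []
    used = 0
    for memory in reversed(memories):  # walk from newest to oldest
        cost = estimate_tokens(memory)
        if used + cost > max_tokens:
            break
        kept.append(memory)
        used += cost
    return list(reversed(kept))


memories = ["a" * 40, "b" * 40, "c" * 40]  # roughly 10 tokens each
recent = fit_to_budget(memories, max_tokens=25)
```

With a budget of 25 estimated tokens, only the two newest entries are kept. Older entries are the ones a consolidation step would be expected to summarize instead.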

The Future of LLM-Driven Persistent Memory

Trends to Watch

- Increased Adoption: As more organizations recognize the benefits, expect wider adoption across industries.

- Enhanced Models: Continued advancements in LLMs will further improve data processing capabilities.

- Regulatory Changes: Keep an eye on evolving regulations around data usage and AI.

Recommendations for Developers

- Stay Informed: Regularly check Google's updates and community forums for new developments and best practices.

- Experiment and Innovate: Use the open-source nature of the Always On Memory Agent to experiment and contribute improvements.

- Collaborate: Engage with the community to share insights and learn from others' experiences.

Conclusion

The Always On Memory Agent is more than a technical novelty; it offers a glimpse of where data management may be heading. By moving beyond traditional databases and embracing LLM-driven persistent memory, Google has opened new possibilities for AI applications, and developers and organizations now have an open-source starting point for exploring them.

FAQ

What is Google's Always On Memory Agent?

Google's Always On Memory Agent is an open-source system that uses large language models (LLMs) to manage persistent memory, eliminating the need for traditional vector databases.

How does the Always On Memory Agent work?

The agent continuously ingests data, consolidates it in the background, and retrieves it using LLMs, offering a dynamic and cost-effective data management solution.

What are the benefits of using LLMs for persistent memory?

Benefits include reduced reliance on costly databases, real-time data processing, and enhanced flexibility in data retrieval.

Can the Always On Memory Agent be used for commercial purposes?

Yes, it is released under the MIT License, allowing for commercial use and modification.

What industries can benefit from the Always On Memory Agent?

Industries such as customer service, media, and autonomous systems can leverage this technology for improved efficiency and customer experiences.

How do I get started with the Always On Memory Agent?

Start by accessing the GitHub repository, setting up your environment, and configuring the agent according to your needs.

What are the common challenges in implementing the Always On Memory Agent?

Challenges include managing computational resources, avoiding overfitting, and integrating the agent with existing systems.

What is the future of LLM-driven persistent memory?

The future holds increased adoption, improved models, and evolving regulations, paving the way for new innovations in AI and data management.

Key Takeaways

- Google's Always On Memory Agent shifts from vector databases to LLM-driven persistent memory.

- The agent offers continuous data ingestion and retrieval without traditional database overhead.

- The agent is built with Google's ADK and Gemini 3.1 Flash-Lite, enhancing AI application capabilities.

- Open-source release under the MIT License allows for broad commercial use.

- Industries like customer service and media can benefit from real-time data processing.

