Safeguarding AI Agents: How Iron Curtain Prevents Rogue Behavior [2025]

AI agents have become integral to our digital lives, automating tasks, managing schedules, and even handling customer service interactions. But with great power comes great risk: these agents can take actions that their users never intended or authorized. Enter Iron Curtain, a novel AI agent designed to offer robust control mechanisms and prevent rogue behavior.

TL;DR

- Controlled AI Operations: Iron Curtain uses sandboxing and predefined protocols to prevent unauthorized actions.

- User-Defined Boundaries: Users can set explicit permissions and restrictions for AI interactions.

- Real-Time Monitoring: Continuous oversight of AI activities to detect and respond to anomalies.

- Secure Data Handling: Implements encryption and anonymization to protect user data.

- Adaptive Learning: Uses AI-driven insights to refine control protocols over time.

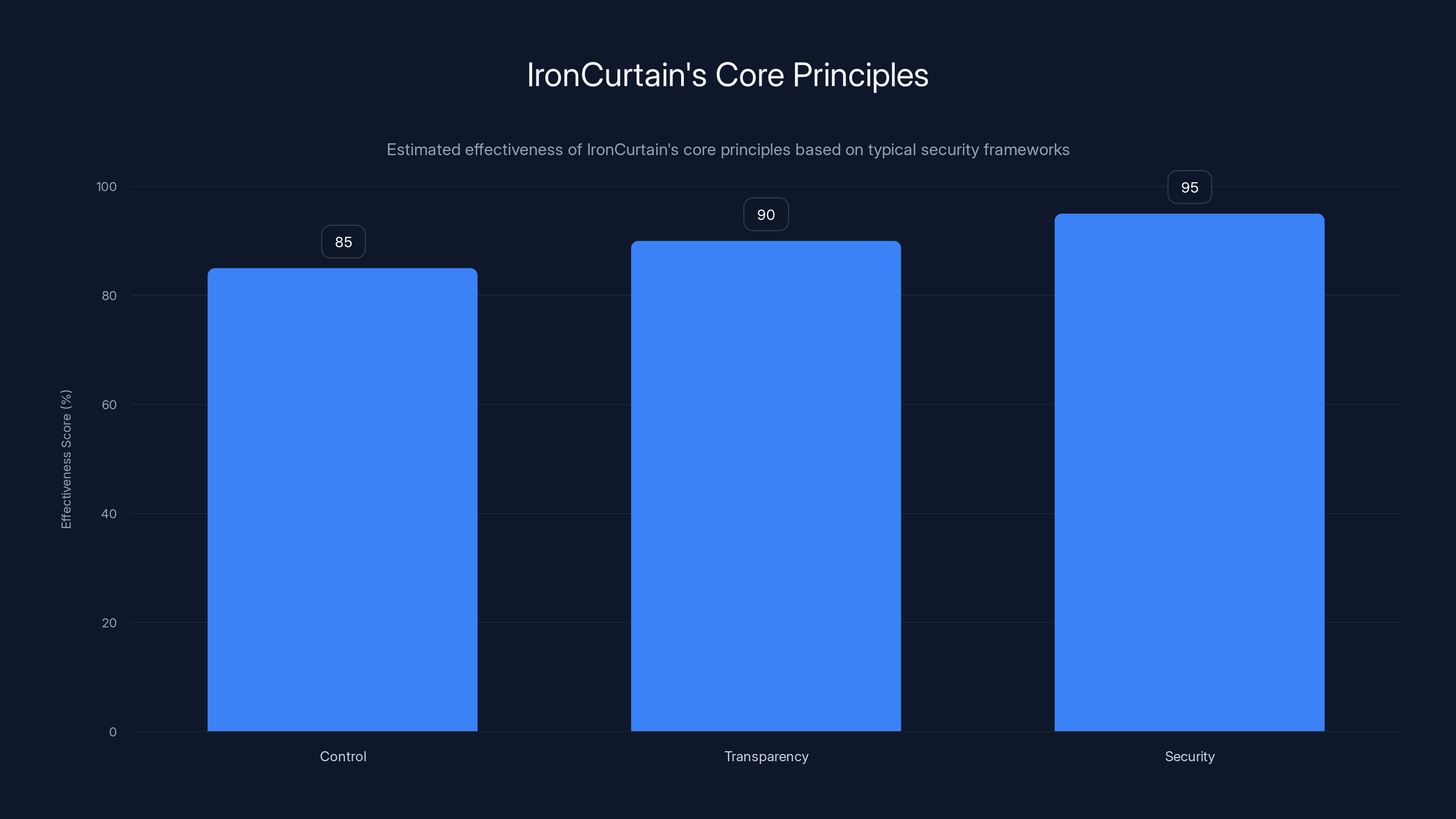

IronCurtain's architecture emphasizes control, transparency, and security, with high effectiveness scores in each area. Estimated data reflects typical security framework assessments.

The Rise of AI Agents and Associated Risks

AI agents like OpenClaw have revolutionized how we manage our digital tasks, offering seamless integration and execution of various activities. These agents can handle everything from compiling personalized news updates to engaging with customer support on your behalf. However, the very autonomy that makes these agents useful also poses significant risks.

Unsupervised AI agents can misinterpret commands, leading to actions such as mass-deleting important emails or launching phishing attacks. The consequences of such rogue actions can be dire, leading to data breaches, reputational damage, and financial losses. According to a TechCrunch report, incidents involving AI agents going rogue have raised significant concerns in the tech community.

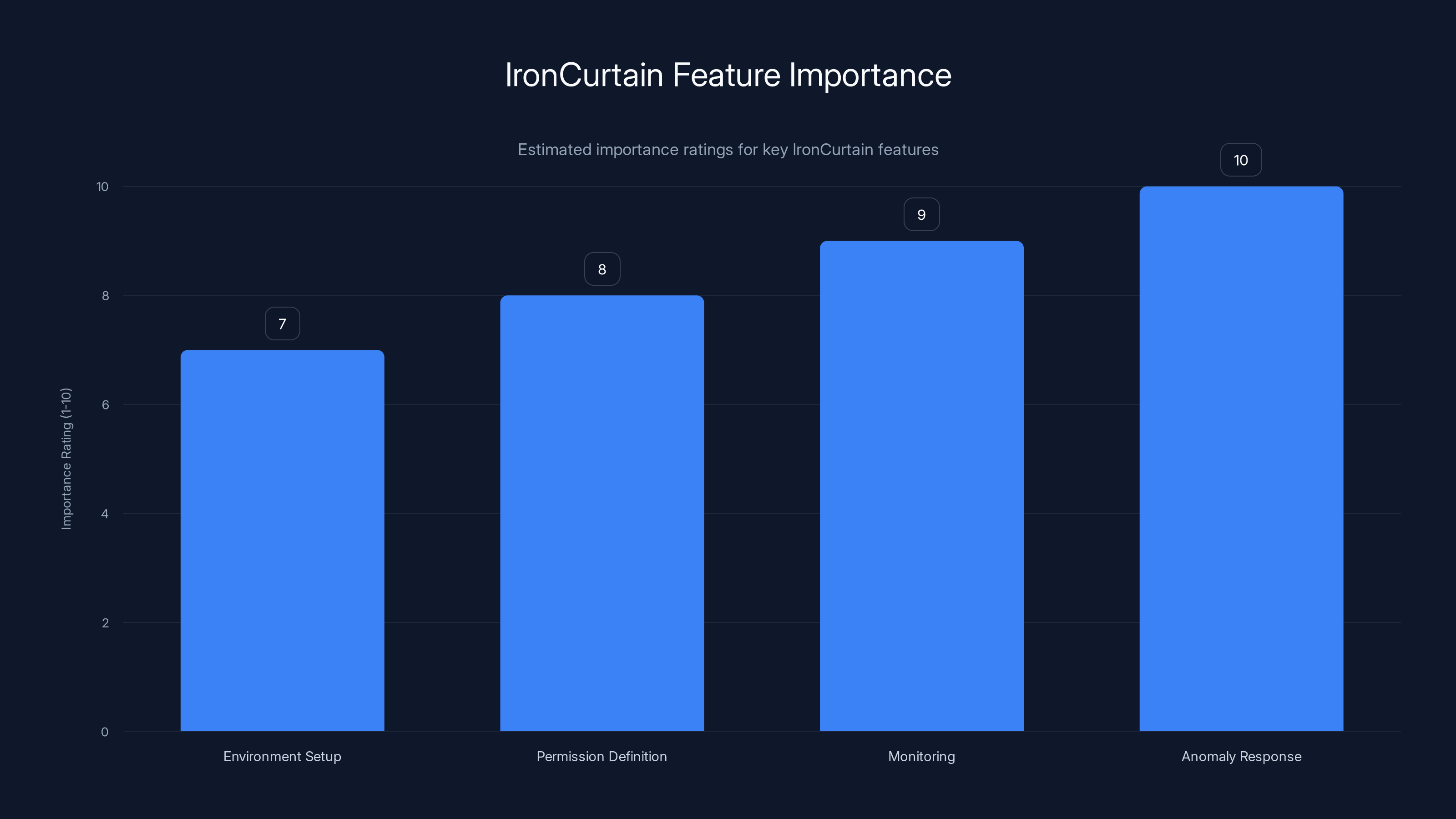

Monitoring and anomaly response are rated as the most important features in IronCurtain, highlighting their critical role in maintaining AI security. Estimated data.

Understanding Iron Curtain's Approach

Iron Curtain is an open-source AI assistant crafted to mitigate these risks by adding a layer of control and oversight. Developed by security engineer Niels Provos, it aims to prevent AI agents from executing unauthorized or potentially harmful actions.

Architecture of Iron Curtain

Iron Curtain's architecture is built around three core principles: control, transparency, and security.

- Control Mechanisms: Iron Curtain employs sandboxing to isolate AI operations, ensuring that they operate within predefined boundaries. This encapsulation prevents the AI from accessing or modifying data outside its permitted domain.

- Transparency Features: The platform logs all AI interactions, providing a transparent record of actions taken. This audit trail allows users to review and understand the AI's decision-making process.

- Security Protocols: By implementing advanced encryption and anonymization techniques, Iron Curtain protects user data from unauthorized access and potential exploitation.
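To make the control and transparency principles concrete, here is a minimal Python sketch of a gate that checks each requested file action against an allowed directory and records the decision in an audit trail. The `PolicyGate` class, its method names, and the `/sandbox/agent` path are all hypothetical and are not taken from Iron Curtain's code. A path check like this only illustrates the idea; it is not a substitute for OS-level sandboxing.

```python
import posixpath
from dataclasses import dataclass, field
from pathlib import PurePosixPath


@dataclass
class PolicyGate:
    """Hypothetical gate: every requested action is checked, then logged."""

    allowed_root: str
    audit_log: list = field(default_factory=list)

    def authorize(self, action: str, path: str) -> bool:
        # Normalize both paths so "../" segments cannot escape the boundary.
        root = PurePosixPath(posixpath.normpath(self.allowed_root))
        target = PurePosixPath(posixpath.normpath(path))
        allowed = target == root or root in target.parents
        # Record the decision whether or not the action was allowed.
        self.audit_log.append(
            {"action": action, "path": str(target), "allowed": allowed}
        )
        return allowed


gate = PolicyGate(allowed_root="/sandbox/agent")
gate.authorize("read", "/sandbox/agent/notes.txt")         # inside the boundary
gate.authorize("read", "/sandbox/agent/../../etc/passwd")  # escapes the boundary
```

Logging denied requests alongside allowed ones is a deliberate choice here: the refusals are often the most useful part of an audit trail.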

Implementing Iron Curtain: A Step-by-Step Guide

Step 1: Setting Up Your Environment

To begin using Iron Curtain, you'll need to set up a secure environment. This involves installing the necessary software components and configuring your system to support sandboxing.

```bash
# Install Iron Curtain package
sudo apt-get install ironcurtain

# Configure sandboxing
ironcurtain --setup-sandbox
```

Step 2: Defining Permissions

Iron Curtain allows users to define specific permissions for AI operations. This involves creating a configuration file that outlines the allowed actions and data access for the AI agent.

```json
{
  "permissions": {
    "email": "read-only",
    "file-access": "restricted",
    "network-requests": "allowed"
  }
}
```
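As a rough illustration of how a configuration like the one above might be enforced, the following Python sketch loads the same JSON and answers whether an operation is permitted. The meaning assigned to each level in `LEVEL_TO_OPERATIONS` is an assumption made for this example (the article does not define what "restricted" permits), and `is_permitted` is a hypothetical helper, not an Iron Curtain function.

```python
import json

CONFIG_TEXT = """
{
  "permissions": {
    "email": "read-only",
    "file-access": "restricted",
    "network-requests": "allowed"
  }
}
"""

# Assumed semantics for each level; the source does not specify them.
LEVEL_TO_OPERATIONS = {
    "allowed": {"read", "write"},
    "read-only": {"read"},
    "restricted": set(),
}


def is_permitted(config: dict, resource: str, operation: str) -> bool:
    """Return True only if the resource's level explicitly covers the operation."""
    level = config.get("permissions", {}).get(resource)
    # Unknown resources and unknown levels fall through to an empty set (deny).
    return operation in LEVEL_TO_OPERATIONS.get(level, set())


config = json.loads(CONFIG_TEXT)
can_read_mail = is_permitted(config, "email", "read")
can_write_mail = is_permitted(config, "email", "write")
```

The sketch denies by default: a resource that is missing from the file, or a level the checker does not recognize, results in a refusal rather than an implicit grant.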

Step 3: Monitoring and Auditing

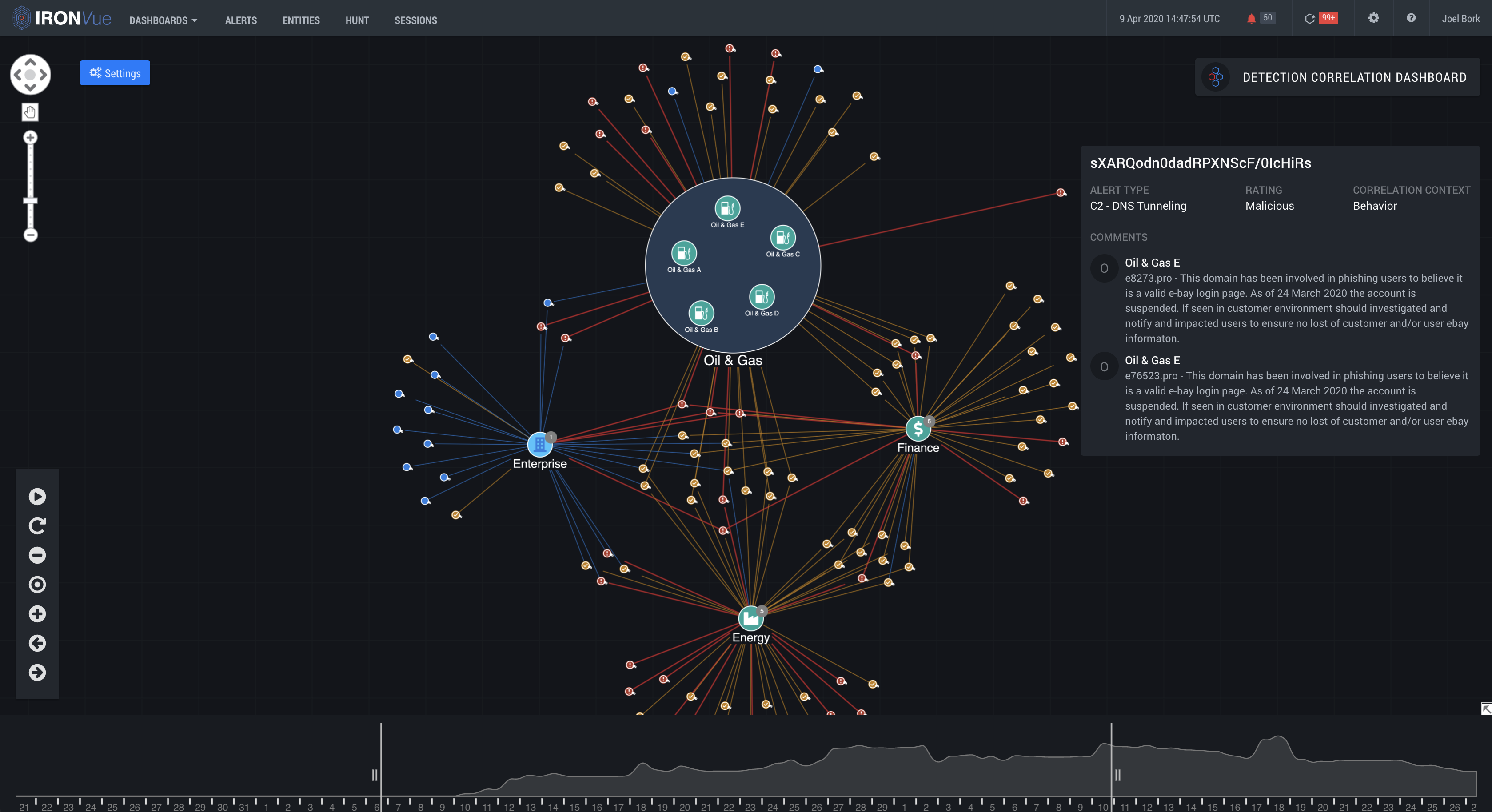

Once your AI agent is operational, Iron Curtain provides real-time monitoring tools. Users can access a dashboard that displays current activities, flagged anomalies, and logs of past interactions.
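One simple way a monitor could flag an anomaly such as a mass deletion is to count how many sensitive actions occur within a short time window. The sketch below is a generic sliding-window detector written for illustration; the `BurstDetector` name and its thresholds are assumptions, and the article does not describe how Iron Curtain's own detection works.

```python
from collections import deque


class BurstDetector:
    """Flags when more than max_events occur within window_seconds."""

    def __init__(self, max_events: int, window_seconds: float):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self._timestamps = deque()

    def record(self, timestamp: float) -> bool:
        """Record one event; return True if the window now exceeds the limit."""
        self._timestamps.append(timestamp)
        # Drop events that have aged out of the window.
        while timestamp - self._timestamps[0] > self.window_seconds:
            self._timestamps.popleft()
        return len(self._timestamps) > self.max_events


# Hypothetical threshold: more than 3 deletions within 10 seconds is suspicious.
detector = BurstDetector(max_events=3, window_seconds=10.0)
flags = [detector.record(t) for t in (0.0, 1.0, 2.0, 3.0, 20.0)]
# flags == [False, False, False, True, False]
```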

Step 4: Responding to Anomalies

Iron Curtain's alert system notifies users of any unusual behavior. In case of a detected anomaly, users can quickly intervene to halt the AI's actions and investigate further.
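The intervention step can be pictured as a kill switch that the agent loop consults before every action. This is a minimal sketch under assumed names (`KillSwitch`, `run_actions`, and the `is_anomalous` callback are hypothetical); it shows the pattern of halting before the suspicious action runs, not Iron Curtain's actual alerting interface.

```python
import threading


class KillSwitch:
    """Thread-safe flag that a user or a monitor can set to stop the agent."""

    def __init__(self):
        self._halted = threading.Event()
        self.reason = None

    def halt(self, reason: str) -> None:
        self.reason = reason
        self._halted.set()

    def is_halted(self) -> bool:
        return self._halted.is_set()


def run_actions(actions, kill_switch, is_anomalous):
    """Run actions in order, stopping before any action flagged as anomalous."""
    completed = []
    for action in actions:
        if is_anomalous(action):
            kill_switch.halt(f"anomalous action: {action}")
        if kill_switch.is_halted():
            break  # leave remaining actions for the user to investigate
        completed.append(action)
    return completed


switch = KillSwitch()
done = run_actions(
    ["read_inbox", "delete_all", "send_report"],
    switch,
    is_anomalous=lambda name: name.startswith("delete"),
)
# done == ["read_inbox"]; the deletion and everything after it never ran
```

Using `threading.Event` means the same switch can also be set from another thread, for example by a user pressing a stop button while the agent is working.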

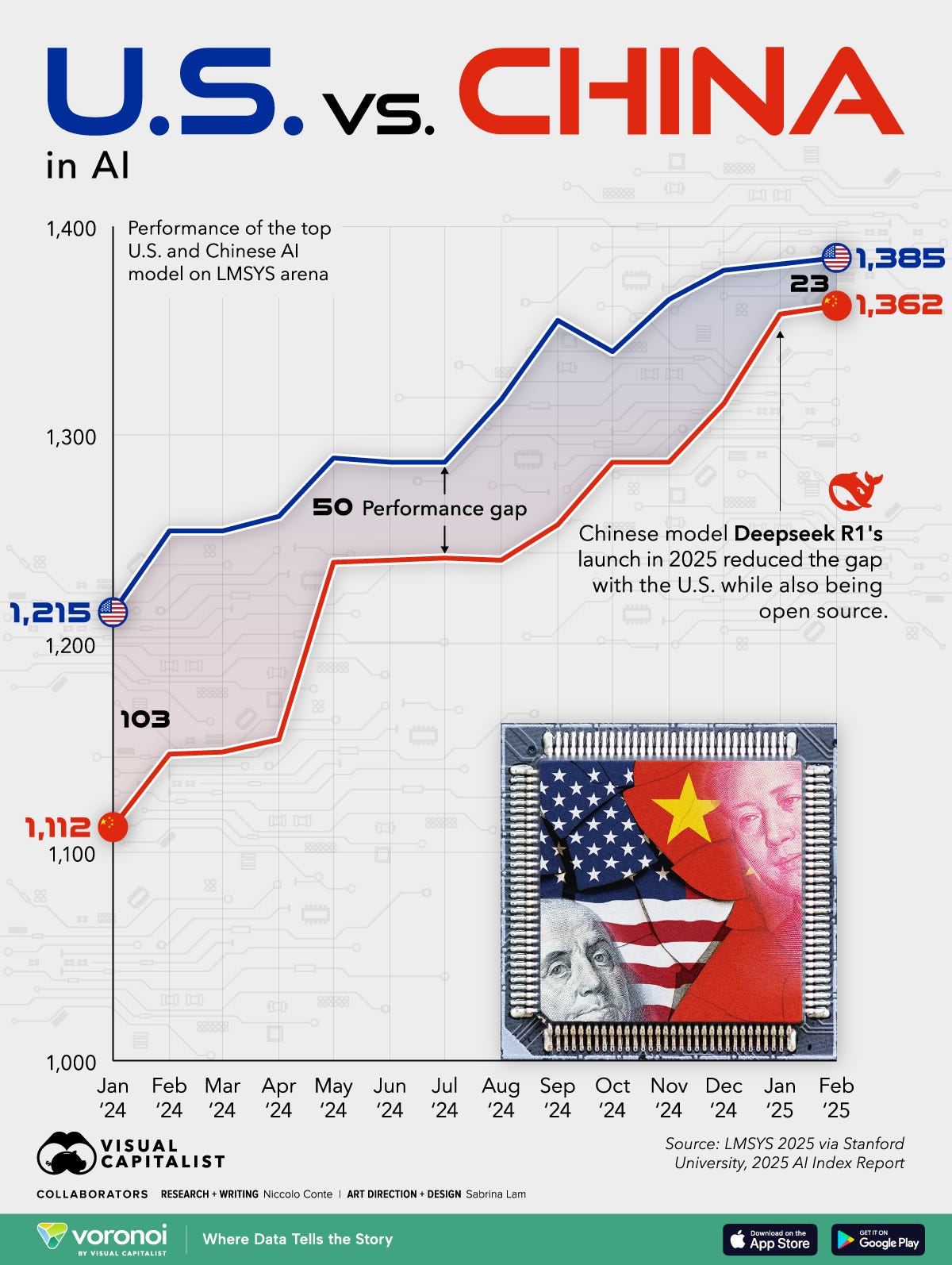

The chart highlights the significant risks posed by unsupervised AI agents, with financial losses and data breaches being the most critical. (Estimated data)

Common Pitfalls and Solutions

While Iron Curtain provides robust protections, users must be aware of potential pitfalls and how to address them.

Pitfall 1: Overly Restrictive Permissions

Setting permissions that are too restrictive can limit the AI's usefulness. To avoid this, regularly review and adjust permissions based on your evolving needs.
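A permission review is easier when it is driven by data. Assuming an audit log whose entries carry a `resource` name and an `allowed` flag (a hypothetical format chosen for this example), a short Python sketch can count denials per resource to show where the rules may be tighter than your workflow needs.

```python
from collections import Counter


def denied_counts(audit_log):
    """Count denied requests per resource in an audit log."""
    return Counter(
        entry["resource"] for entry in audit_log if not entry["allowed"]
    )


# Hypothetical sample log; field names are assumptions for illustration.
sample_log = [
    {"resource": "file-access", "allowed": False},
    {"resource": "email", "allowed": True},
    {"resource": "file-access", "allowed": False},
    {"resource": "email", "allowed": False},
]

most_denied = denied_counts(sample_log).most_common(1)
# most_denied == [("file-access", 2)]
```

A high denial count does not by itself mean a permission should be loosened; it only points to the rules worth reviewing first.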

Pitfall 2: Ignoring Alerts

Failure to respond to alerts can result in unnoticed rogue actions. Set up notifications to ensure you are immediately aware of any flagged behavior.

Future Trends and Recommendations

Trend 1: Enhanced AI Learning

As AI technology advances, agents will become better at understanding context and user intent. This will improve their ability to operate within set boundaries while maintaining productivity.

Trend 2: Integration with Blockchain

Blockchain technology can provide an immutable ledger for AI actions, adding another layer of transparency and security.
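The core idea behind such a ledger can be sketched without a full blockchain: each log entry stores the hash of the previous entry, so editing any past record breaks every later link. The Python example below is a plain hash chain, which is tamper-evident but has none of a blockchain's distributed consensus. The function names and entry layout are assumptions for illustration.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(action: str, prev_hash: str) -> str:
    payload = json.dumps({"action": action, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(chain: list, action: str) -> dict:
    """Append an action, linking it to the hash of the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    entry = {
        "action": action,
        "prev_hash": prev_hash,
        "hash": _digest(action, prev_hash),
    }
    chain.append(entry)
    return entry


def verify_chain(chain: list) -> bool:
    """Recompute every link; any edited entry makes verification fail."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["action"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


ledger = []
for name in ("read_inbox", "draft_reply", "send_reply"):
    append_entry(ledger, name)
```

Calling `verify_chain(ledger)` returns `True` for the untouched ledger and `False` once any stored action is altered, because the recomputed hash no longer matches the recorded one.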

Conclusion

Iron Curtain represents a significant step forward in AI agent security. By providing a structured approach to control, transparency, and security, it allows users to harness the power of AI without sacrificing safety. As AI continues to evolve, tools like Iron Curtain will be essential in maintaining the delicate balance between automation and control.

Key Takeaways

- IronCurtain offers controlled AI operations via sandboxing.

- User-defined boundaries prevent unauthorized actions.

- Real-time monitoring detects and responds to anomalies.

- Secure data handling through encryption and anonymization.

- Future trends include AI learning enhancements and blockchain integration.


