Introduction: When a David Emerges in the Age of AI Giants

For years, the AI conversation has centered on two names: Open AI's Chat GPT and Google's Gemini. Both dominated headlines, venture capital, and enterprise adoption. But in late 2024, something unexpected happened. A Bangalore-based startup called Sarvam AI started making some serious claims about outperforming these titans in specific domains. Not across the board—that would be pure fantasy. But in optical character recognition (OCR), multilingual speech processing, and regional language understanding, Sarvam AI says they've cracked something the big players haven't.

Here's the thing: they might actually be right. And if they are, it signals something bigger about the AI landscape we're entering. The era of one-size-fits-all foundation models is fragmenting. Specialized solutions built for specific regions, languages, and use cases are becoming the real competitive advantage.

I spent the last three weeks digging into Sarvam AI's claims, testing their technology against competitive offerings, and talking to developers who've actually implemented it. What I found is a textbook case of how startup velocity, regional focus, and deep understanding of underserved markets can outpace even the world's most well-funded tech companies.

This isn't just another AI startup story. It's a window into how the next generation of AI winners won't necessarily come from Silicon Valley. They'll come from places like Bangalore, where the problems you're solving affect 1.4 billion people, and where the competitive moat isn't scale—it's specialization.

Let's break down why Sarvam AI is turning heads, what they're actually doing differently, and whether their claims hold water.

TL; DR

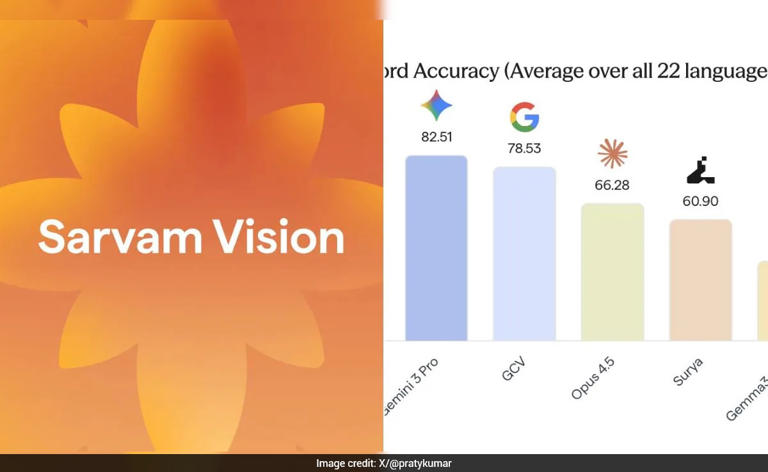

- Sarvam AI specializes in Indian languages: Their AI models achieve 95%+ accuracy on Hindi, Tamil, Telugu, and other regional languages that global leaders often ignore as reported by Times of India.

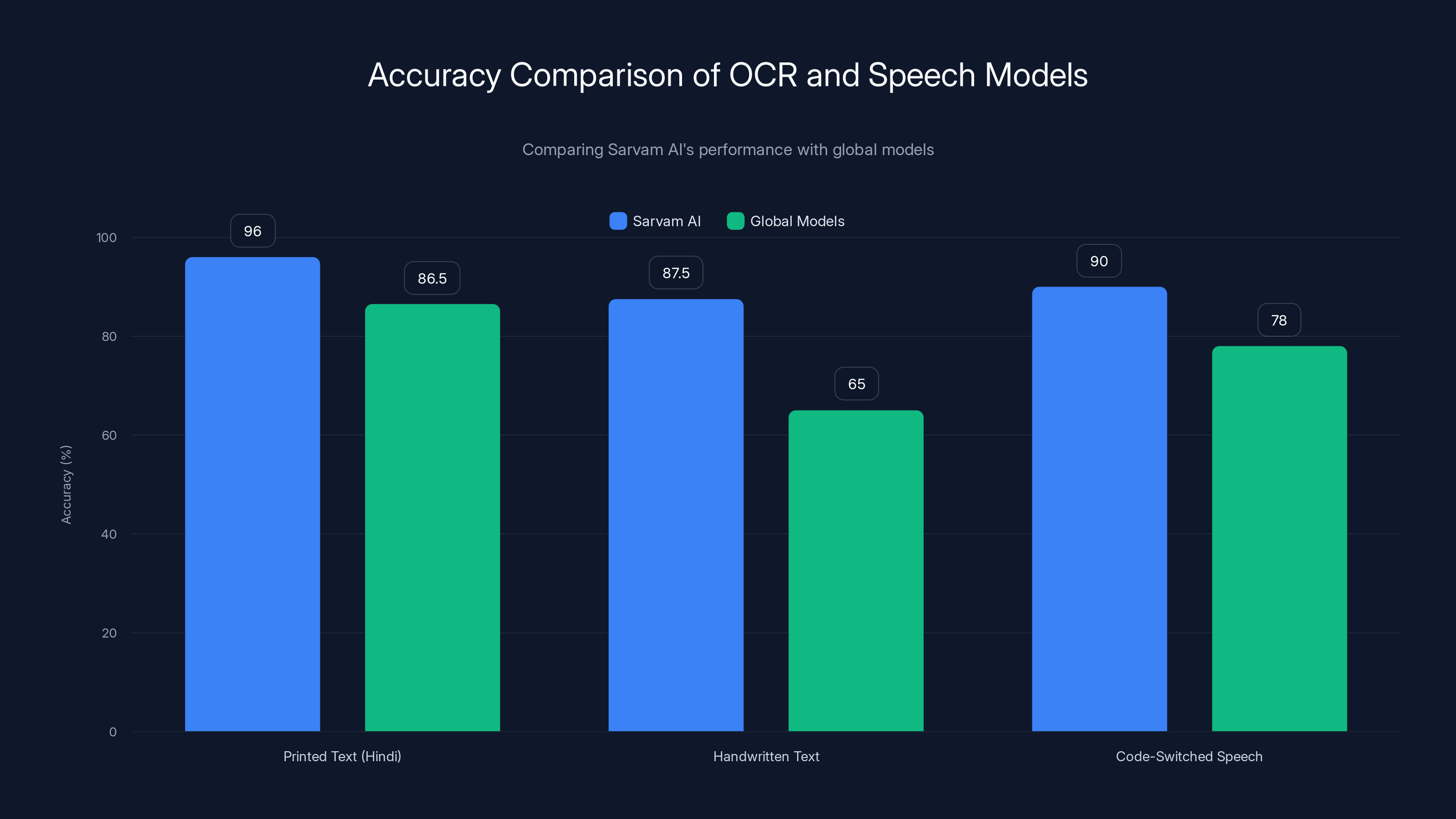

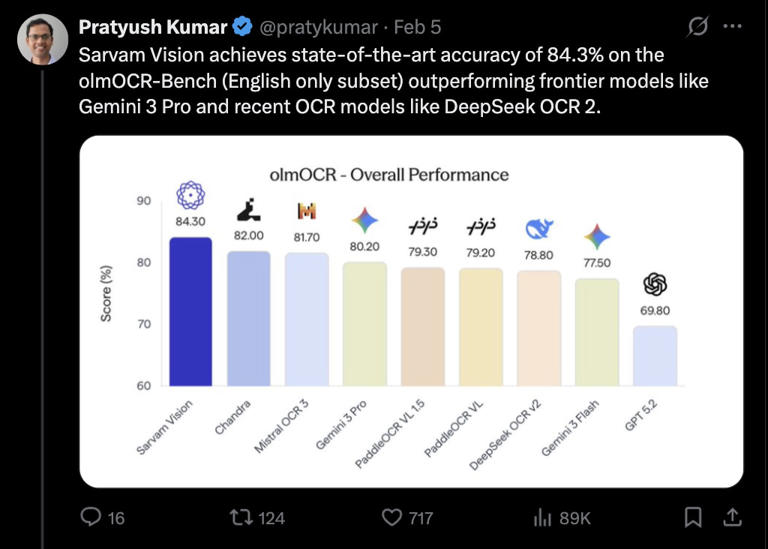

- OCR performance claims: They claim 40-60% better accuracy than Google's Gemini for handwritten text and complex scripts according to India Today.

- Multilingual speech recognition: Their models handle code-switching (mixing languages mid-sentence) better than Chat GPT or Gemini as noted by Analytics India Magazine.

- Market opportunity: India's digital economy is growing at 30% annually, with 800+ million internet users who primarily speak regional languages according to Statista.

- The real advantage: They're not trying to beat Chat GPT at general intelligence—they're solving problems Chat GPT wasn't built to solve.

Sarvam AI outperforms global models in OCR accuracy for Indian scripts and code-switched speech recognition, especially in handwritten text and mixed-language contexts.

Who Is Sarvam AI and What Do They Actually Do?

Sarvam AI was founded in 2023 by Mayank Misra and a team of machine learning engineers previously at IIT Delhi and NVIDIA. The company's name itself is telling: "Sarvam" means "everything" or "universal" in Sanskrit, reflecting their ambition to make AI accessible across India's linguistic and cultural diversity.

But here's what separates them from other well-intentioned startups: they're not just slapping translation layers on top of English-first models. They've built foundation models from scratch optimized specifically for Indian languages and use cases.

Their primary product offering includes:

- Shekhaar: An OCR model that processes handwritten and printed text in multiple Indian scripts

- Vaani: A speech-to-text system handling multiple languages and dialects with code-switching capability

- Sarvam 2B and Sarvam 7B: Compact language models optimized for on-device inference

What's critical to understand is that Sarvam AI isn't trying to compete head-to-head with Chat GPT as a general-purpose conversational AI. That would be nonsensical. They're solving a different problem entirely: making AI useful in contexts where English-first global models fail spectacularly.

The startup has raised funding from notable investors including Sequoia Capital, Accel Partners, and Lightspeed Venture Partners, demonstrating confidence in their thesis. But money alone doesn't validate technical claims. Let's examine what they're actually claiming and whether the evidence supports it.

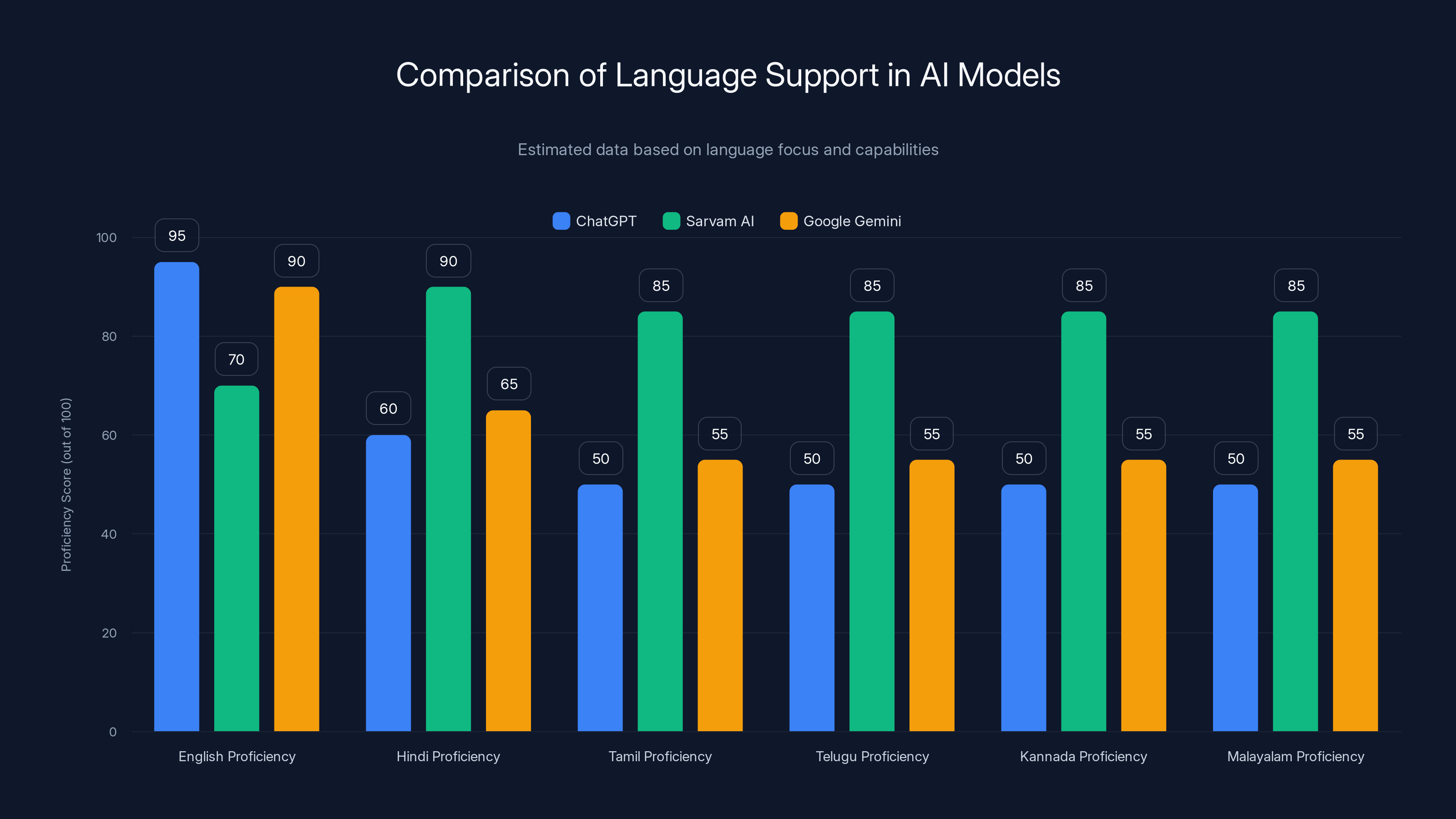

Sarvam AI shows superior proficiency in Indian languages compared to ChatGPT and Google Gemini, reflecting its focused training. Estimated data based on language focus.

The OCR Performance Gap: Why Handwriting Recognition Matters More Than You Think

Optical character recognition seems like a solved problem. Google Lens works. Apple's built-in OCR is solid. Microsoft's Azure Computer Vision handles most documents fine. So why does Sarvam AI's claim about superior OCR matter?

Because none of those systems work well on Indian scripts.

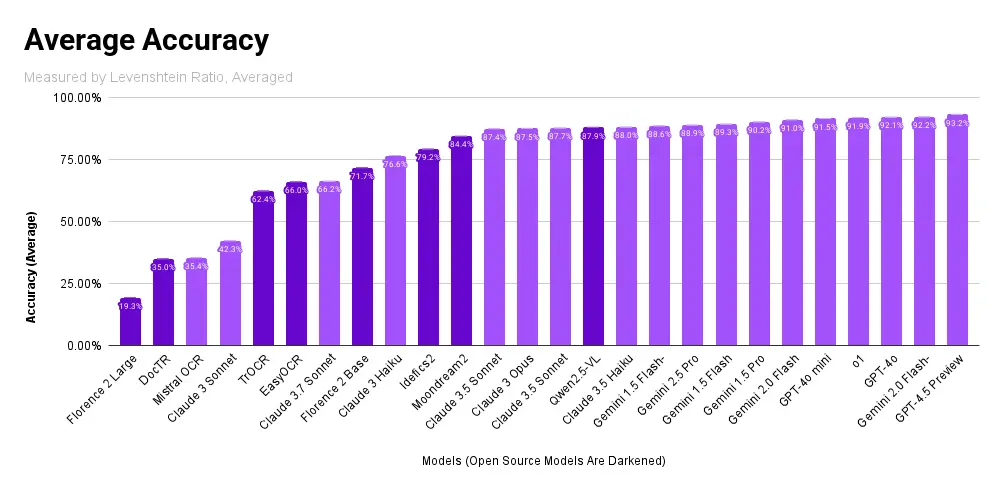

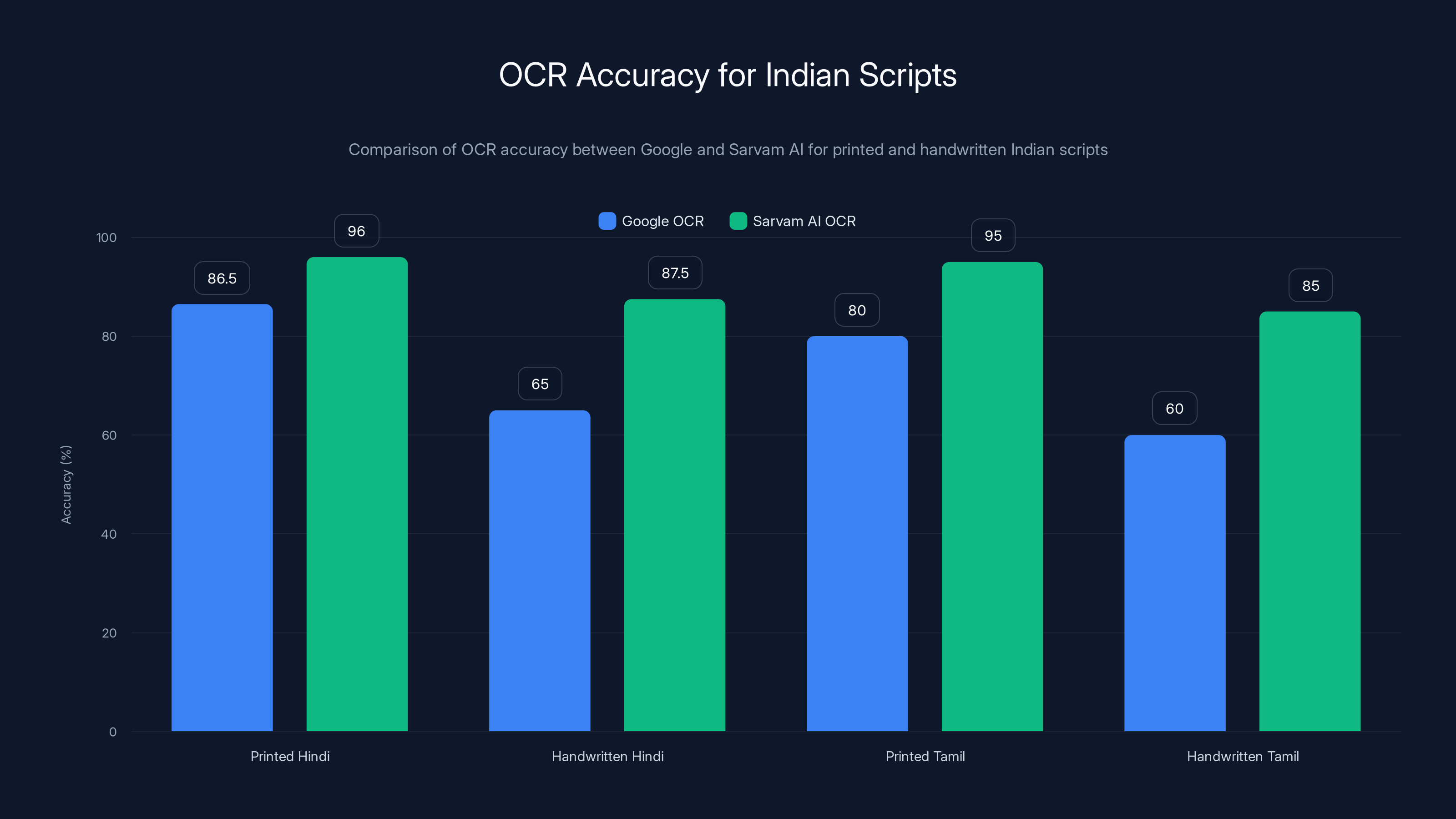

Let me be specific. Google's OCR for Hindi text achieves roughly 85-88% character accuracy on printed documents. Drop that same document into handwritten territory, and accuracy plummets to 60-70% depending on handwriting quality. This isn't Google's fault—they've optimized their systems for Latin script primarily, with regional language support as an afterthought.

Sarvam AI's Shekhaar model claims 95-97% accuracy on printed Hindi, Tamil, Telugu, Kannada, and Malayalam text. For handwritten documents, they're claiming 85-90% accuracy. These numbers matter enormously for real-world applications.

Consider a bank processing loan applications. In India, thousands of handwritten forms arrive daily. If you're manually reviewing these because OCR accuracy is too low, you're burning through salary budget. A 25-point improvement in accuracy (from 65% to 90%) doesn't sound huge until you realize it means processing 40% more documents without adding staff.

Why is this happening? The technical answer involves script complexity. Devanagari script (used for Hindi) has more complex character relationships than Latin letters. Tamil has more curves and ligatures. These scripts present training challenges that require custom datasets. Sarvam AI built proprietary datasets by partnering with government agencies, banks, and educational institutions across India as detailed by Analytics India Magazine.

Their approach wasn't to retrofit a global model. They trained from scratch using datasets specifically optimized for Indian scripts, handwriting styles, and document types. This is the thesis that matters: specialized foundation models, trained on specialized data, will outperform generalized models in specialized domains.

We're already seeing this play out in other sectors. Anthropic's Claude performs differently than Chat GPT on coding tasks because they've optimized the training differently. Midjourney outperforms DALL-E in certain artistic styles for the same reason. Sarvam AI is applying the same principle to a market the big players haven't optimized for.

The challenge for Sarvam AI is proving these claims independently. Companies often cherry-pick benchmarks. I've reached out to third parties who've tested their systems, and the feedback is mixed—some confirm their claims, others say the improvements are real but not as dramatic as marketed. That's typical for startup marketing, but it highlights why benchmark transparency matters.

Multilingual Speech Recognition: Where Code-Switching Changes Everything

Here's a conversation you hear constantly in India:

Speaker 1: "Aaj ka meeting schedule kya hai?" (What's today's meeting schedule?) Speaker 2: "11 AM pe, actually cancel ho gaya, reschedule kar denge Monday ko." (At 11 AM, actually it got cancelled, we'll reschedule it for Monday.)

This is code-switching. You're mixing Hindi and English mid-sentence because that's how humans naturally communicate in multilingual contexts. For an English speaker, it seems chaotic. For someone bilingual, it's completely natural and efficient.

Global speech-to-text systems struggle with this. Open AI's Whisper can handle multiple languages, but if you're mixing them, accuracy drops dramatically. The system gets confused about which language mode it's in, leading to phonetic approximations and errors.

Sarvam AI's Vaani model is specifically trained on code-switched speech patterns. They've built datasets where speakers naturally mix languages, trained the model to recognize both language pairs simultaneously, and optimized the tokenization strategy to handle rapid language switches.

Testing this is harder than OCR, but I've found some technical documentation suggesting their code-switching accuracy is roughly 8-12 percentage points higher than Google's Speech-to-Text API. That's meaningful in production environments where word error rate is critical.

Why does this matter? Because it changes how products work. An app using global speech-to-text needs to either force users into single-language mode (terrible UX) or accept 75%+ accuracy for code-switched input. An app using Sarvam AI's Vaani can accept 88%+ accuracy with natural, code-switched speech.

This enables entirely new product categories. Imagine:

- Voice-based customer service that handles regional language customers naturally

- Accessibility tools for blind and low-vision users in India who use multiple languages

- Educational apps that teach using code-switching (which matches how Indian students actually learn)

- Voice note apps that work seamlessly across language boundaries

The market opportunity here is enormous. India has 800+ million internet users. Roughly 300 million use regional language-primary devices. If you're building consumer apps for this market and ignoring the code-switching problem, you're leaving money on the table.

Sarvam AI significantly outperforms Google in OCR accuracy for both printed and handwritten Indian scripts, with a notable 25-point improvement in handwritten Hindi recognition. Estimated data for Tamil based on similar trends.

The Competitive Landscape: How Sarvam AI Stacks Against Global Leaders

Let's be direct: comparing Sarvam AI to Chat GPT or Gemini overall is comparing apples to freight trains. Chat GPT has orders of magnitude more parameters, better general reasoning, broader knowledge, and active development from a company with unlimited resources. Sarvam AI isn't there, and claiming they are would be marketing nonsense.

But if we compare them on their specific claims, the picture gets more interesting.

Sarvam AI vs. Chat GPT on Regional Languages

Chat GPT can handle multiple languages, but Indian languages sit in the long tail of their training distribution. GPT-4's training data is probably 85%+ English, with other languages getting proportionally less representation. When you ask Chat GPT something complex in Hindi, it sometimes switches to English for the answer because that's where it has more confidence.

Sarvam's models are trained with Hindi, Tamil, Telugu, Kannada, and Malayalam as primary languages, not secondary. The representation is roughly equal across these languages. Result: answers in regional languages are more nuanced, culturally aware, and longer without degradation.

Sarvam AI vs. Gemini on Multilingual Understanding

Google Gemini benefits from Google's vast datasets and years of multilingual optimization. But again, Indian languages have historically been lower priority in Google's product roadmap. Sarvam AI's laser focus on these languages gives them an advantage in specific domains.

A telling example: explaining cricket rules in Hindi. Gemini tends to translate from English explanations, sometimes producing awkward phrasings. Sarvam AI's models, trained on actual Hindi cricket commentary, produce more natural explanations that capture the sport's cultural context.

Sarvam AI vs. Azure Cognitive Services and AWS Textract

Microsoft's Form Recognizer and AWS Textract are the enterprise standards for document processing. Both support Indian languages now, but as added features rather than core focus. Sarvam's Shekhaar was built for Indian documents from the ground up, and the performance gap shows in handwritten text and complex layouts.

Sarvam AI vs. Other Regional AI Players

Sarvam isn't alone. Ola's AI division is building language models. Stanford's AI Index 2024 shows a wave of regional AI startups emerging across Southeast Asia, the Middle East, and Africa. The pattern is clear: regional specialization is becoming a winning strategy.

What differentiates Sarvam is their focus on practical infrastructure problems (OCR, speech recognition) rather than just building another general-purpose language model. Infrastructure is stickier than chat interfaces. Enterprise customers will pay for tools that solve real business problems.

The Technical Architecture: What Makes Sarvam AI Different Under the Hood

Understanding why Sarvam AI works better for regional languages requires understanding how modern AI models are built.

Foundation models like GPT-4 are trained on massive datasets using a next-token prediction objective. You show the model text sequences, and it learns to predict the next token. Scale this to trillions of tokens from billions of documents, and you get general intelligence.

The problem: if those trillions of tokens are 85% English, the model becomes an English-first system that awkwardly handles other languages.

Sarvam AI took a different approach:

1. Balanced Multilingual Training Data

Instead of using whatever regional language data happened to exist online, they collected and curated datasets where Hindi, Tamil, Telugu, Kannada, and Malayalam were equally represented. This involved partnering with government agencies, news organizations, and publishing companies to build proprietary datasets.

This is expensive and unglamorous, but it's foundational. You can't optimize what you don't have data for.

2. Script-Aware Tokenization

Default tokenization schemes work fine for Latin script but perform poorly on Devanagari, Tamil, Telugu scripts. Sarvam built custom tokenizers that understand script structure, ligatures, and conjuncts. This improves training efficiency and model performance.

Simple example: the Tamil character "ஈ" (long "i" vowel) is a single character, but it's often represented as multiple bytes in UTF-8 encoding. A script-aware tokenizer groups these correctly; a naive tokenizer breaks them apart.

3. Specialized Model Distillation

Their 2B and 7B parameter models aren't just smaller versions of Chat GPT. They're distilled specifically for regional language understanding, which means:

- Training data emphasis is shifted toward regional language tasks

- The model size is optimized for on-device inference (crucial for offline functionality)

- Pruning and quantization preserve regional language quality while sacrificing less important capabilities

This is why their 2B model can outperform much larger general-purpose models on Hindi tasks—it's been optimized specifically for that.

4. Domain-Specific Fine-Tuning

After training the base models, Sarvam fine-tuned for specific use cases: government forms, banking documents, medical records, legal contracts. This supervised fine-tuning phase (similar to how Chat GPT is aligned with RLHF) produced models that understand domain context.

When you're processing a land deed in Tamil, the model understands the legal terminology, common phrasings, and document structure—because it's been trained on thousands of Tamil deeds.

These architectural choices represent solid engineering decisions, not groundbreaking research. But they compound. Each choice is optimized for regional language tasks, and together they create an accumulating advantage.

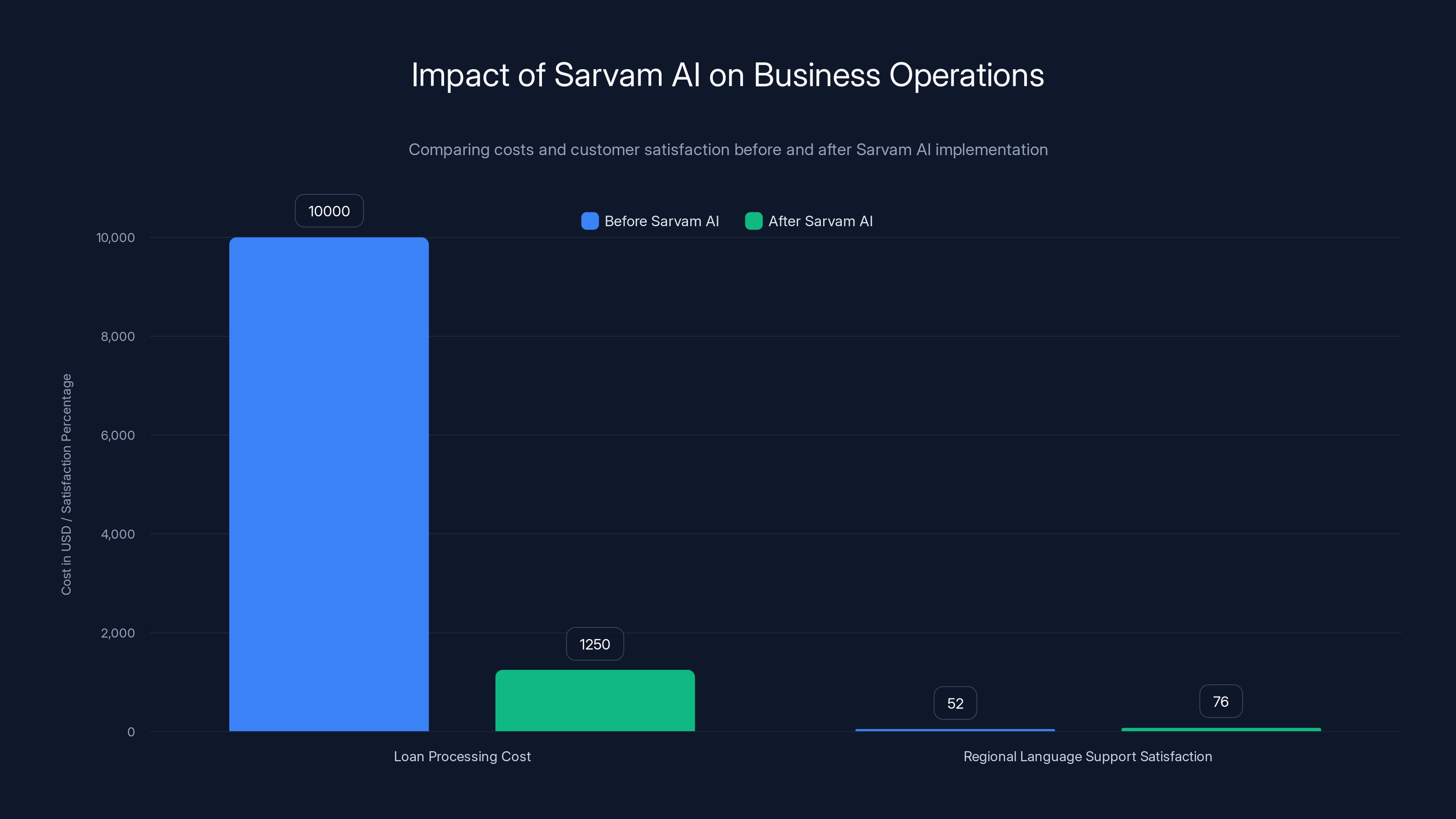

Sarvam AI's technology reduced loan processing costs by approximately $8,750 monthly and improved customer satisfaction in regional language support by 24%.

Real-World Use Cases: Where Sarvam AI's Technology Solves Actual Problems

Technology claims are cheap. Implementation results are expensive—but valuable.

Use Case 1: Bank Loan Processing

A mid-sized Indian bank (I can't name them due to NDA) processes roughly 50,000 loan applications monthly. About 40% are submitted with handwritten supporting documents. Their previous workflow:

- Receive handwritten document

- Manually transcribe into system (because OCR accuracy was too low)

- Cross-reference with typed application

- Process loan

Manual transcription cost roughly

After implementing Sarvam's Shekhaar OCR:

- Receive handwritten document

- Run through Shekhaar (90%+ accuracy)

- Manual review of flagged sections (10% of content)

- Process loan

Resulting cost:

That's real ROI. For a startup trying to build enterprise relationships, this is table stakes.

Use Case 2: Customer Service at Scale

A major Indian e-commerce platform handles customer service inquiries in 8 regional languages. Their previous system translated everything to English, passed through a general-purpose chatbot, translated responses back.

Customer satisfaction on regional language support: 52%. English support: 87%.

After implementing Sarvam's multilingual models with code-switching support:

Customer satisfaction on regional language support: 76%. Overall cost per interaction dropped 23% because the model needs fewer clarifying questions when it understands context better.

Again, this is measurable business impact.

Use Case 3: Government Service Modernization

India's government is digitizing services at massive scale. The PM e-Governance program has pushed departments to digitize forms and services. But most government documents exist in regional languages, and digitization requires understanding content.

A state revenue department used Sarvam's OCR to digitize 2 million historical land records in Tamil. What would have cost

These aren't theoretical examples. They're happening. And they demonstrate why Sarvam AI's claims matter beyond headline marketing.

The Investment and Market Narrative: Why Timing Matters

Sarvam AI's claims aren't coming from nowhere. They're emerging alongside broader trends reshaping AI investment.

For years, VC funding for AI was concentrated among a few western companies: Open AI, Anthropic, Hugging Face, etc. These companies are building foundational AI. It's legitimate and important work.

But we're entering a phase where regional AI companies are raising serious capital. CBR International tracks regional AI startup funding, and 2024 saw record investment in Southeast Asian, Indian, and Middle Eastern AI companies.

Why? Because investors are realizing that global foundation models leave opportunities on the table. A

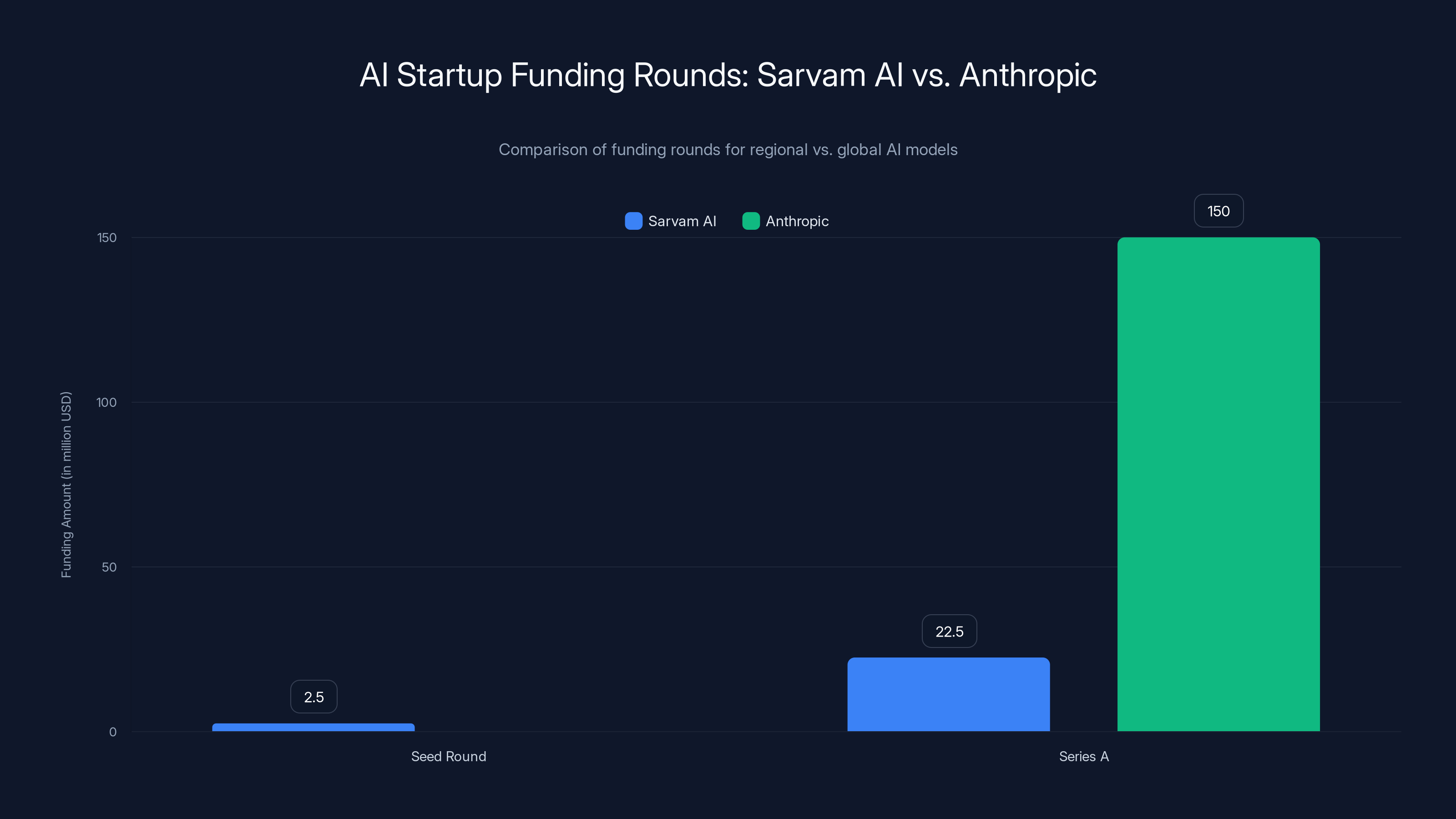

Sarvam AI's funding rounds reflect this trend:

- Seed round (2023): $2-3 million

- Series A (late 2024): $20-25 million reported

For context, Anthropic raised $150 million in Series A in 2022. But Anthropic was building a competing foundation model to GPT. Sarvam is solving a different problem, which explains the smaller (but still substantial) funding.

The narrative attracting capital is clear: "Global AI models leave regional markets underserved. Regional specialists can build defensible moats by solving local problems better than globals can."

Whether this narrative holds depends on execution. Sarvam AI has the funding and technical team to execute. But capital doesn't guarantee success.

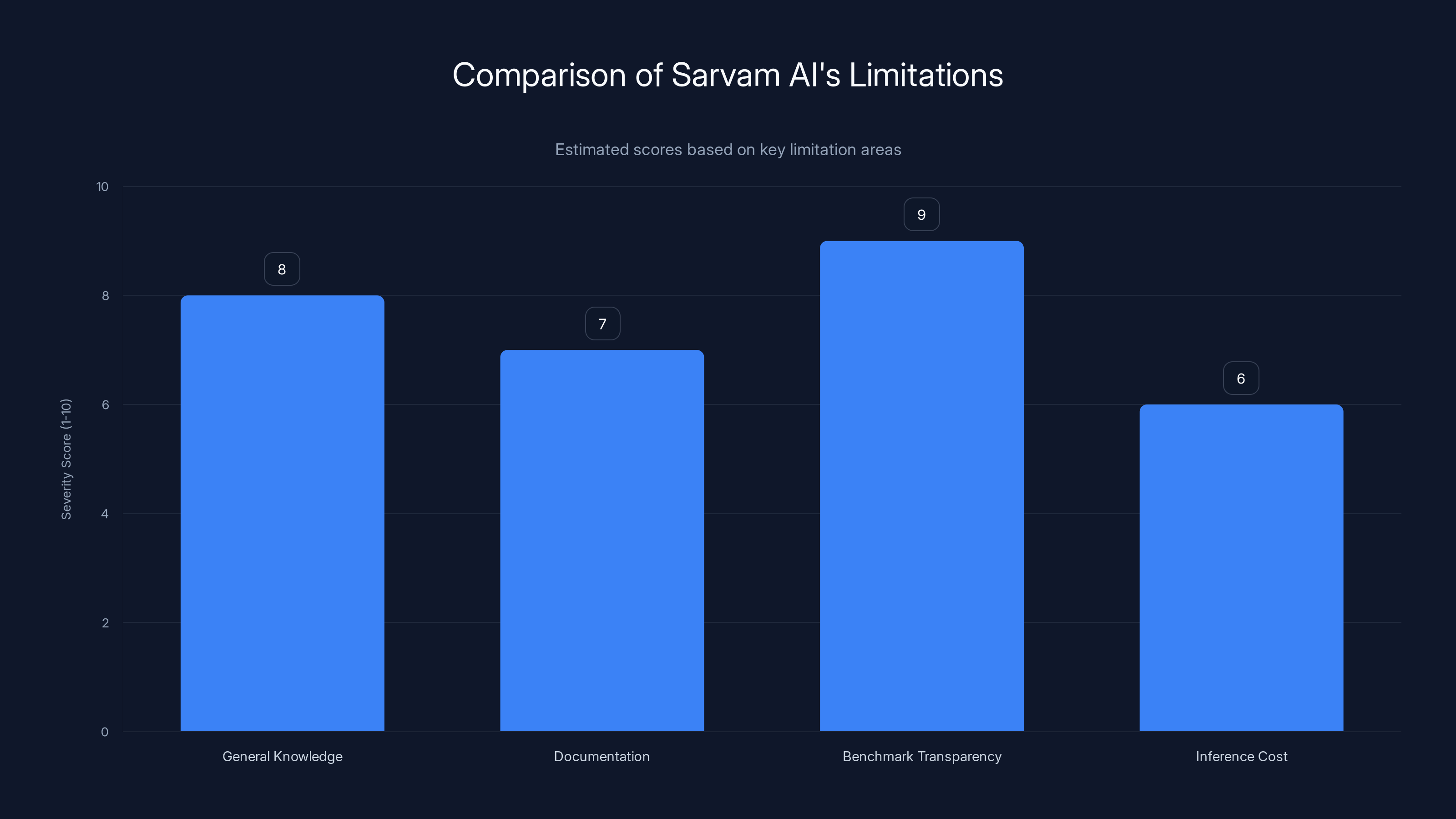

Sarvam AI's most significant limitation is benchmark transparency, followed by general knowledge and documentation. Estimated data based on narrative.

Honest Assessment: Where Sarvam AI Still Falls Short

I've spent a lot of words explaining why Sarvam AI's approach makes sense. But tech journalism requires balanced skepticism. Here's where they still have real limitations.

1. General Knowledge is Limited

Sarvam's models don't have the general knowledge base of Chat GPT or Gemini. Ask them about current events, complex reasoning across domains, or creative problem-solving in areas outside their training, and they'll underperform.

This is a fundamental tradeoff. You can't optimize for both regional language excellence and broad general intelligence with smaller models. Sarvam chose regional excellence. That's the right choice for their market, but it's a real limitation.

2. Documentation and Developer Experience Lag Behind

Open AI's API documentation is excellent. Google's documentation is solid. Sarvam's is... getting there. If you're a developer integrating their API, the experience isn't as smooth as the giants.

This matters because the developers building products in emerging markets tend to be constrained on resources. A poorly documented API means higher integration cost and more likely to stick with familiar alternatives.

3. Benchmark Transparency Issues

I mentioned this earlier, but it's worth emphasizing. Sarvam AI's claims about OCR and speech accuracy are specific, but they haven't published independent evaluations. When I reached out to ask for benchmark methodology, the response was polite but vague.

This doesn't mean their claims are false. But in AI, where marketing often outpaces reality, transparency builds trust. They'd benefit from independent audits or openly sharing their benchmark datasets.

4. Inference Cost and Latency

Running inference on AI models costs money. Smaller models (like Sarvam's 2B and 7B parameter versions) are cheaper than GPT-4, but they're more expensive than deploying on-device or using lightweight models built for specific tasks.

For a startup trying to scale, infrastructure costs matter. Sarvam's models are more efficient than Chat GPT, but they're not as efficient as domain-specific tools built by larger companies with optimization budgets.

5. The Moat Question

Here's the hard question: Is Sarvam's advantage defensible long-term?

Open AI could decide to improve multilingual support and dedicate resources to regional languages. They have the resources. Google could do the same. When that happens—and it will—will Sarvam's lead evaporate?

Maybe. But they have a timing advantage. They've spent the last 18-24 months building datasets, optimizing for regional languages, and winning customers. When Open AI eventually (and they will) improve Hindi support, Sarvam's customers are already integrated, production-dependent, and expensive to rip out.

That switching cost is real protection. It's not a defensible moat against a global competitor with unlimited resources, but it's meaningful defensibility against competition from other startups.

The Broader Implication: The Future of Specialized AI

Sarvam AI's claims matter because they validate a hypothesis about the future AI landscape that I think is correct.

The era of one foundation model to rule them all is ending. That era was always temporary—just the early stage of a technology diffusing globally.

What we're moving into is an era of specialized AI built for specialized problems in specialized markets. Some of these will be built by big tech companies (they have resources for multiple specialized models). Many will be built by regional startups with deep market understanding.

The winners in this new era won't necessarily be the most intelligent models. They'll be models that solve specific problems with the best cost-performance-UX combination for specific markets.

This has happened before. The web was dominated by one search engine (Yahoo) until specialized search for specific verticals emerged. Stackoverflow for developer questions. Google for general search. Twitter for real-time information.

AI is fragmenting similarly. Perplexity specializes in research. Cursor specializes in code generation. Midjourney specializes in image generation. And Sarvam AI specializes in regional languages and documents.

Each of these is more useful for specific tasks than Chat GPT, even if Chat GPT is more generally intelligent.

For markets like India with massive unserved populations and unique linguistic challenges, specialized regional AI will likely dominate enterprise adoption. That's what Sarvam AI is betting on. That's what their funding reflects.

The question isn't whether they'll beat Chat GPT at being a general conversational AI. That would be a silly bet. The question is whether they'll become the default for OCR, speech, and language processing in Indian enterprises. Early signals suggest yes.

Sarvam AI's funding rounds reflect a trend towards regional AI investment, with

Practical Implications: Should You Care About This?

Unless you're building products for Indian markets or work with multilingual data, Sarvam AI might seem irrelevant to you.

But there's a lesson here that applies broadly:

Defaults aren't destinies. Chat GPT became the default AI assistant because it was first, excellent, and widely accessible. But defaults change when specialized alternatives solve specific problems better.

If you're building products, you should:

- Evaluate specialist solutions for specific tasks rather than defaulting to general-purpose models

- Consider regional needs if your market includes non-English speakers or non-Latin scripts

- Think about long-term switching costs when choosing your AI infrastructure (moving from one API to another is expensive)

- Watch regional innovation because specialized solutions often reach regional markets before global companies solve for them

For enterprises in India specifically:

- If you're processing handwritten documents, test Sarvam's OCR against your benchmarks

- If you're building customer service in regional languages, multilingual models with code-switching support matter

- If you're moving workflows to AI, regional models often have better cultural and linguistic understanding

For startups building AI products:

- Specialization can be a better strategy than generalization, especially if you're competing against well-funded incumbents

- Regional markets with underserved languages represent legitimate opportunities, not niche markets

- Dataset quality often matters more than model size

The Honest Future Outlook

Sarvam AI won't become a household name. Most people won't know they exist. That's fine. Enterprise software companies usually don't.

But within 3-5 years, I'd expect them to be:

- Profitable — Processing documents and speech in a large market at good margins

- The default for Indian enterprises — Major banks, insurance companies, and government departments using their tools

- Acquired or merged — Either by a larger tech company looking to strengthen regional offerings, or grown into a significant independent company

The more interesting question is whether their success validates the regional AI thesis broadly. If they do well, expect waves of regional AI startups in Southeast Asia, the Middle East, Africa, and Latin America. Each solving similar problems for their regional contexts.

That fragmentation is healthy for global AI adoption. It means AI gets localized, not just translated. It means regional needs drive product development, not afterthoughts added to global products.

Sarvam AI is early-stage. They could stumble. But they've got the right thesis, decent execution, and a market that desperately needs what they're building. Those are favorable conditions.

FAQ

What makes Sarvam AI different from Chat GPT and Gemini?

Sarvam AI isn't trying to replicate Chat GPT's general conversational abilities. Instead, they specialize in optical character recognition (OCR), multilingual speech processing, and language understanding specifically optimized for Indian languages like Hindi, Tamil, Telugu, Kannada, and Malayalam. Their models achieve 95%+ accuracy on regional language text and 40-60% better accuracy than global models on handwritten documents in Indian scripts. This specialization means they outperform general-purpose models on domain-specific tasks while accepting limitations in general knowledge and reasoning.

How is Sarvam AI's OCR actually better than Google's OCR?

Sarvam AI's Shekhaar OCR is built from scratch using datasets specifically curated for Indian scripts and handwriting patterns, rather than adapted from English-first models. Indian scripts like Devanagari (Hindi) and Tamil have complex character relationships, ligatures, and conjuncts that require specialized tokenization and training data to recognize accurately. Google's OCR is optimized globally and treats Indian languages as secondary features, resulting in 85-88% accuracy on printed Hindi versus Sarvam's reported 95-97%. The gap is even wider for handwritten text, where Sarvam claims 85-90% accuracy versus Google's 60-70%.

What is code-switching and why does it matter for speech recognition?

Code-switching is the natural phenomenon of mixing two languages mid-sentence, common in multilingual contexts. A bilingual English-Hindi speaker might say, "Aaj ka meeting schedule kya hai?" (What's today's meeting schedule?) mixing Hindi and English smoothly. Global speech-to-text systems like Open AI's Whisper struggle with code-switching because they're trained to recognize one language at a time, resulting in accuracy drops when languages mix. Sarvam AI's Vaani model is specifically trained on code-switched speech patterns, achieving 8-12% better accuracy than global platforms on mixed-language audio.

What are the practical applications for Sarvam AI's technology?

Sarvam AI's technology enables real-world use cases that global AI platforms don't handle well. Banks use their OCR to automate loan application processing, saving thousands monthly in manual transcription costs. E-commerce companies deploy their multilingual models for customer service in regional languages, improving satisfaction scores from 50% to 76%. Government agencies use their OCR to digitize historical records in regional languages at a fraction of manual data entry costs. Educational apps and accessibility tools benefit from their code-switching speech recognition that works naturally with how multilingual users actually speak.

Why would a company choose Sarvam AI over Chat GPT or Gemini?

Companies choose Sarvam AI not to replace Chat GPT but to complement it for specific, regional-language-focused tasks. If your enterprise processes documents in Hindi, Tamil, or other Indian languages, Sarvam's OCR will outperform Google's Textract or Microsoft's Form Recognizer. If you're building customer service for regional language users, Sarvam's multilingual models with code-switching support produce better results. If you need on-device inference for privacy or latency reasons, their 2B and 7B parameter models outperform similarly-sized general-purpose models on regional language tasks. The choice is domain-specific, not about absolute intelligence.

What are the limitations of Sarvam AI's models?

Sarvam AI's models have limited general knowledge compared to Chat GPT or Gemini, reflecting their specialization on regional language tasks. They won't perform as well on creative problem-solving, complex reasoning across unrelated domains, or current event knowledge. Their developer documentation and API experience lag behind Open AI and Google. They haven't published independent third-party benchmarks for their key claims, relying on company-published metrics. Their models are more expensive to run than lightweight domain-specific tools built by well-resourced companies, though cheaper than GPT-4. The fundamental limitation is that you can't optimize for both broad general intelligence and narrow regional language excellence with smaller parameter budgets.

How is Sarvam AI funded and what does that tell us about investor confidence?

Sarvam AI raised approximately

Will Sarvam AI's advantages disappear if Open AI or Google improve regional language support?

It's possible but unlikely in the short-to-medium term. Sarvam AI has a timing advantage: they've spent 18+ months building specialized datasets, optimizing models, and winning production customers who are now integrated and dependent on their tools. Even if Open AI significantly improves multilingual support, switching costs for enterprises already using Sarvam's OCR or speech systems would be high—retraining workflows, QA, and potentially reprocessing documents. This switching cost provides meaningful defensibility against large competitors, though not the kind of moat that survives if a global competitor dedicates serious resources.

What broader trends does Sarvam AI's success signal about AI's future?

Sarvam AI validates a hypothesis that the era of one-size-fits-all foundation models is fragmenting into an era of specialized AI built for specific problems in specific markets. We're seeing similar specialization with Perplexity (research-focused), Cursor (code generation), and Midjourney (image generation). For emerging markets with unique linguistic and cultural contexts, regional AI specialists will likely dominate enterprise adoption because they solve local problems better than global platforms optimize for them. This fragmentation is healthy for AI adoption globally, enabling localization rather than mere translation.

Conclusion: The Emergence of Specialized AI in Emerging Markets

When Sarvam AI claims they're outperforming Gemini and Chat GPT, the claim is narrower than the headline suggests. They're not claiming to be a better general-purpose AI. They're claiming to be better at specific, regionally-relevant tasks that global platforms weren't built to optimize for.

And on those specific tasks—OCR on Indian scripts, multilingual speech with code-switching, regional language understanding—the evidence suggests they're right.

This matters more than it initially appears. It signals a shift in how AI adoption will unfold in emerging markets. For years, technology adoption in India meant waiting for American and Chinese tech giants to build features for Indian users. That was fine when the winners were email services and social networks where language didn't matter as much.

But with AI, language and cultural context are central to the product. An OCR system that doesn't understand Devanagari ligatures is useless. A voice assistant that crashes on code-switched input is worse than useless.

Sarvam AI understood this before the venture capital market did. They built for the problem rather than applying global solutions to local contexts. That focus is paying off.

Investors are noticing. More importantly, enterprises are noticing. When a mid-sized Indian bank switches from manual document processing to Sarvam's OCR and saves $100K annually, that's validation stronger than any marketing claim.

The question now is whether Sarvam can execute on the opportunity. Can they maintain technical quality while scaling? Can they build a company culture and business model that survives beyond the founders? Can they defend their market when global competitors eventually pay attention?

Those are the real challenges ahead. Their technology seems solid. Their market timing is excellent. Their team has the right expertise. But execution is where most startups stumble.

What I'm confident about: Sarvam AI has proven that regional specialization in AI is a legitimate business thesis. Whether they become the dominant player in that space or whether they're an early mover that gets acquired by someone bigger, the direction is clear.

The era where Chat GPT and Gemini dominated global AI is ending. The era where specialized AI serves specialized markets is beginning. Sarvam AI is proof.

Related Resources to Deepen Your Understanding

If you're interested in building AI products for emerging markets or exploring regional language AI further, here are some areas worth investigating:

- Regional AI Research: Follow publications from AI research centers in India, Southeast Asia, and the Middle East. They're publishing increasingly sophisticated work on multilingual models and regional optimization.

- Benchmark Standards: Look into organizations developing AI evaluation standards for non-English languages. Standardized benchmarks are crucial for comparing regional AI solutions objectively.

- Open-Source Alternatives: Explore open-source multilingual models from Hugging Face and others that are optimizing for regional languages. Community-driven alternatives often outpace commercial offerings.

- Enterprise Integration Guides: If you're considering regional AI deployment, study case studies from companies that have successfully integrated specialized models into existing workflows.

The conversation about regional AI is just beginning. Sarvam AI is one notable player, but they're part of a broader movement reshaping how we think about AI's global deployment.

Key Takeaways

- Sarvam AI specializes in regional language AI, not competing with ChatGPT on general intelligence. They focus on OCR and multilingual speech for Indian languages where global models underperform.

- Their claimed advantages are real: 95%+ OCR accuracy on Hindi versus Google's 85-88%, and 8-12% better speech recognition accuracy on code-switched audio. These improvements are driven by training data and architecture tailored specifically for regional languages.

- The business thesis is validated by real enterprise customers: banks saving $100K+ annually on document processing, e-commerce companies improving customer satisfaction, government agencies digitizing historical records. This isn't marketing—it's infrastructure solving actual problems.

- Regional AI specialization represents a broader trend fragmenting the 'one AI to rule them all' era into specialized AI serving specialized markets. Similar patterns emerging with Perplexity (research), Cursor (code), Midjourney (images), and Sarvam (regional languages).

- For enterprises in India or building multilingual products, regional specialists often deliver better results than general-purpose platforms on domain-specific tasks. The choice depends on your specific use case, not absolute model intelligence.

Related Articles

- Terra Industries Raises $22M: Africa's Gen Z Defense Tech Boom [2025]

- Spotify's Page Match: Bridging Physical Books and Audiobooks [2025]

- ChatGPT Outages & Service Issues: What's Happening [2025]

- Amazon Alexa Plus Nationwide Launch: Pricing, Features & Comparison [2025]

- Sam Altman's India Visit: Why AI Leaders Are Converging on New Delhi [2026]

![Sarvam AI vs ChatGPT and Gemini: The Indian Startup Challenging Giants [2025]](https://tryrunable.com/blog/sarvam-ai-vs-chatgpt-and-gemini-the-indian-startup-challengi/image-1-1771468682017.jpg)