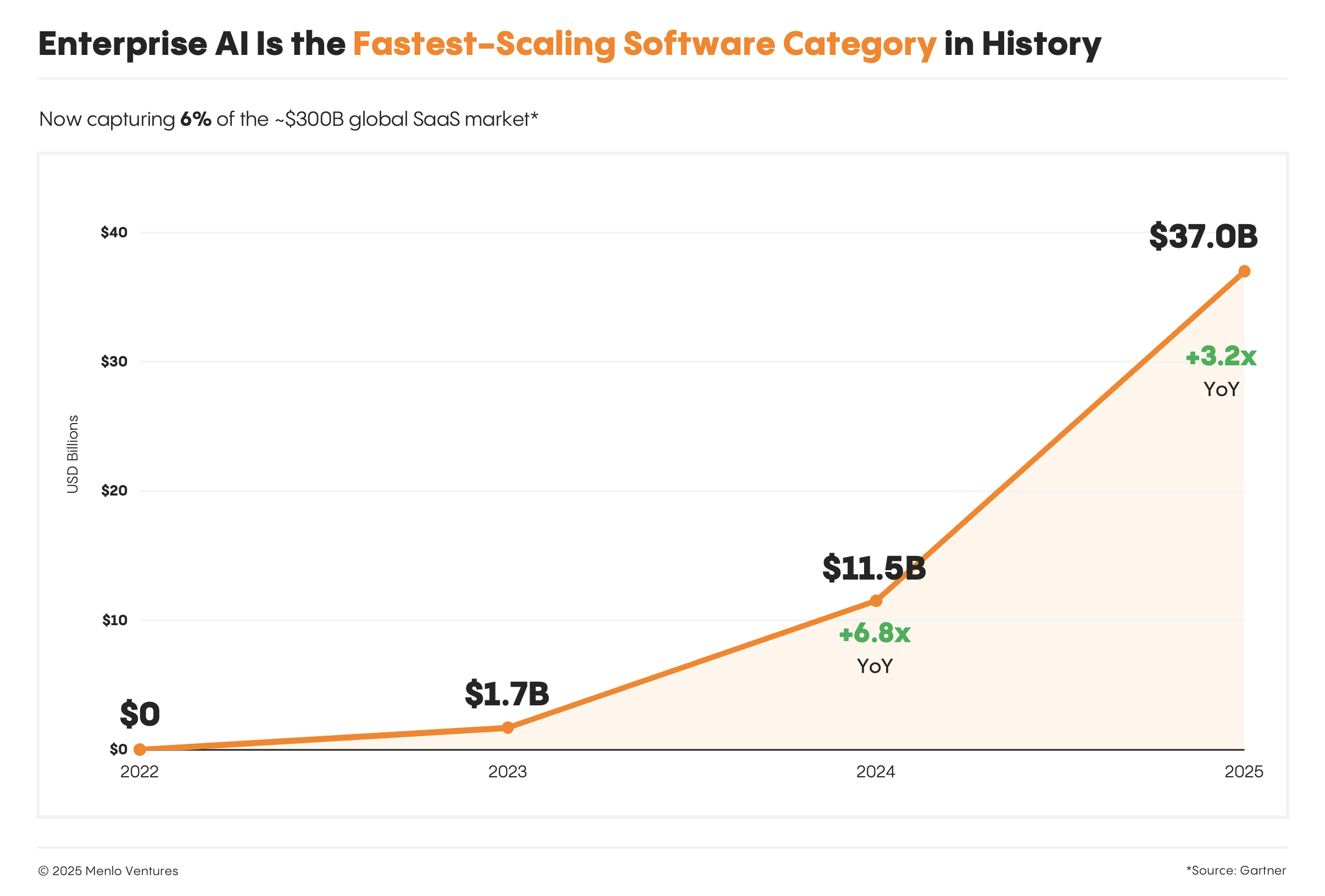

Scaling AI into Production: The New Frontier in Enterprise Infrastructure [2025]

Last month, a leading financial services company faced an unexpected challenge: their AI model, designed to predict market trends, was generating inconsistent results in production. The culprit wasn't the model itself but the infrastructure supporting it. This scenario is becoming increasingly common as enterprises scale AI from experimentation to full-fledged production environments.

TL;DR

- Infrastructure Evolution: Traditional setups struggle with AI demands, prompting a shift to hybrid and multi-cloud environments. According to IBM's insights on data center modernization, these environments offer the necessary scalability and flexibility.

- Data Challenges: Managing and processing vast datasets requires advanced storage and real-time processing solutions. RSM US LLP emphasizes the importance of a semantic layer for building trusted AI solutions.

- Security Concerns: AI introduces new vulnerabilities that demand robust defenses. IBM has announced new cybersecurity measures to address these challenges.

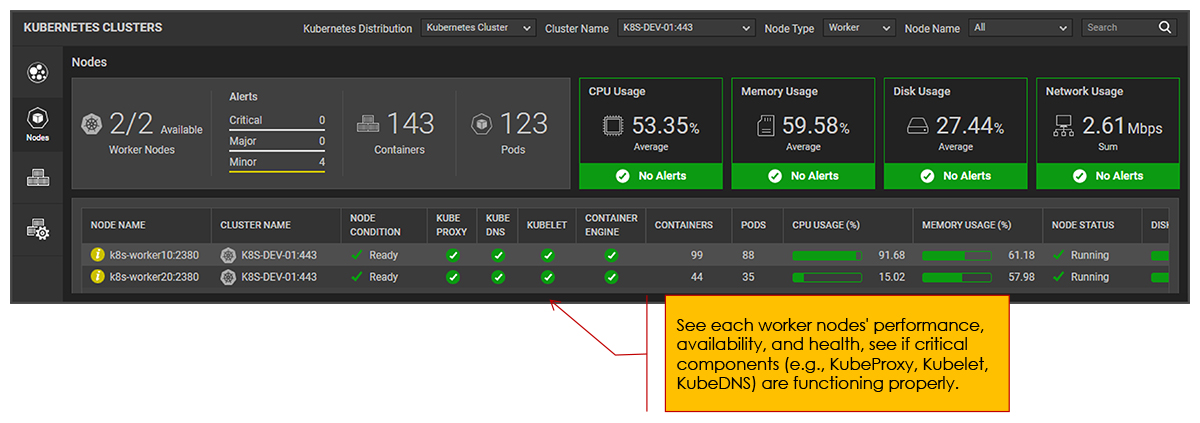

- Scalability Solutions: Containerization and orchestration tools like Kubernetes are essential for scaling AI workloads. NVIDIA discusses running large-scale GPU workloads on Kubernetes.

- Future Trends: Edge computing and AIOps are emerging as crucial components of AI infrastructure. As noted by Cloud Native Now, AI is transforming cloud-native operations.

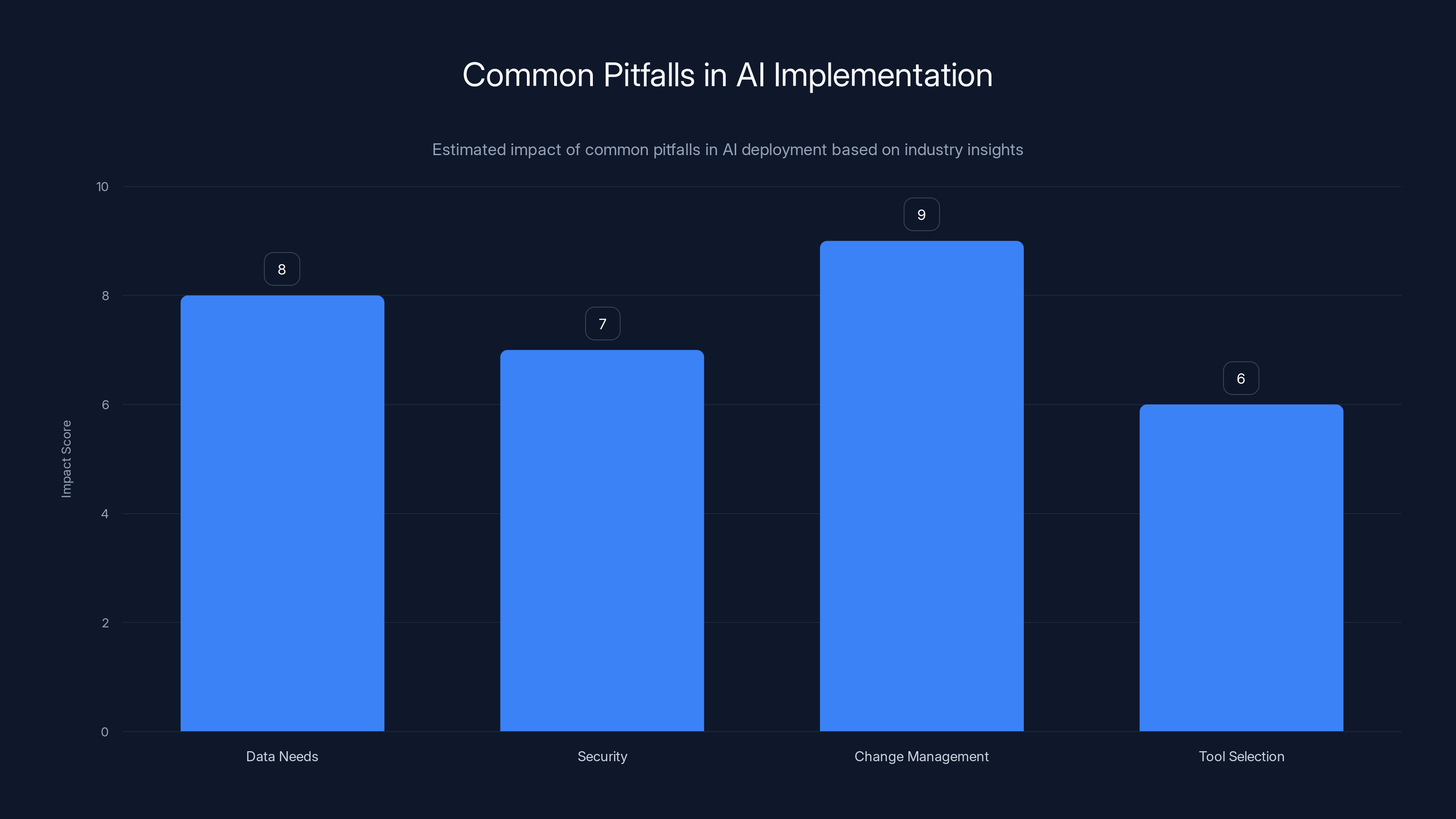

Change management is often the most impactful pitfall in AI implementation, followed closely by data needs and security. Estimated data based on industry insights.

Introduction

Scaling AI into production isn't just a technical challenge; it's a fundamental reshaping of enterprise infrastructure. As organizations move from AI pilots and proofs of concept to deploying AI at scale, they run into challenges across infrastructure, data, security, and scalability. This article examines each of these challenges, offers practical implementation guidance, and looks ahead to future trends.

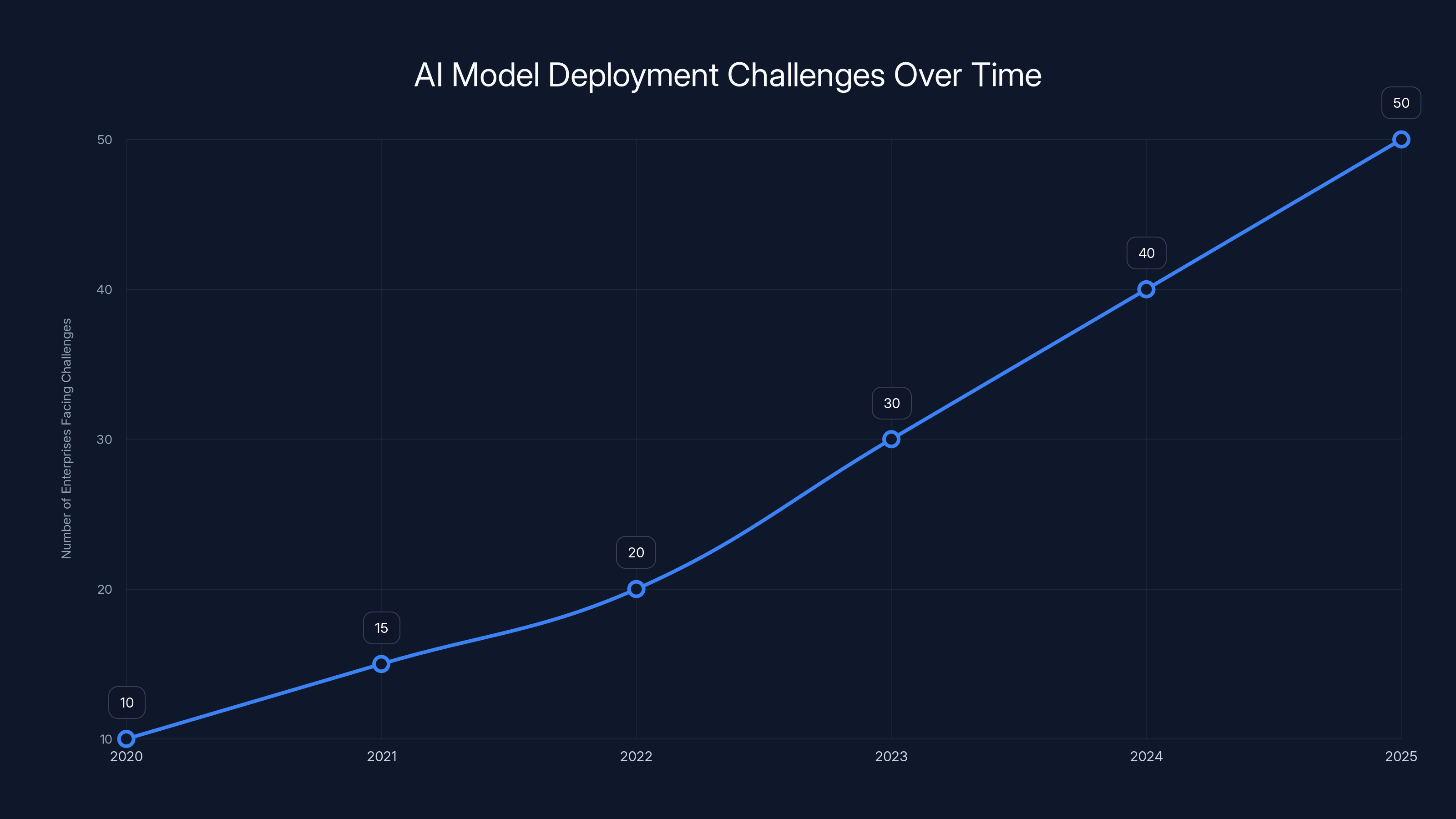

The number of enterprises facing AI deployment challenges is projected to increase significantly by 2025 as more companies scale AI into production. (Estimated data)

The Infrastructure Shift

Traditional vs. Modern Infrastructure

Traditional IT infrastructures, often characterized by monolithic architectures and on-premises data centers, are ill-suited for the dynamic and resource-intensive nature of AI workloads. AI models require rapid data processing, high throughput, and extensive computational resources, which traditional systems struggle to provide.

Modern Infrastructure Solutions:

- Hybrid Cloud: Combines on-premises resources with cloud services, offering scalability and flexibility.

- Multi-Cloud Environments: Use multiple cloud providers to optimize cost and performance, as highlighted in IBM's insights on data center modernization.
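The cost/performance trade-off behind multi-cloud placement can be sketched as a simple selection rule: among the providers that meet a latency target, pick the cheapest. This is a minimal illustration under stated assumptions, not any vendor's scheduler; the provider names, prices, and latencies below are hypothetical placeholders.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ProviderOffer:
    """One candidate placement. All names and numbers used below are hypothetical."""
    name: str
    usd_per_gpu_hour: float
    p95_latency_ms: float


def choose_placement(offers: list[ProviderOffer], max_latency_ms: float) -> ProviderOffer | None:
    """Return the cheapest offer that meets the latency target, or None if none qualify."""
    eligible = [o for o in offers if o.p95_latency_ms <= max_latency_ms]
    if not eligible:
        return None
    # Among offers that satisfy the latency objective, minimize cost.
    return min(eligible, key=lambda o: o.usd_per_gpu_hour)


# Hypothetical offers spanning two clouds and an on-premises data center.
offers = [
    ProviderOffer("provider-a/us-east", 3.10, 40.0),
    ProviderOffer("provider-b/us-east", 2.60, 85.0),
    ProviderOffer("on-prem/dc-1", 2.90, 25.0),
]

print(choose_placement(offers, max_latency_ms=50.0).name)   # on-prem/dc-1
print(choose_placement(offers, max_latency_ms=100.0).name)  # provider-b/us-east
```

Loosening the latency target lets the cheaper but slower provider win, which is the basic lever multi-cloud strategies pull.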

The Role of Hyperconvergence

Hyperconverged infrastructure (HCI) integrates compute, storage, and networking into a single system, which simplifies management and makes AI workloads easier to scale. HCI solutions like Nutanix provide a streamlined approach to deploying AI applications, reducing complexity and improving resource utilization.

Data: The Lifeblood of AI

Data Management Challenges

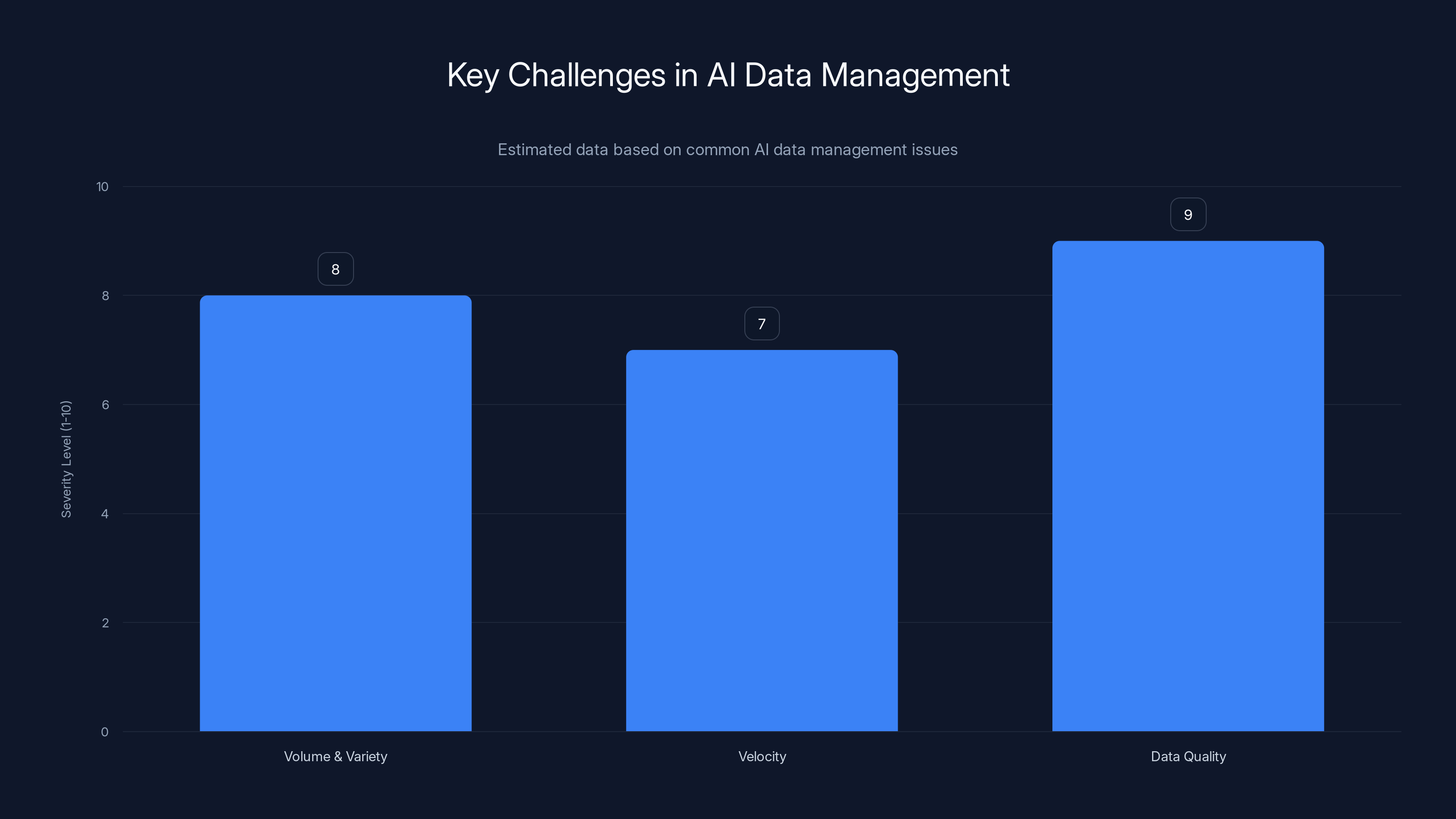

AI models thrive on data, but managing vast datasets presents significant challenges:

- Volume and Variety: AI requires large volumes of diverse data, which can strain storage systems. The data center market is evolving to meet these demands.

- Velocity: Real-time data processing is essential for applications like fraud detection.

Solutions:

- Data Lakes: Centralized repositories that store structured and unstructured data at scale.

- Real-Time Processing Engines: Tools like Apache Kafka and Apache Flink enable real-time data streaming and processing.
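The fraud-detection case above depends on keeping a small amount of per-key state over a moving time window, the kind of keyed, windowed state that engines like Apache Flink manage at scale, typically while consuming events from a Kafka topic. The sketch below is plain Python and does not use either tool's API; it assumes events arrive in timestamp order, and the velocity rule (more than three transactions per card within 60 seconds) is a hypothetical example.

```python
from __future__ import annotations

from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Transaction:
    card_id: str
    timestamp_s: float  # event time in seconds
    amount: float


class VelocityRule:
    """Flag a card that makes more than `max_txns` transactions within `window_s` seconds.

    A toy, in-memory stand-in for keyed window state in a stream processor (hypothetical rule).
    """

    def __init__(self, max_txns: int, window_s: float) -> None:
        self.max_txns = max_txns
        self.window_s = window_s
        self._recent: dict[str, deque[float]] = defaultdict(deque)

    def process(self, txn: Transaction) -> bool:
        """Consume one event and return True if it should be flagged."""
        window = self._recent[txn.card_id]
        window.append(txn.timestamp_s)
        # Evict timestamps that have fallen out of the window (assumes in-order events).
        while window and txn.timestamp_s - window[0] > self.window_s:
            window.popleft()
        return len(window) > self.max_txns


rule = VelocityRule(max_txns=3, window_s=60.0)
events = [
    Transaction("card-1", 0, 20.0),
    Transaction("card-1", 10, 35.0),
    Transaction("card-2", 15, 12.0),
    Transaction("card-1", 20, 50.0),
    Transaction("card-1", 30, 99.0),   # 4th card-1 transaction within 60 s: flagged
    Transaction("card-1", 200, 5.0),   # older events have left the window: not flagged
]
flags = [rule.process(e) for e in events]
print(flags)  # [False, False, False, False, True, False]
```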

Ensuring Data Quality

Data quality directly impacts AI model performance. Enterprises must establish robust data governance frameworks to ensure data accuracy and consistency. Implementing automated data cleansing and validation processes can significantly enhance model reliability.
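Automated validation can be as simple as checking each record against declared rules and quarantining failures before they reach training. A minimal sketch, assuming a hypothetical schema with `customer_id`, `age`, and `amount` fields; production pipelines would typically rely on a dedicated validation framework rather than hand-written rules.

```python
from __future__ import annotations

from typing import Any, Callable

# Hypothetical rules for a customer feature table: field name -> (check, message).
Rule = tuple[Callable[[Any], bool], str]
RULES: dict[str, Rule] = {
    "customer_id": (lambda v: isinstance(v, str) and v != "", "must be a non-empty string"),
    "age": (lambda v: isinstance(v, int) and not isinstance(v, bool) and 18 <= v <= 120,
            "must be an int in [18, 120]"),
    "amount": (lambda v: isinstance(v, (int, float)) and v >= 0, "must be a non-negative number"),
}


def validate(record: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the record passed every rule."""
    problems = []
    for field, (check, message) in RULES.items():
        if field not in record or record[field] is None:
            problems.append(f"{field}: missing")
        elif not check(record[field]):
            problems.append(f"{field}: {message}")
    return problems


def split_valid(records: list[dict[str, Any]]) -> tuple[list[dict], list[dict]]:
    """Partition records into (clean, quarantined) so bad rows never reach training."""
    clean, quarantined = [], []
    for r in records:
        (quarantined if validate(r) else clean).append(r)
    return clean, quarantined


batch = [
    {"customer_id": "c-001", "age": 34, "amount": 120.5},
    {"customer_id": "", "age": 17, "amount": 10.0},
    {"customer_id": "c-003", "amount": -5},
]
clean, quarantined = split_valid(batch)
print(len(clean), len(quarantined))  # 1 2
print(validate(batch[2]))  # ['age: missing', 'amount: must be a non-negative number']
```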

Data quality is the most critical challenge in AI data management, followed by handling volume and variety. Estimated data.

Security Concerns in AI Infrastructure

Emerging Threats

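AI systems introduce new security vulnerabilities. Model inversion attacks can reconstruct sensitive training data from a model's outputs, threatening privacy, while adversarial examples are subtly manipulated inputs that push a model into wrong predictions, undermining integrity. As Security Boulevard notes, these threats require vigilant monitoring.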
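To make adversarial examples concrete, the sketch below applies the fast gradient sign method (FGSM) to a tiny logistic-regression model. For binary cross-entropy loss, the gradient with respect to input feature i is (p - y) * w_i, so nudging every feature by a small eps in the sign of that gradient lowers the model's confidence in the true class. The weights, input, and eps are made-up values chosen so the prediction flips; real attacks target far larger models, but the mechanism is the same.

```python
from __future__ import annotations

import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(w: list[float], b: float, x: list[float]) -> float:
    """Probability of class 1 under a logistic-regression model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def sign(v: float) -> int:
    """Return -1, 0, or 1."""
    return (v > 0) - (v < 0)


def fgsm(w: list[float], b: float, x: list[float], y: int, eps: float) -> list[float]:
    """FGSM for logistic regression: x_adv = x + eps * sign(d loss / d x)."""
    p = predict(w, b, x)
    grad = [(p - y) * wi for wi in w]  # gradient of binary cross-entropy w.r.t. x
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]


# Hypothetical model and input; the true label is 1.
w, b = [2.0, -1.0, 0.5], -0.25
x = [0.3, 0.1, 0.2]
x_adv = fgsm(w, b, x, y=1, eps=0.15)

print(f"clean p(class 1) = {predict(w, b, x):.3f}")            # 0.587
print(f"adversarial p(class 1) = {predict(w, b, x_adv):.3f}")  # 0.456
```

Each feature moved by only 0.15, yet the prediction crossed the 0.5 decision boundary.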

Best Practices:

- Regular Security Audits: Continuous monitoring and vulnerability assessments are crucial.

- Encryption and Access Control: Protect sensitive data with strong encryption and role-based access controls.
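Role-based access control boils down to a deny-by-default lookup from roles to permissions. A minimal sketch covering only the access-control half of this practice; the role names and permissions are hypothetical, and in practice these mappings would live in an identity and access management system rather than in code.

```python
from __future__ import annotations

from enum import Enum


class Permission(Enum):
    READ_FEATURES = "read_features"
    READ_PII = "read_pii"
    DEPLOY_MODEL = "deploy_model"
    VIEW_PREDICTIONS = "view_predictions"


# Hypothetical role definitions for an ML platform.
ROLE_PERMISSIONS: dict[str, frozenset[Permission]] = {
    "data_scientist": frozenset({Permission.READ_FEATURES, Permission.VIEW_PREDICTIONS}),
    "ml_engineer": frozenset({Permission.READ_FEATURES, Permission.DEPLOY_MODEL}),
    "privacy_officer": frozenset({Permission.READ_PII}),
}


def is_allowed(user_roles: set[str], needed: Permission) -> bool:
    """Deny by default: grant only if at least one of the user's roles carries the permission.

    Unknown roles map to an empty permission set rather than raising.
    """
    return any(needed in ROLE_PERMISSIONS.get(role, frozenset()) for role in user_roles)


print(is_allowed({"data_scientist"}, Permission.READ_PII))                     # False
print(is_allowed({"data_scientist", "privacy_officer"}, Permission.READ_PII))  # True
print(is_allowed({"unknown_role"}, Permission.DEPLOY_MODEL))                   # False
```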

Compliance and Regulation

With increasing regulatory scrutiny, organizations must ensure AI systems comply with data protection regulations like GDPR and CCPA. This involves implementing transparent data handling practices and maintaining audit trails.
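One common way to make an audit trail tamper-evident is to hash-chain its entries, so each record includes the hash of the one before it. This is a minimal sketch of that idea, not a requirement spelled out by GDPR or CCPA; the actor and resource names are hypothetical. Someone able to rewrite the entire log could recompute the chain, so real systems usually also store the latest hash somewhere independent.

```python
import hashlib
import json
import time


class AuditLog:
    """Append-only log where each entry stores the hash of the previous entry.

    Editing or deleting any past entry breaks the chain, which `verify` detects.
    """

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys) so the same body always hashes the same way.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, actor: str, action: str, resource: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"ts": time.time(), "actor": actor, "action": action,
                "resource": resource, "prev_hash": prev_hash}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or self._digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("svc-trainer", "read", "features/customers")   # hypothetical actor and resource
log.append("alice", "export", "predictions/2025-q1")
print(log.verify())  # True
log.entries[0]["actor"] = "mallory"  # tamper with history
print(log.verify())  # False
```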

Scalability: Meeting the Demand

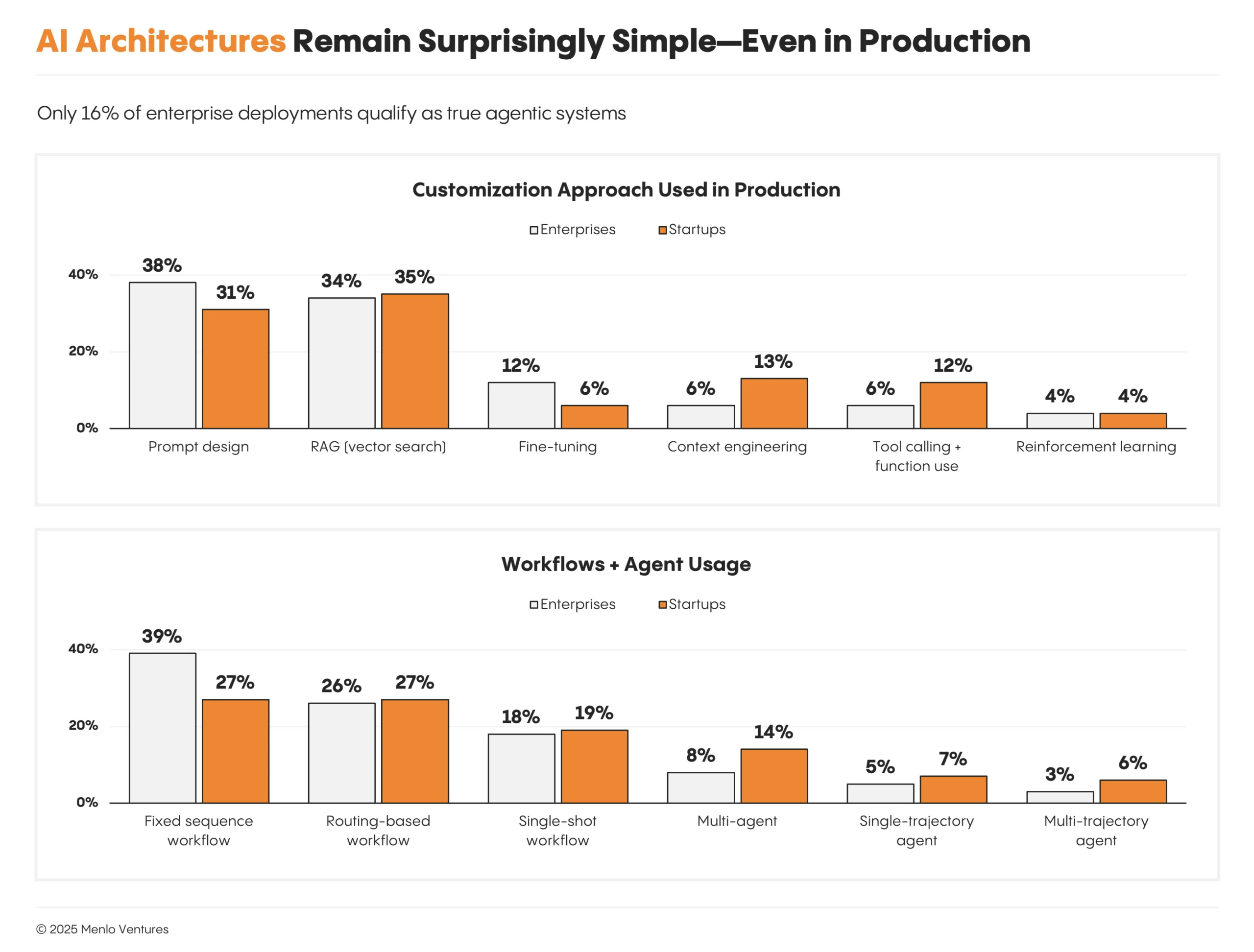

Containerization and Orchestration

Containers encapsulate AI applications and their dependencies, ensuring consistent deployment across environments. NVIDIA highlights the importance of Kubernetes in scaling AI workloads efficiently.

- Docker: Simplifies packaging and deployment of AI applications.

- Kubernetes: Automates scaling, deployment, and management of containerized applications.
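Kubernetes' Horizontal Pod Autoscaler illustrates what "automates scaling" means in practice. Its documented core rule is desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue), with no change when the ratio is within a tolerance of 1.0 (0.1 by default). The sketch reproduces only that arithmetic plus min/max clamping; the real controller also applies stabilization windows and other behaviors, and the default bounds here are arbitrary choices for the example.

```python
import math


def hpa_desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                         min_replicas: int = 1, max_replicas: int = 10,
                         tolerance: float = 0.1) -> int:
    """Simplified Horizontal Pod Autoscaler rule (metric e.g. average CPU utilization %).

    desired = ceil(current_replicas * current_metric / target_metric), skipped when the
    ratio is within `tolerance` of 1.0, then clamped to [min_replicas, max_replicas].
    """
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: leave the workload alone
    else:
        desired = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, desired))


print(hpa_desired_replicas(4, 90, 60))   # 6  (scale out)
print(hpa_desired_replicas(4, 62, 60))   # 4  (within tolerance)
print(hpa_desired_replicas(8, 200, 50))  # 10 (32, clamped to max_replicas)
print(hpa_desired_replicas(4, 30, 60))   # 2  (scale in)
```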

Distributed Computing

Distributed computing frameworks like Apache Spark and Hadoop enable processing of large datasets across clusters, providing the computational power necessary for AI at scale.
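Hadoop MapReduce and Spark's key-value operations both follow a map, shuffle, reduce pattern: workers process partitions of the data independently, intermediate results are grouped by key, and each group is combined. The single-process word count below only illustrates that data flow; a real cluster would run each map call on a different machine and move the shuffled data over the network. The input lines are made up.

```python
from __future__ import annotations

from collections import defaultdict


def map_phase(partition: list[str]) -> list[tuple[str, int]]:
    """Map: emit (word, 1) for every word in one partition of the input."""
    return [(word.lower(), 1) for line in partition for word in line.split()]


def shuffle(mapped: list[list[tuple[str, int]]]) -> dict[str, list[int]]:
    """Shuffle: group every emitted value by key across all partitions."""
    groups: dict[str, list[int]] = defaultdict(list)
    for pairs in mapped:
        for key, value in pairs:
            groups[key].append(value)
    return groups


def reduce_phase(groups: dict[str, list[int]]) -> dict[str, int]:
    """Reduce: combine the values for each key (here, a sum)."""
    return {key: sum(values) for key, values in groups.items()}


# Each inner list stands in for a data block a cluster would hand to a separate worker.
partitions = [
    ["GPU clusters scale", "models need data"],
    ["data needs GPU", "scale the data"],
]
mapped = [map_phase(p) for p in partitions]  # on a cluster, these run in parallel
counts = reduce_phase(shuffle(mapped))
print(counts["data"], counts["gpu"], counts["scale"])  # 3 2 2
```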

QUICK TIP: Start with small clusters and scale as needed to optimize resource utilization.

Future Trends in AI Infrastructure

Edge Computing

Edge computing brings computation closer to data sources, reducing latency and bandwidth usage. This is particularly beneficial for AI applications in IoT and autonomous systems. Demand for centralized capacity is growing at the same time: as Brief Glance reports, the data center industry is experiencing a gold rush driven by AI.

- Use Case: Autonomous vehicles processing sensor data in real time to make driving decisions.
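A quick calculation shows why latency matters for the autonomous-vehicle case: at 100 km/h a car covers about 27.8 m every second, so every millisecond spent waiting for a decision is distance traveled blind. The latency values below are hypothetical round numbers for illustration, not measurements of any real network or vehicle.

```python
def meters_traveled(speed_kmh: float, latency_ms: float) -> float:
    """Distance a vehicle covers while waiting `latency_ms` for a decision."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * latency_ms / 1000


# Hypothetical latencies: on-vehicle inference vs. a round trip to a remote data center.
for label, latency_ms in [("on-vehicle (edge)", 10), ("remote cloud round trip", 150)]:
    print(f"{label}: {meters_traveled(100, latency_ms):.2f} m at 100 km/h")
# on-vehicle (edge): 0.28 m at 100 km/h
# remote cloud round trip: 4.17 m at 100 km/h
```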

AIOps: Automating IT Operations

AIOps leverages AI to automate and enhance IT operations, improving system performance and reducing downtime. Platforms like Databricks integrate AIOps to monitor and manage complex infrastructures.
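A basic building block of AIOps-style monitoring is flagging metric values that deviate sharply from recent behavior. The sketch uses a rolling z-score against the preceding points; the window size, the threshold of 3 standard deviations, and the latency series are illustrative assumptions, not settings from any particular platform.

```python
from __future__ import annotations

import statistics


def rolling_zscore_anomalies(values: list[float], window: int = 10,
                             threshold: float = 3.0) -> list[int]:
    """Return indices whose value lies more than `threshold` standard deviations
    from the mean of the preceding `window` points."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mean = statistics.fmean(history)
        stdev = statistics.pstdev(history)
        if stdev == 0:
            continue  # flat history: z-score undefined, skip this point
        if abs(values[i] - mean) / stdev > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical p95 request latencies (ms) sampled once a minute; index 11 is a spike.
latencies_ms = [120, 118, 122, 121, 119, 120, 123, 117, 121, 119, 120, 410, 122, 118]
print(rolling_zscore_anomalies(latencies_ms))  # [11]
```

The spike also inflates the baseline for the following points, which is one reason production detectors often prefer more robust statistics such as the median.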

Implementation Guides

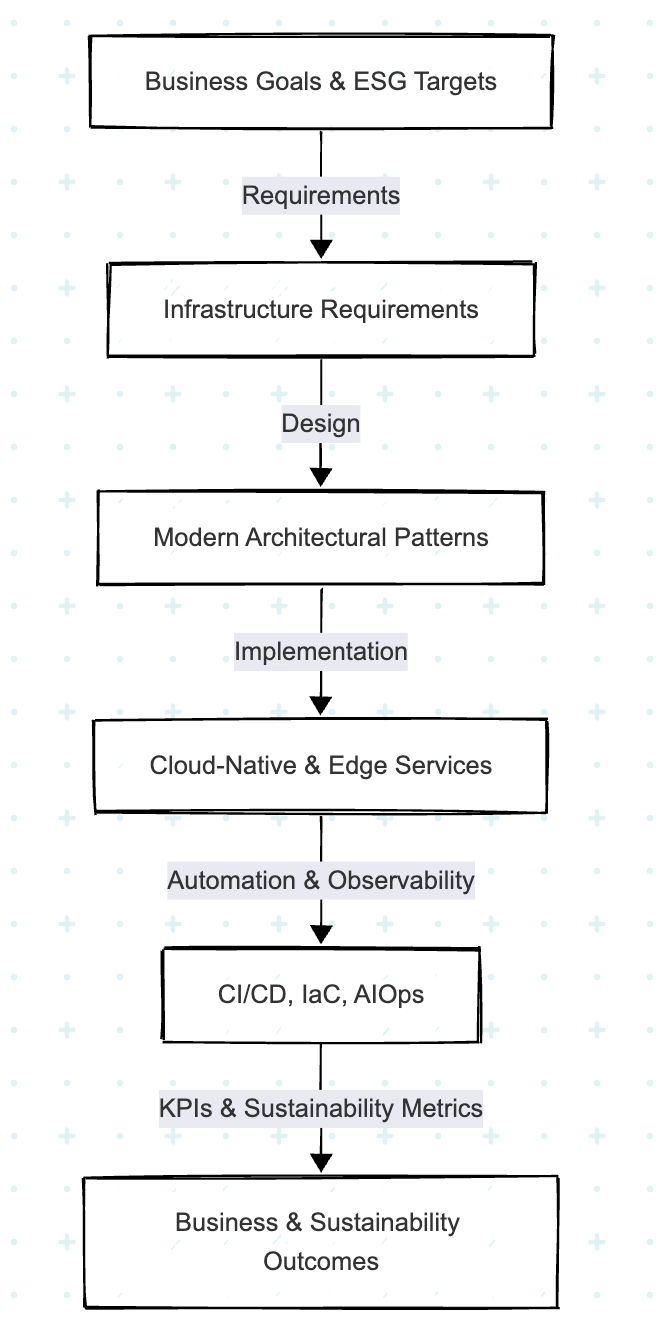

Step-by-Step Deployment

- Assess Infrastructure Needs: Evaluate current infrastructure capabilities and identify gaps.

- Choose the Right Tools: Select platforms and tools that align with business goals and technical requirements.

- Pilot and Iterate: Start with pilot projects to test AI models and infrastructure setups.

- Scale Gradually: Incrementally increase workload and infrastructure capacity based on performance metrics.
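Scaling gradually based on performance metrics can be expressed as a small gate that decides whether to widen a rollout. A minimal sketch; the traffic stages, the 1% error-rate limit, and the 250 ms p95 latency limit are hypothetical values, and dropping back to the smallest stage on failure is one design choice among several (holding at the current stage is another).

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical traffic stages: percent of requests routed to the new model or infrastructure.
STAGES = [1, 5, 25, 50, 100]


@dataclass(frozen=True)
class StageMetrics:
    error_rate: float      # fraction of failed requests, e.g. 0.004 = 0.4%
    p95_latency_ms: float


def next_stage(current_pct: int, metrics: StageMetrics,
               max_error_rate: float = 0.01, max_p95_ms: float = 250.0) -> int:
    """Advance one stage if the current stage is healthy; otherwise drop to the smallest stage."""
    healthy = metrics.error_rate <= max_error_rate and metrics.p95_latency_ms <= max_p95_ms
    if not healthy:
        return STAGES[0]
    idx = STAGES.index(current_pct)
    return STAGES[min(idx + 1, len(STAGES) - 1)]


print(next_stage(5, StageMetrics(error_rate=0.004, p95_latency_ms=180)))    # 25
print(next_stage(25, StageMetrics(error_rate=0.030, p95_latency_ms=180)))   # 1
print(next_stage(100, StageMetrics(error_rate=0.002, p95_latency_ms=120)))  # 100
```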

Common Pitfalls and Solutions

- Underestimating Data Needs: Ensure data infrastructure can handle expected growth and variety.

- Ignoring Security: Proactively integrate security measures into the AI deployment process.

- Overlooking Change Management: Prepare teams for new workflows and technologies through training and support.
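Planning for expected data growth is compound-growth arithmetic: storage after n months is current * (1 + g) ** n for a monthly growth rate g. The 100 TB starting point, 10% monthly growth, and 250 TB capacity below are hypothetical figures chosen for illustration.

```python
import math


def projected_storage_tb(current_tb: float, monthly_growth: float, months: int) -> float:
    """Compound growth: current_tb * (1 + monthly_growth) ** months."""
    return current_tb * (1 + monthly_growth) ** months


def months_until_full(current_tb: float, capacity_tb: float, monthly_growth: float) -> int:
    """Smallest whole number of months after which projected usage exceeds capacity."""
    if monthly_growth <= 0:
        raise ValueError("monthly_growth must be positive")
    if current_tb > capacity_tb:
        return 0
    # Solve current_tb * (1 + g) ** n > capacity_tb for n, then take the next whole month.
    n = math.log(capacity_tb / current_tb) / math.log(1 + monthly_growth)
    return math.floor(n) + 1


print(f"{projected_storage_tb(100, 0.10, 12):.1f} TB after 12 months")  # 313.8 TB after 12 months
print(months_until_full(100, 250, 0.10))  # 10
```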

Conclusion

Scaling AI into production demands a comprehensive rethinking of enterprise infrastructure. By adopting modern solutions like hybrid cloud, HCI, and AIOps, organizations can overcome these challenges and unlock the full potential of AI. As AI technologies continue to evolve, staying ahead of trends like edge computing and enhanced security practices will be crucial for maintaining a competitive edge.

FAQ

What is AI infrastructure?

AI infrastructure refers to the combination of hardware, software, and networking resources required to deploy, manage, and scale AI applications effectively.

How does cloud computing support AI?

Cloud computing provides scalable resources and flexible environments, enabling rapid deployment and scaling of AI models without significant upfront investments.

What are the challenges of scaling AI?

Challenges include managing large datasets, ensuring data quality, maintaining security, and adapting traditional infrastructure to meet AI's dynamic requirements.

Why is containerization important for AI?

Containerization allows for consistent deployment of AI applications across different environments, improving scalability and resource utilization.

What role does edge computing play in AI?

Edge computing reduces latency by processing data closer to its source, which is crucial for real-time AI applications like autonomous vehicles and IoT.

How can AIOps improve IT operations?

AIOps automates IT operations using AI, enhancing system performance, reducing downtime, and providing predictive insights for proactive management.

Key Takeaways

- Traditional infrastructures struggle with AI demands, prompting a shift to modern solutions.

- Managing vast datasets is crucial for AI success, requiring advanced data management tools.

- AI introduces new security vulnerabilities, necessitating robust cybersecurity measures.

- Containerization and orchestration are essential for scaling AI workloads efficiently.

- Edge computing and AIOps are emerging as critical components of AI infrastructure.
