Taiwanese Startup Revolutionizes AI with Low-Power Accelerator and Legacy Chips [2025]

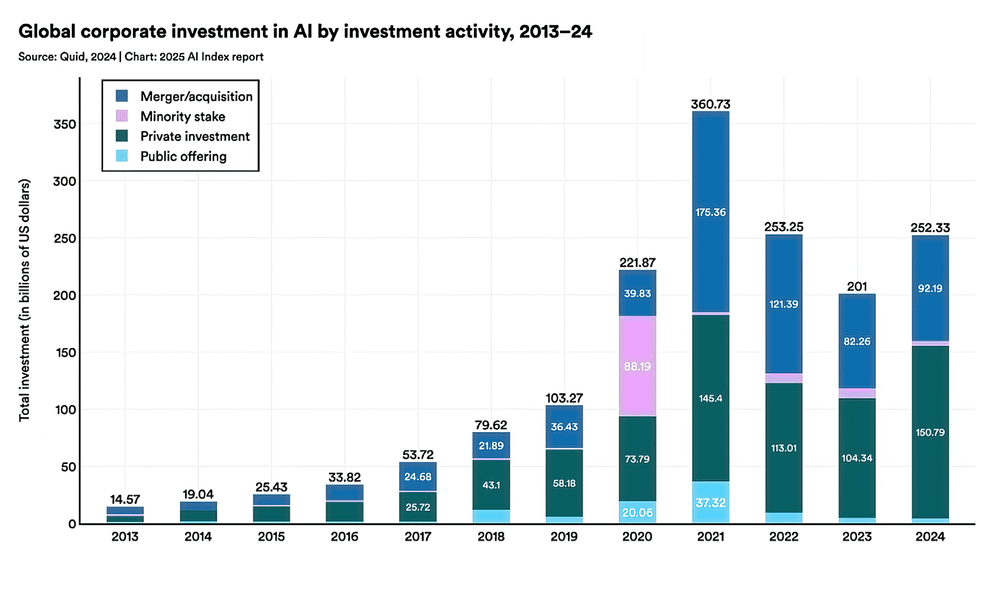

Last month, a Taiwanese startup shocked the AI industry by unveiling a low-power accelerator capable of running 700-billion-parameter models locally. This groundbreaking technology relies on older chips, yet it challenges giants like Nvidia and AMD. Let's dive into the details.

TL;DR

- Hardware: The startup uses 28nm chips and DDR4 memory, as highlighted in TechRadar's report.

- Capacity: It supports 700-billion-parameter models on just 240W, according to Data Center Knowledge.

- Infrastructure: It suggests that massive AI models don't necessarily require hyperscale GPU infrastructure, as discussed in AEI's report.

- Cost: It offers a cost-effective option for local AI model deployment, as noted by Market Data Forecast.

- Bottom Line: This innovation could democratize AI, making high-powered computing accessible to smaller enterprises.

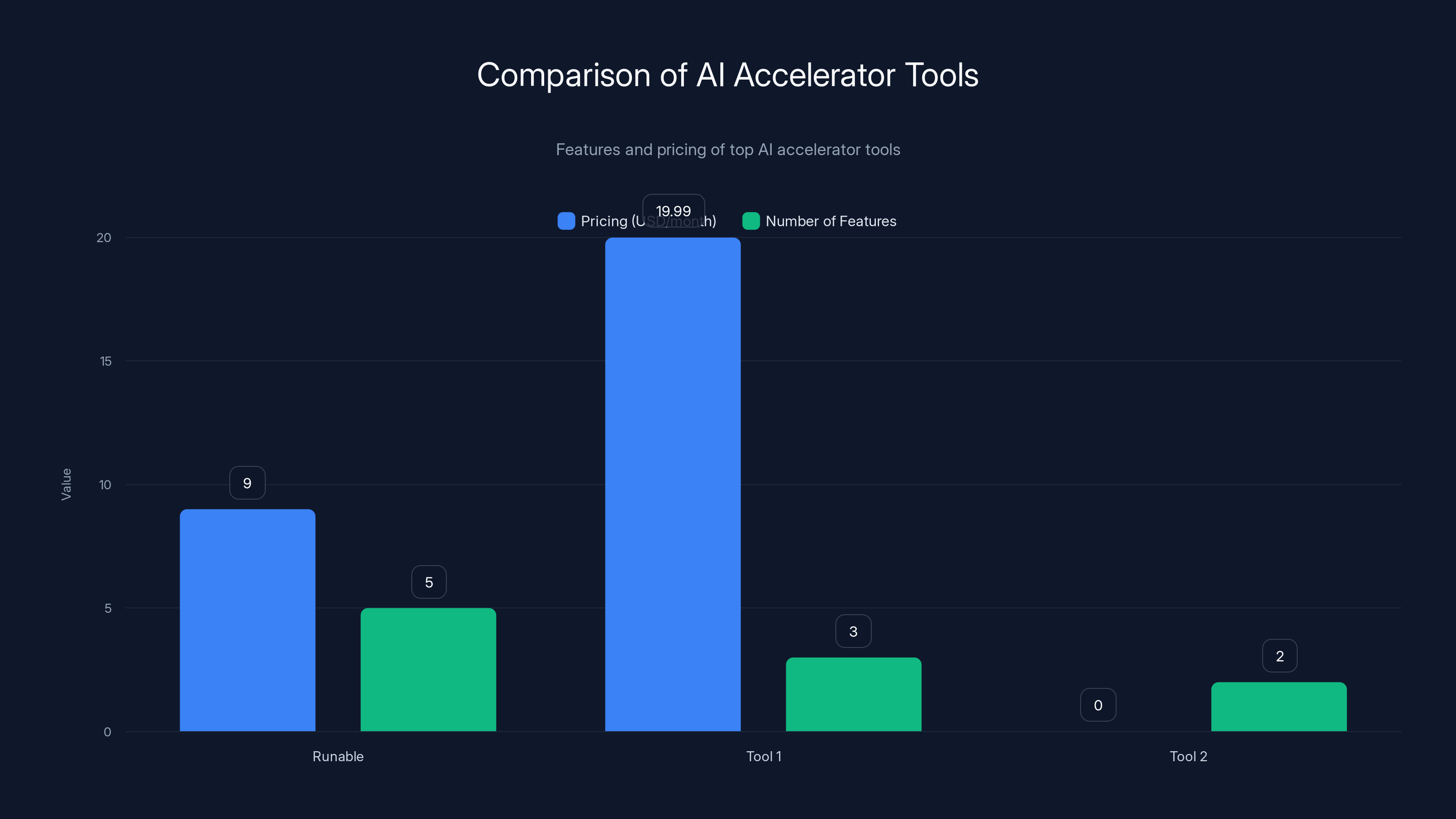

Runable offers a comprehensive set of features at a lower price point, while Tool 1 provides extensive app integration. Estimated data for features.

Introduction

Imagine running a model with 700 billion parameters on a setup that doesn't guzzle power or require cutting-edge hardware. Sound impossible? That's precisely what a Taiwanese startup says it has achieved. By harnessing older 28nm chips and DDR4 memory, it has built an AI accelerator that it says performs on par with the latest technologies at a fraction of the cost and energy consumption, according to Wccftech.

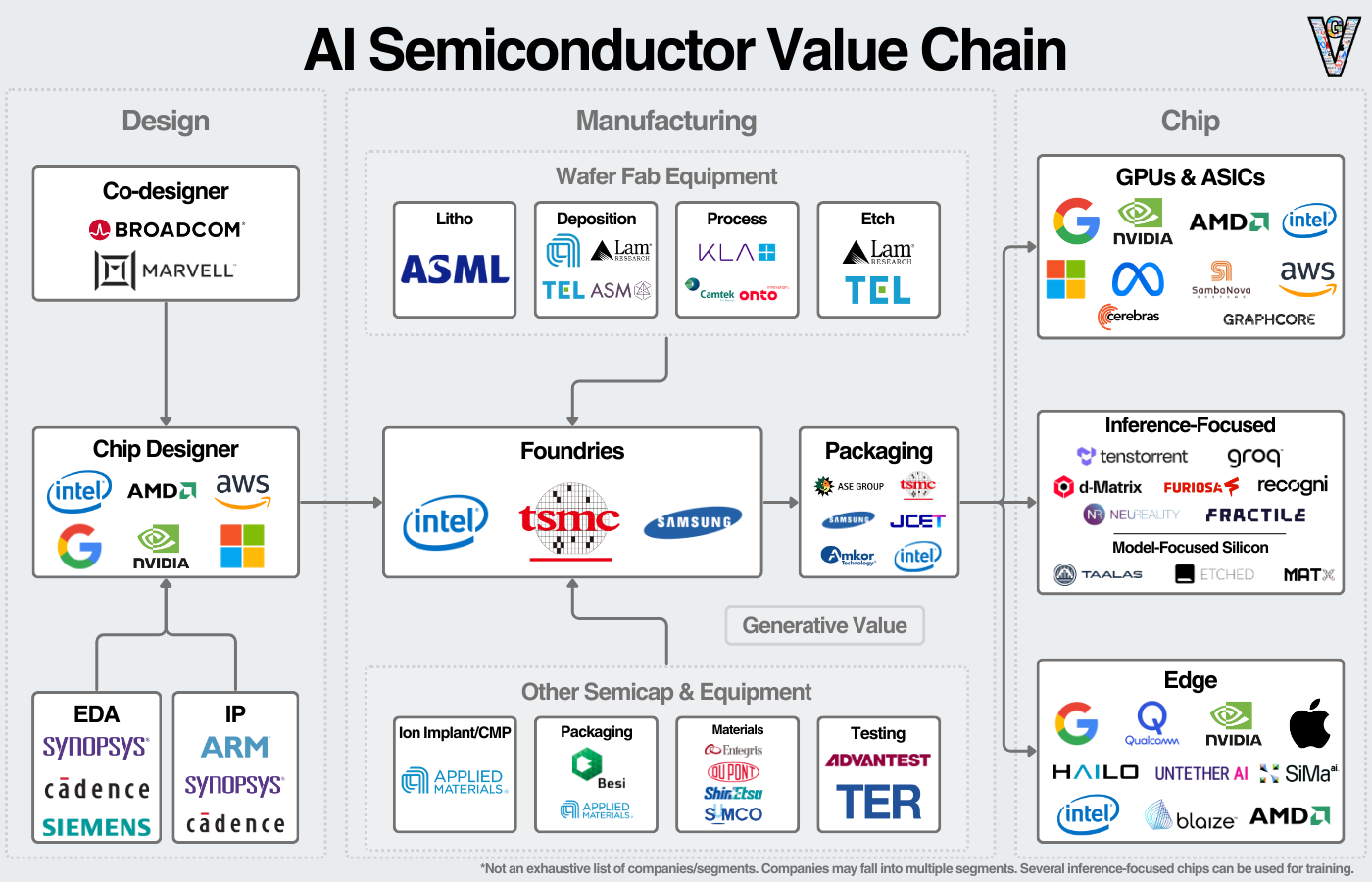

The Hardware Landscape

Understanding the Hardware

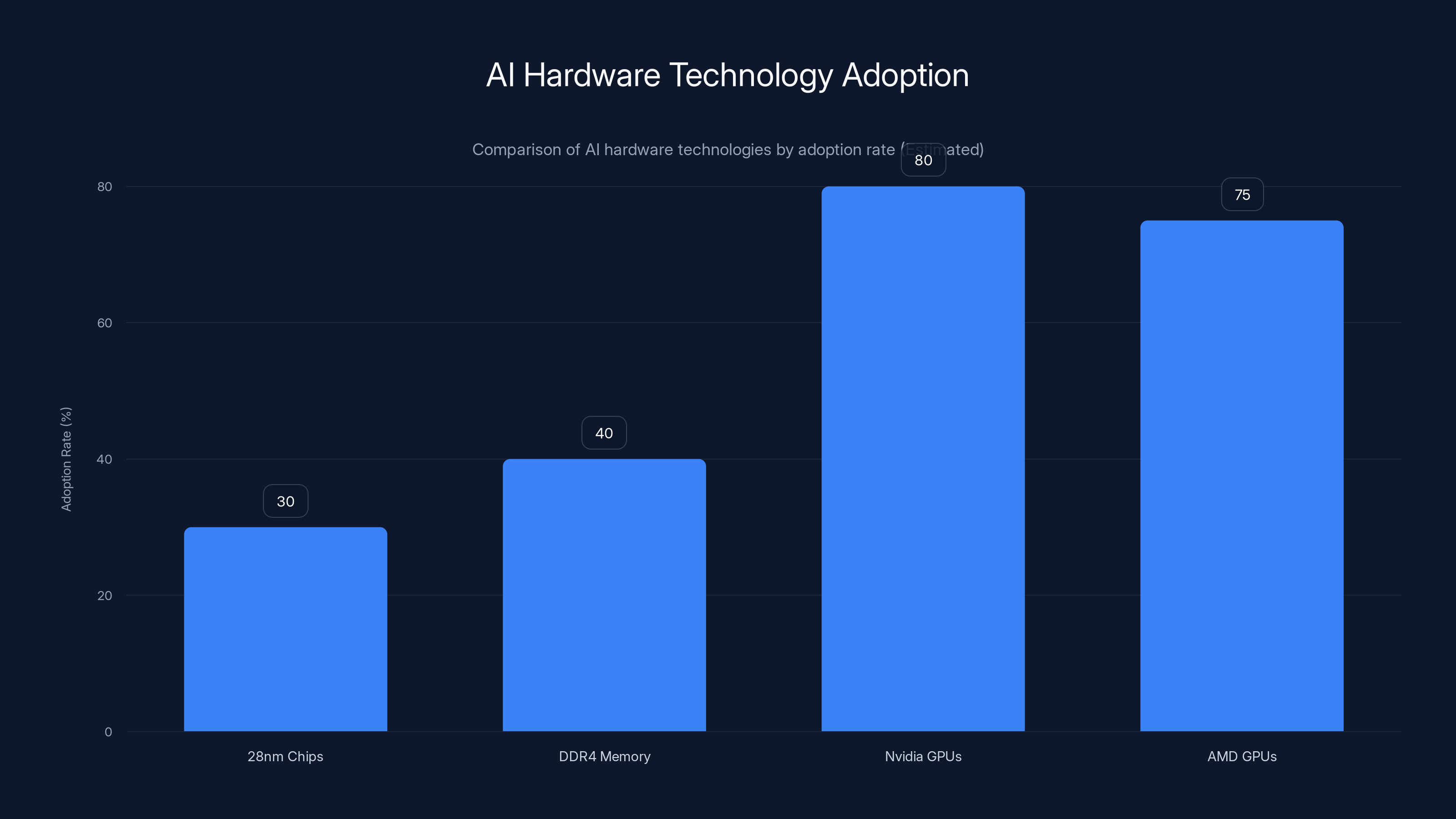

The AI hardware landscape has long been dominated by GPU behemoths like Nvidia and AMD. These companies have invested heavily in developing cutting-edge GPUs capable of handling the massive computations required by modern AI models. However, the Taiwanese startup's approach relies on 28nm chips and DDR4 memory—technologies considered outdated by today's standards, as noted in AMD's technical articles.

Why 28nm Chips?

Using 28nm chips might seem counterintuitive given the industry's push towards smaller, more efficient nodes. However, 28nm offers a sweet spot between cost and capability: the technology is mature, reliable, and significantly cheaper than newer nodes, as discussed in Daily Sabah.

The Role of DDR4 Memory

DDR4 memory, while not the latest, can provide adequate bandwidth and latency for AI workloads, especially when paired with optimized data handling strategies. DDR4 is also more affordable and more widely available than newer memory types such as DDR5 and HBM2, as highlighted by AI Multiple.
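To put DDR4 bandwidth in concrete terms, here is a minimal sketch of the standard peak-bandwidth arithmetic: transfer rate times the 8-byte width of a 64-bit DDR4 channel times the number of channels. The source does not say how many channels or which speed grade the accelerator uses, so the channel counts below are hypothetical.

```python
# Theoretical peak DDR4 bandwidth = transfers per second x bytes per transfer x channels.
# A DDR4 channel carries 64 data bits (8 bytes) per transfer, not counting ECC bits.


def ddr4_peak_bandwidth_gbs(mega_transfers: int, channels: int = 1, bus_bits: int = 64) -> float:
    """Return theoretical peak bandwidth in GB/s (10**9 bytes per second)."""
    bytes_per_transfer = bus_bits // 8
    return mega_transfers * 1e6 * bytes_per_transfer * channels / 1e9


if __name__ == "__main__":
    # DDR4-3200 is the top JEDEC-standard DDR4 speed grade.
    for channels in (1, 4, 8):  # hypothetical channel counts
        bandwidth = ddr4_peak_bandwidth_gbs(3200, channels)
        print(f"DDR4-3200 x {channels} channel(s): {bandwidth:.1f} GB/s")
```

For DDR4-3200 this gives 25.6, 102.4, and 204.8 GB/s for one, four, and eight channels. These are theoretical peaks; sustained bandwidth in practice is lower.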

Estimated data shows Nvidia and AMD GPUs lead in adoption, while 28nm chips and DDR4 memory offer cost-effective alternatives.

Performance Metrics

Power Consumption

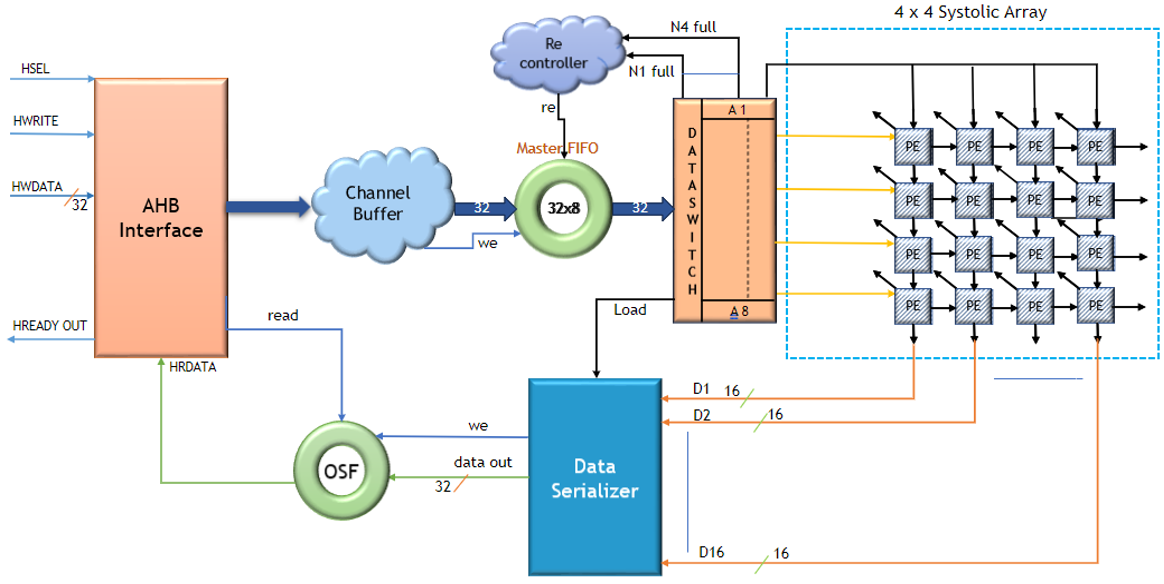

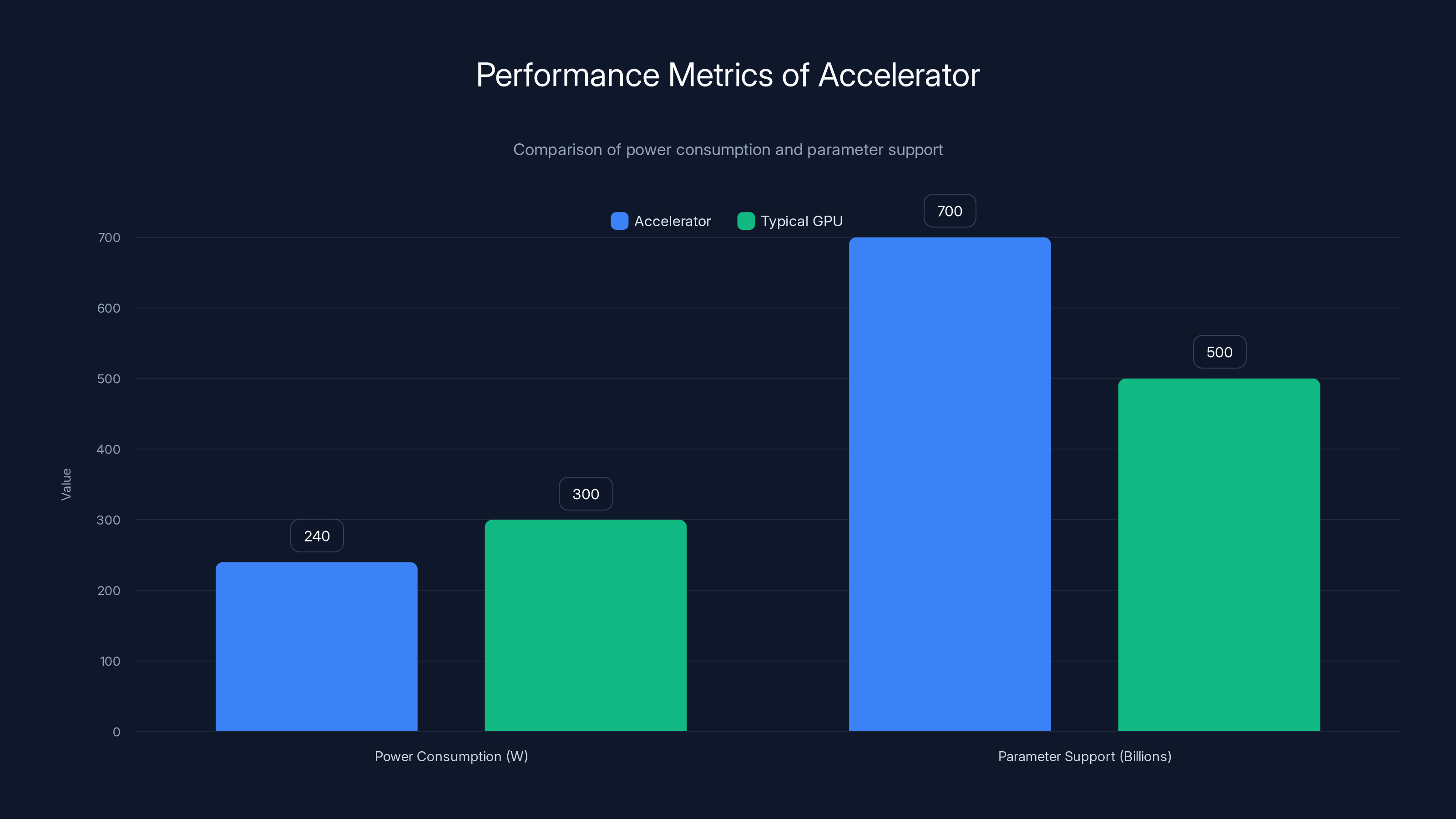

One of the standout features of this accelerator is its power efficiency. Consuming just 240W, it operates at a fraction of the wattage required by typical GPU setups. This is achieved through architectural optimizations that minimize unnecessary operations and maximize data throughput, as detailed in Nvidia's news release.
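A quick way to see what 240W means in operation is to convert it into daily energy and electricity cost. In this sketch the 240W figure is the reported one, while the GPU baseline (eight hypothetical 700W GPUs) and the price per kWh are placeholder assumptions, not numbers from the source.

```python
# Convert a constant power draw into daily energy (kWh) and electricity cost.
HOURS_PER_DAY = 24


def daily_energy_kwh(watts: float, hours: float = HOURS_PER_DAY) -> float:
    """Energy used by a device drawing `watts` continuously for `hours`."""
    return watts * hours / 1000


def daily_cost(watts: float, price_per_kwh: float, hours: float = HOURS_PER_DAY) -> float:
    """Electricity cost of one day's operation at `price_per_kwh`."""
    return daily_energy_kwh(watts, hours) * price_per_kwh


if __name__ == "__main__":
    price = 0.15  # hypothetical price per kWh
    setups = {
        "accelerator (reported 240 W)": 240,
        "GPU baseline (hypothetical 8 x 700 W)": 8 * 700,
    }
    for name, watts in setups.items():
        print(f"{name}: {daily_energy_kwh(watts):.2f} kWh/day, "
              f"{daily_cost(watts, price):.2f} per day")
```

At a constant 240W the accelerator uses 5.76 kWh per day. Treating peak wattage as continuous draw is a simplification for both setups; measured average consumption gives a fairer comparison.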

Parameter Support

Supporting 700 billion parameters is no small feat. The startup's accelerator achieves this by employing advanced memory management techniques that reduce data movement and optimize memory usage. Keeping data closer to the processing units reduces latency and increases throughput, as explained in Google's blog.
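Back-of-envelope arithmetic shows why data movement dominates at this scale. Weights alone take roughly parameters x bits / 8 bytes, and when generating one sequence at a time a dense model reads essentially all of its weights for every token, so memory bandwidth caps tokens per second. The sketch below assumes a dense model, ignores the KV cache and activations, and uses a hypothetical 204.8 GB/s of bandwidth; the source does not state the accelerator's precision or memory configuration, and caching, batching, or sparsity all change the picture.

```python
# Back-of-envelope sizing for serving a large dense model from DRAM.
# Assumes batch size 1 and ignores KV cache, activations, and on-chip reuse.
BITS_PER_PARAM = {"fp16": 16, "int8": 8, "int4": 4}


def weight_bytes(num_params: float, bits: int) -> float:
    """Bytes needed to store the weights alone."""
    return num_params * bits / 8


def max_tokens_per_s(num_params: float, bits: int, bandwidth_gbs: float) -> float:
    """Upper bound on decode speed if every token streams all weights from memory once."""
    return bandwidth_gbs * 1e9 / weight_bytes(num_params, bits)


if __name__ == "__main__":
    params = 700e9     # 700 billion parameters, as reported
    bandwidth = 204.8  # hypothetical: eight channels of DDR4-3200, in GB/s
    for name, bits in BITS_PER_PARAM.items():
        size_gb = weight_bytes(params, bits) / 1e9
        ceiling = max_tokens_per_s(params, bits, bandwidth)
        print(f"{name}: {size_gb:,.0f} GB of weights, at most {ceiling:.2f} tokens/s")
```

At FP16 the weights alone come to 1,400 GB, versus 350 GB at 4 bits, which is why cutting the bytes moved per token, whether through lower precision, caching, or keeping data on chip, matters so much for a design like this.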

Practical Implementation

Integrating the Accelerator

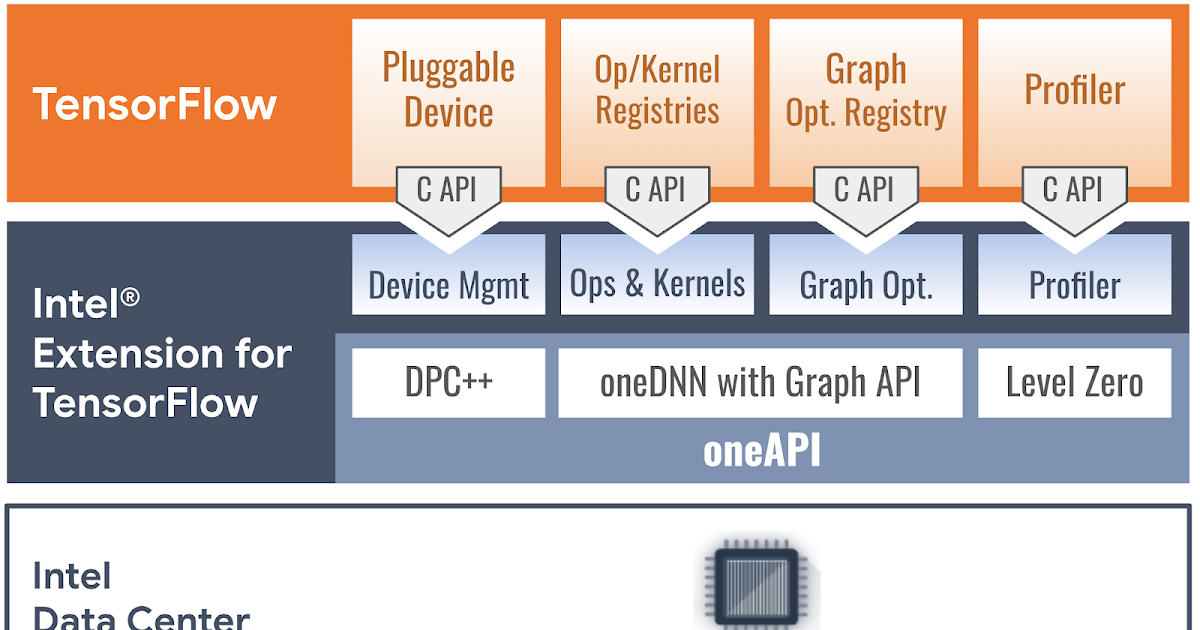

For developers and enterprises interested in adopting this technology, integration is reportedly straightforward: the accelerator is designed to work with existing software stacks through API support and compatibility with popular AI frameworks like TensorFlow and PyTorch, as noted in TechRadar.

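```python
# Example integration with TensorFlow.
# "AI_Accelerator" is a placeholder device type: the real name depends on the
# vendor's TensorFlow plugin, which the source does not specify.
import numpy as np
import tensorflow as tf

# Placeholder input; replace with data shaped for your model.
my_input_data = np.zeros((1, 128), dtype=np.float32)

with tf.device('/device:AI_Accelerator:0'):
    # Load and run your model on the accelerator
    model = tf.keras.models.load_model('my_model.h5')
    results = model.predict(my_input_data)
```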
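Because the device name depends on the vendor's plugin, it can help to check which devices TensorFlow actually sees before pinning work to one. The helper below is a hypothetical sketch: it looks for a device type (defaulting to the placeholder `AI_Accelerator` from the integration example) in a list of TensorFlow device names and falls back to the CPU. It relies only on `tf.config.list_logical_devices()`, a standard TensorFlow call, and still runs if TensorFlow is not installed.

```python
# Pick a device by type from TensorFlow device names, falling back to the CPU.
# "AI_Accelerator" is a hypothetical device type; use whatever name the
# vendor's plugin actually registers.


def choose_device(available: list[str], preferred_type: str = "AI_Accelerator") -> str:
    """Return the first device whose type matches `preferred_type`, else the CPU."""
    for name in available:
        # Names look like "/device:GPU:0" or "/job:localhost/replica:0/task:0/device:GPU:0",
        # so the device type is the second-to-last colon-separated field.
        parts = name.split(":")
        if len(parts) >= 3 and parts[-2].lower() == preferred_type.lower():
            return name
    return "/device:CPU:0"


if __name__ == "__main__":
    try:
        import tensorflow as tf
        names = [d.name for d in tf.config.list_logical_devices()]
    except ImportError:
        names = ["/device:CPU:0"]  # TensorFlow not installed: assume a CPU-only host
    print("Placing work on", choose_device(names))
```

The returned string can be passed to `tf.device(...)` in place of a hard-coded name, so the same script runs on hosts with and without the accelerator.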

Use Cases

The potential applications for this technology are vast. Here are a few examples:

- Edge AI: Deploy large models at the edge, such as in on-premises servers, with far lower power requirements than GPU clusters.

- Small Enterprises: Provide high-performance AI capabilities without significant infrastructure investments.

- Research Institutions: Access affordable, powerful computing for AI research.

Common Pitfalls and Solutions

Avoiding Bottlenecks

A common challenge when working with large models is avoiding bottlenecks. This accelerator addresses potential bottlenecks by implementing advanced caching algorithms and data locality strategies, so that data transfer rates are less likely to hold back performance, as discussed in TechRadar.
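The source does not describe the accelerator's caching algorithm. As a generic illustration of the idea, the sketch below implements a least-recently-used (LRU) cache for weight tiles: repeated requests for the same tile are served from fast memory, and only misses trigger a slow fetch. `LRUTileCache` and the `load_tile` callback are hypothetical names, not a vendor API.

```python
from collections import OrderedDict
from collections.abc import Callable, Hashable


class LRUTileCache:
    """Keep the most recently used weight tiles in fast memory.

    `load_tile` stands in for a slow fetch from backing memory such as DRAM;
    it is a hypothetical callback, not part of any vendor API.
    """

    def __init__(self, capacity: int, load_tile: Callable[[Hashable], bytes]):
        self.capacity = capacity
        self.load_tile = load_tile
        self._tiles: OrderedDict = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, key: Hashable) -> bytes:
        if key in self._tiles:
            self._tiles.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self._tiles[key]
        self.misses += 1
        tile = self.load_tile(key)  # slow path: fetch from backing memory
        self._tiles[key] = tile
        if len(self._tiles) > self.capacity:
            self._tiles.popitem(last=False)  # evict the least recently used tile
        return tile


if __name__ == "__main__":
    cache = LRUTileCache(capacity=2, load_tile=lambda k: f"tile-{k}".encode())
    for key in [0, 1, 0, 2, 0, 1]:
        cache.get(key)
    print("hits:", cache.hits, "misses:", cache.misses)
```

Real accelerators implement this kind of policy in hardware or firmware and often pair it with prefetching; the point here is only that every hit is a transfer from slow memory that never happens.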

Optimizing Model Performance

To truly leverage the accelerator's capabilities, models may need to be fine-tuned. Techniques such as quantization and pruning can reduce model size with little loss of accuracy, making them more suitable for this hardware, as noted in TechRadar.
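For intuition, here is a framework-free sketch of both techniques: symmetric per-tensor int8 quantization (store each weight as an integer in [-127, 127] plus one shared scale) and magnitude pruning (zero out the smallest weights). These are textbook variants chosen for illustration; the source does not say which methods suit this accelerator, and production toolkits for TensorFlow and PyTorch use more refined schemes such as per-channel scales and calibration.

```python
# Minimal, framework-free illustrations of two model-compression techniques.


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: w ~= q * scale, with q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map int8 values back to approximate floating-point weights."""
    return [v * scale for v in q]


def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    # Indices of the k weights with the smallest absolute value.
    drop = set(sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


if __name__ == "__main__":
    w = [0.5, -1.27, 0.01, 0.3]
    q, scale = quantize_int8(w)
    print("int8 values:", q, "scale:", scale)
    print("reconstructed:", dequantize(q, scale))
    print("50% pruned:", magnitude_prune(w, 0.5))
```

Quantizing from 16-bit to 8-bit halves the bytes each weight occupies, while pruning only saves memory when the runtime stores or skips the zeros efficiently.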

The accelerator operates at 240W, significantly lower than typical GPUs, and supports 700 billion parameters, showcasing its efficiency and advanced memory management. The typical-GPU figure is an estimate.

Future Trends

The Rise of Low-Power AI

As the demand for energy-efficient AI solutions grows, more organizations will look to low-power accelerators. This trend is likely to drive further innovation in chip design and AI model optimization, as suggested by TechRadar.

Democratizing AI

This technology could play a key role in democratizing AI, making advanced computing accessible to smaller players. By reducing the cost and power barriers, AI can become more prevalent across various industries, as highlighted in TechRadar.

Recommendations

For Developers

- Leverage Existing Frameworks: Use TensorFlow or PyTorch for easy integration.

- Optimize Models: Consider quantization and pruning for better performance on this hardware.

For Enterprises

- Evaluate Cost Savings: Compare the total cost of ownership with traditional GPU setups.

- Pilot Programs: Start with small-scale deployments to assess performance and scalability.
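A total-cost-of-ownership comparison can start as simple arithmetic: hardware cost, plus energy over the deployment's lifetime (optionally scaled by the facility's power usage effectiveness, PUE), plus other recurring costs. The sketch below encodes that formula. Apart from the reported 240W draw, every price and wattage in the example run is a hypothetical placeholder, since the source gives no pricing.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class Deployment:
    name: str
    hardware_cost: float        # up-front purchase price
    watts: float                # average power draw of the hardware
    annual_other: float = 0.0   # support, hosting, licences, etc. per year

    def tco(self, years: float, price_per_kwh: float, pue: float = 1.0) -> float:
        """Hardware + electricity (scaled by facility PUE) + other recurring costs."""
        kwh = self.watts / 1000 * HOURS_PER_YEAR * years * pue
        return self.hardware_cost + kwh * price_per_kwh + self.annual_other * years


if __name__ == "__main__":
    # All costs below are hypothetical placeholders, not vendor pricing.
    options = [
        Deployment("accelerator", hardware_cost=20_000, watts=240),
        Deployment("GPU server", hardware_cost=250_000, watts=5_600),
    ]
    for d in options:
        print(f"{d.name}: {d.tco(years=3, price_per_kwh=0.15, pue=1.4):,.0f} over 3 years")
```

Swapping in quoted prices and measured power draw from a pilot deployment turns this from an illustration into a usable comparison.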

Conclusion

This Taiwanese startup's innovation demonstrates that cutting-edge performance doesn't always require cutting-edge technology. By leveraging mature, reliable hardware, they have created a solution that challenges the status quo, offering a glimpse into a future where AI is more accessible and sustainable, as noted in TechRadar.

FAQ

What is the main advantage of using 28nm chips?

The main advantage is cost-effectiveness. Mature 28nm chips balance cost and capability, making them well suited to budget-conscious deployments, as discussed in TechRadar.

How does DDR4 memory contribute to the accelerator's performance?

DDR4 provides adequate bandwidth and latency, helping optimize data handling without the high costs of newer memory technologies, as highlighted in AI Multiple.

What are some potential use cases for this accelerator?

It can be used for edge AI, small enterprise deployments, and research institutions that require powerful computing with low power consumption, as noted in TechRadar.

How does the accelerator support such large models?

It employs advanced memory management techniques to minimize data movement and maximize throughput, allowing it to handle massive parameter counts efficiently, as explained in Google's blog.

Is this technology suitable for all AI applications?

While it excels in many scenarios, its suitability depends on the specific requirements and constraints of your AI workload, as discussed in TechRadar.

What future trends might we see as a result of this innovation?

Expect a rise in low-power AI solutions, further democratization of AI technology, and increased focus on energy-efficient computing, as suggested by TechRadar.

Key Takeaways

- Low-power AI: This accelerator uses just 240W to power massive models, as highlighted in TechRadar.

- Cost-effective hardware: Utilizes 28nm chips and DDR4 memory, as noted in TechRadar.

- Broad compatibility: Works with popular frameworks like TensorFlow, as discussed in TechRadar.

- Democratizing AI: Makes high-powered AI accessible to smaller enterprises, as highlighted in TechRadar.

- Future-proofing: Paves the way for sustainable AI computing, as noted in TechRadar.
