Testing AI: Unique Challenges and Best Practices for the Modern Enterprise [2025]

Artificial Intelligence (AI) is revolutionizing industries across the globe, yet testing AI systems is a completely different beast compared to traditional software testing. In this article, we'll explore why AI testing demands a unique approach, the challenges companies face, and how to effectively overcome them.

TL;DR

- AI Testing Complexity: Unlike traditional software, AI systems learn and adapt, making testing more complex. According to Simplilearn, machine learning platforms are integral in managing this complexity.

- Human Judgment Required: AI outcomes often require human interpretation to assess accuracy, as emphasized in Cornerstone OnDemand's article on the role of humans in AI oversight.

- Data Dependency: High-quality, diverse datasets are crucial for reliable AI testing. A Nature study highlights the importance of data diversity in AI model accuracy.

- Automation Challenges: While automation aids testing, human oversight remains essential, as discussed in Nature's research on AI testing methodologies.

- Future Trends: AI testing tools will evolve to better handle interpretability and bias detection, as noted in WorldNewswire.

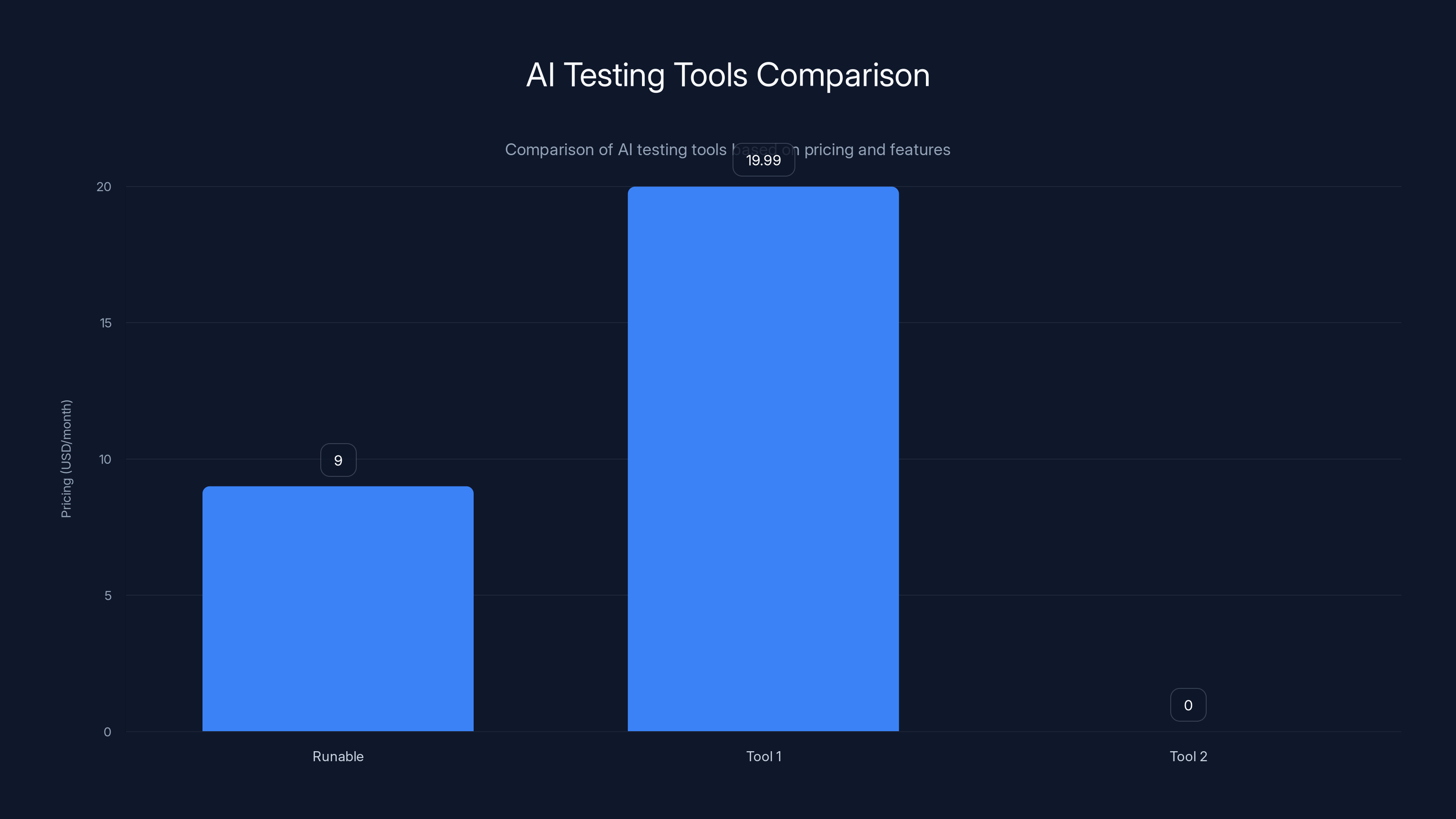

The Core Differences: AI vs. Traditional Software Testing

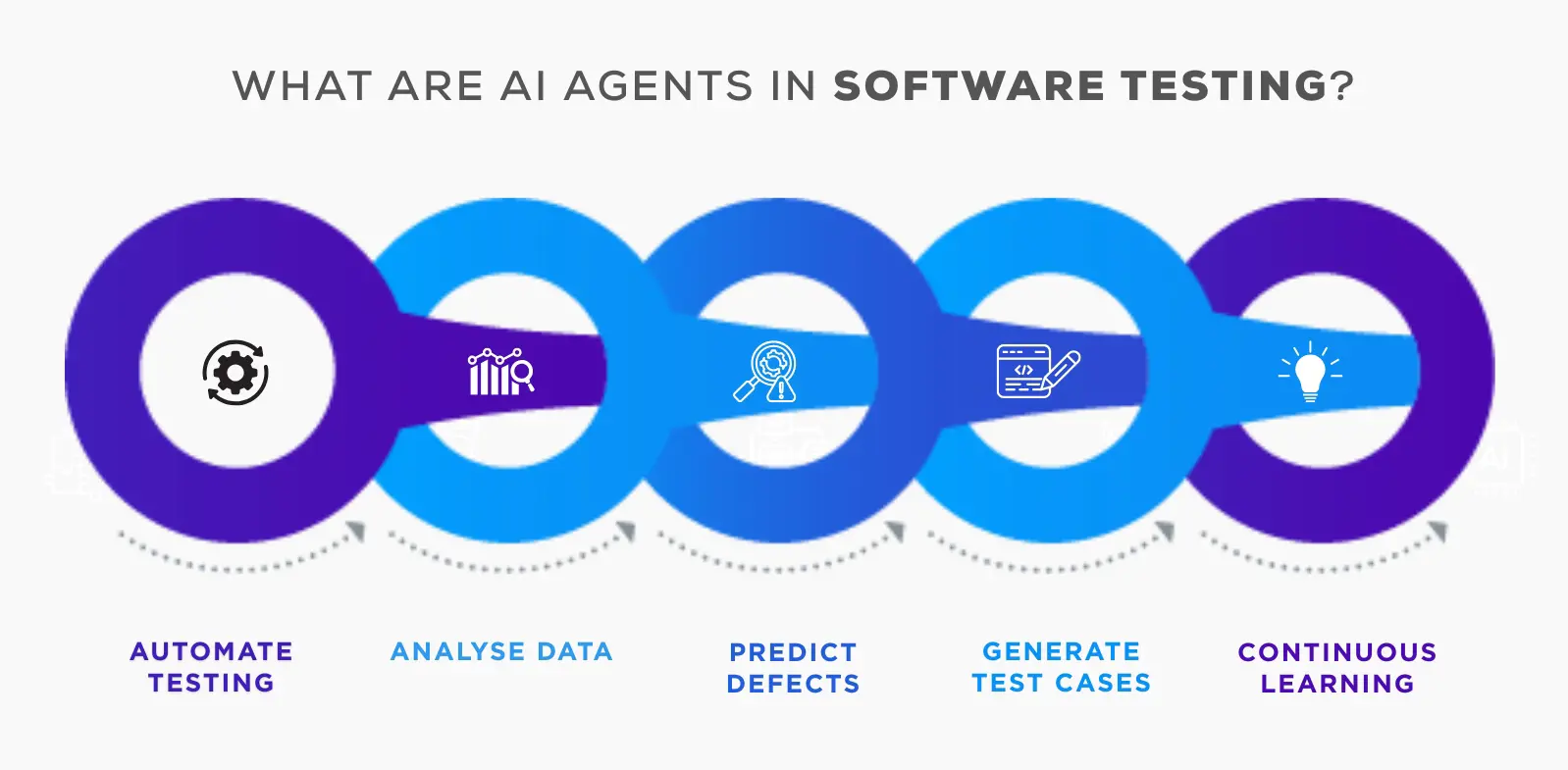

Testing AI isn't just about verifying code functionality. AI systems are dynamic, learning from data and adapting over time. This inherent complexity means traditional testing paradigms fall short. Here's why:

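- Dynamic Learning: A model's behavior can shift every time it is retrained on new data, whereas conventional code changes only when someone edits it, as explained by Halston Media.

- Probabilistic Nature: AI outputs are probabilistic rather than deterministic, so results can vary from run to run and a test can rarely assert a single exact answer, which is a key point in Britannica's AI debate.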

- Interpretability: AI decisions might lack transparency, making results harder to verify, as discussed in Pace University's insights.

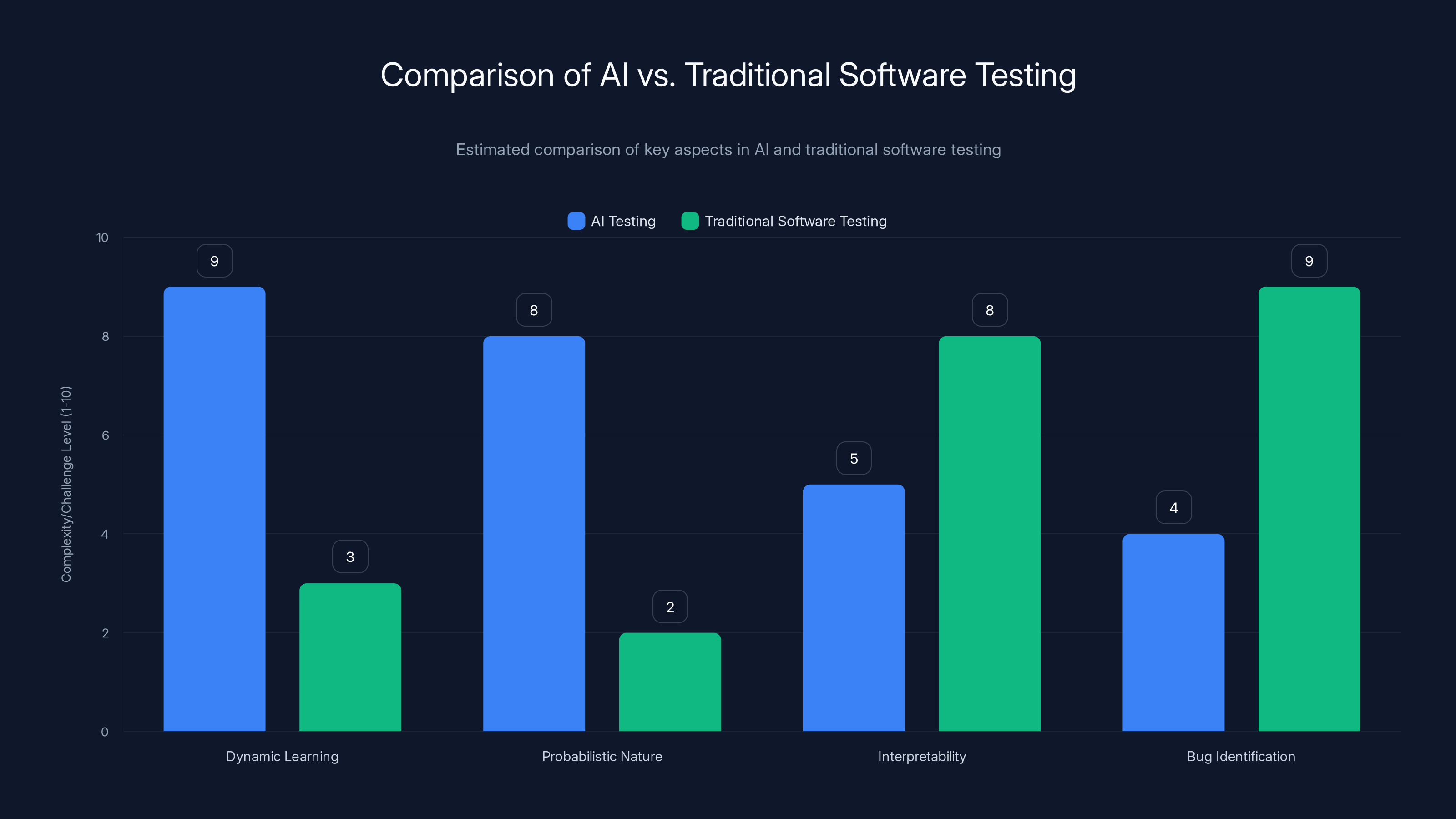

Example: Comparing AI and Software Bug Testing

In traditional software, you can pinpoint a bug in the code and fix it. With AI, "bugs" might manifest as biases in data or incorrect model predictions that aren't as straightforward to correct.
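To make the contrast concrete, here is a minimal sketch of how a test for a probabilistic system might differ from a conventional unit test. Instead of asserting one exact output, it asserts that accuracy stays above a threshold across several random seeds. The `noisy_classifier` function, the 10% error rate, and the 0.85 threshold are all hypothetical stand-ins chosen for illustration, not values from any real system.

```python
import random

def noisy_classifier(x: float, rng: random.Random) -> int:
    """Hypothetical stand-in for a model: answers correctly about 90% of the time."""
    label = 1 if x > 0.5 else 0
    # Flip the answer roughly 10% of the time to imitate model error.
    return label if rng.random() > 0.1 else 1 - label

def accuracy_over_seed(seed: int, n: int = 2000) -> float:
    """Measure accuracy on n random inputs generated from one seed."""
    rng = random.Random(seed)
    correct = 0
    for _ in range(n):
        x = rng.random()
        truth = 1 if x > 0.5 else 0
        correct += noisy_classifier(x, rng) == truth
    return correct / n

def passes_quality_gate(seeds, min_accuracy: float = 0.85) -> bool:
    """Pass only if every seeded run meets the (assumed) accuracy threshold."""
    return all(accuracy_over_seed(s) >= min_accuracy for s in seeds)

if __name__ == "__main__":
    print(passes_quality_gate(range(5)))
```

The design choice here is to test a statistical property (accuracy over many samples) rather than individual predictions, since any single prediction may legitimately be wrong.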

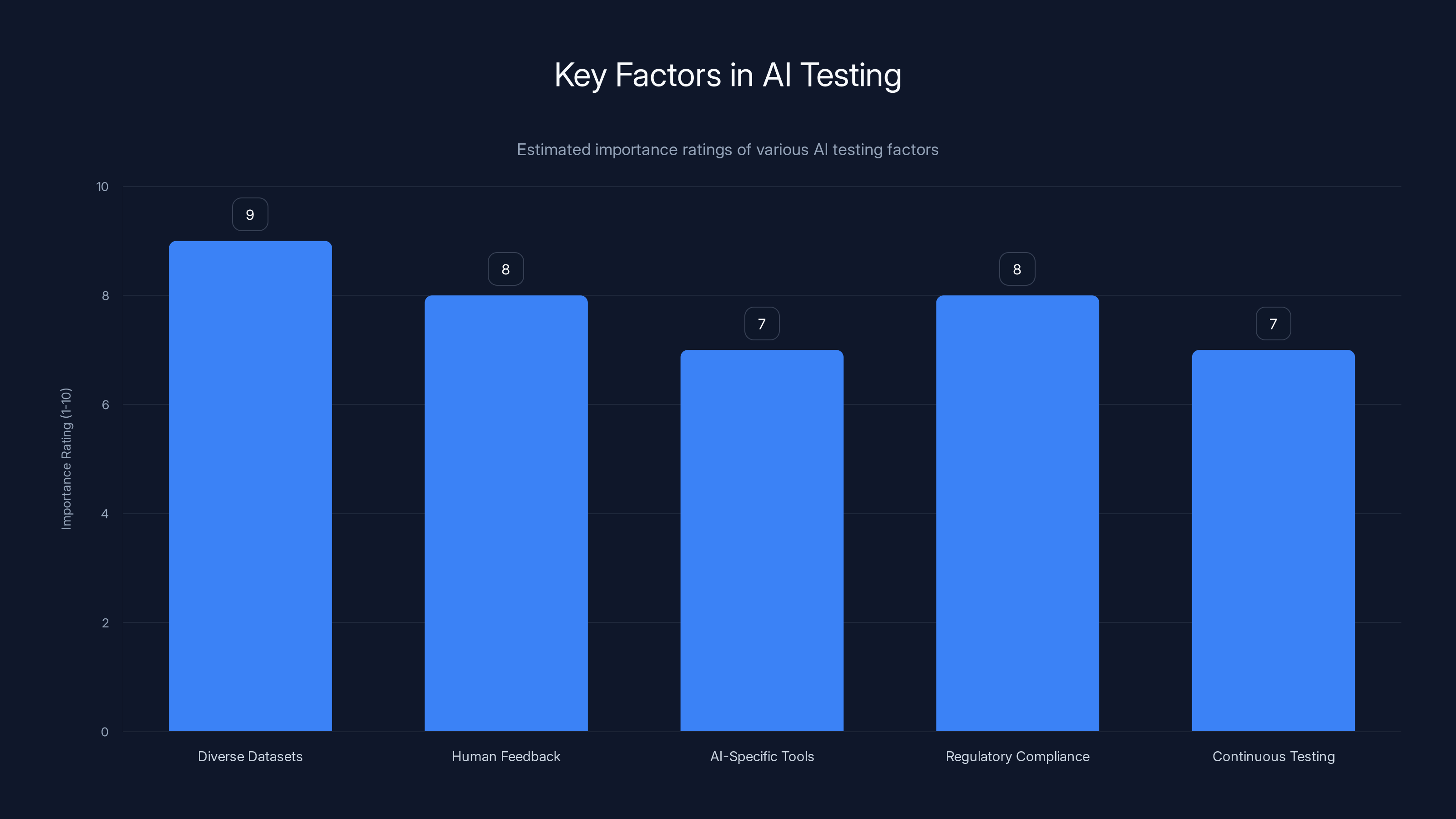

Diverse datasets and human feedback are rated as the most important factors in AI testing. Estimated data.

Why Human Judgment is Critical

AI systems can produce outputs that seem plausible but are incorrect. Human judgment is essential to:

- Validate Results: Human experts assess AI outputs for context and accuracy, as highlighted in Cornerstone OnDemand's article.

- Detect Bias: Human reviewers can spot biases in AI decisions that automated checks miss, a point emphasized by National Defense Magazine.

- Ensure Ethical AI: Ethical considerations often require human input, as discussed in The Washington Post.

Use Case: AI in Healthcare

Consider an AI model diagnosing diseases. An incorrect diagnosis could have serious consequences, highlighting the need for human oversight to validate AI outputs.
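One common way to build that oversight into a workflow is to send low-confidence predictions to a human reviewer. The sketch below assumes the model reports a confidence score with each prediction; the `Prediction` type, the `route` function, and the 0.9 threshold are hypothetical names and values used only to illustrate the idea.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    case_id: str
    label: str
    confidence: float  # model's reported probability for `label`, in [0, 1]

def route(predictions, threshold: float = 0.9):
    """Split predictions into auto-accepted ones and ones needing human review."""
    auto, review = [], []
    for p in predictions:
        (auto if p.confidence >= threshold else review).append(p)
    return auto, review

if __name__ == "__main__":
    batch = [
        Prediction("case-1", "benign", 0.97),
        Prediction("case-2", "malignant", 0.62),
    ]
    auto, review = route(batch)
    print([p.case_id for p in review])
```

In a high-stakes setting such as diagnosis, a team might also sample some high-confidence cases for review, since a confident model can still be wrong.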

The Role of Data in AI Testing

Data is the lifeblood of AI systems. High-quality, diverse datasets are crucial for:

- Model Training: Ensures the AI learns accurately, as noted in Syracuse University's data science guide.

- Testing: Real-world data scenarios improve testing robustness, as discussed in Oracle's blog.
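A simple first check on dataset diversity is to count how many examples fall into each group and flag the groups that are thinly covered. The following is a rough sketch; the `underrepresented_groups` helper, the `age_band` field, and the 10% minimum share are assumptions made for illustration.

```python
from collections import Counter

def underrepresented_groups(records, key: str, min_share: float = 0.1):
    """Return groups whose share of the dataset falls below min_share."""
    counts = Counter(r[key] for r in records)
    total = sum(counts.values())
    return sorted(g for g, c in counts.items() if c / total < min_share)

if __name__ == "__main__":
    # Hypothetical test set: 18 adults, 1 senior, 1 child.
    records = (
        [{"age_band": "adult"}] * 18
        + [{"age_band": "senior"}]
        + [{"age_band": "child"}]
    )
    print(underrepresented_groups(records, "age_band"))
```

A check like this only measures how examples are distributed across one attribute; it says nothing about label quality or about attributes that were never recorded.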

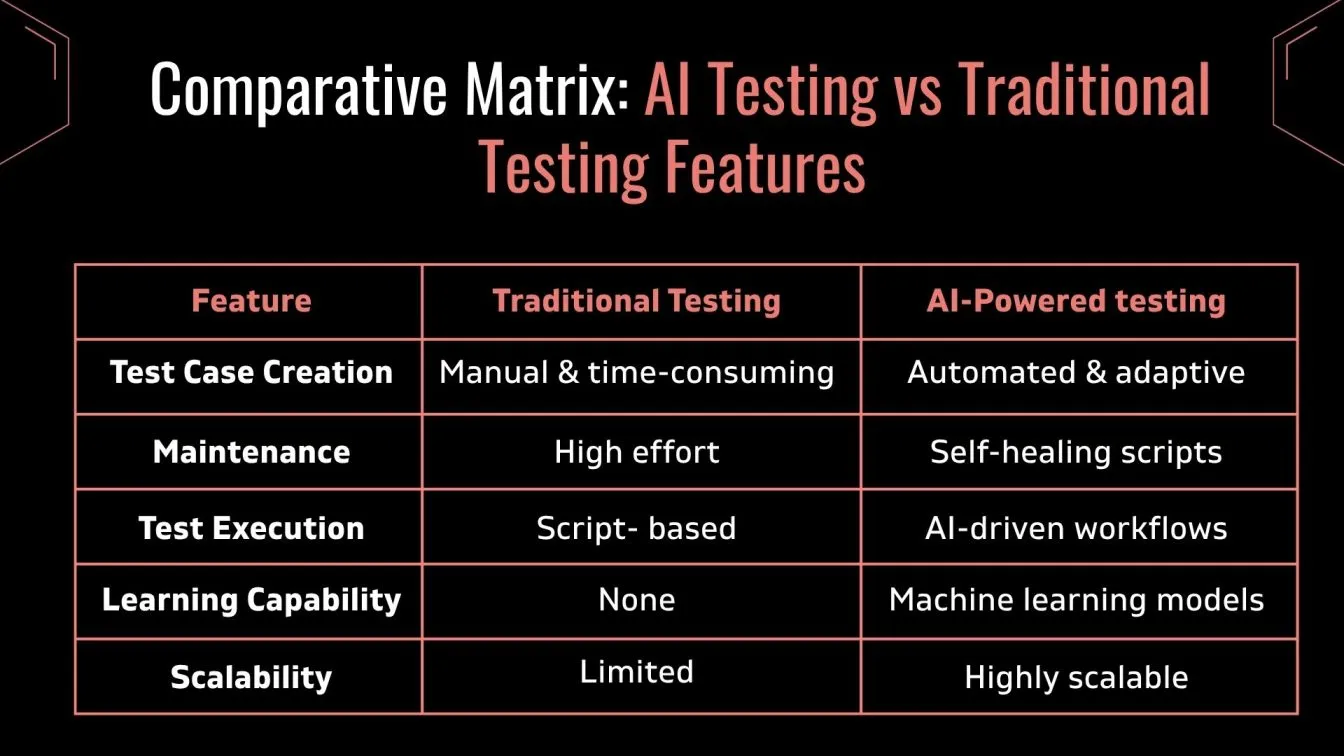

AI testing presents higher complexity in dynamic learning and probabilistic nature compared to traditional software testing, which excels in interpretability and bug identification. Estimated data.

Common Pitfalls in AI Testing

Several pitfalls can hinder effective AI testing:

- Overfitting: Models might perform well on training data but fail in real-world scenarios, as noted by National Defense Magazine.

- Bias: Inherent biases in data can lead to skewed AI results, a concern highlighted in Britannica's AI debate.

- Lack of Transparency: AI decisions can be opaque, complicating validation, as discussed in Pace University's insights.
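Overfitting, the first pitfall above, can often be surfaced by comparing accuracy on training data with accuracy on held-out data. The sketch below uses a deliberately bad toy "model" that memorizes its training examples; the function names and the 0.1 gap threshold are hypothetical.

```python
def accuracy(predict, examples) -> float:
    """Fraction of (input, label) pairs the predictor gets right."""
    return sum(predict(x) == y for x, y in examples) / len(examples)

def overfit_report(predict, train, test, max_gap: float = 0.1) -> dict:
    """Compare train and test accuracy; flag a gap larger than max_gap."""
    train_acc = accuracy(predict, train)
    test_acc = accuracy(predict, test)
    gap = train_acc - test_acc
    return {"train": train_acc, "test": test_acc, "gap": gap, "overfit": gap > max_gap}

# Toy data: label each integer as odd or even.
train = [(1, "odd"), (2, "even"), (3, "odd"), (4, "even")]
test = [(5, "odd"), (6, "even"), (7, "odd"), (8, "even")]

# A memorizer: perfect on inputs it has seen, guesses "even" otherwise.
memory = dict(train)

def memorizer(x):
    return memory.get(x, "even")

report = overfit_report(memorizer, train, test)

if __name__ == "__main__":
    print(report)
```

The memorizer scores perfectly on the training set but only guesses on unseen inputs, which is the pattern the gap check is meant to catch.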

Solutions:

- Diverse Data: Use diverse datasets to minimize bias and improve generalization, as recommended by Simplilearn.

- Model Explainability: Implement tools that enhance AI interpretability, as discussed in Oracle's blog.
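One way to check whether bias is showing up in results is to break accuracy down by group and look at the spread. This is a minimal sketch under the assumption that each evaluation row carries a group label; `accuracy_by_group` and `max_disparity` are hypothetical helper names, and real fairness audits typically use more than one metric.

```python
from collections import defaultdict

def accuracy_by_group(rows) -> dict:
    """rows: iterable of (group, prediction, truth) tuples."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for group, pred, truth in rows:
        totals[group] += 1
        hits[group] += pred == truth
    return {g: hits[g] / totals[g] for g in totals}

def max_disparity(per_group: dict) -> float:
    """Gap between the best-served and worst-served group."""
    return max(per_group.values()) - min(per_group.values())

if __name__ == "__main__":
    rows = [
        ("A", 1, 1), ("A", 0, 0), ("A", 1, 1), ("A", 0, 0),
        ("B", 1, 0), ("B", 0, 0), ("B", 1, 1), ("B", 0, 1),
    ]
    per_group = accuracy_by_group(rows)
    print(per_group, max_disparity(per_group))
```

A large disparity does not explain why the model behaves differently across groups, but it tells reviewers where to look first.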

Best Practices for Effective AI Testing

To test AI effectively, companies should:

- Incorporate Human Feedback: Regularly involve domain experts to validate AI outputs, as emphasized in Cornerstone OnDemand's article.

- Use Automated Tools: Leverage AI-specific testing tools for efficiency but maintain human oversight, as noted in WorldNewswire.

- Focus on Ethical AI: Implement ethical guidelines to ensure responsible AI use, as discussed in The Washington Post.

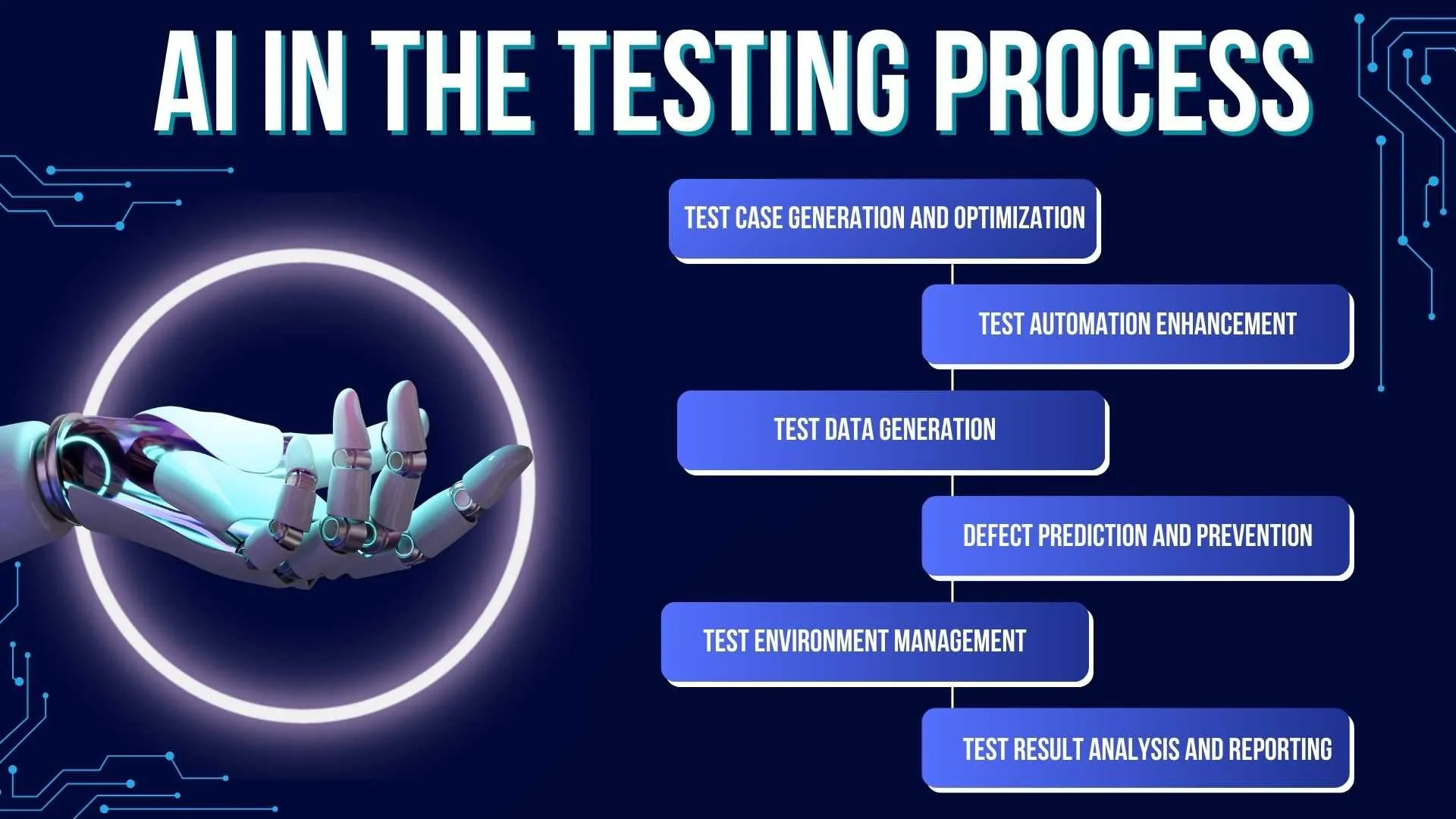

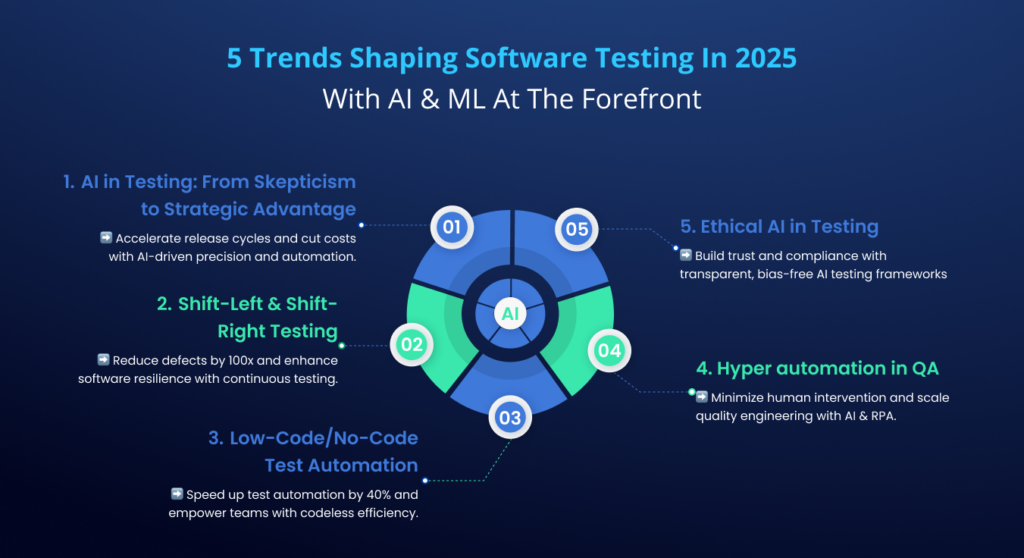

Future Trends in AI Testing

As AI technology evolves, so too will testing strategies:

- Advanced Testing Tools: Expect more sophisticated tools for bias detection and model interpretability, as noted in WorldNewswire.

- Regulatory Compliance: Anticipate stricter regulations around AI testing and deployment, as discussed in The Washington Post.

- Continuous Testing: AI systems will require ongoing testing to adapt to new data and scenarios, as highlighted in National Defense Magazine.
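Continuous testing usually includes some check that live inputs still resemble the data the model was validated on. The sketch below is a deliberately crude heuristic, not an established drift test: it measures how far the mean of a live feature has moved from a baseline, in units of the baseline's standard deviation. The function names and the 0.5 threshold are assumptions for illustration.

```python
import statistics

def mean_shift_in_std_units(baseline, live) -> float:
    """Absolute shift of the live mean, scaled by the baseline's sample std dev."""
    mu = statistics.fmean(baseline)
    sigma = statistics.stdev(baseline)
    return abs(statistics.fmean(live) - mu) / sigma

def drifted(baseline, live, max_shift: float = 0.5) -> bool:
    """Flag drift when the scaled mean shift exceeds the (assumed) threshold."""
    return mean_shift_in_std_units(baseline, live) > max_shift

if __name__ == "__main__":
    baseline = [10, 11, 9, 10, 12, 8, 10, 11, 9, 10]
    print(drifted(baseline, [10, 10, 11, 9]))   # similar data
    print(drifted(baseline, [14, 15, 13, 14]))  # shifted data
```

A check like this could run on a schedule and trigger a fresh round of evaluation, including human review, whenever it fires.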

Conclusion

Testing AI demands a distinct approach that balances automation with human judgment. Enterprises must adapt their strategies to ensure AI systems are reliable, ethical, and effective. By understanding the unique challenges and implementing best practices, companies can harness AI's full potential without compromising quality or ethics.

FAQ

What is AI testing?

AI testing involves evaluating AI systems to ensure they perform as expected, are free of bias, and operate ethically, as discussed in Britannica's AI debate.

Why is AI testing different from software testing?

AI testing differs because AI systems learn and adapt, producing probabilistic outputs that require human interpretation and judgment, as noted by Halston Media.

How can companies improve AI testing?

Companies can improve AI testing by using diverse datasets, incorporating human feedback, and leveraging AI-specific testing tools, as recommended by Simplilearn.

What are the risks of inadequate AI testing?

Inadequate AI testing can lead to biased results, ethical breaches, and unreliable AI systems, as discussed in The Washington Post.

What trends are shaping the future of AI testing?

Trends include advanced bias detection tools, increased regulatory compliance, and continuous testing methodologies, as noted in WorldNewswire.

How important is data in AI testing?

Data is crucial in AI testing as it directly influences model training and performance, as highlighted by Syracuse University.

What role do humans play in AI testing?

Humans are essential in validating AI outputs, detecting biases, and ensuring ethical AI use, as emphasized in Cornerstone OnDemand's article.

What are the common pitfalls in AI testing?

Common pitfalls include overfitting, bias, and lack of transparency in AI decisions, as discussed in National Defense Magazine.

The Best AI Testing Tools at a Glance

| Tool | Best For | Standout Feature | Pricing |
|---|---|---|---|
| Runable | AI automation | AI agents for presentations, docs, reports, images, videos | $9/month |
| Tool 1 | AI orchestration | Integrates with 8,000+ apps | Free plan available; paid from $19.99/month |
| Tool 2 | Data quality | Automated data profiling | By request |

Key Takeaways:

- AI testing requires a unique approach distinct from traditional software testing.

- Human judgment is critical in assessing AI outputs and ensuring ethical use.

- High-quality, diverse datasets are essential for reliable AI testing.

- Future trends include advanced testing tools and increased regulatory compliance.

- Companies can improve AI testing by incorporating human feedback and ethical guidelines.

- Effective AI testing balances automation with human oversight.

- Continuous testing will become increasingly important as AI systems evolve.

- Understanding AI testing challenges helps companies harness AI's potential responsibly.
