Introduction

Last month, a legal battle began brewing in the tech community. At its center is Grammarly, a widely used AI-powered writing assistant. The controversy concerns its 'Expert Review' feature, which allegedly presented editing suggestions as coming from renowned authors and academics without their consent. According to Wired, the lawsuit is more than a legal scuffle: it is a significant marker in the ongoing debate about AI ethics, privacy, and intellectual property. This article looks at how such features might be built, the ethical questions they raise, and what the case could mean for the future of AI technologies.

TL;DR

- Grammarly's 'Expert Review' feature: Faces a lawsuit for using names of real experts without consent, as detailed in Wired's report.

- Legal implications: Highlights issues of consent and intellectual property in AI.

- AI ethics: Raises questions about transparency and user trust.

- Future impacts: Could influence AI development and regulation.

- Bottom Line: A pivotal case for AI ethics and legal standards.

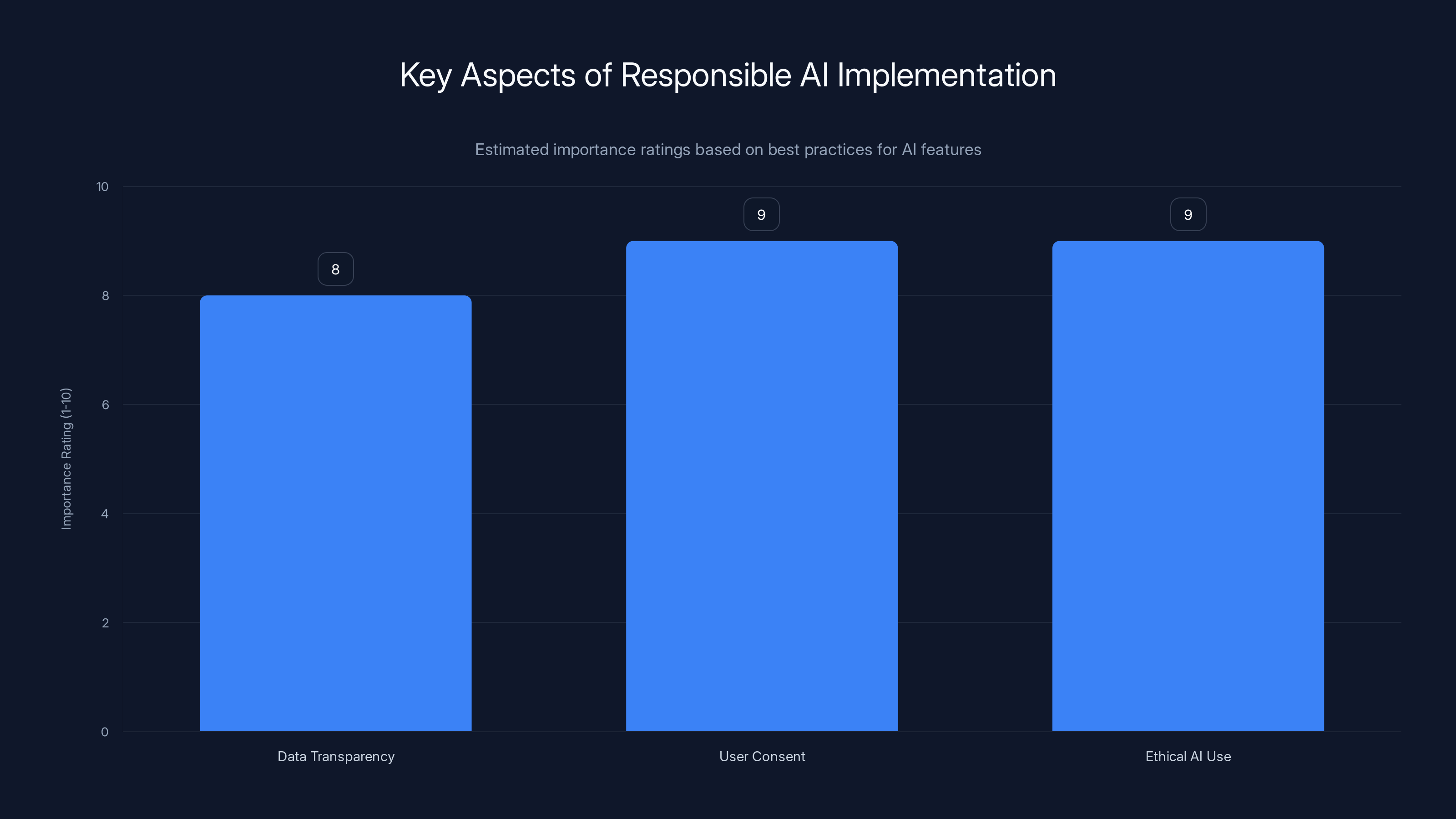

User Consent and Ethical AI Use are rated highest in importance for responsible AI implementation. Estimated data.

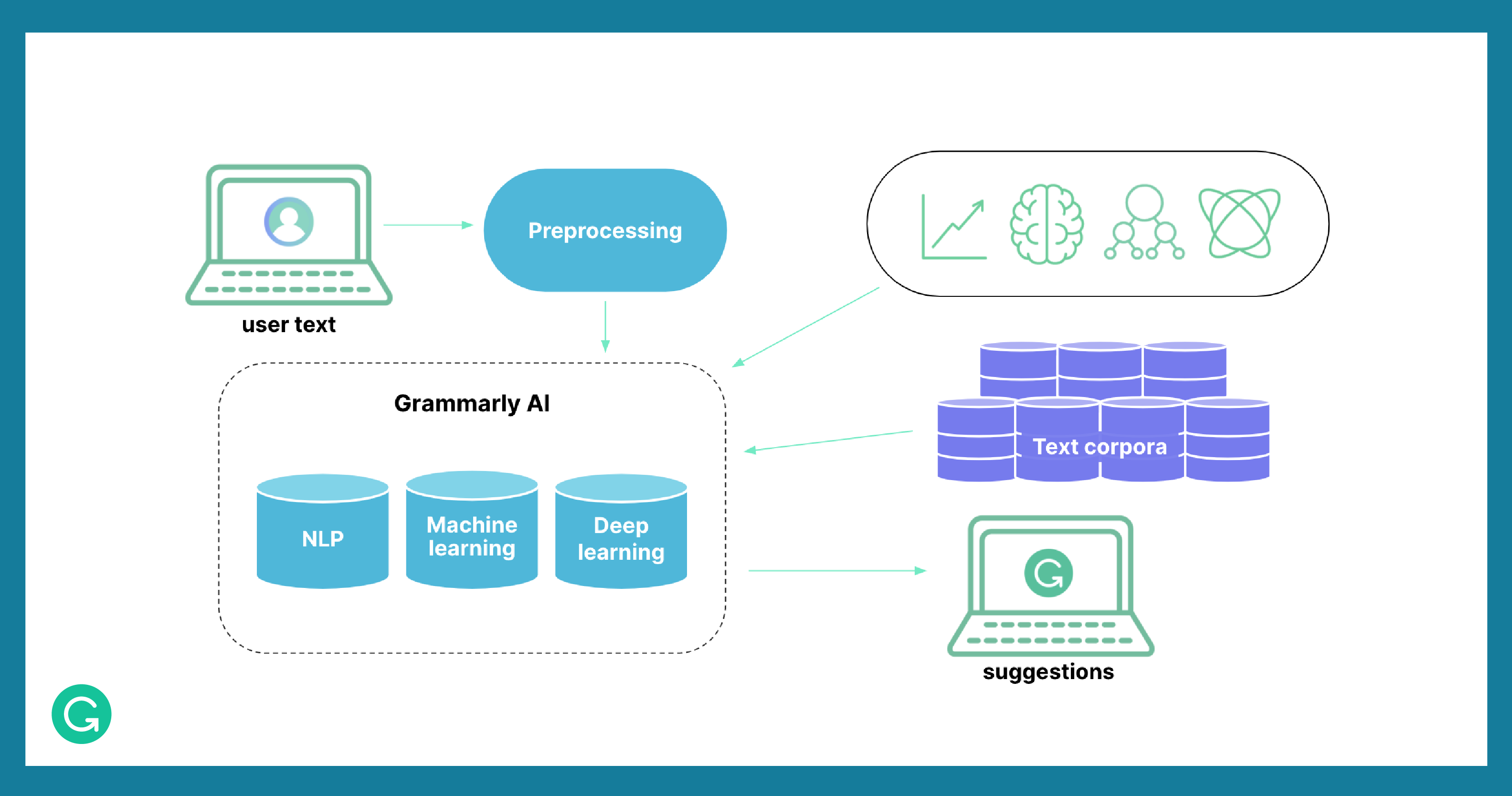

The Anatomy of Grammarly's AI Tools

Before diving into the lawsuit, it helps to understand how Grammarly's AI technology works. Grammarly uses a combination of natural language processing (NLP) and machine learning to provide writing suggestions. Its core engine analyzes text for grammar, punctuation, style, and tone, offering real-time corrections.

How 'Expert Review' Works

The 'Expert Review' feature attempts to go further by simulating feedback from renowned experts. In principle, a feature like this would be built by training AI models on large datasets that include the writings of various authors. The AI then produces suggestions that mimic the style or preferences of these experts, ostensibly to give the user expert-level feedback. CryptoRank provides an analysis of how the feature has been received.

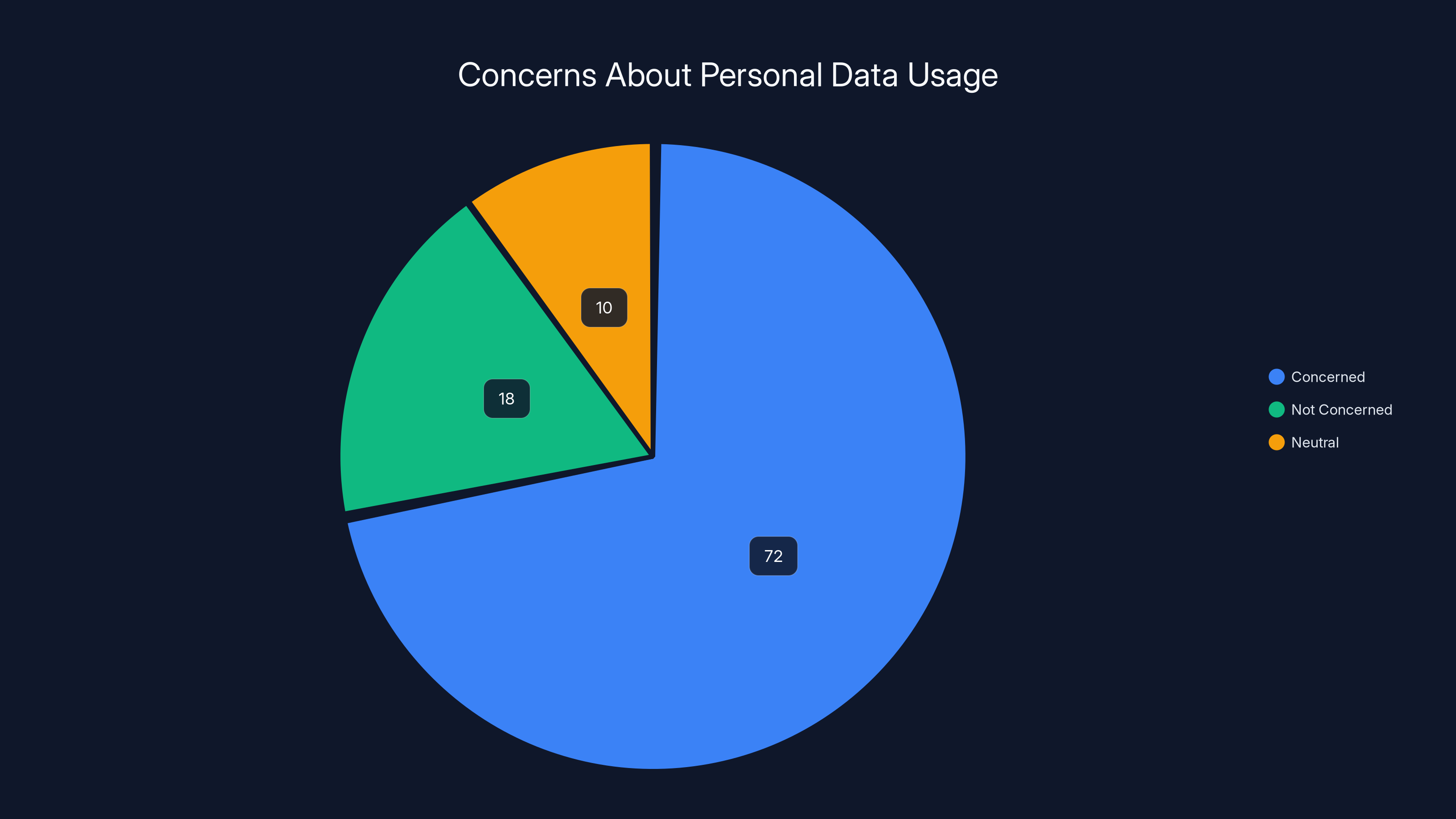

A significant 72% of Americans express concern over how companies handle their personal data, highlighting the importance of transparency and ethical practices in AI development.

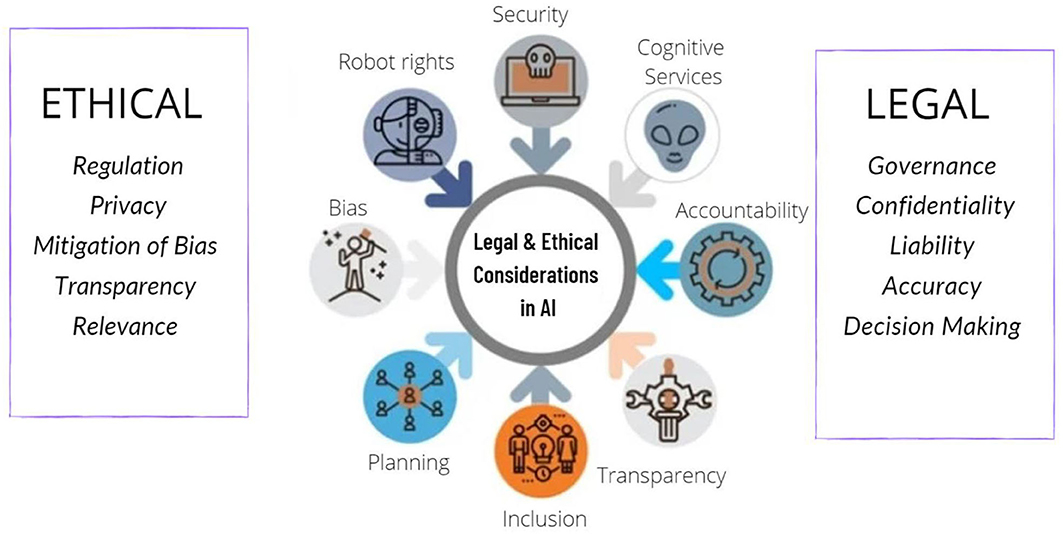

Legal and Ethical Implications

The lawsuit against Grammarly highlights several critical issues:

- Consent and Authorization: Using the likeness or name of a real person in AI outputs without their permission can violate privacy and intellectual property rights, as discussed in TechBuzz.

- Misrepresentation: Presenting AI-generated content as if it were crafted by real experts can mislead users regarding the authenticity of the feedback.

Consent in AI Features

Consent in AI is a complex issue. It involves ensuring that any personal data or likeness used by the AI has been legally acquired and is used with explicit permission. For Grammarly, this would mean securing agreements with individuals whose styles or names are used.
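One way to make such agreements enforceable in software is to check a consent record before any name is attached to a suggestion. The following is a minimal sketch under stated assumptions, not a description of Grammarly's system: `ConsentRecord`, `ConsentRegistry`, and `attribute_suggestion` are hypothetical names introduced here for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ConsentRecord:
    """Hypothetical record of a signed agreement with a named person."""
    person: str
    allowed_uses: frozenset  # e.g. {"name", "style"}
    expires: date


class ConsentRegistry:
    """In-memory lookup; a real system would need durable, audited storage."""

    def __init__(self, records):
        self._by_person = {r.person: r for r in records}

    def permits(self, person: str, use: str, today: date) -> bool:
        record = self._by_person.get(person)
        if record is None:
            return False  # no agreement on file means no permission
        return use in record.allowed_uses and today <= record.expires


def attribute_suggestion(text: str, person: str,
                         registry: ConsentRegistry, today: date) -> str:
    """Attach a name only when the registry confirms consent for that use."""
    if registry.permits(person, "name", today):
        return f"{text} (in the style of {person}, used with permission)"
    return f"{text} (AI-generated suggestion)"
```

The design choice worth noting is the default: when no record exists, or the agreement has expired, the function falls back to an unattributed, clearly labeled suggestion rather than failing open.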

Legal Framework

The legal landscape around AI and privacy is continually evolving. Current regulations like the General Data Protection Regulation (GDPR) in Europe provide a framework for consent but may not fully cover the nuances of AI-generated content.

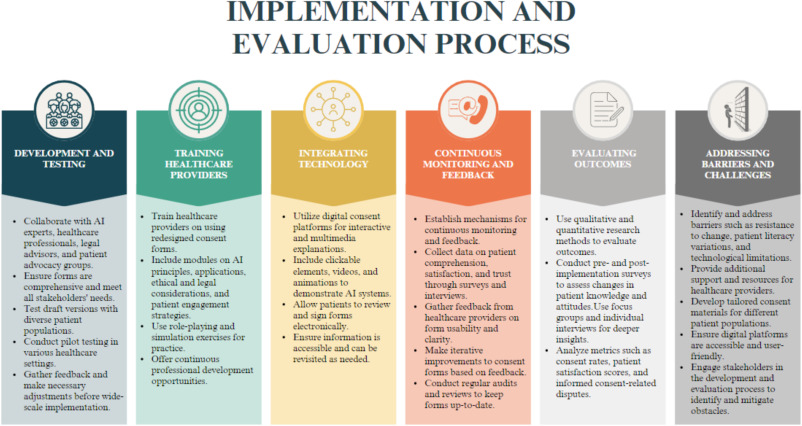

Practical Implementation Guides

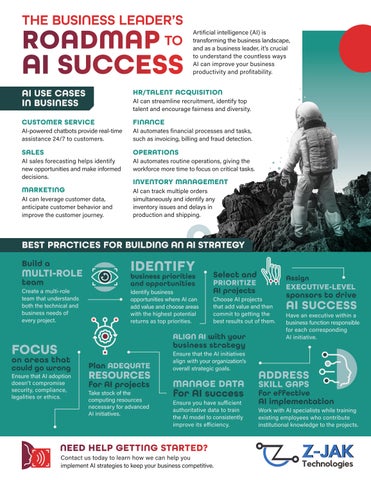

For developers and companies aiming to implement AI features responsibly, several best practices should be considered:

- Data Transparency: Clearly communicate how data is used. This builds trust and complies with regulations like GDPR.

- User Consent: Obtain explicit consent from users before using their data or likeness in AI models.

- Ethical AI Use: Develop AI systems that respect user privacy and intellectual property.

Consent Mechanisms

Implement robust consent mechanisms that allow users to understand and control how their data is used. This could include detailed privacy settings and opt-out options for features like 'Expert Review'.
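As a rough illustration of user-controlled settings, the sketch below keeps a per-user set of enabled features and refuses to run a feature the user has not enabled. The class `PrivacySettings`, the function `run_feature`, and the feature key `"expert_review"` are assumptions made for this example, not part of any real product API.

```python
from dataclasses import dataclass, field


@dataclass
class PrivacySettings:
    """Hypothetical per-user settings; every feature is off until enabled."""
    enabled_features: set = field(default_factory=set)

    def opt_in(self, feature: str) -> None:
        self.enabled_features.add(feature)

    def opt_out(self, feature: str) -> None:
        self.enabled_features.discard(feature)

    def allows(self, feature: str) -> bool:
        return feature in self.enabled_features


def run_feature(settings: PrivacySettings, feature: str, text: str) -> str:
    """Process the text only if the user has opted in to this feature."""
    if not settings.allows(feature):
        return "skipped: user has not opted in"
    return f"processed {len(text)} characters with {feature}"


settings = PrivacySettings()
print(run_feature(settings, "expert_review", "hello"))  # skipped
settings.opt_in("expert_review")
print(run_feature(settings, "expert_review", "hello"))  # processed
```

This sketch defaults to opt-in, which is stricter than an opt-out model; either way, the key property is that the check happens before any user text is processed.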

Consent and authorization are the most critical legal and ethical concerns in AI, followed by misrepresentation. Estimated data based on typical issues.

Common Pitfalls and Solutions

Misleading AI Outputs

One of the primary concerns with AI features like 'Expert Review' is the potential for misleading outputs. Users may believe they are receiving personalized advice from experts when, in reality, it's AI-generated.

Solutions

- Transparency: Clearly label AI-generated content. Indicate which suggestions are AI-based and separate them from any human-reviewed content.

- User Education: Educate users about AI capabilities and limitations. This can be done through onboarding tutorials and in-product guides.
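The labeling idea can be sketched in a few lines: every suggestion carries an explicit provenance field, and the rendering step always shows it. The names `Source`, `Suggestion`, `render`, and `group_by_source` are hypothetical and chosen only for this illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Source(Enum):
    AI = "AI-generated"
    HUMAN = "Human-reviewed"


@dataclass(frozen=True)
class Suggestion:
    text: str
    source: Source


def render(suggestion: Suggestion) -> str:
    """Prefix every suggestion with its provenance so it is never ambiguous."""
    return f"[{suggestion.source.value}] {suggestion.text}"


def group_by_source(suggestions):
    """Keep AI-based and human-reviewed suggestions in separate lists."""
    groups = {source: [] for source in Source}
    for s in suggestions:
        groups[s.source].append(s)
    return groups


items = [
    Suggestion("Use active voice.", Source.AI),
    Suggestion("Cite the source.", Source.HUMAN),
]
for item in items:
    print(render(item))
```

Making provenance a required field, rather than an optional annotation, means a suggestion cannot be created without declaring where it came from.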

Data Privacy Concerns

Data privacy is a significant concern, especially when AI systems process large amounts of personal data.

Solutions

- Anonymization: Use data anonymization techniques to protect user identities.

- Secure Storage: Implement robust security measures to protect data from breaches and unauthorized access.
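As one concrete example of protecting identities, the sketch below replaces a user ID with a keyed hash (HMAC-SHA256) and drops direct identifiers before a record is stored. Strictly speaking this is pseudonymization rather than full anonymization, since anyone holding the key can recompute the mapping. The field names (`user_id`, `name`, `email`, `text`) and function names are assumptions for illustration.

```python
import hashlib
import hmac
import secrets


def pseudonymize(user_id: str, key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (hex string)."""
    return hmac.new(key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def strip_identity(record: dict, key: bytes) -> dict:
    """Return a copy with the user ID pseudonymized and direct identifiers dropped."""
    direct_identifiers = {"name", "email"}  # assumed field names
    cleaned = {k: v for k, v in record.items() if k not in direct_identifiers}
    cleaned["user_id"] = pseudonymize(record["user_id"], key)
    return cleaned


key = secrets.token_bytes(32)  # in practice, load from a secrets manager
record = {
    "user_id": "u-123",
    "name": "Jane Doe",
    "email": "jane@example.com",
    "text": "Draft paragraph.",
}
print(strip_identity(record, key))
```

Note that the free-text field is passed through unchanged; user-written text can itself contain personal details, so a real pipeline would likely need additional redaction.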

Future Trends and Recommendations

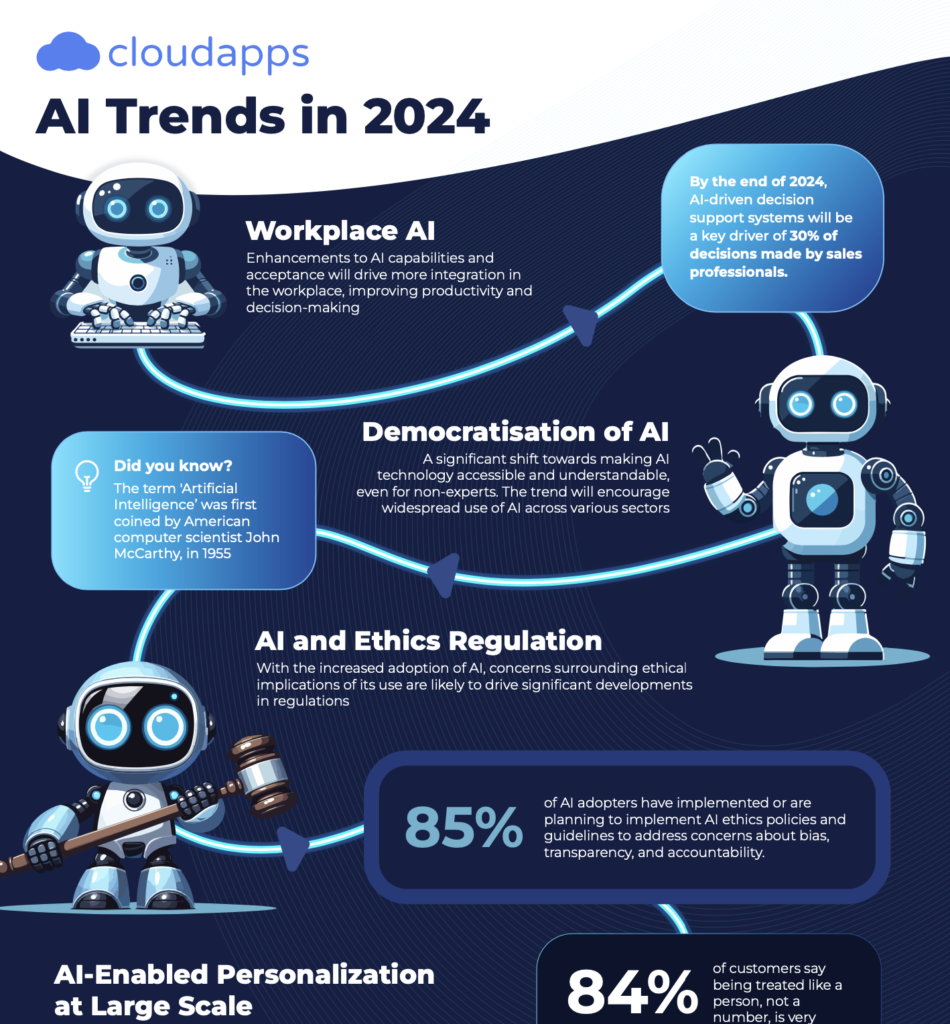

AI Ethics in Focus

As AI technologies become more integrated into daily life, ethical considerations will be paramount. This includes ensuring AI transparency, building systems that are fair and unbiased, and respecting user privacy.

Regulatory Developments

We can expect more comprehensive regulations targeting AI technologies. Developers should stay informed about legal changes and prepare to adapt their practices accordingly, as suggested by Nixon Peabody.

Best Practices for AI Development

Developers can adopt several best practices to ensure their AI systems are ethical and compliant:

- Continuous Monitoring: Regularly audit AI systems to ensure compliance with ethical standards and regulations.

- Inclusive Datasets: Use diverse datasets to train AI models, reducing bias and increasing fairness.
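A recurring audit can start very simply. The sketch below scans a batch of generated outputs for watched names that are not on an approved list, which is one narrow check relevant to the issue in this case. The function `audit_outputs` and its parameters are hypothetical, and plain substring matching is a deliberate simplification.

```python
def audit_outputs(outputs, approved_names, watched_names):
    """Return (index, name) pairs where a watched name appears without approval."""
    findings = []
    for index, text in enumerate(outputs):
        lowered = text.lower()
        for name in sorted(watched_names):
            if name.lower() in lowered and name not in approved_names:
                findings.append((index, name))
    return findings


outputs = [
    "Tighten this sentence, as Jane Doe would.",
    "Remove the comma.",
]
print(audit_outputs(outputs, approved_names=set(), watched_names={"Jane Doe"}))
```

Substring matching will miss misspellings and flag coincidental matches, so a production audit would probably pair a check like this with human review.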

Conclusion

The class action lawsuit against Grammarly is a wake-up call for companies leveraging AI technologies. It underscores the importance of ethical AI practices, transparency, and robust consent mechanisms. As we move forward, balancing innovation with user rights will be crucial in shaping the future of AI.

FAQ

What is the 'Expert Review' feature in Grammarly?

The 'Expert Review' feature in Grammarly aims to provide feedback and suggestions that mimic the style of renowned authors and academics. However, it faces a legal challenge for allegedly presenting these suggestions as coming from real experts without their consent, as reported by Wired.

How does AI pose legal challenges?

AI can pose legal challenges by infringing on privacy and intellectual property rights, especially when it uses personal data or likenesses without consent. This raises significant ethical and legal concerns.

What are the benefits of AI in writing tools?

AI in writing tools can enhance productivity by providing real-time grammar checks, style suggestions, and tone analysis. However, ethical implementation and transparency are crucial to maintain user trust.

How can companies ensure ethical AI use?

Companies can ensure ethical AI use by implementing transparent data practices, obtaining user consent, and using inclusive datasets to reduce bias. Regular audits and compliance checks are also essential.

What future trends can we expect in AI regulations?

Future AI regulations are likely to focus on data privacy, consent, and transparency. Developers should stay informed about legal changes and adapt their practices to comply with new standards.

How can users protect their data when using AI tools?

Users can protect their data by understanding the privacy settings of AI tools, opting out of unnecessary data usage, and regularly reviewing the permissions they grant to applications.

Key Takeaways

- Legal Challenges: The lawsuit against Grammarly highlights the importance of consent and transparency in AI technologies.

- Ethical AI: Developers must prioritize ethical practices to maintain user trust and comply with regulations.

- User Education: Educating users about AI capabilities and limitations can prevent misunderstandings.

- Future Regulations: Anticipate more comprehensive AI regulations focusing on privacy and consent.

- Data Privacy: Implement robust data protection measures to safeguard user information.

Closing Thoughts

As AI continues to evolve, the balance between innovation and ethical practice becomes ever more important. This case serves as a crucial reminder of the responsibilities that come with technological advancements. By adhering to ethical guidelines and legal standards, companies can ensure their innovations benefit society while respecting individual rights.


![Understanding the Legal Challenges Facing Grammarly's AI 'Expert Review' Feature [2025]](https://tryrunable.com/blog/understanding-the-legal-challenges-facing-grammarly-s-ai-exp/image-1-1773263070866.jpg)