The Wolf That Never Was: AI, Ethics, and the Legal Ramifications of Digital Deception [2025]

TL;DR

- AI-generated media poses ethical and legal challenges, especially in emergency situations.

- Case study: A man faces imprisonment for faking a wolf sighting in South Korea using AI.

- Key concerns: Impact on law enforcement, trust in media, and public safety.

- Solutions: Stricter regulations, improved AI detection tools, and public awareness.

- Future trends: Advancements in AI could both enhance and hinder media authenticity.

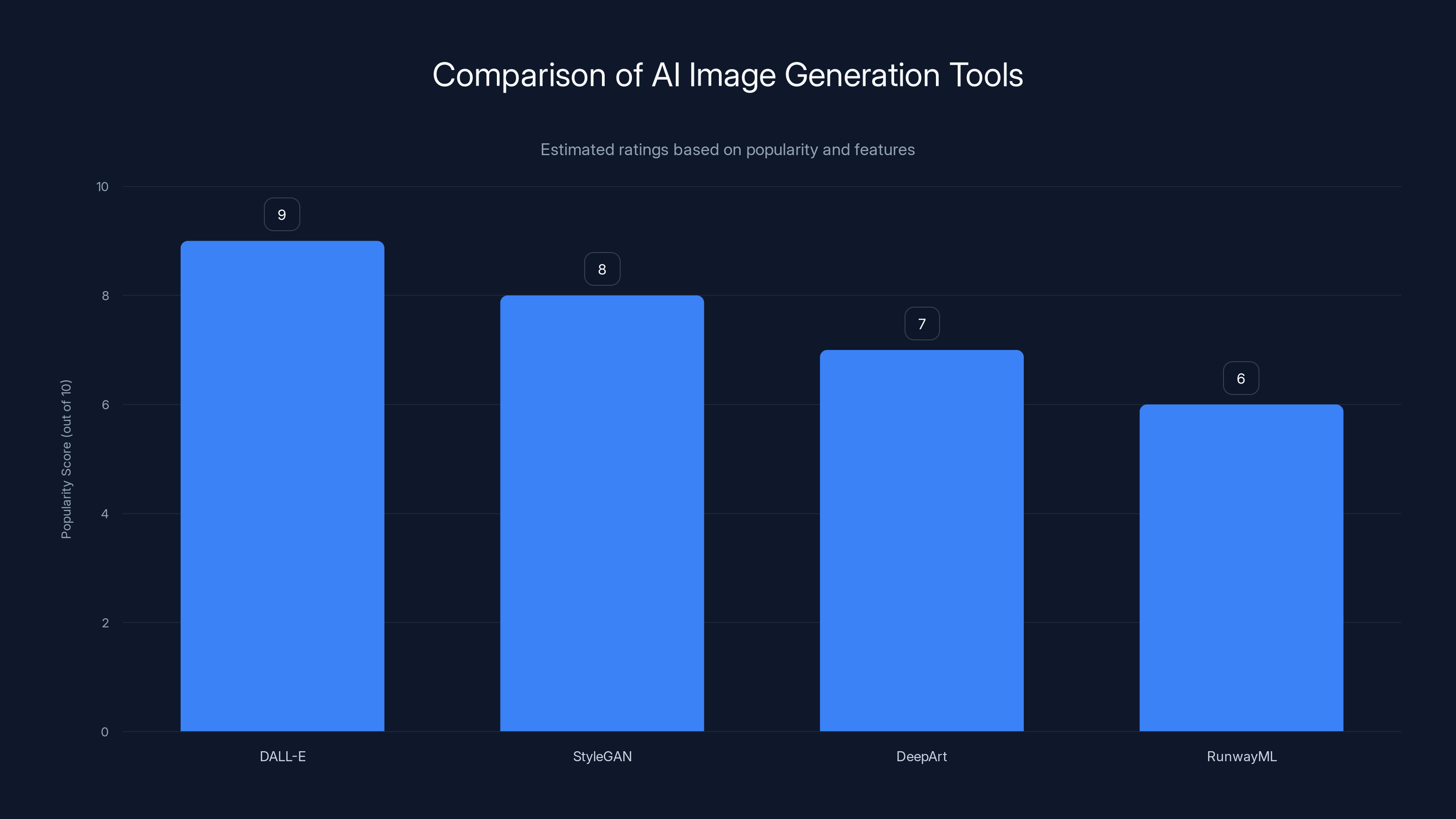

DALL-E leads in popularity due to its ability to create imaginative images from text, followed by StyleGAN, which is known for high-resolution outputs. (Estimated data)

Introduction

In a world increasingly saturated with digital content, the lines between reality and fabrication are becoming more blurred. This was starkly illustrated in a recent case from South Korea, where a man faces up to five years in prison for using artificial intelligence to create a fake sighting of a runaway wolf. This incident not only highlights the potential for AI to disrupt public life but also underscores the urgent need for ethical and legal frameworks to address such technological capabilities, as discussed in Ars Technica.

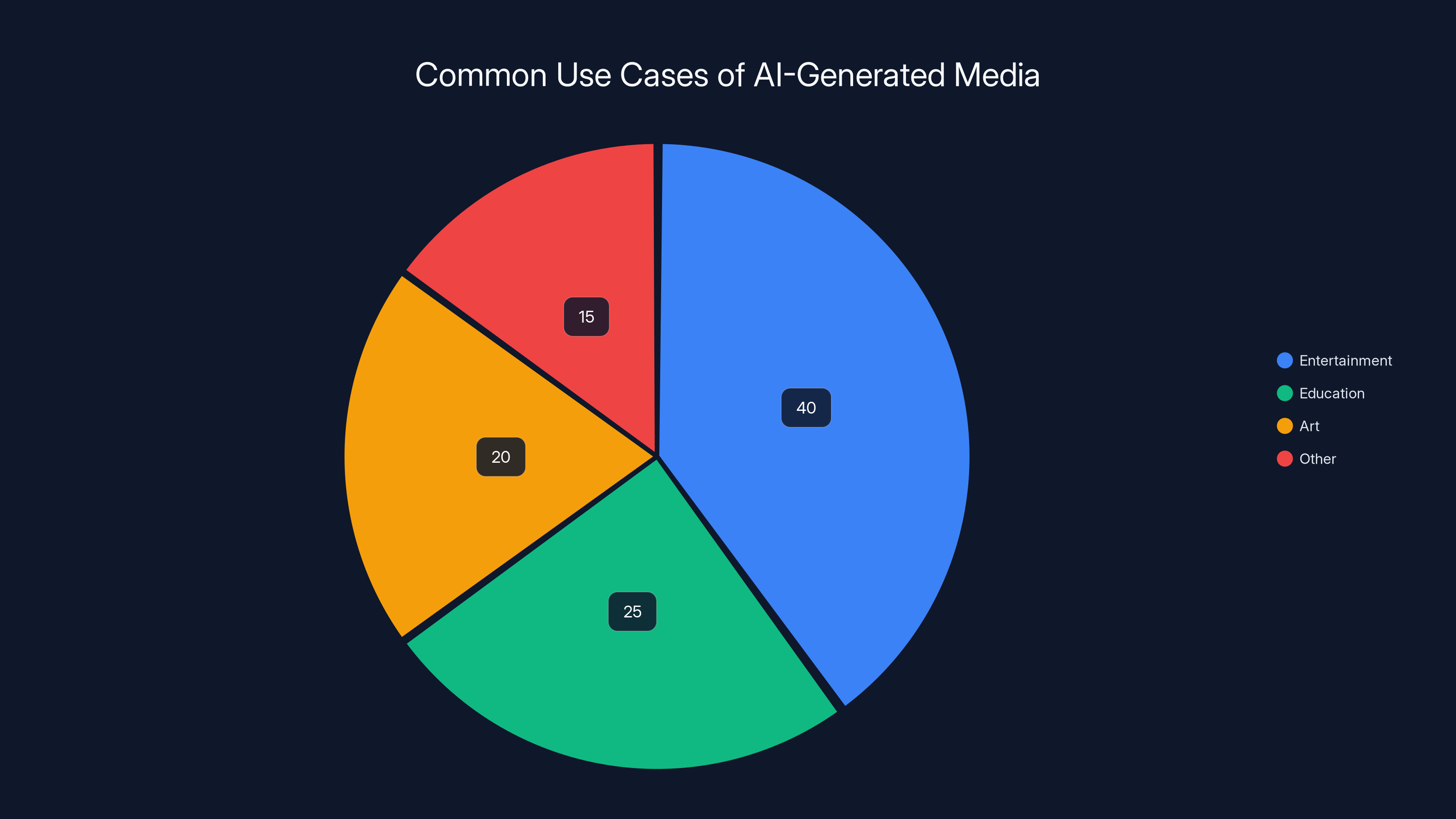

AI-generated media is predominantly used in entertainment, followed by education and art. Estimated data.

The Case of the Runaway Wolf

Background

In Daejeon City, South Korea, a two-year-old wolf named Neukgu escaped from a local zoo, triggering a nationwide effort to ensure its safe return. Neukgu, a third-generation descendant, was pivotal to efforts aimed at reviving South Korea's wolf population, which went extinct in the wild during the 1960s. The escape prompted a multi-agency response involving drones, police forces, and wildlife experts.

The Incident

Amidst this high-stakes operation, a 40-year-old man used AI technology to fabricate an image of Neukgu, claiming to have spotted the wolf in a nearby location. This act of digital deception not only misled the authorities but also potentially endangered the ongoing rescue effort. The image went viral, drawing public attention and diverting resources away from the actual search.

Legal Repercussions

The individual now faces serious legal charges, emphasizing the gravity of using technology to obstruct justice and public safety efforts. The case brings to light the implications of AI in generating realistic but false narratives, challenging the judicial system to adapt to new forms of digital crime, as highlighted in Governing.

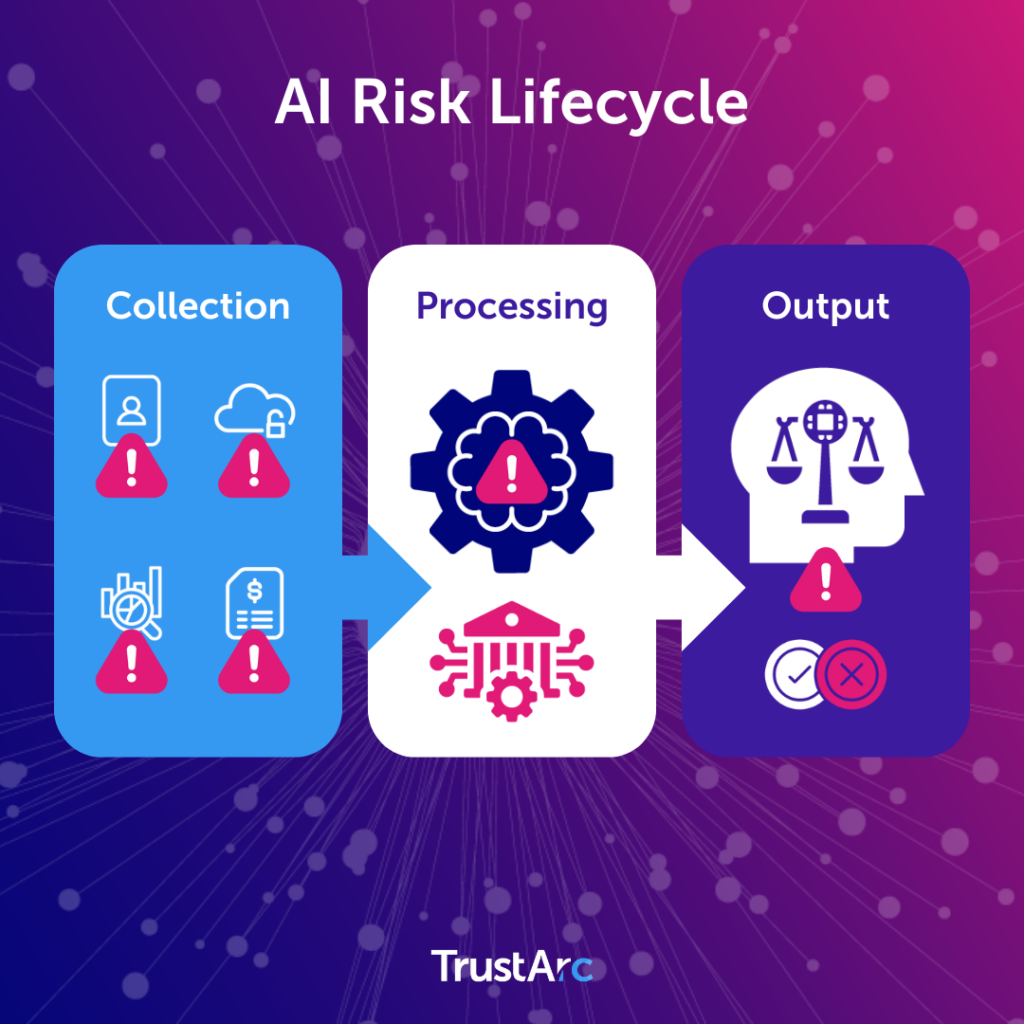

Understanding AI-Generated Media

What is AI-Generated Media?

AI-generated media refers to content created using artificial intelligence algorithms, often designed to simulate human creativity. This includes images, videos, audio, and text that appear authentic but are entirely artificial.

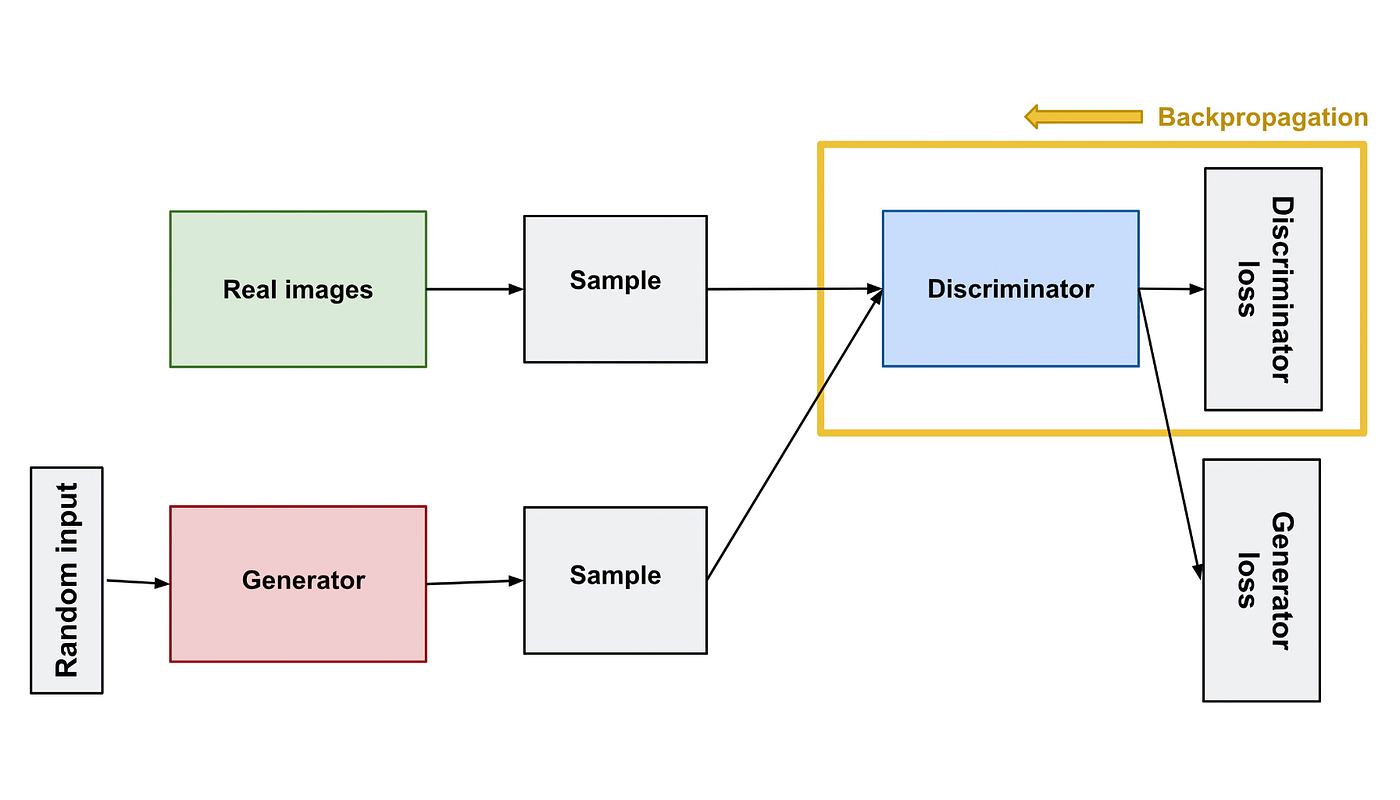

How It Works

AI tools, such as Generative Adversarial Networks (GANs), are commonly used to create these realistic images and videos. GANs consist of two neural networks, the generator and the discriminator, that are trained against each other: as the discriminator gets better at spotting fakes, the generator is pushed to produce more convincing output.

- Generator: Creates fake content.

- Discriminator: Tries to tell generated content apart from real data; its verdicts are the feedback the generator learns from.
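To make the generator/discriminator tug-of-war concrete, here is a minimal sketch of the two loss values a GAN tracks during training. It assumes the common binary cross-entropy formulation with the "non-saturating" generator loss; the function names and the discriminator scores are hypothetical, chosen only for illustration, and no real network is trained here.

```python
import math

def bce(prediction: float, target: float) -> float:
    """Binary cross-entropy for one prediction in (0, 1)."""
    eps = 1e-12  # guard against log(0)
    p = min(max(prediction, eps), 1.0 - eps)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))

def discriminator_loss(d_real: list[float], d_fake: list[float]) -> float:
    """Low when real samples score near 1 and generated samples near 0."""
    real_term = sum(bce(p, 1.0) for p in d_real) / len(d_real)
    fake_term = sum(bce(p, 0.0) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake: list[float]) -> float:
    """Low when the discriminator mistakes generated samples for real ones."""
    return sum(bce(p, 1.0) for p in d_fake) / len(d_fake)

# Hypothetical discriminator outputs (probability that a sample is real).
early = {"d_real": [0.9, 0.8], "d_fake": [0.1, 0.2]}  # fakes easily spotted
late = {"d_real": [0.6, 0.5], "d_fake": [0.5, 0.6]}   # fakes harder to spot

for name, scores in (("early", early), ("late", late)):
    print(
        name,
        round(discriminator_loss(scores["d_real"], scores["d_fake"]), 3),
        round(generator_loss(scores["d_fake"]), 3),
    )
```

In this toy comparison the generator's loss falls as its fakes become harder to spot, while the discriminator's loss rises. That opposing movement is what "adversarial" refers to.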

Common Use Cases

While often associated with malicious intent, AI-generated media has legitimate applications, including:

- Entertainment: Creating realistic CGI characters.

- Education: Simulating scenarios for training purposes.

- Art: Innovating new forms of digital artwork.

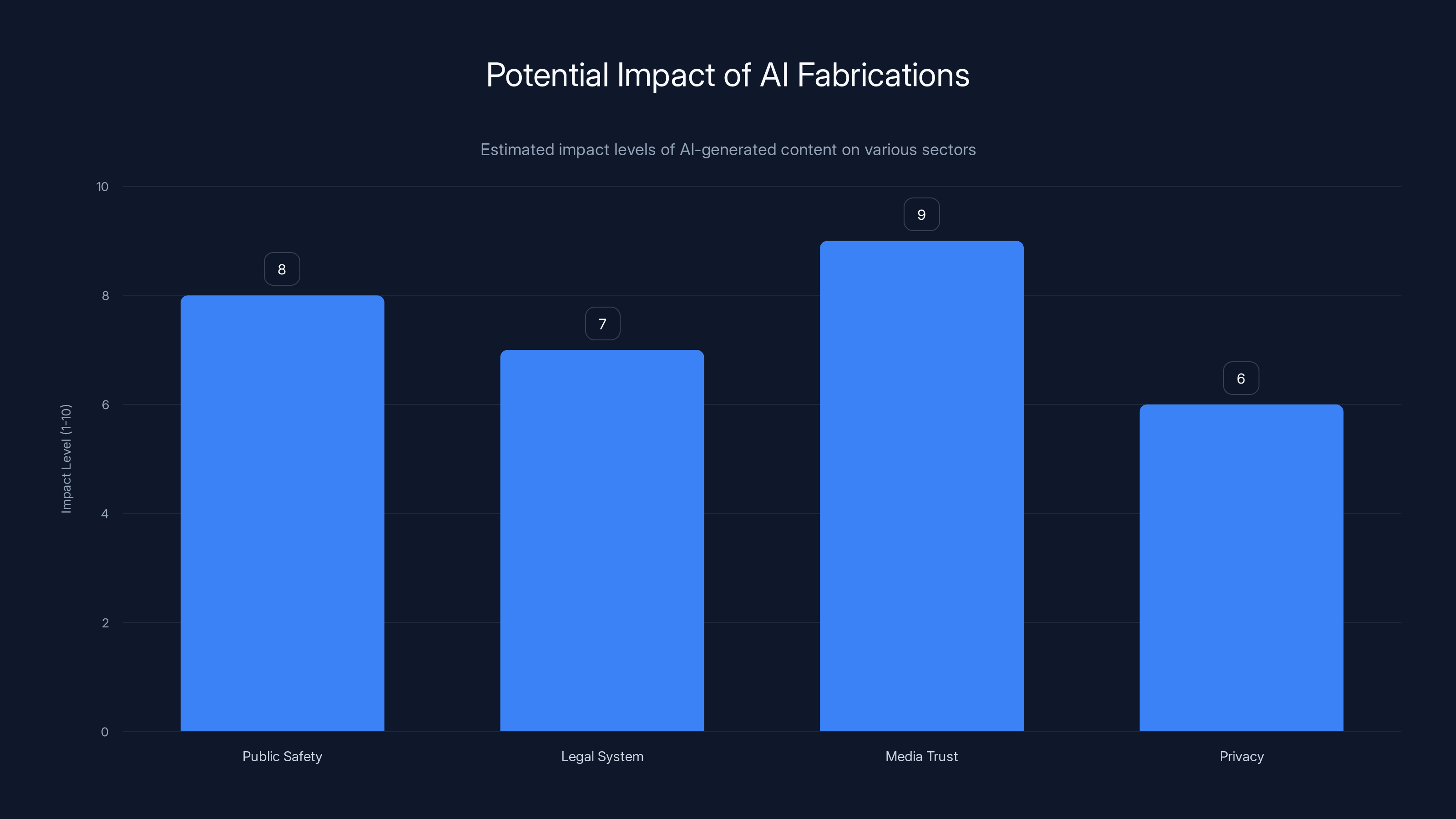

AI fabrications have significant potential to impact media trust and public safety. Estimated data.

Ethical Implications of AI in Media

The Impact on Public Trust

The ease with which AI can produce convincing fake media poses a significant threat to public trust in digital content. As seen in the case of the runaway wolf, misinformation can rapidly spread, leading to misallocation of resources and public panic, as discussed in EDMO.

The Role of Intent

Intent plays a crucial role in determining the ethicality of AI-generated content. While some uses are benign or even beneficial, others—like the wolf sighting hoax—are deceitful, with harmful consequences.

Privacy Concerns

AI-generated media can also infringe on personal privacy, particularly when deepfakes are used to manipulate images of individuals without their consent.

Legal Challenges and Frameworks

Existing Legislation

Many countries are still in the process of developing legal frameworks to address AI-generated content. Current laws often fall short of effectively managing the nuances of digital deception.

- Cybersecurity Laws: Typically focus on unauthorized access rather than content creation.

- Defamation Laws: May apply if fake content harms an individual's reputation.

Case Study: South Korea

In South Korea, the legal response to the wolf sighting hoax underscores the need for updated regulations. The charges faced by the man involve obstructing justice and misinforming public officials, reflecting the serious nature of the offense, as reported by Ars Technica.

Proposed Solutions

To mitigate these challenges, several strategies can be implemented:

- Stricter Regulations: Enacting specific laws targeting AI-generated fake content.

- Improved Detection Tools: Developing advanced algorithms capable of identifying AI-generated media.

- Public Awareness Campaigns: Educating the public on the risks associated with digital misinformation.
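Detection tools are only useful if we know how often they are wrong. The sketch below shows one way a detector could be evaluated against images whose origin is already known. The scores, labels, and the `evaluate_detector` helper are all hypothetical; no real detection product or API is being described.

```python
def evaluate_detector(scores, labels, threshold=0.5):
    """Compare detector scores with ground truth.

    scores: detector confidence that each image is AI-generated (0 to 1).
    labels: True if the image really is AI-generated, False if authentic.
    """
    tp = fp = fn = tn = 0
    for score, is_fake in zip(scores, labels):
        flagged = score >= threshold
        if flagged and is_fake:
            tp += 1  # fake correctly flagged
        elif flagged and not is_fake:
            fp += 1  # authentic image wrongly flagged
        elif not flagged and is_fake:
            fn += 1  # fake that slipped through
        else:
            tn += 1  # authentic image correctly passed
    return {
        "tp": tp, "fp": fp, "fn": fn, "tn": tn,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }

# Hypothetical detector outputs for six images of known origin.
scores = [0.95, 0.80, 0.40, 0.70, 0.20, 0.10]
labels = [True, True, True, False, False, False]

report = evaluate_detector(scores, labels)
print(report)
```

Lowering the threshold catches more fakes but also flags more authentic images. In an emergency like the wolf search, that trade-off decides how many real tips get wrongly dismissed.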

Technical Aspects of AI-Generated Images

How AI Generates Images

AI image generation often involves neural networks trained on massive datasets of real images. The networks learn features and patterns, allowing them to create photorealistic images from scratch.

- Training Phase: Involves feeding the network a diverse set of images.

- Generation Phase: The AI uses learned patterns to create new images.
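The two phases can be illustrated with a deliberately tiny stand-in for a neural network. In this sketch, "training" just estimates a mean and standard deviation from example values, and "generation" samples new values from that learned distribution. Real image models learn far richer patterns; the data and function names here are hypothetical.

```python
import random
import statistics

def train(samples: list[float]) -> tuple[float, float]:
    """Training phase: summarize the data as a mean and standard deviation."""
    return statistics.fmean(samples), statistics.stdev(samples)

def generate(model: tuple[float, float], n: int, seed: int = 0) -> list[float]:
    """Generation phase: draw new values from the learned distribution."""
    mean, std = model
    rng = random.Random(seed)  # seeded so runs are repeatable
    return [rng.gauss(mean, std) for _ in range(n)]

# Hypothetical "training set": brightness values measured from real photos.
real_brightness = [0.42, 0.47, 0.51, 0.49, 0.55, 0.44, 0.50, 0.53]

model = train(real_brightness)
new_values = generate(model, n=5)
print(model)
print(new_values)
```

The generated values are not copies of the training data, yet they look statistically similar to it. That is the basic idea behind photorealistic output at a much larger scale.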

Tools and Technologies

Several tools are popular for AI image generation, including:

- DALL-E: Known for creating imaginative images from textual descriptions.

- StyleGAN: Used for generating high-resolution images with various styles.

Practical Implementation Guide

- Choose a Tool: Select an AI tool that fits your project needs.

- Prepare Data: Gather and preprocess data for training.

- Train the Model: Use a powerful computing resource to train your model.

- Generate Images: Test and refine your model to produce desired outputs.

Common Pitfalls and Solutions

Oversight in AI Training

A common issue is inadequate training data, leading to biased or unrealistic image generation. Ensuring diverse and representative data can mitigate this.
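One simple way to spot unrepresentative data before training is to check how each category is distributed. This is a minimal sketch: the labels, the 10% cutoff, and the `underrepresented` helper are assumptions for illustration, and a real audit would look at much more than label counts.

```python
from collections import Counter

def underrepresented(labels: list[str], min_share: float = 0.10) -> dict[str, float]:
    """Return each category whose share of the dataset is below min_share."""
    counts = Counter(labels)
    total = len(labels)
    return {
        label: count / total
        for label, count in counts.items()
        if count / total < min_share
    }

# Hypothetical image labels for a small training set.
dataset_labels = ["dog"] * 70 + ["cat"] * 25 + ["wolf"] * 5

print(underrepresented(dataset_labels))
```

A category flagged this way is a candidate for collecting more examples, since a model tends to render rarely seen subjects less accurately.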

Ethical Oversight

Implementing ethical guidelines and human oversight during AI training can prevent misuse of generated media.

Technical Challenges

- Computational Cost: High resources required for training can be prohibitive.

- Quality Control: Ensuring the quality of output images remains a significant challenge.

Future Trends and Recommendations

Advancements in AI

As AI continues to evolve, the potential for more sophisticated and accessible tools will increase, necessitating stronger ethical and legal frameworks, as noted by The New Yorker.

Recommendations for Stakeholders

- Developers: Focus on creating transparent and accountable AI systems.

- Policymakers: Prioritize legislation that addresses emerging digital threats.

- Educators: Incorporate AI literacy in educational curriculums to prepare future generations.

Conclusion

The case of the AI-generated wolf sighting serves as a cautionary tale of the power and perils of digital deception. As technology advances, so too must our ethical, legal, and societal frameworks to protect public trust and safety. By fostering collaboration among technologists, lawmakers, and educators, we can navigate the complexities of AI-generated media and safeguard against its potential misuse.

FAQ

What is AI-generated media?

AI-generated media includes content like images, videos, and text created using artificial intelligence algorithms to simulate realistic human creativity.

How does AI generate images?

AI uses neural networks, such as GANs, to learn patterns from real images and then create new, realistic images from scratch.

What are the ethical concerns with AI-generated media?

Key concerns include misinformation, privacy violations, and the erosion of public trust in digital content.

How can AI-generated media be detected?

Detection algorithms look for inconsistencies that AI generation tends to leave in content, though no detector catches every fake.

What legal frameworks exist for AI-generated media?

While many countries are still developing specific laws, existing cybersecurity and defamation laws may apply in some cases.

What are the future trends in AI-generated media?

We expect advancements in AI to enhance both creation and detection capabilities, necessitating stronger ethical and legal oversight.

How can stakeholders address the challenges of AI-generated media?

Stakeholders should focus on transparency, accountability, and public education to mitigate the risks associated with digital deception.

Key Takeaways

- AI-generated media poses ethical and legal challenges in emergency scenarios.

- Intent is crucial in determining the ethicality of AI-generated content.

- Stricter regulations and improved detection tools are needed to combat digital deception.

- Public trust in digital content is at risk due to convincing fake media.

- Future AI advancements will require robust ethical and legal frameworks.

