Understanding Instagram's Delayed Teen Safety Features: A Comprehensive Analysis [2025]

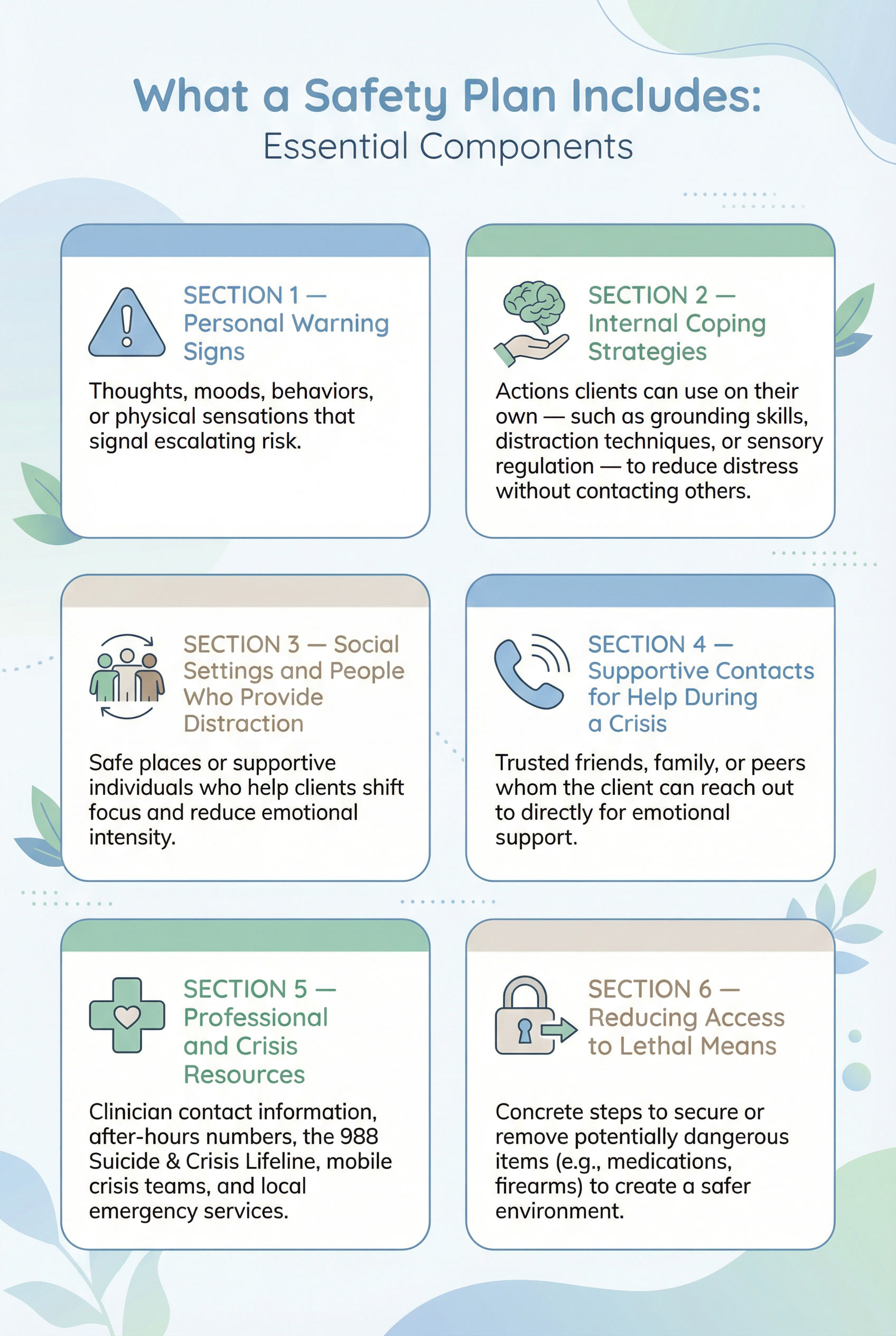

When Instagram announced the introduction of a nudity filter for teen safety in April 2024, it was a move long anticipated by many. The delay in rolling out this feature, however, has been a focal point of legal scrutiny and public debate. This article dives deep into why it took so long to implement such crucial safety features, examines the technical and ethical challenges involved, and explores what this means for the future of social media safety.

TL;DR

- Delayed Implementation: Instagram took nearly six years to introduce a nudity filter in DMs for teens, as highlighted in a TechCrunch article.

- Technical Challenges: Developing a reliable nudity detection system is complex and requires significant resources, as discussed in a recent study.

- Ethical Considerations: Balancing privacy with safety is a major concern for platforms like Instagram, according to Britannica's analysis.

- Future Trends: AI advancements promise better safety features, but also raise new privacy issues, as noted by Bloomberg.

- Recommendations: Transparent communication with users and continuous updates are key to maintaining trust, as emphasized in MarTech's insights.

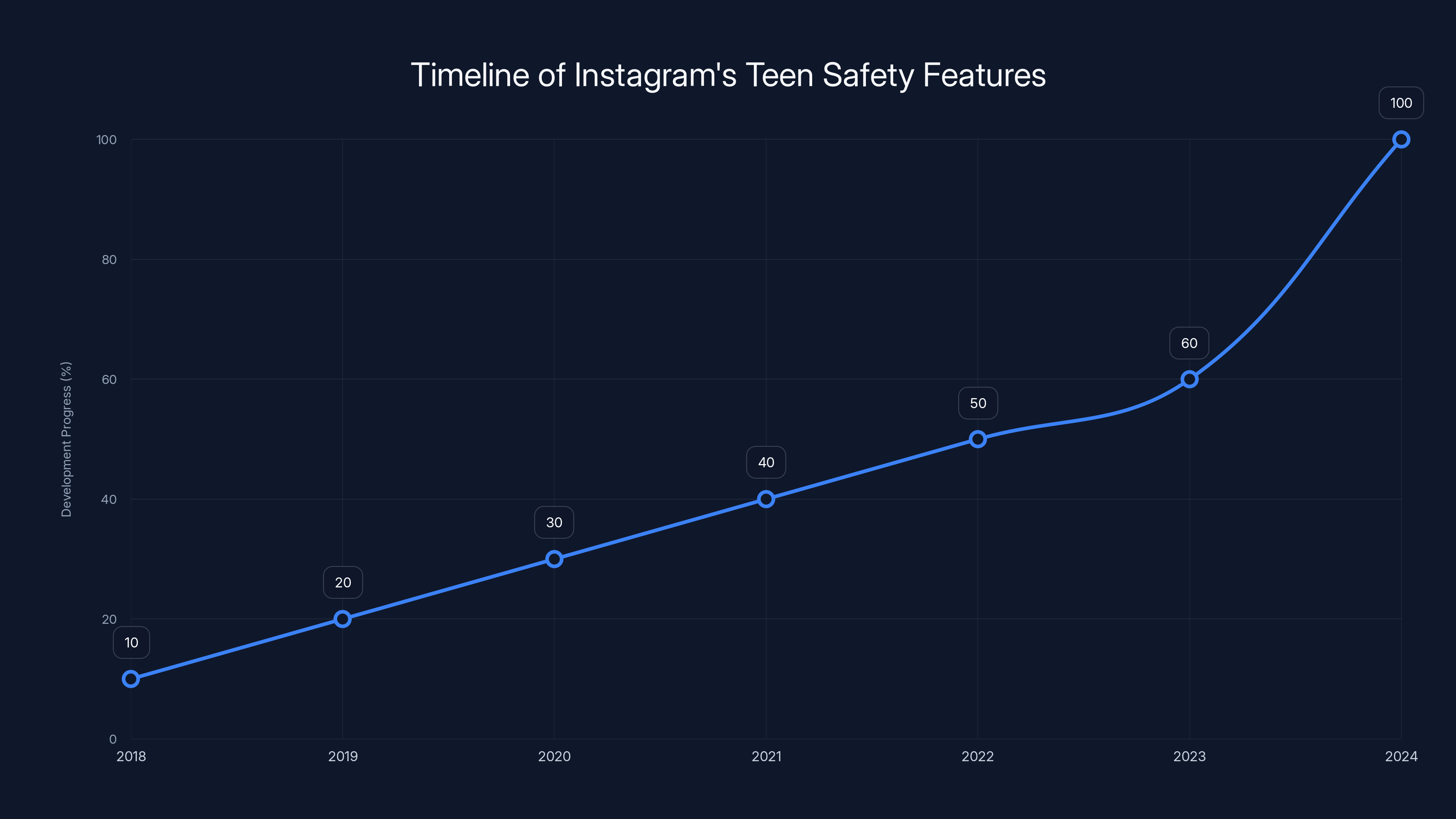

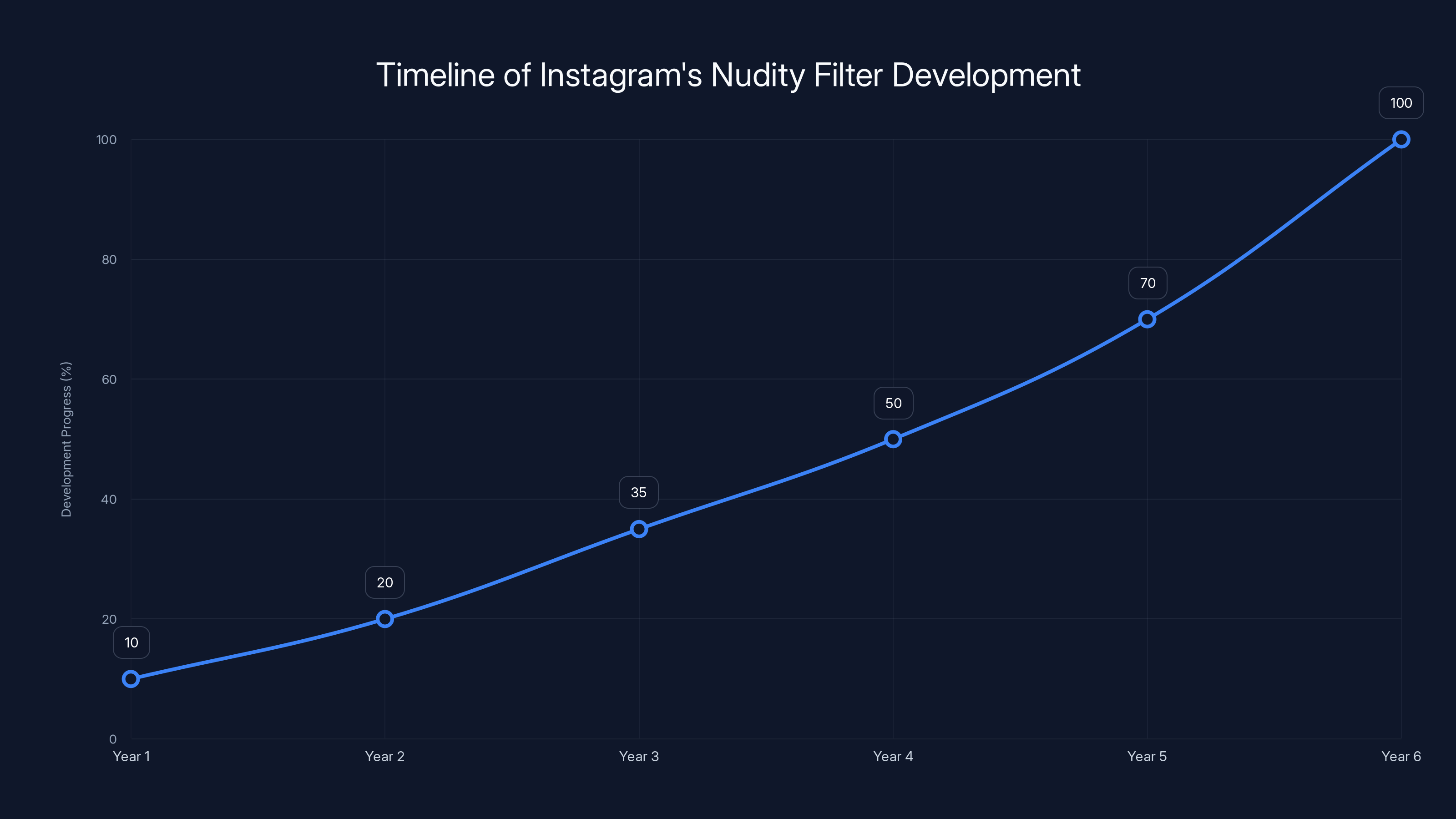

The development of Instagram's nudity filter for DMs shows a gradual increase in progress, with significant advancements leading to its release in 2024. Estimated data.

The Background of Instagram's Teen Safety Features

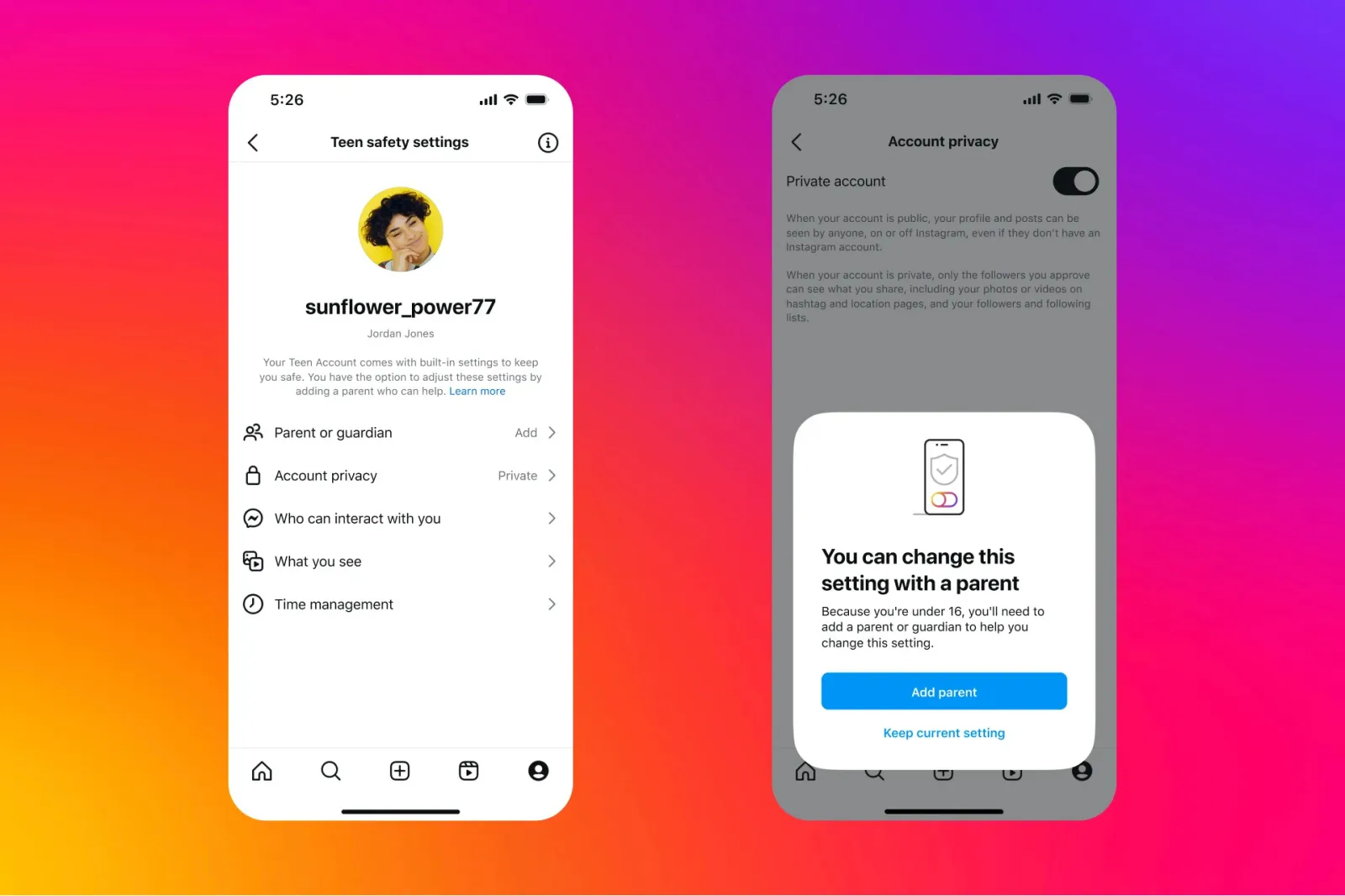

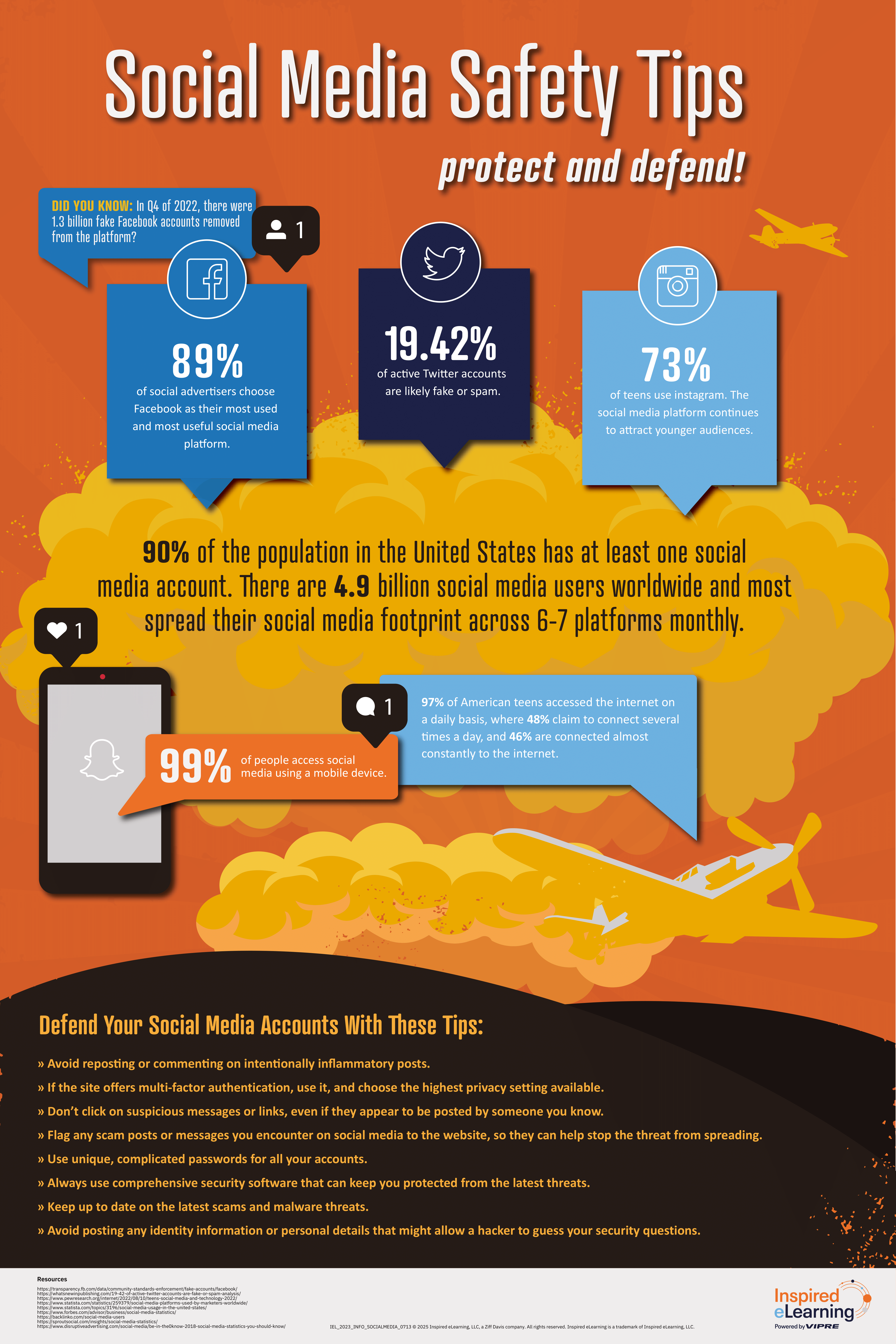

Instagram, owned by Meta, has been under scrutiny for how it manages the safety of its younger users. In recent years, concerns about online safety have intensified, particularly regarding the exposure of teens to inappropriate content. The introduction of a nudity filter for direct messages (DMs) was a long-awaited feature aimed at mitigating these concerns.

The Delay in Rollout

The delay in implementing such a feature has been a point of contention. Court filings reveal that Instagram was aware of potential risks in private messaging as early as 2018. Despite this awareness, the nudity filter was not introduced until 2024. This gap of roughly six years has raised questions about how the company prioritizes safety features.

Technical Challenges in Developing a Nudity Filter

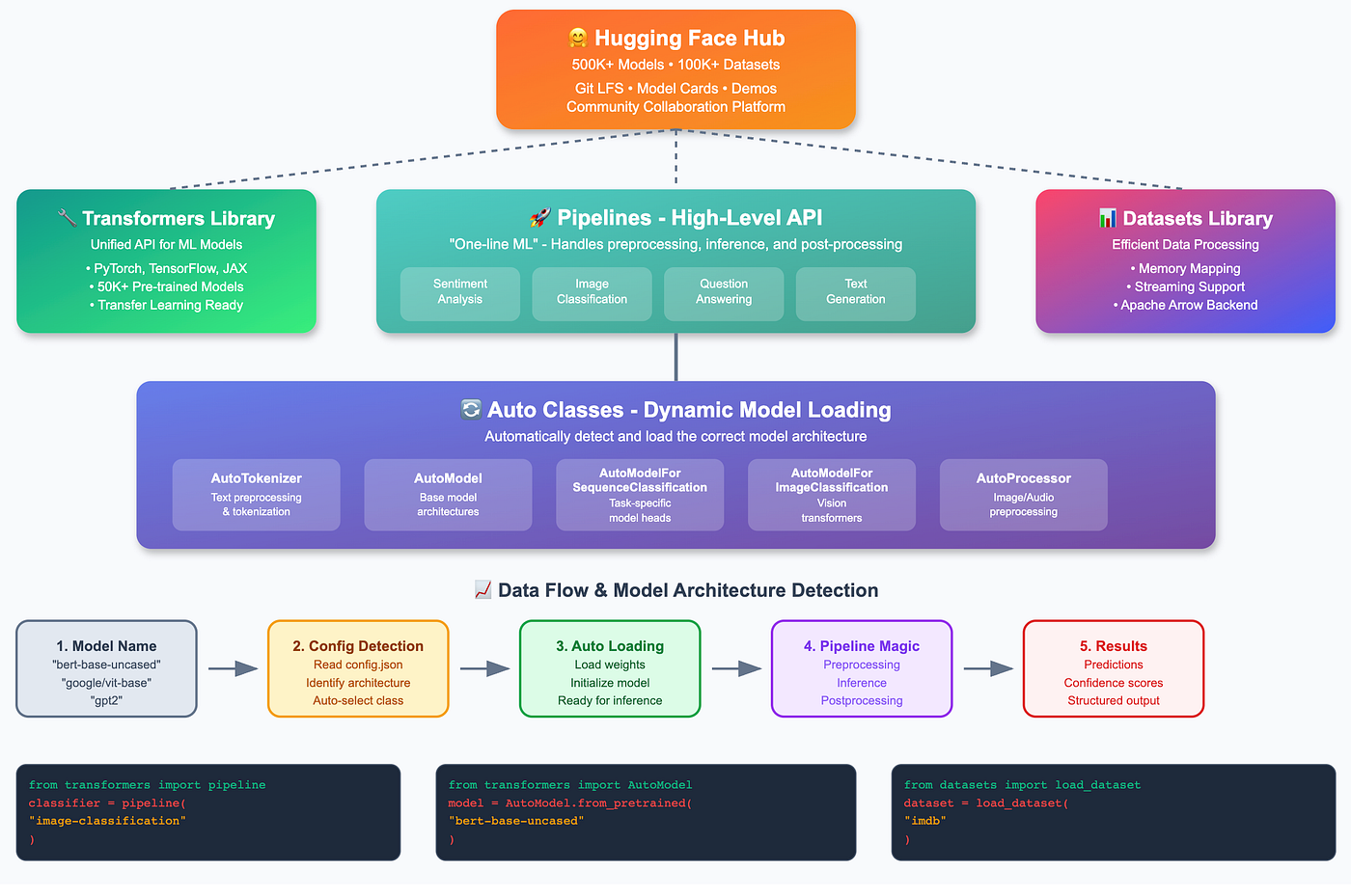

Creating a nudity detection system is not as straightforward as it might seem. It involves the development of sophisticated AI models that can accurately identify explicit content without mislabeling benign images. This requires extensive training datasets, advanced algorithms, and continuous refinement.

Key Components of a Nudity Detection System:

- Deep Learning Models: Utilized for image recognition and classification, as detailed in Frontiers in AI.

- Training Datasets: Large and diverse datasets are needed to train models effectively.

- Real-Time Processing: Systems must process images quickly to maintain user experience.
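To make these components more concrete, here is a minimal Python sketch of how a per-image blur decision might be wired together. It is not Instagram's implementation, which is not public. The names `FilterDecision`, `decide`, `score_fn`, and `stub_score`, as well as the 0.8 threshold, are assumptions made for illustration; in a real system `score_fn` would wrap a trained deep learning classifier.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class FilterDecision:
    blur: bool          # whether the image should be hidden behind a blur
    score: float        # estimated probability that the image is explicit
    latency_ms: float   # time spent scoring, relevant to real-time processing


def decide(
    image_bytes: bytes,
    score_fn: Callable[[bytes], float],
    threshold: float = 0.8,  # hypothetical cut-off, not a published value
) -> FilterDecision:
    """Score one image and decide whether to blur it."""
    start = time.perf_counter()
    score = score_fn(image_bytes)
    latency_ms = (time.perf_counter() - start) * 1000.0
    if not 0.0 <= score <= 1.0:
        raise ValueError("score_fn must return a probability in [0, 1]")
    return FilterDecision(blur=score >= threshold, score=score, latency_ms=latency_ms)


def stub_score(image_bytes: bytes) -> float:
    """Placeholder for a trained classifier (assumption: no real model here)."""
    return 0.95 if image_bytes.startswith(b"EXPLICIT") else 0.05


if __name__ == "__main__":
    for sample in (b"EXPLICIT-sample", b"holiday-photo"):
        print(sample, decide(sample, stub_score))
```

Keeping the model behind a plain callable means the threshold and the latency measurement can be tested without a real classifier, and the model can be swapped as it is retrained.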

Ethical Considerations

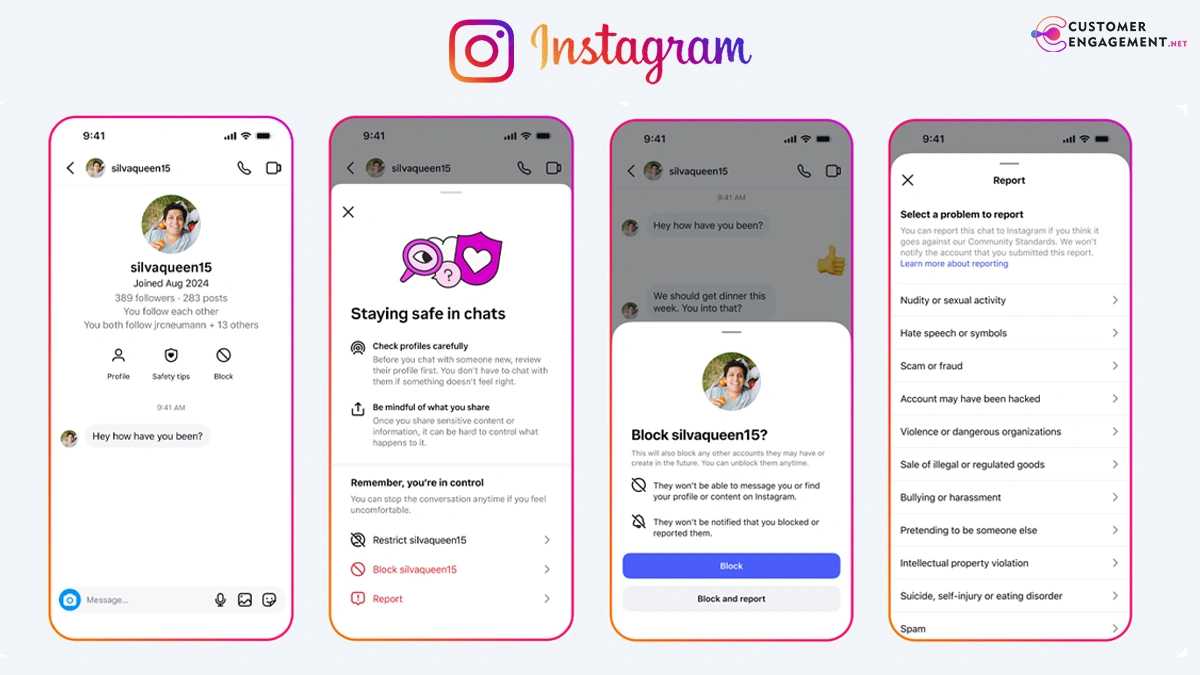

While technical challenges are significant, ethical considerations are equally important. Implementing a nudity filter involves scanning private messages, which raises privacy concerns. Instagram must ensure that user privacy is respected while protecting younger users from harmful content, as discussed in Britannica's overview.

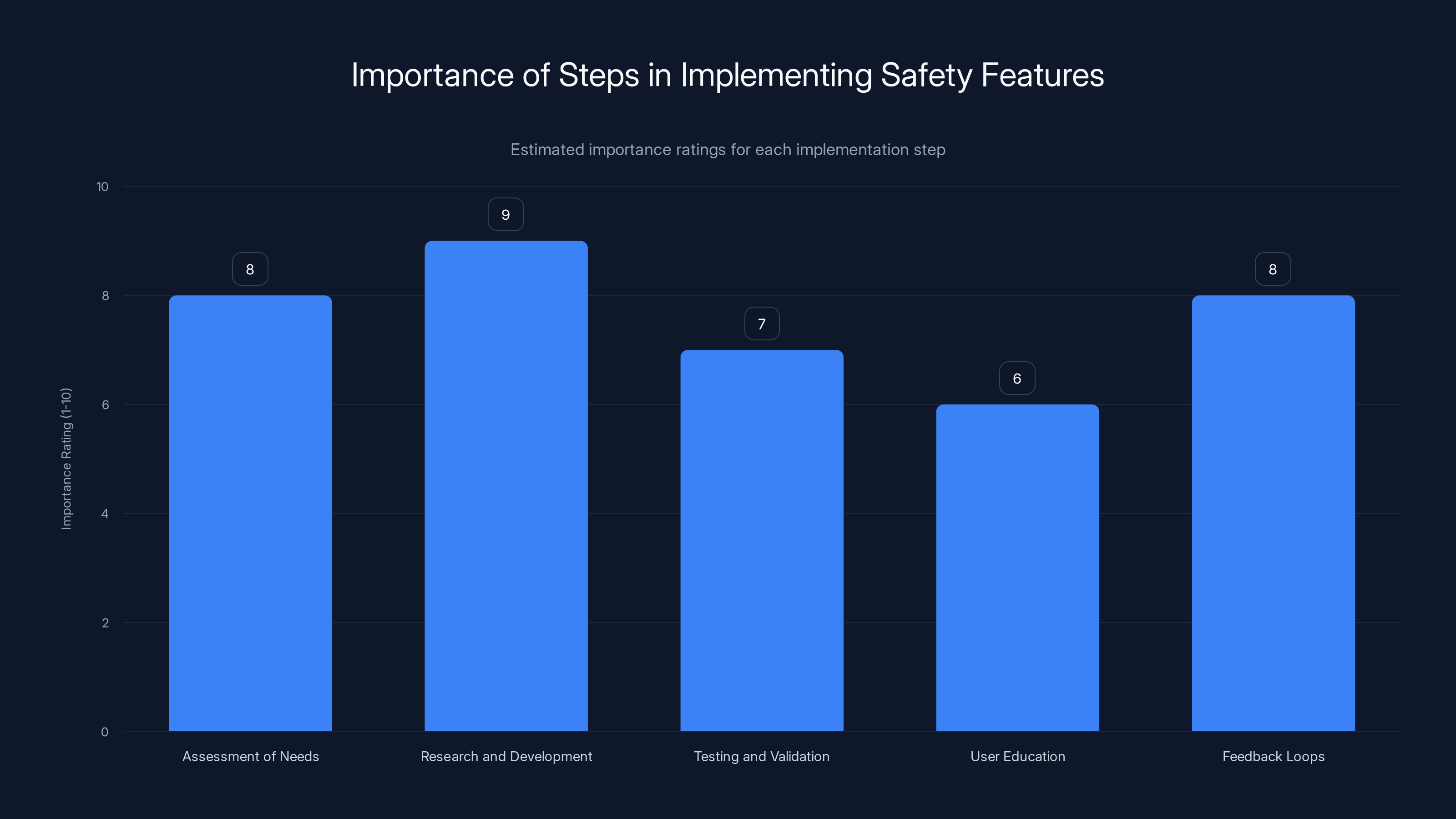

Research and Development is rated as the most critical step in implementing safety features, followed closely by Assessment of Needs and Feedback Loops. (Estimated data)

Practical Implementation Guide for Safety Features

Implementing safety features like a nudity filter involves several key steps:

- Assessment of Needs: Understanding the specific safety issues that need addressing.

- Research and Development: Investing in AI and machine learning to develop robust detection systems.

- Testing and Validation: Ensuring the system works accurately across diverse scenarios.

- User Education: Informing users about new features and how they function.

- Feedback Loops: Gathering user feedback to refine and improve the system.
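The feedback-loop step can be sketched in a few lines of Python. This is an illustrative assumption about how user reports might be tallied, not a description of any platform's actual tooling: the report kinds, the `FeedbackLog` class, and the 2% review threshold are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field

# Hypothetical report kinds; a real product would define its own taxonomy.
WRONGLY_BLURRED = "wrongly_blurred"   # user says a benign image was hidden
MISSED_EXPLICIT = "missed_explicit"   # user says an explicit image got through
REPORT_KINDS = (WRONGLY_BLURRED, MISSED_EXPLICIT)


@dataclass
class FeedbackLog:
    decisions: int = 0                              # total filter decisions made
    reports: Counter = field(default_factory=Counter)

    def record_decision(self) -> None:
        self.decisions += 1

    def record_report(self, kind: str) -> None:
        if kind not in REPORT_KINDS:
            raise ValueError(f"unknown report kind: {kind}")
        self.reports[kind] += 1

    def report_rate(self, kind: str) -> float:
        """Share of decisions that users disputed in this way."""
        return self.reports[kind] / self.decisions if self.decisions else 0.0

    def needs_review(self, max_rate: float = 0.02) -> bool:
        """Flag the model for review if either report rate exceeds max_rate."""
        return any(self.report_rate(kind) > max_rate for kind in REPORT_KINDS)


if __name__ == "__main__":
    log = FeedbackLog()
    for _ in range(100):
        log.record_decision()
    for _ in range(3):
        log.record_report(WRONGLY_BLURRED)
    print(log.report_rate(WRONGLY_BLURRED), log.needs_review())
```

Tracking the two report kinds separately matters because they point to opposite fixes: too many wrongly blurred images suggests the filter is too aggressive, while missed explicit images suggests it is too lenient.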

Common Pitfalls and Solutions

Pitfalls

- False Positives/Negatives: Misclassification of content can lead to user frustration.

- User Privacy Concerns: Overreach in scanning private messages may breach user trust, as noted by the Osceola Sheriff's Office.

- Resource Intensiveness: Developing and maintaining such systems requires significant resources.
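The false positive/negative pitfall is easiest to see with numbers. The Python sketch below counts both error types at two thresholds; the scores and labels are made-up toy data, and the function names are hypothetical, so treat it as an illustration of the trade-off rather than a measurement of any real filter.

```python
from typing import Dict, Sequence


def confusion_counts(
    scores: Sequence[float], labels: Sequence[bool], threshold: float
) -> Dict[str, int]:
    """Count outcomes when every image scoring >= threshold is blurred."""
    counts = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    for score, is_explicit in zip(scores, labels):
        flagged = score >= threshold
        if flagged and is_explicit:
            counts["tp"] += 1   # explicit image correctly blurred
        elif flagged:
            counts["fp"] += 1   # benign image wrongly blurred
        elif is_explicit:
            counts["fn"] += 1   # explicit image missed
        else:
            counts["tn"] += 1   # benign image correctly left alone
    return counts


def error_rates(counts: Dict[str, int]) -> Dict[str, float]:
    """False positive rate and false negative rate from the counts."""
    benign = counts["fp"] + counts["tn"]
    explicit = counts["fn"] + counts["tp"]
    return {
        "fpr": counts["fp"] / benign if benign else 0.0,
        "fnr": counts["fn"] / explicit if explicit else 0.0,
    }


# Toy data (invented for illustration): model scores and true labels.
SCORES = [0.95, 0.70, 0.40, 0.85, 0.10, 0.60]
LABELS = [True, True, True, False, False, False]

if __name__ == "__main__":
    for threshold in (0.5, 0.9):
        counts = confusion_counts(SCORES, LABELS, threshold)
        print(threshold, counts, error_rates(counts))
```

On this toy data, raising the threshold from 0.5 to 0.9 removes both false positives but doubles the false negatives from one to two, which is the tension any such filter has to manage.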

Solutions

- Continuous AI Training: Regular updates to the AI models can reduce errors.

- Transparency with Users: Clear communication about how data is used can alleviate privacy concerns.

- Efficient Resource Allocation: Prioritizing safety features in budget and resource planning.

Instagram's nudity filter for DMs arrived roughly six years after the company became aware of risks in private messaging (2018 to 2024), a gap that reflects in part the complexity and resource intensity of creating reliable detection systems. (Estimated data)

Future Trends in Social Media Safety

As AI technology continues to evolve, the potential for more advanced safety features grows. However, these advancements also bring new challenges:

- AI-Driven Personalization: Enhancements in AI could lead to more personalized safety features, tailored to individual user behaviors.

- Increased Regulation: Governments may impose stricter regulations on platforms to ensure user safety, particularly for minors, as suggested by Bloomberg.

- Privacy Innovations: New technologies may emerge to better balance safety and privacy concerns.

Recommendations for Social Media Platforms

To stay ahead in the rapidly evolving digital landscape, social media platforms must:

- Prioritize Safety Features: Regularly update and improve safety mechanisms.

- Engage with Stakeholders: Work with parents, educators, and policymakers to align safety strategies.

- Enhance User Education: Provide clear guidance on how users can protect themselves online.

- Invest in AI Research: Continuously develop AI capabilities to improve detection and response systems.

Conclusion

The delay in implementing Instagram's nudity filter highlights the complex interplay between technology, ethics, and user expectations. While significant progress has been made, the journey towards safer social media platforms is ongoing. By prioritizing safety, transparency, and innovation, platforms like Instagram can better protect their users and build trust in the digital age.

FAQ

What is Instagram's nudity filter?

Instagram's nudity filter is a feature designed to automatically blur explicit images in direct messages to protect users, especially teens, from inappropriate content.

How does the nudity filter work?

The filter uses AI and machine learning to detect explicit content in images sent through direct messages, automatically blurring them and alerting the recipient.

Why was there a delay in rolling out the nudity filter?

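Court filings indicate that Instagram was aware of risks in private messaging as early as 2018, yet the filter did not arrive until 2024. Technical and ethical challenges, including building a reliable detection system and balancing privacy with user safety, help explain the gap, which has also raised questions about how the company prioritized safety features.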

What are the benefits of the nudity filter?

The nudity filter helps protect teens from exposure to inappropriate content, enhances user safety, and builds trust in the platform.

What challenges do social media platforms face in implementing safety features?

Platforms face challenges like technical resource demands, ethical considerations of privacy, and the need for continuous updates to improve detection accuracy.

How can social media platforms improve user safety?

By prioritizing safety features, engaging with stakeholders, enhancing user education, and investing in AI research, platforms can better protect their users.

Key Takeaways

- Delayed Implementation Insight: Understanding the reasons behind Instagram's delay in rolling out safety features can guide future developments.

- Technical Challenges: Developing reliable AI systems for detecting explicit content is complex but essential.

- Ethical Balancing Act: Ensuring user privacy while enhancing safety is a critical challenge for social media platforms.

- Future Innovations: AI advancements promise more personalized and effective safety features.

- Strategic Recommendations: Platforms should prioritize safety, transparency, and continuous improvement to maintain user trust.

