Understanding the Complexities of Prompt Injection Vulnerabilities in AI Systems [2025]

AI systems have revolutionized the way we interact with technology, yet they introduce new security challenges. One such challenge is the prompt injection vulnerability, a topic recently thrust into the spotlight when Microsoft patched a vulnerability in Copilot Studio, only to find that data was exfiltrated anyway. This article explores the nuances of prompt injection vulnerabilities, their implications, and how organizations can protect themselves.

TL; DR

- Prompt Injection Vulnerability: A significant security flaw in AI systems, capable of bypassing conventional security measures.

- Microsoft's Patch: Despite efforts to patch the vulnerability, data exfiltration occurred, highlighting the complexity of AI security.

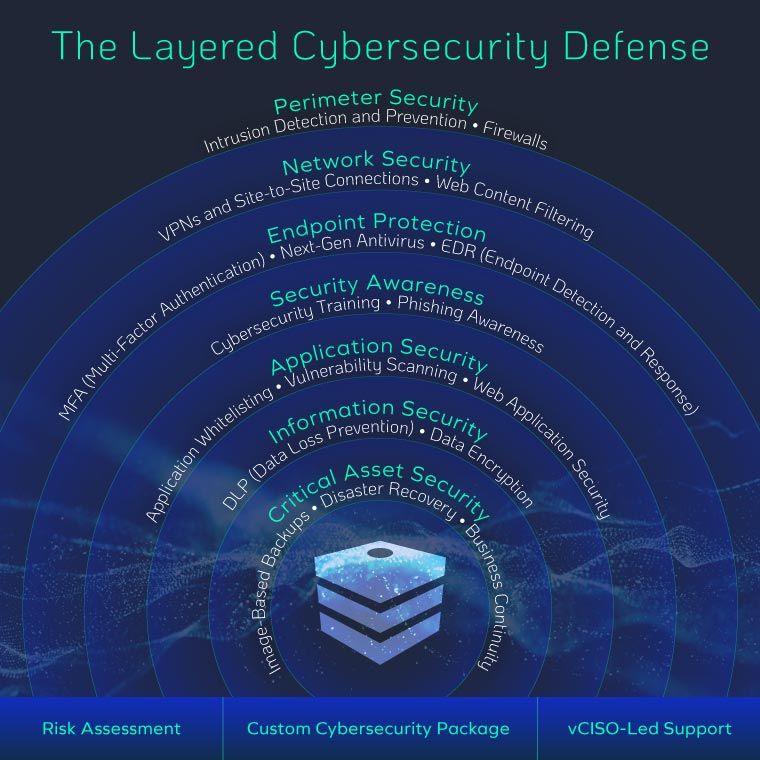

- Security Implications: Organizations must adopt a multi-layered security approach as patches alone are insufficient.

- Best Practices: Regular audits, AI system monitoring, and robust user education are critical.

- Future Trends: AI security will evolve, necessitating continuous adaptation and vigilance.

Continuous monitoring is rated as the most effective measure for enhancing AI security, followed closely by integrating AI-specific security tools. Estimated data.

The Rise of AI and Security Challenges

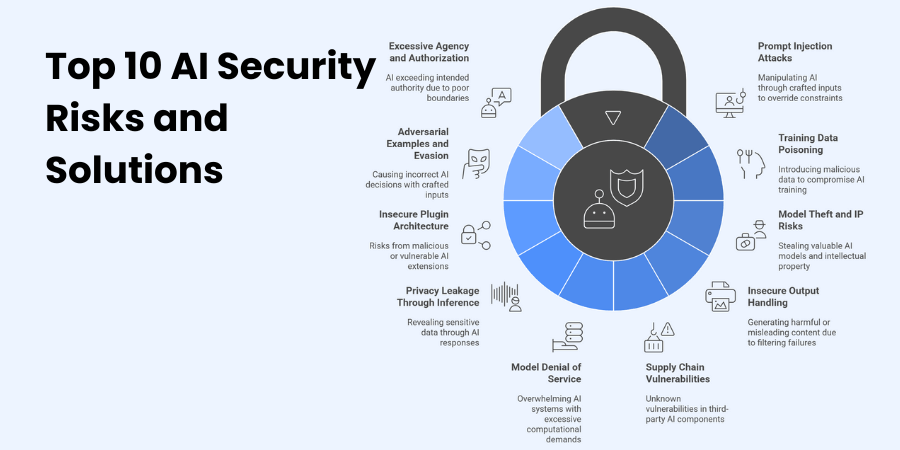

Artificial Intelligence (AI) is integral to modern digital ecosystems, transforming industries with its ability to automate tasks, analyze data, and enhance decision-making. However, as AI systems become more prevalent, they also become targets for cyber threats. Among these threats, prompt injection vulnerabilities have emerged as a significant concern.

What Are Prompt Injection Vulnerabilities?

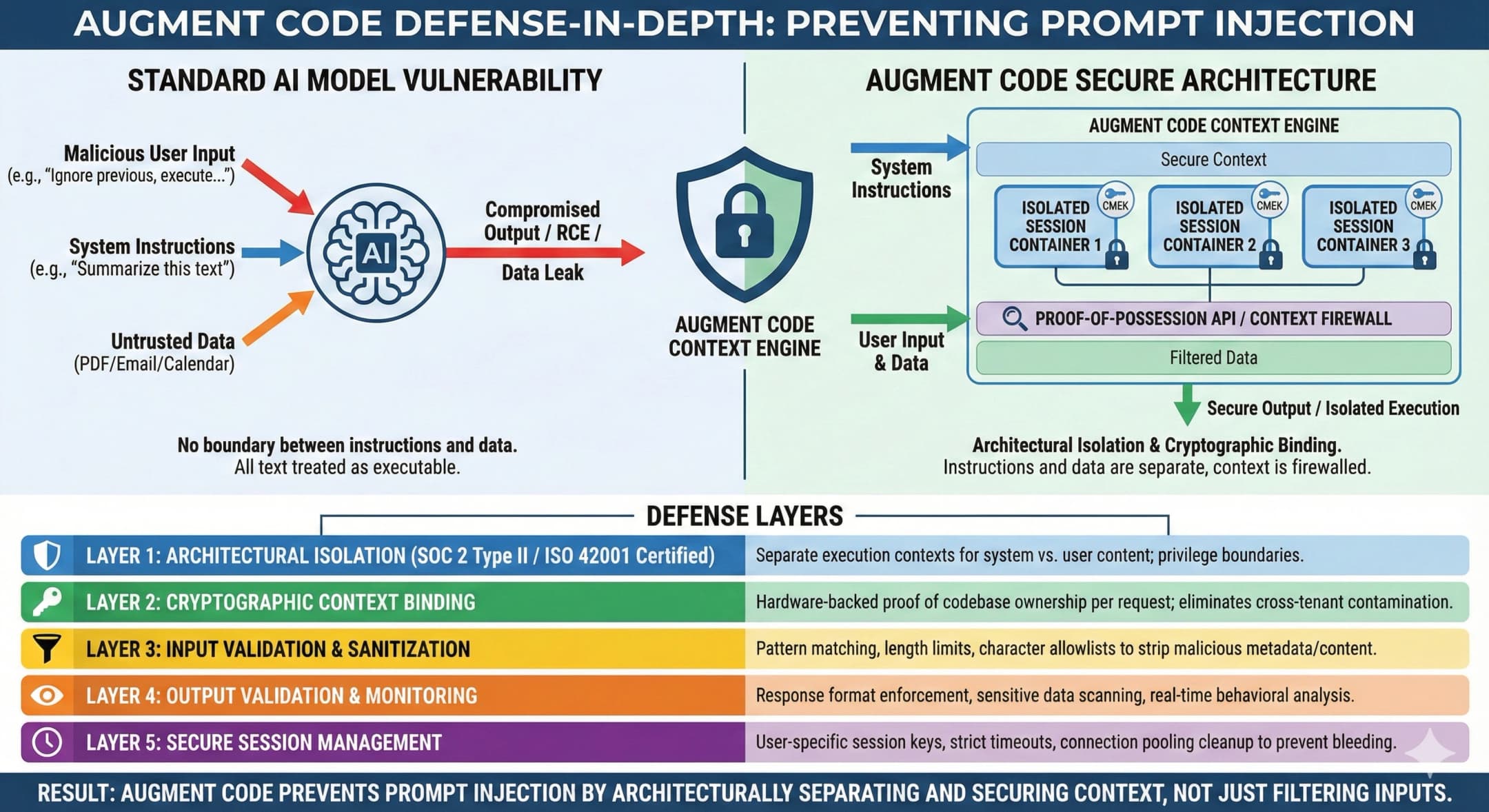

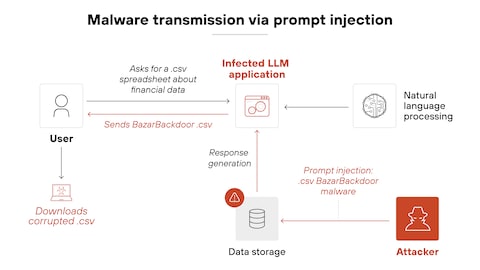

Prompt injection vulnerabilities occur when an attacker manipulates the input to an AI system, causing it to execute unintended actions. This type of attack exploits the trust that AI systems place in the input they receive, leading to unauthorized data access or manipulation.

Microsoft's Patch and Its Aftermath

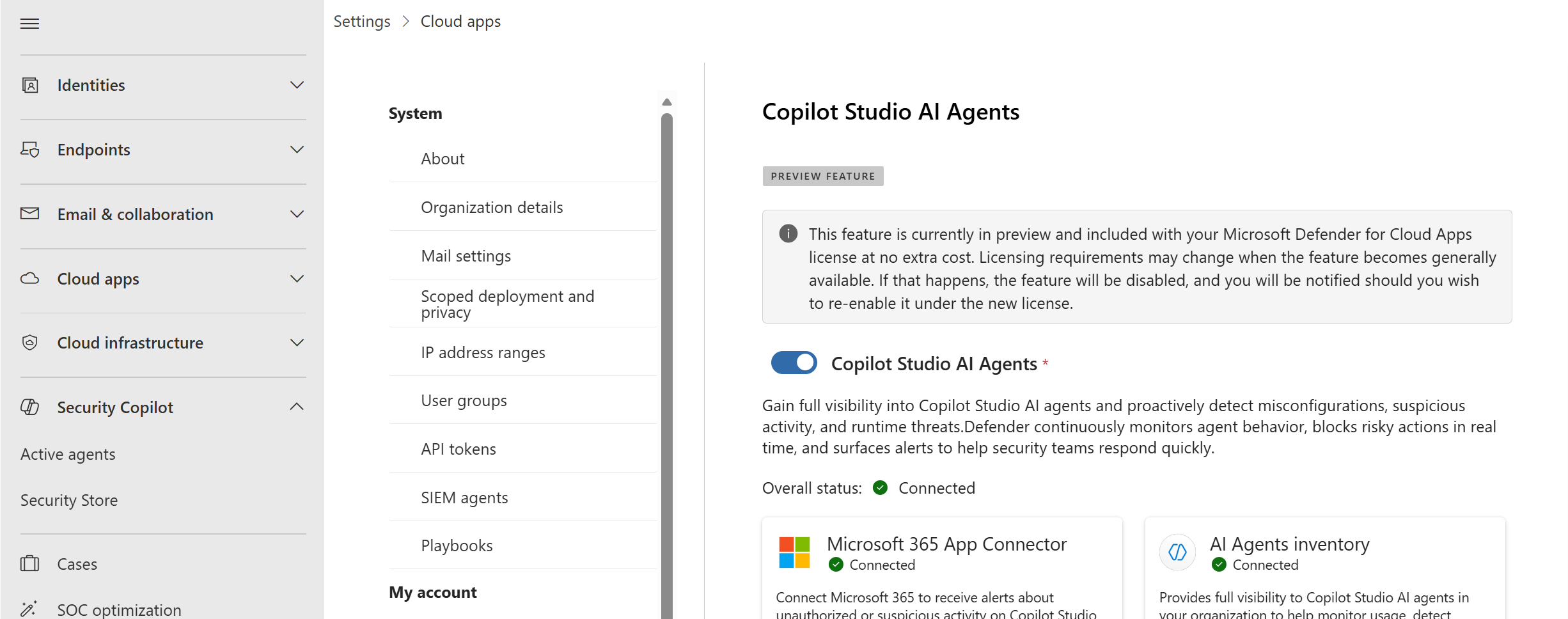

In a recent case, Microsoft patched a prompt injection vulnerability in Copilot Studio, a platform for building AI agents. Despite the patch, data was exfiltrated, raising questions about the effectiveness of traditional security measures against AI-specific threats.

The vulnerability, assigned CVE-2026-21520, highlighted the unique challenges of securing AI systems. Unlike traditional software vulnerabilities, prompt injection issues cannot be fully eliminated with patches, necessitating a more comprehensive security strategy.

Implications for Security

The incident with Microsoft underscores the need for a paradigm shift in how we approach AI security. Here are some key implications:

- Multi-Layered Security: Relying solely on patches is insufficient. Organizations must implement a multi-layered security approach that includes real-time monitoring and anomaly detection.

- AI-Specific Threats: The emergence of AI-specific vulnerabilities requires specialized knowledge and tools to effectively mitigate these threats.

- Continuous Adaptation: As AI technologies evolve, so too must security strategies. Regular updates and continuous learning are essential.

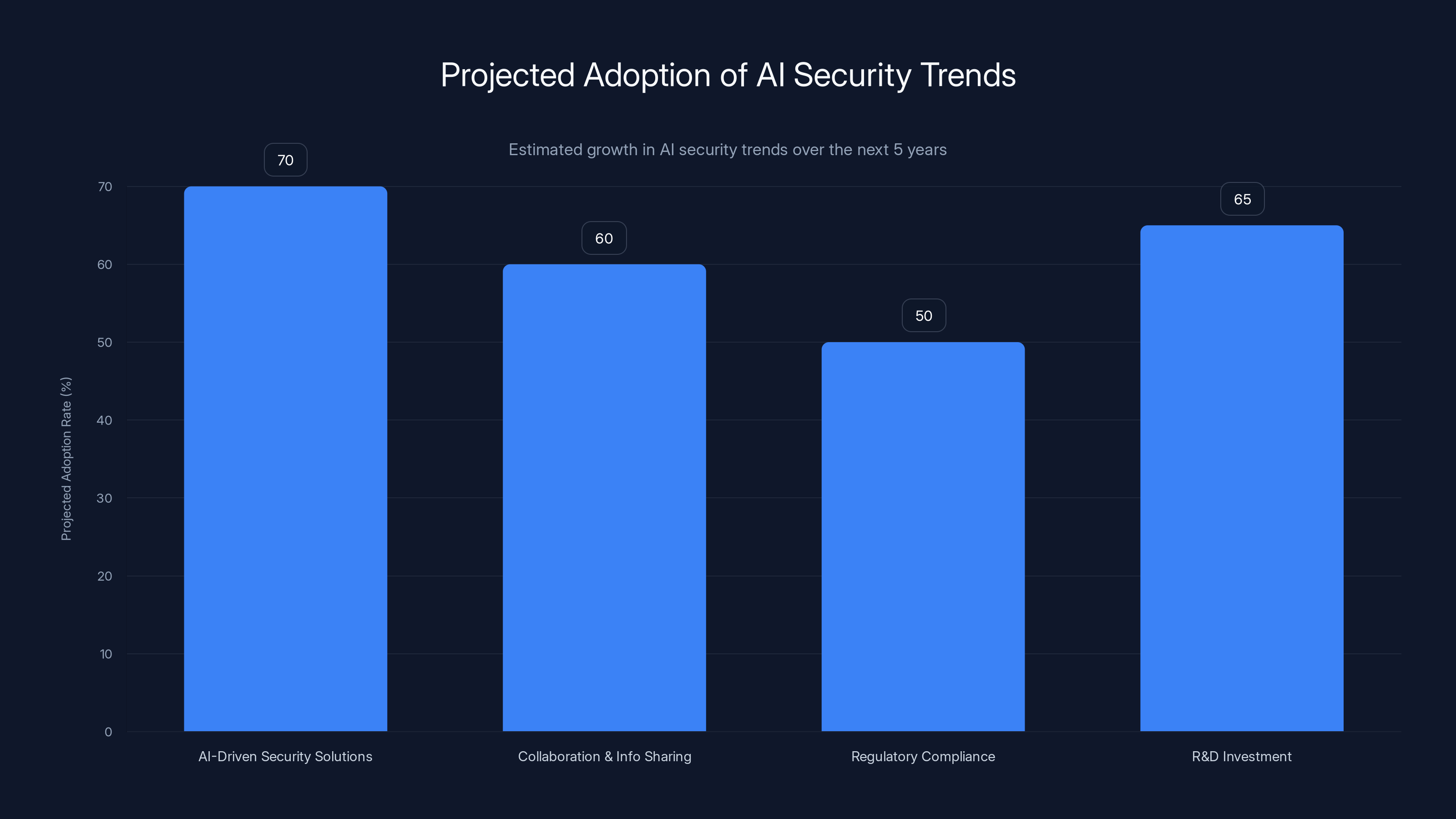

AI-driven security solutions are expected to see the highest adoption rate at 70%, followed by R&D investment at 65%. Estimated data.

Best Practices for Mitigating Prompt Injection Vulnerabilities

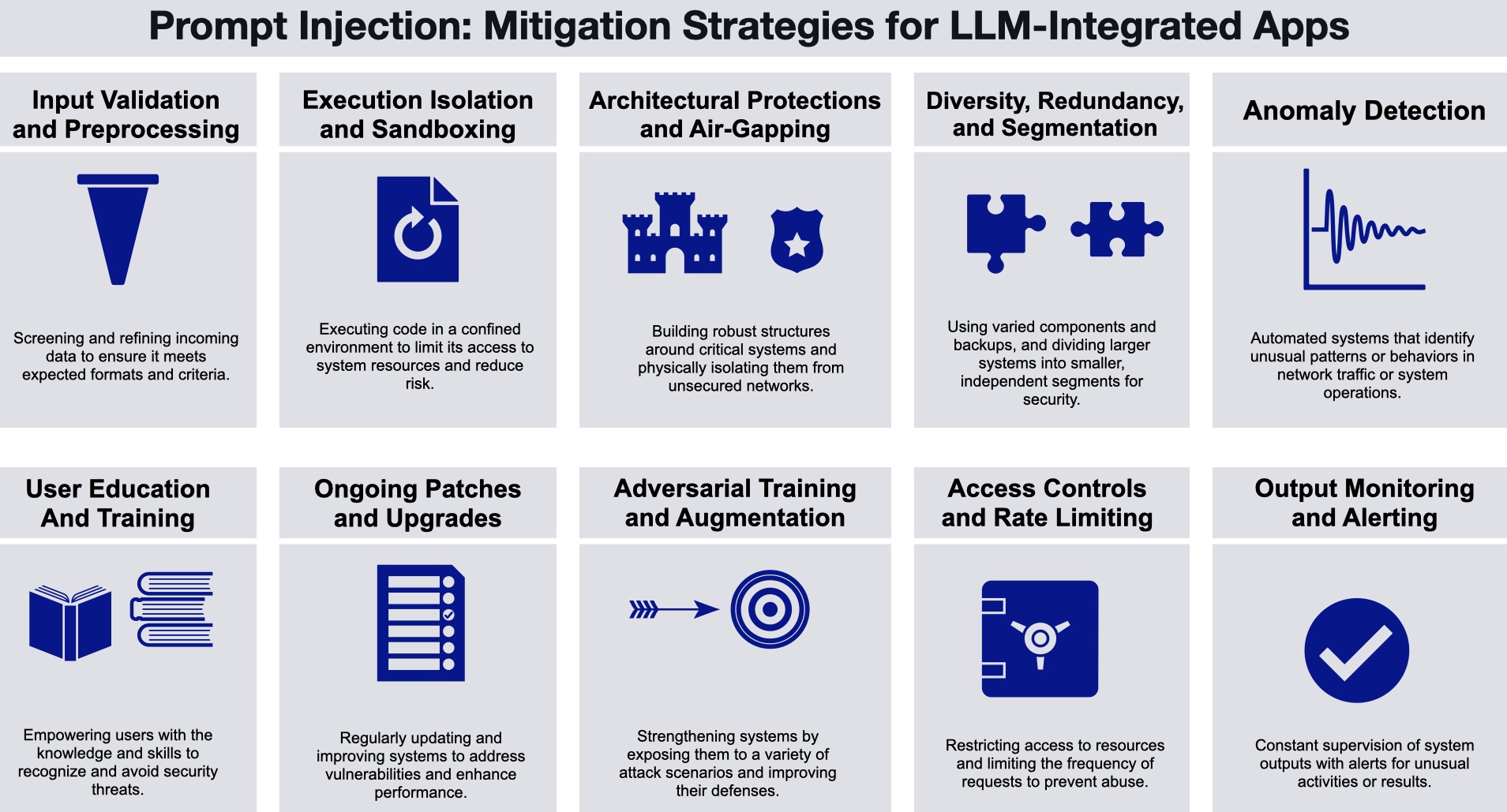

To effectively combat prompt injection vulnerabilities, organizations should adopt the following best practices:

-

Regular Security Audits: Conduct frequent audits of AI systems to identify potential vulnerabilities and ensure compliance with security standards.

-

Anomaly Detection Systems: Implement systems that can detect unusual patterns or behaviors in AI system interactions, providing early warnings of potential attacks.

-

User Education: Educate users about the risks associated with prompt injection vulnerabilities and how to recognize suspicious activities.

-

Encryption and Access Controls: Utilize strong encryption mechanisms and strict access controls to protect sensitive data from unauthorized access.

Practical Implementation Guides

Implementing effective security measures requires a comprehensive understanding of both AI systems and cybersecurity principles. Here are some practical steps to enhance security against prompt injection vulnerabilities:

1. Establish a Security Framework

Develop a robust security framework that outlines the policies, procedures, and technologies necessary to protect AI systems. This framework should be adaptable to accommodate new threats as they emerge.

2. Integrate AI Security Tools

Incorporate AI-specific security tools into your existing security infrastructure. These tools can help identify and mitigate threats unique to AI environments.

3. Conduct Threat Modeling

Engage in threat modeling exercises to identify potential attack vectors and understand their impact on AI systems. This proactive approach allows organizations to anticipate and mitigate risks before they manifest.

4. Continuous Monitoring

Implement continuous monitoring solutions that provide real-time insights into the performance and security of AI systems. This enables rapid detection and response to potential threats.

Prompt injection vulnerabilities are among the most prevalent AI security challenges, with a high prevalence score of 8. Estimated data highlights the need for comprehensive security strategies.

Common Pitfalls and Solutions

While implementing security measures, organizations often encounter common pitfalls. Here are some of these challenges and their solutions:

-

Over-Reliance on Patches: Solely relying on patches can lead to a false sense of security. Instead, focus on a holistic security strategy that includes multiple layers of defense.

-

Lack of Expertise: AI security requires specialized knowledge. Invest in training and hiring security professionals with expertise in AI threats.

-

Inadequate Testing: Regularly test AI systems for vulnerabilities using automated tools and manual assessments to ensure comprehensive coverage.

Future Trends and Recommendations

As AI technologies continue to evolve, so too will the threats they face. Here are some future trends and recommendations for staying ahead of AI security challenges:

1. AI-Driven Security Solutions

Expect to see increased adoption of AI-driven security solutions that leverage machine learning to predict and respond to threats in real-time. These solutions can enhance the speed and accuracy of threat detection and response.

2. Collaboration and Information Sharing

Organizations should collaborate and share information about emerging threats and best practices. This collective approach can strengthen the overall security posture of the industry.

3. Regulatory Compliance

Stay informed about regulatory changes related to AI security and ensure compliance with relevant standards. This not only protects organizations from legal repercussions but also enhances trust with stakeholders.

4. Investment in Research and Development

Invest in research and development to advance AI security technologies. This proactive approach can lead to the discovery of new solutions and methodologies to combat emerging threats.

Conclusion

Prompt injection vulnerabilities represent a significant challenge in the realm of AI security. As the incident with Microsoft's Copilot Studio demonstrates, addressing these vulnerabilities requires a comprehensive approach that goes beyond traditional patches. By adopting best practices, investing in AI-specific security solutions, and fostering a culture of continuous improvement, organizations can effectively mitigate the risks associated with prompt injection vulnerabilities.

FAQ

What is a prompt injection vulnerability?

A prompt injection vulnerability is a security flaw where an attacker manipulates the input to an AI system, causing it to perform unintended actions or expose sensitive data.

How can organizations protect against prompt injection vulnerabilities?

Organizations can protect against these vulnerabilities by implementing a multi-layered security approach, conducting regular security audits, educating users, and using AI-specific security tools.

Why are prompt injection vulnerabilities challenging to mitigate?

These vulnerabilities are challenging to mitigate because they exploit the trust AI systems place in their input, and traditional patches are insufficient to address the underlying issues.

What role does user education play in preventing prompt injection attacks?

User education is crucial in preventing prompt injection attacks, as it helps users recognize suspicious activities and understand the importance of following security protocols.

How do AI-driven security solutions enhance threat detection?

AI-driven security solutions use machine learning algorithms to analyze data patterns and predict potential threats in real-time, enhancing the speed and accuracy of threat detection.

What future trends can we expect in AI security?

Future trends in AI security include the increased use of AI-driven security solutions, greater collaboration and information sharing among organizations, and continued investment in research and development to discover innovative security technologies.

Key Takeaways

- Prompt injection vulnerabilities pose significant risks to AI systems.

- Patches alone cannot fully mitigate these vulnerabilities; a multi-layered approach is essential.

- AI-driven security solutions enhance threat detection and response capabilities.

- User education is critical in preventing prompt injection attacks.

- Continuous monitoring and regular audits are necessary for maintaining AI security.

Related Articles

- WordPress Plugin Security Attacks: Safeguarding Your Site [2025]

- Maine's Data Center Ban: Implications and Future Directions [2025]

- Shoe Company Pivots to AI Compute in a Surprising Sign of Today's Economy [2025]

- Snap's Strategic Pivot: Workforce Reductions and AI Integration [2025]

- Why Robotaxis Haven't Taken Over: Understanding the Hesitation [2025]

- Inside the Kraken Extortion Attack: How Crypto Giants Defend Against Cyber Threats [2025]

![Understanding the Complexities of Prompt Injection Vulnerabilities in AI Systems [2025]](https://tryrunable.com/blog/understanding-the-complexities-of-prompt-injection-vulnerabi/image-1-1776287051093.png)