Understanding the ELIZA Effect: How AI Chatbots Handle Emotions and Why It Matters [2025]

Introduction

AI chatbots have evolved from simple keyword-matching programs into conversational agents that can hold long, context-aware dialogues. Through all of that change, one psychological phenomenon has shaped how people interact with them: the ELIZA effect.

Named after one of the earliest chatbots, ELIZA, this effect describes the tendency of people to attribute human-like emotions and thoughts to AI systems based on minimal cues. In this article, we'll explore the intricacies of the ELIZA effect, how AI chatbots respond to emotions, and why understanding this phenomenon is crucial for developers and users alike.

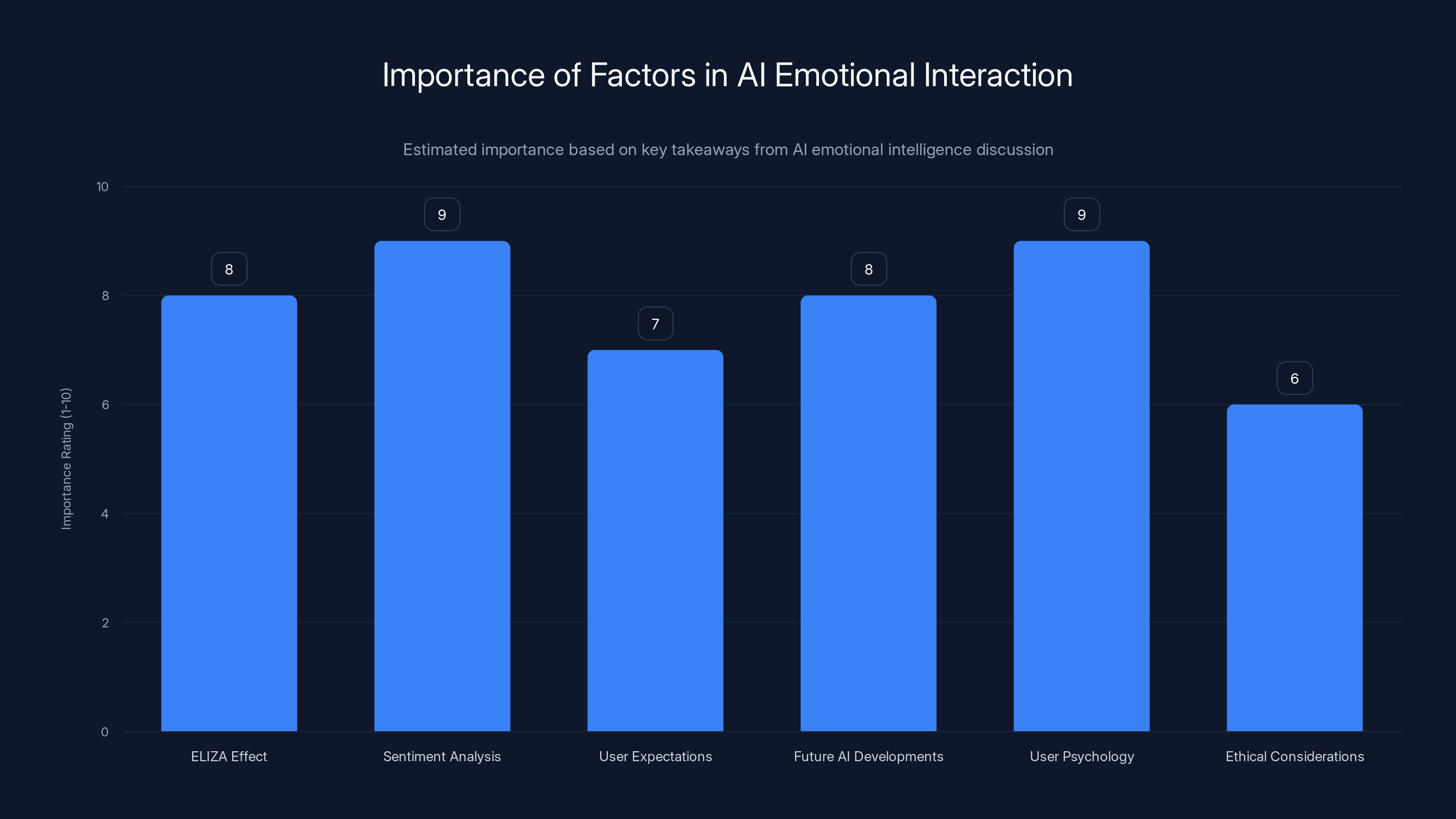

Sentiment analysis and user psychology are rated as highly important factors in AI emotional interaction. Estimated data.

TL;DR

- The ELIZA effect: Humans often ascribe human emotions to AI chatbots, affecting interactions.

- AI emotional response: Chatbots use algorithms to simulate empathy, which users can mistake for genuine understanding.

- Implementation challenges: Balancing AI responses to avoid over-reliance on perceived empathy is key.

- Future trends: Advances in AI could lead to more nuanced emotional interactions.

- Practical advice: Developers should account for user psychology when designing chatbot interactions.

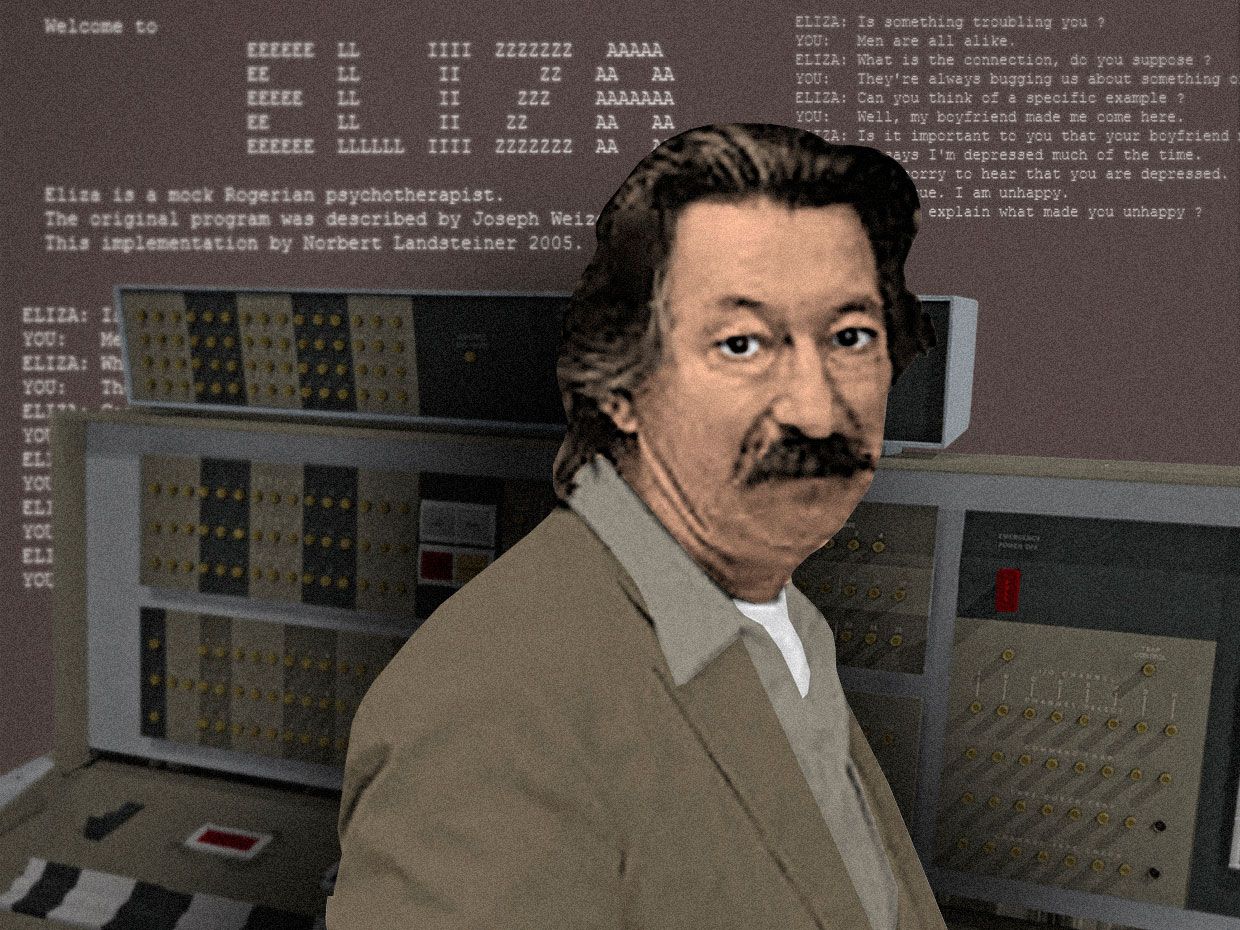

The Origins of the ELIZA Effect

The ELIZA effect was first observed in the mid-1960s, when Joseph Weizenbaum at MIT created ELIZA, a simple chatbot whose best-known script, DOCTOR, mimicked a Rogerian psychotherapist by rephrasing user input as questions. Despite its simplicity, users often reported feeling understood and even comforted by ELIZA, attributing to the program a level of emotional intelligence it did not possess. This phenomenon is explored in detail in a TechRadar article.
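To make the rephrasing trick concrete, here is a minimal Python sketch of ELIZA-style pattern matching. The three rules, the `REFLECTIONS` table, and the function names are illustrative assumptions, not Weizenbaum's original script, which had many more rules.

```python
import re

# Pronoun swaps so the echoed fragment reads from the program's side.
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your", "am": "are",
    "you": "I", "your": "my",
}

# (pattern, response template) pairs. Illustrative only, not the DOCTOR script.
RULES = [
    (re.compile(r"\bi feel (.*)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.*)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (.*)", re.IGNORECASE), "Tell me more about your {0}."),
]


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in the captured fragment."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(user_input: str) -> str:
    """Return the first matching rule's question, or a generic prompt."""
    text = user_input.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(reflect(match.group(1)))
    return "Please go on."


print(respond("I feel ignored by my family."))
```

Nothing here models emotion: the program only captures a fragment of the input, swaps pronouns, and drops it into a template. That such a small mechanism can feel attentive is the core of the ELIZA effect.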

Why Do We Experience the ELIZA Effect?

Humans are naturally inclined to anthropomorphize objects and systems, especially those that exhibit even the most basic forms of interaction. The ELIZA effect taps into this tendency, compelling users to project emotions and thoughts onto AI systems, leading to a perceived empathy that doesn't actually exist.

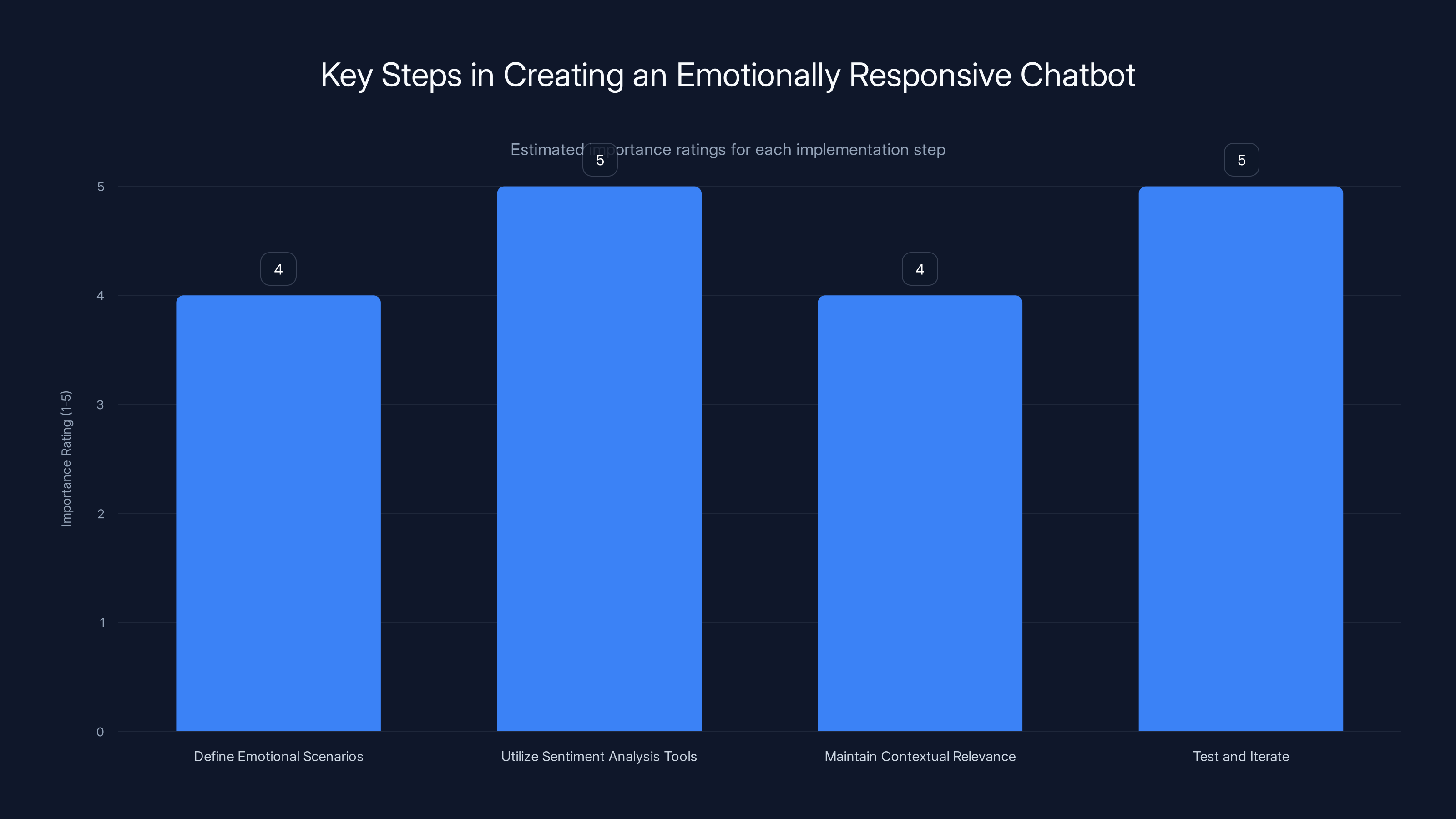

Utilizing sentiment analysis tools and continuous testing are rated as the most critical steps in developing an emotionally responsive chatbot. (Estimated data)

How AI Chatbots Simulate Emotional Responses

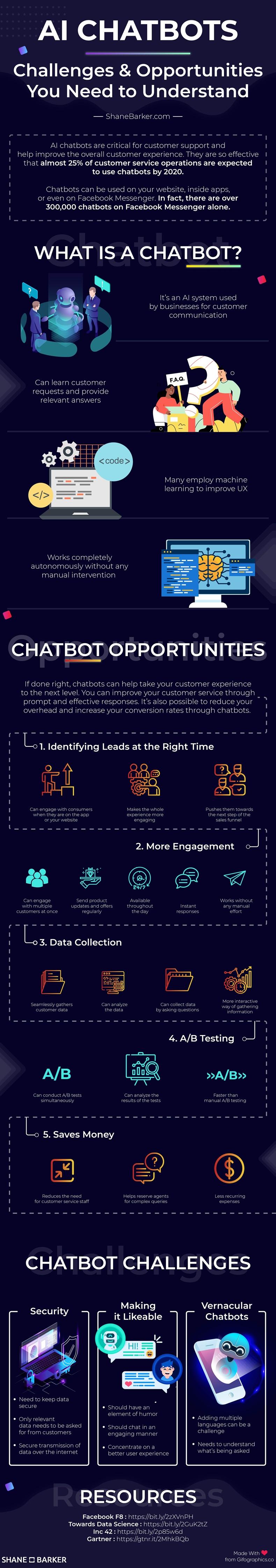

While AI chatbots aren't capable of feeling emotions, they can simulate emotional responses through carefully designed algorithms and natural language processing (NLP) techniques. Here's how:

- Sentiment Analysis: By analyzing the sentiment of user input, chatbots can tailor their responses to appear empathetic or supportive. This is a common technique in AI systems, as noted in a CMSWire article.

- Contextual Understanding: Advanced NLP allows chatbots to maintain context over conversations, providing more relevant and emotionally resonant responses.

- Pre-defined Scripts: Many chatbots use pre-written scripts for common emotional scenarios, allowing them to respond appropriately to user emotions.
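The sentiment analysis and scripting techniques above can be sketched in a few lines of Python. This is a toy under stated assumptions: the word lists, the `SCRIPTS` table, and the function names are hypothetical, and a production system would typically use a trained sentiment model rather than a hand-written lexicon.

```python
import re

# Tiny hand-made lexicon, assumed for illustration only.
POSITIVE = {"happy", "great", "glad", "love", "thanks", "good"}
NEGATIVE = {"sad", "angry", "upset", "hate", "frustrated", "terrible"}

# Pre-defined scripts keyed by sentiment label.
SCRIPTS = {
    "negative": "I'm sorry to hear that. Would you like to tell me more?",
    "positive": "That's good to hear. What went well?",
    "neutral": "Thanks for sharing. Could you tell me a bit more?",
}


def sentiment_score(text: str) -> int:
    """Count positive words minus negative words."""
    words = re.findall(r"[a-z']+", text.lower())
    return sum((word in POSITIVE) - (word in NEGATIVE) for word in words)


def classify(text: str) -> str:
    """Map the raw score to a coarse sentiment label."""
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def scripted_reply(text: str) -> str:
    """Pick the pre-written script that matches the detected sentiment."""
    return SCRIPTS[classify(text)]


print(scripted_reply("I am so frustrated and upset"))
```

A word-counting scorer like this ignores negation ("not happy" scores as positive), which is one reason the reply can feel empathetic while resting on a misreading.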

Practical Implementation Guides

Creating an emotionally responsive chatbot involves several critical steps:

- Define Emotional Scenarios: Identify common emotional scenarios your chatbot might encounter and develop appropriate response strategies.

- Utilize Sentiment Analysis Tools: Integrate sentiment analysis APIs to gauge user emotions and adjust responses accordingly.

- Maintain Contextual Relevance: Use context retention techniques to ensure the chatbot's responses remain relevant and coherent.

- Test and Iterate: Continuously test the chatbot's interactions to refine its emotional response capabilities.
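As one possible reading of the context and testing steps above, the sketch below keeps a rolling window of recent sentiment scores so a reply can depend on more than the latest message. `ConversationContext`, `toy_scorer`, the window size, and the reply strings are all hypothetical names and values chosen for illustration.

```python
from collections import deque
from typing import Callable, Deque


class ConversationContext:
    """Keeps a rolling window of recent sentiment scores."""

    def __init__(self, scorer: Callable[[str], int], window: int = 3) -> None:
        self.scorer = scorer
        self.window = window
        self.scores: Deque[int] = deque(maxlen=window)

    def add_turn(self, user_text: str) -> None:
        self.scores.append(self.scorer(user_text))

    def persistently_negative(self) -> bool:
        # True only when the window is full and every recent turn scored below zero.
        return len(self.scores) == self.window and all(s < 0 for s in self.scores)


def reply(context: ConversationContext, user_text: str) -> str:
    """Choose a reply using the latest turn and the recent history."""
    context.add_turn(user_text)
    if context.persistently_negative():
        return "This seems to have been bothering you for a while. Would it help to talk to a person?"
    if context.scores[-1] < 0:
        return "I'm sorry to hear that."
    return "Got it."


def toy_scorer(text: str) -> int:
    # Stand-in for a real sentiment model: counts two hard-coded negative words.
    lowered = text.lower()
    return -sum(word in lowered for word in ("upset", "frustrated"))


ctx = ConversationContext(toy_scorer, window=2)
for message in ["I'm upset", "Still frustrated", "ok thanks"]:
    print(reply(ctx, message))
```

Short scripted conversations like the loop at the end double as regression tests: after changing a scorer or a script, replaying them shows whether the emotional behavior shifted in ways you did not intend.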

Common Pitfalls and Solutions

Developers face several challenges when designing emotionally responsive chatbots. Here are common pitfalls and how to address them:

- Over-reliance on Scripts: Relying too heavily on pre-defined scripts can make interactions feel robotic. Solution: Incorporate machine learning to adapt responses dynamically.

- Misinterpretation of Sentiment: Sentiment analysis isn't foolproof; sarcasm, negation, and mixed feelings are easily misread. Solution: Implement fallback mechanisms and allow for human intervention when necessary.

- Unrealistic Expectations: Users may develop unrealistic expectations of a chatbot's capabilities. Solution: Clearly communicate the chatbot's limitations.
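One way to picture a fallback mechanism is a confidence gate in front of the empathetic scripts. The sketch below assumes the sentiment classifier reports a confidence between 0 and 1; `SentimentResult`, `choose_action`, the action names, and the 0.7 threshold are hypothetical and would need tuning against real transcripts.

```python
from dataclasses import dataclass


@dataclass
class SentimentResult:
    label: str         # e.g. "positive", "negative", "neutral"
    confidence: float  # 0.0 to 1.0, as reported by whatever classifier is used


CONFIDENCE_THRESHOLD = 0.7  # assumed cut-off, not a recommended value


def choose_action(result: SentimentResult, wants_human: bool = False) -> str:
    """Decide what the bot does next, preferring caution over guessed empathy."""
    if wants_human:
        # An explicit request for a person always wins.
        return "handoff_to_human"
    if result.confidence < CONFIDENCE_THRESHOLD:
        # Unsure about the mood: ask instead of assuming.
        return "ask_clarifying_question"
    if result.label == "negative":
        return "empathetic_script"
    return "standard_reply"


print(choose_action(SentimentResult("negative", 0.55)))
print(choose_action(SentimentResult("negative", 0.92)))
```

The design choice is that a low-confidence reading never triggers an empathetic script, since misplaced sympathy can reinforce exactly the unrealistic expectations described above.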

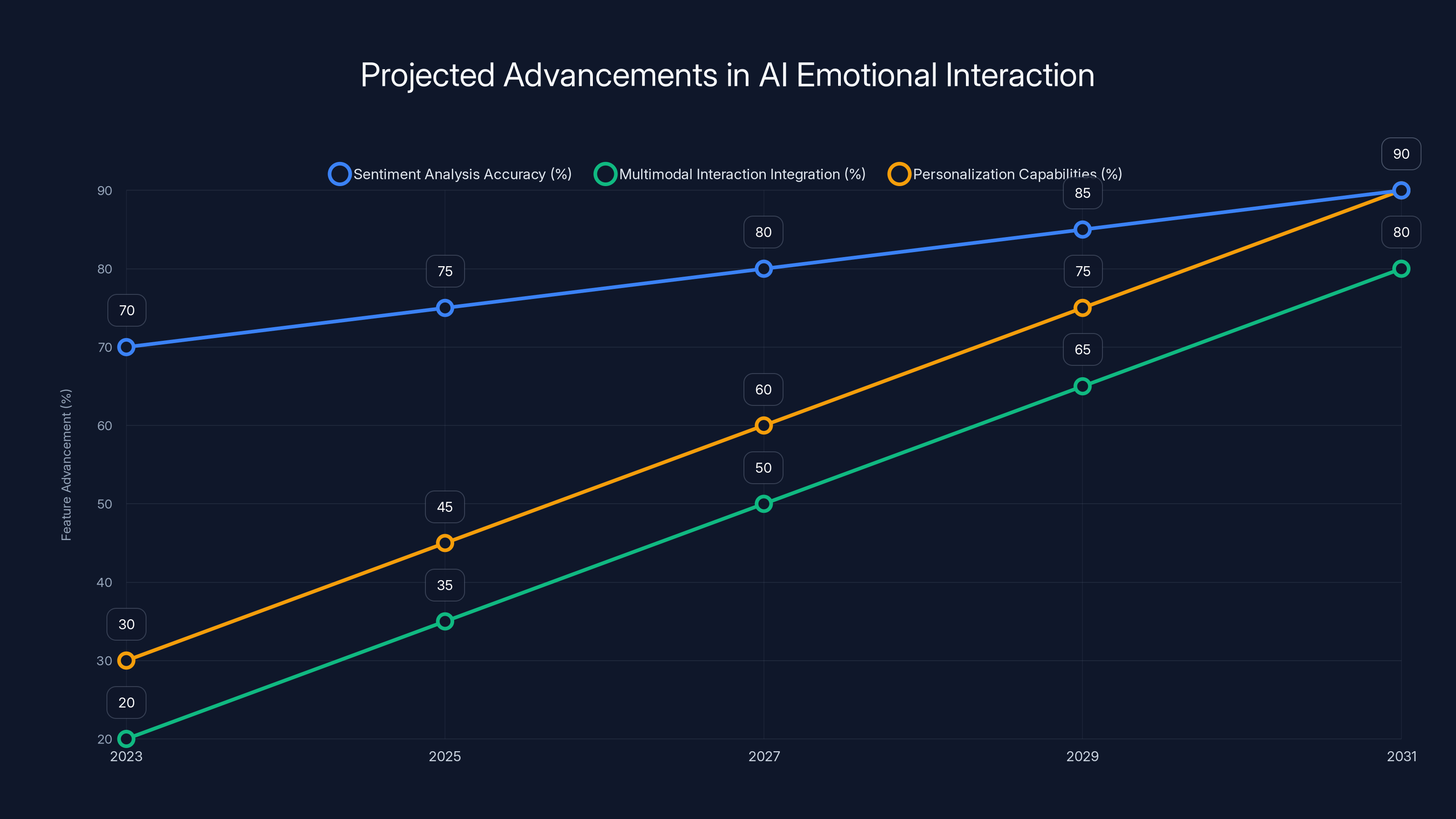

Estimated data shows significant advancements in AI emotional interaction features, with personalization capabilities expected to reach 90% by 2031.

Future Trends in AI Emotional Interaction

The future of AI chatbots may hold more sophisticated emotional interactions, driven by advancements in AI and human-computer interaction research. According to Fortune Business Insights, the multimodal AI market is expected to grow significantly, enhancing the capabilities of chatbots.

- Improved Sentiment Analysis: As algorithms become more advanced, chatbots will better understand nuanced human emotions.

- Multimodal Interaction: Future chatbots might incorporate voice, facial recognition, and other sensory inputs to enhance emotional understanding.

- Personalization: With user consent, chatbots could learn individual user preferences, providing more personalized emotional responses.

Recommendations for Developers

Developers looking to create effective emotionally responsive chatbots should consider the following best practices:

- Understand User Psychology: Recognize how users perceive AI and design interactions that respect this understanding.

- Focus on Transparency: Be clear about what your chatbot can and cannot do to manage user expectations.

- Prioritize User Privacy: Ensure that any data collected for emotional analysis is handled with the utmost care and security.
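For the privacy point, a common precaution is to redact obvious personal identifiers before a message is logged or sent for emotional analysis. The sketch below is a rough illustration: the regular expressions are simplified assumptions that will miss many real-world formats, and `redact` is a hypothetical helper, not part of any particular library.

```python
import re

# Deliberately simple patterns; real deployments need broader coverage.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_PATTERN = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    text = PHONE_PATTERN.sub("[PHONE]", text)
    return text


print(redact("Reach me at jane.doe@example.com or +1 555-123-4567"))
```

Redacting before storage means the sentiment pipeline still sees the emotional content of a message while the stored record carries fewer identifying details.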

Conclusion

The ELIZA effect reminds us of the powerful impact AI chatbots can have on human emotions. While these systems can't truly understand emotions, they can simulate them in ways that feel meaningful to users. By understanding this effect and designing chatbots with empathy in mind, developers can create more effective and user-friendly AI systems.

FAQ

What is the ELIZA effect?

The ELIZA effect is the tendency for humans to attribute human-like emotions and thoughts to AI systems, based on minimal cues. This concept is further explored in a Vocal Media article.

How do AI chatbots simulate emotional responses?

AI chatbots use sentiment analysis, contextual understanding, and pre-defined scripts to simulate emotional responses.

What are the challenges of implementing emotional AI in chatbots?

Challenges include over-reliance on scripts, misinterpretation of sentiment, and managing user expectations.

What are the future trends in AI emotional interaction?

Future trends include improved sentiment analysis, multimodal interaction, and personalized emotional responses.

How can developers create effective emotional chatbots?

By understanding user psychology, focusing on transparency, and prioritizing user privacy.

Can AI chatbots truly understand emotions?

No, AI chatbots cannot truly understand emotions, but they can simulate responses that feel meaningful to users.

Why is understanding the ELIZA effect important?

Understanding the ELIZA effect is crucial for designing chatbots that interact effectively with human emotions and manage user expectations.

How can sentiment analysis be improved?

Sentiment analysis can be improved through more advanced algorithms and the integration of multimodal data.

Key Takeaways

- The ELIZA effect influences how users perceive AI emotional intelligence.

- AI chatbots simulate, but do not truly understand, emotions.

- Sentiment analysis and context are key to emotional responses.

- Developers must manage user expectations effectively.

- Future AI may offer more nuanced emotional interactions.

- User psychology is critical in chatbot design.

- Emotional AI presents unique ethical considerations.
