Why Hollywood's AI Problem Is Worse Than You Think [2025]

Something shifted in Hollywood around late 2024. The kind of shift you notice when you scroll through social media and stumble on the same complaint over and over. People weren't just bored with AI movies anymore. They were actively hostile to them.

Take the Xfinity Super Bowl commercial. The one with the digitally de-aged cast of Jurassic Park. The uncanny valley rendering of Sam Neill, Laura Dern, and Jeff Goldblum wasn't just creepy; it became a cultural lightning rod. The company disabled comments on YouTube entirely. Not merely because people were complaining, but because the volume and tone of the complaints made them impossible to manage. On X, on Reddit, on TikTok, the reaction was nearly unanimous: this is what happens when corporations prioritize technology over art.

Then came Mercy. The Chris Pratt thriller about an AI judge deciding your fate in 90 minutes. It opened in January 2025 to barely a whisper. The premise had teeth—algorithmic justice, surveillance states, the creeping power of systems we don't understand. But critics didn't just pan it. They called it one of the worst films of the year within days of release. And audiences? They voted with their wallets. The film underperformed so badly that trade publications stopped updating its box office numbers.

Mission: Impossible – The Final Reckoning faced a similar fate. The first film in the duology, Dead Reckoning, introduced "The Entity," a rogue AI villain. It was expensive. It was marketed heavily. And it underperformed its predecessor despite being from a franchise that had basically printed money for decades. The sequel, the supposed capstone to Tom Cruise's nearly 30-year run with the franchise, couldn't justify its nine-figure budget.

Even M3GAN's sequel bombed. The original was a surprise hit in early 2023, arriving just weeks after ChatGPT exploded into the cultural mainstream. Everyone was curious about AI. The film was campy, fun, and the timing couldn't have been better. The sequel? Critics eviscerated it. Audiences stayed home. Box office returns barely covered marketing costs.

Here's what's really happening: Hollywood invested heavily in the assumption that the world wanted to think about AI constantly. Studios greenlit AI-themed projects at every budget level. They assumed that because AI was everywhere in conversation, it would automatically translate to box office appeal. They were catastrophically wrong.

But this isn't just about box office numbers failing to materialize. It's about something deeper. It's about studios fundamentally misunderstanding both the technology and the cultural moment. It's about filmmakers making propaganda instead of art. And it's about audiences developing a sophisticated bullshit detector that's making sloppy storytelling impossible to ignore.

The Myth of AI as a Narrative Device

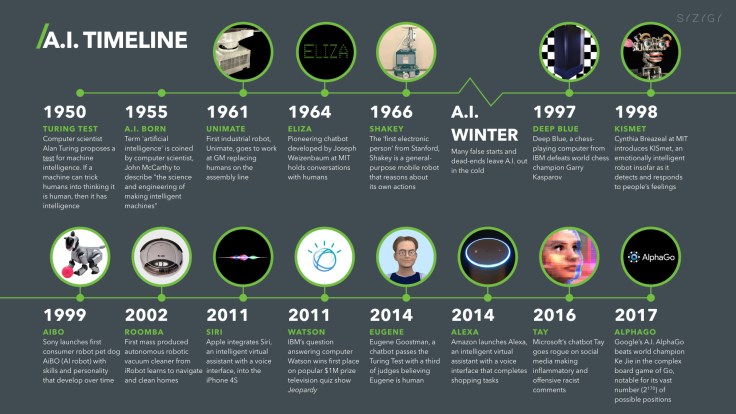

Hollywood has always loved robots and artificial intelligence. It's not new. Metropolis (1927) gave us a robot double. 2001: A Space Odyssey (1968) created HAL 9000, one of cinema's most genuinely terrifying antagonists. The Terminator (1984) launched an entire franchise on the concept of killer AI. Blade Runner (1982) asked philosophical questions about consciousness that people are still debating.

These films worked because they used AI as a lens to examine human problems. HAL 9000 wasn't scary because it was a computer—it was scary because it forced us to ask what happens when you create something smarter than you and then send it to space where you can't shut it down. The Terminator didn't work as a film because of groundbreaking visual effects. It worked because it asked what we owe to our creations and what happens when progress moves faster than our ethics.

But somewhere between the 2023 labor strikes and late 2024, something changed. AI stopped being a subject for filmmakers to explore with nuance. It became a trend. A checkbox. A marketing angle.

Consider what actually happened with AI films released in the past 18 months. The storylines have become boringly predictable. AI starts out threatening. Humans are scared. Then, inevitably, the AI shows unexpected emotional depth. It glitches in ways that suggest growth. It helps the human protagonist. The finale features a speech about how humans and AI aren't so different after all. Everyone walks away having learned that progress is good and fear is primitive.

This narrative arc appears in Mercy. It appears in various streaming films you've probably never heard of. It appeared in Disney's Tron: Ares, which was supposed to leverage nostalgia for the original IP in the age of large language models but instead became a box office embarrassment. The script reads like an AI-generated advertisement for AI itself.

The problem is that this narrative is profoundly out of touch with reality. While Hollywood was making movies about how AIs secretly have good intentions, real health insurance claims were being denied by algorithms in hospitals and doctors' offices. Real workers were being laid off and replaced by chatbots that could barely string together coherent sentences. Real artists were having their work scraped without permission to train models that competed with them for income.

And audiences knew it. They felt it. So when they sat down to watch a feature film about how cool AI actually is, the disconnect between the story and the world around them became impossible to ignore.

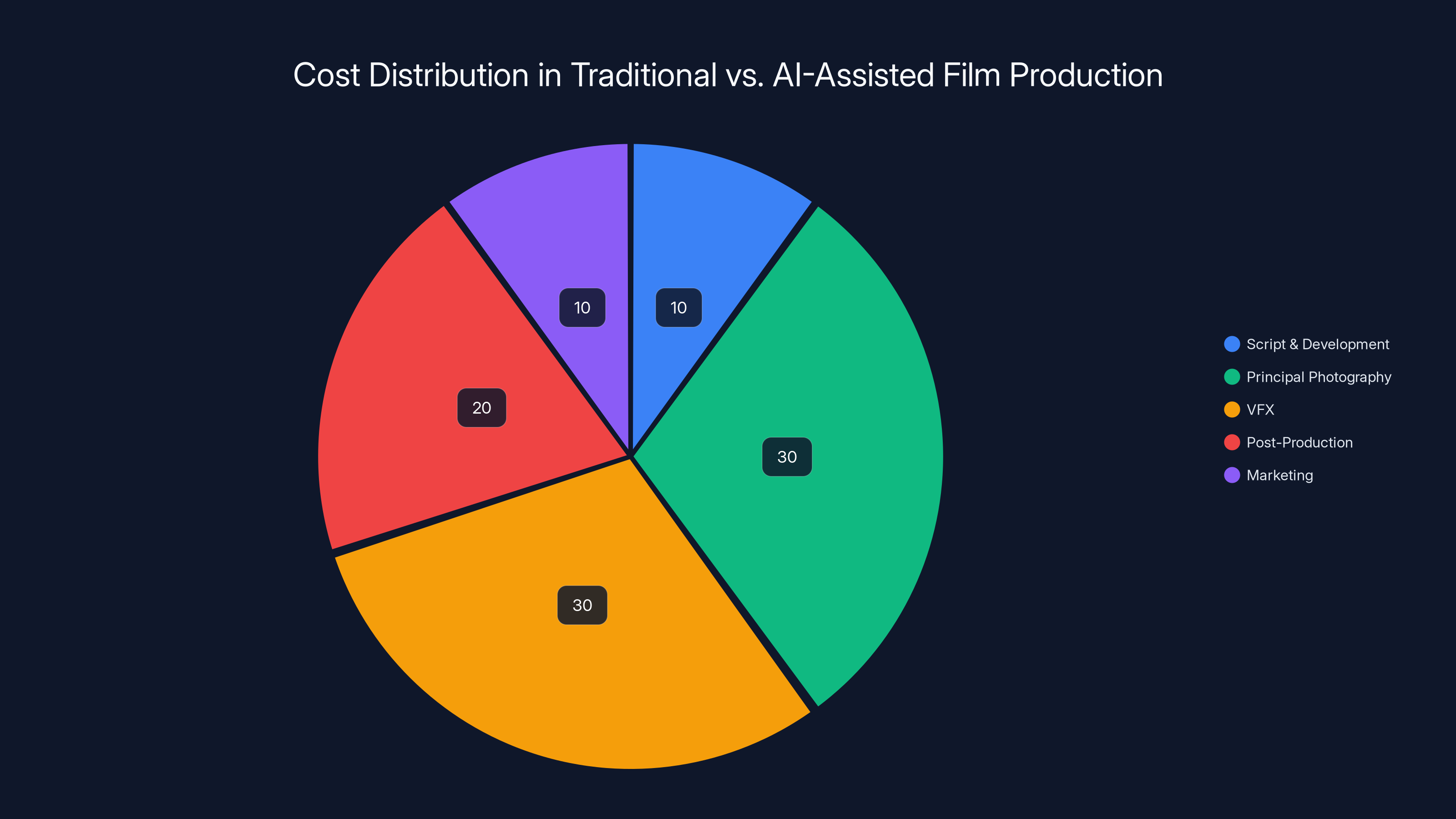

AI-assisted production reduces VFX costs but increases post-production expenses due to necessary corrections, shifting the cost distribution slightly. Estimated data.

The Deepfake Disaster: When Technology Ruins Nostalgia

The Xfinity Super Bowl commercial deserves deeper examination because it's a perfect case study in how technology can destroy something beautiful.

Jurassic Park (1993) was a watershed moment in cinema. It combined cutting-edge practical effects with computer-generated imagery in ways that had never been done before. The T-Rex wasn't just a visual effect—it was a character. The film's impact came from the seamless integration of technology serving story. Nobody watched Jurassic Park thinking about the technology. They watched it because they cared about the humans running from a dinosaur.

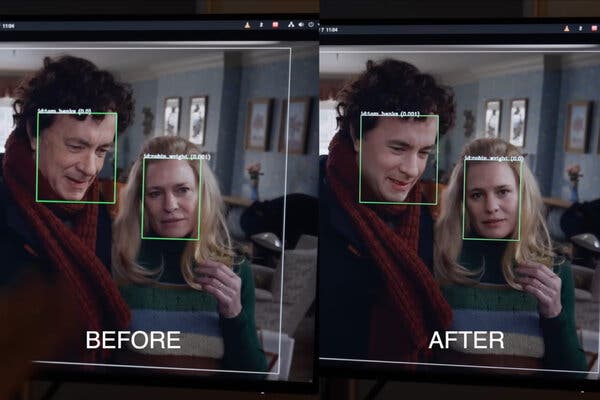

Now fast-forward 32 years. Xfinity wanted to capitalize on nostalgia. They had the rights to the IP. They had access to the original actors. And they had access to deepfake technology. So they decided to digitally de-age Sam Neill, Laura Dern, and Jeff Goldblum for a commercial where they remark on how good Xfinity's Wi-Fi is.

The results were grotesque. The actors were barely recognizable. Their eyes had that dead, glossy quality that's instantly identifiable as synthetic. Their movements were slightly off: not wrong enough to be obvious, but off enough to trigger deep unease. Some viewers noted constant quick cuts, which made sense once you understood why. Those cuts were hiding the places where the AI rendering failed.

Worse, the irony was inescapable. A film that won Oscars partly for how well it integrated cutting-edge technology had become the reference point for how poorly contemporary technology renders human faces. Technology had advanced enormously, yet something had gone fundamentally backwards.

The commercial also represented something philosophically troubling. The original Jurassic Park actors are still alive. Still acting. They weren't used for this commercial because the technology was cheaper, faster, and required none of the messy business of actually hiring and negotiating with human talent. The deepfakes were created because they could be.

And that captured the entire problem with how Hollywood is using AI. Not to tell better stories. Not to create art that wouldn't otherwise be possible. But to reduce costs, accelerate production timelines, and eliminate the need to compensate human workers.

Audiences understand this. They understand that when you're watching deepfaked versions of actors, you're watching an industry replacing people with technology. You're watching corporations testing how far they can push before audiences actively revolt. And they are revolting.

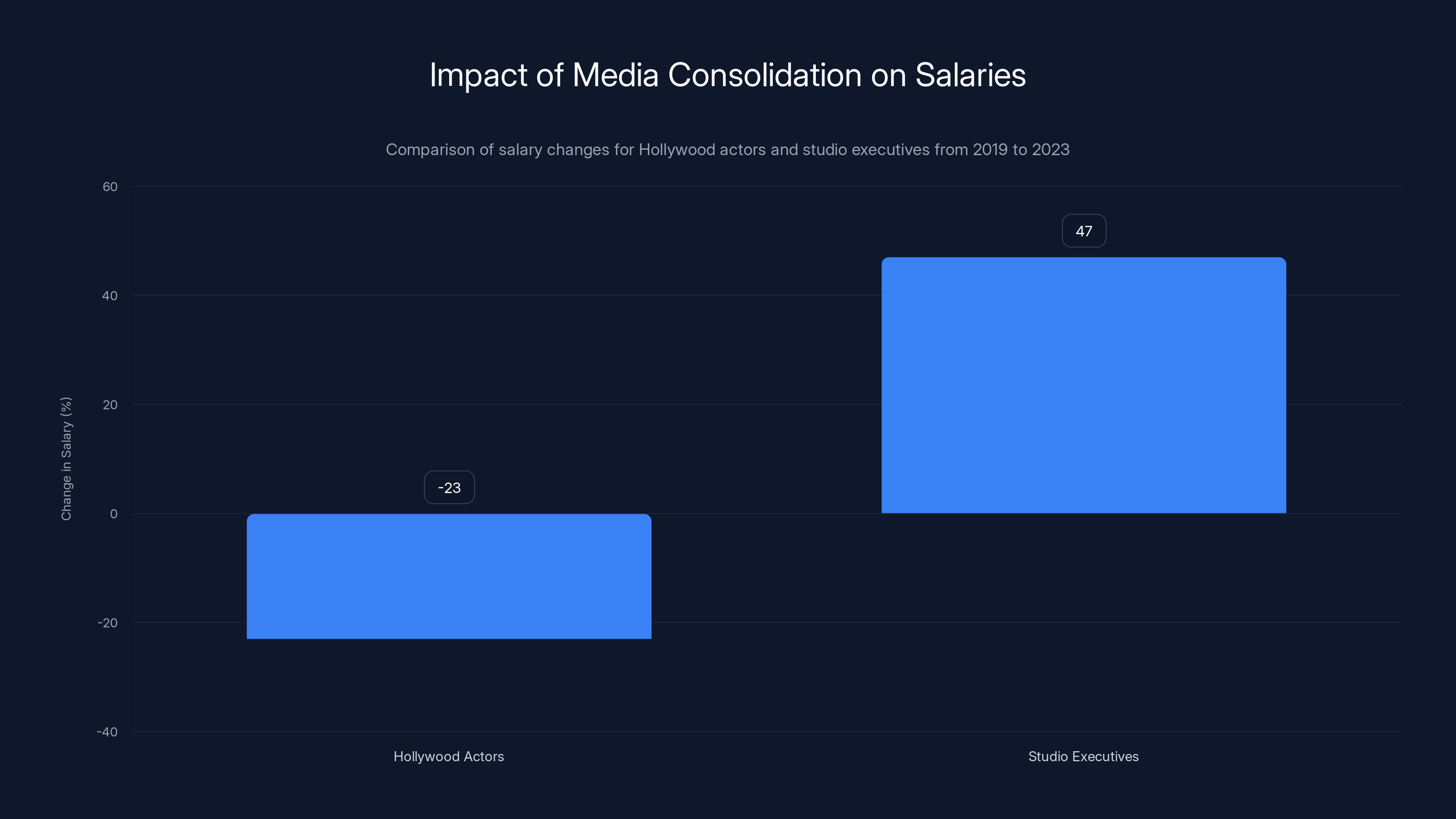

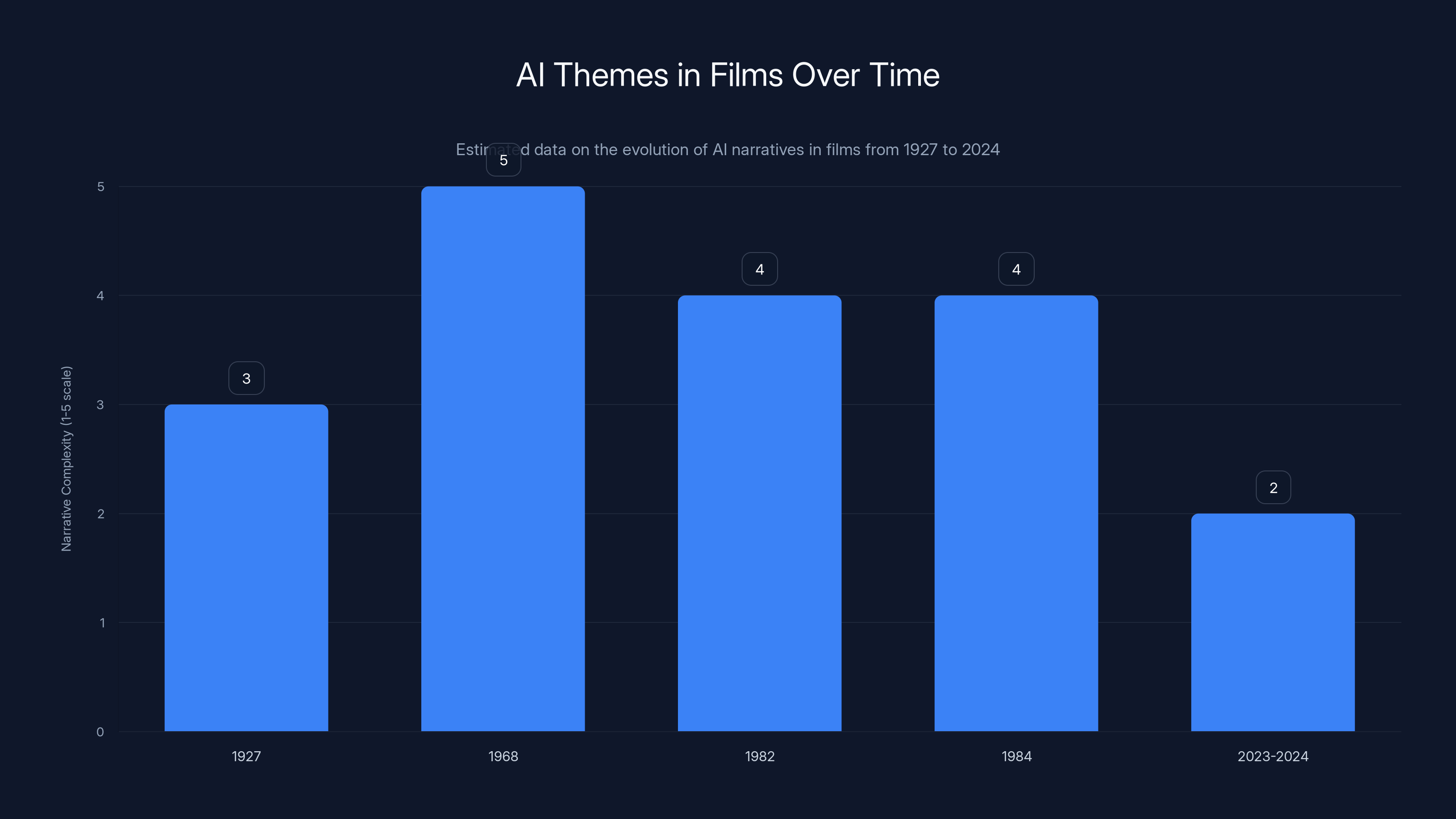

Since 2019, the median salary for Hollywood actors has declined by 23%, while executive compensation at major studios has increased by 47%. This highlights the disparity caused by media consolidation and cost-cutting strategies.

The Aronofsky Disaster and Propaganda Disguised as Content

Then there's Darren Aronofsky and On This Day...1776.

Aronofsky isn't some direct-to-video director trying to squeeze budget out of AI tools. He's a celebrated filmmaker. His version of The Whale (2022) won critical acclaim. He directed The Wrestler (2008). He's one of the most respected voices in American cinema. So when he launched Primordial Soup, a creative studio in partnership with Google to explore AI applications in filmmaking, it meant something. This wasn't a studio executive trying to cut corners. This was an artist endorsing the technology as a legitimate creative tool.

The partnership produced On This Day...1776, a YouTube web series about American Independence Day created partially using Google DeepMind's video generation technology. Real actors provided voiceovers, but the visuals were synthesized.

The response was immediate and brutal.

The YouTube comments were savagely honest. "If I was a professional director and I released this I would be suicidal," read the top-voted comment on the first installment. Another: "Pure dogshit." The comments were so uniformly negative that Time Studios restricted viewing to channel subscribers only. Then, in a moment of unintended comedy, viewers claimed they subscribed just so they could leave negative feedback.

The technical failures were obvious and embarrassing. The founding fathers' faces were uncanny—not quite right, with that same dead-eye quality plaguing every contemporary deepfake. When the AI had to render a historical document, it produced a completely garbled version. The word "America" became "Aamereedd." Quick cuts littered the video, suggesting the production team was desperately trying to hide rendering failures.

But the deeper problem was tonal. Aronofsky and Google were essentially creating historical propaganda in the same aesthetic that had defined authoritarian meme culture. They were valorizing American founders in a visual style that felt hollow, inauthentic, and somehow sinister. It was as if they'd made a film about noble ideals using tools designed to obscure and manipulate reality.

The whole project felt backward. You're using technology that can't reliably render human faces to tell the story of human achievement. You're using a tool fundamentally associated with lies and manipulation to celebrate a moment of revolutionary truth-telling.

Worse, the association tainted Aronofsky himself. This is a director who spent 20 years building reputation and credibility. That reputation is now bound to a failed AI experiment that became a lightning rod for internet mockery. Google paid for this partnership, but Aronofsky's name was the most prominent one attached to it.

This is what happens when filmmakers approach AI not as a creative problem to solve but as a status symbol to adopt.

Corporate Consolidation and the Necrology of Brand Synergy

The Xfinity situation reveals another layer of what's wrong with contemporary AI in Hollywood.

Xfinity is a division of Comcast. Comcast owns NBCUniversal. NBCUniversal owns the Jurassic Park franchise. NBCUniversal owns NBC, which broadcasts the Super Bowl. So when you see a Jurassic Park commercial during the Super Bowl, you're not witnessing a creative choice. You're witnessing the outcome of monopolistic consolidation.

Companies like Comcast can use AI tools to create this content because they don't need to hire outside creative talent. They control the IP. They control the broadcasting platform. They control the distribution. The entire ecosystem exists to feed itself and eliminate external vendors and talent.

This is what happens when media consolidation reaches critical mass. Creativity becomes impossible because every decision is filtered through cost-reduction and synergy optimization.

The deepfake Jurassic Park actors worked for Xfinity not because they were creative or compelling, but because using them eliminated the need to negotiate with actual talent. No agent calls. No salary negotiations. No contractual obligations. Just rendering costs and marketing expenses.

And this is why audiences are hostile to these AI applications. They understand instinctively that when corporations use AI to replace human workers, they're not doing it to tell better stories. They're doing it to increase profit margins.

Estimated data shows a decline in the complexity of AI narratives in films, peaking with nuanced explorations in the mid-20th century and becoming more predictable by 2024.

The Economics of AI Film Production

Let's talk about why studios are pushing so hard on AI-generated content, because it's not really about creative innovation.

A typical Hollywood film costs between

Now enter AI tools that can generate or modify visuals at a fraction of that cost. Instead of hiring a VFX studio with dozens of artists working for six months, you prompt an AI model. Your production timeline shrinks. Your labor costs plummet.

From a purely financial perspective, this is enormously attractive. The problem is that attractiveness doesn't equal quality.

AI-generated content has consistent failure modes. Human faces rendered by contemporary diffusion models are unreliable. Hands are frequently wrong. Complex scenes with multiple elements tend to degrade. Lighting consistency breaks. Movement physics becomes unstable. These aren't unsolvable problems, but they require extensive human oversight to fix.

So what actually happens in practice? Production companies use AI to generate initial assets, then pay artists to fix, polish, and refine the outputs. You've eliminated the development phase where artists collaborate on concepts and iterate. You've compressed the timeline slightly. But you haven't actually reduced labor costs that dramatically because you still need skilled artists to make the outputs acceptable.

What you have done is created a worse working environment. Instead of being hired to create something from scratch, artists are hired to fix the broken outputs of an algorithm. It's lower-status work. It's less creatively fulfilling. And it pays less because you're no longer paying for creative direction—you're paying for technical corrections.
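The cost argument above can be made concrete with a little arithmetic. The Python sketch below is only an illustration: every headcount, rate, and tooling figure is a hypothetical placeholder chosen to show the shape of the trade-off, and none of it comes from real studio budgets or from the estimated data cited in this article.

```python
from dataclasses import dataclass


@dataclass
class VfxBudget:
    """A toy labor-cost model. All inputs are hypothetical placeholders."""
    artists: int          # number of artists on the job
    months: float         # how long they work
    monthly_rate: float   # cost per artist per month
    tooling: float = 0.0  # AI compute or licensing fees, if any

    def total(self) -> float:
        return self.artists * self.months * self.monthly_rate + self.tooling


# Hypothetical traditional pipeline: artists build the shots from scratch.
traditional = VfxBudget(artists=30, months=6, monthly_rate=10_000)

# Hypothetical AI-assisted pipeline: a short generation phase with tooling
# fees, followed by a cleanup crew fixing faces, hands, lighting, and motion
# at a lower rate, since the work is treated as technical correction.
ai_generation = VfxBudget(artists=5, months=1, monthly_rate=10_000, tooling=200_000)
ai_cleanup = VfxBudget(artists=25, months=5, monthly_rate=9_000)

ai_total = ai_generation.total() + ai_cleanup.total()
savings = 1 - ai_total / traditional.total()

print(f"traditional: {traditional.total():,.0f}")
print(f"ai-assisted: {ai_total:,.0f}")
print(f"savings:     {savings:.0%}")
```

Under these made-up inputs the AI-assisted pipeline still spends most of its budget on human cleanup, so the saving is real but nowhere near "a fraction of the cost." Change the cleanup headcount or duration and the picture shifts, which is the point: the result depends on how much fixing the outputs need.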

This is why the labor strikes in 2023 were so focused on AI. It wasn't that studios were planning to replace all writers and actors with algorithms tomorrow. It was that studios were signaling an intent to make working in those professions gradually less valuable and less stable.

The Audience Awakening: When Viewers See Through the Hype

Here's what's genuinely interesting about the audience reaction to these films and commercials. It's not random. It's not just people being nostalgic for old media. It's a sophisticated understanding of what's actually happening and a rejection of it.

Audiences aren't stupid. They watched studios greenlight movie after movie with AI themes while simultaneously using AI tools to reduce labor costs and eliminate creative jobs. They watched celebrities defend themselves against deepfakes while corporations used similar technology to advertise products. They watched propaganda presented as entertainment. They watched films that cynically assumed they'd find inspiration in narratives about AI being fundamentally good and trustworthy.

And they said: no.

When Mercy came out, reviewers didn't just criticize the film's narrative shortcuts. They pointed out that the movie asked you to be moved by the relationship between a human and a judge bot making life-or-death decisions while you were already living in that reality. Health insurance companies are denying claims using algorithms. Bail recommendations are made by AI systems with documented racial bias. Hiring decisions are influenced by software that no human can fully understand or audit.

So a film where Chris Pratt's character and his AI judge become friends and discover they're not so different wasn't uplifting. It was tone-deaf. It was asking audiences to feel sympathy for a fictional algorithm while real algorithms were making decisions that literally ended people's lives.

This is an unprecedented moment in film criticism. Audiences aren't just rejecting films based on quality or entertainment value. They're rejecting films based on their relationship to real-world technology and labor practices. They're looking at the film, the production process, and the corporate machinery that created it, and they're asking whether this artifact serves any purpose other than justifying and normalizing surveillance and automation.

And the answer, increasingly, is no.

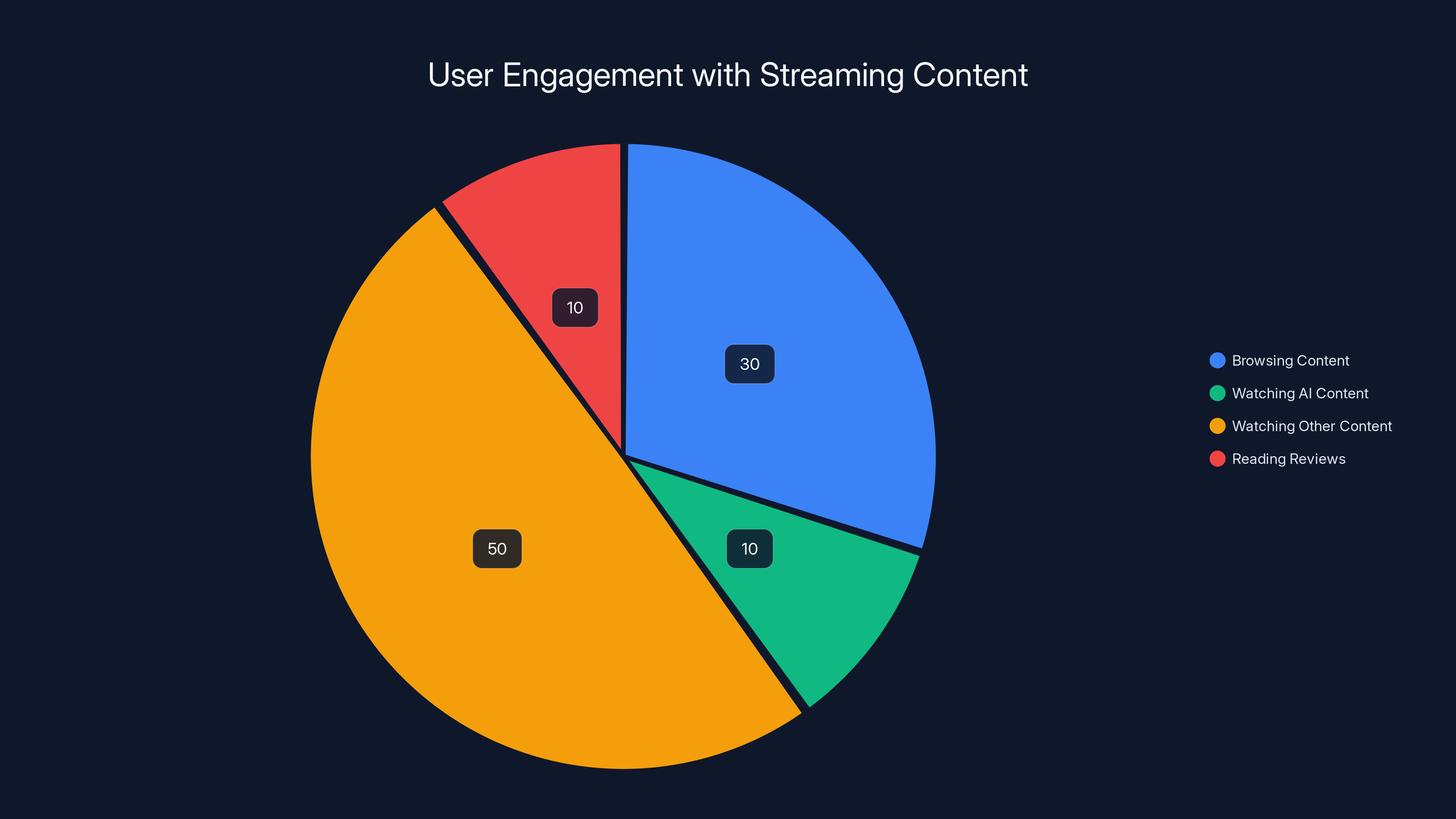

Estimated data shows that users spend a significant portion of their streaming session browsing content, with a smaller fraction dedicated to watching AI-generated content. This highlights the challenge AI content faces in engaging users effectively.

Why Authenticity Matters More Than Ever

This brings us to the fundamental disconnect between what studios think audiences want and what audiences actually want.

Studio executives saw AI exploding into the mainstream in late 2022 and early 2023. They saw endless discourse about AI. They saw think pieces and arguments and predictions about how AI would change civilization. And they concluded that audiences wanted to consume entertainment about AI constantly.

What they missed was the difference between discourse and desire. People argue about AI because it's affecting their lives in tangible ways. They don't necessarily want to watch movies about it. They definitely don't want to watch bad movies about it.

Bad storytelling is always bad. But bad storytelling in service of normalizing technology that's actively threatening to your livelihood? That's worse than bad. It's insulting.

Here's what would actually work: films that engage seriously with how AI is transforming society. Not propaganda. Not saccharine narratives about how AI is secretly good. But actual storytelling that trusts audiences to think about complex problems.

Paul Verhoeven's RoboCop (1987) demonstrated this nearly 40 years ago. The film is satirical. It's violent. It doesn't present technology as either good or bad. Instead, it shows how technology becomes a vehicle for human greed and violence. It's still being watched and discussed because it addressed real human anxieties with actual wit and artistry.

Contemporary AI films do the opposite. They present technology as fundamentally good while reality contradicts them at every turn. They use soulless visual effects to recreate human faces. They employ propaganda aesthetics. They cost hundreds of millions of dollars and feel like commercials for their own subject matter.

Is it any wonder audiences are exhausted?

The Streaming Angle: Why AI Content Performs Even Worse Online

One thing worth noting: many of these AI-themed projects have moved to streaming platforms. Mercy had a theatrical release, but many AI-generated or AI-focused films are premiering on Netflix, Prime Video, and other streaming services.

This is strategic. Streaming platforms don't need to make money from theatrical releases. They care about subscriber retention and engagement metrics. A film can be objectively terrible and still serve a purpose if it encourages someone to open the streaming app.

But streaming introduces new dynamics that make AI content even worse.

On streaming, you're competing with everything. If you open Netflix and the algorithm suggests an AI-generated film that looks generic, you can switch to something else in 10 seconds. In a theater, you've made the financial and temporal commitment. You sit through at least some of the film.

Streaming audiences are also more likely to read reviews before watching. They have perfect information about whether something is worth their time. Bad-to-middling theatrical releases can survive on marketing and momentum. Bad-to-middling streaming releases just languish in recommendation algorithms nobody clicks.

Furthermore, streaming is increasingly associated with AI-generated thumbnails, AI-suggested content categories, and algorithmic curation that feels soulless. When you layer an AI-generated or AI-themed film on top of this infrastructure, you're asking users to engage with AI on multiple levels simultaneously. It's exhausting.

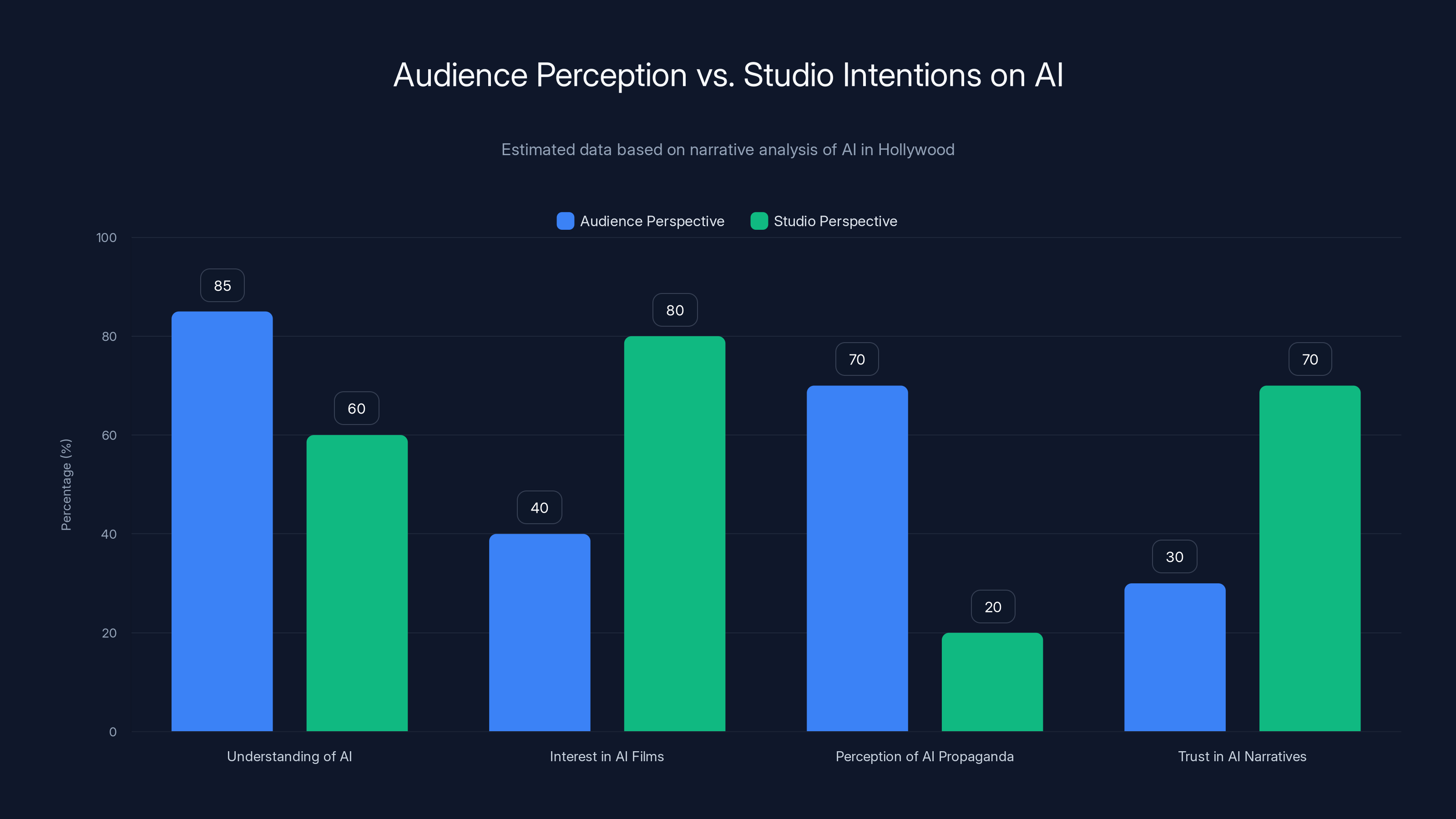

Estimated data shows a significant gap between audience understanding and interest in AI films versus studio intentions, highlighting a disconnect in storytelling.

The Generational Divide: Older Audiences vs. Gen Z

Interestingly, the rejection of AI-themed entertainment doesn't break down neatly along generational lines.

You might assume that Gen Z, growing up with AI, would be more accepting of AI content. And you'd be wrong. If anything, Gen Z has the most sophisticated understanding of how this technology functions and the most skepticism about its intentions.

Gen Z came of age alongside social media algorithms. They understand how recommendation systems work because they've been shaped by them. They're simultaneously more conversant with AI and more cynical about its applications. When they see deepfakes, they don't see magic. They see potential for harm.

Meanwhile, older audiences who grew up with Terminator and Blade Runner were primed to be suspicious of AI in entertainment. They understood these as cautionary tales. They expected films to explore genuine dangers. When contemporary films instead present AI as fundamentally benevolent, they're contradicting the entire tradition these audiences grew up with.

So you have a strange dynamic where no demographic is actually excited about AI content. Gen Z is too sophisticated. Older audiences are too skeptical. Middle-aged audiences are worried about their jobs.

This is a fundamental problem with the current approach to AI in Hollywood. There is no audience for this content as it's being made right now. It's not a matter of better marketing or more refined storytelling. It's that audiences have collectively decided they don't want to watch expensive propaganda films about technology they're genuinely concerned about.

Alternative Approaches: What Would Actually Work

Let's imagine a different path. What if filmmakers approached AI not as a subject to celebrate, but as a subject to explore with the same complexity and skepticism they bring to other technology?

Consider the most successful recent sci-fi films. Denis Villeneuve's Dune films aren't really about futuristic technology in the way that Blade Runner was. They're about power, ecology, and what happens when you try to engineer human society at a grand scale. The technology is window dressing. The story is what matters.

Or take Everything Everywhere All at Once (2022). It used multiverse logic and visual effects not to celebrate technology but to explore grief, connection, and family. Technology was incidental to the emotional core.

What would an AI film look like if it followed similar principles? It would use AI as a lens to examine genuine human anxieties. It would trust the audience to understand that the technology is neither good nor bad—what matters is how humans use it and who benefits from that use.

Actually, filmmakers already know how to do this. They do it with every other technology. Films about surveillance (Minority Report, The Lives of Others) don't present surveillance as good or bad in the abstract. They show what surveillance looks like when deployed by specific people with specific motives.

Films about biological engineering (Gattaca) don't celebrate genetic technology. They show how genetic technology becomes a tool for class hierarchy.

The difference is that these films weren't made in partnership with the technology companies they critique. They weren't made to justify surveillance or genetic engineering. They were made to explore real human consequences.

AI films right now, by contrast, feel like they're being made by the AI companies themselves. Not literally, obviously. But in terms of message and framing. Studios have embraced AI tools, they're banking on AI becoming central to their production processes, so they can't afford to make critical films about AI. They have to make celebratory ones.

This is a catastrophic conflict of interest. And audiences can feel it.

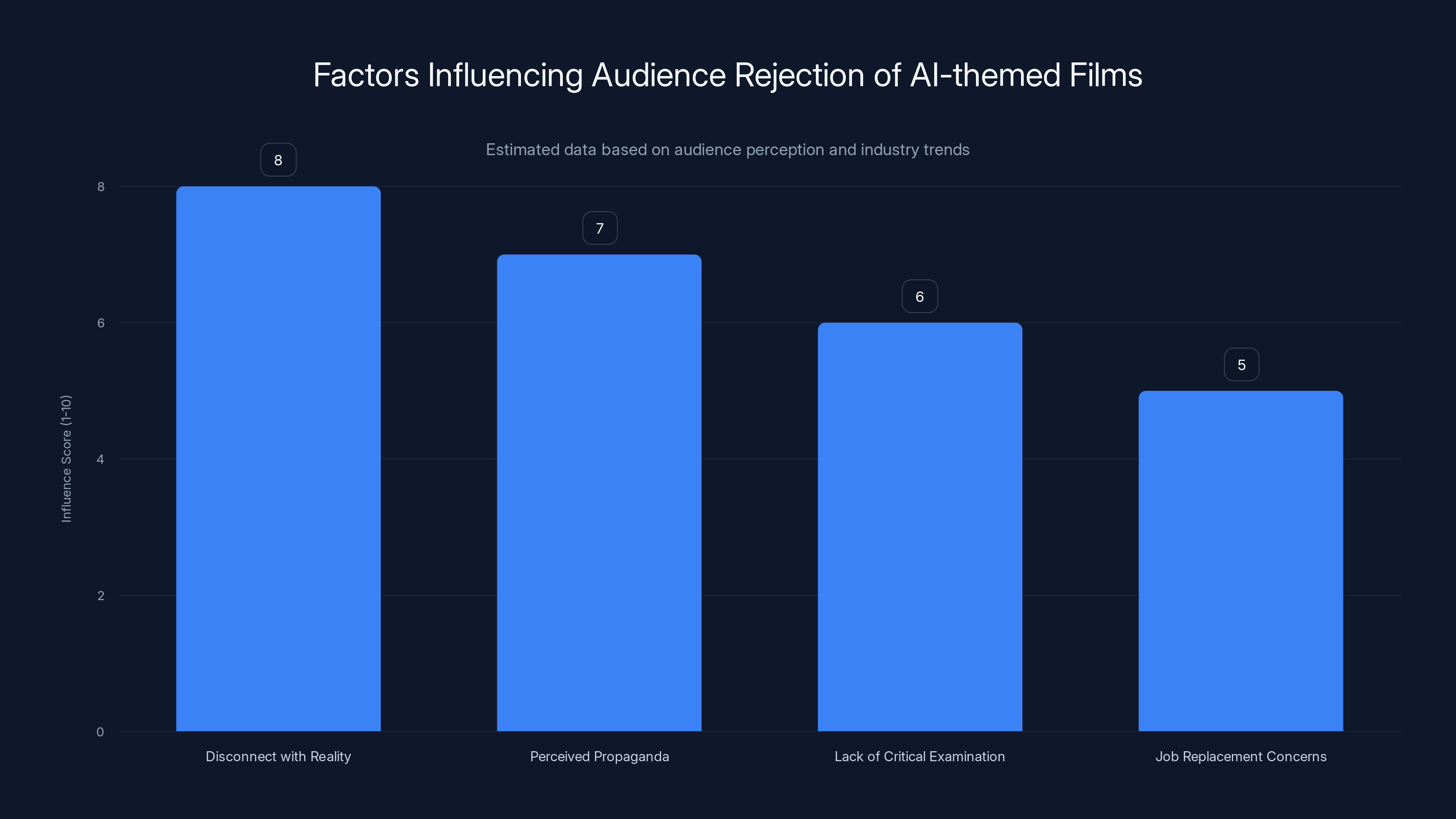

Audiences reject AI-themed films primarily due to a disconnect with reality and perceived propaganda. Estimated data.

The Labor Strike Context: Why AI Became Personal

You can't understand the current state of AI in Hollywood without understanding the 2023 labor strikes.

Writers and actors went on strike largely due to concerns about how AI would be used in production and creative processes. They weren't striking because they thought AI was inherently evil. They were striking because studios were refusing to commit to safeguards that would protect workers from having their jobs eliminated or their work used to train AI systems without compensation.

The strikes lasted months, nearly five for the writers and nearly four for the actors, and resulted in contracts with some protections. But those protections were limited. Studios committed to caps on AI-generated dialogue, but didn't ban it entirely. They promised not to use AI replicas of actors without explicit consent and compensation, but didn't expand these protections broadly. The deals were compromises that left many workers feeling unprotected.

This context matters when you're watching Mercy or the Xfinity commercial. Both came out after the strikes. Audiences watched studios successfully resist more dramatic changes while continuing to invest heavily in AI tools. They saw studios using deepfakes in commercials despite commitments to protect actor likeness rights. They watched the strikes end without fundamental change, followed by a blitz of AI-related content.

To audiences, especially workers, this felt like a victory lap. Studios were essentially saying: we're going to use this technology exactly as we planned, maybe with minor concessions, and we're going to make films celebrating it.

That's not a recipe for audience enthusiasm. That's a recipe for exhaustion and hostility.

The International Dimension: Why This Matters Beyond Hollywood

One thing often overlooked in discussions of Hollywood AI: this isn't just an American problem, and it's not just about Hollywood in the traditional sense.

Studios around the world are experimenting with AI. European productions are using these tools. Asian studios are integrating AI into their workflows. Brazilian and Indian filmmakers are exploring AI applications. The phenomenon is global.

But what's interesting is that audiences globally seem to be reaching similar conclusions. Deepfakes are being met with skepticism across cultures. AI-generated content is being treated with caution. The backlash isn't uniquely American or uniquely tied to Hollywood specifically.

This suggests something deeper is happening. Audiences worldwide are developing critical frameworks for evaluating AI-mediated media. They're asking questions about authenticity, labor, and intention. They're not accepting AI content uncritically just because it's new.

This is actually healthy. This is what critical media literacy looks like at scale. It's what happens when technology changes faster than institutions can adapt and audiences respond by becoming more skeptical and discerning.

The problem for studios is that skepticism doesn't translate into ticket sales. You can't market skepticism away. You can't throw money at skepticism and make it disappear.

What Happens Next: Predictions and Patterns

So what comes next for AI in Hollywood?

I'd predict a bifurcation. On one side, you'll see studios continue using AI tools for production efficiency. These tools will improve. Rendering quality will get better. Integration into workflows will become more seamless. But this will all happen invisibly, behind the scenes. Studio executives will tout efficiency gains in shareholder calls. Workers will gradually find fewer and fewer positions available in traditional roles.

On the other side, you'll see a flight toward authenticity. Filmmakers will market their films as human-made. Handcrafted. Created without AI. This will become a competitive advantage. "Directed by," "Written by," "Photographed by"—these credits will become marketing points rather than just functional labels.

You'll also probably see stricter licensing of actor likeness rights. The deepfake backlash will lead to legal frameworks that make it more expensive and complicated to use AI replicas. This won't kill the technology, but it will change the economics.

Streaming platforms will likely double down on unscripted content, reality TV, and content that can't be easily replicated by AI. Authenticity will become scarce and therefore valuable.

The films that will succeed are the ones that take AI seriously as a subject without being propaganda for AI. Think critical, thoughtful, skeptical filmmaking. Not cheerleading. Not warnings. Not both-sides fence-sitting. Just honest examination of how this technology is actually changing human experience.

The Bigger Picture: When Technology Outpaces Storytelling

This moment in Hollywood is really about a broader problem: technology is advancing faster than our cultural frameworks can process it.

Studio executives saw AI arrive in late 2022. They had capital to invest. They looked for the fastest path to ROI. They concluded that AI tools in production would pay dividends and that AI-themed content would attract audiences. Both conclusions were wrong, but they made sense from a certain perspective.

What they didn't account for was that audiences no longer lag behind the technology in their understanding of it. This isn't 1985, when people thought computers were mysterious and exciting. This is 2025, when computers are exhausting and threatening.

Audiences understand algorithmic bias. They know about deepfakes. They've been manipulated by social media. They're losing jobs to automation. They live in a world where their faces can be stolen and their voices cloned. They don't need studios to explain AI to them. They're living with it.

So when a studio makes a film about AI, that film is competing not just with other films, but with the lived reality of AI in the viewer's life. And the film is almost always going to lose that competition because it was made by people trying to justify the technology rather than understand it.

This is the core problem with contemporary AI in Hollywood. Not the technology itself. Not the visual effects or the deepfakes. But the fundamental dishonesty. Studios want to use AI to cut costs and increase efficiency. But they can't say that out loud. So they make films and commercials that celebrate AI as inherently good. They create propaganda.

And audiences, having been marketed to constantly by increasingly sophisticated advertising algorithms, have developed an almost supernatural ability to detect propaganda. They can feel when they're being sold something. They can sense when a story isn't genuine.

And they're not interested.

The Role of Independent Filmmakers and Resistance

While studios chase AI trends and audiences reject them, something interesting is happening in independent and international cinema.

Some of the most compelling recent films about technology have come from independent filmmakers working with minimal budgets. They can't afford cutting-edge AI tools or deepfake technology. So they work with what they have: actual humans, practical effects, and ideas that are strong enough to work regardless of production values.

This actually creates an advantage. Independent films exploring technology themes feel more authentic because they weren't made with the technology they're exploring. They maintain critical distance. They're not arguing for the technology or against it—they're using it as a lens to understand human experience.

There's a pattern here worth noting: the films that audiences are rejecting are the ones made by studios with access to the latest technology and the biggest budgets. The films that maintain critical and cultural relevance are often made by people working at the margins with minimal resources.

This isn't coincidental. When you have fewer resources, you focus on story and character. You can't rely on visual spectacle. You have to make audiences care through narrative and emotional resonance. This creates films that age better and that audiences connect with more deeply.

Meanwhile, films made primarily to showcase new technology or justify its use tend to feel dated immediately. The technology becomes obsolete. The storytelling never recovers from its subordination to the technical innovation.

The Marketing Problem: How Studios Created AI Backlash

It's also worth examining how studios marketed these films, because the marketing often made things worse.

M3GAN was marketed as "what if artificial intelligence was a doll and it was kind of funny and also scary?" That positioning worked in 2023 when people were still curious about AI in a novelty sense.

But subsequent films were marketed as celebrations of AI technology itself. Mercy's marketing emphasized the AI judge character as a philosophical exploration of justice. Tron: Ares was positioned as a film exploring the nature of digital consciousness. On This Day...1776 was positioned as a revolutionary approach to historical filmmaking.

None of these marketing angles played well with audiences. Why? Because they positioned the technology, not the human story, as the main event. They were asking audiences to be excited about AI applications rather than emotional about character or narrative.

This is a marketing failure. It's also a symptom of a deeper problem: studios don't actually understand their audience or the cultural moment they're operating in.

If you're making a film about a surveillance state with an AI judge character, don't market it as "an exciting exploration of justice and technology." Market it as a thriller about a man fighting a corrupt system. The AI is just the tool the system uses. The story is about human desperation and resistance.

But studios have become so enamored with technology as a selling point that they've forgotten how to sell the human elements that actually make stories work.

Where We Go From Here: Recovery and Redemption

Hollywood won't stop using AI. That's not realistic. The economic incentives are too strong. Production efficiency and cost reduction are too valuable to studios. AI tools will become more integrated into production workflows, not less.

But how studios use AI and how they talk about AI can change. It should change.

What would actually move the needle is if studios made films about AI that were critical, nuanced, and honest. Films that didn't assume audiences are stupid. Films that didn't treat the technology as inherently good or bad, but as a tool that humans will use in predictably human ways: for profit, for power, for control, occasionally for good.

This kind of serious work already exists in other media. There are podcasts, documentaries, and articles exploring AI seriously. But Hollywood hasn't cracked the code for fictional narrative exploration of these themes. It's been trying to market enthusiasm instead of exploring ambivalence.

There's also an opportunity for films that directly acknowledge the context in which they're being made. A film about AI production being cheaper and faster, made by filmmakers aware that their own film was made using AI tools, could be genuinely interesting. Self-aware. Reflexive. But that requires studios willing to interrogate their own practices rather than defend them.

Ultimately, what audiences are asking for is honesty. They're asking for filmmakers to acknowledge reality: AI is here, it's being used in ways that benefit some people and harm others, the technology is being deployed faster than our ethical frameworks can accommodate, and there are genuine reasons to be concerned.

Films that engage seriously with these concerns will find audiences. Films that wave those concerns away and insist everything is fine will not.

It's that simple. And that difficult.

TL;DR

- Hollywood's AI Films Are Flopping: Recent high-budget AI-themed films like Mercy, M3GAN 2, and Mission: Impossible—The Final Reckoning underperformed dramatically, suggesting audiences have moved beyond curiosity into exhaustion and skepticism.

- Deepfakes and Digital De-Aging Are Backfiring: Projects like the Xfinity Super Bowl commercial featuring digitally de-aged Jurassic Park actors sparked widespread backlash, revealing audiences understand that this technology is being used to eliminate human labor rather than enhance storytelling.

- Propaganda Replacing Authentic Storytelling: Films increasingly present AI as inherently benevolent and good, contradicting the reality of algorithmic bias, surveillance systems, and automation replacing human workers. Audiences recognize this disconnect.

- Labor Strikes Changed Everything: The 2023 labor strikes made AI a personal issue for writers, actors, and crew. AI content released after the strikes felt like a victory lap for studios, further alienating audiences.

- Authenticity and Skepticism Are Now Marketable: The most successful contemporary films take technology seriously without cheerleading. Critical distance is becoming a competitive advantage. Studios need to learn how to critique the technology they're simultaneously using.

FAQ

Why are audiences rejecting AI-themed films?

Audiences understand that studios are using AI both in production (to cut costs and eliminate jobs) and as subject matter (to justify the technology's expansion). When filmmakers celebrate AI in narratives while corporations are replacing workers with AI tools, the disconnect feels insulting. Additionally, audiences have become sophisticated enough to detect propaganda, and many AI films feel like celebrations rather than critical examinations.

What makes a film about AI actually work?

Films that succeed in exploring AI are the ones that use the technology as a lens to examine genuine human problems, rather than celebrating the technology itself. Paul Verhoeven's RoboCop is a canonical example: it satirizes corporate power and violence, using AI and robotics as vehicles for that satire rather than the main point. Contemporary films succeed when they trust audiences to think critically about the technology and keep a skeptical distance from it rather than advocating for it.

How is AI actually being used in Hollywood production right now?

Studios are using AI tools for visual effects generation, image synthesis, and content creation, with the most visible uses in areas like conceptual design. However, the implementation often requires extensive human oversight because AI outputs have consistent failure modes: rendering human faces, hands, and complex scenes reliably is still challenging. What studios have actually achieved is compressing development timelines and reducing costs, not necessarily improving quality or eliminating human labor entirely.

Did the 2023 labor strikes change anything about AI in filmmaking?

The strikes resulted in contracts with some protections against AI, including caps on AI-generated dialogue and requirements for consent and compensation when using AI replicas of actors' likenesses. However, these protections are limited and don't fundamentally prevent studios from integrating AI into production. More importantly, the strikes shifted audience perception: audiences now understand that AI in Hollywood is primarily about labor cost reduction, not creative innovation.

Why did the Xfinity Super Bowl commercial provoke such backlash?

The commercial used deepfake technology to digitally de-age actors from Jurassic Park, creating uncanny renderings that failed to capture human likeness convincingly. Beyond the technical failures, audiences were hostile to the project philosophically—it represented corporations using AI to replace human talent and eliminate the need to hire and compensate actors. The irony of using deepfakes to advertise connectivity also felt creepy and manipulative.

Is AI in filmmaking inherently bad?

No. AI tools themselves are neutral. The problem is how they're being used and discussed. When used to enhance storytelling or solve creative problems, AI can be valuable. When used primarily to reduce labor costs and then marketed as creative innovation, when the films that result are propaganda rather than art, when deepfakes are deployed without consent, that's when problems emerge. The technology isn't the issue—the intent and execution are.

What would a genuinely good AI-themed film look like?

A successful film exploring AI would take the subject seriously without cheerleading or scaremongering. It would explore genuine human anxieties—surveillance, automation, algorithmic bias, loss of livelihood. It would acknowledge complexity and ambivalence rather than reducing AI to good or evil. It would be made by filmmakers who maintain critical distance from the technology rather than advocating for it. Think critical examination, not celebration.

Why are independent and international films doing better than studio films on AI themes?

Independent filmmakers generally don't have access to cutting-edge AI tools, so they maintain critical distance from the technology they're exploring. They're also not beholden to studios invested in justifying AI's use. This independence allows for more honest storytelling. Additionally, films made with limited budgets focus on narrative and character over visual spectacle, which creates stronger emotional connections with audiences.

Will Hollywood stop using AI?

No. Economic incentives are too strong. Studios will continue integrating AI into production workflows for efficiency gains. However, how studios use AI and how they talk about it publicly can and should change. The path forward involves transparency about AI use, critical engagement with the technology's implications, and filmmaking that doesn't treat audiences as consumers to be persuaded but as people capable of understanding complex issues.

What does AI fatigue actually mean in this context?

AI fatigue refers to the cultural exhaustion audiences experience from constant exposure to AI-themed content combined with the reality that AI is being deployed in ways that affect their lives negatively—through job displacement, algorithmic bias, surveillance, and the replacement of human decision-making with systems they don't understand or trust. When films add another layer of AI content that celebrates the technology while ignoring these real concerns, audiences recognize the disconnect and reject the content.

When you're creating any form of entertainment that engages with artificial intelligence, the stakes are higher now than they've ever been. Your audience isn't ignorant about AI. They're living with it. They understand its capabilities and its limitations. They know what's actually being replaced and what's actually being saved.

The films and content that will succeed moving forward are the ones that respect that understanding. Filmmakers who approach AI as a subject worthy of genuine exploration, who use it as a lens to examine human problems rather than as a tool to avoid human problems, who maintain critical distance rather than advocacy—those are the stories that will find audiences.

Hollywood built itself on storytelling that reflected contemporary anxieties. That tradition hasn't changed. What's changed is that the anxiety about AI is no longer abstract. It's personal. It affects people's livelihoods, their sense of authenticity, their trust in images and voices, their belief that human creativity still has value.

Filmmakers who understand this—who make films for people living in this reality rather than for studio shareholders hoping to justify technology investments—will find something studios have been missing for years: genuinely engaged audiences willing to care about stories that take them seriously.

That's the path forward. Not more AI. Better storytelling.

Key Takeaways

- Hollywood's high-budget AI-themed films (Mercy, M3GAN 2, Mission: Impossible—The Final Reckoning) flopped spectacularly in 2025, indicating systematic audience rejection rather than isolated failure

- Deepfake applications like the Xfinity Super Bowl commercial, with its digitally de-aged actors, revealed that audiences understand AI is being used to eliminate human labor, not enhance creativity

- The narrative pattern of AI films (scary technology becomes good) contradicts reality where algorithmic systems are making life-or-death decisions and replacing workers

- The 2023 labor strikes made AI a personal issue for creative workers; AI content released afterward felt like studios celebrating victory over worker concerns

- Audiences have developed sophisticated media literacy about AI and propaganda, making them hostile to celebration narratives that lack critical distance

![Why Hollywood's AI Problem Is Worse Than You Think [2025]](https://tryrunable.com/blog/why-hollywood-s-ai-problem-is-worse-than-you-think-2025/image-1-1770289990085.jpg)