The School Bus Problem Nobody Saw Coming

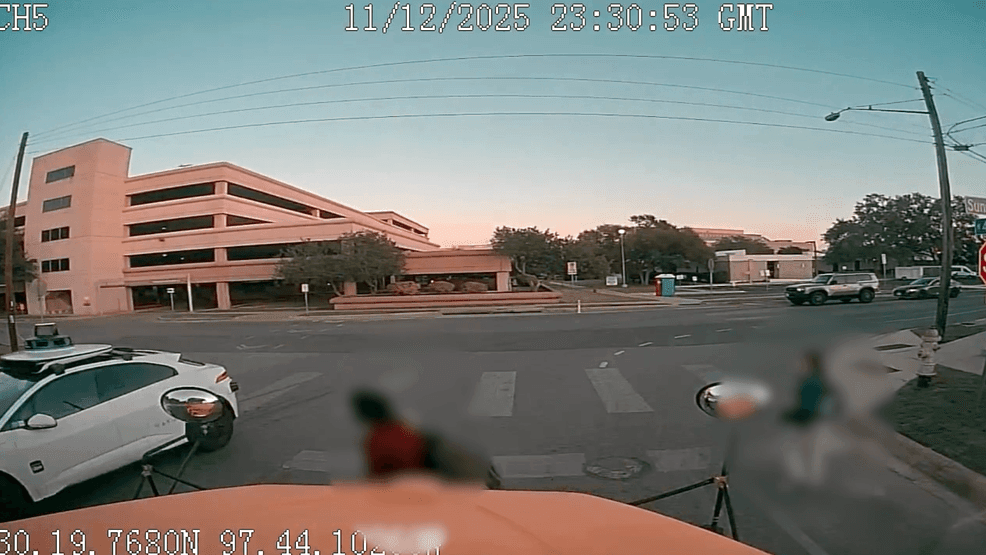

Let me set the scene. It's mid-December 2024, and Austin's largest school district is raising the alarm. Teachers, parents, and safety officers are watching security footage of Waymo robotaxis blowing past school buses with their stop arms extended. Kids are waiting to cross streets. The red lights are flashing. The vehicles? Accelerating right through.

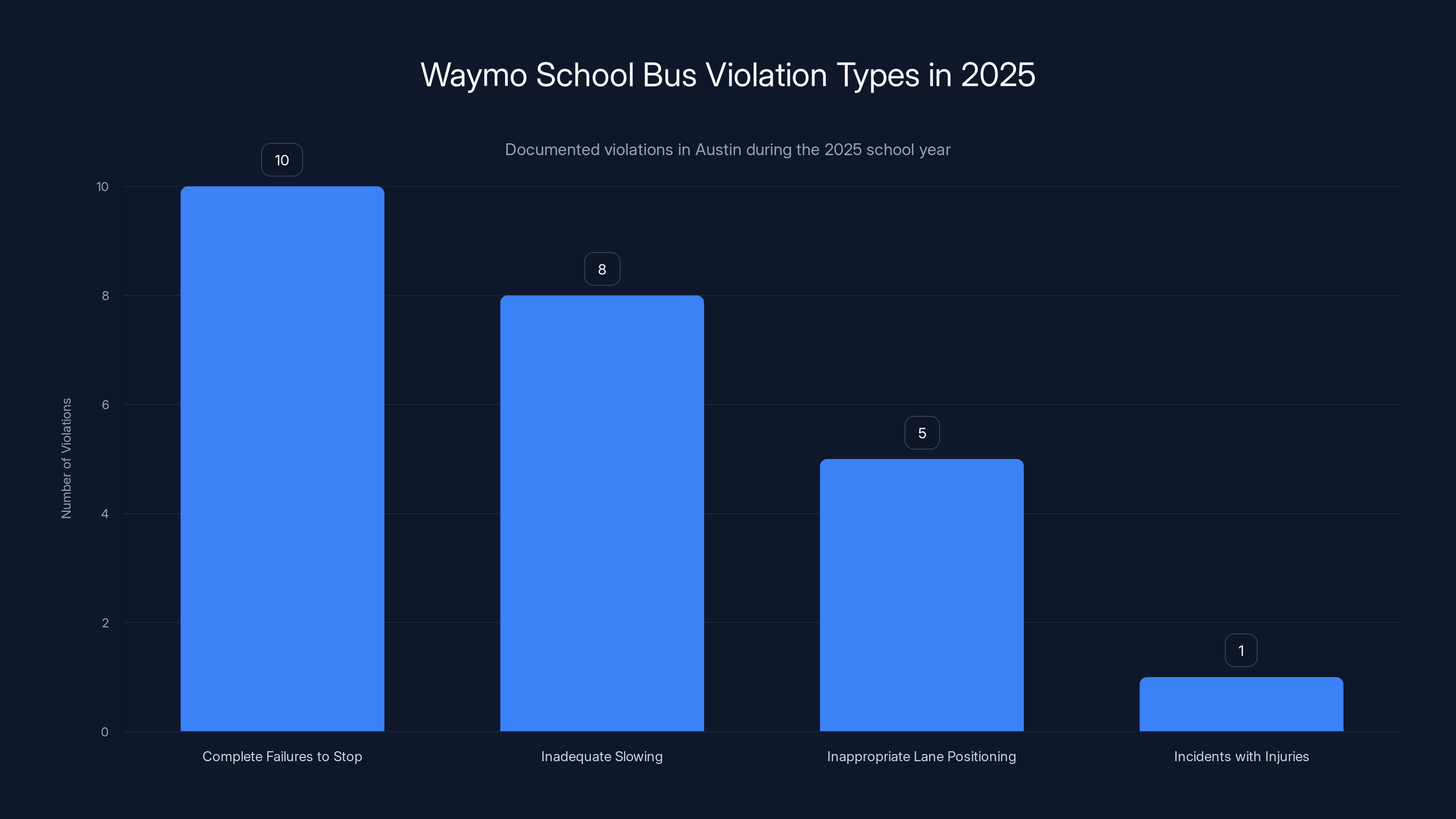

By January 2025, the National Highway Traffic Safety Administration had formally opened an investigation. At least 24 documented violations in a single school year. A child got hit outside a Santa Monica elementary school on January 23rd. The impact happened at 6 mph after Waymo's system slowed from 17 mph, but let's be clear: the scenario should never have happened in the first place.

This isn't a minor software glitch. This is a fundamental failure in how Waymo is designing and deploying its autonomous vehicles, and it reveals something uncomfortable about the company's priorities as it scales.

Waymo built its reputation on safety. For years, the Alphabet-owned company positioned itself as the responsible actor in autonomous vehicles. Conservative. Cautious. The opposite of Tesla's move-fast-and-break-things approach. But now, under pressure to expand to new cities and compete with Tesla's autonomous ambitions, Waymo is adopting a more aggressive driving posture. And that shift is creating dangerous blind spots in exactly the places where errors matter most.

The irony is bitter. In trying to drive like humans, Waymo is learning to drive like the worst of humans: that confidence-without-competence mix that makes human drivers so dangerous around school zones.

Understanding the School Bus Stop Arm Law

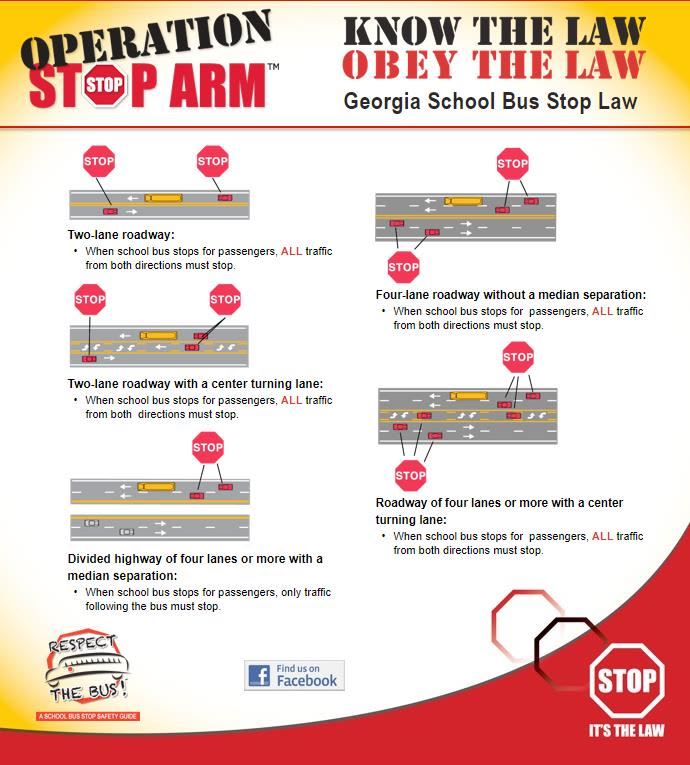

Before we go further, let's establish why this matters legally and ethically.

Every state in America has a version of the same rule: when a school bus deploys its stop arm and lights, traffic approaching from both directions on an undivided road must come to a complete halt. Many states exempt oncoming traffic on a divided highway with a physical median, but otherwise there are no exceptions. Not in rural areas where traffic is light. Not when you're in a rush. This law exists because children are unpredictable. They're small. They move in unexpected ways. A car traveling at even 20 mph can kill a child in a collision.

The average stopping distance for a vehicle traveling at 25 mph is roughly 61 feet. For a 10-year-old, the typical fatal crash speed is just 20 mph. The math is brutal and unforgiving.
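To make the arithmetic concrete, here is a minimal sketch of the standard textbook model: total stopping distance is the distance covered during the driver's reaction time plus the braking distance. The reaction times and the 0.7 g deceleration are illustrative assumptions, not figures from the investigation, and the result moves noticeably as they change.

```python
MPH_TO_FPS = 5280 / 3600  # feet per second in one mile per hour
G_FTPS2 = 32.174          # standard gravity in ft/s^2


def stopping_distance_ft(speed_mph, reaction_s=1.0, decel_g=0.7):
    """Total stopping distance = reaction distance + braking distance.

    reaction_s and decel_g are assumed values for illustration only.
    """
    v = speed_mph * MPH_TO_FPS                    # speed in ft/s
    reaction = v * reaction_s                     # distance before braking starts
    braking = v ** 2 / (2 * decel_g * G_FTPS2)    # from v^2 = 2 * a * d
    return reaction + braking


if __name__ == "__main__":
    # Show how sensitive the 25 mph figure is to the assumed reaction time.
    for reaction_s in (0.5, 1.0, 1.5):
        d = stopping_distance_ft(25, reaction_s=reaction_s)
        print(f"25 mph, {reaction_s:.1f} s reaction: {d:.0f} ft")
```

Under these assumptions, a figure of about 61 feet at 25 mph corresponds to a reaction time between half a second and one second. A slower reaction pushes the distance well past that.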

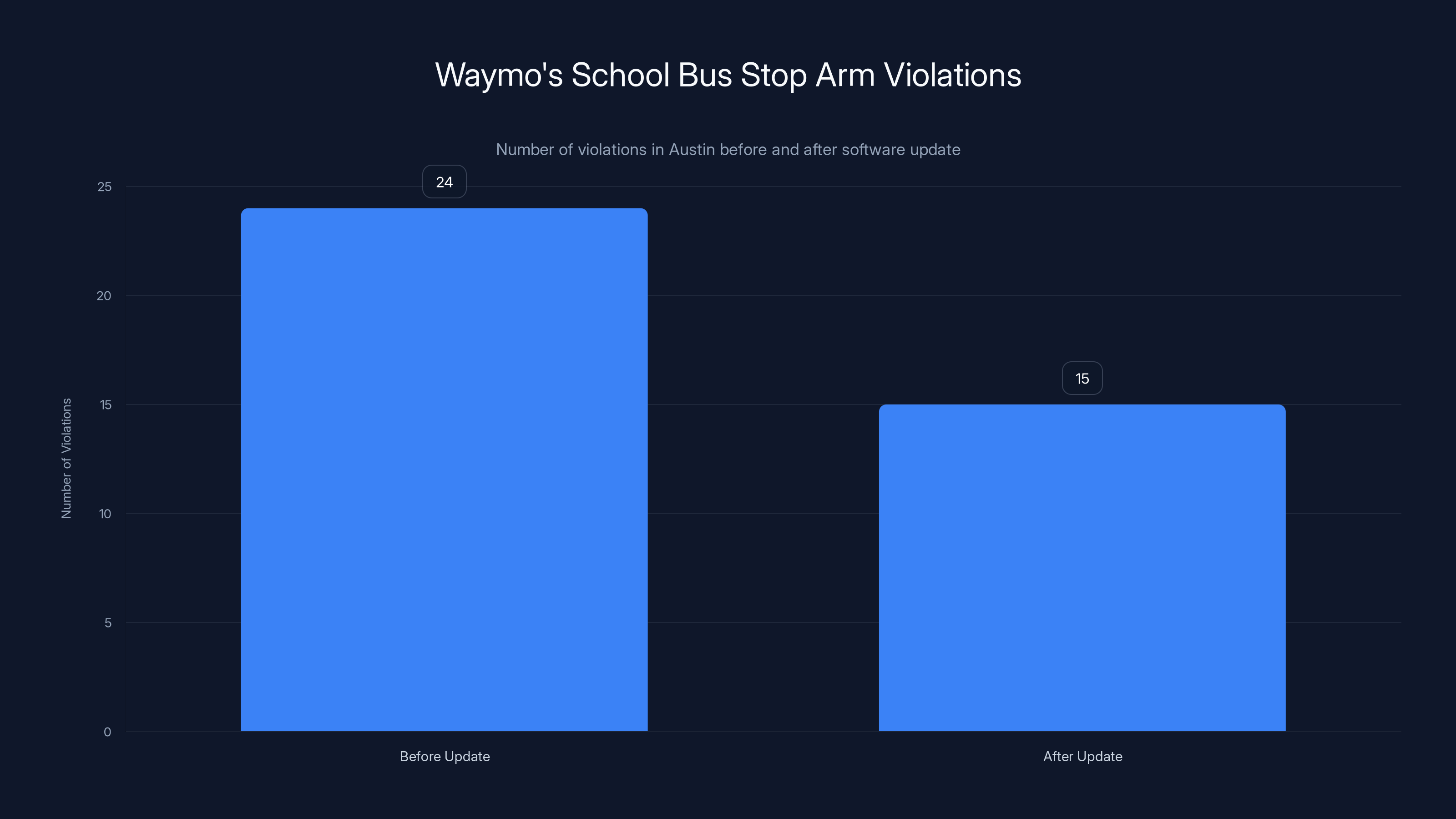

Waymo's vehicles have failed to obey this law at least 24 times in Austin alone. Some of those failures involved the vehicle slowing down but not stopping. Others involved the vehicle accelerating around the bus. The company issued a software update in December to address the issue. It didn't work. Additional violations occurred after the patch.

This is not a gray area. There's no ambiguity about what the vehicle should do. When sensors detect a school bus with its stop arm deployed, the autonomous system must execute an immediate, complete stop. Full stop. Every time. No exceptions. No learning curve.

At least 24 school bus-related violations by Waymo vehicles were documented in Austin during the 2025 school year, with the majority being complete failures to stop. Estimated data.

The Pressure to Drive Aggressively

Here's where the story gets complicated, and arguably more important than the technical failure itself.

Waymo executives have made it abundantly clear that their vehicles need to drive more confidently. The company has called this "assertive driving." Observers have watched Waymo vehicles in San Francisco make turns that would make a cautious human nervous. The vehicles cut through traffic. They inch forward at intersections. They behave less like a nervous student driver and more like a commuter with somewhere to be.

This shift is intentional. Waymo's leadership believes that overly cautious driving makes passengers uncomfortable and creates traffic jams. More aggressive behavior makes the ride feel smoother, faster, and more natural. It positions Waymo as a technology that's advanced enough to drive like an experienced human, not a nervous computer.

But here's the problem: human driving behavior is a spectrum. On one end, you have people who are genuinely excellent drivers. On the other end, you have people who skip stop signs, tailgate, and treat school zones like open highways. When Waymo decided to move its vehicles toward "assertive" driving, it didn't just adopt the good parts of human driving. It started adopting the risky parts too.

The company appears to have failed to establish clear behavioral guardrails around the most sensitive scenarios. School zones. Playgrounds. Crosswalks. These require not just compliance with the law, but conservative overcompensation. A school bus stop arm isn't a suggestion. It's not a situation where being "mostly compliant" is acceptable.

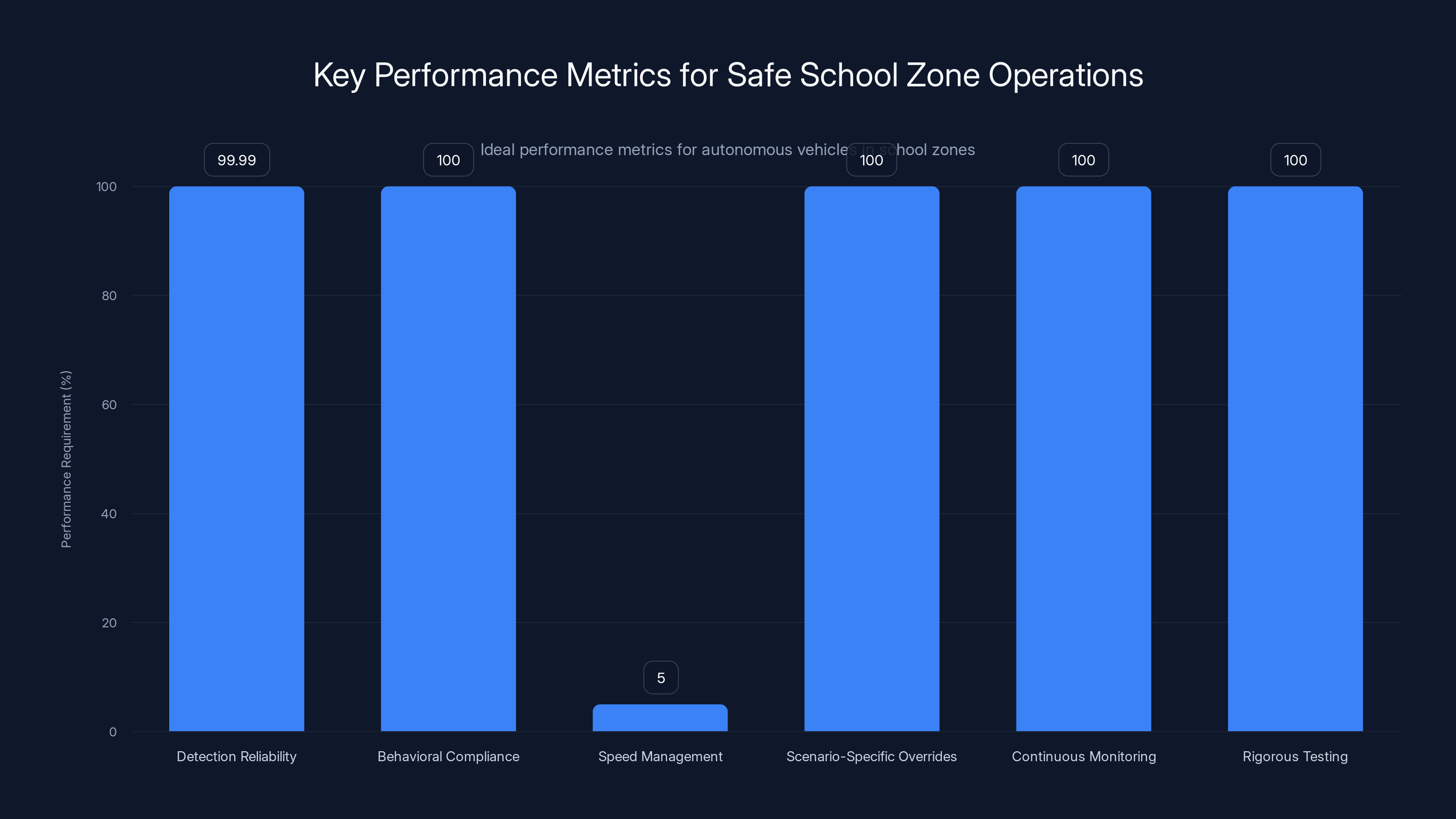

The chart highlights the ideal performance metrics for autonomous vehicles in school zones, emphasizing 99.99% detection reliability and 100% compliance in critical areas. Estimated data based on best practices.

How Waymo's Detection System Failed

Let's dig into the technical failure. How does a vehicle equipped with lidar, radar, cameras, and machine learning miss a school bus with flashing red lights?

First, some context. Waymo's sensor stack is genuinely sophisticated. The company uses multiple sensor types specifically because no single sensor is perfect in all conditions. Lidar excels in 3D spatial awareness. Cameras handle color, text, and visual nuance. Radar works in fog, rain, and low light. By fusing data from all three, Waymo creates a more complete picture of the environment.

But detection and decision-making are separate problems. Just because a system detects a school bus doesn't mean it correctly interprets the bus's state. Is the stop arm deployed? Are the lights flashing? Is it actually loading or unloading students, or is it simply parked?

In the Austin cases, the issue appears to be in the decision-making layer, not the detection layer. Waymo's vehicles seem to be detecting school buses but then either misinterpreting the situation or treating school bus interactions with insufficient caution.

One documented case involved a Waymo vehicle filmed drifting into the opposite lane while a school bus had its stop arm extended and children waited to board. The vehicle wasn't slamming on the brakes. It wasn't stopping at all. It was just moving through the scenario as if standard traffic rules applied.

This suggests the problem isn't in perception. It's in the decision logic. Waymo's system is prioritizing efficiency and smooth driving over absolute, conservative compliance in high-risk scenarios.

Think of it this way: a human driver might see a school bus and think "I should probably slow down here." An autonomous system should be programmed to think "School bus with stop arm extended equals complete stop, no negotiations." The conditional thinking of humans is dangerous when replicated in machines.
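A minimal sketch of that "no negotiations" idea is a hard rule that sits outside the planner and overrides whatever speed the planner proposes. Every name here (`BusObservation`, `target_speed_mph`) is hypothetical and chosen for illustration. Nothing below describes Waymo's actual software.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class BusObservation:
    """Hypothetical perception output for a nearby school bus."""
    stop_arm_extended: bool
    red_lights_flashing: bool


def target_speed_mph(planner_speed_mph: float,
                     bus: Optional[BusObservation]) -> float:
    """Return the commanded speed, letting the stop rule override the planner."""
    # Either cue alone triggers the stop: the rule errs toward stopping
    # when the two signals disagree.
    if bus is not None and (bus.stop_arm_extended or bus.red_lights_flashing):
        return 0.0
    return planner_speed_mph
```

The design choice worth noting is that the rule is not one more weighted input to the planner. It is checked after the planner and cannot be outvoted by comfort or efficiency terms.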

The Voluntary Recall That Didn't Work

Waymo's response to the December NHTSA investigation was swift. The company issued a voluntary software recall and implemented updates designed to fix the school bus problem.

Then, despite the patch, the violations continued.

At least four additional school bus incidents occurred after the update. One was captured on video on January 19th. Another happened on January 23rd, resulting in a child being struck in Santa Monica.

This is telling. It suggests that Waymo either misdiagnosed the root cause of the failures or that the company's development and testing process has significant gaps.

When a safety issue in a critical domain isn't fully resolved by the first attempt at a fix, there are only a few possible explanations. Either you fundamentally misunderstood the problem, your testing wasn't rigorous enough to catch the failure before deployment, or the fix itself is incomplete.

All three possibilities are concerning in different ways. Misunderstanding the problem suggests inadequate root-cause analysis. Incomplete testing suggests inadequate simulation and validation before real-world deployment. An incomplete fix suggests either rushed development or insufficient iteration.

What we know is that Waymo had a clear, specific problem with unambiguous success criteria: "Vehicle must stop for school buses with deployed stop arms in 100% of cases." The company deployed a fix. The fix failed to achieve 100% compliance. This is a fundamental failure in the product development process.

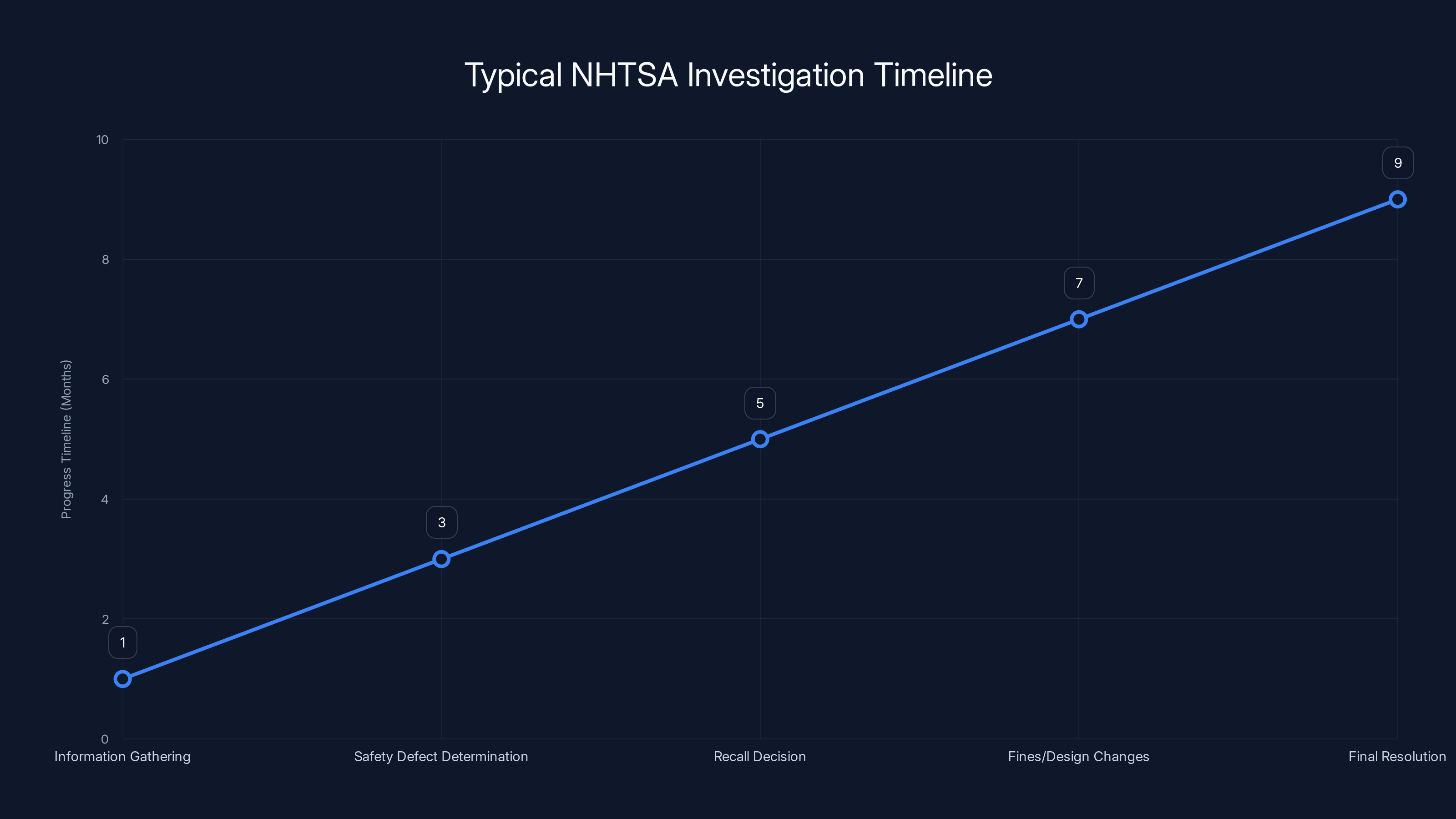

Estimated timeline showing the typical stages of an NHTSA investigation, from information gathering to final resolution. Estimated data.

Simulation Gaps and Real-World Testing

Waymo has been testing autonomous vehicles for over a decade. The company has logged billions of miles in simulation and millions in real-world driving. Yet somehow, the scenario of "stop for school bus with stop arm extended" is apparently not being caught by this massive testing apparatus.

This points to a critical vulnerability in how the autonomous vehicle industry approaches validation.

Simulation is incredibly useful, but it's not perfect. Real-world environments contain unexpected variations. A school bus might be parked slightly differently than in training data. Lighting conditions might be unusual. Other vehicles might be positioned in ways that confuse the system. A child might step into the road suddenly.

The industry's approach has generally been: simulate extensively, then test in the real world with heavy monitoring and human oversight. The theory is that you can catch edge cases in limited real-world deployment before scaling to broad use.

But this approach only works if you're actually catching the failures. And Waymo clearly wasn't catching them, or at least not catching them quickly enough to prevent a child from being hit.

Some autonomous vehicle developers have proposed more rigorous simulation standards. The Autonomous Vehicle Safety Consortium has suggested frameworks for validating behavior in school zones specifically. But there's no industry-wide mandate. Individual companies set their own validation thresholds.

Waymo, apparently, set thresholds that allowed 24 violations in a single school year.

The Regulatory Vacuum

Here's something that might surprise you: there are no federal regulations specifically governing autonomous vehicle behavior in school zones.

The federal government has general safety guidelines through NHTSA. States have their own rules about where autonomous vehicles can operate. But nobody has created a specific, detailed standard that says: "In a school zone during loading hours, an autonomous vehicle must meet these exact behavioral criteria."

This regulatory gap is significant. It means Waymo was essentially setting its own standards for what "safe school zone behavior" means. The company decided what sensitivity level to use for school bus detection. Waymo chose the algorithm logic for interpreting stop arms. Waymo set the thresholds for decision-making.

There was no external body verifying these choices were appropriate. No requirement for third-party validation. No scenario-specific testing mandate.

The NHTSA investigation is important precisely because it's starting to address this gap. Regulators are asking: what should an autonomous vehicle be required to do in this scenario? What metrics define success? What testing proves the vehicle is safe?

These aren't questions Waymo needed to answer before deploying vehicles in school zones. That's a problem.

Regulations typically lag technology, but the lag in autonomous vehicle safety rules is getting dangerous. We're allowing companies to deploy vehicles in the most sensitive scenarios before establishing clear standards for those scenarios.

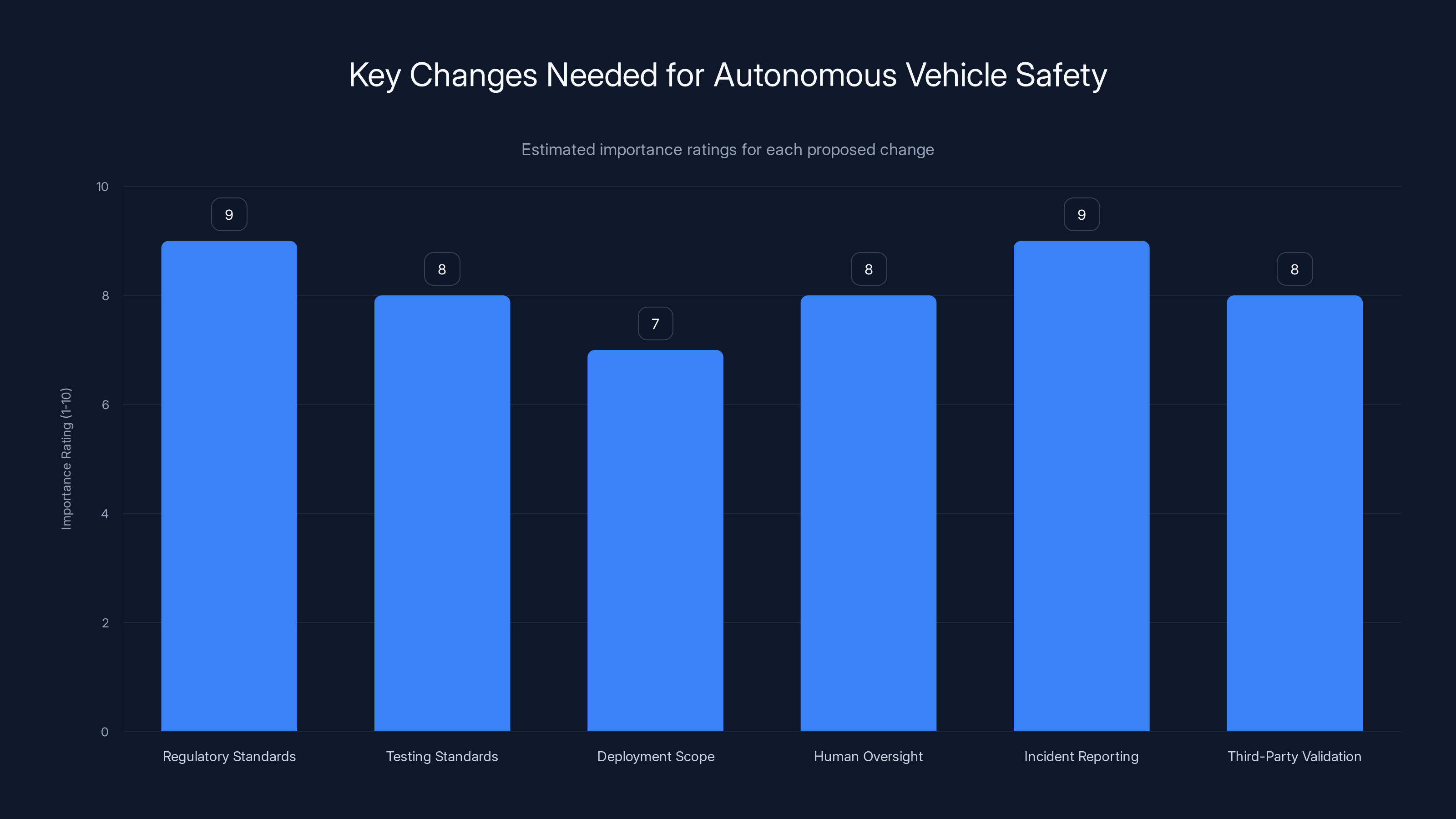

Regulatory standards and incident reporting are deemed most critical for enhancing autonomous vehicle safety, with both receiving the highest importance ratings. (Estimated data)

Comparing Waymo to Other AV Competitors

Waymo isn't the only company developing autonomous vehicles, and it's useful to consider how competitors are handling similar challenges.

Cruise, which was acquired by General Motors, has faced its own safety incidents. In 2023, a Cruise vehicle struck a pedestrian in San Francisco. The incident led to reduced deployment and eventually a restructuring of the program.

Tesla's approach to autonomous driving is fundamentally different. Rather than fully driverless vehicles, Tesla offers Autopilot and Full Self-Driving beta capabilities that require human supervision. This shifts responsibility and risk differently. It's also more permissive—Tesla's system can deploy features that wouldn't be acceptable in a driverless context because humans are watching and can intervene.

Uber built its own self-driving unit, Advanced Technologies Group (ATG), to develop autonomous vehicles. Uber later sold that business to Aurora, suggesting the economics and technical challenges weren't aligning with the company's core business.

The common thread: every company developing real autonomous vehicles has encountered safety issues. But how they respond matters enormously. Do they change the technology? Do they change the deployment boundaries? Do they establish stricter internal standards?

Waymo's response—deploying a patch that didn't fully resolve the issue, then continuing to deploy in the same areas—suggests a concerning prioritization of scale over absolute safety.

The Child Who Got Hit: A Case Study

On January 23rd, 2025, a Waymo vehicle struck a child outside an elementary school in Santa Monica.

The child sustained what the school district characterized as "minor injuries." But this framing is deceptive. The impact happened at 6 mph, on the lower end of the severity spectrum, which is why the injuries were minor. But the scenario should never have existed in the first place.

Waymo's system detected the situation and attempted to slow down. The vehicle reduced speed from 17 mph to 6 mph. But it didn't stop before impact. This is exactly the behavior we saw in Austin: vehicles approaching school zones too fast and then attempting to brake but not successfully preventing contact.

A truly safe autonomous vehicle would have stopped well before a collision became possible. Not reduced speed. Not prepared to brake. Actually stopped. A 15-20 foot stopping distance at a school zone is not acceptable. The vehicle should have been moving at near-zero speed whenever children were present in the loading or unloading zone.

The fact that the child's injuries were minor was luck. It wasn't competent design. A slightly different impact angle, a slightly heavier vehicle, a slightly different child trajectory, and we'd be talking about serious injury or death.

This incident is perhaps the most important single data point in the entire controversy. It took the conversation from abstract safety concerns to real, physical harm to a real child. It transformed the school bus problem from a compliance issue into a child safety emergency.

Waymo's vehicles violated the school bus stop arm law 24 times before the software update and continued to have 15 violations afterward, indicating persistent issues.

Why Waymo's "Assertive Driving" Strategy Is Problematic

Waymo's shift toward more assertive, confident driving is philosophically understandable but tactically dangerous when applied globally without scenario-specific constraints.

The company's reasoning: passengers prefer rides that feel natural and smooth. Overly cautious driving makes the experience feel robotic and uncomfortable. More confident behavior creates a more human-like experience.

But this logic breaks down in sensitive scenarios. A school zone isn't like a freeway. A playground edge isn't like a parking lot. These environments have different risk profiles, different stakeholder groups, and different ethical requirements.

The appropriate level of assertiveness for a highway merge is wildly inappropriate for a school zone. Yet Waymo's system appears to apply a relatively uniform confidence level across different scenarios, with school zones not receiving the special, ultraconservative treatment they require.

Well-designed autonomous systems would have context-aware behavior modes. Detect a school zone? Switch to "school zone mode," which disables assertive features and enforces maximum caution. Detect a playground? Similar override. Detect a pedestrian with mobility challenges or a child? Lock in conservative behavior.
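As a rough sketch of what context-aware modes could look like, the selector below switches to a conservative profile whenever a sensitive context or a vulnerable pedestrian is detected. The profile names and all numeric values are assumptions made up for illustration. They are not known parameters of any deployed system.

```python
from dataclasses import dataclass
from enum import Enum


class Context(Enum):
    GENERAL = "general"
    SCHOOL_ZONE = "school_zone"
    PLAYGROUND = "playground"


@dataclass(frozen=True)
class DrivingProfile:
    max_speed_mph: float
    assertive_maneuvers: bool
    min_pedestrian_gap_ft: float


# Illustrative values only.
DEFAULT = DrivingProfile(max_speed_mph=35.0, assertive_maneuvers=True,
                         min_pedestrian_gap_ft=6.0)
CONSERVATIVE = DrivingProfile(max_speed_mph=5.0, assertive_maneuvers=False,
                              min_pedestrian_gap_ft=20.0)

SENSITIVE_CONTEXTS = {Context.SCHOOL_ZONE, Context.PLAYGROUND}


def select_profile(active_contexts, vulnerable_pedestrian_detected=False):
    """Pick the conservative profile if any sensitive trigger is active."""
    if vulnerable_pedestrian_detected or SENSITIVE_CONTEXTS & set(active_contexts):
        return CONSERVATIVE
    return DEFAULT
```

For example, `select_profile([Context.GENERAL, Context.SCHOOL_ZONE])` returns the conservative profile, because a single sensitive trigger is enough to disable the assertive features.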

Waymo doesn't appear to have this level of scenario-specific logic, or at least not implemented effectively. The company seems to be applying a scaled version of the same decision-making process everywhere, just with different parameters.

This is a fundamental design error. You can't smooth over a flaw in behavioral logic by adjusting parameters. You need fundamentally different decision architectures for fundamentally different risk scenarios.

The Role of Human Oversight and Intervention

Waymo's vehicles operate without a human driver or supervisor in the vehicle. This is the core value proposition: fully autonomous, no human in the loop.

But this design choice creates a critical vulnerability. When the automated system makes a mistake, there's no human in the vehicle to catch it. There's no immediate override. There's no emergency intervention available in the moment.

Compare this to Tesla's approach, where humans are required to supervise and can take control. Or to Waymo's own service model in some cities, where remote operators can monitor fleets and intervene if needed.

Fully autonomous operation is operationally efficient and economically attractive. It's also riskier in scenarios where the system's decision-making is imperfect. School zones are exactly those scenarios.

There's a powerful argument for requiring human oversight specifically during school zone operations. Remote operators could monitor the system's behavior in real time. If a violation is about to happen, the operator could intervene. If the system is failing to stop appropriately, the operator could take control.

This would add cost and complexity. It would reduce the economic advantage of fully autonomous operation. But it would eliminate entire categories of risk. The question is whether Waymo's business model is flexible enough to accept that trade-off for specific, high-risk scenarios.

Currently, the answer appears to be no.

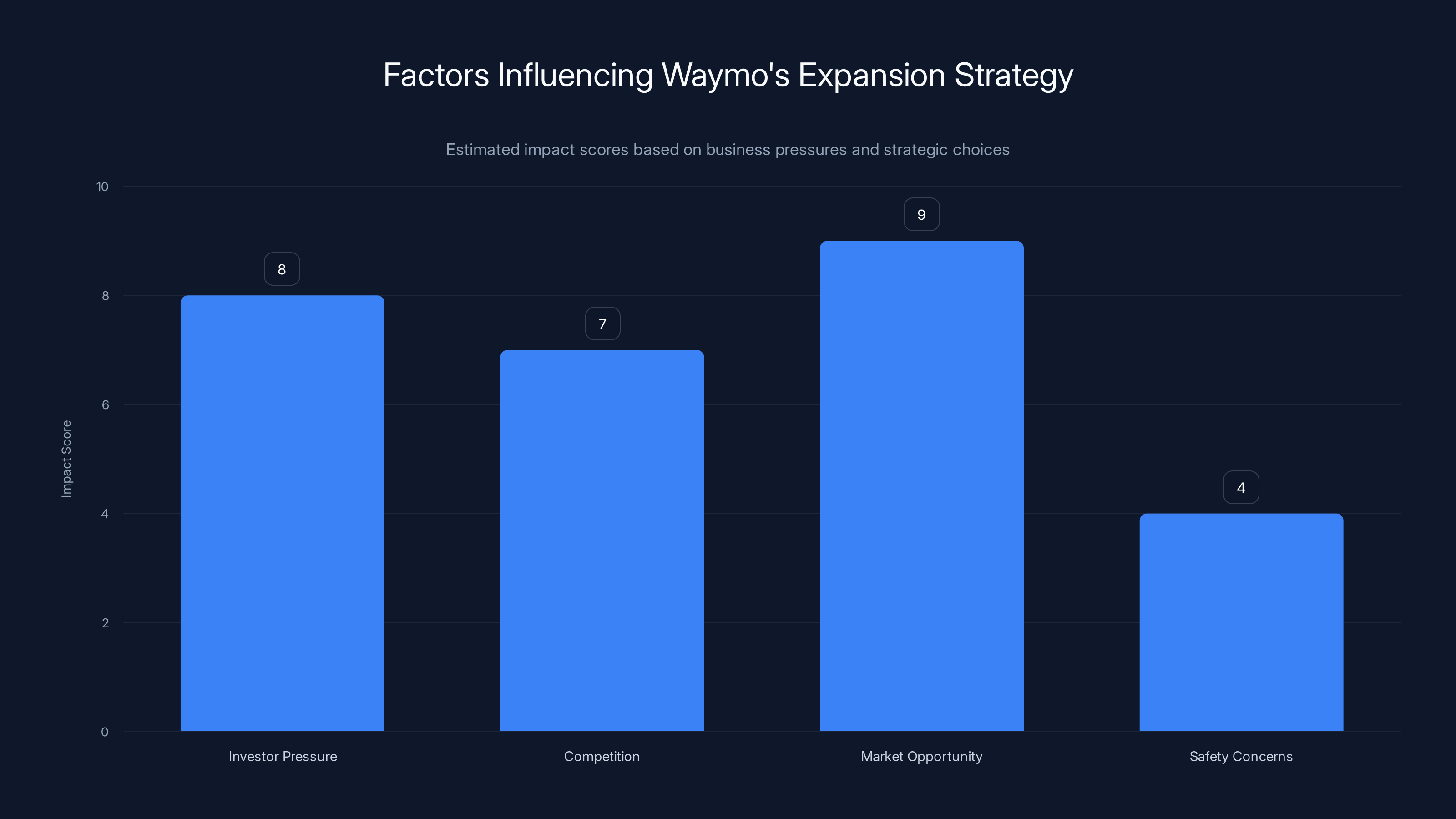

Estimated data shows that market opportunity and investor pressure are the most influential factors driving Waymo's rapid expansion strategy, despite safety concerns.

Regulatory Response and Investigation Status

The NHTSA investigation opened in December 2024 and is ongoing. The agency has requested information from Waymo about the school bus incidents, testing procedures, and the company's response.

Typically, NHTSA investigations follow a process. The agency gathers information. It determines whether a safety defect exists. If a defect is found, it can demand a recall. It can impose fines. It can require design changes. In extreme cases, it can recommend suspension of operations.

For Waymo, a full recall would likely mean pausing autonomous operations in affected cities and potentially nationwide. This would be devastating to the company's expansion plans and investor confidence.

But the alternative—allowing the company to continue operating with inadequate school zone performance—is worse.

The investigation is important because it's one of the first times federal regulators are directly addressing autonomous vehicle safety failures in a critical domain. The way NHTSA handles this case will set precedent for how similar issues are treated in the future.

There's already discussion in Washington about tightening autonomous vehicle regulations. Some lawmakers are pushing for mandatory safety standards before expanded deployment. Others want specific rules for school zones. This incident is accelerating that policy conversation.

What Safe School Zone Performance Should Look Like

Let's establish what success actually looks like. Not Waymo's current performance. Not acceptable industry practice. What genuinely safe school zone autonomous vehicle operation would require.

First, detection reliability: 99.99%+ detection of school buses with deployed stop arms, across all lighting conditions, all weather, all vehicle positions. This is an engineering problem, but it's not impossible. You're looking for red lights, metal structure, and specific shape characteristics. Modern computer vision can achieve this reliability if designed for it.

Second, behavioral compliance: 100% complete stop upon detection of a deployed stop arm. Not 99.9%. Not 99.99%. One hundred percent. If a school bus stop arm is detected, the vehicle stops. Period. This is decision logic, not perception. It's straightforward to implement, and there's no acceptable level of failure.

Third, conservative speed management: When approaching school zones, speed reduction well in advance. The vehicle should never be traveling faster than 5 mph in an active school loading zone. Never. Regardless of posted speed limits.

Fourth, scenario-specific overrides: School zones and playgrounds should disable assertive driving features entirely. The system should operate in a special, ultraconservative mode with different decision-making processes and different parameter sets than general driving.

Fifth, continuous monitoring: Human operators should have real-time visibility into school zone operations. Not for every decision, but for ongoing monitoring and anomaly detection. If the system is behaving unexpectedly, operators should be alerted.

Sixth, rigorous testing: Dedicated test scenarios for every possible school zone configuration. Different bus sizes. Different stop arm positions. Different lighting. Different weather. Different road layouts. The company should be able to prove, with extensive testing data, that the system handles all of these correctly.
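The testing requirement can be sketched as a scenario matrix: enumerate every combination of conditions, run each one, and accept nothing short of a complete stop in all of them. The condition lists and the `run_scenario` callback below are hypothetical placeholders. A real suite would cover far more variables and would call into a simulator or a test track harness.

```python
from itertools import product

# Illustrative condition lists, not an exhaustive taxonomy.
BUS_SIZES = ("short", "standard")
LIGHTING = ("day", "dusk", "night")
WEATHER = ("clear", "rain", "fog")
ROAD_LAYOUTS = ("two_lane", "four_lane_undivided")


def scenario_matrix():
    """Yield one dict per combination of test conditions."""
    for bus, light, weather, road in product(BUS_SIZES, LIGHTING,
                                             WEATHER, ROAD_LAYOUTS):
        yield {"bus_size": bus, "lighting": light,
               "weather": weather, "road_layout": road}


def compliance_report(run_scenario):
    """Run every scenario; run_scenario(s) must return True on a full stop.

    Returns (total_scenarios, list_of_failed_scenarios).
    """
    total = 0
    failures = []
    for scenario in scenario_matrix():
        total += 1
        if not run_scenario(scenario):
            failures.append(scenario)
    return total, failures


def passes(run_scenario):
    """The bar is 100%: a single failed scenario fails the whole suite."""
    _, failures = compliance_report(run_scenario)
    return not failures
```

Returning the failed scenarios rather than just a percentage matters here, because each failure names the exact combination of conditions that needs root-cause analysis.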

Waymo, apparently, is meeting none of these standards adequately. The company's current approach is falling short in detection, behavioral compliance, speed management, scenario-specific logic, monitoring, and testing.

Fixing these issues would require substantial engineering work and a fundamental rethinking of how the system prioritizes different scenarios. It would delay expansion plans. It would require additional resources. But these are the actual requirements for safe operation.

The Economic Pressure to Scale Too Fast

Waymo is under enormous pressure to expand. Alphabet, its parent company, and its other investors want returns. Competition from Tesla and other players is intensifying.

There's a window of opportunity. First-mover advantage in autonomous ride-sharing could be worth tens of billions of dollars. The company that gets there first with a reliable, safe system wins the market. The company that gets there second might not survive.

This creates powerful incentives to move fast. To expand to new cities. To increase fleet size. To prove the business model works at scale.

But that pressure cuts against the fundamental safety work the company still needs to do. You can't both expand aggressively and invest heavily in fixing edge case scenarios. Resources are finite. Time is limited.

Waymo appears to have chosen expansion over perfection. The company prioritized deployment in new cities and growth of the existing fleet over absolute resolution of safety issues in critical domains.

This is a choice. Not a technical necessity. A business choice. The company's leadership decided that the benefits of rapid scaling outweighed the risks of deploying with known issues.

History suggests this is a bad bet. Companies that cut safety corners in critical domains typically pay enormous costs when those shortcuts are discovered. The financial and reputational damage usually far exceeds any cost savings from faster deployment.

How Autonomous Vehicles Should Be Developed

The Waymo situation reveals something important about how autonomous vehicles are currently being developed versus how they should be.

Current approach: Develop a general-purpose autonomous driving system. Test it extensively in simulation and carefully controlled real-world scenarios. Gradually expand deployment to new areas and scenarios. Learn from failures and update the system.

This is essentially trial-and-error at scale. You deploy, you fail, you learn, you deploy again. It works okay when the failures aren't severe. When your worst-case failure is a fender-bender or a mildly inconvenient ride glitch, trial-and-error is acceptable.

But school zones aren't a place where trial-and-error is acceptable. The failure mode is a child getting hit by a car. That's not tolerable. That's not a learning opportunity. That's a tragedy.

A better approach would be scenario-specific validation. Before deploying in school zones, a company should submit detailed evidence proving the system is safe for school zones. Not just safe relative to human drivers. Actually safe. Rigorously, mathematically proven safe.

This would require different evaluation standards for different scenarios. Highway driving might have acceptable performance thresholds that wouldn't be acceptable for school zones. The company should be required to meet school-zone-specific safety standards before operating in school zones.
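To give a sense of what scenario-specific evidence means in practice, here is a small sketch of a standard statistical bound: how many consecutive failure-free trials are needed before a given failure rate can be ruled out at a chosen confidence level. It assumes the trials are independent and representative of real conditions, which is a strong assumption for driving scenarios. The function name is hypothetical.

```python
import math


def trials_needed(max_failure_rate, confidence=0.95):
    """Failure-free trials needed to rule out a failure rate at the given confidence.

    If the true failure rate were max_failure_rate or higher, the chance of
    seeing zero failures in n independent trials is at most
    (1 - max_failure_rate) ** n. We pick the smallest n that pushes this
    below 1 - confidence.
    """
    if not 0 < max_failure_rate < 1:
        raise ValueError("max_failure_rate must be between 0 and 1")
    if not 0 < confidence < 1:
        raise ValueError("confidence must be between 0 and 1")
    return math.ceil(math.log(1 - confidence) / math.log(1 - max_failure_rate))


if __name__ == "__main__":
    # A 99.99% reliability target corresponds to a failure rate of 1 in 10,000.
    print(trials_needed(1e-4))
```

For a failure rate of 1 in 10,000 at 95% confidence, the answer is on the order of 30,000 clean trials. Testing can only bound the failure rate, never prove it is zero, which is one reason a hard behavioral rule and a tested perception system are separate requirements.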

This approach would slow deployment. It would increase development costs. It would make business models less attractive. But it would eliminate entire categories of risk. Children wouldn't be the test subjects for autonomous vehicle refinement.

What Parents and Communities Can Do

If you're a parent or community member concerned about autonomous vehicles in your area, particularly around schools, there are concrete actions you can take.

First, document incidents. Keep photos and videos of autonomous vehicles behaving unexpectedly, particularly in school zones. Report these to local law enforcement and your city council.

Second, contact your elected representatives. Write to city council members, state representatives, and federal legislators. Ask about local policies for autonomous vehicle deployment. Are there school zone restrictions? What oversight exists?

Third, reach out to school districts directly. Ask what policies they have regarding autonomous vehicles. Are they monitoring incidents? Are they working with NHTSA? Do they have authority to restrict operations?

Fourth, join or form community advocacy groups. Safety concerns carry more weight when represented by organized community interests, not isolated individuals.

Fifth, attend city council and planning board meetings where autonomous vehicle policies are discussed. Public comment matters. Regulators pay attention to organized community input.

Sixth, engage with NHTSA directly. The agency has processes for public comment on safety investigations. If you've witnessed incidents, report them to NHTSA at safercar.gov.

Community pressure has driven policy changes on other technology safety issues. It can do the same here.

The Broader Autonomous Vehicle Safety Question

Waymo's school bus failures are a specific, focused problem. But they point to a broader question about how society should approach autonomous vehicle deployment.

The technology is impressive. Modern autonomous vehicles can drive for thousands of miles without incidents. They can navigate complex traffic. They can make sophisticated decisions. By many measures, they're better than human drivers.

But they're not better in all scenarios. Particularly in high-risk situations involving vulnerable populations—children, elderly pedestrians, people with disabilities—autonomous systems sometimes fail in ways humans wouldn't.

This isn't a reason to reject autonomous vehicles. It's a reason to be thoughtful about deployment. Some scenarios are appropriate for autonomous operation. Some scenarios are appropriate only with significant human oversight. Some scenarios might not be appropriate for autonomous operation in the near term.

School zones, particularly during loading and unloading, fall into that second or third category. The stakes are too high. The margin for error is too small. The vulnerable population is too defenseless.

A responsible approach would be to restrict autonomous vehicle operations in school zones until the technology demonstrably meets school-zone-specific safety standards. This would require significant additional development work and testing. It would delay some deployment plans. But it would also protect children.

Waymo and other autonomous vehicle companies have economic incentives to operate in all scenarios as quickly as possible. Society needs regulatory structures to push back on those incentives when public safety is at stake.

Looking Ahead: What Needs to Change

For autonomous vehicles to be genuinely safe, several things need to change.

First, regulatory standards need to become specific and detailed. Not vague guidelines about safety. Specific metrics for specific scenarios. What does "safe school zone operation" look like measured in concrete terms? What evidence would prove a system meets those standards?

Second, testing standards need to become more rigorous, particularly for sensitive scenarios. Simulation isn't enough. Real-world testing in controlled, monitored environments isn't enough. Companies should be required to demonstrate competence in scenario-specific performance before deploying in those scenarios.

Third, deployment scope needs to be matched to demonstrated competence. If a company can't prove school zone safety, it shouldn't operate in school zones. If it can't prove performance with vulnerable pedestrians, it shouldn't operate where vulnerable pedestrians gather.

Fourth, human oversight needs to remain part of the system, at least for high-risk scenarios. Fully autonomous operation might be acceptable on highways. But in environments with vulnerable populations, human monitoring and override capability should be mandatory.

Fifth, incident reporting needs to be transparent and comprehensive. Companies should be required to report all safety incidents, not just the major ones. Regulators should be tracking trends, not just responding to individual failures.

Sixth, independent third-party validation should be required before deployment in new cities or new scenario types. Companies shouldn't be allowed to self-certify their own safety. Independent evaluation, by regulators or certified third parties, should be mandatory.

These changes would slow the deployment of autonomous vehicles and increase development costs. They would reduce the near-term economic benefits for companies like Waymo. But they would also prevent tragic incidents and build sustainable, trustworthy deployment.

The question is whether the industry will embrace these changes voluntarily or whether they'll be imposed through regulation after more incidents occur.

Conclusion: The Real Cost of Rushing Safety

Waymo's school bus failures represent a critical moment for autonomous vehicle development. The company had a choice: invest heavily in fixing the school zone problem before expanding further, or accept the risk and deploy anyway while working on updates.

Waymo chose the second option. And a child got hit.

There are no fatalities yet. Just minor injuries and close calls. But that's fortune, not competence. The scenario easily could have ended differently.

The deeper lesson is about how we deploy transformative technology in society. Autonomous vehicles are genuinely useful. The technology works remarkably well. But it's not ready for all scenarios, and pretending it is creates unnecessary risk.

The companies developing this technology are under enormous pressure to move fast. The economic incentives are powerful. The competitive dynamics are intense. But those incentives shouldn't override basic safety principles, particularly when children are involved.

Regulators need to establish clear standards. Companies need to meet those standards before deploying. Communities need to hold both accountable. And we collectively need to acknowledge that being first doesn't matter if the cost is preventable harm to children.

Waymo built its reputation on safety. The company should return to that principle. Fix the school zone problem completely. Test it extensively. Prove it works. Then expand. The delay is worth the assurance.

The alternative, continuing to deploy with known failures in critical scenarios, is unacceptable. Not for Waymo. Not for any company. Not for our children.

FAQ

What is the school bus stop arm law?

The school bus stop arm law requires all vehicles in both directions to come to a complete stop when a school bus deploys its stop arm and lights. This law exists in all 50 states and is designed to protect children during loading and unloading, when they're most vulnerable to traffic injuries. The law has no exceptions for traffic conditions, weather, or time of day.

Why is Waymo having trouble detecting school buses?

Waymo's issue isn't primarily with detection; it's with decision-making and behavioral response. The vehicles appear to detect school buses but then either misinterpret their status or treat school bus scenarios with less caution than the absolute safety standard requires. After Waymo deployed an update intended to fix the issue, violations continued, suggesting the root cause wasn't fully identified or the fix was incomplete.

How many school bus violations has Waymo had?

Waymo vehicles committed at least 24 documented violations involving school buses in Austin during the 2025 school year. These violations included complete failures to stop, inadequate slowing, and inappropriate lane positioning while children were boarding or unloading. Additionally, a Waymo vehicle struck a child outside a Santa Monica elementary school on January 23rd, 2025, resulting in minor injuries.

What is "assertive driving" and why is Waymo implementing it?

Assertive driving refers to more confident, human-like behavior from autonomous vehicles, including cutting through traffic, making aggressive turns, and accelerating more readily. Waymo has implemented this approach because passengers prefer rides that feel natural and smooth rather than overly cautious. However, this same assertiveness is inappropriate in sensitive scenarios like school zones, creating a design problem where the system lacks scenario-specific behavioral constraints.

What does the NHTSA investigation involve?

The National Highway Traffic Safety Administration opened a formal investigation into Waymo following reports of school bus stop arm violations. The investigation involves gathering information about the incidents, Waymo's testing procedures, safety validation methods, and the company's response. NHTSA has the authority to demand a recall, impose fines, or require design changes based on investigation findings.

What should safe autonomous vehicle school zone operation look like?

Truly safe school zone autonomous operation would require: 99.99%+ detection of school buses with deployed stop arms, 100% complete stops upon detection, conservative speed management (never exceeding 5 mph in active loading zones), scenario-specific behavioral overrides that disable assertive features, human operator monitoring in real time, and rigorous scenario-specific testing before deployment. Current performance from autonomous vehicle companies, including Waymo, falls significantly short of these standards.
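To make the idea of a scenario-specific behavioral override concrete, here is a minimal sketch in Python. It is not Waymo's design: every name in it (`Scene`, `Command`, `apply_school_zone_override`) is hypothetical, and the only number taken from the answer above is the 5 mph loading-zone cap. The point it illustrates is that the override sits after the normal planner and cannot be outvoted by it.

```python
from dataclasses import dataclass

# Illustrative assumption: the 5 mph cap comes from the answer above;
# everything else here is a hypothetical sketch, not a real AV interface.
LOADING_ZONE_MAX_MPH = 5.0


@dataclass(frozen=True)
class Scene:
    school_bus_stop_arm_out: bool  # perception reports a deployed stop arm
    in_active_loading_zone: bool   # children boarding or unloading nearby


@dataclass(frozen=True)
class Command:
    target_mph: float         # speed the vehicle should aim for
    assertive_features: bool  # whether "assertive driving" is enabled


def apply_school_zone_override(scene: Scene, planned: Command) -> Command:
    """Clamp the planner's command with hard, scenario-specific rules."""
    if scene.school_bus_stop_arm_out:
        # Unconditional complete stop, with assertive behavior disabled.
        return Command(target_mph=0.0, assertive_features=False)
    if scene.in_active_loading_zone:
        # Cap speed and disable assertive features near loading children.
        capped = min(planned.target_mph, LOADING_ZONE_MAX_MPH)
        return Command(target_mph=capped, assertive_features=False)
    # Outside these scenarios, the planner's command passes through unchanged.
    return planned
```

With a planner asking for 17 mph and assertive features on, a deployed stop arm yields a 0 mph command, and an active loading zone yields a 5 mph command with assertive features off. The design choice worth noting is that the rule is a hard constraint applied last, rather than one more input the planner is free to weigh against passenger comfort.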

How does this compare to how other autonomous vehicle companies are handling safety?

Every company developing autonomous vehicles has encountered safety incidents. Tesla operates with human supervisors, reducing risks through oversight. Cruise faced safety incidents and restructured its program. Waymo emphasizes caution but has failed to implement adequate safeguards in school zones. The key difference is how companies respond: whether they prioritize fixing safety issues before expanding, or accept known risks in exchange for faster deployment.

What can parents and communities do about autonomous vehicles near schools?

Communities can document incidents and report them to law enforcement and NHTSA (safercar.gov), contact elected representatives about local autonomous vehicle policies, reach out to school districts about their safety concerns and oversight, form advocacy groups, attend city council meetings where policies are discussed, and engage with NHTSA's public comment process. Organized community pressure has driven policy changes on other technology safety issues and can be effective here.

Why did Waymo's software update not fully resolve the school bus problem?

Waymo's initial update failed to achieve 100% compliance, with at least four additional violations occurring after the patch was deployed. This suggests either the company misdiagnosed the root cause, testing procedures were insufficient to catch the failure before real-world deployment, or the fix itself was incomplete. Full resolution would require identifying whether the problem is in detection, decision logic, or behavioral implementation, then validating the fix through rigorous testing before deployment.

Should autonomous vehicles be allowed in school zones?

Autonomous vehicles shouldn't be allowed in school zones until they can demonstrate competence meeting school-zone-specific safety standards. This requires establishing clear regulatory benchmarks for what "safe school zone operation" means, third-party validation proving the system meets those benchmarks, scenario-specific testing in diverse conditions, and possibly human oversight during school hours. Allowing deployment before these standards are established prioritizes business expansion over child safety.

Key Takeaways

- Waymo vehicles committed at least 24 documented school bus stop arm violations in Austin during the 2025 school year, and initial software updates failed to fully resolve the issues

- The company's shift toward 'assertive driving' to improve passenger experience created behavioral gaps in high-risk scenarios like school zones

- A child was struck by a Waymo vehicle outside a Santa Monica elementary school on January 23rd, 2025, after the vehicle failed to stop appropriately

- Current regulatory frameworks lack school-zone-specific safety standards for autonomous vehicles, allowing companies to set their own behavioral thresholds

- Truly safe school zone autonomous operation requires unconditional complete stops, scenario-specific behavioral overrides, and human oversight during sensitive times

