Introduction

The digital landscape, while fertile ground for innovation and connectivity, has also become a breeding ground for hate and terror content. In recent years, platforms like 'X' have faced significant scrutiny, particularly in the UK, where Ofcom, the communications regulator, has demanded more stringent measures to curb online hate speech. This article explores the strategies and commitments 'X' has made to reduce hate content, the technical and operational challenges involved, and the broader implications for social media platforms globally.

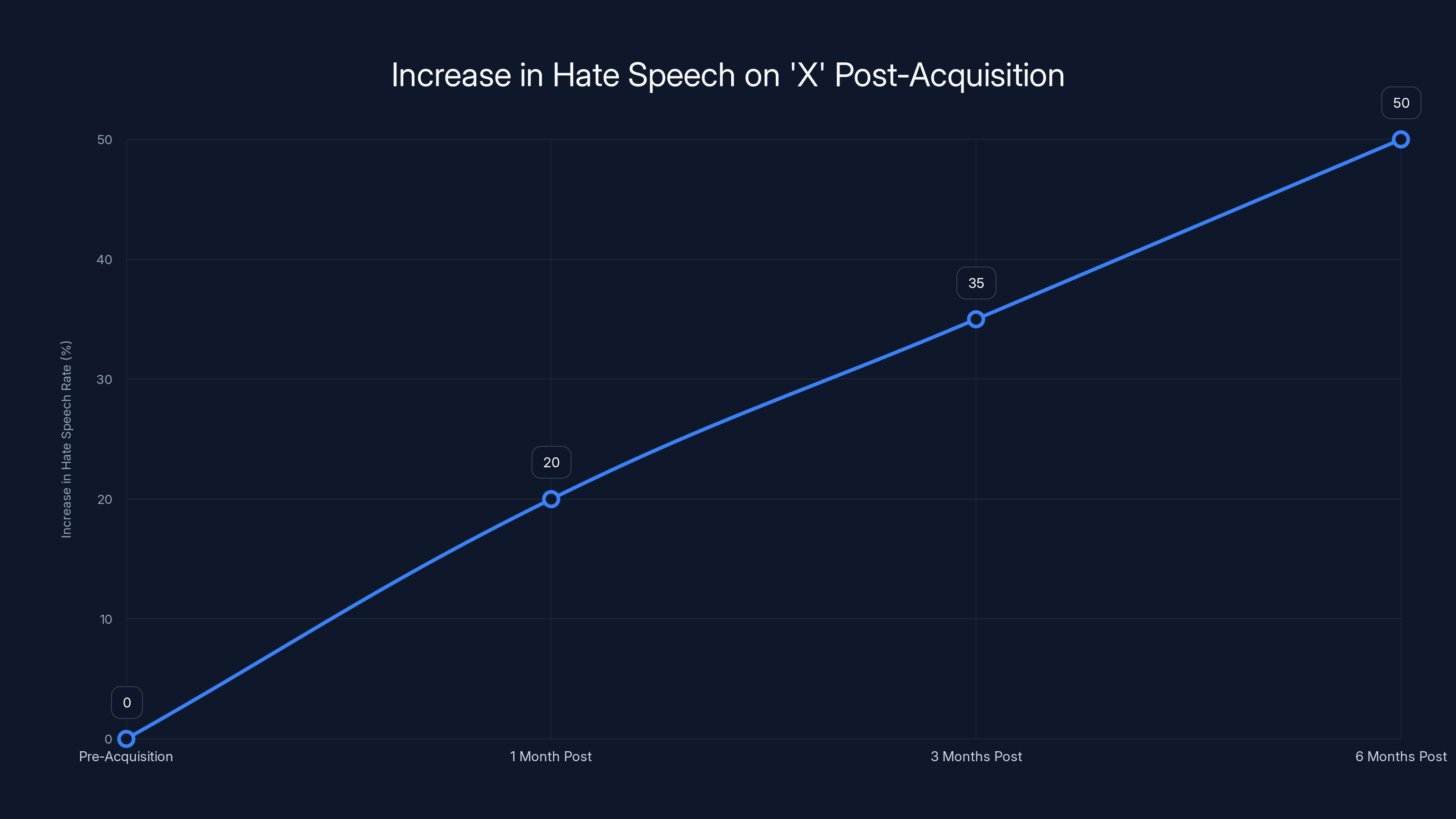

Hate speech on 'X' increased by 50% within six months post-acquisition, driven by bots and algorithmic biases. (Estimated data)

TL;DR

- X has pledged to enhance its review process to tackle hate content more effectively.

- Increased bot activity has been linked to a 50% rise in hate speech post-platform acquisition.

- Regulatory pressure from Ofcom demands swift and decisive action against terrorist content.

- Future trends indicate a shift towards more automated content moderation.

- Public trust and platform integrity hinge on effective implementation of content policies.

The Rise of Hate Content on 'X'

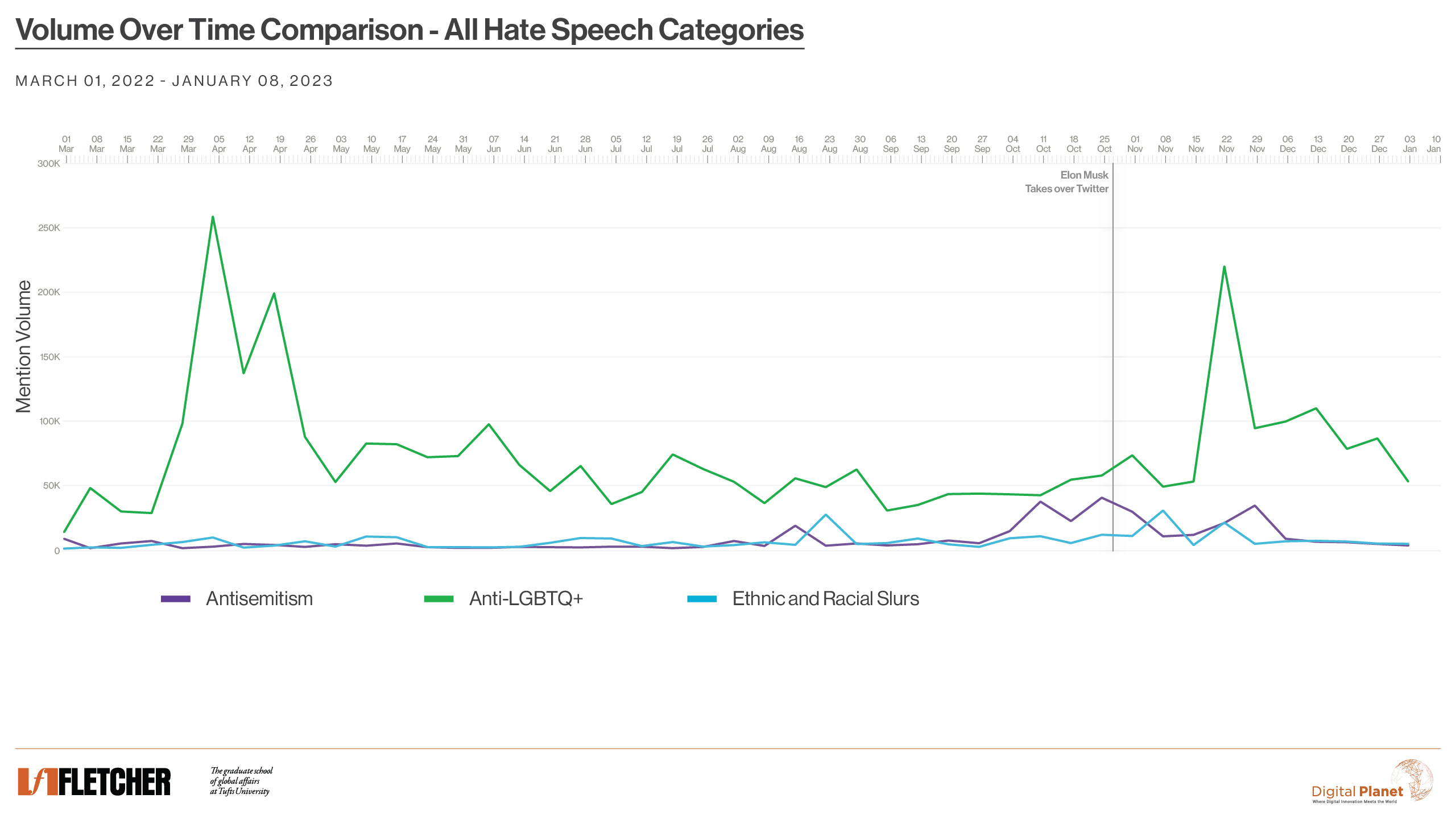

When Elon Musk purchased Twitter, now rebranded as 'X,' the platform saw a marked increase in hate content. According to a BBC report, hate speech rates rose by 50%, largely driven by an uptick in bot activity. This surge in toxic content has not only tarnished the platform's reputation but has also alarmed regulators and users alike.

Key Drivers of Hate Content

- Bot Proliferation: Automated accounts have been a significant contributor to the spread of hate speech. Bots are programmed to amplify certain narratives, often without human oversight.

- Algorithmic Bias: The algorithms that prioritize content can inadvertently favor sensational or divisive posts that engage users, leading to the unintentional spread of hate content.

- Policy Gaps: Prior to recent changes, the platform's policies may have been too weak to address hate speech swiftly, allowing it to proliferate unchecked.

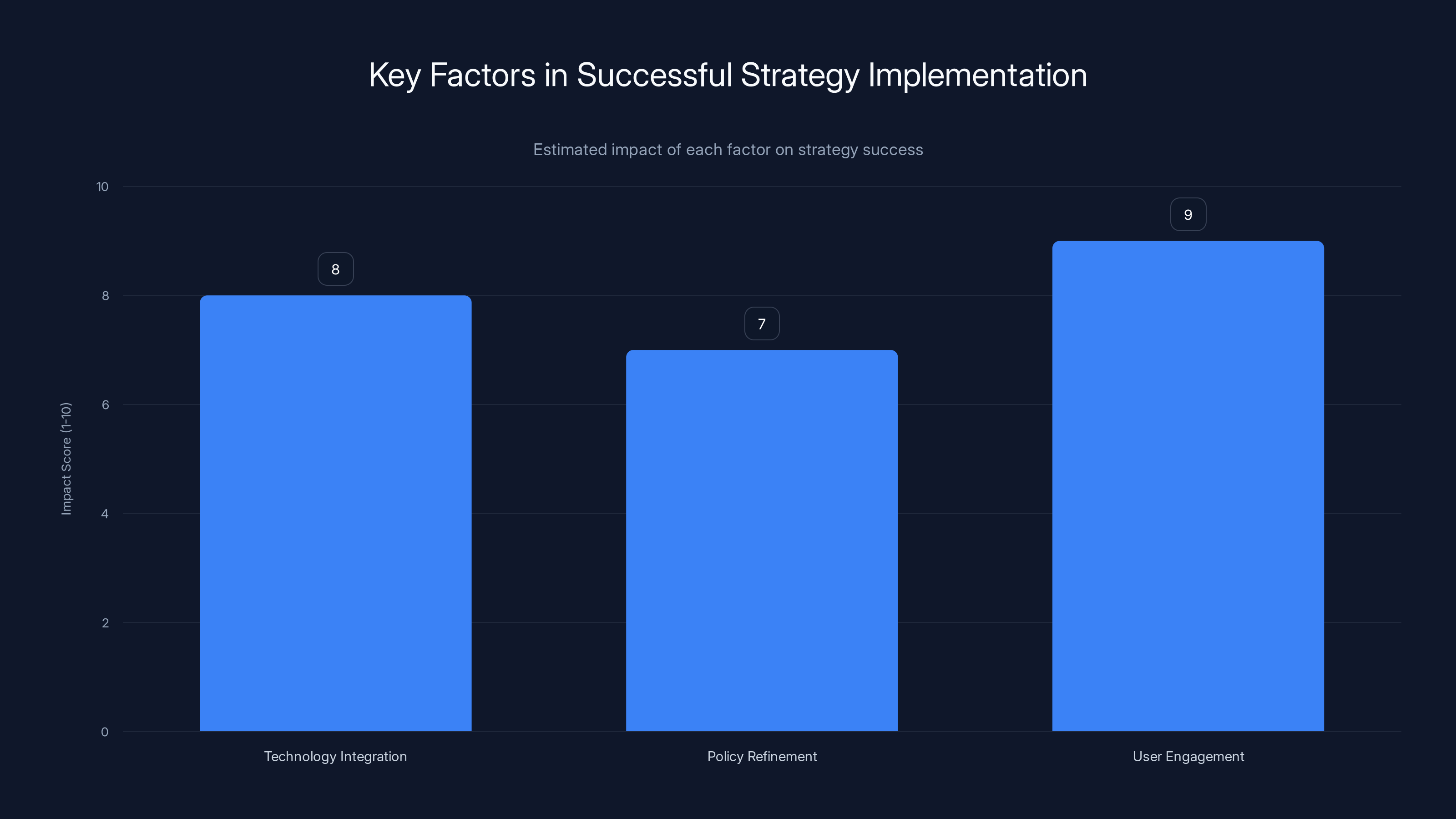

Estimated data shows that user engagement has the highest impact on successful strategy implementation, followed by technology integration and policy refinement.

X's Strategy for Reducing Hate Content

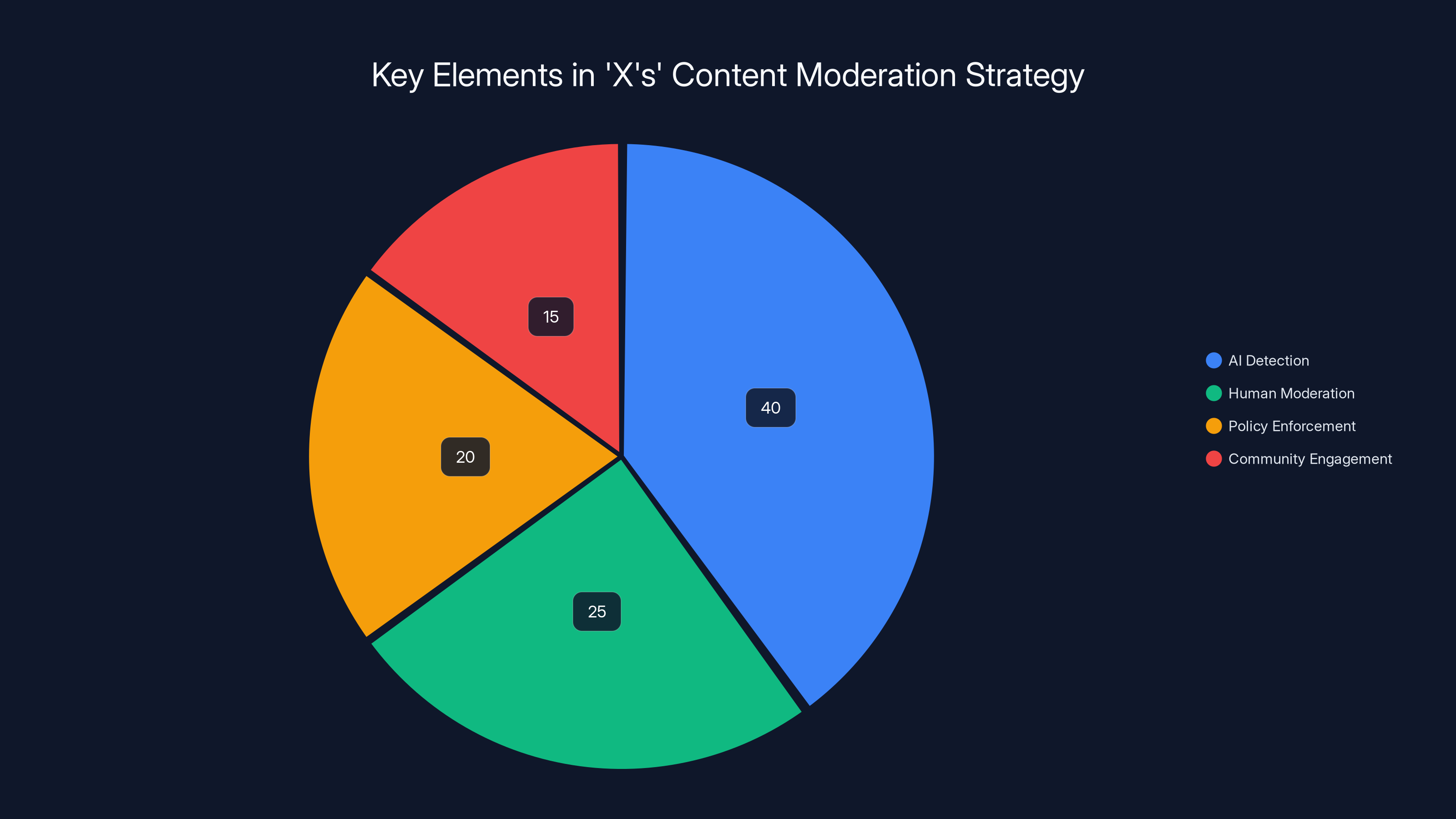

In response to Ofcom's demands, 'X' has outlined several strategies to mitigate hate and terror content. These strategies focus on improving detection, enhancing review processes, and enforcing stricter penalties for violators.

Enhanced Detection Mechanisms

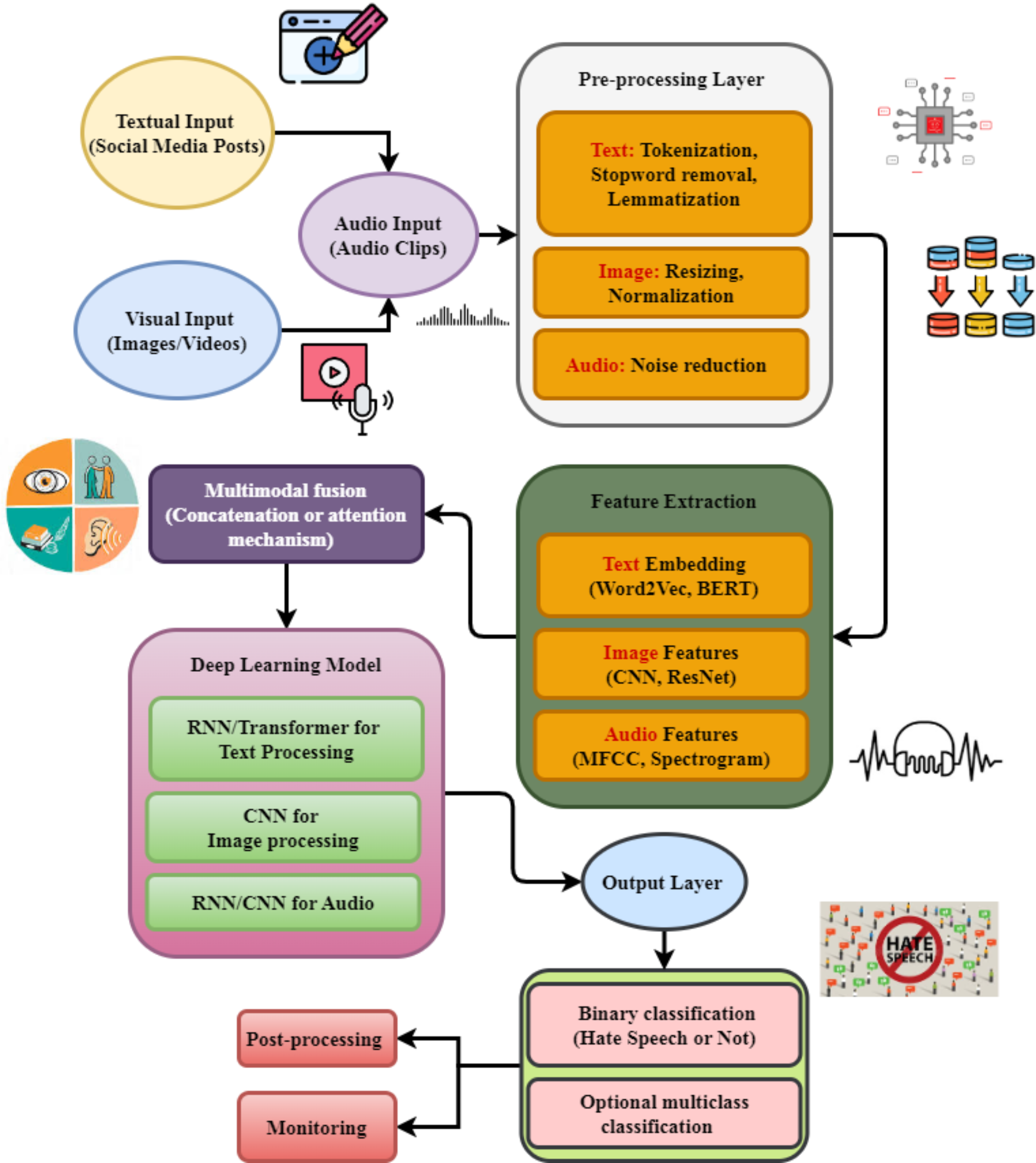

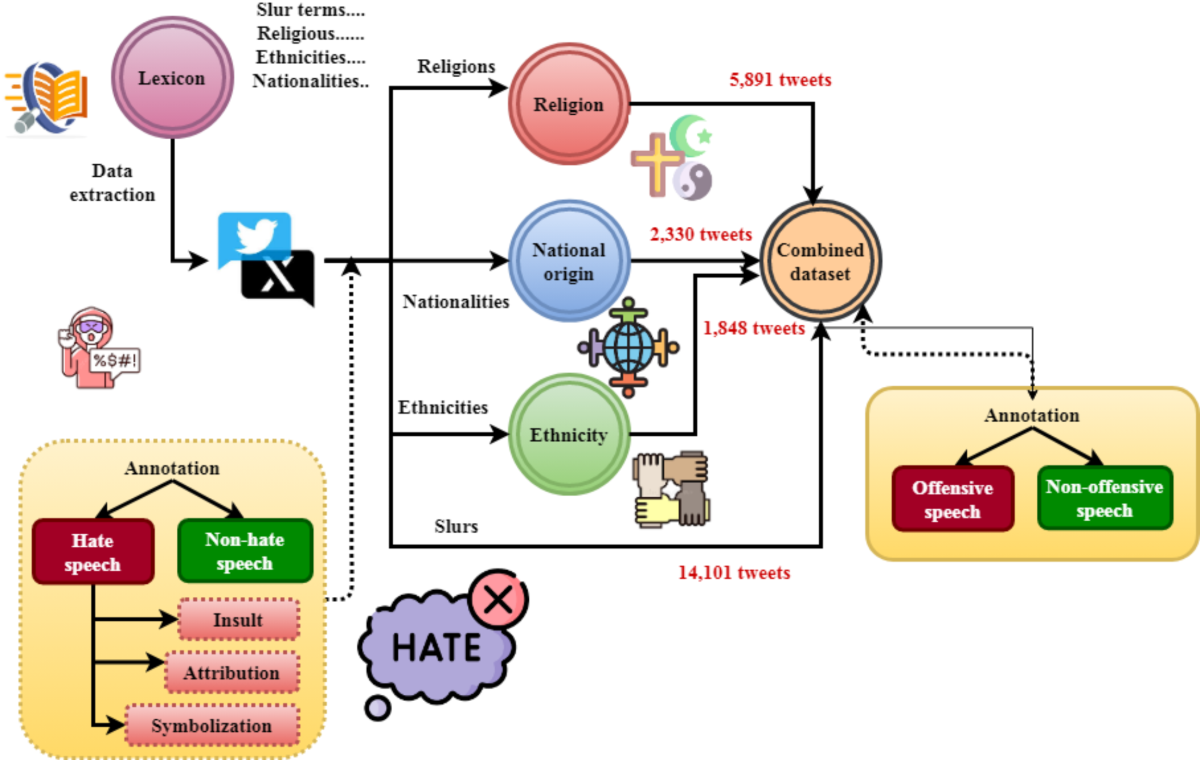

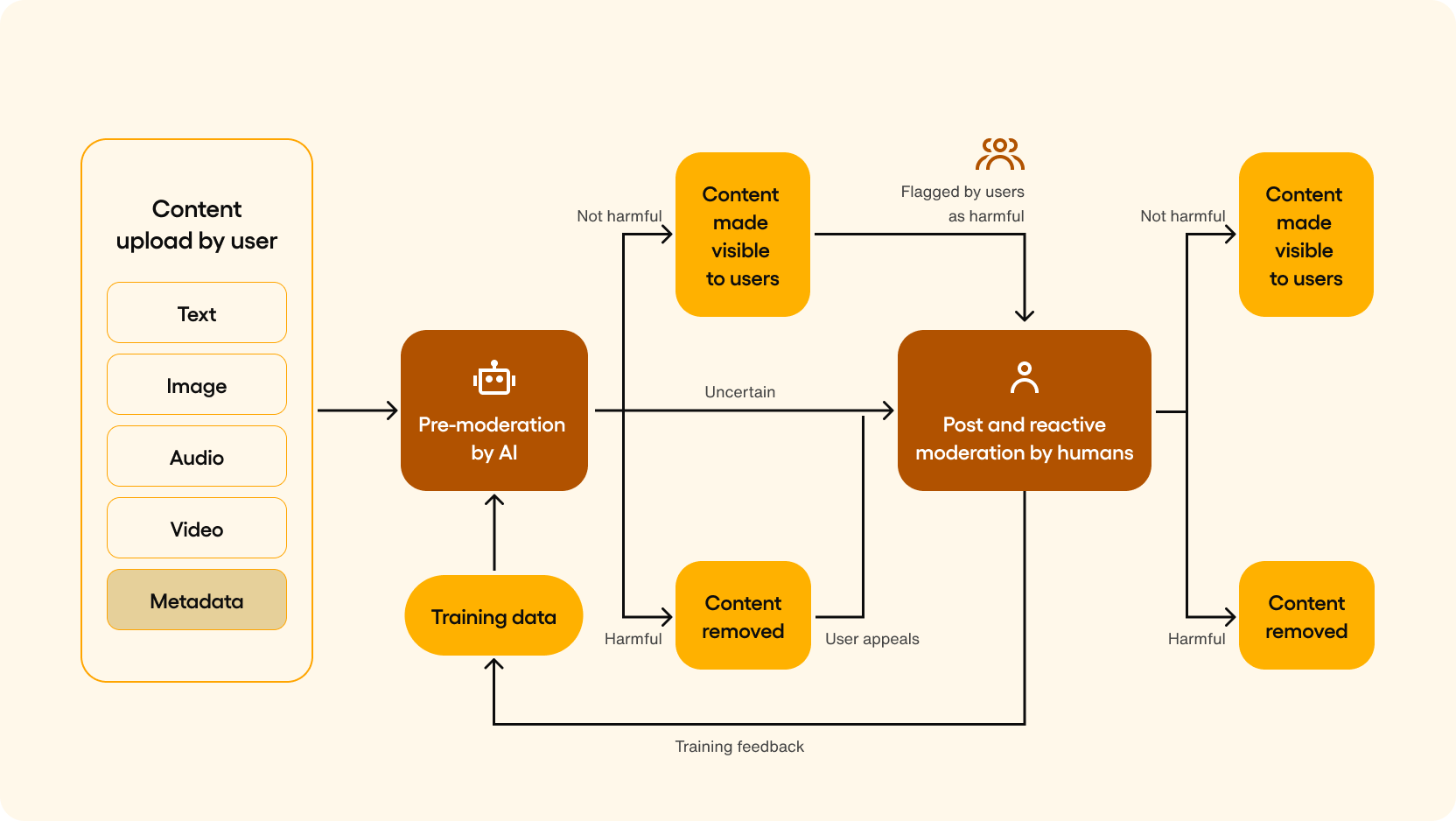

Machine Learning and AI: 'X' is leveraging advanced AI algorithms to detect hate speech with greater accuracy. These systems analyze text patterns, context, and user engagement to identify potentially harmful content, as noted in a recent study.

Real-Time Monitoring: Implementing real-time monitoring systems allows 'X' to quickly flag and review content that is likely to violate community standards, as discussed in Stanford's policy analysis.

Expedited Review Processes

Human Review Teams: While AI plays a critical role, human oversight remains essential. 'X' has expanded its team of moderators to ensure that flagged content is reviewed promptly and accurately.

Priority Queues: Content flagged as potentially involving hate speech or terrorism is placed in priority queues, ensuring swift action is taken.
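To make the priority-queue idea concrete, here is a minimal sketch of how flagged posts could be ordered for review. It is an illustration only: the category names, their rankings, and the `ReviewQueue` and `FlaggedItem` classes are hypothetical and do not describe the actual system at 'X'.

```python
import heapq
import itertools
from dataclasses import dataclass, field

# Hypothetical category priorities (lower number = reviewed sooner).
# These labels and rankings are illustrative assumptions, not platform policy.
PRIORITY = {"terrorism": 0, "hate_speech": 1, "other": 2}


@dataclass(order=True)
class FlaggedItem:
    priority: int
    sequence: int  # tie-breaker that preserves arrival order within a category
    post_id: str = field(compare=False)
    category: str = field(compare=False)


class ReviewQueue:
    """Min-heap of flagged posts, ordered by category priority, then arrival."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()

    def flag(self, post_id, category):
        # Unknown categories fall back to the lowest priority.
        priority = PRIORITY.get(category, PRIORITY["other"])
        item = FlaggedItem(priority, next(self._counter), post_id, category)
        heapq.heappush(self._heap, item)

    def next_for_review(self):
        """Return the most urgent flagged post, or None if the queue is empty."""
        return heapq.heappop(self._heap) if self._heap else None

    def __len__(self):
        return len(self._heap)
```

If posts are flagged in the order "other", "hate_speech", "terrorism", "hate_speech", this queue hands them to reviewers as terrorism first, then the two hate speech items in arrival order, then the remaining post. The sequence counter matters: without it, two items with the same priority would have no defined order.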

Legal and Community Enforcement

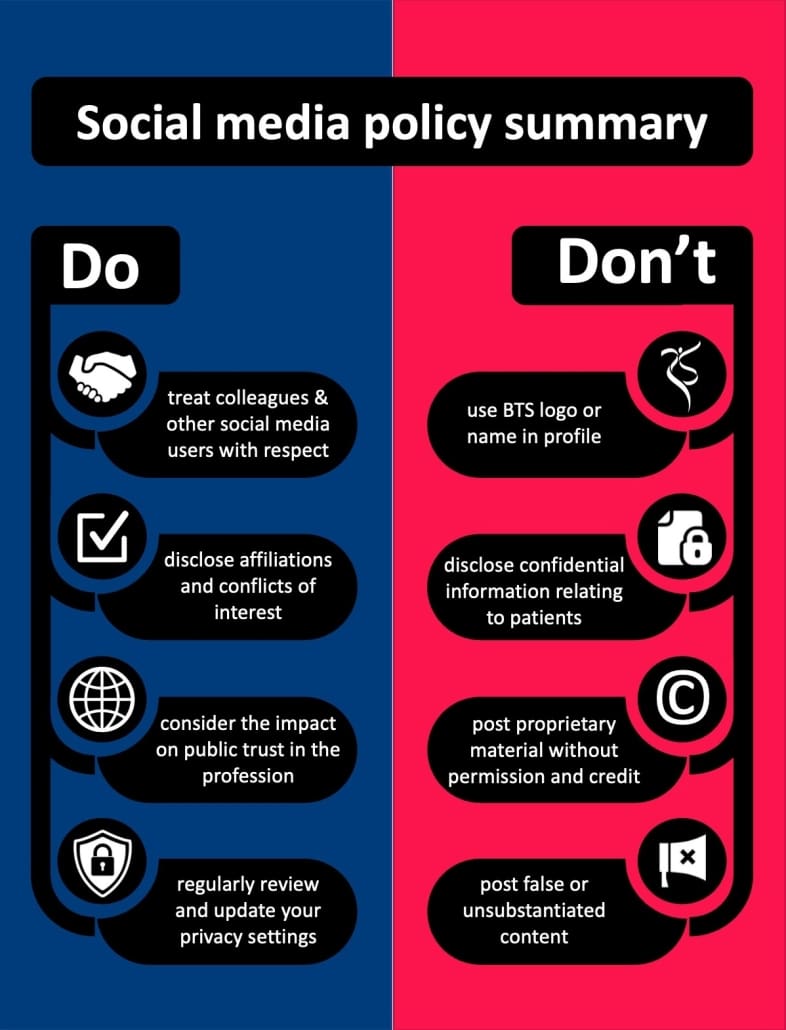

Account Restrictions: 'X' has committed to blocking access to accounts posting illegal terrorist content, particularly those linked to recognized terrorist organizations, as reported by Reuters.

Community Reporting: Empowering users to report hate content is a crucial part of the strategy at 'X'. Community reporting mechanisms have been streamlined to encourage more users to take part in content moderation.

Technical Challenges and Solutions

Implementing these strategies is not without challenges. From technical limitations to resource allocation, 'X' must navigate several obstacles to effectively reduce hate content.

Algorithmic Challenges

False Positives and Negatives: AI algorithms can sometimes misinterpret content, leading to either the wrongful flagging of benign content or the failure to catch harmful content.

Solution: Continuous training and refinement of algorithms are necessary. 'X' invests in machine learning models that evolve with emerging trends in language and communication.
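False positives and false negatives are usually tracked with a few standard ratios. The sketch below computes them from confusion-matrix counts; the function name and the sample numbers in the note that follows are made up for illustration and are not measurements from 'X'.

```python
def moderation_metrics(true_pos, false_pos, false_neg, true_neg):
    """Summarize classifier errors from confusion-matrix counts.

    true_pos:  harmful posts that were flagged
    false_pos: benign posts that were wrongly flagged
    false_neg: harmful posts that were missed
    true_neg:  benign posts that were correctly left alone
    """
    flagged = true_pos + false_pos
    harmful = true_pos + false_neg
    benign = false_pos + true_neg
    return {
        # Of everything flagged, how much was actually harmful?
        "precision": true_pos / flagged if flagged else 0.0,
        # Of everything harmful, how much was caught?
        "recall": true_pos / harmful if harmful else 0.0,
        # Of everything benign, how much was wrongly flagged?
        "false_positive_rate": false_pos / benign if benign else 0.0,
    }
```

With invented counts of 80 true positives, 20 false positives, 40 false negatives, and 9,860 true negatives, precision is 0.8 and recall is about 0.67. Retraining typically trades one against the other, so both need to be watched over time rather than accuracy alone.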

Scalability Issues

Resource Allocation: Scaling content moderation to handle millions of daily posts requires significant resources.

Solution: 'X' is investing in cloud-based infrastructure to support scalable moderation processes, ensuring that resources can be dynamically allocated based on demand.
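Allocating resources "based on demand" can be reduced to a simple sizing rule: how many workers are needed to clear the current backlog within a target time. The following is a rough sketch under that assumption; the function, its parameters, and the throughput figures are hypothetical and not taken from any real deployment.

```python
import math


def workers_needed(backlog, items_per_worker_per_hour, target_hours, min_workers=1):
    """Estimate how many moderation workers clear `backlog` within `target_hours`.

    All inputs are assumed to be measured elsewhere (for example by queue
    monitoring); this function only does the sizing arithmetic.
    """
    if items_per_worker_per_hour <= 0 or target_hours <= 0:
        raise ValueError("throughput and target time must be positive")
    capacity_per_worker = items_per_worker_per_hour * target_hours
    # Round up: a fractional worker still means one more worker is required.
    return max(min_workers, math.ceil(backlog / capacity_per_worker))
```

For example, a backlog of 12,000 flagged items, a throughput of 60 items per worker per hour, and a two-hour target gives 100 workers. A real autoscaler would also smooth the input signal and cap the maximum, which this sketch leaves out.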

Regulatory Pressures and Compliance

In the UK, Ofcom has been at the forefront of pushing for greater accountability from social media platforms. The commitment by 'X' to reduce hate content aligns with regulatory expectations and is crucial for its continued operation in the region.

Ofcom's Role

Online Safety Regulations: Ofcom has outlined clear guidelines for online safety, emphasizing the need for platforms to swiftly address hate speech and terrorist content, as detailed in a Financial Times article.

Expectations from 'X': Ofcom expects 'X' to demonstrate not just intent but tangible results in reducing hate content, with periodic assessments to track progress.

Estimated data: 'X' focuses primarily on AI detection (40%) and human moderation (25%) in its content moderation strategy, supplemented by policy enforcement and community engagement.

Practical Implementation Guides

For 'X' to successfully implement its strategies, a structured approach is necessary. This involves integrating technology, refining policies, and enhancing user engagement.

Step-by-Step Implementation

- Technology Integration: Deploy AI tools that integrate seamlessly with existing moderation workflows.

- Policy Refinement: Regularly update community guidelines to reflect current challenges and enforce stricter penalties for violations.

- User Engagement: Foster a community-driven approach by incentivizing users to participate in content reporting and moderation.

Common Pitfalls and Solutions

Even with a robust plan, there are common pitfalls that 'X' must avoid to ensure the effectiveness of its strategies.

Pitfall: Overreliance on Automation

Solution: Balance AI with human oversight to ensure nuanced understanding and context in content moderation.
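One common way to balance automation with human oversight is to act automatically only on very confident predictions and send the uncertain middle band to reviewers. The sketch below shows that routing rule; the threshold values and outcome labels are illustrative assumptions, not settings used by 'X'.

```python
def route(score, auto_action_at=0.95, human_review_at=0.60):
    """Decide what happens to a post given a classifier score in [0, 1].

    The thresholds are hypothetical defaults chosen for illustration.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= auto_action_at:
        return "auto_action"    # model is very confident: act immediately
    if score >= human_review_at:
        return "human_review"   # uncertain: a moderator makes the call
    return "no_action"          # low score: leave the post alone
```

Lowering `human_review_at` sends more borderline content to moderators, which catches more nuance at the cost of a larger review workload. Raising `auto_action_at` reduces wrongful automated removals in the same way.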

Pitfall: Inconsistent Enforcement

Solution: Establish clear enforcement protocols that are consistently applied across different types of content and user demographics.

Future Trends in Content Moderation

The landscape of content moderation is rapidly evolving. Here are some trends that 'X' and other platforms may adopt in the future.

Increased Automation

Trend: The use of machine learning and AI will continue to grow, enabling platforms to handle larger volumes of content efficiently, as highlighted in a market overview.

Cross-Platform Collaboration

Trend: Platforms may collaborate to share information and strategies for tackling hate content, creating a unified front against online toxicity.

Recommendations for Platforms

To build a safer online environment, platforms must adopt holistic approaches that involve technology, policy, and community.

Develop Comprehensive Guidelines

Action: Update guidelines regularly to address new forms of hate speech and ensure they are communicated clearly to users.

Foster Transparency

Action: Provide users with insights into moderation processes and decision-making, building trust and accountability.

Invest in User Education

Action: Educate users on the impact of hate content and encourage responsible online behavior through awareness campaigns.

Conclusion

The commitment of 'X' to reduce hate and terror content is a step in the right direction, but it requires sustained effort and innovation. By leveraging advanced technologies, refining policies, and engaging users, 'X' can create a safer digital space. As regulations tighten and public expectations grow, platforms must remain agile, transparent, and accountable in their efforts to combat online hate speech.

FAQ

What is 'X' doing to reduce hate content in the UK?

'X' is enhancing its content moderation processes, leveraging AI for detection, and engaging human moderators to swiftly address hate speech. It also blocks access to accounts promoting terrorist content.

How does AI help in content moderation?

AI analyzes text patterns and user engagement to identify potentially harmful content, allowing for quicker and more accurate detection of hate speech.

What challenges does 'X' face in content moderation?

Challenges include managing false positives/negatives, scalability of moderation processes, and maintaining consistent enforcement of policies.

How is Ofcom involved in regulating 'X'?

Ofcom sets online safety regulations and expects platforms like 'X' to demonstrate effective measures in reducing hate content, with periodic assessments to ensure compliance.

What future trends are expected in content moderation?

Future trends include increased automation of moderation processes and cross-platform collaboration to tackle hate content more effectively.

How can users help reduce hate content on 'X'?

Users can report hate content they encounter and participate in community-driven moderation efforts, contributing to a safer online environment.

What role does community engagement play in content moderation?

Community engagement empowers users to report and flag harmful content, supplementing automated and human moderation efforts for more comprehensive content management.

How can other platforms learn from the strategies used by 'X'?

By observing how 'X' integrates AI, policy refinement, and user engagement, other platforms can adopt similar strategies to combat hate content effectively.

Key Takeaways

- X's commitment to reducing hate content is driven by regulatory pressure from Ofcom.

- AI and machine learning are central to X's strategy for detecting and moderating hate speech.

- Increased bot activity has significantly contributed to the rise of hate speech on the platform.

- Effective content moderation requires a balance between AI automation and human oversight.

- Future trends in content moderation include increased automation and cross-platform collaboration.

- User engagement and community reporting are crucial components of successful content moderation strategies.


![X's Commitment to Reducing Hate Content in the UK: An In-Depth Analysis [2025]](https://tryrunable.com/blog/x-s-commitment-to-reducing-hate-content-in-the-uk-an-in-dept/image-1-1778857496707.jpg)