Introduction

In recent years, the spread of illegal hate speech and terror-related content online has become a pressing issue for governments worldwide. The UK has responded with stricter rules aimed at curbing harmful content on digital platforms. This article examines the UK's approach: its regulatory framework, the technology used to enforce it, and the collaborative efforts behind it.

TL;DR

- New Regulations: The UK has introduced stricter laws to combat hate speech and terror content, as highlighted in The Guardian.

- Technological Solutions: AI and machine learning are pivotal in identifying and removing illegal content, according to Dig Watch.

- Platform Accountability: Social media platforms face hefty fines for non-compliance, as reported by Reuters.

- Collaborative Efforts: Partnerships with tech companies and international bodies are crucial, as noted in Lenovo's press release.

- Future Trends: Expect more sophisticated AI tools and increased focus on digital literacy, as discussed in Ellucian's blog.

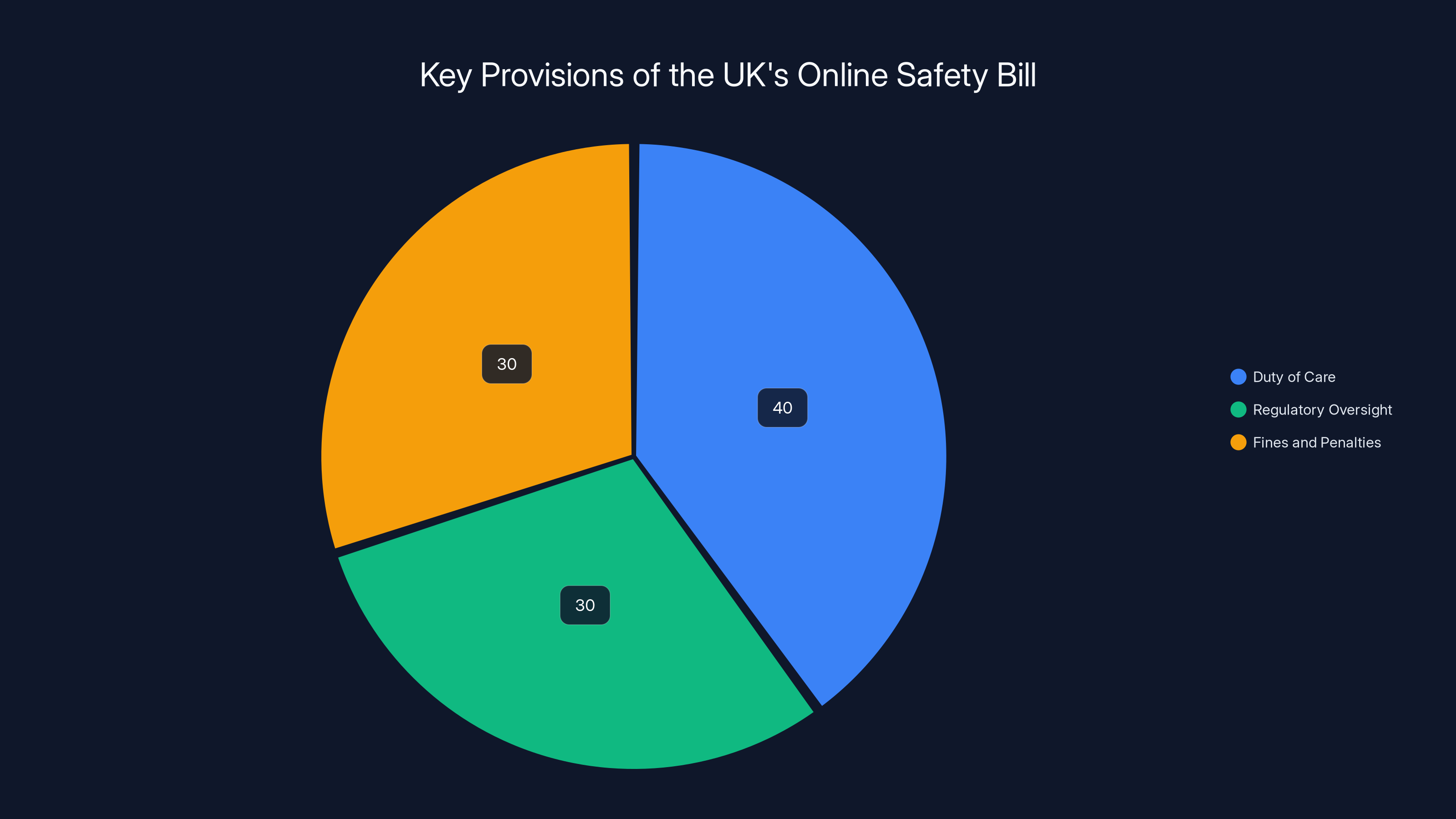

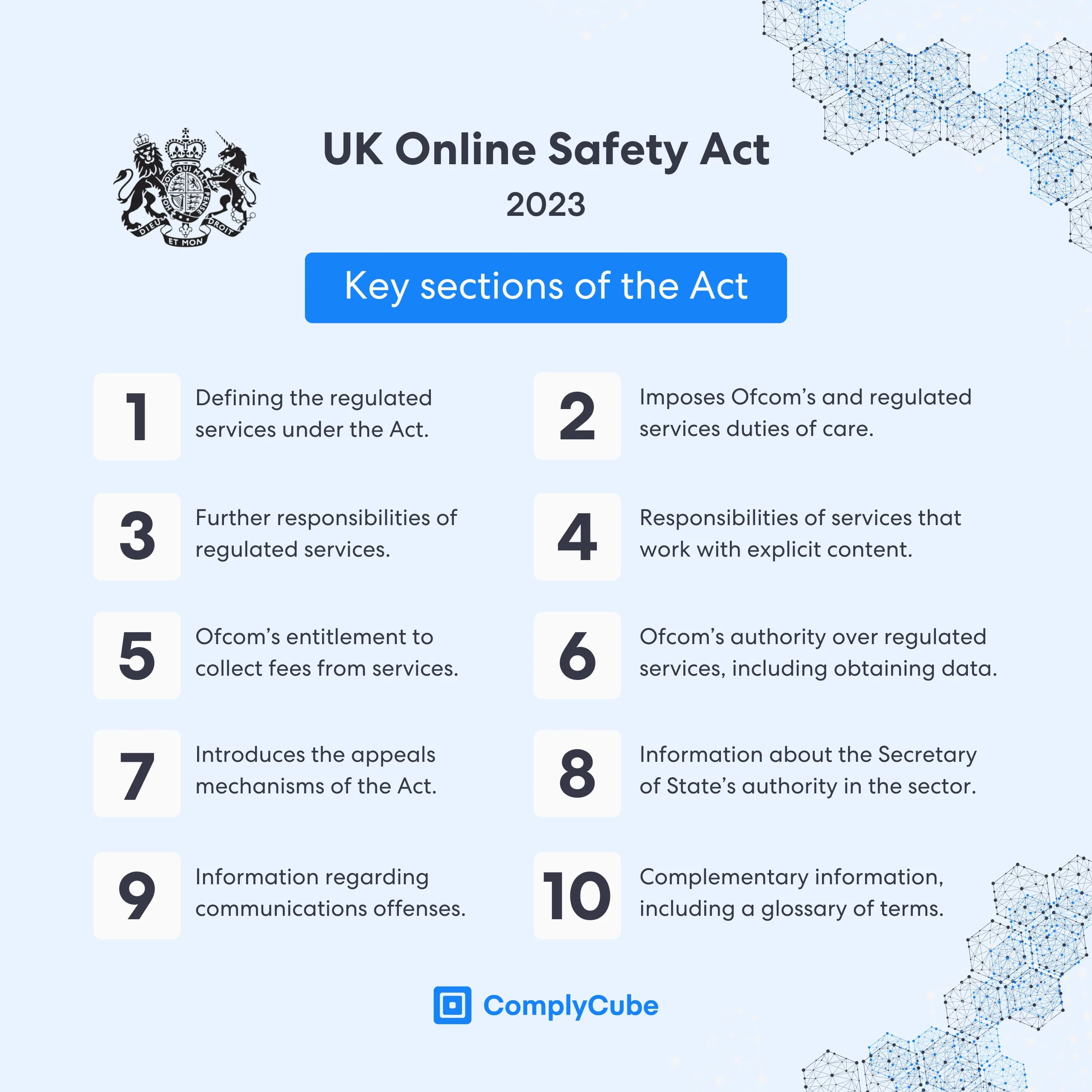

The Online Safety Bill focuses on Duty of Care (40%), Regulatory Oversight (30%), and Fines and Penalties (30%) to ensure user protection from harmful content. Estimated data.

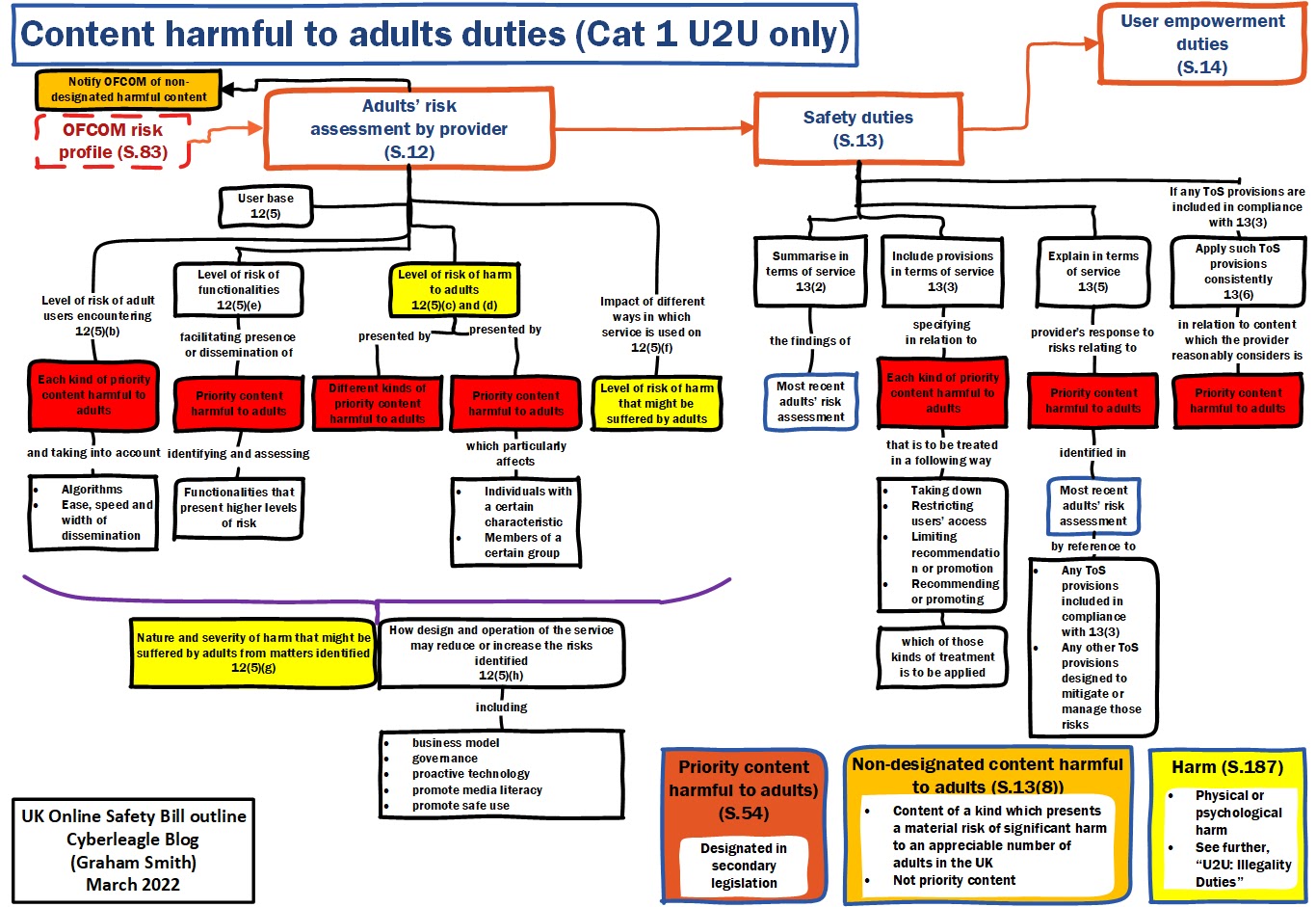

The Regulatory Landscape

The UK's legislative framework has evolved to address the challenges posed by online hate speech and terrorism. The cornerstone of these efforts is the Online Safety Act 2023, introduced as the Online Safety Bill, which imposes a duty of care on digital platforms to protect users from harmful content. Its requirements include:

- Identifying and Removing Content: Platforms must proactively identify and remove illegal content.

- Transparency Reports: Regular reports detailing content removal efforts and compliance.

- User Empowerment: Providing tools for users to report harmful content easily.

Key Provisions of the Online Safety Act

- Duty of Care: Platforms are legally obligated to protect users from harmful content.

- Regulatory Oversight: The Office of Communications (Ofcom) is empowered to enforce compliance, as detailed in Dig Watch.

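- Fines and Penalties: Non-compliant platforms may face fines of up to 10% of their global revenue, according to Bitdefender. The enacted legislation sets the cap at £18 million or 10% of qualifying worldwide revenue, whichever is greater.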
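The penalty ceiling is simple arithmetic, and a short sketch makes the effect of the fixed floor visible. The example below assumes the cap in the enacted legislation (the Online Safety Act 2023): the greater of £18 million or 10% of qualifying worldwide revenue. The function name `max_penalty_gbp` and the revenue figures are hypothetical and chosen only for illustration. The sketch shows the upper bound, not how Ofcom would set an actual fine.

```python
from decimal import Decimal

# Cap assumed from the Online Safety Act 2023: the greater of
# GBP 18 million or 10% of qualifying worldwide revenue.
FIXED_CAP_GBP = Decimal("18000000")
REVENUE_SHARE = Decimal("0.10")


def max_penalty_gbp(qualifying_worldwide_revenue_gbp: Decimal) -> Decimal:
    """Return the upper bound on a fine for a given revenue (illustrative only)."""
    if qualifying_worldwide_revenue_gbp < 0:
        raise ValueError("revenue must be non-negative")
    revenue_based_cap = qualifying_worldwide_revenue_gbp * REVENUE_SHARE
    return max(FIXED_CAP_GBP, revenue_based_cap)


# Hypothetical platforms: one small, one large.
small_platform_cap = max_penalty_gbp(Decimal("50000000"))    # revenue of GBP 50 million
large_platform_cap = max_penalty_gbp(Decimal("1000000000"))  # revenue of GBP 1 billion
```

For the smaller platform the fixed £18 million figure applies, because 10% of its revenue is only £5 million. For the larger one the revenue-based figure of £100 million applies.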

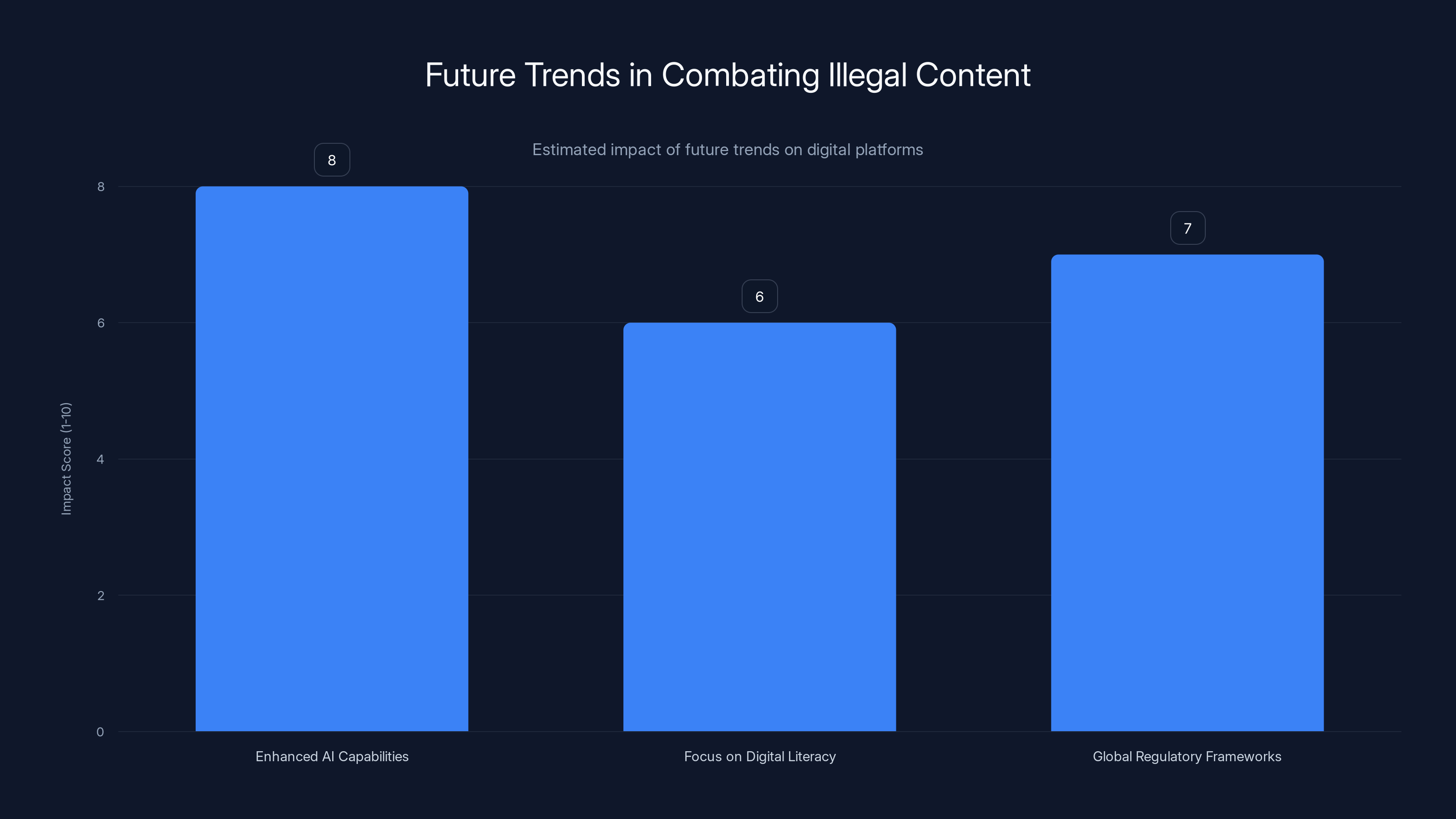

Enhanced AI capabilities are expected to have the highest impact on combating illegal content, followed by global regulatory frameworks and digital literacy. Estimated data.

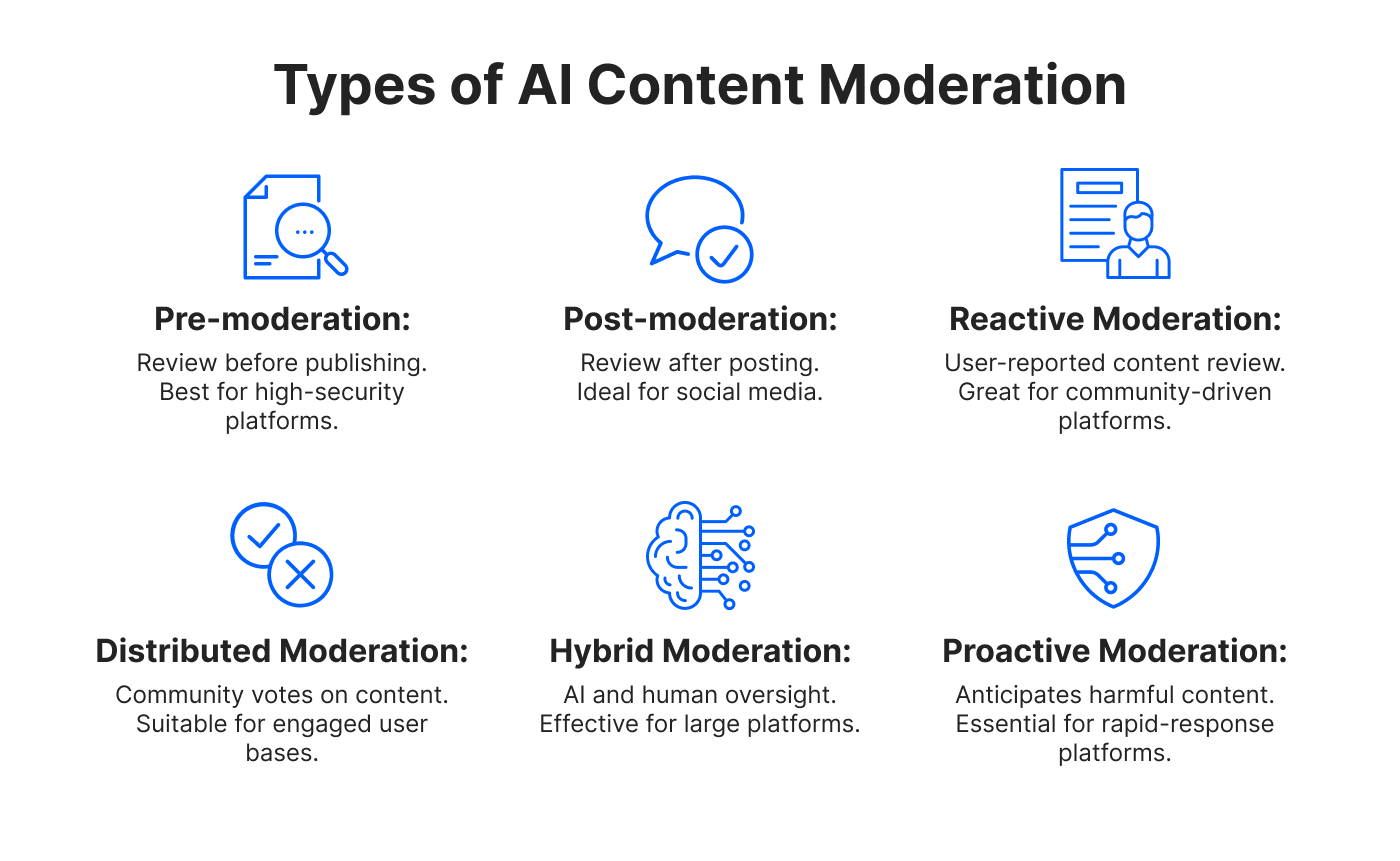

Technological Interventions

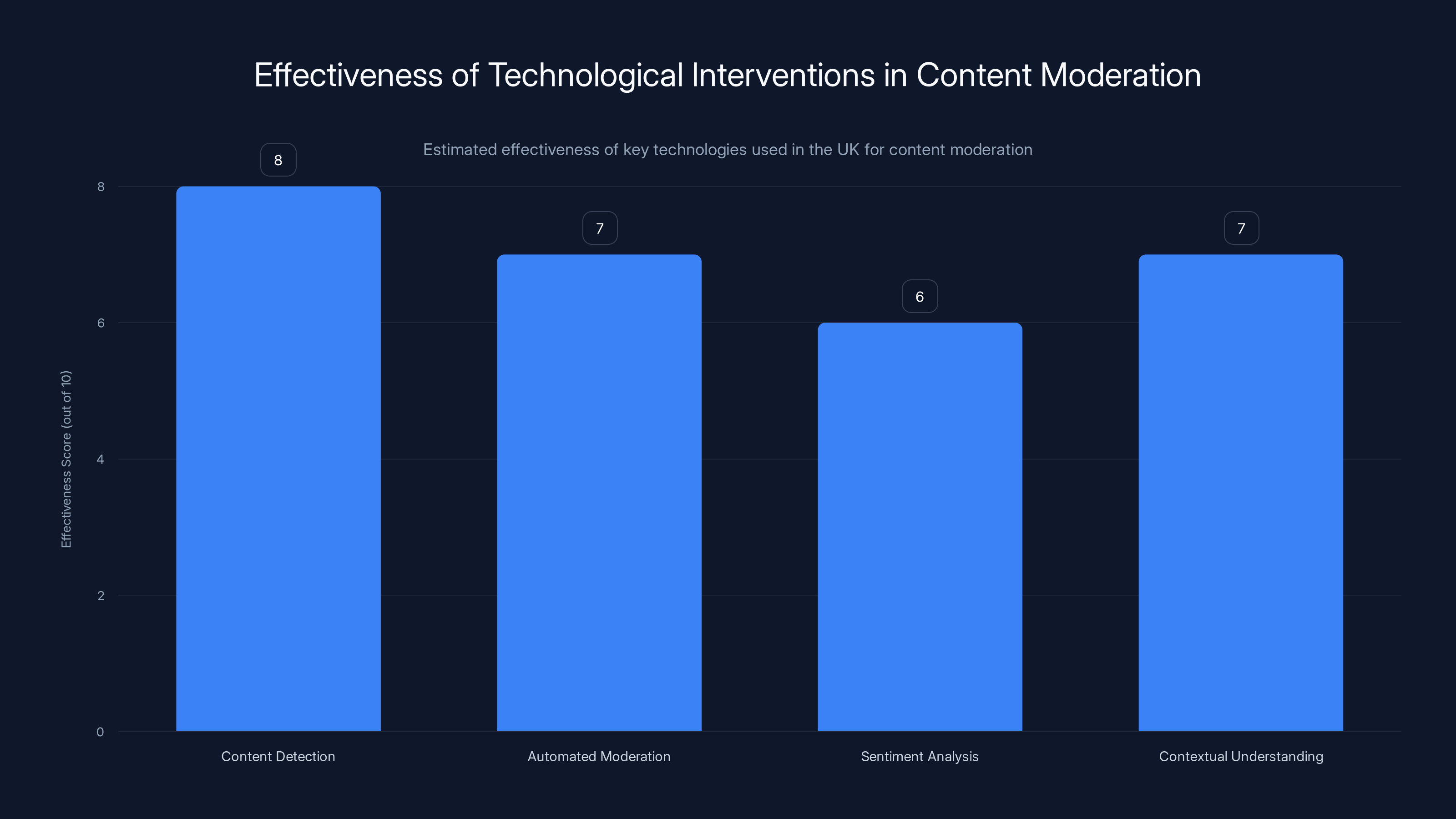

Technology plays a crucial role in the UK's strategy to combat illegal content. Here are some of the key technologies being utilized:

Artificial Intelligence and Machine Learning

AI and machine learning are at the forefront of content moderation efforts. These technologies help in:

- Content Detection: Algorithms analyze text, images, and videos to identify potential hate speech or terror content, as explained in Cambridge's German Law Journal.

- Automated Moderation: AI can automatically flag and, in some cases, remove harmful content.
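The distinction between flagging and removing content can be sketched as a threshold rule on a classifier's confidence score. This is a minimal illustration, not a description of any platform's actual system: the `Action` values, the `Thresholds` defaults, and the `triage` function are all hypothetical, and the classifier that produces the score is assumed to exist elsewhere.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    HUMAN_REVIEW = "human_review"
    REMOVE = "remove"


@dataclass(frozen=True)
class Thresholds:
    # Hypothetical values; a real platform would tune these per content category.
    review: float = 0.50
    remove: float = 0.95


def triage(score: float, thresholds: Thresholds = Thresholds()) -> Action:
    """Map a classifier confidence score in [0, 1] to a moderation action."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= thresholds.remove:
        return Action.REMOVE        # high confidence: act automatically
    if score >= thresholds.review:
        return Action.HUMAN_REVIEW  # uncertain: flag for a moderator
    return Action.ALLOW
```

Keeping a middle band that routes to human review is one way to limit automated removals to the cases where the model is most confident.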

Natural Language Processing (NLP)

NLP is used to understand the context and sentiment of online posts, improving the accuracy of content detection.

- Sentiment Analysis: Helps in identifying the tone of the content, distinguishing between neutral and harmful messages.

- Contextual Understanding: Enables the system to comprehend the nuances in language, reducing false positives.
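Whether contextual understanding reduces false positives can be checked by measuring the false positive rate on a human-labelled sample. The sketch below assumes such a sample is available as pairs of (flagged by the system, actually harmful). The function name and the sample data are hypothetical.

```python
from typing import Iterable, Tuple


def false_positive_rate(results: Iterable[Tuple[bool, bool]]) -> float:
    """Share of benign items that the system wrongly flagged.

    Each item is a (flagged_by_system, actually_harmful) pair.
    """
    false_positives = 0
    benign_total = 0
    for flagged, harmful in results:
        if not harmful:
            benign_total += 1
            if flagged:
                false_positives += 1
    if benign_total == 0:
        raise ValueError("sample contains no benign items")
    return false_positives / benign_total


# Hypothetical labelled sample: four benign posts, one of them wrongly flagged.
sample = [
    (True, True),    # harmful, flagged
    (True, False),   # benign, flagged (a false positive)
    (False, False),  # benign, not flagged
    (False, False),  # benign, not flagged
    (False, False),  # benign, not flagged
]
rate = false_positive_rate(sample)
```

Comparing this rate before and after a model change gives a concrete measure of whether the change helped.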

Platform Accountability and Compliance

Social media companies and other digital platforms are pivotal in the fight against illegal content. The UK's approach emphasizes accountability through:

- Hefty Fines: Platforms that fail to comply with regulations face significant financial penalties, as noted by Reuters.

- Mandatory Reporting: Regular submission of transparency reports to Ofcom.

- User Reporting Tools: Platforms must provide easy-to-use tools for users to report harmful content.
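A transparency report is, at its simplest, an aggregation of moderation records over a reporting period. The sketch below shows one possible shape for that aggregation. The `ModerationRecord` fields, the category labels, and the `summarise` function are hypothetical and do not reflect any format required by Ofcom.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class ModerationRecord:
    category: str  # hypothetical label, e.g. "hate_speech" or "terrorism"
    source: str    # "user_report" or "automated"
    removed: bool  # whether the item was taken down after review


def summarise(records: Iterable[ModerationRecord]) -> Dict[str, Dict[str, int]]:
    """Count reviewed, removed, and user-reported items per category."""
    reviewed: Counter = Counter()
    removed: Counter = Counter()
    user_reported: Counter = Counter()
    for record in records:
        reviewed[record.category] += 1
        if record.removed:
            removed[record.category] += 1
        if record.source == "user_report":
            user_reported[record.category] += 1
    return {
        category: {
            "reviewed": reviewed[category],
            "removed": removed[category],
            "user_reported": user_reported[category],
        }
        for category in reviewed
    }


# Hypothetical records for one reporting period.
records = [
    ModerationRecord("hate_speech", "automated", True),
    ModerationRecord("hate_speech", "user_report", False),
    ModerationRecord("terrorism", "user_report", True),
]
report = summarise(records)
```

Tracking the report source alongside the outcome shows how much of the moderation workload originates from user reporting tools rather than automated detection.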

Case Study: Facebook's Compliance Measures

Facebook has implemented robust systems to comply with the UK's regulations, including:

- AI-Driven Content Moderation: Automated systems to detect and remove harmful content.

- Transparency Initiatives: Regular publication of compliance and content removal reports.

AI and machine learning technologies are highly effective in content detection and automated moderation, with NLP enhancing sentiment analysis and contextual understanding. Estimated data.

Collaborative Efforts

Addressing illegal content is a complex issue that requires collaboration across sectors and borders. The UK has fostered partnerships with:

- Tech Companies: Collaborating with giants like Google and Twitter to enhance content moderation capabilities, as reported in Google's Ads Safety Report.

- International Bodies: Working with entities like the European Union to harmonize regulations and enforcement, as highlighted in Tech Policy Press.

Future Trends and Recommendations

As technology and tactics evolve, so too must the strategies to combat illegal content. Future trends include:

- Enhanced AI Capabilities: Continued development of AI tools for more accurate content detection, as discussed in Lexology.

- Focus on Digital Literacy: Educating users to recognize and report harmful content.

- Global Regulatory Frameworks: Harmonizing international laws to streamline enforcement.

Recommendations for Digital Platforms

- Invest in Technology: Enhance AI and machine learning capabilities.

- Foster Transparency: Regularly update users and regulators on content moderation efforts.

- Strengthen Partnerships: Collaborate with governments and other platforms to share best practices.

Common Pitfalls and Solutions

Despite best efforts, challenges remain in combating illegal content. Common pitfalls include:

- Over-Reliance on Automation: Solely relying on AI can lead to false positives. Solution: Maintain a balance with human oversight.

- Inconsistent Enforcement: Varying interpretations of regulations can lead to inconsistent enforcement. Solution: Clear guidelines and training for content moderators.

Conclusion

The UK's approach to tackling illegal hate and terror content online is comprehensive, involving a blend of regulation, technology, and collaboration. While significant progress has been made, the landscape is ever-changing, requiring continuous adaptation and innovation. By leveraging technology, fostering accountability, and encouraging global cooperation, the UK aims to create a safer digital environment for all.

FAQ

What measures is the UK taking to combat illegal content online?

The UK has enacted the Online Safety Act 2023 (introduced as the Online Safety Bill), which requires digital platforms to protect users from harmful content and is backed by regulatory oversight and penalties for non-compliance.

How do AI and machine learning assist in content moderation?

AI and machine learning are used to detect and remove illegal content by analyzing text, images, and videos. Natural language processing improves accuracy by taking context and sentiment into account.

What role do social media platforms play in these efforts?

Platforms like Facebook and Twitter are required to implement robust moderation systems, provide transparency reports, and empower users with reporting tools.

How important is collaboration in addressing illegal content?

Collaboration with tech companies and international bodies is crucial for sharing best practices, harmonizing regulations, and enhancing content moderation capabilities.

What future trends can we expect in combating illegal content?

Expect advancements in AI tools, a focus on digital literacy, and efforts to harmonize global regulatory frameworks to streamline enforcement.

Key Takeaways

- The UK's Online Safety Act (formerly the Online Safety Bill) holds platforms accountable for harmful content.

- AI and machine learning play a crucial role in detecting illegal content.

- Platforms face financial penalties for non-compliance with regulations.

- Collaboration with tech companies and international bodies is vital.

- Future trends include enhanced AI tools and a focus on digital literacy.

![How the UK is Addressing Illegal Hate and Terror Content Online [2025]](https://tryrunable.com/blog/how-the-uk-is-addressing-illegal-hate-and-terror-content-onl/image-1-1778850332682.jpg)