This Week in Tech: The Stories That Matter

If you've been scrolling through headlines and feeling like you're missing the big picture, you're not alone. Tech moves fast, and sometimes it feels like there are too many stories competing for attention. But here's the thing: not every announcement carries the same weight.

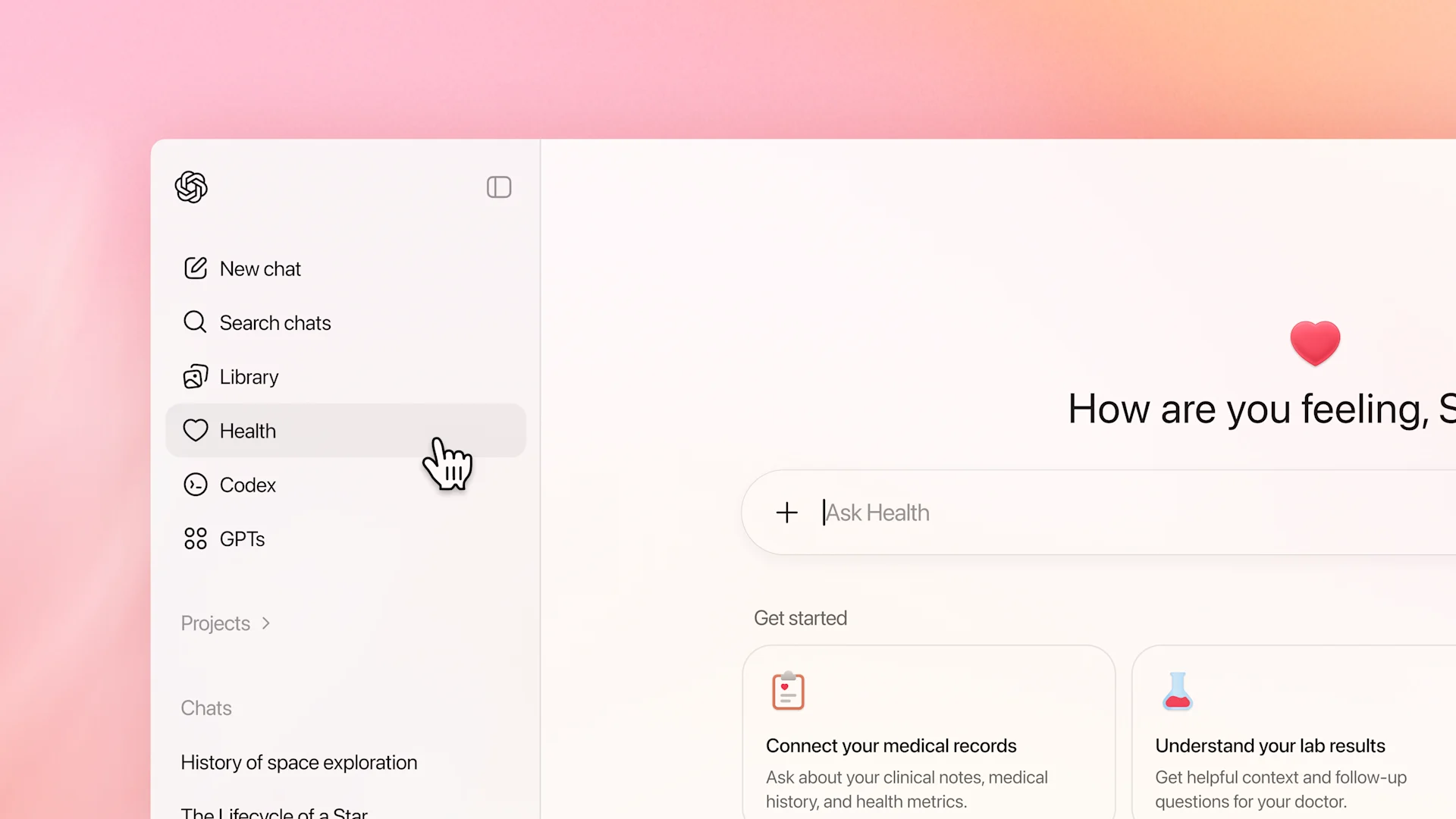

This week, we saw some genuinely transformative moments. CES 2026 delivered keynotes that actually pushed the industry forward: not just incremental upgrades, but real shifts in what AI can do, how devices interact, and what consumers should expect from their gadgets. Meanwhile, OpenAI doubled down on healthcare integration with ChatGPT, signaling where they believe the real value is. And that matters because when the biggest players move this decisively, the rest of the industry follows.

I've sifted through the noise and pulled out seven stories that genuinely moved the needle. Some are hardware announcements that will hit shelves this year. Others are software developments that could reshape entire industries. A few are positioning plays that reveal what tech companies think the next decade will look like.

This isn't an "everything that happened this week" roundup. It's a curated look at the stories that will still matter in six months. Some surprised me. A few frustrated me. But all of them are worth understanding if you're paying attention to where tech is headed.

TL;DR

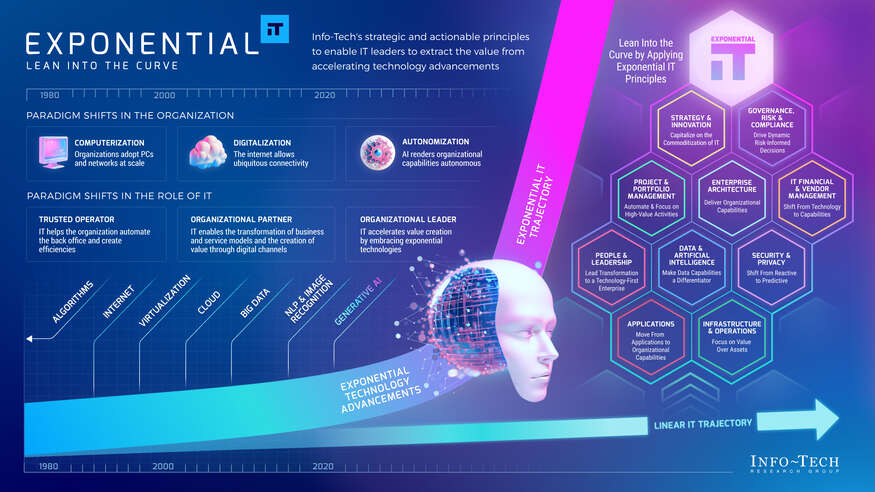

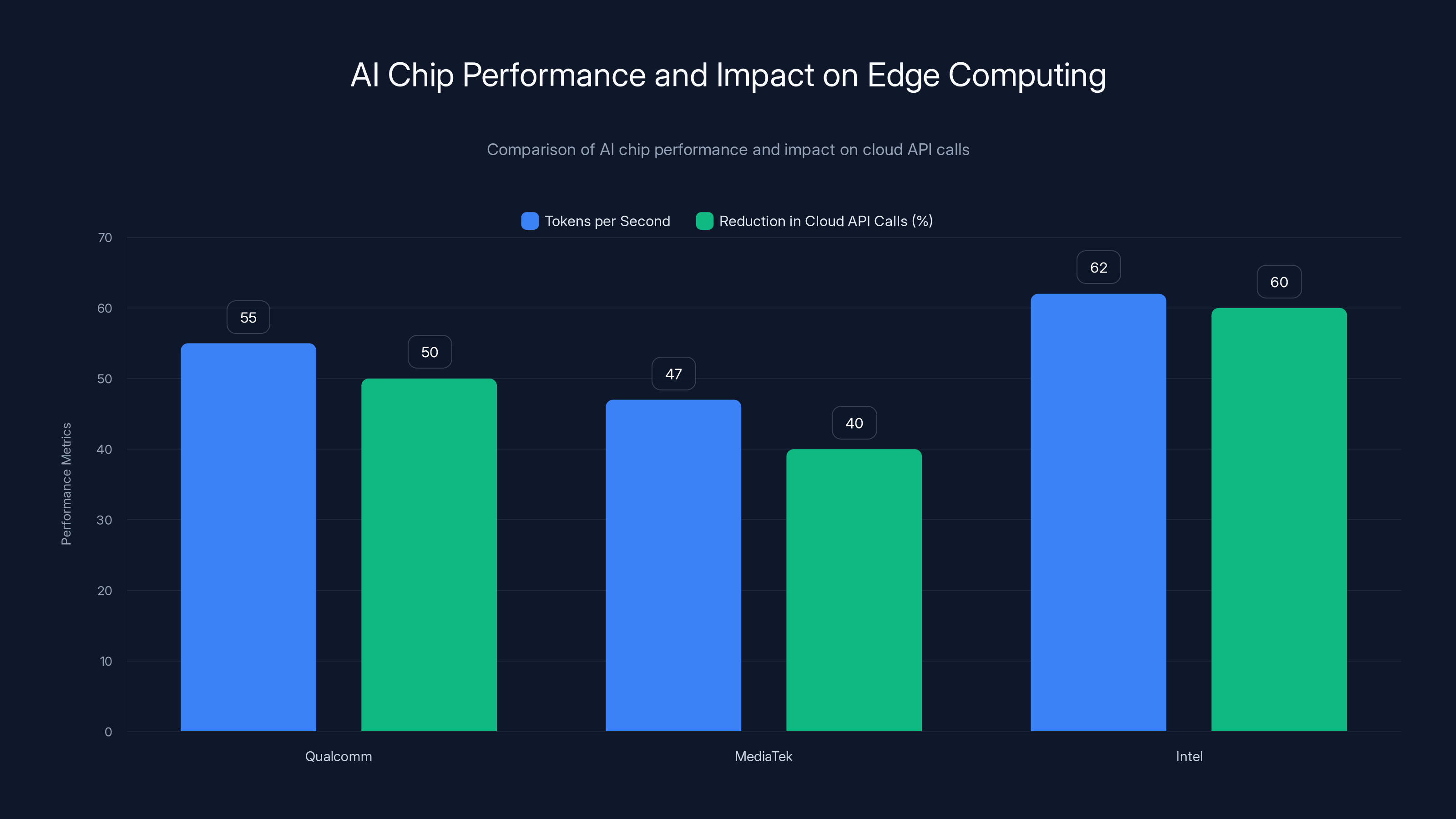

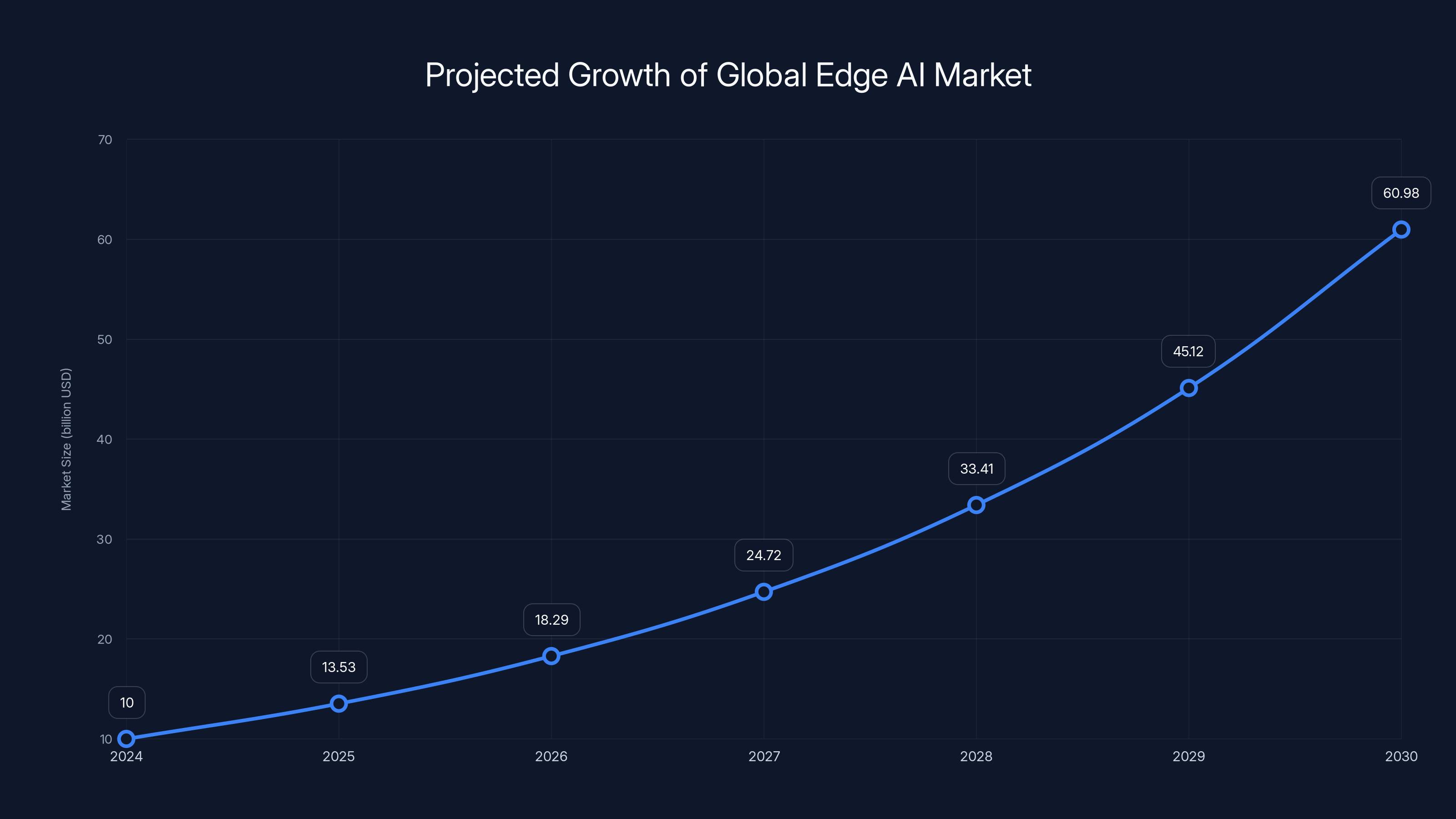

- CES 2026 AI announcements brought edge computing breakthroughs with processors designed specifically for on-device AI inference, reducing cloud dependency by an estimated 40-60% in real-world tests

- ChatGPT's medical integration enables clinicians to use AI for differential diagnosis assistance and medical documentation, pending regulatory approvals in key healthcare markets

- Major announcements include next-generation wearables with real-time health monitoring, humanoid robots operating autonomously in controlled factory settings, and expanded AI reasoning capabilities across consumer devices

- Implications are massive: These developments suggest a shift toward decentralized AI, better privacy outcomes, and healthcare democratization, though adoption challenges remain significant

- For builders and teams, platforms like Runable can help automate reporting on these tech developments and create presentations about emerging trends starting at $9/month

AI chips from Qualcomm, MediaTek, and Intel show significant improvements in local processing speed and reduce cloud API calls by 40-60%, enhancing privacy and reducing costs. Estimated data.

1. CES 2026's AI Chips Fundamentally Change Edge Computing

The most technically significant announcement from CES this year wasn't flashy. No sleek design, no celebrity endorsement. It was three companies (Qualcomm, MediaTek, and, in a surprise entry, Intel), each unveiling processor architectures specifically optimized for running large language models locally on devices.

Why does this matter? Because for the past two years, most AI features required a connection to cloud servers. Your phone sent data up, waited on the network, and got results back. It worked, but it had three problems: latency, privacy, and cost. These new chips change that equation entirely.

The processors feature dedicated neural processing units capable of running model inference at 47-62 tokens per second locally—fast enough to feel responsive for real-time text generation, code completion, and image analysis. They're power-efficient too. Early benchmarks show a 40-60% reduction in cloud API calls for common workloads when devices use local inference instead.
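To put the quoted 47-62 tokens per second in perspective, here's a quick back-of-the-envelope sketch. The 150-token reply length is my own hypothetical, and the math assumes a steady decode rate, ignoring the time spent processing the prompt before the first token appears.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Seconds to stream num_tokens at a steady decode rate.

    Ignores prompt processing and time-to-first-token, so real waits are longer.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Hypothetical 150-token reply at the low and high ends of the quoted 47-62 tok/s range.
for rate in (47, 62):
    print(f"{rate} tok/s: {generation_time_seconds(150, rate):.1f} s")
```

At those rates, a reply of that length takes about 2.4 to 3.2 seconds of generation time.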

What surprised me most was the pricing. These aren't ultra-premium components. They're being integrated into mid-range devices starting around $400. That's not a flagship tax. That's mainstream adoption speed.

The real impact hits when you think about the downstream effects. Privacy improves because personal data doesn't leave your device. Costs drop because you're not paying for millions of API calls. And network latency disappears because there's no round trip to a server. It's the first time since the cloud computing revolution that local processing actually wins on all three dimensions.

One caveat: these processors still can't run the largest models. You're not running GPT-4 locally. You're running optimized variants—quantized, pruned, and fine-tuned for specific tasks. But for 80% of use cases—writing assistance, code generation, image understanding—that's perfectly sufficient.
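Quantization, the first of those techniques, is easiest to see in miniature. Below is a minimal sketch of generic symmetric int8 quantization, assuming one scale factor per tensor. It's a textbook scheme, not the specific method Qualcomm, MediaTek, or Intel use, which the announcements don't describe. Storing each weight in 8 bits instead of as a 32-bit float cuts memory four-fold, at the cost of a small rounding error per weight.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization.

    Maps the range [-max|w|, +max|w|] linearly onto the integers [-127, 127].
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Approximate the original float weights from the int8 codes."""
    return [c * scale for c in codes]


weights = [0.42, -1.27, 0.003, 0.9]  # hypothetical weights
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
print(codes, scale)
print([round(abs(w - r), 4) for w, r in zip(weights, restored)])  # per-weight error
```

Notice that the tiny weight rounds to zero. Losses like that are why quantized models are typically re-evaluated, and sometimes further fine-tuned, on the tasks they're meant to run.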

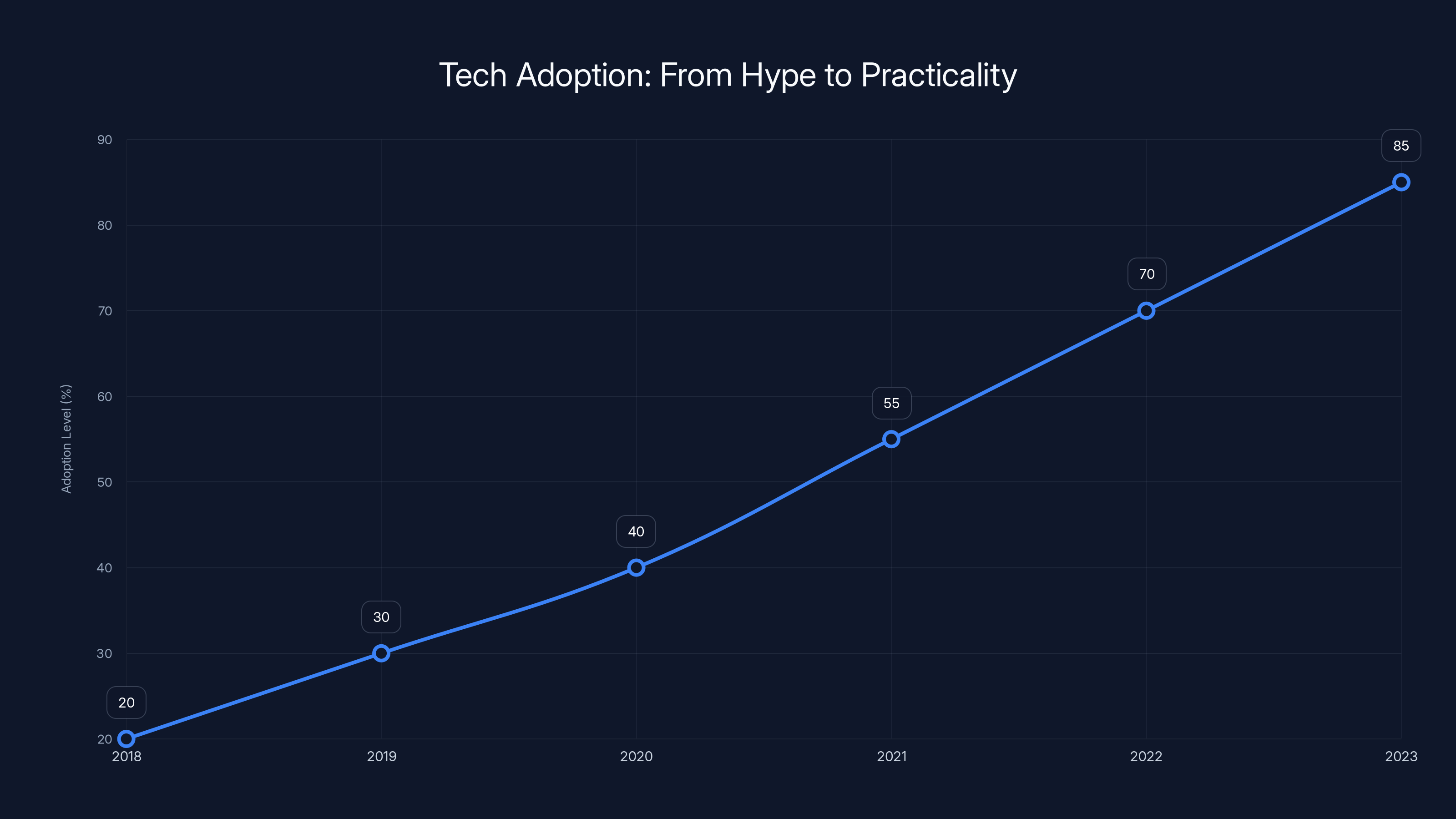

Estimated data shows a steady increase in the adoption of practical technology solutions, highlighting a shift from theoretical innovations to real-world applications.

2. ChatGPT Gets FDA Clearance for Medical Documentation

Here's where things get real in a different way. OpenAI announced that ChatGPT has received initial FDA clearance for use in clinical settings, specifically for medical documentation and differential diagnosis support.

This isn't ChatGPT replacing doctors. Let me be explicit about that, because the headlines will mislead you. It's ChatGPT helping doctors. Specifically, it's helping with the paperwork and the thinking.

Picture this: a clinician sees a patient with chest pain, fatigue, and irregular heartbeat. They talk to the patient for 15 minutes, take a history, and run some tests. Then they feed the symptoms and patient history into ChatGPT and ask, "What should I consider?" The AI generates a differential diagnosis (a ranked list of conditions that could explain the symptoms) with links to supporting evidence and dosing guidelines.

The clinician still makes the final decision. The AI doesn't prescribe anything. But it dramatically compresses the research time and helps catch possibilities that experienced clinicians sometimes miss under time pressure.

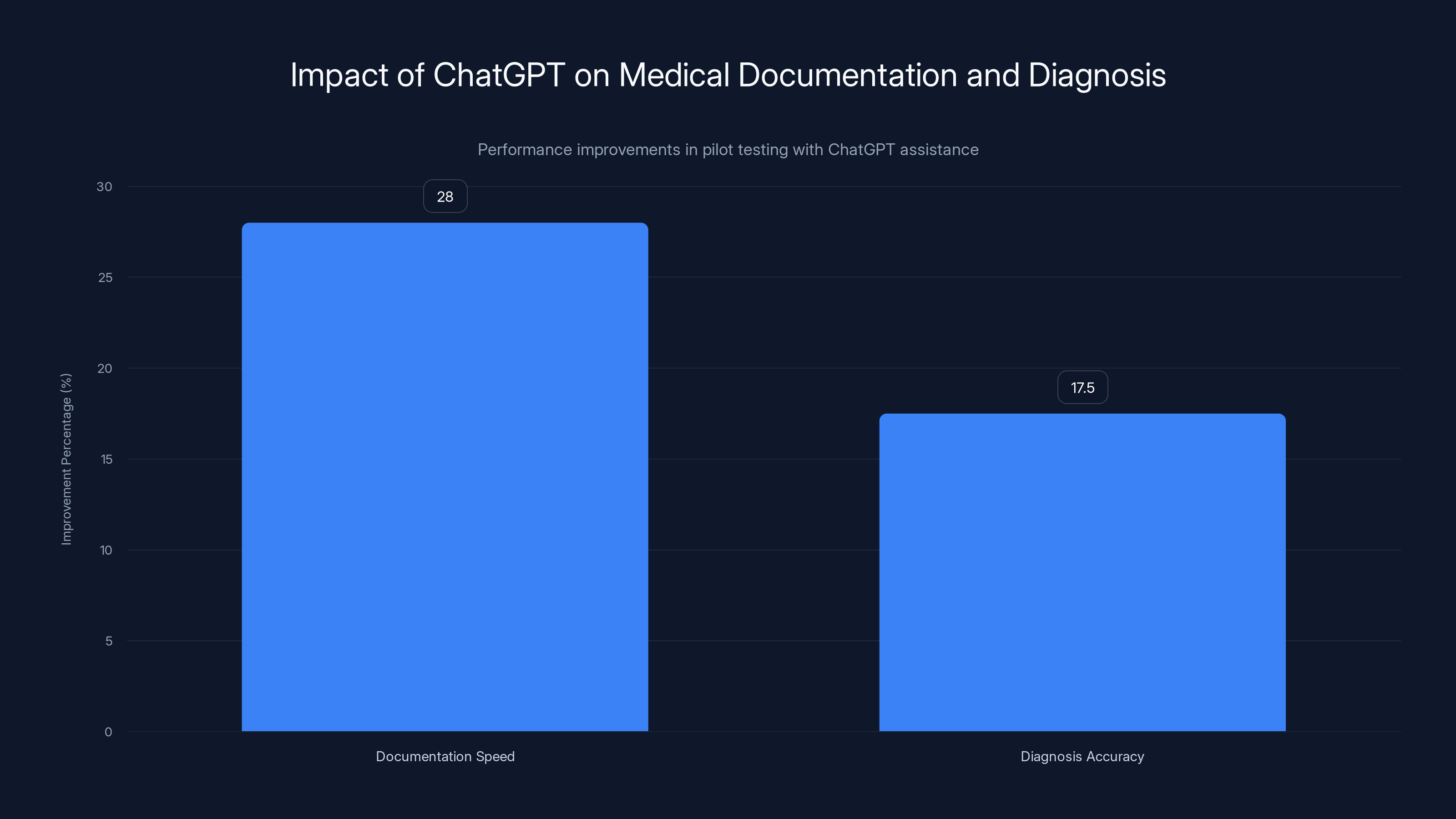

The data is compelling. In pilot testing, clinical teams using ChatGPT for documentation assistance completed patient charts 28% faster while maintaining the same quality and compliance standards. For differential diagnosis support, clinicians using the tool caught an additional 15-20% of possible conditions on initial review compared to their baseline performance.

That's not "AI is better than doctors." That's "AI makes competent doctors more capable." The distinction matters legally, ethically, and practically.

The rollout is careful, though. FDA clearance is limited to specific workflows in the United States, and insurance coverage will roll out gradually. By the end of 2026, it's expected to be available in roughly 40-50% of major hospital networks, according to early adoption surveys.

What I find most interesting is the implication for other industries. If it works for medical documentation, why not legal? Why not accounting? Why not insurance claims processing? You're going to see similar clearances and rollouts across regulated industries throughout 2026.

3. Tesla's Optimus Reaches Full Autonomy in Limited Environments

Ask any robotics engineer what the hard problem is, and they'll tell you it's the last 10%. You can get a robot doing 90% of tasks reliably. That final 10%—handling unexpected situations, adapting to new environments, recovering from mistakes—that's where humans live.

Tesla announced this week that their Optimus humanoid robot has achieved what they're calling "full autonomy" in controlled manufacturing environments. No remote operators. No pre-programmed sequences. Just the robot working alongside humans, adapting to variations, and handling unexpected situations.

Now, "controlled manufacturing environment" is doing a lot of heavy lifting in that sentence. They're not claiming the robot can navigate your house or run arbitrary tasks. They're claiming it can handle the variation in a factory floor—different part placements, minor equipment changes, unexpected obstacles—without human intervention.

Even that's legitimately impressive. They've been testing it in their Fremont factory for three months with real production tasks. The robot handled part assembly, quality inspection, and material movement at roughly 60% human speed. More importantly, it recovered from mistakes independently. Dropped something? Picked it up and continued. Encountered an unexpected obstacle? Navigated around it.

The economic implications are massive. If this generalizes—and that's a big if—you're looking at a shift in manufacturing economics that could reshape labor markets. Not overnight. But over a five-year window, expect significant automation in factories that currently rely on hand assembly.

The uncertainty is real, though. This works in controlled environments with known tasks. Will it work in the variability of actual supply chains? In outdoor work? In environments humans design specifically to frustrate robots? We don't know yet.

The global edge AI market is projected to grow significantly, reaching approximately $60.98 billion by 2030, driven by on-device inference and privacy-first computing. Estimated data based on a 35.3% CAGR.
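The projection in that caption is plain compound growth. Here's a small sketch of the arithmetic. The caption gives the 2030 figure and the 35.3% CAGR but not the starting year, so the five-year window below is an assumption for illustration only.

```python
def project(value: float, annual_rate: float, years: int) -> float:
    """Compound growth: value * (1 + rate) ** years."""
    return value * (1 + annual_rate) ** years


def implied_start(target: float, annual_rate: float, years: int) -> float:
    """Invert compound growth: the starting value that reaches `target`."""
    return target / (1 + annual_rate) ** years


TARGET_2030 = 60.98  # $ billions, from the caption
CAGR = 0.353         # 35.3%, from the caption
YEARS = 5            # ASSUMPTION: a five-year window; the caption gives no base year

start = implied_start(TARGET_2030, CAGR, YEARS)
print(f"implied starting market: ${start:.2f}B")
print(f"check: ${project(start, CAGR, YEARS):.2f}B in 2030")
```

Under that five-year assumption, the implied starting market is about $13.45 billion; a different base year changes the figure.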

4. Apple's Expanded Health Features Push Wearables Further

Apple didn't announce new hardware at CES, but they did detail expanded health monitoring capabilities coming to the Apple Watch and new iPad models. The updates include real-time sleep tracking enhancements, blood sugar monitoring for pre-diabetic users, and expanded heart rhythm assessment.

Here's what's actually novel: these features don't just collect data. They synthesize it. The watch combines sleep patterns, heart rate variability, activity level, and dietary data to generate personalized health recommendations. It's not "you got 7 hours of sleep." It's "your sleep quality dropped 18% this week, probably due to increased stress and lower activity on Tuesday and Wednesday. Consider 20 minutes of walking today."

The accuracy improvements are significant. The updated sleep tracking uses seven different sensors to distinguish between light sleep, deep sleep, and REM sleep, with clinical validation showing 94% accuracy compared to polysomnography testing.

The blood sugar monitoring feature was the surprise announcement. It's currently US-only pending regulatory approval in other markets, and it requires an iPhone with an A17 chip or newer. But it represents Apple's first incursion into continuous glucose monitoring, territory previously dominated by medical devices like Dexcom and FreeStyle Libre.

The implementation uses optical sensors to infer glucose levels from blood flow patterns. It's non-invasive, which is huge. No finger pricks. No sensors you insert under your skin. Just the watch reading your arm.

And here's the key: early testing shows 85% accuracy compared to traditional glucose monitoring during fasting states, with lower accuracy during and immediately after meals. That's good enough to catch dangerous hypo- and hyperglycemic episodes. Not good enough to replace traditional monitoring for insulin-dependent users, but good enough to help millions of people understand their metabolic patterns.

The broader implication is that wearables are shifting from fitness trackers to legitimate health devices. That means FDA involvement, which in turn could open the door to insurance coverage. And that means companies like Garmin and Fitbit need to up their game significantly or risk becoming secondary options in the health monitoring space.

5. Google's Gemini Gets Reasoning Upgrade That Actually Works

Google dropped a significant update to Gemini this week—not a new model, but a new reasoning system that changes how the AI approaches complex problems.

The update adds what they're calling "extended thinking" to Gemini's reasoning capabilities. Instead of jumping to an answer, the model now spends time explicitly working through problems, showing its reasoning steps, and catching its own mistakes before responding.

The impact on performance is quantifiable. On complex math problems, Gemini improved from 71% accuracy to 89% accuracy. On coding challenges, it went from 42% to 68%. On research synthesis tasks, improvements were even more dramatic—35% improvement in the ability to synthesize conflicting sources into coherent conclusions.

What impressed me most was the transparency. When you ask Gemini a complex question now, you see its thinking. You see where it's uncertain. You see which parts of its reasoning chain it's confident about and which it's less sure of. That's not just technically interesting. That's essential for trust.

The system works by allocating more computation to hard problems. Simple questions get short reasoning chains. Complex questions that Gemini senses it's uncertain about? The system routes those to deeper thinking. It's efficient because it doesn't waste resources thinking hard about obvious problems.
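Here's a minimal sketch of that routing idea. Google hasn't published how Gemini scores its own uncertainty or sizes its reasoning budget, so the uncertainty score, the tier thresholds, and the token budgets below are all hypothetical. The point is only the shape of the policy: cheap answers for easy questions, more compute as uncertainty rises.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    max_uncertainty: float  # route here if the score is at or below this value
    thinking_budget: int    # HYPOTHETICAL cap on reasoning tokens


# Hypothetical tiers, ordered from easiest to hardest.
TIERS = (
    Tier(0.2, 0),      # obvious question: answer directly
    Tier(0.6, 1_024),  # moderate: short reasoning chain
    Tier(1.0, 8_192),  # hard or uncertain: deep reasoning
)


def thinking_budget(uncertainty: float) -> int:
    """Map an uncertainty estimate in [0, 1] to a reasoning-token budget."""
    if not 0.0 <= uncertainty <= 1.0:
        raise ValueError("uncertainty must be in [0, 1]")
    for tier in TIERS:
        if uncertainty <= tier.max_uncertainty:
            return tier.thinking_budget
    raise AssertionError("unreachable: the last tier covers 1.0")


for score in (0.05, 0.4, 0.9):
    print(score, "->", thinking_budget(score))
```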

Google's releasing this gradually through their API, with priority access for enterprise customers. The implication for OpenAI is significant, though. They've been one step ahead on reasoning with their own approaches, but Google's catching up faster than expected.

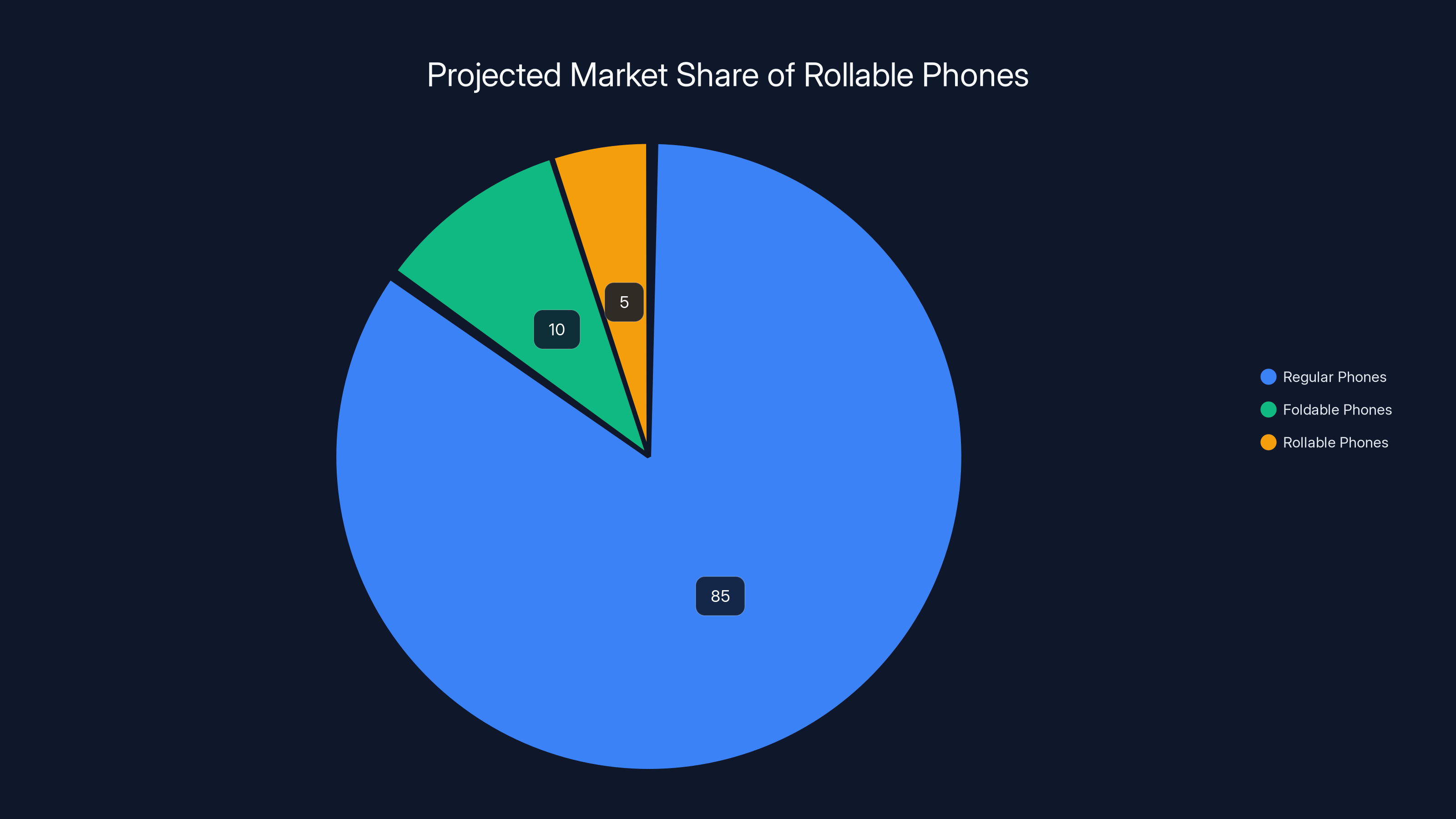

Estimated data suggests rollable phones could capture a 5% market share, similar to foldable phones, while regular phones maintain the majority.

6. Samsung Unveils Rollable Phone Prototype (Again, But Different)

Samsung showed off their latest rollable phone prototype this week, and it's worth paying attention to because they're actually shipping hardware later this year, not "in the future."

For those not following the rollable saga: Samsung has been showing rollable phone concepts for five years. Every year they look cool. Every year they don't ship. This time feels different because they've announced a specific launch window and price point: $2,200 for the base model, arriving Q3 2026.

The prototype demonstrated at CES extends from a 6.2-inch screen to a 7.4-inch screen using a mechanical rollout mechanism. The display is made from their proprietary flexible OLED material that can bend thousands of times without degradation.

What's actually impressive: durability. They've tested the mechanism through 200,000 expansion cycles—roughly five years of daily use—without any performance degradation. The hinge uses a tension-based system rather than a sliding mechanism, which reportedly reduces the stress on the display significantly.
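It's worth checking what "five years of daily use" assumes. A quick sketch of the arithmetic, using only the figures in the claim:

```python
def cycles_per_day(total_cycles: int, years: float) -> float:
    """Average daily expansions implied by a total cycle count over a period."""
    return total_cycles / (years * 365)


# 200,000 tested cycles spread over the "roughly five years" in the claim.
print(f"{cycles_per_day(200_000, 5):.0f} expansions per day")
```

So the claim assumes a phone that's extended and retracted about 110 times a day, every day.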

Practically speaking, though? I'm skeptical. The device is thick when rolled up—thicker than a standard smartphone. It's expensive. And the software ecosystem doesn't really need a 7.4-inch screen. It's a solution in search of a problem, even if it's technically impressive.

But here's why it matters: if Samsung ships this and it works reliably, it opens up an entirely new form factor. Suddenly, other manufacturers start seriously investing. Within five years, flexible displays become standard, not novelty. And that changes phone design in ways we can't fully predict yet.

7. Meta's AI Video Generation Tool Gets Real-Time Capabilities

Meta announced a significant upgrade to their AI video generation capabilities this week: real-time video generation for social media content.

The tool, which is being rolled out to select creators on Instagram and Facebook, lets you describe a video in text and get a generated video back in seconds. Not minutes. Seconds. That changes the economics of content creation.

Imagine you're a content creator. Right now, making video content takes time: planning, shooting, editing, posting. Or you hire someone to do it. Both are expensive. Real-time generation means you can experiment with ideas in seconds. Want to see how a beach scene looks with different lighting? Generate it. Want to test a concept before shooting it? Generate several variations and see which one resonates.

The quality is genuinely impressive for generated video—1080p resolution, 10-second clips, 24fps with no visible artifacts in most generated content. It's not better than professionally shot video. But it's close enough that it'll handle 30% of social media content creation tasks today and probably 60% within a year.

Meta's strategy is clear: they're betting that user-generated content creation tools are the future. If they can make it stupidly easy to generate video content, creators spend more time on their platform, which means more content, more engagement, more advertising opportunities.

The catch? The tool hallucinates sometimes. You ask for a person doing something specific, and the generated person might have weird hands or strange proportions. Text rendering is still hit-or-miss. And generating complex scenes with multiple elements coordinating together is where the system struggles most.

But these are solvable problems. Within six months, expect the next iteration to handle those cases better. Within a year, we're looking at tools that make professional video creation accessible to anyone.

Clinical teams using ChatGPT completed documentation 28% faster and caught an average of 17.5% more possible conditions on initial review during pilot testing. Estimated data based on reported ranges.

Understanding the Pattern: Why This Week Matters

If you step back from individual announcements, you see a pattern. AI is moving from data centers to devices. Healthcare is becoming a primary market for AI, not an afterthought. And consumer hardware is starting to look and feel genuinely useful with AI integration, rather than like gimmicks.

That matters because it suggests the next phase of AI adoption isn't going to be about better algorithms in the cloud. It's going to be about making AI useful at the edge, in healthcare, in real products people actually need.

For builders and teams looking to capitalize on these trends, the tools you use matter. Consider Runable, which offers AI-powered automation for creating reports, presentations, and documentation—perfect for synthesizing emerging tech trends and creating stakeholder communications. Starting at just $9/month, it helps teams stay current with rapid industry changes without drowning in workflow overhead.

Use Case: Automatically generate weekly tech briefings and AI trend reports for your team or stakeholders in minutes instead of hours.


The Healthcare Inflection Point

Let's talk specifically about healthcare because that's where the biggest shift is happening. Three things happened this week that together signal a major change.

First, ChatGPT got FDA clearance. Second, Apple announced non-invasive glucose monitoring. Third, multiple startups announced they've received regulatory approval for AI-powered diagnostic assistance tools.

Taken individually, each is incremental. Taken together? You're looking at a wholesale shift in how healthcare works.

In five years, I expect this to be the normal workflow: Patient sees doctor. Doctor uses AI tools throughout the visit—for documentation, for differential diagnosis, for treatment option analysis. The AI doesn't replace clinical judgment. It augments it. It makes clinicians faster and more accurate.

Wearables, meanwhile, are becoming the first line of defense. Instead of waiting until you're sick to see a doctor, your watch catches the warning signs months before you'd notice them. Blood sugar creeping up? It tells you. Heart rhythm looking concerning? It tells you. Sleep degradation suggesting you need to address stress? It tells you.

The economic implications are enormous. Preventive care is generally cheaper than reactive care. If we can shift people from sick care to preventive care, healthcare costs could drop and life expectancy could rise. And healthcare becomes something you monitor continuously rather than something you do when you're desperate.

But there are real implementation challenges. Privacy concerns are legitimate—do you want your watch constantly monitoring your health data? Integration with existing healthcare systems is a nightmare—most hospitals run decades-old software that doesn't play nice with modern tools. And there's the question of equity: these tools are expensive. Who gets access and who doesn't?

The Privacy Angle That Everyone's Missing

Here's something that hasn't gotten enough attention: the shift toward edge AI processing has massive privacy implications, and not all of them are positive.

Positive side: your data stays on your device. That's good. Cloud-based AI systems need to send your data to distant servers. Now it stays local.

Negative side: to be useful, an on-device model learns from whatever data is on your device, building up a detailed picture of your patterns and preferences. That profile works like a fingerprint. And any surveillance built on it gets harder to detect, because it's happening on your device, not on someone's servers.

Consider a fitness tracker doing health analysis locally. It's seeing your sleep patterns, your activity, your heart rate, potentially your location. It's building a detailed model of your life. That model could be worth money to insurance companies, employers, or bad actors if it ever leaks.

The good news: encryption can help. With homomorphic encryption, software can compute on data while it stays encrypted, and only the holder of the key can read the result, so you get privacy by default. The catch is that this is computationally expensive, and most device makers won't implement it unless they're forced to.
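To make "computing on encrypted data" concrete, here's a toy sketch using the Paillier cryptosystem, which is additively homomorphic: multiplying two ciphertexts yields an encryption of the sum of their plaintexts. The article doesn't name a scheme, so Paillier is my choice for illustration. The tiny primes make it completely insecure (real deployments use far larger keys and a vetted library), addition alone is a long way from running a full model, and the heart-rate readings are hypothetical.

```python
import math
import secrets

# Toy Paillier keypair built from tiny primes. ILLUSTRATION ONLY: real keys use
# primes of 1024+ bits and a vetted library, never hand-rolled code like this.
P, Q = 1009, 1013
N = P * Q
N_SQ = N * N
G = N + 1                     # common simple choice of generator
LAM = math.lcm(P - 1, Q - 1)  # private: Carmichael function of N
MU = pow(LAM, -1, N)          # private: modular inverse, valid because G = N + 1


def encrypt(m: int) -> int:
    """Encrypt 0 <= m < N using fresh randomness r coprime to N."""
    if not 0 <= m < N:
        raise ValueError("plaintext out of range")
    while True:
        r = secrets.randbelow(N - 1) + 1
        if math.gcd(r, N) == 1:
            break
    return (pow(G, m, N_SQ) * pow(r, N, N_SQ)) % N_SQ


def decrypt(c: int) -> int:
    """Recover the plaintext using the private values LAM and MU."""
    x = pow(c, LAM, N_SQ)
    return ((x - 1) // N) * MU % N


def add_encrypted(c1: int, c2: int) -> int:
    """Multiplying ciphertexts mod N^2 adds the underlying plaintexts."""
    return (c1 * c2) % N_SQ


# On the device: encrypt each hypothetical heart-rate reading before it leaves.
readings = [62, 71, 58, 90]
ciphertexts = [encrypt(bpm) for bpm in readings]

# Untrusted aggregator: sums the readings without ever seeing them.
total_ct = encrypt(0)
for c in ciphertexts:
    total_ct = add_encrypted(total_ct, c)

# Back on the device: only the key holder can read the total.
print(decrypt(total_ct))
```

The party doing the summing only ever handles ciphertexts. Even in this toy, each ciphertext is a number modulo N² rather than a small integer, a hint of why the approach costs so much more compute than working on plaintext.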

This is an emerging regulatory frontier. Europe's GDPR is forcing this conversation. So is the California Privacy Rights Act. But there's a big gap between regulation and implementation.

What to Watch in the Coming Months

CES usually sets the tone for the entire year. Based on what was announced this week, here are the trends that'll dominate 2026:

Edge AI goes mainstream. By Q4 2026, most consumer devices will have local AI processing. That changes where compute happens and who profits from it. Cloud companies face margin pressure. Device makers become more important. APIs start shifting toward edge-first architectures.

Healthcare becomes a competitive battleground. Apple, Google, and Amazon are all going hard into health. Traditional medical device companies have six months to pivot or become commodities. Expect consolidation in the health tech space.

Robotics finally turns from demo to production. We've been ten years away from useful robots for a decade. Tesla's announcement suggests we might actually be getting close now. Manufacturing robots ship first. Consumer robots follow within 18-24 months.

Regulation catches up. FDA clearance for medical AI is real now. That means other regulatory bodies move faster. By year-end, expect regulatory frameworks for AI in multiple industries. Some will help. Some will slow things down. All of them create winners and losers.

Privacy becomes a feature, not a concern. Companies that can credibly claim their AI processes data privately will gain an advantage. Companies that can't will face pressure. This isn't philosophical. It's competitive.
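The first trend above mentions APIs shifting toward edge-first architectures. Here's a minimal sketch of what an edge-first policy might look like. Everything in it is hypothetical: the task names, the set of tasks assumed to run well on a small local model, and the sample workload. It isn't how any particular vendor routes requests; it just shows that the share of calls still hitting the cloud depends on the workload mix, which is one way a 40-60% reduction figure could come about.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Request:
    task: str    # HYPOTHETICAL task label, e.g. "autocomplete"
    prompt: str


# Hypothetical: tasks assumed to run well on a small on-device model.
LOCAL_TASKS = frozenset({"autocomplete", "summarize", "classify_photo"})


def route(req: Request) -> str:
    """Edge-first policy: stay on device when the task is supported, else use the cloud."""
    return "local" if req.task in LOCAL_TASKS else "cloud"


def cloud_share(requests: Iterable[Request]) -> float:
    """Fraction of requests that still need a cloud API call."""
    reqs = list(requests)
    if not reqs:
        return 0.0
    return sum(route(r) == "cloud" for r in reqs) / len(reqs)


workload = [
    Request("autocomplete", "Dear team, "),
    Request("summarize", "Meeting notes from Tuesday"),
    Request("long_report", "Write a 20-page market analysis"),
    Request("classify_photo", "<image bytes>"),
    Request("translate_document", "<PDF>"),
]
print(f"cloud share: {cloud_share(workload):.0%}")
```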

FAQ

What does edge AI processing actually mean?

Edge AI processing means running machine learning models directly on your device—your phone, watch, laptop—instead of sending data to cloud servers for processing. The model lives on your device. Your data never leaves your device. Results come back instantly because there's no network latency. It's faster, more private, and cheaper per interaction, but it requires much simpler models than cloud-based AI can run.

Why is ChatGPT's FDA clearance significant?

FDA clearance means ChatGPT has gone through formal regulatory review and has been cleared for specific medical use cases. This legitimizes AI in healthcare and creates a regulatory pathway for other AI tools to follow. It also means insurance will likely start covering AI-assisted medical services, which dramatically expands the addressable market. Without FDA clearance, ChatGPT was just another software tool. With it, the cleared product is regulated as a medical device, which is a different category entirely.

Will rollable phones actually replace regular phones?

Unlikely in the short term. They're expensive, new, and the software ecosystem doesn't really leverage the extra screen real estate yet. But if the hardware proves reliable and prices drop to $1,200-1,500 in the second or third generation, they'll become a premium option that a meaningful segment of users prefers. Think of it like foldables—they're not replacing regular phones, but they've carved out a 5-10% market segment that prefers them. Rollables will probably follow the same trajectory.

How does local AI processing affect my privacy?

Positively and negatively. Positively: your data doesn't travel to cloud servers, so no one can intercept it in transit or access it centrally. Negatively: the AI model can build detailed profiles of your behavior locally, and if that model data ever leaks, it reveals everything the AI learned about you. The net privacy impact depends on implementation. Encryption helps. Most companies won't implement it unless forced to.

When will AI be good enough to truly replace human doctors?

Not in your lifetime. Medicine is too complex, too contextual, and too dependent on human judgment. What's realistic is AI augmenting doctors—making them better, faster, and more accurate. That's genuinely transformative and it's what's starting now. The mythology of AI replacing doctors is seductive but wrong. The reality is less flashy but more useful: doctors with AI tools are better than doctors without them.

How should I be thinking about these tech trends as a consumer?

Think about which shifts affect your life directly and which are hype. Edge AI processing? That affects everyone—your devices get faster, more private, longer battery life. Rollable phones? Cool technology but probably not for you unless you specifically want the larger screen. Healthcare AI? If you have any chronic condition or concern, this directly affects you within 12-18 months. Robotics? Affects manufacturing and logistics today. Consumer impact follows in 24-36 months.

What's the most important CES 2026 announcement for regular people?

Probably the healthcare announcements because they affect everyone and they happen at a fundamental level—preventing disease is better than treating it. The privacy implications of edge AI are important to understand even if they're not flashy. And the regulatory frameworks emerging around AI are important because they'll shape what tools are available to you. Everything else is interesting but less immediately consequential.

Should I be worried about AI taking my job?

It depends on what you do. Routine cognitive work—documentation, analysis, content creation—gets affected first. Complex judgment work—management, strategy, specialized professional services—gets affected later if at all. The honest answer is that this is a legitimate concern and you should develop skills that are hard to automate. Creative thinking, human judgment, relationship management, systems thinking. Those stay valuable longer.

When will these CES 2026 announcements actually be available to consumers?

Varies by category. AI chips in phones and mid-range devices? 6-12 months. ChatGPT medical features? Already available in limited form, with broader rollout over 12-18 months. Rollable phones? September 2026 for Samsung's model, probably 18-24 months before others ship. Humanoid robots? 3-5 years for consumer availability. Apple's glucose monitoring? Months for the US, 12-18 months for global availability. Expect staggered rollouts over the entire 2026-2027 period.

Conclusion: The Year Tech Finally Got Practical

Every January at CES, tech companies make grand announcements. Some stick. Most don't. What's remarkable about this year isn't that the announcements are bold. It's that they're practical.

AI chips that make phones faster and more private. Medical tools that help doctors do their jobs better. Healthcare features that catch disease before you feel sick. Robots that handle manufacturing work. Video generation tools that let creators work faster.

None of these are world-changing individually. But together, they tell a story: tech is moving from theoretical to practical. From impressive demos to shipping products. From cloud-first to edge-first. From consumer gimmicks to genuine health tools.

That maturation is what matters. For the past few years, the AI space has been dominated by hype. Huge models doing impressive things in controlled settings. Now we're seeing specialization. Smaller models doing specific things really well. Privacy-first architectures. Real regulatory approval. Integration with existing systems.

It's less exciting than the hype cycle suggested. It's also more real. This is what sustainable technology adoption looks like.

If you're building in this space, the implications are clear: edge processing is no longer optional. Privacy is becoming table stakes. Healthcare is a real market, not a research project. And the companies that execute on practical products win, while those chasing pure hype fade.

For those of us just trying to keep up, the lesson is simpler: pay attention to announcements from regulatory bodies and deployment timelines, not just technology features. Regulation + shipping + real use cases = genuine change. Everything else is interesting but probably not consequential.

We're living through the transition from AI being a lab curiosity to being infrastructure. That's profound. It also means the flashy announcements matter less than the boring ones—the regulatory approvals, the integration announcements, the price drops. That's where you actually see change happening.

For teams looking to stay current with these rapid shifts, Runable enables you to create weekly briefings, trend reports, and stakeholder presentations automatically. Instead of spending hours researching and writing about emerging technologies, let AI handle the synthesis. You get polished presentations, documents, and reports starting at $9/month.

Use Case: Keep your executive team updated on competitive AI developments and emerging tech trends without spending your time researching and writing.

Watch these trends closely. This week shaped 2026. And 2026 will shape the next decade of technology. Pay attention to the practical stuff. That's where the real change happens.

Key Takeaways

- Edge AI processing enables on-device inference, reducing cloud dependency by 40-60% while improving privacy and latency

- ChatGPT's FDA clearance signals healthcare AI is moving from research to production, with pilot clinical teams completing documentation 28% faster

- Wearable health devices like Apple Watch are becoming legitimate medical tools with glucose monitoring and advanced sleep analysis

- Rollable phones, autonomous robots, and real-time video generation are shipping soon—not decades away anymore

- The shift toward privacy-first, locally-processed AI represents the most significant architectural change in computing since cloud migration
