AI-Powered Parking Enforcement for Bike Lanes: Santa Monica's Smart City Approach

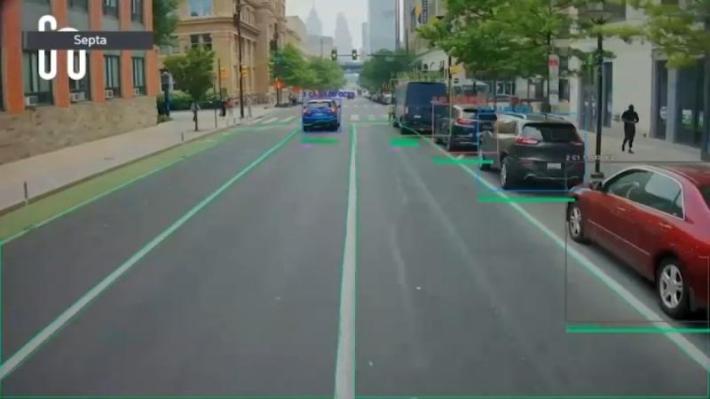

Santa Monica is about to become ground zero for a transportation revolution that most people won't even notice. Starting this April, seven parking enforcement vehicles will roll through the city equipped with artificial intelligence cameras capable of detecting bike lane violations in real time. No officers standing on corners with clipboards. No guesswork. Just algorithms trained to spot a parked car blocking a cyclist's path and automatically capture evidence.

This isn't theoretical stuff anymore. It's happening now, and it's raising important questions about how cities should balance safety, automation, enforcement, and privacy. The technology comes from Hayden AI, a San Francisco-based startup that's been quietly deploying similar systems on buses in Oakland, Sacramento, and dozens of other cities across North America.

What makes Santa Monica's rollout significant is the scale and the vehicle type. Parking enforcement vehicles cover much more territory than buses do. They're everywhere. They follow predictable routes that blanket entire neighborhoods. A single parking enforcement van, equipped with Hayden AI's cameras, can monitor more bike lanes in a single shift than dozens of human officers could in a week.

The story here goes deeper than just "robots are taking jobs" or "Big Brother is watching." It's about how cities can actually solve real problems that have been sitting unsolved for years. Bike lane blocking is genuinely dangerous. It forces cyclists into traffic. It creates confrontation. It enables cars to treat bike infrastructure as overflow parking. And traditional enforcement has never been effective enough to deter it because the resources simply don't exist.

But before we get into the weeds of how this works and what it means, let's step back and understand why this moment matters. Cities have been struggling with bike lane enforcement forever. They've tried everything. Manual patrols. Parking tickets. Increased fines. Public shaming campaigns. None of it has worked at scale. The problem is that enforcement requires constant presence, and constant presence is expensive. You can't afford to park an officer on every block where cars park illegally.

That's where AI comes in. Not as a replacement for human judgment, but as a force multiplier. A camera can look at thousands of vehicles a day. It can work at night, in rain, in fog. It doesn't get tired or distracted. And crucially, it applies the same rule to every vehicle, which can reduce the bias that creeps into human enforcement decisions, though, as discussed later, algorithms can carry biases of their own.

This article explores how AI-powered parking enforcement actually works, why Santa Monica decided to deploy it, what the technology can and can't do, and what this means for the future of urban transportation. We'll talk to the companies building this stuff, the advocates pushing for it, and the critics who worry about where this technology might lead.

TL;DR

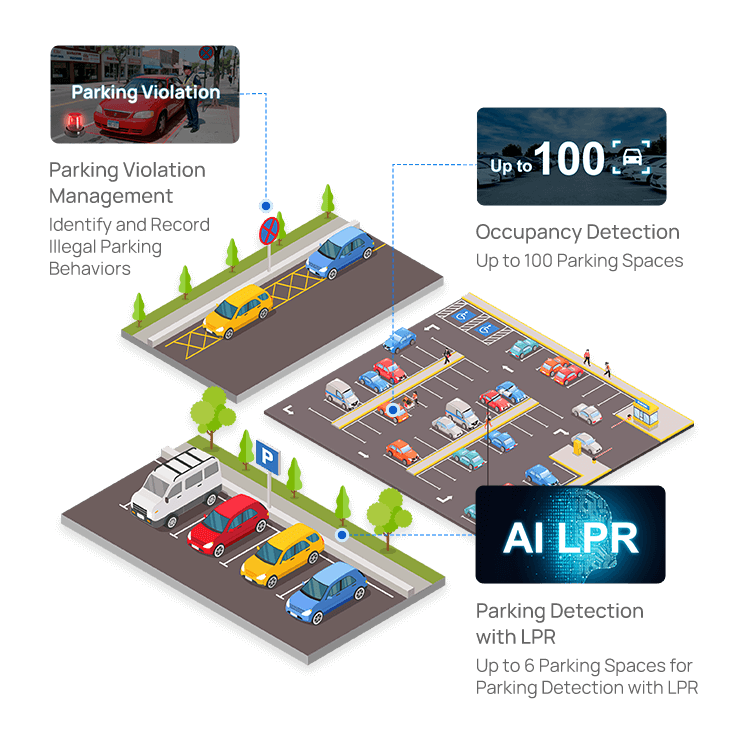

- AI cameras will soon scan for bike lane violations: Santa Monica will deploy Hayden AI's technology on seven parking enforcement vehicles starting this April, expanding beyond existing bus-mounted systems.

- Technology detects violations at scale: The system can identify blocked bike lanes, capture license plates, and automatically generate evidence packages in real time across entire city zones, applying the same criteria to every vehicle.

- Safety benefits are measurable: A UC San Diego pilot detected 1,100+ parking violations in 59 days, 88% of them involving bike lane blocking, demonstrating the severity of the problem and the technology's effectiveness.

- Human review remains essential: All evidence packages are sent to law enforcement for verification before violation notices are issued, maintaining accountability and preventing false citations.

- Privacy and accuracy concerns exist: Critics worry about data retention, the potential for function creep, and whether algorithms trained on limited datasets might discriminate against certain vehicle types or neighborhoods.

AI-powered enforcement is estimated to outperform traditional methods in efficiency, consistency, bias reduction, and cost effectiveness. Estimated data based on typical enforcement challenges.

How AI Parking Enforcement Actually Works

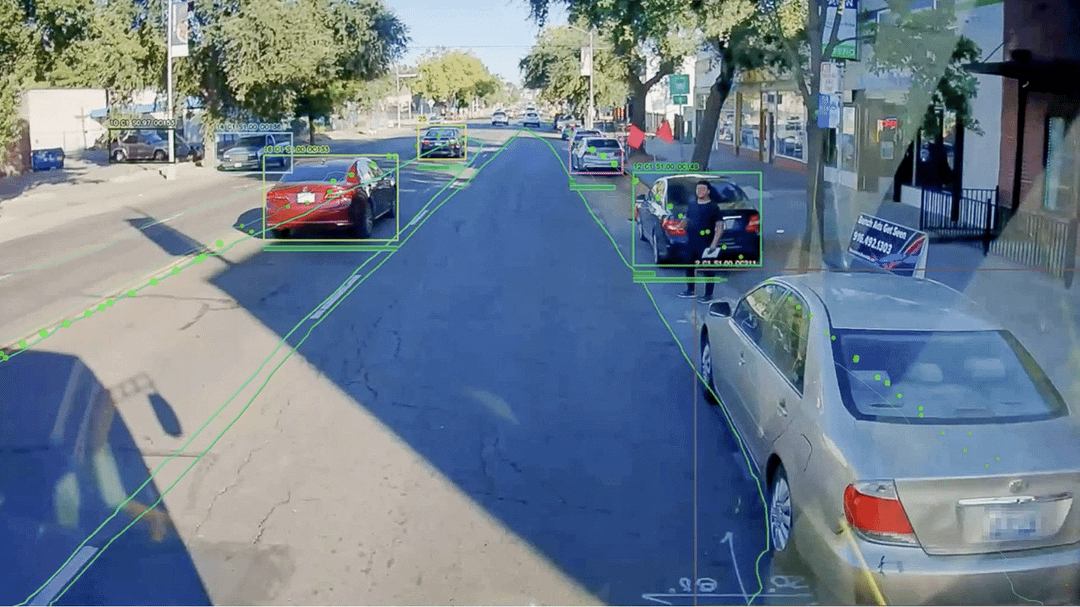

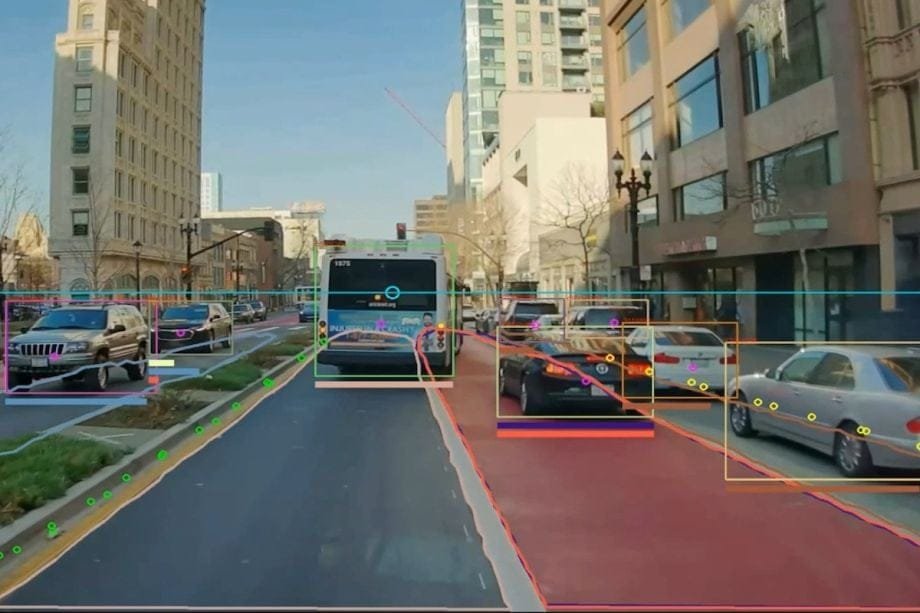

Let's talk about what these cameras are actually doing, because it's more sophisticated than you might think. This isn't just motion detection or simple pattern matching. The system uses computer vision trained on thousands of images of parking violations, street layouts, bike lanes, and urban environments.

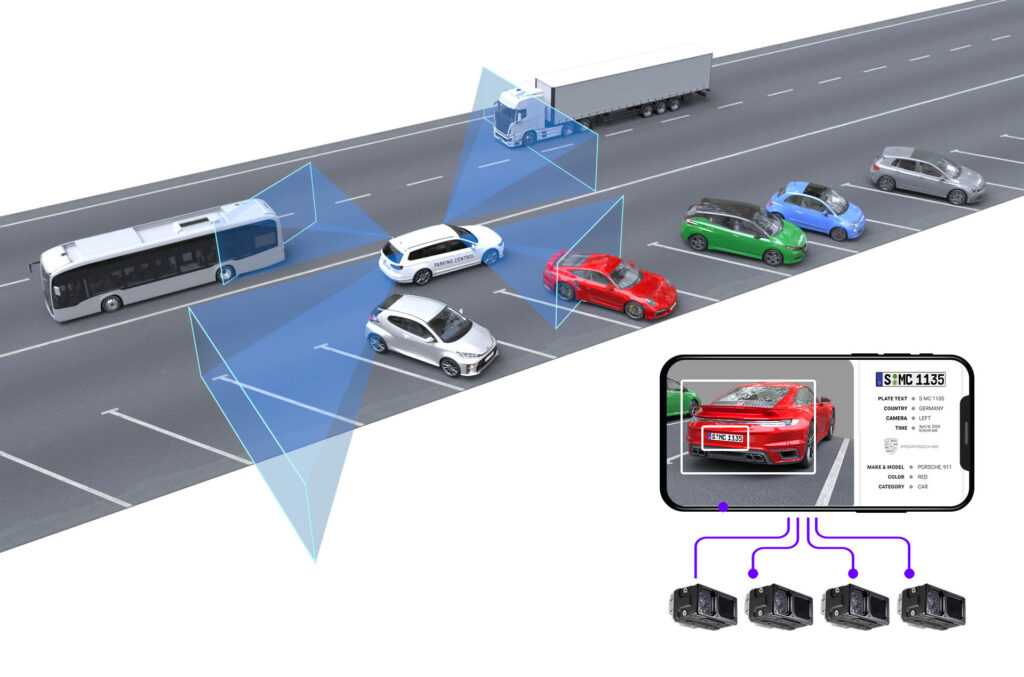

When Hayden AI installs its technology in a new city, it doesn't just drop cameras on vehicles and hope for the best. The first phase is mapping. The company works with city planners and parking enforcement officers to map out every bike lane, bus zone, loading zone, and restricted area. This gives the AI a complete understanding of what "illegal parking" actually means in that specific jurisdiction. Parking rules vary wildly from city to city. What's illegal in Oakland might be legal in Berkeley. The system has to learn local rules.
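To make the mapping step concrete, here is a minimal sketch of checking whether a detected vehicle position falls inside a mapped restricted zone. It assumes zones are stored as simple polygons in local x/y coordinates and uses a standard ray-casting test. The `Zone` type, the zone names, and the coordinates are all hypothetical; the source does not describe how Hayden AI actually represents its maps.

```python
from dataclasses import dataclass

Point = tuple[float, float]


@dataclass(frozen=True)
class Zone:
    name: str             # hypothetical label
    kind: str             # e.g. "bike_lane", "bus_zone", "loading_zone"
    polygon: list[Point]  # vertices in order, local x/y metres (assumed)


def point_in_polygon(p: Point, polygon: list[Point]) -> bool:
    """Ray-casting test: count how many edges a ray to the right of p crosses."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through p can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def zones_containing(p: Point, zones: list[Zone]) -> list[Zone]:
    """Return every mapped zone whose polygon contains the point."""
    return [z for z in zones if point_in_polygon(p, z.polygon)]


# Made-up map for illustration only.
ZONES = [
    Zone("Example St bike lane", "bike_lane", [(0, 0), (50, 0), (50, 2), (0, 2)]),
    Zone("Example St bus stop", "bus_zone", [(60, 0), (75, 0), (75, 3), (60, 3)]),
]

print([z.kind for z in zones_containing((10.0, 1.0), ZONES)])
```

The point of the sketch is that "illegal" is a property of the local map, not of the camera: the same parked car is a violation or not depending on which polygon, if any, it sits in.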

Once the geography is locked in, the AI trains on violations. It looks at thousands of examples of cars blocking bike lanes, cars in bus zones, cars in handicapped spaces. It learns what a violation looks like from different angles, in different lighting conditions, at different times of day. It learns to ignore shadows, reflections, and parked cars that are legal.

Here's the really important part: when the camera detects what it thinks is a violation, it doesn't just flag it. It captures a ten-second video clip and the vehicle's license plate. This package gets sent to law enforcement, not directly to the parking authority. Human officers review the evidence before any citation is issued. The AI is making an initial detection, but humans are making the final judgment call.

Charley Territo, chief growth officer at Hayden AI, explained it this way: "We tell our system that if you see a vehicle that encroaches on a bike lane, capture a 10-second video and the license plate of that vehicle. From there, an evidence package goes to the police and they will review and verify the elements that a prosecutable violation exists, and then they will issue a violation under state law. If there is no violation, the system doesn't capture any data."

That last sentence is crucial. The system isn't recording everything. It's not creating a database of every car that passes by. It only captures data when it believes a violation has occurred. In theory, this limits privacy intrusions. In practice, this depends entirely on how well the AI is trained and what the city considers a violation.
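The flow described above (detect, capture a short clip and plate, send to police, cite only after human confirmation) can be sketched as a small state machine. This is an illustration under stated assumptions, not Hayden AI's implementation: the class and function names are hypothetical, and the rule that no package is created without a readable plate is an assumption made for the sketch.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ReviewDecision(Enum):
    PENDING = "pending"
    CONFIRMED = "confirmed"
    DISMISSED = "dismissed"


@dataclass
class EvidencePackage:
    plate: str
    zone_kind: str
    clip_seconds: int = 10  # the 10-second clip mentioned in the quote
    decision: ReviewDecision = ReviewDecision.PENDING


def on_detection(encroaches: bool, plate: Optional[str],
                 zone_kind: str) -> Optional[EvidencePackage]:
    """Create a package only for a suspected violation; otherwise keep nothing."""
    if not encroaches or plate is None:  # assumption: no plate, no package
        return None
    return EvidencePackage(plate=plate, zone_kind=zone_kind)


def review(package: EvidencePackage, officer_confirms: bool) -> EvidencePackage:
    """Record the human reviewer's decision on the package."""
    package.decision = (ReviewDecision.CONFIRMED if officer_confirms
                        else ReviewDecision.DISMISSED)
    return package


def citation_allowed(package: EvidencePackage) -> bool:
    """A citation may be issued only after a human has confirmed the violation."""
    return package.decision is ReviewDecision.CONFIRMED


pkg = on_detection(True, "7ABC123", "bike_lane")  # made-up plate
print(citation_allowed(pkg))                      # still pending review
print(citation_allowed(review(pkg, officer_confirms=True)))
```

Keeping `citation_allowed` separate from detection makes the human review step a hard gate in the code path rather than a policy that can be skipped quietly.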

The technical architecture matters too. Some systems store video locally on the vehicle and transmit only metadata and flagged clips. Others stream everything to cloud servers. Santa Monica hasn't disclosed their setup, but the difference affects privacy implications significantly. Local storage means less continuous surveillance. Cloud storage means faster analysis but potentially more data retention.

One more thing about how this works: the cameras are mounted on the vehicles themselves, not on fixed poles or buildings. This means they only cover areas where the parking enforcement vehicles actually go. They're not creating permanent surveillance networks like fixed traffic cameras do. That's an important distinction when we talk about privacy later.

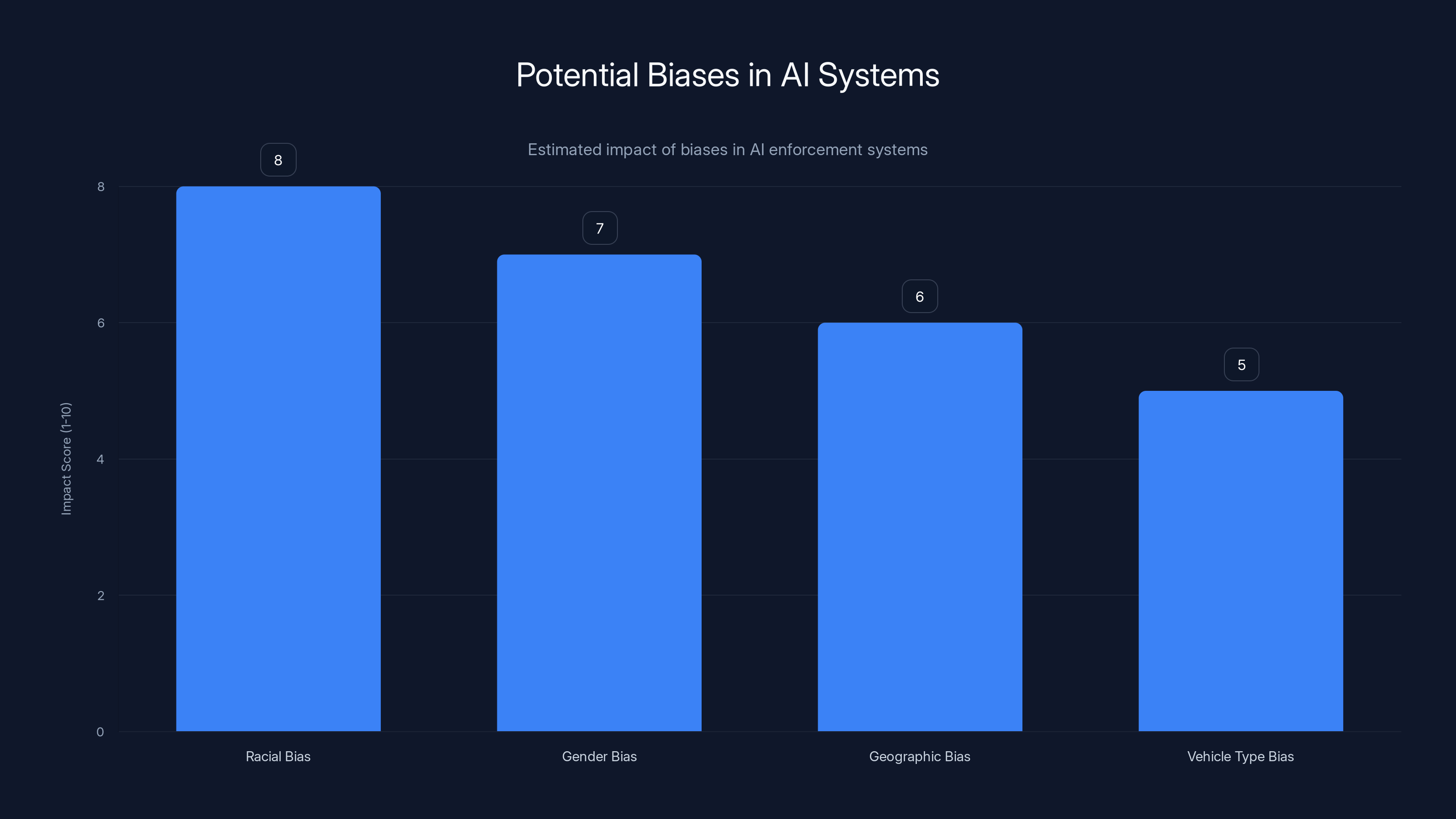

AI systems can exhibit various biases, with racial and gender biases often being the most impactful. Estimated data highlights potential areas of concern.

Why Santa Monica Decided to Deploy This Technology

Santa Monica isn't doing this because the city is excited about AI. It's doing this because bike lane blocking is a persistent, measurable problem that traditional enforcement has failed to address.

Santa Monica has been investing heavily in cycling infrastructure for years. The city has built protected bike lanes, added bike parking, created car-free zones during peak hours, and spent millions on bike-related projects. But infrastructure alone doesn't work if people park in the lanes. A bike lane with a car parked in it is just a parking spot.

The problem in Santa Monica is real. Anyone who bikes in the city can tell you that. You build up a head of steam, you approach an intersection, and suddenly there's a car parked where the bike lane should be. You have to merge into traffic. You lose any protection that infrastructure gave you. At that moment, you're more vulnerable to a crash, road rage, or getting hit.

Cynthia Rose, director of Santa Monica Spoke (the local bike advocacy group), put it bluntly: "Enforcement cannot be everywhere at once. If we can extend their arm so these things can get done, and keep our community safe, that is a win. Anywhere where there's bike infrastructure, where I know of, blocking the lane is a problem. It's tantamount to parking in handicapped zones. It's just a flat no."

That language matters. Advocates aren't framing this as surveillance or privacy invasion. They're framing it as a civil rights issue. A bike lane is infrastructure for transportation. Blocking it is like blocking wheelchair access. It's not a minor inconvenience; it's a barrier to equality.

Santa Monica also has data. The city has been analyzing parking violations for years. They know which blocks have the most blocking, which times of day are worst, which vehicles are repeat offenders. They know that current enforcement isn't creating enough deterrent effect. Officers can't be everywhere. Ticketing isn't frequent enough to change behavior.

When Hayden AI approached Santa Monica with their solution, the city saw an opportunity to actually enforce their own rules at scale for the first time. Seven parking enforcement vehicles, equipped with AI cameras, could cover more territory in a single week than the city's entire enforcement team could cover in a month manually.

There's also a budget angle. Automated enforcement, if it works well, could actually reduce pressure on the city's parking enforcement budget while increasing compliance. You're not adding new staff; you're making existing staff more efficient. That's politically easier to sell.

But Santa Monica wasn't the first city to consider this. Hayden AI already has installations in Oakland and Sacramento. The company has systems on buses in New York City, Washington DC, Philadelphia, and dozens of other cities. As of fall 2025, Hayden AI said it had installed 2,000 systems worldwide.

Santa Monica is significant because it's the first city to put this technology on parking enforcement vehicles specifically. That's a bigger step than mounting cameras on buses. It represents a deliberate expansion of AI-based enforcement from transit-specific violations to general parking violations.

The Safety Case: Why Bike Lane Blocking Actually Matters

Before we go further into AI and automation, we need to understand why this problem is worth solving in the first place. Because honestly, if blocking a bike lane were just a minor inconvenience, this technology wouldn't make sense. But it's not minor.

Blocking a bike lane creates genuine physical danger. When a cyclist encounters a parked car in their lane, they have limited options. They can stop and wait for traffic to clear so they can merge into a car lane. They can slow down and navigate around the car, which might put them in a car's blind spot. They can turn around and find another route. Or they can proceed through traffic anyway, taking a significant safety risk.

Research on bike safety consistently shows that the safest cycling infrastructure is separated from cars. A bike lane separated from traffic by a curb or buffer is dramatically safer than a bike lane painted on the street next to cars. But that safety advantage evaporates if cars are parked in the lane. You've essentially created the most dangerous scenario: cyclists have to cross traffic lanes to get around the obstruction, and car drivers don't expect cyclists to suddenly appear in the traffic lane.

The safety impact compounds over time. If cyclists know that parked cars frequently block their lane, they stop using the infrastructure entirely. They ride in traffic instead. This defeats the whole purpose of building the protected lane in the first place. The city spends money on infrastructure. Drivers block it. Cyclists don't use it. Infrastructure doesn't increase cycling. Nothing improves.

There's also a deterrent effect to consider. If enforcement is visible and consistent, people stop doing the illegal thing. If enforcement is rare, people don't care. The parking enforcement officer might show up once a week in your neighborhood. Drivers know the odds of getting a ticket are low. Multiply that across hundreds of drivers making independent decisions, and you've got a systemic failure of enforcement.

Charley Territo at Hayden AI frames the safety argument in terms of bus safety, but the logic applies to bikes too: "We do that by reducing one of the biggest causes of collisions with buses, moving out of their lanes. So the fewer times they have to make a turn, the fewer instances there are of a crash."

A bus or bike that doesn't have to merge into traffic lanes is safer. Anything that reduces merge attempts reduces collision risk. That's not just theory; that's physics and basic traffic dynamics.

The UC San Diego data point is telling here. In 59 days, the system detected 1,100 violations. That's roughly 19 violations per day on a single campus, about 16 of them bike lane blockages if the 88% share holds. If the campus sees maybe 10,000-15,000 parking events per day, that means roughly 0.1-0.2% of parking decisions result in a violation. That sounds low until you scale it across an entire city. Santa Monica probably sees tens of thousands of parking events per day. Even a 0.1% violation rate means dozens of bike lane blockages every single day.
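The back-of-envelope arithmetic here is easy to check. The sketch below uses only the figures quoted in this article (1,100 violations, 59 days, an 88% bike lane share). The 10,000-15,000 daily parking events are the article's own guess, not measured data.

```python
violations = 1_100       # detected during the UC San Diego pilot
days = 59
bike_lane_share = 0.88   # share of violations involving bike lane blocking

per_day = violations / days
bike_lane_per_day = per_day * bike_lane_share
print(f"violations per day: {per_day:.1f}")
print(f"bike lane blockages per day: {bike_lane_per_day:.1f}")

# Guessed range of daily parking events on campus (an assumption, not data).
for daily_events in (10_000, 15_000):
    rate = per_day / daily_events
    print(f"{daily_events:>6} events/day -> violation rate {rate:.2%}")
```

The per-day figure comes out closer to 19 than 18, and the implied violation rate falls between about 0.12% and 0.19%, consistent with the rough 0.1-0.2% range.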

From a safety and equity perspective, that's unacceptable. It means cyclists can't reliably use their infrastructure. They have to be ready to bail out into traffic at any moment. That's not safe. That's not equitable.

AI-powered parking enforcement significantly outperforms traditional methods in coverage, consistency, operational hours, and labor cost reduction. Estimated data based on typical benefits.

How Hayden AI's Technology Compares to Traditional Enforcement

Let's do a quick comparison between AI-powered enforcement and the way Santa Monica (and most cities) currently enforce parking rules.

Traditional enforcement relies on parking officers walking neighborhoods or driving slowly down blocks, looking for violations. When they spot one, they document it and issue a ticket. This approach has several limitations.

First, it's labor-intensive. A single officer can cover maybe five or ten blocks in a shift. They can't be everywhere. If you park illegally and an officer isn't in that area during that time, you won't get a ticket. Drivers know this. They calculate the risk and find it acceptable.

Second, enforcement is inconsistent. Different officers might interpret the same situation differently. Some officers might aggressively enforce bike lane blocking. Others might focus on other violations. This creates disparities. The same violation might result in a ticket one day and be ignored the next day, depending on which officer is working.

Third, manual enforcement has bias built in. Research consistently shows that parking enforcement, like all enforcement, reflects the biases of the people doing the enforcing. If an officer has unconscious biases against certain types of vehicles or neighborhoods, those biases end up in enforcement patterns. An AI system, if properly trained, can reduce these biases, though it can't eliminate them entirely.

Fourth, traditional enforcement is expensive. You need to pay officers, provide vehicles, manage logistics. The cost per violation detected is significant. This limits how much enforcement a city can afford.

AI-powered enforcement changes these dynamics. A camera system captures data 24/7 or during designated hours. It doesn't get tired or distracted. It applies the same rules consistently to every vehicle. It's not perfect, but it's more consistent than human enforcement.

The cost structure is also different. The upfront cost is high: cameras, servers, software development. But the marginal cost of each additional vehicle or additional neighborhood is low. Once you've built the system, adding a new vehicle is just installing more hardware.

Hayden AI doesn't disclose pricing, but industry estimates suggest that AI parking enforcement systems cost between

However, AI enforcement has limitations too. It can't handle edge cases well. It can't make judgment calls. It can't understand context. If a car is parked in a bike lane because the driver is actively loading a wheelchair-accessible vehicle, should that be a violation? A human might make allowances. An AI system probably won't, unless it's specifically programmed to.

That's why the human review layer matters. Law enforcement reviews evidence before citations are issued. They can see if the AI flagged something that doesn't actually violate the rules. They can understand context. That human layer is essential for fairness.

But here's the tension: if human review is essential, and human review is slow and expensive, then cities might decide to skip it. They might fully automate enforcement to save money. That's when AI enforcement becomes problematic.

Privacy Concerns with Automated Enforcement

Let's be honest about the privacy side of this. An AI camera system on parking enforcement vehicles is surveillance technology. It's capturing images and video of license plates and vehicles. It's generating data about where cars are and what they're doing. Even if it only captures data when it detects a violation, it's still generating surveillance data.

There are legitimate privacy questions here. What happens to the data after enforcement is complete? How long is it retained? Who has access to it? Can it be used for other purposes? What's the oversight mechanism?

Cynthia Rose from Santa Monica Spoke acknowledged this. While warning of the potential misuse of bulk data collection in general, she was on board with Hayden AI's particular plan.

That's a careful position. It's saying: yes, we understand there are risks with bulk data collection in general, but we think this particular application is justified.

The key protection against misuse is limitation of scope. If the system only captures data when it detects violations, and those violation packages are reviewed and deleted if they don't result in citations, then the surveillance footprint is limited. You're not creating a permanent database of every car in the city.

But this requires trust in the implementation. It requires transparency about data retention. It requires audit trails showing what data was collected, when, and what happened to it. Santa Monica will need to establish data governance policies.

There's also the question of function creep. Today it's bike lane blocking. Tomorrow it's illegal parking generally. The day after tomorrow, it's traffic violations, red lights, speeding. Each expansion seems reasonable in isolation. But collectively, you end up with comprehensive surveillance of all vehicle movement.

This is actually happening in other cities. Automated traffic enforcement systems started with red light cameras. Now they're expanding to speed enforcement, parking enforcement, and general traffic monitoring. Once you have the camera infrastructure in place, the pressure to use it for other purposes becomes intense.

Government agencies argue that expanding the system's use makes sense because the hardware already exists. Private companies argue that they can sell more services if the system can detect multiple types of violations. The technology enables function creep, and function creep is hard to resist.

The other privacy concern is data breaches. Any system that stores license plate images and vehicle data is a target for hackers. There's a black market for license plate data. Insurance companies want it. Private investigators want it. If Hayden AI gets breached, sensitive location data could be exposed.

Hayden AI probably has reasonable security practices. But no system is completely secure. This is a real risk that cities need to factor in.

One approach to mitigating privacy concerns is transparency. Santa Monica should publish an annual report on AI enforcement data: how many violations were detected, how many resulted in citations, how many were reviewed and dismissed, what happened to the data, and how long it's retained. This transparency creates accountability.

Another approach is limiting retention periods. Delete video and license plate data after a certain time period unless it's part of an active enforcement case. Don't build permanent databases of vehicles and their locations.
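A retention rule like this is simple to express in code. The following is a minimal sketch, assuming each stored record carries a capture timestamp and a flag for whether it belongs to an active enforcement case. The 30-day window and the `Record` fields are hypothetical; the source does not state what retention period, if any, Santa Monica will use.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

RETENTION = timedelta(days=30)  # hypothetical policy value


@dataclass
class Record:
    record_id: str
    captured_at: datetime
    active_case: bool  # True while a citation or appeal is still open


def purge_expired(records: list[Record], now: datetime,
                  retention: timedelta = RETENTION) -> tuple[list[Record], list[str]]:
    """Split records into those kept and the IDs purged, so purges can be logged."""
    kept: list[Record] = []
    purged_ids: list[str] = []
    for r in records:
        if r.active_case or now - r.captured_at <= retention:
            kept.append(r)
        else:
            purged_ids.append(r.record_id)
    return kept, purged_ids


now = datetime(2025, 6, 1)
records = [
    Record("a", datetime(2025, 5, 25), active_case=False),  # recent, kept
    Record("b", datetime(2025, 3, 1), active_case=False),   # old, purged
    Record("c", datetime(2025, 3, 1), active_case=True),    # old but active, kept
]
kept, purged = purge_expired(records, now)
print([r.record_id for r in kept], purged)
```

Returning the purged IDs rather than silently dropping them gives the city something to put in an audit trail.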

A third approach is independent audit. Have a third party regularly audit the system to ensure it's being used as intended and not for function creep.

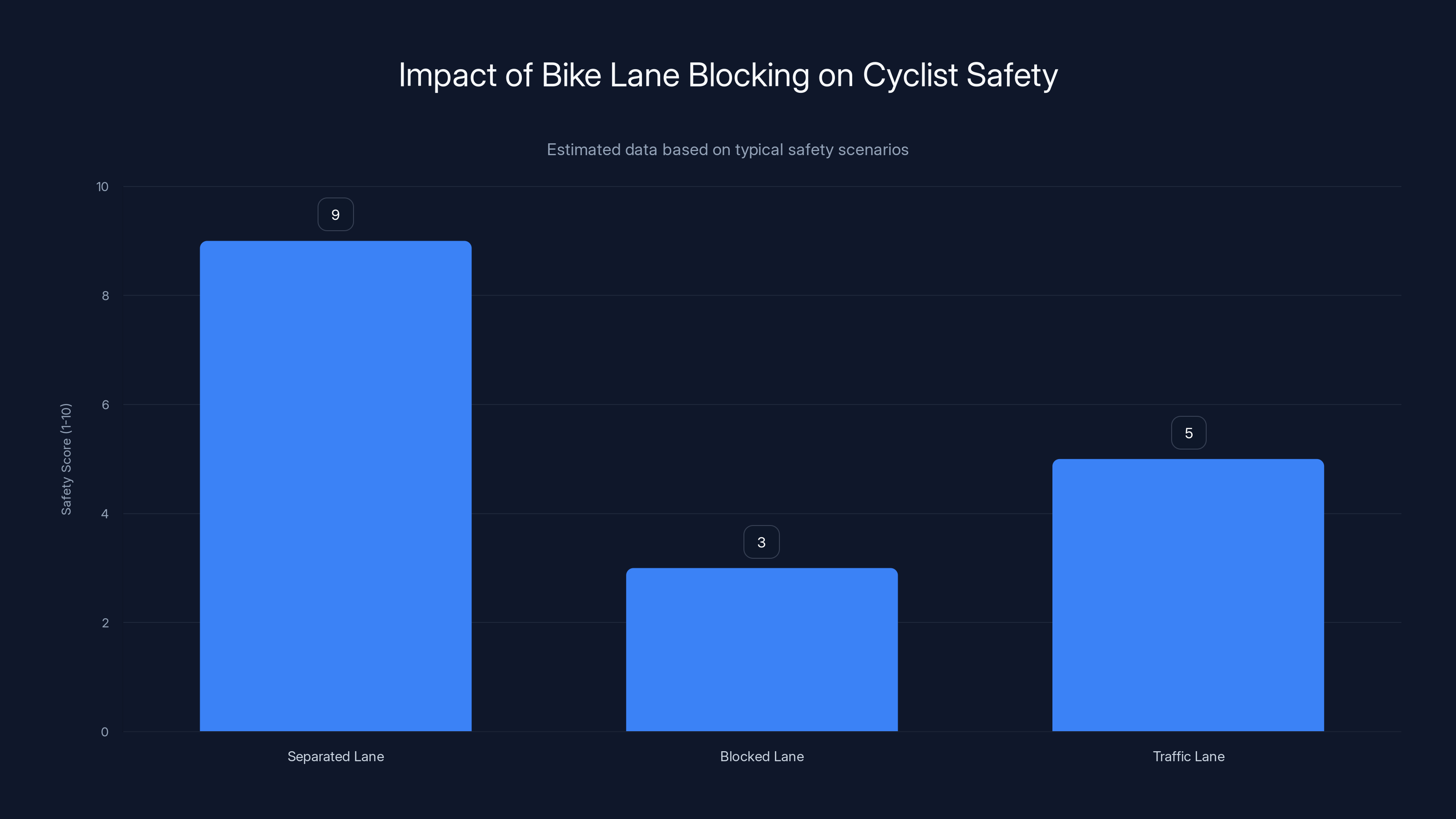

Separated bike lanes offer the highest safety, while blocked lanes significantly reduce safety, forcing cyclists into traffic. Estimated data.

Accuracy and Algorithmic Bias in AI Enforcement

Now let's talk about something that's really important but gets less attention than privacy: is the algorithm actually accurate, and does it have biases?

AI systems are only as good as their training data. If the system was trained primarily on images of common vehicles in certain neighborhoods, it might not accurately detect violations involving less common vehicles or in different contexts. If the training data has geographic biases, the system might work great in wealthy neighborhoods and terribly in poor neighborhoods.

This isn't theoretical. Facial recognition systems have well-documented racial biases. Some pedestrian-detection systems have been shown to perform unevenly across demographic groups. Automated hiring systems have reproduced discriminatory patterns. AI systems can inherit and amplify biases from their training data.

Hayden AI doesn't publish detailed information about their training data or validation metrics. We don't know how accurate their system is across different vehicle types, lighting conditions, or neighborhoods. We don't know if it has biases. The company should be transparent about this.

One specific concern: how does the system handle vehicles with obscured or damaged license plates? It's supposed to only flag violations where the license plate is clearly visible. But what if the algorithm occasionally fails to detect plates that are actually readable? Or what if it flags violations where the plate is genuinely unreadable?

The UC San Diego pilot detected 1,100 violations in 59 days. Did humans review all 1,100? How many were dismissed because the system made mistakes? What was the false positive rate? We don't have this data.

Another accuracy question: how does the system distinguish between different types of parked cars? A car actively being loaded is different from a car parked and abandoned. A delivery truck is different from a personal vehicle. Does the algorithm understand context, or does it just detect encroachment?

Hayden AI would argue that humans review all evidence before citations are issued, so false positives don't result in undeserved tickets. That's true, but it creates extra work. If the system has a 20% false positive rate, then law enforcement officers spend a lot of time reviewing and rejecting invalid violations.

There's also a subtler bias concern. If the system is more accurate in some neighborhoods than others, enforcement becomes geographically biased. Maybe the system was trained primarily on residential neighborhoods, so it works great there but fails in commercial or mixed-use areas. Or the reverse. This creates unequal enforcement.

Hayden AI should publish validation data showing how well their system works across different conditions. They should test the system in different neighborhoods and show that accuracy is consistent. They should publish statistics on human review and override rates.
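One way to make the "override rate" statistic concrete: group human review outcomes by neighborhood and compare the share of flagged violations that reviewers dismissed. This is a hedged sketch with made-up data. The function names and neighborhood labels are hypothetical, and a dismissal is only a proxy for a false positive.

```python
from collections import defaultdict
from typing import Iterable


def override_rates(reviews: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """reviews: (neighborhood, was_dismissed) pairs from human review."""
    totals: dict[str, int] = defaultdict(int)
    dismissed: dict[str, int] = defaultdict(int)
    for neighborhood, was_dismissed in reviews:
        totals[neighborhood] += 1
        if was_dismissed:
            dismissed[neighborhood] += 1
    return {n: dismissed[n] / totals[n] for n in totals}


def rate_gap(rates: dict[str, float]) -> float:
    """Spread between the best and worst neighborhood; large gaps merit an audit."""
    return max(rates.values()) - min(rates.values())


# Illustrative data only: 1 of 10 dismissed downtown, 4 of 10 in a mixed-use area.
sample = ([("downtown", False)] * 9 + [("downtown", True)]
          + [("mixed_use", False)] * 6 + [("mixed_use", True)] * 4)

rates = override_rates(sample)
print(rates)
print(f"gap: {rate_gap(rates):.2f}")
```

A large gap between neighborhoods would not prove bias on its own, but it is the kind of number a city or auditor could ask a vendor to explain.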

Without this transparency, we're asking cities to deploy enforcement technology without knowing if it actually works as promised. That's not a reasonable ask.

The Expansion of AI Enforcement Beyond Parking

Santa Monica's parking enforcement deployment is significant, but it's also part of a much larger trend toward AI-based enforcement across multiple domains.

Hayden AI started with bus-based enforcement. Buses already have cameras for safety and security. Adding computer vision to detect violations was a natural extension. The company now operates systems in multiple cities, with 2,000+ installations as of late 2025.

But parking enforcement on buses is just one application. The same technology can be adapted to detect other violations. Speeding. Running red lights. Seatbelt violations. Illegal turns. Any violation that's visible on video can potentially be automated.

Some jurisdictions are already doing this. Automated red light cameras are common. Speed enforcement cameras exist in many countries. Parking enforcement cameras are expanding. As the technology improves and costs decrease, more cities will adopt it.

The question is whether this expansion is happening with proper oversight and public input. Are cities making deliberate decisions to deploy AI enforcement, or is it happening incrementally as vendors propose new capabilities?

There's also the question of who's pushing this expansion. Is it cities wanting to improve safety and enforcement? Is it private companies wanting to sell more products? Is it a combination?

Hayden AI is a private company. They have an incentive to expand deployment and expand the scope of what their systems can detect. The more cities they operate in, the more data they collect, the better their algorithms become. That's good for their business but potentially problematic for privacy and civil liberties.

There's an information asymmetry here. Hayden AI knows a lot about their technology, its capabilities and limitations. Cities have much less information. They're making decisions based on vendor pitches and limited public data.

This suggests that cities should require independent validation of AI enforcement systems before deployment. Have a third party test the accuracy. Have a third party evaluate the privacy implications. Have a third party audit the training data for biases. Don't just take the vendor's word for it.

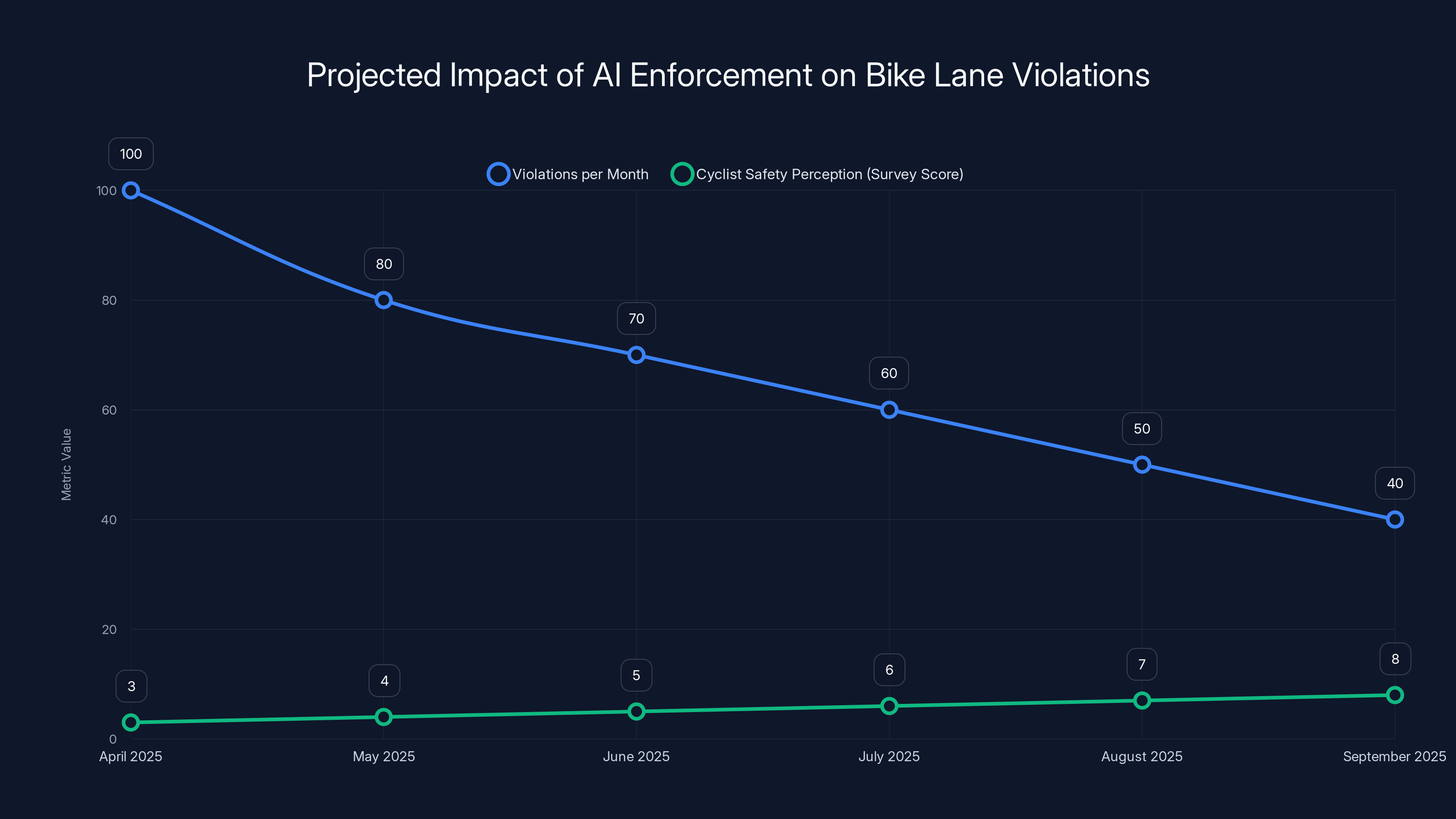

Estimated data suggests a decrease in bike lane violations and an increase in cyclist safety perception over six months following AI enforcement deployment.

How Cities Are Preparing for AI Enforcement Deployment

Santa Monica didn't just decide one day to deploy AI enforcement. There was a process, albeit not a fully transparent one.

First, the city identified a problem. Bike lane blocking was common, enforcement was ineffective. The city looked for solutions. Hayden AI proposed their technology.

Second, there was probably some evaluation period. The city likely looked at pilots in other cities, talked to Hayden AI, discussed the technology with cycling advocates and other stakeholders. This process probably took months.

Third, there was a decision to proceed. The city approved the deployment, acquired the hardware and software, and began implementation.

What we don't know is how much public input happened at each stage. Was there a community board meeting where residents could ask questions? Was there a public comment period? Were there concerns raised and addressed?

Hayden AI mentions that Cynthia Rose from Santa Monica Spoke was supportive, but were there other stakeholder groups? What about privacy advocates? What about communities that might be disproportionately affected by enforcement?

For other cities considering similar deployments, there's a better process:

Stage 1: Problem Definition. Clearly define the problem you're trying to solve. How common is bike lane blocking? What's the impact? How effective is current enforcement? What are the barriers to better enforcement?

Stage 2: Solution Evaluation. Look at multiple approaches. Is AI enforcement the only option? Could you solve the problem with better funded manual enforcement? With public awareness campaigns? With infrastructure changes? What are the trade-offs?

Stage 3: Pilot Program. If you decide to try AI enforcement, start with a limited pilot. One or two vehicles. One neighborhood. Clearly defined time period. Built-in evaluation metrics.

Stage 4: Stakeholder Input. During and after the pilot, gather input from cyclists, residents in the pilot area, civil liberties groups, and enforcement personnel. What worked? What didn't? What concerns emerged?

Stage 5: Independent Evaluation. Have a third party evaluate the pilot. How accurate was the system? Did it have biases? What was the cost per violation detected? Did it actually change behavior?

Stage 6: Policy Development. Based on the pilot and independent evaluation, develop clear policies on how the technology will be used, what oversight mechanisms exist, how data will be retained and protected, and what triggers would prompt ending the program.

Stage 7: Full Deployment. Only after all the above steps should you move to broader deployment.

We don't know if Santa Monica followed all these steps. From the public information available, it seems like the decision moved fairly quickly. That's not necessarily bad, but it suggests limited public process.

Cities thinking about deploying similar technology should move more deliberately. Get the process right. Build public trust. Make informed decisions based on data and evidence.

The Role of Bike Advocacy in AI Enforcement Policy

One of the more interesting aspects of the Santa Monica story is that the local bike advocacy group, Santa Monica Spoke, appears to support the AI enforcement deployment. This is somewhat unexpected because bike advocates often focus on building infrastructure, not on enforcement.

But it makes sense when you think about it. You can build all the protected bike lanes you want, but if cars park in them regularly, the infrastructure is useless. Enforcement is the other half of the equation.

Cynthia Rose's quote is telling: "Enforcement cannot be everywhere at once. If we can extend their arm so these things can get done, and keep our community safe, that is a win."

This is a pragmatic assessment. Manual enforcement doesn't work at the scale needed. AI enforcement could work. Therefore, support AI enforcement. This reasoning is hard to argue with from a practical standpoint.

However, it also points to a potential concern. If bike advocates support AI enforcement, they might not push as hard for oversight and privacy protections. They might accept problematic implementations because they're focused on the enforcement benefit.

There's a tension here that's worth noting. Effective enforcement requires a strong legal framework and consistent application of rules. AI can help with consistency. But privacy and civil liberties also require strong protections. If advocates are focused purely on enforcement benefits, those protections might be neglected.

A healthier approach would be for bike advocates to support AI enforcement while also pushing hard for privacy protections, transparency, and oversight. "We want enforcement, and we want it to respect privacy and civil liberties. We want to see the data. We want independent audits. We want to know how many violations are false positives. We want to understand how the algorithm works."

That kind of principled support is harder than blanket support, but it's more valuable in the long run.

Bike advocates in other cities considering similar deployments should think carefully about this. Your goal is safer cycling infrastructure. AI enforcement might help achieve that goal, but it shouldn't blind you to the broader implications.

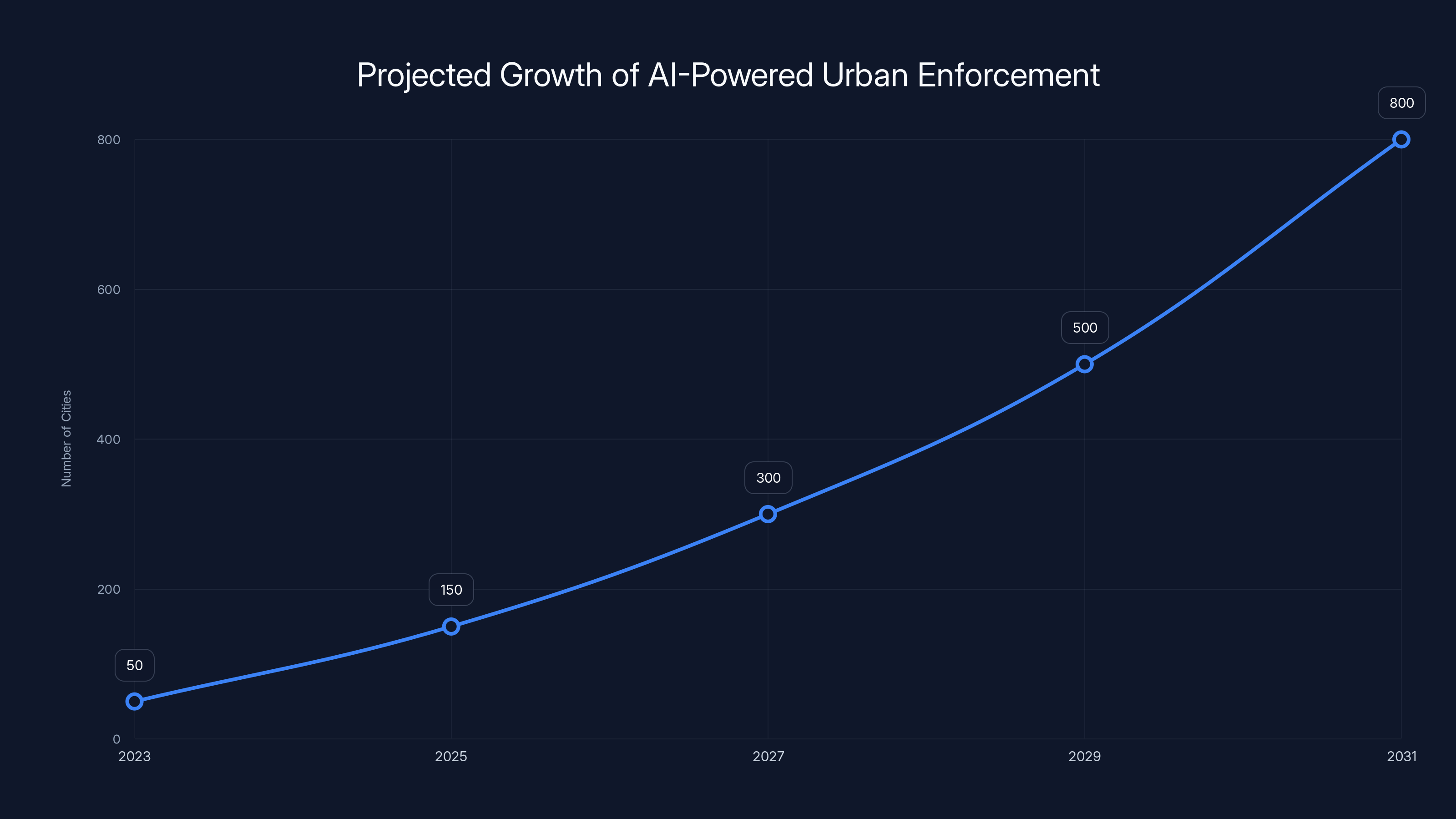

Estimated data shows a significant increase in the number of cities adopting AI-powered enforcement, from 50 in 2023 to 800 by 2031. This growth reflects the expanding capabilities and acceptance of AI in urban management.

Measuring Success: What Metrics Matter for AI Enforcement

If Santa Monica deploys AI enforcement in April 2025, how will we know if it worked? What metrics matter?

Obviously, the first metric is violations detected and citations issued. If the system is working, we should see more citations for bike lane blocking than before. But this metric alone doesn't tell us much. More citations could mean more violations, or it could just mean the system is catching things that were previously missed.

A better metric is the violation rate over time, ideally normalized by how much ground the enforcement vehicles cover. Are bike lane blockages decreasing? If enforcement creates deterrent effects, we should see fewer violations as time goes on. Month one might show high violation rates because drivers don't know enforcement exists. Month six should show lower rates as drivers learn that blocking bike lanes results in tickets.
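As a rough illustration of that trend check, here is a minimal Python sketch. It assumes the city logs flagged violations and patrol hours per month; the function name and every figure below are hypothetical, not Santa Monica data.

```python
def violation_rate(violations: int, patrol_hours: float) -> float:
    """Violations observed per patrol hour, so changes in coverage don't skew the trend."""
    if patrol_hours <= 0:
        raise ValueError("patrol_hours must be positive")
    return violations / patrol_hours

# Hypothetical monthly figures: (violations flagged, patrol hours). Illustration only.
monthly = {
    "month_1": (420, 1400.0),
    "month_6": (210, 1500.0),
}

rates = {month: violation_rate(v, h) for month, (v, h) in monthly.items()}
change = (rates["month_6"] - rates["month_1"]) / rates["month_1"]
print(f"{rates['month_1']:.2f} -> {rates['month_6']:.2f} per patrol hour ({change:+.0%})")
```

Dividing by patrol hours is one possible normalization. Lane-miles scanned would work too, and either choice keeps a busier patrol schedule from looking like a crime wave.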

Another important metric is behavior change. This is tricky to measure directly, but you can approximate it. Survey cyclists about their experience in bike lanes. Ask them if they feel safer. Ask them if they have to merge into traffic less often. If enforcement is working, cyclists should report fewer obstructions.

You should also track false positive rates. How many violations flagged by the system are dismissed by law enforcement after review? If this rate is high, the system is creating work for enforcement without actually catching real violations.
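A simple way to approximate this is the share of flagged events that reviewers dismiss. The sketch below assumes the city records both counts; the class name and the numbers are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class ReviewTally:
    flagged: int    # evidence packages the system sent for human review
    dismissed: int  # packages reviewers rejected as not a violation

    def dismissal_rate(self) -> float:
        """Share of flagged events dismissed on review (a proxy for false positives)."""
        if self.flagged == 0:
            return 0.0
        return self.dismissed / self.flagged

tally = ReviewTally(flagged=1000, dismissed=80)  # hypothetical counts
print(f"Dismissal rate: {tally.dismissal_rate():.1%}")
```

Treat this as a proxy rather than a true false positive rate. Reviewers may dismiss a valid detection for discretionary reasons, and the number says nothing about violations the cameras missed entirely.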

Cost metrics matter too. How much does the system cost per violation detected? How much does it cost per citation issued? How does this compare to manual enforcement? If AI enforcement costs more per violation than manual enforcement, it's not a cost-effective solution, even if it's more consistent.
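The comparison itself is simple arithmetic, sketched below in Python. All dollar amounts and citation counts are made up for illustration, and the function name is hypothetical.

```python
def cost_per_citation(total_cost: float, citations_issued: int) -> float:
    """Total program cost divided by citations issued over the same period."""
    if citations_issued <= 0:
        raise ValueError("citations_issued must be positive")
    return total_cost / citations_issued

# Hypothetical annual figures, for illustration only.
ai_cost = cost_per_citation(total_cost=300_000.0, citations_issued=12_000)
manual_cost = cost_per_citation(total_cost=450_000.0, citations_issued=9_000)

print(f"AI-assisted: ${ai_cost:.2f} per citation")
print(f"Manual:      ${manual_cost:.2f} per citation")
```

For a fair comparison, the AI-assisted total should include everything: hardware, software contracts, and the staff time spent reviewing evidence packages, not just the vendor invoice.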

Safety metrics are crucial but harder to measure. Did the deployment result in fewer cyclist-car collisions? This is tricky because bike collisions are rare and affected by many factors beyond bike lane obstruction. You'd need years of data to see a statistical signal. But it's still worth tracking.

Equity metrics should also be part of the evaluation. Is enforcement distributed evenly across neighborhoods? Is any geographic area being disproportionately targeted? Is enforcement concentrated in wealthy areas? Poor areas? This matters because it affects public perception of fairness.
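One way to start answering those questions is to compare each neighborhood's citation rate with the citywide rate. The following is a minimal sketch under assumed inputs: the neighborhood labels, citation counts, and patrol hours are all hypothetical.

```python
def disparity_ratios(citations: dict[str, int],
                     patrol_hours: dict[str, float]) -> dict[str, float]:
    """Each neighborhood's citations per patrol hour, relative to the citywide rate."""
    citywide = sum(citations.values()) / sum(patrol_hours.values())
    return {
        name: (citations[name] / patrol_hours[name]) / citywide
        for name in citations
    }

# Hypothetical data, for illustration only.
citations = {"A": 120, "B": 60, "C": 20}
patrol_hours = {"A": 400.0, "B": 400.0, "C": 200.0}

for name, ratio in disparity_ratios(citations, patrol_hours).items():
    print(f"Neighborhood {name}: {ratio:.2f}x the citywide rate")
```

A ratio well above 1.0 is a prompt for investigation, not proof of bias, since some areas may simply have more violations. The patrol hours themselves deserve the same scrutiny, because where the vehicles drive is also an enforcement decision.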

The mix of cited vehicles could also reveal biases. Are certain types of vehicles being cited more frequently than others? Are commercial vehicles being targeted disproportionately? These patterns could indicate algorithmic bias or human bias in the review process.

Public perception metrics matter too. Do cyclists feel safer? Do residents support the program? Do people trust that it's fair? Public trust is essential for long-term acceptance of any enforcement program.

Santa Monica should measure all of these metrics and publish regular reports. This transparency creates accountability and allows the public to see if the program is actually achieving its goals.

Future Prospects for AI-Powered Urban Enforcement

Looking ahead, AI-powered enforcement is going to expand significantly. The technology is getting better, costs are coming down, and more cities are discovering that it solves real enforcement problems that manual enforcement can't solve.

We'll probably see AI enforcement expand beyond parking violations. Traffic enforcement is a logical next step. Speed cameras are already automated in many places. Imagine those systems getting smarter, incorporating vehicle classification, context understanding, and more sophisticated analysis. The extreme version would be automated traffic courts where citations are issued without human review, a scenario that safeguards should hopefully prevent.

Self-driving cars will change the game entirely. Once most vehicles are autonomous, enforcement shifts from individual drivers to fleet operators and manufacturers. The targets change, and so do the dynamics.

But in the near term (next 5-10 years), we'll see AI enforcement proliferate in cities worldwide. It will start with the low-hanging fruit: bike lane blocking, bus lane violations, obvious parking violations. It will expand from there.

The question is whether this expansion will happen thoughtfully or carelessly. Will cities demand independent validation and transparency? Will they implement robust oversight mechanisms? Will they consider privacy implications? Or will they just deploy whatever vendors propose?

I think the answer depends on how cities handle the early deployments. If Santa Monica's program works well, is transparent, and generates public trust, other cities will follow and may implement similar programs thoughtfully. If it generates controversy, privacy concerns, or public backlash, cities will be more cautious.

What would cause backlash? False positives that result in unjust citations. Evidence of algorithmic bias. Function creep beyond bike lane blocking. Data breaches. Lack of transparency. Any of these could sour the public on AI enforcement.

Longer term, I think we'll see a bifurcation. Progressive, well-funded cities with strong civic institutions will implement AI enforcement thoughtfully, with proper oversight and transparency. Desperate cities with weak institutions might implement it carelessly, prioritizing revenue over fairness.

This worries me because enforcement is inherently about power. Government has the power to fine people and restrict their movement. When you automate that power, you need to be extra careful about oversight and fairness. The potential for abuse increases.

The Business Model Behind AI Enforcement

Let's talk about who's making money here and what their incentives are, because this matters for understanding how the technology develops.

Hayden AI is a private company. Their business model appears to be selling cameras, software, and support services to cities and transit agencies. They don't make money directly from citations. They make money from city contracts.

This means their incentive is to get cities to deploy their technology, not necessarily to maximize revenue from enforcement. That's actually better than a model where the company makes money from citations, which would incentivize aggressive or unfair enforcement.

But there's still an incentive to expand the scope of what the technology can detect. If Hayden AI can convince cities that their system can detect not just bike lane violations but speeding, red light running, and other violations, they can justify higher prices and bigger contracts.

This is where the incentives become problematic. From Hayden AI's perspective, the more violations their system can detect, the more valuable it is. From a city's perspective, the more violations they can enforce, the more revenue they can generate. These incentives can align to create aggressive enforcement.

But from a fairness and privacy perspective, more enforcement isn't always better. Automated enforcement of minor violations could lead to over-policing of poor communities and disproportionate impacts on vulnerable populations.

There's also a question of data ownership. Who owns the vehicle tracking data generated by these systems? Hayden AI? The city? If Hayden AI retains ownership, they could potentially use the data for other purposes. They could sell aggregated insights to insurers, advertisers, or data brokers. They could use it to train better algorithms that they then sell to other companies.

Data from these systems is valuable. Vehicle movement patterns reveal a lot about human behavior. Cities should carefully consider who owns this data and what restrictions are placed on its use.

Comparing Santa Monica's Approach to Other Cities

Santa Monica isn't the first city to deploy AI enforcement technology. Let's look at how its approach compares to what other cities have done.

Oakland and Sacramento already have Hayden AI's systems on buses. New York City, Washington DC, and Philadelphia have installations. Other cities are piloting different AI enforcement technologies.

What varies across cities is how transparent and thoughtful they've been about deployment. Some cities have conducted public processes, gathered input from stakeholders, and published data on effectiveness. Others have deployed quietly with minimal public visibility.

Santa Monica's deployment appears to be public, with local advocates supporting it. That's better than some other deployments, but we don't have details on how thorough the public process was.

Compared with those cities, Santa Monica is positioning itself as a progressive city using technology to improve safety. That's good marketing. But the question is whether the implementation is actually fair and effective.

Other cities might learn from Santa Monica's experience. If the program succeeds, it could become a model for other cities. If it fails or generates backlash, other cities will be more cautious.

What would make Santa Monica's program a model worth replicating? Transparency about performance data. Clear policies on data retention and use. Independent oversight. Evidence of fairness across neighborhoods. Measurable improvements in cyclist safety. Public acceptance and trust.

What would make it a cautionary tale? High false positive rates. Evidence of bias. Privacy violations. Function creep beyond bike lane enforcement. Public backlash. Lack of transparency.

It's too early to say which direction this will go. The program hasn't launched yet. But it's worth watching.

Challenges and Limitations of AI Parking Enforcement

Let's be realistic about what AI enforcement can and can't do.

It can detect violations at scale and consistently. It can cover more territory than manual enforcement. It can work 24/7. It doesn't get tired or biased in obvious ways.

But it has real limitations.

It can't understand context. A wheelchair-accessible vehicle loading a passenger might encroach slightly on a bike lane. A human officer might decide not to ticket it. An AI system probably will flag it, unless specifically programmed to recognize and account for this scenario.

It can't handle edge cases. What about delivery vehicles that need to stop briefly in bike lanes? What about emergency vehicles? What about vehicles that were towed into a bike lane? There are countless scenarios where the right enforcement decision requires human judgment.

It can't adapt to changing rules on its own. If the city decides to temporarily allow parking in bike lanes during a specific event, the AI system needs to be reconfigured. Manual enforcement can adapt more flexibly.

It depends entirely on camera quality, angle, and placement. If a camera misses an area or has a bad angle, violations in that area won't be detected. This creates uneven enforcement.

It can be gamed. If people learn how to park in ways that the camera doesn't detect (certain angles, certain lighting conditions), they can avoid the system.

Most importantly, AI enforcement doesn't address the root cause. Why are people parking in bike lanes? Because there's no parking elsewhere. Because they're in a hurry. Because they don't care. Citations might deter some people, but if the underlying incentive structure doesn't change, some people will keep doing it and just accept the fine as a cost of doing business.

This is why enforcement alone isn't sufficient. You need supply-side solutions too. More parking where people actually need it. Better-designed neighborhoods that discourage car-dependent trips. Public transit that makes driving unnecessary.

AI enforcement can be part of the solution, but it's not the complete solution.

The Politics of AI Enforcement

AI enforcement is political, even though it's often presented as neutral and objective. It's political because enforcement is inherently political.

Consider what Santa Monica is choosing to enforce. Bike lane blocking. This reflects the city's values. Santa Monica has invested in cycling infrastructure. They want people to use it. They're willing to enforce rules to make that happen.

Another city might choose to enforce different violations. Illegal street vending. Camping in public spaces. Loitering. These are more political choices. They reflect different values about how to govern public space.

The technology itself is neutral. A camera is a camera. But the decision to deploy it, where to deploy it, what violations to target, and how aggressively to enforce are all political choices.

This matters because it means the deployment of AI enforcement requires democratic legitimacy. People should have input into what gets enforced and how. The decision shouldn't be made by technocrats and corporate vendors alone.

There's a risk that AI enforcement becomes a way to bypass democratic processes. A city council debates whether to increase parking enforcement and decides against it. But then a private company proposes an automated system that avoids the political debate. The system gets deployed with less scrutiny.

Or consider this scenario: a poor neighborhood starts getting heavily enforced by AI cameras. Residents object. Advocacy groups fight the enforcement. But the city decides the system is efficient and revenue-generating, so it stays. This could create a perception of unfair enforcement and erode public trust.

For these reasons, the deployment of AI enforcement should go through normal democratic processes. City council approval. Budget approval. Public comment periods. Oversight mechanisms. It shouldn't be positioned as a neutral technical decision made by experts.

Santa Monica might be following this process, though we don't have complete information. But it's important for other cities considering similar deployments to ensure they're doing so democratically.

Implications for Cycling and Urban Transportation

If AI enforcement actually works to reduce bike lane blocking, the implications for cycling are significant.

One implication is that cycling infrastructure becomes more reliable and usable. Cyclists know their lane will be protected from cars. They can use cycling as a viable transportation mode, not just on good days when enforcement happens to be present.

This could lead to mode shift. More people cycling instead of driving. This has huge implications for transportation, emissions, urban design, and public health.

But it depends on cycling infrastructure actually being good. A bike lane is only attractive if it goes somewhere people want to go, is safe, and is pleasant to use. You can't enforce your way to cycling adoption if the infrastructure is lousy.

Another implication is that bike lanes become normalized. When violations are actually enforced, people start treating bike lanes like they treat other traffic lanes. They stop parking in them. They stop ignoring them. Infrastructure gains social legitimacy.

This normalization can spill over into attitudes about cycling generally. If the city is serious enough about cycling to enforce bike lane violations, the signal is that cycling is an important mode. This can shift cultural attitudes.

Longer term, if enforcement is successful in protecting bike lanes, more cities will build more bike lanes. The case for cycling infrastructure becomes stronger when you can actually enforce it.

The flip side: if enforcement is seen as oppressive or unfair, it could create backlash against cycling infrastructure and cyclists generally. People might resent cyclists who they perceive as demanding special protected lanes that are aggressively enforced.

So the fairness and transparency of enforcement matters not just for principled reasons but for practical reasons. How enforcement is perceived affects whether people support cycling infrastructure.

Looking Forward: What We Need to Solve

As Santa Monica and other cities move forward with AI enforcement, there are several things that need to happen to make it work well.

First, transparency. Cities need to publish data on how many violations are detected, cited, and dismissed. They need to show that enforcement is consistent across neighborhoods. They need to explain how the technology works and what it can and can't do.

Second, oversight. There should be independent oversight of AI enforcement systems. Third parties should regularly audit accuracy, check for biases, and verify that systems are being used as intended.

Third, limits. Cities should clearly define what the technology can be used for. Bike lane blocking, yes. Speeding, maybe. But the scope should be narrowly defined and changed only through public process.

Fourth, data governance. Clear policies on data retention, ownership, and use. Data should be deleted after enforcement actions are complete unless there's an active legal proceeding. Aggregated data can be published, but personal data should be protected.
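A retention rule like this can be written down precisely, which makes it easier to audit. The sketch below is one possible encoding in Python. The record fields and the 30-day grace period are assumptions for illustration, not anything Santa Monica or Hayden AI has published.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Assumed grace period after an enforcement action closes. Hypothetical value.
GRACE_PERIOD = timedelta(days=30)

@dataclass
class EvidenceRecord:
    record_id: str
    closed_at: Optional[datetime]  # when the enforcement action finished; None if still open
    legal_hold: bool               # True while an active legal proceeding needs the data

def should_delete(record: EvidenceRecord, now: datetime) -> bool:
    """Delete only closed records that are past the grace period and not on legal hold."""
    if record.legal_hold or record.closed_at is None:
        return False
    return now - record.closed_at >= GRACE_PERIOD

now = datetime(2025, 6, 1)
closed = EvidenceRecord("rec-1", closed_at=datetime(2025, 4, 1), legal_hold=False)
held = EvidenceRecord("rec-2", closed_at=datetime(2025, 4, 1), legal_hold=True)
print(should_delete(closed, now), should_delete(held, now))
```

The point of the design is that deletion is the default and retention needs a reason, rather than the other way around.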

Fifth, appeals process. People cited by automated enforcement should have a clear, accessible appeals process. They should be able to contest citations and have them reviewed by humans.

Sixth, community benefits. Cities deploying enforcement should also invest in cycling infrastructure, safety programs, and community benefits. Enforcement alone creates resentment. Enforcement paired with investment builds support.

If Santa Monica implements these elements, their program could be a model for other cities. If they skip these elements, the program could create problems that take years to fix.

FAQ

What is AI-powered parking enforcement for bike lanes?

AI-powered parking enforcement uses computer vision cameras mounted on vehicles to automatically detect when cars are parked illegally in bike lanes or transit zones. The system captures video evidence and the vehicle's license plate, generating an evidence package that human enforcement officers review before issuing citations. The technology combines artificial intelligence with human oversight to provide consistent, efficient enforcement at scale.

How does Hayden AI's technology work in practice?

Hayden AI's system first maps a city's bike lanes and parking restrictions, then trains its AI to recognize violations based on local rules. When a camera detects a vehicle encroaching on a bike lane with a visible license plate, it captures a 10-second video clip. This evidence package is sent to law enforcement for verification before any citation is issued. Importantly, the system only captures data when it detects a suspected violation, not continuously.
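The review step can be pictured as a gate between detection and citation. The Python sketch below is purely illustrative: it is not Hayden AI's software or API, and every name in it (`EvidencePackage`, `Decision`, `issue_citations`, the sample plates and locations) is hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Iterable

class Decision(Enum):
    CONFIRMED = "confirmed"
    DISMISSED = "dismissed"

@dataclass(frozen=True)
class EvidencePackage:
    plate: str         # license plate read from the clip
    clip_seconds: int  # length of the captured video clip
    location: str      # where the suspected violation was recorded

def issue_citations(packages: Iterable[EvidencePackage],
                    review: Callable[[EvidencePackage], Decision]) -> list[EvidencePackage]:
    """Return only the packages a human reviewer confirmed; nothing is cited automatically."""
    return [p for p in packages if review(p) is Decision.CONFIRMED]

# Stand-in for a human reviewer's judgment, used here only to make the sketch runnable.
def example_reviewer(package: EvidencePackage) -> Decision:
    return Decision.DISMISSED if package.location == "loading zone" else Decision.CONFIRMED

flagged = [
    EvidencePackage("ABC123", 10, "bike lane"),
    EvidencePackage("XYZ789", 10, "loading zone"),
]
cited = issue_citations(flagged, example_reviewer)
print([p.plate for p in cited])
```

The structure matters more than the details: the detection system produces candidates, and only a human decision turns a candidate into a citation.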

What are the main benefits of AI parking enforcement?

AI enforcement provides several advantages over traditional manual enforcement: it covers more territory efficiently, applies rules more consistently than individual officers can, works 24/7, and reduces the labor costs associated with enforcement. The technology can catch violations that officers might miss, creating a stronger deterrent effect that encourages compliance with bike lane protection rules and improves safety for cyclists and transit users.

What privacy concerns exist with automated enforcement cameras?

The primary concerns are data collection scope, retention periods, and potential misuse. Cities must establish clear policies on how long vehicle data is stored, who has access to it, and whether it can be used for purposes beyond the original enforcement. Function creep, where enforcement systems gradually expand to monitor additional violations, is a real risk. There's also always potential for data breaches that could expose license plate information and location data to unauthorized parties.

How can cities ensure AI enforcement is fair and unbiased?

Cities should require independent validation of AI systems before deployment, including testing for accuracy across different vehicle types and neighborhoods. They should mandate transparency through published reports on violation detection rates, false positive rates, and enforcement patterns by geography. Human review of all enforcement actions creates an essential accountability layer, and regular audits can identify and correct algorithmic biases.

What happens when AI enforcement systems flag violations that aren't actually violations?

This is handled through human review. Law enforcement officers examine the evidence package generated by the AI system and verify that an actual violation occurred before issuing a citation. If the AI made a mistake or missed context (such as a wheelchair-accessible vehicle briefly loading a passenger), the officer can dismiss the flagged violation. High false positive rates waste enforcement resources and suggest the system needs improvement or retraining.

Could AI enforcement systems expand beyond bike lane violations?

Yes, this is a real concern. Once the camera infrastructure and AI systems are in place, there's pressure to expand to speeding, red lights, and other violations. This function creep can happen incrementally without significant public input. Cities should establish clear limits on what enforcement targets are allowed and require public process to change those limits.

How does AI enforcement compare to hiring more parking enforcement officers?

AI enforcement is likely to be more cost-effective at scale once infrastructure is built, with lower per-violation costs than manual enforcement, though cities should verify this with their own cost data. However, it sacrifices flexibility and context understanding. Manual enforcement can make judgment calls and handle edge cases, while AI cannot. The best approach likely combines both, using AI to maximize coverage while retaining human officers for situations requiring nuance and discretion.

Conclusion

Santa Monica's decision to deploy AI-powered parking enforcement on seven vehicles starting in April 2025 represents a significant milestone in the evolution of urban enforcement technology. It's not the first city to use AI for enforcement, but it is the first to apply the technology specifically to bike lane blocking on parking enforcement vehicles at this scale.

The decision makes sense. Bike lane blocking is a genuine problem that traditional enforcement hasn't solved. Cyclists can't reliably use protected infrastructure if cars park in the lanes. Santa Monica has invested millions in cycling infrastructure. Protecting that investment through enforcement is rational.

The technology works. Hayden AI has installations in dozens of cities and has accumulated significant real-world data. The system can detect violations at scale, apply rules consistently, and provide evidence packages for human review. It's not perfect, but it's better than current alternatives in many ways.

But the technology also raises legitimate concerns. Privacy. Fairness. Function creep. Data governance. These concerns are serious and deserve careful attention. They're not reasons to reject AI enforcement outright, but they are reasons to deploy it thoughtfully.

The key variables for success are transparency, oversight, and community trust. If Santa Monica handles these well, the program can be a model for other cities. If not, it could become a cautionary tale about technology deployed without sufficient safeguards.

The broader story here is about how cities adapt to technology and make governance decisions. AI enforcement is one of many domains where cities are deploying automated systems. The question is whether they're doing so deliberately and democratically, or reactively and carelessly.

For now, all eyes are on Santa Monica. The city is taking a calculated risk. If it works well and generates public trust, the expansion of AI enforcement into other cities will probably accelerate. If it fails or generates backlash, cities will be more cautious.

One thing is certain: bike lane blocking isn't going away on its own. Cities serious about cycling infrastructure will need to enforce the rules protecting it. Whether they use AI, humans, or a combination of both is a choice cities need to make thoughtfully. Santa Monica is making that choice. It will be interesting to see how it works out.

Key Takeaways

- Santa Monica becomes first city to deploy Hayden AI cameras on parking enforcement vehicles to detect bike lane violations in real time

- AI enforcement provides consistent, 24/7 monitoring across larger areas than manual enforcement but requires human review before citations

- UC San Diego pilot detected 1,100+ violations in 59 days with 88% involving bike lane blocking, demonstrating prevalence of the problem

- Privacy concerns around data collection, retention, and potential function creep require clear governance policies and independent oversight

- Algorithmic accuracy and potential bias must be validated through independent testing before and after deployment
