AI Security: Understanding and Mitigating Risks in the AI Era [2025]

In the rapidly evolving world of artificial intelligence, security often takes a backseat to innovation. But as recent events have shown, assuming that AI tools are secure by default can lead to significant vulnerabilities.

TL;DR

- AI tools are not automatically secure: Recent flaws in AI systems like ChatGPT highlight the need for vigilance.

- Data leakage is a major risk: AI systems can inadvertently expose sensitive information.

- Regular updates and patches are essential: Keeping AI tools updated is crucial to maintaining security.

- User awareness is key: Educating users about potential risks helps mitigate security breaches.

- Future trends include more robust AI security frameworks: As AI adoption grows, so will the focus on securing these systems.

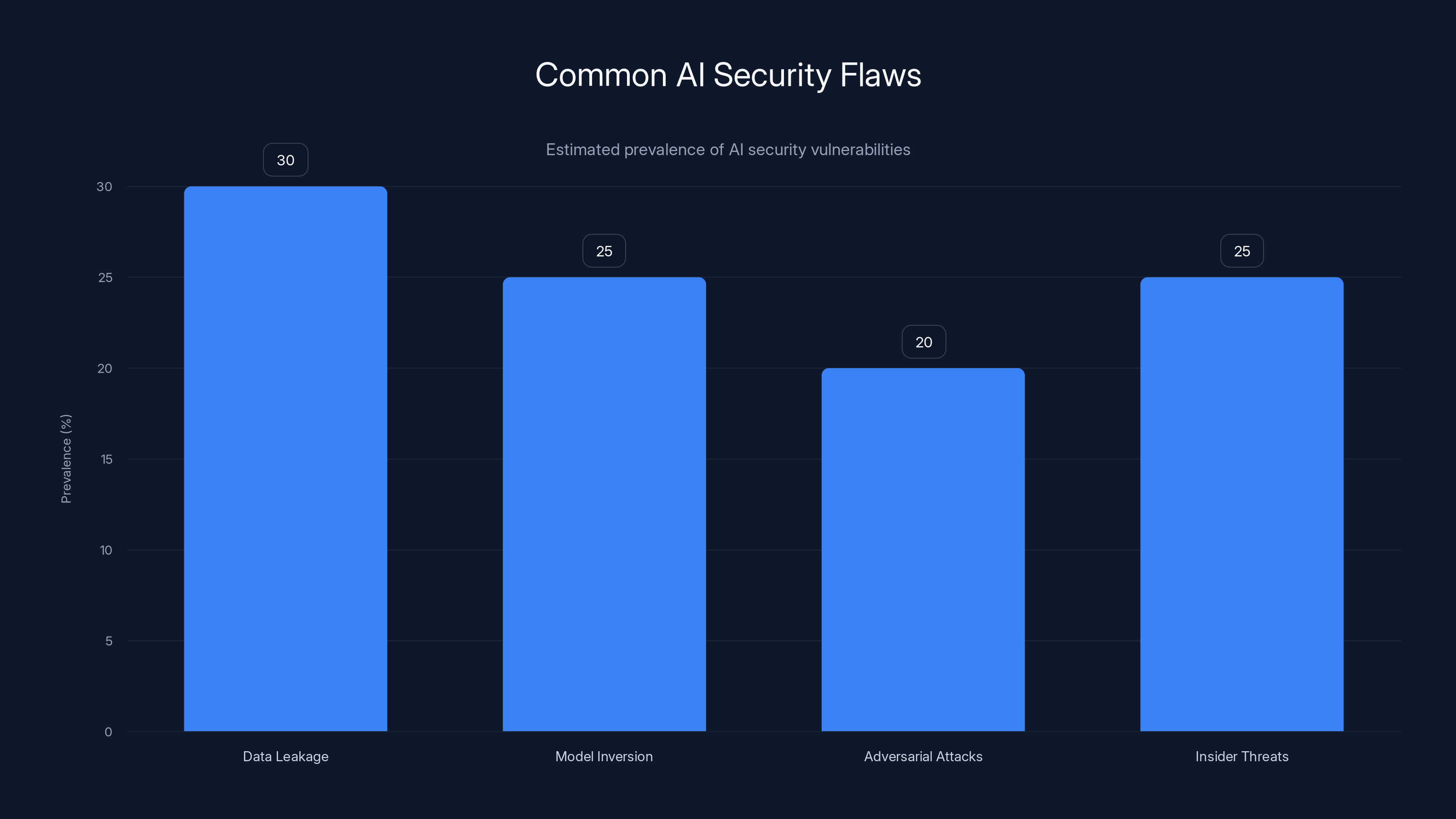

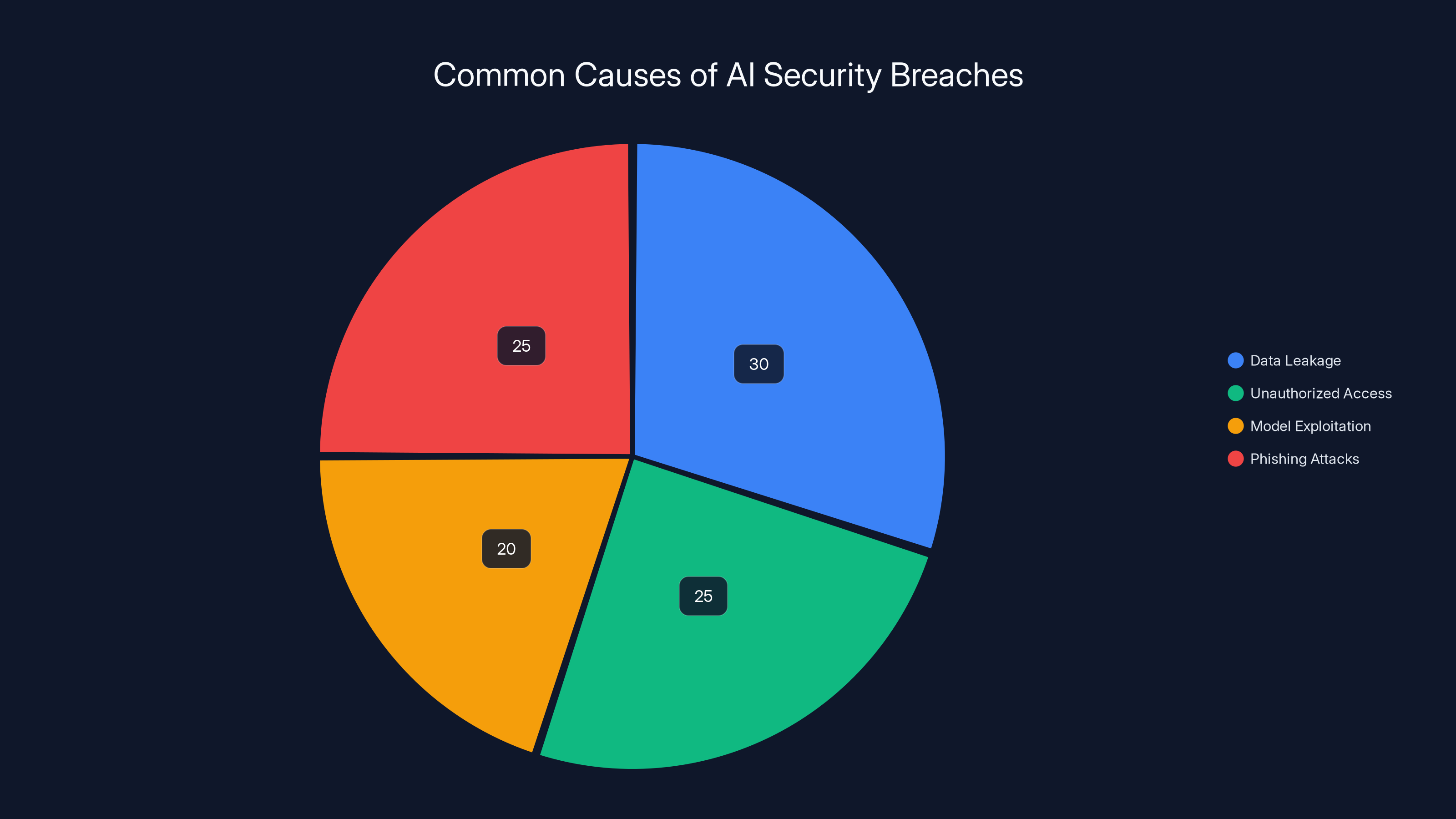

Data leakage and insider threats are estimated to be the most prevalent AI security flaws, each affecting approximately 25-30% of AI systems. (Estimated data)

Introduction

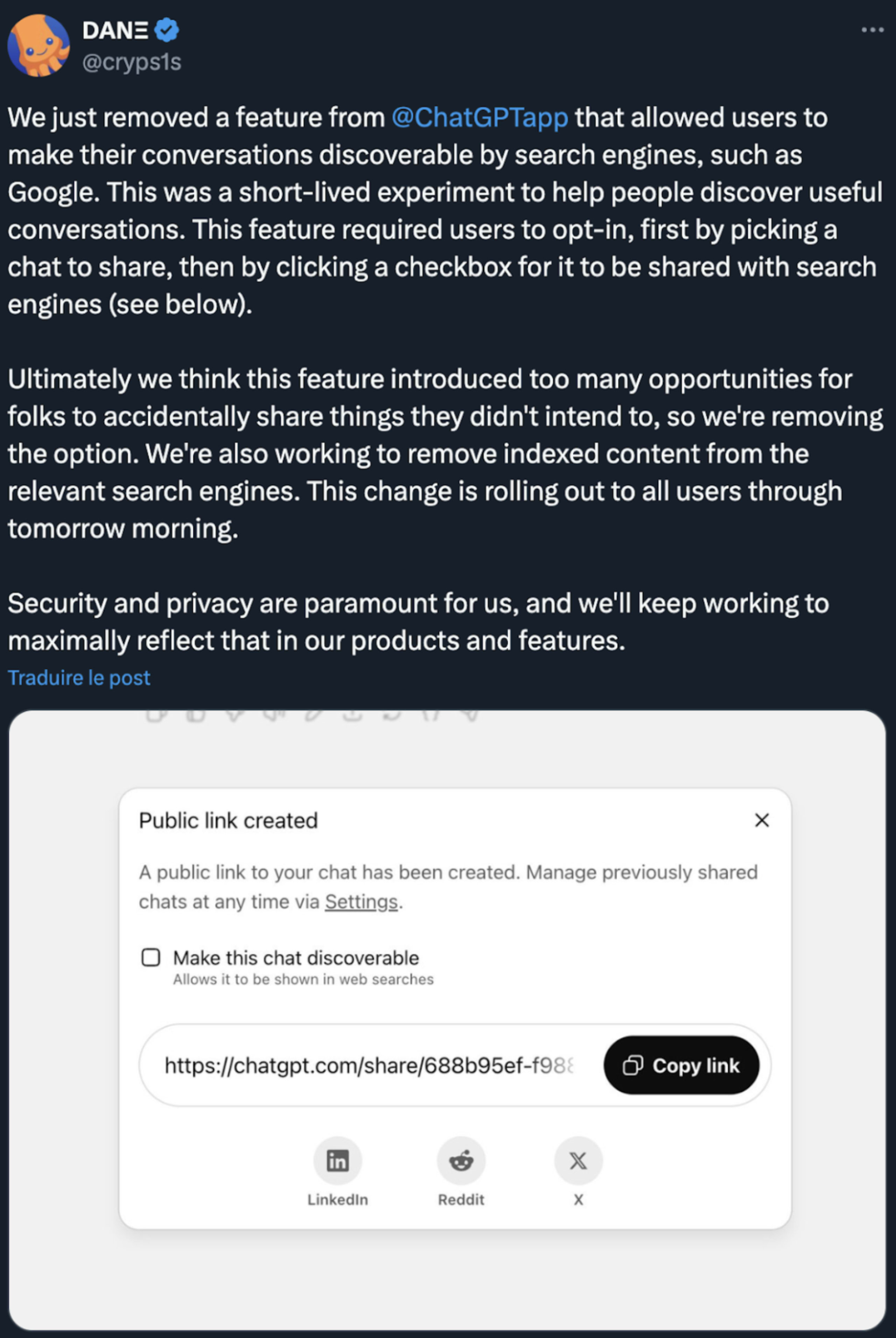

Last month, a security flaw in OpenAI's ChatGPT allowed silent data leakage from conversations, highlighting a hard truth: AI tools are not secure by default. This incident underscores the need for a deeper understanding of AI security and for proactive measures to protect data. According to a Fortune article, similar incidents have raised concerns about the security of AI models.

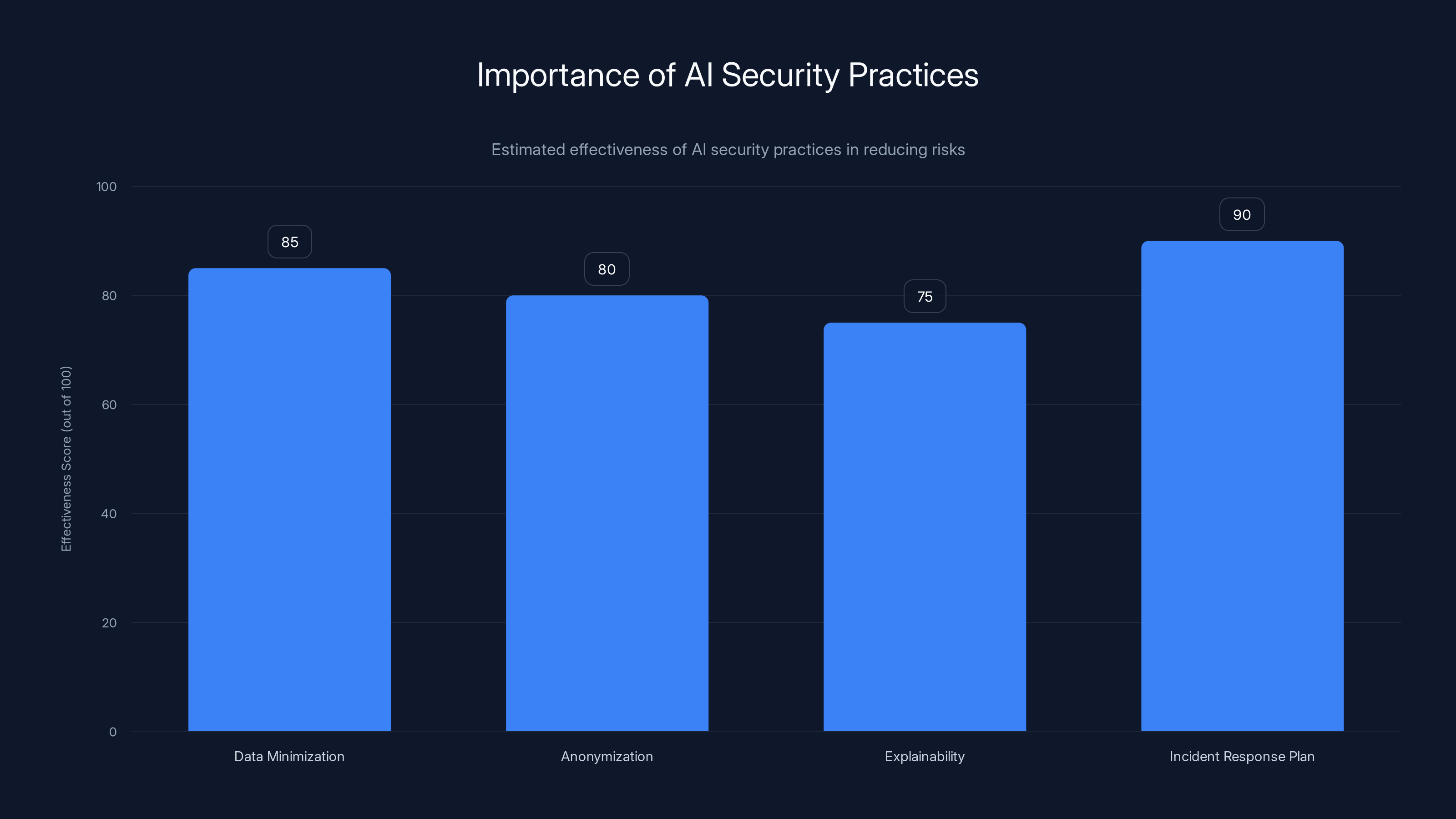

Implementing AI security practices like data minimization and incident response plans can significantly enhance security, with effectiveness scores ranging from 75 to 90. (Estimated data)

The Reality of AI Security

AI tools, while powerful, are not inherently secure. They rely on vast amounts of data and complex algorithms, which makes them susceptible to a range of vulnerabilities. The recent issue with ChatGPT is a stark reminder that even the most advanced AI systems can have security flaws. A Wiz Academy article outlines best practices for addressing these vulnerabilities.

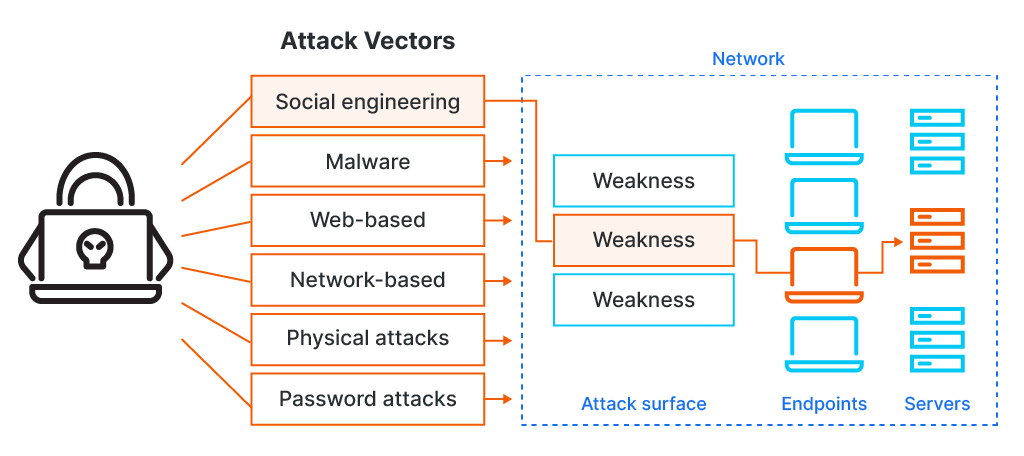

Why AI Tools Are Vulnerable

AI tools process and generate massive amounts of data, often in real time. This data can be sensitive, containing personal or proprietary information. The complexity of AI models also means that vulnerabilities can be hard to detect and patch. The NIST report highlights the challenges in monitoring deployed AI systems.

Common AI Security Flaws

- Data Leakage: AI systems can inadvertently expose sensitive information, for example through model outputs, logs, or integrations that share more data than intended.

- Model Inversion Attacks: Attackers can reconstruct sensitive training or input data by querying a model and analyzing its outputs.

- Adversarial Attacks: Malicious inputs can be crafted to deceive AI systems into making incorrect decisions.

- Insider Threats: Employees with access to AI systems can misuse data or models.

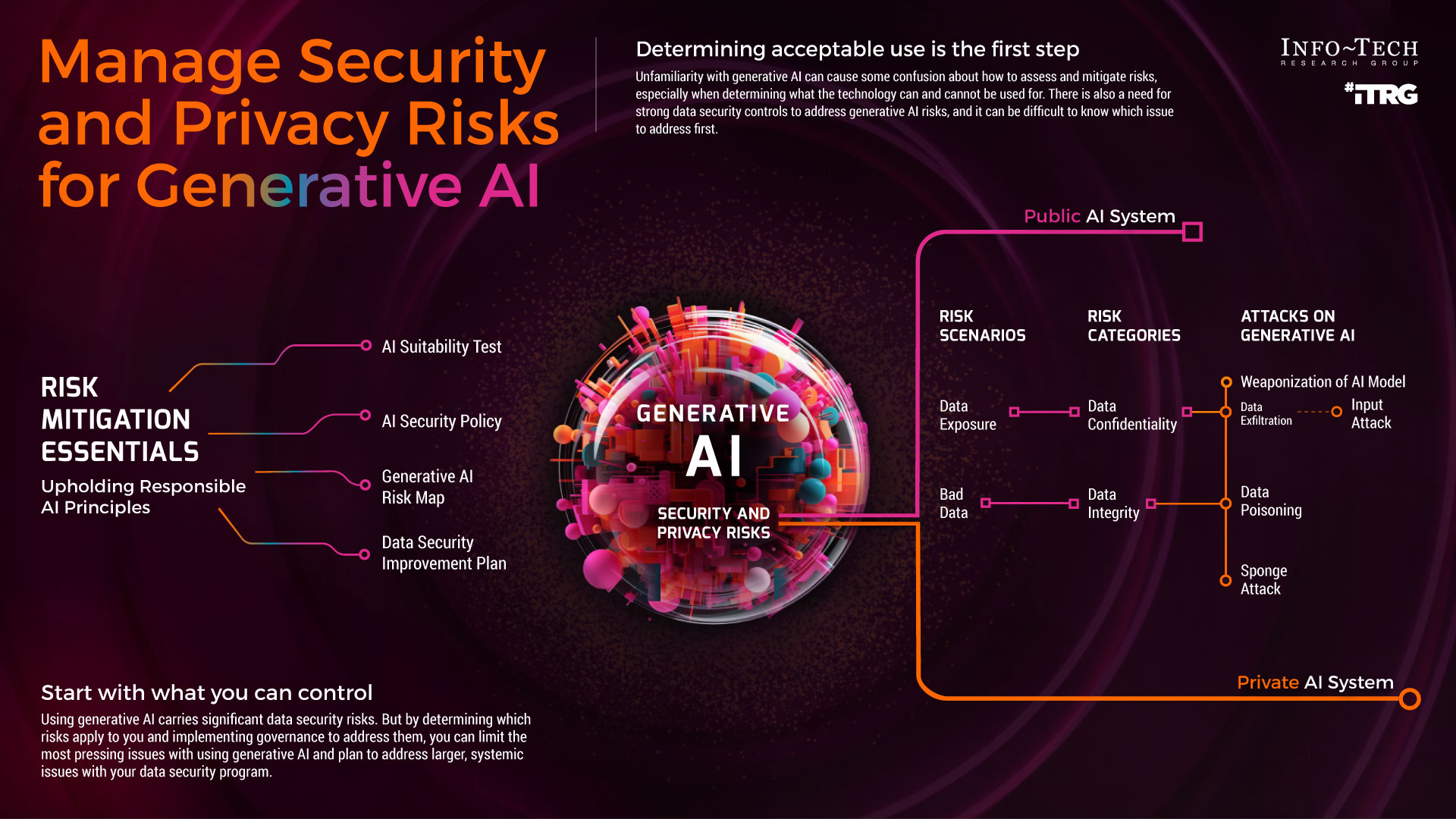

Mitigating AI Security Risks

To protect AI systems, organizations must adopt a multi-faceted approach to security. This includes technical measures, user education, and regular audits. IT Business Net discusses future challenges and opportunities in AI security.

Technical Measures

- Encryption: Ensure data is encrypted both at rest and in transit. The Oracle AI Automation page emphasizes the importance of encryption.

- Access Controls: Implement strict access controls to limit who can interact with AI systems.

- Regular Patching: Keep AI systems updated with the latest security patches. The Help Net Security forecast highlights the importance of regular updates.

- Monitoring and Auditing: Continuously monitor AI systems for unusual activity and conduct regular security audits.
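
As a rough illustration of how access controls and audit logging from the list above might fit together, here is a minimal Python sketch. The role names, the `ROLE_PERMISSIONS` mapping, and the `is_allowed` helper are all hypothetical and are not taken from any particular AI platform; a real deployment would likely load its policy from an identity provider rather than hard-code it.

```python
import logging
from dataclasses import dataclass

logging.basicConfig(level=logging.INFO)
audit_log = logging.getLogger("ai_audit")

# Hypothetical role-to-permission mapping (an assumption for illustration).
ROLE_PERMISSIONS = {
    "analyst": {"query_model"},
    "admin": {"query_model", "export_logs", "update_model"},
}


@dataclass(frozen=True)
class User:
    name: str
    role: str


def is_allowed(user: User, action: str) -> bool:
    """Return True if the user's role grants the action.

    Every decision, allowed or denied, is written to the audit log so
    that unusual access patterns can be reviewed later.
    """
    # Unknown roles get an empty permission set, so they are denied by default.
    allowed = action in ROLE_PERMISSIONS.get(user.role, set())
    audit_log.info(
        "user=%s role=%s action=%s allowed=%s",
        user.name, user.role, action, allowed,
    )
    return allowed


if __name__ == "__main__":
    print(is_allowed(User("dana", "analyst"), "query_model"))
    print(is_allowed(User("dana", "analyst"), "export_logs"))
```

The design choice worth noting is deny-by-default: a role that is missing from the mapping gets no permissions, which tends to fail safer than an allow-by-default policy.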

User Education

Educating users about the potential risks of AI tools is crucial. Users should be aware of what data is being processed and understand the implications of sharing sensitive information with AI systems. Clemson University has initiated educational programs to raise awareness among students and faculty.

Regular Audits

Conducting regular security audits can help identify vulnerabilities and ensure compliance with security best practices. Audits should include both technical assessments and policy reviews. The Financial Content report discusses the importance of secure coding practices.

Data leakage, like the recent ChatGPT incident, accounts for approximately 30% of AI security breaches. (Estimated data)

Best Practices for AI Security

Adopting best practices for AI security can significantly reduce the risk of data breaches and other security incidents.

- Data Minimization: Collect and process only the data necessary for AI tasks.

- Anonymization: Remove personally identifiable information from datasets to protect user privacy.

- Explainability: Make AI models more transparent to understand how decisions are made and identify potential biases.

- Incident Response Plan: Have a plan in place to quickly respond to security incidents. The Information Technology and Innovation Foundation discusses how data rules are shaping AI's future.
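
To make data minimization and anonymization more concrete, the following Python sketch drops unneeded fields and masks obvious identifiers before text is sent to an AI tool. The `minimize` and `redact` helpers and the two regular expressions are illustrative assumptions; the patterns only catch simple email addresses and US-style phone numbers, and real anonymization generally needs much broader coverage.

```python
import re

# Deliberately simple patterns (assumptions for illustration only).
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def minimize(record: dict, allowed_fields: set) -> dict:
    """Keep only the fields the AI task actually needs."""
    return {key: value for key, value in record.items() if key in allowed_fields}


def redact(text: str) -> str:
    """Replace email addresses and phone numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


if __name__ == "__main__":
    ticket = {
        "customer_id": "C-1001",
        "account_number": "0000-1111",
        "message": "Contact jane.doe@example.com or 555-123-4567.",
    }
    # Only the message is needed for the (hypothetical) summarization task.
    payload = minimize(ticket, {"message"})
    payload["message"] = redact(payload["message"])
    print(payload)  # {'message': 'Contact [EMAIL] or [PHONE].'}
```

Running both steps before a prompt leaves the organization means a later leak on the AI provider's side would expose less.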

Case Study: ChatGPT Data Leakage Incident

OpenAI's ChatGPT recently experienced a security flaw that allowed data leakage from user conversations. This incident serves as a lesson in the importance of proactive security measures.

What Happened?

A vulnerability in ChatGPT allowed unauthorized access to user data. The flaw was discovered by security researchers, who demonstrated how data could be silently exfiltrated from conversations. The Microsoft Security Blog discusses how threat actors operationalize AI.

How It Was Resolved

OpenAI quickly patched the vulnerability and implemented additional security measures to prevent similar incidents in the future. The incident highlighted the importance of regular security updates and a prompt response to discovered vulnerabilities.

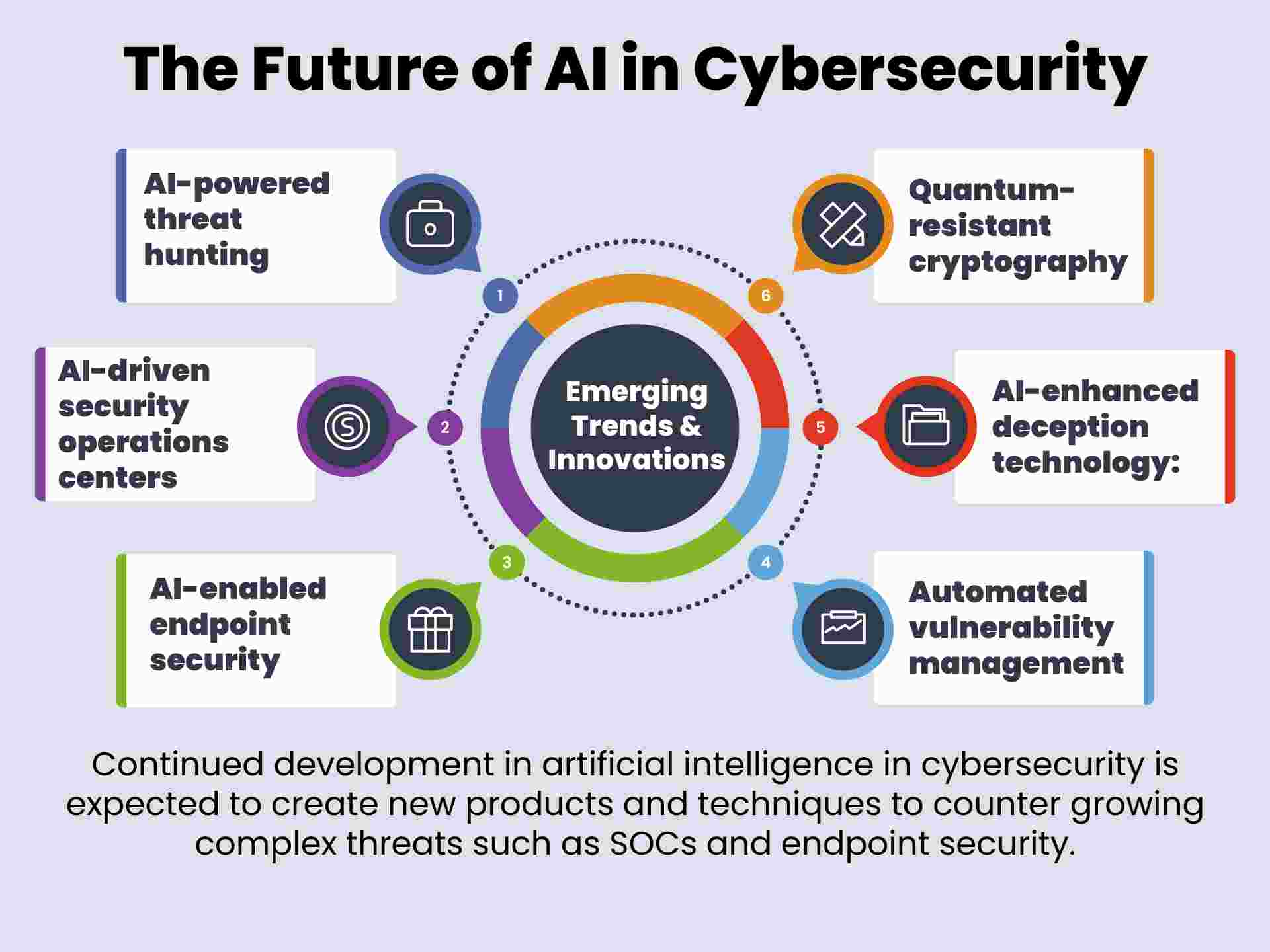

Future Trends in AI Security

As AI continues to evolve, so will the security challenges it presents. However, several trends are emerging that promise to bolster AI security.

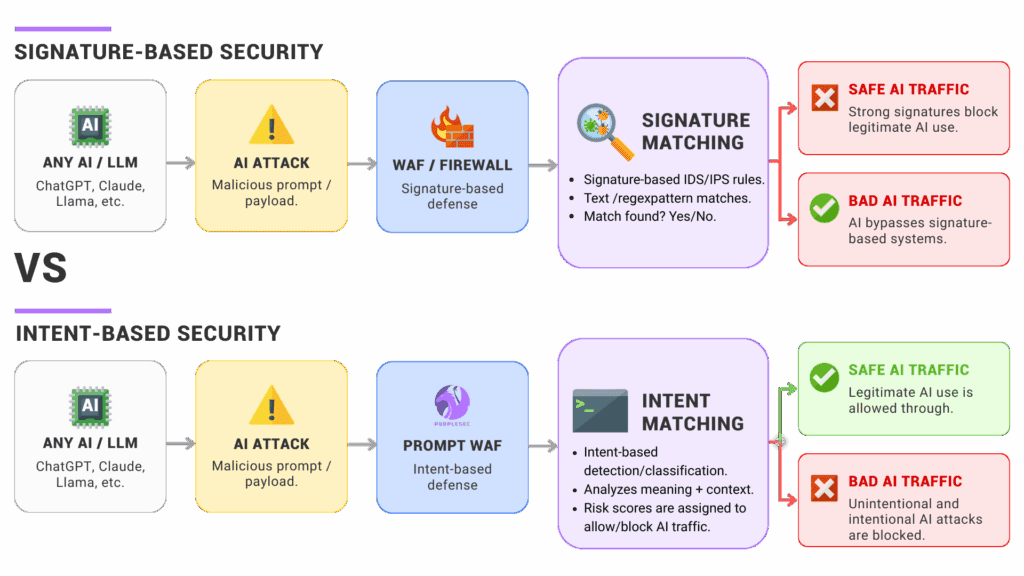

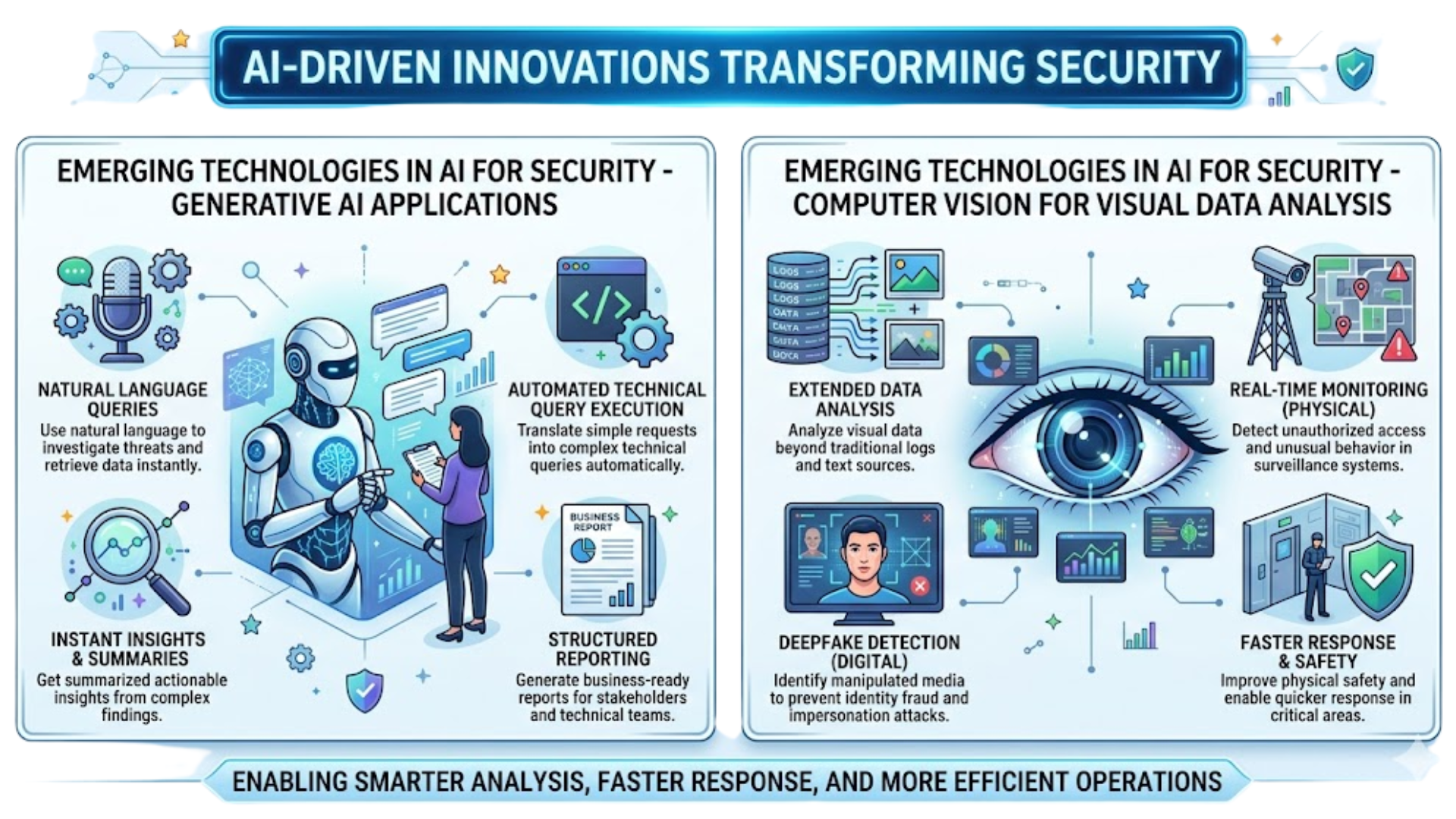

AI-Driven Security Solutions

AI itself is being used to enhance security. AI-driven security solutions can analyze vast amounts of data to detect anomalies and respond to threats in real time. Frontiers in Computer Science discusses the role of AI in enhancing security.
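
The idea of anomaly detection can be sketched with a very simple statistical stand-in. The Python example below is not an AI-driven product. It only flags a new observation when it lies far from a baseline, measured in standard deviations. The function names, the sample request counts, and the default threshold of 3.0 are assumptions chosen for illustration.

```python
import statistics


def fit_baseline(history):
    """Summarize normal behavior as (mean, population standard deviation)."""
    return statistics.mean(history), statistics.pstdev(history)


def is_anomalous(value, baseline, threshold=3.0):
    """Flag a value whose distance from the mean exceeds threshold * std."""
    mean, std = baseline
    if std == 0:
        # A perfectly flat baseline: any different value counts as unusual.
        return value != mean
    return abs(value - mean) / std > threshold


if __name__ == "__main__":
    # Hypothetical requests-per-minute counts observed during normal operation.
    baseline = fit_baseline([12, 15, 11, 14, 13, 12])
    for observed in (14, 95):
        print(observed, is_anomalous(observed, baseline))
```

Production systems typically replace the mean-and-deviation baseline with learned models, but the overall shape (fit on normal behavior, score new events, alert above a threshold) is similar.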

Improved Security Frameworks

Industry standards and frameworks for AI security are being developed, providing guidelines for securing AI systems. These frameworks will help organizations implement best practices and ensure compliance with regulations.

Privacy-Preserving AI

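Techniques such as differential privacy and federated learning are being explored to protect user privacy while still leveraging the power of AI. Differential privacy adds calibrated noise so that results reveal little about any single individual, while federated learning trains models on data that stays on users' devices. Both aim to let AI models learn from data while limiting the exposure of sensitive information.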
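
As a small worked example of differential privacy, the sketch below applies the Laplace mechanism to a counting query. It assumes a query sensitivity of 1 (adding or removing one person changes the count by at most 1), so the noise scale is 1/epsilon. The helper names `laplace_noise` and `private_count` are hypothetical, and the code illustrates the idea rather than providing a hardened implementation.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) noise.

    The difference of two independent exponential samples with the same
    rate 1/scale follows a Laplace distribution with that scale.
    """
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(values, predicate, epsilon, rng=None):
    """Return a noisy count of the values that satisfy the predicate."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for value in values if predicate(value))
    # A counting query has sensitivity 1, so the noise scale is 1/epsilon.
    return true_count + laplace_noise(1.0 / epsilon, rng)


if __name__ == "__main__":
    ages = [23, 35, 41, 29, 52, 38]
    # Smaller epsilon means more noise and stronger privacy.
    print(private_count(ages, lambda age: age > 30, epsilon=0.5))
```

Each run returns a different value near the true count, which is what prevents an observer from inferring whether any one person's record is present.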

Conclusion

AI tools offer powerful capabilities, but they are not secure by default. Ensuring the security of AI systems requires a comprehensive approach that includes technical measures, user education, and ongoing vigilance. As AI plays an increasingly important role in our lives, the focus on securing these systems will only grow.

FAQ

What is AI security?

AI security involves measures and practices to protect artificial intelligence systems from threats and vulnerabilities, ensuring data integrity and privacy.

How does data leakage occur in AI systems?

Data leakage can occur through vulnerabilities in AI systems that allow unauthorized access or data exposure without user knowledge.

What are some best practices for AI security?

Best practices include data encryption, access controls, regular updates, user education, and adopting privacy-preserving techniques.

How can AI be used to enhance security?

AI-driven security solutions can analyze data to detect anomalies and respond to threats, improving overall security.

What are future trends in AI security?

Emerging trends include AI-driven security solutions, improved security frameworks, and privacy-preserving AI techniques.

Why is user education important in AI security?

Educating users about AI risks helps them make informed decisions about data sharing and enhances overall security awareness.

Key Takeaways

- AI tools are not secure by default; vigilance is necessary.

- Data leakage is a significant risk in AI systems.

- Regular updates and patches are essential for AI security.

- User education is crucial for mitigating security breaches.

- Future trends include robust AI security frameworks.


![AI Security: Understanding and Mitigating Risks in the AI Era [2025]](https://tryrunable.com/blog/ai-security-understanding-and-mitigating-risks-in-the-ai-era/image-1-1774978510400.jpg)