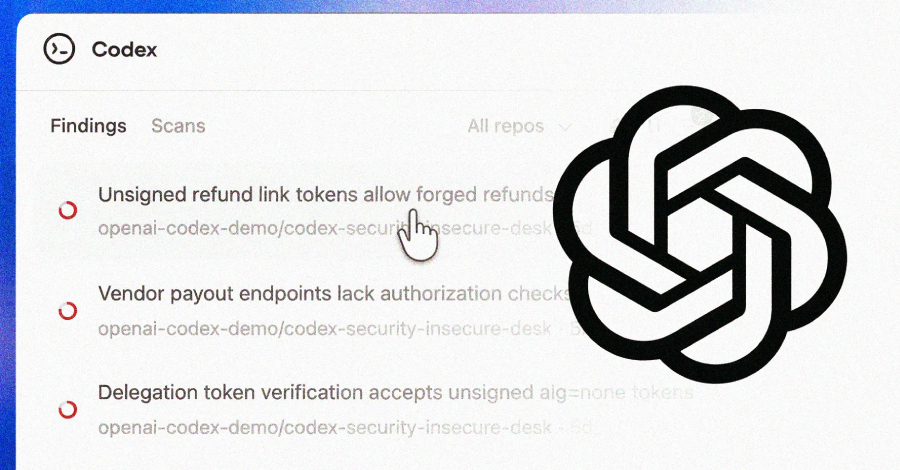

Critical Flaw in OpenAI's Codex: Enterprise Security Risks and Solutions [2025]

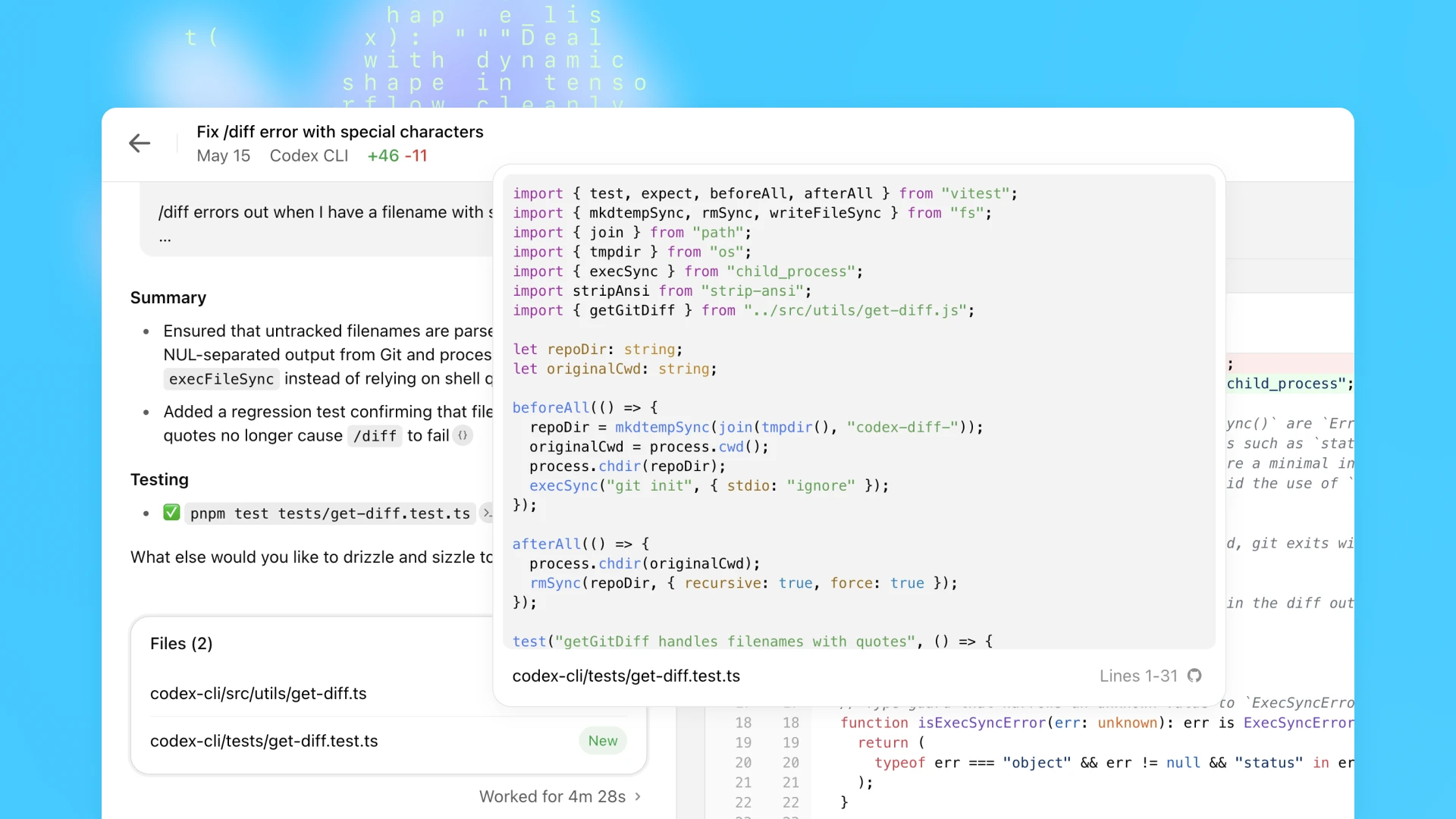

OpenAI's Codex has changed how developers write and review code. A recently disclosed security vulnerability, however, raises serious questions about the safety of AI coding assistants in enterprise environments. According to SiliconANGLE, the vulnerability could allow unauthorized access to sensitive data and systems.

TL;DR

- Critical Security Flaw: A command injection vulnerability in OpenAI's Codex threatened enterprise data security.

- Enterprise Implications: The flaw allowed unauthorized access to sensitive data and systems.

- Mitigation Strategies: Regular updates, robust access controls, and security audits are essential.

- Future Trends: AI security will involve more advanced threat detection and response capabilities.

- Bottom Line: Staying informed and proactive is crucial for leveraging AI tools securely.

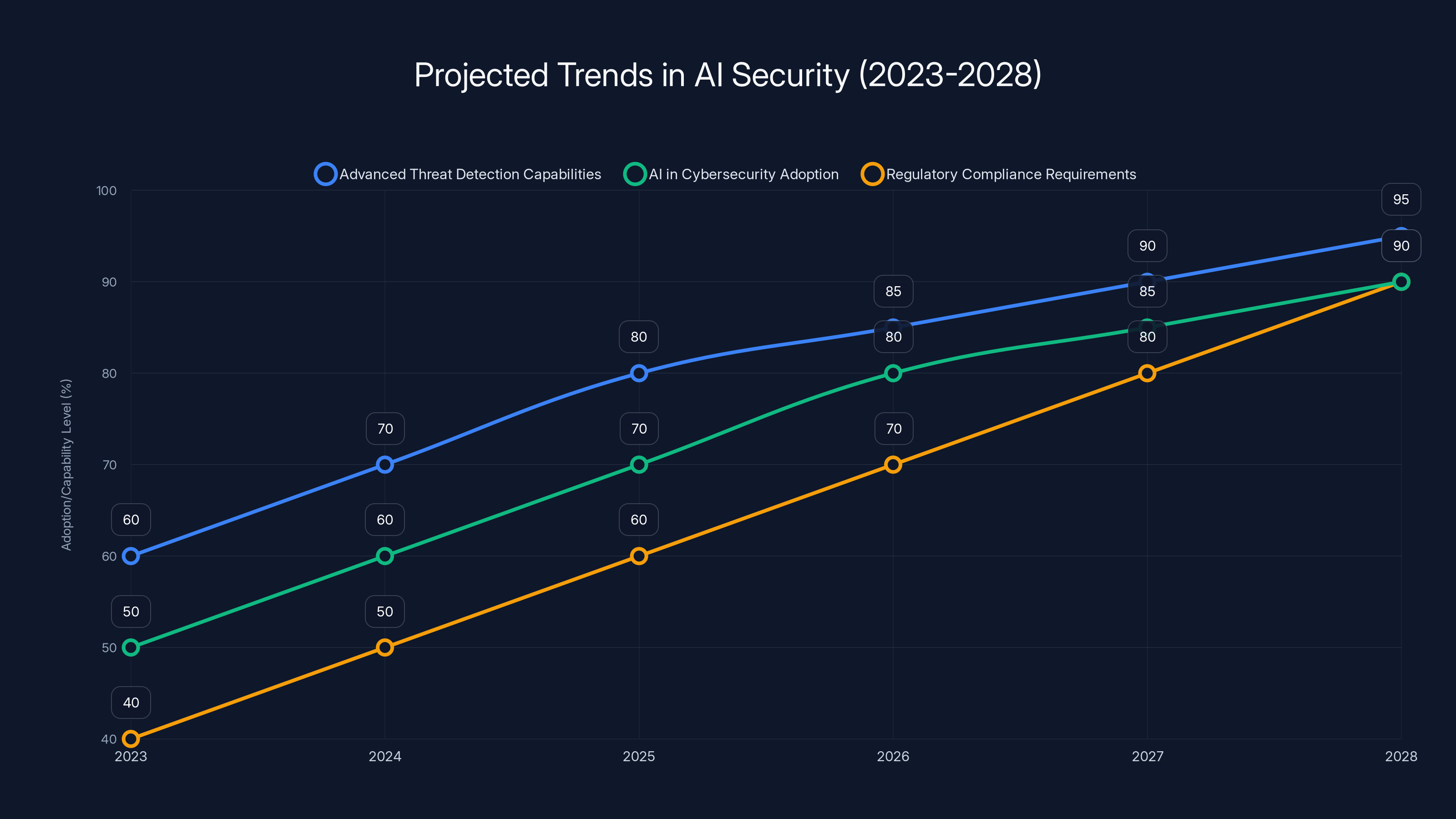

Projected trends show significant growth in AI-driven threat detection and cybersecurity adoption, alongside increasing regulatory compliance requirements. Estimated data.

Understanding OpenAI's Codex

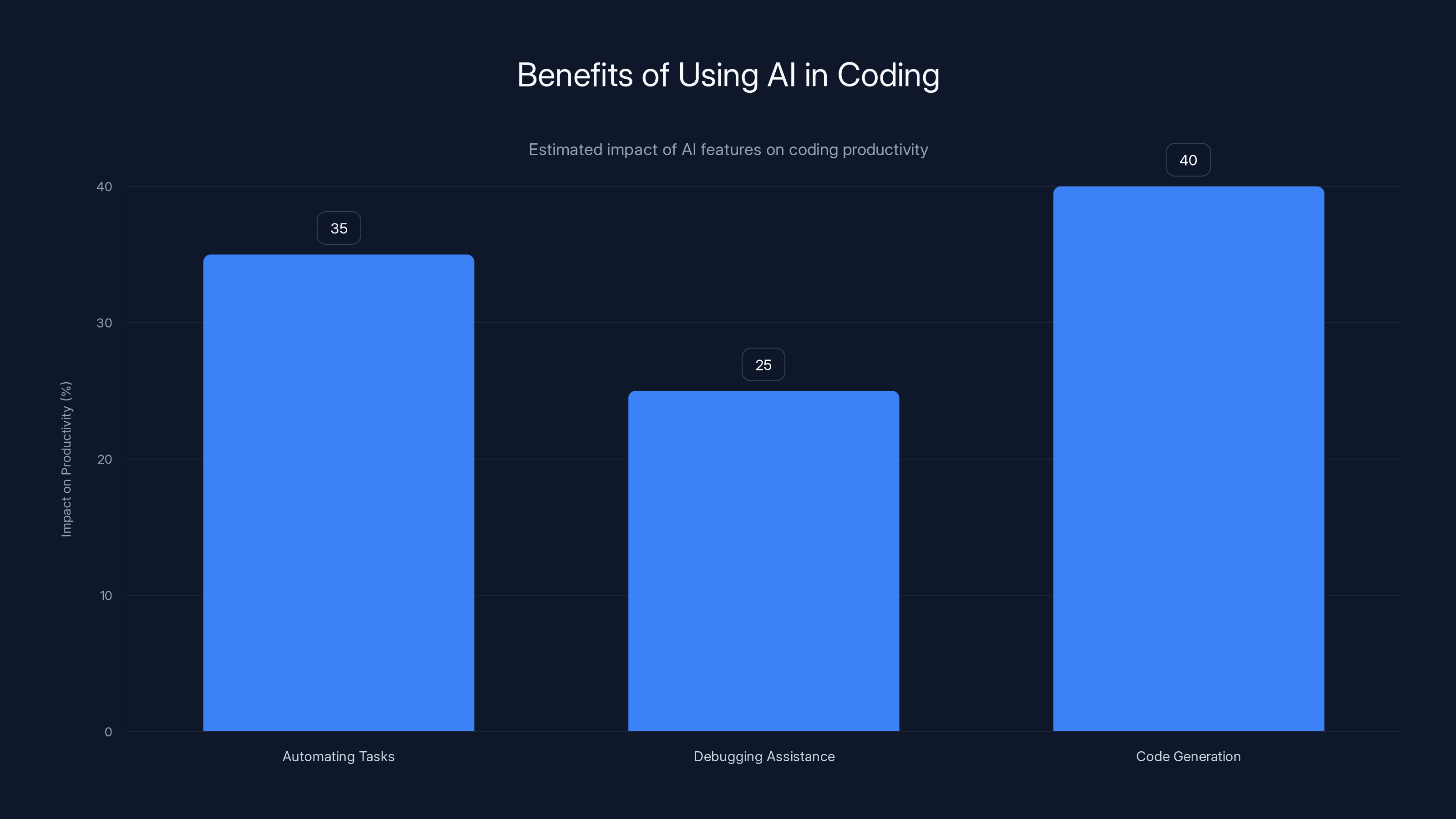

OpenAI's Codex is an AI-powered tool designed to assist developers by generating code based on natural language prompts. It's a descendant of the GPT-3 model, tailored specifically for coding tasks. Codex can interpret simple instructions, automate repetitive tasks, and even debug code, making it a valuable asset for developers.

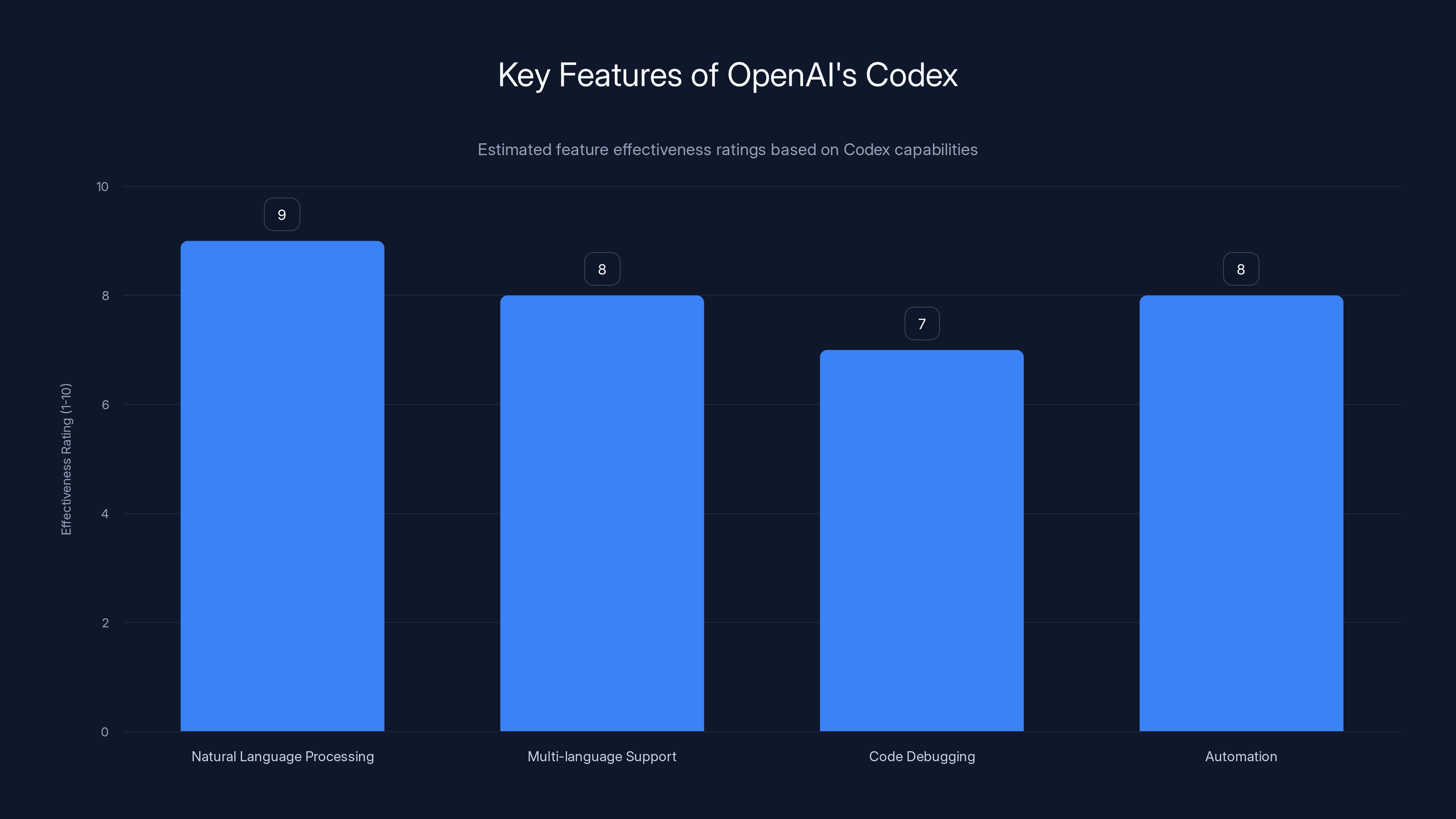

Key Features of Codex

- Natural Language Processing: Codex transforms natural language prompts into executable code.

- Multi-language Support: It supports a variety of programming languages, including Python, JavaScript, and Ruby.

- Code Debugging: Provides suggestions for fixing coding errors.

- Automation: Automates repetitive coding tasks, increasing developer productivity.

However, despite its impressive capabilities, Codex's reliance on AI introduces unique security challenges.

AI in coding significantly boosts productivity by automating tasks (35%), aiding in debugging (25%), and generating code (40%). Estimated data.

The Security Flaw: A Closer Look

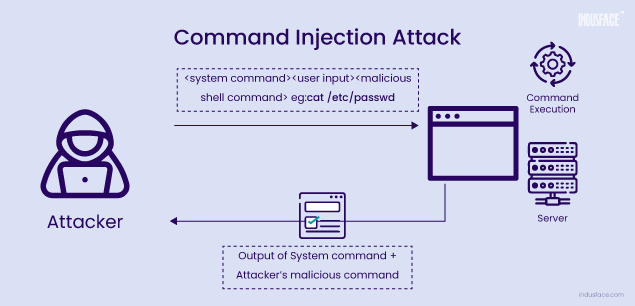

The vulnerability discovered in Codex was a command injection flaw, a critical class of bug that lets attackers execute arbitrary commands on the underlying system. A flaw of this kind can expose sensitive data, manipulate databases, and compromise entire enterprise networks. As reported by The Hacker News, OpenAI has addressed the vulnerability with a patch.

How Command Injection Works

Command injection occurs when an application passes unsanitized input directly to a system shell. If Codex's generated code incorporates unsanitized user input, it can inadvertently execute harmful commands.

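```python
import os

def execute_command(user_input):
    # Vulnerable: the string is handed to the system shell unchanged.
    os.system(user_input)

# Attacker-controlled input runs as a shell command.
# Shown for illustration only; do not run this call.
execute_command("rm -rf /important-data")
```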

In the example above, whatever string reaches execute_command runs as a shell command, so unsanitized input can lead to catastrophic data loss.
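One common way to close this kind of hole is to avoid the shell entirely. The sketch below is an illustration, not a description of OpenAI's actual fix: `run_command` and `ALLOWED_PROGRAMS` are hypothetical names, and the allowlist assumes a POSIX system where `echo` and `ls` are available.

```python
import subprocess

# Hypothetical allowlist: the only programs this helper agrees to run.
ALLOWED_PROGRAMS = {"echo", "ls"}

def run_command(program, args):
    """Run an allowlisted program with arguments, without invoking a shell."""
    if program not in ALLOWED_PROGRAMS:
        raise ValueError(f"program not allowed: {program!r}")
    # Passing a list with shell=False (the default) means metacharacters such
    # as ';' or '&&' arrive as literal text instead of being interpreted.
    result = subprocess.run(
        [program, *args],
        capture_output=True,
        text=True,
        check=True,   # raise CalledProcessError on a non-zero exit code
        timeout=5,    # raise TimeoutExpired if the program hangs
    )
    return result.stdout
```

Called as `run_command("echo", ["hello; rm -rf /important-data"])`, the semicolon and everything after it reach `echo` as plain text rather than running as a second command. Arguments that begin with `-` can still be read as options by the target program, so an allowlist of programs does not replace validation of the arguments themselves.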

Real-World Implications

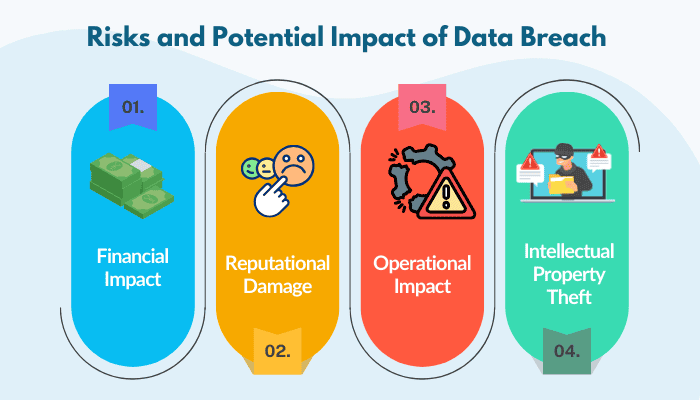

In an enterprise setting, such vulnerabilities can lead to:

- Data Breaches: Unauthorized access to sensitive information.

- Service Disruptions: Compromised systems can lead to downtime.

- Financial Losses: Data breaches can result in significant financial penalties and loss of customer trust.

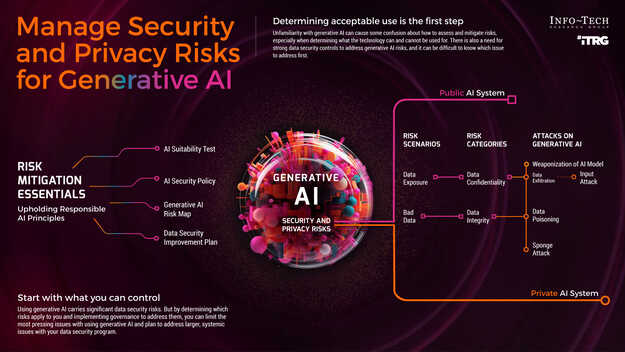

Mitigation Strategies

Addressing these vulnerabilities requires a proactive approach to security. Here are essential strategies for mitigating risks associated with AI development tools like Codex:

1. Regular Updates and Patches

Ensure that AI tools are regularly updated to patch known vulnerabilities. OpenAI has fixed the Codex flaw, so staying updated is crucial.

2. Implement Strong Access Controls

Limit who can access and execute AI-generated code within your organization. Use role-based access controls to minimize risks.
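As a rough illustration of role-based access control, the sketch below maps actions on AI-generated code to the roles allowed to perform them. The role names, action names, and the `is_allowed` helper are all hypothetical; a real deployment would normally rely on the organization's identity provider rather than a hard-coded table.

```python
from enum import Enum

class Role(Enum):
    VIEWER = "viewer"
    DEVELOPER = "developer"
    RELEASE_MANAGER = "release_manager"

# Hypothetical policy: which roles may perform which actions.
PERMISSIONS = {
    "view_generated_code": {Role.VIEWER, Role.DEVELOPER, Role.RELEASE_MANAGER},
    "run_in_sandbox": {Role.DEVELOPER, Role.RELEASE_MANAGER},
    "deploy_to_production": {Role.RELEASE_MANAGER},
}

def is_allowed(role, action):
    """Deny by default: an unknown action is refused for every role."""
    return role in PERMISSIONS.get(action, set())
```

The deny-by-default lookup is the important design choice here: an action that nobody remembered to add to the policy is blocked rather than silently permitted.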

3. Conduct Security Audits

Regular security audits can help identify potential vulnerabilities in your systems and ensure compliance with security standards.

4. Train Developers on Secure Coding Practices

Educate your development team on the importance of validating and sanitizing inputs, even when using AI-generated code.

5. Use AI Responsibly

Integrate AI tools like Codex with caution. Test their outputs rigorously before deploying them in production environments.
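Part of that testing can be automated. The sketch below uses Python's standard `ast` module to flag calls in generated code that deserve a human look before deployment. The `RISKY_CALLS` list and the function names are assumptions chosen for illustration; the list is far from exhaustive, and a check like this complements manual review and established static analysis tools rather than replacing them.

```python
import ast

# Hypothetical deny-list of call names worth a manual review; not exhaustive.
RISKY_CALLS = {"os.system", "os.popen", "eval", "exec"}

def dotted_name(node):
    """Return 'a.b.c' for Name/Attribute chains, or None for anything else."""
    parts = []
    while isinstance(node, ast.Attribute):
        parts.append(node.attr)
        node = node.value
    if isinstance(node, ast.Name):
        parts.append(node.id)
        return ".".join(reversed(parts))
    return None

def find_risky_calls(source):
    """Return (line, description) pairs for calls that deserve manual review."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        name = dotted_name(node.func)
        if name in RISKY_CALLS:
            findings.append((node.lineno, name))
        # Flag any call that passes a literal shell=True keyword argument.
        for kw in node.keywords:
            if (kw.arg == "shell"
                    and isinstance(kw.value, ast.Constant)
                    and kw.value.value is True):
                findings.append((node.lineno, f"{name or '<call>'} with shell=True"))
    return findings
```

Run against the vulnerable `execute_command` example from earlier in this article, the scan reports the `os.system` call and its line number. It only matches names as written, so aliased imports such as `from os import system` would slip past this simple version.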

Codex excels in transforming natural language into code, with high effectiveness across its key features. Estimated data based on typical AI tool performance.

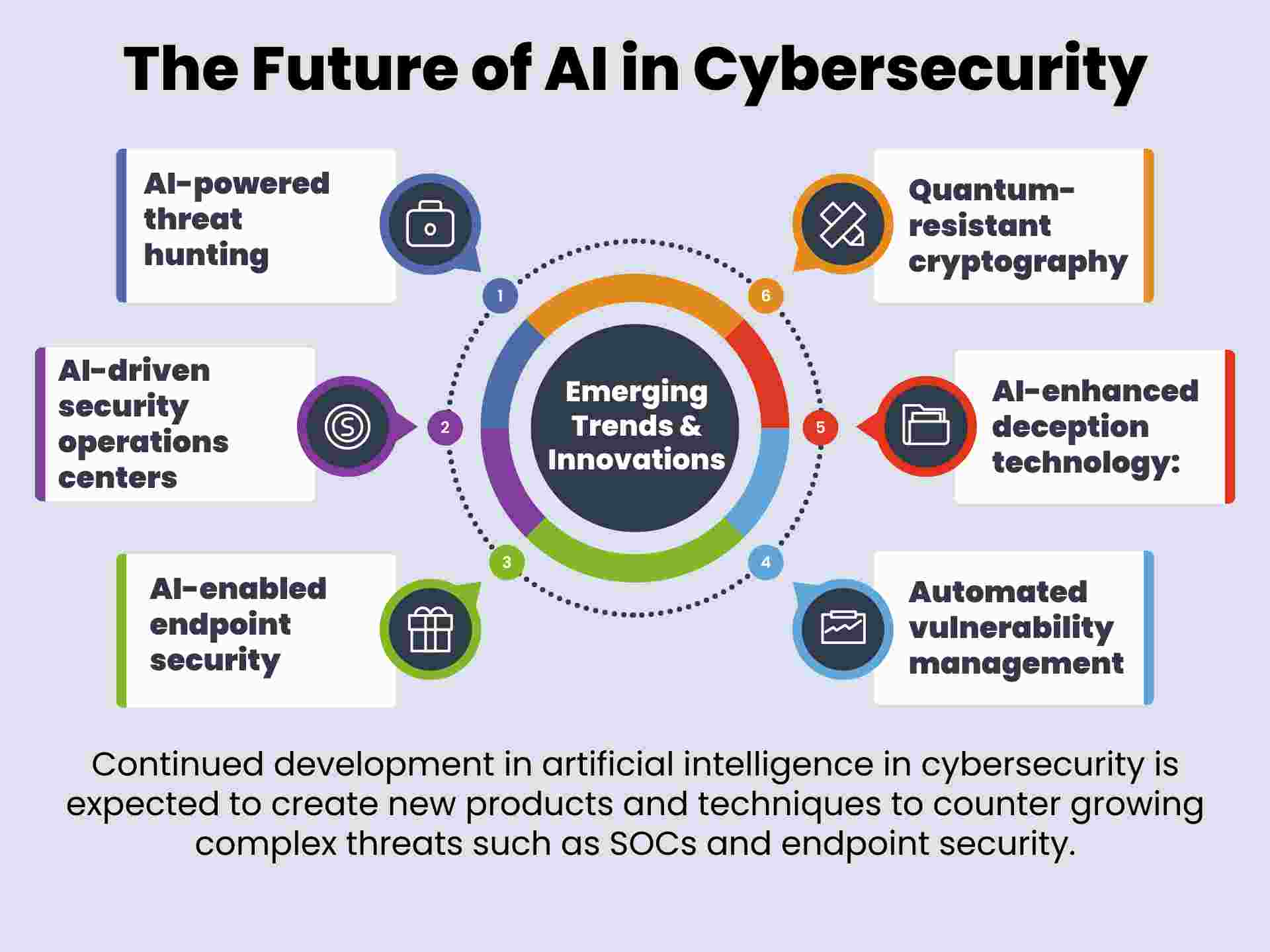

Future Trends in AI Security

As AI tools become more integrated into enterprise workflows, security measures must evolve. Here are some anticipated trends:

Advanced Threat Detection

AI-driven security systems will become more adept at detecting unusual patterns and preventing potential breaches before they occur.

AI in Cybersecurity

AI will not only be a target but also a tool in cybersecurity, helping to automate threat detection and response processes. According to AWS, integrating AI with cybersecurity measures can significantly enhance incident response capabilities.

Regulatory Compliance

Expect stricter regulations around AI use, particularly regarding data privacy and security. Enterprises must stay informed about compliance requirements.

Practical Implementation Guides

Setting Up Secure Development Environments

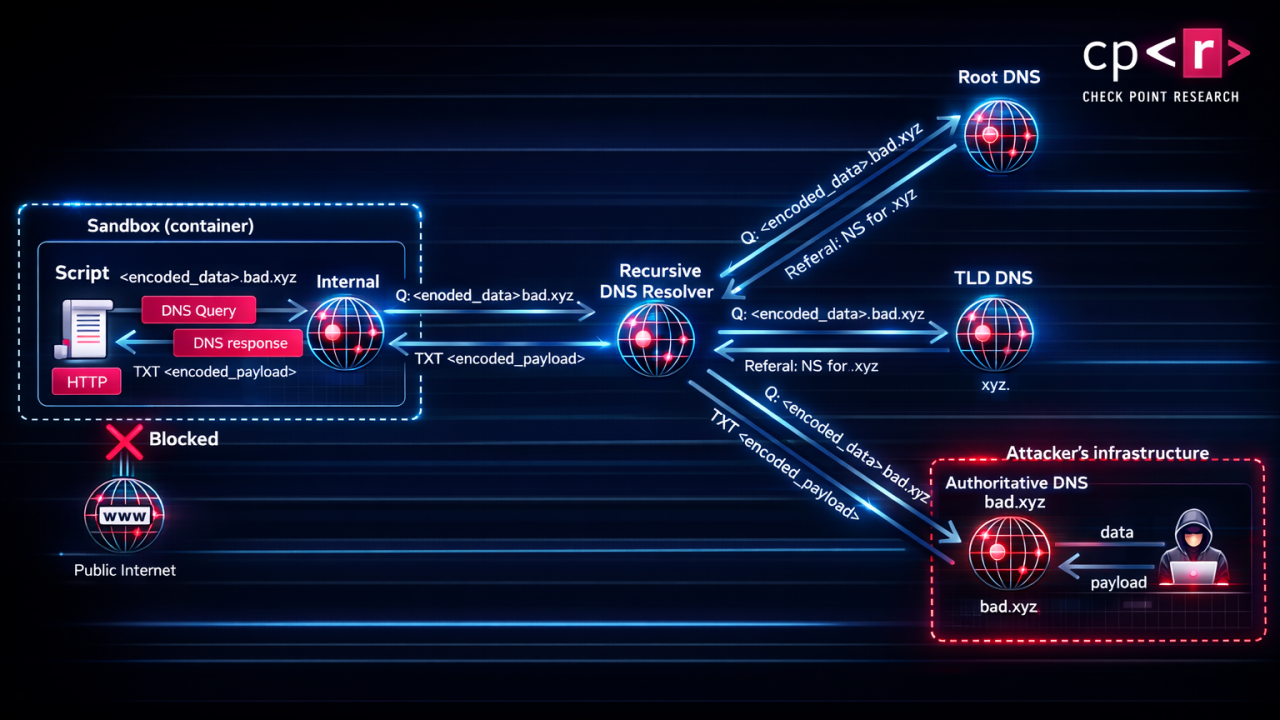

- Isolate AI Tools: Run AI tools in isolated environments to limit potential damage from vulnerabilities.

- Use Virtual Machines: Implement virtual machines to create sandboxed environments for testing AI-generated code.

- Monitor Network Traffic: Use network monitoring tools to detect suspicious activities related to AI tool usage.
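Inside such an isolated machine, generated snippets can also be run with a few extra guardrails. The following is a minimal sketch, assuming the snippet is Python and that a virtual machine or container already provides the real isolation boundary. `run_untrusted_snippet` and `SAFE_ENV_KEYS` are hypothetical names, and this code is not a sandbox on its own: the child process can still read files and open network connections.

```python
import os
import subprocess
import sys
import tempfile
from pathlib import Path

# Only these environment variables are passed on, so API keys and other
# secrets in the parent environment are not visible to the snippet.
SAFE_ENV_KEYS = ("PATH", "SYSTEMROOT", "LD_LIBRARY_PATH")

def run_untrusted_snippet(source, timeout_seconds=5):
    """Run a snippet in a separate interpreter inside a throwaway directory."""
    child_env = {k: os.environ[k] for k in SAFE_ENV_KEYS if k in os.environ}
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "snippet.py"
        script.write_text(source, encoding="utf-8")
        # -I starts the interpreter in isolated mode: it ignores PYTHON*
        # environment variables and the user site-packages directory.
        completed = subprocess.run(
            [sys.executable, "-I", str(script)],
            cwd=workdir,
            env=child_env,
            capture_output=True,
            text=True,
            timeout=timeout_seconds,  # raises TimeoutExpired on runaway code
        )
    return completed.returncode, completed.stdout, completed.stderr
```

The timeout, the stripped environment, and the temporary working directory each limit a different kind of accident, but none of them stops deliberately malicious code. That job belongs to the virtual machine and the network monitoring described above.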

Best Practices for AI Tool Integration

- Conduct Risk Assessments: Evaluate the risks associated with each AI tool before integration.

- Start with Pilot Programs: Test AI tools in small-scale deployments before full integration.

- Develop Incident Response Plans: Prepare for potential security incidents involving AI tools.

Common Pitfalls and Solutions

Overreliance on AI

Pitfall: Assuming AI-generated code is inherently secure.

Solution: Always review and test AI-generated code manually before deployment.

Inadequate Input Validation

Pitfall: Failing to validate user inputs in AI-generated code.

Solution: Implement input validation and sanitization techniques rigorously.
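Allowlist validation is usually more robust than trying to strip out dangerous characters. The sketch below accepts a value only if it matches a narrow pattern and rejects everything else. The pattern and the `validate_name` helper are assumptions for illustration; the right rule depends on what the input is supposed to be.

```python
import re

# Hypothetical rule: 1 to 64 characters drawn from letters, digits, '.', '_'
# and '-', starting with a letter or digit so the value cannot look like a
# command-line option.
_NAME_PATTERN = re.compile(r"[A-Za-z0-9][A-Za-z0-9._-]{0,63}")

def validate_name(value):
    """Return value unchanged if it matches the allowlist pattern, else raise."""
    if not isinstance(value, str) or not _NAME_PATTERN.fullmatch(value):
        raise ValueError(f"invalid name: {value!r}")
    return value
```

With this rule, a value such as `report-2025.txt` passes, while `data; rm -rf /` and `$(whoami)` are rejected before they can reach a shell or a file path.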

Future Recommendations

- Invest in AI Security Training: Equip your team with the skills needed to identify and mitigate AI-related security risks.

- Collaborate with AI Providers: Work closely with AI tool providers like OpenAI to stay informed about security updates and best practices.

- Stay Informed: Keep abreast of the latest developments in AI security to adapt your strategies accordingly.

Conclusion

AI tools like OpenAI's Codex hold great promise for enhancing developer productivity, but they also introduce new security challenges. By understanding these risks and implementing robust security measures, enterprises can harness the power of AI safely and effectively.


FAQ

What is OpenAI's Codex?

OpenAI's Codex is an AI-powered tool that assists developers by generating code from natural language prompts, improving coding efficiency.

How does command injection affect security?

Command injection allows attackers to execute harmful commands within a system, potentially leading to unauthorized access and data breaches.

What are the benefits of using AI in coding?

AI in coding enhances productivity by automating repetitive tasks, providing debugging assistance, and generating code from simple prompts.

How can enterprises mitigate AI-related security risks?

Enterprises can mitigate risks by keeping AI tools updated, implementing strong access controls, conducting security audits, and training developers on secure coding practices.

What are future trends in AI security?

Future trends include advanced threat detection, increased use of AI in cybersecurity, and stricter regulatory compliance requirements.

Why is input validation important in AI-generated code?

Input validation ensures that user inputs do not contain harmful commands, preventing potential command injections and enhancing security.

Key Takeaways

- OpenAI's Codex vulnerability exposed enterprises to data breaches.

- Command injection flaws allow unauthorized command execution.

- Regular updates and security audits are crucial for protection.

- AI tools require robust access controls and input validation.

- AI security involves advanced threat detection and response.
