Anthropic and OpenAI Unveil Free Tools That Challenge SAST's Limitations [2025]

Last month, Anthropic and OpenAI shook the cybersecurity world by releasing free tools that expose a structural weakness in traditional Static Application Security Testing (SAST). The tools, Anthropic's Claude Code Security and OpenAI's Codex Security, use large language model (LLM) reasoning to identify vulnerabilities that SAST tools typically miss.

TL;DR

- New Tools Unveiled: Anthropic and OpenAI launched free tools that reveal SAST's blind spots.

- LLM vs. Pattern Matching: Unlike SAST's pattern matching, these tools use LLM reasoning to detect complex vulnerabilities.

- Rapid Improvement: Competitive pressure between the two companies is likely to drive rapid gains in detection quality.

- Not a Replacement: These tools complement, not replace, existing security stacks.

- Future of Security: Expect more intelligent, adaptable, and comprehensive security solutions.

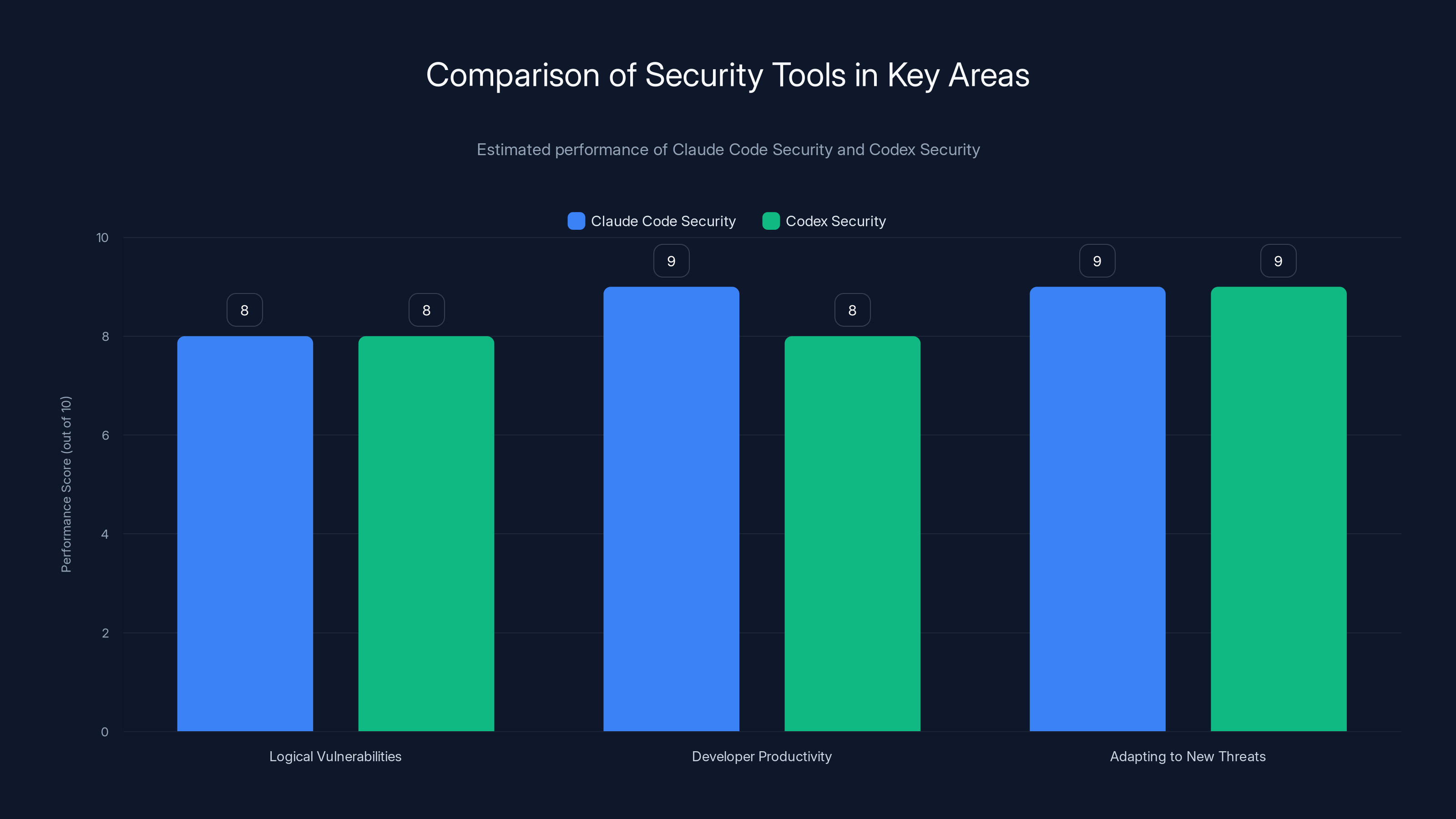

Both Claude Code Security and Codex Security perform well across all key areas, with a slight edge in enhancing developer productivity for Claude Code Security. Estimated data.

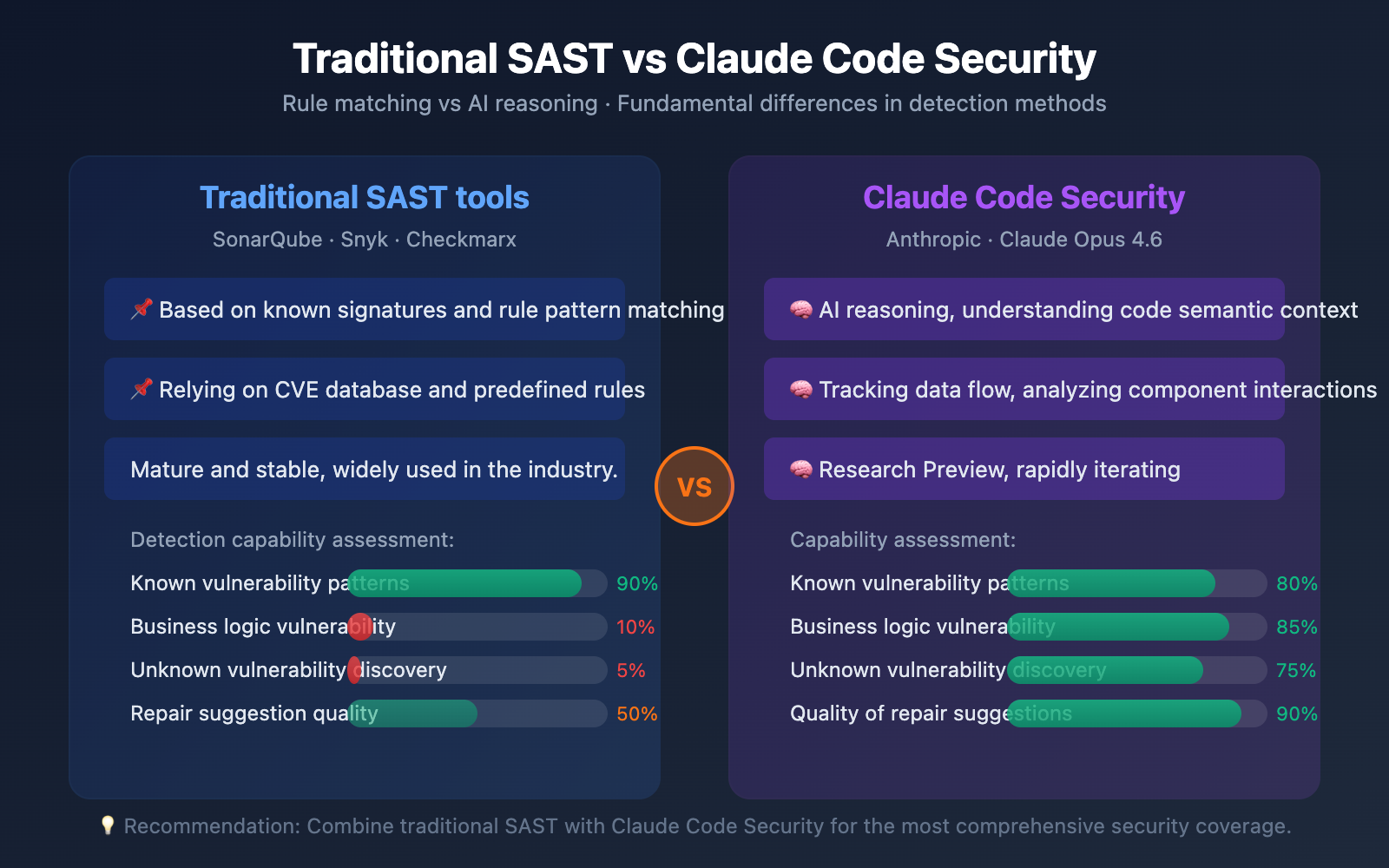

The Problem with SAST

Static Application Security Testing (SAST) has been a staple of software security for years. By analyzing source code, bytecode, or binaries, SAST aims to identify vulnerabilities early in the development process. Traditionally, SAST tools rely on pattern matching: they scan code for known signatures tied to vulnerabilities such as SQL injection or cross-site scripting.
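
To make the pattern-matching idea concrete, here is a deliberately tiny sketch of a signature-based scanner. It is not how any particular SAST product works; the rule names, the regular expressions, and the `SAMPLE` snippet are all invented for illustration.

```python
import re

# Hypothetical signature rules: each label maps to a regex applied line by line.
RULES = {
    "sql-injection": re.compile(r"execute\(\s*[\"'].*[\"']\s*\+"),
    "hardcoded-secret": re.compile(r"(?i)(password|api_key)\s*=\s*[\"'][^\"']+[\"']"),
}


def scan(source: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_label) for every line that matches a rule."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for label, pattern in RULES.items():
            if pattern.search(line):
                findings.append((lineno, label))
    return findings


# Invented sample: lines 1 and 2 match a signature, lines 3 and 4 do not.
SAMPLE = '''cursor.execute("SELECT * FROM users WHERE id = " + user_id)
password = "hunter2"
if token.user_id:  # never checks expiry: a logic flaw with no signature
    grant_access()
'''

if __name__ == "__main__":
    for lineno, label in scan(SAMPLE):
        print(f"line {lineno}: {label}")
```

The scanner flags the string-concatenated query and the hardcoded credential, but it has nothing to say about the access check on line 3, because no rule describes it.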

The Structural Blind Spot: While effective at finding well-documented vulnerabilities, SAST struggles with complex, context-dependent issues. It cannot fully reason about code semantics, so it often misses logic errors or API misuse that could lead to security breaches.

Example: Consider an application that handles user authentication tokens incorrectly, for instance by accepting a token without checking whether it has expired. A traditional SAST tool is likely to miss this, because nothing in the code matches a known vulnerable pattern.
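
One way such a token-handling mistake could look is sketched below. The `Token` class and both function names are hypothetical and not taken from any real codebase. Each line of the flawed version is unremarkable on its own, so the flaw is visible only to a reviewer who asks what the check is supposed to guarantee.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass
class Token:
    user_id: str
    expires_at: float  # Unix timestamp after which the token is invalid


def is_authorized_flawed(token: Optional[Token]) -> bool:
    # Logic flaw: confirms a token is present but never checks expiry.
    return token is not None and bool(token.user_id)


def is_authorized(token: Optional[Token], now: Optional[float] = None) -> bool:
    # Corrected check: the token must be present and not yet expired.
    if token is None or not token.user_id:
        return False
    current = time.time() if now is None else now
    return current < token.expires_at


if __name__ == "__main__":
    expired = Token(user_id="alice", expires_at=100.0)
    print(is_authorized_flawed(expired))      # accepts the expired token
    print(is_authorized(expired, now=200.0))  # rejects it
```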

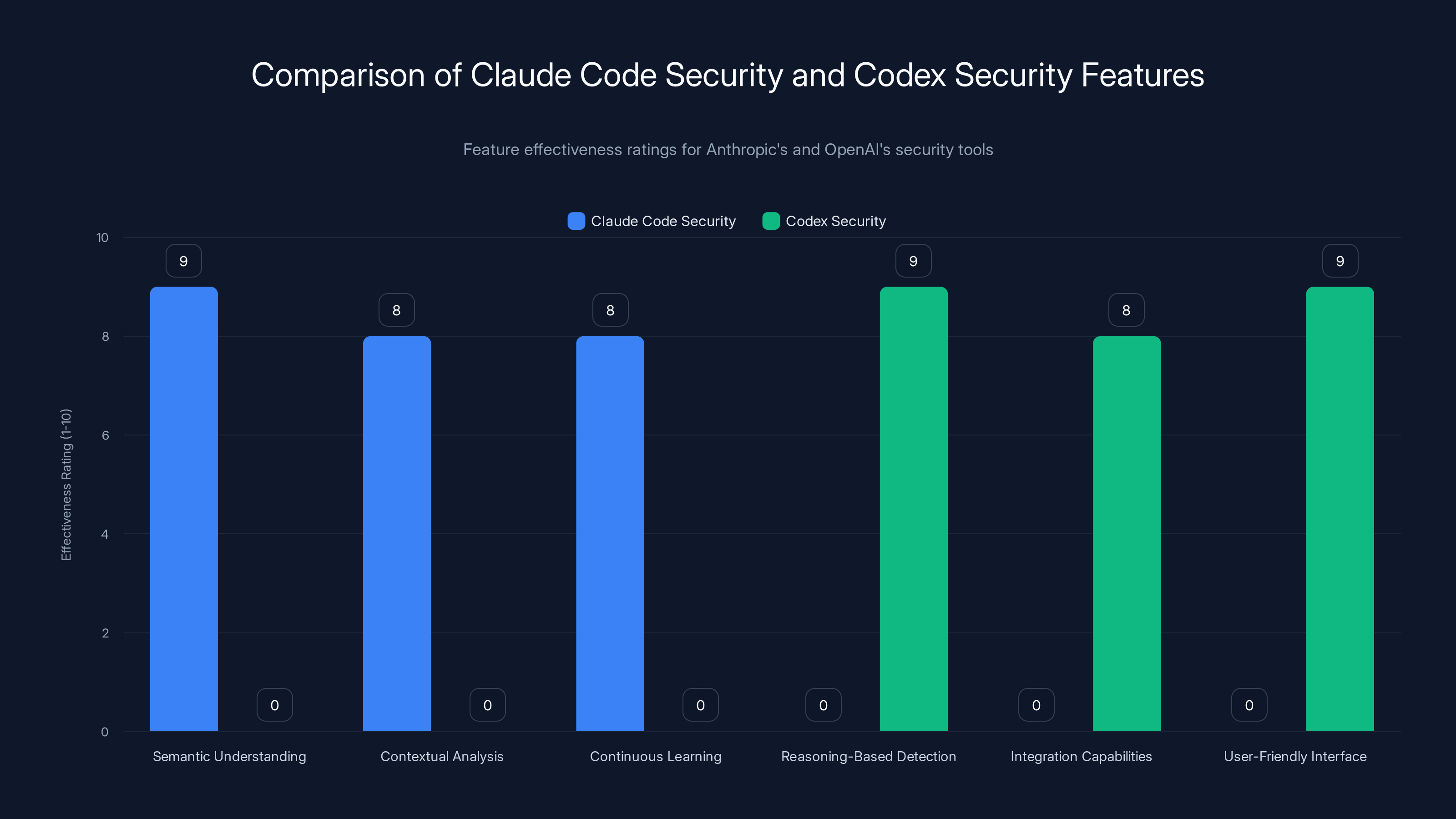

Claude Code Security excels in semantic understanding and contextual analysis, while Codex Security is strong in reasoning-based detection and integration capabilities. Estimated data.

Enter Anthropic and OpenAI

Claude Code Security by Anthropic

Claude Code Security emerged as a disruptor. It employs an advanced LLM capable of understanding code semantics, context, and intent. This allows it to detect vulnerabilities that don't fit traditional patterns.

Key Features:

- Semantic Understanding: Analyzes code intent, logic, and semantics.

- Contextual Analysis: Considers the broader application context to identify potential vulnerabilities.

- Continuous Learning: Adapts to new threat landscapes through ongoing learning.

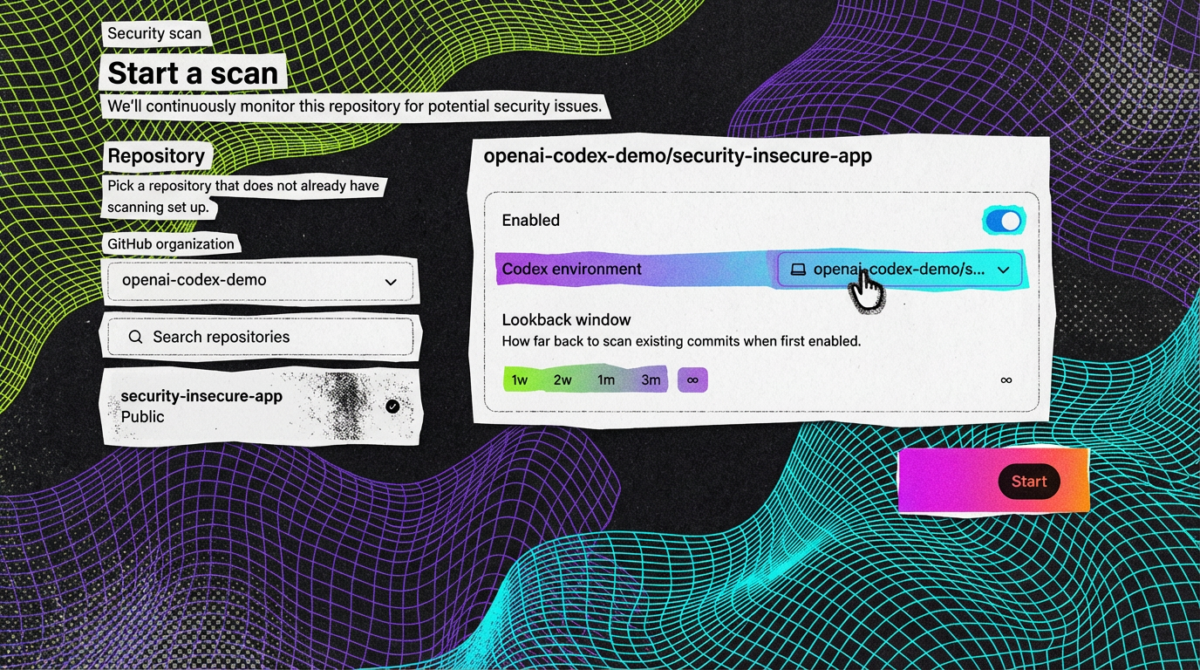

Codex Security by OpenAI

OpenAI's Codex Security follows a similar path. Using its LLM, Codex Security focuses on reasoning rather than pattern matching, offering a fresh perspective on application security.

Key Features:

- Reasoning-Based Detection: Identifies vulnerabilities through logical reasoning.

- Integration Capabilities: Seamlessly integrates with existing CI/CD pipelines.

- User-Friendly Interface: Simplifies vulnerability management for developers.

Real-World Use Cases

1. Detecting Logical Vulnerabilities

Both tools excel at finding logical vulnerabilities. For instance, in a financial application where transaction records are improperly validated, traditional SAST might overlook the issue, while Claude Code Security or Codex Security could flag it by understanding the business logic involved.
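
As a hedged illustration of the kind of business-logic flaw described above, consider a transfer routine that never validates the sign of the amount. The function names and the in-memory `balances` dictionary are assumptions made for this sketch.

```python
from decimal import Decimal


def transfer_flawed(balances: dict, src: str, dst: str, amount: Decimal) -> None:
    # Flaw: a negative amount passes the balance check and moves money
    # from dst to src instead.
    if balances[src] >= amount:
        balances[src] -= amount
        balances[dst] += amount


def transfer(balances: dict, src: str, dst: str, amount: Decimal) -> None:
    # Validated version: reject non-positive amounts and overdrafts explicitly.
    if amount <= 0:
        raise ValueError("amount must be positive")
    if balances[src] < amount:
        raise ValueError("insufficient funds")
    balances[src] -= amount
    balances[dst] += amount


if __name__ == "__main__":
    accounts = {"attacker": Decimal("10"), "victim": Decimal("10")}
    transfer_flawed(accounts, "attacker", "victim", Decimal("-5"))
    print(accounts)  # the attacker's balance grew at the victim's expense
```

Nothing here resembles an injection or a dangerous API call, so a signature rule has little to match. Spotting the problem requires knowing that a transfer amount should never be negative.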

2. Enhancing Developer Productivity

By integrating with existing development workflows, these tools allow developers to detect and fix vulnerabilities early, reducing the time spent on security audits and bug fixes. This seamless integration enhances productivity and reduces costs associated with post-production fixes.

3. Adapting to New Threats

The LLMs' continuous learning capabilities mean these tools can adapt to emerging threats faster than traditional methods. As new vulnerabilities are discovered, the tools evolve, offering up-to-date protection without manual updates.

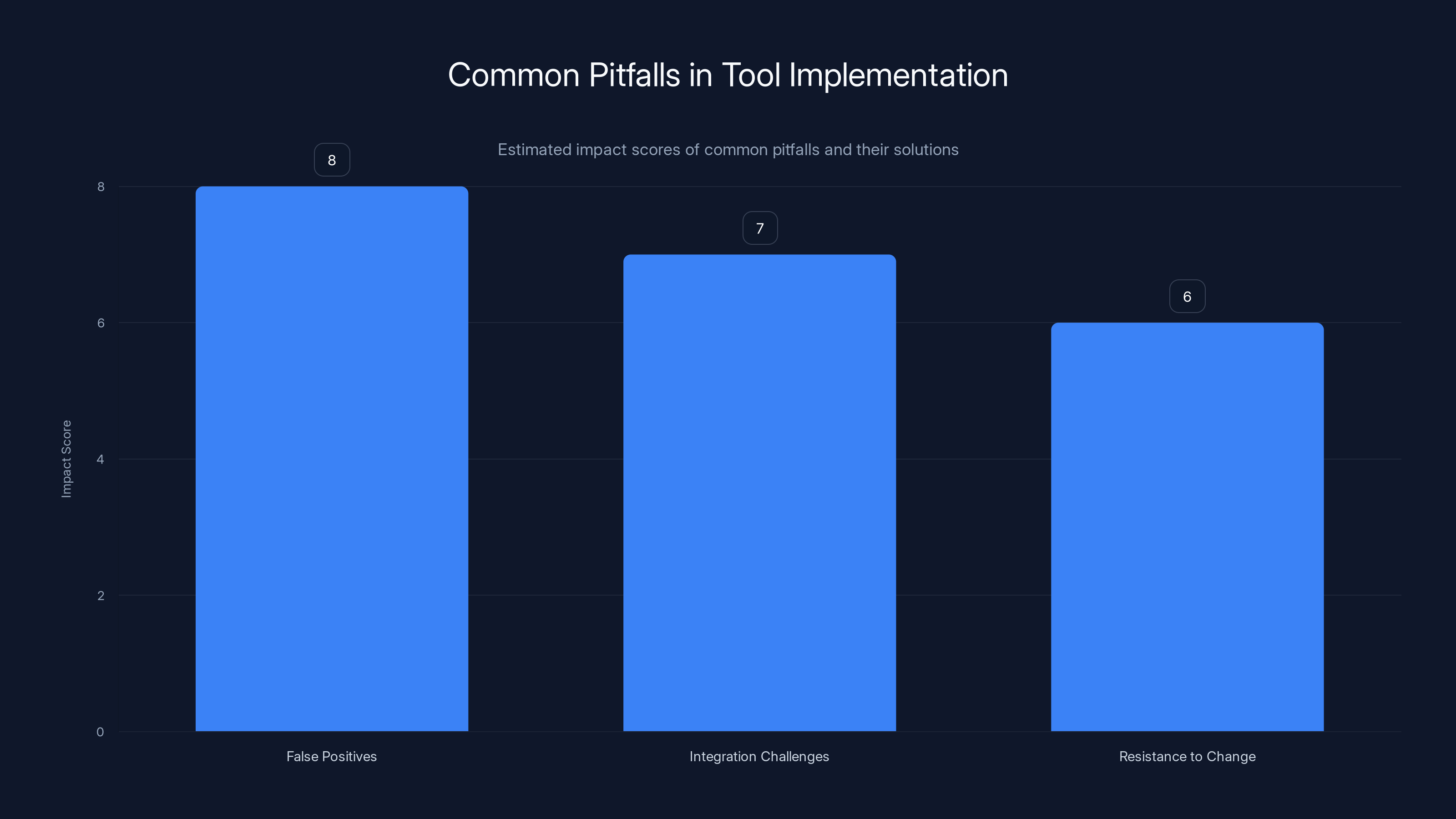

Estimated data showing the impact scores of common pitfalls in tool implementation. False positives have the highest impact score, suggesting they are the most challenging issue.

Implementing LLM-Based Security Tools

Step 1: Integration with Existing Workflows

Integration is crucial for maximizing the benefits of these tools. Both Claude Code Security and Codex Security offer APIs and plugins for popular CI/CD platforms such as Jenkins, GitLab, and GitHub Actions, allowing developers to incorporate them into their existing workflows easily.

```yaml
# Example GitHub Action configuration for Codex Security
name: Codex Security Scan

on:
  push:
    branches:
      - main

jobs:
  security_scan:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout code
        uses: actions/checkout@v4

      - name: Codex Security Scan
        # Action name and input are as given in this example;
        # confirm both against OpenAI's documentation before use.
        uses: openai/codex-security-action@v1
        with:
          api_key: ${{ secrets.CODEX_API_KEY }}
```

Step 2: Training and Onboarding

Developers must be trained to interpret the results provided by these tools effectively. Unlike traditional SAST, which might produce binary results, LLM-based tools offer nuanced insights that require understanding of the underlying logic.

Step 3: Continuous Monitoring and Feedback

Implement a feedback loop where developers can provide input on false positives or new vulnerabilities. This feedback helps refine the models and improve detection accuracy.
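
A feedback loop can start as something very small: record each triage verdict and track the false-positive rate per rule. The sketch below keeps that bookkeeping locally. How feedback is actually submitted to either tool is not covered here, and the `Verdict` fields and rule names are assumptions made for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    finding_id: str
    rule: str                # hypothetical rule or category label
    is_false_positive: bool  # the developer's triage decision


def false_positive_rate_by_rule(verdicts: list) -> dict:
    """Return the share of findings marked as false positives, per rule."""
    totals = Counter(v.rule for v in verdicts)
    false_positives = Counter(v.rule for v in verdicts if v.is_false_positive)
    return {rule: false_positives[rule] / total for rule, total in totals.items()}


if __name__ == "__main__":
    history = [
        Verdict("f1", "auth-bypass", False),
        Verdict("f2", "auth-bypass", True),
        Verdict("f3", "idor", True),
    ]
    for rule, rate in false_positive_rate_by_rule(history).items():
        print(f"{rule}: {rate:.0%} false positives")
```

Rules with a persistently high rate are candidates for suppression or for closer review of how the tool is configured.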

Common Pitfalls and Solutions

Pitfall 1: Overwhelming False Positives

Solution: Start with a pilot program to fine-tune the tools' sensitivity. Gradually expand usage as confidence in the results grows.
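
During a pilot, one simple way to tune sensitivity is to bucket findings by a confidence score and block the build only on the highest bucket. The sketch below assumes each finding carries a numeric confidence between 0 and 1. That field, and the threshold values, are hypothetical and not a documented output of either tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    title: str
    confidence: float  # hypothetical score in [0.0, 1.0]


def triage(findings, block_at: float = 0.9, review_at: float = 0.6) -> dict:
    """Sort finding titles into block / review / ignore buckets by confidence."""
    if not 0.0 <= review_at <= block_at <= 1.0:
        raise ValueError("thresholds must satisfy 0 <= review_at <= block_at <= 1")
    buckets = {"block": [], "review": [], "ignore": []}
    for finding in findings:
        if finding.confidence >= block_at:
            buckets["block"].append(finding.title)
        elif finding.confidence >= review_at:
            buckets["review"].append(finding.title)
        else:
            buckets["ignore"].append(finding.title)
    return buckets


if __name__ == "__main__":
    sample = [
        Finding("Missing expiry check", 0.95),
        Finding("Possible IDOR", 0.70),
        Finding("Unusual logging", 0.20),
    ]
    print(triage(sample))
```

Starting with a strict `block_at` and loosening it as the team gains trust in the results keeps early false positives from stalling deployments.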

Pitfall 2: Integration Challenges

Solution: Leverage community support and documentation for smoother integration. Both tools offer extensive resources to guide users through the setup process.

Pitfall 3: Resistance to Change

Solution: Highlight the long-term benefits of adopting these tools, such as reduced security incidents and cost savings. Demonstrating quick wins can also help ease the transition.

Future Trends in Application Security

1. Increased Adoption of AI-Driven Tools

As LLMs become more sophisticated, their integration into security tools will grow. Expect wider adoption across industries, leading to more comprehensive and intelligent security solutions.

2. Collaboration Between AI Tools and Human Experts

AI tools will complement, not replace, human expertise. The future of application security lies in leveraging AI to handle routine tasks while experts focus on complex, nuanced issues.

3. Evolution of Security Standards

As AI tools become standard, security standards and best practices will evolve to include guidelines for integrating and optimizing their use.

Conclusion

Anthropic and OpenAI's foray into application security with their free LLM-based tools marks a significant shift in the industry. By addressing the structural blind spots of traditional SAST, these tools promise faster, more accurate vulnerability detection. While they don't replace existing security measures, they offer an invaluable complement. The future of application security is a blend of AI-driven tools and human expertise, working together to create safer, more resilient software.

FAQ

What is Static Application Security Testing (SAST)?

Static Application Security Testing (SAST) is a method of analyzing source code, bytecode, or binaries for known vulnerabilities without executing the program. It helps identify security issues early in the software development lifecycle.

How do LLMs improve vulnerability detection?

LLMs improve vulnerability detection by using reasoning and context understanding, which allows them to identify complex issues that traditional SAST tools might miss.

What are the benefits of integrating AI tools into security workflows?

Benefits include faster detection of vulnerabilities, fewer false positives once the tools have been tuned, continuous adaptation to new threats, and enhanced developer productivity.

How can companies ensure a smooth transition to AI-driven security tools?

Companies can ensure a smooth transition by starting with a pilot program, providing training to developers, and gradually integrating the tools into existing workflows.

What future trends can we expect in application security?

Future trends include increased adoption of AI-driven tools, collaboration between AI tools and human experts, and the evolution of security standards to incorporate AI technologies.

Key Takeaways

- Anthropic and OpenAI's free tools reveal SAST's limitations.

- LLM reasoning can catch context-dependent vulnerabilities that traditional pattern matching misses.

- These tools complement existing security stacks, offering faster detection.

- Continuous learning capabilities adapt to emerging threats.

- Expect increased adoption of AI-driven security tools.
