Australia’s Bold Move on AI Services: Age Verification and App Store Compliance [2025]

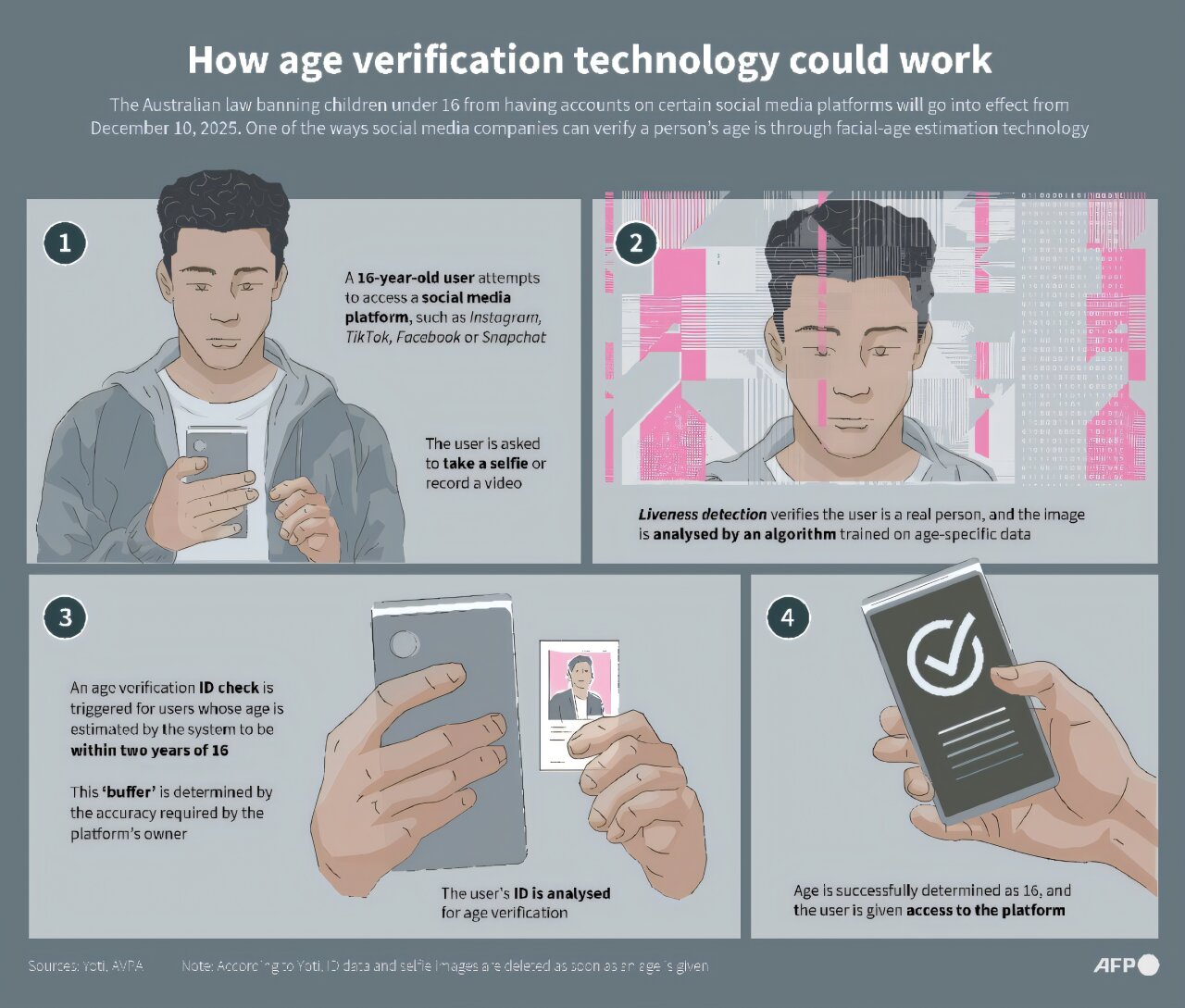

Australia is on the brink of a significant regulatory shift that could reshape the landscape of AI services in the country. The Australian government is considering a mandate that would require app stores to block AI services that do not have age verification mechanisms. This move is part of a broader strategy to protect minors online, following the country’s earlier restrictions on social media access for those under 16, as reported by Time.

TL;DR

- Age Verification Mandate: Australia may require app stores to block AI services without age checks.

- Broader Social Media Strategy: This follows a ban on social media for users under 16.

- Regulatory Compliance: Non-compliance could lead to significant penalties for app stores.

- Technical Implementations: Age verification could involve biometric checks, ID uploads, or parental controls.

- Future Impact: Potential for global policy influence and increased tech innovation.

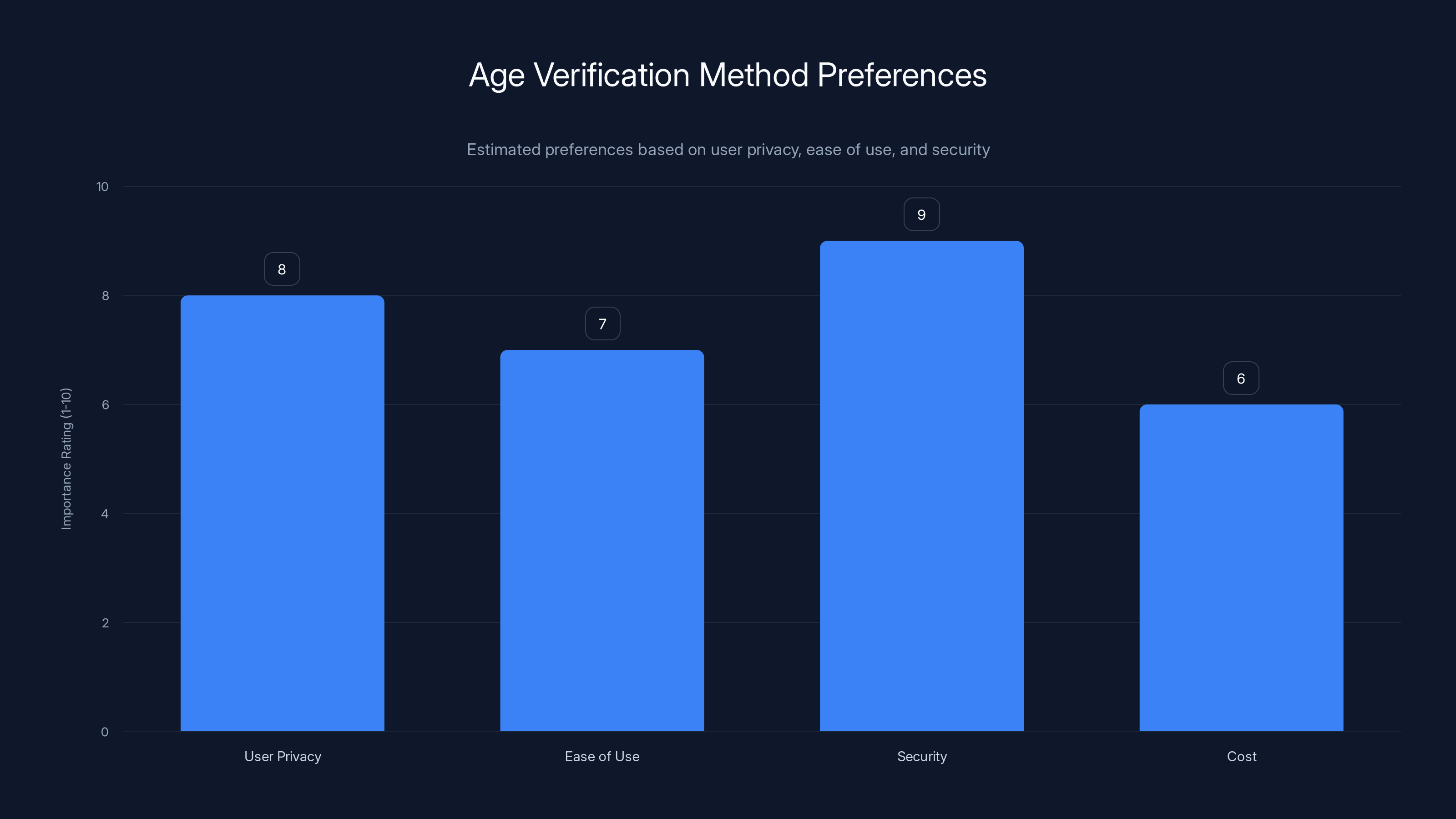

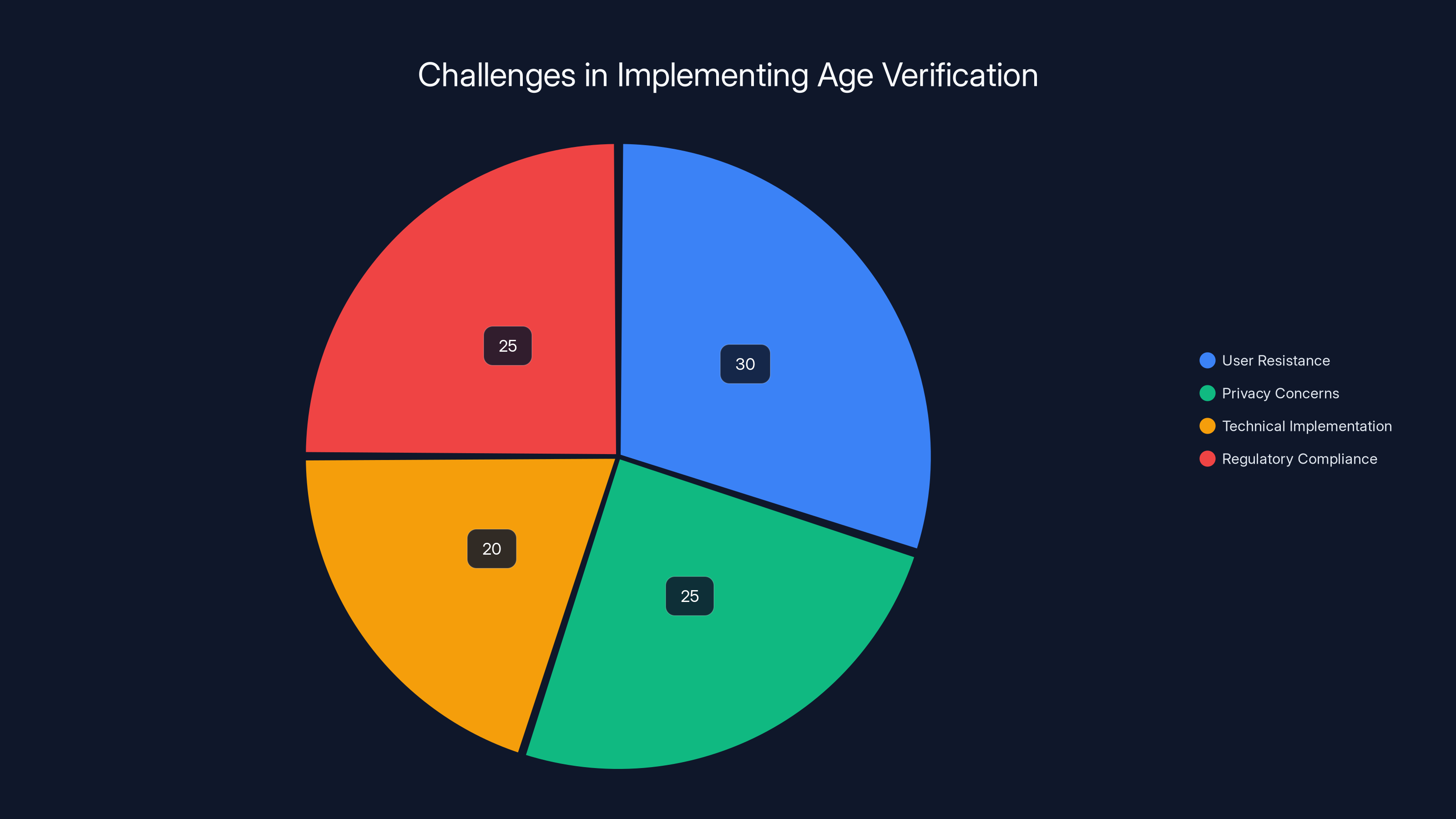

Security and user privacy are the most critical factors when choosing an age verification method. Estimated data based on typical priorities.

Introduction

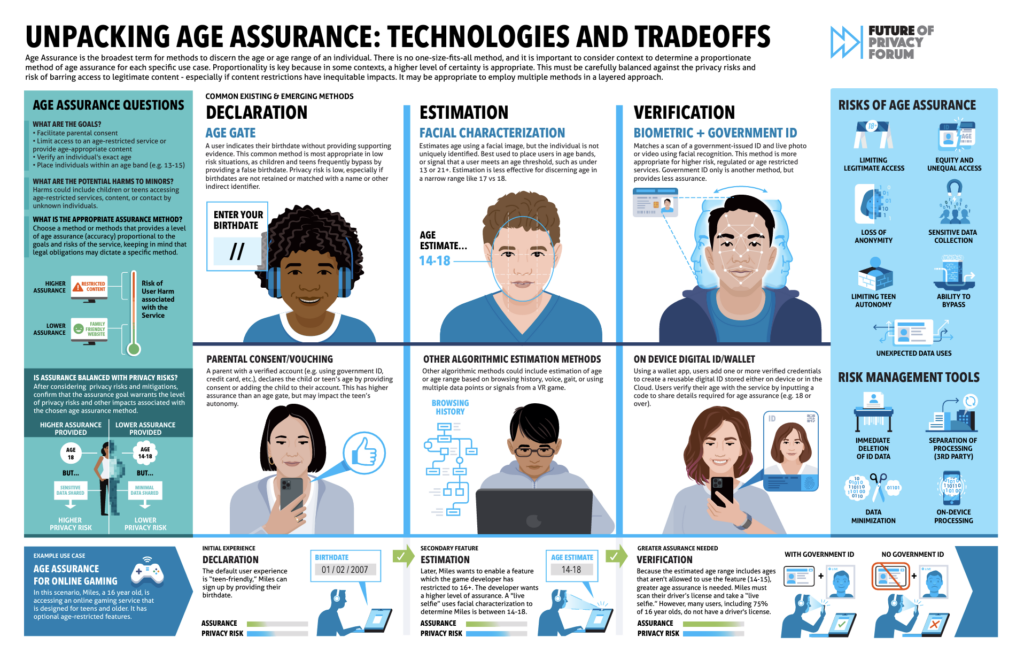

In recent years, the rapid advancement of artificial intelligence has led to an explosion of AI-powered applications and services, from chatbots to sophisticated analytical tools. However, with this growth comes the challenge of ensuring these technologies are used responsibly, particularly by younger audiences. Australia, recognizing the potential risks associated with unregulated AI access, is contemplating a groundbreaking policy that could see AI services blocked from app stores if they fail to implement robust age verification systems. According to Lawfare Media, this could involve requiring users to upload a photo ID for verification.

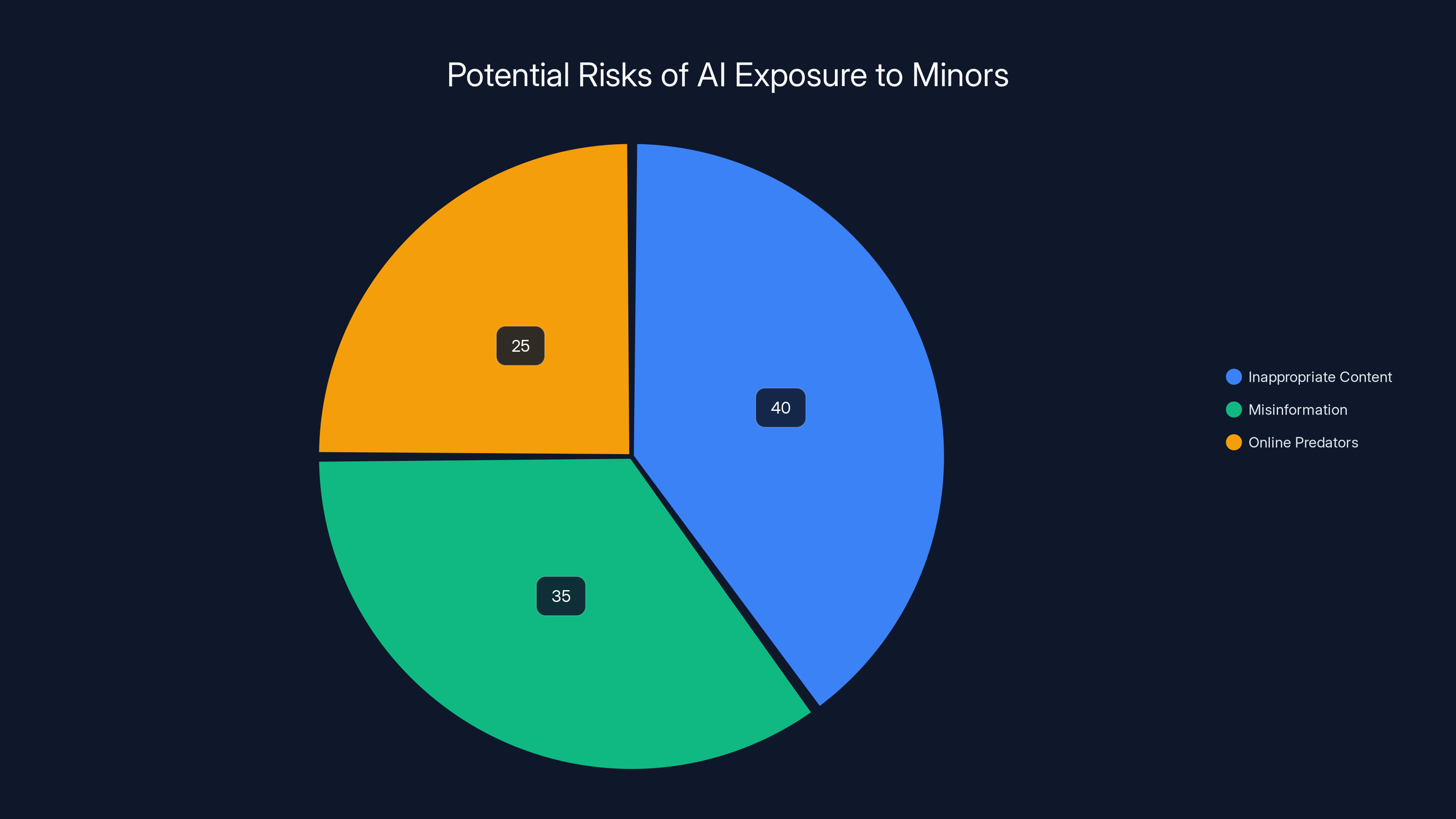

Inappropriate content poses the highest risk to minors using AI services, followed by misinformation and online predators. Estimated data.

The Rationale Behind the Regulation

Australia's decision to consider such regulations stems from a growing concern about the potential misuse of AI by minors. AI services, especially chatbots, can expose young users to inappropriate content, misinformation, and even online predators. By enforcing age verification, the Australian government aims to safeguard its younger population from these risks, as highlighted by Contemporary Pediatrics.

The Broader Context

This move is not occurring in isolation. It follows Australia's earlier decision to restrict social media access for those under 16, a policy aimed at reducing cyberbullying and protecting privacy. The focus on AI services represents a natural progression in the country's efforts to create a safer digital environment for children, as noted by TechCrunch.

Potential Impact on App Stores

If the proposed regulations come into effect, app stores like Google Play and Apple’s App Store will play a critical role in enforcement. These platforms would be required to ensure that the AI services they list comply with the new age verification requirements. Services that do not comply would risk being removed from the marketplace, as reported by AppleInsider.

Technical Implementation of Age Verification

Implementing age verification for AI services is not a trivial task. It requires a delicate balance between user privacy, security, and accessibility. Here are some of the technical approaches that could be employed:

1. Biometric Verification

Biometric systems use physical characteristics, such as fingerprints or facial features, to confirm who a user is or to estimate their age. These systems are harder to bypass than a self-declared birth date, but they raise concerns about data privacy and the potential for misuse, as discussed in IEEE Spectrum.

2. Government ID Uploads

Another method involves users uploading government-issued IDs for age verification. While this is straightforward, it can deter users due to privacy concerns and the inconvenience of the process, as highlighted by Marketplace.
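Once a date of birth has been read from an ID, the age check itself is simple date arithmetic. The sketch below is a minimal illustration, not a prescribed method: the function names are hypothetical, and the default threshold of 16 is an assumption borrowed from the social media rule mentioned earlier, since the article does not state a threshold for AI services.

```python
from datetime import date


def age_on(birth_date: date, today: date) -> int:
    """Return the number of completed years between birth_date and today."""
    years = today.year - birth_date.year
    # Subtract one year if this year's birthday has not happened yet.
    if (today.month, today.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years


def meets_minimum_age(birth_date: date, today: date, minimum_age: int = 16) -> bool:
    """minimum_age is an assumed threshold; adjust it to the actual rule."""
    return age_on(birth_date, today) >= minimum_age
```

Passing `today` explicitly, rather than reading the clock inside the function, keeps the check easy to test around birthdays.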

3. Parental Controls

Parental control systems can allow guardians to set age restrictions on devices, ensuring that children cannot access certain apps without approval. This method empowers parents but requires diligent monitoring and management, as noted by AOL.

User resistance and privacy concerns are major challenges in implementing age verification, each accounting for about a quarter of the issues faced. Estimated data.

Practical Implementation Guide

For developers and companies looking to comply with these potential regulations, here are some best practices and implementation steps:

Step 1: Identify AI Services That Require Verification

First, determine which of your services will need age verification. This typically includes any service that allows user interaction or provides content that could be inappropriate for minors.
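One way to make this inventory concrete is to tag each service with the two criteria named above and filter on them. This is only a sketch: the `Service` fields and the service names in the usage example are hypothetical, and a real assessment would likely involve legal review rather than two booleans.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Service:
    name: str
    allows_user_interaction: bool       # e.g. a chatbot or other open-ended input
    may_show_inappropriate_content: bool


def requires_age_verification(service: Service) -> bool:
    """Flag a service if it meets either criterion from the text."""
    return service.allows_user_interaction or service.may_show_inappropriate_content


def services_needing_verification(services: list[Service]) -> list[str]:
    """Return the names of all flagged services, in their original order."""
    return [s.name for s in services if requires_age_verification(s)]
```

For example, `services_needing_verification([Service("chatbot", True, False), Service("batch_report", False, False)])` returns `["chatbot"]`.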

Step 2: Choose an Age Verification Method

Select the most appropriate age verification method based on your service's user base and risk level. Consider factors such as user privacy, ease of use, and security.
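A team comparing options could make this trade-off explicit with a weighted score over the three factors. The sketch below assumes a 1 to 5 rating scale and integer weights, both of which are illustrative choices; the ratings themselves would have to come from your own assessment, not from this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Method:
    name: str
    privacy: int      # 1 (weak) to 5 (strong); placeholder scale
    ease_of_use: int  # same scale
    security: int     # same scale


def score(method: Method, weights: dict[str, int]) -> int:
    """Weighted sum of the three factors named in the text."""
    return (
        weights["privacy"] * method.privacy
        + weights["ease_of_use"] * method.ease_of_use
        + weights["security"] * method.security
    )


def rank_methods(methods: list[Method], weights: dict[str, int]) -> list[Method]:
    """Return methods ordered from highest to lowest weighted score."""
    return sorted(methods, key=lambda m: score(m, weights), reverse=True)
```

A higher-risk service might weight privacy and security above ease of use, for instance `{"privacy": 2, "ease_of_use": 1, "security": 2}`.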

Step 3: Implement Verification on the Front End

Ensure that the age verification process is integrated into the app's user interface. This could be a mandatory step during the sign-up process or a gatekeeper for accessing certain features.
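The gatekeeper pattern can be sketched as a small routing function that sends unverified users to a verification screen before any restricted feature. The feature names, the `User` type, and the screen identifier are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical set of features that sit behind the age gate.
RESTRICTED_FEATURES = {"chat", "image_generation"}


@dataclass
class User:
    user_id: str
    age_verified: bool = False  # set only after a verification check succeeds


def next_screen(user: User, requested_feature: str) -> str:
    """Route to the verification screen when a gated feature is requested
    by a user who has not been verified; otherwise allow the request."""
    if requested_feature in RESTRICTED_FEATURES and not user.age_verified:
        return "age_verification"
    return requested_feature
```

A check in the user interface alone can be bypassed, so the same rule would normally also be enforced on the server that handles the request.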

Step 4: Securely Manage User Data

Data security is paramount. Implement robust encryption methods to protect user data and comply with data protection regulations, as advised by Built In.
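Encryption is only part of the picture; storing less data in the first place reduces what can leak. The sketch below is not encryption. It illustrates a complementary idea, data minimization with a tamper check: keep only the outcome of the verification (not the ID image or birth date) and sign it with an HMAC from the Python standard library. The record fields and function names are assumptions, and encrypting data at rest or in transit would need a vetted cryptography library and proper key management.

```python
import hashlib
import hmac
import json


def sign_record(record: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 signature over a canonical JSON encoding."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def make_verification_record(user_id: str, meets_minimum_age: bool,
                             checked_on: str, key: bytes) -> dict:
    """Build a minimal record: the outcome only, no ID image or birth date."""
    record = {
        "user_id": user_id,
        "meets_minimum_age": meets_minimum_age,
        "checked_on": checked_on,
    }
    return {**record, "signature": sign_record(record, key)}


def is_untampered(stored: dict, key: bytes) -> bool:
    """Recompute the signature and compare it in constant time."""
    record = {k: v for k, v in stored.items() if k != "signature"}
    expected = sign_record(record, key)
    return hmac.compare_digest(expected, stored.get("signature", ""))
```

If a stored record is edited, for example flipping `meets_minimum_age`, `is_untampered` returns `False` as long as the signing key has been kept secret.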

Step 5: Test and Monitor

Conduct thorough testing to ensure the age verification system is functioning correctly. Continuously monitor for loopholes or breaches and update the system as needed.
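As one possible monitoring signal, a team could track the share of verification attempts that fail and flag unusual spikes for review. The outcome labels and the 20% threshold below are placeholders chosen for illustration, not recommended values.

```python
from collections import Counter


def failure_rate(outcomes: list[str]) -> float:
    """Share of attempts labelled 'failed'; 0.0 when there are no attempts."""
    counts = Counter(outcomes)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return counts["failed"] / total


def needs_review(outcomes: list[str], threshold: float = 0.2) -> bool:
    """Flag a batch of attempts when the failure rate exceeds the threshold."""
    return failure_rate(outcomes) > threshold
```

A sudden rise in failures could point to a technical glitch, while a sudden drop to near zero might be worth checking for a bypass.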

Common Pitfalls and Solutions

While implementing age verification, several challenges may arise. Here are some common pitfalls and how to address them:

Pitfall 1: User Resistance

Solution: Clearly communicate the necessity and benefits of age verification to users. Provide assurances about data security and privacy.

Pitfall 2: Technical Glitches

Solution: Conduct extensive testing across different devices and platforms to identify and fix technical issues before they affect users.

Pitfall 3: Privacy Concerns

Solution: Adhere to data protection laws and implement transparent data policies. Educate users on how their data is used and protected.

Future Trends and Recommendations

As Australia moves forward with these regulations, several trends and recommendations emerge:

1. Global Influence

Australia's actions could set a precedent for other countries, influencing global policies on AI and age verification, as noted by Bloomberg.

2. Technological Innovation

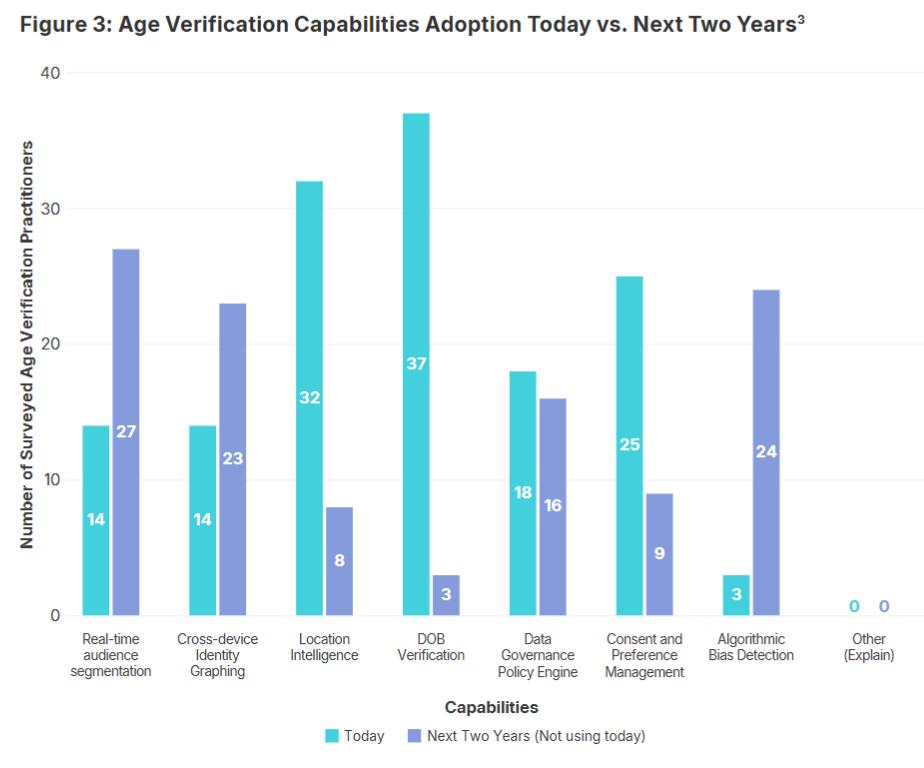

The demand for age verification systems could spur innovation in AI and cybersecurity technologies, leading to more sophisticated and secure solutions.

3. Increased Collaboration

There may be an increase in collaboration between governments, tech companies, and privacy advocates to develop effective and user-friendly age verification systems.

4. Balance Between Privacy and Security

Companies will need to find a balance between securing their platforms and respecting user privacy, possibly leading to new industry standards, as discussed by Politico.

Conclusion

Australia's consideration of age verification for AI services represents a significant step in protecting minors in the digital age. While the implementation of such measures poses challenges, it also offers opportunities for innovation and global policy leadership. As the world watches, Australia could pave the way for a safer, more secure online environment for young users.

Key Takeaways

- Age Verification Mandate: Australia may require app stores to block AI services that lack age checks.

- Broader Social Media Strategy: This follows a ban on under-16 social media access.

- Technical Implementation: Methods include biometrics, ID uploads, and parental controls.

- Common Pitfalls: User resistance and privacy concerns are key challenges.

- Future Trends: Potential for global policy influence and tech innovation.

- Regulatory Compliance: Non-compliant AI services face removal from app stores.

- Balance Needed: Privacy and security must be balanced effectively.

FAQ

What is the proposed Australian regulation on AI services?

Australia is considering a regulation that would require app stores to block AI services lacking age verification systems to protect minors.

How would age verification be implemented?

Possible methods include biometric verification, government ID uploads, and parental controls.

Why is Australia focusing on AI services?

The focus is to prevent minors from accessing inappropriate content and to continue the country's efforts in creating a safer online environment.

What are the potential challenges with age verification?

Challenges include user resistance, privacy concerns, and technical glitches, which need careful planning and execution.

How might this regulation influence global policies?

Australia's decision could set a precedent for similar actions worldwide, influencing global tech policy and innovation in age verification systems.

What role will app stores play in this regulation?

App stores would be responsible for ensuring that the AI services they list comply with the age verification requirements. Services that do not comply could be removed from the stores.

How can companies prepare for these changes?

Companies should begin assessing their AI services, choosing appropriate verification methods, and ensuring data security to comply with potential regulations.
