Claude Code Security: How AI Reasoning Is Transforming Vulnerability Detection

Introduction: A Paradigm Shift in Code Security

The cybersecurity landscape experienced a significant disruption when Anthropic's most advanced AI model, Claude Opus 4.6, identified more than 500 high-severity vulnerabilities in production open-source codebases. These weren't theoretical flaws or edge cases—they were genuine security lapses that had survived decades of expert review, millions of hours of automated fuzzing, and countless security audits by some of the most experienced security researchers in the industry. Each vulnerability was vetted through rigorous internal and external security review processes before disclosure, ensuring accuracy and preventing premature exploitation.

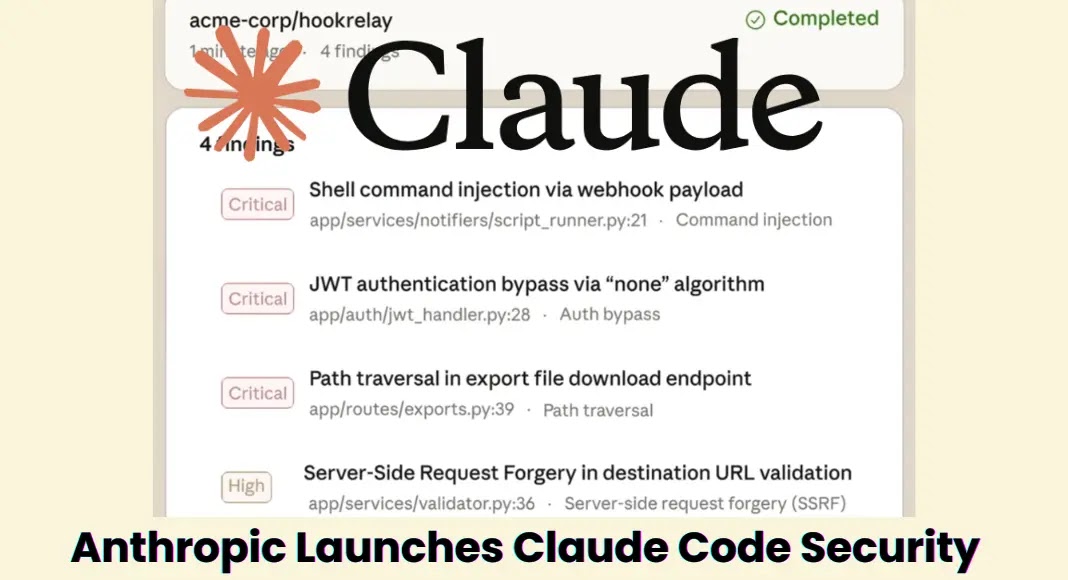

What made this discovery remarkable wasn't just the number of vulnerabilities found, but the speed and methodology used to find them. Within just 15 days of publishing their research findings on February 5, Anthropic productized this capability and launched Claude Code Security as a limited research preview on February 20. This rapid transition from research to product demonstrates the maturity and reliability of the underlying technology, signaling a genuine shift in how organizations should approach code security and vulnerability management.

For security directors and chief information security officers managing seven-figure vulnerability management stacks, this development carries profound implications. The next board meeting or budget review cycle will inevitably include questions about reasoning-based scanning and how organizations can adopt these capabilities before attackers identify and exploit the same vulnerabilities. This isn't speculative—Anthropic's research demonstrates that simply directing an advanced AI model at exposed code produces consistent vulnerability discoveries, meaning malicious actors with similar capabilities will likely reach identical conclusions.

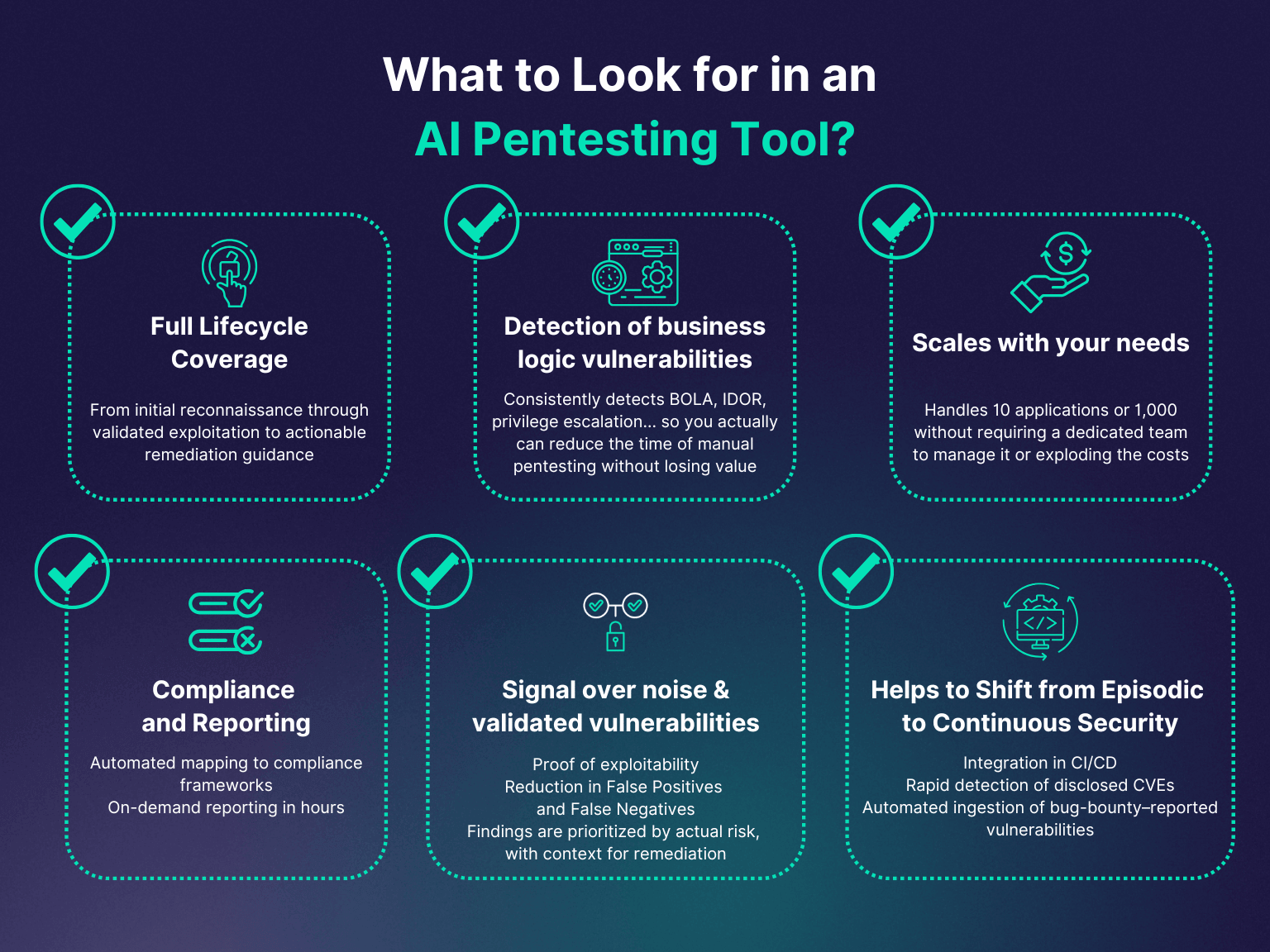

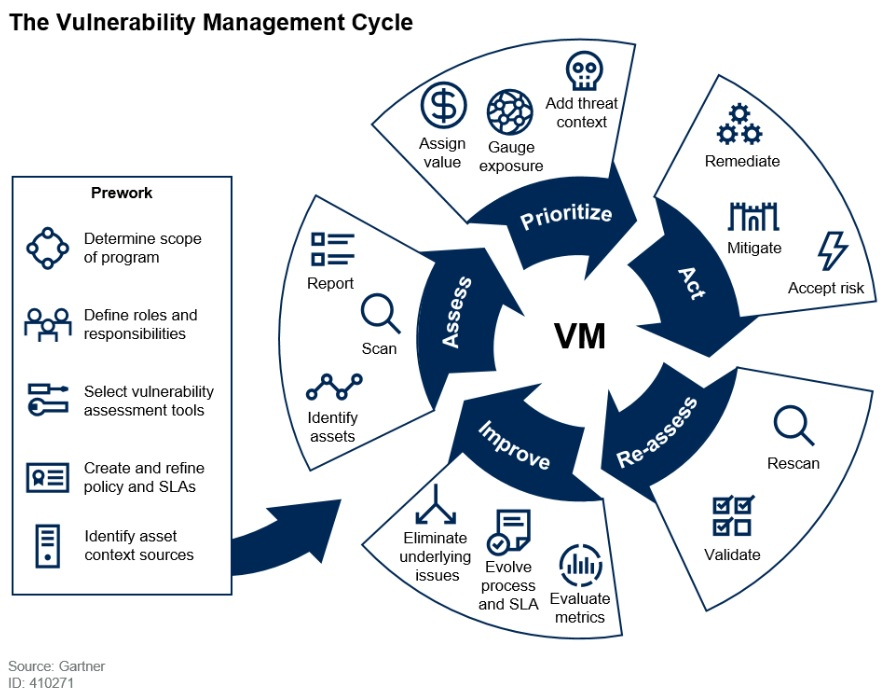

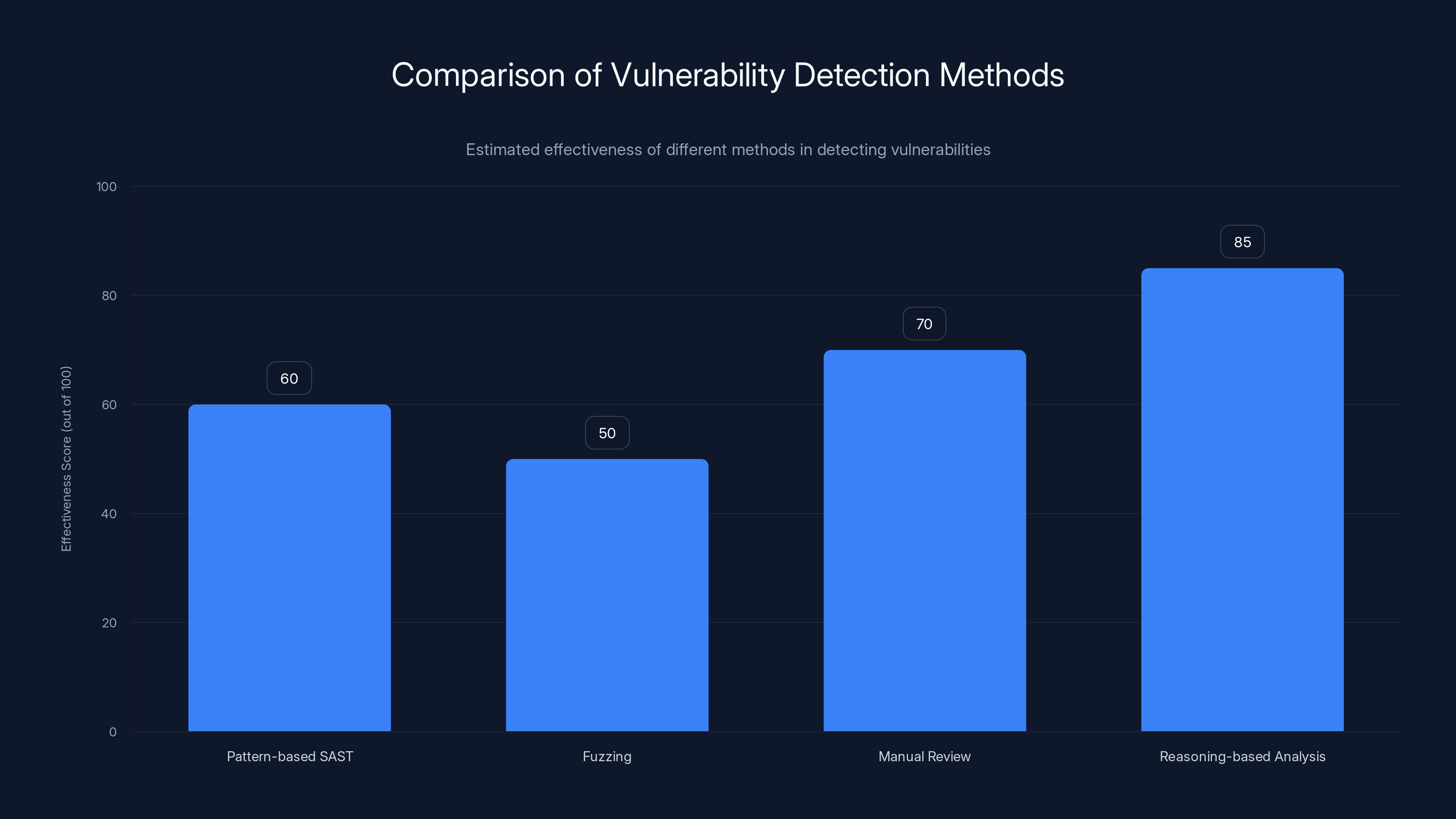

The fundamental question security leaders face is no longer whether to incorporate reasoning-based vulnerability scanning, but rather how to structure their tooling and processes to effectively allocate work between traditional pattern-based scanners and the emerging category of reasoning-based analysis. This distinction is critical because these two approaches operate on fundamentally different principles. Pattern-based scanners like CodeQL and its derivatives match code against known vulnerability patterns and rules. Reasoning-based scanners follow how data moves through applications and catch flaws in business logic and access control that no static rule set could ever comprehensively cover.

Understanding this paradigm shift requires examining what makes reasoning-based scanning fundamentally different from existing approaches, analyzing the specific vulnerabilities discovered, and developing a strategic framework for integrating these capabilities into existing security infrastructure. This comprehensive guide explores each of these dimensions in detail, providing security leaders, developers, and security architects with the knowledge needed to make informed decisions about their vulnerability management strategies.

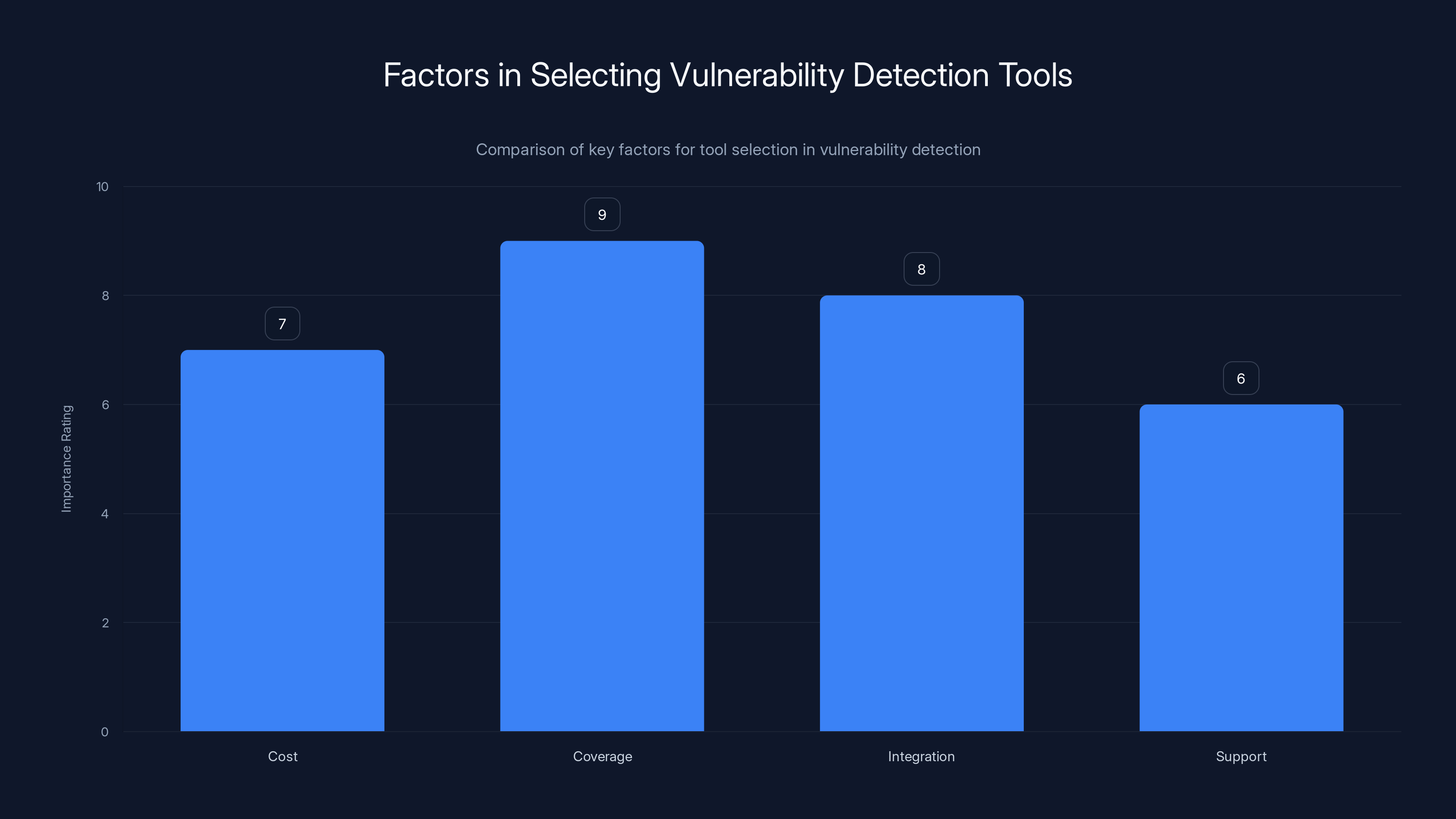

Coverage is the most critical factor when selecting vulnerability detection tools, followed by integration and cost. Estimated data based on typical industry considerations.

Understanding the 500+ Vulnerability Discovery: What Actually Happened

The Scale and Severity of the Discovery

The discovery of over 500 high-severity vulnerabilities in widely-deployed open-source projects represents a watershed moment in application security. These weren't low-impact issues, potential flaws, or theoretical concerns. They were classified as high-severity vulnerabilities, indicating they could lead to significant security breaches, data exfiltration, system compromise, or complete application failure. The distribution across multiple major open-source projects, including Ghostscript, OpenSC, and CGIF, demonstrates that this vulnerability class isn't isolated to a single category of software or specific programming practices.

What makes this discovery particularly significant is the pedigree of existing security measures that failed to detect these vulnerabilities. Ghostscript, for instance, is a utility for processing PostScript and PDF files that has been maintained for decades by security-conscious developers. The project has undergone extensive fuzzing (millions of hours' worth) with thousands of test cases designed to trigger unexpected behavior. It has been subjected to manual security analysis by experienced security researchers. It has been used in production by millions of organizations. Yet these vulnerabilities persisted undetected through all of these overlapping security measures.

OpenSC, which handles sensitive smart card data, similarly had undergone rigorous testing and review processes. The assumption among security professionals would have been that such a security-critical project would have minimal undetected vulnerabilities, particularly high-severity ones that could compromise the integrity of smart card authentication systems. The discovery of previously unknown vulnerabilities in projects of this caliber fundamentally challenges existing assumptions about the thoroughness of current security practices.

Why Existing Security Methods Missed These Vulnerabilities

The failure of existing security approaches to discover these vulnerabilities reveals important limitations in current methodologies. Fuzzing, despite millions of hours of investment, relies on generating test inputs and observing program behavior. It's inherently reactive—it finds vulnerabilities when input patterns trigger unexpected behavior. However, fuzzing struggles with code paths that require specific preconditions. If reaching the vulnerable code requires navigating through multiple conditional branches, validation checks, or state management transitions, random input generation often fails to construct the precise sequence needed to trigger the vulnerability.

Manual code review, while theoretically comprehensive, operates within practical constraints. Security researchers can examine only a limited amount of code in the time available, and their attention necessarily focuses on areas that appear suspicious or match known vulnerability patterns. Reviewers typically lack automated tools to trace how data flows through entire applications across multiple files and interconnected functions. They cannot reasonably reverse-engineer the intent of patches applied years ago and cross-reference those implications across a large codebase. The cognitive load of tracking complex data flows across multiple files, while considering all possible input values and state combinations, exceeds practical human capacity for large codebases.

Static analysis tools like CodeQL, which have become industry standard for vulnerability detection, operate within defined rule sets. These tools are extraordinarily effective at identifying vulnerabilities that fit existing patterns: they can catch SQL injection, cross-site scripting, path traversal, and dozens of other well-characterized vulnerability classes. However, the detection boundary is inherently limited by the available rule set. Any vulnerability that doesn't match an existing pattern remains invisible to these tools, no matter how severe the security implications.

Pattern-Based vs. Reasoning-Based Analysis: The Fundamental Difference

How Traditional Pattern-Based Scanning Works

Pattern-based vulnerability scanning, embodied by tools like CodeQL and its derivatives, operates on a well-established principle: identify code that matches known vulnerability patterns. The methodology involves translating security expertise into reusable patterns that tools can apply automatically across large codebases. For example, a pattern might specify: "if untrusted user input flows into a SQL query without parameterization, flag this as a potential SQL injection vulnerability." Another might state: "if a file operation uses a path constructed from user input without proper validation, flag this as a path traversal issue."
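
To make the idea of a detection rule concrete, here is a minimal sketch of a purely syntactic check written with Python's standard `ast` module. It is not how CodeQL is implemented, and it does no real taint tracking: it simply flags any `.execute(...)` call whose query argument is assembled dynamically. The function name and the sample code are illustrative assumptions.

```python
import ast

def find_sql_injection_candidates(source: str) -> list[int]:
    """Return line numbers of .execute(...) calls whose query is built dynamically."""
    flagged = []
    for node in ast.walk(ast.parse(source)):
        if not (isinstance(node, ast.Call) and isinstance(node.func, ast.Attribute)):
            continue
        if node.func.attr != "execute" or not node.args:
            continue
        query = node.args[0]
        # f-strings, concatenation or %-formatting, and str.format() all build
        # the query text from parts instead of using bound parameters.
        dynamic = (
            isinstance(query, (ast.JoinedStr, ast.BinOp))
            or (isinstance(query, ast.Call)
                and isinstance(query.func, ast.Attribute)
                and query.func.attr == "format")
        )
        if dynamic:
            flagged.append(node.lineno)
    return sorted(flagged)

# Hypothetical code under analysis (line 1 of the string is blank).
SAMPLE = '''
def lookup(cursor, user_id):
    cursor.execute("SELECT * FROM users WHERE id = " + user_id)
    cursor.execute("SELECT * FROM users WHERE id = ?", (user_id,))
    cursor.execute(f"SELECT * FROM users WHERE id = {user_id}")
'''

print(find_sql_injection_candidates(SAMPLE))  # [3, 5]
```

The parameterized call on line 4 passes, while the concatenated and f-string queries are flagged. The rule finds exactly what it was written to find and nothing else, which is both the strength and the limit of this style of scanning.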

These patterns encode cumulative knowledge from decades of security research. Security experts document vulnerability classes, identify the code patterns associated with them, and translate those into automated detection rules. When applied consistently across millions of lines of code, pattern-based scanning catches enormous quantities of vulnerabilities that would be impractical to find through manual review. For organizations seeking to eliminate entire classes of known vulnerabilities, pattern-based scanning is remarkably efficient and effective.

The approach has fundamental strengths: rules are explainable and verifiable, results are reproducible, and the false positive rate can be carefully calibrated. Security teams understand exactly why a tool flagged a particular code section. The underlying logic is transparent and can be audited. Teams can adjust rule sensitivity to match their risk tolerance. For finding known vulnerability types, pattern-based tools are mature, reliable, and represent the current industry standard.

However, pattern-based scanning inherently searches within known territory. It detects vulnerabilities that security experts have already identified and characterized. It matches against known patterns. By definition, it cannot discover vulnerability classes that haven't yet been documented and encoded into rules. This isn't a flaw in tool implementation—it's a fundamental architectural limitation of the approach.

How Reasoning-Based Vulnerability Discovery Works

Reasoning-based analysis approaches the problem fundamentally differently. Rather than matching against predefined patterns, reasoning systems generate and test hypotheses about how code behaves. This process mirrors how expert security researchers approach unfamiliar codebases. A human security researcher examining code would trace how data flows through the application, considering the implications of specific operations and state transitions. They would ask questions: "What happens if this buffer receives more data than it can hold?" or "Could this authentication check be bypassed through a specific sequence of operations?" They would construct mental models of program execution and reason about potential failure modes.

Reasoning-based systems automate this hypothesis generation and testing process. They examine code and consider potential security properties: buffer overflows, integer overflows, logic errors, access control bypasses, and dozens of other vulnerability classes. Rather than checking against predefined patterns, they reason about how the code could behave under various conditions. They generate candidate vulnerabilities and evaluate whether the code actually implements the logic required to make them possible.

This approach has profound advantages for discovering novel vulnerabilities. Reasoning systems aren't limited to known vulnerability classes. They can identify security flaws in business logic that don't match any existing pattern. They can catch access control errors that emerge from complex conditional logic. They can discover vulnerabilities that require understanding the semantic meaning of code, not just syntactic patterns.

The reasoning approach also enables analysis that requires understanding context across file boundaries and historical development. A reasoning system can examine the git commit history to understand why a security patch was applied in one location, then check whether the same vulnerability exists in other related code locations. It can trace implications across multiple files and complex function call chains.

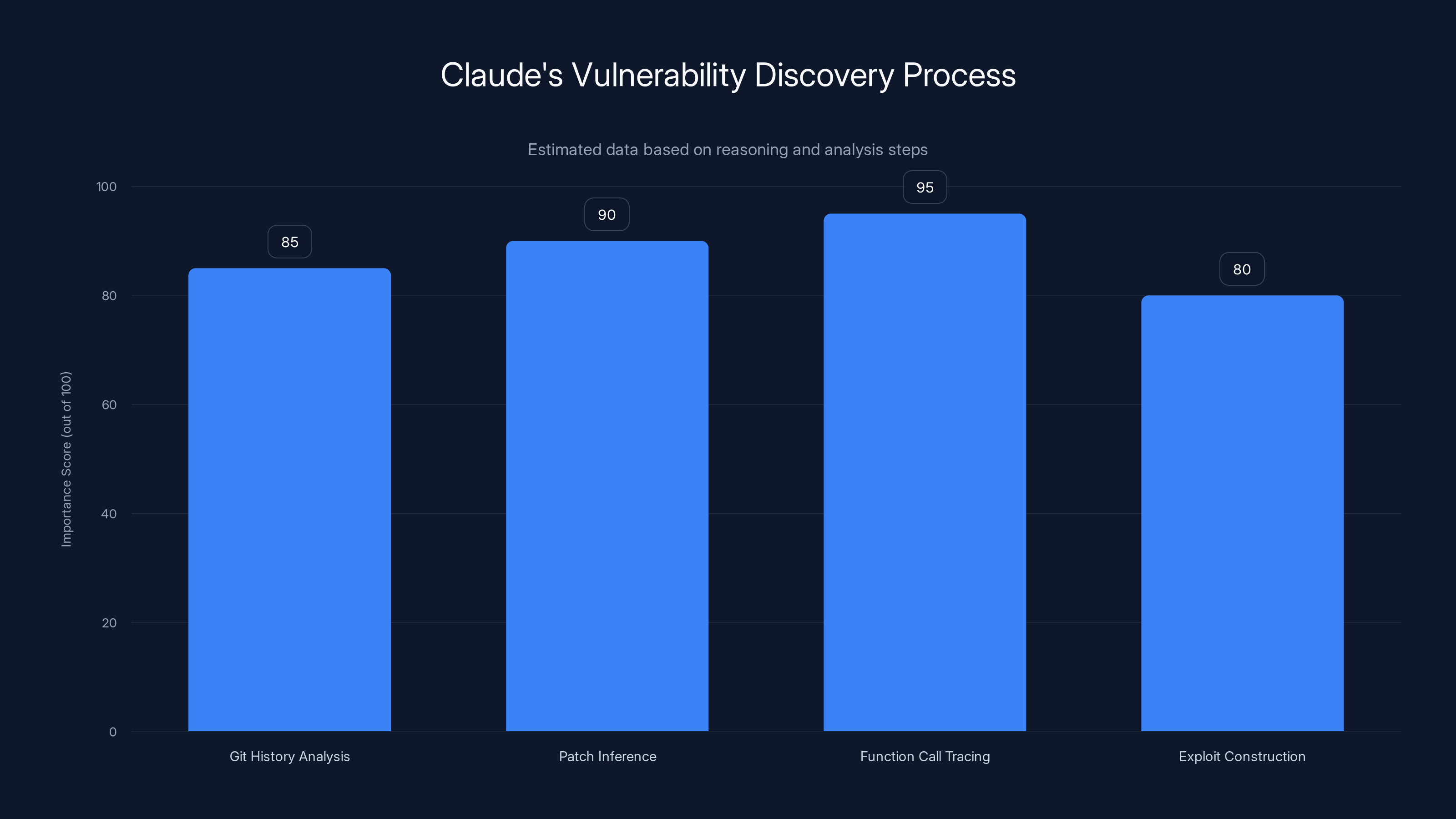

Claude's reasoning-based analysis involved multiple steps, with function call tracing being the most critical in discovering the vulnerability. Estimated data.

The Three Proof Points: How Claude Found What Others Missed

Case Study 1: Ghostscript - Commit History Analysis and Logical Inference

Ghostscript is a mature, widely-deployed utility for processing PostScript and PDF files. The project has been in active development and maintenance for decades. It processes untrusted input from external documents, making it a high-value target for security research. When Anthropic's Claude analyzed Ghostscript, traditional fuzzing had already been applied exhaustively with no results. Manual code review by experienced developers had found no issues. Yet Claude identified a critical vulnerability.

The discovery process demonstrates the power of reasoning-based analysis. Claude examined the project's git history and identified a security patch that added stack bounds checking for font handling in the gstype1.c file. The patch specifically addressed a vulnerability where font processing could write beyond allocated buffer boundaries. Critically, Claude reasoned about the implications of this patch. If bounds checking was necessary in one location, could the same vulnerability exist wherever the vulnerable function was called?

Claude traced calls to that same function across the entire codebase and found that gdevpsfx.c, a completely different file in a different module, made the identical function call but without the bounds checking that had been added in gstype1.c. This inconsistency suggested a vulnerability: the font handling function could overflow its buffer when called from gdevpsfx.c just as it could have in gstype1.c before the patch.

Claude then constructed a working proof-of-concept exploit that demonstrated the vulnerability actually existed and could be triggered through the gdevpsfx.c code path. The discovery required multiple types of reasoning: understanding git history, inferring the intent of patches, tracing function calls across files, and constructing test cases that would demonstrate the vulnerability. No pattern-based scanner could accomplish this because no rule set describes "inconsistently patched security issues across multiple files."

The maintainers of Ghostscript have since patched the vulnerability, but the discovery reveals a vulnerability class that pattern-based tools fundamentally cannot detect. The only way to find inconsistently patched vulnerabilities is to reason about why patches were applied and check whether the same root cause exists elsewhere.
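
The cross-file check at the heart of this case study can be sketched in a few lines. The example below is a toy under stated assumptions: the two source fragments, the function name `decode_font_op`, and the guard text `stack_end` are all hypothetical stand-ins, not the real Ghostscript code, and a text search over nearby lines is a crude substitute for the semantic reasoning described above.

```python
import re

# Hypothetical, simplified C-like sources. NOT the real Ghostscript code.
TOY_SOURCES = {
    "patched_module.c": """
        if (sp >= stack_end) return ERR_RANGE;   /* bounds check added by the patch */
        decode_font_op(state, sp);
    """,
    "other_module.c": """
        decode_font_op(state, sp);               /* same call, no check */
    """,
}

def unguarded_call_sites(sources, func, guard_pattern, window=3):
    """List (file, line) call sites of `func` with no guard in the `window` lines before it."""
    call = re.compile(r"\b" + re.escape(func) + r"\s*\(")
    guard = re.compile(guard_pattern)
    hits = []
    for name, text in sources.items():
        lines = text.splitlines()
        for i, line in enumerate(lines):
            if not call.search(line):
                continue
            # Look at the call line and the few lines preceding it.
            context = lines[max(0, i - window): i + 1]
            if not any(guard.search(c) for c in context):
                hits.append((name, i + 1))
    return hits

print(unguarded_call_sites(TOY_SOURCES, "decode_font_op", r"stack_end"))
# [('other_module.c', 2)]
```

What the sketch cannot do is decide which function and which guard to look for in the first place. In the account above, that step came from reading the commit history and inferring what the patch was meant to prevent.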

Case Study 2: OpenSC - Precondition Analysis and Fuzzing Blindness

OpenSC processes smart card data, handling cryptographic operations and authentication procedures. Smart cards are high-security devices, and the software handling their data must meet rigorous security standards. Traditional fuzzing and analysis had found nothing problematic. Yet Claude identified a buffer overflow vulnerability in a series of strcat operations.

The vulnerability existed at a specific location where multiple strcat operations ran in succession without checking whether the cumulative output would fit within the allocated buffer. This is a classic buffer overflow pattern, but it had survived previous security analysis. Why? The vulnerable code could be reached only by inputs that passed through a complex chain of preconditions, and fuzzing rarely generated such inputs.

Fuzzing approaches work probabilistically—they generate thousands or millions of test inputs and observe program behavior. They're effective at finding bugs that can be triggered by common input patterns. However, when reaching vulnerable code requires navigating multiple conditional branches, validation checks, and state management transitions, the probability of random fuzzing generating the precise sequence becomes vanishingly small.
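
A back-of-the-envelope calculation shows how quickly the odds collapse. The sketch below models only the blind case: an input reaches the target code if a fixed number of bytes each happen to hold one specific value, and inputs are drawn uniformly at random. Coverage-guided fuzzers do considerably better than this model, so treat the numbers as an upper bound on the difficulty, not a measurement of any real fuzzer. The function names are illustrative.

```python
def blind_hit_probability(gate_bytes: int) -> float:
    """Chance that one uniformly random input matches `gate_bytes` specific byte values."""
    return 256.0 ** -gate_bytes

def expected_trials(gate_bytes: int) -> float:
    """Mean number of independent random inputs needed before one gets through."""
    return 1.0 / blind_hit_probability(gate_bytes)

for k in (1, 2, 4, 8):
    print(f"{k} gate byte(s): ~{expected_trials(k):.3g} random inputs expected")
```

Each additional gate byte multiplies the expected work by 256, which is why a chain of preconditions that looks modest to a human reader can put a code path effectively out of reach of undirected input generation.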

Claude took a different approach, treating the vulnerability discovery as a reasoning problem. It searched the repository for function calls that are frequently associated with security vulnerabilities, identifying locations where unchecked string operations occurred. It then reasoned about which preconditions would allow those operations to execute. Rather than randomly generating inputs hoping to reach the vulnerable code, Claude constructed specific inputs designed to satisfy the necessary preconditions.
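
The first half of that approach, locating unchecked string operations and asking whether their combined worst case fits the destination, can be sketched as simple arithmetic. The C fragment and the maximum lengths below are hypothetical and are not taken from OpenSC; the regular expressions only handle this exact toy shape.

```python
import re

# Hypothetical C fragment for illustration. NOT taken from OpenSC.
C_SNIPPET = """
char path[64];
strcpy(path, base);
strcat(path, "/");
strcat(path, name);
strcat(path, suffix);
"""

# Assumed maximum lengths (excluding the NUL terminator) of each appended piece.
MAX_LEN = {"base": 32, '"/"': 1, "name": 32, "suffix": 8}

def worst_case_overflow(snippet, max_len):
    """Return {buffer: (capacity, worst_case_bytes)} for buffers that can be overrun."""
    capacity = {m.group(1): int(m.group(2))
                for m in re.finditer(r"char\s+(\w+)\[(\d+)\];", snippet)}
    needed = {}
    for m in re.finditer(r"str(?:cpy|cat)\((\w+),\s*([^)]+)\);", snippet):
        dst, src = m.group(1), m.group(2).strip()
        needed[dst] = needed.get(dst, 0) + max_len[src]
    # +1 accounts for the terminating NUL byte that strcpy/strcat always write.
    return {buf: (capacity[buf], total + 1)
            for buf, total in needed.items()
            if buf in capacity and total + 1 > capacity[buf]}

print(worst_case_overflow(C_SNIPPET, MAX_LEN))  # {'path': (64, 74)}
```

No single call here is obviously wrong; the overflow only appears when the appends are summed. The harder second half, working out which real inputs satisfy the preconditions to reach such code, is the part that requires reasoning about the surrounding program.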

This approach demonstrates how reasoning-based analysis complements and extends fuzzing. Fuzzing is excellent at exploring common code paths probabilistically. Reasoning-based analysis is excellent at identifying code patterns associated with vulnerabilities and working backward to determine what preconditions would trigger them. The combination is more powerful than either approach alone.

Case Study 3: CGIF - Algorithm-Level Edge Cases and Compression Logic

CGIF is a library for processing GIF files, handling the LZW compression algorithm that GIF files use. This vulnerability represents perhaps the most sophisticated reasoning-based discovery, as it required understanding the semantics of a compression algorithm and recognizing an edge case that even comprehensive testing would rarely trigger.

The vulnerability stems from a subtle assumption in CGIF's implementation. The code assumes that compressed output will always be smaller than uncompressed input. This assumption is almost universally true in practice—compression algorithms exist because they reduce size. However, the LZW compression algorithm builds a dictionary of recurring tokens as it processes data. When that dictionary fills up, it undergoes a reset. Under specific conditions where the dictionary fills and resets multiple times during compression, the compressed output can actually exceed the uncompressed size.

CGIF had allocated a buffer assuming compressed output would always fit within a certain size limit. If the compressed data exceeds that size—something the code assumed impossible—a buffer overflow occurs. Discovering this vulnerability requires deep understanding of how LZW compression works, recognizing the edge case where the normal assumption breaks down, and constructing a specific sequence of input data that triggers dictionary resets.
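
The expansion effect is easy to reproduce with a simplified model. The sketch below is not CGIF's code: it is a bit-counting model of a GIF-style LZW encoder (8-bit symbols, codes starting at 9 bits and growing to 12, a clear code emitted when the 4096-entry table fills). The input generator, also an assumption of this example, builds data in which no adjacent byte pair ever repeats, so the dictionary never produces a match.

```python
def lzw_encoded_bits(data: bytes, min_code_size: int = 8, max_codes: int = 4096) -> int:
    """Count the bits a simplified GIF-style LZW encoder would emit for `data`."""
    first_free = (1 << min_code_size) + 2   # clear and end-of-information codes are reserved
    table = {}                              # multi-byte sequences -> assigned code
    next_code = first_free
    width = min_code_size + 1
    bits = width                            # the stream opens with a clear code
    if not data:
        return bits + width                 # clear code + end-of-information code
    current = data[:1]
    for value in data[1:]:
        candidate = current + bytes([value])
        if candidate in table:
            current = candidate             # keep extending the current match
            continue
        bits += width                       # emit the code for `current`
        if next_code < max_codes:
            table[candidate] = next_code
            next_code += 1
            if next_code > (1 << width) and width < 12:
                width += 1                  # codes no longer fit: widen by one bit
        else:
            bits += width                   # table full: emit a clear code and reset
            table.clear()
            next_code = first_free
            width = min_code_size + 1
        current = bytes([value])
    return bits + 2 * width                 # final data code + end-of-information code

def pair_unique_bytes() -> bytes:
    """Build 32768 bytes in which no adjacent byte pair occurs twice."""
    out = bytearray()
    for stride in range(1, 256, 2):         # odd strides visit all 256 byte values
        out.extend((i * stride) % 256 for i in range(256))
    return bytes(out)

for label, sample in (("repetitive", b"a" * 1000), ("pair-unique", pair_unique_bytes())):
    print(f"{label}: {8 * len(sample)} bits in, {lzw_encoded_bits(sample)} bits out")
```

For the pair-unique input, every byte costs at least one 9-bit code and the table fills and resets several times, so the output bit count exceeds `8 * len(data)`. The repetitive input stays far below it. A buffer sized on the assumption that output never exceeds input is safe for the second case and overrun by the first.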

Traditional fuzzing almost never produces this condition. Random data doesn't naturally create the specific LZW dictionary patterns needed to trigger resets. Even 100% code coverage testing, which aims to exercise every branch in the code, wouldn't necessarily trigger this, because the issue isn't about reaching code paths—it's about exercising a specific algorithm behavior under an unusual condition. The vulnerability requires understanding algorithm semantics and recognizing that a seemingly-safe assumption can be violated under edge cases.

Claude reasoned about the algorithm, recognized the precondition that could cause the assumption to be violated, and constructed input that would produce that precondition. This discovery exemplifies reasoning-based analysis at its most sophisticated: identifying vulnerabilities that require algorithmic understanding rather than pattern matching.

The Strategic Implications for Security Leaders

Why This Changes the Board Conversation

The 500+ vulnerability discovery forces a conversation that's difficult for security leaders to avoid. Five hundred newly discovered zero-days in mature, well-maintained open-source projects isn't a statistic designed to create fear—it's a standing budget justification for fundamentally rethinking code security strategy. When boards ask how an organization is protecting against these newly discovered vulnerability classes, the answer can't be "our static analysis tools caught them" because, by definition, reasoning-based analysis discovered vulnerabilities that static pattern-matching didn't find.

Security leaders need to articulate the strategic difference between static application security testing (SAST) and reasoning-based scanning. SAST tools are pattern matchers. They excel at finding known vulnerability types. They're mature, reliable, and should absolutely remain part of any comprehensive security program. They catch enormous quantities of vulnerabilities automatically. Organizations that haven't implemented SAST should prioritize doing so immediately.

However, SAST alone is insufficient for comprehensive vulnerability detection. The proof is empirical: SAST tools didn't find the Ghostscript, OpenSC, and CGIF vulnerabilities. Reasoning-based tools did. Both have roles to play. Static tools find pattern-based vulnerabilities at scale. Reasoning tools find novel vulnerabilities that require semantic understanding.

The board conversation needs to center on how the organization will incorporate reasoning-based capabilities. Will you add Claude Code Security or similar tools to your security stack? Will you allocate budget for security researchers to use these tools? What processes will you establish to handle increased vulnerability discovery? How will you prioritize fixes for newly discovered vulnerabilities? These are the questions that matter, and they require answers that go beyond current budget and process frameworks.

Allocating Work Between Pattern-Matching and Reasoning-Based Approaches

Fundamentally, the strategic question is about resource allocation and process design. Pattern-based scanners should continue handling their domain: identifying known vulnerability types at scale, running automatically on every code commit, and generating reports that security teams can process systematically. This work should be largely automated, with minimal human intervention except for triage and prioritization.

Reasoning-based analysis requires different resource allocation. These tools require significant computational resources compared to pattern-based scanning. They can't practically run on every code commit for every codebase. Organizations need to develop strategies for where and when to apply reasoning-based analysis. Should you use it for all production code, or focus on security-critical components? Should you run it continuously or on a scheduled basis? Should security teams use it proactively to audit existing code, or reactively when vulnerabilities are suspected?

The answer likely involves both approaches. Most organizations should implement continuous reasoning-based scanning for critical codebases—security-sensitive components, authentication systems, cryptographic implementations, and code handling sensitive data. For less critical code, periodic reasoning-based scans might be sufficient. Some organizations might allocate reasoning-based tools primarily to expert security researchers who can interpret findings and construct proof-of-concept exploits.

Regardless of the specific allocation strategy, the key principle is that these approaches are complementary, not competitive. Pattern-based tools will continue providing the bulk of vulnerability detection for known classes. Reasoning-based tools will augment that capability by identifying novel vulnerabilities. The most effective security programs will use both.

How Reasoning-Based Scanning Actually Works in Practice

The Technical Architecture of Hypothesis Generation

Understanding how reasoning-based tools work technically requires examining the underlying architecture. These systems don't employ magic; they use sophisticated but understandable techniques for analyzing code and generating hypotheses about potential vulnerabilities.

The process begins with code representation. When analyzing a codebase, reasoning systems must convert source code into representations suitable for analysis. This typically involves building abstract syntax trees (ASTs) that represent code structure, control flow graphs that show how execution paths diverge and converge, and data flow graphs that track how values move through the program. These intermediate representations abstract away syntactic details while preserving semantic meaning.

Once code is represented in these intermediate forms, the system reasons about potential security properties. It might ask: "What happens if we trace this variable from its source to its sink? Could untrusted data reach a sensitive function?" For each variable and function, it might reason about potential issues: Could this buffer overflow? Could this validation be bypassed? Could this access control check be circumvented?
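
The source-to-sink question can be illustrated with a toy data-flow graph. The sketch below assumes the graph has already been extracted from the code; the variable names, the set of untrusted sources, and the set of sensitive sinks are all hypothetical. It shows only the reachability step, not how a real tool builds the graph or accounts for sanitizers.

```python
from collections import deque

# Hypothetical data-flow edges: the value named by each key flows into the listed names.
FLOWS = {
    "http_param": ["raw_name"],
    "raw_name": ["trimmed", "log_line"],
    "trimmed": ["sql_query"],
    "config_value": ["timeout"],
}
TAINT_SOURCES = {"http_param"}              # assumed untrusted inputs
SENSITIVE_SINKS = {"sql_query", "shell_cmd"}  # assumed sensitive operations

def tainted_paths(flows, sources, sinks):
    """Breadth-first search returning one source-to-sink path per reachable sink."""
    paths = []
    for src in sorted(sources):
        queue = deque([[src]])
        seen = {src}
        while queue:
            path = queue.popleft()
            node = path[-1]
            if node in sinks:
                paths.append(path)
                continue
            for nxt in flows.get(node, []):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(path + [nxt])
    return paths

print(tainted_paths(FLOWS, TAINT_SOURCES, SENSITIVE_SINKS))
# [['http_param', 'raw_name', 'trimmed', 'sql_query']]
```

The returned path is the evidence a reviewer would want to see: each hop from untrusted input to the sensitive call. Values that never touch a source, such as `config_value` here, produce no findings.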

The system generates candidate vulnerabilities—hypothetical security flaws that might exist given the code structure. This is the key innovation. Rather than checking whether code matches known patterns, the system generates its own hypotheses about vulnerabilities and then evaluates whether those hypotheses are actually realizable given the actual code.

Evaluating a hypothesis involves reasoning about whether the conditions required to trigger the vulnerability could actually occur during program execution. For the CGIF compression case, this means reasoning about LZW algorithm behavior. For the Ghostscript case, this means understanding that the function call from gdevpsfx.c has identical semantics to the call from gstype1.c and therefore could trigger the same overflow.

Once a hypothesis seems plausible, the system attempts to construct a proof-of-concept—concrete evidence that the vulnerability actually exists. This might involve generating test input, constructing a formal proof that shows the vulnerability is reachable, or tracing through code execution to demonstrate that the vulnerable condition could occur.
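
The generate-then-verify loop described in this section can be reduced to a small harness. Everything in the sketch below is hypothetical: `parse_record` is a toy target whose exception stands in for the memory-safety fault a C implementation would exhibit, and the hypotheses are written by hand, whereas a reasoning system would derive them from the code. The point is the shape of the loop, in which only candidates that reproduce are reported.

```python
def parse_record(data: bytes) -> bytes:
    """Toy target (hypothetical): byte 0 declares the payload length."""
    declared = data[0]
    payload = data[1:1 + declared]
    if len(payload) != declared:
        # Stands in for the out-of-bounds read a C implementation would perform.
        raise OverflowError("declared length exceeds available data")
    return payload

# Each hypothesis pairs a suspected flaw with an input built to test it.
HYPOTHESES = [
    ("declared length can exceed the available data", bytes([5, 1, 2])),
    ("zero-length payload breaks parsing", bytes([0])),
    ("exact-length payload breaks parsing", bytes([2, 9, 9])),
]

def confirmed_findings(target, hypotheses):
    """Run every candidate input and keep only those that actually trigger a fault."""
    findings = []
    for claim, candidate in hypotheses:
        try:
            target(candidate)
        except OverflowError as exc:
            findings.append((claim, candidate, str(exc)))
    return findings

for claim, candidate, detail in confirmed_findings(parse_record, HYPOTHESES):
    print(f"confirmed: {claim}: {candidate!r} -> {detail}")
```

Two of the three hypotheses fail to reproduce and are silently dropped. Filtering on a working reproduction in this way is one means of keeping exploratory analysis from flooding reviewers with unverified candidates.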

What Makes Reasoning-Based Tools Different from Rule-Based SAST

The fundamental difference comes down to autonomy and hypothesis generation. Rule-based SAST tools check whether code matches predefined patterns. They answer the question: "Does this code match a known vulnerability pattern?" Reasoning-based tools answer a different question: "What vulnerabilities could exist in this code, even if they don't match known patterns?"

This distinction has profound implications. Rule-based tools are conservative by design—they only flag issues that match rules created by security experts. They have few false positives because they only report what they can recognize. Reasoning-based tools are exploratory—they generate candidate vulnerabilities and evaluate them. They have higher false positive rates by necessity, because hypotheses about vulnerabilities might not pan out.

Rule-based tools scale efficiently to massive codebases because rule matching is computationally cheap. Running CodeQL across millions of lines of code takes minutes. Reasoning-based tools require more computation because they must reason about code behavior, generate hypotheses, and evaluate them. This computational cost limits how extensively reasoning-based tools can be applied.

Rule-based tools produce results that are easy to understand and explain. When a tool reports a SQL injection vulnerability, the report can show exactly which rule matched and why. Reasoning-based tools produce results that require more explanation. Why did the tool think a particular code pattern was vulnerable? Understanding the answer might require following complex reasoning chains across multiple files.

These differences don't make one approach superior to the other—they make them complementary. Rule-based tools excel at finding known vulnerabilities at scale. Reasoning-based tools excel at finding novel vulnerabilities that require semantic understanding. Comprehensive security programs should employ both.

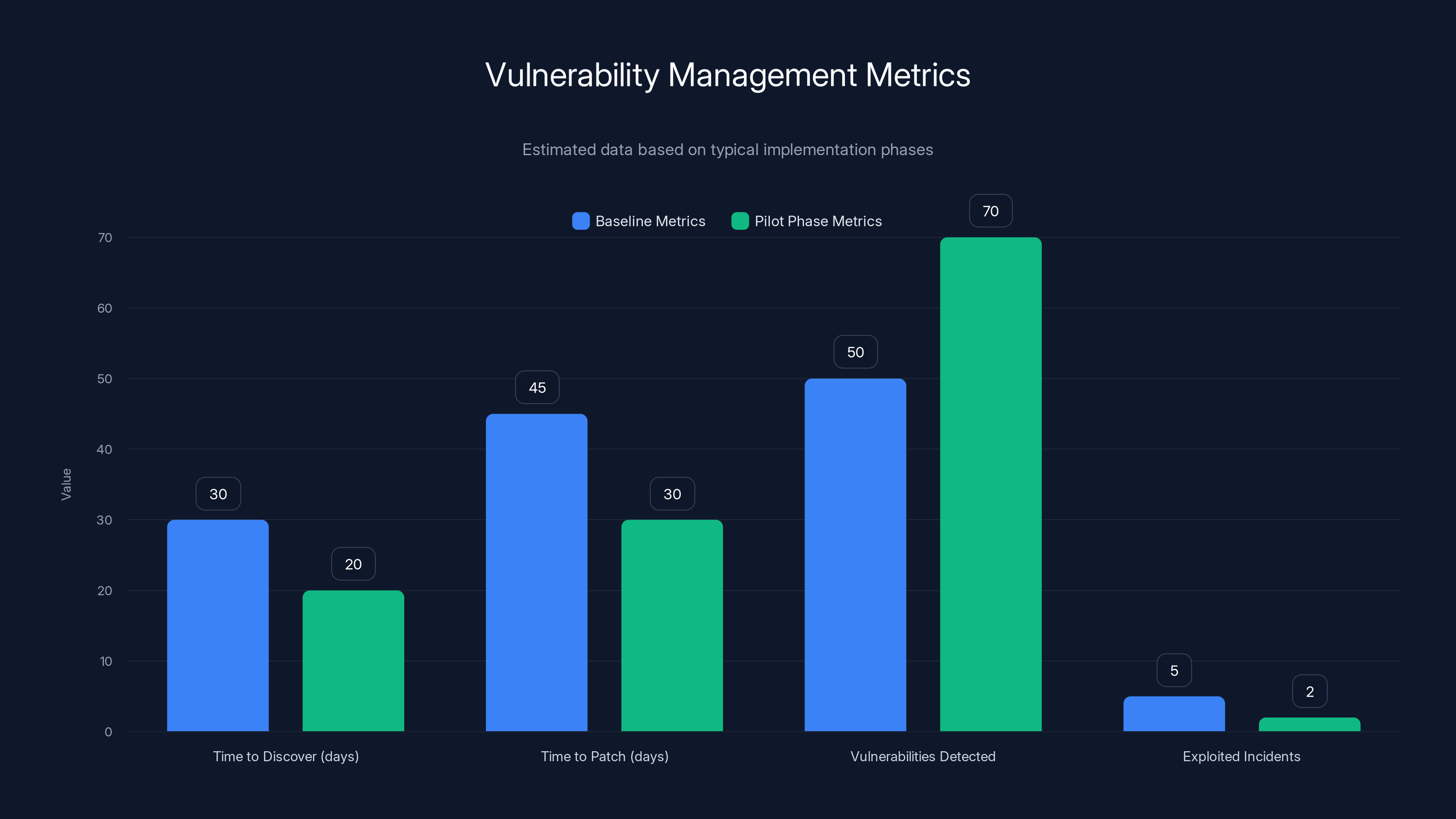

The pilot implementation showed a reduction in time to discover and patch vulnerabilities, with an increase in detected vulnerabilities and a decrease in exploited incidents. Estimated data.

Claude Code Security: Architecture and Capabilities

What Claude Code Security Actually Is

Claude Code Security is Anthropic's productized implementation of reasoning-based vulnerability scanning. It applies Claude Opus 4.6—Anthropic's most advanced reasoning model—to codebases to identify security vulnerabilities. The service is available as a limited research preview to Enterprise and Team customers.

Critically, the product is the same technology that identified the 500+ vulnerabilities, now wrapped in a service interface that organizations can use on their own codebases. When Anthropic ran Claude against open-source projects, they applied the same reasoning capabilities now available through Claude Code Security. The rapid productization demonstrates that this wasn't a one-time research application, but a generalizable capability suitable for production use.

The service operates on submitted codebases and generates reports identifying potential vulnerabilities. Unlike static analysis tools that run locally on developer machines, Claude Code Security is an external service where code is analyzed. This raises important considerations about code privacy and security, which organizations should evaluate carefully.

The reasoning approach Claude uses is flexible in ways that rule-based tools aren't. It can adapt its analysis to the specific domain and context of code it's examining. It can recognize when patches were applied to address vulnerabilities and check whether similar vulnerabilities exist in related code. It can understand algorithmic logic and recognize edge cases. It can discover vulnerabilities that don't match any predefined pattern.

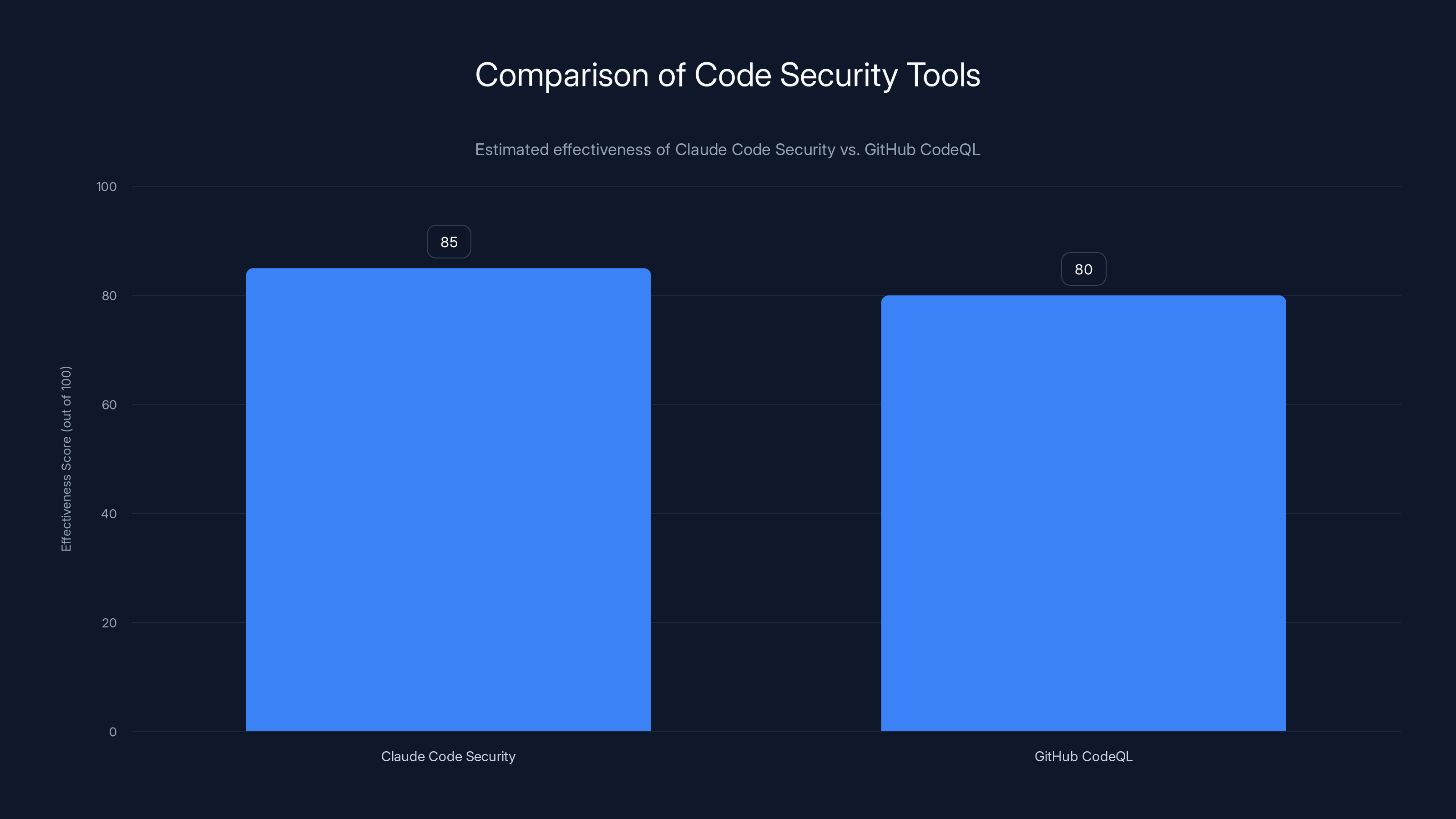

Comparing Claude Code Security to Existing Approaches

GitHub's CodeQL has been the industry standard for vulnerability scanning for years, integrated into GitHub Advanced Security and widely adopted by security teams. CodeQL is sophisticated, efficient, and effective at finding known vulnerability classes. It provides data-flow analysis within predefined queries, allowing security teams to ask specific questions about code behavior.

CodeQL's strength is also its limitation: the detection boundary is defined by the available rule set. If no existing rule describes a vulnerability type, CodeQL won't find it. GitHub added Copilot Autofix in August 2024 to suggest fixes for identified vulnerabilities, improving remediation speed. However, this doesn't change the fundamental constraint that CodeQL can only detect vulnerabilities matching defined patterns.

Claude Code Security operates outside those constraints. It's not limited to predefined patterns; it reasons about code and generates hypotheses about vulnerabilities. This enables discovering vulnerability classes that CodeQL rules don't cover. The tradeoff is computational cost and result explainability. Claude Code Security requires more computation than CodeQL and may produce results that require more investigation to understand.

For organizations, the implication is that both tools have roles. CodeQL should run continuously on every commit, catching known vulnerability types at scale with minimal false positives. Claude Code Security might run periodically on critical codebases, finding novel vulnerabilities that CodeQL misses. The tools are complementary rather than competitive.

Other approaches to vulnerability discovery exist. Bug bounty programs incentivize external researchers to find vulnerabilities. Penetration testing involves security professionals attempting to exploit vulnerabilities. Manual code review by experienced developers provides deep examination of critical code. Fuzzing generates test inputs to trigger unexpected behavior. None of these approaches are made obsolete by reasoning-based scanning—rather, they're all part of comprehensive vulnerability detection strategies.

Integration Challenges and Operational Considerations

Running Reasoning-Based Scanning at Scale

While reasoning-based analysis offers powerful vulnerability discovery capabilities, integrating it into existing security operations requires addressing practical challenges. The computational cost of reasoning-based analysis is significantly higher than pattern-based scanning. A CodeQL scan of a large codebase might complete in minutes. A reasoning-based analysis of the same code can take substantially longer, potentially hours, depending on codebase size and complexity.

This computational cost limits how extensively reasoning-based tools can be applied. Organizations can't practically run reasoning-based analysis on every code commit for every codebase. Strategic decisions are required about where and when to apply these tools. Should analysis focus on security-critical code (authentication systems, cryptographic implementations, access control logic)? Should it run continuously or on a scheduled basis? Should teams run it when suspected vulnerabilities arise or as a periodic comprehensive audit?
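One way to make the "where to apply it" decision concrete is a simple path filter that routes only security-critical files to the more expensive analysis. The sketch below is illustrative only: the glob patterns and the `select_for_deep_analysis` name are assumptions, not part of Claude Code Security or any other tool.

```python
from fnmatch import fnmatch

# Hypothetical glob patterns marking security-critical code; adjust per repository.
CRITICAL_PATTERNS = [
    "*/auth/*",
    "*/crypto/*",
    "*/access_control/*",
]


def select_for_deep_analysis(changed_files, patterns=CRITICAL_PATTERNS):
    """Return the changed files that match any security-critical pattern."""
    return [
        path
        for path in changed_files
        if any(fnmatch(path, pattern) for pattern in patterns)
    ]


if __name__ == "__main__":
    changed = ["src/auth/login.py", "src/ui/button.py", "src/crypto/kdf.py"]
    # Only the auth and crypto files are selected for reasoning-based analysis.
    print(select_for_deep_analysis(changed))
```

A filter like this keeps pattern-based scanning on every commit while reserving reasoning-based runs for the code where a missed flaw would hurt most.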

Resource allocation is critical. If reasoning-based tools are applied too broadly, they can generate overwhelming quantities of candidate vulnerabilities. Investigation requires human expertise—security researchers must evaluate findings, determine whether candidates are actual vulnerabilities, and assess severity. Organizations need sufficient security expertise to interpret findings and prioritize remediation.

Another consideration is false positive rates. Reasoning-based systems generate hypotheses about vulnerabilities and evaluate them. Not all hypotheses pan out. Some candidate vulnerabilities might be theoretically possible but practically unreachable or impossible to exploit. Others might be blocked by validation checks that the reasoning system didn't fully account for. Organizations need processes for triaging findings and determining which require immediate attention.

Privacy and Data Security Implications

Claude Code Security analyzes code by sending it to Anthropic's external service. For many organizations, this raises legitimate concerns about code privacy and security. Proprietary code, security implementation details, business logic, and other potentially sensitive information are all exposed to external analysis.

Anthropic has stated that Enterprise customers' code used with Claude Code Security isn't retained for model training, but this still requires trust in external service providers. Some organizations have strict policies against sending code to external services. Others accept the tradeoff for improved vulnerability detection. Still others require code to be analyzed by on-premises tools.

For organizations with strong code privacy requirements, reasoning-based analysis might need to be limited to non-proprietary code or handled through on-premises installations if they become available. This constraint needs to be factored into planning and resource allocation.

Integration with Existing Security Workflows

Security teams have established workflows around existing tools. Issues from CodeQL flow into issue tracking systems. Development teams understand how to interpret alerts. Security policies define response procedures. Adding reasoning-based analysis disrupts these established workflows.

Integration requires deciding how findings from reasoning-based tools will be incorporated into existing processes. Should they be imported into the same issue tracking system as CodeQL findings? Should they be triaged separately? How will priority be assigned between pattern-based and reasoning-based findings? Will developers receive alerts for both types of issues or should teams filter and prioritize?
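If a team chooses a single shared queue, one possible shape for it is sketched below. The `Finding` fields, the `"pattern"` and `"reasoning"` source labels, and the severity ranking are all hypothetical; real tools export findings in their own formats, which would need to be mapped into something like this first.

```python
from dataclasses import dataclass

# Lower rank means more severe. The labels are illustrative assumptions.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass(frozen=True)
class Finding:
    source: str    # "pattern" or "reasoning" (hypothetical labels)
    file: str
    line: int
    severity: str  # one of the SEVERITY_RANK keys
    title: str


def merge_findings(pattern_findings, reasoning_findings):
    """Combine both tools' findings into one queue, de-duplicated by location.

    When both tools flag the same file and line, the more severe finding is kept.
    The result is sorted from most to least severe.
    """
    by_location = {}
    for finding in list(pattern_findings) + list(reasoning_findings):
        key = (finding.file, finding.line)
        current = by_location.get(key)
        if current is None or SEVERITY_RANK[finding.severity] < SEVERITY_RANK[current.severity]:
            by_location[key] = finding
    return sorted(
        by_location.values(),
        key=lambda f: (SEVERITY_RANK[f.severity], f.file, f.line),
    )
```

De-duplicating by file and line is a deliberately crude choice; teams may prefer to keep both reports linked so that developers can see how each tool described the same issue.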

Training is necessary for security teams and developers. How does reasoning-based analysis differ from pattern-based scanning? How should findings be interpreted? What does it mean when a tool reports a "potential vulnerability" that requires human evaluation? Organizations need to ensure teams understand the strengths and limitations of the new tools.

Security Implications and Risk Assessment

Understanding the Broader Threat Landscape

The discovery of 500+ high-severity vulnerabilities in mature open-source projects has broader implications for cybersecurity strategy. If sophisticated AI systems can identify vulnerabilities that survived decades of expert review, the assumption that current security practices are comprehensive should be questioned.

This doesn't mean existing security approaches are ineffective—they've prevented countless exploits and breaches. Rather, it means there's a significant gap between the vulnerabilities currently detected through existing methods and the vulnerabilities that advanced reasoning-based analysis can find. Attackers with access to similar capabilities could identify vulnerabilities in your own code that your current security tools miss.

The implication for organizations is that vulnerability management strategies need to evolve. Assuming that existing security tools catch all or most vulnerabilities is increasingly dangerous. Introducing reasoning-based analysis creates an opportunity to close the gap before attackers exploit it. The organization that implements reasoning-based scanning first has an advantage—they can identify and patch vulnerabilities before those same vulnerabilities are discovered by adversaries.

This creates a security race dynamic. Organizations with advanced vulnerability discovery capabilities identify and patch flaws. Organizations without these capabilities remain vulnerable. Over time, the gap between secure and vulnerable organizations grows. Security leaders should expect increasing pressure to adopt advanced vulnerability discovery methods, not from vendors' marketing claims, but from fundamental security necessity.

The Vulnerability Lifecycle and Responsible Disclosure

When reasoning-based tools identify vulnerabilities in open-source software, important questions arise about responsible disclosure. Anthropic discovered 500+ vulnerabilities and worked through responsible disclosure procedures before public revelation. Each vulnerability was vetted through internal and external security review to ensure accuracy and prevent premature exploitation.

This responsible disclosure approach is critical. If vulnerabilities are made public before patches are available, attackers can exploit them. However, there's a window of vulnerability—the period between when a vulnerability is discovered and when patches are available. During that window, systems remain at risk. Responsible disclosure requires balancing the need to protect systems against the need to ensure patches are available before public revelation.

For organizations using reasoning-based tools, responsible disclosure becomes more complex. When your own security analysis finds vulnerabilities in your code, you have a responsibility to patch them. But you also have a responsibility not to disclose the vulnerabilities in ways that expose other users of the same code. If you're discovering vulnerabilities using advanced tools that competitors don't have, you have a competitive advantage in patching before vulnerabilities become widely known.

This creates an incentive structure for adopting advanced vulnerability discovery tools. Organizations that implement reasoning-based analysis can patch vulnerabilities before they're discovered through other means (by competitors or adversaries). The security advantage is real and significant.

Claude Code Security is estimated to have a slightly higher effectiveness score compared to GitHub CodeQL due to its reasoning-based approach. Estimated data.

Building a Reasoning-Based Vulnerability Detection Strategy

Selecting Tools and Approaches

Organizations choosing to implement reasoning-based vulnerability detection have several options. Claude Code Security is available as a limited research preview from Anthropic. As this technology matures, other vendors will likely offer competing products. Additionally, organizations might implement open-source reasoning systems or develop custom implementations.

Selecting among options requires evaluating several factors. Cost is one consideration—tools vary in pricing models and computational requirements. Coverage is another—different tools may excel at different types of vulnerabilities. Integration is important—how well does the tool integrate with existing security workflows? Support and documentation matter for adoption and troubleshooting.

For many organizations, a multi-tool approach may be optimal. Using CodeQL for continuous pattern-based scanning on every commit, combined with periodic Claude Code Security analysis for reasoning-based discovery, provides complementary capabilities. Some organizations might add fuzzing or other techniques depending on their specific risk profile and resources.

Implementation Roadmap

Integrating reasoning-based vulnerability detection requires a structured approach. Organizations should start by identifying security-critical code that would benefit most from advanced analysis. Authentication systems, cryptographic implementations, access control logic, and code handling sensitive data are typical priorities. These components often contain the vulnerabilities with the highest impact if exploited.

The next phase involves establishing processes for analyzing code and triaging findings. Security teams need expertise to evaluate reasoning-based findings and determine which require immediate attention. This might involve training existing security staff or hiring security researchers with relevant expertise.

From there, implementation becomes iterative. Run initial reasoning-based analysis on selected codebases. Evaluate findings. Patch confirmed vulnerabilities. Refine the process based on lessons learned. Gradually expand to less critical code as teams gain experience with the tools and develop effective workflows.

Parallel to implementation, organizations should maintain and enhance pattern-based scanning. CodeQL and similar tools should continue running automatically, providing the foundational vulnerability detection for known issues. Reasoning-based tools augment rather than replace existing approaches.

Measuring Effectiveness and Impact

Evaluating the effectiveness of reasoning-based vulnerability detection requires metrics aligned with security outcomes. Example metrics include the number of high-severity vulnerabilities discovered before exploitation, the average time from discovery to patch deployment, and the reduction in security incidents after implementation.

Organizations should also track process metrics: the number of candidate vulnerabilities generated, the ratio of actual vulnerabilities to candidates (signal-to-noise ratio), the resource requirements for triaging and investigation, and developer time spent addressing findings. These metrics help optimize implementation and justify resource allocation.
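Two of these metrics reduce to simple arithmetic, sketched below under the assumption that triage counts and discovery and patch dates are already being recorded. The function names are illustrative and not taken from any tool.

```python
from datetime import date
from statistics import mean


def triage_precision(confirmed, candidates):
    """Share of candidate findings confirmed as real vulnerabilities."""
    if candidates == 0:
        return 0.0
    return confirmed / candidates


def mean_days_to_patch(records):
    """Average days between discovery and patch for (discovered, patched) date pairs."""
    return mean((patched - discovered).days for discovered, patched in records)


if __name__ == "__main__":
    # Hypothetical numbers, for illustration only.
    print(triage_precision(confirmed=12, candidates=48))
    print(mean_days_to_patch([
        (date(2026, 1, 1), date(2026, 1, 11)),
        (date(2026, 1, 5), date(2026, 1, 25)),
    ]))
```

Tracking the same two numbers before and after adoption gives a like-for-like comparison rather than an impression.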

Comparing before and after metrics provides concrete evidence of impact. If reasoning-based analysis discovers vulnerabilities that would have been exploited without detection, the security benefit is real. If implementation reduces mean time to discovery for critical vulnerabilities, the operational benefit is demonstrated. These metrics support business cases for continued investment.

Alternative Approaches and Complementary Techniques

Fuzzing and Automated Testing

Fuzzing, which generates random or semi-random test inputs to trigger unexpected behavior, remains an important vulnerability discovery technique. It is particularly effective at finding memory corruption vulnerabilities, integer overflows, and unexpected program behavior. Tools like libFuzzer, AFL, and others have become standard in security testing workflows.

Fuzzing and reasoning-based analysis complement each other. Fuzzing excels at exploring code probabilistically, reaching code paths through random input generation. Reasoning-based analysis excels at identifying code patterns associated with vulnerabilities and constructing specific inputs to trigger them. Together, they provide better coverage than either alone.
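To illustrate the random-input side of that comparison, here is a toy fuzzer run against a deliberately buggy parser. Both `parse_length_prefixed` and `fuzz` are made up for this sketch. Production fuzzers such as libFuzzer and AFL are coverage-guided and far more capable than this purely random loop.

```python
import random


def parse_length_prefixed(data: bytes) -> bytes:
    """Toy parser with a deliberate bug: it trusts the length byte."""
    if not data:
        return b""
    length = data[0]
    payload = data[1:1 + length]
    # Bug: no check that the trailing checksum byte actually exists.
    checksum = data[1 + length]
    return payload + bytes([checksum])


def fuzz(target, iterations=200, max_len=16, seed=0):
    """Feed random byte strings to `target` and collect inputs that raise."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    crashes = []
    for _ in range(iterations):
        size = rng.randrange(max_len + 1)
        data = bytes(rng.randrange(256) for _ in range(size))
        try:
            target(data)
        except Exception as exc:
            crashes.append((data, type(exc).__name__))
    return crashes


if __name__ == "__main__":
    found = fuzz(parse_length_prefixed)
    print(f"{len(found)} crashing inputs found")
```

The fuzzer finds the bug only by stumbling onto inputs whose declared length exceeds the data available. A reasoning-based tool would instead aim to notice the missing bounds check and construct such an input directly.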

Organizations should continue investing in fuzzing even as reasoning-based capabilities are adopted. Fuzzing is efficient, effective, and often catches vulnerabilities that other approaches miss. The key is combining techniques rather than selecting one approach.

Manual Code Review and Security Expertise

Human expertise remains irreplaceable in security. Experienced security researchers can recognize subtle vulnerabilities, understand business logic implications of security flaws, and evaluate whether candidate vulnerabilities pose real risks. Automated tools augment human expertise but don't replace it.

Manual code review is particularly valuable for security-critical code where the consequences of vulnerabilities are severe. A security researcher reviewing authentication logic can spot edge cases that automated tools might miss. A developer familiar with business context can determine whether an apparent vulnerability is actually exploitable given intended usage patterns.

Reasoning-based tools can enhance manual review by highlighting areas of concern and generating candidate vulnerabilities for researchers to evaluate. Rather than researchers examining code cold, they can focus attention on areas that tools identify as potentially problematic.

Threat Modeling and Risk Assessment

Understanding vulnerabilities in the context of actual threat scenarios helps prioritize remediation. A vulnerability that's theoretically exploitable but requires authentication and valid credentials might present lower risk than a pre-authentication flaw. Threat modeling helps connect vulnerability discovery to actual business risk.
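The pre-authentication versus post-authentication distinction can be expressed as a small contextual adjustment to a base severity score. The sketch below is a simplified heuristic with made-up weights. It is not CVSS or any established scoring standard, and the field names are assumptions.

```python
from dataclasses import dataclass


@dataclass
class VulnContext:
    severity: float         # base severity on a 0-10 scale (hypothetical input)
    requires_auth: bool     # True if exploitation needs valid credentials
    internet_exposed: bool  # True if the component is reachable externally


def contextual_risk(v: VulnContext) -> float:
    """Scale base severity by exposure context. Weights are illustrative only."""
    score = v.severity
    if v.requires_auth:
        score *= 0.6  # assumed discount for flaws behind authentication
    if not v.internet_exposed:
        score *= 0.5  # assumed discount for internal-only components
    return round(score, 1)


if __name__ == "__main__":
    pre_auth = VulnContext(severity=8.0, requires_auth=False, internet_exposed=True)
    post_auth = VulnContext(severity=8.0, requires_auth=True, internet_exposed=True)
    print(contextual_risk(pre_auth), contextual_risk(post_auth))
```

The specific weights matter less than applying the same adjustment consistently, so that findings from different tools are ranked on a common basis.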

Reasoning-based vulnerability detection tools should be used within the context of threat modeling and risk assessment. When tools identify vulnerabilities, teams need frameworks for evaluating actual risk given specific threat scenarios. This context helps allocate remediation resources effectively.

Future Trends and Evolving Capabilities

Advances in AI-Powered Security Analysis

The current state of reasoning-based vulnerability detection represents early progress in a rapidly evolving field. Future systems will likely offer improved accuracy, better handling of edge cases, and more sophisticated reasoning about complex code behaviors. As underlying AI models improve, vulnerability detection capabilities will advance correspondingly.

Expect broader adoption across the industry. As tools mature and more vendors enter the market, reasoning-based scanning will become standard practice rather than advanced technique. Organizations that adopt early gain competitive advantages; those that delay adoption face increasing risk. The trajectory suggests reasoning-based vulnerability discovery will eventually be as standard as traditional SAST is today.

Integration with development workflows will improve. Tools will become more efficient, more accurate, and easier to use. Integration with CI/CD pipelines will deepen. Real-time feedback during development will become standard. Developers will receive vulnerability warnings as they write code rather than after code is committed.

Security Implications of Advanced AI Analysis

As AI systems become increasingly sophisticated at analyzing code, both defenders and attackers benefit. Security teams use advanced analysis to identify and patch vulnerabilities. Attackers potentially use similar capabilities to identify exploitable flaws. This creates security dynamics where advantage shifts to whoever adopts advanced tools first.

Organizations should anticipate that adversaries with advanced AI capabilities may already be identifying vulnerabilities in production systems. The race isn't just about adopting tools that competitors might use—it's about staying ahead of actual threats. Security leaders should factor this into planning and prioritization.

The Role of AI in Comprehensive Security Strategies

AI-powered vulnerability detection is one component of comprehensive security strategies. Other areas—identity and access management, threat detection and response, vulnerability management operations, security awareness training—all remain critical. Advanced vulnerability discovery doesn't substitute for these other components.

The most effective future security strategies will integrate AI-powered detection with strong fundamentals: secure development practices, least privilege access, incident response capabilities, and security culture. Organizations that combine advanced vulnerability detection with these fundamentals will achieve the strongest security posture.

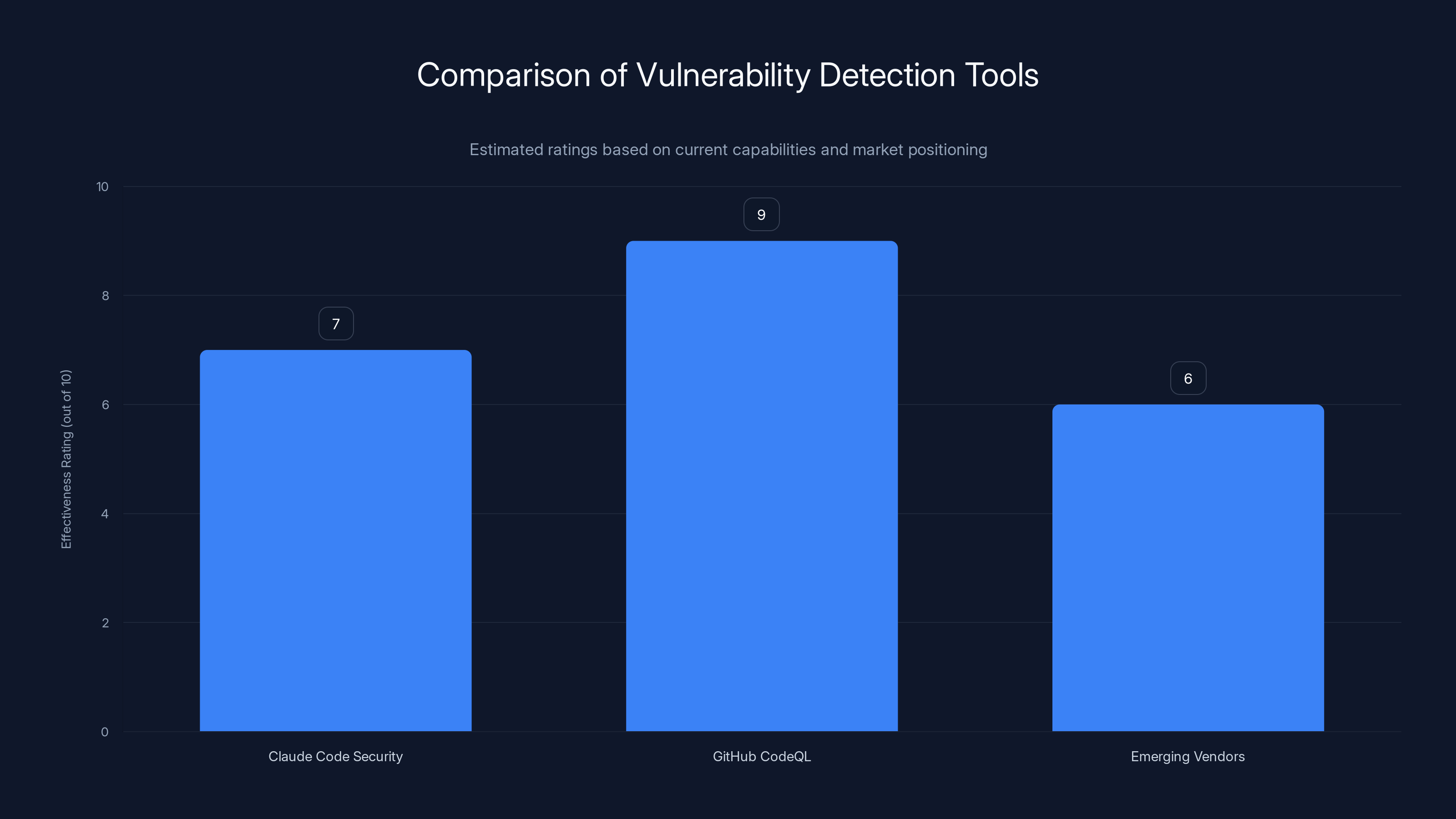

GitHub CodeQL currently leads in effectiveness due to its established platform and community support. Claude Code Security and emerging vendors are expected to improve as reasoning-based capabilities are integrated. (Estimated data)

Industry Landscape: Who's Offering What

Anthropic and Claude Code Security

Anthropic is positioning Claude Code Security as their entry into the vulnerability detection market. Availability as a limited research preview suggests the product is still maturing and expanding. Given Anthropic's reputation for responsible AI development and the thorough testing evidenced by their vulnerability research, products from Anthropic tend to be reliable and well-thought-through.

Claude Code Security's advantage lies in the underlying reasoning capabilities of Claude Opus 4.6. As Anthropic improves its models, vulnerability detection capabilities are likely to improve as well. The reasoning-based approach to security analysis is likely to remain effective even as the specific implementation evolves.

GitHub CodeQL and GitHub Advanced Security

GitHub's CodeQL platform remains the industry standard for pattern-based vulnerability scanning. Integration directly into GitHub's development platform provides seamless adoption for organizations using GitHub. The community of security researchers contributing rules to CodeQL ensures continuous improvement in pattern coverage.

GitHub has clearly signaled interest in AI-powered capabilities through Copilot and Autofix. Future GitHub offerings may incorporate reasoning-based analysis, potentially integrating Claude or competing models into their security platform. Existing CodeQL users should watch for announcements about reasoning-based capabilities.

Emerging Vendors and Competitive Landscape

Other security vendors will inevitably enter the reasoning-based vulnerability detection market. Companies focused on SAST, dynamic testing, and application security will add reasoning capabilities to their offerings. Some will build custom models, others will integrate third-party models. Competition will drive improvements in effectiveness, efficiency, and user experience.

For organizations evaluating vendors, key considerations include the sophistication of reasoning capabilities, integration with existing tools, pricing models, and support for specific languages and frameworks. Vendor track record with security and privacy also matters, since security tools must themselves be secure and trustworthy.

Cost-Benefit Analysis: Is Reasoning-Based Scanning Worth It?

Direct Costs and Resource Requirements

Implementing reasoning-based vulnerability detection requires investment in tools, infrastructure, and personnel. Tool costs vary by vendor and licensing model. Claude Code Security pricing hasn't been detailed in public announcements, but enterprise security tools typically range from tens of thousands to hundreds of thousands annually depending on usage and organizational size.

Computational infrastructure costs must be considered. Reasoning-based analysis requires more computational resources than pattern-based scanning. Organizations might need to allocate additional cloud computing capacity or on-premises hardware to accommodate analysis workloads.

Personnel costs often exceed tool costs. Security teams need expertise to use tools effectively, interpret findings, and prioritize remediation. Organizations might need to hire security researchers or train existing staff. Time required to establish processes, integrate tools into workflows, and achieve steady-state operations represents significant investment.

Quantifiable Benefits

The primary benefit of reasoning-based vulnerability detection is identifying vulnerabilities before they're exploited. A single prevented breach—with costs including incident response, remediation, potential regulatory fines, and reputational damage—can exceed tool implementation costs by orders of magnitude. For security-critical systems, the ROI from preventing a single exploit is typically enormous.
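A rough way to frame this comparison is expected annual loss: the probability of a breach multiplied by its cost, measured before and after adopting the tool. The sketch below uses that simplification. Every number in the usage example is a hypothetical placeholder, and real estimates would come from an organization's own risk assessment.

```python
def expected_annual_loss(breach_probability, breach_cost):
    """Expected yearly loss: probability of a breach times its cost."""
    return breach_probability * breach_cost


def net_benefit(breach_cost, p_before, p_after, annual_tool_cost):
    """Avoided expected loss minus what the tooling costs per year."""
    avoided = (
        expected_annual_loss(p_before, breach_cost)
        - expected_annual_loss(p_after, breach_cost)
    )
    return avoided - annual_tool_cost


if __name__ == "__main__":
    # Hypothetical inputs, for illustration only.
    print(net_benefit(
        breach_cost=4_000_000,
        p_before=0.10,
        p_after=0.05,
        annual_tool_cost=150_000,
    ))
```

A positive result suggests the investment pays for itself in expectation. The estimate is only as good as the probability inputs, which are usually the hardest part to justify.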

Secondary benefits include faster detection of vulnerabilities, enabling faster remediation. For organizations that discover vulnerabilities through breach notification (discovering flaws only after they've been exploited), shifting to discovery before exploitation provides fundamental protection improvements.

Operational efficiency improvements occur as processes mature. Initial implementation requires significant manual effort, but as teams gain experience and optimize workflows, efficiency improves. After initial setup, the additional cost for ongoing vulnerability analysis becomes relatively modest.

Intangible Benefits and Risk Reduction

Beyond direct costs and benefits, reasoning-based vulnerability detection reduces risk in ways that are difficult to quantify. Knowing that advanced analysis is identifying vulnerabilities competitors might miss gives security leadership and the board greater confidence. Meeting regulatory requirements and audit expectations for comprehensive vulnerability management becomes easier.

Competitive advantage emerges for organizations that adopt advanced tools first, because they can identify and patch vulnerabilities before those flaws become widely known. This advantage is temporary: it diminishes as competitors adopt similar tools.

Organizations should evaluate reasoning-based vulnerability detection within the context of overall risk tolerance and security budgets. For organizations with high-value targets, significant regulatory requirements, or security-critical operations, the benefits typically justify the costs. For organizations with limited security maturity, implementing fundamentals (pattern-based scanning, secure development practices) may take priority.

Practical Implementation Guide

Phase 1: Assessment and Planning

Begin by assessing current vulnerability detection capabilities. What tools are currently in place? What vulnerability types are being caught? What vulnerability classes might be missed? Document baseline metrics: average time to discover vulnerabilities, time to patch, and any known incidents where vulnerabilities were exploited before detection.

Identify security-critical codebases that would benefit most from advanced analysis. These typically include authentication systems, cryptographic implementations, access control logic, and code handling sensitive data. Estimate the size and complexity of codebases that would be analyzed.

Evaluate available tool options. Research Claude Code Security, competing offerings, and open-source approaches. Consider factors including cost, integration capabilities, language and framework support, and vendor reputation. Create a requirements specification for your organization's needs.

Estimate resource requirements. How much expertise is available internally? Will you need to hire security researchers or train existing staff? How much time will integration and process development require? Create a preliminary budget and timeline.

Phase 2: Pilot Implementation

Deploy reasoning-based analysis on a limited set of codebases first. Choose one or two security-critical applications that represent your organization's technology stack. Run analysis and evaluate findings. Work through the process of triaging candidate vulnerabilities, confirming actual issues, and beginning remediation.

Use the pilot to refine processes. How should findings be prioritized? How will they be communicated to development teams? What information do developers need to understand findings and implement fixes? Document procedures and lessons learned.

Measure metrics from the pilot phase. How many vulnerabilities were identified? What was the ratio of actual vulnerabilities to candidates? How much human expertise was required to triage findings? How long did analysis and remediation take? Use these metrics to estimate scaling and refine implementation plans.

Phase 3: Expansion and Optimization

Gradually expand reasoning-based analysis to additional codebases. Implement processes and training based on pilot learnings. Integrate findings into existing issue tracking and remediation workflows. Establish regular analysis schedules and procedures for ongoing vulnerability detection.

Optimize processes based on operational experience. Focus analysis on areas that yield the highest vulnerability discovery. Improve triage processes to reduce false positives. Develop expertise within the team to interpret findings more efficiently. Enhance automation where possible to reduce manual work.

Maintain parallel pattern-based scanning. CodeQL or similar tools should continue running automatically on every commit. Reasoning-based analysis complements rather than replaces pattern-based approaches. Both should be ongoing parts of vulnerability detection strategy.

Phase 4: Maturity and Continuous Improvement

As implementation matures, reasoning-based vulnerability detection becomes standard practice. Establish regular schedules for comprehensive analysis. Integrate findings into security metrics and key performance indicators. Track trends in vulnerability discovery and remediation.

Continuously evaluate new tools and approaches. As vendor offerings mature and improve, reassess your tool choices. Look for opportunities to improve efficiency, coverage, and integration. Stay informed about emerging threats and adjust analysis focus accordingly.

Share learnings with the industry. As your organization gains experience with reasoning-based vulnerability detection, contributing insights back to the security community strengthens the broader ecosystem. Participate in discussions about best practices, challenges, and solutions.

Reasoning-based analysis is estimated to be more effective in detecting vulnerabilities compared to traditional methods, due to its ability to identify novel vulnerabilities.

Comparison: Reasoning-Based vs. Traditional Approaches

| Aspect | Pattern-Based SAST | Reasoning-Based Analysis | Fuzzing | Manual Review |
|---|---|---|---|---|
| Detection of Known Vulnerabilities | Excellent | Good | Moderate | Excellent |
| Detection of Novel Vulnerabilities | Poor | Excellent | Moderate | Good |
| False Positive Rate | Low | Moderate | Moderate-High | Very Low |
| Computational Cost | Low | High | Moderate | N/A (Human) |
| Scalability to Large Codebases | Excellent | Moderate | Moderate | Poor |
| Time to Run Analysis | Minutes | Hours | Hours-Days | Weeks |
| Explainability of Results | Excellent | Moderate | Moderate | Excellent |
| Requirement for Security Expertise | Low | High | Moderate | High |
| Best Use Cases | Continuous scanning | Targeted analysis | Specific components | Critical code |
| Cost | Low-Moderate | Moderate-High | Low-Moderate | High |

Common Mistakes and How to Avoid Them

Mistake 1: Assuming Reasoning-Based Tools Replace Pattern-Based Scanning

Many organizations view reasoning-based analysis and pattern-based scanning as competing approaches and assume adopting one means deprioritizing the other. This is incorrect. Pattern-based tools should continue running automatically on every code commit. Reasoning-based tools should augment them by identifying vulnerabilities that pattern-based tools miss.

Avoid this mistake by explicitly planning for both approaches. Allocate resources for maintaining and improving pattern-based scanning while simultaneously implementing reasoning-based analysis. Think of them as complementary components of a comprehensive strategy.

Mistake 2: Deploying Reasoning-Based Tools Without Adequate Triage Processes

Reasoning-based tools generate candidate vulnerabilities that require human evaluation. Deploying these tools without adequate processes for triaging findings creates overwhelming noise for security teams. Developers receive numerous alerts, many of which don't represent actual vulnerabilities, leading to alert fatigue and reduced trust in tooling.

Avoid this mistake by establishing triage processes before deployment. Who will evaluate findings? What expertise do they have? How will false positives be filtered? What timeline will be used for remediation? Establish these processes during pilot implementation and refine based on experience.

Mistake 3: Focusing Exclusively on Technical Implementation

Tool deployment is only one aspect of successful vulnerability detection programs. Equally important are organizational factors: executive support, adequate resources, developer training, security culture, and integration into development practices. Organizations that focus exclusively on technical implementation without addressing organizational requirements often fail to achieve expected benefits.

Avoid this mistake by treating implementation as organizational change, not just technical deployment. Engage leadership early. Allocate sufficient resources. Train developers on new processes. Build security culture that prioritizes vulnerability detection and remediation. Use tools as enablers within an organizational context.

Mistake 4: Neglecting Code Privacy and Security Implications

Reasoning-based tools often operate as external services where code is uploaded for analysis. Organizations sometimes overlook privacy and security implications of sending code to external providers. Proprietary business logic, security implementation details, and sensitive information can be exposed.

Avoid this mistake by evaluating data handling practices before deployment. What does the vendor do with code? Is it retained? Used for training? Reviewed by humans? Are enterprise agreements available that provide stronger privacy guarantees? Factor privacy and security implications into tool selection and deployment planning.

Mistake 5: Setting Unrealistic Expectations

Reasoning-based vulnerability detection is powerful but not perfect. It will identify real vulnerabilities but also generate false positives. It will miss some actual vulnerabilities. It requires human expertise for interpretation and decision-making. Organizations that expect tools to be completely accurate and eliminate the need for human judgment become disillusioned when reality doesn't match expectations.

Avoid this mistake by setting realistic expectations from the beginning. Understand tool strengths and limitations. Communicate to stakeholders that tools augment human expertise but don't replace it. Use the pilot phase to develop understanding of what's realistically achievable. Adjust plans based on actual results rather than theoretical ideals.

Recommendations for Security Leaders

For Organizations Without Advanced Vulnerability Detection Capabilities

If your organization hasn't implemented pattern-based vulnerability scanning, start there. Tools like GitHub CodeQL, SonarQube, and commercial SAST platforms provide foundational vulnerability detection at reasonable cost. Implement continuous scanning integrated into your development workflow. This should be your first priority.

Once pattern-based scanning is established, begin planning for reasoning-based capabilities. Start with a pilot implementation on security-critical codebases. Learn what's needed for success: expertise, processes, tool integration. Use pilot results to inform organization-wide implementation.

For Organizations With Mature Pattern-Based Scanning

Your next step is evaluating reasoning-based tools. Conduct a formal assessment: What vulnerabilities might pattern-based tools miss? What would reasoning-based analysis contribute to your security program? What tools are available? Which aligns best with your technical stack, budget, and operational requirements?

Plan a pilot implementation on critical code. Allocate time and expertise for thorough evaluation. Measure results: What vulnerabilities were found? How many required investigation? What was the ratio of real vulnerabilities to false positives? Use pilot results to determine scaling and implementation approach.
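
The pilot measurements above can be tallied with very little code. The following is a minimal Python sketch, assuming a hypothetical triage log in which each finding has already been labeled as confirmed or as a false positive. The record layout, field names, and sample data are illustrative assumptions, not output from any real tool.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """One triaged finding from a pilot scan (hypothetical record layout)."""
    identifier: str
    confirmed: bool       # True if triage confirmed a real vulnerability
    triage_hours: float   # analyst time spent investigating this finding


def summarize_pilot(findings: list[Finding]) -> dict[str, float]:
    """Return counts, the confirmed share of findings, and triage effort."""
    total = len(findings)
    confirmed = sum(1 for f in findings if f.confirmed)
    hours = sum(f.triage_hours for f in findings)
    return {
        "total_findings": total,
        "confirmed": confirmed,
        "false_positives": total - confirmed,
        # Share of findings that were real; 0.0 when there are no findings.
        "precision": confirmed / total if total else 0.0,
        # Analyst hours spent per confirmed vulnerability.
        "hours_per_confirmed": hours / confirmed if confirmed else float("inf"),
    }


# Illustrative data only: four findings, three of them confirmed.
pilot = [
    Finding("F-1", True, 3.0),
    Finding("F-2", False, 1.0),
    Finding("F-3", True, 2.0),
    Finding("F-4", True, 4.0),
]
summary = summarize_pilot(pilot)
print(summary)
```

Tracking triage hours alongside the confirmed share matters because a tool with a modest false positive rate can still be expensive to operate if each finding takes hours to investigate.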

For Organizations Experiencing Vulnerability Incidents

If your organization has experienced security incidents where vulnerabilities weren't detected by existing tools, this signals that current approaches are insufficient. Advanced vulnerability detection should be prioritized. Implement reasoning-based analysis targeting similar code and vulnerability classes.

Conduct a post-incident analysis of the vulnerability that led to the incident. What approach would have detected it? Would existing SAST tools have caught it? Would reasoning-based analysis have found it? Use these findings to improve your overall vulnerability detection strategy and prevent similar incidents.

The Board Conversation: What Security Leaders Need to Communicate

When presenting to boards and senior executives, focus on business impact rather than technical details. Here's the key message:

"Advanced AI-powered vulnerability analysis has identified hundreds of high-severity vulnerabilities in mature, well-maintained open-source projects that survived decades of expert review and standard security testing. This class of vulnerabilities was previously undetectable with existing tools. Our competitors and potential adversaries may have access to similar capabilities. Our organization needs to implement reasoning-based vulnerability detection to identify and patch similar flaws in our own code before attackers exploit them."

Quantify the risk in business terms. What's the impact of an undetected vulnerability being exploited in your security-critical systems? What's the cost of a breach involving customer data? What's the reputational damage if vulnerabilities that could have been detected become public? Compare these costs to the investment required for implementing advanced vulnerability detection.
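
One common way to frame this comparison is annualized loss expectancy: the cost of a single incident multiplied by the expected number of incidents per year. The sketch below, in Python, compares expected loss before and after adding detection against the yearly cost of the program. Every figure in it is a placeholder chosen for illustration, not an estimate drawn from this article or from any real organization.

```python
def annualized_loss(single_loss: float, incidents_per_year: float) -> float:
    """Expected yearly loss: cost of one incident times expected incidents per year."""
    return single_loss * incidents_per_year


def net_annual_benefit(single_loss: float, rate_before: float,
                       rate_after: float, annual_program_cost: float) -> float:
    """Expected loss avoided per year minus the yearly cost of the program."""
    avoided = (annualized_loss(single_loss, rate_before)
               - annualized_loss(single_loss, rate_after))
    return avoided - annual_program_cost


# Placeholder figures for illustration only; substitute your own estimates.
breach_cost = 4_000_000.0   # hypothetical cost of one breach
rate_before = 0.10          # hypothetical incidents per year today
rate_after = 0.05           # hypothetical incidents per year with added detection
program_cost = 120_000.0    # hypothetical yearly cost of tooling and triage

benefit = net_annual_benefit(breach_cost, rate_before, rate_after, program_cost)
print(f"Net expected annual benefit: {benefit:,.0f}")
```

The result is only as good as the inputs, so it helps to present the board with a range of scenarios rather than a single number.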

Present the strategic advantage. Organizations that adopt advanced vulnerability detection early can identify and patch flaws before competitors do, creating a security advantage. As tools mature and become standard, this advantage diminishes, so first movers benefit most.

Discuss timeline and investment. What's the cost? What's the timeline for implementation? What resources are required? What benefits should be expected? Provide clear metrics for evaluating success.

Conclusion: Preparing for the Future of Vulnerability Detection

The discovery of 500+ high-severity vulnerabilities in production open-source projects using reasoning-based AI analysis represents a watershed moment in application security. It demonstrates definitively that existing vulnerability detection approaches, despite their sophistication and extensive use, miss entire classes of real, exploitable vulnerabilities. This gap between detected and actual vulnerabilities creates urgent strategic implications for organizations responsible for cybersecurity.

The transition from pattern-based to reasoning-based vulnerability detection mirrors previous shifts in security technology. Intrusion detection systems were once novel; they're now standard. Firewalls were once cutting-edge; they're now foundational. Vulnerability scanners were once advanced; they're now expected. Reasoning-based vulnerability detection will follow the same trajectory: from its current status as an advanced capability, it will mature into standard practice. Organizations that adopt early gain a competitive advantage and a significantly improved security posture. Organizations that lag behind face an increasing risk of discovering vulnerabilities only after exploitation.

Implementing reasoning-based vulnerability detection requires more than buying tools. It requires organizational commitment to improved security practices, allocation of sufficient expertise and resources, integration into development workflows, and leadership support. Organizations that treat it as solely a technical implementation often fail to achieve expected benefits. Those that treat it as organizational change, with tools as enablers, typically succeed.

The path forward involves complementary approaches rather than replacement of existing practices. Pattern-based vulnerability scanning should continue and improve. Reasoning-based analysis should augment it, targeting code areas that pattern-based tools can't adequately cover. Manual review and human expertise remain essential for interpreting findings and making risk-based decisions. Fuzzing and other approaches continue serving important roles. Comprehensive security programs use all available techniques in coordinated fashion.

For security leaders, the message is clear: reasoning-based vulnerability detection is no longer optional for organizations with significant security requirements. It's becoming necessary for responsible security stewardship. The board conversation isn't whether to implement reasoning-based analysis, but rather how quickly to do so and which tools and approaches will provide the best fit for your organization's specific needs and constraints.

The window of advantage from being early adopters is open but closing. Organizations should begin assessment and planning immediately. Develop strategies tailored to your specific context, risks, and resources. Start with pilots to learn what works in your environment. Scale based on pilot results and organizational readiness. Maintain focus on fundamentals while advancing to sophisticated approaches.

The future of vulnerability detection will be predominantly AI-powered, reasoning-based analysis. Preparing your organization for that future now—before it becomes table stakes for security leadership—provides significant advantage for organizations willing to invest in transformation. The cost of delay is higher vulnerability risk and competitive disadvantage. The cost of implementation is justified by dramatically improved security outcomes.

FAQ

What is reasoning-based vulnerability detection?

Reasoning-based vulnerability detection uses AI systems to analyze code and generate hypotheses about potential security vulnerabilities, then evaluates whether those hypotheses represent actual exploitable flaws. Unlike pattern-based scanners that check code against predefined rules, reasoning-based systems autonomously reason about how code behaves and identify vulnerabilities that don't match any known pattern. This approach can discover novel vulnerability classes that traditional static analysis tools miss.

How does Claude Code Security differ from traditional SAST tools like CodeQL?

CodeQL and similar SAST tools check code against predefined vulnerability patterns. They are effective at finding known vulnerability types but limited to patterns that have been encoded as rules. Claude Code Security uses AI reasoning to autonomously analyze code behavior, generate vulnerability hypotheses, and evaluate them without being constrained by predefined patterns. This enables discovery of novel vulnerabilities that pattern-based tools can't detect, at the cost of higher computational requirements and more false positives requiring human evaluation.

Why did reasoning-based analysis find vulnerabilities that fuzzing and manual review missed?

Fuzzing relies on randomly generated inputs and struggles with code paths requiring complex preconditions. Manual review, while thorough, is limited by human cognitive capacity and time availability. Reasoning-based analysis overcomes fuzzing limitations by constructing specific inputs designed to trigger hypothetical vulnerabilities, and it overcomes manual review limitations by autonomously analyzing code across entire projects and across multiple files. This systematic, comprehensive approach finds vulnerabilities that other methods miss.

What are the main advantages of implementing reasoning-based vulnerability detection?

The primary advantages include discovering novel vulnerability classes that pattern-based tools miss, achieving faster vulnerability detection in critical code, reducing the time to identify exploitable flaws before attackers do, and gaining competitive advantage through early adoption of advanced security capabilities. Organizations that implement reasoning-based analysis can patch vulnerabilities before they become widely known, significantly improving security posture and reducing breach risk.

What challenges does reasoning-based vulnerability detection present?

Key challenges include significant computational costs that prevent continuous analysis on every code commit, higher false positive rates that require skilled security expertise for triage, privacy concerns when code must be sent to external analysis services, and the need for trained personnel to interpret findings and make remediation decisions. Integration into existing security workflows and developer processes also requires careful planning and training to be effective.

Should organizations replace pattern-based scanning with reasoning-based analysis?

No, these approaches are complementary rather than competitive. Pattern-based tools should continue running automatically on every code commit, catching known vulnerability types efficiently and at scale. Reasoning-based analysis should augment these by periodically analyzing critical code to find novel vulnerabilities that pattern-based tools miss. The most effective security programs use both approaches in coordinated fashion rather than treating them as alternatives.
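
The division of labor described above can be expressed as a simple scheduling rule. The following Python sketch assumes a hypothetical list of critical directories and a hypothetical 30-day cadence for reasoning-based analysis. Neither value comes from any vendor's guidance, and the scan names are labels for illustration rather than real tool commands.

```python
from datetime import date, timedelta

# Hypothetical directories treated as security-critical.
CRITICAL_PATHS = ("auth/", "crypto/", "parsers/")
# Hypothetical cadence for the more expensive reasoning-based analysis.
REASONING_INTERVAL = timedelta(days=30)


def plan_scans(last_reasoning_scan: date, today: date) -> dict[str, list[str]]:
    """Decide which scans to run for a commit landing on `today`."""
    # Pattern-based scanning covers the whole repository on every commit.
    plan = {"pattern": ["."]}
    # Reasoning-based analysis runs periodically, on critical code only.
    if today - last_reasoning_scan >= REASONING_INTERVAL:
        plan["reasoning"] = list(CRITICAL_PATHS)
    return plan


print(plan_scans(date(2026, 1, 1), date(2026, 1, 10)))
print(plan_scans(date(2026, 1, 1), date(2026, 2, 5)))
```

Keeping the expensive analysis on a schedule and scoped to critical paths is one way to contain computational cost while pattern-based checks continue to gate every commit.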

How should organizations prioritize between implementing reasoning-based tools versus improving existing security practices?

Organizations should ensure foundational security practices are solid first: pattern-based vulnerability scanning on every code commit, secure development practices, code review processes, and developer security training. Once these fundamentals are established, reasoning-based analysis becomes a valuable next step. For organizations without any automated vulnerability detection, implementing pattern-based scanning should be the priority before moving to reasoning-based capabilities.

What's the typical implementation timeline for reasoning-based vulnerability detection?

Initial assessment and planning typically takes 2-4 weeks. Pilot implementation on 1-2 critical codebases takes 4-8 weeks. Based on pilot results, organization-wide implementation and process refinement takes 2-4 months. Full maturity where reasoning-based analysis is integrated into standard security practices typically requires 6-12 months. Timeline varies significantly based on organizational size, technical complexity, and available resources.

What metrics should organizations track when implementing reasoning-based vulnerability detection?

Key metrics include number of novel vulnerabilities discovered, average time from discovery to patch deployment, ratio of actual vulnerabilities to false positive candidates, resource requirements for triage and investigation, and reduction in time to detect vulnerabilities compared to previous approaches. Organizations should also track security outcomes: reduction in exploited vulnerabilities, faster response to discovered flaws, and improvement in security incident metrics relevant to their risk profile.
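
As an illustration of one of these metrics, the Python sketch below computes the average time from discovery to patch deployment. It assumes a hypothetical list of date pairs in which unpatched findings carry no patch date. The dates are made up for the example.

```python
from datetime import date
from statistics import mean
from typing import Optional

# Each record is (discovered, patched); patched is None while a fix is pending.
Record = tuple[date, Optional[date]]


def mean_days_to_patch(records: list[Record]) -> Optional[float]:
    """Average days from discovery to patch, ignoring unpatched findings."""
    durations = [
        (patched - discovered).days
        for discovered, patched in records
        if patched is not None
    ]
    return mean(durations) if durations else None


# Illustrative data only.
history = [
    (date(2026, 3, 1), date(2026, 3, 8)),   # patched after 7 days
    (date(2026, 3, 2), date(2026, 3, 5)),   # patched after 3 days
    (date(2026, 3, 10), None),              # still open, excluded from the mean
]
average = mean_days_to_patch(history)
print(average)
```

Open findings are excluded here, so it is worth reporting their count separately; otherwise a backlog of unpatched issues can make the average look better than it is.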

How do privacy and data security factor into reasoning-based tool selection?

Organizations should evaluate what happens to code submitted for analysis: Is it retained? Used for training? Reviewed by humans? Enterprise agreements typically provide stronger privacy guarantees than standard offerings. Organizations with strict code privacy requirements should seek vendors offering on-premises analysis or use-specific contractual provisions limiting data handling. Privacy evaluation should be part of the formal tool selection process, taking into account regulatory requirements and organizational policy.

Opportunities for Alternative Solutions: Developer-Focused Automation Platforms

While Anthropic's Claude Code Security represents a sophisticated approach to vulnerability detection, organizations should also consider how developer-focused automation platforms complement security programs. Platforms like Runable offer broader automation capabilities that can help teams address security holistically through automated workflows, documentation generation, and developer productivity tools.

Runable's AI-powered automation agents can generate comprehensive security documentation, create automated workflows for vulnerability remediation, and assist development teams in maintaining consistent security practices across projects. For developers integrating security tools into their workflows, automation platforms that handle routine tasks—documentation, process execution, report generation—free expertise for focusing on high-value security activities like vulnerability analysis and remediation strategy.

The complementary approach combines specialized security tools (CodeQL, Claude Code Security) with broader developer automation platforms. Specialized tools identify vulnerabilities. Automation platforms help teams manage remediation at scale, generate documentation, and execute processes efficiently. Together, they enable organizations to scale security practices without proportional increases in headcount.

For teams evaluating their complete security and developer tooling strategy, considering how different tools work together provides better outcomes than optimizing any single component in isolation. Security tools excel at finding vulnerabilities. Developer platforms excel at scaling remediation and maintaining practices. Integration of both leads to more comprehensive and effective programs.

Key Takeaways

- Anthropic's Claude Opus 4.6 identified 500+ high-severity vulnerabilities using reasoning-based analysis, the capability later productized as Claude Code Security; these flaws had survived decades of expert review and standard security testing

- Reasoning-based vulnerability detection autonomously generates and tests hypotheses about code behavior, discovering novel vulnerabilities that pattern-based SAST tools cannot detect

- Three vulnerability cases (GhostScript, OpenSC, CGIF) demonstrate reasoning-based analysis excels at: commit history cross-reference, precondition analysis, and algorithm-level edge case discovery