Explainable AI is Making Black Box Models Obsolete in the Agentic Era

Last month, a major financial institution faced a crisis. Its AI-driven fraud detection system flagged an unprecedented number of legitimate transactions as fraudulent. As the team scrambled to find the root cause, they realized that relying on a black box model had left them blind to the reasoning behind these errors. The incident highlights a growing issue in the AI landscape: the need for explainability.

TL;DR

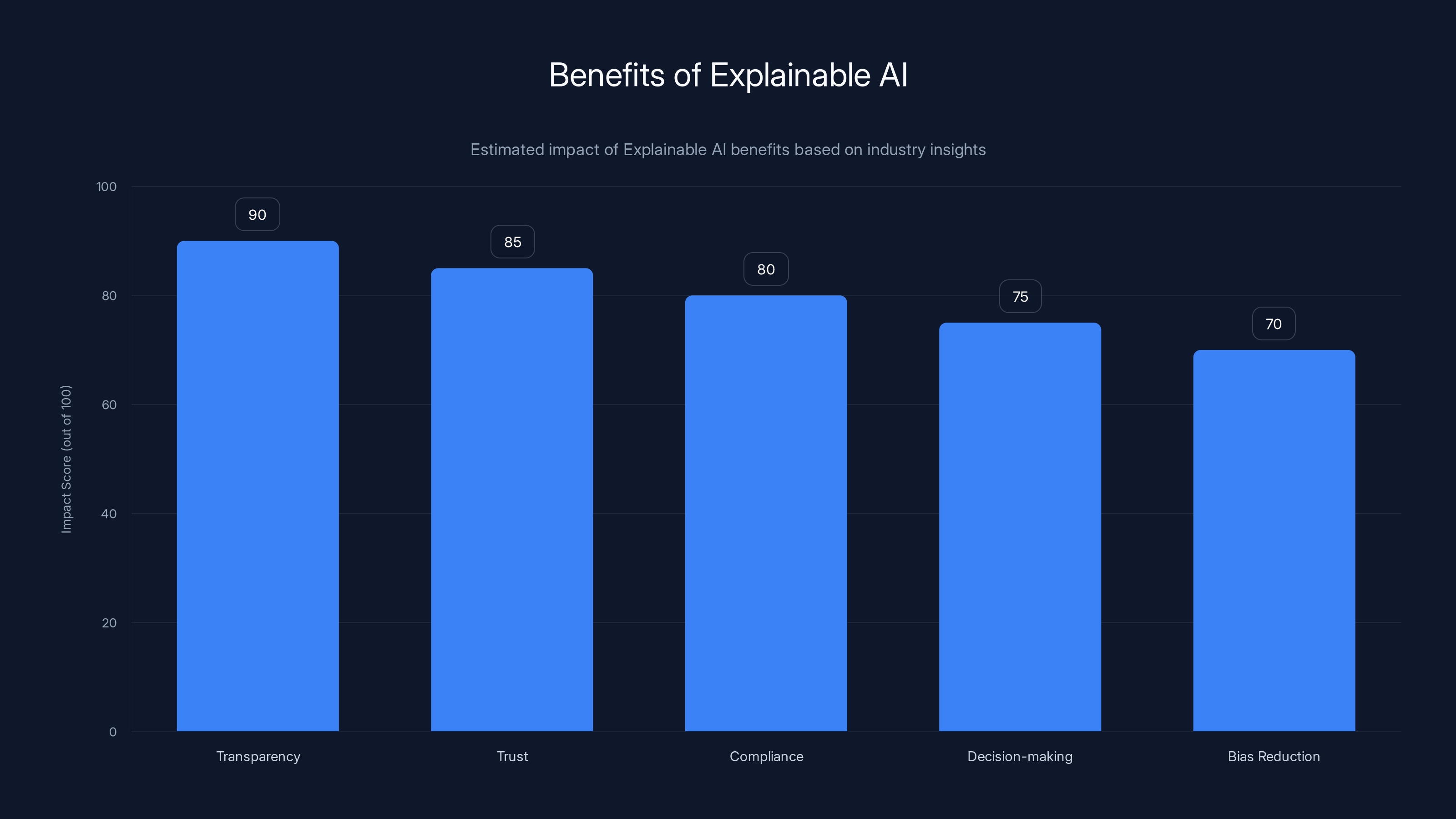

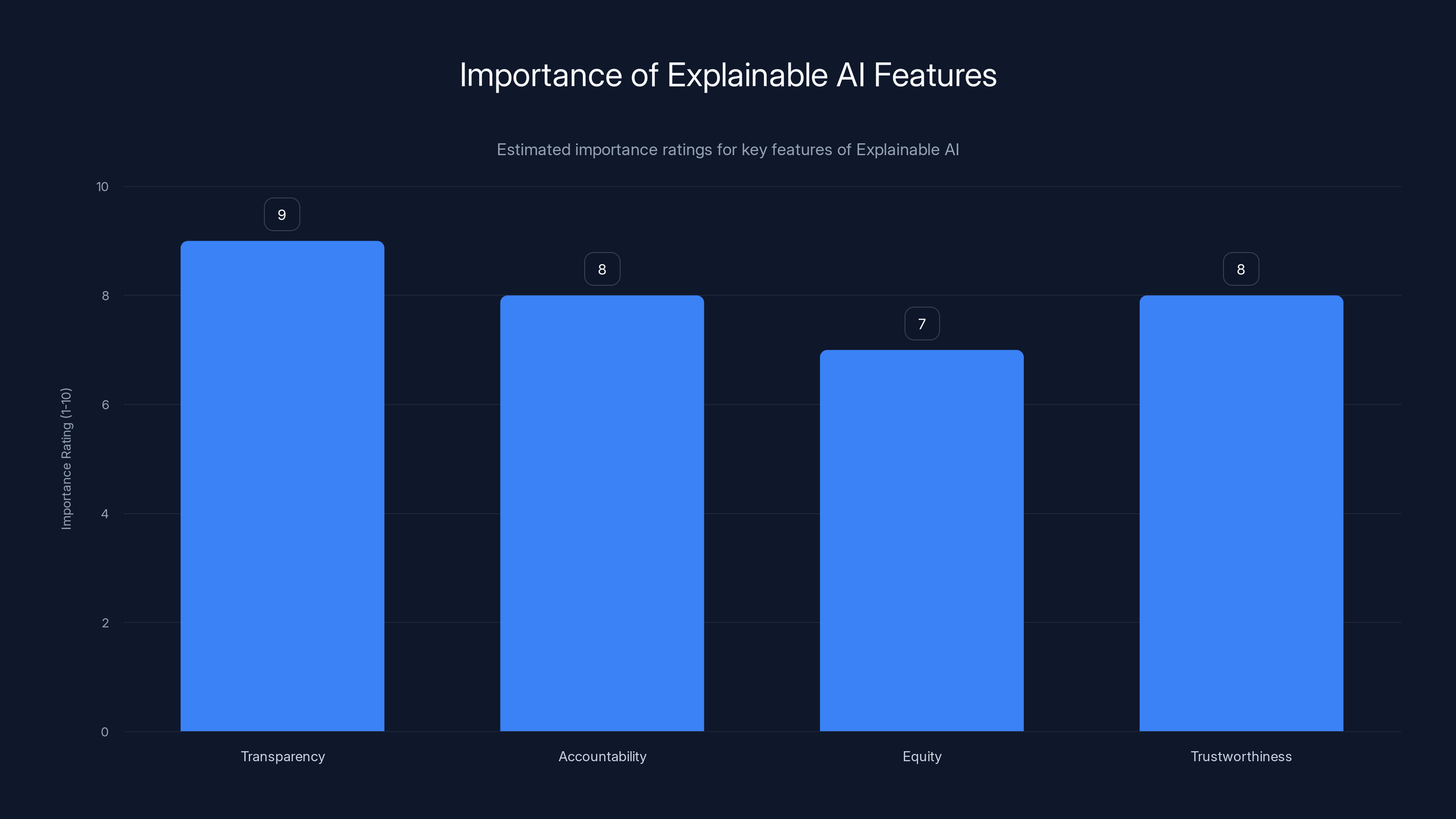

- Transparency is Key: Explainable AI enhances trust and accountability by making decision processes transparent.

- Black Box Models Lagging: The inability to understand or interpret black box models limits their usability in dynamic environments.

- Agentic AI Demands Clarity: As AI systems evolve to perform autonomous tasks, clarity in decision logic becomes crucial.

- Implementing XAI: Techniques like LIME and SHAP are widely used to add explainability to existing AI models.

- Future Trends: Expect a shift towards regulatory frameworks mandating explainability in AI applications.

Explainable AI significantly enhances transparency and trust, with notable benefits in compliance and decision-making. Estimated data.

The Rise of Explainable AI

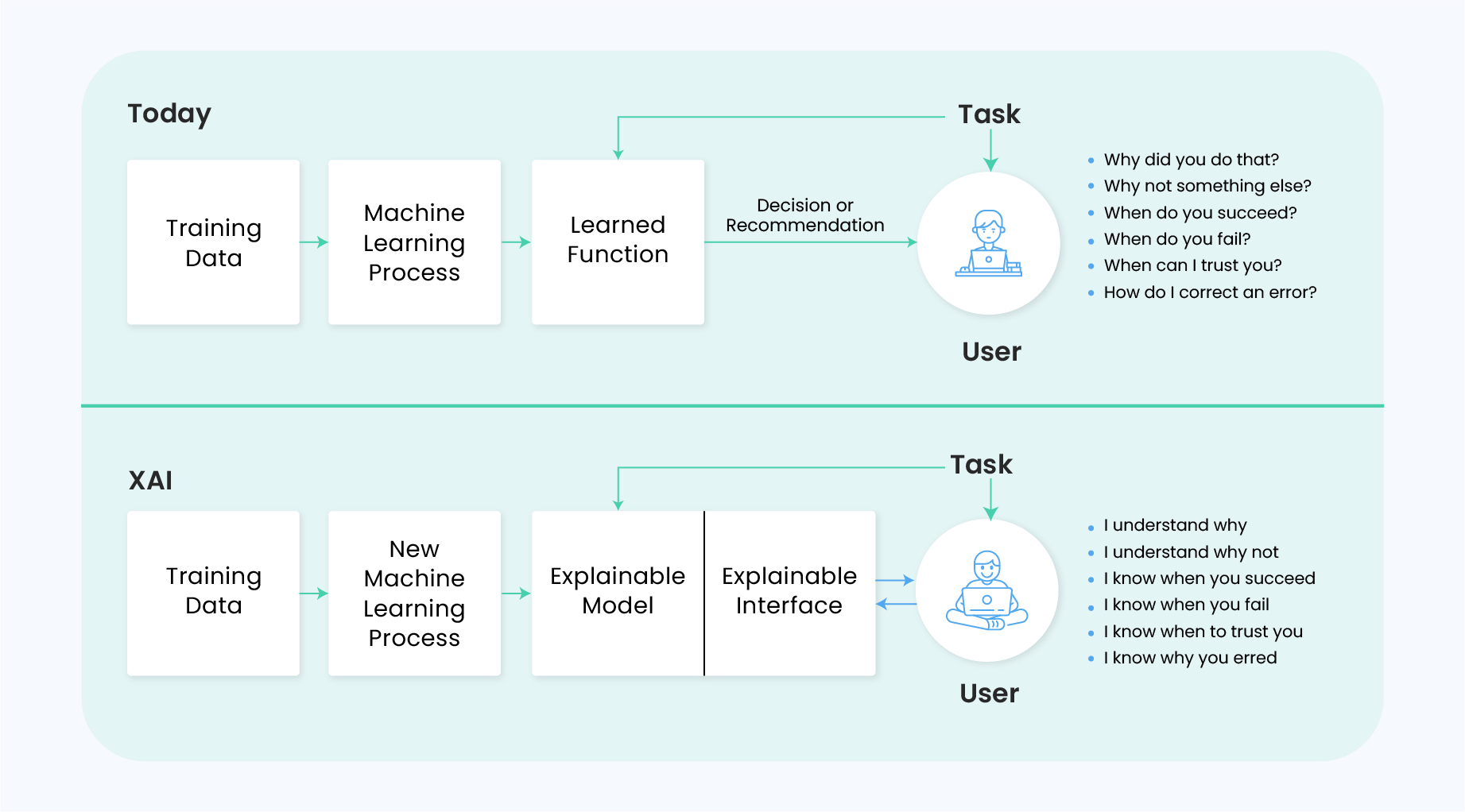

Explainable AI (XAI) refers to systems designed to make their decision-making processes transparent and understandable to humans. Black box models, such as deep neural networks, can deliver high accuracy but offer little insight into how they arrive at a given output. XAI bridges this gap by letting stakeholders see not just the 'what' but the 'why' behind AI decisions.
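To make the 'what' versus 'why' distinction concrete, here is a minimal sketch of an inherently interpretable model: a hypothetical linear scorer whose output decomposes exactly into per-feature contributions. The feature names, weights, and intercept are invented for illustration; post-hoc techniques such as LIME and SHAP try to recover a similar breakdown for models that do not provide one natively.

```python
import numpy as np

# Hypothetical scoring model: names, weights and intercept are illustrative only
FEATURES = ["income", "debt_ratio", "late_payments"]
WEIGHTS = np.array([0.8, -1.5, -0.9])
INTERCEPT = 0.2

def score_with_reasons(x: np.ndarray) -> tuple[float, dict[str, float]]:
    """Return the score (the 'what') and each feature's additive contribution (the 'why')."""
    contributions = WEIGHTS * x
    score = INTERCEPT + contributions.sum()
    return float(score), dict(zip(FEATURES, contributions.tolist()))

score, reasons = score_with_reasons(np.array([1.2, 0.4, 1.0]))
print(f"score = {score:.2f}")
# List the contributions from most to least influential
for name, value in sorted(reasons.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"  {name}: {value:+.2f}")
```

Because the contributions plus the intercept add up to the score, the explanation is exact here. A deep network offers no such decomposition, which is the gap XAI methods approximate.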

Why Black Box Models Fall Short

Black box models are often characterized by their opacity. While they can process vast amounts of data and identify complex patterns, they do so in ways that are difficult to interpret. This is particularly problematic when AI systems are used in critical areas such as healthcare, finance, and autonomous vehicles, where understanding decision rationale is paramount.

- Lack of Accountability: When decisions go wrong, it's challenging to pinpoint errors.

- Regulatory Challenges: Increasing regulations demand greater transparency in AI operations.

- Trust Issues: Users are more likely to trust systems when they understand the decision-making process.

The Agentic Era: A New Paradigm

In the agentic era, AI systems are no longer just tools: they act as autonomous agents capable of reasoning and making decisions without human intervention. This evolution calls for a shift from black box models to more transparent systems, as discussed in MIT Sloan's exploration of agentic AI.

Key Characteristics of Agentic AI:

- Autonomy: Ability to operate independently.

- Adaptability: Learning from new data to improve decision-making.

- Accountability: Traceability of decisions and actions.
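Traceability is largely an engineering concern. Below is a minimal sketch of an append-only audit trail that records what an agent saw, what it did, and the rationale attached to each action. The `DecisionRecord` and `DecisionLog` classes are a hypothetical design, not any particular agent framework's API, and a real system would persist the log rather than keep it in memory.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

@dataclass
class DecisionRecord:
    """One traceable agent decision: what it saw, what it did, and why."""
    agent_id: str
    inputs: dict
    action: str
    rationale: dict  # e.g. feature attributions or the rule that fired
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

class DecisionLog:
    """Append-only, in-memory audit trail (hypothetical; persist it in practice)."""

    def __init__(self) -> None:
        self._records: list[DecisionRecord] = []

    def record(self, rec: DecisionRecord) -> None:
        self._records.append(rec)

    def by_action(self, action: str) -> list[DecisionRecord]:
        return [r for r in self._records if r.action == action]

    def to_jsonl(self) -> str:
        # One JSON object per line: easy to ship to log storage and replay later
        return "\n".join(json.dumps(asdict(r)) for r in self._records)

log = DecisionLog()
log.record(DecisionRecord(
    agent_id="payments-agent",
    inputs={"amount": 4200, "country": "DE"},
    action="hold_transaction",
    rationale={"amount": 0.61, "country": 0.12},
))
print(log.to_jsonl())
```

Storing the rationale next to the inputs means that when an agent's action is questioned later, reviewers can see the evidence it acted on instead of reconstructing it after the fact.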

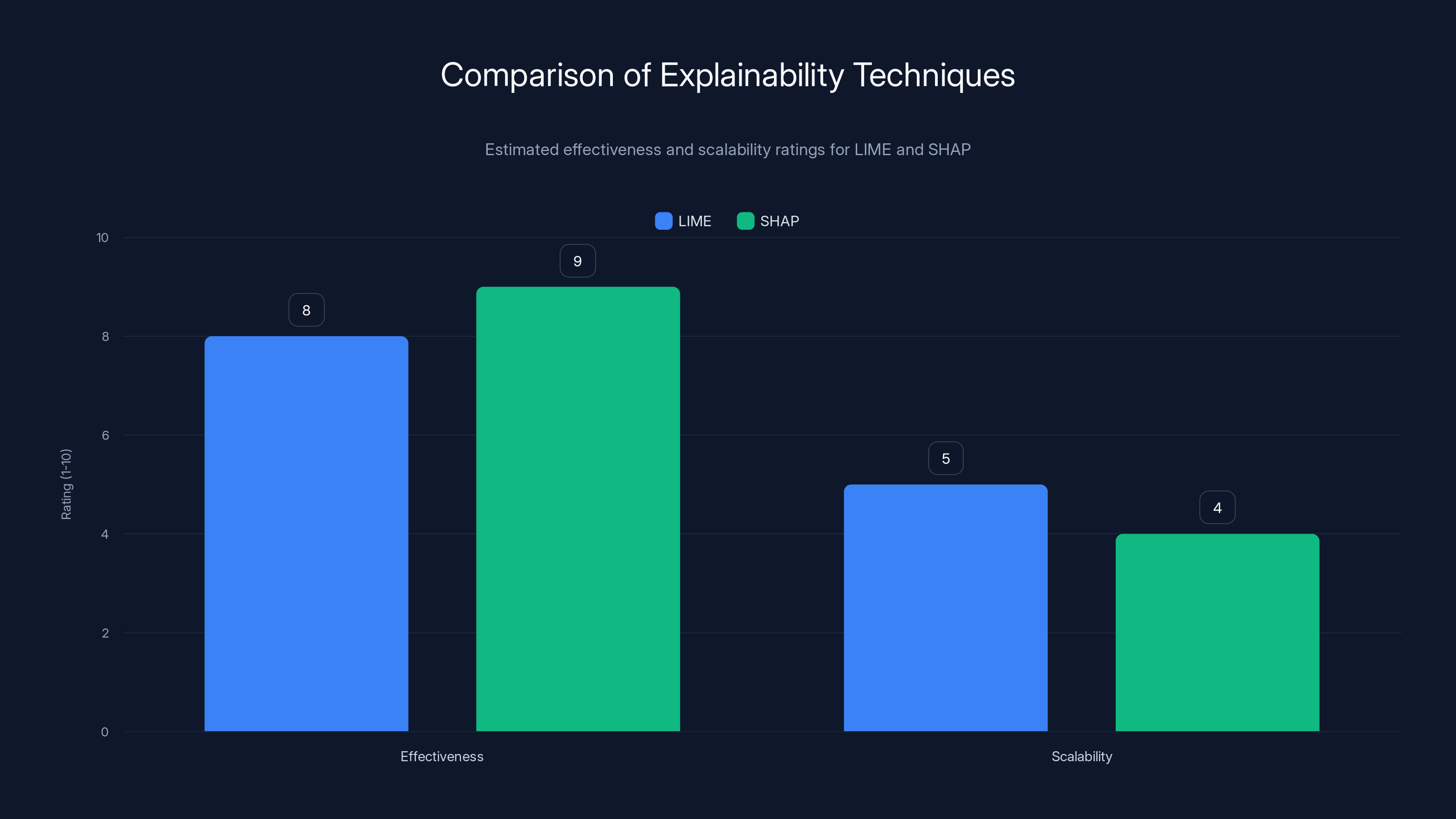

LIME and SHAP are both highly effective in providing model explanations, but SHAP is slightly more effective. However, LIME is more scalable than SHAP. Estimated data.

Techniques for Achieving Explainability

Several techniques have been developed to enhance the explainability of AI models. These methods aim to shed light on how models make decisions, thus improving transparency and accountability.

Local Interpretable Model-agnostic Explanations (LIME)

LIME explains an individual prediction by approximating the black box model, in the neighborhood of that one input, with a simpler interpretable model. It perturbs the input, records how the model's predictions change, and fits a weighted linear model to the perturbed samples, giving more weight to samples close to the original input.

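- Advantages: Model-agnostic (works with any classifier or regressor) and provides local, per-prediction explanations.

- Limitations: Explanations can vary between runs because they depend on random sampling and on how the neighborhood is defined; explaining many predictions one at a time can be slow on large datasets.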

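```python
import lime.lime_tabular
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier

# Stand-in data and model for illustration; substitute your own
iris = load_iris()
training_data, feature_names = iris.data, iris.feature_names
class_names = list(iris.target_names)
model = RandomForestClassifier(random_state=0).fit(training_data, iris.target)
predict_fn = model.predict_proba  # LIME needs class probabilities, not labels
data_row = training_data[0]       # the single prediction to explain

explainer = lime.lime_tabular.LimeTabularExplainer(
    training_data, mode='classification', feature_names=feature_names,
    class_names=class_names, discretize_continuous=True)
# Perturb around data_row, fit a weighted local model, keep the top features (iris has 4)
exp = explainer.explain_instance(data_row, predict_fn, num_features=4)
exp.show_in_notebook(show_all=False)  # outside Jupyter, use print(exp.as_list())
```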
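The library hides the mechanics. The following is a simplified, from-scratch sketch of the same idea in NumPy; it is not the lime package's implementation, which among other things samples using training-data statistics, can discretize features, and performs feature selection. The function name, Gaussian perturbation scale, and kernel width are assumptions chosen for illustration.

```python
import numpy as np

def local_surrogate(predict_fn, x, n_samples=2000, scale=0.5, kernel_width=0.75, seed=0):
    """LIME-style local explanation of predict_fn around the point x.

    Returns (local prediction at x, per-feature slopes of the surrogate).
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)
    # 1. Perturb: sample points from a Gaussian centered on the instance
    Z = x + rng.normal(0.0, scale, size=(n_samples, x.size))
    y = predict_fn(Z)
    # 2. Weight: exponential kernel on scaled distance, so nearby samples count more
    dist = np.linalg.norm((Z - x) / scale, axis=1) / np.sqrt(x.size)
    w = np.exp(-(dist ** 2) / kernel_width ** 2)
    # 3. Fit: weighted least squares on offsets from x, with an intercept column
    A = np.hstack([np.ones((n_samples, 1)), Z - x])
    sw = np.sqrt(w)
    coef, *_ = np.linalg.lstsq(A * sw[:, None], y * sw, rcond=None)
    return coef[0], coef[1:]

# A nonlinear stand-in for a black box model (vectorized over rows)
black_box = lambda Z: np.sin(Z[:, 0]) + Z[:, 1] ** 2
value, slopes = local_surrogate(black_box, np.array([0.0, 1.0]))
print(value, slopes)
```

On a linear black box the surrogate recovers the true slopes exactly. On a nonlinear one like `black_box`, the slopes land roughly near the local gradient (about 1 and 2 at this point) and describe the model only in that neighborhood, which is the sense in which LIME explanations are local.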

SHapley Additive exPlanations (SHAP)

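SHAP draws on cooperative game theory: it treats the features as players and assigns each one a Shapley value that measures its contribution to a particular prediction. These values satisfy properties such as local accuracy (the attributions for a prediction sum to the difference between that prediction and the model's expected output), which gives a consistent and fair basis for interpreting model outputs.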

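- Advantages: Provides local explanations that can be aggregated into global ones; model-agnostic variants work with any model, and fast exact algorithms exist for tree ensembles.

- Limitations: Exact Shapley values require a number of model evaluations that grows exponentially with the number of features, so model-agnostic approximations can be computationally intensive on large datasets.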

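```python
import shap
from sklearn.datasets import load_diabetes
from sklearn.ensemble import RandomForestRegressor

# Stand-in data and model for illustration; substitute your own
data, target = load_diabetes(return_X_y=True, as_frame=True)
model = RandomForestRegressor(random_state=0).fit(data, target)

# shap.Explainer picks an algorithm suited to the model (tree-based here)
explainer = shap.Explainer(model)
shap_values = explainer(data)  # one attribution per feature per row

# Global summary of how each feature's attributions are distributed
shap.summary_plot(shap_values.values, features=data, feature_names=list(data.columns))
```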
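To show what a feature's 'contribution' means in game-theoretic terms, here is a brute-force sketch that computes exact Shapley values for one prediction by enumerating every coalition of features. It assumes a simple baseline convention (features outside a coalition take a reference value); SHAP's explainers use more refined conventions and far faster algorithms, so treat this as an illustration of the definition, not of the library.

```python
from itertools import combinations
from math import factorial

import numpy as np

def exact_shapley(f, x, baseline):
    """Exact Shapley values for the prediction f(x), by full enumeration.

    A feature outside coalition S is set to its baseline value, so the
    coalition's worth is v(S) = f(z) with z = x on S and baseline elsewhere.
    This needs on the order of 2**n model calls for n features.
    """
    x = np.asarray(x, dtype=float)
    baseline = np.asarray(baseline, dtype=float)
    n = x.size

    def v(subset):
        z = baseline.copy()
        for j in subset:
            z[j] = x[j]
        return f(z)

    phi = np.zeros(n)
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions of this size
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for S in combinations(others, size):
                phi[i] += weight * (v(S + (i,)) - v(S))
    return phi

# Hypothetical model with one additive term and one interaction term
def model(z):
    return 2 * z[0] + z[1] * z[2]

phi = exact_shapley(model, [1, 2, 3], [0, 0, 0])
print(np.round(phi, 6))  # [2. 3. 3.]
```

The additive term `2 * z[0]` is credited entirely to feature 0, the interaction `z[1] * z[2]` is split equally between features 1 and 2, and the values sum to f(x) - f(baseline) = 8, which is SHAP's local-accuracy property.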

Implementing Explainable AI in Practice

Transitioning from black box models to explainable AI involves several steps. Here’s a practical guide for implementing XAI in your organization.

- Identify Critical Areas: Focus on areas where explainability is crucial, such as compliance, customer trust, and complex decision-making.

- Choose the Right Tools: Select appropriate XAI techniques like LIME or SHAP based on your specific needs.

- Integrate with Existing Systems: Ensure that explainability solutions can be seamlessly integrated without disrupting operations.

- Train Stakeholders: Educate team members on the importance of XAI and how to interpret model explanations.

- Continuously Monitor and Improve: Regularly review and refine your XAI implementations to adapt to evolving needs.
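For the final step, one lightweight monitoring check is to track how each feature's share of attribution changes over time. A minimal sketch, assuming you already compute per-prediction attribution matrices (for example SHAP values) for a reference window and a current window; the function names, the 10% threshold, and the feature names are hypothetical.

```python
import numpy as np

def attribution_shares(attributions: np.ndarray) -> np.ndarray:
    """Each feature's share of total mean |attribution| (rows = predictions)."""
    mean_abs = np.abs(attributions).mean(axis=0)
    return mean_abs / mean_abs.sum()

def attribution_drift(reference: np.ndarray, current: np.ndarray,
                      feature_names: list[str], threshold: float = 0.10) -> dict[str, float]:
    """Return features whose importance share moved by more than `threshold`.

    Positive values mean the feature now drives predictions more than before.
    """
    delta = attribution_shares(current) - attribution_shares(reference)
    return {name: round(float(d), 3)
            for name, d in zip(feature_names, delta) if abs(d) > threshold}

# Hypothetical attribution matrices for two time windows
names = ["income", "debt_ratio", "tenure"]
last_month = np.array([[0.5, 0.3, 0.2], [0.7, 0.2, 0.1]])
this_week = np.array([[0.2, 0.3, 0.5], [0.1, 0.2, 0.6]])
print(attribution_drift(last_month, this_week, names))
```

A flagged shift does not prove the model is wrong, but it is a cue to check for data drift or upstream pipeline changes before the explanations, and the decisions they describe, quietly change meaning.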

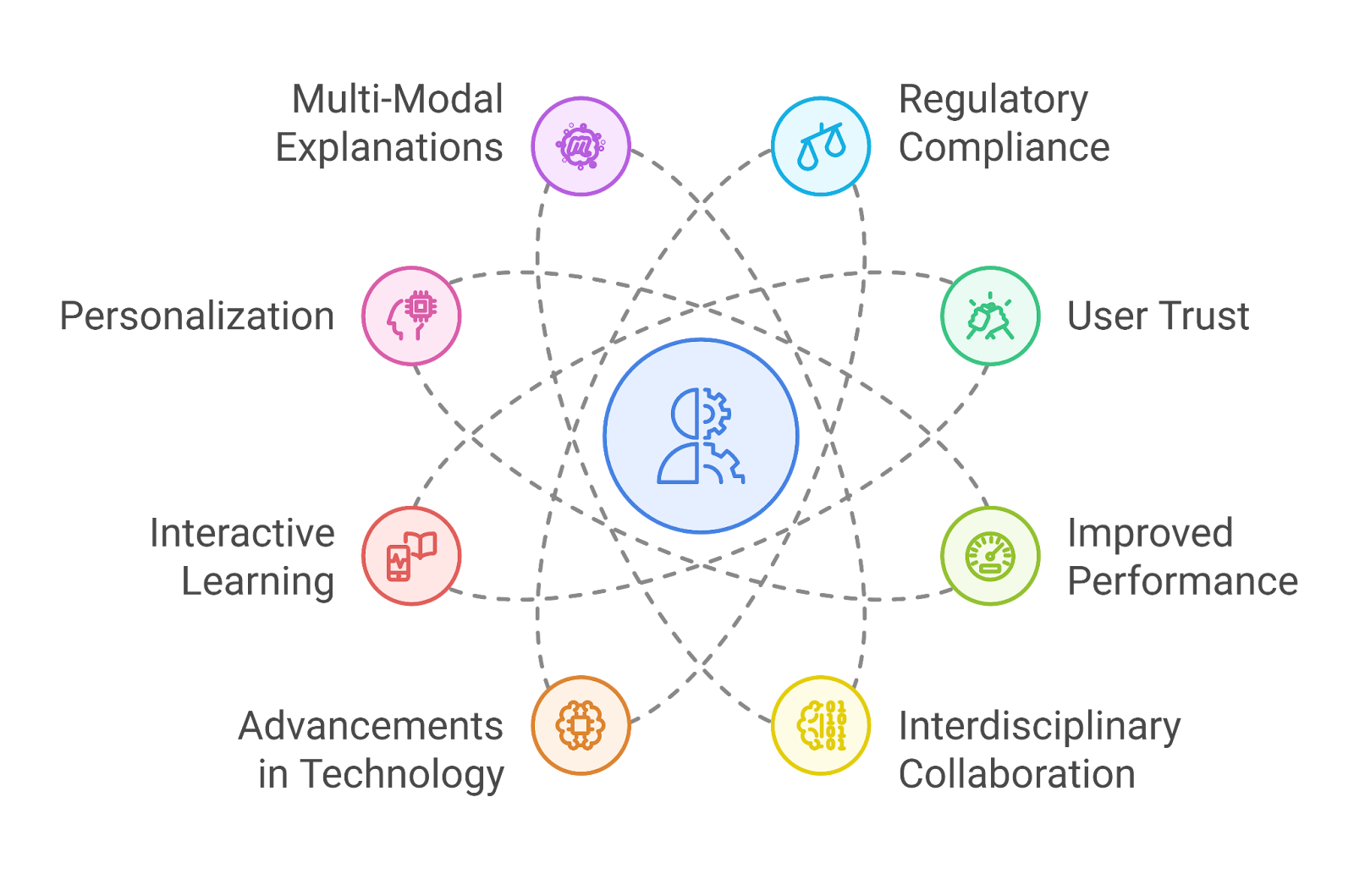

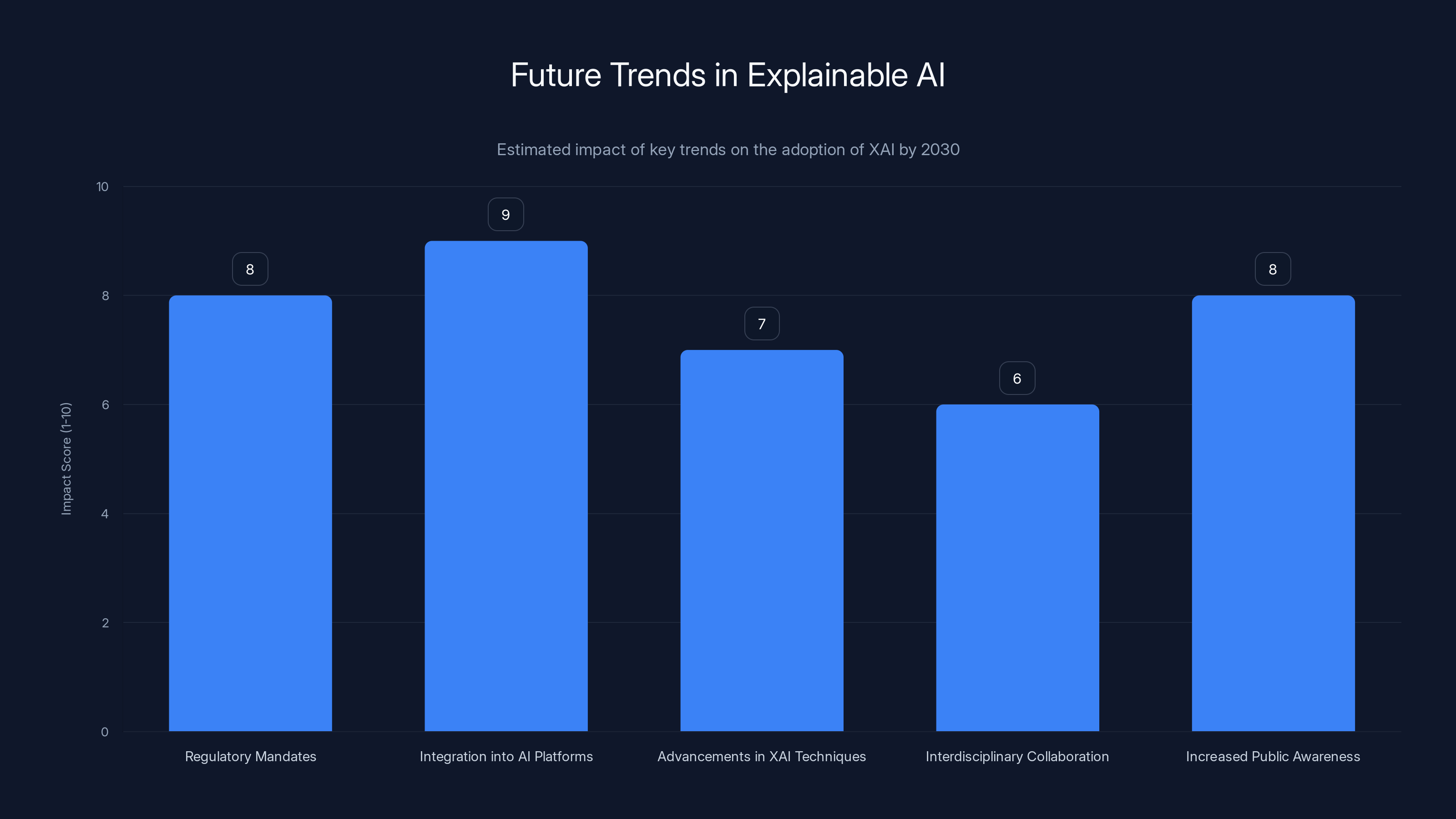

Integration of XAI into AI platforms and regulatory mandates are expected to have the highest impact on the adoption of explainable AI by 2030. Estimated data.

Real-World Use Cases of Explainable AI

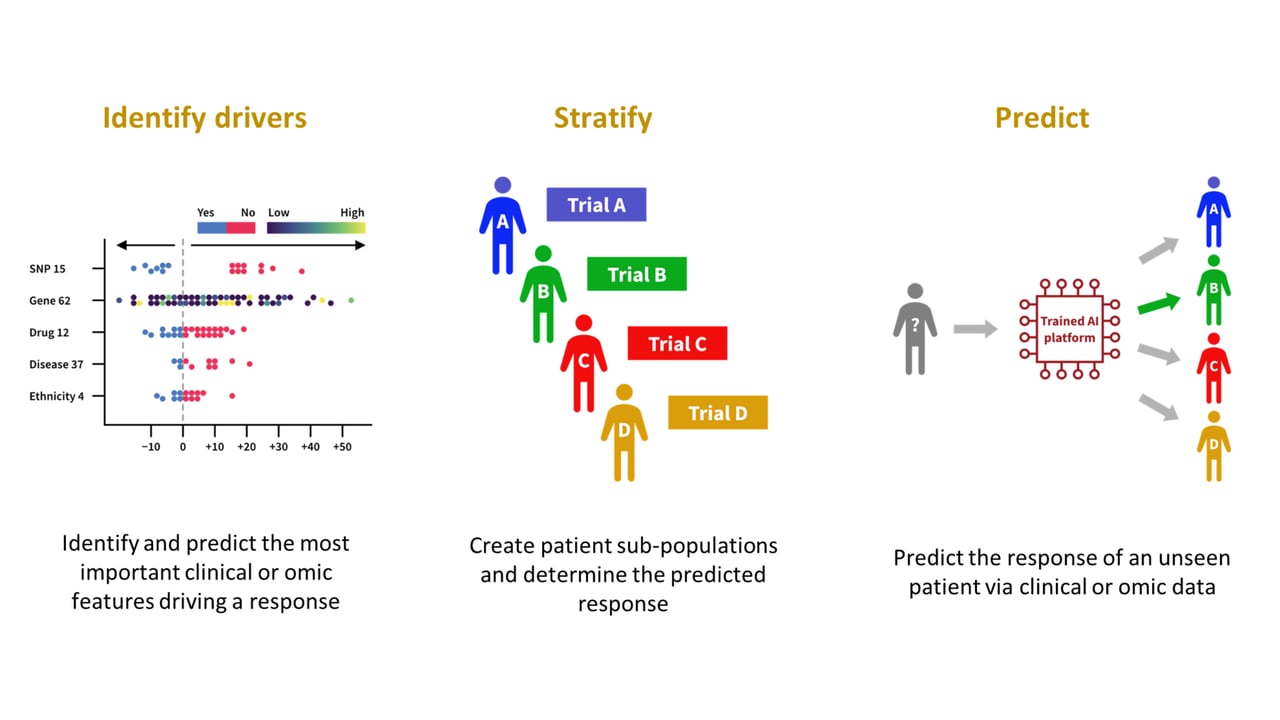

Healthcare Diagnosis

In healthcare, XAI helps clinicians understand AI-driven diagnostic recommendations. An AI system might suggest a diagnosis based on patient data, but without an explanation, doctors may be reluctant to trust it. Frameworks for bias detection and regulatory compliance in healthcare underscore the importance of explainability.

- Example: An explanation highlights the factors, such as age, blood pressure, and genetic markers, that most influenced a diagnosis.

- Benefit: Enhances trust and aids in informed decision-making.

Financial Services

Financial institutions use XAI to improve decision-making in areas like credit scoring and fraud detection. By understanding the factors influencing a decision, banks can better manage risk and improve customer relations. This aligns with the insights from Forbes on the importance of explainable AI.

- Example: A bank uses SHAP to explain why a loan was denied, providing transparency to customers.

- Benefit: Helps surface bias and supports compliance with regulatory standards.
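In the loan example, SHAP values still have to be turned into statements a customer can read. A minimal sketch, assuming attributions where negative values push an application toward denial; the `reason_codes` function, feature names, and values are hypothetical, and the sign convention depends on how your model's output is oriented.

```python
def reason_codes(attributions: dict[str, float], top_k: int = 2) -> list[str]:
    """Turn one applicant's per-feature attributions into plain-language reasons.

    Assumes negative attributions push the score toward denial; the most
    negative ones become the stated reasons.
    """
    negatives = [(name, v) for name, v in attributions.items() if v < 0]
    negatives.sort(key=lambda item: item[1])  # most negative first
    return [f"{name} lowered the score by {abs(v):.2f}" for name, v in negatives[:top_k]]

# Hypothetical SHAP values for one denied application
applicant = {"income": 0.12, "debt_ratio": -0.48, "late_payments": -0.31, "credit_age": -0.05}
for reason in reason_codes(applicant):
    print(reason)
# debt_ratio lowered the score by 0.48
# late_payments lowered the score by 0.31
```

Limiting the output to the top few negative contributors keeps the message short while still pointing to the factors that mattered most for this specific decision.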

Autonomous Vehicles

Autonomous vehicles rely on AI for navigation and decision-making. XAI provides insights into the decision processes that lead to actions like lane changes or emergency braking.

- Example: XAI systems explain the rationale behind route choices based on traffic patterns and road conditions.

- Benefit: Increases safety and user confidence in autonomous systems.
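Production driving stacks are far more complex than any short example, but the idea of a contrastive explanation ("why this route rather than that one") can be sketched for a planner that ranks routes by an additive cost. The cost terms, weights, and route data below are hypothetical.

```python
# Hypothetical additive route cost: lower is better; terms and weights are illustrative
WEIGHTS = {"travel_time_min": 1.0, "congestion": 4.0, "road_hazard": 10.0}

def route_cost(features: dict[str, float]) -> dict[str, float]:
    """Weighted cost contribution of each term for one route."""
    return {k: WEIGHTS[k] * features[k] for k in WEIGHTS}

def explain_choice(routes: dict[str, dict[str, float]]) -> tuple[str, dict[str, float]]:
    """Pick the cheapest route (needs at least two) and compare it to the runner-up.

    Per term, a negative value means the chosen route is cheaper than the
    runner-up on that term; a positive value means it is costlier.
    """
    totals = {name: sum(route_cost(f).values()) for name, f in routes.items()}
    best, runner_up = sorted(totals, key=totals.get)[:2]
    best_terms, alt_terms = route_cost(routes[best]), route_cost(routes[runner_up])
    return best, {k: best_terms[k] - alt_terms[k] for k in WEIGHTS}

routes = {
    "highway": {"travel_time_min": 18, "congestion": 3.0, "road_hazard": 0.0},
    "city": {"travel_time_min": 22, "congestion": 1.0, "road_hazard": 0.5},
}
choice, diff = explain_choice(routes)
print(choice, diff)
# highway {'travel_time_min': -4.0, 'congestion': 8.0, 'road_hazard': -5.0}
```

Reading the output: the highway was chosen despite heavier congestion (+8.0) because it was faster (-4.0) and avoided a road hazard (-5.0), a rationale a passenger can check against what they see.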

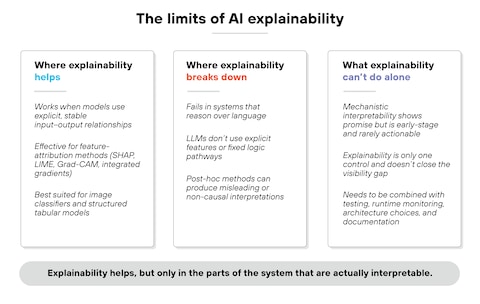

Common Pitfalls and Solutions

Implementing XAI is not without challenges. Here are some common pitfalls and how to address them:

- Sacrificing Accuracy for Explainability: Avoid building models that focus so heavily on being explainable that accuracy suffers.

  - Solution: Balance complexity and interpretability by using hybrid models.

- Complexity of Techniques: XAI methods can be complex and resource-intensive.

  - Solution: Simplify where possible and leverage cloud-based solutions for scalability.

- Resistance to Change: Teams may resist transitioning to XAI due to perceived complexity or inertia.

  - Solution: Provide training and demonstrate the tangible benefits of XAI.
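On the resource-intensity point, one common simplification is to approximate Shapley values by sampling instead of enumerating every feature coalition. The sketch below is a Monte Carlo permutation-sampling estimator, one of several approximation strategies and not SHAP's own algorithm; the function name and the baseline convention (absent features take a reference value) are assumptions.

```python
import numpy as np

def sampled_shapley(f, x, baseline, n_permutations=200, seed=0):
    """Approximate Shapley values by averaging over random feature orderings.

    For each random permutation, features are switched from baseline to x one
    at a time, and each feature is credited with the change in f it causes.
    Cost: n_permutations * (n_features + 1) model calls instead of ~2**n.
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)
    baseline = np.asarray(baseline, dtype=float)
    phi = np.zeros(x.size)
    for _ in range(n_permutations):
        z = baseline.copy()
        prev = f(z)
        for i in rng.permutation(x.size):
            z[i] = x[i]
            curr = f(z)
            phi[i] += curr - prev
            prev = curr
    return phi / n_permutations

# Hypothetical model with one additive term and one interaction term
def model(z):
    return 2 * z[0] + z[1] * z[2]

phi = sampled_shapley(model, [1, 2, 3], [0, 0, 0], n_permutations=500)
print(phi, phi.sum())
```

Each permutation's contributions sum exactly to f(x) - f(baseline), so the estimate keeps that property at any sample size, while the per-feature values converge as `n_permutations` grows. The number of samples becomes a dial that trades accuracy for compute.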

Transparency and accountability are rated as the most important features of Explainable AI, highlighting their role in fostering trust and equity. Estimated data.

Future Trends and Recommendations

As AI continues to evolve, expect several trends to shape the landscape of explainable AI:

- Regulatory Mandates: Regulators are already moving in this direction. The EU AI Act, for example, sets transparency requirements, and more governments and industry bodies are likely to require AI explainability to ensure accountability and fairness.

- Integration of XAI into AI Platforms: Major AI platforms will embed XAI capabilities natively, making it easier for organizations to adopt.

- Advancements in XAI Techniques: Continuous research will lead to more efficient and user-friendly XAI methods.

- Interdisciplinary Collaboration: Teams combining data science, ethics, and policy expertise will drive the development of responsible AI systems.

- Increased Public Awareness: As more people understand AI's role in daily life, demand for transparency will grow.

Conclusion

Explainable AI is not just a technical challenge but a fundamental shift in how we interact with intelligent systems. By making AI processes transparent and accountable, organizations can better leverage AI's potential while mitigating risks. As we move deeper into the agentic era, the importance of explainability will only grow, paving the way for more equitable and trustworthy AI systems.

FAQ

What is Explainable AI?

Explainable AI (XAI) refers to artificial intelligence systems that provide human-understandable insights into their decision-making processes, enhancing transparency and trust.

How does Explainable AI work?

Explainable AI uses techniques like LIME and SHAP to generate human-readable explanations of model predictions, allowing users to understand the factors influencing AI decisions.

What are the benefits of Explainable AI?

Benefits include increased transparency, greater trust in AI systems, regulatory compliance, better decision-making, and easier detection of bias, as supported by Forbes.

Why are black box models becoming obsolete?

Black box models are becoming obsolete because they lack transparency, making it difficult to understand or trust their decisions, especially in critical applications.

What are some challenges in implementing Explainable AI?

Challenges include balancing accuracy with interpretability, managing the complexity of XAI methods, and overcoming organizational resistance to change.

How can organizations transition to Explainable AI?

Organizations can transition by identifying critical areas for explainability, choosing appropriate XAI tools, integrating them with existing systems, and providing training to stakeholders.

What are future trends in Explainable AI?

Future trends include regulatory mandates for explainability, advancements in XAI techniques, integration of XAI into AI platforms, interdisciplinary collaboration, and increased public awareness.

How does Explainable AI impact industries like healthcare and finance?

In healthcare, XAI aids in understanding AI-driven diagnosis recommendations, while in finance, it improves transparency in credit scoring and fraud detection, enhancing trust and compliance.

Key Takeaways

- Explainable AI enhances transparency, fostering trust in AI systems.

- Black box models are increasingly inadequate in dynamic environments.

- Agentic AI demands clear, understandable decision-making processes.

- XAI techniques like LIME and SHAP are crucial for model transparency.

- Future AI regulations will likely mandate explainability.

- Interdisciplinary collaboration will drive responsible AI development.

![Explainable AI is Making Black Box Models Obsolete in the Agentic Era [2025]](https://tryrunable.com/blog/explainable-ai-is-making-black-box-models-obsolete-in-the-ag/image-1-1772984162533.jpg)