OpenAI's New Voice Intelligence Features: A Comprehensive Guide [2025]

Voice intelligence has transformed the way we interact with technology, and OpenAI's latest API update pushes the boundaries further. With the introduction of GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper, developers now have access to tools for building applications that can converse, transcribe, and translate in real time. This article covers these new features: their capabilities, potential use cases, and the technical details of implementing them.

TL;DR

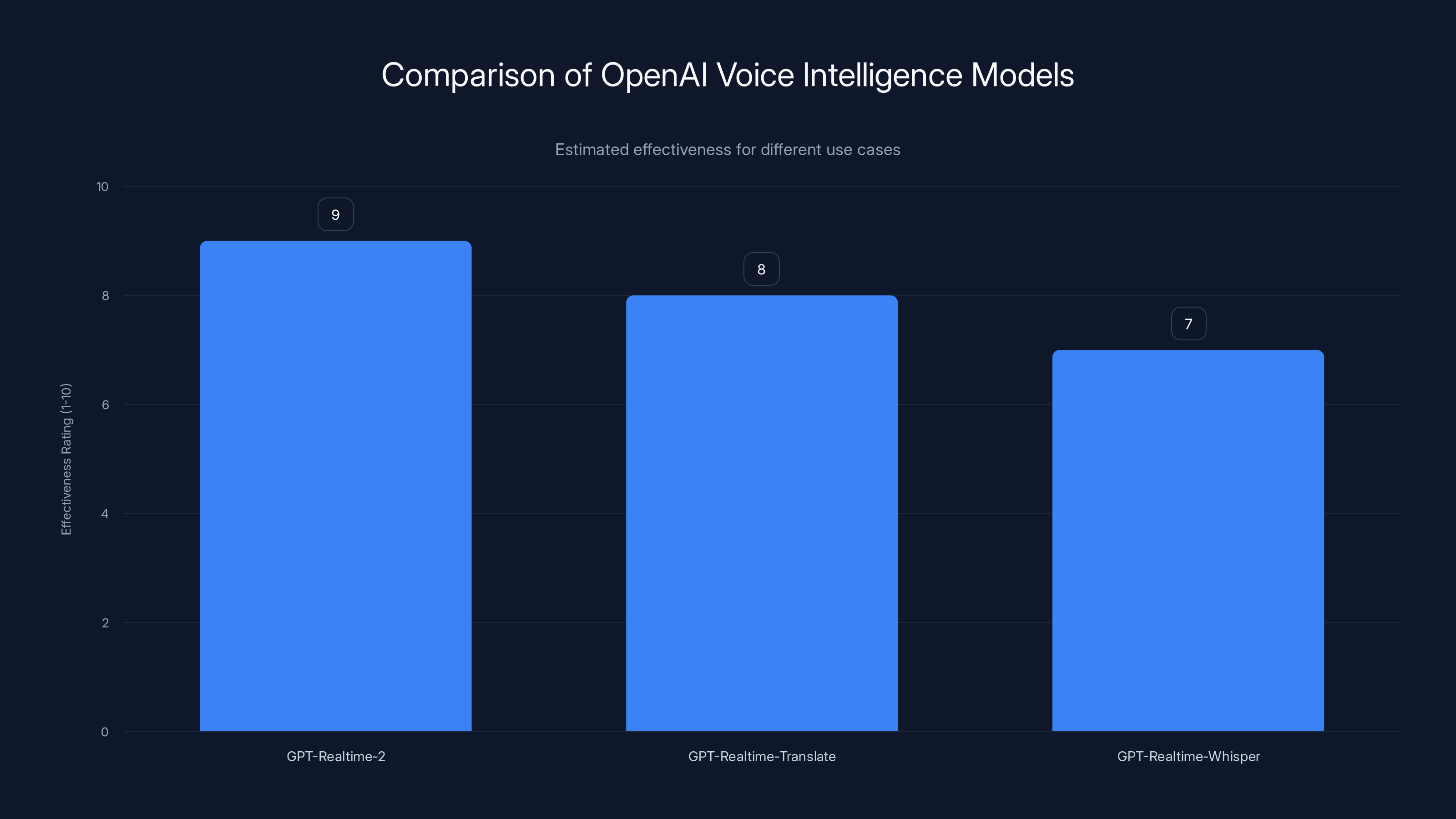

- GPT-Realtime-2: Offers enhanced conversational abilities with GPT-5-class reasoning, creating more realistic vocal simulations, as noted by 9to5Mac.

- GPT-Realtime-Translate: Translates speech in real time from 70+ input languages into 13 output languages while maintaining conversational flow, according to Let's Data Science.

- GPT-Realtime-Whisper: Provides live speech-to-text capabilities, ideal for transcription needs, as highlighted by Interesting Engineering.

- Implementation Tips: Best practices for integrating these features into your applications.

- Future Trends: Predictions on how voice intelligence will evolve and its impact on technology.

GPT-Realtime-2 is highly effective for natural conversation, while GPT-Realtime-Translate excels in translation tasks. Estimated data based on typical use cases.

Understanding OpenAI's Voice Intelligence Suite

OpenAI's latest API enhancements are designed to help developers create more interactive and intelligent applications. The suite includes three major components: GPT-Realtime-2 for natural conversation, GPT-Realtime-Translate for real-time translation, and GPT-Realtime-Whisper for live transcription.

GPT-Realtime-2: Conversational Intelligence

At the heart of OpenAI's voice intelligence offering is GPT-Realtime-2. This model builds upon its predecessor, GPT-Realtime-1.5, by incorporating GPT-5-class reasoning. The result is a system capable of more complex and nuanced conversations, with realistic vocal interactions that can handle intricate requests, as detailed by Blockchain News.

Key Features of GPT-Realtime-2:

- Advanced Reasoning: Leverages GPT-5 capabilities to understand and respond to complex queries.

- Natural Language Processing: Improved NLP techniques for seamless conversation.

- Adaptive Learning: Continuously learns and adapts from interactions to refine responses.

Real-World Use Case: Imagine a customer service bot that not only addresses basic queries but also understands context and previous interactions to provide personalized support. This capability reduces the need for human intervention and enhances the customer experience.

Implementation Challenges: While GPT-Realtime-2 offers impressive capabilities, developers may face challenges in optimizing the model for specific use cases. Fine-tuning the model to understand industry-specific jargon or slang can require additional training data and effort.

GPT-Realtime-Translate: Breaking Language Barriers

Language translation has seen significant advancements, and GPT-Realtime-Translate aims to take it a step further by offering real-time translation services. Supporting over 70 input languages and 13 output languages, this feature ensures that language is no longer a barrier in global communication, as reported by Quartz.

Key Features of GPT-Realtime-Translate:

- Real-Time Processing: Translates conversations on the fly without noticeable delays.

- Wide Language Support: Comprehensive coverage of both input and output languages.

- Contextual Understanding: Maintains the conversational context to provide accurate translations.

Real-World Use Case: Consider a multinational corporation conducting a global meeting with participants speaking different languages. GPT-Realtime-Translate can facilitate real-time communication, ensuring everyone understands the discussion as it happens.

Potential Pitfalls and Solutions: One challenge with real-time translation is maintaining accuracy in rapidly spoken languages or dialects. Developers can address this by integrating language models tailored to specific dialects or using pre-processing steps to clean audio inputs.

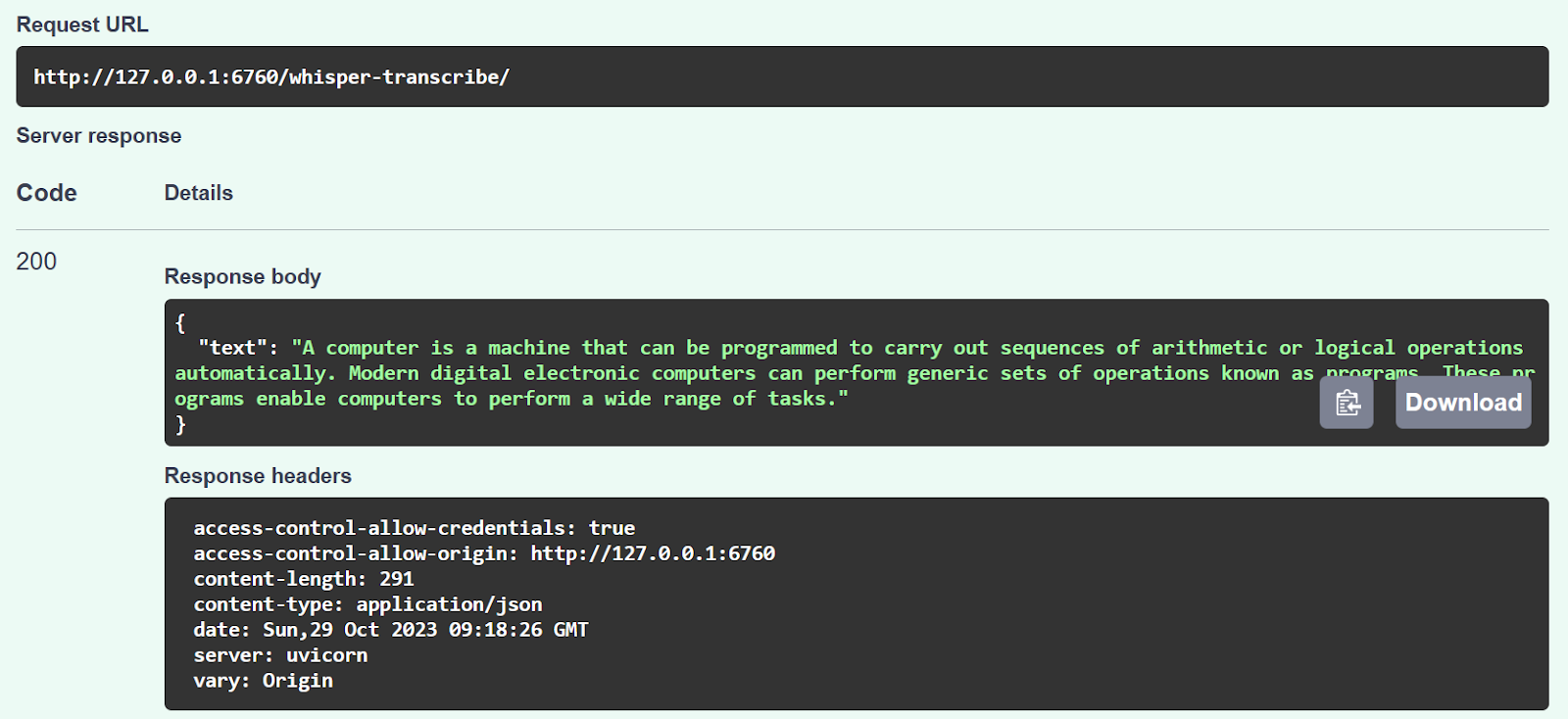

GPT-Realtime-Whisper: Accurate Transcription

Transcribing speech to text accurately and in real-time is crucial for many applications, from meeting notes to accessibility tools. GPT-Realtime-Whisper addresses this need by providing robust speech-to-text capabilities, as described by The Decoder.

Key Features of GPT-Realtime-Whisper:

- High Accuracy: Achieves near-human-level transcription accuracy.

- Real-Time Output: Delivers transcriptions instantly as speech is detected.

- Noise Reduction: Incorporates advanced noise filtering to improve clarity.

Real-World Use Case: An educational platform can leverage GPT-Realtime-Whisper to provide real-time captions for live lectures, making content accessible to students with hearing impairments.

Implementation Best Practices: To ensure optimal performance, developers should focus on high-quality audio inputs and consider using external noise-canceling hardware or software.

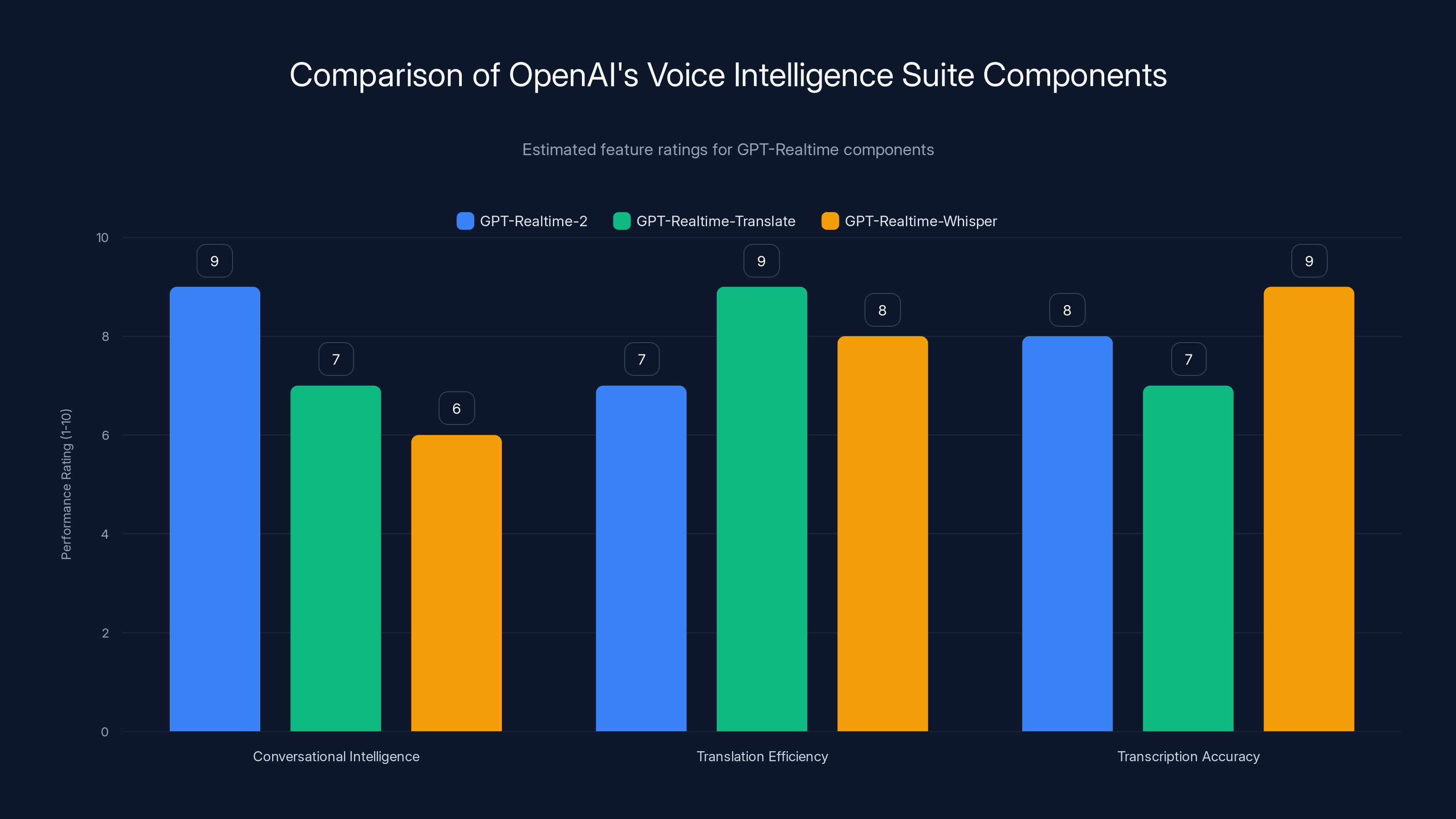

GPT-Realtime-2 excels in conversational intelligence, while GPT-Realtime-Translate and GPT-Realtime-Whisper lead in translation efficiency and transcription accuracy respectively. Estimated data.

Technical Implementation Guide

Integrating OpenAI's voice intelligence features into your applications requires careful planning and execution. This section provides a step-by-step guide to implementing these features effectively.

Step 1: Setting Up the API

Before diving into implementation, ensure that you have access to OpenAI's API. Sign up on the official OpenAI website and obtain your API key.

Checklist for API Setup:

- Register for an OpenAI developer account.

- Obtain your unique API key.

- Review the API documentation for specific endpoints and parameters.
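
Once you have a key, a common practice is to keep it out of source code and read it from the environment at startup. The sketch below assumes the key is stored in an environment variable named `OPENAI_API_KEY`; the helper name `load_api_key` is purely illustrative.

```python
import os


def load_api_key(env_var: str = "OPENAI_API_KEY") -> str:
    """Return the API key stored in an environment variable.

    Raises RuntimeError when the variable is missing or blank, so a
    misconfigured deployment fails at startup instead of mid-request.
    """
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable before making requests.")
    return key
```

Failing early like this makes a missing key show up as a clear configuration error rather than as an authentication failure later on.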

Step 2: Choosing the Right Model

Each voice intelligence feature is designed for specific use cases. Selecting the right model is crucial for achieving the desired results.

Considerations for Model Selection:

- GPT-Realtime-2: Ideal for applications requiring natural conversation and customer interaction.

- GPT-Realtime-Translate: Best for real-time language translation needs.

- GPT-Realtime-Whisper: Suitable for transcription services and accessibility tools.
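
The selection guidance above can be captured as a small lookup so the rest of the application never hard-codes model names. This is a minimal sketch: the `VoiceTask` enum and `choose_model` helper are hypothetical, and the lowercase identifiers for the translation and transcription models are assumptions, so confirm the exact strings in the API documentation.

```python
from enum import Enum


class VoiceTask(Enum):
    CONVERSATION = "conversation"
    TRANSLATION = "translation"
    TRANSCRIPTION = "transcription"


# Assumed model identifiers; verify the exact names before use.
MODEL_FOR_TASK = {
    VoiceTask.CONVERSATION: "gpt-realtime-2",
    VoiceTask.TRANSLATION: "gpt-realtime-translate",
    VoiceTask.TRANSCRIPTION: "gpt-realtime-whisper",
}


def choose_model(task: VoiceTask) -> str:
    """Return the model identifier configured for the given task."""
    return MODEL_FOR_TASK[task]
```

Keeping the mapping in one place means a model rename or upgrade is a one-line change.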

Step 3: Integrating the API

With the API key and model selection in hand, you can proceed to integrate the API into your application.

Integration Steps:

- Authentication: Use your API key to authenticate requests to OpenAI's servers.

- Endpoint Configuration: Set up API endpoints for each feature you plan to use.

- Data Handling: Ensure secure data transmission and storage, especially for sensitive information.

- Testing: Conduct thorough testing to identify any issues with the integration.
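
One way to keep authentication, endpoint configuration, and data handling together is a small per-feature configuration object. The sketch below is an assumption-heavy illustration: `VoiceFeatureConfig` is a hypothetical class, and the URL used in the usage example is a placeholder rather than a real endpoint. It sends the key as a bearer token, keeps the key out of logged representations, and rejects unencrypted URLs.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class VoiceFeatureConfig:
    name: str
    model: str
    url: str  # placeholder; take the real endpoint from the API documentation
    api_key: str = field(repr=False)  # excluded from repr() so it stays out of logs

    def headers(self) -> dict[str, str]:
        """Build bearer-token headers for requests to this feature."""
        return {"Authorization": f"Bearer {self.api_key}"}

    def validate(self) -> None:
        """Reject endpoints that would send audio or keys over an unencrypted channel."""
        if not self.url.startswith(("https://", "wss://")):
            raise ValueError(f"{self.name}: endpoint must use https:// or wss://")
```

For example, `VoiceFeatureConfig("conversation", "gpt-realtime-2", "wss://example.invalid/realtime", key).validate()` passes, while the same configuration with an `http://` URL raises `ValueError`.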

Sample API Request (illustrative payload shape, not the exact API schema):

```json
{
  "model": "gpt-realtime-2",
  "input": "Hello, can you help me with my order?",
  "output": "Of course! What seems to be the issue?"
}
```

Step 4: Optimizing Performance

To ensure seamless user experiences, optimize the performance of your application with these best practices.

Performance Optimization Techniques:

- Load Balancing: Distribute API requests evenly to prevent server overload.

- Caching: Utilize caching strategies for frequent requests to reduce latency.

- Error Handling: Implement robust error handling to manage API outages gracefully.
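
As an illustration of the error-handling point, the sketch below retries a failing call with exponential backoff and jitter. It is a generic pattern rather than anything specific to OpenAI's SDK: `call_with_retries` is a hypothetical helper, and treating `ConnectionError` and `TimeoutError` as the retryable failures is an assumption you should adapt to whatever exceptions your HTTP or WebSocket client raises.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retries(
    operation: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `operation`, retrying transient failures with exponential backoff.

    The wait before retry n is base_delay * 2**(n - 1), plus up to 50% random
    jitter so that many clients do not retry in lockstep.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except (ConnectionError, TimeoutError):
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error to the caller
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay / 2))
    raise RuntimeError("unreachable")
```

The `sleep` parameter exists so tests can pass a stub and run instantly; production code can rely on the default.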

Step 5: Monitoring and Feedback

Once your application is live, continuous monitoring and feedback collection are essential for ongoing improvement.

Monitoring Tools:

- Analytics: Track API usage metrics to understand user behavior.

- Feedback Systems: Implement systems to collect user feedback on feature performance.

- Error Logs: Regularly review error logs to identify and resolve issues promptly.
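
A lightweight way to start tracking usage is to record latency and success for each call and summarize per model. The sketch below is an in-memory illustration only; `UsageMonitor` is a hypothetical class, and a production system would more likely export these numbers to an existing metrics or logging stack.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class UsageStats:
    latencies_ms: list[float] = field(default_factory=list)
    errors: int = 0


class UsageMonitor:
    """Collects per-model request counts, error counts, and latencies in memory."""

    def __init__(self) -> None:
        self._stats: dict[str, UsageStats] = defaultdict(UsageStats)

    def record(self, model: str, latency_ms: float, ok: bool = True) -> None:
        stats = self._stats[model]
        stats.latencies_ms.append(latency_ms)
        if not ok:
            stats.errors += 1

    def summary(self, model: str) -> dict[str, float]:
        """Return request count, error rate, and mean latency for one model."""
        stats = self._stats.get(model, UsageStats())
        total = len(stats.latencies_ms)
        if total == 0:
            return {"requests": 0, "error_rate": 0.0, "mean_latency_ms": 0.0}
        return {
            "requests": total,
            "error_rate": stats.errors / total,
            "mean_latency_ms": mean(stats.latencies_ms),
        }
```

Calling `record` around each API call and reviewing `summary` periodically gives an early signal when error rates or latency drift.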

Common Pitfalls and Solutions

Despite the advanced capabilities of OpenAI's voice intelligence features, developers may encounter challenges during implementation. This section outlines common pitfalls and solutions to help you navigate potential issues.

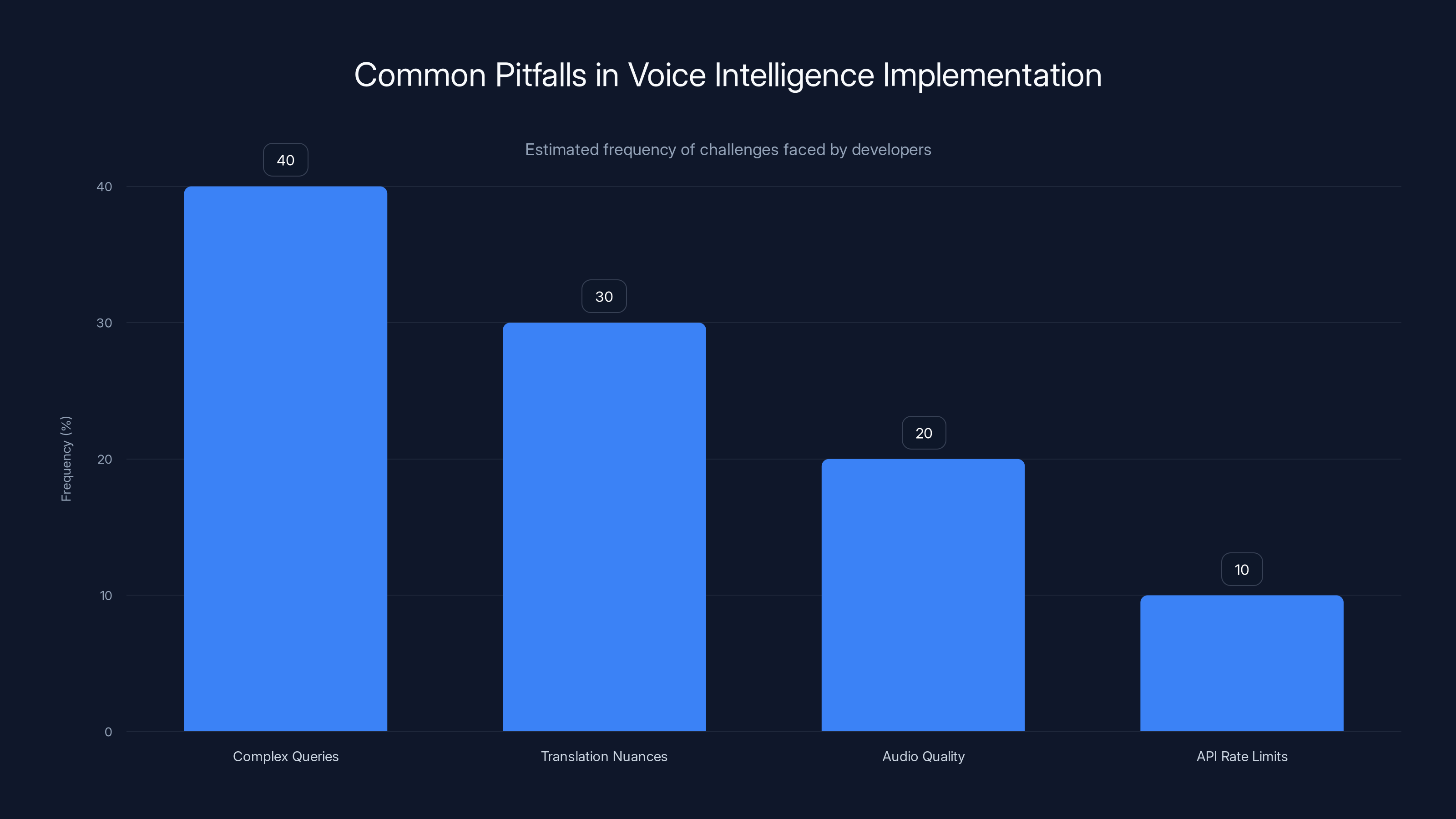

Pitfall 1: Handling Complex Queries

Challenge: GPT-Realtime-2 may struggle with highly complex or ambiguous queries.

Solution:

- Break down complex queries into simpler sub-queries.

- Use context-aware prompts to guide the model's responses.
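
A context-aware prompt can be as simple as prepending the most recent turns of the conversation to the new query. The sketch below shows one possible shape; the helper name `build_context_prompt`, the instruction wording, and the `speaker: text` line format are all assumptions rather than a format the API requires.

```python
def build_context_prompt(
    history: list[tuple[str, str]],
    query: str,
    max_turns: int = 3,
) -> str:
    """Combine the last `max_turns` (speaker, text) turns with the new query."""
    recent = history[-max_turns:] if max_turns > 0 else []
    lines = ["Use the conversation so far to resolve ambiguous references."]
    for speaker, text in recent:
        lines.append(f"{speaker}: {text}")
    lines.append(f"user: {query}")
    return "\n".join(lines)
```

Capping the number of turns keeps the prompt short while still giving the model enough context to interpret follow-ups such as "and the other one?".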

Pitfall 2: Language Translation Nuances

Challenge: GPT-Realtime-Translate may miss nuanced meanings in certain languages.

Solution:

- Customize language models with region-specific datasets.

- Incorporate human-in-the-loop for critical translations.
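
A human-in-the-loop step usually comes down to a routing rule that decides which translations can be delivered automatically and which should wait for a reviewer. The sketch below assumes your own pipeline attaches a confidence score between 0 and 1 to each segment; that score, the `TranslatedSegment` type, and the 0.85 threshold are hypothetical and not something the article's sources describe the API returning.

```python
from dataclasses import dataclass


@dataclass
class TranslatedSegment:
    source: str
    translation: str
    confidence: float  # hypothetical score in [0, 1] from your own pipeline
    critical: bool = False  # e.g. legal or medical content


def needs_human_review(segment: TranslatedSegment, threshold: float = 0.85) -> bool:
    """Flag segments that are critical or fall below the confidence threshold."""
    return segment.critical or segment.confidence < threshold


def split_for_review(
    segments: list[TranslatedSegment], threshold: float = 0.85
) -> tuple[list[TranslatedSegment], list[TranslatedSegment]]:
    """Return (auto_approved, needs_review), preserving the original order."""
    auto: list[TranslatedSegment] = []
    review: list[TranslatedSegment] = []
    for segment in segments:
        (review if needs_human_review(segment, threshold) else auto).append(segment)
    return auto, review
```

Marking content as critical regardless of score ensures that high-stakes passages always get a second pair of eyes.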

Pitfall 3: Audio Quality Issues

Challenge: Poor audio quality can lead to inaccurate transcriptions by GPT-Realtime-Whisper.

Solution:

- Use high-quality microphones and noise-canceling technology.

- Pre-process audio files to enhance clarity and reduce noise.
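
To make the pre-processing idea concrete, the sketch below applies two very simple operations to 16-bit PCM samples held as Python integers: a crude noise gate and peak normalization. Both functions and their default thresholds are illustrative assumptions; real pipelines typically use dedicated audio libraries and more sophisticated noise suppression.

```python
def noise_gate(samples: list[int], threshold: int = 500) -> list[int]:
    """Zero out samples whose magnitude is below `threshold` (a crude gate)."""
    return [s if abs(s) >= threshold else 0 for s in samples]


def normalize_peak(samples: list[int], target_peak: int = 30000) -> list[int]:
    """Scale samples so the loudest one reaches `target_peak`.

    Results are clamped to the signed 16-bit range. Silent or empty input
    is returned unchanged.
    """
    peak = max((abs(s) for s in samples), default=0)
    if peak == 0:
        return list(samples)
    gain = target_peak / peak
    return [max(-32768, min(32767, round(s * gain))) for s in samples]
```

Applying the gate before normalization avoids amplifying low-level background hiss along with the speech.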

Pitfall 4: API Rate Limits

Challenge: High-volume applications may hit API rate limits, affecting performance.

Solution:

- Implement request throttling to manage API call rates.

- Contact OpenAI for higher rate limits if necessary.
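
Request throttling is often implemented client-side with a token bucket, which allows short bursts while capping the sustained request rate. The sketch below is a single-threaded illustration; `TokenBucket` is a hypothetical class, and the rate and capacity you choose should come from the limits that actually apply to your account.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts of up to `capacity` calls, refilling at `rate_per_sec`."""

    def __init__(
        self,
        rate_per_sec: float,
        capacity: int,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        if rate_per_sec <= 0 or capacity <= 0:
            raise ValueError("rate_per_sec and capacity must be positive")
        self.rate = rate_per_sec
        self.capacity = capacity
        self._clock = clock
        self._tokens = float(capacity)  # start full so initial bursts succeed
        self._last = clock()

    def try_acquire(self) -> bool:
        """Consume one token if available; return False when the caller should wait."""
        now = self._clock()
        elapsed = now - self._last
        self._tokens = min(self.capacity, self._tokens + elapsed * self.rate)
        self._last = now
        if self._tokens >= 1:
            self._tokens -= 1
            return True
        return False
```

When `try_acquire` returns `False`, the caller can queue the request or sleep briefly before trying again instead of sending a call that is likely to be rejected.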

Complex queries and translation nuances are the most common challenges developers face when implementing voice intelligence features. Estimated data.

Future Trends in Voice Intelligence

The future of voice intelligence is promising, with significant advancements on the horizon. Here are some key trends to keep an eye on:

Trend 1: Multimodal Interaction

As AI systems become more sophisticated, expect to see greater integration of voice, visual, and textual inputs for more immersive user experiences.

Trend 2: Emotion Detection

Voice intelligence will increasingly incorporate emotion detection, enabling applications to respond intuitively to users' emotional states.

Trend 3: Personalized AI Assistants

Future AI assistants will offer highly personalized interactions, learning from user preferences and behaviors to tailor responses accordingly.

Trend 4: Wider Language Support

Expect to see expanded language support, allowing voice intelligence systems to cater to even more diverse global audiences.

Trend 5: Enhanced Security Features

As voice intelligence becomes more prevalent, security features will evolve to protect user privacy and data integrity.

Conclusion

OpenAI's latest voice intelligence features represent a significant step forward in AI capabilities. By leveraging GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper, developers can create applications that not only understand users but also interact with them in more natural and meaningful ways. With careful implementation and a focus on continuous improvement, these tools can change how we communicate with technology, breaking down language barriers and bridging communication gaps in real time.

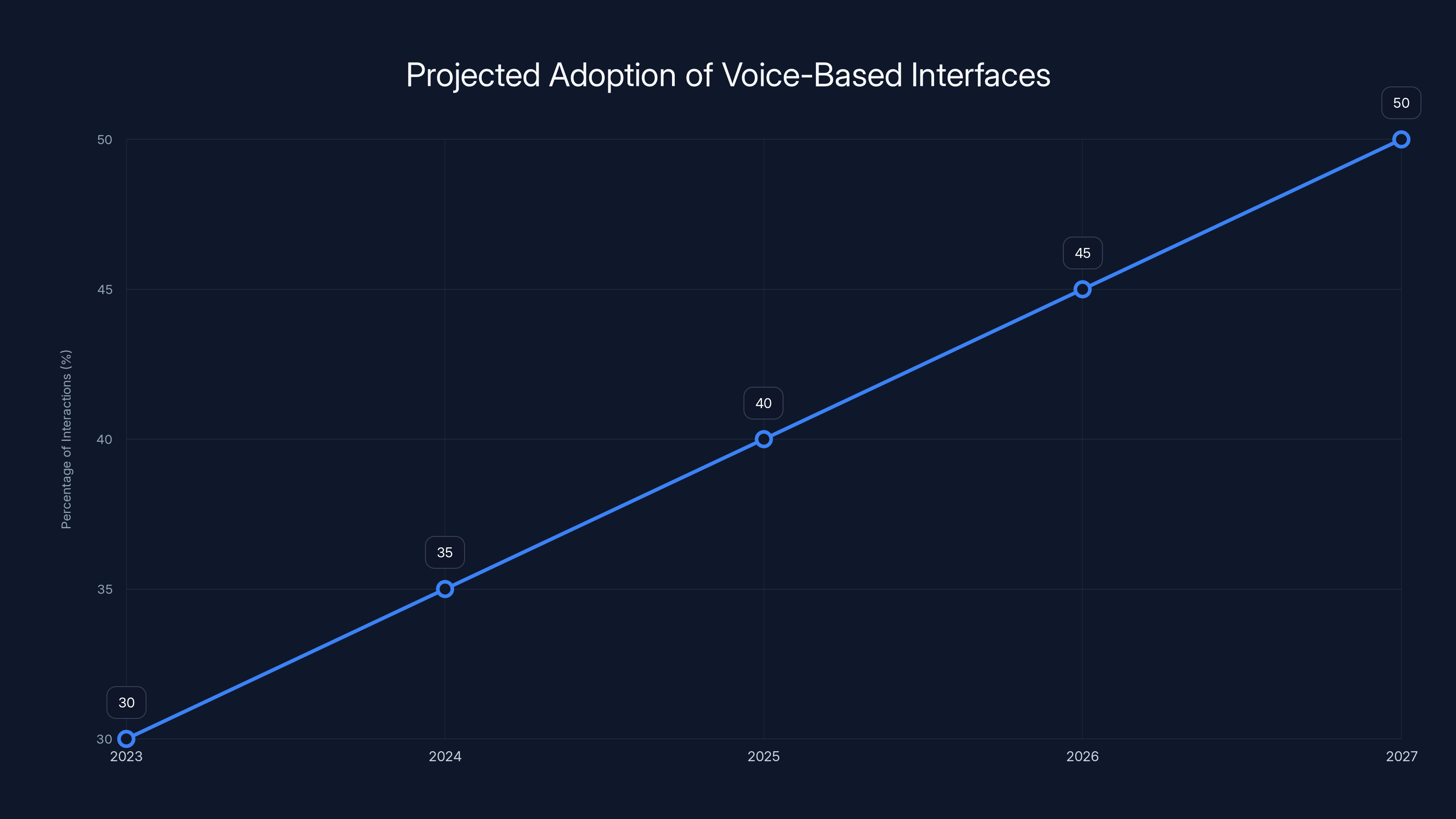

By 2027, over 50% of interactions with technology are expected to involve voice-based interfaces, highlighting the growing importance of voice intelligence. (Estimated data)

FAQ

What is GPT-Realtime-2?

GPT-Realtime-2 is a voice model developed by OpenAI that enhances conversational capabilities with GPT-5-class reasoning, allowing for more complex and nuanced interactions.

How does GPT-Realtime-Translate work?

GPT-Realtime-Translate provides real-time translation services, supporting over 70 input languages and 13 output languages, and maintaining conversational flow and context.

What are the benefits of GPT-Realtime-Whisper?

GPT-Realtime-Whisper offers live speech-to-text capabilities with high accuracy and noise reduction, making it ideal for transcription and accessibility tools.

How can I integrate OpenAI's voice intelligence features into my application?

To integrate these features, you'll need to obtain an OpenAI API key, choose the appropriate model, configure API endpoints, and optimize performance through load balancing and caching strategies.

What are some common challenges with implementing voice intelligence features?

Common challenges include handling complex queries, managing language translation nuances, ensuring audio quality for accurate transcription, and dealing with API rate limits.

What future trends can we expect in voice intelligence?

Future trends include multimodal interaction, emotion detection, personalized AI assistants, wider language support, and enhanced security features.

Key Takeaways

- OpenAI's GPT-Realtime-2 enhances conversational capabilities with advanced reasoning.

- GPT-Realtime-Translate supports real-time translation in over 70 input languages.

- GPT-Realtime-Whisper provides accurate live transcription with noise reduction.

- Integrating these features requires careful API setup and performance optimization.

- Future trends include multimodal interaction and emotion detection in voice AI.
