Five Signs Data Drift is Undermining Your Security Models [2025]

Data drift is one of those sneaky issues that can quietly dismantle your machine learning (ML) models if you're not vigilant. You're probably relying on these models to keep your cybersecurity game tight, spotting malware, analyzing network threats, and more. But what happens when the data feeding them starts to change? Let's dig into this.

TL;DR

- Data Drift Defined: When input data's statistical properties change over time, impacting ML model accuracy.

- Impact on Security: Leads to increased false negatives and false positives.

- Early Detection: Crucial for maintaining cybersecurity integrity.

- Strategy Implementation: Regular data monitoring and model retraining.

- Future-Proofing: Incorporate adaptive learning techniques.

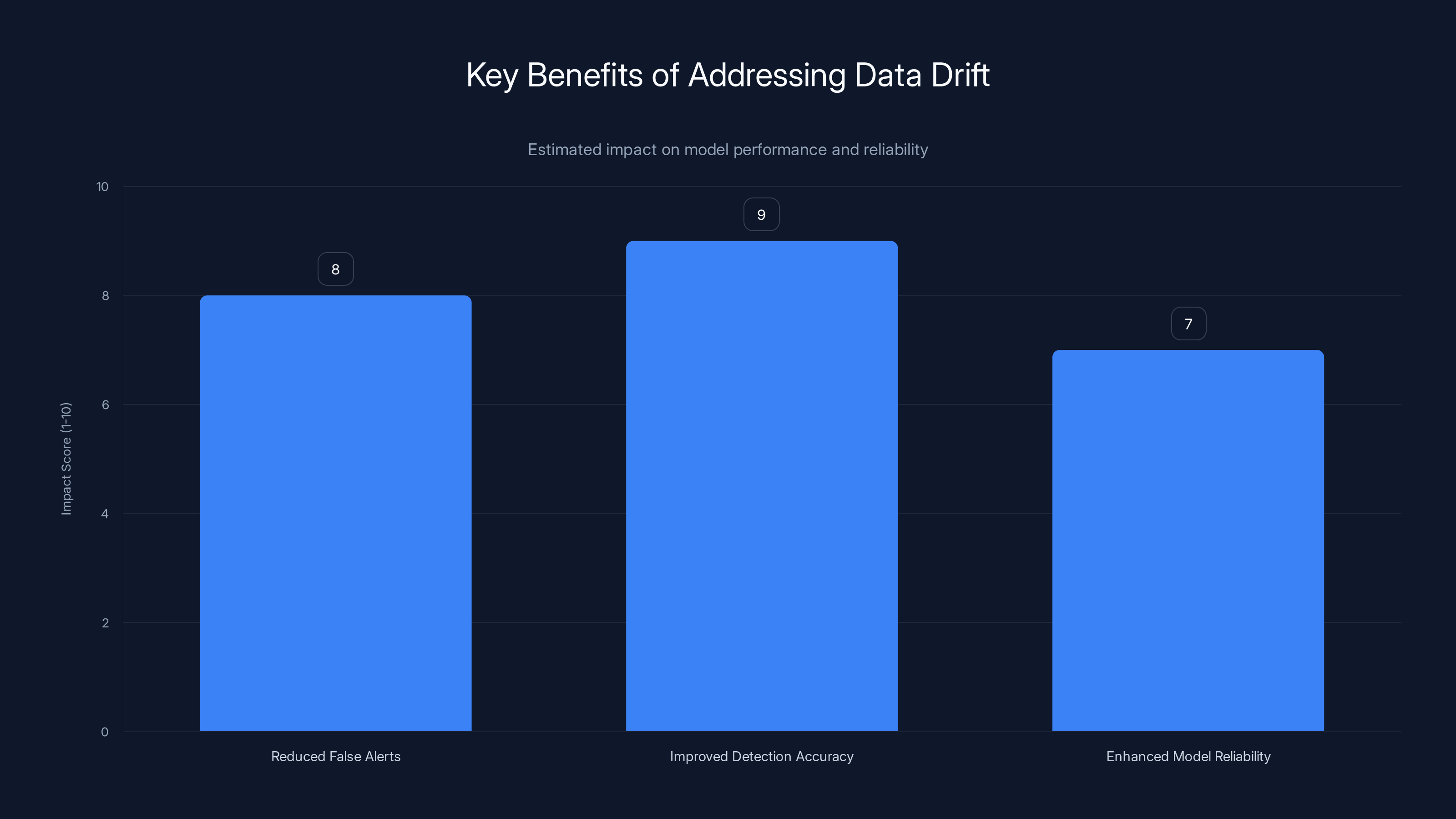

Addressing data drift significantly reduces false alerts, improves detection accuracy, and enhances model reliability. (Estimated data)

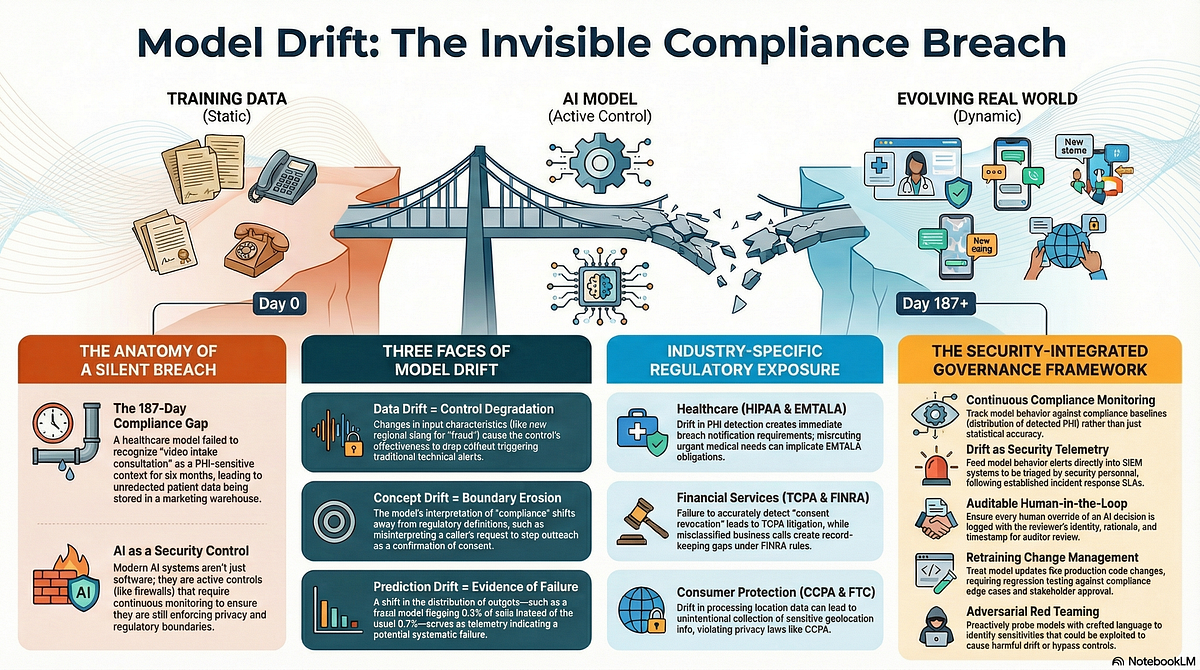

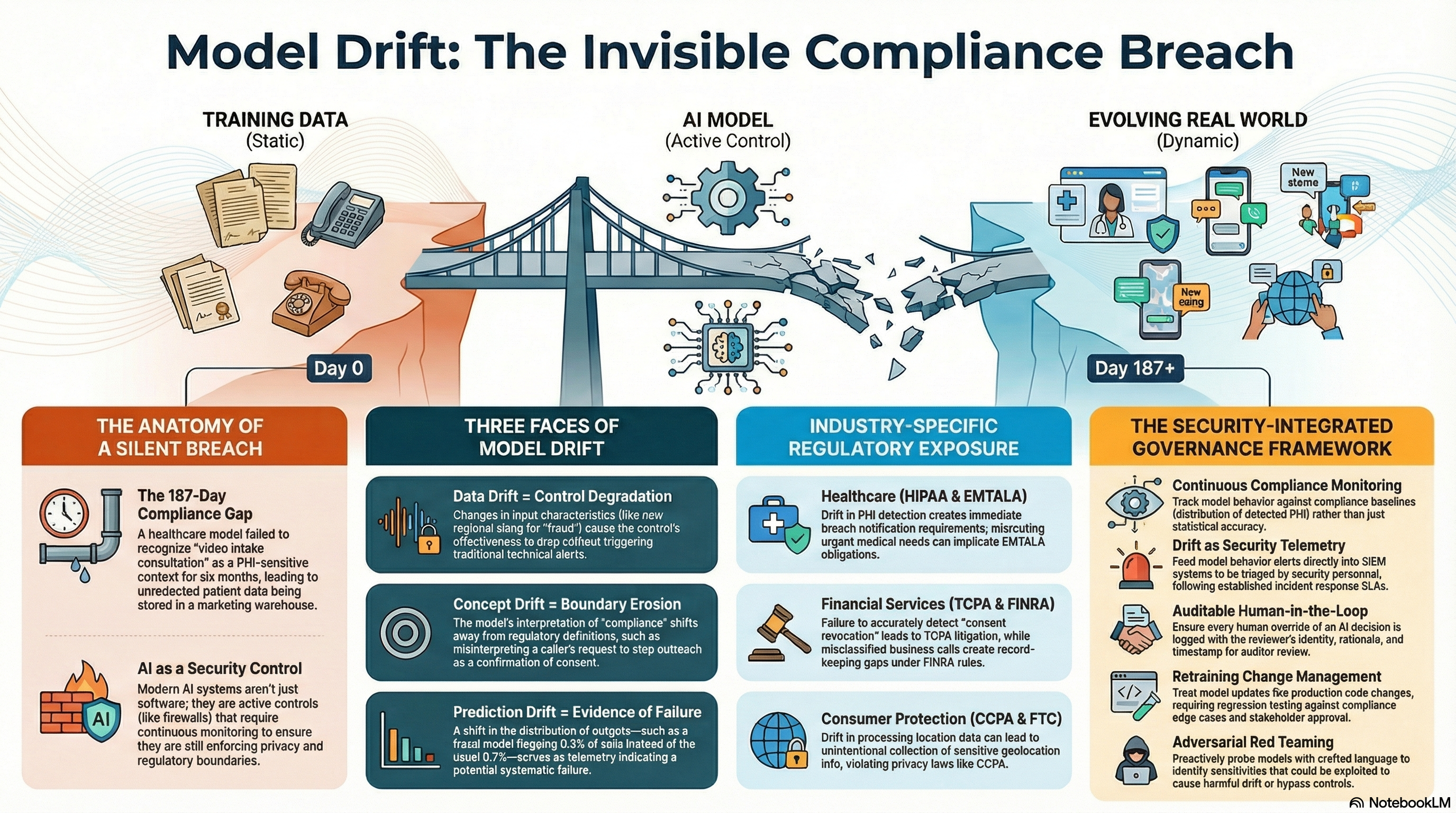

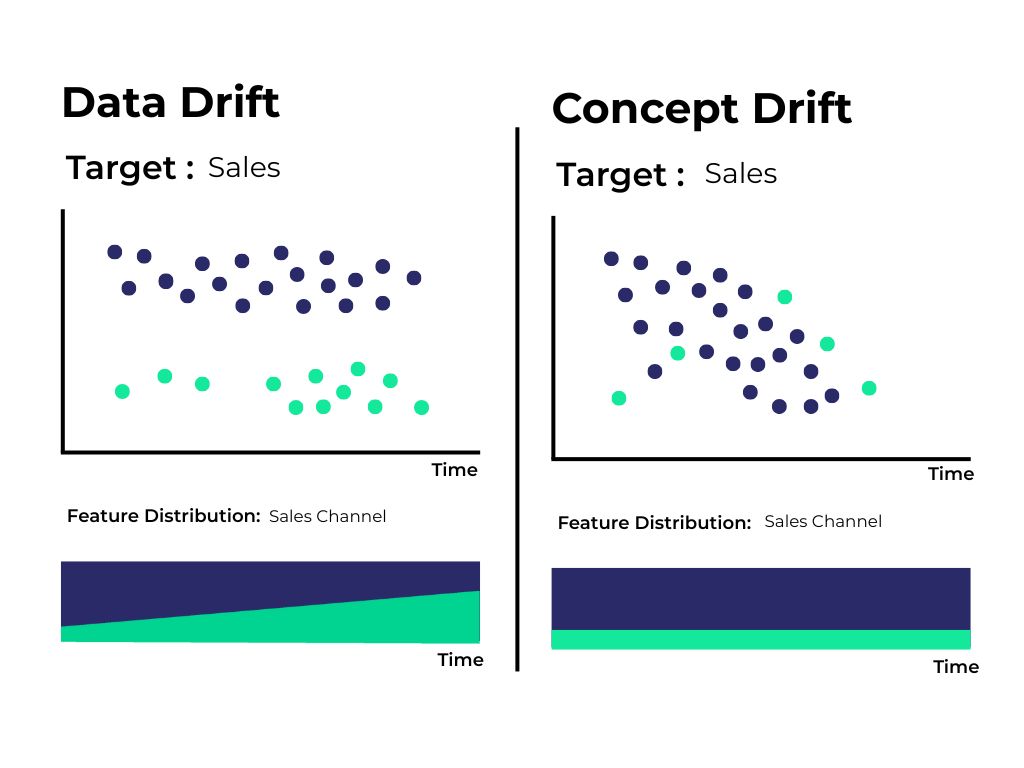

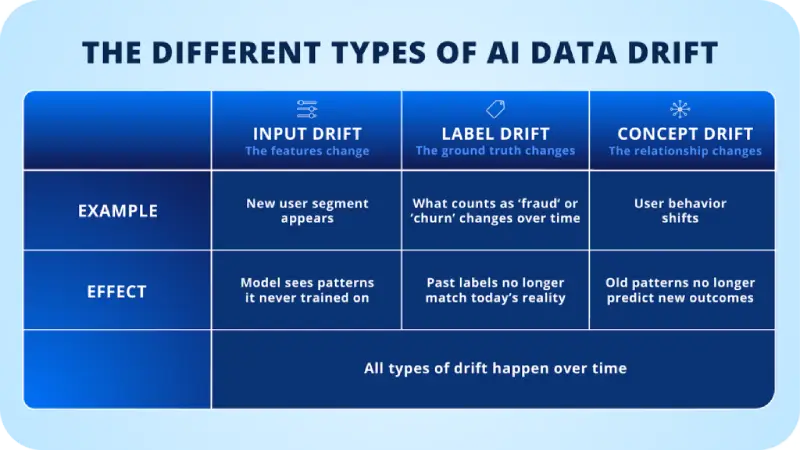

Understanding Data Drift

Data drift happens when the statistical properties of the data fed to your machine learning models change over time. Imagine training a model on last year's data: it might not recognize the new types of cyber threats appearing today. That's data drift, and it's a big deal in security.
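One way to put a number on "statistical properties have changed" is the Population Stability Index (PSI), which compares how a feature is distributed in a reference sample versus a recent one. The sketch below is a minimal, standard-library version under a few assumptions: both samples are non-empty lists of numbers, bins are equal-width over the reference range, and the function name and default bin count are illustrative choices rather than anything prescribed here.

```python
import math

def population_stability_index(reference, current, bins=10):
    """Return the PSI between two numeric samples (0.0 means identical bins)."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # all reference values equal; avoid dividing by zero

    def bin_fractions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clamped into the edge bins.
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1
        # A small floor keeps log() defined when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    ref = bin_fractions(reference)
    cur = bin_fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))

# Illustrative data: last year's feature values vs. a shifted recent sample.
last_year = [i % 100 for i in range(1000)]
this_week = [v + 40 for v in last_year]
print(population_stability_index(last_year, this_week))
```

A commonly quoted rule of thumb treats PSI below about 0.1 as stable and above about 0.25 as a significant shift, but those cut-offs are conventions, so tune them against your own data.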

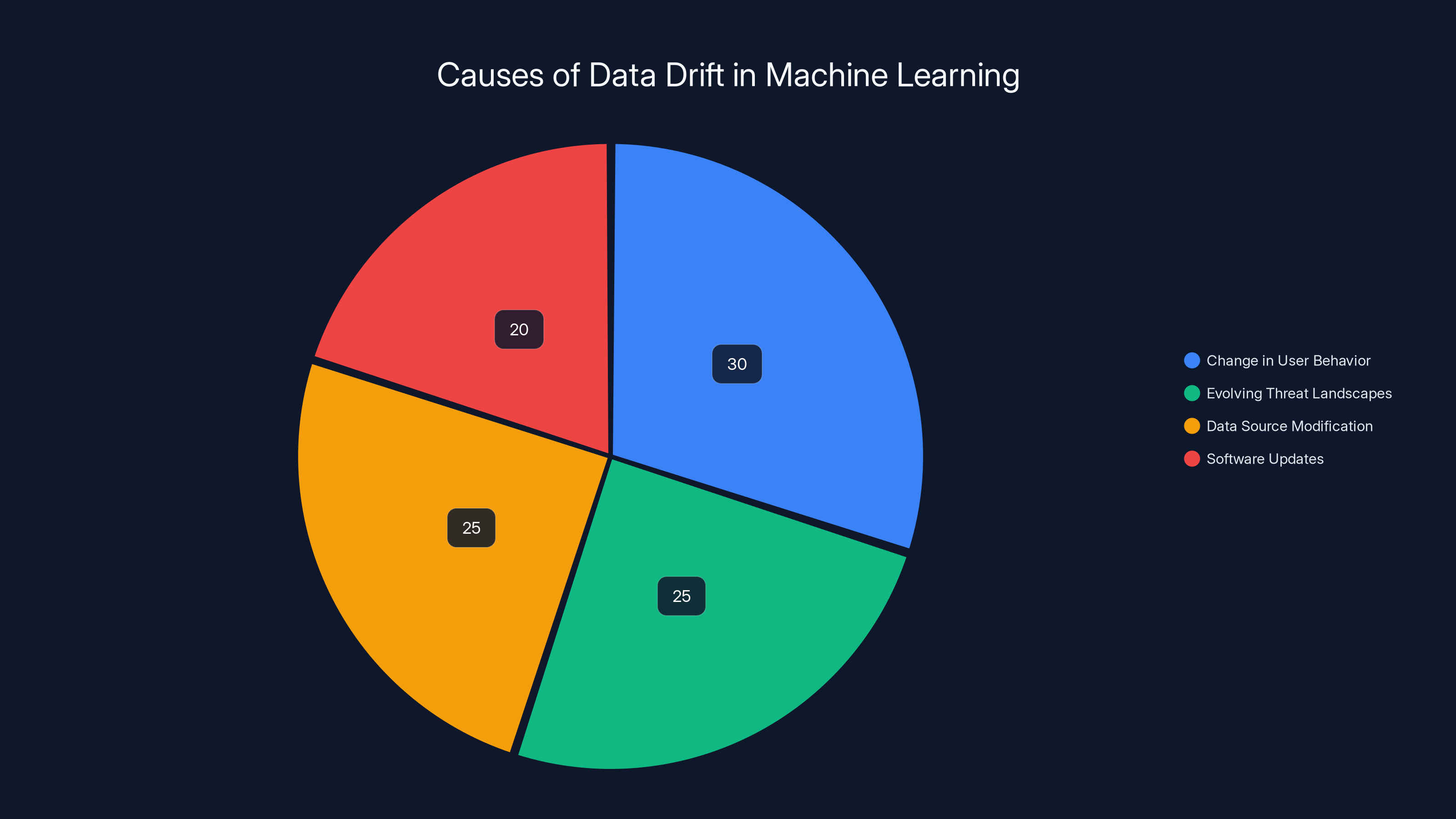

What Causes Data Drift?

- Change in User Behavior: Users tend to change how they interact with systems. What was normal a year ago might be suspicious today.

- Evolving Threat Landscapes: Cyber threats are constantly evolving, with attackers developing new methods to bypass security.

- Data Source Modification: Changes in data collection methods or new data sources can alter input data characteristics.

- Software Updates: Updates in software and platforms can lead to changes in how data is logged and interpreted.

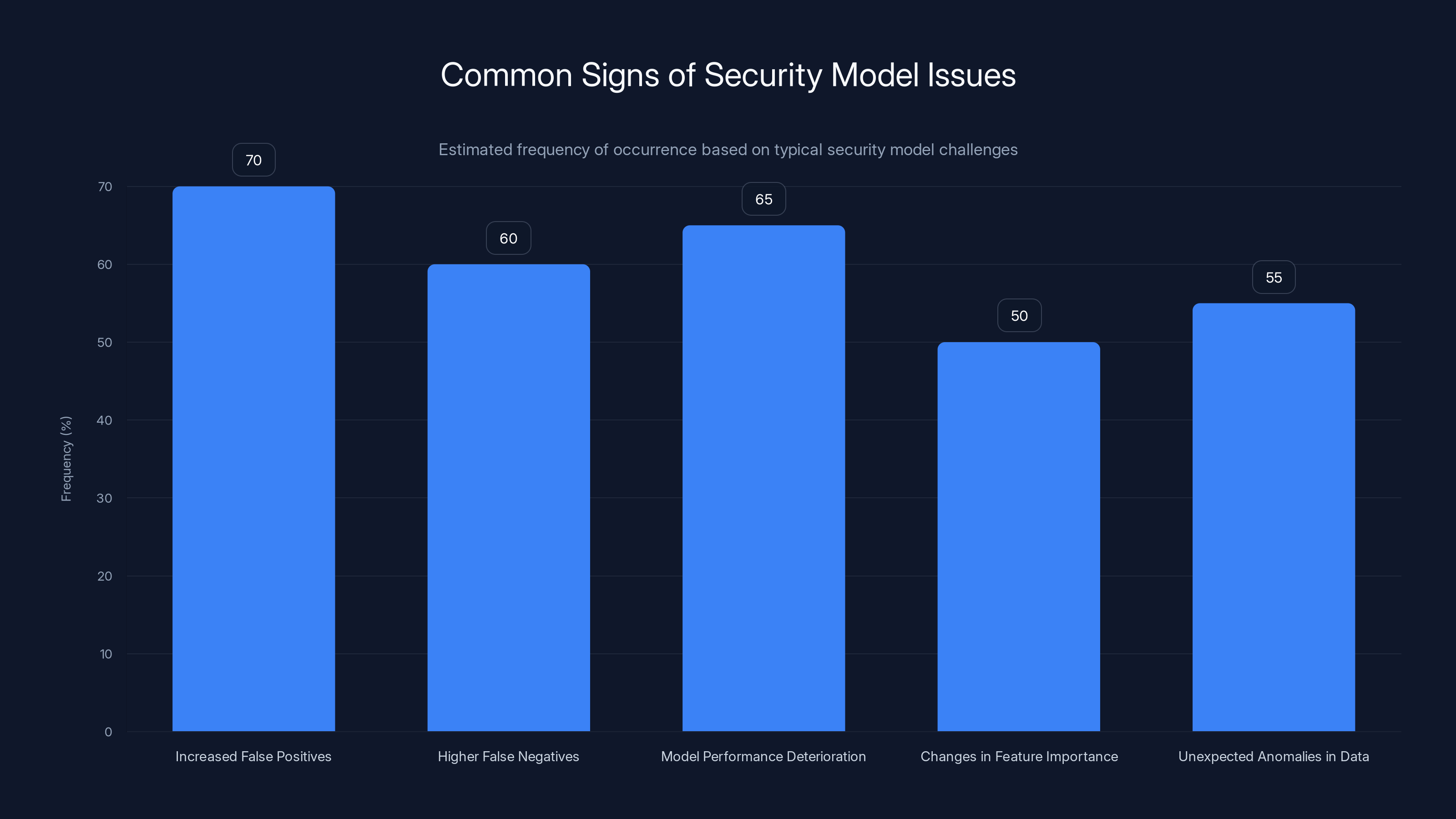

The chart illustrates estimated frequencies of common signs indicating issues in security models. Increased false positives and model performance deterioration are among the most frequently observed signs. Estimated data.

Signs Your Security Models Are Affected

1. Increased False Positives

If your security model starts flagging too many legitimate actions as threats, you might be looking at a case of data drift. This can lead to alert fatigue, where real threats might get ignored because of the noise.

- Example: A network intrusion detection system begins flagging benign traffic more often due to outdated training data.

2. Higher False Negatives

On the flip side, when your model misses actual threats, it's a serious issue. A model trained on old attack patterns might not catch new, sophisticated threats.

- Example: Malware detection tools fail to identify new variants of existing malware because the model hasn't been updated with recent data.

3. Model Performance Deterioration

A drop in overall model performance, such as lower accuracy or precision, can be a strong indication of data drift.

- Example: The precision of a phishing detection model decreases over time as phishing tactics evolve.
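The first three signs can all be read from a confusion matrix computed per time window and compared against a baseline window. The following is a rough sketch, assuming you have analyst-confirmed labels for a sample of alerts; the function names and the 0.05 tolerance are hypothetical choices for illustration.

```python
def window_metrics(labels, predictions):
    """Compute precision, false positive rate, and false negative rate.

    labels and predictions are equal-length sequences of 0/1 values,
    where 1 means "threat".
    """
    tp = fp = fn = tn = 0
    for actual, predicted in zip(labels, predictions):
        if predicted and actual:
            tp += 1
        elif predicted and not actual:
            fp += 1
        elif not predicted and actual:
            fn += 1
        else:
            tn += 1

    def ratio(numerator, denominator):
        return numerator / denominator if denominator else 0.0

    return {
        "precision": ratio(tp, tp + fp),
        "false_positive_rate": ratio(fp, fp + tn),
        "false_negative_rate": ratio(fn, fn + tp),
    }

def degraded_metrics(baseline, current, tolerance=0.05):
    """List the metrics that moved in the wrong direction by more than tolerance."""
    flagged = []
    if current["precision"] < baseline["precision"] - tolerance:
        flagged.append("precision")
    for name in ("false_positive_rate", "false_negative_rate"):
        if current[name] > baseline[name] + tolerance:
            flagged.append(name)
    return flagged

baseline = window_metrics([1, 1, 0, 0], [1, 1, 0, 0])
this_week = window_metrics([1, 1, 0, 0], [1, 0, 1, 0])
print(degraded_metrics(baseline, this_week))
```

Tracking the three rates separately matters because a single accuracy figure can stay flat while false positives and false negatives trade places.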

4. Changes in Feature Importance

If the importance of features in your model changes significantly, it's a sign that the relationships between data inputs and outputs have shifted.

- Example: A network security model starts giving more weight to previously less important features, indicating a shift in threat patterns.
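A simple way to watch for this is to compare each feature's share of total importance between two snapshots of the model. The sketch below assumes you can already export non-negative importance scores as a dictionary (for example, from a tree-based model); the feature names and the 0.10 threshold are made up for illustration.

```python
def importance_shift(baseline, current, min_change=0.10):
    """Return features whose share of total importance moved by min_change or more."""

    def normalize(scores):
        total = sum(scores.values())
        if total == 0:
            raise ValueError("importance scores sum to zero")
        return {name: value / total for name, value in scores.items()}

    base, cur = normalize(baseline), normalize(current)
    shifts = {}
    for name in set(base) | set(cur):
        # A feature missing from one snapshot counts as zero importance there.
        delta = cur.get(name, 0.0) - base.get(name, 0.0)
        if abs(delta) >= min_change:
            shifts[name] = round(delta, 3)
    return shifts

# Hypothetical importance scores from two training runs.
last_quarter = {"bytes_out": 60, "dst_port": 30, "ttl": 10}
this_quarter = {"bytes_out": 30, "dst_port": 30, "ttl": 40}
print(importance_shift(last_quarter, this_quarter))
```

Positive values mean the feature gained weight, negative values mean it lost weight; a large swing is a prompt to investigate, not proof of drift on its own.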

5. Unexpected Anomalies in Data

Sudden spikes or drops in your data distributions can signal drift.

- Example: A sudden increase in login attempts from a specific region might indicate a shift in attack vectors.
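Spikes like this can be caught with a rolling z-score: compare each new count with the mean and standard deviation of the preceding window. This is a minimal sketch using only the standard library; the window size, the threshold of 3, and the `min_sigma` floor are assumptions you would tune for your own traffic.

```python
from statistics import mean, stdev

def find_spikes(counts, window=7, threshold=3.0, min_sigma=1.0):
    """Return (index, z_score) pairs where a count deviates sharply from recent history."""
    spikes = []
    for i in range(window, len(counts)):
        history = counts[i - window:i]
        mu = mean(history)
        # The floor stops a perfectly flat history from making every change look infinite.
        sigma = max(stdev(history), min_sigma)
        z = (counts[i] - mu) / sigma
        if abs(z) >= threshold:
            spikes.append((i, round(z, 2)))
    return spikes

# Hypothetical daily login attempts from one region; the last day jumps.
daily_logins = [100, 102, 98, 101, 99, 103, 97, 100, 400]
print(find_spikes(daily_logins))
```

Because the check uses the absolute z-score, it flags sudden drops as well as sudden increases.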

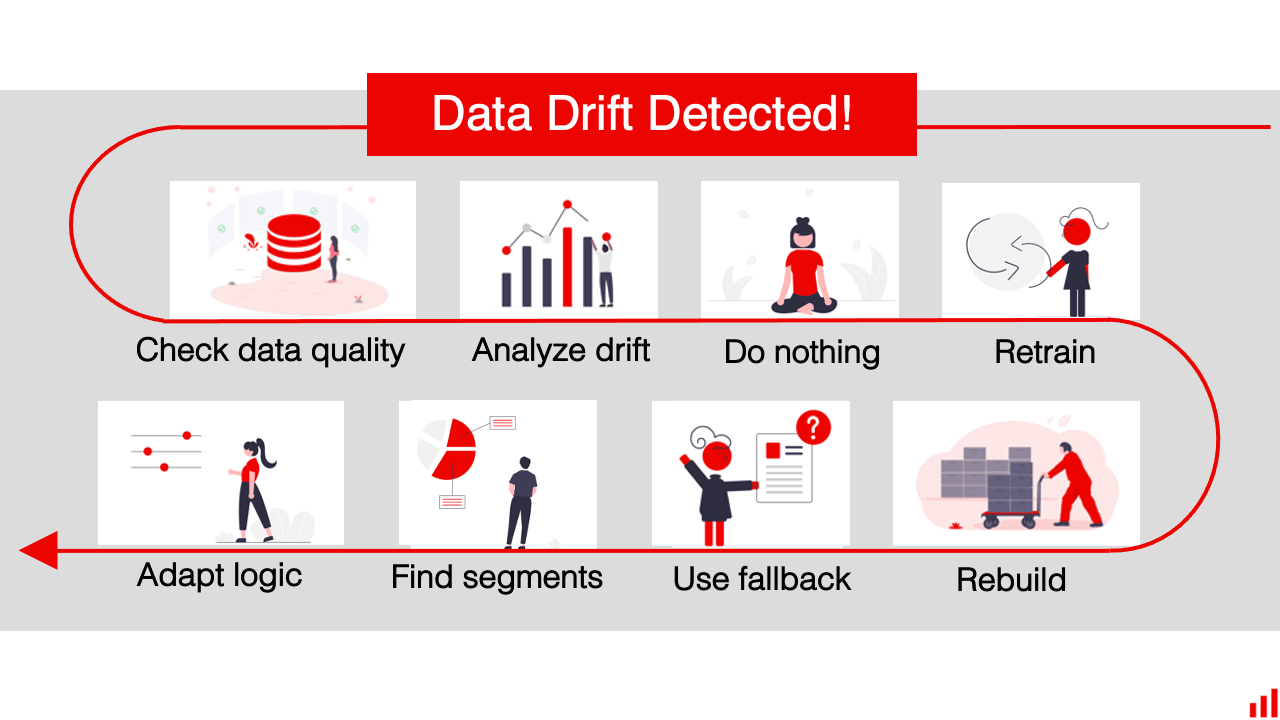

Practical Steps for Addressing Data Drift

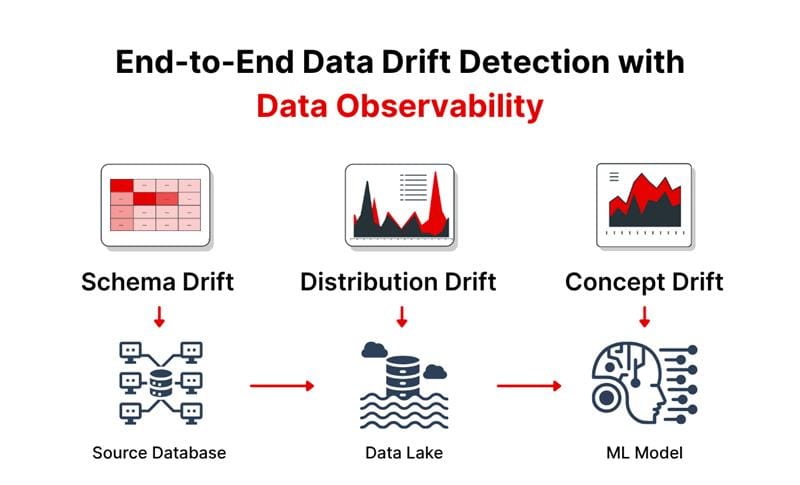

Regular Monitoring and Alerts

Implementing a system to regularly monitor data inputs and model outputs is crucial. Use alerts to catch significant deviations.

- Tools for Monitoring: Platforms like Runable can aid in setting up automated monitoring for data drift.
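If you want to prototype this kind of check before adopting a platform, a two-sample Kolmogorov-Smirnov (KS) statistic with tiered alert levels is a reasonable starting point. The sketch below is an illustration, not any particular tool's API: `ks_statistic` and `drift_level` are hypothetical names, and the warn and critical thresholds are placeholder values.

```python
from bisect import bisect_right

def ks_statistic(reference, current):
    """Largest gap between the empirical CDFs of two non-empty numeric samples."""
    ref_sorted, cur_sorted = sorted(reference), sorted(current)
    largest_gap = 0.0
    # The gap between two step functions can only change at observed values.
    for x in ref_sorted + cur_sorted:
        cdf_ref = bisect_right(ref_sorted, x) / len(ref_sorted)
        cdf_cur = bisect_right(cur_sorted, x) / len(cur_sorted)
        largest_gap = max(largest_gap, abs(cdf_ref - cdf_cur))
    return largest_gap

def drift_level(statistic, warn_at=0.1, critical_at=0.2):
    """Map a drift statistic to an alert level (thresholds are assumptions)."""
    if statistic >= critical_at:
        return "critical"
    if statistic >= warn_at:
        return "warn"
    return "ok"

# Hypothetical model scores from the training period and from today.
training_scores = [0.1, 0.2, 0.2, 0.3, 0.4, 0.5]
todays_scores = [0.4, 0.5, 0.6, 0.7, 0.8, 0.9]
print(drift_level(ks_statistic(training_scores, todays_scores)))
```

The same function works on an input feature or on the model's output scores, which covers both halves of "monitor data inputs and model outputs". A low warn threshold gives you an early, low-urgency signal before the critical one fires.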

Scheduled Model Retraining

Retraining models at regular intervals or when significant drift is detected helps maintain performance.

- Retraining Frequency: The right interval depends on how quickly your data changes, but retrain at least quarterly.
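The "regular intervals or when significant drift is detected" rule can be expressed as a small decision function. This is a sketch under stated assumptions: the drift score comes from whatever drift metric you already compute, 0.25 is a placeholder threshold, and 90 days stands in for "quarterly".

```python
from datetime import date

def should_retrain(last_trained, today, drift_score,
                   drift_threshold=0.25, max_age_days=90):
    """Return (decision, reason) for retraining a model."""
    if drift_score >= drift_threshold:
        return True, "drift"      # drift takes priority over the calendar
    if (today - last_trained).days >= max_age_days:
        return True, "schedule"   # quarterly fallback
    return False, "fresh"

print(should_retrain(date(2025, 1, 1), date(2025, 2, 1), drift_score=0.05))
print(should_retrain(date(2025, 1, 1), date(2025, 2, 1), drift_score=0.30))
print(should_retrain(date(2025, 1, 1), date(2025, 4, 15), drift_score=0.05))
```

Returning the reason alongside the decision makes it easy to log why each retraining run was triggered.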

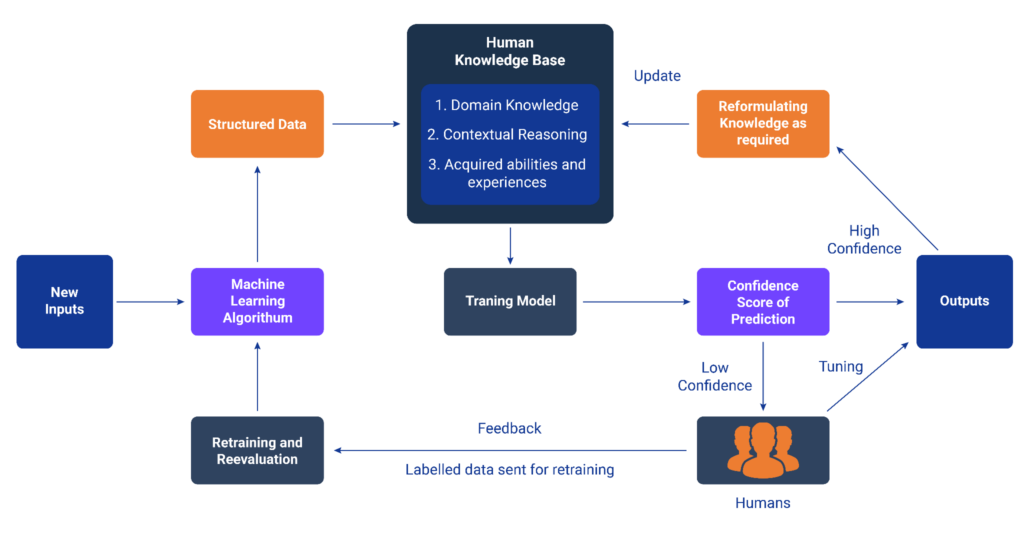

Utilizing Adaptive Learning

Incorporate adaptive learning models that can adjust to new data patterns without complete retraining.

- Benefits: Reduces the need for frequent retraining and helps in maintaining model accuracy.
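To make "adjust without complete retraining" concrete, here is a toy online logistic regression that updates its weights one labeled example at a time using stochastic gradient descent. It is a minimal sketch of the idea, not a production detector: the class name, learning rate, and single-feature data are all illustrative assumptions.

```python
import math

class OnlineLogisticRegression:
    """Binary classifier that learns incrementally from a stream of examples."""

    def __init__(self, n_features, learning_rate=0.1):
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict_proba(self, x):
        z = self.bias + sum(w * xi for w, xi in zip(self.weights, x))
        z = max(-30.0, min(30.0, z))  # clamp to keep exp() well-behaved
        return 1.0 / (1.0 + math.exp(-z))

    def update(self, x, y):
        """Take one gradient step on the log loss for a single (x, y) pair."""
        error = self.predict_proba(x) - y
        self.weights = [w - self.learning_rate * error * xi
                        for w, xi in zip(self.weights, x)]
        self.bias -= self.learning_rate * error

model = OnlineLogisticRegression(n_features=1)

# Phase 1: a positive feature value indicates a threat.
for _ in range(200):
    model.update([1.0], 1)
    model.update([-1.0], 0)
print(model.predict_proba([1.0]))

# Phase 2: the pattern drifts and the relationship flips.
for _ in range(200):
    model.update([1.0], 0)
    model.update([-1.0], 1)
print(model.predict_proba([1.0]))
```

The model follows the new pattern after seeing enough recent examples, with no retraining from scratch. The trade-off is that an online learner can also follow noise or poisoned labels, so it still needs monitoring.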

Estimated data shows that changes in user behavior and evolving threat landscapes are major contributors to data drift, each accounting for around 25-30% of the impact.

Future Trends in Managing Data Drift

Increased Adoption of Continuous Learning

As data drift becomes more widely recognized, continuous learning models that adapt to new data in real time are likely to become standard.

Integration of Explainable AI (XAI)

Explainable AI will help security teams understand why models make certain decisions, aiding in the identification of drift.

Enhanced Data Quality Tools

Investments in tools that ensure data quality and integrity will increase, providing more robust defenses against drift.

Common Pitfalls and Solutions

Pitfall 1: Ignoring Early Signs

Many teams fail to act on early signs of data drift, leading to larger issues down the line.

- Solution: Set alerts for minor deviations to catch drift early.

Pitfall 2: Over-reliance on Historical Data

Relying too heavily on historical data can cause models to become outdated quickly.

- Solution: Ensure your training data is regularly updated with recent information.
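One way to keep older data in the training set without letting it dominate is to weight each sample by its age. The sketch below uses exponential decay with a half-life; the 30-day default is an assumption, and the resulting weights would be passed to whatever sample-weight mechanism your training code supports.

```python
def recency_weights(ages_in_days, half_life_days=30.0):
    """Weight each sample so its influence halves every half_life_days."""
    return [0.5 ** (age / half_life_days) for age in ages_in_days]

# Samples collected today, a month ago, and two months ago.
print(recency_weights([0, 30, 60]))  # [1.0, 0.5, 0.25]
```

A shorter half-life makes the model react faster to new patterns but forget rare older attacks sooner, so choose it based on how quickly your threat data changes.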

Best Practices for Maintaining Robust Security Models

- Data Integrity Checks: Regularly verify the accuracy and consistency of your data.

- Stakeholder Involvement: Involve key stakeholders in monitoring and addressing data drift.

- Model Validation: Perform frequent validations to ensure models remain effective.

- Diversified Data Sources: Use diverse data sources to train models, reducing the impact of drift.
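The data integrity checks in the list above can start as a simple schema validation run on incoming records before they reach the model. This sketch assumes log records arrive as dictionaries; the field names and expected types in `EXPECTED_SCHEMA` are hypothetical.

```python
# Hypothetical schema for a network log record.
EXPECTED_SCHEMA = {"src_ip": str, "dst_port": int, "bytes_out": int}

def validate_record(record, schema=EXPECTED_SCHEMA):
    """Return a list of problems found in one record (empty list means it passed)."""
    problems = []
    for field, expected_type in schema.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            actual = type(record[field]).__name__
            problems.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    # New fields often appear after a software update changes the log format.
    for field in record:
        if field not in schema:
            problems.append(f"unexpected field: {field}")
    return problems

print(validate_record({"src_ip": "10.0.0.5", "dst_port": "443", "proto": "tcp"}))
```

A rising share of records that fail validation is itself an early hint that a data source or logging format has changed.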

Conclusion

Data drift is a silent disruptor, compromising security models without much notice. By recognizing the early signs and implementing robust monitoring and retraining strategies, you can safeguard your systems against evolving threats. As the landscape shifts, staying proactive with adaptive learning and explainable AI will be key to future-proofing your security infrastructure.

FAQ

What is data drift?

Data drift occurs when the statistical properties of input data change over time, impacting the performance of machine learning models.

How does data drift affect security models?

It leads to more false positives and false negatives, which makes models less effective at detecting threats.

What are the benefits of addressing data drift?

Benefits include fewer false alerts, improved threat detection accuracy, and enhanced model reliability, as reported by VentureBeat.

How can I detect data drift early?

Implement regular data monitoring and use alerts to catch significant deviations in data patterns.

What tools can help manage data drift?

Platforms like Runable offer features for automated data drift detection and model retraining.

Are there future trends in managing data drift?

Yes, increased adoption of continuous learning models and integration of explainable AI are expected trends.

How often should models be retrained?

Retraining frequency depends on the speed of data change but should be at least quarterly.

What is adaptive learning?

Adaptive learning involves models that can adjust to new data patterns without the need for complete retraining.

How can I maintain data quality?

Invest in data quality tools and perform regular integrity checks to ensure consistent and accurate data.

What is explainable AI (XAI)?

Explainable AI refers to techniques that make a model's decisions interpretable to humans. It helps security teams understand why a model made a given decision, which aids in identifying drift.

Key Takeaways

- Data drift impacts model accuracy by altering input data characteristics.

- Security models become less effective as false positives and negatives increase.

- Regular monitoring and alerts are crucial for early data drift detection.

- Adaptive learning reduces the need for frequent model retraining.

- Future trends include continuous learning and integrating explainable AI.
